Add files using upload-large-folder tool

This view is limited to 50 files because it contains too many changes.

- ACertainThing_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +93 -0

- BakLLaVA-1_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +43 -0

- BioGPT-Large_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +181 -0

- BiomedNLP-BiomedBERT-base-uncased-abstract-fulltext_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- CLIP-GmP-ViT-L-14_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +127 -0

- CausalLM-14B-GGUF_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +459 -0

- ClinicalBERT_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- CodeLlama-7b-hf_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +0 -0

- ControlNetMediaPipeFace_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +213 -0

- DCLM-7B_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +294 -0

- GOT-OCR2_0_finetunes_20250424_193500.csv_finetunes_20250424_193500.csv +436 -0

- Ghibli-Diffusion_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +99 -0

- InternVL-Chat-V1-5_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +655 -0

- Juggernaut-XL-v9_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +69 -0

- LWM-Text-Chat-1M_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +128 -0

- Llama-2-13B-chat-GGML_finetunes_20250425_125929.csv_finetunes_20250425_125929.csv +299 -0

- Llama-3_1-Nemotron-Ultra-253B-v1_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +369 -0

- Llama3-8B-Chinese-Chat_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +0 -0

- Llama3-8B-Chinese-Chat_finetunes_20250425_125929.csv_finetunes_20250425_125929.csv +0 -0

- MiniCPM-V-2_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +390 -0

- MiniCPM-V_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +189 -0

- MistoLine_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +172 -0

- NV-Embed-v2_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +0 -0

- OpenELM_finetunes_20250424_193500.csv_finetunes_20250424_193500.csv +286 -0

- Phi-3-medium-128k-instruct_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +523 -0

- QwQ-32B-Preview_finetunes_20250424_193500.csv_finetunes_20250424_193500.csv +0 -0

- Qwen2-72B_finetunes_20250426_215237.csv_finetunes_20250426_215237.csv +0 -0

- Qwen2-7B-Instruct_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +0 -0

- ReaderLM-v2_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +305 -0

- SD-Silicon_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +90 -0

- Step-Audio-TTS-3B_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +164 -0

- Tencent-Hunyuan-Large_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +387 -0

- Tifa-Deepsex-14b-CoT-GGUF-Q4_finetunes_20250425_041137.csv_finetunes_20250425_041137.csv +217 -0

- TinyR1-32B-Preview_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +252 -0

- TripoSR_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +57 -0

- UI-TARS-7B-SFT_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +197 -0

- WanVideo_comfy_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +8 -0

- WhiteRabbitNeo-13B-v1_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +522 -0

- WizardLM-13B-Uncensored_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +239 -0

- WizardLM-2-8x22B_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +0 -0

- XTTS-v2_finetunes_20250424_150612.csv_finetunes_20250424_150612.csv +0 -0

- all-MiniLM-L12-v2_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- alpaca-lora-7b_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +34 -0

- blip-image-captioning-large_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +1130 -0

- bloom_finetunes_20250422_180448.csv +0 -0

- canary-1b-flash_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +641 -0

- chatgpt_paraphraser_on_T5_base_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +333 -0

- chilloutmix_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +63 -0

- deberta-v3-large_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- fasttext-language-identification_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +143 -0
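Each file in this commit appears to share the 14-column header row shown in the diffs below, and the `card` column holds a whole multi-line model card. A minimal sketch for loading one such file with Python's standard `csv` module follows. The function name `read_finetunes_csv` is hypothetical, and treating the `metadata` column as JSON is an assumption based on the rows visible here, not something the commit documents.

```python
import csv
import io
import json

# Column order copied from the header row shown in the diff.
COLUMNS = [
    "model_id", "card", "metadata", "depth",
    "children", "children_count", "adapters", "adapters_count",
    "quantized", "quantized_count", "merges", "merges_count",
    "spaces", "spaces_count",
]


def read_finetunes_csv(text):
    """Parse the text of one *_finetunes_*.csv file into a list of dicts.

    Hypothetical helper, not part of the commit.
    """
    # Model cards can be very long, so raise the per-field size limit.
    csv.field_size_limit(10**9)
    # newline="" keeps the line breaks inside quoted fields untouched.
    reader = csv.DictReader(io.StringIO(text, newline=""))
    if reader.fieldnames != COLUMNS:
        raise ValueError(f"unexpected header: {reader.fieldnames}")
    rows = []
    for row in reader:
        # Assumption: the metadata column holds a JSON object.
        row["metadata"] = json.loads(row["metadata"]) if row["metadata"] else {}
        rows.append(row)
    return rows
```

To use it on a file from this commit, read the file with `open(path, newline="", encoding="utf-8")` and pass the text to the function. The count columns come back as strings, so convert them with `int()` if you need numbers.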

ACertainThing_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv

ADDED

|

@@ -0,0 +1,93 @@

|

|

|

|

|

|

|

|

|

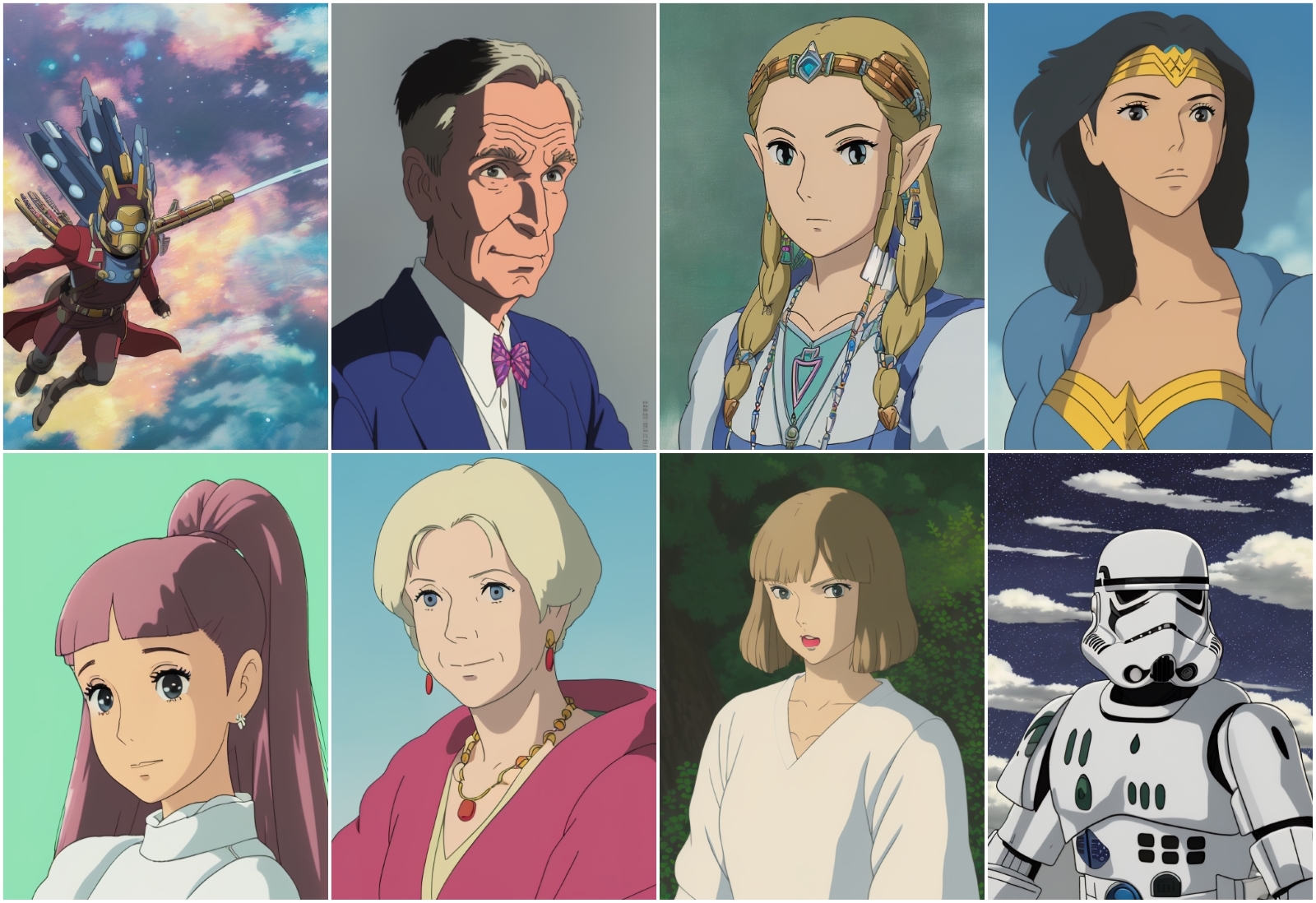

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

JosephusCheung/ACertainThing,"---

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

license: creativeml-openrail-m

|

| 6 |

+

tags:

|

| 7 |

+

- stable-diffusion

|

| 8 |

+

- stable-diffusion-diffusers

|

| 9 |

+

- text-to-image

|

| 10 |

+

- diffusers

|

| 11 |

+

inference: true

|

| 12 |

+

widget:

|

| 13 |

+

- text: ""masterpiece, best quality, 1girl, brown hair, green eyes, colorful, autumn, cumulonimbus clouds, lighting, blue sky, falling leaves, garden""

|

| 14 |

+

example_title: ""example 1girl""

|

| 15 |

+

- text: ""masterpiece, best quality, 1boy, brown hair, green eyes, colorful, autumn, cumulonimbus clouds, lighting, blue sky, falling leaves, garden""

|

| 16 |

+

example_title: ""example 1boy""

|

| 17 |

+

---

|

| 18 |

+

|

| 19 |

+

# ACertainThing

|

| 20 |

+

|

| 21 |

+

**Try full functions with Google Colab free T4** [](https://colab.research.google.com/drive/1gwJViXR0UxoXx01qiU6uTSEKGjTagOgp?usp=sharing)

|

| 22 |

+

|

| 23 |

+

Anything3.0 is an overfitted model that takes liberties when it shouldn't be generating human images and certain details. However, the community has given it a high rating, and I believe that is because many lazy people who don't know how to write a prompt can use this overfitted model to generate high-quality images even if their prompts are poorly written.

|

| 24 |

+

|

| 25 |

+

Here is a ACertain version of Anything3.0, made with Dreambooth (idea of [LoRA](https://arxiv.org/abs/2106.09685) integrated), initialized with [ACertainModel](https://huggingface.co/JosephusCheung/ACertainModel).

|

| 26 |

+

|

| 27 |

+

Although this model may produce better results for image generation, it is built on two major problems. Firstly, it does not always stay true to your prompts; it adds irrelevant details, and sometimes these details are highly homogenized. Secondly, it is an unstable, overfitted model, similar to Anything3.0, and is not suitable for any form of further training. As far as I know, Anything3.0 is obtained by merging several models in just the right way, but it is itself an overfitted model with defects in both its saturation and configuration. However, as I mentioned earlier, it can make even poorly written prompts produce good output images, which leads many lazy people who are incapable of writing good prompts to quickly surpass those who study the writing of prompts carefully. Despite these problems, I still want to release an extended version of the model that caters to the preferences of many people in the community. I hope would you like it.

|

| 28 |

+

|

| 29 |

+

**In my personal view, I oppose all forms of model merging as it has no scientific principle and is nothing but a waste of time. It is a desire to get results without putting in the effort. That is why I do not like Anything3.0, or this model that is being released. But I respect the choices and preferences of the community, and I hope that you can also respect and understand my thoughts.**

|

| 30 |

+

|

| 31 |

+

If you want your prompts to be accurately output and want to learn the correct skills for using prompts, it is recommended that you use the more balanced model [ACertainModel](https://huggingface.co/JosephusCheung/ACertainModel).

|

| 32 |

+

|

| 33 |

+

e.g. **_masterpiece, best quality, 1girl, brown hair, green eyes, colorful, autumn, cumulonimbus clouds, lighting, blue sky, falling leaves, garden_**

|

| 34 |

+

|

| 35 |

+

## About online preview with Hosted inference API, also generation with this model

|

| 36 |

+

|

| 37 |

+

Parameters are not allowed to be modified, as it seems that it is generated with *Clip skip: 1*, for better performance, it is strongly recommended to use *Clip skip: 2* instead.

|

| 38 |

+

|

| 39 |

+

Here is an example of inference settings, if it is applicable with you on your own server: *Steps: 28, Sampler: Euler a, CFG scale: 11, Clip skip: 2*.

|

| 40 |

+

|

| 41 |

+

## 🧨 Diffusers

|

| 42 |

+

|

| 43 |

+

This model can be used just like any other Stable Diffusion model. For more information,

|

| 44 |

+

please have a look at the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion).

|

| 45 |

+

|

| 46 |

+

You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or FLAX/JAX.

|

| 47 |

+

|

| 48 |

+

```python

|

| 49 |

+

from diffusers import StableDiffusionPipeline

|

| 50 |

+

import torch

|

| 51 |

+

|

| 52 |

+

model_id = ""JosephusCheung/ACertainThing""

|

| 53 |

+

branch_name= ""main""

|

| 54 |

+

|

| 55 |

+

pipe = StableDiffusionPipeline.from_pretrained(model_id, revision=branch_name, torch_dtype=torch.float16)

|

| 56 |

+

pipe = pipe.to(""cuda"")

|

| 57 |

+

|

| 58 |

+

prompt = ""pikachu""

|

| 59 |

+

image = pipe(prompt).images[0]

|

| 60 |

+

|

| 61 |

+

image.save(""./pikachu.png"")

|

| 62 |

+

```

|

| 63 |

+

|

| 64 |

+

## Examples

|

| 65 |

+

|

| 66 |

+

Below are some examples of images generated using this model, with better performance on framing and hand gestures, as well as moving objects, comparing to other analogues:

|

| 67 |

+

|

| 68 |

+

**Anime Girl:**

|

| 69 |

+

|

| 70 |

+

```

|

| 71 |

+

1girl, brown hair, green eyes, colorful, autumn, cumulonimbus clouds, lighting, blue sky, falling leaves, garden

|

| 72 |

+

Steps: 28, Sampler: Euler a, CFG scale: 11, Seed: 114514, Clip skip: 2

|

| 73 |

+

```

|

| 74 |

+

**Anime Boy:**

|

| 75 |

+

|

| 76 |

+

```

|

| 77 |

+

1boy, brown hair, green eyes, colorful, autumn, cumulonimbus clouds, lighting, blue sky, falling leaves, garden

|

| 78 |

+

Steps: 28, Sampler: Euler a, CFG scale: 11, Seed: 114514, Clip skip: 2

|

| 79 |

+

```

|

| 80 |

+

|

| 81 |

+

## License

|

| 82 |

+

|

| 83 |

+

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

|

| 84 |

+

The CreativeML OpenRAIL License specifies:

|

| 85 |

+

|

| 86 |

+

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

|

| 87 |

+

2. The authors claims no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

|

| 88 |

+

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

|

| 89 |

+

[Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license)

|

| 90 |

+

|

| 91 |

+

## Is it a NovelAI based model? What is the relationship with SD1.2 and SD1.4?

|

| 92 |

+

|

| 93 |

+

See [ASimilarityCalculatior](https://huggingface.co/JosephusCheung/ASimilarityCalculatior)","{""id"": ""JosephusCheung/ACertainThing"", ""author"": ""JosephusCheung"", ""sha"": ""f29dbc8b2737fa20287a7ded5c47973619b5c012"", ""last_modified"": ""2022-12-20 03:16:02+00:00"", ""created_at"": ""2022-12-13 18:05:27+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 530, ""downloads_all_time"": null, ""likes"": 188, ""library_name"": ""diffusers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""diffusers"", ""stable-diffusion"", ""stable-diffusion-diffusers"", ""text-to-image"", ""en"", ""arxiv:2106.09685"", ""doi:10.57967/hf/0197"", ""license:creativeml-openrail-m"", ""autotrain_compatible"", ""endpoints_compatible"", ""diffusers:StableDiffusionPipeline"", ""region:us""], ""pipeline_tag"": ""text-to-image"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""language:\n- en\nlicense: creativeml-openrail-m\ntags:\n- stable-diffusion\n- stable-diffusion-diffusers\n- text-to-image\n- diffusers\ninference: true\nwidget:\n- text: masterpiece, best quality, 1girl, brown hair, green eyes, colorful, autumn,\n cumulonimbus clouds, lighting, blue sky, falling leaves, garden\n example_title: example 1girl\n- text: masterpiece, best quality, 1boy, brown hair, green eyes, colorful, autumn,\n cumulonimbus clouds, lighting, blue sky, falling leaves, garden\n example_title: example 1boy"", ""widget_data"": [{""text"": ""masterpiece, best quality, 1girl, brown hair, green eyes, colorful, autumn, cumulonimbus clouds, lighting, blue sky, falling leaves, garden"", ""example_title"": ""example 1girl""}, {""text"": ""masterpiece, best quality, 1boy, brown hair, green eyes, colorful, autumn, cumulonimbus clouds, lighting, blue sky, falling leaves, garden"", ""example_title"": ""example 1boy""}], ""model_index"": null, ""config"": {""diffusers"": {""_class_name"": ""StableDiffusionPipeline""}}, 
""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='ACertainThing-half.ckpt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='ACertainThing.ckpt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='feature_extractor/preprocessor_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model_index.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='safety_checker/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='safety_checker/pytorch_model.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='samples/acth-sample-1boy.png', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='samples/acth-sample-1girl.png', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='samples/anything3-sample-1boy.png', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='samples/anything3-sample-1girl.png', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='scheduler/scheduler_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='text_encoder/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='text_encoder/pytorch_model.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/merges.txt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/special_tokens_map.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/tokenizer_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/vocab.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='unet/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='unet/diffusion_pytorch_model.bin', size=None, blob_id=None, lfs=None)"", 
""RepoSibling(rfilename='vae/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='vae/diffusion_pytorch_model.bin', size=None, blob_id=None, lfs=None)""], ""spaces"": [""Yntec/ToyWorld"", ""Yntec/PrintingPress"", ""Nymbo/image_gen_supaqueue"", ""ennov8ion/3dart-Models"", ""phenixrhyder/NSFW-ToyWorld"", ""Yntec/blitz_diffusion"", ""sanaweb/text-to-image"", ""Vedits/6x_Image_diffusion"", ""John6666/Diffusion80XX4sg"", ""ennov8ion/comicbook-models"", ""John6666/PrintingPress4"", ""PeepDaSlan9/B2BMGMT_Diffusion60XX"", ""Daniela-C/6x_Image_diffusion"", ""phenixrhyder/PrintingPress"", ""John6666/hfd_test_nostopbutton"", ""mindtube/Diffusion50XX"", ""TheKitten/Fast-Images-Creature"", ""Nymbo/Diffusion80XX4sg"", ""kaleidoskop-hug/PrintingPress"", ""ennov8ion/stablediffusion-models"", ""John6666/ToyWorld4"", ""grzegorz2047/fast_diffusion"", ""Alfasign/dIFFU"", ""Nymbo/PrintingPress"", ""Rifd/Sdallmodels"", ""John6666/Diffusion80XX4g"", ""NativeAngels/HuggingfaceDiffusion"", ""ennov8ion/Scifi-Models"", ""ennov8ion/semirealistic-models"", ""ennov8ion/dreamlike-models"", ""ennov8ion/FantasyArt-Models"", ""noes14155/img_All_models"", ""ennov8ion/500models"", ""AnimeStudio/anime-models"", ""John6666/Diffusion80XX4"", ""K00B404/HuggingfaceDiffusion_custom"", ""John6666/blitz_diffusion4"", ""John6666/blitz_diffusion_builtin"", ""RhythmRemix14/PrintingPressDx"", ""sohoso/PrintingPress"", ""NativeAngels/ToyWorld"", ""mindtube/maximum_multiplier_places"", ""animeartstudio/AnimeArtmodels2"", ""animeartstudio/AnimeModels"", ""Binettebob22/fast_diffusion2"", ""pikto/Elite-Scifi-Models"", ""PixelistStudio/3dart-Models"", ""devmiles/zexxiai"", ""Nymbo/Diffusion60XX"", ""TheKitten/Images"", ""ennov8ion/anime-models"", ""jordonpeter01/Diffusion70"", ""ennov8ion/Landscapes-models"", ""sohoso/anime348756"", ""ucmisanddisinfo/thisApp"", ""johann22/chat-diffusion"", ""K00B404/generate_many_models"", ""manivannan7gp/Words2Image"", ""ennov8ion/art-models"", 
""ennov8ion/photo-models"", ""ennov8ion/art-multi"", ""vih-v/x_mod"", ""NativeAngels/blitz_diffusion"", ""NativeAngels/PrintingPress4"", ""NativeAngels/PrintingPress"", ""dehua68/ToyWorld"", ""burman-ai/Printing-Press"", ""sk16er/ghibli_creator"", ""sheldon/JosephusCheung-ACertainThing"", ""vanessa9178/AI-Generator"", ""ennov8ion/abstractart-models"", ""ennov8ion/Scifiart-Models"", ""ennov8ion/interior-models"", ""ennov8ion/room-interior-models"", ""animeartstudio/AnimeArtModels1"", ""Yntec/top_100_diffusion"", ""AIlexDev/Diffusion60XX"", ""flatindo/all-models"", ""flatindo/all-models-v1"", ""flatindo/img_All_models"", ""johann22/chat-diffusion-describe"", ""wideprism/Ultimate-Model-Collection"", ""GAIneZis/FantasyArt-Models"", ""TheMaisk/Einfach.ImageAI"", ""ennov8ion/picasso-diffusion"", ""K00B404/stablediffusion-portal"", ""ennov8ion/anime-new-models"", ""ennov8ion/anime-multi-new-models"", ""ennov8ion/photo-multi"", ""ennov8ion/anime-multi"", ""Ashrafb/comicbook-models"", ""sohoso/architecture"", ""K00B404/image_gen_supaqueue_game_assets"", ""GhadaSaylami/text-to-image"", ""Geek7/mdztxi"", ""Geek7/mdztxi2"", ""NativeAngels/Diffusion80XX4sg"", ""GandalfTheBlack/PrintingPressDx"", ""GandalfTheBlack/IMG2IMG-695models"", ""tejani/PrintingPress""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2022-12-20 03:16:02+00:00"", ""cardData"": ""language:\n- en\nlicense: creativeml-openrail-m\ntags:\n- stable-diffusion\n- stable-diffusion-diffusers\n- text-to-image\n- diffusers\ninference: true\nwidget:\n- text: masterpiece, best quality, 1girl, brown hair, green eyes, colorful, autumn,\n cumulonimbus clouds, lighting, blue sky, falling leaves, garden\n example_title: example 1girl\n- text: masterpiece, best quality, 1boy, brown hair, green eyes, colorful, autumn,\n cumulonimbus clouds, lighting, blue sky, falling leaves, garden\n example_title: example 1boy"", ""transformersInfo"": null, ""_id"": 
""6398bee79d84601abcd5f0fb"", ""modelId"": ""JosephusCheung/ACertainThing"", ""usedStorage"": 12711483823}",0,,0,,0,,0,,0,"CompVis/stable-diffusion-license, Daniela-C/6x_Image_diffusion, John6666/Diffusion80XX4sg, John6666/PrintingPress4, John6666/ToyWorld4, John6666/hfd_test_nostopbutton, Nymbo/image_gen_supaqueue, PeepDaSlan9/B2BMGMT_Diffusion60XX, Yntec/PrintingPress, Yntec/ToyWorld, Yntec/blitz_diffusion, huggingface/InferenceSupport/discussions/new?title=JosephusCheung/ACertainThing&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BJosephusCheung%2FACertainThing%5D(%2FJosephusCheung%2FACertainThing)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, kaleidoskop-hug/PrintingPress, phenixrhyder/NSFW-ToyWorld",14

|

BakLLaVA-1_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv

ADDED

|

@@ -0,0 +1,43 @@

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

SkunkworksAI/BakLLaVA-1,"---

|

| 3 |

+

datasets:

|

| 4 |

+

- SkunkworksAI/BakLLaVA-1-FT

|

| 5 |

+

language:

|

| 6 |

+

- en

|

| 7 |

+

license: apache-2.0

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

<p><h1> BakLLaVA-1 </h1></p>

|

| 11 |

+

|

| 12 |

+

Thank you to our compute sponsors Together Compute (www.together.ai).

|

| 13 |

+

In collaboration with **Ontocord** (www.ontocord.ai) and **LAION** (www.laion.ai).

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

BakLLaVA 1 is a Mistral 7B base augmented with the LLaVA 1.5 architecture. In this first version, we showcase that a Mistral 7B base outperforms Llama 2 13B on several benchmarks.

|

| 19 |

+

You can run BakLLaVA-1 on our repo. We are currently updating it to make it easier for you to finetune and inference. (https://github.com/SkunkworksAI/BakLLaVA).

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

Note: BakLLaVA-1 is fully open-source but was trained on certain data that includes LLaVA's corpus which is not commercially permissive. We will fix this in the upcoming release.

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

BakLLaVA 2 is cooking with a significantly larger (commercially viable) dataset and a novel architecture that expands beyond the current LLaVA method. BakLLaVA-2 will do away with the restrictions of BakLLaVA-1.

|

| 26 |

+

|

| 27 |

+

|

| 28 |

+

# Evaluations

|

| 29 |

+

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

# Training dataset

|

| 34 |

+

|

| 35 |

+

- 558K filtered image-text pairs from LAION/CC/SBU, captioned by BLIP.

|

| 36 |

+

- 158K GPT-generated multimodal instruction-following data.

|

| 37 |

+

- 450K academic-task-oriented VQA data mixture.

|

| 38 |

+

- 40K ShareGPT data.

|

| 39 |

+

- Additional private data (permissive)

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

","{""id"": ""SkunkworksAI/BakLLaVA-1"", ""author"": ""SkunkworksAI"", ""sha"": ""d8e5fd9f1c8d021bdb9a1108b72d4bca8c32d61f"", ""last_modified"": ""2023-10-23 21:26:30+00:00"", ""created_at"": ""2023-10-12 13:12:21+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 133, ""downloads_all_time"": null, ""likes"": 379, ""library_name"": ""transformers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""transformers"", ""pytorch"", ""llava_mistral"", ""text-generation"", ""en"", ""dataset:SkunkworksAI/BakLLaVA-1-FT"", ""license:apache-2.0"", ""autotrain_compatible"", ""endpoints_compatible"", ""region:us""], ""pipeline_tag"": ""text-generation"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""datasets:\n- SkunkworksAI/BakLLaVA-1-FT\nlanguage:\n- en\nlicense: apache-2.0"", ""widget_data"": [{""text"": ""My name is Julien and I like to""}, {""text"": ""I like traveling by train because""}, {""text"": ""Paris is an amazing place to visit,""}, {""text"": ""Once upon a time,""}], ""model_index"": null, ""config"": {""architectures"": [""LlavaMistralForCausalLM""], ""model_type"": ""llava_mistral"", ""tokenizer_config"": {""bos_token"": ""<s>"", ""eos_token"": ""</s>"", ""pad_token"": ""<unk>"", ""unk_token"": ""<unk>"", ""use_default_system_prompt"": true}}, ""transformers_info"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": null, ""pipeline_tag"": ""text-generation"", ""processor"": null}, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='added_tokens.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='generation_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='pytorch_model-00001-of-00002.bin', size=None, 
blob_id=None, lfs=None)"", ""RepoSibling(rfilename='pytorch_model-00002-of-00002.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='pytorch_model.bin.index.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='special_tokens_map.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer.model', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer_config.json', size=None, blob_id=None, lfs=None)""], ""spaces"": [""limcheekin/BakLLaVA-1-GGUF"", ""QuantAsh/SkunkworksAI-BakLLaVA-1"", ""QuantAsh/SkunkworksAI-BakLLaVA-2"", ""SengTak/SkunkworksAI-BakLLaVA-1"", ""jasongoodison/SkunkworksAI-BakLLaVA-1"", ""scasella91/SkunkworksAI-BakLLaVA-1"", ""jerrybaba/moai-demo"", ""Meysam1986/SkunkworksAI-BakLLaVA-1"", ""jaemil/SkunkworksAI-BakLLaVA-1""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2023-10-23 21:26:30+00:00"", ""cardData"": ""datasets:\n- SkunkworksAI/BakLLaVA-1-FT\nlanguage:\n- en\nlicense: apache-2.0"", ""transformersInfo"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": null, ""pipeline_tag"": ""text-generation"", ""processor"": null}, ""_id"": ""6527f0b551d1165df6760e12"", ""modelId"": ""SkunkworksAI/BakLLaVA-1"", ""usedStorage"": 196792796145}",0,,0,,0,,0,,0,"Meysam1986/SkunkworksAI-BakLLaVA-1, QuantAsh/SkunkworksAI-BakLLaVA-1, QuantAsh/SkunkworksAI-BakLLaVA-2, SengTak/SkunkworksAI-BakLLaVA-1, huggingface/InferenceSupport/discussions/new?title=SkunkworksAI/BakLLaVA-1&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BSkunkworksAI%2FBakLLaVA-1%5D(%2FSkunkworksAI%2FBakLLaVA-1)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, jaemil/SkunkworksAI-BakLLaVA-1, jasongoodison/SkunkworksAI-BakLLaVA-1, jerrybaba/moai-demo, limcheekin/BakLLaVA-1-GGUF, 
scasella91/SkunkworksAI-BakLLaVA-1",10

|

BioGPT-Large_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv

ADDED

|

@@ -0,0 +1,181 @@

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

microsoft/BioGPT-Large,"---

|

| 3 |

+

license: mit

|

| 4 |

+

datasets:

|

| 5 |

+

- pubmed

|

| 6 |

+

language:

|

| 7 |

+

- en

|

| 8 |

+

library_name: transformers

|

| 9 |

+

pipeline_tag: text-generation

|

| 10 |

+

tags:

|

| 11 |

+

- medical

|

| 12 |

+

widget:

|

| 13 |

+

- text: COVID-19 is

|

| 14 |

+

inference:

|

| 15 |

+

parameters:

|

| 16 |

+

max_new_tokens: 50

|

| 17 |

+

---

|

| 18 |

+

|

| 19 |

+

## BioGPT

|

| 20 |

+

|

| 21 |

+

Pre-trained language models have attracted increasing attention in the biomedical domain, inspired by their great success in the general natural language domain. Among the two main branches of pre-trained language models in the general language domain, i.e. BERT (and its variants) and GPT (and its variants), the first one has been extensively studied in the biomedical domain, such as BioBERT and PubMedBERT. While they have achieved great success on a variety of discriminative downstream biomedical tasks, the lack of generation ability constrains their application scope. In this paper, we propose BioGPT, a domain-specific generative Transformer language model pre-trained on large-scale biomedical literature. We evaluate BioGPT on six biomedical natural language processing tasks and demonstrate that our model outperforms previous models on most tasks. Especially, we get 44.98%, 38.42% and 40.76% F1 score on BC5CDR, KD-DTI and DDI end-to-end relation extraction tasks, respectively, and 78.2% accuracy on PubMedQA, creating a new record. Our case study on text generation further demonstrates the advantage of BioGPT on biomedical literature to generate fluent descriptions for biomedical terms.

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

## Citation

|

| 25 |

+

|

| 26 |

+

If you find BioGPT useful in your research, please cite the following paper:

|

| 27 |

+

|

| 28 |

+

```latex

|

| 29 |

+

@article{10.1093/bib/bbac409,

|

| 30 |

+

author = {Luo, Renqian and Sun, Liai and Xia, Yingce and Qin, Tao and Zhang, Sheng and Poon, Hoifung and Liu, Tie-Yan},

|

| 31 |

+

title = ""{BioGPT: generative pre-trained transformer for biomedical text generation and mining}"",

|

| 32 |

+

journal = {Briefings in Bioinformatics},

|

| 33 |

+

volume = {23},

|

| 34 |

+

number = {6},

|

| 35 |

+

year = {2022},

|

| 36 |

+

month = {09},

|

| 37 |

+

abstract = ""{Pre-trained language models have attracted increasing attention in the biomedical domain, inspired by their great success in the general natural language domain. Among the two main branches of pre-trained language models in the general language domain, i.e. BERT (and its variants) and GPT (and its variants), the first one has been extensively studied in the biomedical domain, such as BioBERT and PubMedBERT. While they have achieved great success on a variety of discriminative downstream biomedical tasks, the lack of generation ability constrains their application scope. In this paper, we propose BioGPT, a domain-specific generative Transformer language model pre-trained on large-scale biomedical literature. We evaluate BioGPT on six biomedical natural language processing tasks and demonstrate that our model outperforms previous models on most tasks. Especially, we get 44.98\%, 38.42\% and 40.76\% F1 score on BC5CDR, KD-DTI and DDI end-to-end relation extraction tasks, respectively, and 78.2\% accuracy on PubMedQA, creating a new record. Our case study on text generation further demonstrates the advantage of BioGPT on biomedical literature to generate fluent descriptions for biomedical terms.}"",

|

| 38 |

+

issn = {1477-4054},

|

| 39 |

+

doi = {10.1093/bib/bbac409},

|

| 40 |

+

url = {https://doi.org/10.1093/bib/bbac409},

|

| 41 |

+

note = {bbac409},

|

| 42 |

+

eprint = {https://academic.oup.com/bib/article-pdf/23/6/bbac409/47144271/bbac409.pdf},

|

| 43 |

+

}

|

| 44 |

+

```","{""id"": ""microsoft/BioGPT-Large"", ""author"": ""microsoft"", ""sha"": ""c6a5136a91c5e3150d9f05ab9d33927a3210a22e"", ""last_modified"": ""2023-02-05 06:18:14+00:00"", ""created_at"": ""2023-02-03 16:17:26+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 7980, ""downloads_all_time"": null, ""likes"": 196, ""library_name"": ""transformers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""transformers"", ""pytorch"", ""biogpt"", ""text-generation"", ""medical"", ""en"", ""dataset:pubmed"", ""license:mit"", ""autotrain_compatible"", ""endpoints_compatible"", ""region:us""], ""pipeline_tag"": ""text-generation"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""datasets:\n- pubmed\nlanguage:\n- en\nlibrary_name: transformers\nlicense: mit\npipeline_tag: text-generation\ntags:\n- medical\nwidget:\n- text: COVID-19 is\ninference:\n parameters:\n max_new_tokens: 50"", ""widget_data"": [{""text"": ""COVID-19 is""}], ""model_index"": null, ""config"": {""architectures"": [""BioGptForCausalLM""], ""model_type"": ""biogpt"", ""tokenizer_config"": {""bos_token"": ""<s>"", ""eos_token"": ""</s>"", ""pad_token"": ""<pad>"", ""sep_token"": ""</s>"", ""unk_token"": ""<unk>""}}, ""transformers_info"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": null, ""pipeline_tag"": ""text-generation"", ""processor"": ""AutoTokenizer""}, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='generation_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='merges.txt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='pytorch_model.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='special_tokens_map.json', size=None, 
blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='vocab.json', size=None, blob_id=None, lfs=None)""], ""spaces"": [""katielink/biogpt-large-demo"", ""Sharathhebbar24/One-stop-for-Open-source-models"", ""kadirnar/Whisper_M2M100_BioGpt"", ""katielink/compare-bio-llm"", ""flash64/biogpt-testing"", ""MMYang/microsoft-BioGPT-Large"", ""MohammedAlakhras/ChatDoctor"", ""RamboRogers/BioGPT"", ""arohcx/colab"", ""Vrda/microsoft-BioGPT-Large"", ""partnersfactory/microsoft-BioGPT-Large"", ""Mzeshle/microsoft-BioGPT-Large"", ""ZaddyMattty/microsoft-BioGPT-Large"", ""caliex/demo-med"", ""yejunbin/microsoft-BioGPT-Large"", ""yuzhengao/microsoft-BioGPT-Large"", ""Huggingmaces/microsoft-BioGPT-Large"", ""vlad-htg/microsoft-BioGPT-Large"", ""ayub567/medaichatbot"", ""ameykalpe/microsoft-BioGPT-Large"", ""UraniaLi/Energenesis_Biomedical_AI"", ""Yossefahmed68/microsoft-BioGPT-Large"", ""Krystal5299/microsoft-BioGPT-Large"", ""Ashraf/BioGPT_Chat"", ""Nurpeyis/microsoft-BioGPT-Large"", ""0xgokuz/microsoft-BioGPT-Large"", ""K00B404/One-stop-till-you-drop"", ""aidevlab/BioGPT_text_generation"", ""zaikaman/AIDoctor"", ""ihkaraman/medical-chatbot"", ""SeemaSaharan/Diagnosis_Clinical"", ""nroy8/symptom-checker-ai"", ""Ravithreni4/AI-Health-Assistent"", ""Ravithreni4/Health-Assistent"", ""drkareemkamal/pediatric_RAG"", ""S0umya/canceropinion.ai"", ""anaghanagesh/drug_discovery_using_LLMs"", ""anaghanagesh/Drug_Discovery_using_LLMs_"", ""kseth9852/health_report_analysis""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2023-02-05 06:18:14+00:00"", ""cardData"": ""datasets:\n- pubmed\nlanguage:\n- en\nlibrary_name: transformers\nlicense: mit\npipeline_tag: text-generation\ntags:\n- medical\nwidget:\n- text: COVID-19 is\ninference:\n parameters:\n max_new_tokens: 50"", ""transformersInfo"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": null, 
""pipeline_tag"": ""text-generation"", ""processor"": ""AutoTokenizer""}, ""_id"": ""63dd3396327159311ac515f7"", ""modelId"": ""microsoft/BioGPT-Large"", ""usedStorage"": 12569856117}",0,"https://huggingface.co/RobCzikkel/DoctorGPT, https://huggingface.co/Shaheer14326/Fine_tunned_Biogpt",2,,0,,0,,0,"MMYang/microsoft-BioGPT-Large, MohammedAlakhras/ChatDoctor, RamboRogers/BioGPT, Sharathhebbar24/One-stop-for-Open-source-models, anaghanagesh/Drug_Discovery_using_LLMs_, anaghanagesh/drug_discovery_using_LLMs, flash64/biogpt-testing, huggingface/InferenceSupport/discussions/1109, kadirnar/Whisper_M2M100_BioGpt, katielink/biogpt-large-demo, katielink/compare-bio-llm, nroy8/symptom-checker-ai, zaikaman/AIDoctor",13

|

| 45 |

+

RobCzikkel/DoctorGPT,"---

|

| 46 |

+

license: mit

|

| 47 |

+

base_model: microsoft/BioGPT-Large

|

| 48 |

+

tags:

|

| 49 |

+

- generated_from_trainer

|

| 50 |

+

model-index:

|

| 51 |

+

- name: bioGPT

|

| 52 |

+

results: []

|

| 53 |

+

---

|

| 54 |

+

|

| 55 |

+

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

|

| 56 |

+

should probably proofread and complete it, then remove this comment. -->

|

| 57 |

+

|

| 58 |

+

# DoctorGPT

|

| 59 |

+

|

| 60 |

+

This model is a fine-tuned version of [microsoft/BioGPT-Large](https://huggingface.co/microsoft/BioGPT-Large) on a formatted version of the MedQuad-MedicalQnADataset dataset.

|

| 61 |

+

It achieves the following results on the evaluation set:

|

| 62 |

+

- Loss: 1.1114

|

| 63 |

+

|

| 64 |

+

## Model description

|

| 65 |

+

|

| 66 |

+

The base model is Microsoft's BioGPT. It was fine-tuned with a custom prompt to act as a conversational chatbot between a patient and a doctor.

|

| 67 |

+

The prompt used is as follows:

|

| 68 |

+

|

| 69 |

+

```py

|

| 70 |

+

""""""You are a Doctor. Below is a question from a patient. Write a response to the patient that answers their question\n\n""

|

| 71 |

+

|

| 72 |

+

### Patient: {question}""

|

| 73 |

+

|

| 74 |

+

### Doctor: {answer}

|

| 75 |

+

""""""

|

| 76 |

+

```

|

| 77 |

+

|

| 78 |

+

## Inference

|

| 79 |

+

|

| 80 |

+

The fine-tuned model ships with a saved generation config. To load it:

|

| 81 |

+

```py

|

| 82 |

+

from transformers import GenerationConfig

model_config = GenerationConfig.from_pretrained(

|

| 83 |

+

""RobCzikkel/DoctorGPT""

|

| 84 |

+

)

|

| 85 |

+

```

|

| 86 |

+

|

| 87 |

+

This config uses a diverse beam search strategy:

|

| 88 |

+

```py

|

| 89 |

+

diversebeamConfig = GenerationConfig(

|

| 90 |

+

min_length=20,

|

| 91 |

+

max_length=256,

|

| 92 |

+

do_sample=False,

|

| 93 |

+

num_beams=4,

|

| 94 |

+

num_beam_groups=4,

|

| 95 |

+

diversity_penalty=1.0,

|

| 96 |

+

repetition_penalty=3.0,

|

| 97 |

+

eos_token_id=model.config.eos_token_id,

|

| 98 |

+

pad_token_id=model.config.pad_token_id,

|

| 99 |

+

bos_token_id=model.config.bos_token_id,

|

| 100 |

+

)

|

| 101 |

+

```

|

| 102 |

+

|

| 103 |

+

For best results, please use this as your generator function:

|

| 104 |

+

```py

|

| 105 |

+

def generate(query):

|

| 106 |

+

sys = ""You are a Doctor. Below is a question from a patient. Write a response to the patient that answers their question\n\n""

|

| 107 |

+

patient = f""### Patient:\n{query}\n\n""

|

| 108 |

+

doctor = f""### Doctor:\n ""

|

| 109 |

+

|

| 110 |

+

prompt = sys+patient+doctor

|

| 111 |

+

|

| 112 |

+

inputs = tokenizer(prompt, return_tensors=""pt"").to(""cuda"")

|

| 113 |

+

generated_ids = model.generate(

|

| 114 |

+

**inputs,

|

| 115 |

+

generation_config=model_config,  # the generation config loaded above

|

| 116 |

+

)

|

| 117 |

+

outputs = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)

|

| 118 |

+

answer = '.'.join(outputs[0].split('.')[:-1])  # keep text up to the last complete sentence

|

| 119 |

+

torch.cuda.empty_cache()

|

| 120 |

+

return answer + "".""

|

| 121 |

+

```
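The string handling in the generator above can be tried without loading the model. The following is a minimal sketch, assuming the prompt layout shown in `generate`; the helper names `build_prompt` and `trim_to_last_sentence` are hypothetical and not part of the model repository.

```python
SYSTEM = (
    'You are a Doctor. Below is a question from a patient. '
    'Write a response to the patient that answers their question\n\n'
)


def build_prompt(query):
    # Same three-part layout as in generate(): system text, patient turn, doctor cue.
    patient = f'### Patient:\n{query}\n\n'
    doctor = '### Doctor:\n '
    return SYSTEM + patient + doctor


def trim_to_last_sentence(text):
    # Drop whatever follows the final period, so a reply cut off by
    # max_length does not end mid-sentence.
    if '.' not in text:
        return text  # nothing to trim against; return unchanged
    return '.'.join(text.split('.')[:-1]) + '.'


prompt = build_prompt('What causes anemia?')
print(prompt)
print(trim_to_last_sentence('Rest and fluids help. See a doctor if it per'))
```

Keeping these two steps as separate functions makes it easy to check the prompt format against the training template before spending GPU time on generation.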

|

| 122 |

+

|

| 123 |

+

|

| 124 |

+

## Intended uses & limitations

|

| 125 |

+

|

| 126 |

+

This is a private project for fine-tuning a medical language model. It is not intended to be used as a source of medical advice.

|

| 127 |

+

|

| 128 |

+

## Training and evaluation data

|

| 129 |

+

|

| 130 |

+

More information needed

|

| 131 |

+

|

| 132 |

+

## Training procedure

|

| 133 |

+

|

| 134 |

+

### Training hyperparameters

|

| 135 |

+

|

| 136 |

+

The following hyperparameters were used during training:

|

| 137 |

+

- learning_rate: 0.0005

|

| 138 |

+

- train_batch_size: 4

|

| 139 |

+

- eval_batch_size: 4

|

| 140 |

+

- seed: 42

|

| 141 |

+

- gradient_accumulation_steps: 16

|

| 142 |

+

- total_train_batch_size: 64

|

| 143 |

+

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

|

| 144 |

+

- lr_scheduler_type: cosine

|

| 145 |

+

- lr_scheduler_warmup_ratio: 0.03

|

| 146 |

+

- num_epochs: 3

|

| 147 |

+

- mixed_precision_training: Native AMP
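Two of the values above follow from the others: the total train batch size is the per-device batch size times the gradient accumulation steps, and the warmup length is a fraction of the total step count. A minimal sketch of that arithmetic and of a cosine schedule with linear warmup follows. The value `total_steps = 600` is assumed purely for illustration and is not reported in this card, a single device is assumed, and `lr_at` is a hypothetical helper rather than the Trainer's own scheduler.

```python
import math

train_batch_size = 4
gradient_accumulation_steps = 16
# Effective batch size per optimizer step (single device assumed).
total_train_batch_size = train_batch_size * gradient_accumulation_steps
print(total_train_batch_size)

peak_lr = 0.0005
warmup_ratio = 0.03
total_steps = 600  # assumed for illustration; not taken from the card
warmup_steps = math.ceil(warmup_ratio * total_steps)


def lr_at(step):
    # Linear warmup from 0 to peak_lr, then cosine decay towards 0.
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


for step in (0, warmup_steps, total_steps // 2, total_steps):
    print(step, lr_at(step))
```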

|

| 148 |

+

|

| 149 |

+

### Training results

|

| 150 |

+

|

| 151 |

+

| Training Loss | Epoch | Step | Validation Loss |

|

| 152 |

+

|:-------------:|:-----:|:----:|:---------------:|

|

| 153 |

+

| No log | 0.25 | 51 | 1.2418 |

|

| 154 |

+

| 1.3267 | 0.5 | 102 | 1.1900 |

|

| 155 |

+

| 1.3267 | 0.75 | 153 | 1.1348 |

|

| 156 |

+

| 1.1237 | 0.99 | 204 | 1.0887 |

|

| 157 |

+

| 1.1237 | 1.24 | 255 | 1.1018 |

|

| 158 |

+

| 0.7527 | 1.49 | 306 | 1.0770 |

|

| 159 |

+

| 0.7527 | 1.74 | 357 | 1.0464 |

|

| 160 |

+

| 0.7281 | 1.99 | 408 | 1.0233 |

|

| 161 |

+

| 0.7281 | 2.24 | 459 | 1.1212 |

|

| 162 |

+

| 0.4262 | 2.49 | 510 | 1.1177 |

|

| 163 |

+

| 0.4262 | 2.73 | 561 | 1.1125 |

|

| 164 |

+

| 0.4124 | 2.98 | 612 | 1.1114 |

|

| 165 |

+

|

| 166 |

+

|

| 167 |

+

### Framework versions

|

| 168 |

+

|

| 169 |

+

- Transformers 4.36.0.dev0

|

| 170 |

+

- Pytorch 2.1.0+cu118

|

| 171 |

+

- Datasets 2.15.0

|

| 172 |

+

- Tokenizers 0.15.0

|

| 173 |

+

","{""id"": ""RobCzikkel/DoctorGPT"", ""author"": ""RobCzikkel"", ""sha"": ""19413de7929ec6249358a48b5a8a8aea403a74df"", ""last_modified"": ""2023-12-04 17:49:55+00:00"", ""created_at"": ""2023-11-25 02:40:31+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 17, ""downloads_all_time"": null, ""likes"": 2, ""library_name"": ""transformers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""transformers"", ""tensorboard"", ""safetensors"", ""biogpt"", ""text-generation"", ""generated_from_trainer"", ""base_model:microsoft/BioGPT-Large"", ""base_model:finetune:microsoft/BioGPT-Large"", ""license:mit"", ""autotrain_compatible"", ""endpoints_compatible"", ""region:us""], ""pipeline_tag"": ""text-generation"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""base_model: microsoft/BioGPT-Large\nlicense: mit\ntags:\n- generated_from_trainer\nmodel-index:\n- name: bioGPT\n results: []"", ""widget_data"": [{""text"": ""My name is Julien and I like to""}, {""text"": ""I like traveling by train because""}, {""text"": ""Paris is an amazing place to visit,""}, {""text"": ""Once upon a time,""}], ""model_index"": [{""name"": ""bioGPT"", ""results"": []}], ""config"": {""architectures"": [""BioGptForCausalLM""], ""model_type"": ""biogpt"", ""tokenizer_config"": {""bos_token"": ""<s>"", ""eos_token"": ""</s>"", ""pad_token"": ""<pad>"", ""sep_token"": ""</s>"", ""unk_token"": ""<unk>""}}, ""transformers_info"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": null, ""pipeline_tag"": ""text-generation"", ""processor"": ""AutoTokenizer""}, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='generation_config.json', size=None, blob_id=None, lfs=None)"", 
""RepoSibling(rfilename='merges.txt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00001-of-00002.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model-00002-of-00002.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model.safetensors.index.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='runs/Nov24_21-12-11_87f33704e632/events.out.tfevents.1700860339.87f33704e632.744.0', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='runs/Nov24_21-16-03_87f33704e632/events.out.tfevents.1700860578.87f33704e632.4122.0', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='runs/Nov24_23-16-13_87f33704e632/events.out.tfevents.1700867787.87f33704e632.35427.0', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='special_tokens_map.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='training_args.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='vocab.json', size=None, blob_id=None, lfs=None)""], ""spaces"": [""0xgokuz/RobCzikkel-DoctorGPT""], ""safetensors"": {""parameters"": {""F32"": 1571188800}, ""total"": 1571188800}, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2023-12-04 17:49:55+00:00"", ""cardData"": ""base_model: microsoft/BioGPT-Large\nlicense: mit\ntags:\n- generated_from_trainer\nmodel-index:\n- name: bioGPT\n results: []"", ""transformersInfo"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": null, ""pipeline_tag"": ""text-generation"", ""processor"": ""AutoTokenizer""}, ""_id"": ""65615e9f49f816b798400a06"", ""modelId"": ""RobCzikkel/DoctorGPT"", ""usedStorage"": 6284863196}",1,,0,,0,,0,,0,"0xgokuz/RobCzikkel-DoctorGPT, 
huggingface/InferenceSupport/discussions/new?title=RobCzikkel/DoctorGPT&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BRobCzikkel%2FDoctorGPT%5D(%2FRobCzikkel%2FDoctorGPT)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A",2

|

| 174 |

+

Shaheer14326/Fine_tunned_Biogpt,"---

|

| 175 |

+

license: mit

|

| 176 |

+

language:

|

| 177 |

+

- en

|

| 178 |

+

base_model:

|

| 179 |

+

- microsoft/BioGPT-Large

|

| 180 |

+

---

|

| 181 |

+

This is a fine-tuned version of BioGPT that analyzes doctor-patient conversations transcribed to text with Whisper and labels the text as spoken by the doctor or the patient","{""id"": ""Shaheer14326/Fine_tunned_Biogpt"", ""author"": ""Shaheer14326"", ""sha"": ""0009f3651d98954a1cad147daf0cdfc90b8c6b87"", ""last_modified"": ""2025-02-25 21:17:52+00:00"", ""created_at"": ""2025-02-25 21:04:24+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 2, ""downloads_all_time"": null, ""likes"": 0, ""library_name"": null, ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""safetensors"", ""biogpt"", ""en"", ""base_model:microsoft/BioGPT-Large"", ""base_model:finetune:microsoft/BioGPT-Large"", ""license:mit"", ""region:us""], ""pipeline_tag"": null, ""mask_token"": null, ""trending_score"": null, ""card_data"": ""base_model:\n- microsoft/BioGPT-Large\nlanguage:\n- en\nlicense: mit"", ""widget_data"": null, ""model_index"": null, ""config"": {""architectures"": [""BioGptForSequenceClassification""], ""model_type"": ""biogpt"", ""tokenizer_config"": {""bos_token"": ""<s>"", ""eos_token"": ""</s>"", ""pad_token"": ""<pad>"", ""sep_token"": ""</s>"", ""unk_token"": ""<unk>""}}, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='merges.txt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model.safetensors', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='special_tokens_map.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='vocab.json', size=None, blob_id=None, lfs=None)""], ""spaces"": [], ""safetensors"": {""parameters"": {""F32"": 
346765312}, ""total"": 346765312}, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2025-02-25 21:17:52+00:00"", ""cardData"": ""base_model:\n- microsoft/BioGPT-Large\nlanguage:\n- en\nlicense: mit"", ""transformersInfo"": null, ""_id"": ""67be305890cc736e2f89a8f9"", ""modelId"": ""Shaheer14326/Fine_tunned_Biogpt"", ""usedStorage"": 1387103856}",1,,0,,0,,0,,0,huggingface/InferenceSupport/discussions/new?title=Shaheer14326/Fine_tunned_Biogpt&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BShaheer14326%2FFine_tunned_Biogpt%5D(%2FShaheer14326%2FFine_tunned_Biogpt)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A,1

|

BiomedNLP-BiomedBERT-base-uncased-abstract-fulltext_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

CLIP-GmP-ViT-L-14_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv

ADDED

|

@@ -0,0 +1,127 @@

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

zer0int/CLIP-GmP-ViT-L-14,"---

|

| 3 |

+

license: mit

|

| 4 |

+

base_model: openai/clip-vit-large-patch14

|

| 5 |

+

datasets:

|

| 6 |

+

- SPRIGHT-T2I/spright_coco

|

| 7 |

+

---

|

| 8 |

+

## A fine-tune of CLIP-L. Original model: [openai/clip-vit-large-patch14](https://huggingface.co/openai/clip-vit-large-patch14)

|

| 9 |

+

- ❤️ this CLIP? [Help feed it](https://ko-fi.com/zer0int) if you can. Besides data, CLIP eats time & expensive electricity of DE. TY! 🤗

|

| 10 |

+

- Want to feed it yourself? All code for fine-tuning and much more is on [my GitHub](https://github.com/zer0int).

|

| 11 |

+

-----

|

| 12 |

+

## Update 23/SEP/2024:

|

| 13 |

+

- Huggingface Transformers / Diffusers pipeline now implemented.

|

| 14 |

+

- See here for an example script: [Integrating my CLIP-L with Flux.1](https://github.com/zer0int/CLIP-txt2img-diffusers-scripts)

|

| 15 |

+

- Otherwise, use as normal / any HF model:

|

| 16 |

+

```

|

| 17 |

+

from transformers import CLIPModel, CLIPProcessor, CLIPConfig

|

| 18 |

+

model_id = ""zer0int/CLIP-GmP-ViT-L-14""

|

| 19 |

+

config = CLIPConfig.from_pretrained(model_id)

|

| 20 |

+

```

|

| 21 |

+

## Update 03/SEP/2024 / edit 05/AUG:

|

| 22 |

+

|

| 23 |

+

## 👋 Looking for a Text Encoder for Flux.1 (or SD3, SDXL, SD, ...) to replace CLIP-L? 👀

|

| 24 |

+

You'll generally want the ""TE-only"" .safetensors:

|

| 25 |

+

|

| 26 |

+

- 👉 The ""TEXT"" model has superior prompt following, especially for text, but also for other details. [DOWNLOAD](https://huggingface.co/zer0int/CLIP-GmP-ViT-L-14/blob/main/ViT-L-14-TEXT-detail-improved-hiT-GmP-TE-only-HF.safetensors)

|

| 27 |

+

- 👉 The ""SMOOTH"" model can sometimes** have better details (when there's no text in the image). [DOWNLOAD](https://huggingface.co/zer0int/CLIP-GmP-ViT-L-14/blob/main/ViT-L-14-BEST-smooth-GmP-TE-only-HF-format.safetensors)

|

| 28 |

+

- The ""GmP"" initial fine-tune is deprecated / inferior to the above models. Still, you can [DOWNLOAD](https://huggingface.co/zer0int/CLIP-GmP-ViT-L-14/blob/main/ViT-L-14-GmP-ft-TE-only-HF-format.safetensors) it.

|

| 29 |

+

|

| 30 |

+

**: The ""TEXT"" model is the best for text. Full stop. But whether the ""SMOOTH"" model is better for your (text-free) scenario than the ""TEXT"" model really depends on the specific prompt. It might also be the case that the ""TEXT"" model leads to images that you prefer over ""SMOOTH""; the only way to know is to experiment with both.

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

|

| 34 |

+

## 🤓👨💻 In general (because we're not limited to text-to-image generative AI), I provide four versions / downloads:

|

| 35 |

+

|

| 36 |

+

- Text encoder only .safetensors.

|

| 37 |

+

- Full model .safetensors.

|

| 38 |

+

- State_dict pickle.

|

| 39 |

+

- Full model pickle (can be used as-is with ""import clip"" -> clip.load() after bypassing SHA checksum verification).

|

| 40 |

+

|

| 41 |

+

## The TEXT model has a modality gap of 0.80 (OpenAI pre-trained: 0.82).

|

| 42 |

+

- Trained with high temperature of 0.1 + tinkering.

|

| 43 |

+

- ImageNet/ObjectNet accuracy ~0.91 for both ""SMOOTH"" and ""TEXT"" models (pre-trained: ~0.84).

|

| 44 |

+

- The models (this plot = ""TEXT"" model on MSCOCO) are also golden retrievers: 🥰🐕

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

----

|

| 49 |

+

## Update 11/AUG/2024:

|

| 50 |

+

|

| 51 |

+

New Best-Performing CLIP ViT-L/14 'GmP-smooth' model added (simply download the files named *BEST*!):

|

| 52 |

+

|

| 53 |

+

|

| 54 |

+

|

| 55 |

+

Or just create a fine-tune yourself: [https://github.com/zer0int/CLIP-fine-tune](https://github.com/zer0int/CLIP-fine-tune)

|

| 56 |

+

|

| 57 |

+

How?

|

| 58 |

+

- Geometric Parametrization (GmP) (same as before)

|

| 59 |

+

- Activation Value manipulation for 'adverb neuron' (same as before)

|

| 60 |

+

- NEW: Custom loss function with label smoothing!

|

| 61 |

+

- For in-depth details, see my GitHub. 🤗

|

| 62 |

+

|

| 63 |

+

----

|

| 64 |

+

|

| 65 |

+

## A fine-tune of OpenAI / CLIP ViT-L/14 that has an unprecedented ImageNet/ObjectNet accuracy of ~0.90 (original pre-trained model / OpenAI's CLIP: ~0.85)**.

|

| 66 |

+

|

| 67 |

+

Made possible with Geometric Parametrization (GmP):

|

| 68 |

+

|

| 69 |

+

```

|

| 70 |

+

|

| 71 |

+

""Normal"" CLIP MLP (multi-layer perceptron):

|

| 72 |

+

|

| 73 |

+

(mlp): Sequential(

|

| 74 |

+

|-(c_fc): Linear(in_features=1024, out_features=4096, bias=True)

|

| 75 |

+

| (gelu): QuickGELU()

|

| 76 |

+

|-}-(c_proj): Linear(in_features=4096, out_features=1024, bias=True)

|

| 77 |

+

| |

|

| 78 |

+

| |-- visual.transformer.resblocks.0.mlp.c_fc.weight

|

| 79 |

+

| |-- visual.transformer.resblocks.0.mlp.c_fc.bias

|

| 80 |

+

|

|

| 81 |

+

|---- visual.transformer.resblocks.0.mlp.c_proj.weight

|

| 82 |

+

|---- visual.transformer.resblocks.0.mlp.c_proj.bias

|

| 83 |

+

|

| 84 |

+

|

| 85 |

+

GmP CLIP MLP:

|

| 86 |

+

|

| 87 |

+

Weight decomposition into:

|

| 88 |

+

- radial component 'r' as norm of pre-trained weights

|

| 89 |

+

- angular component 'theta' as normalized direction

|

| 90 |

+

-> preserves weight vectors' directionality and magnitude

|

| 91 |

+

|

| 92 |

+

(mlp): Sequential(

|

| 93 |

+

|-(c_fc): GeometricLinear()

|

| 94 |

+

| (gelu): QuickGELU()

|

| 95 |

+

|-}-(c_proj): GeometricLinear()

|

| 96 |

+

| |

|

| 97 |

+

| |-- visual.transformer.resblocks.0.mlp.c_fc.r

|

| 98 |

+

| |-- visual.transformer.resblocks.0.mlp.c_fc.theta

|

| 99 |

+

| |-- visual.transformer.resblocks.0.mlp.c_fc.bias

|

| 100 |

+

|

|

| 101 |

+

|---- visual.transformer.resblocks.0.mlp.c_proj.r

|

| 102 |

+

|---- visual.transformer.resblocks.0.mlp.c_proj.theta

|

| 103 |

+

|---- visual.transformer.resblocks.0.mlp.c_proj.bias

|

| 104 |

+

|

| 105 |

+

(Same thing for [text] transformer.resblocks)

|

| 106 |

+

|

| 107 |

+

```
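The decomposition described above can be illustrated on a small matrix. This is a minimal sketch in plain Python, assuming the norm is taken per output row of the weight matrix; that choice and the helper names `decompose` and `recompose` are assumptions for illustration, and the actual `GeometricLinear` code lives in the author's GitHub repository.

```python
import math


def decompose(weight):
    # Split each row into a magnitude r and a unit-length direction theta.
    # Assumes no row is all zeros (that would divide by zero).
    r = [math.sqrt(sum(v * v for v in row)) for row in weight]
    theta = [[v / norm for v in row] for row, norm in zip(weight, r)]
    return r, theta


def recompose(r, theta):
    # Multiplying direction by magnitude recovers the original weights,
    # which is why a GmP model can be converted back to plain .weight tensors.
    return [[norm * v for v in row] for row, norm in zip(theta, r)]


weight = [[3.0, 4.0], [0.0, 2.0]]
r, theta = decompose(weight)
print(r)
print(theta)
print(recompose(r, theta))
```

During fine-tuning, `r` and `theta` would be the trainable parameters in place of the raw weight, so magnitude and direction receive separate gradient updates.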

|

| 108 |

+

|

| 109 |

+

|

| 110 |

+

|

| 111 |

+

✅ The model / state_dict I am sharing was converted back to .weight after fine-tuning, so it can be used in the same manner as any state_dict, e.g. for use with ComfyUI as the SDXL / SD3 Text Encoder! 🤗

|

| 112 |

+

|

| 113 |

+

- ** For details on training and those numbers / the eval, please see [https://github.com/zer0int/CLIP-fine-tune](https://github.com/zer0int/CLIP-fine-tune)

|

| 114 |

+

- -> You can use ""exp-acts-ft-finetune-OpenAI-CLIP-ViT-L-14-GmP-manipulate-neurons.py"" to replicate my exact model fine-tune.

|

| 115 |

+

|

| 116 |

+