Add files using upload-large-folder tool

This view is limited to 50 files because it contains too many changes.

- Baichuan2-13B-Chat_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +261 -0

- Baichuan2-7B-Chat_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +235 -0

- Cerebras-GPT-13B_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +184 -0

- ChatTTS_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +56 -0

- CogVideoX-5b-I2V_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +281 -0

- ControlNetMediaPipeFace_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +213 -0

- Cyberpunk-Anime-Diffusion_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +122 -0

- DeepSeek-R1-Distill-Llama-8B-GGUF_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +599 -0

- Emu3-Gen_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +15 -0

- FastHunyuan_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +60 -0

- GPT-JT-6B-v1_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +159 -0

- Hotshot-XL_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +48 -0

- Hunyuan3D-1_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +258 -0

- Hunyuan3D-2mv_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +113 -0

- HunyuanVideo-I2V_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +524 -0

- IP-Adapter_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +48 -0

- LLaMA-2-7B-32K_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +618 -0

- Llama-2-70B-Chat-GGML_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +303 -0

- Llama-3-Refueled_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +135 -0

- Llama-3_1-Nemotron-51B-Instruct_finetunes_20250426_212347.csv_finetunes_20250426_212347.csv +216 -0

- MiniMax-VL-01_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +2 -0

- Molmo-72B-0924_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +211 -0

- MythoMax-L2-13B-GPTQ_finetunes_20250426_215237.csv_finetunes_20250426_215237.csv +315 -0

- NeuralBeagle14-7B_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +0 -0

- Nous-Capybara-34B_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +125 -0

- Nous-Hermes-2-Vision-Alpha_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +125 -0

- Nous-Hermes-2-Yi-34B_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +353 -0

- Open-Sora_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +32 -0

- Phi-3-mini-4k-instruct_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +0 -0

- Phi-3-small-128k-instruct_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +715 -0

- Qwen2-7B_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +0 -0

- Ruyi-Mini-7B_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +196 -0

- SuperNova-Medius_finetunes_20250426_212347.csv_finetunes_20250426_212347.csv +267 -0

- Van-Gogh-diffusion_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +67 -0

- Wayfarer-12B_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +72 -0

- XTTS-v1_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +93 -0

- Yi-34B-200K_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +0 -0

- bart-large_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +0 -0

- bertweet-base-sentiment-analysis_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +0 -0

- canary-1b_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +546 -0

- chatglm3-6b-32k_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +113 -0

- chinese-roberta-wwm-ext_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +0 -0

- deepseek-coder-33b-instruct_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +247 -0

- emotion-english-distilroberta-base_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +292 -0

- falcon-40b-instruct-GPTQ_finetunes_20250426_215237.csv_finetunes_20250426_215237.csv +396 -0

- flan-t5-base_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +0 -0

- flux-lora-collection_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +161 -0

- flux1-dev_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +12 -0

- gpt-neox-20b_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +600 -0

- gpt2-medium_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +0 -0
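The file names listed above appear to share one pattern: a model name, `_finetunes_`, a date and time stamp, and then the same suffix repeated after `.csv`. The sketch below splits such a name into its model and timestamp. It assumes that pattern holds for every file; `NAME_RE` and `parse_name` are hypothetical names introduced here for illustration.

```python
import re
from datetime import datetime

# Pattern assumed from the names in the list above:
# <model>_finetunes_<YYYYMMDD>_<HHMMSS>.csv_finetunes_<YYYYMMDD>_<HHMMSS>.csv
NAME_RE = re.compile(
    r"^(?P<model>.+?)_finetunes_(?P<stamp>\d{8}_\d{6})\.csv"
    r"_finetunes_(?P=stamp)\.csv$"
)


def parse_name(filename):
    """Return (model_name, timestamp), or None if the name does not match."""
    m = NAME_RE.match(filename)
    if m is None:
        return None
    stamp = datetime.strptime(m.group("stamp"), "%Y%m%d_%H%M%S")
    return m.group("model"), stamp


print(parse_name(
    "ChatTTS_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv"
))
```

Names that do not follow the assumed pattern return `None` rather than raising, so a caller can skip them.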

Baichuan2-13B-Chat_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv

ADDED

|

@@ -0,0 +1,261 @@
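This hunk adds a CSV whose `card` cells span many lines and whose quoted cells double every `"`. The sketch below reads such a file with Python's standard `csv` module, which handles both conventions. The column names are copied from the header row of this hunk; the sample row is a shortened, made-up stand-in rather than real data, and treating `metadata` as JSON is an assumption based on how that column looks here.

```python
import csv
import io
import json

# Column names copied from the header row of this hunk.
HEADER = ("model_id,card,metadata,depth,children,children_count,adapters,"
          "adapters_count,quantized,quantized_count,merges,merges_count,"
          "spaces,spaces_count")

# Hypothetical, shortened row for illustration only (not real data).
# The card cell contains newlines and the metadata cell contains doubled quotes.
SAMPLE = HEADER + "\n" + (
    'org/model,"---\nlicense: other\n---","{""id"": ""org/model""}",'
    "0,,0,,0,,0,,0,,0\n"
)


def read_rows(text):
    """Parse CSV text into dicts; quoted cells may contain newlines."""
    return list(csv.DictReader(io.StringIO(text, newline="")))


rows = read_rows(SAMPLE)
row = rows[0]
print(row["model_id"], int(row["children_count"]))
# Assumption: the metadata column holds a JSON object.
print(json.loads(row["metadata"])["id"])
```

When reading from disk, opening the file with `newline=""` has the same effect as the `io.StringIO` call here: line endings inside quoted cells are passed to the parser unchanged.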

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

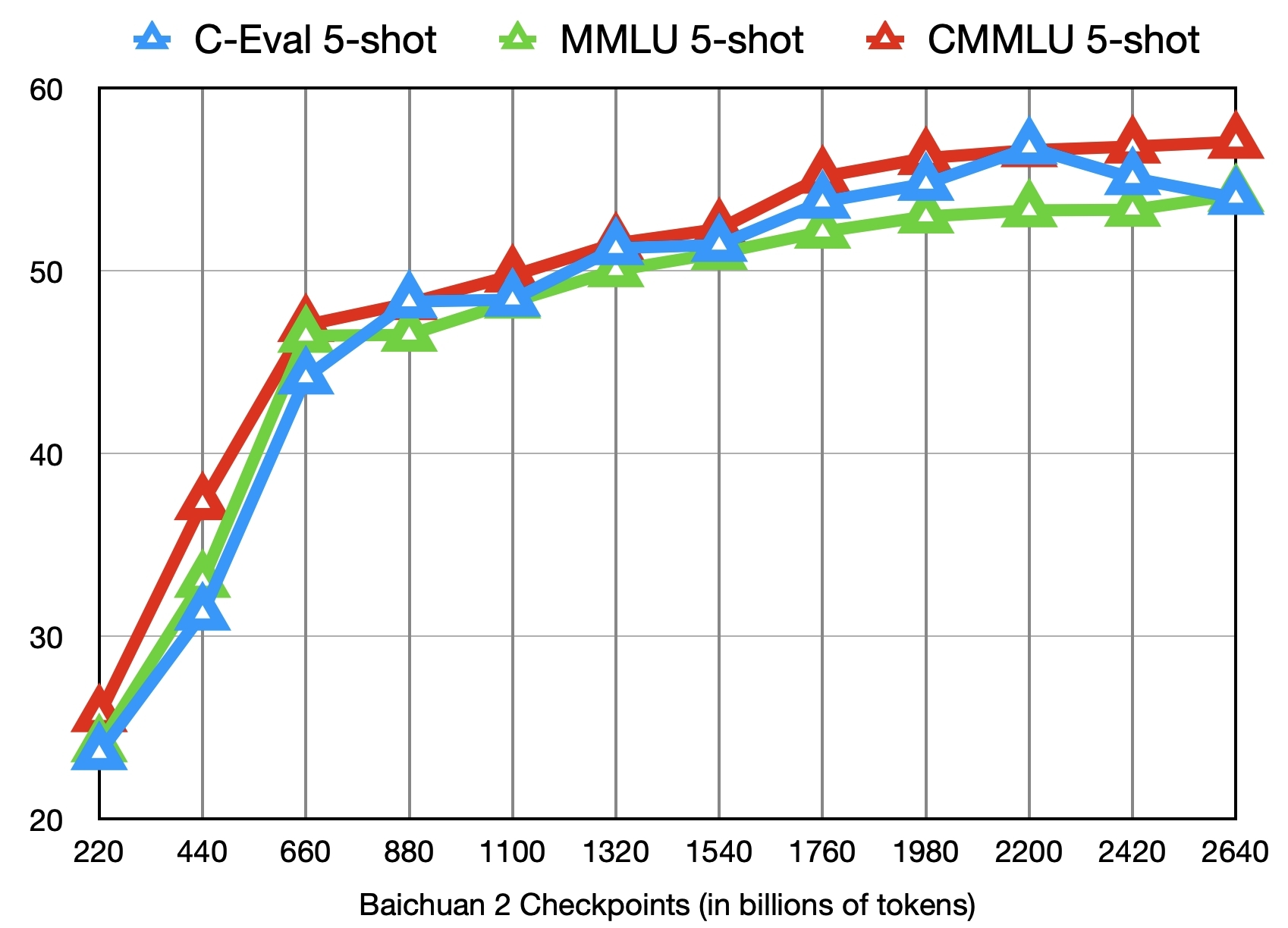

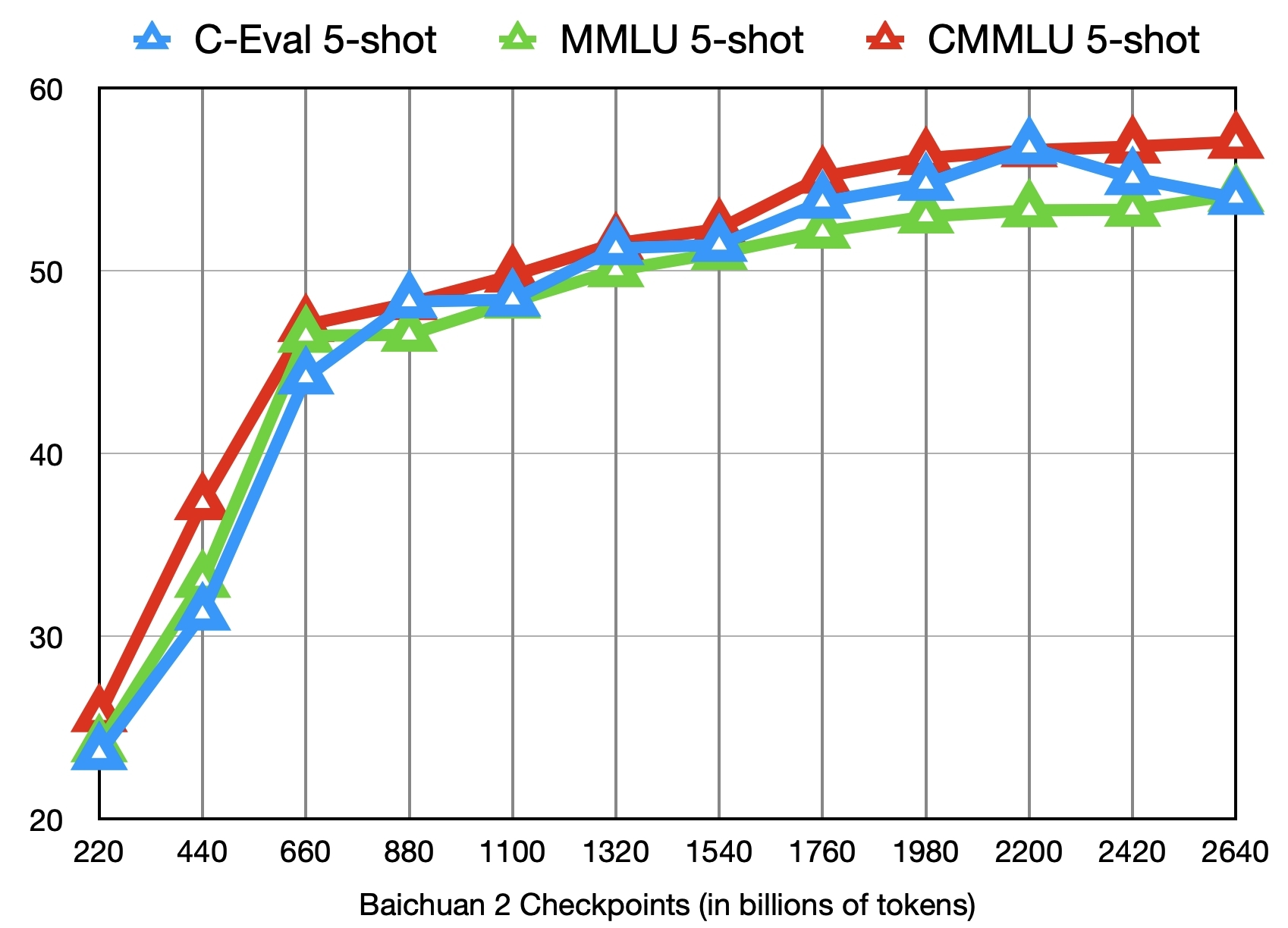

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

baichuan-inc/Baichuan2-13B-Chat,"---

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

- zh

|

| 6 |

+

license: other

|

| 7 |

+

tasks:

|

| 8 |

+

- text-generation

|

| 9 |

+

---

|

| 10 |

+

|

| 11 |

+

<!-- markdownlint-disable first-line-h1 -->

|

| 12 |

+

<!-- markdownlint-disable html -->

|

| 13 |

+

<div align=""center"">

|

| 14 |

+

<h1>

|

| 15 |

+

Baichuan 2

|

| 16 |

+

</h1>

|

| 17 |

+

</div>

|

| 18 |

+

|

| 19 |

+

<div align=""center"">

|

| 20 |

+

<a href=""https://github.com/baichuan-inc/Baichuan2"" target=""_blank"">🦉GitHub</a> | <a href=""https://github.com/baichuan-inc/Baichuan-7B/blob/main/media/wechat.jpeg?raw=true"" target=""_blank"">💬WeChat</a>

|

| 21 |

+

</div>

|

| 22 |

+

<div align=""center"">

|

| 23 |

+

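The Baichuan API supports search augmentation and a 192K long context window, and now adds the Baichuan search-augmented knowledge base, free for a limited time!<br>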

|

| 24 |

+

🚀 <a href=""https://www.baichuan-ai.com/"" target=""_blank"">The Baichuan LLM online chat platform</a> is now officially open to the public 🎉

|

| 25 |

+

</div>

|

| 26 |

+

|

| 27 |

+

# 目录/Table of Contents

|

| 28 |

+

|

| 29 |

+

- [📖 模型介绍/Introduction](#Introduction)

|

| 30 |

+

- [⚙️ 快速开始/Quick Start](#Start)

|

| 31 |

+

- [📊 Benchmark评估/Benchmark Evaluation](#Benchmark)

|

| 32 |

+

- [👥 社区与生态/Community](#Community)

|

| 33 |

+

- [📜 声明与协议/Terms and Conditions](#Terms)

|

| 34 |

+

|

| 35 |

+

# 更新/Update

|

| 36 |

+

[2023.12.29] 🎉🎉🎉 We released **[Baichuan2-13B-Chat](https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat) v2**. In this version:

|

| 37 |

+

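- The model's overall capabilities are substantially improved, especially mathematical and logical reasoning and complex instruction following.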

|

| 38 |

+

- To use it, specify revision=v2.0; see [Quick Start](#Start) for details.

|

| 39 |

+

|

| 40 |

+

# <span id=""Introduction"">模型介绍/Introduction</span>

|

| 41 |

+

|

| 42 |

+

Baichuan 2 是[百川智能]推出的新一代开源大语言模型,采用 **2.6 万亿** Tokens 的高质量语料训练,在权威的中文和英文 benchmark

|

| 43 |

+

上均取得同尺寸最好的效果。本次发布包含有 7B、13B 的 Base 和 Chat 版本,并提供了 Chat 版本的 4bits

|

| 44 |

+

量化,所有版本不仅对学术研究完全开放,开发者也仅需[邮件申请]并获得官方商用许可后,即可以免费商用。具体发布版本和下载见下表:

|

| 45 |

+

|

| 46 |

+

Baichuan 2 is the new generation of large-scale open-source language models launched by [Baichuan Intelligence inc.](https://www.baichuan-ai.com/).

|

| 47 |

+

It is trained on a high-quality corpus of 2.6 trillion tokens and achieves the best performance among models of the same size on authoritative Chinese and English benchmarks.

|

| 48 |

+

This release includes 7B and 13B versions for both Base and Chat models, along with a 4bits quantized version for the Chat model.

|

| 49 |

+

All versions are fully open to academic research, and developers can also use them for free in commercial applications after obtaining an official commercial license through [email request](mailto:opensource@baichuan-inc.com).

|

| 50 |

+

The specific release versions and download links are listed in the table below:

|

| 51 |

+

|

| 52 |

+

| | Base Model | Chat Model | 4bits Quantized Chat Model |

|

| 53 |

+

|:---:|:--------------------:|:--------------------:|:--------------------------:|

|

| 54 |

+

| 7B | [Baichuan2-7B-Base](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base) | [Baichuan2-7B-Chat](https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat) | [Baichuan2-7B-Chat-4bits](https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat-4bits) |

|

| 55 |

+

| 13B | [Baichuan2-13B-Base](https://huggingface.co/baichuan-inc/Baichuan2-13B-Base) | [Baichuan2-13B-Chat](https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat) | [Baichuan2-13B-Chat-4bits](https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat-4bits) |

|

| 56 |

+

|

| 57 |

+

# <span id=""Start"">快速开始/Quick Start</span>

|

| 58 |

+

|

| 59 |

+

在Baichuan2系列模型中,我们为了加快推理速度使用了Pytorch2.0加入的新功能F.scaled_dot_product_attention,因此模型需要在Pytorch2.0环境下运行。

|

| 60 |

+

|

| 61 |

+

In the Baichuan 2 series models, we have utilized the new feature `F.scaled_dot_product_attention` introduced in PyTorch 2.0 to accelerate inference speed. Therefore, the model needs to be run in a PyTorch 2.0 environment.
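The requirement above can be checked before loading the model. The following is a minimal sketch, assuming only that `torch.__version__` begins with `major.minor`; `meets_minimum` and `check_environment` are hypothetical helper names and are not part of the Baichuan 2 code.

```python
import re


def meets_minimum(version, minimum=(2, 0)):
    # True if a version string such as '2.1.0+cu118' is at least `minimum`.
    m = re.match(r'(\d+)\.(\d+)', version)
    if m is None:
        raise ValueError(f'unrecognised version string: {version!r}')
    return (int(m.group(1)), int(m.group(2))) >= tuple(minimum)


def check_environment():
    # Returns False when PyTorch is missing, older than 2.0, or lacks
    # torch.nn.functional.scaled_dot_product_attention.
    try:
        import torch
        import torch.nn.functional as F
    except ImportError:
        return False
    return (meets_minimum(torch.__version__)
            and hasattr(F, 'scaled_dot_product_attention'))


if __name__ == '__main__':
    print('PyTorch >= 2.0 with SDPA available:', check_environment())
```

Checking for the attribute as well as the version number guards against builds whose version string looks recent but which do not expose the function.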

|

| 62 |

+

|

| 63 |

+

|

| 64 |

+

```python

|

| 65 |

+

import torch

|

| 66 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 67 |

+

from transformers.generation.utils import GenerationConfig

|

| 68 |

+

tokenizer = AutoTokenizer.from_pretrained(""baichuan-inc/Baichuan2-13B-Chat"",

|

| 69 |

+

revision=""v2.0"",

|

| 70 |

+

use_fast=False,

|

| 71 |

+

trust_remote_code=True)

|

| 72 |

+

model = AutoModelForCausalLM.from_pretrained(""baichuan-inc/Baichuan2-13B-Chat"",

|

| 73 |

+

revision=""v2.0"",

|

| 74 |

+

device_map=""auto"",

|

| 75 |

+

torch_dtype=torch.bfloat16,

|

| 76 |

+

trust_remote_code=True)

|

| 77 |

+

model.generation_config = GenerationConfig.from_pretrained(""baichuan-inc/Baichuan2-13B-Chat"", revision=""v2.0"")

|

| 78 |

+

messages = []

|

| 79 |

+

messages.append({""role"": ""user"", ""content"": ""解释一下“温故而知新”""})

|

| 80 |

+

response = model.chat(tokenizer, messages)

|

| 81 |

+

print(response)

|

| 82 |

+

""温故而知新""是一句中国古代的成语,出自《论语·为政》篇。这句话的意思是:通过回顾过去,我们可以发现新的知识和理解。换句话说,学习历史和经验可以让我们更好地理解现在和未来。

|

| 83 |

+

|

| 84 |

+

这句话鼓励我们在学习和生活中不断地回顾和反思过去的经验,从而获得新的启示和成长。通过重温旧的知识和经历,我们可以发现新的观点和理解,从而更好地应对不断变化的世界和挑战。

|

| 85 |

+

```

|

| 86 |

+

**Note: to use the old version, manually specify the revision parameter: revision=v1.0**

|

| 87 |

+

|

| 88 |

+

# <span id=""Benchmark"">Benchmark 结果/Benchmark Evaluation</span>

|

| 89 |

+

|

| 90 |

+

我们在[通用]、[法律]、[医疗]、[数学]、[代码]和[多语言翻译]六个领域的中英文权威数据集上对模型进行了广泛测试,更多详细测评结果可查看[GitHub]。

|

| 91 |

+

|

| 92 |

+

We have extensively tested the model on authoritative Chinese-English datasets across six domains: [General](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#general-domain), [Legal](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#law-and-medicine), [Medical](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#law-and-medicine), [Mathematics](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#mathematics-and-code), [Code](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#mathematics-and-code), and [Multilingual Translation](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#multilingual-translation). For more detailed evaluation results, please refer to [GitHub](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md).

|

| 93 |

+

|

| 94 |

+

### 7B Model Results

|

| 95 |

+

|

| 96 |

+

| | **C-Eval** | **MMLU** | **CMMLU** | **Gaokao** | **AGIEval** | **BBH** |

|

| 97 |

+

|:-----------------------:|:----------:|:--------:|:---------:|:----------:|:-----------:|:-------:|

|

| 98 |

+

| | 5-shot | 5-shot | 5-shot | 5-shot | 5-shot | 3-shot |

|

| 99 |

+

| **GPT-4** | 68.40 | 83.93 | 70.33 | 66.15 | 63.27 | 75.12 |

|

| 100 |

+

| **GPT-3.5 Turbo** | 51.10 | 68.54 | 54.06 | 47.07 | 46.13 | 61.59 |

|

| 101 |

+

| **LLaMA-7B** | 27.10 | 35.10 | 26.75 | 27.81 | 28.17 | 32.38 |

|

| 102 |

+

| **LLaMA2-7B** | 28.90 | 45.73 | 31.38 | 25.97 | 26.53 | 39.16 |

|

| 103 |

+

| **MPT-7B** | 27.15 | 27.93 | 26.00 | 26.54 | 24.83 | 35.20 |

|

| 104 |

+

| **Falcon-7B** | 24.23 | 26.03 | 25.66 | 24.24 | 24.10 | 28.77 |

|

| 105 |

+

| **ChatGLM2-6B** | 50.20 | 45.90 | 49.00 | 49.44 | 45.28 | 31.65 |

|

| 106 |

+

| **[Baichuan-7B]** | 42.80 | 42.30 | 44.02 | 36.34 | 34.44 | 32.48 |

|

| 107 |

+

| **[Baichuan2-7B-Base]** | 54.00 | 54.16 | 57.07 | 47.47 | 42.73 | 41.56 |

|

| 108 |

+

|

| 109 |

+

### 13B Model Results

|

| 110 |

+

|

| 111 |

+

| | **C-Eval** | **MMLU** | **CMMLU** | **Gaokao** | **AGIEval** | **BBH** |

|

| 112 |

+

|:---------------------------:|:----------:|:--------:|:---------:|:----------:|:-----------:|:-------:|

|

| 113 |

+

| | 5-shot | 5-shot | 5-shot | 5-shot | 5-shot | 3-shot |

|

| 114 |

+

| **GPT-4** | 68.40 | 83.93 | 70.33 | 66.15 | 63.27 | 75.12 |

|

| 115 |

+

| **GPT-3.5 Turbo** | 51.10 | 68.54 | 54.06 | 47.07 | 46.13 | 61.59 |

|

| 116 |

+

| **LLaMA-13B** | 28.50 | 46.30 | 31.15 | 28.23 | 28.22 | 37.89 |

|

| 117 |

+

| **LLaMA2-13B** | 35.80 | 55.09 | 37.99 | 30.83 | 32.29 | 46.98 |

|

| 118 |

+

| **Vicuna-13B** | 32.80 | 52.00 | 36.28 | 30.11 | 31.55 | 43.04 |

|

| 119 |

+

| **Chinese-Alpaca-Plus-13B** | 38.80 | 43.90 | 33.43 | 34.78 | 35.46 | 28.94 |

|

| 120 |

+

| **XVERSE-13B** | 53.70 | 55.21 | 58.44 | 44.69 | 42.54 | 38.06 |

|

| 121 |

+

| **[Baichuan-13B-Base]** | 52.40 | 51.60 | 55.30 | 49.69 | 43.20 | 43.01 |

|

| 122 |

+

| **[Baichuan2-13B-Base]** | 58.10 | 59.17 | 61.97 | 54.33 | 48.17 | 48.78 |

|

| 123 |

+

|

| 124 |

+

## 训练过程模型/Training Dynamics

|

| 125 |

+

|

| 126 |

+

除了训练了 2.6 万亿 Tokens 的 [Baichuan2-7B-Base](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base) 模型,我们还提供了在此之前的另外 11 个中间过程的模型(分别对应训练了约 0.2 ~ 2.4 万亿 Tokens)供社区研究使用

|

| 127 |

+

([训练过程checkpoint下载](https://huggingface.co/baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints))。下图给出了这些 checkpoints 在 C-Eval、MMLU、CMMLU 三个 benchmark 上的效果变化:

|

| 128 |

+

|

| 129 |

+

In addition to the [Baichuan2-7B-Base](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base) model trained on 2.6 trillion tokens, we also offer 11 additional intermediate-stage models for community research, corresponding to training on approximately 0.2 to 2.4 trillion tokens each ([Intermediate Checkpoints Download](https://huggingface.co/baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints)). The graph below shows the performance changes of these checkpoints on three benchmarks: C-Eval, MMLU, and CMMLU.

|

| 130 |

+

|

| 131 |

+

|

| 132 |

+

|

| 133 |

+

# <span id=""Community"">社区与生态/Community</span>

|

| 134 |

+

|

| 135 |

+

## Running Baichuan models on the Intel Core Ultra platform

|

| 136 |

+

|

| 137 |

+

使用酷睿™/至强® 可扩展处理器或配合锐炫™ GPU等进行部署[Baichuan2-7B-Chat],[Baichuan2-13B-Chat]模型,推荐使用 BigDL-LLM([CPU], [GPU])以发挥更好推理性能。

|

| 138 |

+

|

| 139 |

+

For detailed support information, see the [Chinese-language tutorial](https://github.com/intel-analytics/bigdl-llm-tutorial/tree/main/Chinese_Version), which includes notebook support and [methods for loading, optimizing, and saving models](https://github.com/intel-analytics/bigdl-llm-tutorial/blob/main/Chinese_Version/ch_3_AppDev_Basic/3_BasicApp.ipynb).

|

| 140 |

+

|

| 141 |

+

When deploying on Core™/Xeon® Scalable Processors or with an Arc™ GPU, BigDL-LLM ([CPU], [GPU]) is recommended for better inference performance.

|

| 142 |

+

|

| 143 |

+

# <span id=""Terms"">声明与协议/Terms and Conditions</span>

|

| 144 |

+

|

| 145 |

+

## 声明

|

| 146 |

+

|

| 147 |

+

我们在此声明,我们的开发团队并未基于 Baichuan 2 模型开发任何应用,无论是在 iOS、Android、网页或任何其他平台。我们强烈呼吁所有使用者,不要利用

|

| 148 |

+

Baichuan 2 模型进行任何危害国家社会安全或违法的活动。另外,我们也要求使用者不要将 Baichuan 2

|

| 149 |

+

模型用于未经适当安全审查和备案的互联网服务。我们希望所有的使用者都能遵守这个原则,确保科技的发展能在规范和合法的环境下进行。

|

| 150 |

+

|

| 151 |

+

我们已经尽我们所能,来确保模型训练过程中使用的数据的合规性。然而,尽管我们已经做出了巨大的努力,但由于模型和数据的复杂性,仍有可能存在一些无法预见的问题。因此,如果由于使用

|

| 152 |

+

Baichuan 2 开源模型而导致的任何问题,包括但不限于数据安全问题、公共舆论风险,或模型被误导、滥用、传播或不当利用所带来的任何风险和问题,我们将不承担任何责任。

|

| 153 |

+

|

| 154 |

+

We hereby declare that our team has not developed any applications based on Baichuan 2 models, whether on iOS, Android, the web, or any other platform. We strongly call on all users not to use Baichuan 2 models for any activities that harm national or social security or violate the law. Also, we ask users not to use Baichuan 2 models for Internet services that have not undergone appropriate security reviews and filings. We hope that all users can abide by this principle and ensure that the development of technology proceeds in a regulated and legal environment.

|

| 155 |

+

|

| 156 |

+

We have done our best to ensure the compliance of the data used in the model training process. However, despite our considerable efforts, there may still be some unforeseeable issues due to the complexity of the model and data. Therefore, if any problems arise due to the use of Baichuan 2 open-source models, including but not limited to data security issues, public opinion risks, or any risks and problems brought about by the model being misled, abused, spread or improperly exploited, we will not assume any responsibility.

|

| 157 |

+

|

| 158 |

+

## 协议

|

| 159 |

+

|

| 160 |

+

社区使用 Baichuan 2 模型需要遵循 [Apache 2.0](https://github.com/baichuan-inc/Baichuan2/blob/main/LICENSE) 和[《Baichuan 2 模型社区许可协议》](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base/resolve/main/Baichuan%202%E6%A8%A1%E5%9E%8B%E7%A4%BE%E5%8C%BA%E8%AE%B8%E5%8F%AF%E5%8D%8F%E8%AE%AE.pdf)。Baichuan 2 模型支持商业用途,如果您计划将 Baichuan 2 模型或其衍生品用于商业目的,请您确认您的主体符合以下情况:

|

| 161 |

+

1. 您或您的关联方的服务或产品的日均用户活跃量(DAU)低于100万。

|

| 162 |

+

2. 您或您的关联方不是软件服务提供商、云服务提供商。

|

| 163 |

+

3. 您或您的关联方不存在将授予您的商用许可,未经百川许可二次授权给其他第三方的可能。

|

| 164 |

+

|

| 165 |

+

在符合以上条件的前提下,您需要通过以下联系邮箱 opensource@baichuan-inc.com ,提交《Baichuan 2 模型社区许可协议》要求的申请材料。审核通过后,百川将特此授予您一个非排他性、全球性、不可转让、不可再许可、可撤销的商用版权许可。

|

| 166 |

+

|

| 167 |

+

The community usage of Baichuan 2 model requires adherence to [Apache 2.0](https://github.com/baichuan-inc/Baichuan2/blob/main/LICENSE) and [Community License for Baichuan2 Model](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base/resolve/main/Baichuan%202%E6%A8%A1%E5%9E%8B%E7%A4%BE%E5%8C%BA%E8%AE%B8%E5%8F%AF%E5%8D%8F%E8%AE%AE.pdf). The Baichuan 2 model supports commercial use. If you plan to use the Baichuan 2 model or its derivatives for commercial purposes, please ensure that your entity meets the following conditions:

|

| 168 |

+

|

| 169 |

+

1. The Daily Active Users (DAU) of your or your affiliate's service or product is less than 1 million.

|

| 170 |

+

2. Neither you nor your affiliates are software service providers or cloud service providers.

|

| 171 |

+

3. There is no possibility for you or your affiliates to grant the commercial license given to you, to reauthorize it to other third parties without Baichuan's permission.

|

| 172 |

+

|

| 173 |

+

Upon meeting the above conditions, you need to submit the application materials required by the Baichuan 2 Model Community License Agreement via the following contact email: opensource@baichuan-inc.com. Once approved, Baichuan will hereby grant you a non-exclusive, global, non-transferable, non-sublicensable, revocable commercial copyright license.

|

| 174 |

+

|

| 175 |

+

|

| 176 |

+

[GitHub]:https://github.com/baichuan-inc/Baichuan2

|

| 177 |

+

[Baichuan2]:https://github.com/baichuan-inc/Baichuan2

|

| 178 |

+

|

| 179 |

+

[Baichuan-7B]:https://huggingface.co/baichuan-inc/Baichuan-7B

|

| 180 |

+

[Baichuan2-7B-Base]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Base

|

| 181 |

+

[Baichuan2-7B-Chat]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat

|

| 182 |

+

[Baichuan2-7B-Chat-4bits]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat-4bits

|

| 183 |

+

[Baichuan-13B-Base]:https://huggingface.co/baichuan-inc/Baichuan-13B-Base

|

| 184 |

+

[Baichuan2-13B-Base]:https://huggingface.co/baichuan-inc/Baichuan2-13B-Base

|

| 185 |

+

[Baichuan2-13B-Chat]:https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat

|

| 186 |

+

[Baichuan2-13B-Chat-4bits]:https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat-4bits

|

| 187 |

+

|

| 188 |

+

[通用]:https://github.com/baichuan-inc/Baichuan2#%E9%80%9A%E7%94%A8%E9%A2%86%E5%9F%9F

|

| 189 |

+

[法律]:https://github.com/baichuan-inc/Baichuan2#%E6%B3%95%E5%BE%8B%E5%8C%BB%E7%96%97

|

| 190 |

+

[医疗]:https://github.com/baichuan-inc/Baichuan2#%E6%B3%95%E5%BE%8B%E5%8C%BB%E7%96%97

|

| 191 |

+

[数学]:https://github.com/baichuan-inc/Baichuan2#%E6%95%B0%E5%AD%A6%E4%BB%A3%E7%A0%81

|

| 192 |

+

[代码]:https://github.com/baichuan-inc/Baichuan2#%E6%95%B0%E5%AD%A6%E4%BB%A3%E7%A0%81

|

| 193 |

+

[多语言翻译]:https://github.com/baichuan-inc/Baichuan2#%E5%A4%9A%E8%AF%AD%E8%A8%80%E7%BF%BB%E8%AF%91

|

| 194 |

+

|

| 195 |

+

[《Baichuan 2 模型社区许可协议》]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Base/blob/main/Baichuan%202%E6%A8%A1%E5%9E%8B%E7%A4%BE%E5%8C%BA%E8%AE%B8%E5%8F%AF%E5%8D%8F%E8%AE%AE.pdf

|

| 196 |

+

|

| 197 |

+

[邮件申请]: mailto:opensource@baichuan-inc.com

|

| 198 |

+

[Email]: mailto:opensource@baichuan-inc.com

|

| 199 |

+

[opensource@baichuan-inc.com]: mailto:opensource@baichuan-inc.com

|

| 200 |

+

[训练过程checkpoint下载]: https://huggingface.co/baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints

|

| 201 |

+

[百川智能]: https://www.baichuan-ai.com

|

| 202 |

+

|

| 203 |

+

[CPU]: https://github.com/intel-analytics/BigDL/tree/main/python/llm/example/CPU/HF-Transformers-AutoModels/Model/baichuan2

|

| 204 |

+

[GPU]: https://github.com/intel-analytics/BigDL/tree/main/python/llm/example/GPU/HF-Transformers-AutoModels/Model/baichuan2","{""id"": ""baichuan-inc/Baichuan2-13B-Chat"", ""author"": ""baichuan-inc"", ""sha"": ""c8d877c7ca596d9aeff429d43bff06e288684f45"", ""last_modified"": ""2024-02-26 08:58:32+00:00"", ""created_at"": ""2023-08-29 02:30:01+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 7032, ""downloads_all_time"": null, ""likes"": 424, ""library_name"": ""transformers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""transformers"", ""pytorch"", ""baichuan"", ""text-generation"", ""custom_code"", ""en"", ""zh"", ""license:other"", ""autotrain_compatible"", ""text-generation-inference"", ""endpoints_compatible"", ""region:us""], ""pipeline_tag"": ""text-generation"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""language:\n- en\n- zh\nlicense: other\ntasks:\n- text-generation"", ""widget_data"": [{""text"": ""My name is Julien and I like to""}, {""text"": ""I like traveling by train because""}, {""text"": ""Paris is an amazing place to visit,""}, {""text"": ""Once upon a time,""}], ""model_index"": null, ""config"": {""architectures"": [""BaichuanForCausalLM""], ""auto_map"": {""AutoConfig"": ""configuration_baichuan.BaichuanConfig"", ""AutoModelForCausalLM"": ""modeling_baichuan.BaichuanForCausalLM""}, ""model_type"": ""baichuan"", ""tokenizer_config"": {""bos_token"": {""__type"": ""AddedToken"", ""content"": ""<s>"", ""lstrip"": false, ""normalized"": true, ""rstrip"": false, ""single_word"": true}, ""eos_token"": {""__type"": ""AddedToken"", ""content"": ""</s>"", ""lstrip"": false, ""normalized"": true, ""rstrip"": false, ""single_word"": true}, ""pad_token"": {""__type"": ""AddedToken"", ""content"": ""<unk>"", ""lstrip"": false, ""normalized"": true, ""rstrip"": false, ""single_word"": true}, ""unk_token"": {""__type"": ""AddedToken"", ""content"": ""<unk>"", 
""lstrip"": false, ""normalized"": true, ""rstrip"": false, ""single_word"": true}}}, ""transformers_info"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": ""modeling_baichuan.BaichuanForCausalLM"", ""pipeline_tag"": ""text-generation"", ""processor"": null}, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='Baichuan2 \u6a21\u578b\u793e\u533a\u8bb8\u53ef\u534f\u8bae.pdf', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='Community License for Baichuan2 Model.pdf', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='configuration_baichuan.py', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='generation_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='generation_utils.py', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='handler.py', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='modeling_baichuan.py', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='pytorch_model-00001-of-00003.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='pytorch_model-00002-of-00003.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='pytorch_model-00003-of-00003.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='pytorch_model.bin.index.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='quantizer.py', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='special_tokens_map.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenization_baichuan.py', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer.model', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer_config.json', size=None, blob_id=None, lfs=None)""], 
""spaces"": [""eduagarcia/open_pt_llm_leaderboard"", ""EmbeddedLLM/chat-template-generation"", ""Justinrune/LLaMA-Factory"", ""kenken999/fastapi_django_main_live"", ""officialhimanshu595/llama-factory"", ""Dify-AI/Baichuan2-13B-Chat"", ""li-qing/FIRE"", ""Zulelee/langchain-chatchat"", ""xu-song/kplug"", ""tianleliphoebe/visual-arena"", ""Ashmal/MobiLlama"", ""IS2Lab/S-Eval"", ""PegaMichael/Taiwan-LLaMa2-Copy"", ""silk-road/ChatHaruhi-Qwen118k-Extended"", ""tjtanaa/chat-template-generation"", ""CaiRou-Huang/TwLLM7B-v2.0-base"", ""blackwingedkite/gutalk"", ""cllatMTK/Breeze"", ""zivzhao/Baichuan2-13B-Chat"", ""blackwingedkite/alpaca2_clas"", ""silk-road/ChatHaruhi-BaiChuan2-13B"", ""Bofeee5675/FIRE"", ""evelyn-lo/evelyn"", ""yuantao-infini-ai/demo_test"", ""zjasper666/bf16_vs_fp8"", ""martinakaduc/melt"", ""cloneQ/internLMRAG"", ""hujin0929/LlamaIndex_RAG"", ""flyfive0315/internLlamaIndex"", ""sunxiaokang/llamaindex_RAG_web"", ""kai119/llama"", ""qxy826982153/LlamaIndexRAG"", ""ilemon/Internlm2.5LLaMAindexRAG"", ""msun415/Llamole""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2024-02-26 08:58:32+00:00"", ""cardData"": ""language:\n- en\n- zh\nlicense: other\ntasks:\n- text-generation"", ""transformersInfo"": {""auto_model"": ""AutoModelForCausalLM"", ""custom_class"": ""modeling_baichuan.BaichuanForCausalLM"", ""pipeline_tag"": ""text-generation"", ""processor"": null}, ""_id"": ""64ed5829453a4b4bef2814a2"", ""modelId"": ""baichuan-inc/Baichuan2-13B-Chat"", ""usedStorage"": 111175613599}",0,https://huggingface.co/zimyu/baichuan2-13b-zsee-lora,1,https://huggingface.co/yanxinlan/adapter,1,"https://huggingface.co/TheBloke/Baichuan2-13B-Chat-GPTQ, https://huggingface.co/second-state/Baichuan2-13B-Chat-GGUF, https://huggingface.co/mradermacher/Baichuan2-13B-Chat-GGUF, https://huggingface.co/mradermacher/Baichuan2-13B-Chat-i1-GGUF",4,,0,"Ashmal/MobiLlama, Bofeee5675/FIRE, EmbeddedLLM/chat-template-generation, 
IS2Lab/S-Eval, Justinrune/LLaMA-Factory, Zulelee/langchain-chatchat, blackwingedkite/gutalk, eduagarcia/open_pt_llm_leaderboard, evelyn-lo/evelyn, huggingface/InferenceSupport/discussions/new?title=baichuan-inc/Baichuan2-13B-Chat&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5Bbaichuan-inc%2FBaichuan2-13B-Chat%5D(%2Fbaichuan-inc%2FBaichuan2-13B-Chat)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, kenken999/fastapi_django_main_live, martinakaduc/melt, xu-song/kplug",13

|

| 205 |

+

zimyu/baichuan2-13b-zsee-lora,"---

|

| 206 |

+

base_model:

|

| 207 |

+

- baichuan-inc/Baichuan2-13B-Chat

|

| 208 |

+

tags:

|

| 209 |

+

- chemistry

|

| 210 |

+

---

|

| 211 |

+

This LoRA model was fine-tuned using the zeolite synthesis dataset ZSEE.

|

| 212 |

+

|

| 213 |

+

Usage:

|

| 214 |

+

```

|

| 215 |

+

import torch

|

| 216 |

+

from transformers import (

|

| 217 |

+

AutoConfig,

|

| 218 |

+

AutoTokenizer,

|

| 219 |

+

AutoModelForCausalLM,

|

| 220 |

+

GenerationConfig

|

| 221 |

+

)

|

| 222 |

+

from peft import PeftModel

|

| 223 |

+

device = torch.device(""cuda"" if torch.cuda.is_available() else ""cpu"")

|

| 224 |

+

model_path = 'baichuan-inc/Baichuan2-13B-Chat'

|

| 225 |

+

lora_path = 'zimyu/baichuan2-13b-zsee-lora'

|

| 226 |

+

|

| 227 |

+

config = AutoConfig.from_pretrained(model_path, trust_remote_code=True)

|

| 228 |

+

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

|

| 229 |

+

|

| 230 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 231 |

+

model_path,

|

| 232 |

+

config=config,

|

| 233 |

+

device_map=""auto"",

|

| 234 |

+

torch_dtype=torch.bfloat16,

|

| 235 |

+

trust_remote_code=True,

|

| 236 |

+

)

|

| 237 |

+

|

| 238 |

+

model = PeftModel.from_pretrained(

|

| 239 |

+

model,

|

| 240 |

+

lora_path,

|

| 241 |

+

)

|

| 242 |

+

model.eval()

|

| 243 |

+

|

| 244 |

+

system_prompt = ""<<SYS>>\nYou are a helpful, respectful and honest assistant. Always answer as helpfully as possible, while being safe. Your answers should not include any harmful, unethical, racist, sexist, toxic, dangerous, or illegal content. Please ensure that your responses are socially unbiased and positive in nature.\n\nIf a question does not make any sense, or is not factually coherent, explain why instead of answering something not correct. If you don't know the answer to a question, please don't share false information.\n<</SYS>>\n\n""

|

| 245 |

+

sintruct = ""{\""instruction\"": \""You are an expert in event argument extraction. Please extract event arguments and their roles from the input that conform to the schema definition, which already includes event trigger words. If an argument does not exist, return NAN or an empty dictionary. Please respond in the format of a JSON string.\"", \""schema\"": [{\""event_type\"": \""Add\"", \""trigger\"": [\""added\""], \""arguments\"": [\""container\"", \""material\"", \""temperature\""]}, {\""event_type\"": \""Stir\"", \""trigger\"": [\""stirred\""], \""arguments\"": [\""sample\"", \""revolution\"", \""temperature\"", \""duration\""]}], \""input\"": \""Subsequently , the pre-prepared silicalite-1 seed was added to the above mixture and stirred for another 1 h , and the quantity of seed equals to 7.0 wt% of the total SiO2 in the starting gel .\""}""

|

| 246 |

+

sintruct = '[INST] ' + system_prompt + sintruct + ' [/INST]'

|

| 247 |

+

|

| 248 |

+

input_ids = tokenizer.encode(sintruct, return_tensors=""pt"").to(device)

|

| 249 |

+

input_length = input_ids.size(1)

|

| 250 |

+

generation_output = model.generate(input_ids=input_ids, generation_config=GenerationConfig(max_new_tokens=256, return_dict_in_generate=True))

|

| 251 |

+

generation_output = generation_output.sequences[0]

|

| 252 |

+

generation_output = generation_output[input_length:]

|

| 253 |

+

output = tokenizer.decode(generation_output, skip_special_tokens=True)

|

| 254 |

+

|

| 255 |

+

print(output)

|

| 256 |

+

```
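The hand-escaped prompt string in the snippet above is easy to get wrong when the schema or input changes. A minimal sketch, assuming the same `[INST]` wrapping as the usage code, that builds the payload with `json.dumps` and post-processes a JSON reply. `build_prompt` and `parse_reply` are hypothetical helper names that are not part of this repository, the short instruction and input text are placeholders, and mapping the string `NAN` to a missing value is an assumption based on the instruction text:

```python
import json


def build_prompt(instruction, schema, text, system_prompt=''):
    # Serialize the request as JSON so quotes inside the text are escaped correctly.
    payload = json.dumps({'instruction': instruction, 'schema': schema, 'input': text})
    # Same wrapping as the usage snippet: '[INST] ' + system prompt + payload + ' [/INST]'.
    return '[INST] ' + system_prompt + payload + ' [/INST]'


def parse_reply(reply):
    # The model is asked to answer with a JSON string; map the 'NAN' marker to None.
    events = json.loads(reply)
    return {
        event_type: [
            {role: (None if value == 'NAN' else value) for role, value in item.items()}
            for item in items
        ]
        for event_type, items in events.items()
    }


# Placeholder schema and text, shortened from the example in the card.
schema = [
    {'event_type': 'Stir', 'trigger': ['stirred'],
     'arguments': ['sample', 'revolution', 'temperature', 'duration']},
]
prompt = build_prompt('Extract event arguments.', schema, 'The gel was stirred for 1 h .')
parsed = parse_reply(json.dumps({'Stir': [{'sample': 'NAN', 'duration': '1 h'}]}))
print(parsed)
```

In the real pipeline, `prompt` would replace the manually concatenated `sintruct` string, and `parse_reply` would be applied to the decoded `output`.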

|

| 257 |

+

|

| 258 |

+

Output:

|

| 259 |

+

```

|

| 260 |

+

{""Add"": [{""container"": ""NAN"", ""material"": [""above mixture"", ""pre-prepared silicalite-1 seed""], ""temperature"": ""NAN""}], ""Stir"": [{""sample"": ""NAN"", ""revolution"": ""NAN"", ""temperature"": ""NAN"", ""duration"": ""1 h""}]}

|

| 261 |

+

```","{""id"": ""zimyu/baichuan2-13b-zsee-lora"", ""author"": ""zimyu"", ""sha"": ""4cbdd08c1d7b96d8ee9183f594187e54541537bb"", ""last_modified"": ""2025-01-08 13:15:57+00:00"", ""created_at"": ""2025-01-08 03:56:17+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 0, ""downloads_all_time"": null, ""likes"": 0, ""library_name"": null, ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""safetensors"", ""chemistry"", ""base_model:baichuan-inc/Baichuan2-13B-Chat"", ""base_model:finetune:baichuan-inc/Baichuan2-13B-Chat"", ""region:us""], ""pipeline_tag"": null, ""mask_token"": null, ""trending_score"": null, ""card_data"": ""base_model:\n- baichuan-inc/Baichuan2-13B-Chat\ntags:\n- chemistry"", ""widget_data"": null, ""model_index"": null, ""config"": null, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='adapter_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='adapter_model.safetensors', size=None, blob_id=None, lfs=None)""], ""spaces"": [], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2025-01-08 13:15:57+00:00"", ""cardData"": ""base_model:\n- baichuan-inc/Baichuan2-13B-Chat\ntags:\n- chemistry"", ""transformersInfo"": null, ""_id"": ""677df7617b04df2925cafa2f"", ""modelId"": ""zimyu/baichuan2-13b-zsee-lora"", ""usedStorage"": 
892654240}",1,,0,,0,,0,,0,huggingface/InferenceSupport/discussions/new?title=zimyu/baichuan2-13b-zsee-lora&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5Bzimyu%2Fbaichuan2-13b-zsee-lora%5D(%2Fzimyu%2Fbaichuan2-13b-zsee-lora)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A,1

|

Baichuan2-7B-Chat_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv

ADDED

|

@@ -0,0 +1,235 @@

|

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

baichuan-inc/Baichuan2-7B-Chat,"---

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

- zh

|

| 6 |

+

license_name: baichuan2-community-license

|

| 7 |

+

license_link: https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat/blob/main/Community%20License%20for%20Baichuan2%20Model.pdf

|

| 8 |

+

tasks:

|

| 9 |

+

- text-generation

|

| 10 |

+

---

|

| 11 |

+

|

| 12 |

+

<!-- markdownlint-disable first-line-h1 -->

|

| 13 |

+

<!-- markdownlint-disable html -->

|

| 14 |

+

<div align=""center"">

|

| 15 |

+

<h1>

|

| 16 |

+

Baichuan 2

|

| 17 |

+

</h1>

|

| 18 |

+

</div>

|

| 19 |

+

|

| 20 |

+

<div align=""center"">

|

| 21 |

+

<a href=""https://github.com/baichuan-inc/Baichuan2"" target=""_blank"">🦉GitHub</a> | <a href=""https://github.com/baichuan-inc/Baichuan-7B/blob/main/media/wechat.jpeg?raw=true"" target=""_blank"">💬WeChat</a>

|

| 22 |

+

</div>

|

| 23 |

+

<div align=""center"">

|

| 24 |

+

百川API支持搜索增强和192K长窗口,新增百川搜索增强知识库、限时免费!<br>

|

| 25 |

+

🚀 <a href=""https://www.baichuan-ai.com/"" target=""_blank"">百川大模型在线对话平台</a> 已正式向公众开放 🎉

|

| 26 |

+

</div>

|

| 27 |

+

|

| 28 |

+

# 目录/Table of Contents

|

| 29 |

+

|

| 30 |

+

- [📖 模型介绍/Introduction](#Introduction)

|

| 31 |

+

- [⚙️ 快速开始/Quick Start](#Start)

|

| 32 |

+

- [📊 Benchmark评估/Benchmark Evaluation](#Benchmark)

|

| 33 |

+

- [👥 社区与生态/Community](#Community)

|

| 34 |

+

- [📜 声明与协议/Terms and Conditions](#Terms)

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

# <span id=""Introduction"">模型介绍/Introduction</span>

|

| 38 |

+

|

| 39 |

+

Baichuan 2 是[百川智能]推出的新一代开源大语言模型,采用 **2.6 万亿** Tokens 的高质量语料训练,在权威的中文和英文 benchmark

|

| 40 |

+

上均取得同尺寸最好的效果。本次发布包含有 7B、13B 的 Base 和 Chat 版本,并提供了 Chat 版本的 4bits

|

| 41 |

+

量化,所有版本不仅对学术研究完全开放,开发者也仅需[邮件申请]并获得官方商用许可后,即可以免费商用。具体发布版本和下载见下表:

|

| 42 |

+

|

| 43 |

+

Baichuan 2 is the new generation of large-scale open-source language models launched by [Baichuan Intelligence inc.](https://www.baichuan-ai.com/).

|

| 44 |

+

It is trained on a high-quality corpus of 2.6 trillion tokens and achieves the best performance among models of the same size on authoritative Chinese and English benchmarks.

|

| 45 |

+

This release includes 7B and 13B versions for both Base and Chat models, along with a 4bits quantized version for the Chat model.

|

| 46 |

+

All versions are fully open to academic research, and developers can also use them for free in commercial applications after obtaining an official commercial license through [email request](mailto:opensource@baichuan-inc.com).

|

| 47 |

+

The specific release versions and download links are listed in the table below:

|

| 48 |

+

|

| 49 |

+

| | Base Model | Chat Model | 4bits Quantized Chat Model |

|

| 50 |

+

|:---:|:--------------------:|:--------------------:|:--------------------------:|

|

| 51 |

+

| 7B | [Baichuan2-7B-Base](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base) | [Baichuan2-7B-Chat](https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat) | [Baichuan2-7B-Chat-4bits](https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat-4bits) |

|

| 52 |

+

| 13B | [Baichuan2-13B-Base](https://huggingface.co/baichuan-inc/Baichuan2-13B-Base) | [Baichuan2-13B-Chat](https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat) | [Baichuan2-13B-Chat-4bits](https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat-4bits) |

|

| 53 |

+

|

| 54 |

+

# <span id=""Start"">快速开始/Quick Start</span>

|

| 55 |

+

|

| 56 |

+

在Baichuan2系列模型中,我们为了加快推理速度使用了Pytorch2.0加入的新功能F.scaled_dot_product_attention,因此模型需要在Pytorch2.0环境下运行。

|

| 57 |

+

|

| 58 |

+

In the Baichuan 2 series models, we have utilized the new feature `F.scaled_dot_product_attention` introduced in PyTorch 2.0 to accelerate inference speed. Therefore, the model needs to be run in a PyTorch 2.0 environment.
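A minimal sketch of a pre-flight check for this requirement, assuming only that the major version number decides whether `F.scaled_dot_product_attention` is available. `supports_sdpa` is a hypothetical helper name, and the version strings are illustrative:

```python
def supports_sdpa(torch_version):
    # F.scaled_dot_product_attention was introduced in PyTorch 2.0,
    # so only the major version number matters here.
    major = int(torch_version.split('.')[0])
    return major >= 2


# In a real environment, pass torch.__version__ instead of a literal.
for version in ('1.13.1', '2.0.1+cu118'):
    print(version, supports_sdpa(version))
```

Running this check before loading the model gives a clearer failure than an attribute error raised from inside the remote modeling code.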

|

| 59 |

+

|

| 60 |

+

|

| 61 |

+

```python

|

| 62 |

+

import torch

|

| 63 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 64 |

+

from transformers.generation.utils import GenerationConfig

|

| 65 |

+

tokenizer = AutoTokenizer.from_pretrained(""baichuan-inc/Baichuan2-7B-Chat"", use_fast=False, trust_remote_code=True)

|

| 66 |

+

model = AutoModelForCausalLM.from_pretrained(""baichuan-inc/Baichuan2-7B-Chat"", device_map=""auto"", torch_dtype=torch.bfloat16, trust_remote_code=True)

|

| 67 |

+

model.generation_config = GenerationConfig.from_pretrained(""baichuan-inc/Baichuan2-7B-Chat"")

|

| 68 |

+

messages = []

|

| 69 |

+

messages.append({""role"": ""user"", ""content"": ""解释一下“温故而知新”""})

|

| 70 |

+

response = model.chat(tokenizer, messages)

|

| 71 |

+

print(response)

|

| 72 |

+

# ""温故而知新""是一句中国古代的成语,出自《论语·为政》篇。这句话的意思是:通过回顾过去,我们可以发现新的知识和理解。换句话说,学习历史和经验可以让我们更好地理解现在和未来。

|

| 73 |

+

|

| 74 |

+

# 这句话鼓励我们在学习和生活中不断地回顾和反思过去的经验,从而获得新的启示和成长。通过重温旧的知识和经历,我们可以发现新的观点和理解,从而更好地应对不断变化的世界和挑战。

|

| 75 |

+

```
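The quick-start call above sends a single user message. A minimal sketch of keeping a multi-turn history in the same `messages` list format, assuming the chat method also accepts turns with the role `assistant` (an assumption, since the card only shows the `user` role). `chat_turn` and `echo_model` are hypothetical names; `echo_model` stands in for `lambda msgs: model.chat(tokenizer, msgs)` so the sketch runs without model weights:

```python
def chat_turn(chat_fn, messages, user_text):
    # Append the user turn, ask for a reply, then record the reply so the
    # next call sees the whole conversation.
    messages.append({'role': 'user', 'content': user_text})
    reply = chat_fn(messages)
    messages.append({'role': 'assistant', 'content': reply})
    return reply


def echo_model(msgs):
    # Stand-in model: repeats the latest user message.
    return 'echo: ' + msgs[-1]['content']


history = []
chat_turn(echo_model, history, 'hello')
chat_turn(echo_model, history, 'and again')
print(len(history))
```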

|

| 76 |

+

|

| 77 |

+

# <span id=""Benchmark"">Benchmark 结果/Benchmark Evaluation</span>

|

| 78 |

+

|

| 79 |

+

我们在[通用]、[法律]、[医疗]、[数学]、[代码]和[多语言翻译]六个领域的中英文权威数据集上对模型进行了广泛测试,更多详细测评结果可查看[GitHub]。

|

| 80 |

+

|

| 81 |

+

We have extensively tested the model on authoritative Chinese-English datasets across six domains: [General](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#general-domain), [Legal](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#law-and-medicine), [Medical](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#law-and-medicine), [Mathematics](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#mathematics-and-code), [Code](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#mathematics-and-code), and [Multilingual Translation](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#multilingual-translation). For more detailed evaluation results, please refer to [GitHub](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md).

|

| 82 |

+

|

| 83 |

+

### 7B Model Results

|

| 84 |

+

|

| 85 |

+

| | **C-Eval** | **MMLU** | **CMMLU** | **Gaokao** | **AGIEval** | **BBH** |

|

| 86 |

+

|:-----------------------:|:----------:|:--------:|:---------:|:----------:|:-----------:|:-------:|

|

| 87 |

+

| | 5-shot | 5-shot | 5-shot | 5-shot | 5-shot | 3-shot |

|

| 88 |

+

| **GPT-4** | 68.40 | 83.93 | 70.33 | 66.15 | 63.27 | 75.12 |

|

| 89 |

+

| **GPT-3.5 Turbo** | 51.10 | 68.54 | 54.06 | 47.07 | 46.13 | 61.59 |

|

| 90 |

+

| **LLaMA-7B** | 27.10 | 35.10 | 26.75 | 27.81 | 28.17 | 32.38 |

|

| 91 |

+

| **LLaMA2-7B** | 28.90 | 45.73 | 31.38 | 25.97 | 26.53 | 39.16 |

|

| 92 |

+

| **MPT-7B** | 27.15 | 27.93 | 26.00 | 26.54 | 24.83 | 35.20 |

|

| 93 |

+

| **Falcon-7B** | 24.23 | 26.03 | 25.66 | 24.24 | 24.10 | 28.77 |

|

| 94 |

+

| **ChatGLM2-6B** | 50.20 | 45.90 | 49.00 | 49.44 | 45.28 | 31.65 |

|

| 95 |

+

| **[Baichuan-7B]** | 42.80 | 42.30 | 44.02 | 36.34 | 34.44 | 32.48 |

|

| 96 |

+

| **[Baichuan2-7B-Base]** | 54.00 | 54.16 | 57.07 | 47.47 | 42.73 | 41.56 |
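To read the last two rows of the table side by side, a short sketch that computes the absolute gain of Baichuan2-7B-Base over Baichuan-7B on each benchmark. The scores are copied from the table; the variable names are illustrative:

```python
BENCHMARKS = ['C-Eval', 'MMLU', 'CMMLU', 'Gaokao', 'AGIEval', 'BBH']
# Scores copied from the Baichuan-7B and Baichuan2-7B-Base rows of the table above.
baichuan_7b = [42.80, 42.30, 44.02, 36.34, 34.44, 32.48]
baichuan2_7b_base = [54.00, 54.16, 57.07, 47.47, 42.73, 41.56]

# Absolute gain in points per benchmark, rounded to the table's two decimals.
gains = {
    name: round(new - old, 2)
    for name, old, new in zip(BENCHMARKS, baichuan_7b, baichuan2_7b_base)
}
for name, gain in gains.items():
    print(f'{name}: +{gain:.2f}')
```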

|

| 97 |

+

|

| 98 |

+

### 13B Model Results

|

| 99 |

+

|

| 100 |

+

| | **C-Eval** | **MMLU** | **CMMLU** | **Gaokao** | **AGIEval** | **BBH** |

|

| 101 |

+

|:---------------------------:|:----------:|:--------:|:---------:|:----------:|:-----------:|:-------:|

|

| 102 |

+

| | 5-shot | 5-shot | 5-shot | 5-shot | 5-shot | 3-shot |

|

| 103 |

+

| **GPT-4** | 68.40 | 83.93 | 70.33 | 66.15 | 63.27 | 75.12 |

|

| 104 |

+

| **GPT-3.5 Turbo** | 51.10 | 68.54 | 54.06 | 47.07 | 46.13 | 61.59 |

|

| 105 |

+

| **LLaMA-13B** | 28.50 | 46.30 | 31.15 | 28.23 | 28.22 | 37.89 |

|

| 106 |

+

| **LLaMA2-13B** | 35.80 | 55.09 | 37.99 | 30.83 | 32.29 | 46.98 |

|

| 107 |

+

| **Vicuna-13B** | 32.80 | 52.00 | 36.28 | 30.11 | 31.55 | 43.04 |

|

| 108 |

+

| **Chinese-Alpaca-Plus-13B** | 38.80 | 43.90 | 33.43 | 34.78 | 35.46 | 28.94 |

|

| 109 |

+

| **XVERSE-13B** | 53.70 | 55.21 | 58.44 | 44.69 | 42.54 | 38.06 |

|

| 110 |

+

| **[Baichuan-13B-Base]** | 52.40 | 51.60 | 55.30 | 49.69 | 43.20 | 43.01 |

|

| 111 |

+

| **[Baichuan2-13B-Base]** | 58.10 | 59.17 | 61.97 | 54.33 | 48.17 | 48.78 |

|

| 112 |

+

|

| 113 |

+

## 训练过程模型/Training Dynamics

|

| 114 |

+

|

| 115 |

+

除了训练了 2.6 万亿 Tokens 的 [Baichuan2-7B-Base](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base) 模型,我们还提供了在此之前的另外 11 个中间过程的模型(分别对应训练了约 0.2 ~ 2.4 万亿 Tokens)供社区研究使用

|

| 116 |

+

([训练过程checkpoint下载](https://huggingface.co/baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints))。下图给出了这些 checkpoints 在 C-Eval、MMLU、CMMLU 三个 benchmark 上的效果变化:

|

| 117 |

+

|

| 118 |

+

In addition to the [Baichuan2-7B-Base](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base) model trained on 2.6 trillion tokens, we also offer 11 additional intermediate-stage models for community research, corresponding to training on approximately 0.2 to 2.4 trillion tokens each ([Intermediate Checkpoints Download](https://huggingface.co/baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints)). The graph below shows the performance changes of these checkpoints on three benchmarks: C-Eval, MMLU, and CMMLU.

|

| 119 |

+

|

| 120 |

+

|

| 121 |

+

|

| 122 |

+

# <span id=""Community"">社区与生态/Community</span>

|

| 123 |

+

|

| 124 |

+

## Intel 酷睿 Ultra 平台运行百川大模型

|

| 125 |

+

|

| 126 |

+

使用酷睿™/至强® 可扩展处理器或配合锐炫™ GPU等进行部署[Baichuan2-7B-Chat],[Baichuan2-13B-Chat]模型,推荐使用 BigDL-LLM([CPU], [GPU])以发挥更好推理性能。

|

| 127 |

+

|

| 128 |

+

详细支持信息可参考[中文操作手册](https://github.com/intel-analytics/bigdl-llm-tutorial/tree/main/Chinese_Version),包括用notebook支持,[加载,优化,保存方法](https://github.com/intel-analytics/bigdl-llm-tutorial/blob/main/Chinese_Version/ch_3_AppDev_Basic/3_BasicApp.ipynb)等。

|

| 129 |

+

|

| 130 |

+

When deploying the [Baichuan2-7B-Chat] and [Baichuan2-13B-Chat] models on Core™/Xeon® Scalable Processors or with an Arc™ GPU, BigDL-LLM ([CPU], [GPU]) is recommended for better inference performance.

|

| 131 |

+

|

| 132 |

+

# <span id=""Terms"">声明与协议/Terms and Conditions</span>

|

| 133 |

+

|

| 134 |

+

## 声明

|

| 135 |

+

|

| 136 |

+

我们在此声明,我们的开发团队并未基于 Baichuan 2 模型开发任何应用,无论是在 iOS、Android、网页或任何其他平台。我们强烈呼吁所有使用者,不要利用

|

| 137 |

+

Baichuan 2 模型进行任何危害国家社会安全或违法的活动。另外,我们也要求使用者不要将 Baichuan 2

|

| 138 |

+

模型用于未经适当安全审查和备案的互联网服务。我们希望所有的使用者都能遵守这个原则,确保科技的发展能在规范和合法的环境下进行。

|

| 139 |

+

|

| 140 |

+

我们已经尽我们所能,来确保模型训练过程中使用的数据的合规性。然而,尽管我们已经做出了巨大的努力,但由于模型和数据的复杂性,仍有可能存在一些无法预见的问题。因此,如果由于使用

|

| 141 |

+

Baichuan 2 开源模型而导致的任何问题,包括但不限于数据安全问题、公共舆论风险,或模型被误导、滥用、传播或不当利用所带来的任何风险和问题,我们将不承担任何责任。

|

| 142 |

+

|

| 143 |

+

We hereby declare that our team has not developed any applications based on Baichuan 2 models, whether on iOS, Android, the web, or any other platform. We strongly urge all users not to use Baichuan 2 models for any activities that harm national or social security or violate the law. We also ask users not to use Baichuan 2 models for Internet services that have not undergone appropriate security reviews and filings. We hope that all users will abide by this principle and ensure that technology develops in a regulated and legal environment.

|

| 144 |

+

|

| 145 |

+

We have done our best to ensure the compliance of the data used in the model training process. However, despite our considerable efforts, there may still be some unforeseeable issues due to the complexity of the model and data. Therefore, if any problems arise due to the use of Baichuan 2 open-source models, including but not limited to data security issues, public opinion risks, or any risks and problems brought about by the model being misled, abused, spread or improperly exploited, we will not assume any responsibility.

|

| 146 |

+

|

| 147 |

+

## 协议

|

| 148 |

+

|

| 149 |

+

社区使用 Baichuan 2 模型需要遵循 [Apache 2.0](https://github.com/baichuan-inc/Baichuan2/blob/main/LICENSE) 和[《Baichuan 2 模型社区许可协议》](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base/resolve/main/Baichuan%202%E6%A8%A1%E5%9E%8B%E7%A4%BE%E5%8C%BA%E8%AE%B8%E5%8F%AF%E5%8D%8F%E8%AE%AE.pdf)。Baichuan 2 模型支持商业用途,如果您计划将 Baichuan 2 模型或其衍生品用于商业目的,请您确认您的主体符合以下情况:

|

| 150 |

+

1. 您或您的关联方的服务或产品的日均用户活跃量(DAU)低于100万。

|

| 151 |

+

2. 您或您的关联方不是软件服务提供商、云服务提供商。

|

| 152 |

+

3. 您或您的关联方不存在将授予您的商用许可,未经百川许可二次授权给其他第三方的可能。

|

| 153 |

+

|

| 154 |

+

在符合以上条件的前提下,您需要通过以下联系邮箱 opensource@baichuan-inc.com ,提交《Baichuan 2 模型社区许可协议》要求的申请材料。审核通过后,百川将特此授予您一个非排他性、全球性、不可转让、不可再许可、可撤销的商用版权许可。

|

| 155 |

+

|

| 156 |

+

The community usage of Baichuan 2 model requires adherence to [Apache 2.0](https://github.com/baichuan-inc/Baichuan2/blob/main/LICENSE) and [Community License for Baichuan2 Model](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base/resolve/main/Baichuan%202%E6%A8%A1%E5%9E%8B%E7%A4%BE%E5%8C%BA%E8%AE%B8%E5%8F%AF%E5%8D%8F%E8%AE%AE.pdf). The Baichuan 2 model supports commercial use. If you plan to use the Baichuan 2 model or its derivatives for commercial purposes, please ensure that your entity meets the following conditions:

|

| 157 |

+

|

| 158 |

+

1. The Daily Active Users (DAU) of your or your affiliate's service or product is less than 1 million.

|

| 159 |

+

2. Neither you nor your affiliates are software service providers or cloud service providers.

|

| 160 |

+

3. There is no possibility that you or your affiliates will sublicense the commercial license granted to you to any third party without Baichuan's permission.

|

| 161 |

+

|

| 162 |

+

Upon meeting the above conditions, you need to submit the application materials required by the Baichuan 2 Model Community License Agreement via the following contact email: opensource@baichuan-inc.com. Once approved, Baichuan will hereby grant you a non-exclusive, global, non-transferable, non-sublicensable, revocable commercial copyright license.

|

| 163 |

+

|

| 164 |

+

|

| 165 |

+

[GitHub]:https://github.com/baichuan-inc/Baichuan2

|

| 166 |

+

[Baichuan2]:https://github.com/baichuan-inc/Baichuan2

|

| 167 |

+

|

| 168 |

+

[Baichuan-7B]:https://huggingface.co/baichuan-inc/Baichuan-7B

|

| 169 |

+

[Baichuan2-7B-Base]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Base

|

| 170 |

+

[Baichuan2-7B-Chat]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat

|

| 171 |

+

[Baichuan2-7B-Chat-4bits]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat-4bits

|

| 172 |

+

[Baichuan-13B-Base]:https://huggingface.co/baichuan-inc/Baichuan-13B-Base

|

| 173 |

+

[Baichuan2-13B-Base]:https://huggingface.co/baichuan-inc/Baichuan2-13B-Base

|

| 174 |

+

[Baichuan2-13B-Chat]:https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat

|

| 175 |

+

[Baichuan2-13B-Chat-4bits]:https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat-4bits

|

| 176 |

+

|

| 177 |

+

[通用]:https://github.com/baichuan-inc/Baichuan2#%E9%80%9A%E7%94%A8%E9%A2%86%E5%9F%9F

|

| 178 |

+

[法律]:https://github.com/baichuan-inc/Baichuan2#%E6%B3%95%E5%BE%8B%E5%8C%BB%E7%96%97

|

| 179 |

+

[医疗]:https://github.com/baichuan-inc/Baichuan2#%E6%B3%95%E5%BE%8B%E5%8C%BB%E7%96%97

|

| 180 |

+

[数学]:https://github.com/baichuan-inc/Baichuan2#%E6%95%B0%E5%AD%A6%E4%BB%A3%E7%A0%81

|

| 181 |

+

[代码]:https://github.com/baichuan-inc/Baichuan2#%E6%95%B0%E5%AD%A6%E4%BB%A3%E7%A0%81

|

| 182 |

+

[多语言翻译]:https://github.com/baichuan-inc/Baichuan2#%E5%A4%9A%E8%AF%AD%E8%A8%80%E7%BF%BB%E8%AF%91

|

| 183 |

+

|

| 184 |

+

[《Baichuan 2 模型社区许可协议》]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Base/blob/main/Baichuan%202%E6%A8%A1%E5%9E%8B%E7%A4%BE%E5%8C%BA%E8%AE%B8%E5%8F%AF%E5%8D%8F%E8%AE%AE.pdf

|

| 185 |

+

|

| 186 |

+

[邮件申请]: mailto:opensource@baichuan-inc.com

|

| 187 |

+

[Email]: mailto:opensource@baichuan-inc.com

|

| 188 |

+

[opensource@baichuan-inc.com]: mailto:opensource@baichuan-inc.com

|

| 189 |

+

[训练过程checkpoint下载]: https://huggingface.co/baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints

|

| 190 |

+

[百川智能]: https://www.baichuan-ai.com

|

| 191 |

+

|

| 192 |

+

[CPU]: https://github.com/intel-analytics/BigDL/tree/main/python/llm/example/CPU/HF-Transformers-AutoModels/Model/baichuan2

|

| 193 |

+

[GPU]: https://github.com/intel-analytics/BigDL/tree/main/python/llm/example/GPU/HF-Transformers-AutoModels/Model/baichuan2

|

| 194 |

+