Add files using upload-large-folder tool

This view is limited to 50 files because it contains too many changes.

- 4x-Ultrasharp_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +2 -0

- Annotators_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +5 -0

- Arcane-Diffusion_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +59 -0

- BLOOMChat-176B-v1_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +479 -0

- Baichuan-7B_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +230 -0

- BioMistral-7B_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +0 -0

- CogView4-6B_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +330 -0

- ControlNet-modules-safetensors_finetunes_20250424_193500.csv_finetunes_20250424_193500.csv +4 -0

- CrisperWhisper_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +225 -0

- Cyberpunk-Anime-Diffusion_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +122 -0

- DeepSeek-Coder-V2-Instruct_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +286 -0

- DucHaitenAIart_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +70 -0

- EXAONE-Deep-32B_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- FalconLite_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +77 -0

- Flux-uncensored_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +116 -0

- Future-Diffusion_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +47 -0

- Hyper-SD_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +517 -0

- Inkpunk-Diffusion_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +23 -0

- Lag-Llama_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +100 -0

- Leffa_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +81 -0

- Llama-2-7B-GGUF_finetunes_20250426_221535.csv_finetunes_20250426_221535.csv +406 -0

- Llama-3-8B-Instruct-Gradient-1048k_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +161 -0

- MagicPrompt-Stable-Diffusion_finetunes_20250425_125929.csv_finetunes_20250425_125929.csv +31 -0

- Molmo-7B-O-0924_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +218 -0

- MythoMax-L2-13b_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +284 -0

- NuExtract_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +653 -0

- NuminaMath-7B-TIR_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +0 -0

- OOTDiffusion_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +37 -0

- PhotoMaker_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +2 -0

- Qwen-72B_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +808 -0

- Qwen2-72B-Instruct_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +0 -0

- SillyTavern-Presets_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +143 -0

- SmolVLM-256M-Instruct_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- Starling-LM-7B-alpha_finetunes_20250425_143346.csv_finetunes_20250425_143346.csv +345 -0

- Tifa-Deepsex-14b-CoT_finetunes_20250426_212347.csv_finetunes_20250426_212347.csv +290 -0

- VLM_WebSight_finetuned_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +127 -0

- bark_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +0 -0

- bart-large-cnn_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +0 -0

- controlnet-sd21_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv +79 -0

- distil-large-v3_finetunes_20250426_121513.csv_finetunes_20250426_121513.csv +0 -0

- distilgpt2_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +0 -0

- fashion-clip_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +207 -0

- flux-controlnet-collections_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +86 -0

- gte-multilingual-base_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- idefics2-8b_finetunes_20250425_165642.csv_finetunes_20250425_165642.csv +0 -0

- ip-composition-adapter_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +71 -0

- jina-clip-v2_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

- llama-2-ko-7b_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv +640 -0

- m3e-base_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv +236 -0

- miqu-1-70b-sf_finetunes_20250426_171734.csv_finetunes_20250426_171734.csv +0 -0

4x-Ultrasharp_finetunes_20250427_003734.csv_finetunes_20250427_003734.csv

ADDED

|

@@ -0,0 +1,2 @@

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

lokCX/4x-Ultrasharp,N/A,"{""id"": ""lokCX/4x-Ultrasharp"", ""author"": ""lokCX"", ""sha"": ""1856559b50de25116a7c07261177dd128f1f5664"", ""last_modified"": ""2023-04-07 12:32:49+00:00"", ""created_at"": ""2023-04-07 12:15:33+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 0, ""downloads_all_time"": null, ""likes"": 158, ""library_name"": null, ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""region:us""], ""pipeline_tag"": null, ""mask_token"": null, ""trending_score"": null, ""card_data"": null, ""widget_data"": null, ""model_index"": null, ""config"": null, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='4x-UltraSharp.pth', size=None, blob_id=None, lfs=None)""], ""spaces"": [""eienmojiki/AnyDiffuse""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2023-04-07 12:32:49+00:00"", ""cardData"": null, ""transformersInfo"": null, ""_id"": ""6430096543a53c86b3fcb2a0"", ""modelId"": ""lokCX/4x-Ultrasharp"", ""usedStorage"": 66961958}",0,,0,,0,,0,,0,"eienmojiki/AnyDiffuse, huggingface/InferenceSupport/discussions/new?title=lokCX/4x-Ultrasharp&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5BlokCX%2F4x-Ultrasharp%5D(%2FlokCX%2F4x-Ultrasharp)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A",2

|
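The two-line diff above adds a header row and a single record whose `metadata` field is a JSON object stored inside a quoted CSV field, which is why every inner quote appears doubled (`""`). The following is a minimal sketch of reading such a record with Python's standard library, assuming the file uses ordinary CSV quoting. The helper name `load_rows` and the shortened sample row are illustrative, not part of the commit.

```python
import csv
import io
import json

# Shortened, illustrative sample in the same shape as the added file:
# a header row plus one record with a JSON object in the `metadata` column.
SAMPLE = (
    "model_id,card,metadata,depth,children,children_count,adapters,adapters_count,"
    "quantized,quantized_count,merges,merges_count,spaces,spaces_count\n"
    'lokCX/4x-Ultrasharp,N/A,"{""id"": ""lokCX/4x-Ultrasharp"", ""likes"": 158, '
    '""gated"": false, ""library_name"": null}",0,,0,,0,,0,,0,'
    '"eienmojiki/AnyDiffuse",1\n'
)


def load_rows(text):
    """Parse CSV text and decode the JSON `metadata` column of each row."""
    rows = []
    for row in csv.DictReader(io.StringIO(text)):
        # The csv module un-escapes the doubled quotes, leaving plain JSON text.
        row["metadata"] = json.loads(row["metadata"])
        rows.append(row)
    return rows


rows = load_rows(SAMPLE)
print(rows[0]["model_id"], rows[0]["metadata"]["likes"])
```

Records with a very long `card` or `metadata` field may exceed the `csv` module's default field size limit; `csv.field_size_limit` can raise it if needed.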

Annotators_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv

ADDED

|

@@ -0,0 +1,5 @@

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

lllyasviel/Annotators,"---

|

| 3 |

+

license: other

|

| 4 |

+

---

|

| 5 |

+

","{""id"": ""lllyasviel/Annotators"", ""author"": ""lllyasviel"", ""sha"": ""982e7edaec38759d914a963c48c4726685de7d96"", ""last_modified"": ""2023-08-27 01:01:06+00:00"", ""created_at"": ""2023-03-14 15:30:15+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 0, ""downloads_all_time"": null, ""likes"": 340, ""library_name"": null, ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""license:other"", ""region:us""], ""pipeline_tag"": null, ""mask_token"": null, ""trending_score"": null, ""card_data"": ""license: other"", ""widget_data"": null, ""model_index"": null, ""config"": null, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='150_16_swin_l_oneformer_coco_100ep.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='250_16_swin_l_oneformer_ade20k_160k.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='ControlNetHED.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='ControlNetLama.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='RealESRGAN_x4plus.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='ZoeD_M12_N.pt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='body_pose_model.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='clip_g.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='dpt_hybrid-midas-501f0c75.pt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='erika.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='facenet.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='hand_pose_model.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='lama.ckpt', size=None, blob_id=None, lfs=None)"", 
""RepoSibling(rfilename='latest_net_G.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='mlsd_large_512_fp32.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='netG.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='network-bsds500.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='res101.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='scannet.pt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='sk_model.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='sk_model2.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='table5_pidinet.pth', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='upernet_global_small.pth', size=None, blob_id=None, lfs=None)""], ""spaces"": [""hysts/ControlNet-v1-1"", ""okaris/omni-zero"", ""Anonymous-sub/Rerender"", ""TencentARC/T2I-Adapter-SDXL"", ""roubaofeipi/UltraPixel-demo"", ""linoyts/scribble-sdxl-flash"", ""shgao/EditAnything"", ""Shakker-Labs/FLUX.1-dev-ControlNet-Union-Pro"", ""KBlueLeaf/Sketch-Gen"", ""shi-labs/Prompt-Free-Diffusion"", ""Koi953215/NaRCan_demo"", ""ozgurkara/RAVE"", ""limingcv/ControlNet-Plus-Plus"", ""linoyts/scribble-sdxl"", ""openfree/ultpixgen"", ""fffiloni/AniDoc"", ""broyang/anime-ai"", ""feishen29/IMAGDressing-v1"", ""Shakker-Labs/FLUX.1-dev-ControlNet-Union-Pro-2.0"", ""fffiloni/MangaNinja-demo"", ""guardiancc/flux-advanced-explorer"", ""Kwai-Kolors/Kolors-Controlnet_and_IPA"", ""mikonvergence/mask-and-sketch"", ""broyang/interior-ai-designer"", ""vilarin/Diffutoon-ExVideo"", ""Deadmon/union-sdxl"", ""okaris/omni-zero-couples"", ""Kwai-Kolors/Kolors-Controlnet"", ""Sebastiankay/controlnet-and-ip-adapter-plus"", ""Superlang/ImageProcessor"", ""Robert001/UniControl-Demo"", ""dreamer-technoland/object-to-object-replace"", ""SakanaAI/Evo-Nishikie"", ""coreml-community/ControlNet-v1-1-Annotators-cpu"", ""Deadmon/scribble-pony-sdxl"", ""fantos/flxcontrol"", 
""rupeshs/fastsdcpu"", ""Aatricks/LightDiffusion-Next"", ""OmPrakashSingh1704/ADVERTISE"", ""abhishek/sketch-to-image"", ""ameerazam08/Stable-Cascade-Super-Resolution"", ""fantos/EveryText"", ""wondervictor/ControlAR"", ""Make-A-Protagonist/Make-A-Protagonist-inference"", ""DMTuit/Diffusion-API"", ""jiuface/flux-controlnet-inpainting"", ""nowsyn/StyleShot"", ""stazizov/XFluxSpace"", ""PKUWilliamYang/FRESCO"", ""kevinwang676/ControlNet-with-GPT-4"", ""ilanser/Sketch-Stable-Diffusion"", ""egg22314/object-to-object-replace"", ""dreamer-technoland/object-to-object-replace-1"", ""HighCWu/control-lora-v3"", ""smartfeed/image2image"", ""willgibs/ControlNet-v1-1"", ""fantos/kolcontrl"", ""cbensimon/omni-zero-couples"", ""nuwandaa/StyleShot"", ""atatakun/ControlNet-v1-1-Annotators-cpu"", ""mikelalda/Drawing-Diffusion"", ""TheNetherWatcher/Vid2Vid-using-Text-prompt"", ""kevinwang676/Diffutoon"", ""K00B404/AnimateDiff-Lightning"", ""Gainward777/Sketcher"", ""dezzman/diffusion_models"", ""RobLi/ControlNet-v1-1"", ""barani/ControlNet"", ""cyberoleg/b2719240e190e2a649150d94db50be82838efeb0"", ""YEsssssssssssir/AIgen"", ""adorp/ControlNet-v1-1-duplicate"", ""flatindo/generate2"", ""flatindo/Image-Diffusion-WebUI"", ""kottu/stabble_diffusion_sketch"", ""killah-t-cell/EditAnything"", ""lloki/scribble-sdxl-flash"", ""itsVilen/trail"", ""Sebastiankay/controlnet-preprocessors-only"", ""terrapretapermaculture/ControlNet-v1-1-B"", ""smartfeed/turbo_fm"", ""jiuface/flux-controlnet-inpainting-large"", ""jordandotzel/ControlNet-v1-1"", ""svjack/AniDoc"", ""tianaco/tianacomakethedot"", ""anushriiyer/IMAGDressing-v1"", ""tejani/Another"", ""otmanheddouch/house_design"", ""VCHENZIJIA/Stable-Diffusion-ControlNet-WebUI"", ""bastibond/ControlNet-v1-1"", ""licasexko/ControlNet-v1-1"", ""serhatderya/ControlNet-v1-1-scribble"", ""imjunaidafzal/ControlNet-v1-1"", ""xawery/ControlNet-v1-1"", ""rishavraina/ControlNet-v1-1"", ""dddddahling/ControlNet-v1-1"", ""xing2024/ControlNet-v1-1"", 
""lollo21/will-video-editor"", ""wesbuskirk/create"", ""777Andy777/ControlNet-v1-1"", ""wimvanhenden/ControlNet-v1-1""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2023-08-27 01:01:06+00:00"", ""cardData"": ""license: other"", ""transformersInfo"": null, ""_id"": ""641093072a593afb553ee805"", ""modelId"": ""lllyasviel/Annotators"", ""usedStorage"": 12718642304}",0,,0,,0,,0,,0,"KBlueLeaf/Sketch-Gen, Koi953215/NaRCan_demo, Shakker-Labs/FLUX.1-dev-ControlNet-Union-Pro, broyang/anime-ai, huggingface/InferenceSupport/discussions/new?title=lllyasviel/Annotators&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5Blllyasviel%2FAnnotators%5D(%2Flllyasviel%2FAnnotators)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, hysts/ControlNet-v1-1, limingcv/ControlNet-Plus-Plus, linoyts/scribble-sdxl, linoyts/scribble-sdxl-flash, okaris/omni-zero, openfree/ultpixgen, ozgurkara/RAVE, roubaofeipi/UltraPixel-demo",13

|
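One entry in the `spaces` column of the record above is not a Space but a percent-encoded link to an Inference Providers support discussion. Commas inside that link are encoded as `%2C`, so literal commas only separate entries. The following is a small sketch, using only `urllib.parse`, of splitting the column and decoding the link. The helper name `split_spaces` and the shortened field value are illustrative assumptions.

```python
from urllib.parse import parse_qs, urlsplit


def split_spaces(field):
    """Split the comma-separated `spaces` column into individual entries."""
    return [entry.strip() for entry in field.split(",") if entry.strip()]


# Shortened illustrative value in the same shape as the record above.
field = (
    "KBlueLeaf/Sketch-Gen, hysts/ControlNet-v1-1, "
    "huggingface/InferenceSupport/discussions/new"
    "?title=lllyasviel/Annotators&description=React%20to%20this%20comment"
)

entries = split_spaces(field)
# Pick out the support-discussion link and decode its query string.
support = [e for e in entries if e.startswith("huggingface/InferenceSupport/")]
query = parse_qs(urlsplit(support[0]).query)
print(len(entries), query["title"][0], query["description"][0])
```

Comparing `len(entries)` with the row's `spaces_count` value is one way to sanity-check a parsed record.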

Arcane-Diffusion_finetunes_20250424_223250.csv_finetunes_20250424_223250.csv

ADDED

|

@@ -0,0 +1,59 @@

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

nitrosocke/Arcane-Diffusion,"---

|

| 3 |

+

license: creativeml-openrail-m

|

| 4 |

+

tags:

|

| 5 |

+

- stable-diffusion

|

| 6 |

+

- text-to-image

|

| 7 |

+

---

|

| 8 |

+

# Arcane Diffusion

|

| 9 |

+

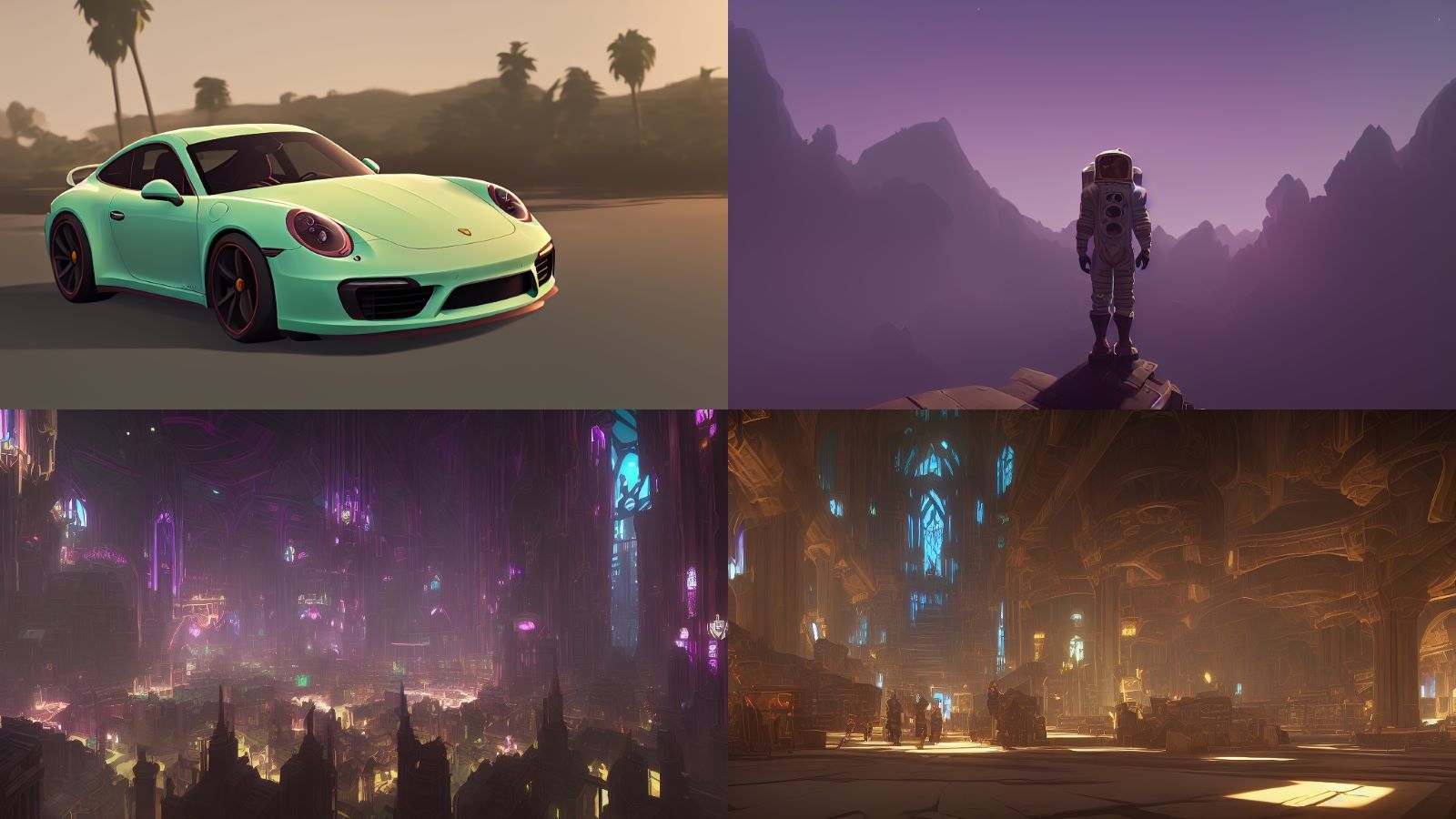

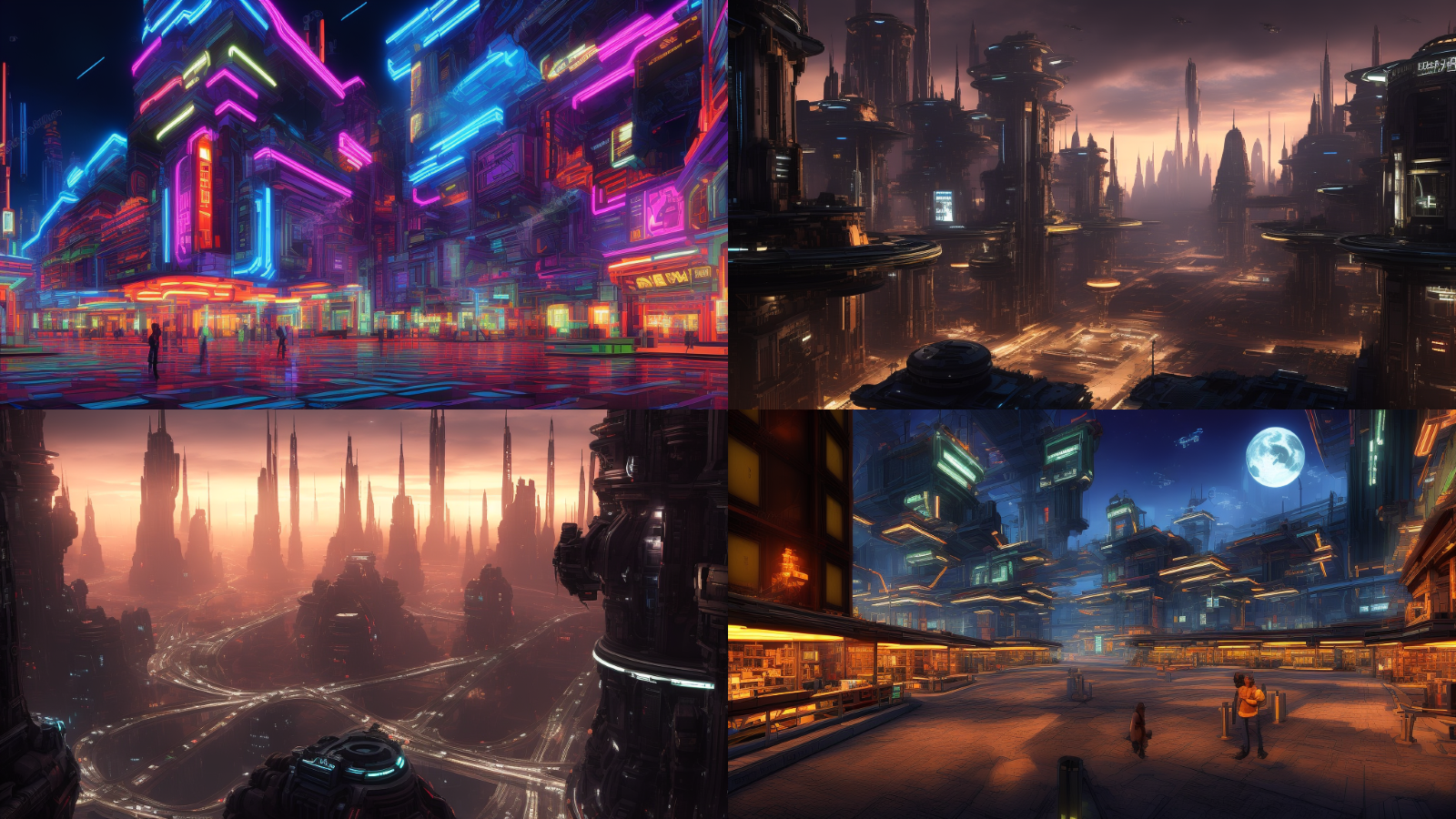

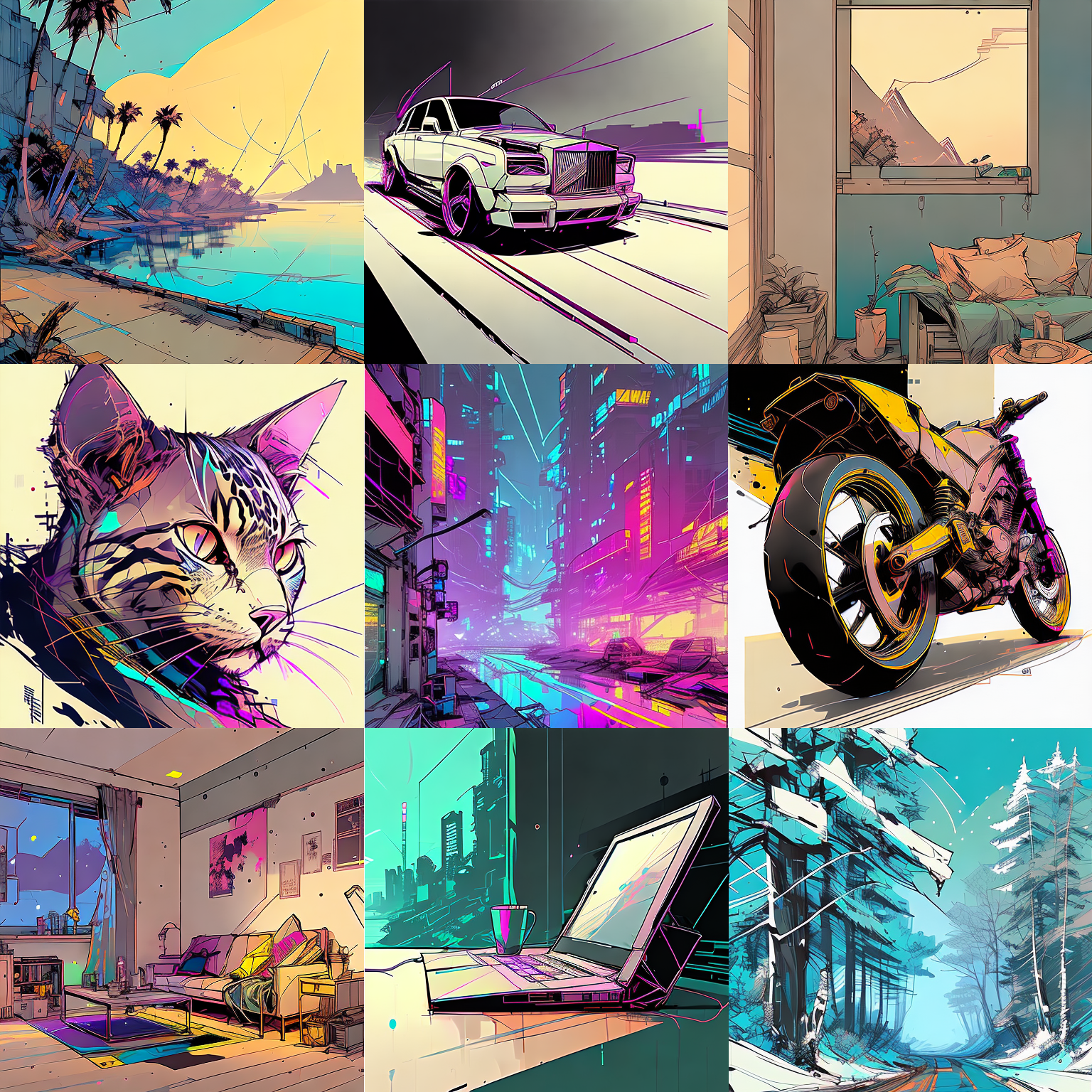

This is a fine-tuned Stable Diffusion model trained on images from the TV show Arcane.

|

| 10 |

+

Use the tokens **_arcane style_** in your prompts for the effect.

|

| 11 |

+

|

| 12 |

+

**If you enjoy my work, please consider supporting me**

|

| 13 |

+

[](https://patreon.com/user?u=79196446)

|

| 14 |

+

|

| 15 |

+

### 🧨 Diffusers

|

| 16 |

+

|

| 17 |

+

This model can be used just like any other Stable Diffusion model. For more information,

|

| 18 |

+

please have a look at the [Stable Diffusion pipeline documentation](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion).

|

| 19 |

+

|

| 20 |

+

You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX]().

|

| 21 |

+

|

```python
#!pip install diffusers transformers scipy torch
from diffusers import StableDiffusionPipeline
import torch

# Load the fine-tuned checkpoint in half precision and move it to the GPU.
model_id = "nitrosocke/Arcane-Diffusion"
pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)
pipe = pipe.to("cuda")

# Include the "arcane style" token in the prompt to trigger the style.
prompt = "arcane style, a magical princess with golden hair"
image = pipe(prompt).images[0]

image.save("./magical_princess.png")
```

| 36 |

+

|

| 37 |

+

# Gradio & Colab

|

| 38 |

+

|

| 39 |

+

We also support a [Gradio](https://github.com/gradio-app/gradio) Web UI and Colab with Diffusers to run fine-tuned Stable Diffusion models:

|

| 40 |

+

[](https://huggingface.co/spaces/anzorq/finetuned_diffusion)

|

| 41 |

+

[](https://colab.research.google.com/drive/1j5YvfMZoGdDGdj3O3xRU1m4ujKYsElZO?usp=sharing)

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

### Sample images from v3:

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

### Sample images from the model:

|

| 49 |

+

|

| 50 |

+

### Sample images used for training:

|

| 51 |

+

|

| 52 |

+

|

| 53 |

+

**Version 3** (arcane-diffusion-v3): This version uses the new _train-text-encoder_ setting and improves the quality and editability of the model immensely. Trained on 95 images from the show in 8000 steps.

|

| 54 |

+

|

| 55 |

+

**Version 2** (arcane-diffusion-v2): This version uses the diffusers-based DreamBooth training, and the prior-preservation loss is far more effective. The diffusers weights were then converted with a script to a ckpt file in order to work with automatic's repo.

|

| 56 |

+

Training was done with 5k steps for a direct comparison to v1 and results show that it needs more steps for a more prominent result. Version 3 will be tested with 11k steps.

|

| 57 |

+

|

| 58 |

+

**Version 1** (arcane-diffusion-5k): This model was trained using _Unfrozen Model Textual Inversion_ together with the _Training with prior-preservation loss_ method. There is still a slight shift towards the style even when the arcane token is not used.

|

| 59 |

+

","{""id"": ""nitrosocke/Arcane-Diffusion"", ""author"": ""nitrosocke"", ""sha"": ""c7d9af168e4885816a62e50f2c5dfb38419f0cb3"", ""last_modified"": ""2023-05-16 09:20:36+00:00"", ""created_at"": ""2022-10-02 11:41:27+00:00"", ""private"": false, ""gated"": false, ""disabled"": false, ""downloads"": 2448, ""downloads_all_time"": null, ""likes"": 753, ""library_name"": ""diffusers"", ""gguf"": null, ""inference"": null, ""inference_provider_mapping"": null, ""tags"": [""diffusers"", ""stable-diffusion"", ""text-to-image"", ""license:creativeml-openrail-m"", ""autotrain_compatible"", ""endpoints_compatible"", ""diffusers:StableDiffusionPipeline"", ""region:us""], ""pipeline_tag"": ""text-to-image"", ""mask_token"": null, ""trending_score"": null, ""card_data"": ""license: creativeml-openrail-m\ntags:\n- stable-diffusion\n- text-to-image"", ""widget_data"": null, ""model_index"": null, ""config"": {""diffusers"": {""_class_name"": ""StableDiffusionPipeline""}}, ""transformers_info"": null, ""siblings"": [""RepoSibling(rfilename='.gitattributes', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='README.md', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='arcane-diffusion-5k.ckpt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='arcane-diffusion-output-images.jpg', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='arcane-diffusion-training-images.jpg', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='arcane-diffusion-v2.ckpt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='arcane-diffusion-v3.ckpt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='arcane-v3-samples-01.jpg', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='arcane-v3-samples-02.jpg', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='feature_extractor/preprocessor_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='magical_princess.png', size=None, 
blob_id=None, lfs=None)"", ""RepoSibling(rfilename='model_index.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='safety_checker/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='safety_checker/pytorch_model.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='scheduler/scheduler_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='text_encoder/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='text_encoder/pytorch_model.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/merges.txt', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/special_tokens_map.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/tokenizer_config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='tokenizer/vocab.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='unet/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='unet/diffusion_pytorch_model.bin', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='vae/config.json', size=None, blob_id=None, lfs=None)"", ""RepoSibling(rfilename='vae/diffusion_pytorch_model.bin', size=None, blob_id=None, lfs=None)""], ""spaces"": [""anzorq/finetuned_diffusion"", ""darkstorm2150/Stable-Diffusion-Protogen-x3.4-webui"", ""Yntec/ToyWorld"", ""darkstorm2150/protogen-web-ui"", ""akhaliq/anything-v3.0"", ""Yntec/PrintingPress"", ""vorstcavry/ai"", ""kamiyamai/stable-diffusion-webui"", ""yangheng/Super-Resolution-Anime-Diffusion"", ""Nymbo/image_gen_supaqueue"", ""ennov8ion/3dart-Models"", ""phenixrhyder/NSFW-ToyWorld"", ""akhaliq/openjourney"", ""Yntec/blitz_diffusion"", ""sanaweb/text-to-image"", ""BilalSardar/Text-To-image-AllModels"", ""AdamOswald1/finetuned_diffusion"", ""Vedits/6x_Image_diffusion"", ""John6666/Diffusion80XX4sg"", ""ennov8ion/comicbook-models"", ""diffusionai/ImgGenerator"", 
""IAmXenos21/stable-diffusion-webui-VORST2"", ""John6666/PrintingPress4"", ""dotmet/Real-ESRGAN-Enhanced-Anime-Diffusion"", ""Nickhilearla135095/maximum_diffusion"", ""SUPERSHANKY/Finetuned_Diffusion_Max"", ""Rifd/ngees_doang"", ""PeepDaSlan9/B2BMGMT_Diffusion60XX"", ""Joeythemonster/Text-To-image-AllModels"", ""Evel/Evel_Space"", ""luisrguerra/sd-real-dream-lcm-cpu"", ""Daniela-C/6x_Image_diffusion"", ""riccardogiorato/playground_diffusion"", ""Dao3/Text-To-image-AllModels"", ""phenixrhyder/PrintingPress"", ""John6666/hfd_test_nostopbutton"", ""ConceptArtHouse/webui-gameasset"", ""mindtube/Diffusion50XX"", ""TheKitten/Fast-Images-Creature"", ""Nymbo/Diffusion80XX4sg"", ""YeOldHermit/StableDiffusion_AnythingV3_ModelCamenduru"", ""zwv9/webui-cpu"", ""duchaba/sd_prompt_helper"", ""kaleidoskop-hug/PrintingPress"", ""Adam111/stable-diffusion-webui"", ""vs4vijay/stable-diffusion"", ""Yasu55/stable-diffusion-webui"", ""ennov8ion/stablediffusion-models"", ""Shocky/Pink-Anime"", ""JoPmt/Multi-SD_Cntrl_Cny_Pse_Img2Img"", ""JoPmt/Img2Img_SD_Control_Canny_Pose_Multi"", ""ReiPlush64/finetuned_diffusion"", ""John6666/ToyWorld4"", ""akhaliq/EimisAnimeDiffusion_1.0v"", ""sasaro/webui"", ""YeOldHermit/Super-Resolution-Anime-Diffusion"", ""Omnibus-archive/Diffusion-Flood"", ""Crossper6/stable-diffusion-webui"", ""grzegorz2047/fast_diffusion"", ""Alfasign/dIFFU"", ""Nymbo/PrintingPress"", ""Rifd/Sdallmodels"", ""John6666/Diffusion80XX4g"", ""NativeAngels/HuggingfaceDiffusion"", ""TopdeckingLands/Diffusion_Space"", ""Malifex/CPU-Anything-V3.0-WebUI"", ""lianzhou/stable-diffusion-webui"", ""Missinginaction/stablediffusionwithnofilter"", ""arthurdias/Webui-Cpu-ExtensionV2-Publictest-WithCivitaiHelper"", ""thestasi/Webui-Cpu-ExtensionV2-Publictest-WithCivitaiHelper"", ""achyuth1344/stable-diffusion-webui"", ""ennov8ion/Scifi-Models"", ""ennov8ion/semirealistic-models"", ""Jackflack09/finetuned_diffusion2"", ""ennov8ion/dreamlike-models"", ""ennov8ion/FantasyArt-Models"", 
""noes14155/img_All_models"", ""Nymbo/Game-Creator"", ""Minecraft3193092/Stable-Diffusion-8"", ""AnimeStudio/anime-models"", ""John6666/Diffusion80XX4"", ""K00B404/HuggingfaceDiffusion_custom"", ""John6666/blitz_diffusion4"", ""John6666/blitz_diffusion_builtin"", ""deaf1296/finetuned_diffusion"", ""fkunn1326/CoolJapaneseDiffusion"", ""mgxwrites/Mgx-Diffusion-v3.0"", ""pieeetre/stable-diffusion-webui"", ""luluneko1/stable-diffusion-webui"", ""Lyra121/finetuned_diffusion"", ""voltcutter/stable-diffusion-webui"", ""Mileena/anything-v3.0"", ""hylee/finetuned_diffusion"", ""Dao3/Top-20-Models"", ""Jackflack09/diffuse-custom"", ""SHOOL45/ImgGen"", ""ichsanbhrd/ImgGenerator"", ""RhythmRemix14/PrintingPressDx"", ""Minecraft3193092/Stable-Diffusion-7"", ""Omnibus/game-test""], ""safetensors"": null, ""security_repo_status"": null, ""xet_enabled"": null, ""lastModified"": ""2023-05-16 09:20:36+00:00"", ""cardData"": ""license: creativeml-openrail-m\ntags:\n- stable-diffusion\n- text-to-image"", ""transformersInfo"": null, ""_id"": ""633978e737faf14d3a94bec7"", ""modelId"": ""nitrosocke/Arcane-Diffusion"", ""usedStorage"": 41619195807}",0,,0,,0,,0,,0,"IAmXenos21/stable-diffusion-webui-VORST2, John6666/Diffusion80XX4sg, John6666/PrintingPress4, Nymbo/image_gen_supaqueue, Yntec/PrintingPress, Yntec/ToyWorld, Yntec/blitz_diffusion, anzorq/finetuned_diffusion, darkstorm2150/Stable-Diffusion-Protogen-x3.4-webui, diffusionai/ImgGenerator, huggingface/InferenceSupport/discussions/new?title=nitrosocke/Arcane-Diffusion&description=React%20to%20this%20comment%20with%20an%20emoji%20to%20vote%20for%20%5Bnitrosocke%2FArcane-Diffusion%5D(%2Fnitrosocke%2FArcane-Diffusion)%20to%20be%20supported%20by%20Inference%20Providers.%0A%0A(optional)%20Which%20providers%20are%20you%20interested%20in%3F%20(Novita%2C%20Hyperbolic%2C%20Together%E2%80%A6)%0A, phenixrhyder/NSFW-ToyWorld, vorstcavry/ai, yangheng/Super-Resolution-Anime-Diffusion",14

|

BLOOMChat-176B-v1_finetunes_20250426_014322.csv_finetunes_20250426_014322.csv

ADDED

|

@@ -0,0 +1,479 @@

| 1 |

+

model_id,card,metadata,depth,children,children_count,adapters,adapters_count,quantized,quantized_count,merges,merges_count,spaces,spaces_count

|

| 2 |

+

sambanovasystems/BLOOMChat-176B-v1,"---

|

| 3 |

+

# For reference on model card metadata, see the spec: https://github.com/huggingface/hub-docs/blob/main/modelcard.md?plain=1

|

| 4 |

+

# Doc / guide: https://huggingface.co/docs/hub/model-cards

|

| 5 |

+

license: other

|

| 6 |

+

inference: false

|

| 7 |

+

---

|

| 8 |

+

|

| 9 |

+

# BLOOMChat V1.0

|

| 10 |

+

|

| 11 |

+

<!-- Provide a quick summary of what the model is/does. -->

|

| 12 |

+

|

| 13 |

+

BLOOMChat is a 176 billion parameter multilingual chat model. It is instruction tuned from [BLOOM (176B)](https://huggingface.co/bigscience/bloom) on assistant-style conversation datasets and supports conversation, question answering and generative answers in multiple languages.

|

| 14 |

+

|

| 15 |

+

## Model Details

|

| 16 |

+

|

| 17 |

+

### Model Description

|

| 18 |

+

|

| 19 |

+

<!-- Provide a longer summary of what this model is. -->

|

| 20 |

+

|

| 21 |

+

- **Developed by:** [SambaNova Systems](https://sambanova.ai/)

|

| 22 |

+

- **Co-developed by:** [Together Computer](https://www.together.xyz/)

|

| 23 |

+

- **Model type:** Language Model

|

| 24 |

+

- **Language(s):** Multiple; see [training data from BLOOM](https://huggingface.co/bigscience/bloom#training-data)

|

| 25 |

+

- **License:** BLOOMChat-176B LICENSE v1.0

|

| 26 |

+

- **Instruction Tuned from model:** [BigScience Group BLOOM](https://huggingface.co/bigscience/bloom)

|

| 27 |

+

|

| 28 |

+

### Basic Information

|

| 29 |

+

|

| 30 |

+

<!-- Provide the basic links for the model. -->

|

| 31 |

+

- **Blog Post**: [Link](https://sambanova.ai/blog/introducing-bloomchat-176b-the-multilingual-chat-based-llm/)

|

| 32 |

+

- **Discord**: [Link](https://discord.com/invite/8z2Pe7cpRv)

|

| 33 |

+

- **HF Hosting**: [Chat with me!](https://huggingface.co/spaces/sambanovasystems/BLOOMChat)

|

| 34 |

+

- **Github**: [Link](https://github.com/sambanova/bloomchat)

|

| 35 |

+

|

| 36 |

+

### Licensing

|

| 37 |

+

|

| 38 |

+

To increase accessibility and to support the open-source community, SambaNova is releasing BLOOMChat under a modified version of the Apache 2.0 license, which includes use-based restrictions from BLOOM’s RAIL license. While use-based restrictions are necessarily passed through, there are no blanket restrictions on reuse, distribution, commercialization or adaptation. [Please review SambaNova’s BLOOMChat-176B License](LICENSE)

|

| 39 |

+

|

| 40 |

+

## Uses

|

| 41 |

+

<details>

|

| 42 |

+

<summary>Click to expand</summary>

|

| 43 |

+

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

|

| 44 |

+

|

| 45 |

+

### Direct Use

|

| 46 |

+

|

| 47 |

+

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

|

| 48 |

+

This model is intended for commercial and research use.

|

| 49 |

+

|

| 50 |

+

|

| 51 |

+

### Out-of-Scope Use

|

| 52 |

+

|

| 53 |

+

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

|

| 54 |

+

|

| 55 |

+

|

| 56 |

+

BLOOMChat should NOT be used for:

|

| 57 |

+

|

| 58 |

+

- Mission-critical applications

|

| 59 |

+

- Applications that involve the safety of others

|

| 60 |

+

- Making highly important decisions

|

| 61 |

+

- Important automated pipelines

|

| 62 |

+

|

| 63 |

+

This model is still in early development and can be prone to mistakes and hallucinations; there is still room for improvement. This model is intended to provide the community with a multilingual chat LLM baseline.

|

| 64 |

+

|

| 65 |

+

### Recommendations

|

| 66 |

+

|

| 67 |

+

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

|

| 68 |

+

|

| 69 |

+

Users should be made aware of the risks, biases, limitations, and restrictions of the model, which are listed at the bottom of the page.

|

| 70 |

+

|

| 71 |

+

</details>

|

| 72 |

+

|

| 73 |

+

|

| 74 |

+

---

|

| 75 |

+

## How to Get Started with the Model

|

| 76 |

+

|

| 77 |

+

<details>

|

| 78 |

+

<summary>Click to expand</summary>

|

| 79 |

+

|

| 80 |

+

### Loading the model with Hugging Face

|

| 81 |

+

|

| 82 |

+

```python

|

| 83 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 84 |

+

|

| 85 |

+

tokenizer = AutoTokenizer.from_pretrained(""sambanovasystems/BLOOMChat-176B-v1"")

|

| 86 |

+

model = AutoModelForCausalLM.from_pretrained(""sambanovasystems/BLOOMChat-176B-v1"", device_map=""auto"", torch_dtype=""auto"")

|

| 87 |

+

```

|

| 88 |

+

|

| 89 |

+

### Quick Start Inference on SambaNova's in-house Reconfigurable Dataflow Unit (RDU)

|

| 90 |

+

|

| 91 |

+

The inference code to run the model can be found in our [GitHub repo](https://github.com/sambanova/bloomchat/blob/main/rdu_quick_start/inference.py). This code requires the [SambaFlow](https://docs.sambanova.ai/developer/latest/sambaflow-intro.html) SDK to execute. For those interested in running models on RDUs, [please feel free to get in touch](https://sambanova.ai/getstarted).

|

| 92 |

+

|

| 93 |

+

### Quick Start Inference on GPU

|

| 94 |

+

|

| 95 |

+

First, create a Python virtual environment for these packages:

|

| 96 |

+

|

| 97 |

+

```

|

| 98 |

+

python3 -m venv bloomchat_venv

|

| 99 |

+

source bloomchat_venv/bin/activate

|

| 100 |

+

pip install --upgrade pip

|

| 101 |

+

```

|

| 102 |

+

|

| 103 |

+

<!-- Please follow this section [Inference solutions for BLOOM 176B](https://github.com/huggingface/transformers-bloom-inference#bloom-inference-via-command-line) in the Huggingface Tutorial for environment set up and stop before the [BLOOM inference via command-line

|

| 104 |

+

](https://github.com/huggingface/transformers-bloom-inference#bloom-inference-via-command-line) section. -->

|

| 105 |

+

|

| 106 |

+

```

|

| 107 |

+

pip install flask flask_api gunicorn pydantic accelerate 'huggingface_hub>=0.9.0' 'deepspeed>=0.7.3' deepspeed-mii==0.0.2

|

| 108 |

+

```

|

| 109 |

+

And then

|

| 110 |

+

```

|

| 111 |

+

pip install transformers==4.27.0

|

| 112 |

+

```

|

| 113 |

+

|

| 114 |

+

You will see messages like the following (the version-conflict error reported by pip is expected):

|

| 115 |

+

```

|

| 116 |

+

ERROR: deepspeed-mii 0.0.2 has requirement transformers==4.21.2, but you'll have transformers 4.27.0 which is incompatible.

|

| 117 |

+

Installing collected packages: transformers

|

| 118 |

+

Found existing installation: transformers 4.21.2

|

| 119 |

+

Uninstalling transformers-4.21.2:

|

| 120 |

+

Successfully uninstalled transformers-4.21.2

|

| 121 |

+

Successfully installed transformers-4.27.0

|

| 122 |

+

```

|

| 123 |

+

|

| 124 |

+

Now let's git clone the [huggingface/transformers-bloom-inference](https://github.com/huggingface/transformers-bloom-inference) repo.

|

| 125 |

+

```

|

| 126 |

+

git clone https://github.com/huggingface/transformers-bloom-inference.git

|

| 127 |

+

cd transformers-bloom-inference/

|

| 128 |

+

```

|

| 129 |

+

Next, modify two files in the [transformers-bloom-inference](https://github.com/huggingface/transformers-bloom-inference) repo:

|

| 130 |

+

|

| 131 |

+

- Modifying `inference_server/models/hf_accelerate.py`

|

| 132 |

+

- In our testing of this repo we used four 80GB A100 GPUs and ran into memory issues without this change

|

| 133 |

+

- Modifying `inference_server/cli.py`

|

| 134 |

+

- This is because the model was trained using specific human, bot tags

|

| 135 |

+

- Trailing spaces may lead to subpar performance

|

| 136 |

+

|

| 137 |

+

Modifications for `inference_server/models/hf_accelerate.py`:

|

| 138 |

+

|

| 139 |

+

```diff

|

| 140 |

+

diff --git a/inference_server/models/hf_accelerate.py b/inference_server/models/hf_accelerate.py

|

| 141 |

+

index 9be3c3f..a8ecb1d 100644

|

| 142 |

+

--- a/inference_server/models/hf_accelerate.py

|

| 143 |

+

+++ b/inference_server/models/hf_accelerate.py

|

| 144 |

+

@@ -1,4 +1,5 @@

|

| 145 |

+

from argparse import Namespace

|

| 146 |

+

+from accelerate.utils.modeling import get_max_memory

|

| 147 |

+

|

| 148 |

+

import torch

|

| 149 |

+

|

| 150 |

+

@@ -12,6 +13,12 @@ class HFAccelerateModel(Model):

|

| 151 |

+

|

| 152 |

+

kwargs = {""pretrained_model_name_or_path"": args.model_name, ""device_map"": ""auto""}

|

| 153 |

+

|

| 154 |

+

+ original_max_memory_dict = get_max_memory()

|

| 155 |

+

+

|

| 156 |

+

+ reduce_max_memory_dict = {device_key: int(original_max_memory_dict[device_key] * 0.85) for device_key in original_max_memory_dict}

|

| 157 |

+

+

|

| 158 |

+

+ kwargs[""max_memory""] = reduce_max_memory_dict

|

| 159 |

+

+

|

| 160 |

+

if get_world_size() > 1:

|

| 161 |

+

kwargs[""device_map""] = ""balanced_low_0""

|

| 162 |

+

|

| 163 |

+

```
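The patch above caps each device's memory budget at 85% of what `get_max_memory()` reports. As a minimal standalone sketch of just that scaling step: `scale_max_memory` is a hypothetical helper name, and the input dictionary holds made-up illustrative values rather than real output from accelerate.

```python
def scale_max_memory(max_memory, fraction=0.85):
    # Mirror the dict comprehension in the patch: keep every device key,
    # shrink its byte budget, and truncate the result to an integer.
    return {device: int(limit * fraction) for device, limit in max_memory.items()}


# Illustrative values only (assumed): two 80 GiB GPUs plus CPU RAM, in bytes.
example = {0: 80 * 1024**3, 1: 80 * 1024**3, 'cpu': 256 * 1024**3}
reduced = scale_max_memory(example)
print(reduced)
```

Leaving headroom on each device this way is one plausible reading of why the patch helps with the memory issues mentioned above; the right fraction may differ on other hardware.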

|

| 164 |

+

|

| 165 |

+

Modifications for `inference_server/cli.py`:

|

| 166 |

+

|

| 167 |

+

```diff

|

| 168 |

+

diff --git a/inference_server/cli.py b/inference_server/cli.py

|

| 169 |

+

index fc903d5..5450236 100644

|

| 170 |

+

--- a/inference_server/cli.py

|

| 171 |

+

+++ b/inference_server/cli.py

|

| 172 |

+

@@ -22,6 +22,9 @@ def main() -> None:

|

| 173 |

+

while True:

|

| 174 |

+

input_text = input(""Input text: "")

|

| 175 |

+

|

| 176 |

+

+ input_text = input_text.strip()

|

| 177 |

+

+ modified_input_text = f""<human>: {input_text}\n<bot>:""

|

| 178 |

+

+

|

| 179 |

+

if input(""change generate_kwargs? [y/n] "") == ""y"":

|

| 180 |

+

while True:

|

| 181 |

+

try:

|

| 182 |

+

@@ -33,7 +36,7 @@ def main() -> None:

|

| 183 |

+

print(""message ="", e_message)

|

| 184 |

+

continue

|

| 185 |

+

|

| 186 |

+

- response = model.generate(text=[input_text], generate_kwargs=generate_kwargs)

|

| 187 |

+

+ response = model.generate(text=[modified_input_text], generate_kwargs=generate_kwargs)

|

| 188 |

+

|

| 189 |

+

print_rank_0(""Output text:"", response.text[0])

|

| 190 |

+

print_rank_0(""Generated tokens:"", response.num_generated_tokens[0])

|

| 191 |

+

|

| 192 |

+

```
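The prompt handling in the `cli.py` patch can also be sketched as a small standalone function, which may be handy when calling the model outside the CLI. `build_prompt` is a hypothetical name used here for illustration; it is not part of the transformers-bloom-inference repo.

```python
def build_prompt(user_text):
    # Strip surrounding whitespace first; the card warns that trailing
    # spaces may lead to subpar performance.
    cleaned = user_text.strip()
    # Wrap the request in the human/bot tags, ending right after the bot tag
    # so that the model continues with the bot reply.
    return f'<human>: {cleaned}\n<bot>:'


prompt = build_prompt('  Write a haiku about rain.  ')
print(repr(prompt))
```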

|

| 193 |

+

And now you are good to go!

|

| 194 |

+

|

| 195 |

+

Running command for bf16, NO sampling

|

| 196 |

+

```

|

| 197 |

+

python -m inference_server.cli --model_name sambanovasystems/BLOOMChat-176B-v1 --model_class AutoModelForCausalLM --dtype bf16 --deployment_framework hf_accelerate --generate_kwargs '{""do_sample"": false, ""max_new_tokens"": 512}'

|

| 198 |

+

```

|

| 199 |

+

Running command for bf16, YES sampling

|

| 200 |

+

```

|

| 201 |

+

python -m inference_server.cli --model_name sambanovasystems/BLOOMChat-176B-v1 --model_class AutoModelForCausalLM --dtype bf16 --deployment_framework hf_accelerate --generate_kwargs '{""do_sample"": true, ""temperature"": 0.8, ""repetition_penalty"": 1.2, ""top_p"": 0.9, ""max_new_tokens"": 512}'

|

| 202 |

+

```

|

| 203 |

+

---

|

| 204 |

+

Running command for int8 (suboptimal performance, but fast inference time) NO sampling:

|

| 205 |

+

```

|

| 206 |

+

python -m inference_server.cli --model_name sambanovasystems/BLOOMChat-176B-v1 --model_class AutoModelForCausalLM --dtype int8 --deployment_framework hf_accelerate --generate_kwargs '{""do_sample"": false, ""max_new_tokens"": 512}'

|

| 207 |

+

```

|

| 208 |

+

Running command for int8 (suboptimal performance, but fast inference time) YES sampling:

|

| 209 |

+

```

|

| 210 |

+

python -m inference_server.cli --model_name sambanovasystems/BLOOMChat-176B-v1 --model_class AutoModelForCausalLM --dtype int8 --deployment_framework hf_accelerate --generate_kwargs '{""do_sample"": true, ""temperature"": 0.8, ""repetition_penalty"": 1.2, ""top_p"": 0.9, ""max_new_tokens"": 512}'

|

| 211 |

+

```

|

| 212 |

+

|

| 213 |

+

**DISCLAIMER:** When using int8, the results will be subpar compared to bf16 as the model is being [quantized](https://huggingface.co/blog/hf-bitsandbytes-integration#introduction-to-model-quantization).

|

| 214 |

+

|

| 215 |

+

### Suggested Inference Parameters

|

| 216 |

+

- Temperature: 0.8

|

| 217 |

+

- Repetition penalty: 1.2

|

| 218 |

+

- Top-p: 0.9

|

| 219 |

+

- Max generated tokens: 512
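As a rough sketch, the suggested parameters can be assembled into the JSON string that the `--generate_kwargs` flag in the commands above expects. The dictionary keys are taken from those commands; building the string with `json` and `shlex` is just one convenient approach, not something the card prescribes.

```python
import json
import shlex

# Suggested sampling parameters, using the key names from the CLI examples.
generate_kwargs = {
    'do_sample': True,
    'temperature': 0.8,
    'repetition_penalty': 1.2,
    'top_p': 0.9,
    'max_new_tokens': 512,
}

# json.dumps emits lowercase true/false, matching what the CLI examples pass.
as_json = json.dumps(generate_kwargs)
# shlex.quote wraps the JSON so a POSIX shell passes it as a single argument.
print('--generate_kwargs ' + shlex.quote(as_json))
```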

|

| 220 |

+

|

| 221 |

+

### Suggested Prompts To Try in GPU Tutorial

|

| 222 |

+

```

|

| 223 |

+

Input text: Write a script in which Bob accidentally breaks his dad's guitar

|

| 224 |

+

```

|

| 225 |

+

|

| 226 |

+

```

|

| 227 |

+

Input text: Create an itemized list of tasks to complete to start a clothing brand

|

| 228 |

+

```

|

| 229 |

+

|

| 230 |

+

```

|

| 231 |

+

Input text: 十七岁的风是什么颜色的?

|

| 232 |

+

```

|

| 233 |

+

|

| 234 |

+

|

| 235 |

+

</details>

|

| 236 |

+

|

| 237 |

+

---

|

| 238 |

+

|

| 239 |

+

## Some example completions for English

|

| 240 |

+

|

| 241 |

+

<details>

|

| 242 |

+

<summary>Click to expand</summary>

|

| 243 |

+

|

| 244 |

+

```

|

| 245 |

+

<human>: What does it mean for the Fed to raise or lower interest rates?

|

| 246 |

+

<bot>: The Federal Reserve (also known as the Fed) is the central banking system of the United States. It was created in 1913, and is an independent agency of the federal government. The Fed has a dual mandate of maximizing employment and stabilizing prices. The Fed sets interest rates, and can influence the money supply and the credit markets through its actions.

|

| 247 |

+

|

| 248 |

+

Raising interest rates means that the Fed is trying to reduce the money supply in the economy, and is trying to discourage borrowing and encourage saving. Lowering interest rates means that the Fed is trying to stimulate economic growth by encouraging borrowing and reducing the cost of credit.

|

| 249 |

+

|

| 250 |

+

The Fed raises or lowers interest rates in order to influence the economy and achieve its goals for employment and price stability. Interest rates are one of the primary tools that the Fed uses to achieve its goals.

|

| 251 |

+

```

|

| 252 |

+

|

| 253 |

+

```

|

| 254 |

+

<human>: Write an engaging and well-written property listing description for selling a house. Address of the house and some of the details are given to you. Fill in the information gap with hallucinations if needed.

|

| 255 |

+

|

| 256 |

+

Property Address: 412 Monterey Ave, Capitola, CA 95010

|

| 257 |

+

Parking: 3 cars + a finished single car garage

|

| 258 |

+

Details:

|

| 259 |

+

- open floorplan

|

| 260 |

+

- window with views to park/mountains

|

| 261 |

+

- Kitchen with shaker cabinetry and white Calcatta quartz counters

|

| 262 |

+

<bot>: This home at 412 Monterey Ave, Capitola, CA 95010 is the perfect place to call home. With its open floor plan, it provides the perfect space for entertaining guests. The kitchen is equipped with shaker cabinetry and white Calcatta quartz counters, providing the perfect space to create culinary masterpieces. The window with views to the park and mountains provide a beautiful view of the outdoors, making this home feel like a piece of paradise. The three-car garage and finished single car garage provide ample parking space, making this home a must-see for anyone looking for a new home.

|

| 263 |

+

|

| 264 |

+

```

|

| 265 |

+

|

| 266 |

+

```

|

| 267 |

+

<human>: What is artificial intelligence? Explain it so an 8 year old will understand.

|

| 268 |

+

<bot>: Artificial Intelligence (AI) is a computer program or machine that can do things that would normally require human intelligence, such as recognizing patterns, making decisions, and learning from experience. AI can be found in many places, such as self-driving cars, smartphones, and even robots. AI is used to make things easier for people, like when your smartphone can translate text into another language or when your voice-controlled speaker can play music for you.

|

| 269 |

+

AI works by using algorithms and big data to learn and improve over time. For example, self-driving cars use AI to analyze the environment around them and make decisions about how to drive. The car gathers data from its sensors, processes it using algorithms, and adjusts its behavior accordingly. This process is called machine learning.

|

| 270 |

+

AI is still a developing technology, and there are still many things that AI programs cannot do yet. However, as AI continues to evolve, it is likely that it will become more and more capable of performing tasks that were once thought to require human intelligence.

|

| 271 |

+

|

| 272 |

+

```

|

| 273 |

+

|

| 274 |

+

</details>

|

| 275 |

+

|

| 276 |

+

---

|

| 277 |

+

|

| 278 |

+

## Some example completions for Multilingual

|

| 279 |

+

<details>

|

| 280 |

+

<summary>Click to expand</summary>

|

| 281 |

+

|

| 282 |

+

#### Arabic

|

| 283 |

+

|

| 284 |

+

```

|

| 285 |

+

<human>: هل العمل نصف دوام فكرة جيدة لمراهقين بين عمر ١٥ و ١٩ عام

|

| 286 |

+

<bot>: نعم، يمكن أن تكون فكرة جيدة لبعض المراهقين في هذا العمر. هناك العديد من الفوائد التي قد يحققها المراهقون إذا عملوا بنصف دوام:

|

| 287 |

+

|

| 288 |

+

- يساعدهم على تعلم المسؤولية وإدارة الوقت: عندما يعمل المراهق بنصف دوام، فإنه يتعلم كيفية إدارة وقته بشكل فعال وكيفية تقسيم ساعات عمله إلى مهام أصغر.

|

| 289 |

+

- يحسن مهارات التواصل والعمل الجماعي: غالبًا ما يكون مكان العمل بيئة متعددة الثقافات حيث يلتقي المراهقون بمجموعة متنوعة من الأشخاص ويتعلمون كيفية التعاون معهم وتبادل الأفكار.

|

| 290 |

+

- يوفر فرصة للتعرف على عالم العمل: يتيح العمل للمراهق التعرف على عالم العمل واكتساب خبرة عملية قيمة ستساعده لاحقًا عند البحث عن وظيفة أو بدء حياته المهنية.

|

| 291 |

+

- يعزز الشعور بالمسؤولية والثقة بالنفس: عندما يعمل المراهق بنصف دوام ويحصل على دخل خاص به، فإنه يكتسب شعورًا أكبر بالمسؤولية والثقة بالنفس.

|

| 292 |

+

|

| 293 |

+

ومع ذلك، لا ينبغي إجبار المراهقين على العمل بنصف دوام دون موافقتهم المسبقة. يجب أيضًا مراعاة أعمارهم وقدراتهم البدنية والعقلية قبل توظيفهم.

|

| 294 |

+

```

|

| 295 |

+

|

| 296 |

+

**DISCLAIMER**: Text ordering may be off for Arabic because the code block may not render it correctly.

|

| 297 |

+

#### Chinese

|

| 298 |

+

|

| 299 |

+

```

|

| 300 |

+

<human>: 请向我推荐一部周末看的电影并说明原因。

|

| 301 |

+

<bot>: “强烈向您推荐《美丽人生》这部影片。该片由罗伯托·贝尼尼自导自演,讲述了二战时期一对犹太父子被送进了纳粹集中营,父亲用自己的想象力为儿子编织了一个美好童真的世界,让儿子的心灵没有受到丝毫伤害的故事。

|

| 302 |

+

|

| 303 |

+

该片被誉为“最伟大的战争片”、“人类有史以来最佳影片之一”,豆瓣评分高达9.6分。它不仅是一部感人的亲情片,更是一部富含哲理的励志片和人生启示录。片中父亲的乐观豁达、幽默风趣的人生态度,对任何事都抱有积极热情的态度,即使在残酷无比的集中营里也是如此,深深地感染着观众。同时,这也是一部关于爱与希望的电影,让我们更加珍惜当下所拥有的一切,学会如何去爱身边的每一个人。

|

| 304 |

+

|

| 305 |

+

总之,这是一部值得一看再看的好电影,相信您一定会喜欢的!

|

| 306 |

+

|

| 307 |

+

```

|

| 308 |

+

|

| 309 |

+

#### French

|

| 310 |

+

|

| 311 |

+

```

|

| 312 |

+

<human>: Il y a t’il un petit-déjeuner de 700-1000 calories sans oeuf mais qui inclut des protéines.

|

| 313 |

+

<bot>: Oui, voici une recette de petit déjeuner à base d'avoine et de fromage blanc :

|

| 314 |

+

Ingrédients :

|

| 315 |

+

- 1 tasse (250 ml) de lait d'amande ou autre lait végétal

|

| 316 |

+

- 2 cuillères à soupe bombées (30 g) de flocons d'avoine

|

| 317 |

+

- 1/2 banane mûre écrasée

|

| 318 |

+

- 1/4 de pomme fraîche épluchée et coupée en dés

|

| 319 |

+

- 1 cuillère à soupe de beurre d'amande ou autre purée d'oléagineux

|

| 320 |

+

- 1 cuillère à café de cannelle moulue

|

| 321 |

+

- 1 pincée de sel

|

| 322 |

+

Préparation :

|

| 323 |

+

1. Dans une petite casserole, faites chauffer le lait jusqu'à ce qu'il soit chaud.

|

| 324 |

+

2. Ajoutez les flocons d'avoine, la banane, la pomme, le beurre d'amande, la cannelle et le sel dans le bol du mixeur et mélangez bien pour obtenir une pâte homogène.

|

| 325 |

+

3. Versez le mélange dans le lait chaud et remuez rapidement avec une spatule en bois pour éviter que les flocons d'avoine ne s'agglutinent au fond de la casserole.

|

| 326 |

+

4. Laissez mijoter sur feu doux pendant environ 5 minutes tout en continuant de remuer régulièrement.

|

| 327 |

+

5. Transférez ensuite le porridge dans un bol et servez immédiatement. Vous pouvez ajouter quelques fruits rouges frais pour décorer et apporter encore plus de gourmandise à votre petit déjeuner ! Bon appétit !

|

| 328 |

+

```

|

| 329 |

+

|

| 330 |

+

#### Hindi

|

| 331 |

+

```

|

| 332 |

+

<human>: दिल्ली से जयपुर कैसे जाये? बस से या कार से? दोनो के बारे मे विस्तार मे बताये.

|

| 333 |

+

<bot>: दिल्ली से जयपुर जाने का सबसे आसान और तेज़ तरीका है कि आप अपनी खुद की कार लेकर जाएं, क्योंकि यह आपको अपने समय पर निर्भर रहने की स्वतंत्रता देता है। हालांकि, यदि आपके पास अपनी कार नहीं है, तो आप दिल्ली से जयपुर तक एक निजी वाहन किराए पर ले सकते हैं। यदि आप सार्वजनिक परिवहन (बस) का उपयोग करना चाहते हैं, तो दिल्ली में कई सरकारी और निजी बस सेवाएं उपलब्ध हैं जो जयपुर को जोड़ती हैं। दिल्ली से जयपुर जाने वाली कुछ प्रमुख बसें हैं-

|

| 334 |

+

1. राजस्थान रोडवेज: ये राज्य सरकार द्वारा संचालित बसें हैं जो दिल्ली से जयपुर के बीच चलती हैं। ये नियमित रूप से चलती हैं और इनके लिए अग्रिम आरक्षण आवश्यक नहीं है।

|

| 335 |

+

2. ALSA: यह एक निजी कंपनी है जो दिल्ली से जयपुर के बीच नियमित रूप से चलती है। इसके टिकटों की बुकिंग ऑनलाइन और ऑफलाइन दोनों माध्यमों से की जा सकती है।

|

| 336 |

+

3. RedBus: यह एक ऑनलाइन बस बुकिंग वेबसाइट है जहां से आप दिल्ली से जयपुर के बीच चलनेवाली विभिन्न कंपनियों की बसों में सीट आरक्षित करा सकते हैं।

|

| 337 |

+

4. Ola and Uber: इन ऐप्स का उपयोग करके आप दिल्ली से जयपुर तक कैब राइड बुक कर सकते हैं, लेकिन ध्यान रखें कि यह विकल्प सस्ता नहीं होगा। दिल्ली से जयपुर जाने का सबसे अच्छा विकल्प आपकी व्यक्तिगत आवश्यकताओं, बजट, और उपलब्धता पर निर्भर करता है। यदि आप अपनी खुद की कार रखते हैं, तो इसका लाभ उठाएं अन्यथा किसी भी सार्वजनिक परिवहन साधन का चयन करें जो आपकी आवश्यकताओं को पूरा करे।

|

| 338 |

+

```

|

| 339 |

+

|

| 340 |

+

#### Spanish

|

| 341 |

+

|

| 342 |

+

```

|

| 343 |

+

<human>: ¿Me puedes dar algunas recomendaciones de juegos? Estoy buscando juegos de rol con un sistema de magia único, un sistema de construcción único y una buena historia. Por favor limítate a juegos que se puedan jugar en PC directamente, o por emulación.

|

| 344 |

+

<bot>: Claro! Aquí te dejo tres recomendaciones:

|

| 345 |

+

1. The Outer Worlds: es un juego de rol de ciencia ficción con un sistema de magia basado en la tecnología alienígena que permite al jugador personalizar sus habilidades para adaptarse a su estilo de juego.

|

| 346 |

+

2. Divinity: Original Sin 2 - Definitive Edition: este juego combina elementos de RPG con estrategia en tiempo real (RTS), lo que le da al jugador un control total sobre cómo construir y mejorar su personaje y el mundo que lo rodea.

|

| 347 |

+

3. Pillars of Eternity: esta aventura de fantasía oscura tiene lugar en un vasto mundo abierto donde los jugadores pueden elegir entre diferentes clases y desarrollar relaciones con otros personajes del juego. La mecánica de combate está basada en turnos y utiliza hechizos y armas cuerpo a cuerpo.

|

| 348 |

+

Estos son solo algunos ejemplos de juegos que podrían interesarte según tus criterios. Hay muchos más títulos disponibles en el mercado que podrían ajustarse a tu gusto, así que no dudes en explorar otras opciones si estos juegos no cumplen con tus expectativas.

|

| 349 |

+

```

|

| 350 |

+

|

| 351 |

+

|

| 352 |

+

</details>

|

| 353 |

+

|

| 354 |

+

---

|

| 355 |

+

|

| 356 |

+

## Evaluation Graphs

|

| 357 |

+

|

| 358 |

+

<details>

|

| 359 |

+

<summary>Click to expand</summary>

|

| 360 |

+

|

| 361 |

+

<!-- This section describes the evaluation protocols and provides the results. -->

|

| 362 |

+

|

| 363 |

+

|

| 364 |

+

<figure style=""text-align:center;"">

|

| 365 |

+

<figcaption><b>BLOOMChat vs Baseline Models in Human Preference Rankings</b></figcaption>

|

| 366 |

+

</figure>

|

| 367 |

+

<br>

|

| 368 |

+

|

| 369 |

+

|

| 370 |

+

<figure style=""text-align:center;"">

|

| 371 |

+

<figcaption><b>BLOOMChat vs GPT-4 in Human Preference Ranking</b></figcaption>

|

| 372 |

+

</figure>

|

| 373 |

+

<br>

|

| 374 |

+

|

| 375 |

+

|

| 376 |

+

<figure style=""text-align:center;"">

|

| 377 |

+

<figcaption><b>BLOOMChat surpasses other Bloom variants and state-of-the-art open-source chat models in translation tasks [NOTE: Evaluation of the BLOOM and BLOOMZ in WMT18 en->zh zh->en used (human, bot) ChatML tags due to an unintentional configuration. Results might be suboptimal.]</b></figcaption>

|

| 378 |

+

</figure>

|

| 379 |

+

<br>

|

| 380 |

+

|

| 381 |

+

</details>

|

| 382 |

+

|

| 383 |

+

---

|

| 384 |

+

|

| 385 |

+

## Training Details

|

| 386 |

+

|

| 387 |

+

<details>

|

| 388 |

+

<summary>Click to expand</summary>

|

| 389 |

+

|

| 390 |

+

### Training Data

|

| 391 |

+

|

| 392 |

+

<!-- This should link to a Data Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

|

| 393 |

+

|

| 394 |

+

- [OIG dataset from OpenChatKit](https://huggingface.co/datasets/laion/OIG)

|

| 395 |

+

- [Dolly 2.0](https://huggingface.co/datasets/databricks/databricks-dolly-15k)

|

| 396 |

+

- [Oasst1](https://huggingface.co/datasets/OpenAssistant/oasst1)

|

| 397 |

+

|

| 398 |

+

### Training Procedure

|

| 399 |

+

|

| 400 |

+

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

|

| 401 |

+

|

| 402 |

+

We trained BLOOMChat with [SambaNova DataScale systems](https://sambanova.ai/products/datascale/) with SambaNova's in-house Reconfigurable Dataflow Unit (RDU). We started from [BLOOM (176B)](https://huggingface.co/bigscience/bloom), an open-source multilingual LLM pretrained by the [BigScience group](https://huggingface.co/bigscience). We instruction-tuned BLOOM (176B) on OpenChatKit with each data source subsampled to 100k for one epoch, followed by three epochs over the combined OpenChatKit and Dolly 2.0.

|

| 403 |

+

All of the code used to prepare the datasets and the scripts to run training and inference are open-sourced and freely available at [sambanova/bloomchat](https://github.com/sambanova/bloomchat/tree/main).

|

| 404 |

+

|

| 405 |

+

|

| 406 |

+

### Prompting Style Used For Training

|

| 407 |

+

```

|

| 408 |

+

<human>: {input1 that the user wants from the bot}

|

| 409 |

+

<bot>: {response1}</s>

|

| 410 |

+

<human>: {input2 that the user wants from the bot}

|

| 411 |

+

<bot>: {response2}</s>

|

| 412 |

+

```
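The template above can be rendered from a list of turns along these lines. This is a minimal sketch: `format_conversation` is a hypothetical helper, and joining turns with a single newline is an assumption read off the template rather than a detail confirmed by the training scripts.

```python
def format_conversation(turns, eos='</s>'):
    # turns is a list of (human_text, bot_text) pairs. Each bot reply is
    # closed with the end-of-sequence marker, as in the template above.
    lines = []
    for human_text, bot_text in turns:
        lines.append(f'<human>: {human_text}')
        lines.append(f'<bot>: {bot_text}{eos}')
    return '\n'.join(lines)


sample = format_conversation([
    ('Hello', 'Hi there!'),
    ('What is 2 + 2?', '4'),
])
print(sample)
```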

|

| 413 |

+

|

| 414 |

+

### Hyperparameters

|

| 415 |

+

|

| 416 |

+

**Instruction-tuned Training on OIG**

|

| 417 |

+

|

| 418 |

+

- Hardware: SambaNova Reconfigurable Dataflow Unit (RDU)

|

| 419 |

+

- Optimizer: AdamW

|

| 420 |

+

- Grad accumulation: 1

|

| 421 |

+

- Epochs: 1

|

| 422 |

+

- Global Batch size: 128

|

| 423 |

+

- Batch tokens: 128 * 2048 = 262,144 tokens

|

| 424 |

+

- Learning Rate: 1e-5

|

| 425 |

+

- Learning Rate Scheduler: Cosine Schedule with Warmup

|

| 426 |

+

- Warmup Steps: 0

|

| 427 |

+

- End Learning Ratio: 0.1

|

| 428 |

+

- Weight decay: 0.1

|

| 429 |

+

|

| 430 |

+

**Instruction-tuned Training on Dolly 2.0 and Oasst1**

|

| 431 |

+

|

| 432 |

+

- Hardware: SambaNova Reconfigurable Dataflow Unit (RDU)

|

| 433 |

+

- Optimizer: AdamW

|

| 434 |

+

- Grad accumulation: 1

|

| 435 |

+

- Epochs: 3

|

| 436 |

+

- Global Batch size: 128

|

| 437 |

+

- Batch tokens: 128 * 2048 = 262,144 tokens

|

| 438 |

+

- Learning Rate: 1e-5

|

| 439 |

+

- Learning Rate Scheduler: Cosine Schedule with Warmup

|

| 440 |

+

- Warmup Steps: 0

|

| 441 |

+

- End Learning Ratio: 0.1

|

| 442 |

+

- Weight decay: 0.1
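To make the schedule settings concrete, here is a small sketch of a cosine decay with zero warmup steps that ends at 0.1 times the peak learning rate, plus the batch-token arithmetic from the lists above. The exact scheduler formula used in training is not given in the card, so this form is an assumption, and the total step count of 1000 is purely illustrative.

```python
import math


def cosine_lr(step, total_steps, peak_lr=1e-5, end_ratio=0.1, warmup_steps=0):
    # Optional linear warmup (the card lists 0 warmup steps, so it is skipped).
    if warmup_steps > 0 and step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    # Fraction of the decay phase completed, clamped to 1.0.
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(1.0, progress)
    # Cosine factor falls from 1 to 0; rescale so the rate ends at end_ratio * peak.
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return peak_lr * (end_ratio + (1.0 - end_ratio) * cosine)


# Tokens per optimizer step: global batch size times sequence length.
tokens_per_batch = 128 * 2048

total = 1000  # assumed step count, for illustration only
for step in (0, total // 2, total):
    print(step, cosine_lr(step, total))
print(tokens_per_batch)
```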

|

| 443 |

+

|

| 444 |

+

</details>

|

| 445 |

+

|

| 446 |

+

---

|

| 447 |

+

|

| 448 |

+

## Bias, Risks, and Limitations

|

| 449 |

+

|

| 450 |

+

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

|

| 451 |

+

|

| 452 |

+

Like all LLMs, BLOOMChat has certain limitations:

|

| 453 |

+