Commit 6329dfb

Parent(s): 82a0b5c

Update parquet files

- DocLayNet-base.py +0 -234

- DocLayNet_image_annotated_bounding_boxes_line.png → DocLayNet/doc_lay_net-base-test.parquet +2 -2

- DocLayNet_image_annotated_bounding_boxes_paragraph.png → DocLayNet/doc_lay_net-base-train-00000-of-00007.parquet +2 -2

- data/dataset_base.zip → DocLayNet/doc_lay_net-base-train-00001-of-00007.parquet +2 -2

- DocLayNet/doc_lay_net-base-train-00002-of-00007.parquet +3 -0

- DocLayNet/doc_lay_net-base-train-00003-of-00007.parquet +3 -0

- DocLayNet/doc_lay_net-base-train-00004-of-00007.parquet +3 -0

- DocLayNet/doc_lay_net-base-train-00005-of-00007.parquet +3 -0

- DocLayNet/doc_lay_net-base-train-00006-of-00007.parquet +3 -0

- DocLayNet/doc_lay_net-base-validation.parquet +3 -0

- README.md +0 -252

DocLayNet-base.py

DELETED

|

@@ -1,234 +0,0 @@

|

|

| 1 |

-

# Copyright 2020 The HuggingFace Datasets Authors and the current dataset script contributor.

|

| 2 |

-

#

|

| 3 |

-

# Licensed under the Apache License, Version 2.0 (the "License");

|

| 4 |

-

# you may not use this file except in compliance with the License.

|

| 5 |

-

# You may obtain a copy of the License at

|

| 6 |

-

#

|

| 7 |

-

# http://www.apache.org/licenses/LICENSE-2.0

|

| 8 |

-

#

|

| 9 |

-

# Unless required by applicable law or agreed to in writing, software

|

| 10 |

-

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 11 |

-

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 12 |

-

# See the License for the specific language governing permissions and

|

| 13 |

-

# limitations under the License.

|

| 14 |

-

|

| 15 |

-

# DocLayNet License: https://github.com/DS4SD/DocLayNet/blob/main/LICENSE

|

| 16 |

-

# Apache License 2.0

|

| 17 |

-

|

| 18 |

-

"""

|

| 19 |

-

DocLayNet base is about 10% of the DocLayNet dataset (more information at https://huggingface.co/datasets/pierreguillou/DocLayNet-base)

|

| 20 |

-

DocLayNet: A Large Human-Annotated Dataset for Document-Layout Analysis

|

| 21 |

-

DocLayNet dataset:

|

| 22 |

-

- DocLayNet core dataset: https://codait-cos-dax.s3.us.cloud-object-storage.appdomain.cloud/dax-doclaynet/1.0.0/DocLayNet_core.zip

|

| 23 |

-

- DocLayNet extra dataset: https://codait-cos-dax.s3.us.cloud-object-storage.appdomain.cloud/dax-doclaynet/1.0.0/DocLayNet_extra.zip

|

| 24 |

-

"""

|

| 25 |

-

|

| 26 |

-

import json

|

| 27 |

-

import os

|

| 28 |

-

import base64

|

| 29 |

-

from PIL import Image

|

| 30 |

-

import datasets

|

| 31 |

-

|

| 32 |

-

# Find for instance the citation on arxiv or on the dataset repo/website

|

| 33 |

-

_CITATION = """\

|

| 34 |

-

@article{doclaynet2022,

|

| 35 |

-

title = {DocLayNet: A Large Human-Annotated Dataset for Document-Layout Analysis},

|

| 36 |

-

doi = {10.1145/3534678.3539043},

|

| 37 |

-

url = {https://arxiv.org/abs/2206.01062},

|

| 38 |

-

author = {Pfitzmann, Birgit and Auer, Christoph and Dolfi, Michele and Nassar, Ahmed S and Staar, Peter W J},

|

| 39 |

-

year = {2022}

|

| 40 |

-

}

|

| 41 |

-

"""

|

| 42 |

-

|

| 43 |

-

# You can copy an official description

|

| 44 |

-

_DESCRIPTION = """\

|

| 45 |

-

Accurate document layout analysis is a key requirement for high-quality PDF document conversion. With the recent availability of public, large ground-truth datasets such as PubLayNet and DocBank, deep-learning models have proven to be very effective at layout detection and segmentation. While these datasets are of adequate size to train such models, they severely lack in layout variability since they are sourced from scientific article repositories such as PubMed and arXiv only. Consequently, the accuracy of the layout segmentation drops significantly when these models are applied on more challenging and diverse layouts. In this paper, we present \textit{DocLayNet}, a new, publicly available, document-layout annotation dataset in COCO format. It contains 80863 manually annotated pages from diverse data sources to represent a wide variability in layouts. For each PDF page, the layout annotations provide labelled bounding-boxes with a choice of 11 distinct classes. DocLayNet also provides a subset of double- and triple-annotated pages to determine the inter-annotator agreement. In multiple experiments, we provide baseline accuracy scores (in mAP) for a set of popular object detection models. We also demonstrate that these models fall approximately 10\% behind the inter-annotator agreement. Furthermore, we provide evidence that DocLayNet is of sufficient size. Lastly, we compare models trained on PubLayNet, DocBank and DocLayNet, showing that layout predictions of the DocLayNet-trained models are more robust and thus the preferred choice for general-purpose document-layout analysis.

|

| 46 |

-

"""

|

| 47 |

-

|

| 48 |

-

_HOMEPAGE = "https://developer.ibm.com/exchanges/data/all/doclaynet/"

|

| 49 |

-

|

| 50 |

-

_LICENSE = "https://github.com/DS4SD/DocLayNet/blob/main/LICENSE"

|

| 51 |

-

|

| 52 |

-

# The HuggingFace Datasets library doesn't host the datasets but only points to the original files.

|

| 53 |

-

# This can be an arbitrary nested dict/list of URLs (see below in `_split_generators` method)

|

| 54 |

-

# _URLS = {

|

| 55 |

-

# "first_domain": "https://huggingface.co/great-new-dataset-first_domain.zip",

|

| 56 |

-

# "second_domain": "https://huggingface.co/great-new-dataset-second_domain.zip",

|

| 57 |

-

# }

|

| 58 |

-

|

| 59 |

-

# functions

|

| 60 |

-

def load_image(image_path):

|

| 61 |

-

image = Image.open(image_path).convert("RGB")

|

| 62 |

-

w, h = image.size

|

| 63 |

-

return image, (w, h)

|

| 64 |

-

|

| 65 |

-

logger = datasets.logging.get_logger(__name__)

|

| 66 |

-

|

| 67 |

-

|

| 68 |

-

class DocLayNetConfig(datasets.BuilderConfig):

|

| 69 |

-

"""BuilderConfig for DocLayNet base"""

|

| 70 |

-

|

| 71 |

-

def __init__(self, **kwargs):

|

| 72 |

-

"""BuilderConfig for DocLayNet base.

|

| 73 |

-

Args:

|

| 74 |

-

**kwargs: keyword arguments forwarded to super.

|

| 75 |

-

"""

|

| 76 |

-

super(DocLayNetConfig, self).__init__(**kwargs)

|

| 77 |

-

|

| 78 |

-

|

| 79 |

-

class DocLayNet(datasets.GeneratorBasedBuilder):

|

| 80 |

-

"""

|

| 81 |

-

DocLayNet base is about 10% of the DocLayNet dataset (more information at https://huggingface.co/datasets/pierreguillou/DocLayNet-base)

|

| 82 |

-

DocLayNet: A Large Human-Annotated Dataset for Document-Layout Analysis

|

| 83 |

-

DocLayNet dataset:

|

| 84 |

-

- DocLayNet core dataset: https://codait-cos-dax.s3.us.cloud-object-storage.appdomain.cloud/dax-doclaynet/1.0.0/DocLayNet_core.zip

|

| 85 |

-

- DocLayNet extra dataset: https://codait-cos-dax.s3.us.cloud-object-storage.appdomain.cloud/dax-doclaynet/1.0.0/DocLayNet_extra.zip

|

| 86 |

-

"""

|

| 87 |

-

|

| 88 |

-

VERSION = datasets.Version("1.1.0")

|

| 89 |

-

|

| 90 |

-

# This is an example of a dataset with multiple configurations.

|

| 91 |

-

# If you don't want/need to define several sub-sets in your dataset,

|

| 92 |

-

# just remove the BUILDER_CONFIG_CLASS and the BUILDER_CONFIGS attributes.

|

| 93 |

-

|

| 94 |

-

# If you need to make complex sub-parts in the datasets with configurable options

|

| 95 |

-

# You can create your own builder configuration class to store attribute, inheriting from datasets.BuilderConfig

|

| 96 |

-

# BUILDER_CONFIG_CLASS = MyBuilderConfig

|

| 97 |

-

|

| 98 |

-

# You will be able to load one or the other configurations in the following list with

|

| 99 |

-

# data = datasets.load_dataset('my_dataset', 'first_domain')

|

| 100 |

-

# data = datasets.load_dataset('my_dataset', 'second_domain')

|

| 101 |

-

BUILDER_CONFIGS = [

|

| 102 |

-

DocLayNetConfig(name="DocLayNet", version=datasets.Version("1.0.0"), description="DocLayNeT base dataset"),

|

| 103 |

-

]

|

| 104 |

-

|

| 105 |

-

#DEFAULT_CONFIG_NAME = "DocLayNet" # It's not mandatory to have a default configuration. Just use one if it makes sense.

|

| 106 |

-

|

| 107 |

-

def _info(self):

|

| 108 |

-

|

| 109 |

-

features = datasets.Features(

|

| 110 |

-

{

|

| 111 |

-

"id": datasets.Value("string"),

|

| 112 |

-

"texts": datasets.Sequence(datasets.Value("string")),

|

| 113 |

-

"bboxes_block": datasets.Sequence(datasets.Sequence(datasets.Value("int64"))),

|

| 114 |

-

"bboxes_line": datasets.Sequence(datasets.Sequence(datasets.Value("int64"))),

|

| 115 |

-

"categories": datasets.Sequence(

|

| 116 |

-

datasets.features.ClassLabel(

|

| 117 |

-

names=["Caption", "Footnote", "Formula", "List-item", "Page-footer", "Page-header", "Picture", "Section-header", "Table", "Text", "Title"]

|

| 118 |

-

)

|

| 119 |

-

),

|

| 120 |

-

"image": datasets.features.Image(),

|

| 121 |

-

"pdf": datasets.Value("string"),

|

| 122 |

-

"page_hash": datasets.Value("string"), # unique identifier, equal to filename

|

| 123 |

-

"original_filename": datasets.Value("string"), # original document filename

|

| 124 |

-

"page_no": datasets.Value("int32"), # page number in original document

|

| 125 |

-

"num_pages": datasets.Value("int32"), # total pages in original document

|

| 126 |

-

"original_width": datasets.Value("int32"), # width in pixels @72 ppi

|

| 127 |

-

"original_height": datasets.Value("int32"), # height in pixels @72 ppi

|

| 128 |

-

"coco_width": datasets.Value("int32"), # width in pixels in PNG and COCO format

|

| 129 |

-

"coco_height": datasets.Value("int32"), # height in pixels in PNG and COCO format

|

| 130 |

-

"collection": datasets.Value("string"), # sub-collection name

|

| 131 |

-

"doc_category": datasets.Value("string"), # category type of the document

|

| 132 |

-

}

|

| 133 |

-

)

|

| 134 |

-

|

| 135 |

-

return datasets.DatasetInfo(

|

| 136 |

-

# This is the description that will appear on the datasets page.

|

| 137 |

-

description=_DESCRIPTION,

|

| 138 |

-

# This defines the different columns of the dataset and their types

|

| 139 |

-

features=features, # Here we define them above because they are different between the two configurations

|

| 140 |

-

# If there's a common (input, target) tuple from the features, uncomment supervised_keys line below and

|

| 141 |

-

# specify them. They'll be used if as_supervised=True in builder.as_dataset.

|

| 142 |

-

# supervised_keys=("sentence", "label"),

|

| 143 |

-

# Homepage of the dataset for documentation

|

| 144 |

-

homepage=_HOMEPAGE,

|

| 145 |

-

# License for the dataset if available

|

| 146 |

-

license=_LICENSE,

|

| 147 |

-

# Citation for the dataset

|

| 148 |

-

citation=_CITATION,

|

| 149 |

-

)

|

| 150 |

-

|

| 151 |

-

def _split_generators(self, dl_manager):

|

| 152 |

-

# TODO: This method is tasked with downloading/extracting the data and defining the splits depending on the configuration

|

| 153 |

-

# If several configurations are possible (listed in BUILDER_CONFIGS), the configuration selected by the user is in self.config.name

|

| 154 |

-

|

| 155 |

-

# dl_manager is a datasets.download.DownloadManager that can be used to download and extract URLS

|

| 156 |

-

# It can accept any type or nested list/dict and will give back the same structure with the url replaced with path to local files.

|

| 157 |

-

# By default the archives will be extracted and a path to a cached folder where they are extracted is returned instead of the archive

|

| 158 |

-

|

| 159 |

-

downloaded_file = dl_manager.download_and_extract("https://huggingface.co/datasets/pierreguillou/DocLayNet-base/resolve/main/data/dataset_base.zip")

|

| 160 |

-

return [

|

| 161 |

-

datasets.SplitGenerator(

|

| 162 |

-

name=datasets.Split.TRAIN,

|

| 163 |

-

# These kwargs will be passed to _generate_examples

|

| 164 |

-

gen_kwargs={

|

| 165 |

-

"filepath": f"{downloaded_file}/base_dataset/train/",

|

| 166 |

-

"split": "train",

|

| 167 |

-

},

|

| 168 |

-

),

|

| 169 |

-

datasets.SplitGenerator(

|

| 170 |

-

name=datasets.Split.VALIDATION,

|

| 171 |

-

# These kwargs will be passed to _generate_examples

|

| 172 |

-

gen_kwargs={

|

| 173 |

-

"filepath": f"{downloaded_file}/base_dataset/val/",

|

| 174 |

-

"split": "dev",

|

| 175 |

-

},

|

| 176 |

-

),

|

| 177 |

-

datasets.SplitGenerator(

|

| 178 |

-

name=datasets.Split.TEST,

|

| 179 |

-

# These kwargs will be passed to _generate_examples

|

| 180 |

-

gen_kwargs={

|

| 181 |

-

"filepath": f"{downloaded_file}/base_dataset/test/",

|

| 182 |

-

"split": "test"

|

| 183 |

-

},

|

| 184 |

-

),

|

| 185 |

-

]

|

| 186 |

-

|

| 187 |

-

def _generate_examples(self, filepath, split):

|

| 188 |

-

logger.info("⏳ Generating examples from = %s", filepath)

|

| 189 |

-

ann_dir = os.path.join(filepath, "annotations")

|

| 190 |

-

img_dir = os.path.join(filepath, "images")

|

| 191 |

-

pdf_dir = os.path.join(filepath, "pdfs")

|

| 192 |

-

for guid, file in enumerate(sorted(os.listdir(ann_dir))):

|

| 193 |

-

texts = []

|

| 194 |

-

bboxes_block = []

|

| 195 |

-

bboxes_line = []

|

| 196 |

-

categories = []

|

| 197 |

-

|

| 198 |

-

# get json

|

| 199 |

-

file_path = os.path.join(ann_dir, file)

|

| 200 |

-

with open(file_path, "r", encoding="utf8") as f:

|

| 201 |

-

data = json.load(f)

|

| 202 |

-

|

| 203 |

-

# get image

|

| 204 |

-

image_path = os.path.join(img_dir, file)

|

| 205 |

-

image_path = image_path.replace("json", "png")

|

| 206 |

-

image, size = load_image(image_path)

|

| 207 |

-

|

| 208 |

-

# get pdf

|

| 209 |

-

pdf_path = os.path.join(pdf_dir, file)

|

| 210 |

-

pdf_path = pdf_path.replace("json", "pdf")

|

| 211 |

-

with open(pdf_path, "rb") as pdf_file:

|

| 212 |

-

pdf_bytes = pdf_file.read()

|

| 213 |

-

pdf_encoded_string = base64.b64encode(pdf_bytes)

|

| 214 |

-

|

| 215 |

-

for item in data["form"]:

|

| 216 |

-

text_example, category_example, bbox_block_example, bbox_line_example = item["text"], item["category"], item["box"], item["box_line"]

|

| 217 |

-

texts.append(text_example)

|

| 218 |

-

categories.append(category_example)

|

| 219 |

-

bboxes_block.append(bbox_block_example)

|

| 220 |

-

bboxes_line.append(bbox_line_example)

|

| 221 |

-

|

| 222 |

-

# get all metadata

|

| 223 |

-

page_hash = data["metadata"]["page_hash"]

|

| 224 |

-

original_filename = data["metadata"]["original_filename"]

|

| 225 |

-

page_no = data["metadata"]["page_no"]

|

| 226 |

-

num_pages = data["metadata"]["num_pages"]

|

| 227 |

-

original_width = data["metadata"]["original_width"]

|

| 228 |

-

original_height = data["metadata"]["original_height"]

|

| 229 |

-

coco_width = data["metadata"]["coco_width"]

|

| 230 |

-

coco_height = data["metadata"]["coco_height"]

|

| 231 |

-

collection = data["metadata"]["collection"]

|

| 232 |

-

doc_category = data["metadata"]["doc_category"]

|

| 233 |

-

|

| 234 |

-

yield guid, {"id": str(guid), "texts": texts, "bboxes_block": bboxes_block, "bboxes_line": bboxes_line, "categories": categories, "image": image, "pdf": pdf_encoded_string, "page_hash": page_hash, "original_filename": original_filename, "page_no": page_no, "num_pages": num_pages, "original_width": original_width, "original_height": original_height, "coco_width": coco_width, "coco_height": coco_height, "collection": collection, "doc_category": doc_category}
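
The deleted script stores each single-page PDF in the `pdf` field after `base64.b64encode`. A minimal sketch of how a consumer might recover the raw bytes, assuming the field arrives as either `str` or `bytes`; `decode_pdf_field` is a hypothetical helper name, not part of the script:

```python
import base64


def decode_pdf_field(pdf_field):
    """Return raw PDF bytes from a base64-encoded `pdf` value (str or bytes)."""
    if isinstance(pdf_field, str):
        # Base64 text is plain ASCII, so this encoding step is lossless.
        pdf_field = pdf_field.encode("ascii")
    return base64.b64decode(pdf_field)


# Round trip on stand-in bytes (not a real page from the dataset).
sample_bytes = b"%PDF-1.4 stand-in content"
encoded = base64.b64encode(sample_bytes)
assert decode_pdf_field(encoded) == sample_bytes
assert decode_pdf_field(encoded.decode("ascii")) == sample_bytes
```

The decoded bytes could then be written to a `.pdf` file or handed to a PDF parser of your choice.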

DocLayNet_image_annotated_bounding_boxes_line.png → DocLayNet/doc_lay_net-base-test.parquet

RENAMED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:fbf1d8e83d96960a491bf5ba66438ae56a7330b9ba50404a728d19d02b85f6cb

|

| 3 |

+

size 258288762

|

DocLayNet_image_annotated_bounding_boxes_paragraph.png → DocLayNet/doc_lay_net-base-train-00000-of-00007.parquet

RENAMED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:bf75d041e3aaa4569b351c1e0af0a9c78dcdb7b766dbfa3beed03536c76f47aa

|

| 3 |

+

size 539896229

|

data/dataset_base.zip → DocLayNet/doc_lay_net-base-train-00001-of-00007.parquet

RENAMED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:f4c73489ef4847b96899af032a22d88a98b6a238922f0aadd558453f87c5e615

|

| 3 |

+

size 548248203

|

DocLayNet/doc_lay_net-base-train-00002-of-00007.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:08980b4c471202a8584775bc6ace3939090c03ed17c8f1dab7d9ff80b477e1e2

|

| 3 |

+

size 513024539

|

DocLayNet/doc_lay_net-base-train-00003-of-00007.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e805ef3faa846dc528cf69fd27897350610868fbabbc7ed57f93afff0277e659

|

| 3 |

+

size 553568823

|

DocLayNet/doc_lay_net-base-train-00004-of-00007.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6cf9fcd37314b29bd832129179ffd7592730a2d4aebe219a34b12115476fedc6

|

| 3 |

+

size 533439173

|

DocLayNet/doc_lay_net-base-train-00005-of-00007.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:be1828b64a84eb1a9b19ca9e8a30f94ff56267475e722bb81986e2a51f4b2604

|

| 3 |

+

size 524782450

|

DocLayNet/doc_lay_net-base-train-00006-of-00007.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:504d459fa30a15c00371c566d0231b4765649a24beeadcf7f8f588ee533326a4

|

| 3 |

+

size 472457669

|

DocLayNet/doc_lay_net-base-validation.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:322b14367bd366ef1ef65d724472c13ebfafc683474a6ea5bed0549e89b1ba5e

|

| 3 |

+

size 328851109

|
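
Each parquet entry in this commit is stored as a Git LFS pointer with three fields: `version`, `oid sha256:<digest>`, and `size` in bytes. A minimal sketch, assuming a shard has been downloaded locally, of how a file could be checked against its pointer; `parse_lfs_pointer` and `file_matches_pointer` are hypothetical helper names, not part of any Git LFS tooling:

```python
import hashlib

# Pointer text for the test split, copied from this commit.
TEST_POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:fbf1d8e83d96960a491bf5ba66438ae56a7330b9ba50404a728d19d02b85f6cb
size 258288762
"""


def parse_lfs_pointer(text):
    """Split 'key value' lines of a pointer file into a small dict."""
    fields = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def file_matches_pointer(path, pointer, chunk_size=1 << 20):
    """Hash the file in chunks and compare size and digest with the pointer."""
    hasher = hashlib.new(pointer["algo"])
    size = 0
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
            size += len(chunk)
    return size == pointer["size"] and hasher.hexdigest() == pointer["digest"]


pointer = parse_lfs_pointer(TEST_POINTER)
```

Reading in chunks keeps memory use flat, which matters here because the shards are several hundred megabytes each.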

README.md

DELETED

|

@@ -1,252 +0,0 @@

|

|

| 1 |

-

---

|

| 2 |

-

annotations_creators:

|

| 3 |

-

- crowdsourced

|

| 4 |

-

license: other

|

| 5 |

-

pretty_name: DocLayNet base

|

| 6 |

-

size_categories:

|

| 7 |

-

- 0K<n<1K

|

| 8 |

-

tags:

|

| 9 |

-

- layout-segmentation

|

| 10 |

-

- COCO

|

| 11 |

-

- document-understanding

|

| 12 |

-

- PDF

|

| 13 |

-

- IBM

|

| 14 |

-

task_categories:

|

| 15 |

-

- object-detection

|

| 16 |

-

- image-segmentation

|

| 17 |

-

task_ids:

|

| 18 |

-

- instance-segmentation

|

| 19 |

-

---

|

| 20 |

-

|

| 21 |

-

# Dataset Card for DocLayNet base

|

| 22 |

-

|

| 23 |

-

## About this card (01/27/2023)

|

| 24 |

-

|

| 25 |

-

### Property and license

|

| 26 |

-

|

| 27 |

-

All information from this page but the content of this paragraph has been copied/pasted from [Dataset Card for DocLayNet](https://huggingface.co/datasets/ds4sd/DocLayNet).

|

| 28 |

-

|

| 29 |

-

DocLayNet is a dataset created by Deep Search (IBM Research) published under [license CDLA-Permissive-1.0](https://huggingface.co/datasets/ds4sd/DocLayNet#licensing-information).

|

| 30 |

-

|

| 31 |

-

I do not claim any rights to the data taken from this dataset and published on this page.

|

| 32 |

-

|

| 33 |

-

### DocLayNet dataset

|

| 34 |

-

|

| 35 |

-

[DocLayNet dataset](https://github.com/DS4SD/DocLayNet) (IBM) provides page-by-page layout segmentation ground-truth using bounding-boxes for 11 distinct class labels on 80863 unique pages from 6 document categories.

|

| 36 |

-

|

| 37 |

-

Currently, the dataset can be downloaded through direct links or as a dataset from Hugging Face datasets:

|

| 38 |

-

- direct links: [doclaynet_core.zip](https://codait-cos-dax.s3.us.cloud-object-storage.appdomain.cloud/dax-doclaynet/1.0.0/DocLayNet_core.zip) (28 GiB), [doclaynet_extra.zip](https://codait-cos-dax.s3.us.cloud-object-storage.appdomain.cloud/dax-doclaynet/1.0.0/DocLayNet_extra.zip) (7.5 GiB)

|

| 39 |

-

- Hugging Face dataset library: [dataset DocLayNet](https://huggingface.co/datasets/ds4sd/DocLayNet)

|

| 40 |

-

|

| 41 |

-

### Processing into a format facilitating its use by HF notebooks

|

| 42 |

-

|

| 43 |

-

These 2 options require downloading all the data (approximately 30 GiB), which takes time (about 45 min in Google Colab) and a lot of disk space. This could limit experimentation for people with limited resources.

|

| 44 |

-

|

| 45 |

-

Moreover, even when downloading via the HF datasets library, it is necessary to download the EXTRA zip separately ([doclaynet_extra.zip](https://codait-cos-dax.s3.us.cloud-object-storage.appdomain.cloud/dax-doclaynet/1.0.0/DocLayNet_extra.zip), 7.5 GiB) to associate the annotated bounding boxes with the text extracted by OCR from the PDFs. This operation also requires additional code because the bounding boxes of the texts do not necessarily correspond to the annotated ones (computing the percentage of area shared between the annotated bounding boxes and those of the texts makes it possible to match them).
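
The area comparison mentioned in this paragraph can be sketched as follows. This is an illustration only, assuming boxes in `(x1, y1, x2, y2)` form and a 0.5 threshold; `overlap_ratio` and `best_layout_box` are hypothetical names, and the actual processing code is in the author's notebook, not reproduced here:

```python
def overlap_ratio(text_box, layout_box):
    """Fraction of text_box's area that lies inside layout_box.

    Both boxes are assumed to be (x1, y1, x2, y2) with x1 < x2 and y1 < y2.
    """
    tx1, ty1, tx2, ty2 = text_box
    lx1, ly1, lx2, ly2 = layout_box
    inter_w = max(0, min(tx2, lx2) - max(tx1, lx1))
    inter_h = max(0, min(ty2, ly2) - max(ty1, ly1))
    text_area = (tx2 - tx1) * (ty2 - ty1)
    if text_area <= 0:
        return 0.0
    return (inter_w * inter_h) / text_area


def best_layout_box(text_box, layout_boxes, threshold=0.5):
    """Index of the annotated box covering most of text_box, or None."""
    ratios = [overlap_ratio(text_box, box) for box in layout_boxes]
    if not ratios:
        return None
    best = max(range(len(ratios)), key=ratios.__getitem__)
    return best if ratios[best] >= threshold else None


# A text cell half covered by one annotated box and 80% covered by another.
layout = [(50, 50, 60, 60), (5, 0, 20, 10), (0, 0, 8, 10)]
assert best_layout_box((0, 0, 10, 10), layout) == 2
```

Normalising by the text cell's area (instead of the union, as in IoU) is a design choice made here so that a small text cell fully inside a large paragraph box scores 1.0.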

|

| 46 |

-

|

| 47 |

-

Finally, in order to use Hugging Face notebooks on fine-tuning layout models like LayoutLMv3 or LiLT, DocLayNet data must be processed in a proper format.

|

| 48 |

-

|

| 49 |

-

For all these reasons, I decided to process the DocLayNet dataset:

|

| 50 |

-

- into 3 datasets of different sizes:

|

| 51 |

-

- [DocLayNet small](https://huggingface.co/datasets/pierreguillou/DocLayNet-small) < 1,000 document images (691 train, 64 val, 49 test)

|

| 52 |

-

- [DocLayNet base](https://huggingface.co/datasets/pierreguillou/DocLayNet-base) < 10,000 document images (6910 train, 648 val, 499 test)

|

| 53 |

-

- DocLayNet large with full dataset (to be done)

|

| 54 |

-

- with associated texts,

|

| 55 |

-

- and in a format facilitating their use by HF notebooks.

|

| 56 |

-

|

| 57 |

-

*Note: the layout HF notebooks will greatly help participants of the IBM [ICDAR 2023 Competition on Robust Layout Segmentation in Corporate Documents](https://ds4sd.github.io/icdar23-doclaynet/)!*

|

| 58 |

-

|

| 59 |

-

### Download & overview

|

| 60 |

-

|

| 61 |

-

```

|

| 62 |

-

# !pip install -q datasets

|

| 63 |

-

|

| 64 |

-

from datasets import load_dataset

|

| 65 |

-

|

| 66 |

-

dataset_base = load_dataset("pierreguillou/DocLayNet-base")

|

| 67 |

-

|

| 68 |

-

# overview of dataset_base

|

| 69 |

-

|

| 70 |

-

DatasetDict({

|

| 71 |

-

train: Dataset({

|

| 72 |

-

features: ['id', 'texts', 'bboxes_block', 'bboxes_line', 'categories', 'image', 'pdf', 'page_hash', 'original_filename', 'page_no', 'num_pages', 'original_width', 'original_height', 'coco_width', 'coco_height', 'collection', 'doc_category'],

|

| 73 |

-

num_rows: 6910

|

| 74 |

-

})

|

| 75 |

-

validation: Dataset({

|

| 76 |

-

features: ['id', 'texts', 'bboxes_block', 'bboxes_line', 'categories', 'image', 'pdf', 'page_hash', 'original_filename', 'page_no', 'num_pages', 'original_width', 'original_height', 'coco_width', 'coco_height', 'collection', 'doc_category'],

|

| 77 |

-

num_rows: 648

|

| 78 |

-

})

|

| 79 |

-

test: Dataset({

|

| 80 |

-

features: ['id', 'texts', 'bboxes_block', 'bboxes_line', 'categories', 'image', 'pdf', 'page_hash', 'original_filename', 'page_no', 'num_pages', 'original_width', 'original_height', 'coco_width', 'coco_height', 'collection', 'doc_category'],

|

| 81 |

-

num_rows: 499

|

| 82 |

-

})

|

| 83 |

-

})

|

| 84 |

-

```
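
The `categories` feature is declared as a `ClassLabel` in the deleted loading script, so values read from the dataset are expected to be integer ids. A minimal sketch of mapping them back to names, assuming the label order of the script's `names` list; `decode_categories` is a hypothetical helper:

```python
# Label order copied from the ClassLabel definition in DocLayNet-base.py.
LABEL_NAMES = [
    "Caption", "Footnote", "Formula", "List-item", "Page-footer",
    "Page-header", "Picture", "Section-header", "Table", "Text", "Title",
]

id2label = dict(enumerate(LABEL_NAMES))
label2id = {name: index for index, name in id2label.items()}


def decode_categories(category_ids):
    """Turn a page's list of integer category ids into label names."""
    return [id2label[index] for index in category_ids]


assert decode_categories([9, 7, 8]) == ["Text", "Section-header", "Table"]
```

The same two dictionaries are the usual shape for the `id2label` and `label2id` arguments when configuring a token-classification model.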

|

| 85 |

-

|

| 86 |

-

## Annotated bounding boxes

|

| 87 |

-

|

| 88 |

-

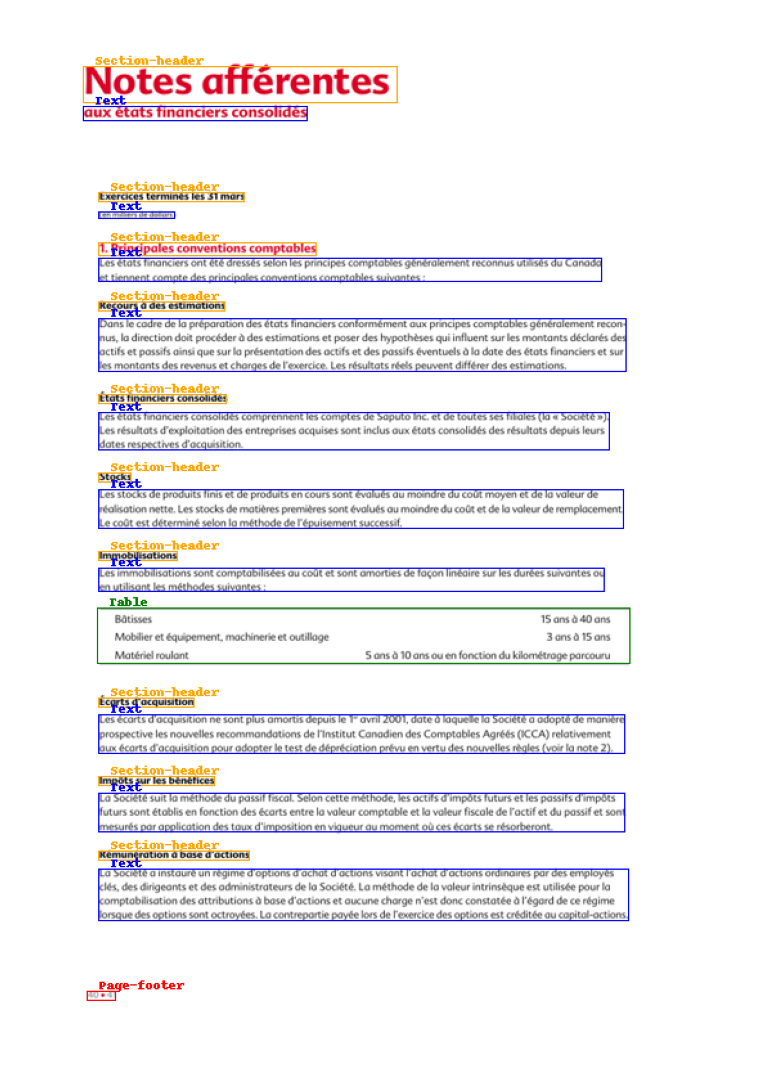

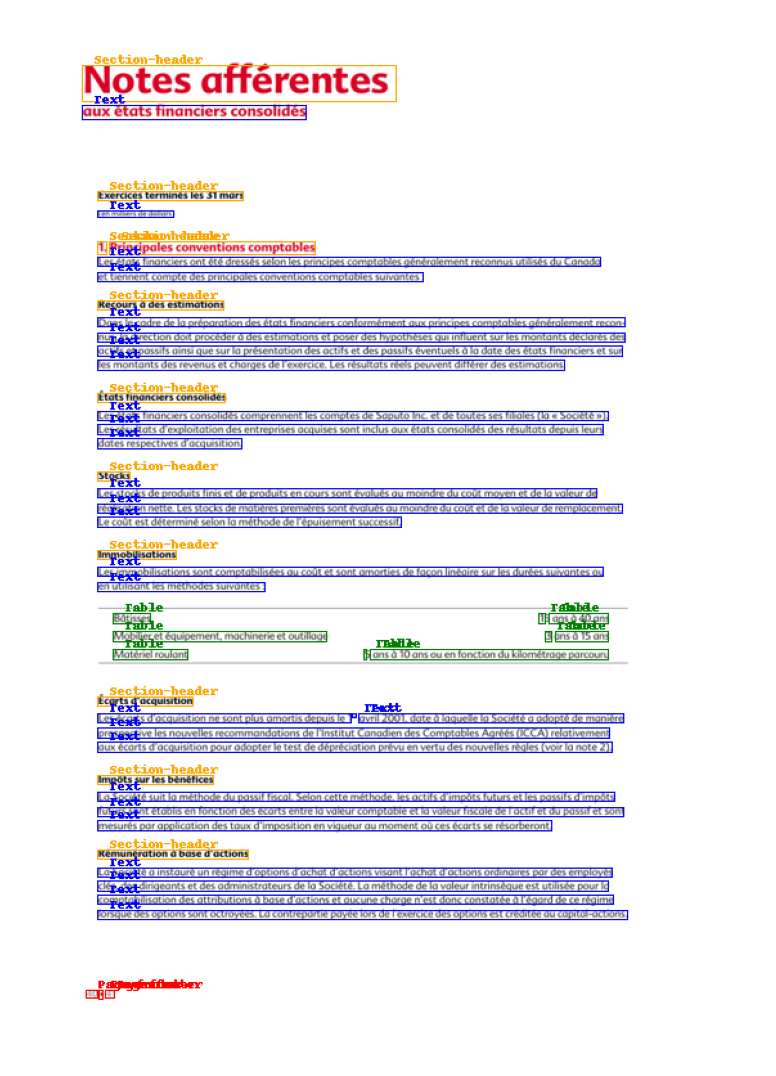

DocLayNet base makes it easy to display a document image with the annotated bounding boxes of paragraphs or lines.

|

| 89 |

-

|

| 90 |

-

Check the notebook [processing_DocLayNet_dataset_to_be_used_by_layout_models_of_HF_hub.ipynb]() in order to get the code.

|

| 91 |

-

|

| 92 |

-

### Paragraphs

|

| 93 |

-

|

| 94 |

-

|

| 95 |

-

|

| 96 |

-

### Lines

|

| 97 |

-

|

| 98 |

-

|

| 99 |

-

|

| 100 |

-

|

| 101 |

-

### HF notebooks

|

| 102 |

-

|

| 103 |

-

- [notebooks LayoutLM](https://github.com/NielsRogge/Transformers-Tutorials/tree/master/LayoutLM) (Niels Rogge)

|

| 104 |

-

- [notebooks LayoutLMv2](https://github.com/NielsRogge/Transformers-Tutorials/tree/master/LayoutLMv2) (Niels Rogge)

|

| 105 |

-

- [notebooks LayoutLMv3](https://github.com/NielsRogge/Transformers-Tutorials/tree/master/LayoutLMv3) (Niels Rogge)

|

| 106 |

-

- [notebooks LiLT](https://github.com/NielsRogge/Transformers-Tutorials/tree/master/LiLt) (Niels Rogge)

|

| 107 |

-

- [Document AI: Fine-tuning LiLT for document-understanding using Hugging Face Transformers](https://github.com/philschmid/document-ai-transformers/blob/main/training/lilt_funsd.ipynb) ([post](https://www.philschmid.de/fine-tuning-lilt#3-fine-tune-and-evaluate-lilt) of Phil Schmid)

|

| 108 |

-

|

| 109 |

-

## Table of Contents

|

| 110 |

-

- [Table of Contents](#table-of-contents)

|

| 111 |

-

- [Dataset Description](#dataset-description)

|

| 112 |

-

- [Dataset Summary](#dataset-summary)

|

| 113 |

-

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

|

| 114 |

-

- [Dataset Structure](#dataset-structure)

|

| 115 |

-

- [Data Fields](#data-fields)

|

| 116 |

-

- [Data Splits](#data-splits)

|

| 117 |

-

- [Dataset Creation](#dataset-creation)

|

| 118 |

-

- [Annotations](#annotations)

|

| 119 |

-

- [Additional Information](#additional-information)

|

| 120 |

-

- [Dataset Curators](#dataset-curators)

|

| 121 |

-

- [Licensing Information](#licensing-information)

|

| 122 |

-

- [Citation Information](#citation-information)

|

| 123 |

-

- [Contributions](#contributions)

|

| 124 |

-

|

| 125 |

-

## Dataset Description

|

| 126 |

-

|

| 127 |

-

- **Homepage:** https://developer.ibm.com/exchanges/data/all/doclaynet/

|

| 128 |

-

- **Repository:** https://github.com/DS4SD/DocLayNet

|

| 129 |

-

- **Paper:** https://doi.org/10.1145/3534678.3539043

|

| 130 |

-

- **Leaderboard:**

|

| 131 |

-

- **Point of Contact:**

|

| 132 |

-

|

| 133 |

-

### Dataset Summary

|

| 134 |

-

|

| 135 |

-

DocLayNet provides page-by-page layout segmentation ground-truth using bounding-boxes for 11 distinct class labels on 80863 unique pages from 6 document categories. It provides several unique features compared to related work such as PubLayNet or DocBank:

|

| 136 |

-

|

| 137 |

-

1. *Human Annotation*: DocLayNet is hand-annotated by well-trained experts, providing a gold-standard in layout segmentation through human recognition and interpretation of each page layout

|

| 138 |

-

2. *Large layout variability*: DocLayNet includes diverse and complex layouts from a large variety of public sources in Finance, Science, Patents, Tenders, Law texts and Manuals

|

| 139 |

-

3. *Detailed label set*: DocLayNet defines 11 class labels to distinguish layout features in high detail.

|

| 140 |

-

4. *Redundant annotations*: A fraction of the pages in DocLayNet are double- or triple-annotated, allowing to estimate annotation uncertainty and an upper-bound of achievable prediction accuracy with ML models

|

| 141 |

-

5. *Pre-defined train- test- and validation-sets*: DocLayNet provides fixed sets for each to ensure proportional representation of the class-labels and avoid leakage of unique layout styles across the sets.

|

| 142 |

-

|

| 143 |

-

### Supported Tasks and Leaderboards

|

| 144 |

-

|

| 145 |

-

We are hosting a competition in ICDAR 2023 based on the DocLayNet dataset. For more information see https://ds4sd.github.io/icdar23-doclaynet/.

|

| 146 |

-

|

| 147 |

-

## Dataset Structure

|

| 148 |

-

|

| 149 |

-

### Data Fields

|

| 150 |

-

|

| 151 |

-

DocLayNet provides four types of data assets:

|

| 152 |

-

|

| 153 |

-

1. PNG images of all pages, resized to square `1025 x 1025px`

|

| 154 |

-

2. Bounding-box annotations in COCO format for each PNG image

|

| 155 |

-

3. Extra: Single-page PDF files matching each PNG image

|

| 156 |

-

4. Extra: JSON file matching each PDF page, which provides the digital text cells with coordinates and content

|

| 157 |

-

|

| 158 |

-

The COCO image records are defined as in this example:

|

| 159 |

-

|

| 160 |

-

```js

|

| 161 |

-

...

|

| 162 |

-

{

|

| 163 |

-

"id": 1,

|

| 164 |

-

"width": 1025,

|

| 165 |

-

"height": 1025,

|

| 166 |

-

"file_name": "132a855ee8b23533d8ae69af0049c038171a06ddfcac892c3c6d7e6b4091c642.png",

|

| 167 |

-

|

| 168 |

-

// Custom fields:

|

| 169 |

-

"doc_category": "financial_reports", // high-level document category

|

| 170 |

-

"collection": "ann_reports_00_04_fancy", // sub-collection name

|

| 171 |

-

"doc_name": "NASDAQ_FFIN_2002.pdf", // original document filename

|

| 172 |

-

"page_no": 9, // page number in original document

|

| 173 |

-

"precedence": 0, // Annotation order, non-zero in case of redundant double- or triple-annotation

|

| 174 |

-

},

|

| 175 |

-

...

|

| 176 |

-

```
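
The custom fields make it straightforward to select pages before training. The following is a minimal sketch, assuming the image records have already been parsed into a Python dictionary with an `images` list; the sample records and the helper names `primary_images` and `pages_per_category` are hypothetical. It keeps only records with `precedence` equal to 0, on the assumption that this selects one annotation per page, and counts pages per `doc_category`.

```python
from collections import Counter

# Hypothetical, shortened image records; real records carry more fields.
coco = {
    "images": [
        {"id": 1, "file_name": "a.png", "doc_category": "financial_reports", "precedence": 0},
        {"id": 2, "file_name": "b.png", "doc_category": "patents", "precedence": 1},
        {"id": 3, "file_name": "c.png", "doc_category": "patents", "precedence": 0},
    ]
}


def primary_images(coco_dict):
    """Return image records whose precedence is 0 (no redundant annotation pass)."""
    return [img for img in coco_dict["images"] if img.get("precedence", 0) == 0]


def pages_per_category(images):
    """Count image records per high-level document category."""
    return Counter(img["doc_category"] for img in images)


primary = primary_images(coco)
counts = pages_per_category(primary)
```

Records with a non-zero `precedence` can be kept in a separate list when the goal is to study annotator agreement.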

The `doc_category` field uses one of the following constants:

```
financial_reports,
scientific_articles,
laws_and_regulations,
government_tenders,
manuals,
patents
```
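
When reading records from several sources, it can help to check the category value early. The following is a minimal sketch, assuming records are plain dictionaries; the names `DOC_CATEGORIES` and `check_doc_category` are illustrative and not defined by the dataset.

```python
# The six constants listed above, collected into an immutable set.
DOC_CATEGORIES = frozenset({
    "financial_reports",
    "scientific_articles",
    "laws_and_regulations",
    "government_tenders",
    "manuals",
    "patents",
})


def check_doc_category(record):
    """Return the record's doc_category, or raise ValueError if it is unknown."""
    value = record.get("doc_category")
    if value not in DOC_CATEGORIES:
        raise ValueError(f"unexpected doc_category: {value!r}")
    return value
```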


### Data Splits

The dataset provides three splits:
- `train`
- `val`
- `test`


## Dataset Creation


### Annotations


#### Annotation process

The labeling guidelines used to train the annotation experts are available at [DocLayNet_Labeling_Guide_Public.pdf](https://raw.githubusercontent.com/DS4SD/DocLayNet/main/assets/DocLayNet_Labeling_Guide_Public.pdf).

#### Who are the annotators?

Annotations are crowdsourced.

## Additional Information


### Dataset Curators

The dataset is curated by the [Deep Search team](https://ds4sd.github.io/) at IBM Research.
You can contact us at [deepsearch-core@zurich.ibm.com](mailto:deepsearch-core@zurich.ibm.com).

Curators:
- Christoph Auer, [@cau-git](https://github.com/cau-git)
- Michele Dolfi, [@dolfim-ibm](https://github.com/dolfim-ibm)
- Ahmed Nassar, [@nassarofficial](https://github.com/nassarofficial)
- Peter Staar, [@PeterStaar-IBM](https://github.com/PeterStaar-IBM)


### Licensing Information

License: [CDLA-Permissive-1.0](https://cdla.io/permissive-1-0/)

### Citation Information


```bib
@article{doclaynet2022,
  title = {DocLayNet: A Large Human-Annotated Dataset for Document-Layout Segmentation},
  doi = {10.1145/3534678.3539043},
  url = {https://doi.org/10.1145/3534678.3539043},
  author = {Pfitzmann, Birgit and Auer, Christoph and Dolfi, Michele and Nassar, Ahmed S and Staar, Peter W J},
  year = {2022},
  isbn = {9781450393850},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  booktitle = {Proceedings of the 28th ACM SIGKDD Conference on Knowledge Discovery and Data Mining},
  pages = {3743–3751},
  numpages = {9},
  location = {Washington DC, USA},
  series = {KDD '22}
}
```


### Contributions


Thanks to [@dolfim-ibm](https://github.com/dolfim-ibm), [@cau-git](https://github.com/cau-git) for adding this dataset.
