Add files using upload-large-folder tool

This view is limited to 50 files because it contains too many changes.

- lerobot/docs/source/act.mdx +92 -0

- lerobot/docs/source/async.mdx +312 -0

- lerobot/docs/source/backwardcomp.mdx +151 -0

- lerobot/docs/source/bring_your_own_policies.mdx +175 -0

- lerobot/docs/source/cameras.mdx +206 -0

- lerobot/docs/source/contributing.md +1 -0

- lerobot/docs/source/debug_processor_pipeline.mdx +299 -0

- lerobot/docs/source/earthrover_mini_plus.mdx +225 -0

- lerobot/docs/source/env_processor.mdx +418 -0

- lerobot/docs/source/envhub.mdx +431 -0

- lerobot/docs/source/envhub_isaaclab_arena.mdx +510 -0

- lerobot/docs/source/envhub_leisaac.mdx +302 -0

- lerobot/docs/source/feetech.mdx +71 -0

- lerobot/docs/source/groot.mdx +131 -0

- lerobot/docs/source/hilserl.mdx +923 -0

- lerobot/docs/source/hilserl_sim.mdx +154 -0

- lerobot/docs/source/hope_jr.mdx +277 -0

- lerobot/docs/source/il_robots.mdx +620 -0

- lerobot/docs/source/implement_your_own_processor.mdx +273 -0

- lerobot/docs/source/index.mdx +23 -0

- lerobot/docs/source/installation.mdx +127 -0

- lerobot/docs/source/integrate_hardware.mdx +476 -0

- lerobot/docs/source/introduction_processors.mdx +314 -0

- lerobot/docs/source/koch.mdx +283 -0

- lerobot/docs/source/lekiwi.mdx +337 -0

- lerobot/docs/source/lerobot-dataset-v3.mdx +314 -0

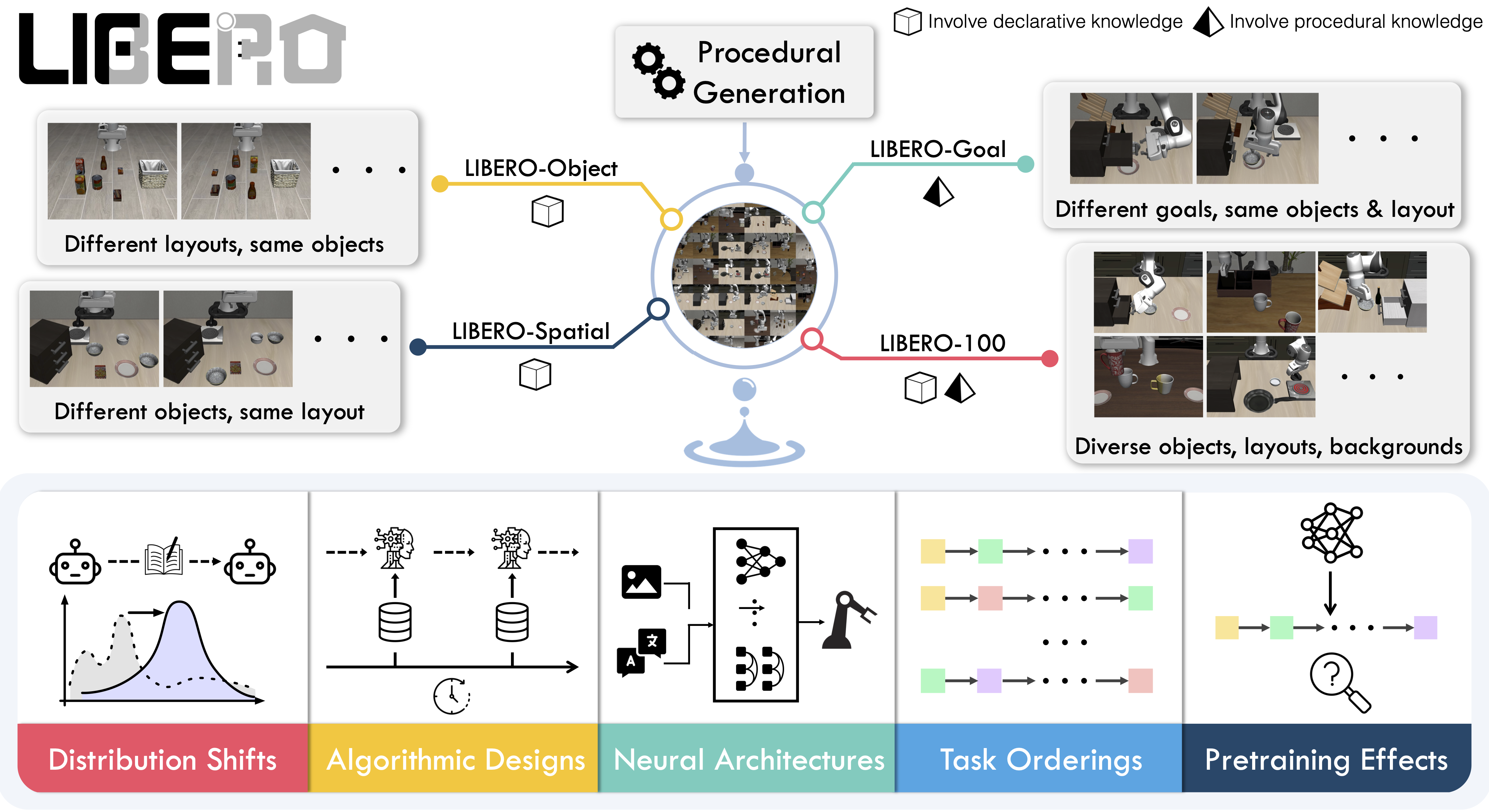

- lerobot/docs/source/libero.mdx +171 -0

- lerobot/docs/source/metaworld.mdx +80 -0

- lerobot/docs/source/multi_gpu_training.mdx +125 -0

- lerobot/docs/source/notebooks.mdx +29 -0

- lerobot/docs/source/peft_training.mdx +62 -0

- lerobot/docs/source/phone_teleop.mdx +191 -0

- lerobot/docs/source/pi0.mdx +101 -0

- lerobot/docs/source/pi05.mdx +123 -0

- lerobot/docs/source/pi0fast.mdx +246 -0

- lerobot/docs/source/policy_act_README.md +14 -0

- lerobot/docs/source/policy_diffusion_README.md +14 -0

- lerobot/docs/source/policy_groot_README.md +27 -0

- lerobot/docs/source/policy_smolvla_README.md +14 -0

- lerobot/docs/source/policy_tdmpc_README.md +14 -0

- lerobot/docs/source/policy_vqbet_README.md +14 -0

- lerobot/docs/source/policy_walloss_README.md +45 -0

- lerobot/docs/source/porting_datasets_v3.mdx +321 -0

- lerobot/docs/source/processors_robots_teleop.mdx +151 -0

- lerobot/docs/source/reachy2.mdx +303 -0

- lerobot/docs/source/rtc.mdx +188 -0

- lerobot/docs/source/sarm.mdx +592 -0

- lerobot/docs/source/smolvla.mdx +116 -0

- lerobot/docs/source/so100.mdx +640 -0

- lerobot/docs/source/so101.mdx +436 -0

lerobot/docs/source/act.mdx

ADDED

@@ -0,0 +1,92 @@


# ACT (Action Chunking with Transformers)

ACT is a **lightweight and efficient policy for imitation learning**, especially well-suited for fine-grained manipulation tasks. It's the **first model we recommend when you're starting out** with LeRobot due to its fast training time, low computational requirements, and strong performance.

<div class="video-container">
  <iframe
    width="100%"
    height="415"
    src="https://www.youtube.com/embed/ft73x0LfGpM"
    title="LeRobot ACT Tutorial"
    frameborder="0"
    allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture"
    allowfullscreen
  ></iframe>
</div>

_Watch this tutorial from the LeRobot team to learn how ACT works: [LeRobot ACT Tutorial](https://www.youtube.com/watch?v=ft73x0LfGpM)_

## Model Overview

Action Chunking with Transformers (ACT) was introduced in the paper [Learning Fine-Grained Bimanual Manipulation with Low-Cost Hardware](https://arxiv.org/abs/2304.13705) by Zhao et al. The policy was designed to enable precise, contact-rich manipulation tasks using affordable hardware and minimal demonstration data.

### Why ACT is Great for Beginners

ACT stands out as an excellent starting point for several reasons:

- **Fast Training**: Trains in a few hours on a single GPU
- **Lightweight**: Only ~80M parameters, making it efficient and easy to work with
- **Data Efficient**: Often achieves high success rates with just 50 demonstrations

### Architecture

ACT uses a transformer-based architecture with three main components:

1. **Vision Backbone**: ResNet-18 processes images from multiple camera viewpoints
2. **Transformer Encoder**: Synthesizes information from camera features, joint positions, and a learned latent variable
3. **Transformer Decoder**: Generates coherent action sequences using cross-attention

The policy takes as input:

- Multiple RGB images (e.g., from wrist cameras, front/top cameras)
- Current robot joint positions
- A latent style variable `z` (learned during training, set to zero during inference)

It outputs a chunk of `k` future actions.
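To make the chunked output concrete, here is a minimal sketch of how a controller might consume a chunk of `k` actions and only query the policy again once the chunk is exhausted. It is an illustration under stated assumptions, not LeRobot code: `dummy_policy` and `run_episode` are hypothetical names, and the "policy" and "dynamics" are toy stand-ins.

```python
from collections import deque


def dummy_policy(observation: float, k: int) -> list[float]:
    """Hypothetical stand-in for ACT: returns a chunk of k future actions."""
    return [observation + step for step in range(1, k + 1)]


def run_episode(num_steps: int, k: int) -> tuple[list[float], int]:
    """Execute num_steps actions, querying the policy only when the chunk is used up."""
    queue: deque[float] = deque()
    executed: list[float] = []
    policy_calls = 0
    observation = 0.0
    for _ in range(num_steps):
        if not queue:
            # The current chunk is exhausted: predict the next k actions at once.
            queue.extend(dummy_policy(observation, k))
            policy_calls += 1
        action = queue.popleft()
        executed.append(action)
        observation = action  # toy dynamics: the state becomes the last action
    return executed, policy_calls


actions, calls = run_episode(num_steps=10, k=5)
print(f"executed {len(actions)} actions with {calls} policy calls")
```

With a chunk size of `k`, the policy is called roughly once every `k` control steps instead of once per step, which is the idea behind action chunking.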

## Installation Requirements

1. Install LeRobot by following our [Installation Guide](./installation).
2. ACT is included in the base LeRobot installation, so no additional dependencies are needed!

## Training ACT

ACT works seamlessly with the standard LeRobot training pipeline. Here's a complete example for training ACT on your dataset:

```bash
lerobot-train \
  --dataset.repo_id=${HF_USER}/your_dataset \
  --policy.type=act \
  --output_dir=outputs/train/act_your_dataset \
  --job_name=act_your_dataset \
  --policy.device=cuda \
  --wandb.enable=true \
  --policy.repo_id=${HF_USER}/act_policy
```

### Training Tips

1. **Start with defaults**: ACT's default hyperparameters work well for most tasks
2. **Training duration**: Expect a few hours for 100k training steps on a single GPU
3. **Batch size**: Start with batch size 8 and adjust based on your GPU memory

### Train using Google Colab

If your local computer doesn't have a powerful GPU, you can utilize Google Colab to train your model by following the [ACT training notebook](./notebooks#training-act).

## Evaluating ACT

Once training is complete, you can evaluate your ACT policy using the `lerobot-record` command with your trained policy. This will run inference and record evaluation episodes:

```bash
lerobot-record \
  --robot.type=so100_follower \
  --robot.port=/dev/ttyACM0 \
  --robot.id=my_robot \
  --robot.cameras="{ front: {type: opencv, index_or_path: 0, width: 640, height: 480, fps: 30}}" \
  --display_data=true \
  --dataset.repo_id=${HF_USER}/eval_act_your_dataset \
  --dataset.num_episodes=10 \
  --dataset.single_task="Your task description" \
  --policy.path=${HF_USER}/act_policy
```

lerobot/docs/source/async.mdx

ADDED

@@ -0,0 +1,312 @@


# Asynchronous Inference

With our [SmolVLA](https://huggingface.co/papers/2506.01844) we introduced a new way to run inference on real-world robots, **decoupling action prediction from action execution**.
In this tutorial, we'll show how to use asynchronous inference (_async inference_) with a finetuned version of SmolVLA, and with any of the other policies supported by LeRobot.
**Try async inference with all the policies** supported by LeRobot!

**What you'll learn:**

1. Why asynchronous inference matters and how it compares to more traditional, sequential inference.
2. How to spin up a `PolicyServer` and connect a `RobotClient` from the same machine, and even over the network.
3. How to tune key parameters (`actions_per_chunk`, `chunk_size_threshold`) for your robot and policy.

If you get stuck, hop into our [Discord community](https://discord.gg/s3KuuzsPFb)!

In a nutshell: with _async inference_, your robot keeps acting while the policy server is already busy computing the next chunk of actions, eliminating "wait-for-inference" lags and unlocking smoother, more reactive behaviours.
This is fundamentally different from synchronous inference (sync), where the robot stays idle while the policy computes the next chunk of actions.

---

## Getting started with async inference

You can read more information on asynchronous inference in our [blogpost](https://huggingface.co/blog/async-robot-inference). This guide is designed to help you quickly set up and run asynchronous inference in your environment.

First, install `lerobot` with the `async` tag, to install the extra dependencies required to run async inference.

```shell
pip install -e ".[async]"
```

Then, spin up a policy server (in one terminal, or on a separate machine) specifying the host address and port for the client to connect to.
You can spin up a policy server by running:

```shell
python -m lerobot.async_inference.policy_server \
  --host=127.0.0.1 \
  --port=8080
```

This will start a policy server listening on `127.0.0.1:8080` (`localhost`, port 8080). At this stage, the policy server is empty, as all information about which policy to run and with which parameters is specified during the first handshake with the client. Spin up a client with:

```shell
# SERVER  --server_address: the host address and port of the policy server
# ROBOT   --robot.type, --robot.port: your robot type and your robot port
# ROBOT   --robot.id: your robot id, to load calibration file
# POLICY  --robot.cameras: the cameras used to acquire frames, with keys matching the keys expected by the policy
# POLICY  --task: the task to run the policy on (`Fold my t-shirt`). Not necessarily defined for all policies, such as `act`
# POLICY  --policy_type: the type of policy to run (smolvla, act, etc)
# POLICY  --pretrained_name_or_path: the model name/path on server to the checkpoint to run (e.g., lerobot/smolvla_base)
# POLICY  --policy_device: the device to run the policy on, on the server
# POLICY  --actions_per_chunk: the number of actions to output at once
# CLIENT  --chunk_size_threshold: the threshold for the chunk size before sending a new observation to the server
# CLIENT  --aggregate_fn_name: the function to aggregate actions on overlapping portions
# CLIENT  --debug_visualize_queue_size: whether to visualize the queue size at runtime
python -m lerobot.async_inference.robot_client \
  --server_address=127.0.0.1:8080 \
  --robot.type=so100_follower \
  --robot.port=/dev/tty.usbmodem585A0076841 \
  --robot.id=follower_so100 \
  --robot.cameras="{ laptop: {type: opencv, index_or_path: 0, width: 1920, height: 1080, fps: 30}, phone: {type: opencv, index_or_path: 1, width: 1920, height: 1080, fps: 30}}" \
  --task="dummy" \
  --policy_type=your_policy_type \
  --pretrained_name_or_path=user/model \
  --policy_device=mps \
  --actions_per_chunk=50 \
  --chunk_size_threshold=0.5 \
  --aggregate_fn_name=weighted_average \
  --debug_visualize_queue_size=True
```

In summary, you need to specify instructions for:

- `SERVER`: the address and port of the policy server
- `ROBOT`: the type of robot to connect to, the port to connect to, and the local `id` of the robot
- `POLICY`: the type of policy to run, and the model name/path on server to the checkpoint to run. You also need to specify which device the server should use, and how many actions to output at once (capped at the policy max actions value).
- `CLIENT`: the threshold for the chunk size before sending a new observation to the server, and the function to aggregate actions on overlapping portions. Optionally, you can also visualize the queue size at runtime, to help you tune the `CLIENT` parameters.

Importantly,

- `actions_per_chunk` and `chunk_size_threshold` are key parameters to tune for your setup.
- `aggregate_fn_name` is the function to aggregate actions on overlapping portions. You can either add a new one to a registry of functions, or add your own in `robot_client.py`.
- `debug_visualize_queue_size` is a useful tool to tune the `CLIENT` parameters.
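As a rough illustration of what "a registry of functions" for aggregation could look like, here is a minimal sketch. Everything in it is an assumption made for illustration: the names `AGGREGATE_FNS` and `register`, the list-of-floats action representation, and the `0.75` weight are hypothetical and are not taken from the LeRobot source.

```python
from typing import Callable

# Hypothetical signature: merge the action already queued for a timestep (old)
# with the newly predicted action for the same timestep (new).
AggregateFn = Callable[[list[float], list[float]], list[float]]


def latest_only(old: list[float], new: list[float]) -> list[float]:
    """Discard the queued action and keep the fresh prediction."""
    return list(new)


def weighted_average(old: list[float], new: list[float], new_weight: float = 0.75) -> list[float]:
    """Blend both actions, trusting the fresh prediction more (weight is illustrative)."""
    return [(1.0 - new_weight) * o + new_weight * n for o, n in zip(old, new)]


# Hypothetical registry keyed by the name passed on the command line.
AGGREGATE_FNS: dict[str, AggregateFn] = {
    "latest_only": latest_only,
    "weighted_average": weighted_average,
}


def register(name: str, fn: AggregateFn) -> None:
    """Add a custom aggregation function under a new name."""
    if name in AGGREGATE_FNS:
        raise ValueError(f"aggregate function '{name}' is already registered")
    AGGREGATE_FNS[name] = fn


register("average", lambda old, new: [(o + n) / 2 for o, n in zip(old, new)])
merged = AGGREGATE_FNS["weighted_average"]([0.0, 2.0], [4.0, 2.0])
print(merged)
```

Looking functions up by name keeps the command-line flag a plain string while still letting you plug in your own blending rule.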

## Done! You should see your robot moving around by now 😉

## Async vs. synchronous inference

Synchronous inference relies on interleaving action chunk prediction and action execution. This inherently results in _idle frames_, frames where the robot sits idle awaiting the policy's output: a new action chunk.
In turn, inference is plagued by evident real-time lags, where the robot simply stops acting due to the lack of available actions.
With robotics models increasing in size, this problem risks becoming only more severe.

<p align="center">
  <img
    src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/async-inference/sync.png"
    width="80%"
  ></img>
</p>
<p align="center">
  <i>Synchronous inference</i> makes the robot idle while the policy is
  computing the next chunk of actions.
</p>

To overcome this, we design async inference, a paradigm where action planning and execution are decoupled, resulting in (1) higher adaptability and, most importantly, (2) no idle frames.
Crucially, with async inference, the next action chunk is computed _before_ the current one is exhausted, resulting in no idleness.
Higher adaptability is ensured by aggregating the different action chunks on overlapping portions, obtaining an up-to-date plan and a tighter control loop.

<p align="center">
  <img
    src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/async-inference/async.png"
    width="80%"
  ></img>
</p>
<p align="center">
  <i>Asynchronous inference</i> results in no idleness because the next chunk is
  computed before the current chunk is exhausted.
</p>
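The difference between the two modes can be sketched with a small discrete-time simulation. This is a toy model under stated assumptions, not the LeRobot implementation: time advances in control ticks, inference takes a fixed `latency_ticks`, only one request is in flight at a time, and `count_idle_ticks` is a hypothetical helper. A threshold of `0.0` reproduces the synchronous case, where a new chunk is requested only once the queue is empty.

```python
def count_idle_ticks(
    total_ticks: int,
    actions_per_chunk: int,
    latency_ticks: int,
    threshold: float,
) -> int:
    """Count control ticks during which the robot has no action to execute."""
    queue = 0                   # actions currently available to the robot
    arrival_tick = None         # tick at which the pending chunk arrives, if any
    executed_since_request = 0  # actions executed while inference was running
    idle = 0
    for tick in range(total_ticks):
        if arrival_tick is not None and tick >= arrival_tick:
            # Actions for timesteps executed during inference are already stale.
            queue = max(queue, actions_per_chunk - executed_since_request)
            arrival_tick = None
        if arrival_tick is None and queue <= threshold * actions_per_chunk:
            # Queue is at or below the threshold: send a fresh observation.
            arrival_tick = tick + latency_ticks
            executed_since_request = 0
        if queue > 0:
            queue -= 1
            if arrival_tick is not None:
                executed_since_request += 1
        else:
            idle += 1
    return idle


sync_idle = count_idle_ticks(100, actions_per_chunk=10, latency_ticks=3, threshold=0.0)
async_idle = count_idle_ticks(100, actions_per_chunk=10, latency_ticks=3, threshold=0.5)
print(f"sync idle ticks: {sync_idle}, async idle ticks: {async_idle}")
```

In this toy setting the synchronous run idles during every inference call, while the asynchronous run idles only while waiting for the very first chunk.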

---

## Start the Policy Server

Policy servers are wrappers around a `PreTrainedPolicy`, interfacing it with observations coming from a robot client.
Policy servers are initialized as empty containers which are populated with the requested policy specified in the initial handshake between the robot client and the policy server.
As such, spinning up a policy server is as easy as specifying the host address and port. If you're running the policy server on the same machine as the robot client, you can use `localhost` as the host address.

<hfoptions id="start_policy_server">
<hfoption id="Command">
```bash
python -m lerobot.async_inference.policy_server \
  --host=127.0.0.1 \
  --port=8080
```
</hfoption>
<hfoption id="API example">

<!-- prettier-ignore-start -->
```python
from lerobot.async_inference.configs import PolicyServerConfig
from lerobot.async_inference.policy_server import serve

config = PolicyServerConfig(
    host="localhost",
    port=8080,
)
serve(config)
```
<!-- prettier-ignore-end -->

</hfoption>
</hfoptions>

This listens on `localhost:8080` for an incoming connection from the associated `RobotClient`, which will communicate which policy to run during the first client-server handshake.

---

## Launch the Robot Client

`RobotClient` is a wrapper around a `Robot` instance, which it connects to the (possibly remote) `PolicyServer`.
The `RobotClient` streams observations to the `PolicyServer`, and receives action chunks obtained by running inference on the server (which we assume to have better computational resources than the robot controller).

<hfoptions id="start_robot_client">
<hfoption id="Command">
```bash
# SERVER  --server_address: the host address and port of the policy server
# ROBOT   --robot.type, --robot.port: your robot type and your robot port
# ROBOT   --robot.id: your robot id, to load calibration file
# POLICY  --robot.cameras: the cameras used to acquire frames, with keys matching the keys expected by the policy
# POLICY  --task: the task to run the policy on (`Fold my t-shirt`). Not necessarily defined for all policies, such as `act`
# POLICY  --policy_type: the type of policy to run (smolvla, act, etc)
# POLICY  --pretrained_name_or_path: the model name/path on server to the checkpoint to run (e.g., lerobot/smolvla_base)
# POLICY  --policy_device: the device to run the policy on, on the server
# POLICY  --actions_per_chunk: the number of actions to output at once
# CLIENT  --chunk_size_threshold: the threshold for the chunk size before sending a new observation to the server
# CLIENT  --aggregate_fn_name: the function to aggregate actions on overlapping portions
# CLIENT  --debug_visualize_queue_size: whether to visualize the queue size at runtime
python -m lerobot.async_inference.robot_client \
  --server_address=127.0.0.1:8080 \
  --robot.type=so100_follower \
  --robot.port=/dev/tty.usbmodem585A0076841 \
  --robot.id=follower_so100 \
  --robot.cameras="{ laptop: {type: opencv, index_or_path: 0, width: 1920, height: 1080, fps: 30}, phone: {type: opencv, index_or_path: 1, width: 1920, height: 1080, fps: 30}}" \
  --task="dummy" \
  --policy_type=your_policy_type \
  --pretrained_name_or_path=user/model \
  --policy_device=mps \
  --actions_per_chunk=50 \
  --chunk_size_threshold=0.5 \
  --aggregate_fn_name=weighted_average \
  --debug_visualize_queue_size=True
```
</hfoption>
<hfoption id="API example">

<!-- prettier-ignore-start -->
```python
import threading
from lerobot.robots.so_follower import SO100FollowerConfig
from lerobot.cameras.opencv.configuration_opencv import OpenCVCameraConfig
from lerobot.async_inference.configs import RobotClientConfig
from lerobot.async_inference.robot_client import RobotClient
from lerobot.async_inference.helpers import visualize_action_queue_size

# 1. Create the robot instance
# Check out the cameras available in your setup by running `python lerobot/find_cameras.py`
# these cameras must match the ones expected by the policy
# check the config.json on the Hub for the policy you are using
camera_cfg = {
    "top": OpenCVCameraConfig(index_or_path=0, width=640, height=480, fps=30),
    "side": OpenCVCameraConfig(index_or_path=1, width=640, height=480, fps=30)
}

robot_cfg = SO100FollowerConfig(
    port="/dev/tty.usbmodem585A0076841",
    id="follower_so100",
    cameras=camera_cfg
)

# 2. Create client configuration
client_cfg = RobotClientConfig(
    robot=robot_cfg,
    server_address="localhost:8080",
    policy_device="mps",
    policy_type="smolvla",
    pretrained_name_or_path="<user>/smolvla_async",
    chunk_size_threshold=0.5,
    actions_per_chunk=50,  # make sure this is less than the max actions of the policy
)

# 3. Create and start client
client = RobotClient(client_cfg)

# 4. Specify the task
task = "Don't do anything, stay still"

if client.start():
    # Start action receiver thread
    action_receiver_thread = threading.Thread(target=client.receive_actions, daemon=True)
    action_receiver_thread.start()

    try:
        # Run the control loop
        client.control_loop(task)
    except KeyboardInterrupt:
        client.stop()
        action_receiver_thread.join()
        # (Optionally) plot the action queue size
        visualize_action_queue_size(client.action_queue_size)
```
<!-- prettier-ignore-end -->

</hfoption>
</hfoptions>

The following two parameters are key in every setup:

<table>
  <thead>
    <tr>
      <th>Hyperparameter</th>
      <th>Default</th>
      <th>What it does</th>
    </tr>
  </thead>
  <tbody>
    <tr>
      <td>
        <code>actions_per_chunk</code>
      </td>
      <td>50</td>
      <td>
        How many actions the policy outputs at once. Typical values: 10-50.
      </td>
    </tr>
    <tr>
      <td>
        <code>chunk_size_threshold</code>
      </td>
      <td>0.7</td>
      <td>
        The queue fill ratio at or below which the client sends a fresh
        observation (for example, <code>0.5</code> means the queue is ≤ 50%
        full). Value in [0, 1].
      </td>
    </tr>
  </tbody>
</table>

<Tip>
  Different values of `actions_per_chunk` and `chunk_size_threshold` do result
  in different behaviours.
</Tip>

On the one hand, increasing the value of `actions_per_chunk` reduces the likelihood of ending up with no actions to execute, as more actions will be available when the new chunk is computed.
However, larger values of `actions_per_chunk` might also result in less precise actions, due to the compounding errors that come with predicting actions over longer timespans.

On the other hand, increasing the value of `chunk_size_threshold` sends observations to the `PolicyServer` for inference more often, resulting in a larger number of updated action chunks that overlap on significant portions. This results in high adaptability, in the limit predicting one action chunk for each observation, which is in turn only marginally consumed while a new one is produced.
This option also puts more pressure on the inference pipeline, as a consequence of the many requests. Conversely, values of `chunk_size_threshold` close to 0.0 collapse to the synchronous edge case, whereby new observations are only sent out whenever the current chunk is exhausted.
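The rule described above can be written down in a few lines. This is a minimal sketch of the decision as the text states it, assuming the queue fill ratio is measured against `actions_per_chunk`; `should_send_observation` is a hypothetical helper, not a function from the LeRobot codebase.

```python
def should_send_observation(
    queue_size: int,
    actions_per_chunk: int,
    chunk_size_threshold: float,
) -> bool:
    """Return True when the queue fill ratio is at or below the threshold."""
    if actions_per_chunk <= 0:
        raise ValueError("actions_per_chunk must be positive")
    if not 0.0 <= chunk_size_threshold <= 1.0:
        raise ValueError("chunk_size_threshold must be in [0, 1]")
    return queue_size / actions_per_chunk <= chunk_size_threshold


# Sweep a few thresholds for a queue that is 40% full (20 of 50 actions left).
for threshold in (0.0, 0.5, 1.0):
    decision = should_send_observation(20, 50, threshold)
    print(f"threshold={threshold}: send observation -> {decision}")
```

At `0.0` the check only passes once the queue is empty (the synchronous edge case), while at `1.0` it passes at every step.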

We found the default values of `actions_per_chunk` and `chunk_size_threshold` to work well in the experiments we developed for the [SmolVLA paper](https://huggingface.co/papers/2506.01844), but recommend experimenting with different values to find the best fit for your setup.

### Tuning async inference for your setup

1. **Choose your computational resources carefully.** [PI0](https://huggingface.co/lerobot/pi0) occupies 14GB of memory at inference time, while [SmolVLA](https://huggingface.co/lerobot/smolvla_base) requires only ~2GB. You should identify the best computational resource for your use case, keeping in mind that smaller policies require fewer computational resources. The combination of policy and device used (CPU-intensive, using MPS, or the number of CUDA cores on a given NVIDIA GPU) directly impacts the average inference latency you should expect.
2. **Adjust your `fps` based on inference latency.** While the server generates a new action chunk, the client is not idle and is stepping through its current action queue. If the two processes happen at fundamentally different speeds, the client might end up with an empty queue. As such, you should reduce your fps if you consistently run out of actions in the queue.
3. **Adjust `chunk_size_threshold`**.
   - Values closer to `0.0` result in almost sequential behavior. Values closer to `1.0` send an observation at nearly every step (more bandwidth, and relies on a good world model).
   - We found values around 0.5-0.6 to work well. If you want to tweak this, spin up a `RobotClient` setting the `--debug_visualize_queue_size` to `True`. This will plot the action queue size evolution at runtime, and you can use it to find the value of `chunk_size_threshold` that works best for your setup.
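The interplay between `fps`, inference latency, and the two parameters can be estimated with a back-of-the-envelope calculation. The sketch below assumes a simplified model that is not taken from the LeRobot source: when an observation is sent, the queue holds about `chunk_size_threshold * actions_per_chunk` actions, and those must last until the new chunk arrives. Network jitter and discarded stale actions are ignored, and `max_sustainable_fps` is a hypothetical helper.

```python
def max_sustainable_fps(
    actions_per_chunk: int,
    chunk_size_threshold: float,
    latency_s: float,
) -> float:
    """Highest control rate at which the remaining actions outlast one inference call."""
    if latency_s <= 0:
        raise ValueError("latency_s must be positive")
    # Actions still queued at the moment the observation is sent.
    actions_left = chunk_size_threshold * actions_per_chunk
    # They are consumed at `fps` actions per second and must cover `latency_s`.
    return actions_left / latency_s


for latency in (0.5, 1.25):
    limit = max_sustainable_fps(actions_per_chunk=50, chunk_size_threshold=0.5, latency_s=latency)
    print(f"latency={latency}s -> keep fps at or below {limit}")
```

If the control rate you want exceeds this estimate, the text's advice applies: lower `fps`, raise `chunk_size_threshold`, or use faster hardware for the policy server.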

|

| 282 |

+

|

| 283 |

+

<p align="center">

|

| 284 |

+

<img

|

| 285 |

+

src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/async-inference/queues.png"

|

| 286 |

+

width="80%"

|

| 287 |

+

></img>

|

| 288 |

+

</p>

|

| 289 |

+

<p align="center">

|

| 290 |

+

<i>

|

| 291 |

+

The action queue size is plotted at runtime when the

|

| 292 |

+

`--debug_visualize_queue_size` flag is passed, for various levels of

|

| 293 |

+

`chunk_size_threshold` (`g` in the SmolVLA paper).

|

| 294 |

+

</i>

|

| 295 |

+

</p>

|

| 296 |

+

|

| 297 |

+

---

|

| 298 |

+

|

| 299 |

+

## Conclusion

|

| 300 |

+

|

| 301 |

+

Asynchronous inference represents a significant advancement in real-time robotics control, addressing the fundamental challenge of inference latency that has long plagued robotics applications. Through this tutorial, you've learned how to implement a complete async inference pipeline that eliminates idle frames and enables smoother, more reactive robot behaviors.

|

| 302 |

+

|

| 303 |

+

**Key Takeaways:**

|

| 304 |

+

|

| 305 |

+

- **Paradigm Shift**: Async inference decouples action prediction from execution, allowing robots to continue acting while new action chunks are computed in parallel

|

| 306 |

+

- **Performance Benefits**: Eliminates "wait-for-inference" lags that are inherent in synchronous approaches, becoming increasingly important as policy models grow larger

|

| 307 |

+

- **Flexible Architecture**: The server-client design enables distributed computing, where inference can run on powerful remote hardware while maintaining real-time robot control

|

| 308 |

+

- **Tunable Parameters**: Success depends on properly configuring `actions_per_chunk` and `chunk_size_threshold` for your specific hardware, policy, and task requirements

|

| 309 |

+

- **Universal Compatibility**: Works with all LeRobot-supported policies, from lightweight ACT models to vision-language models like SmolVLA

|

| 310 |

+

|

| 311 |

+

Start experimenting with the default parameters, monitor your action queue sizes, and iteratively refine your setup to achieve optimal performance for your specific use case.

|

| 312 |

+

If you want to discuss this further, hop into our [Discord community](https://discord.gg/s3KuuzsPFb), or open an issue on our [GitHub repository](https://github.com/huggingface/lerobot/issues).

|

lerobot/docs/source/backwardcomp.mdx

ADDED

|

@@ -0,0 +1,151 @@

|


| 1 |

+

# Backward compatibility

|

| 2 |

+

|

| 3 |

+

## Policy Normalization Migration (PR #1452)

|

| 4 |

+

|

| 5 |

+

**Breaking Change**: LeRobot policies no longer have built-in normalization layers embedded in their weights. Normalization is now handled by external `PolicyProcessorPipeline` components.

|

| 6 |

+

|

| 7 |

+

### What changed?

|

| 8 |

+

|

| 9 |

+

| | Before PR #1452 | After PR #1452 |

|

| 10 |

+

| -------------------------- | ------------------------------------------------ | ------------------------------------------------------------ |

|

| 11 |

+

| **Normalization Location** | Embedded in model weights (`normalize_inputs.*`) | External `PolicyProcessorPipeline` components |

|

| 12 |

+

| **Model State Dict** | Contains normalization statistics | **Clean weights only** - no normalization parameters |

|

| 13 |

+

| **Usage** | `policy(batch)` handles everything | `preprocessor(batch)` → `policy(...)` → `postprocessor(...)` |

|

| 14 |

+

|

| 15 |

+

### Impact on existing models

|

| 16 |

+

|

| 17 |

+

- Models trained **before** PR #1452 have normalization embedded in their weights

|

| 18 |

+

- These models need migration to work with the new `PolicyProcessorPipeline` system

|

| 19 |

+

- The migration extracts normalization statistics and creates separate processor pipelines

|

| 20 |

+

|

| 21 |

+

### Migrating old models

|

| 22 |

+

|

| 23 |

+

Use the migration script to convert models with embedded normalization:

|

| 24 |

+

|

| 25 |

+

```shell

|

| 26 |

+

python src/lerobot/processor/migrate_policy_normalization.py \

|

| 27 |

+

--pretrained-path lerobot/act_aloha_sim_transfer_cube_human \

|

| 28 |

+

--push-to-hub \

|

| 29 |

+

--branch migrated

|

| 30 |

+

```

|

| 31 |

+

|

| 32 |

+

The script:

|

| 33 |

+

|

| 34 |

+

1. **Extracts** normalization statistics from model weights

|

| 35 |

+

2. **Creates** external preprocessor and postprocessor pipelines

|

| 36 |

+

3. **Removes** normalization layers from model weights

|

| 37 |

+

4. **Saves** clean model + processor pipelines

|

| 38 |

+

5. **Pushes** to Hub with automatic PR creation
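
The extraction and removal steps above can be pictured with a small sketch. It is not the migration script itself: it only shows the idea of splitting a state dict by key prefix. The `normalize_inputs.` prefix comes from the table above; the other two prefixes, the helper name `split_state_dict`, and the example keys are assumptions for illustration.

```python
from typing import Any

# Only `normalize_inputs.` appears in this document; the other prefixes are assumed.
NORMALIZATION_PREFIXES = ("normalize_inputs.", "normalize_targets.", "unnormalize_outputs.")


def split_state_dict(
    state_dict: dict[str, Any], prefixes: tuple[str, ...] = NORMALIZATION_PREFIXES
) -> tuple[dict[str, Any], dict[str, Any]]:
    """Separate normalization entries from the remaining model weights."""
    stats = {k: v for k, v in state_dict.items() if k.startswith(prefixes)}
    clean = {k: v for k, v in state_dict.items() if not k.startswith(prefixes)}
    return clean, stats


# Hypothetical legacy checkpoint with one normalization buffer and one weight.
legacy = {
    "normalize_inputs.buffer_observation_state.mean": [0.1, 0.2],
    "model.encoder.weight": [1.0, 2.0],
}
clean_weights, norm_stats = split_state_dict(legacy)
print(sorted(clean_weights))
print(sorted(norm_stats))
```

In this picture, `clean_weights` is what the migrated model would keep, and `norm_stats` is what would feed the external preprocessor and postprocessor pipelines.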

|

| 39 |

+

|

| 40 |

+

### Using migrated models

|

| 41 |

+

|

| 42 |

+

```python
# New usage pattern (after migration)
from lerobot.policies.factory import make_policy, make_pre_post_processors

# Load model and processors separately
policy = make_policy(config, ds_meta=dataset.meta)
preprocessor, postprocessor = make_pre_post_processors(
    policy_cfg=config,
    dataset_stats=dataset.meta.stats
)

# Process data through pipeline
processed_batch = preprocessor(raw_batch)
action = policy.select_action(processed_batch)
final_action = postprocessor(action)
```

|

| 58 |

+

|

| 59 |

+

## Hardware API redesign

|

| 60 |

+

|

| 61 |

+

PR [#777](https://github.com/huggingface/lerobot/pull/777) improves the LeRobot calibration but is **not backward-compatible**. Below is an overview of what changed and how you can continue to work with datasets created before this pull request.

|

| 62 |

+

|

| 63 |

+

### What changed?

|

| 64 |

+

|

| 65 |

+

| | Before PR #777 | After PR #777 |

|

| 66 |

+

| --------------------------------- | ------------------------------------------------- | ------------------------------------------------------------ |

|

| 67 |

+

| **Joint range** | Degrees `-180...180°` | **Normalised range** Joints: `-100...100` Gripper: `0...100` |

|

| 68 |

+

| **Zero position (SO100 / SO101)** | Arm fully extended horizontally | **In middle of the range for each joint** |

|

| 69 |

+

| **Boundary handling** | Software safeguards to detect ±180° wrap-arounds | No wrap-around logic needed due to mid-range zero |

|

| 70 |

+

|

| 71 |

+

---

|

| 72 |

+

|

| 73 |

+

### Impact on existing datasets

|

| 74 |

+

|

| 75 |

+

- Recorded trajectories created **before** PR #777 will replay incorrectly if loaded directly:

|

| 76 |

+

- Joint angles are offset and incorrectly normalized.

|

| 77 |

+

- Any models directly finetuned or trained on the old data will need their inputs and outputs converted.

|

| 78 |

+

|

| 79 |

+

### Using datasets made with the previous calibration system

|

| 80 |

+

|

| 81 |

+

We provide a migration example script for replaying an episode recorded with the previous calibration here: `examples/backward_compatibility/replay.py`.

|

| 82 |

+

Below we walk through the modifications made in the example script so that datasets recorded with the previous calibration work.

|

| 83 |

+

|

| 84 |

+

```diff

|

| 85 |

+

+ key = f"{name.removeprefix('main_')}.pos"

|

| 86 |

+

action[key] = action_array[i].item()

|

| 87 |

+

+ action["shoulder_lift.pos"] = -(action["shoulder_lift.pos"] - 90)

|

| 88 |

+

+ action["elbow_flex.pos"] -= 90

|

| 89 |

+

```

|

| 90 |

+

|

| 91 |

+

Let's break this down.

|

| 92 |

+

The new codebase uses a `.pos` suffix for position observations, and the `main_` prefix has been removed:

|

| 93 |

+

|

| 94 |

+

<!-- prettier-ignore-start -->

|

| 95 |

+

```python

|

| 96 |

+

key = f"{name.removeprefix('main_')}.pos"

|

| 97 |

+

```

|

| 98 |

+

<!-- prettier-ignore-end -->

|

| 99 |

+

|

| 100 |

+

For `"shoulder_lift"` (id = 2), the zero position is shifted by -90 degrees and the direction is reversed compared to the old calibration/code.

|

| 101 |

+

|

| 102 |

+

<!-- prettier-ignore-start -->

|

| 103 |

+

```python

|

| 104 |

+

action["shoulder_lift.pos"] = -(action["shoulder_lift.pos"] - 90)

|

| 105 |

+

```

|

| 106 |

+

<!-- prettier-ignore-end -->

|

| 107 |

+

|

| 108 |

+

For `"elbow_flex"` (id = 3), the zero position is shifted by -90 degrees compared to the old calibration/code.

|

| 109 |

+

|

| 110 |

+

<!-- prettier-ignore-start -->

|

| 111 |

+

```python

|

| 112 |

+

action["elbow_flex.pos"] -= 90

|

| 113 |

+

```

|

| 114 |

+

<!-- prettier-ignore-end -->
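
Putting the three modifications together, the conversion can be sketched as a single function. This is a minimal sketch based on the snippets above, not the code from `examples/backward_compatibility/replay.py`; the function name `convert_legacy_action` and the sample joint values are hypothetical.

```python
def convert_legacy_action(legacy: dict[str, float]) -> dict[str, float]:
    """Map joint positions recorded with the old calibration to the new convention."""
    # Drop the `main_` prefix and add the `.pos` suffix to every key.
    action = {f"{name.removeprefix('main_')}.pos": value for name, value in legacy.items()}
    # shoulder_lift: zero shifted by 90 degrees and direction reversed.
    if "shoulder_lift.pos" in action:
        action["shoulder_lift.pos"] = -(action["shoulder_lift.pos"] - 90)
    # elbow_flex: zero shifted by 90 degrees.
    if "elbow_flex.pos" in action:
        action["elbow_flex.pos"] -= 90
    return action


# Hypothetical legacy values in degrees.
legacy_action = {"main_shoulder_pan": 10.0, "main_shoulder_lift": 120.0, "main_elbow_flex": 45.0}
print(convert_legacy_action(legacy_action))
```

Joints other than `shoulder_lift` and `elbow_flex` are only renamed, which matches the snippets above. Other robots may need different offsets.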

|

| 115 |

+

|

| 116 |

+

To use degree normalization, we then set the `--robot.use_degrees` option to `true`.

|

| 117 |

+

|

| 118 |

+

```diff

|

| 119 |

+

python examples/backward_compatibility/replay.py \

|

| 120 |

+

--robot.type=so101_follower \

|

| 121 |

+

--robot.port=/dev/tty.usbmodem5A460814411 \

|

| 122 |

+

--robot.id=blue \

|

| 123 |

+

+ --robot.use_degrees=true \

|

| 124 |

+

--dataset.repo_id=my_dataset_id \

|

| 125 |

+

--dataset.episode=0

|

| 126 |

+

```

|

| 127 |

+

|

| 128 |

+

### Using policies trained with the previous calibration system

|

| 129 |

+

|

| 130 |

+

Policies output actions in the same format as the datasets (`torch.Tensor`s), so the same transformations should be applied.

|

| 131 |

+

|

| 132 |

+

To find these transformations, we recommend first replaying an episode of the dataset your policy was trained on, following the section above.

|

| 133 |

+

Then, add the same transformations to your inference script (shown here in the `record.py` script):

|

| 134 |

+

|

| 135 |

+

```diff

|

| 136 |

+

action_values = predict_action(

|

| 137 |

+

observation_frame,

|

| 138 |

+

policy,

|

| 139 |

+

get_safe_torch_device(policy.config.device),

|

| 140 |

+

policy.config.use_amp,

|

| 141 |

+

task=single_task,

|

| 142 |

+

robot_type=robot.robot_type,

|

| 143 |

+

)

|

| 144 |

+

action = {key: action_values[i].item() for i, key in enumerate(robot.action_features)}

|

| 145 |

+

|

| 146 |

+

+ action["shoulder_lift.pos"] = -(action["shoulder_lift.pos"] - 90)

|

| 147 |

+

+ action["elbow_flex.pos"] -= 90

|

| 148 |

+

robot.send_action(action)

|

| 149 |

+

```

|

| 150 |

+

|

| 151 |

+

If you have questions or run into migration issues, feel free to ask on [Discord](https://discord.gg/s3KuuzsPFb).

|

lerobot/docs/source/bring_your_own_policies.mdx

ADDED

|

@@ -0,0 +1,175 @@

|


| 1 |

+

# Bring Your Own Policies

|

| 2 |

+

|

| 3 |

+

This tutorial explains how to integrate your own custom policy implementations into the LeRobot ecosystem, allowing you to leverage all LeRobot tools for training, evaluation, and deployment while using your own algorithms.

|

| 4 |

+

|

| 5 |

+

## Step 1: Create a Policy Package

|

| 6 |

+

|

| 7 |

+

Your custom policy should be organized as an installable Python package following LeRobot's plugin conventions.

|

| 8 |

+

|

| 9 |

+

### Package Structure

|

| 10 |

+

|

| 11 |

+

Create a package with the prefix `lerobot_policy_` (IMPORTANT!) followed by your policy name:

|

| 12 |

+

|

| 13 |

+

```bash
lerobot_policy_my_custom_policy/
├── pyproject.toml
└── src/
    └── lerobot_policy_my_custom_policy/
        ├── __init__.py
        ├── configuration_my_custom_policy.py
        ├── modeling_my_custom_policy.py
        └── processor_my_custom_policy.py
```
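
To illustrate why the prefix matters, here is a minimal sketch of prefix-based discovery. How LeRobot actually locates plugins is not shown in this document, so treat the mechanism below (scanning importable top-level modules with `pkgutil`) and the helper names as assumptions.

```python
import pkgutil

POLICY_PLUGIN_PREFIX = "lerobot_policy_"


def is_policy_plugin(module_name: str) -> bool:
    """True if the name starts with the prefix and has a non-empty policy name after it."""
    return module_name.startswith(POLICY_PLUGIN_PREFIX) and len(module_name) > len(
        POLICY_PLUGIN_PREFIX
    )


def find_policy_plugins() -> list[str]:
    """List importable top-level modules whose names follow the convention (assumed mechanism)."""
    return sorted(m.name for m in pkgutil.iter_modules() if is_policy_plugin(m.name))


print(is_policy_plugin("lerobot_policy_my_custom_policy"))
print(find_policy_plugins())
```

A package named, say, `my_custom_policy` would not match this kind of scan, which is one plausible reason the naming convention is flagged as important.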

|

| 23 |

+

|

| 24 |

+

### Package Configuration

|

| 25 |

+

|

| 26 |

+

Set up your `pyproject.toml`:

|

| 27 |

+

|

| 28 |

+

```toml

|

| 29 |

+

[project]

|

| 30 |

+

name = "lerobot_policy_my_custom_policy"

|

| 31 |

+

version = "0.1.0"

|

| 32 |

+

dependencies = [

|

| 33 |

+

# your policy-specific dependencies

|

| 34 |

+

]

|

| 35 |

+

requires-python = ">= 3.11"

|

| 36 |

+

|

| 37 |

+

[build-system]

|

| 38 |

+

build-backend = # your-build-backend

|

| 39 |

+

requires = # your-build-system

|

| 40 |

+

```

|

| 41 |

+

|

| 42 |

+

## Step 2: Define the Policy Configuration

|

| 43 |

+

|

| 44 |

+

Create a configuration class that inherits from `PreTrainedConfig` and registers your policy type:

|

| 45 |

+

|

| 46 |

+

```python
# configuration_my_custom_policy.py
from dataclasses import dataclass, field
from lerobot.configs.policies import PreTrainedConfig
from lerobot.configs.types import NormalizationMode

@PreTrainedConfig.register_subclass("my_custom_policy")
@dataclass
class MyCustomPolicyConfig(PreTrainedConfig):
    """Configuration class for MyCustomPolicy.

    Args:
        n_obs_steps: Number of observation steps to use as input
        horizon: Action prediction horizon
        n_action_steps: Number of action steps to execute
        hidden_dim: Hidden dimension for the policy network
        # Add your policy-specific parameters here
    """
    # ...PreTrainedConfig fields...
    pass

    def __post_init__(self):
        super().__post_init__()
        # Add any validation logic here

    def validate_features(self) -> None:
        """Validate input/output feature compatibility."""
        # Implement validation logic for your policy's requirements
        pass
```
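
As an illustration of the kind of validation that could go in `__post_init__`, here is a standalone sketch using a plain dataclass. It deliberately does not inherit from `PreTrainedConfig` so that it runs without LeRobot installed. The class name, the default values, and the specific checks are assumptions, built only from the fields listed in the docstring above.

```python
from dataclasses import dataclass


@dataclass
class ExampleConfigFields:
    """Hypothetical stand-in for the policy-specific fields, with simple validation."""

    n_obs_steps: int = 1  # assumed default
    horizon: int = 16  # assumed default
    n_action_steps: int = 8  # assumed default
    hidden_dim: int = 256  # assumed default

    def __post_init__(self):
        if self.n_obs_steps < 1:
            raise ValueError("n_obs_steps must be at least 1")
        if self.n_action_steps > self.horizon:
            raise ValueError(
                f"n_action_steps ({self.n_action_steps}) cannot exceed horizon ({self.horizon})"
            )
        if self.hidden_dim <= 0:
            raise ValueError("hidden_dim must be positive")


cfg = ExampleConfigFields(horizon=32, n_action_steps=16)
print(cfg)
```

In a real config, the same checks would sit after the `super().__post_init__()` call so that the base class can set itself up first.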

|

| 76 |

+

|

| 77 |

+

## Step 3: Implement the Policy Class

|

| 78 |

+

|

| 79 |

+

Create your policy implementation by inheriting from LeRobot's base `PreTrainedPolicy` class:

|

| 80 |

+

|

| 81 |

+

```python
# modeling_my_custom_policy.py
import torch
import torch.nn as nn
from typing import Dict, Any

from lerobot.policies.pretrained import PreTrainedPolicy
from .configuration_my_custom_policy import MyCustomPolicyConfig

class MyCustomPolicy(PreTrainedPolicy):
    config_class = MyCustomPolicyConfig
    name = "my_custom_policy"

    def __init__(self, config: MyCustomPolicyConfig, dataset_stats: Dict[str, Any] = None):
        super().__init__(config, dataset_stats)
        ...
```

|

| 98 |

+

|

| 99 |

+

## Step 4: Add Data Processors

|

| 100 |

+

|

| 101 |

+

Create processor functions:

|

| 102 |

+

|

| 103 |

+

```python
# processor_my_custom_policy.py
from typing import Dict, Any
import torch

# Types used in the return annotation below
from lerobot.processor import PolicyAction, PolicyProcessorPipeline


def make_my_custom_policy_pre_post_processors(
    config,
) -> tuple[
    PolicyProcessorPipeline[dict[str, Any], dict[str, Any]],
    PolicyProcessorPipeline[PolicyAction, PolicyAction],
]:
    """Create preprocessing and postprocessing functions for your policy."""
    pass  # Define your preprocessing and postprocessing logic here
```
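
To give a feel for what a pre/post processor pair does, here is a dependency-free sketch that normalizes inputs with mean/std statistics and inverts the operation on the way out. It uses plain Python callables as stand-ins for `PolicyProcessorPipeline` objects. The function name, the `eps` term, and the use of one set of statistics for both directions are simplifying assumptions.

```python
from typing import Callable, Sequence

Vector = list[float]


def make_pre_post_processors_sketch(
    mean: Sequence[float], std: Sequence[float], eps: float = 1e-8
) -> tuple[Callable[[Sequence[float]], Vector], Callable[[Sequence[float]], Vector]]:
    """Return (preprocess, postprocess): mean/std normalization and its inverse."""

    def preprocess(values: Sequence[float]) -> Vector:
        # Normalize each element with its own mean and standard deviation.
        return [(v - m) / (s + eps) for v, m, s in zip(values, mean, std)]

    def postprocess(values: Sequence[float]) -> Vector:
        # Undo the normalization to recover values in the original units.
        return [v * (s + eps) + m for v, m, s in zip(values, mean, std)]

    return preprocess, postprocess


preprocess, postprocess = make_pre_post_processors_sketch(mean=[0.0, 10.0], std=[1.0, 5.0])
normalized = preprocess([2.0, 20.0])
restored = postprocess(normalized)
print(normalized, restored)
```

In an actual policy, the preprocessor would typically use observation statistics and the postprocessor action statistics, and both would be built from processor steps rather than bare functions.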

|

| 119 |

+

|

| 120 |

+

## Step 5: Package Initialization

|

| 121 |

+

|

| 122 |

+

Expose your classes in the package's `__init__.py`:

|

| 123 |

+

|

| 124 |

+

```python
# __init__.py
"""Custom policy package for LeRobot."""

try:
    import lerobot  # noqa: F401
except ImportError:
    raise ImportError(
        "lerobot is not installed. Please install lerobot to use this policy package."
    )

from .configuration_my_custom_policy import MyCustomPolicyConfig
from .modeling_my_custom_policy import MyCustomPolicy
from .processor_my_custom_policy import make_my_custom_policy_pre_post_processors

__all__ = [
    "MyCustomPolicyConfig",
    "MyCustomPolicy",
    "make_my_custom_policy_pre_post_processors",
]
```

|

| 145 |

+

|

| 146 |

+

## Step 6: Installation and Usage

|

| 147 |

+

|

| 148 |

+

### Install Your Policy Package

|

| 149 |

+

|

| 150 |

+

```bash

|

| 151 |

+

cd lerobot_policy_my_custom_policy

|

| 152 |

+

pip install -e .

|

| 153 |

+

|

| 154 |

+

# Or install from PyPI if published

|

| 155 |

+

pip install lerobot_policy_my_custom_policy

|

| 156 |

+

```

|

| 157 |

+

|

| 158 |

+

### Use Your Policy

|

| 159 |

+

|

| 160 |

+

Once installed, your policy automatically integrates with LeRobot's training and evaluation tools:

|

| 161 |

+

|

| 162 |

+

```bash

|

| 163 |

+

lerobot-train \

|

| 164 |

+

--policy.type my_custom_policy \

|

| 165 |

+

--env.type pusht \

|

| 166 |

+

--steps 200000

|

| 167 |

+

```

|

| 168 |

+

|

| 169 |

+

## Examples and Community Contributions

|

| 170 |

+

|

| 171 |

+

Check out these example policy implementations:

|

| 172 |

+

|

| 173 |

+