Upload 921 files

This view is limited to 50 files because it contains too many changes.

- .github/ISSUE_TEMPLATE/bug_report.yml +91 -0

- .github/ISSUE_TEMPLATE/config.yml +1 -0

- .github/workflows/tests.yml +37 -0

- .gitignore +171 -0

- LICENSE +21 -0

- README.md +247 -0

- annotator/annotator_path.py +22 -0

- annotator/binary/__init__.py +14 -0

- annotator/canny/__init__.py +5 -0

- annotator/clip/__init__.py +39 -0

- annotator/clip_vision/config.json +171 -0

- annotator/clip_vision/merges.txt +0 -0

- annotator/clip_vision/preprocessor_config.json +19 -0

- annotator/clip_vision/tokenizer.json +0 -0

- annotator/clip_vision/tokenizer_config.json +34 -0

- annotator/clip_vision/vocab.json +0 -0

- annotator/color/__init__.py +20 -0

- annotator/hed/__init__.py +98 -0

- annotator/keypose/__init__.py +212 -0

- annotator/keypose/faster_rcnn_r50_fpn_coco.py +182 -0

- annotator/keypose/hrnet_w48_coco_256x192.py +169 -0

- annotator/lama/__init__.py +58 -0

- annotator/lama/config.yaml +157 -0

- annotator/lama/saicinpainting/__init__.py +0 -0

- annotator/lama/saicinpainting/training/__init__.py +0 -0

- annotator/lama/saicinpainting/training/data/__init__.py +0 -0

- annotator/lama/saicinpainting/training/data/masks.py +332 -0

- annotator/lama/saicinpainting/training/losses/__init__.py +0 -0

- annotator/lama/saicinpainting/training/losses/adversarial.py +177 -0

- annotator/lama/saicinpainting/training/losses/constants.py +152 -0

- annotator/lama/saicinpainting/training/losses/distance_weighting.py +126 -0

- annotator/lama/saicinpainting/training/losses/feature_matching.py +33 -0

- annotator/lama/saicinpainting/training/losses/perceptual.py +113 -0

- annotator/lama/saicinpainting/training/losses/segmentation.py +43 -0

- annotator/lama/saicinpainting/training/losses/style_loss.py +155 -0

- annotator/lama/saicinpainting/training/modules/__init__.py +31 -0

- annotator/lama/saicinpainting/training/modules/base.py +80 -0

- annotator/lama/saicinpainting/training/modules/depthwise_sep_conv.py +17 -0

- annotator/lama/saicinpainting/training/modules/fake_fakes.py +47 -0

- annotator/lama/saicinpainting/training/modules/ffc.py +485 -0

- annotator/lama/saicinpainting/training/modules/multidilated_conv.py +98 -0

- annotator/lama/saicinpainting/training/modules/multiscale.py +244 -0

- annotator/lama/saicinpainting/training/modules/pix2pixhd.py +669 -0

- annotator/lama/saicinpainting/training/modules/spatial_transform.py +49 -0

- annotator/lama/saicinpainting/training/modules/squeeze_excitation.py +20 -0

- annotator/lama/saicinpainting/training/trainers/__init__.py +29 -0

- annotator/lama/saicinpainting/training/trainers/base.py +293 -0

- annotator/lama/saicinpainting/training/trainers/default.py +175 -0

- annotator/lama/saicinpainting/training/visualizers/__init__.py +15 -0

- annotator/lama/saicinpainting/training/visualizers/base.py +73 -0

.github/ISSUE_TEMPLATE/bug_report.yml

ADDED

|

@@ -0,0 +1,91 @@

|

| 1 |

+

name: Bug Report

|

| 2 |

+

description: Create a report

|

| 3 |

+

title: "[Bug]: "

|

| 4 |

+

labels: ["bug-report"]

|

| 5 |

+

|

| 6 |

+

body:

|

| 7 |

+

- type: checkboxes

|

| 8 |

+

attributes:

|

| 9 |

+

label: Is there an existing issue for this?

|

| 10 |

+

description: Please search to see if an issue already exists for the bug you encountered, and that it hasn't been fixed in a recent build/commit.

|

| 11 |

+

options:

|

| 12 |

+

- label: I have searched the existing issues and checked the recent builds/commits of both this extension and the webui

|

| 13 |

+

required: true

|

| 14 |

+

- type: markdown

|

| 15 |

+

attributes:

|

| 16 |

+

value: |

|

| 17 |

+

*Please fill this form with as much information as possible, don't forget to fill "What OS..." and "What browsers" and *provide screenshots if possible**

|

| 18 |

+

- type: textarea

|

| 19 |

+

id: what-did

|

| 20 |

+

attributes:

|

| 21 |

+

label: What happened?

|

| 22 |

+

description: Tell us what happened in a very clear and simple way

|

| 23 |

+

validations:

|

| 24 |

+

required: true

|

| 25 |

+

- type: textarea

|

| 26 |

+

id: steps

|

| 27 |

+

attributes:

|

| 28 |

+

label: Steps to reproduce the problem

|

| 29 |

+

description: Please provide us with precise step by step information on how to reproduce the bug

|

| 30 |

+

value: |

|

| 31 |

+

1. Go to ....

|

| 32 |

+

2. Press ....

|

| 33 |

+

3. ...

|

| 34 |

+

validations:

|

| 35 |

+

required: true

|

| 36 |

+

- type: textarea

|

| 37 |

+

id: what-should

|

| 38 |

+

attributes:

|

| 39 |

+

label: What should have happened?

|

| 40 |

+

description: Tell what you think the normal behavior should be

|

| 41 |

+

validations:

|

| 42 |

+

required: true

|

| 43 |

+

- type: textarea

|

| 44 |

+

id: commits

|

| 45 |

+

attributes:

|

| 46 |

+

label: Commit where the problem happens

|

| 47 |

+

description: Which commit of the extension are you running on? Please include the commit of both the extension and the webui (Do not write *Latest version/repo/commit*, as this means nothing and will have changed by the time we read your issue. Rather, copy the **Commit** link at the bottom of the UI, or from the cmd/terminal if you can't launch it.)

|

| 48 |

+

value: |

|

| 49 |

+

webui:

|

| 50 |

+

controlnet:

|

| 51 |

+

validations:

|

| 52 |

+

required: true

|

| 53 |

+

- type: dropdown

|

| 54 |

+

id: browsers

|

| 55 |

+

attributes:

|

| 56 |

+

label: What browsers do you use to access the UI ?

|

| 57 |

+

multiple: true

|

| 58 |

+

options:

|

| 59 |

+

- Mozilla Firefox

|

| 60 |

+

- Google Chrome

|

| 61 |

+

- Brave

|

| 62 |

+

- Apple Safari

|

| 63 |

+

- Microsoft Edge

|

| 64 |

+

- type: textarea

|

| 65 |

+

id: cmdargs

|

| 66 |

+

attributes:

|

| 67 |

+

label: Command Line Arguments

|

| 68 |

+

description: Are you using any launching parameters/command line arguments (modified webui-user .bat/.sh) ? If yes, please write them below. Write "No" otherwise.

|

| 69 |

+

render: Shell

|

| 70 |

+

validations:

|

| 71 |

+

required: true

|

| 72 |

+

- type: textarea

|

| 73 |

+

id: extensions

|

| 74 |

+

attributes:

|

| 75 |

+

label: List of enabled extensions

|

| 76 |

+

description: Please provide a full list of enabled extensions or screenshots of your "Extensions" tab.

|

| 77 |

+

validations:

|

| 78 |

+

required: true

|

| 79 |

+

- type: textarea

|

| 80 |

+

id: logs

|

| 81 |

+

attributes:

|

| 82 |

+

label: Console logs

|

| 83 |

+

description: Please provide full cmd/terminal logs from the moment you started UI to the end of it, after your bug happened. If it's very long, provide a link to pastebin or similar service.

|

| 84 |

+

render: Shell

|

| 85 |

+

validations:

|

| 86 |

+

required: true

|

| 87 |

+

- type: textarea

|

| 88 |

+

id: misc

|

| 89 |

+

attributes:

|

| 90 |

+

label: Additional information

|

| 91 |

+

description: Please provide us with any relevant additional info or context.

|

.github/ISSUE_TEMPLATE/config.yml

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

blank_issues_enabled: true

|

.github/workflows/tests.yml

ADDED

|

@@ -0,0 +1,37 @@

|

| 1 |

+

name: Run basic features tests on CPU

|

| 2 |

+

|

| 3 |

+

on:

|

| 4 |

+

- push

|

| 5 |

+

- pull_request

|

| 6 |

+

|

| 7 |

+

jobs:

|

| 8 |

+

build:

|

| 9 |

+

runs-on: ubuntu-latest

|

| 10 |

+

steps:

|

| 11 |

+

- name: Checkout Code

|

| 12 |

+

uses: actions/checkout@v3

|

| 13 |

+

with:

|

| 14 |

+

repository: 'AUTOMATIC1111/stable-diffusion-webui'

|

| 15 |

+

path: 'stable-diffusion-webui'

|

| 16 |

+

ref: '5ab7f213bec2f816f9c5644becb32eb72c8ffb89'

|

| 17 |

+

|

| 18 |

+

- name: Checkout Code

|

| 19 |

+

uses: actions/checkout@v3

|

| 20 |

+

with:

|

| 21 |

+

repository: 'Mikubill/sd-webui-controlnet'

|

| 22 |

+

path: 'stable-diffusion-webui/extensions/sd-webui-controlnet'

|

| 23 |

+

|

| 24 |

+

- name: Set up Python 3.10

|

| 25 |

+

uses: actions/setup-python@v4

|

| 26 |

+

with:

|

| 27 |

+

python-version: 3.10.6

|

| 28 |

+

cache: pip

|

| 29 |

+

cache-dependency-path: |

|

| 30 |

+

**/requirements*txt

|

| 31 |

+

stable-diffusion-webui/requirements*txt

|

| 32 |

+

|

| 33 |

+

- run: |

|

| 34 |

+

pip install torch torchvision

|

| 35 |

+

curl -Lo stable-diffusion-webui/extensions/sd-webui-controlnet/models/control_canny-fp16.safetensors https://huggingface.co/webui/ControlNet-modules-safetensors/resolve/main/control_canny-fp16.safetensors

|

| 36 |

+

cd stable-diffusion-webui && python launch.py --no-half --disable-opt-split-attention --use-cpu all --skip-torch-cuda-test --api --tests ./extensions/sd-webui-controlnet/tests

|

| 37 |

+

rm -fr stable-diffusion-webui/extensions/sd-webui-controlnet/models/control_canny-fp16.safetensors

|

.gitignore

ADDED

|

@@ -0,0 +1,171 @@

|

| 1 |

+

# Byte-compiled / optimized / DLL files

|

| 2 |

+

__pycache__/

|

| 3 |

+

*.py[cod]

|

| 4 |

+

*$py.class

|

| 5 |

+

|

| 6 |

+

# C extensions

|

| 7 |

+

*.so

|

| 8 |

+

|

| 9 |

+

# Distribution / packaging

|

| 10 |

+

.Python

|

| 11 |

+

build/

|

| 12 |

+

develop-eggs/

|

| 13 |

+

dist/

|

| 14 |

+

downloads/

|

| 15 |

+

eggs/

|

| 16 |

+

.eggs/

|

| 17 |

+

lib/

|

| 18 |

+

lib64/

|

| 19 |

+

parts/

|

| 20 |

+

sdist/

|

| 21 |

+

var/

|

| 22 |

+

wheels/

|

| 23 |

+

share/python-wheels/

|

| 24 |

+

*.egg-info/

|

| 25 |

+

.installed.cfg

|

| 26 |

+

*.egg

|

| 27 |

+

MANIFEST

|

| 28 |

+

|

| 29 |

+

# PyInstaller

|

| 30 |

+

# Usually these files are written by a python script from a template

|

| 31 |

+

# before PyInstaller builds the exe, so as to inject date/other infos into it.

|

| 32 |

+

*.manifest

|

| 33 |

+

*.spec

|

| 34 |

+

|

| 35 |

+

# Installer logs

|

| 36 |

+

pip-log.txt

|

| 37 |

+

pip-delete-this-directory.txt

|

| 38 |

+

|

| 39 |

+

# Unit test / coverage reports

|

| 40 |

+

htmlcov/

|

| 41 |

+

.tox/

|

| 42 |

+

.nox/

|

| 43 |

+

.coverage

|

| 44 |

+

.coverage.*

|

| 45 |

+

.cache

|

| 46 |

+

nosetests.xml

|

| 47 |

+

coverage.xml

|

| 48 |

+

*.cover

|

| 49 |

+

*.py,cover

|

| 50 |

+

.hypothesis/

|

| 51 |

+

.pytest_cache/

|

| 52 |

+

cover/

|

| 53 |

+

|

| 54 |

+

# Translations

|

| 55 |

+

*.mo

|

| 56 |

+

*.pot

|

| 57 |

+

|

| 58 |

+

# Django stuff:

|

| 59 |

+

*.log

|

| 60 |

+

local_settings.py

|

| 61 |

+

db.sqlite3

|

| 62 |

+

db.sqlite3-journal

|

| 63 |

+

|

| 64 |

+

# Flask stuff:

|

| 65 |

+

instance/

|

| 66 |

+

.webassets-cache

|

| 67 |

+

|

| 68 |

+

# Scrapy stuff:

|

| 69 |

+

.scrapy

|

| 70 |

+

|

| 71 |

+

# Sphinx documentation

|

| 72 |

+

docs/_build/

|

| 73 |

+

|

| 74 |

+

# PyBuilder

|

| 75 |

+

.pybuilder/

|

| 76 |

+

target/

|

| 77 |

+

|

| 78 |

+

# Jupyter Notebook

|

| 79 |

+

.ipynb_checkpoints

|

| 80 |

+

|

| 81 |

+

# IPython

|

| 82 |

+

profile_default/

|

| 83 |

+

ipython_config.py

|

| 84 |

+

|

| 85 |

+

# pyenv

|

| 86 |

+

# For a library or package, you might want to ignore these files since the code is

|

| 87 |

+

# intended to run in multiple environments; otherwise, check them in:

|

| 88 |

+

# .python-version

|

| 89 |

+

|

| 90 |

+

# pipenv

|

| 91 |

+

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

|

| 92 |

+

# However, in case of collaboration, if having platform-specific dependencies or dependencies

|

| 93 |

+

# having no cross-platform support, pipenv may install dependencies that don't work, or not

|

| 94 |

+

# install all needed dependencies.

|

| 95 |

+

#Pipfile.lock

|

| 96 |

+

|

| 97 |

+

# poetry

|

| 98 |

+

# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

|

| 99 |

+

# This is especially recommended for binary packages to ensure reproducibility, and is more

|

| 100 |

+

# commonly ignored for libraries.

|

| 101 |

+

# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

|

| 102 |

+

#poetry.lock

|

| 103 |

+

|

| 104 |

+

# pdm

|

| 105 |

+

# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

|

| 106 |

+

#pdm.lock

|

| 107 |

+

# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

|

| 108 |

+

# in version control.

|

| 109 |

+

# https://pdm.fming.dev/#use-with-ide

|

| 110 |

+

.pdm.toml

|

| 111 |

+

|

| 112 |

+

# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

|

| 113 |

+

__pypackages__/

|

| 114 |

+

|

| 115 |

+

# Celery stuff

|

| 116 |

+

celerybeat-schedule

|

| 117 |

+

celerybeat.pid

|

| 118 |

+

|

| 119 |

+

# SageMath parsed files

|

| 120 |

+

*.sage.py

|

| 121 |

+

|

| 122 |

+

# Environments

|

| 123 |

+

.env

|

| 124 |

+

.venv

|

| 125 |

+

env/

|

| 126 |

+

venv/

|

| 127 |

+

ENV/

|

| 128 |

+

env.bak/

|

| 129 |

+

venv.bak/

|

| 130 |

+

|

| 131 |

+

# Spyder project settings

|

| 132 |

+

.spyderproject

|

| 133 |

+

.spyproject

|

| 134 |

+

|

| 135 |

+

# Rope project settings

|

| 136 |

+

.ropeproject

|

| 137 |

+

|

| 138 |

+

# mkdocs documentation

|

| 139 |

+

/site

|

| 140 |

+

|

| 141 |

+

# mypy

|

| 142 |

+

.mypy_cache/

|

| 143 |

+

.dmypy.json

|

| 144 |

+

dmypy.json

|

| 145 |

+

|

| 146 |

+

# Pyre type checker

|

| 147 |

+

.pyre/

|

| 148 |

+

|

| 149 |

+

# pytype static type analyzer

|

| 150 |

+

.pytype/

|

| 151 |

+

|

| 152 |

+

# Cython debug symbols

|

| 153 |

+

cython_debug/

|

| 154 |

+

|

| 155 |

+

# PyCharm

|

| 156 |

+

# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

|

| 157 |

+

# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

|

| 158 |

+

# and can be added to the global gitignore or merged into this file. For a more nuclear

|

| 159 |

+

# option (not recommended) you can uncomment the following to ignore the entire idea folder.

|

| 160 |

+

#.idea

|

| 161 |

+

*.pt

|

| 162 |

+

*.pth

|

| 163 |

+

*.ckpt

|

| 164 |

+

*.bin

|

| 165 |

+

*.safetensors

|

| 166 |

+

|

| 167 |

+

# Editor setting metadata

|

| 168 |

+

.idea/

|

| 169 |

+

.vscode/

|

| 170 |

+

detected_maps/

|

| 171 |

+

annotator/downloads/

|

LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2023 Kakigōri Maker

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

README.md

ADDED

|

@@ -0,0 +1,247 @@

|

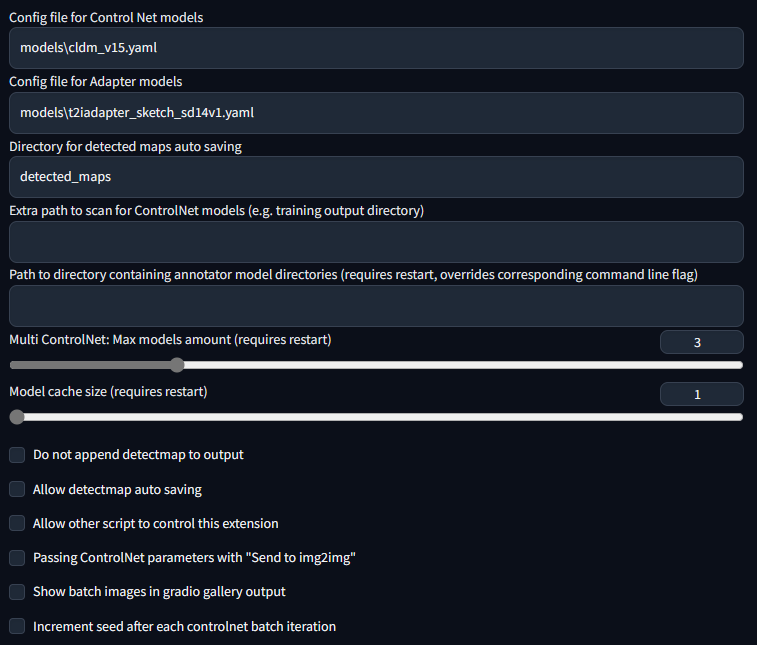

| 1 |

+

# ControlNet for Stable Diffusion WebUI

|

| 2 |

+

|

| 3 |

+

The WebUI extension for ControlNet and other injection-based SD controls.

|

| 4 |

+

|

| 5 |

+

|

| 6 |

+

|

| 7 |

+

This extension for AUTOMATIC1111's [Stable Diffusion web UI](https://github.com/AUTOMATIC1111/stable-diffusion-webui) lets the web UI add [ControlNet](https://github.com/lllyasviel/ControlNet) to the original Stable Diffusion model when generating images. The addition happens on the fly; no model merging is required.

|

| 8 |

+

|

| 9 |

+

# Installation

|

| 10 |

+

|

| 11 |

+

1. Open the "Extensions" tab.

|

| 12 |

+

2. Open the "Install from URL" tab inside the "Extensions" tab.

|

| 13 |

+

3. Enter `https://github.com/Mikubill/sd-webui-controlnet.git` into "URL for extension's git repository".

|

| 14 |

+

4. Press the "Install" button.

|

| 15 |

+

5. Wait for 5 seconds, and you will see the message "Installed into stable-diffusion-webui\extensions\sd-webui-controlnet. Use Installed tab to restart".

|

| 16 |

+

6. Go to the "Installed" tab, click "Check for updates", and then click "Apply and restart UI". (You can use the same buttons later to update ControlNet.)

|

| 17 |

+

7. Completely restart the A1111 webui, including your terminal. (If you do not know what a "terminal" is, you can reboot your computer to achieve the same effect.)

|

| 18 |

+

8. Download models (see below).

|

| 19 |

+

9. After you put the models in the correct folder, you may need to refresh to see them. The refresh button is to the right of the "Model" dropdown.

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

**Update from ControlNet 1.0 to 1.1:**

|

| 23 |

+

|

| 24 |

+

* If you are not sure, you can back up and remove the folder "stable-diffusion-webui\extensions\sd-webui-controlnet", and then start from step 1 of the Installation section above.

|

| 25 |

+

|

| 26 |

+

* Otherwise, you can start from step 6 of the Installation section above.

|

| 27 |

+

|

| 28 |

+

# Download Models

|

| 29 |

+

|

| 30 |

+

All 14 models of ControlNet 1.1 are currently in beta testing.

|

| 31 |

+

|

| 32 |

+

Download the models from ControlNet 1.1: https://huggingface.co/lllyasviel/ControlNet-v1-1/tree/main

|

| 33 |

+

|

| 34 |

+

You need to download the model files ending with ".pth".

|

| 35 |

+

|

| 36 |

+

Put the models in "stable-diffusion-webui\extensions\sd-webui-controlnet\models". All "yaml" files are already included, so you only need to download the "pth" files.

|

| 37 |

+

|

| 38 |

+

Do not right-click the filenames on the HuggingFace website to download. Some users right-clicked those HuggingFace pages and saved the HTML pages as PTH/YAML files, which are not the correct files. Instead, please click the small download arrow “↓” icon on HuggingFace to download.

|

| 39 |

+

|

| 40 |

+

Note: If you download models from elsewhere, make sure that each yaml file has the same name as its model file, and manually rename the yaml files if needed. (Some models, like "shuffle", need the yaml file so that we know the outputs of ControlNet should pass through a global average pooling before being injected into the SD U-Net.)

|
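The naming rule and the download warning above can be checked mechanically. The following is a minimal sketch, not part of the extension: the helper names `find_unpaired_models` and `looks_like_saved_html` are hypothetical, and the HTML check is only a heuristic that assumes a wrongly saved page starts with a doctype or `<html` tag.

```python
import tempfile
from pathlib import Path

# Assumption: a web page saved by mistake starts with one of these markers.
HTML_MARKERS = (b"<!doctype html", b"<html")


def find_unpaired_models(models_dir):
    """Return names of .pth files that have no .yaml file with the same stem."""
    models_dir = Path(models_dir)
    yaml_stems = {p.stem for p in models_dir.glob("*.yaml")}
    return sorted(p.name for p in models_dir.glob("*.pth") if p.stem not in yaml_stems)


def looks_like_saved_html(path, probe_bytes=256):
    """Heuristic: True if the file starts like an HTML page, not a checkpoint."""
    with open(path, "rb") as handle:
        head = handle.read(probe_bytes).lstrip().lower()
    return head.startswith(HTML_MARKERS)


# Small demonstration on a throwaway folder with made-up file names.
with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp)
    (folder / "example_a.pth").write_bytes(b"\x80\x02binary")
    (folder / "example_a.yaml").write_text("model: {}")
    (folder / "example_b.pth").write_bytes(b"<!DOCTYPE html><html></html>")
    print(find_unpaired_models(folder))
    print(looks_like_saved_html(folder / "example_b.pth"))
```

Pointing `find_unpaired_models` at the extension's `models` folder would list any `.pth` file that still needs a matching yaml file.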

| 41 |

+

|

| 42 |

+

# New Features in ControlNet 1.1

|

| 43 |

+

|

| 44 |

+

### Perfect Support for All ControlNet 1.0/1.1 and T2I Adapter Models.

|

| 45 |

+

|

| 46 |

+

We now have perfect support for all available models and preprocessors, including the T2I style adapter and ControlNet 1.1 Shuffle. (Make sure that your YAML file names and model file names are the same; see also the YAML files in "stable-diffusion-webui\extensions\sd-webui-controlnet\models".)

|

| 47 |

+

|

| 48 |

+

### Perfect Support for A1111 High-Res. Fix

|

| 49 |

+

|

| 50 |

+

If you turn on High-Res Fix in A1111, each ControlNet will output two different control images: a small one and a large one. The small one is for the base generation, and the large one is for the High-Res Fix generation. The two control images are computed by an algorithm called "super high-quality control image resampling". This is turned on by default, and you do not need to change any setting.

|

| 51 |

+

|

| 52 |

+

### Perfect Support for All A1111 Img2Img or Inpaint Settings and All Mask Types

|

| 53 |

+

|

| 54 |

+

ControlNet is now extensively tested with A1111's different types of masks, including "Inpaint masked"/"Inpaint not masked", "Whole picture"/"Only masked", and "Only masked padding"/"Mask blur". The resizing perfectly matches A1111's "Just resize"/"Crop and resize"/"Resize and fill". This means you can use ControlNet nearly everywhere in your A1111 UI without difficulty!

|

| 55 |

+

|

| 56 |

+

### The New "Pixel-Perfect" Mode

|

| 57 |

+

|

| 58 |

+

Now if you turn on pixel-perfect mode, you do not need to set preprocessor (annotator) resolutions manually. The ControlNet will automatically compute the best annotator resolution for you so that each pixel perfectly matches Stable Diffusion.

|

| 59 |

+

|

| 60 |

+

### User-Friendly GUI and Preprocessor Preview

|

| 61 |

+

|

| 62 |

+

We reorganized some previously confusing UI elements, such as "canvas width/height for new canvas", which is now in the 📝 button. The preview GUI is now controlled by the "allow preview" option and the trigger button 💥. The preview image size is better than before, and you do not need to scroll up and down, so your A1111 GUI will not be messed up anymore!

|

| 63 |

+

|

| 64 |

+

### Support for Almost All Upscaling Scripts

|

| 65 |

+

|

| 66 |

+

ControlNet 1.1 now supports almost all upscaling/tile methods, including the script "Ultimate SD upscale" and almost all other tile-based extensions. Please do not confuse ["Ultimate SD upscale"](https://github.com/Coyote-A/ultimate-upscale-for-automatic1111) with "SD upscale"; they are different scripts. Note that the most recommended upscaling method is ["Tiled VAE/Diffusion"](https://github.com/pkuliyi2015/multidiffusion-upscaler-for-automatic1111), but we test as many methods/extensions as possible. "SD upscale" is supported since 1.1.117, and if you use it, you need to leave all ControlNet images blank. (We do not recommend "SD upscale" since it is somewhat buggy and cannot be maintained; use "Ultimate SD upscale" instead.)

|

| 67 |

+

|

| 68 |

+

### More Control Modes (previously called Guess Mode)

|

| 69 |

+

|

| 70 |

+

We have fixed many bugs in the Guess Mode of the previous 1.0, and it is now called Control Mode.

|

| 71 |

+

|

| 72 |

+

|

| 73 |

+

|

| 74 |

+

Now you can control which aspect is more important (your prompt or your ControlNet):

|

| 75 |

+

|

| 76 |

+

* "Balanced": ControlNet on both sides of CFG scale, same as turning off "Guess Mode" in ControlNet 1.0

|

| 77 |

+

|

| 78 |

+

* "My prompt is more important": ControlNet on both sides of CFG scale, with progressively reduced SD U-Net injections (`layer_weight *= 0.825**I`, where `0 <= I < 13`, and 13 is the number of times ControlNet injects into SD). In this way, you can make sure that your prompts are perfectly displayed in your generated images.

|

| 79 |

+

|

| 80 |

+

* "ControlNet is more important": ControlNet only on the conditional side of CFG scale (the cond in A1111's batch-cond-uncond). This means ControlNet will be X times stronger if your cfg-scale is X. For example, if your cfg-scale is 7, then ControlNet is 7 times stronger. Note that this "X times stronger" is different from "Control Weights", since your weights are not modified. This "stronger" effect usually has fewer artifacts and gives ControlNet more room to guess what is missing from your prompts (in the previous 1.0, this was called "Guess Mode").

|
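The arithmetic behind the three control modes can be illustrated with a toy calculation. This is a sketch under stated assumptions, not the extension's code: it uses the standard classifier-free guidance combination `uncond + cfg_scale * (cond - uncond)`, treats ControlNet as a simple additive offset on scalar predictions, and does not say which injection receives which index `I`. The function names are hypothetical.

```python
def prompt_priority_weights(num_injections=13, decay=0.825):
    """Per-injection multipliers decay**I for I = 0 .. num_injections - 1."""
    return [decay ** i for i in range(num_injections)]


def cfg_combine(cond, uncond, cfg_scale):
    """Standard classifier-free guidance combination of two predictions."""
    return uncond + cfg_scale * (cond - uncond)


def control_effect(control, cfg_scale, cond=1.0, uncond=0.5, cond_only=False):
    """Change in the guided output caused by adding `control` (toy scalar model).

    cond_only=False adds the offset to both sides ("Balanced");
    cond_only=True adds it only to the conditional side
    ("ControlNet is more important").
    """
    baseline = cfg_combine(cond, uncond, cfg_scale)
    steered_uncond = uncond if cond_only else uncond + control
    steered = cfg_combine(cond + control, steered_uncond, cfg_scale)
    return steered - baseline


weights = prompt_priority_weights()
print(len(weights), weights[0], weights[-1])
print(control_effect(0.25, 7.0))                  # offset on both sides
print(control_effect(0.25, 7.0, cond_only=True))  # offset on the cond side only
```

In this toy model, an offset applied to both sides passes through guidance unchanged, while an offset applied only to the conditional side is multiplied by the cfg-scale, which matches the "X times stronger" description above.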

| 81 |

+

|

| 82 |

+

<table width="100%">

|

| 83 |

+

<tr>

|

| 84 |

+

<td width="25%" style="text-align: center">Input (depth+canny+hed)</td>

|

| 85 |

+

<td width="25%" style="text-align: center">"Balanced"</td>

|

| 86 |

+

<td width="25%" style="text-align: center">"My prompt is more important"</td>

|

| 87 |

+

<td width="25%" style="text-align: center">"ControlNet is more important"</td>

|

| 88 |

+

</tr>

|

| 89 |

+

<tr>

|

| 90 |

+

<td width="25%" style="text-align: center"><img src="samples/cm1.png"></td>

|

| 91 |

+

<td width="25%" style="text-align: center"><img src="samples/cm2.png"></td>

|

| 92 |

+

<td width="25%" style="text-align: center"><img src="samples/cm3.png"></td>

|

| 93 |

+

<td width="25%" style="text-align: center"><img src="samples/cm4.png"></td>

|

| 94 |

+

</tr>

|

| 95 |

+

</table>

|

| 96 |

+

|

| 97 |

+

### Reference-Only Control

|

| 98 |

+

|

| 99 |

+

Now we have a `reference-only` preprocessor that does not require any control models. It can guide the diffusion directly using images as references.

|

| 100 |

+

|

| 101 |

+

(Prompt "a dog running on grassland, best quality, ...")

|

| 102 |

+

|

| 103 |

+

|

| 104 |

+

|

| 105 |

+

This method is similar to inpaint-based reference but it does not make your image disordered.

|

| 106 |

+

|

| 107 |

+

Many professional A1111 users know a trick to diffuse an image with a reference by inpainting. For example, if you have a 512x512 image of a dog and want to generate another 512x512 image with the same dog, some users will join the 512x512 dog image and a 512x512 blank image into a 1024x512 image, send it to inpaint, and mask out the blank 512x512 part to diffuse a dog with a similar appearance. However, that method is usually not very satisfying, since the images are joined and many distortions will appear.

|
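For readers unfamiliar with the older inpaint trick, its geometry can be sketched as follows. This is only an illustration: the function name is hypothetical, and placing the reference on the left and the blank area on the right is an assumption, since the text does not say which side is which.

```python
def inpaint_reference_layout(width=512, height=512):
    """Canvas size and boxes (left, top, right, bottom) for the side-by-side trick.

    Assumption: the reference image sits on the left and the blank,
    masked area on the right.
    """
    canvas_size = (2 * width, height)
    reference_box = (0, 0, width, height)
    mask_box = (width, 0, 2 * width, height)
    return canvas_size, reference_box, mask_box


canvas, reference, mask = inpaint_reference_layout()
print(canvas, reference, mask)
```

With the default 512x512 input this gives the 1024x512 canvas described above, with the masked half being the region that inpainting fills in.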

| 108 |

+

|

| 109 |

+

This `reference-only` ControlNet can directly link the attention layers of your SD to any independent images, so that your SD will read arbitrary images for reference. You need at least ControlNet 1.1.153 to use it.

|

| 110 |

+

|

| 111 |

+

To use it, just select `reference-only` as the preprocessor and provide an image. Your SD will use the image as a reference.

|

| 112 |

+

|

| 113 |

+

*Note that this method is as "non-opinionated" as possible. It only contains very basic connection code, without any personal preferences, to connect the attention layers with your reference images. However, even though we tried our best not to include any opinionated code, we still need to write some subjective implementations to deal with weighting, cfg-scale, etc. A tech report is on the way.*

|

| 114 |

+

|

| 115 |

+

More examples [here](https://github.com/Mikubill/sd-webui-controlnet/discussions/1236).

# Technical Documents

See also the documents of ControlNet 1.1:

https://github.com/lllyasviel/ControlNet-v1-1-nightly#model-specification

# Default Setting

This is my setting. If you run into any problem, you can use this setting as a sanity check.

# Use Previous Models

### Use ControlNet 1.0 Models

https://huggingface.co/lllyasviel/ControlNet/tree/main/models

You can still use all models from the previous ControlNet 1.0. The previous "depth" is now called "depth_midas", the previous "normal" is called "normal_midas", and the previous "hed" is called "softedge_hed". Starting from 1.1, all line maps, edge maps, lineart maps, and boundary maps have a black background and white lines.
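As a rough illustration of the renaming described above, the lookup below maps the three old preprocessor names to their 1.1 names and leaves any other name untouched. `LEGACY_PREPROCESSOR_NAMES`, `modernize_preprocessor_name`, and `invert_map` are hypothetical helper names used only for this sketch; they are not part of the extension. The inversion helper assumes you have an older map with dark lines on a white background, stored as rows of 8-bit grayscale values.

```python
# Old (ControlNet 1.0) preprocessor names and their 1.1 equivalents,
# exactly as listed in the README text. Hypothetical helper, not extension code.
LEGACY_PREPROCESSOR_NAMES = {
    "depth": "depth_midas",
    "normal": "normal_midas",
    "hed": "softedge_hed",
}


def modernize_preprocessor_name(name: str) -> str:
    """Return the 1.1 name for a 1.0 preprocessor, or the name unchanged."""
    return LEGACY_PREPROCESSOR_NAMES.get(name, name)


def invert_map(pixels):
    """Invert an 8-bit grayscale map given as rows of ints.

    Turns dark-lines-on-white into white-lines-on-black (and vice versa).
    """
    return [[255 - value for value in row] for row in pixels]
```

For example, `modernize_preprocessor_name("hed")` gives `"softedge_hed"`, while a name such as `"canny"` passes through unchanged.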

### Use T2I-Adapter Models

(From TencentARC/T2I-Adapter)

To use T2I-Adapter models:

1. Download files from https://huggingface.co/TencentARC/T2I-Adapter/tree/main/models
2. Put them in "stable-diffusion-webui\extensions\sd-webui-controlnet\models".
3. Make sure that the file names of the pth files and yaml files are consistent.
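Step 3 can be checked with a few lines of Python. The sketch below assumes that "consistent" means each `.pth` file has a `.yaml` file with the same base name in the same folder; that reading, and the function name `find_unpaired_adapters`, are assumptions made for illustration.

```python
from pathlib import Path


def find_unpaired_adapters(models_dir):
    """Return the names of .pth files that have no .yaml file with the same stem.

    Assumption: a model file and its config are "consistent" when they share
    a base name, e.g. foo.pth and foo.yaml.
    """
    models_dir = Path(models_dir)
    yaml_stems = {path.stem for path in models_dir.glob("*.yaml")}
    return sorted(
        path.name for path in models_dir.glob("*.pth") if path.stem not in yaml_stems
    )
```

Calling it on the models folder from step 2 returns an empty list when every `.pth` file has a matching `.yaml` file.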

*Note that "CoAdapter" is not implemented yet.*

# Gallery

The below results are from ControlNet 1.0.

| Source | Input | Output |
|:-------------------------:|:-------------------------:|:-------------------------:|
| (no preprocessor) | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/bal-source.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/bal-gen.png?raw=true"> |
| (no preprocessor) | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/dog_rel.jpg?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/dog_rel.png?raw=true"> |
|<img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/mahiro_input.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/mahiro_canny.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/mahiro-out.png?raw=true"> |
|<img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/evt_source.jpg?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/evt_hed.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/evt_gen.png?raw=true"> |
|<img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/an-source.jpg?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/an-pose.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/an-gen.png?raw=true"> |
|<img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/sk-b-src.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/sk-b-dep.png?raw=true"> | <img width="256" alt="" src="https://github.com/Mikubill/sd-webui-controlnet/blob/main/samples/sk-b-out.png?raw=true"> |

The below examples are from T2I-Adapter.

From `t2iadapter_color_sd14v1.pth`:

| Source | Input | Output |
|:-------------------------:|:-------------------------:|:-------------------------:|
| <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222947416-ec9e52a4-a1d0-48d8-bb81-736bf636145e.jpeg"> | <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222947435-1164e7d8-d857-42f9-ab10-2d4a4b25f33a.png"> | <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222947557-5520d5f8-88b4-474d-a576-5c9cd3acac3a.png"> |

From `t2iadapter_style_sd14v1.pth`:

| Source | Input | Output |
|:-------------------------:|:-------------------------:|:-------------------------:|
| <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222947416-ec9e52a4-a1d0-48d8-bb81-736bf636145e.jpeg"> | (clip, non-image) | <img width="256" alt="" src="https://user-images.githubusercontent.com/31246794/222965711-7b884c9e-7095-45cb-a91c-e50d296ba3a2.png"> |

# Minimum Requirements

* (Windows) (NVIDIA: Ampere) 4GB - with `--xformers` enabled, and `Low VRAM` mode ticked in the UI, goes up to 768x832

# Multi-ControlNet

This option allows multiple ControlNet inputs for a single generation. To enable this option, change `Multi ControlNet: Max models amount (requires restart)` in the settings. Note that you will need to restart the WebUI for changes to take effect.

<table width="100%">
<tr>
<td width="25%" style="text-align: center">Source A</td>
<td width="25%" style="text-align: center">Source B</td>
<td width="25%" style="text-align: center">Output</td>
</tr>
<tr>
<td width="25%" style="text-align: center"><img src="https://user-images.githubusercontent.com/31246794/220448620-cd3ede92-8d3f-43d5-b771-32dd8417618f.png"></td>
<td width="25%" style="text-align: center"><img src="https://user-images.githubusercontent.com/31246794/220448619-beed9bdb-f6bb-41c2-a7df-aa3ef1f653c5.png"></td>
<td width="25%" style="text-align: center"><img src="https://user-images.githubusercontent.com/31246794/220448613-c99a9e04-0450-40fd-bc73-a9122cefaa2c.png"></td>
</tr>
</table>

# Control Weight/Start/End

Weight is the weight of the ControlNet "influence". It's analogous to prompt attention/emphasis, e.g. (myprompt: 1.2). Technically, it's the factor by which the ControlNet outputs are multiplied before they are merged with the original SD Unet.

Guidance Start/End is the percentage of total steps over which the ControlNet applies (guidance strength = guidance end). It's analogous to prompt editing/shifting, e.g. \[myprompt::0.8\] (it applies from the beginning until 80% of total steps).
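The interaction between weight and guidance start/end can be sketched in a few lines. This is only an illustration under stated assumptions: progress is taken as `step / total_steps`, the control is treated as active while `guidance_start <= progress < guidance_end`, and merging is modelled as adding the scaled ControlNet outputs to the Unet values. The function names and the exact boundary handling are assumptions, not the extension's actual code.

```python
def controlnet_scale(step, total_steps, weight=1.0, guidance_start=0.0, guidance_end=1.0):
    """Return the factor applied to the ControlNet outputs at a sampling step.

    Assumption: the control is active while
    guidance_start <= step / total_steps < guidance_end; outside that
    window the factor is 0 and the ControlNet has no effect.
    """
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    progress = step / total_steps
    active = guidance_start <= progress < guidance_end
    return weight if active else 0.0


def merge(unet_values, control_values, scale):
    """Add the scaled ControlNet outputs to the Unet values, element by element."""
    return [u + scale * c for u, c in zip(unet_values, control_values)]
```

With `weight=1.2` and `guidance_end=0.8` over 10 steps, steps 0 to 7 use a factor of 1.2 and steps 8 and 9 use 0, which mirrors the `[myprompt::0.8]` analogy.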

# Batch Mode

Put any unit into batch mode to activate batch mode for all units. Specify a batch directory for each unit, or use the new textbox in the img2img batch tab as a fallback. Although the textbox is located in the img2img batch tab, you can use it to generate images in the txt2img tab as well.

Note that this feature is only available in the Gradio user interface. Call the APIs as many times as you want for custom batch scheduling.

# API and Script Access

This extension can accept txt2img or img2img tasks via API or external extension call. Note that you may need to enable `Allow other scripts to control this extension` in settings for external calls.

To use the API: start WebUI with the argument `--api` and go to `http://webui-address/docs` for documentation, or check out the [examples](https://github.com/Mikubill/sd-webui-controlnet/blob/main/example/api_txt2img.ipynb).
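A minimal sketch of an API call might look like the following. The `/sdapi/v1/txt2img` route is the WebUI's txt2img endpoint; the ControlNet unit fields shown here (`input_image`, `module`, `model`, `weight`, `guidance_start`, `guidance_end`) and the model name are assumptions based on the extension's example notebook as best recalled, so check `http://webui-address/docs` and the linked examples for the fields your version actually accepts.

```python
import base64
import json
import urllib.request


def build_txt2img_payload(prompt, image_bytes, module="canny", model="control_v11p_sd15_canny"):
    """Build a txt2img request body with one ControlNet unit.

    Assumption: the unit field names and the default model name are
    illustrative and may differ between extension versions.
    """
    unit = {
        "input_image": base64.b64encode(image_bytes).decode("ascii"),
        "module": module,
        "model": model,
        "weight": 1.0,
        "guidance_start": 0.0,
        "guidance_end": 1.0,
    }
    return {
        "prompt": prompt,
        "steps": 20,
        "alwayson_scripts": {"controlnet": {"args": [unit]}},
    }


def post_txt2img(base_url, payload):
    """POST the payload to a WebUI started with --api and return the parsed JSON."""
    request = urllib.request.Request(
        base_url.rstrip("/") + "/sdapi/v1/txt2img",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

For custom batch scheduling, one option is to loop over your input images and call `post_txt2img` once per image.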

To use external calls: check out the [Wiki](https://github.com/Mikubill/sd-webui-controlnet/wiki/API).

# Command Line Arguments

This extension adds these command line arguments to the webui:

```
--controlnet-dir <path to directory with controlnet models>                                ADD a controlnet models directory
--controlnet-annotator-models-path <path to directory with annotator model directories>    SET the directory for annotator models
--no-half-controlnet                                                                       load controlnet models in full precision
--controlnet-preprocessor-cache-size                                                       Cache size for controlnet preprocessor results
--controlnet-loglevel                                                                      Log level for the controlnet extension
```
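To make the shape of these flags concrete, here is a small `argparse` sketch that accepts the same five arguments. It only mirrors the list above for illustration; the value types and the `None` defaults are assumptions, and this is not how the extension itself registers its options.

```python
import argparse


def build_parser():
    """Build a parser that accepts the five ControlNet flags listed above.

    Assumption: types and defaults are guesses for illustration only.
    """
    parser = argparse.ArgumentParser(add_help=False)
    parser.add_argument("--controlnet-dir", type=str, default=None,
                        help="additional directory with ControlNet models")
    parser.add_argument("--controlnet-annotator-models-path", type=str, default=None,
                        help="directory for annotator models")
    parser.add_argument("--no-half-controlnet", action="store_true",
                        help="load ControlNet models in full precision")
    parser.add_argument("--controlnet-preprocessor-cache-size", type=int, default=None,
                        help="cache size for preprocessor results")
    parser.add_argument("--controlnet-loglevel", type=str, default=None,
                        help="log level for the extension")
    return parser


# Example: parse an explicit argument list instead of sys.argv.
args = build_parser().parse_args(["--controlnet-dir", "/models/extra", "--no-half-controlnet"])
```

Dashes in flag names become underscores on the parsed namespace, so the first flag is read back as `args.controlnet_dir`.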

# MacOS Support

Tested with pytorch nightly: https://github.com/Mikubill/sd-webui-controlnet/pull/143#issuecomment-1435058285

To use this extension with mps and normal pytorch, currently you may need to start WebUI with `--no-half`.

# Archive of Deprecated Versions

The previous version (sd-webui-controlnet 1.0) is archived in

https://github.com/lllyasviel/webui-controlnet-v1-archived

Using this version is not a temporary stop of updates. You will stop all updates forever.

Please consider this version if you work with professional studios that require 100% pixel-by-pixel reproduction of all previous results.

# Thanks

This implementation is inspired by kohya-ss/sd-webui-additional-networks

annotator/annotator_path.py

ADDED

@@ -0,0 +1,22 @@

import os
from modules import shared

# Resolution order: saved setting, then command line flag, then the bundled 'downloads' folder.
models_path = shared.opts.data.get('control_net_modules_path', None)
if not models_path:
    models_path = getattr(shared.cmd_opts, 'controlnet_annotator_models_path', None)
if not models_path:
    models_path = os.path.join(os.path.dirname(os.path.abspath(__file__)), 'downloads')

# Relative paths are resolved against the WebUI data directory.
if not os.path.isabs(models_path):
    models_path = os.path.join(shared.data_path, models_path)

clip_vision_path = os.path.join(os.path.dirname(os.path.abspath(__file__)), 'clip_vision')
# clip vision is always inside controlnet "extensions\sd-webui-controlnet"
# and any problem can be solved by removing controlnet and reinstall

models_path = os.path.realpath(models_path)
os.makedirs(models_path, exist_ok=True)
print(f'ControlNet preprocessor location: {models_path}')
# Make sure that the default location is inside controlnet "extensions\sd-webui-controlnet"
# so that any problem can be solved by removing controlnet and reinstall
# if users do not change configs on their own (otherwise users will know what is wrong)
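The fallback order in `annotator/annotator_path.py` can be restated as a pure function, which makes it easier to reason about without a running WebUI. This is a sketch only: `resolve_models_path` and its parameters are hypothetical names standing in for the values the module reads from `shared.opts`, `shared.cmd_opts`, and `shared.data_path`, and the sketch does not create the directory.

```python
import os


def resolve_models_path(setting_value, cli_value, extension_dir, data_path):
    """Pick the annotator models directory using the same fallback order.

    Order: saved setting, then command line value, then
    '<extension_dir>/downloads'. A relative result is joined onto
    data_path, and the final path is normalized with realpath.
    All parameter names are hypothetical stand-ins.
    """
    models_path = setting_value or cli_value or os.path.join(extension_dir, "downloads")
    if not os.path.isabs(models_path):
        models_path = os.path.join(data_path, models_path)
    return os.path.realpath(models_path)
```

An empty string or `None` for the setting falls through to the next source, just as `if not models_path:` does in the module.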

annotator/binary/__init__.py

ADDED

@@ -0,0 +1,14 @@

import cv2


def apply_binary(img, bin_threshold):
    img_gray = cv2.cvtColor(img, cv2.COLOR_RGB2GRAY)

    # A threshold of 0 or 255 means "choose the threshold automatically".
    if bin_threshold == 0 or bin_threshold == 255:
        # Otsu's threshold
        otsu_threshold, img_bin = cv2.threshold(img_gray, 0, 255, cv2.THRESH_BINARY_INV + cv2.THRESH_OTSU)
        print("Otsu threshold:", otsu_threshold)
    else:
        # THRESH_BINARY_INV: pixels above the threshold become 0, the rest become 255.
        _, img_bin = cv2.threshold(img_gray, bin_threshold, 255, cv2.THRESH_BINARY_INV)

    return cv2.cvtColor(img_bin, cv2.COLOR_GRAY2RGB)
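For readers unfamiliar with Otsu's method, which `apply_binary` falls back to when the threshold is 0 or 255, the following pure-Python sketch shows the idea: try every threshold and keep the one that maximizes the between-class variance of the two pixel groups. It works on a flat list of 8-bit gray values and is not OpenCV's implementation, which may break ties differently; the function names are hypothetical.

```python
def otsu_threshold(gray_values):
    """Return the threshold t in 0..255 that maximizes between-class variance.

    Values <= t form the low class and values > t the high class.
    """
    histogram = [0] * 256
    for value in gray_values:
        histogram[value] += 1

    total = len(gray_values)
    total_sum = sum(level * count for level, count in enumerate(histogram))

    best_threshold, best_variance = 0, -1.0
    weight_low, sum_low = 0, 0
    for level in range(256):
        weight_low += histogram[level]
        sum_low += level * histogram[level]
        weight_high = total - weight_low
        if weight_low == 0 or weight_high == 0:
            continue  # one class is empty, so this split is not meaningful
        mean_low = sum_low / weight_low
        mean_high = (total_sum - sum_low) / weight_high
        # Between-class variance, up to a constant factor of 1 / total**2.
        variance = weight_low * weight_high * (mean_low - mean_high) ** 2
        if variance > best_variance:
            best_threshold, best_variance = level, variance
    return best_threshold


def binarize_inverted(gray_values, threshold):
    """Inverted binary threshold: values above the threshold become 0, others 255."""
    return [0 if value > threshold else 255 for value in gray_values]
```

On an image with two clearly separated brightness groups, the returned threshold falls between the groups, so dark pixels map to 255 and bright pixels to 0 after the inverted binarization.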

annotator/canny/__init__.py

ADDED

@@ -0,0 +1,5 @@

import cv2


def apply_canny(img, low_threshold, high_threshold):
    return cv2.Canny(img, low_threshold, high_threshold)

annotator/clip/__init__.py

ADDED

@@ -0,0 +1,39 @@

import torch
from transformers import CLIPProcessor, CLIPVisionModel
from modules import devices
import os
from annotator.annotator_path import clip_vision_path


remote_model_path = "https://huggingface.co/openai/clip-vit-large-patch14/resolve/main/pytorch_model.bin"
clip_path = clip_vision_path
print(f'ControlNet ClipVision location: {clip_path}')

# Loaded lazily on the first call to apply_clip.
clip_proc = None
clip_vision_model = None


def apply_clip(img):
    global clip_proc, clip_vision_model

    if clip_vision_model is None:
        # Download the weights once if they are not already next to the bundled config files.
        modelpath = os.path.join(clip_path, 'pytorch_model.bin')
        if not os.path.exists(modelpath):
            from basicsr.utils.download_util import load_file_from_url
            load_file_from_url(remote_model_path, model_dir=clip_path)

        clip_proc = CLIPProcessor.from_pretrained(clip_path)
        clip_vision_model = CLIPVisionModel.from_pretrained(clip_path)

    with torch.no_grad():
        clip_vision_model = clip_vision_model.to(devices.get_device_for("controlnet"))
        style_for_clip = clip_proc(images=img, return_tensors="pt")['pixel_values']
        style_feat = clip_vision_model(style_for_clip.to(devices.get_device_for("controlnet")))['last_hidden_state']

    return style_feat


def unload_clip_model():
    global clip_proc, clip_vision_model
    # Move the model back to the CPU to free device memory; it stays loaded for reuse.
    if clip_vision_model is not None:
        clip_vision_model.cpu()

annotator/clip_vision/config.json

ADDED

@@ -0,0 +1,171 @@

{
  "_name_or_path": "clip-vit-large-patch14/",
  "architectures": [
    "CLIPModel"
  ],
  "initializer_factor": 1.0,
  "logit_scale_init_value": 2.6592,
  "model_type": "clip",
  "projection_dim": 768,
  "text_config": {
    "_name_or_path": "",
    "add_cross_attention": false,
    "architectures": null,
    "attention_dropout": 0.0,
    "bad_words_ids": null,
    "bos_token_id": 0,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": null,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "dropout": 0.0,
    "early_stopping": false,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": 2,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "hidden_act": "quick_gelu",
    "hidden_size": 768,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "initializer_factor": 1.0,

|

| 36 |

+