diff --git a/.gitattributes b/.gitattributes

index a6344aac8c09253b3b630fb776ae94478aa0275b..38121deaa70df1b695383f1c100a24e90378ecd5 100644

--- a/.gitattributes

+++ b/.gitattributes

@@ -33,3 +33,17 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

*.zip filter=lfs diff=lfs merge=lfs -text

*.zst filter=lfs diff=lfs merge=lfs -text

*tfevents* filter=lfs diff=lfs merge=lfs -text

+exhm/detailer/stable-diffusion-webui-eyemask/models/shape_predictor_68_face_landmarks.dat filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/ComfyUI-nodes-hnmr/examples/workflow_mbw_multi.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/ComfyUI-nodes-hnmr/examples/workflow_xyz.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/latent-upscale/assets/default.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/latent-upscale/assets/img2img_latent_upscale_process.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/latent-upscale/assets/nearest-exact-normal1.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/latent-upscale/assets/nearest-exact-normal2.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/latent-upscale/assets/nearest-exact-simple1.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/latent-upscale/assets/nearest-exact-simple2.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/latent-upscale/assets/nearest-exact-simple8.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/sd-webui-img2txt/sd-webui-img2txt.gif filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/sd-webui-inpaint-anything/images/inpaint_anything_ui_image_1.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/sd-webui-manga-inpainting/manga_inpainting/repo/examples/representative.png filter=lfs diff=lfs merge=lfs -text

+exhm/extensions[[:space:]]img2/sd-webui-real-image-artifacts/examples/before.png filter=lfs diff=lfs merge=lfs -text

diff --git a/exhm/detailer/dddetailer/.gitignore b/exhm/detailer/dddetailer/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..a0e67413961089596eb61d1df811722abb7cc999

--- /dev/null

+++ b/exhm/detailer/dddetailer/.gitignore

@@ -0,0 +1,10 @@

+__pycache__

+*.ckpt

+*.pth

+/tmp

+/outputs

+/log

+.vscode

+/test-cases

+.mypy_cache/

+.ruff_cache/

diff --git a/exhm/detailer/dddetailer/README.md b/exhm/detailer/dddetailer/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..1f37f1650df1af4e0d524b2938b5d42cc748436b

--- /dev/null

+++ b/exhm/detailer/dddetailer/README.md

@@ -0,0 +1,62 @@

+# 돚거 Detection Detailer

+

+Dotgeo(hijack) Detection Detailer

+

+ddetailer with torch 2.0, mmcv 2.0, mmdet 3.0

+

+integrated with [noahge4/ddetailer](https://github.com/noahge4/ddetailer)

+

+Merged the [modified ddetailer](https://arca.live/b/aireal/72297207) by ChatGPT23 of the AI실사 (AI photorealism) channel

+

+## Installation

+

+1. Remove the original ddetailer extension: the `stable-diffusion-webui/extensions/ddetailer` folder.
+2. Remove the original model files: the `stable-diffusion-webui/models/mmdet` folder.
+3. Install from the extensions tab with the URL `https://github.com/Bing-su/dddetailer`.

+

+## Problem

+

+The predictive accuracy of the segmentation model has become very poor.

+

+# Detection Detailer

+An object detection and auto-mask extension for [Stable Diffusion web UI](https://github.com/AUTOMATIC1111/stable-diffusion-webui). See [Installation](https://github.com/dustysys/ddetailer#installation).

+

+

+

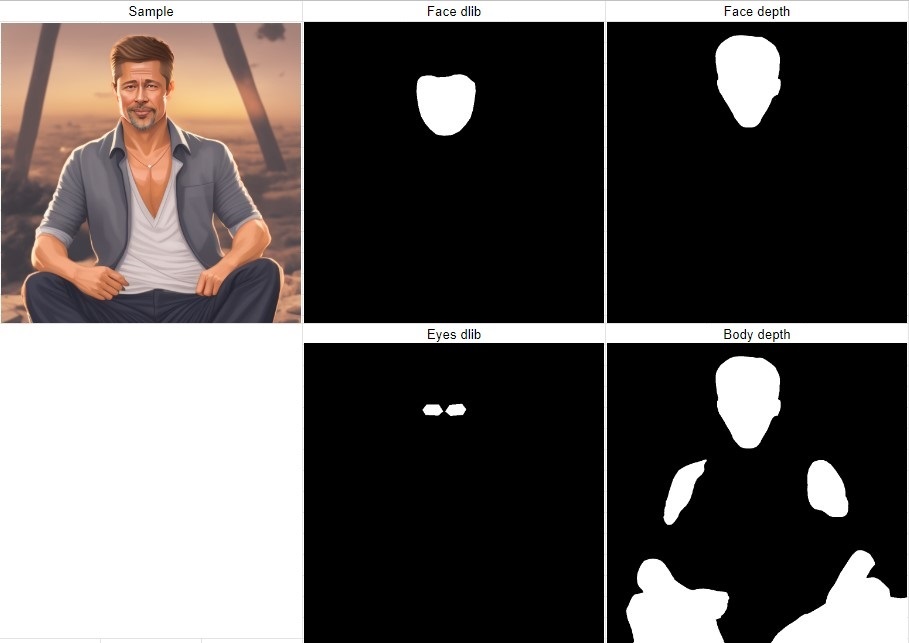

+### Segmentation

+Default models enable person and face instance segmentation.

+

+

+

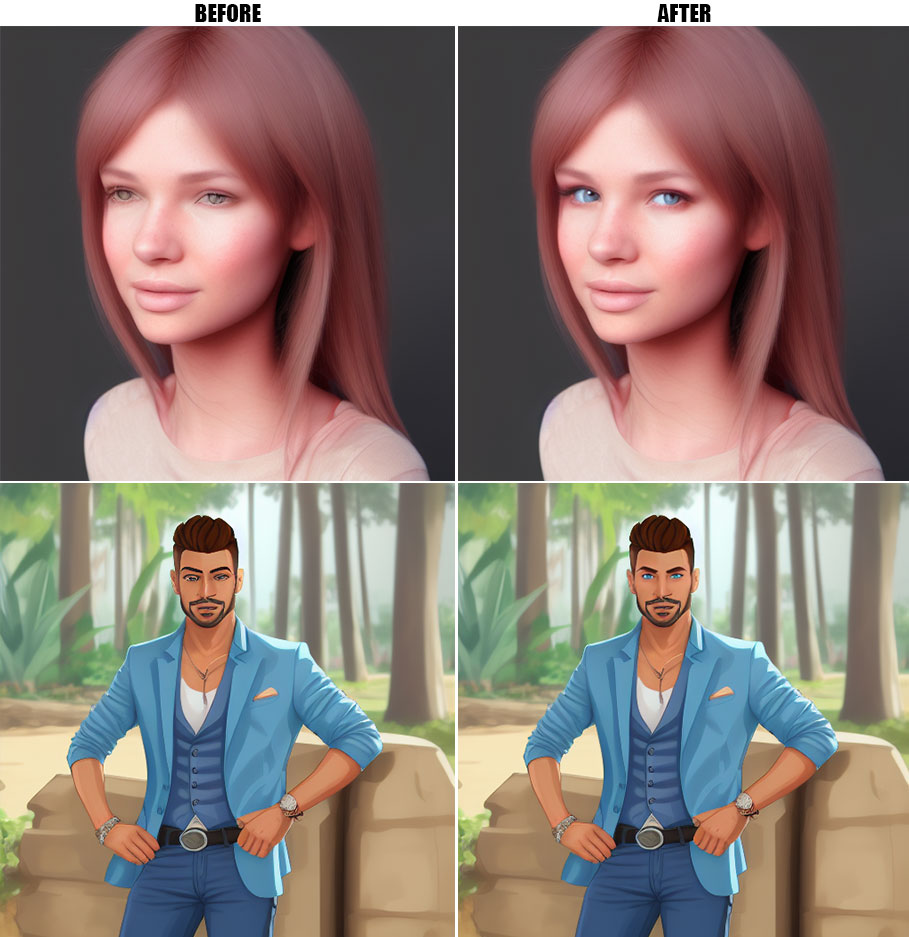

+### Detailing

+With full-resolution inpainting, the extension is handy for improving faces without the hassle of manual masking.

+

+

+

+## Installation

+1. Use `git clone https://github.com/dustysys/ddetailer.git` from your SD web UI `/extensions` folder. Alternatively, install from the extensions tab with url `https://github.com/dustysys/ddetailer`

+2. Start or reload SD web UI.

+

+The models and dependencies should download automatically. To install them manually, follow the [official instructions for installing mmdet](https://mmcv.readthedocs.io/en/latest/get_started/installation.html#install-with-mim-recommended). The models can be [downloaded here](https://huggingface.co/dustysys/ddetailer) and should be placed in `/models/mmdet/bbox` for bounding box models (`anime-face_yolov3`) or `/models/mmdet/segm` for instance segmentation models (`dd-person_mask2former`). See the [MMDetection docs](https://mmdetection.readthedocs.io/en/latest/1_exist_data_model.html) for guidance on training your own models. To use the official MMDetection pretrained models, which are trained for photorealism, see [this comment](https://github.com/dustysys/ddetailer/issues/5#issuecomment-1311231989). See [Troubleshooting](https://github.com/dustysys/ddetailer#troubleshooting) if you encounter issues during installation.

+

+## Usage

+Select Detection Detailer as the script in SD web UI to use the extension. Click 'Generate' to run the script. Here are some tips:

+- `anime-face_yolov3` can detect the bounding box of faces as the primary model while `dd-person_mask2former` isolates the head's silhouette as the secondary model by using the bitwise AND option. Refer to [this example](https://github.com/dustysys/ddetailer/issues/4#issuecomment-1311200268).

+- The dilation factor expands the mask, while the x & y offsets move the mask around.

+- The script is available in txt2img mode as well and can improve the quality of your 10 pulls with moderate settings (low denoise).

+

+## Troubleshooting

+If you see the message `ERROR: Failed building wheel for pycocotools`, follow [these steps](https://github.com/dustysys/ddetailer/issues/1#issuecomment-1309415543).
+
+For any other installation problems, open an [issue](https://github.com/dustysys/ddetailer/issues).

+

+## Credits

+hysts/[anime-face-detector](https://github.com/hysts/anime-face-detector) - Creator of `anime-face_yolov3`, which has impressive performance on a variety of art styles.

+

+skytnt/[anime-segmentation](https://huggingface.co/datasets/skytnt/anime-segmentation) - Synthetic dataset used to train `dd-person_mask2former`.

+

+jerryli27/[AniSeg](https://github.com/jerryli27/AniSeg) - Annotated dataset used to train `dd-person_mask2former`.

+

+open-mmlab/[mmdetection](https://github.com/open-mmlab/mmdetection) - Object detection toolset. `dd-person_mask2former` was trained via transfer learning using their [R-50 Mask2Former instance segmentation model](https://github.com/open-mmlab/mmdetection/tree/master/configs/mask2former#instance-segmentation) as a base.

+

+AUTOMATIC1111/[stable-diffusion-webui](https://github.com/AUTOMATIC1111/stable-diffusion-webui) - Web UI for Stable Diffusion, base application for this extension.

diff --git a/exhm/detailer/dddetailer/config/coco_panoptic.py b/exhm/detailer/dddetailer/config/coco_panoptic.py

new file mode 100644

index 0000000000000000000000000000000000000000..954daaded2f2f5e9cf745506d4bc59ac519eebd3

--- /dev/null

+++ b/exhm/detailer/dddetailer/config/coco_panoptic.py

@@ -0,0 +1,98 @@

+# dataset settings

+dataset_type = "CocoPanopticDataset"

+data_root = 'data/coco/'

+

+# Example of using a different file client
+# Method 1: simply set the data root and let the file I/O module
+# automatically infer the backend from the prefix (LMDB and Memcache are not supported yet)

+

+# data_root = "s3://openmmlab/datasets/detection/coco/"

+

+# Method 2: Use `backend_args`, `file_client_args` in versions before 3.0.0rc6

+# backend_args = dict(

+# backend='petrel',

+# path_mapping=dict({

+# './data/': 's3://openmmlab/datasets/detection/',

+# 'data/': 's3://openmmlab/datasets/detection/'

+# }))

+backend_args = None

+

+train_pipeline = [

+ dict(type="LoadImageFromFile", backend_args=backend_args),

+ dict(type="LoadPanopticAnnotations", backend_args=backend_args),

+ dict(type="Resize", scale=(1333, 800), keep_ratio=True),

+ dict(type="RandomFlip", prob=0.5),

+ dict(type="PackDetInputs"),

+]

+test_pipeline = [

+ dict(type="LoadImageFromFile", backend_args=backend_args),

+ dict(type="Resize", scale=(1333, 800), keep_ratio=True),

+ dict(type="LoadPanopticAnnotations", backend_args=backend_args),

+ dict(

+ type="PackDetInputs",

+ meta_keys=("img_id", "img_path", "ori_shape", "img_shape", "scale_factor"),

+ ),

+]

+

+train_dataloader = dict(

+ batch_size=2,

+ num_workers=2,

+ persistent_workers=True,

+ sampler=dict(type="DefaultSampler", shuffle=True),

+ batch_sampler=dict(type="AspectRatioBatchSampler"),

+ dataset=dict(

+ type=dataset_type,

+ data_root=data_root,

+ ann_file="annotations/panoptic_train2017.json",

+ data_prefix=dict(img="train2017/", seg="annotations/panoptic_train2017/"),

+ filter_cfg=dict(filter_empty_gt=True, min_size=32),

+ pipeline=train_pipeline,

+ backend_args=backend_args,

+ ),

+)

+val_dataloader = dict(

+ batch_size=1,

+ num_workers=2,

+ persistent_workers=True,

+ drop_last=False,

+ sampler=dict(type="DefaultSampler", shuffle=False),

+ dataset=dict(

+ type=dataset_type,

+ data_root=data_root,

+ ann_file="annotations/panoptic_val2017.json",

+ data_prefix=dict(img="val2017/", seg="annotations/panoptic_val2017/"),

+ test_mode=True,

+ pipeline=test_pipeline,

+ backend_args=backend_args,

+ ),

+)

+test_dataloader = val_dataloader

+

+val_evaluator = dict(

+ type="CocoPanopticMetric",

+ ann_file=data_root + "annotations/panoptic_val2017.json",

+ seg_prefix=data_root + "annotations/panoptic_val2017/",

+ backend_args=backend_args,

+)

+test_evaluator = val_evaluator

+

+# inference on test dataset and

+# format the output results for submission.

+# test_dataloader = dict(

+# batch_size=1,

+# num_workers=1,

+# persistent_workers=True,

+# drop_last=False,

+# sampler=dict(type='DefaultSampler', shuffle=False),

+# dataset=dict(

+# type=dataset_type,

+# data_root=data_root,

+# ann_file='annotations/panoptic_image_info_test-dev2017.json',

+# data_prefix=dict(img='test2017/'),

+# test_mode=True,

+# pipeline=test_pipeline))

+# test_evaluator = dict(

+# type='CocoPanopticMetric',

+# format_only=True,

+# ann_file=data_root + 'annotations/panoptic_image_info_test-dev2017.json',

+# outfile_prefix='./work_dirs/coco_panoptic/test')

diff --git a/exhm/detailer/dddetailer/config/mask2former_r50_8xb2-lsj-50e_coco-panoptic.py b/exhm/detailer/dddetailer/config/mask2former_r50_8xb2-lsj-50e_coco-panoptic.py

new file mode 100644

index 0000000000000000000000000000000000000000..b67d9b0310c2ecc8ac0cc692d9fc6a888ea63b3f

--- /dev/null

+++ b/exhm/detailer/dddetailer/config/mask2former_r50_8xb2-lsj-50e_coco-panoptic.py

@@ -0,0 +1,265 @@

+_base_ = ["./coco_panoptic.py"]

+image_size = (1024, 1024)

+batch_augments = [

+ dict(

+ type="BatchFixedSizePad",

+ size=image_size,

+ img_pad_value=0,

+ pad_mask=True,

+ mask_pad_value=0,

+ pad_seg=True,

+ seg_pad_value=255,

+ )

+]

+data_preprocessor = dict(

+ type="DetDataPreprocessor",

+ mean=[123.675, 116.28, 103.53],

+ std=[58.395, 57.12, 57.375],

+ bgr_to_rgb=True,

+ pad_size_divisor=32,

+ pad_mask=True,

+ mask_pad_value=0,

+ pad_seg=True,

+ seg_pad_value=255,

+ batch_augments=batch_augments,

+)

+

+num_things_classes = 1

+num_stuff_classes = 0

+num_classes = num_things_classes + num_stuff_classes

+model = dict(

+ type="Mask2Former",

+ data_preprocessor=data_preprocessor,

+ backbone=dict(

+ type="ResNet",

+ depth=50,

+ num_stages=4,

+ out_indices=(0, 1, 2, 3),

+ frozen_stages=-1,

+ norm_cfg=dict(type="BN", requires_grad=False),

+ norm_eval=True,

+ style="pytorch",

+ init_cfg=dict(type="Pretrained", checkpoint="torchvision://resnet50"),

+ ),

+ panoptic_head=dict(

+ type="Mask2FormerHead",

+ in_channels=[256, 512, 1024, 2048], # pass to pixel_decoder inside

+ strides=[4, 8, 16, 32],

+ feat_channels=256,

+ out_channels=256,

+ num_things_classes=num_things_classes,

+ num_stuff_classes=num_stuff_classes,

+ num_queries=100,

+ num_transformer_feat_level=3,

+ pixel_decoder=dict(

+ type="MSDeformAttnPixelDecoder",

+ num_outs=3,

+ norm_cfg=dict(type="GN", num_groups=32),

+ act_cfg=dict(type="ReLU"),

+ encoder=dict( # DeformableDetrTransformerEncoder

+ num_layers=6,

+ layer_cfg=dict( # DeformableDetrTransformerEncoderLayer

+ self_attn_cfg=dict( # MultiScaleDeformableAttention

+ embed_dims=256,

+ num_heads=8,

+ num_levels=3,

+ num_points=4,

+ dropout=0.0,

+ batch_first=True,

+ ),

+ ffn_cfg=dict(

+ embed_dims=256,

+ feedforward_channels=1024,

+ num_fcs=2,

+ ffn_drop=0.0,

+ act_cfg=dict(type="ReLU", inplace=True),

+ ),

+ ),

+ ),

+ positional_encoding=dict(num_feats=128, normalize=True),

+ ),

+ enforce_decoder_input_project=False,

+ positional_encoding=dict(num_feats=128, normalize=True),

+ transformer_decoder=dict( # Mask2FormerTransformerDecoder

+ return_intermediate=True,

+ num_layers=9,

+ layer_cfg=dict( # Mask2FormerTransformerDecoderLayer

+ self_attn_cfg=dict( # MultiheadAttention

+ embed_dims=256, num_heads=8, dropout=0.0, batch_first=True

+ ),

+ cross_attn_cfg=dict( # MultiheadAttention

+ embed_dims=256, num_heads=8, dropout=0.0, batch_first=True

+ ),

+ ffn_cfg=dict(

+ embed_dims=256,

+ feedforward_channels=2048,

+ num_fcs=2,

+ ffn_drop=0.0,

+ act_cfg=dict(type="ReLU", inplace=True),

+ ),

+ ),

+ init_cfg=None,

+ ),

+ loss_cls=dict(

+ type="CrossEntropyLoss",

+ use_sigmoid=False,

+ loss_weight=2.0,

+ reduction="mean",

+ class_weight=[1.0] * num_classes + [0.1],

+ ),

+ loss_mask=dict(

+ type="CrossEntropyLoss", use_sigmoid=True, reduction="mean", loss_weight=5.0

+ ),

+ loss_dice=dict(

+ type="DiceLoss",

+ use_sigmoid=True,

+ activate=True,

+ reduction="mean",

+ naive_dice=True,

+ eps=1.0,

+ loss_weight=5.0,

+ ),

+ ),

+ panoptic_fusion_head=dict(

+ type="MaskFormerFusionHead",

+ num_things_classes=num_things_classes,

+ num_stuff_classes=num_stuff_classes,

+ loss_panoptic=None,

+ init_cfg=None,

+ ),

+ train_cfg=dict(

+ num_points=12544,

+ oversample_ratio=3.0,

+ importance_sample_ratio=0.75,

+ assigner=dict(

+ type="HungarianAssigner",

+ match_costs=[

+ dict(type="ClassificationCost", weight=2.0),

+ dict(type="CrossEntropyLossCost", weight=5.0, use_sigmoid=True),

+ dict(type="DiceCost", weight=5.0, pred_act=True, eps=1.0),

+ ],

+ ),

+ sampler=dict(type="MaskPseudoSampler"),

+ ),

+ test_cfg=dict(

+ panoptic_on=True,

+ # For now, the dataset does not support

+ # evaluating semantic segmentation metric.

+ semantic_on=False,

+ instance_on=True,

+ # max_per_image is for instance segmentation.

+ max_per_image=100,

+ iou_thr=0.8,

+ # In Mask2Former's panoptic postprocessing,

+ # it will filter mask area where score is less than 0.5 .

+ filter_low_score=True,

+ ),

+ init_cfg=None,

+)

+

+# dataset settings

+data_root = "data/coco/"

+train_pipeline = [

+ dict(

+ type="LoadImageFromFile", to_float32=True, backend_args={{_base_.backend_args}}

+ ),

+ dict(

+ type="LoadPanopticAnnotations",

+ with_bbox=True,

+ with_mask=True,

+ with_seg=True,

+ backend_args={{_base_.backend_args}},

+ ),

+ dict(type="RandomFlip", prob=0.5),

+ # large scale jittering

+ dict(

+ type="RandomResize", scale=image_size, ratio_range=(0.1, 2.0), keep_ratio=True

+ ),

+ dict(

+ type="RandomCrop",

+ crop_size=image_size,

+ crop_type="absolute",

+ recompute_bbox=True,

+ allow_negative_crop=True,

+ ),

+ dict(type="PackDetInputs"),

+]

+

+train_dataloader = dict(dataset=dict(pipeline=train_pipeline))

+

+val_evaluator = [

+ dict(

+ type="CocoPanopticMetric",

+ ann_file=data_root + "annotations/panoptic_val2017.json",

+ seg_prefix=data_root + "annotations/panoptic_val2017/",

+ backend_args={{_base_.backend_args}},

+ ),

+ dict(

+ type="CocoMetric",

+ ann_file=data_root + "annotations/instances_val2017.json",

+ metric=["bbox", "segm"],

+ backend_args={{_base_.backend_args}},

+ ),

+]

+test_evaluator = val_evaluator

+

+# optimizer

+embed_multi = dict(lr_mult=1.0, decay_mult=0.0)

+optim_wrapper = dict(

+ type="OptimWrapper",

+ optimizer=dict(

+ type="AdamW", lr=0.0001, weight_decay=0.05, eps=1e-8, betas=(0.9, 0.999)

+ ),

+ paramwise_cfg=dict(

+ custom_keys={

+ "backbone": dict(lr_mult=0.1, decay_mult=1.0),

+ "query_embed": embed_multi,

+ "query_feat": embed_multi,

+ "level_embed": embed_multi,

+ },

+ norm_decay_mult=0.0,

+ ),

+ clip_grad=dict(max_norm=0.01, norm_type=2),

+)

+

+# learning policy

+max_iters = 368750

+param_scheduler = dict(

+ type="MultiStepLR",

+ begin=0,

+ end=max_iters,

+ by_epoch=False,

+ milestones=[327778, 355092],

+ gamma=0.1,

+)

+

+# Up to iteration 365000, we evaluate every 5000 iterations.
+# From iteration 365001 on, the evaluation interval becomes 368750 iterations,
+# which means that we evaluate only once more, at the end of training.

+interval = 5000

+dynamic_intervals = [(max_iters // interval * interval + 1, max_iters)]

+train_cfg = dict(

+ type="IterBasedTrainLoop",

+ max_iters=max_iters,

+ val_interval=interval,

+ dynamic_intervals=dynamic_intervals,

+)

+val_cfg = dict(type="ValLoop")

+test_cfg = dict(type="TestLoop")

+

+default_hooks = dict(

+ checkpoint=dict(

+ type="CheckpointHook",

+ by_epoch=False,

+ save_last=True,

+ max_keep_ckpts=3,

+ interval=interval,

+ )

+)

+log_processor = dict(type="LogProcessor", window_size=50, by_epoch=False)

+

+# Default setting for scaling LR automatically

+# - `enable` means enable scaling LR automatically

+# or not by default.

+# - `base_batch_size` = (8 GPUs) x (2 samples per GPU).

+auto_scale_lr = dict(enable=False, base_batch_size=16)

diff --git a/exhm/detailer/dddetailer/config/mmdet_anime-face_yolov3.py b/exhm/detailer/dddetailer/config/mmdet_anime-face_yolov3.py

new file mode 100644

index 0000000000000000000000000000000000000000..5d384b556c113baf491d96ea47317d3133eae9b5

--- /dev/null

+++ b/exhm/detailer/dddetailer/config/mmdet_anime-face_yolov3.py

@@ -0,0 +1,177 @@

+# _base_ = ["../_base_/schedules/schedule_1x.py", "../_base_/default_runtime.py"]

+# model settings

+data_preprocessor = dict(

+ type="DetDataPreprocessor",

+ mean=[0, 0, 0],

+ std=[255.0, 255.0, 255.0],

+ bgr_to_rgb=True,

+ pad_size_divisor=32,

+)

+model = dict(

+ type="YOLOV3",

+ data_preprocessor=data_preprocessor,

+ backbone=dict(

+ type="Darknet",

+ depth=53,

+ out_indices=(3, 4, 5),

+ init_cfg=dict(type="Pretrained", checkpoint="open-mmlab://darknet53"),

+ ),

+ neck=dict(

+ type="YOLOV3Neck",

+ num_scales=3,

+ in_channels=[1024, 512, 256],

+ out_channels=[512, 256, 128],

+ ),

+ bbox_head=dict(

+ type="YOLOV3Head",

+ num_classes=1,

+ in_channels=[512, 256, 128],

+ out_channels=[1024, 512, 256],

+ anchor_generator=dict(

+ type="YOLOAnchorGenerator",

+ base_sizes=[

+ [(116, 90), (156, 198), (373, 326)],

+ [(30, 61), (62, 45), (59, 119)],

+ [(10, 13), (16, 30), (33, 23)],

+ ],

+ strides=[32, 16, 8],

+ ),

+ bbox_coder=dict(type="YOLOBBoxCoder"),

+ featmap_strides=[32, 16, 8],

+ loss_cls=dict(

+ type="CrossEntropyLoss", use_sigmoid=True, loss_weight=1.0, reduction="sum"

+ ),

+ loss_conf=dict(

+ type="CrossEntropyLoss", use_sigmoid=True, loss_weight=1.0, reduction="sum"

+ ),

+ loss_xy=dict(

+ type="CrossEntropyLoss", use_sigmoid=True, loss_weight=2.0, reduction="sum"

+ ),

+ loss_wh=dict(type="MSELoss", loss_weight=2.0, reduction="sum"),

+ ),

+ # training and testing settings

+ train_cfg=dict(

+ assigner=dict(

+ type="GridAssigner", pos_iou_thr=0.5, neg_iou_thr=0.5, min_pos_iou=0

+ )

+ ),

+ test_cfg=dict(

+ nms_pre=1000,

+ min_bbox_size=0,

+ score_thr=0.05,

+ conf_thr=0.005,

+ nms=dict(type="nms", iou_threshold=0.45),

+ max_per_img=100,

+ ),

+)

+# dataset settings

+dataset_type = "CocoDataset"

+data_root = "data/coco/"

+

+# Example of using a different file client
+# Method 1: simply set the data root and let the file I/O module
+# automatically infer the backend from the prefix (LMDB and Memcache are not supported yet)

+

+# data_root = 's3://openmmlab/datasets/detection/coco/'

+

+# Method 2: Use `backend_args`, `file_client_args` in versions before 3.0.0rc6

+# backend_args = dict(

+# backend='petrel',

+# path_mapping=dict({

+# './data/': 's3://openmmlab/datasets/detection/',

+# 'data/': 's3://openmmlab/datasets/detection/'

+# }))

+backend_args = None

+

+train_pipeline = [

+ dict(type="LoadImageFromFile", backend_args=backend_args),

+ dict(type="LoadAnnotations", with_bbox=True),

+ dict(

+ type="Expand",

+ mean=data_preprocessor["mean"],

+ to_rgb=data_preprocessor["bgr_to_rgb"],

+ ratio_range=(1, 2),

+ ),

+ dict(

+ type="MinIoURandomCrop",

+ min_ious=(0.4, 0.5, 0.6, 0.7, 0.8, 0.9),

+ min_crop_size=0.3,

+ ),

+ dict(type="RandomResize", scale=[(320, 320), (608, 608)], keep_ratio=True),

+ dict(type="RandomFlip", prob=0.5),

+ dict(type="PhotoMetricDistortion"),

+ dict(type="PackDetInputs"),

+]

+test_pipeline = [

+ dict(type="LoadImageFromFile", backend_args=backend_args),

+ dict(type="Resize", scale=(608, 608), keep_ratio=True),

+ dict(type="LoadAnnotations", with_bbox=True),

+ dict(

+ type="PackDetInputs",

+ meta_keys=("img_id", "img_path", "ori_shape", "img_shape", "scale_factor"),

+ ),

+]

+

+train_dataloader = dict(

+ batch_size=8,

+ num_workers=4,

+ persistent_workers=True,

+ sampler=dict(type="DefaultSampler", shuffle=True),

+ batch_sampler=dict(type="AspectRatioBatchSampler"),

+ dataset=dict(

+ type=dataset_type,

+ data_root=data_root,

+ ann_file="annotations/instances_train2017.json",

+ data_prefix=dict(img="train2017/"),

+ filter_cfg=dict(filter_empty_gt=True, min_size=32),

+ pipeline=train_pipeline,

+ backend_args=backend_args,

+ ),

+)

+val_dataloader = dict(

+ batch_size=1,

+ num_workers=2,

+ persistent_workers=True,

+ drop_last=False,

+ sampler=dict(type="DefaultSampler", shuffle=False),

+ dataset=dict(

+ type=dataset_type,

+ data_root=data_root,

+ ann_file="annotations/instances_val2017.json",

+ data_prefix=dict(img="val2017/"),

+ test_mode=True,

+ pipeline=test_pipeline,

+ backend_args=backend_args,

+ ),

+)

+test_dataloader = val_dataloader

+

+val_evaluator = dict(

+ type="CocoMetric",

+ ann_file=data_root + "annotations/instances_val2017.json",

+ metric="bbox",

+ backend_args=backend_args,

+)

+test_evaluator = val_evaluator

+

+train_cfg = dict(max_epochs=273, val_interval=7)

+

+# optimizer

+optim_wrapper = dict(

+ type="OptimWrapper",

+ optimizer=dict(type="SGD", lr=0.001, momentum=0.9, weight_decay=0.0005),

+ clip_grad=dict(max_norm=35, norm_type=2),

+)

+

+# learning policy

+param_scheduler = [

+ dict(type="LinearLR", start_factor=0.1, by_epoch=False, begin=0, end=2000),

+ dict(type="MultiStepLR", by_epoch=True, milestones=[218, 246], gamma=0.1),

+]

+

+default_hooks = dict(checkpoint=dict(type="CheckpointHook", interval=7))

+

+# NOTE: `auto_scale_lr` is for automatically scaling LR,

+# USER SHOULD NOT CHANGE ITS VALUES.

+# base_batch_size = (8 GPUs) x (8 samples per GPU)

+auto_scale_lr = dict(base_batch_size=64)

diff --git a/exhm/detailer/dddetailer/config/mmdet_dd-person_mask2former.py b/exhm/detailer/dddetailer/config/mmdet_dd-person_mask2former.py

new file mode 100644

index 0000000000000000000000000000000000000000..1b2e94f4aa79cb816aaec390c9891eb410584ce8

--- /dev/null

+++ b/exhm/detailer/dddetailer/config/mmdet_dd-person_mask2former.py

@@ -0,0 +1,105 @@

+_base_ = ["./mask2former_r50_8xb2-lsj-50e_coco-panoptic.py"]

+

+num_things_classes = 1

+num_stuff_classes = 0

+num_classes = num_things_classes + num_stuff_classes

+image_size = (1024, 1024)

+batch_augments = [

+ dict(

+ type="BatchFixedSizePad",

+ size=image_size,

+ img_pad_value=0,

+ pad_mask=True,

+ mask_pad_value=0,

+ pad_seg=False,

+ )

+]

+data_preprocessor = dict(

+ type="DetDataPreprocessor",

+ mean=[123.675, 116.28, 103.53],

+ std=[58.395, 57.12, 57.375],

+ bgr_to_rgb=True,

+ pad_size_divisor=32,

+ pad_mask=True,

+ mask_pad_value=0,

+ pad_seg=False,

+ batch_augments=batch_augments,

+)

+model = dict(

+ data_preprocessor=data_preprocessor,

+ panoptic_head=dict(

+ num_things_classes=num_things_classes,

+ num_stuff_classes=num_stuff_classes,

+ loss_cls=dict(class_weight=[1.0] * num_classes + [0.1]),

+ ),

+ panoptic_fusion_head=dict(

+ num_things_classes=num_things_classes, num_stuff_classes=num_stuff_classes

+ ),

+ test_cfg=dict(panoptic_on=False),

+)

+

+# dataset settings

+train_pipeline = [

+ dict(type="LoadImageFromFile", to_float32=True, backend_args=None),

+ dict(type="LoadAnnotations", with_bbox=True, with_mask=True),

+ dict(type="RandomFlip", prob=0.5),

+ # large scale jittering

+ dict(

+ type="RandomResize",

+ scale=image_size,

+ ratio_range=(0.1, 2.0),

+ resize_type="Resize",

+ keep_ratio=True,

+ ),

+ dict(

+ type="RandomCrop",

+ crop_size=image_size,

+ crop_type="absolute",

+ recompute_bbox=True,

+ allow_negative_crop=True,

+ ),

+ dict(type="FilterAnnotations", min_gt_bbox_wh=(1e-5, 1e-5), by_mask=True),

+ dict(type="PackDetInputs"),

+]

+

+test_pipeline = [

+ dict(type="LoadImageFromFile", to_float32=True, backend_args=None),

+ dict(type="Resize", scale=(1333, 800), keep_ratio=True),

+ # If you have no ground-truth annotations, delete the following LoadAnnotations step

+ dict(type="LoadAnnotations", with_bbox=True, with_mask=True),

+ dict(

+ type="PackDetInputs",

+ meta_keys=("img_id", "img_path", "ori_shape", "img_shape", "scale_factor"),

+ ),

+]

+

+dataset_type = "CocoDataset"

+data_root = "data/coco/"

+

+train_dataloader = dict(

+ dataset=dict(

+ type=dataset_type,

+ ann_file="annotations/instances_train2017.json",

+ data_prefix=dict(img="train2017/"),

+ pipeline=train_pipeline,

+ )

+)

+val_dataloader = dict(

+ dataset=dict(

+ type=dataset_type,

+ ann_file="annotations/instances_val2017.json",

+ data_prefix=dict(img="val2017/"),

+ pipeline=test_pipeline,

+ )

+)

+test_dataloader = val_dataloader

+

+val_evaluator = dict(

+ _delete_=True,

+ type="CocoMetric",

+ ann_file=data_root + "annotations/instances_val2017.json",

+ metric=["bbox", "segm"],

+ format_only=False,

+ backend_args=None,

+)

+test_evaluator = val_evaluator

diff --git a/exhm/detailer/dddetailer/install.py b/exhm/detailer/dddetailer/install.py

new file mode 100644

index 0000000000000000000000000000000000000000..7954c7f36e79dd615a32d03f67620e386606be8b

--- /dev/null

+++ b/exhm/detailer/dddetailer/install.py

@@ -0,0 +1,71 @@

+import sys

+from pathlib import Path

+from textwrap import dedent

+

+from packaging import version

+

+import launch

+from launch import is_installed, run, run_pip

+

+try:
+    skip_install = launch.args.skip_install
+except Exception:
+    skip_install = False

+

+python = sys.executable

+

+def check_ddetailer() -> bool:
+    try:
+        from modules.paths import extensions_dir
+
+        extensions_path = Path(extensions_dir)
+    except ImportError:
+        from modules.paths import data_path
+
+        extensions_path = Path(data_path, "extensions")
+
+    ddetailer_exists = any(p.is_dir() and p.name.startswith("ddetailer") for p in extensions_path.iterdir())
+    return not ddetailer_exists

+

+

+def check_install() -> bool:
+    try:
+        import mmcv
+        import mmdet
+        from mmdet.evaluation import get_classes
+    except Exception:
+        return False
+
+    if not hasattr(mmcv, "__version__") or not hasattr(mmdet, "__version__"):
+        return False
+
+    v1 = version.parse(mmcv.__version__) >= version.parse("2.0.0")
+    v2 = version.parse(mmdet.__version__) >= version.parse("3.0.0")
+    return v1 and v2

+

+

+def install():
+    if not is_installed("pycocotools"):
+        run(f'"{python}" -m pip install pycocotools', live=True)
+
+    if not is_installed("mim"):
+        run_pip("install openmim", desc="openmim")
+
+    if not check_install():
+        print("Uninstalling mmcv mmdet... (if installed)")
+        run(f'"{python}" -m pip uninstall -y mmcv mmcv-full mmdet mmengine', live=True)
+        print("Installing mmcv mmdet...")
+        run(f'"{python}" -m mim install -U "mmcv>=2.0.0" "mmdet>=3.0.0"', live=True)

+

+

+if not check_ddetailer():
+    message = """
+    [-] dddetailer: Please remove the following:
+    1. the original ddetailer extension - "stable-diffusion-webui/extensions/ddetailer" folder.
+    2. original model files - "stable-diffusion-webui/models/mmdet" folder.
+    """
+    message = dedent(message)
+    raise RuntimeError(message)

+

+if not skip_install:

+ install()

diff --git a/exhm/detailer/dddetailer/misc/ddetailer_example_1.png b/exhm/detailer/dddetailer/misc/ddetailer_example_1.png

new file mode 100644

index 0000000000000000000000000000000000000000..7d0d9ec848c4d2c1cddcc0faefd2353c4227d04b

Binary files /dev/null and b/exhm/detailer/dddetailer/misc/ddetailer_example_1.png differ

diff --git a/exhm/detailer/dddetailer/misc/ddetailer_example_2.png b/exhm/detailer/dddetailer/misc/ddetailer_example_2.png

new file mode 100644

index 0000000000000000000000000000000000000000..a6afadc683a6a77e7d9e092ea264b5e11e362f06

Binary files /dev/null and b/exhm/detailer/dddetailer/misc/ddetailer_example_2.png differ

diff --git a/exhm/detailer/dddetailer/misc/ddetailer_example_3.gif b/exhm/detailer/dddetailer/misc/ddetailer_example_3.gif

new file mode 100644

index 0000000000000000000000000000000000000000..74c0affa37784518689f150fb619623847b03d94

Binary files /dev/null and b/exhm/detailer/dddetailer/misc/ddetailer_example_3.gif differ

diff --git a/exhm/detailer/dddetailer/pyproject.toml b/exhm/detailer/dddetailer/pyproject.toml

new file mode 100644

index 0000000000000000000000000000000000000000..4c2570653064ed1657cf84690c65f7f61beb6f75

--- /dev/null

+++ b/exhm/detailer/dddetailer/pyproject.toml

@@ -0,0 +1,29 @@

+[project]

+name = "dddetailer"

+version = "23.8.0"

+description = "An object detection and auto-mask extension for Stable Diffusion web UI."

+authors = [

+ {name = "dowon", email = "ks2515@naver.com"},

+]

+requires-python = ">=3.8,<3.12"

+readme = "README.md"

+license = {text = "MIT"}

+

+[project.urls]

+repository = "https://github.com/Bing-su/dddetailer"

+

+[tool.isort]

+profile = "black"

+known_first_party = ["modules", "launch"]

+

+[tool.black]

+line-length = 120

+

+[tool.ruff]

+select = ["A", "B", "C4", "E", "F", "I001", "ISC", "N", "PIE", "PT", "RET", "SIM", "UP", "W"]

+ignore = ["B008", "B905", "E501"]

+unfixable = ["F401"]

+line-length = 120

+

+[tool.ruff.isort]

+known-first-party = ["modules", "launch"]

diff --git a/exhm/detailer/dddetailer/scripts/dddetailer.py b/exhm/detailer/dddetailer/scripts/dddetailer.py

new file mode 100644

index 0000000000000000000000000000000000000000..dfd3b1f80cbcaa7683bf1ef00216f586e0582e77

--- /dev/null

+++ b/exhm/detailer/dddetailer/scripts/dddetailer.py

@@ -0,0 +1,1057 @@

+import os

+import sys

+from copy import copy

+from pathlib import Path

+from textwrap import dedent

+

+import cv2

+import gradio as gr

+import numpy as np

+from basicsr.utils.download_util import load_file_from_url

+from packaging.version import parse

+from PIL import Image

+

+from launch import run

+from modules import (

+ devices,

+ images,

+ modelloader,

+ processing,

+ script_callbacks,

+ scripts,

+ shared,

+)

+from modules.paths import data_path, models_path

+from modules.processing import (

+ Processed,

+ StableDiffusionProcessingImg2Img,

+ StableDiffusionProcessingTxt2Img,

+)

+from modules.sd_models import model_hash

+from modules.shared import cmd_opts, opts, state

+

+DETECTION_DETAILER = "Detection Detailer"

+dd_models_path = os.path.join(models_path, "mmdet")

+python = sys.executable

+

+

+def check_ddetailer() -> bool:
+    try:
+        from modules.paths import extensions_dir
+
+        extensions_path = Path(extensions_dir)
+    except ImportError:
+        from modules.paths import data_path
+
+        extensions_path = Path(data_path, "extensions")
+
+    ddetailer_exists = any(p.is_dir() and p.name.startswith("ddetailer") for p in extensions_path.iterdir())
+    return not ddetailer_exists

+

+

+def check_install() -> bool:

+    try:

+        import mmcv

+        import mmdet

+        from mmdet.evaluation import get_classes

+    except Exception:

+        return False

+

+    if not hasattr(mmcv, "__version__") or not hasattr(mmdet, "__version__"):

+        return False

+

+    v1 = parse(mmcv.__version__) >= parse("2.0.0")

+    v2 = parse(mmdet.__version__) >= parse("3.0.0")

+    return v1 and v2

+

+

+def list_models(model_path):

+    model_list = modelloader.load_models(model_path=model_path, ext_filter=[".pth"])

+

+    def modeltitle(path, shorthash):

+        abspath = os.path.abspath(path)

+

+        if abspath.startswith(model_path):

+            name = abspath.replace(model_path, "")

+        else:

+            name = os.path.basename(path)

+

+        if name.startswith(("\\", "/")):

+            name = name[1:]

+

+        shortname = os.path.splitext(name.replace("/", "_").replace("\\", "_"))[0]

+

+        return f"{name} [{shorthash}]", shortname

+

+    models = []

+    for filename in model_list:

+        h = model_hash(filename)

+        title, short_model_name = modeltitle(filename, h)

+        models.append(title)

+

+    return models

+

+

+def startup():

+    if not check_ddetailer():

+        message = """

+        [-] dddetailer: dddetailer doesn't work with the original ddetailer extension.

+        dddetailer는 원본 ddetailer 확장이 있을 때 동작하지 않습니다.

+        """

+        raise RuntimeError(dedent(message))

+

+    if not check_install():

+        run(f'"{python}" -m pip uninstall -y mmcv mmcv-full mmdet mmengine')

+        run(f'"{python}" -m pip install openmim', desc="Installing openmim", errdesc="Couldn't install openmim")

+        # Quote the version specifiers so the shell does not treat ">" as output redirection.

+        run(

+            f'"{python}" -m mim install "mmcv>=2.0.0" "mmdet>=3.0.0"',

+            desc="Installing mmdet",

+            errdesc="Couldn't install mmdet",

+        )

+

+    if len(list_models(dd_models_path)) == 0:

+        print("No detection models found, downloading...")

+        bbox_path = os.path.join(dd_models_path, "bbox")

+        segm_path = os.path.join(dd_models_path, "segm")

+        # bbox

+        load_file_from_url(

+            "https://huggingface.co/dustysys/ddetailer/resolve/main/mmdet/bbox/mmdet_anime-face_yolov3.pth",

+            bbox_path,

+        )

+        load_file_from_url(

+            "https://raw.githubusercontent.com/Bing-su/dddetailer/master/config/mmdet_anime-face_yolov3.py",

+            bbox_path,

+        )

+        # segm

+        load_file_from_url(

+            "https://github.com/Bing-su/dddetailer/releases/download/segm/mmdet_dd-person_mask2former.pth",

+            segm_path,

+        )

+        load_file_from_url(

+            "https://raw.githubusercontent.com/Bing-su/dddetailer/master/config/mmdet_dd-person_mask2former.py",

+            segm_path,

+        )

+        load_file_from_url(

+            "https://raw.githubusercontent.com/Bing-su/dddetailer/master/config/mask2former_r50_8xb2-lsj-50e_coco-panoptic.py",

+            segm_path,

+        )

+        load_file_from_url(

+            "https://raw.githubusercontent.com/Bing-su/dddetailer/master/config/coco_panoptic.py",

+            segm_path,

+        )

+

+

+startup()

+

+

+def gr_show(visible=True):

+    return {"visible": visible, "__type__": "update"}

+

+

+def ddetailer_extra_generation_params(

+    dd_prompt,

+    dd_neg_prompt,

+    dd_model_a,

+    dd_conf_a,

+    dd_dilation_factor_a,

+    dd_offset_x_a,

+    dd_offset_y_a,

+    dd_preprocess_b,

+    dd_bitwise_op,

+    dd_model_b,

+    dd_conf_b,

+    dd_dilation_factor_b,

+    dd_offset_x_b,

+    dd_offset_y_b,

+    dd_mask_blur,

+    dd_denoising_strength,

+    dd_inpaint_full_res,

+    dd_inpaint_full_res_padding,

+    dd_cfg_scale,

+):

+    params = {

+        "DDetailer prompt": dd_prompt,

+        "DDetailer neg prompt": dd_neg_prompt,

+        "DDetailer model a": dd_model_a,

+        "DDetailer conf a": dd_conf_a,

+        "DDetailer dilation a": dd_dilation_factor_a,

+        "DDetailer offset x a": dd_offset_x_a,

+        "DDetailer offset y a": dd_offset_y_a,

+        "DDetailer preprocess b": dd_preprocess_b,

+        "DDetailer bitwise": dd_bitwise_op,

+        "DDetailer model b": dd_model_b,

+        "DDetailer conf b": dd_conf_b,

+        "DDetailer dilation b": dd_dilation_factor_b,

+        "DDetailer offset x b": dd_offset_x_b,

+        "DDetailer offset y b": dd_offset_y_b,

+        "DDetailer mask blur": dd_mask_blur,

+        "DDetailer denoising": dd_denoising_strength,

+        "DDetailer inpaint full": dd_inpaint_full_res,

+        "DDetailer inpaint padding": dd_inpaint_full_res_padding,

+        "DDetailer cfg": dd_cfg_scale,

+        "Script": DETECTION_DETAILER,

+    }

+    if not dd_prompt:

+        params.pop("DDetailer prompt")

+    if not dd_neg_prompt:

+        params.pop("DDetailer neg prompt")

+    return params

+

+

+class DetectionDetailerScript(scripts.Script):

+ def title(self):

+ return DETECTION_DETAILER

+

+ def show(self, is_img2img):

+ return True

+

+ def ui(self, is_img2img):

+ import modules.ui

+

+ model_list = list_models(dd_models_path)

+ model_list.insert(0, "None")

+ if is_img2img:

+ info = gr.HTML(

+ 'Recommended settings: Use from inpaint tab, inpaint at full res ON, denoise < 0.5'

+ )

+ else:

+ info = gr.HTML("")

+ dd_prompt = None

+ with gr.Group():

+ if not is_img2img:

+ with gr.Row():

+ dd_prompt = gr.Textbox(

+ label="dd_prompt",

+ elem_id="t2i_dd_prompt",

+ show_label=False,

+ lines=3,

+ placeholder="Ddetailer Prompt",

+ )

+

+ with gr.Row():

+ dd_neg_prompt = gr.Textbox(

+ label="dd_neg_prompt",

+ elem_id="t2i_dd_neg_prompt",

+ show_label=False,

+ lines=2,

+ placeholder="Ddetailer Negative prompt",

+ )

+

+ with gr.Row():

+ dd_model_a = gr.Dropdown(

+ label="Primary detection model (A)",

+ choices=model_list,

+ value="None",

+ visible=True,

+ type="value",

+ )

+

+ with gr.Row():

+ dd_conf_a = gr.Slider(

+ label="Detection confidence threshold % (A)",

+ minimum=0,

+ maximum=100,

+ step=1,

+ value=30,

+ visible=True,

+ )

+ dd_dilation_factor_a = gr.Slider(

+ label="Dilation factor (A)",

+ minimum=0,

+ maximum=255,

+ step=1,

+ value=4,

+ visible=True,

+ )

+

+ with gr.Row():

+ dd_offset_x_a = gr.Slider(

+ label="X offset (A)",

+ minimum=-200,

+ maximum=200,

+ step=1,

+ value=0,

+ visible=True,

+ )

+ dd_offset_y_a = gr.Slider(

+ label="Y offset (A)",

+ minimum=-200,

+ maximum=200,

+ step=1,

+ value=0,

+ visible=True,

+ )

+

+ with gr.Row():

+ dd_preprocess_b = gr.Checkbox(

+ label="Inpaint model B detections before model A runs",

+ value=False,

+ visible=True,

+ )

+ dd_bitwise_op = gr.Radio(

+ label="Bitwise operation",

+ choices=["None", "A&B", "A-B"],

+ value="None",

+ visible=True,

+ )

+

+ br = gr.HTML("Recommended settings: Use from inpaint tab, inpaint at full res ON, denoise < 0.5")

+ else:

+ info = gr.HTML("")

+ with gr.Group():

+ with gr.Row():

+ dd_model_a = gr.Dropdown(label="Primary detection model (A)", choices=model_list,value = "None", visible=True, type="value")

+

+ with gr.Row():

+ dd_conf_a = gr.Slider(label='Detection confidence threshold % (A)', minimum=0, maximum=100, step=1, value=30, visible=False)

+ dd_dilation_factor_a = gr.Slider(label='Dilation factor (A)', minimum=0, maximum=255, step=1, value=4, visible=False)

+

+ with gr.Row():

+ dd_offset_x_a = gr.Slider(label='X offset (A)', minimum=-200, maximum=200, step=1, value=0, visible=False)

+ dd_offset_y_a = gr.Slider(label='Y offset (A)', minimum=-200, maximum=200, step=1, value=0, visible=False)

+

+ with gr.Row():

+ dd_preprocess_b = gr.Checkbox(label='Inpaint model B detections before model A runs', value=False, visible=False)

+ dd_bitwise_op = gr.Radio(label='Bitwise operation', choices=['None', 'A&B', 'A-B'], value="None", visible=False)

+

+ br = gr.HTML("I2I All process target script")

+ enable_script_names = gr.Textbox(label="Enable Script(Extension)", elem_id="enable_script_names", value='dynamic_thresholding;dynamic_prompting',show_label=True, lines=1, placeholder="Extension python file name(ex - dynamic_thresholding;dynamic_prompting)")

+

+ with gr.Accordion("Outpainting", open=False, elem_id='ddsd_outpaint_acc'):

+ with gr.Column():

+ outpaint_target_info = gr.HTML('I2I Outpainting')

+ disable_outpaint = gr.Checkbox(label='Disable Outpaint', elem_id='disable_outpaint', value=True, visible=True)

+ outpaint_sample = gr.Dropdown(label='Outpaint Sampling', elem_id='outpaint_sample', choices=sample_list, value=sample_list[0], visible=False, type="value")

+ with gr.Tabs(elem_id = 'outpaint_arguments', visible=False) as outpaint_tabs_acc:

+ for outpaint_index in range(shared.opts.data.get('outpaint_count', 1)):

+ with gr.Tab(f'Outpaint {outpaint_index + 1} Argument', elem_id=f'outpaint_{outpaint_index+1}_argument_tab'):

+ outpaint_pixels = gr.Slider(label=f'Outpaint {outpaint_index+1} Pixels', minimum=0, maximum=256, value=64, step=16)

+ outpaint_direction = gr.Radio(choices=['None', 'Left','Right','Up','Down'], value='None', label=f'Outpaint {outpaint_index+1} Direction')

+ with gr.Row():

+ outpaint_positive = gr.Textbox(label=f'Positive {outpaint_index+1} Prompt', show_label=True, lines=2, placeholder='Outpaint Positive Prompt(Empty is Original)')

+ outpaint_negative = gr.Textbox(label=f'Negative {outpaint_index+1} Prompt', show_label=True, lines=2, placeholder='Outpaint Negative Prompt(Empty is Original)')

+ outpaint_denoise = gr.Slider(label=f'Outpaint {outpaint_index+1} Denoise', minimum=0, maximum=1.0, step=0.01, value=0.8)

+ outpaint_cfg = gr.Slider(label=f'Outpaint {outpaint_index+1} CFG(0 To Original)', minimum=0, maximum=500, step=0.5, value=0)

+ outpaint_steps = gr.Slider(label=f'Outpaint {outpaint_index+1} Steps(0 To Original)', minimum=0, maximum=150, step=1, value=0)

+ outpaint_positive_list.append(outpaint_positive)

+ outpaint_negative_list.append(outpaint_negative)

+ outpaint_denoise_list.append(outpaint_denoise)

+ outpaint_cfg_list.append(outpaint_cfg)

+ outpaint_steps_list.append(outpaint_steps)

+ outpaint_pixels_list.append(outpaint_pixels)

+ outpaint_direction_list.append(outpaint_direction)

+ outpaint_tabs = outpaint_tabs_acc

+ outpaint_mask_blur = gr.Slider(label='Outpaint Blur', elem_id='outpaint_mask_blur', minimum=0, maximum=128, step=4, value=8, visible=False)

+

+ with gr.Accordion("Upscaler", open=False, elem_id="ddsd_upscaler_acc"):

+ with gr.Column():

+ sd_upscale_target_info = gr.HTML('I2I Upscaler Option')

+ disable_upscaler = gr.Checkbox(label='Disable Upscaler', elem_id='disable_upscaler', value=True, visible=True)

+ ddetailer_before_upscaler = gr.Checkbox(label='Upscaler before running detailer', elem_id='upscaler_before_running_detailer', value=False, visible=False)

+ with gr.Row():

+ upscaler_sample = gr.Dropdown(label='Upscaler Sampling', elem_id='upscaler_sample', choices=sample_list, value=sample_list[0], visible=False, type="value")

+ upscaler_index = gr.Dropdown(label='Upscaler', elem_id='upscaler_index', choices=[x.name for x in shared.sd_upscalers], value=shared.sd_upscalers[-1].name, type="index", visible=False)

+ with gr.Row():

+ upscaler_ckpt = gr.Dropdown(label='Upscaler CKPT Model', elem_id=f'upscaler_detect_ckpt', choices=ckpt_list, value=ckpt_list[0], visible=False)

+ upscaler_vae = gr.Dropdown(label='Upscaler VAE Model', elem_id=f'upscaler_detect_vae', choices=vae_list, value=vae_list[0], visible=False)

+ scalevalue = gr.Slider(minimum=1, maximum=16, step=0.5, elem_id='upscaler_scalevalue', label='Resize', value=2, visible=False)

+ overlap = gr.Slider(minimum=0, maximum=256, step=32, elem_id='upscaler_overlap', label='Tile overlap', value=32, visible=False)

+ with gr.Row():

+ rewidth = gr.Slider(minimum=0, maximum=1024, step=64, elem_id='upscaler_rewidth', label='Width(0 to No Inpainting)', value=512, visible=False)

+ reheight = gr.Slider(minimum=0, maximum=1024, step=64, elem_id='upscaler_reheight', label='Height(0 to No Inpainting)', value=512, visible=False)

+ denoising_strength = gr.Slider(minimum=0, maximum=1.0, step=0.01, elem_id='upscaler_denoising', label='Denoising strength', value=0.1, visible=False)

+

+ with gr.Accordion("DINO Detect", open=False, elem_id="ddsd_dino_detect_acc"):

+ with gr.Column():

+ ddetailer_target_info = gr.HTML('I2I Detection Detailer Option')

+ disable_detailer = gr.Checkbox(label='Disable Detection Detailer', elem_id='disable_detailer',value=True, visible=True)

+ disable_mask_paint_mode = gr.Checkbox(label='Disable I2I Mask Paint Mode', value=True, visible=False)

+ inpaint_mask_mode = gr.Radio(choices=['Inner', 'Outer'], value='Inner', label='Inpaint Mask Paint Mode', visible=False, show_label=True)

+ detailer_sample = gr.Dropdown(label='Detailer Sampling', elem_id='detailer_sample', choices=sample_list, value=sample_list[0], visible=False, type="value")

+ with gr.Row():

+ detailer_sam_model = gr.Dropdown(label='Detailer SAM Model', elem_id='detailer_sam_model', choices=sam_model_list(), value=sam_model_list()[0], visible=False)

+ detailer_dino_model = gr.Dropdown(label='Detailer DINO Model', elem_id='detailer_dino_model', choices=dino_model_list(), value=dino_model_list()[0], visible=False)

+ with gr.Tabs(elem_id = 'dino_detct_arguments', visible=False) as dino_tabs_acc:

+ for index in range(shared.opts.data.get('dino_detect_count', 2)):

+ with gr.Tab(f'DINO {index + 1} Argument', elem_id=f'dino_{index + 1}_argument_tab'):

+ with gr.Row():

+ dino_detection_ckpt = gr.Dropdown(label='Detailer CKPT Model', elem_id=f'detailer_detect_ckpt_{index+1}', choices=ckpt_list, value=ckpt_list[0], visible=True)

+ dino_detection_vae = gr.Dropdown(label='Detailer VAE Model', elem_id=f'detailer_detect_vae_{index+1}', choices=vae_list, value=vae_list[0], visible=True)

+ dino_detection_prompt = gr.Textbox(label=f"Detect {index + 1} Prompt", elem_id=f"detailer_detect_prompt_{index + 1}", show_label=True, lines=2, placeholder="Detect Token Prompt(ex - face:level(0-2):threshold(0-1):dilation(0-128))", visible=True)

+ with gr.Row():

+ dino_detection_positive = gr.Textbox(label=f"Positive {index + 1} Prompt", elem_id=f"detailer_detect_positive_{index + 1}", show_label=True, lines=2, placeholder="Detect Mask Inpaint Positive(ex - perfect anatomy)", visible=True)

+ dino_detection_negative = gr.Textbox(label=f"Negative {index + 1} Prompt", elem_id=f"detailer_detect_negative_{index + 1}", show_label=True, lines=2, placeholder="Detect Mask Inpaint Negative(ex - nsfw)", visible=True)

+ dino_detection_denoise = gr.Slider(minimum=0, maximum=1.0, step=0.01, elem_id=f'dino_detect_{index+1}_denoising', label=f'DINO {index + 1} Denoising strength', value=0.4, visible=True)

+ dino_detection_cfg = gr.Slider(minimum=0, maximum=500, step=0.5, elem_id=f'dino_detect_{index+1}_cfg_scale', label=f'DINO {index + 1} CFG Scale(0 to Origin)', value=0, visible=True)

+ dino_detection_steps = gr.Slider(minimum=0, maximum=150, step=1, elem_id=f'dino_detect_{index+1}_steps', label=f'DINO {index + 1} Steps(0 to Origin)', value=0, visible=True)

+ dino_detection_spliter_disable = gr.Checkbox(label=f'Disable DINO {index + 1} Detect Split Mask', value=True, visible=True)

+ dino_detection_spliter_remove_area = gr.Slider(minimum=0, maximum=800, step=8, elem_id=f'dino_detect_{index+1}_remove_area', label=f'Remove {index + 1} Area', value=16, visible=True)

+ dino_detection_clip_skip = gr.Slider(minimum=0, maximum=10, step=1, elem_id=f'dino_detect_{index+1}_clip_skip', label=f'Clip skip {index + 1} Inpaint(0 to Origin)', value=0, visible=True)

+ dino_detection_ckpt_list.append(dino_detection_ckpt)

+ dino_detection_vae_list.append(dino_detection_vae)

+ dino_detection_prompt_list.append(dino_detection_prompt)

+ dino_detection_positive_list.append(dino_detection_positive)

+ dino_detection_negative_list.append(dino_detection_negative)

+ dino_detection_denoise_list.append(dino_detection_denoise)

+ dino_detection_cfg_list.append(dino_detection_cfg)

+ dino_detection_steps_list.append(dino_detection_steps)

+ dino_detection_spliter_disable_list.append(dino_detection_spliter_disable)

+ dino_detection_spliter_remove_area_list.append(dino_detection_spliter_remove_area)

+ dino_detection_clip_skip_list.append(dino_detection_clip_skip)

+ dino_tabs = dino_tabs_acc

+ dino_full_res_inpaint = gr.Checkbox(label='Inpaint at full resolution ', elem_id='detailer_full_res', value=True, visible = False)

+ with gr.Row():

+ dino_inpaint_padding = gr.Slider(label='Inpaint at full resolution padding, pixels ', elem_id='detailer_padding', minimum=0, maximum=256, step=4, value=0, visible=False)

+ detailer_mask_blur = gr.Slider(label='Detailer Blur', elem_id='detailer_mask_blur', minimum=0, maximum=64, step=1, value=4, visible=False)

+

+ with gr.Accordion("Postprocessing", open=False, elem_id='ddsd_post_processing'):

+ with gr.Column():

+ postprocess_info = gr.HTML('Postprocessing to the final image')

+ disable_postprocess = gr.Checkbox(label='Disable PostProcess', elem_id='disable_postprocess',value=True, visible=True)

+ with gr.Tabs(elem_id = 'ddsd_postprocess_arguments', visible=False) as postprocess_tabs_acc:

+ for index in range(shared.opts.data.get('postprocessing_count', 1)):

+ with gr.Tab(f'Postprocessing {index + 1} Argument', elem_id=f'postprocessing_{index + 1}_argument_tab'):

+ pp_type = gr.Dropdown(label=f'Postprocessing type {index+1}', elem_id=f'postprocessing_{index+1}', choices=pp_types, value=pp_types[0], visible=True)

+ pp_saturation_strength = gr.Slider(label=f'Saturation strength {index+1}', minimum=0, maximum=3, step=0.01, value=1.1, visible=False)

+ pp_sharpening_radius = gr.Slider(label=f'Sharpening radius {index+1}', minimum=0, maximum=50, step=1, value=2, visible=False)

+ pp_sharpening_percent = gr.Slider(label=f'Sharpening percent {index+1}', minimum=0, maximum=300, step=1, value=150, visible=False)

+ pp_sharpening_threshold = gr.Slider(label=f'Sharpening threshold {index+1}', minimum=0, maximum=10, step=0.01, value=3, visible=False)

+ pp_gaussian_radius = gr.Slider(label=f'Gaussian Blur radius {index+1}', minimum=0, maximum=50, step=1, value=2, visible=False)

+ pp_brightness_strength = gr.Slider(label=f'Brightness strength {index+1}', minimum=0, maximum=5, step=0.01, value=1.1, visible=False)

+ pp_color_strength = gr.Slider(label=f'Color strength {index+1}', minimum=0, maximum=5, step=0.01, value=1.1, visible=False)

+ pp_contrast_strength = gr.Slider(label=f'Contrast strength {index+1}', minimum=0, maximum=5, step=0.01, value=1.1, visible=False)

+ pp_hue_strength = gr.Slider(label=f'Hue strength {index+1}', minimum=-1, maximum=1, step=0.01, value=0, visible=False)

+ pp_bilateral_sigmaC = gr.Slider(label=f'Bilateral sigmaC {index+1}', minimum=0, maximum=100, step=1, value=10, visible=False)

+ pp_bilateral_sigmaS = gr.Slider(label=f'Bilateral sigmaS {index+1}', minimum=0, maximum=30, step=1, value=10, visible=False)

+ pp_color_tint_type_name = gr.Radio(label=f'Color tint type name {index+1}',choices=['warm', 'cool'], value='warm', visible=False)

+ pp_color_tint_lut_name = gr.Dropdown(label=f'Color tint lut name {index+1}',choices=lut_model_list(), value=lut_model_list()[0], visible=False)

+ pp_type_list.append(pp_type)

+ pp_saturation_strength_list.append(pp_saturation_strength)

+ pp_sharpening_radius_list.append(pp_sharpening_radius)

+ pp_sharpening_percent_list.append(pp_sharpening_percent)

+ pp_sharpening_threshold_list.append(pp_sharpening_threshold)

+ pp_gaussian_radius_list.append(pp_gaussian_radius)

+ pp_brightness_strength_list.append(pp_brightness_strength)

+ pp_color_strength_list.append(pp_color_strength)

+ pp_contrast_strength_list.append(pp_contrast_strength)

+ pp_hue_strength_list.append(pp_hue_strength)

+ pp_bilateral_sigmaC_list.append(pp_bilateral_sigmaC)

+ pp_bilateral_sigmaS_list.append(pp_bilateral_sigmaS)

+ pp_color_tint_type_name_list.append(pp_color_tint_type_name)

+ pp_color_tint_lut_name_list.append(pp_color_tint_lut_name)

+ def pp_type_change_func(pp_saturation_strength,pp_sharpening_radius,pp_sharpening_percent,pp_sharpening_threshold,pp_gaussian_radius,pp_brightness_strength,pp_color_strength,pp_contrast_strength,pp_hue_strength,pp_bilateral_sigmaC,pp_bilateral_sigmaS,pp_color_tint_type_name,pp_color_tint_lut_name):

+ saturation_strength, sharpening_radius, sharpening_percent, sharpening_threshold, gaussian_radius, brightness_strength, color_strength, contrast_strength, hue_strength, bilateral_sigmaC, bilateral_sigmaS, color_tint_type_name, color_tint_lut_name = pp_saturation_strength,pp_sharpening_radius,pp_sharpening_percent,pp_sharpening_threshold,pp_gaussian_radius,pp_brightness_strength,pp_color_strength,pp_contrast_strength,pp_hue_strength,pp_bilateral_sigmaC,pp_bilateral_sigmaS,pp_color_tint_type_name,pp_color_tint_lut_name

+ return lambda data:{

+ saturation_strength:gr_show(data == 'saturation'),

+ sharpening_radius:gr_show(data == 'sharpening'),

+ sharpening_percent:gr_show(data == 'sharpening'),

+ sharpening_threshold:gr_show(data == 'sharpening'),

+ gaussian_radius:gr_show(data == 'gaussian blur'),

+ brightness_strength:gr_show(data == 'brightness'),

+ color_strength:gr_show(data == 'color'),

+ contrast_strength:gr_show(data == 'contrast'),

+ hue_strength:gr_show(data == 'hue'),

+ bilateral_sigmaC:gr_show(data == 'bilateral'),

+ bilateral_sigmaS:gr_show(data == 'bilateral'),

+ color_tint_type_name:gr_show(data == 'color tint(type)'),

+ color_tint_lut_name:gr_show(data == 'color tint(lut)')

+ }

+ def pp_type_change_func2(pp_saturation_strength,pp_sharpening_radius,pp_sharpening_percent,pp_sharpening_threshold,pp_gaussian_radius,pp_brightness_strength,pp_color_strength,pp_contrast_strength,pp_hue_strength,pp_bilateral_sigmaC,pp_bilateral_sigmaS,pp_color_tint_type_name,pp_color_tint_lut_name):

+ saturation_strength, sharpening_radius, sharpening_percent, sharpening_threshold, gaussian_radius, brightness_strength, color_strength, contrast_strength, hue_strength, bilateral_sigmaC, bilateral_sigmaS, color_tint_type_name, color_tint_lut_name = pp_saturation_strength,pp_sharpening_radius,pp_sharpening_percent,pp_sharpening_threshold,pp_gaussian_radius,pp_brightness_strength,pp_color_strength,pp_contrast_strength,pp_hue_strength,pp_bilateral_sigmaC,pp_bilateral_sigmaS,pp_color_tint_type_name,pp_color_tint_lut_name

+ return [saturation_strength, sharpening_radius, sharpening_percent, sharpening_threshold, gaussian_radius, brightness_strength, color_strength, contrast_strength, hue_strength, bilateral_sigmaC, bilateral_sigmaS, color_tint_type_name, color_tint_lut_name]

+ pp_type.change(

+ pp_type_change_func(pp_saturation_strength,pp_sharpening_radius,pp_sharpening_percent,pp_sharpening_threshold,pp_gaussian_radius,pp_brightness_strength,pp_color_strength,pp_contrast_strength,pp_hue_strength,pp_bilateral_sigmaC,pp_bilateral_sigmaS,pp_color_tint_type_name,pp_color_tint_lut_name),

+ inputs=[pp_type],

+ outputs=pp_type_change_func2(pp_saturation_strength,pp_sharpening_radius,pp_sharpening_percent,pp_sharpening_threshold,pp_gaussian_radius,pp_brightness_strength,pp_color_strength,pp_contrast_strength,pp_hue_strength,pp_bilateral_sigmaC,pp_bilateral_sigmaS,pp_color_tint_type_name,pp_color_tint_lut_name)

+ )

+ postprocess_tabs = postprocess_tabs_acc

+

+ with gr.Accordion("Watermark", open=False, elem_id='ddsd_watermark_option'):

+ with gr.Column():

+ watermark_info = gr.HTML('Add a watermark to the final saved image')

+ disable_watermark = gr.Checkbox(label='Disable Watermark', elem_id='disable_watermark',value=True, visible=True)

+ with gr.Tabs(elem_id='watermark_tabs', visible=False) as watermark_tabs_acc:

+ for index in range(shared.opts.data.get('watermark_count', 1)):

+ with gr.Tab(f'Watermark {index + 1} Argument', elem_id=f'watermark_{index+1}_argument_tab'):

+ watermark_type = gr.Radio(choices=['Text','Image'], value='Text', label=f'Watermark {index+1} text')

+ watermark_position = gr.Dropdown(choices=['Left','Left-Top','Top','Right-Top','Right','Right-Bottom','Bottom','Left-Bottom','Center'], value='Center', label=f'Watermark {index+1} Position', elem_id=f'watermark_{index+1}_position')

+ with gr.Column():

+ watermark_image = gr.Image(label=f"Watermark {index+1} Upload image", visible=False)

+ with gr.Row():

+ watermark_image_size_width = gr.Slider(label=f'Watermark {index+1} Width', visible=False, minimum=50, maximum=500, step=10, value=100)

+ watermark_image_size_height = gr.Slider(label=f'Watermark {index+1} Height', visible=False, minimum=50, maximum=500, step=10, value=100)

+ with gr.Column():

+ watermark_text = gr.Textbox(placeholder='watermark text - ex) Copyright © NeoGraph. All Rights Reserved.', visible=True, value='')

+ with gr.Row():

+ watermark_text_color = gr.ColorPicker(label=f'Watermark {index+1} Color')

+ watermark_text_font = gr.Dropdown(label=f'Watermark {index+1} Fonts', choices=fonts_list, value=fonts_list[0])

+ watermark_text_size = gr.Slider(label=f'Watermark {index+1} Size', visible=True, minimum=10, maximum=500, step=1, value=50)

+ watermark_padding = gr.Slider(label=f'Watermark {index+1} Padding', visible=True, minimum=0, maximum=200, step=1, value=10)

+ watermark_alpha = gr.Slider(label=f'Watermark {index+1} Alpha', visible=True, minimum=0, maximum=1, step=0.01, value=0.4)

+ watermark_type_list.append(watermark_type)

+ watermark_position_list.append(watermark_position)

+ watermark_image_list.append(watermark_image)

+ watermark_image_size_width_list.append(watermark_image_size_width)

+ watermark_image_size_height_list.append(watermark_image_size_height)

+ watermark_text_list.append(watermark_text)

+ watermark_text_color_list.append(watermark_text_color)

+ watermark_text_font_list.append(watermark_text_font)

+ watermark_text_size_list.append(watermark_text_size)

+ watermark_padding_list.append(watermark_padding)

+ watermark_alpha_list.append(watermark_alpha)

+ def watermark_type_change_func(watermark_image, watermark_image_size_width, watermark_image_size_height, watermark_text, watermark_text_color, watermark_text_font, watermark_text_size):

+ image, image_size_width, image_size_height, text, text_color, text_font, text_size = watermark_image, watermark_image_size_width, watermark_image_size_height, watermark_text, watermark_text_color, watermark_text_font, watermark_text_size

+ return lambda data:{

+ image:gr_show(data == 'Image'),

+ image_size_width:gr_show(data == 'Image'),

+ image_size_height:gr_show(data == 'Image'),

+ text:gr_show(data == 'Text'),

+ text_color:gr_show(data == 'Text'),

+ text_font:gr_show(data == 'Text'),

+ text_size:gr_show(data == 'Text')

+ }

+ def watermark_type_change_func2(watermark_image, watermark_image_size_width, watermark_image_size_height, watermark_text, watermark_text_color, watermark_text_font, watermark_text_size):

+ image, image_size_width, image_size_height, text, text_color, text_font, text_size = watermark_image, watermark_image_size_width, watermark_image_size_height, watermark_text, watermark_text_color, watermark_text_font, watermark_text_size

+ return [image, image_size_width, image_size_height, text, text_color, text_font, text_size]

+ watermark_type.change(

+ watermark_type_change_func(watermark_image,watermark_image_size_width,watermark_image_size_height,watermark_text,watermark_text_color,watermark_text_font,watermark_text_size),

+ inputs=[watermark_type],

+ outputs=watermark_type_change_func2(watermark_image, watermark_image_size_width, watermark_image_size_height, watermark_text, watermark_text_color, watermark_text_font, watermark_text_size)

+ )

+ watermark_tabs = watermark_tabs_acc

+ disable_outpaint.change(

+ lambda disable:{

+ outpaint_sample:gr_show(not disable),

+ outpaint_tabs:gr_show(not disable),

+ outpaint_mask_blur:gr_show(not disable)

+ },

+ inputs=[disable_outpaint],

+ outputs=[outpaint_sample, outpaint_tabs, outpaint_mask_blur]

+ )

+ disable_watermark.change(

+ lambda disable:{

+ watermark_tabs:gr_show(not disable)

+ },

+ inputs=[disable_watermark],

+ outputs=watermark_tabs

+ )

+ disable_postprocess.change(

+ lambda disable:{

+ postprocess_tabs:gr_show(not disable)

+ },

+ inputs=[disable_postprocess],

+ outputs=postprocess_tabs

+ )

+ disable_upscaler.change(

+ lambda disable: {

+ ddetailer_before_upscaler:gr_show(not disable),

+ upscaler_sample:gr_show(not disable),

+ upscaler_index:gr_show(not disable),

+ upscaler_ckpt:gr_show(not disable),

+ upscaler_vae:gr_show(not disable),

+ scalevalue:gr_show(not disable),

+ overlap:gr_show(not disable),

+ rewidth:gr_show(not disable),

+ reheight:gr_show(not disable),

+ denoising_strength:gr_show(not disable),

+ },

+ inputs= [disable_upscaler],

+ outputs =[ddetailer_before_upscaler, upscaler_sample, upscaler_index, upscaler_ckpt, upscaler_vae, scalevalue, overlap, rewidth, reheight, denoising_strength]

+ )

+

+ disable_mask_paint_mode.change(

+ lambda disable:{

+ inpaint_mask_mode:gr_show(is_img2img and not disable)

+ },

+ inputs=[disable_mask_paint_mode],

+ outputs=inpaint_mask_mode

+ )

+

+ disable_detailer.change(

+ lambda disable, in_disable:{

+ disable_mask_paint_mode:gr_show(not disable and is_img2img),

+ inpaint_mask_mode:gr_show(not disable and is_img2img and not in_disable),

+ detailer_sample:gr_show(not disable),

+ detailer_sam_model:gr_show(not disable),

+ detailer_dino_model:gr_show(not disable),

+ dino_full_res_inpaint:gr_show(not disable),

+ dino_inpaint_padding:gr_show(not disable),

+ detailer_mask_blur:gr_show(not disable),

+ dino_tabs:gr_show(not disable)

+ },

+ inputs=[disable_detailer, disable_mask_paint_mode],

+ outputs=[

+ disable_mask_paint_mode,

+ inpaint_mask_mode,

+ detailer_sample,

+ detailer_sam_model,

+ detailer_dino_model,

+ dino_full_res_inpaint,

+ dino_inpaint_padding,

+ detailer_mask_blur,

+ dino_tabs

+ ]

+ )

+

+ ret += [enable_script_names]

+ ret += [disable_watermark, disable_postprocess]

+ ret += [disable_upscaler, ddetailer_before_upscaler, scalevalue, upscaler_sample, overlap, upscaler_index, rewidth, reheight, denoising_strength, upscaler_ckpt, upscaler_vae]

+ ret += [disable_detailer, disable_mask_paint_mode, inpaint_mask_mode, detailer_sample, detailer_sam_model, detailer_dino_model, dino_full_res_inpaint, dino_inpaint_padding, detailer_mask_blur]

+ ret += [disable_outpaint, outpaint_sample, outpaint_mask_blur]

+ ret += dino_detection_ckpt_list + \

+ dino_detection_vae_list + \

+ dino_detection_prompt_list + \

+ dino_detection_positive_list + \

+ dino_detection_negative_list + \

+ dino_detection_denoise_list + \

+ dino_detection_cfg_list + \

+ dino_detection_steps_list + \

+ dino_detection_spliter_disable_list + \

+ dino_detection_spliter_remove_area_list + \

+ dino_detection_clip_skip_list + \

+ watermark_type_list + \

+ watermark_position_list + \

+ watermark_image_list + \

+ watermark_image_size_width_list + \

+ watermark_image_size_height_list + \

+ watermark_text_list + \

+ watermark_text_color_list + \

+ watermark_text_font_list + \

+ watermark_text_size_list + \

+ watermark_padding_list + \

+ watermark_alpha_list + \

+ pp_type_list + \

+ pp_saturation_strength_list + \

+ pp_sharpening_radius_list + \

+ pp_sharpening_percent_list + \

+ pp_sharpening_threshold_list + \

+ pp_gaussian_radius_list + \

+ pp_brightness_strength_list + \

+ pp_color_strength_list + \

+ pp_contrast_strength_list + \

+ pp_hue_strength_list + \

+ pp_bilateral_sigmaC_list + \

+ pp_bilateral_sigmaS_list + \

+ pp_color_tint_type_name_list + \

+ pp_color_tint_lut_name_list + \

+ outpaint_positive_list + \

+ outpaint_negative_list + \

+ outpaint_denoise_list + \

+ outpaint_cfg_list + \

+ outpaint_steps_list + \

+ outpaint_pixels_list + \

+ outpaint_direction_list

+

+ def ds(*args):

+ args = list(args)

+ ddsd_save_path = args[0]

+ args = args[1:]

+ enable_script_names,disable_watermark,disable_postprocess,disable_upscaler,ddetailer_before_upscaler,scalevalue,upscaler_sample,overlap,upscaler_index,rewidth,reheight,denoising_strength,upscaler_ckpt,upscaler_vae,disable_detailer,disable_mask_paint_mode,inpaint_mask_mode,detailer_sample,detailer_sam_model,detailer_dino_model,dino_full_res_inpaint,dino_inpaint_padding,detailer_mask_blur,disable_outpaint, outpaint_sample, outpaint_mask_blur = args[:26]

+ result = {}

+ result['enable_script_names'] = enable_script_names

+ result['disable_watermark'] = disable_watermark

+ result['disable_postprocess'] = disable_postprocess

+ result['disable_upscaler'] = disable_upscaler

+ result['ddetailer_before_upscaler'] = ddetailer_before_upscaler

+ result['scalevalue'] = scalevalue

+ result['upscaler_sample'] = upscaler_sample

+ result['overlap'] = overlap

+ result['upscaler_index'] = shared.sd_upscalers[upscaler_index].name

+ result['rewidth'] = rewidth

+ result['reheight'] = reheight

+ result['denoising_strength'] = denoising_strength

+ result['upscaler_ckpt'] = upscaler_ckpt

+ result['upscaler_vae'] = upscaler_vae

+ result['disable_detailer'] = disable_detailer

+ result['disable_mask_paint_mode'] = disable_mask_paint_mode

+ result['inpaint_mask_mode'] = inpaint_mask_mode

+ result['detailer_sample'] = detailer_sample

+ result['detailer_sam_model'] = detailer_sam_model

+ result['detailer_dino_model'] = detailer_dino_model

+ result['dino_full_res_inpaint'] = dino_full_res_inpaint

+ result['dino_inpaint_padding'] = dino_inpaint_padding

+ result['detailer_mask_blur'] = detailer_mask_blur

+ result['disable_outpaint'] = disable_outpaint

+ result['outpaint_sample'] = outpaint_sample

+ result['outpaint_mask_blur'] = outpaint_mask_blur

+ args = args[26:]

+ result['dino_detect_count'] = shared.opts.data.get('dino_detect_count', 2)

+ for index in range(result['dino_detect_count']):

+ result[f'dino_detection_ckpt_{index+1}'] = args[index + result['dino_detect_count'] * 0]

+ result[f'dino_detection_vae_{index+1}'] = args[index + result['dino_detect_count'] * 1]

+ result[f'dino_detection_prompt_{index+1}'] = args[index + result['dino_detect_count'] * 2]

+ result[f'dino_detection_positive_{index+1}'] = args[index + result['dino_detect_count'] * 3]

+ result[f'dino_detection_negative_{index+1}'] = args[index + result['dino_detect_count'] * 4]

+ result[f'dino_detection_denoise_{index+1}'] = args[index + result['dino_detect_count'] * 5]

+ result[f'dino_detection_cfg_{index+1}'] = args[index + result['dino_detect_count'] * 6]

+ result[f'dino_detection_steps_{index+1}'] = args[index + result['dino_detect_count'] * 7]

+ result[f'dino_detection_spliter_disable_{index+1}'] = args[index + result['dino_detect_count'] * 8]

+ result[f'dino_detection_spliter_remove_area_{index+1}'] = args[index + result['dino_detect_count'] * 9]

+ result[f'dino_detection_clip_skip_{index+1}'] = args[index + result['dino_detect_count'] * 10]

+ args = args[result['dino_detect_count'] * 11:]

+ result['watermark_count'] = shared.opts.data.get('watermark_count', 1)

+ for index in range(result['watermark_count']):

+ result[f'watermark_type_{index+1}'] = args[index + result['watermark_count'] * 0]

+ result[f'watermark_position_{index+1}'] = args[index + result['watermark_count'] * 1]

+ result[f'watermark_image_{index+1}'] = None

+ result[f'watermark_image_size_width_{index+1}'] = args[index + result['watermark_count'] * 3]

+ result[f'watermark_image_size_height_{index+1}'] = args[index + result['watermark_count'] * 4]

+ result[f'watermark_text_{index+1}'] = args[index + result['watermark_count'] * 5]

+ result[f'watermark_text_color_{index+1}'] = args[index + result['watermark_count'] * 6]

+ result[f'watermark_text_font_{index+1}'] = args[index + result['watermark_count'] * 7]

+ result[f'watermark_text_size_{index+1}'] = args[index + result['watermark_count'] * 8]

+ result[f'watermark_padding_{index+1}'] = args[index + result['watermark_count'] * 9]

+ result[f'watermark_alpha_{index+1}'] = args[index + result['watermark_count'] * 10]

+ args = args[result['watermark_count'] * 11:]

+ result['postprocessing_count'] = shared.opts.data.get('postprocessing_count', 1)

+ for index in range(result['postprocessing_count']):

+ result[f'pp_type_{index+1}'] = args[index + result['postprocessing_count'] * 0]

+ result[f'pp_saturation_strength_{index+1}'] = args[index + result['postprocessing_count'] * 1]

+ result[f'pp_sharpening_radius_{index+1}'] = args[index + result['postprocessing_count'] * 2]

+ result[f'pp_sharpening_percent_{index+1}'] = args[index + result['postprocessing_count'] * 3]

+ result[f'pp_sharpening_threshold_{index+1}'] = args[index + result['postprocessing_count'] * 4]

+ result[f'pp_gaussian_radius_{index+1}'] = args[index + result['postprocessing_count'] * 5]

+ result[f'pp_brightness_strength_{index+1}'] = args[index + result['postprocessing_count'] * 6]

+ result[f'pp_color_strength_{index+1}'] = args[index + result['postprocessing_count'] * 7]

+ result[f'pp_contrast_strength_{index+1}'] = args[index + result['postprocessing_count'] * 8]

+ result[f'pp_hue_strength_{index+1}'] = args[index + result['postprocessing_count'] * 9]

+ result[f'pp_bilateral_sigmaC_{index+1}'] = args[index + result['postprocessing_count'] * 10]

+ result[f'pp_bilateral_sigmaS_{index+1}'] = args[index + result['postprocessing_count'] * 11]

+ result[f'pp_color_tint_type_name_{index+1}'] = args[index + result['postprocessing_count'] * 12]

+ result[f'pp_color_tint_lut_name_{index+1}'] = args[index + result['postprocessing_count'] * 13]

+ args = args[result['postprocessing_count'] * 14:]  # 14 pp_* fields per entry (offsets 0-13)

+ result['outpaint_count'] = shared.opts.data.get('outpaint_count', 1)

+ for index in range(result['outpaint_count']):

+ result[f'outpaint_positive_{index+1}'] = args[index + result['outpaint_count'] * 0]

+ result[f'outpaint_negative_{index+1}'] = args[index + result['outpaint_count'] * 1]

+ result[f'outpaint_denoise_{index+1}'] = args[index + result['outpaint_count'] * 2]

+ result[f'outpaint_cfg_{index+1}'] = args[index + result['outpaint_count'] * 3]

+ result[f'outpaint_steps_{index+1}'] = args[index + result['outpaint_count'] * 4]

+ result[f'outpaint_pixels_{index+1}'] = args[index + result['outpaint_count'] * 5]

+ result[f'outpaint_direction_{index+1}'] = args[index + result['outpaint_count'] * 6]

+ args = args[result['outpaint_count'] * 7:]  # 7 outpaint_* fields per entry (offsets 0-6)

+ os.makedirs(ddsd_config_path, exist_ok=True)

+ with open(os.path.join(ddsd_config_path, f'{ddsd_save_path}.ddcfg'), 'w', encoding='utf-8') as f:

+ f.write(json_write(result))

+ choices = [x[:-6] for x in os.listdir(ddsd_config_path) if x.endswith('.ddcfg')]

+ return {

+ ddsd_load_path:gr_list_refresh(choices, choices[0])

+ }

+ def dl(ddsd_load_path):

+ with open(os.path.join(ddsd_config_path, f'{ddsd_load_path}.ddcfg'), 'r', encoding='utf-8') as f:

+ result = json_read(f)

+ results = [result['enable_script_names'],result['disable_watermark'],result['disable_postprocess'],result['disable_upscaler'],result['ddetailer_before_upscaler'],result['scalevalue'],result['upscaler_sample'],result['overlap'],result['upscaler_index'],result['rewidth'],result['reheight'],result['denoising_strength'],result['upscaler_ckpt'],result['upscaler_vae'],result['disable_detailer'],result['disable_mask_paint_mode'],result['inpaint_mask_mode'],result['detailer_sample'],result['detailer_sam_model'],result['detailer_dino_model'],result['dino_full_res_inpaint'],result['dino_inpaint_padding'],result['detailer_mask_blur'],result['disable_outpaint'],result['outpaint_sample'],result['outpaint_mask_blur']]

+ def result_create(token,file_count,count, default):