Update README.md

README.md

CHANGED

|

@@ -2,50 +2,61 @@

|

|

| 2 |

license: bigscience-openrail-m

|

| 3 |

widget:

|

| 4 |

- text: >-

|

| 5 |

-

Native API functions such as <mask>

|

| 6 |

-

calls

|

| 7 |

-

applications

|

| 8 |

example_title: Native API functions

|

| 9 |

- text: >-

|

| 10 |

-

One way

|

| 11 |

-

API call, which

|

| 12 |

example_title: Assigning the PPID of a new process

|

| 13 |

- text: >-

|

| 14 |

-

Enable Safe DLL Search Mode to

|

| 15 |

-

|

| 16 |

-

|

| 17 |

example_title: Enable Safe DLL Search Mode

|

| 18 |

- text: >-

|

| 19 |

-

GuLoader is a file downloader that has been

|

| 20 |

-

2019 to distribute a variety of <mask>, including

|

| 21 |

-

NanoCore, and FormBook.

|

| 22 |

example_title: GuLoader is a file downloader

|

| 23 |

language:

|

| 24 |

- en

|

| 25 |

tags:

|

| 26 |

- cybersecurity

|

| 27 |

-

- cyber threat

|

| 28 |

---

|

| 29 |

-

# SecureBERT: A Domain-Specific Language Model for Cybersecurity

|

| 30 |

-

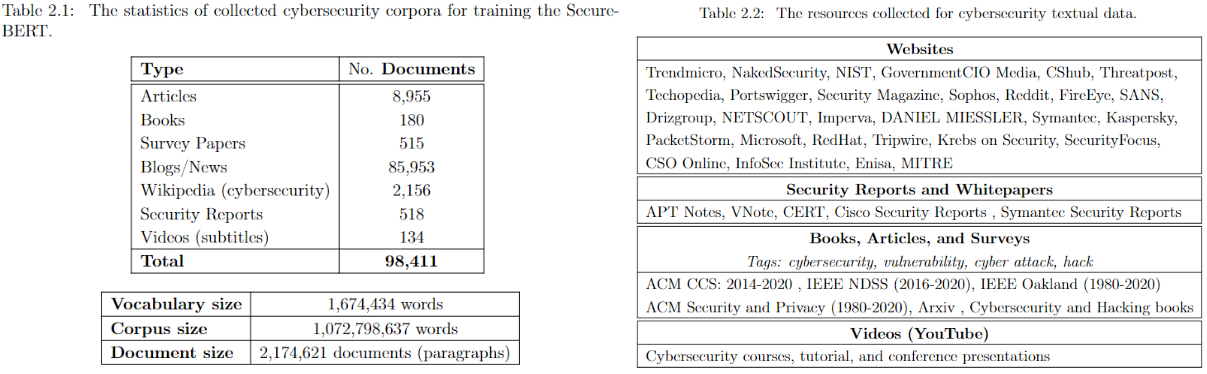

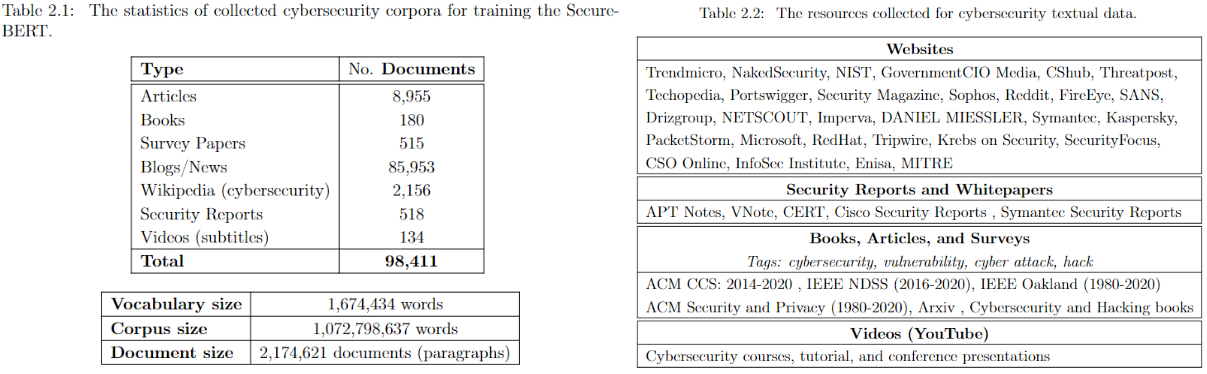

SecureBERT is a domain-specific language model based on RoBERTa which is trained on a huge amount of cybersecurity data and fine-tuned/tweaked to understand/represent cybersecurity textual data.

|

| 31 |

|

|

|

|

| 32 |

|

| 33 |

-

|

| 34 |

|

| 35 |

-

|

| 36 |

-

|

|

|

|

| 37 |

|

| 38 |

|

| 39 |

|

| 40 |

-

|

| 41 |

-

|

| 42 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 43 |

|

|

|

|

| 44 |

|

|

|

|

| 45 |

|

| 46 |

-

|

| 47 |

-

SecureBERT has been uploaded to [Huggingface](https://huggingface.co/ehsanaghaei/SecureBERT) framework. You may use the code below

|

| 48 |

|

|

|

|

| 49 |

```python

|

| 50 |

from transformers import RobertaTokenizer, RobertaModel

|

| 51 |

import torch

|

|

@@ -58,63 +69,45 @@ outputs = model(**inputs)

 last_hidden_states = outputs.last_hidden_state

-## Fill Mask
-SecureBERT has been trained on MLM. Use the code below to predict the masked word within the given sentences:

-
-
-
-

 import torch
 import transformers
-from transformers import

 tokenizer = RobertaTokenizerFast.from_pretrained("ehsanaghaei/SecureBERT")
 model = transformers.RobertaForMaskedLM.from_pretrained("ehsanaghaei/SecureBERT")

-def predict_mask(sent, tokenizer, model, topk
     token_ids = tokenizer.encode(sent, return_tensors='pt')
-
-    masked_pos = [mask.item() for mask in masked_position]
     words = []
     with torch.no_grad():
         output = model(token_ids)

-
-
-
-
-
-        idx = torch.topk(mask_hidden_state, k=topk, dim=0)[1]
-        words = [tokenizer.decode(i.item()).strip() for i in idx]
-        words = [w.replace(' ','') for w in words]
-        list_of_list.append(words)
         if print_results:
-            print("Mask
-
-    best_guess = ""
-    for j in list_of_list:
-        best_guess = best_guess + "," + j[0]

     return words

-
-while True:
-    sent = input("Text here: \t")
-    print("SecureBERT: ")
-    predict_mask(sent, tokenizer, model)
-
-    print("===========================\n")
-```
 # Reference
 @inproceedings{aghaei2023securebert,
 title={SecureBERT: A Domain-Specific Language Model for Cybersecurity},
 author={Aghaei, Ehsan and Niu, Xi and Shadid, Waseem and Al-Shaer, Ehab},
 booktitle={Security and Privacy in Communication Networks:
-18th EAI International Conference, SecureComm 2022, Virtual Event,
-October 2022,
-Proceedings},
 pages={39--56},
 year={2023},
-organization={Springer}
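The `predict_mask` helper in this diff does two things: it finds every position that holds the mask token, then keeps the `topk` highest-scoring vocabulary entries at each position. The sketch below mirrors that logic on plain Python lists so it runs without downloading the model. The vocabulary, token ids, scores, and the `MASK_ID` value are made up for illustration and are not SecureBERT's.

```python
import heapq

MASK_ID = 4  # hypothetical id standing in for tokenizer.mask_token_id


def find_mask_positions(token_ids, mask_id=MASK_ID):
    """Return a flat list of indices where the mask token occurs."""
    return [i for i, tok in enumerate(token_ids) if tok == mask_id]


def top_k_tokens(scores, vocab, k):
    """Return the k vocabulary entries with the highest scores, best first."""
    best = heapq.nlargest(k, range(len(scores)), key=scores.__getitem__)
    return [vocab[i] for i in best]


# Toy data: a five-word vocabulary and one row of scores per input position.
vocab = ["malware", "files", "calls", "users", "NtCreateProcess"]
token_ids = [0, 11, MASK_ID, 12, 2]
logits = [
    [0.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.0],
    [2.5, 0.1, 0.3, -1.0, 1.7],  # scores at the masked position
    [0.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.0],
]

predictions = {
    pos: top_k_tokens(logits[pos], vocab, k=2)
    for pos in find_mask_positions(token_ids)
}
print(predictions)  # {2: ['malware', 'NtCreateProcess']}
```

One detail worth checking in the revised helper: on a 1-D tensor, `.nonzero().tolist()` returns nested single-element lists such as `[[2]]`, so `output.logits[0, pos]` appears to yield a 2-D slice rather than one row of scores. Flattening the positions first, for example with `.nonzero(as_tuple=True)[0].tolist()`, would match the flat list this sketch uses.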

@@ -2,50 +2,61 @@
 license: bigscience-openrail-m
 widget:
 - text: >-
+    Native API functions such as <mask> may be directly invoked via system
+    calls (syscalls). However, these features are also commonly exposed to
+    user-mode applications through interfaces and libraries.
   example_title: Native API functions
 - text: >-
+    One way to explicitly assign the PPID of a new process is through the
+    <mask> API call, which includes a parameter for defining the PPID.
   example_title: Assigning the PPID of a new process
 - text: >-
+    Enable Safe DLL Search Mode to ensure that system DLLs in more restricted
+    directories (e.g., %<mask>%) are prioritized over DLLs in less secure
+    locations such as a user’s home directory.
   example_title: Enable Safe DLL Search Mode
 - text: >-
+    GuLoader is a file downloader that has been active since at least December
+    2019. It has been used to distribute a variety of <mask>, including
+    NETWIRE, Agent Tesla, NanoCore, and FormBook.
   example_title: GuLoader is a file downloader
 language:
 - en
 tags:
 - cybersecurity
+- cyber threat intelligence
 ---
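Each `text` entry under `widget` is a prompt for the model page's fill-mask widget, so it should contain the `<mask>` placeholder. A small sanity check over the four prompts from the front matter above could look like the sketch below. The `prompts_missing_mask` helper is hypothetical and not part of the repository.

```python
MASK_TOKEN = "<mask>"  # RoBERTa-style mask placeholder used by the prompts

# Widget prompts copied from the front matter, keyed by example_title.
WIDGET_PROMPTS = {
    "Native API functions": (
        "Native API functions such as <mask> may be directly invoked via system "
        "calls (syscalls). However, these features are also commonly exposed to "
        "user-mode applications through interfaces and libraries."
    ),
    "Assigning the PPID of a new process": (
        "One way to explicitly assign the PPID of a new process is through the "
        "<mask> API call, which includes a parameter for defining the PPID."
    ),
    "Enable Safe DLL Search Mode": (
        "Enable Safe DLL Search Mode to ensure that system DLLs in more restricted "
        "directories (e.g., %<mask>%) are prioritized over DLLs in less secure "
        "locations such as a user’s home directory."
    ),
    "GuLoader is a file downloader": (
        "GuLoader is a file downloader that has been active since at least December "
        "2019. It has been used to distribute a variety of <mask>, including "
        "NETWIRE, Agent Tesla, NanoCore, and FormBook."
    ),
}


def prompts_missing_mask(prompts, mask_token=MASK_TOKEN):
    """Return the titles of prompts that do not contain the mask token."""
    return [title for title, text in prompts.items() if mask_token not in text]


print(prompts_missing_mask(WIDGET_PROMPTS))  # [] because every prompt has a mask
```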

+# SecureBERT: A Domain-Specific Language Model for Cybersecurity

+**SecureBERT** is a RoBERTa-based, domain-specific language model trained on a large cybersecurity-focused corpus. It is designed to represent and understand cybersecurity text more effectively than general-purpose models.

+[SecureBERT](https://link.springer.com/chapter/10.1007/978-3-031-25538-0_3) was trained on extensive in-domain data crawled from diverse online resources. It has demonstrated strong performance in a range of cybersecurity NLP tasks.
+👉 See the [presentation on YouTube](https://www.youtube.com/watch?v=G8WzvThGG8c&t=8s).
+👉 Explore details on the [GitHub repository](https://github.com/ehsanaghaei/SecureBERT/blob/main/README.md).



+---
+
+## Applications
+SecureBERT can be used as a base model for downstream NLP tasks in cybersecurity, including:
+- Text classification
+- Named Entity Recognition (NER)
+- Sequence-to-sequence tasks
+- Question answering
+
+### Key Results
+- Outperforms baseline models such as **RoBERTa (base/large)**, **SciBERT**, and **SecBERT** in masked language modeling tasks within the cybersecurity domain.
+- Maintains strong performance in **general English language understanding**, ensuring broad usability beyond domain-specific tasks.

+---

+## Using SecureBERT

+The model is available on [Hugging Face](https://huggingface.co/ehsanaghaei/SecureBERT).

+### Load the Model
 ```python
 from transformers import RobertaTokenizer, RobertaModel
 import torch

@@ -58,63 +69,45 @@ outputs = model(**inputs)

 last_hidden_states = outputs.last_hidden_state


+Masked Language Modeling Example
+
+SecureBERT is trained with Masked Language Modeling (MLM). Use the following example to predict masked tokens:
+
+#!pip install transformers torch tokenizers

 import torch
 import transformers
+from transformers import RobertaTokenizerFast

 tokenizer = RobertaTokenizerFast.from_pretrained("ehsanaghaei/SecureBERT")
 model = transformers.RobertaForMaskedLM.from_pretrained("ehsanaghaei/SecureBERT")

+def predict_mask(sent, tokenizer, model, topk=10, print_results=True):
     token_ids = tokenizer.encode(sent, return_tensors='pt')
+    masked_pos = (token_ids.squeeze() == tokenizer.mask_token_id).nonzero().tolist()
     words = []
+
     with torch.no_grad():
         output = model(token_ids)

+    for pos in masked_pos:
+        logits = output.logits[0, pos]
+        top_tokens = torch.topk(logits, k=topk).indices
+        predictions = [tokenizer.decode(i).strip().replace(" ", "") for i in top_tokens]
+        words.append(predictions)
         if print_results:
+            print(f"Mask Predictions: {predictions}")

     return words

 # Reference
 @inproceedings{aghaei2023securebert,
 title={SecureBERT: A Domain-Specific Language Model for Cybersecurity},
 author={Aghaei, Ehsan and Niu, Xi and Shadid, Waseem and Al-Shaer, Ehab},
 booktitle={Security and Privacy in Communication Networks:
+18th EAI International Conference, SecureComm 2022, Virtual Event, October 2022, Proceedings},
 pages={39--56},
 year={2023},
+organization={Springer}
+}