diff --git a/.gitattributes b/.gitattributes

index a6344aac8c09253b3b630fb776ae94478aa0275b..955c68ac938e4ec763a4d51175c65c9989500b06 100644

--- a/.gitattributes

+++ b/.gitattributes

@@ -33,3 +33,8 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

*.zip filter=lfs diff=lfs merge=lfs -text

*.zst filter=lfs diff=lfs merge=lfs -text

*tfevents* filter=lfs diff=lfs merge=lfs -text

+ckpts/clip_vit_l14_with_masks_6c17944 filter=lfs diff=lfs merge=lfs -text

+ckpts/owl2-b16-960-st-ngrams-curated-ft-lvisbase-ens-cold-weight-05_209b65b filter=lfs diff=lfs merge=lfs -text

+ckpts/owl2-l14-1008-st-ngrams-ft-lvisbase-ens-cold-weight-04_8ca674c filter=lfs diff=lfs merge=lfs -text

+images/scenic_design.jpg filter=lfs diff=lfs merge=lfs -text

+images/scenic_logo.jpg filter=lfs diff=lfs merge=lfs -text

diff --git a/CONTRIBUTING.md b/CONTRIBUTING.md

new file mode 100644

index 0000000000000000000000000000000000000000..797fbaaf978606e1991e51e05be7813862584c5e

--- /dev/null

+++ b/CONTRIBUTING.md

@@ -0,0 +1,32 @@

+# How to Contribute

+

+Scenic is a platform used for developing new methods and ideas by Google

+researchers, mostly around attention-based models for computer vision or

+multi-modal applications. We encourage forking the repository and continued

+development. We welcome suggestions and contributions to improving Scenic.

+There are a few small guidelines you need to follow.

+

+## Contributor License Agreement

+

+Contributions to this project must be accompanied by a Contributor License

+Agreement (CLA). You (or your employer) retain the copyright to your

+contribution; this simply gives us permission to use and redistribute your

+contributions as part of the project. Head over to

+ to see your current agreements on file or

+to sign a new one.

+

+You generally only need to submit a CLA once, so if you've already submitted one

+(even if it was for a different project), you probably don't need to do it

+again.

+

+## Code Reviews

+

+All submissions, including submissions by project members, require review. We

+use GitHub pull requests for this purpose. Consult

+[GitHub Help](https://help.github.com/articles/about-pull-requests/) for more

+information on using pull requests.

+

+## Community Guidelines

+

+This project follows

+[Google's Open Source Community Guidelines](https://opensource.google/conduct/).

diff --git a/IOU_test.py b/IOU_test.py

new file mode 100644

index 0000000000000000000000000000000000000000..eeac3327d3bb17c7aa88d7022d4388e4c6975e0a

--- /dev/null

+++ b/IOU_test.py

@@ -0,0 +1,21 @@

+from owlv2_helper_functions import get_iou, boxes_filter

+

+boxes = [

+ (128.56, 4.57, 732.52, 476.05),

+ (569.65, 185.71, 740.31, 244.76),

+ (569.65, 185.71, 740.31, 244.76),

+ (569.65, 185.71, 740.31, 244.76),

+ (101.99, 99.00, 720.12, 88.63),

+ ]

+

+scores = [1.0, 0.99, 0.89, 1.0, 0.99]

+

+instances = ['cat', 'dog', 'dog', 'tiger', 'cat']

+

+

+

+pred_bboxes, pred_scores, instances = boxes_filter(boxes, scores, instances)

+

+print(pred_bboxes)

+print(pred_scores)

+print(instances)

\ No newline at end of file
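
Review note: `owlv2_helper_functions` is not included in this diff, so the actual behaviour of `get_iou` and `boxes_filter` cannot be confirmed here. As a rough sketch only, an IoU computation over `(x1, y1, x2, y2)` boxes typically looks like the following. The name `iou_xyxy` is hypothetical and is not the project's implementation; treating degenerate boxes (such as the last test box, where `y2 < y1`) as zero-area is an assumption.

```python
def iou_xyxy(box_a, box_b):
    """Intersection-over-union for two (x1, y1, x2, y2) boxes.

    Hypothetical helper for illustration. Boxes with x2 <= x1 or y2 <= y1
    are treated as having zero area.
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlap, clamped at zero when boxes are disjoint.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    area_a = max(0.0, ax2 - ax1) * max(0.0, ay2 - ay1)
    area_b = max(0.0, bx2 - bx1) * max(0.0, by2 - by1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


dog = (569.65, 185.71, 740.31, 244.76)
print(iou_xyxy(dog, dog))
```

With a helper like this, the three identical boxes in the test data would overlap each other completely, which is presumably the case the duplicate filtering is meant to exercise.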

diff --git a/LICENSE b/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..d645695673349e3947e8e5ae42332d0ac3164cd7

--- /dev/null

+++ b/LICENSE

@@ -0,0 +1,202 @@

+

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright [yyyy] [name of copyright owner]

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/README.md b/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..4aa107e4a89900a7f3faa873e6b07282b1c1ff7a

--- /dev/null

+++ b/README.md

@@ -0,0 +1,217 @@

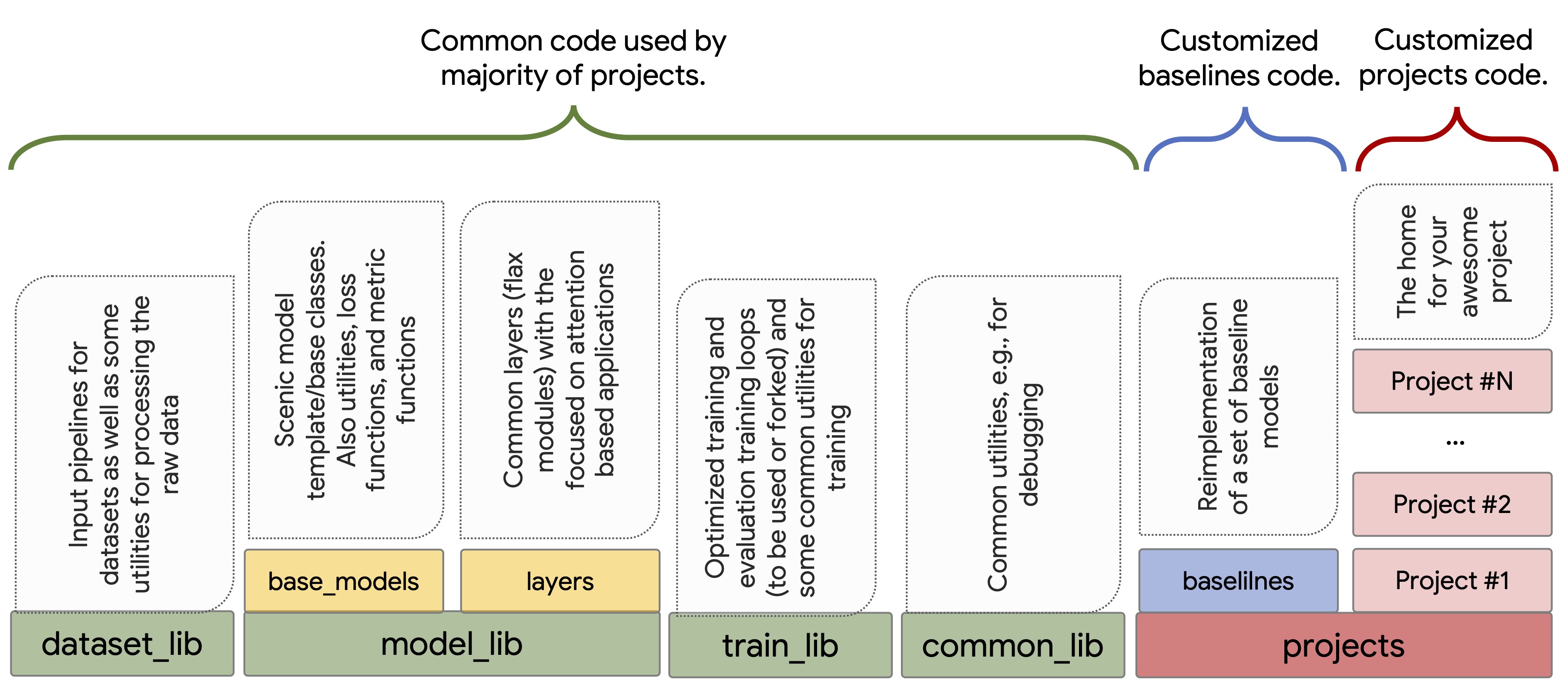

+# Scenic

+

+

+

+

+

blah"

+ )

+

+ def test_strfmt(self):

+ data = {

+ "int": tf.constant(42, tf.uint8),

+ "float": tf.constant(3.14, tf.float32),

+ "vec": tf.range(3),

+ "empty_str": tf.constant(""),

+ "regex_problem1": tf.constant(r"no \replace pattern"),

+ "regex_problem2": tf.constant(r"yes \1 pattern"),

+ }

+ for out in self.tfrun(pp.get_strfmt("Nothing"), data):

+ self.assertEqual(out["text"], b"Nothing")

+ for out in self.tfrun(pp.get_strfmt("{int}"), data):

+ self.assertEqual(out["text"], b"42")

+ for out in self.tfrun(pp.get_strfmt("A{int}"), data):

+ self.assertEqual(out["text"], b"A42")

+ for out in self.tfrun(pp.get_strfmt("{int}A"), data):

+ self.assertEqual(out["text"], b"42A")

+ for out in self.tfrun(pp.get_strfmt("{int}{int}"), data):

+ self.assertEqual(out["text"], b"4242")

+ for out in self.tfrun(pp.get_strfmt("A{int}A{int}A"), data):

+ self.assertEqual(out["text"], b"A42A42A")

+ for out in self.tfrun(pp.get_strfmt("A{float}A"), data):

+ self.assertEqual(out["text"], b"A3.14A")

+ for out in self.tfrun(pp.get_strfmt("A{float}A{int}"), data):

+ self.assertEqual(out["text"], b"A3.14A42")

+ for out in self.tfrun(pp.get_strfmt("A{vec}A"), data):

+ self.assertEqual(out["text"], b"A[0 1 2]A")

+ for out in self.tfrun(pp.get_strfmt("A{empty_str}A"), data):

+ self.assertEqual(out["text"], b"AA")

+ for out in self.tfrun(pp.get_strfmt("{empty_str}"), data):

+ self.assertEqual(out["text"], b"")

+ for out in self.tfrun(pp.get_strfmt("A{regex_problem1}A"), data):

+ self.assertEqual(out["text"], br"Ano \replace patternA")

+ for out in self.tfrun(pp.get_strfmt("A{regex_problem2}A"), data):

+ self.assertEqual(out["text"], br"Ayes \1 patternA")

+

+

+@Registry.register("tokensets.sp_extra_tokens")

+def _get_sp_extra_tokens():

+ # For sentencepiece, adding these tokens will make them visible when decoding.

+ # If a token is not found (e.g. "" is not found in "mistral"), then it is

+ # added to the vocabulary, increasing the vocab_size accordingly.

+ return ["", "", ""]

+

+

+if __name__ == "__main__":

+ tf.test.main()

diff --git a/big_vision/pp/registry.py b/big_vision/pp/registry.py

new file mode 100644

index 0000000000000000000000000000000000000000..f5c7d996d756be16ba68a5fcb143f23129e1249d

--- /dev/null

+++ b/big_vision/pp/registry.py

@@ -0,0 +1,163 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""Global Registry for big_vision pp ops.

+

+Author: Joan Puigcerver (jpuigcerver@)

+"""

+

+from __future__ import absolute_import

+from __future__ import division

+from __future__ import print_function

+

+import ast

+import contextlib

+import functools

+

+

+def parse_name(string_to_parse):

+ """Parses input to the registry's lookup function.

+

+ Args:

+ string_to_parse: can be either an arbitrary name or function call

+ (optionally with positional and keyword arguments).

+ e.g. "multiclass", "resnet50_v2(filters_factor=8)".

+

+ Returns:

+ A tuple of input name, argument tuple and a keyword argument dictionary.

+ Examples:

+ "multiclass" -> ("multiclass", (), {})

+ "resnet50_v2(9, filters_factor=4)" ->

+ ("resnet50_v2", (9,), {"filters_factor": 4})

+

+ Author: Joan Puigcerver (jpuigcerver@)

+ """

+ expr = ast.parse(string_to_parse, mode="eval").body # pytype: disable=attribute-error

+ if not isinstance(expr, (ast.Attribute, ast.Call, ast.Name)):

+ raise ValueError(

+ "The given string should be a name or a call, but a {} was parsed from "

+ "the string {!r}".format(type(expr), string_to_parse))

+

+ # Notes:

+ # name="some_name" -> type(expr) = ast.Name

+ # name="module.some_name" -> type(expr) = ast.Attribute

+ # name="some_name()" -> type(expr) = ast.Call

+ # name="module.some_name()" -> type(expr) = ast.Call

+

+ if isinstance(expr, ast.Name):

+ return string_to_parse, (), {}

+ elif isinstance(expr, ast.Attribute):

+ return string_to_parse, (), {}

+

+ def _get_func_name(expr):

+ if isinstance(expr, ast.Attribute):

+ return _get_func_name(expr.value) + "." + expr.attr

+ elif isinstance(expr, ast.Name):

+ return expr.id

+ else:

+ raise ValueError(

+ "Type {!r} is not supported in a function name, the string to parse "

+ "was {!r}".format(type(expr), string_to_parse))

+

+ def _get_func_args_and_kwargs(call):

+ args = tuple([ast.literal_eval(arg) for arg in call.args])

+ kwargs = {

+ kwarg.arg: ast.literal_eval(kwarg.value) for kwarg in call.keywords

+ }

+ return args, kwargs

+

+ func_name = _get_func_name(expr.func)

+ func_args, func_kwargs = _get_func_args_and_kwargs(expr)

+

+ return func_name, func_args, func_kwargs

+

+

+class Registry(object):

+ """Implements global Registry.

+

+ Authors: Joan Puigcerver (jpuigcerver@), Alexander Kolesnikov (akolesnikov@)

+ """

+

+ _GLOBAL_REGISTRY = {}

+

+ @staticmethod

+ def global_registry():

+ return Registry._GLOBAL_REGISTRY

+

+ @staticmethod

+ def register(name, replace=False):

+ """Creates a function that registers its input."""

+

+ def _register(item):

+ if name in Registry.global_registry() and not replace:

+ raise KeyError("The name {!r} was already registered.".format(name))

+

+ Registry.global_registry()[name] = item

+ return item

+

+ return _register

+

+ @staticmethod

+ def lookup(lookup_string, kwargs_extra=None):

+ """Lookup a name in the registry."""

+

+ try:

+ name, args, kwargs = parse_name(lookup_string)

+ except ValueError as e:

+ raise ValueError(f"Error parsing:\n{lookup_string}") from e

+ if kwargs_extra:

+ kwargs.update(kwargs_extra)

+ item = Registry.global_registry()[name]

+ return functools.partial(item, *args, **kwargs)

+

+ @staticmethod

+ def knows(lookup_string):

+ try:

+ name, _, _ = parse_name(lookup_string)

+ except ValueError as e:

+ raise ValueError(f"Error parsing:\n{lookup_string}") from e

+ return name in Registry.global_registry()

+

+

+@contextlib.contextmanager

+def temporary_ops(**kw):

+ """Registers specified pp ops for use in a `with` block.

+

+ Example use:

+

+ with pp_registry.temporary_ops(

+ pow=lambda alpha: lambda d: {k: v**alpha for k, v in d.items()}):

+ pp = pp_builder.get_preprocess_fn("pow(alpha=2.0)|pow(alpha=0.5)")

+ features = pp(features)

+

+ Args:

+ **kw: Names are preprocess string function names to be used to specify the

+ preprocess function. Values are functions that can be called with params

+ (e.g. the `alpha` param in above example) and return functions to be used

+ to transform features.

+

+ Yields:

+ Nothing; the ops stay registered for the duration of the `with` block.

+ """

+ reg = Registry.global_registry()

+ kw = {f"preprocess_ops.{k}": v for k, v in kw.items()}

+ for k in kw:

+ assert k not in reg

+ for k, v in kw.items():

+ reg[k] = v

+ try:

+ yield

+ finally:

+ for k in kw:

+ del reg[k]
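
Review note: the core of `parse_name` is that a lookup string is parsed as a Python expression and its arguments are evaluated with `ast.literal_eval`, so only literals are accepted. The condensed sketch below illustrates that idea in isolation. The name `split_call` is hypothetical, and it uses `ast.unparse` (Python 3.9+) instead of the recursive `_get_func_name` helper in the diff, so it is not a drop-in replacement.

```python
import ast


def split_call(spec):
    """Splits "name(args, kw=val)" into (name, args, kwargs) using `ast`."""
    expr = ast.parse(spec, mode="eval").body
    if isinstance(expr, (ast.Name, ast.Attribute)):
        # A bare (possibly dotted) name carries no arguments.
        return spec, (), {}
    if not isinstance(expr, ast.Call):
        raise ValueError(f"Expected a name or a call, got {spec!r}")
    name = ast.unparse(expr.func)  # e.g. "foo.bar.func"
    # literal_eval accepts AST nodes and rejects anything but literals.
    args = tuple(ast.literal_eval(a) for a in expr.args)
    kwargs = {k.arg: ast.literal_eval(k.value) for k in expr.keywords}
    return name, args, kwargs


print(split_call("multiclass"))
print(split_call("resnet50_v2(9, filters_factor=4)"))
```

Because the arguments go through `ast.literal_eval`, a string such as `"func(a=undefined_name)"` fails with `ValueError` rather than being looked up or executed, which matches the behaviour the tests in `registry_test.py` expect.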

diff --git a/big_vision/pp/registry_test.py b/big_vision/pp/registry_test.py

new file mode 100644

index 0000000000000000000000000000000000000000..2296e7de91ce0495bade59e8e65417384507e58e

--- /dev/null

+++ b/big_vision/pp/registry_test.py

@@ -0,0 +1,128 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""Tests for registry."""

+

+from __future__ import absolute_import

+from __future__ import division

+from __future__ import print_function

+

+from unittest import mock

+

+from absl.testing import absltest

+from big_vision.pp import registry

+

+

+class RegistryTest(absltest.TestCase):

+

+ def setUp(self):

+ super(RegistryTest, self).setUp()

+ # Mock global registry in each test to keep them isolated and allow for

+ # concurrent tests.

+ self.addCleanup(mock.patch.stopall)

+ self.global_registry = dict()

+ self.mocked_method = mock.patch.object(

+ registry.Registry, "global_registry",

+ return_value=self.global_registry).start()

+

+ def test_parse_name(self):

+ name, args, kwargs = registry.parse_name("f")

+ self.assertEqual(name, "f")

+ self.assertEqual(args, ())

+ self.assertEqual(kwargs, {})

+

+ name, args, kwargs = registry.parse_name("f()")

+ self.assertEqual(name, "f")

+ self.assertEqual(args, ())

+ self.assertEqual(kwargs, {})

+

+ name, args, kwargs = registry.parse_name("func(a=0,b=1,c='s')")

+ self.assertEqual(name, "func")

+ self.assertEqual(args, ())

+ self.assertEqual(kwargs, {"a": 0, "b": 1, "c": "s"})

+

+ name, args, kwargs = registry.parse_name("func(1,'foo',3)")

+ self.assertEqual(name, "func")

+ self.assertEqual(args, (1, "foo", 3))

+ self.assertEqual(kwargs, {})

+

+ name, args, kwargs = registry.parse_name("func(1,'2',a=3,foo='bar')")

+ self.assertEqual(name, "func")

+ self.assertEqual(args, (1, "2"))

+ self.assertEqual(kwargs, {"a": 3, "foo": "bar"})

+

+ name, args, kwargs = registry.parse_name("foo.bar.func(a=0,b=(1),c='s')")

+ self.assertEqual(name, "foo.bar.func")

+ self.assertEqual(kwargs, dict(a=0, b=1, c="s"))

+

+ with self.assertRaises(SyntaxError):

+ registry.parse_name("func(0")

+ with self.assertRaises(SyntaxError):

+ registry.parse_name("func(a=0,,b=0)")

+ with self.assertRaises(SyntaxError):

+ registry.parse_name("func(a=0,b==1,c='s')")

+ with self.assertRaises(ValueError):

+ registry.parse_name("func(a=0,b=undefined_name,c='s')")

+

+ def test_register(self):

+ # pylint: disable=unused-variable

+ @registry.Registry.register("func1")

+ def func1():

+ pass

+

+ self.assertLen(registry.Registry.global_registry(), 1)

+

+ def test_lookup_function(self):

+

+ @registry.Registry.register("func1")

+ def func1(arg1, arg2, arg3): # pylint: disable=unused-variable

+ return arg1, arg2, arg3

+

+ self.assertTrue(callable(registry.Registry.lookup("func1")))

+ self.assertEqual(registry.Registry.lookup("func1")(1, 2, 3), (1, 2, 3))

+ self.assertEqual(

+ registry.Registry.lookup("func1(arg3=9)")(1, 2), (1, 2, 9))

+ self.assertEqual(

+ registry.Registry.lookup("func1(arg2=9,arg1=99)")(arg3=3), (99, 9, 3))

+ self.assertEqual(

+ registry.Registry.lookup("func1(arg2=9,arg1=99)")(arg1=1, arg3=3),

+ (1, 9, 3))

+

+ self.assertEqual(

+ registry.Registry.lookup("func1(1)")(1, 2), (1, 1, 2))

+ self.assertEqual(

+ registry.Registry.lookup("func1(1)")(arg3=3, arg2=2), (1, 2, 3))

+ self.assertEqual(

+ registry.Registry.lookup("func1(1, 2)")(3), (1, 2, 3))

+ self.assertEqual(

+ registry.Registry.lookup("func1(1, 2)")(arg3=3), (1, 2, 3))

+ self.assertEqual(

+ registry.Registry.lookup("func1(1, arg2=2)")(arg3=3), (1, 2, 3))

+ self.assertEqual(

+ registry.Registry.lookup("func1(1, arg3=2)")(arg2=3), (1, 3, 2))

+ self.assertEqual(

+ registry.Registry.lookup("func1(1, arg3=2)")(3), (1, 3, 2))

+

+ with self.assertRaises(TypeError):

+ registry.Registry.lookup("func1(1, arg2=2)")(3)

+ with self.assertRaises(TypeError):

+ registry.Registry.lookup("func1(1, arg3=3)")(arg3=3)

+ with self.assertRaises(TypeError):

+ registry.Registry.lookup("func1(1, arg3=3)")(arg1=3)

+ with self.assertRaises(SyntaxError):

+ registry.Registry.lookup("func1(arg1=1, 3)")(arg2=3)

+

+

+if __name__ == "__main__":

+ absltest.main()
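
Review note: the argument-binding cases in `test_lookup_function` follow directly from `functools.partial`, which `Registry.lookup` returns. The sketch below reproduces three of those cases without the registry, assuming the lookup string has already been parsed into positional and keyword arguments; `bound_kw` and `bound_pos` are illustrative names only.

```python
import functools


def func1(arg1, arg2, arg3):
    return arg1, arg2, arg3


# Equivalent of looking up "func1(arg2=9,arg1=99)": keywords are pre-bound.
bound_kw = functools.partial(func1, arg2=9, arg1=99)
print(bound_kw(arg3=3))          # (99, 9, 3)
print(bound_kw(arg1=1, arg3=3))  # call-time keyword wins: (1, 9, 3)

# Equivalent of "func1(1, arg2=2)": a further positional argument would land
# on arg2, which is already bound by keyword, so the call fails.
bound_pos = functools.partial(func1, 1, arg2=2)
try:
    bound_pos(3)
except TypeError as e:
    print("TypeError:", e)
```

The practical consequence is that keyword arguments given in the lookup string act as overridable defaults, while positional arguments in the string are fixed at the front of the call.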

diff --git a/big_vision/pp/tokenizer.py b/big_vision/pp/tokenizer.py

new file mode 100644

index 0000000000000000000000000000000000000000..681494e436aacd48d5d720e07d2df1a80c704eb2

--- /dev/null

+++ b/big_vision/pp/tokenizer.py

@@ -0,0 +1,103 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""The tokenizer API for big_vision, and central registration place."""

+import functools

+import importlib

+from typing import Protocol

+

+from absl import logging

+from big_vision.pp import registry

+import big_vision.utils as u

+import numpy as np

+

+

+class Tokenizer(Protocol):

+ """Just to unify on the API as we now have many different ones."""

+

+ def to_int(self, text, *, bos=False, eos=False):

+ """Tokenizes `text` into a list of integer tokens.

+

+ Args:

+ text: can be a single string, or a list of strings.

+ bos: Whether a beginning-of-sentence token should be prepended.

+ eos: Whether an end-of-sentence token should be appended.

+

+ Returns:

+ List or list-of-list of tokens.

+ """

+

+ def to_int_tf_op(self, text, *, bos=False, eos=False):

+ """Same as `to_int()`, but as TF ops to be used in pp."""

+

+ def to_str(self, tokens, *, stop_at_eos=True):

+ """Inverse of `to_int()`.

+

+ Args:

+ tokens: list of tokens, or list of lists of tokens.

+ stop_at_eos: remove everything that may come after the first EOS.

+

+ Returns:

+ A string (if `tokens` is a list of tokens), or a list of strings.

+ Note that most tokenizers strip select few control tokens like

+ eos/bos/pad/unk from the output string.

+ """

+

+ def to_str_tf_op(self, tokens, *, stop_at_eos=True):

+ """Same as `to_str()`, but as TF ops to be used in pp."""

+

+ @property

+ def pad_token(self):

+ """Token id of padding token."""

+

+ @property

+ def eos_token(self):

+ """Token id of end-of-sentence token."""

+

+ @property

+ def bos_token(self):

+ """Token id of beginning-of-sentence token."""

+

+ @property

+ def vocab_size(self):

+ """Returns the size of the vocabulary."""

+

+

+@functools.cache

+def get_tokenizer(name):

+ with u.chrono.log_timing(f"z/secs/tokenizer/{name}"):

+ if not registry.Registry.knows(f"tokenizers.{name}"):

+ raw_name, *_ = registry.parse_name(name)

+ logging.info("Tokenizer %s not registered, "

+ "trying import big_vision.pp.%s", name, raw_name)

+ importlib.import_module(f"big_vision.pp.{raw_name}")

+

+ return registry.Registry.lookup(f"tokenizers.{name}")()

+

+

+def get_extra_tokens(tokensets):

+ extra_tokens = []

+ for tokenset in tokensets:

+ extra_tokens.extend(registry.Registry.lookup(f"tokensets.{tokenset}")())

+ return list(np.unique(extra_tokens)) # np.unique sorts and deduplicates. Dups make no sense.

+

+

+@registry.Registry.register("tokensets.loc")

+def _get_loc1024(n=1024):

+ return [f"" for i in range(n)]

+

+

+@registry.Registry.register("tokensets.seg")

+def _get_seg(n=128):

+ return [f"" for i in range(n)]
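
Review note: to make the `Tokenizer` protocol concrete, here is a minimal sketch of a class that follows its eager methods. `ToyTokenizer`, its whitespace splitting, and the reserved ids 0/1/2 are all assumptions made for illustration; the TF-op variants (`to_int_tf_op`, `to_str_tf_op`) are omitted, and unknown words simply raise `KeyError`.

```python
class ToyTokenizer:
    """Hypothetical whitespace tokenizer following the `Tokenizer` protocol."""

    # Ids 0, 1, 2 are reserved for pad/bos/eos in this toy vocabulary.
    pad_token = 0
    bos_token = 1
    eos_token = 2

    def __init__(self, words):
        self._specials = ["<pad>", "<bos>", "<eos>"]
        self._vocab = self._specials + list(words)
        self._ids = {w: i for i, w in enumerate(self._vocab)}

    @property
    def vocab_size(self):
        return len(self._vocab)

    def to_int(self, text, *, bos=False, eos=False):
        if not isinstance(text, str):  # List of strings -> list of lists.
            return [self.to_int(t, bos=bos, eos=eos) for t in text]
        ids = [self._ids[w] for w in text.split()]
        prefix = [self.bos_token] if bos else []
        suffix = [self.eos_token] if eos else []
        return prefix + ids + suffix

    def to_str(self, tokens, *, stop_at_eos=True):
        if tokens and isinstance(tokens[0], (list, tuple)):
            return [self.to_str(t, stop_at_eos=stop_at_eos) for t in tokens]
        tokens = list(tokens)
        if stop_at_eos and self.eos_token in tokens:
            tokens = tokens[:tokens.index(self.eos_token)]
        # Control tokens are stripped from the decoded string.
        words = [self._vocab[t] for t in tokens if t >= len(self._specials)]
        return " ".join(words)


tok = ToyTokenizer(["a", "cat", "sat"])
ids = tok.to_int("a cat sat", bos=True, eos=True)
print(ids)
print(tok.to_str(ids + [3]))
```

The last call shows the `stop_at_eos` contract from the docstring: anything after the first EOS id is dropped before decoding.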

diff --git a/big_vision/pp/utils.py b/big_vision/pp/utils.py

new file mode 100644

index 0000000000000000000000000000000000000000..3ee834560246549c71f0a6d9785694fd1507ca9b

--- /dev/null

+++ b/big_vision/pp/utils.py

@@ -0,0 +1,53 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""Preprocessing utils."""

+

+from collections import abc

+

+

+def maybe_repeat(arg, n_reps):

+ if not isinstance(arg, abc.Sequence) or isinstance(arg, str):

+ arg = (arg,) * n_reps

+ return arg

+

+

+class InKeyOutKey(object):

+ """Decorator for preprocessing ops, which adds `inkey` and `outkey` arguments.

+

+ Note: Only supports single-input single-output ops.

+ """

+

+ def __init__(self, indefault="image", outdefault="image", with_data=False):

+ self.indefault = indefault

+ self.outdefault = outdefault

+ self.with_data = with_data

+

+ def __call__(self, orig_get_pp_fn):

+

+ def get_ikok_pp_fn(*args, key=None,

+ inkey=self.indefault, outkey=self.outdefault, **kw):

+

+ orig_pp_fn = orig_get_pp_fn(*args, **kw)

+ def _ikok_pp_fn(data):

+ # Optionally allow the function to get the full data dict as aux input.

+ if self.with_data:

+ data[key or outkey] = orig_pp_fn(data[key or inkey], data=data)

+ else:

+ data[key or outkey] = orig_pp_fn(data[key or inkey])

+ return data

+

+ return _ikok_pp_fn

+

+ return get_ikok_pp_fn
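
Review note: the `key` argument of `InKeyOutKey` is not covered by the accompanying tests, so a small usage sketch may help. To stay runnable on its own, the block uses a condensed stand-in decorator (`in_key_out_key`, a hypothetical name, without the `with_data` option) that mirrors the key-selection logic in the diff; `get_scale` is likewise made up for the example.

```python
def in_key_out_key(indefault="image", outdefault="image"):
    """Condensed stand-in for `InKeyOutKey` (without `with_data`)."""
    def decorator(get_pp_fn):
        def wrapped(*args, key=None, inkey=indefault, outkey=outdefault, **kw):
            pp_fn = get_pp_fn(*args, **kw)

            def _apply(data):
                # `key`, when given, takes precedence over inkey and outkey.
                data[key or outkey] = pp_fn(data[key or inkey])
                return data

            return _apply
        return wrapped
    return decorator


@in_key_out_key()
def get_scale(factor):
    return lambda x: x * factor


data = {"image": 2, "mask": 10}
data = get_scale(3)(data)                                # image -> image
data = get_scale(2, key="mask")(data)                    # mask -> mask
data = get_scale(5, inkey="mask", outkey="mask5")(data)  # mask -> mask5
print(data)
```

Note that the wrapped op writes into the dict it receives and returns that same dict, so callers that need the original values should copy `data` first.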

diff --git a/big_vision/pp/utils_test.py b/big_vision/pp/utils_test.py

new file mode 100644

index 0000000000000000000000000000000000000000..beec18cef62a9638ed143229d7aedc5e218a70b6

--- /dev/null

+++ b/big_vision/pp/utils_test.py

@@ -0,0 +1,53 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""Tests for preprocessing utils."""

+

+from __future__ import absolute_import

+from __future__ import division

+from __future__ import print_function

+

+from big_vision.pp import utils

+import tensorflow.compat.v1 as tf

+

+

+class UtilsTest(tf.test.TestCase):

+

+ def test_maybe_repeat(self):

+ self.assertEqual((1, 1, 1), utils.maybe_repeat(1, 3))

+ self.assertEqual((1, 2), utils.maybe_repeat((1, 2), 2))

+ self.assertEqual([1, 2], utils.maybe_repeat([1, 2], 2))

+

+ def test_inkeyoutkey(self):

+ @utils.InKeyOutKey()

+ def get_pp_fn(shift, scale=0):

+ def _pp_fn(x):

+ return scale * x + shift

+ return _pp_fn

+

+ data = {"k_in": 2, "other": 3}

+ ppfn = get_pp_fn(1, 2, inkey="k_in", outkey="k_out") # pylint: disable=unexpected-keyword-arg

+ self.assertEqual({"k_in": 2, "k_out": 5, "other": 3}, ppfn(data))

+

+ data = {"k": 6, "other": 3}

+ ppfn = get_pp_fn(1, inkey="k", outkey="k") # pylint: disable=unexpected-keyword-arg

+ self.assertEqual({"k": 1, "other": 3}, ppfn(data))

+

+ data = {"other": 6, "image": 3}

+ ppfn = get_pp_fn(5, 2)

+ self.assertEqual({"other": 6, "image": 11}, ppfn(data))

+

+

+if __name__ == "__main__":

+ tf.test.main()

diff --git a/big_vision/requirements.txt b/big_vision/requirements.txt

new file mode 100644

index 0000000000000000000000000000000000000000..9ae71db5ac26e2746864c37959dfd28cb9fe70bf

--- /dev/null

+++ b/big_vision/requirements.txt

@@ -0,0 +1,19 @@

+numpy>=1.26

+absl-py

+git+https://github.com/google/CommonLoopUtils

+distrax

+editdistance

+einops

+flax

+optax

+git+https://github.com/google/flaxformer

+git+https://github.com/akolesnikoff/panopticapi.git@mute

+overrides

+protobuf

+sentencepiece

+tensorflow-cpu

+tfds-nightly

+tensorflow-text

+tensorflow-gan

+psutil

+pycocoevalcap

diff --git a/big_vision/run_tpu.sh b/big_vision/run_tpu.sh

new file mode 100644

index 0000000000000000000000000000000000000000..3c3da2e44e7d2829a00188f5e6177ea9d6e3ba4d

--- /dev/null

+++ b/big_vision/run_tpu.sh

@@ -0,0 +1,35 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+#!/bin/bash

+

+if [ ! -d "bv_venv" ]

+then

+ sudo apt-get update

+ sudo apt install -y python3-venv

+ python3 -m venv bv_venv

+ . bv_venv/bin/activate

+

+ pip install -U pip # Yes, really needed.

+ # NOTE: doesn't work when in requirements.txt -> cyclic dep

+ pip install "jax[tpu]>=0.4.25" -f https://storage.googleapis.com/jax-releases/libtpu_releases.html

+ pip install -r big_vision/requirements.txt

+else

+ . bv_venv/bin/activate

+fi

+

+if [ $# -ne 0 ]

+then

+ env TFDS_DATA_DIR=$TFDS_DATA_DIR BV_JAX_INIT=1 python3 -m "$@"

+fi

diff --git a/big_vision/sharding.py b/big_vision/sharding.py

new file mode 100644

index 0000000000000000000000000000000000000000..be76cb3a1f6b8bc0e494515bac2a54528a53494c

--- /dev/null

+++ b/big_vision/sharding.py

@@ -0,0 +1,197 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""Big vision sharding utilities."""

+

+from absl import logging

+

+from big_vision.pp.registry import Registry

+import big_vision.utils as u

+import flax.linen as nn

+import jax

+import numpy as np

+

+

+NamedSharding = jax.sharding.NamedSharding

+P = jax.sharding.PartitionSpec

+

+

+def _replicated(mesh):

+ return NamedSharding(mesh, P())

+

+

+def _shard_along_axis(mesh, i, axis_name):

+ return NamedSharding(mesh, P(*((None,) * i + (axis_name,))))

+

+

+def infer_sharding(params, strategy, mesh):

+ """Infers `params` sharding based on strategy.

+

+ Args:

+ params: a pytree of arrays.

+ strategy: sharding strategy.

+ mesh: jax device mesh.

+

+ Returns:

+ A pytree of shardings with the same structure as the `params` argument.

+ """

+ patterns, tactics = zip(*strategy)

+

+ x_with_names, tree_def = u.tree_flatten_with_names(params)

+ names = tree_def.unflatten(list(zip(*x_with_names))[0])

+

+ # Follows big_vision conventions: each variable is matched at most once,

+ # early patterns get matching priority.

+ mask_trees = u.make_mask_trees(params, patterns)

+

+ specs = jax.tree.map(lambda x: (None,) * x.ndim, params)

+

+ for mask_tree, tactic in zip(mask_trees, tactics):

+ for op_str in tactic.split("|"):

+ op = Registry.lookup(f"shardings.{op_str}")()

+ specs = jax.tree.map(

+ lambda x, n, match, spec, op=op: op(spec, mesh, n, x)

+ if match else spec,

+ params, names, mask_tree, specs,

+ is_leaf=lambda v: isinstance(v, nn.Partitioned))

+

+ # Two-level tree_map to prevent it from doing traversal inside the spec.

+ specs = jax.tree.map(lambda _, spec: P(*spec), nn.unbox(params), specs)

+ return jax.tree.map(lambda spec: NamedSharding(mesh, spec), specs)

+

+

+# Sharding rules

+#

+# Each rule needs to be added to the registry, can accept custom args, and

+# returns a function that updates the current spec. It is called with the

+# current sharding spec and the device mesh, followed by:

+# 1. Variable name

+# 2. Variable itself (or placeholder with .shape and .dtype properties)

+

+

+@Registry.register("shardings.replicate")

+def replicate():

+ """Full replication sharding rule.

+

+ Full replication is the default, so this rule can be skipped; it is useful to

+ state explicitly in the config that certain parameters are replicated.

+ TODO: can be generalized to support replication over a sub-mesh.

+

+ Returns:

+ A function that updates the sharding spec.

+ """

+ def _update_spec(cur_spec, mesh, name, x):

+ del x, mesh

+ if not all(axis is None for axis in cur_spec):

+ raise ValueError(f"Inconsistent sharding instructions: "

+ f"parameter {name} has spec {cur_spec}, "

+ f"so it can't be fully replicated.")

+ return cur_spec

+ return _update_spec

+

+

+@Registry.register("shardings.fsdp")

+def fsdp(axis, min_size_to_shard_mb=4):

+ """FSDP sharding rule.

+

+ Shards the largest dimension that is not sharded already and is divisible

+ by the total size of the given mesh axis (or axes).

+

+ Args:

+ axis: mesh axis name for FSDP, or a collection of names.

+ min_size_to_shard_mb: minimal tensor size (in MiB) to bother with sharding.

+

+ Returns:

+ A function that updates the sharding spec.

+ """

+ axis = axis if isinstance(axis, str) else tuple(axis)

+ axis_tuple = axis if isinstance(axis, tuple) else (axis,)

+ def _update_spec(cur_spec, mesh, name, x):

+ shape = x.shape

+ axis_size = np.prod([mesh.shape[a] for a in axis_tuple])

+

+ if np.prod(shape) * x.dtype.itemsize <= min_size_to_shard_mb * (2 ** 20):

+ return cur_spec

+

+ # Partition along largest axis that is divisible and not taken.

+ idx = np.argsort(shape)[::-1]

+ for i in idx:

+ if shape[i] % axis_size == 0:

+ if cur_spec[i] is None:

+ return cur_spec[:i] + (axis,) + cur_spec[i+1:]

+

+ logging.info("Failed to apply `fsdp` rule to the parameter %s:%s, as each "

+ "of its dimensions is either not divisible by the requested "

+ "axis: %s:%i, or already occupied by other sharding rules: %s",

+ name, shape, axis, axis_size, cur_spec)

+ return cur_spec

+ return _update_spec

+

+

+@Registry.register("shardings.logical_partitioning")

+def logical_partitioning():

+ """Manual sharding based on Flax's logical partitioning annotations.

+

+ Uses logical sharding annotations added in model code with

+ `nn.with_logical_partitioning`. Respects logical to mesh name mapping rules

+ (typically defined in the dynamic context using

+ `with nn.logical_axis_rules(rules): ...`).

+

+ Returns:

+ A function that outputs the sharding spec of `nn.LogicallyPartitioned` boxed

+ specs.

+ """

+ def _update_spec(cur_spec, mesh, name, x):

+ del x, name, mesh

+ if isinstance(cur_spec, nn.LogicallyPartitioned):

+ return nn.logical_to_mesh_axes(cur_spec.names)

+ return cur_spec

+ return _update_spec

+

+

+@Registry.register("shardings.shard_dim")

+def shard_dim(axis, dim, ignore_ndim_error=False):

+ """Shards the given dimension along the given axis.

+

+ Args:

+ axis: mesh axis name for sharding.

+ dim: dimension to shard (can be negative).

+ ignore_ndim_error: if True, a warning is logged instead of raising an

+ exception when the given dimension is not compatible with the number of

+ dimensions of the array.

+

+ Returns:

+ A function that updates the sharding spec.

+ """

+ def _update_spec(cur_spec, mesh, name, x):

+ del mesh, x

+ if np.abs(dim) >= len(cur_spec):

+ msg = f"Cannot shard_dim({axis}, {dim}): name={name} cur_spec={cur_spec}"

+ if ignore_ndim_error:

+ logging.warning(msg)

+ return cur_spec

+ else:

+ raise ValueError(msg)

+ pos_dim = dim

+ if pos_dim < 0:

+ pos_dim += len(cur_spec)

+ if cur_spec[pos_dim] is not None:

+ raise ValueError(

+ f"Already sharded: shard_dim({axis}, {dim}):"

+ f" name={name} cur_spec={cur_spec}"

+ )

+ new_spec = cur_spec[:pos_dim] + (axis,) + cur_spec[pos_dim + 1 :]

+ return new_spec

+

+ return _update_spec
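
Review note: the dimension-selection logic of the `fsdp` rule earlier in this file can be sketched in plain Python as follows. This is an illustration under stated assumptions, not the code from the diff: `fsdp_pick_spec` is a hypothetical name, and it takes `shape`, `itemsize` and `axis_size` explicitly instead of reading them from an array and a mesh. Tie-breaking between equally sized dimensions may also differ from the `np.argsort`-based original.

```python
import math


def fsdp_pick_spec(cur_spec, shape, itemsize, axis, axis_size,
                   min_size_to_shard_mb=4):
  """Places `axis` on the largest dimension that is free and divisible."""
  # Small tensors are left as they are: sharding them is not worth it.
  if math.prod(shape) * itemsize <= min_size_to_shard_mb * 2**20:
    return cur_spec

  # Visit dimension indices from the largest dimension to the smallest.
  for i in sorted(range(len(shape)), key=lambda d: shape[d], reverse=True):
    if shape[i] % axis_size == 0 and cur_spec[i] is None:
      return cur_spec[:i] + (axis,) + cur_spec[i + 1:]

  # No dimension is both divisible and unoccupied: keep the spec unchanged.
  return cur_spec


# 16 MiB float32 matrix: the largest dimension (4096) is divisible by 8.
largest = fsdp_pick_spec((None, None), (1024, 4096), 4, "data", 8)
# The largest dimension is already taken, so the next one is used.
second = fsdp_pick_spec((None, "model"), (1024, 4096), 4, "data", 8)
# 4099 is not divisible by 8, so the rule falls back to the 1000-sized dim.
fallback = fsdp_pick_spec((None, None), (1000, 4099), 4, "data", 8)
# A 4 KiB vector is below the size threshold and stays replicated.
small = fsdp_pick_spec((None,), (1024,), 4, "data", 8)
```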

diff --git a/big_vision/tools/download_tfds_datasets.py b/big_vision/tools/download_tfds_datasets.py

new file mode 100644

index 0000000000000000000000000000000000000000..b64c33d51a7cb8df063d15e828e30b07007ff6b0

--- /dev/null

+++ b/big_vision/tools/download_tfds_datasets.py

@@ -0,0 +1,44 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""Download and prepare TFDS datasets for the big_vision codebase.

+

+This Python script downloads cifar10, cifar100, oxford_iiit_pet,

+oxford_flowers102 and imagenet_v2 by default.

+

+If you want to integrate other public or custom datasets, please follow:

+https://www.tensorflow.org/datasets/catalog/overview

+"""

+

+from absl import app

+import tensorflow_datasets as tfds

+

+

+def main(argv):

+ if len(argv) > 1 and "download_tfds_datasets.py" in argv[0]:

+ datasets = argv[1:]

+ else:

+ datasets = [

+ "cifar10",

+ "cifar100",

+ "oxford_iiit_pet",

+ "oxford_flowers102",

+ "imagenet_v2",

+ ]

+ for d in datasets:

+ tfds.load(name=d, download=True)

+

+

+if __name__ == "__main__":

+ app.run(main)

diff --git a/big_vision/tools/eval_only.py b/big_vision/tools/eval_only.py

new file mode 100644

index 0000000000000000000000000000000000000000..abdde4a6c0aa656a2e8ec76ce645982a2a6723b3

--- /dev/null

+++ b/big_vision/tools/eval_only.py

@@ -0,0 +1,146 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""Script that loads a model and only runs evaluators."""

+

+from functools import partial

+import importlib

+

+import os

+

+from absl import app

+from absl import flags

+from absl import logging

+import big_vision.evaluators.common as eval_common

+import big_vision.utils as u

+from clu import parameter_overview

+import flax

+import flax.jax_utils as flax_utils

+import jax

+import jax.numpy as jnp

+from ml_collections import config_flags

+import tensorflow as tf

+from tensorflow.io import gfile

+

+

+config_flags.DEFINE_config_file(

+ "config", None, "Training configuration.", lock_config=True)

+

+flags.DEFINE_string("workdir", default=None, help="Work unit directory.")

+flags.DEFINE_boolean("cleanup", default=False,

+ help="Delete workdir (only) after successful completion.")

+

+# Adds jax flags to the program.

+jax.config.parse_flags_with_absl()

+

+

+def main(argv):

+ del argv

+

+ config = flags.FLAGS.config

+ workdir = flags.FLAGS.workdir

+ logging.info("Workdir: %s", workdir)

+

+ # Here we register preprocessing ops from modules listed on `pp_modules`.

+ for m in config.get("pp_modules", ["ops_general", "ops_image"]):

+ importlib.import_module(f"big_vision.pp.{m}")

+

+ # These functions do more stuff internally; for the OSS release we mock them

+ # with trivial alternatives in order to minimize disruptions in the code.

+ xid, wid = -1, -1

+ def write_note(note):

+ if jax.process_index() == 0:

+ logging.info("NOTE: %s", note)

+

+ mw = u.BigVisionMetricWriter(xid, wid, workdir, config)

+ u.chrono.inform(measure=mw.measure, write_note=write_note)

+

+ write_note(f"Initializing {config.model_name} model...")

+ assert config.get("model.reinit") is None, (

+ "I don't think you want any part of the model to be re-initialized.")

+ model_mod = importlib.import_module(f"big_vision.models.{config.model_name}")

+ model_kw = dict(config.get("model", {}))

+ if "num_classes" in config: # Make it work for regular + image_text.

+ model_kw["num_classes"] = config.num_classes

+ model = model_mod.Model(**model_kw)

+

+ # We want all parameters to be created in host RAM, not on any device; they'll

+ # be sent there later as needed. Otherwise, we have already encountered two

+ # situations where they get allocated twice.

+ @partial(jax.jit, backend="cpu")

+ def init(rng):

+ input_shapes = config.get("init_shapes", [(1, 224, 224, 3)])

+ input_types = config.get("init_types", [jnp.float32] * len(input_shapes))

+ dummy_inputs = [jnp.zeros(s, t) for s, t in zip(input_shapes, input_types)]

+ things = flax.core.unfreeze(model.init(rng, *dummy_inputs))

+ return things.get("params", {})

+

+ with u.chrono.log_timing("z/secs/init"):

+ params_cpu = init(jax.random.PRNGKey(42))

+ if jax.process_index() == 0:

+ parameter_overview.log_parameter_overview(params_cpu, msg="init params")

+ num_params = sum(p.size for p in jax.tree.leaves(params_cpu))

+ mw.measure("num_params", num_params)

+

+ # The use-case for not loading an init is testing and debugging.

+ if config.get("model_init"):

+ write_note(f"Initialize model from {config.model_init}...")

+ params_cpu = model_mod.load(

+ params_cpu, config.model_init, config.get("model"),

+ **config.get("model_load", {}))

+ if jax.process_index() == 0:

+ parameter_overview.log_parameter_overview(params_cpu, msg="loaded params")

+

+ write_note("Replicating...")

+ params_repl = flax_utils.replicate(params_cpu)

+

+ def predict_fn(params, *a, **kw):

+ return model.apply({"params": params}, *a, **kw)

+

+ evaluators = eval_common.from_config(

+ config, {"predict": predict_fn, "model": model},

+ lambda s: write_note(f"Initializing evaluator: {s}..."),

+ lambda key, cfg: 1, # Ignore log_steps, always run.

+ )

+

+ # Running for multiple steps can be useful in a couple of cases:

+ # 1. non-deterministic evaluators

+ # 2. warmup when timing evaluators (eg compile cache etc).

+ for s in range(config.get("eval_repeats", 1)):

+ mw.step_start(s)

+ for (name, evaluator, _, prefix) in evaluators:

+ write_note(f"{name} evaluation step {s}...")

+ with u.profile(name, noop=name in config.get("no_profile", [])):

+ with u.chrono.log_timing(f"z/secs/eval/{name}"):

+ for key, value in evaluator.run(params_repl):

+ mw.measure(f"{prefix}{key}", value)

+ u.sync() # sync barrier to get correct measurements

+ u.chrono.flush_timings()

+ mw.step_end()

+

+ write_note("Done!")

+ mw.close()

+

+ # Make sure all hosts stay up until the end of main.

+ u.sync()

+

+ if workdir and flags.FLAGS.cleanup and jax.process_index() == 0:

+ gfile.rmtree(workdir)

+ try: # Only need this on the last work-unit, if already empty.

+ gfile.remove(os.path.join(workdir, ".."))

+ except tf.errors.OpError:

+ pass

+

+

+if __name__ == "__main__":

+ app.run(main)

diff --git a/big_vision/tools/lit_demo/README.md b/big_vision/tools/lit_demo/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..e69de29bb2d1d6434b8b29ae775ad8c2e48c5391

diff --git a/big_vision/tools/lit_demo/build.js b/big_vision/tools/lit_demo/build.js

new file mode 100644

index 0000000000000000000000000000000000000000..e69de29bb2d1d6434b8b29ae775ad8c2e48c5391

diff --git a/big_vision/tools/lit_demo/package.json b/big_vision/tools/lit_demo/package.json

new file mode 100644

index 0000000000000000000000000000000000000000..e69de29bb2d1d6434b8b29ae775ad8c2e48c5391

diff --git a/big_vision/train.py b/big_vision/train.py

new file mode 100644

index 0000000000000000000000000000000000000000..51f49cc75c59f27e6393c73b400420e2c89da9e0

--- /dev/null

+++ b/big_vision/train.py

@@ -0,0 +1,517 @@

+# Copyright 2024 Big Vision Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""Training loop example.

+

+This is a basic variant of a training loop, good starting point for fancy ones.

+"""

+# pylint: disable=consider-using-from-import

+# pylint: disable=logging-fstring-interpolation

+

+import functools

+import importlib

+import multiprocessing.pool

+import os

+

+from absl import app

+from absl import flags

+from absl import logging

+import big_vision.evaluators.common as eval_common

+import big_vision.input_pipeline as input_pipeline

+import big_vision.optax as bv_optax

+import big_vision.sharding as bv_sharding

+import big_vision.utils as u

+from clu import parameter_overview

+import flax.linen as nn

+import jax

+from jax.experimental import multihost_utils

+from jax.experimental.array_serialization import serialization as array_serial

+from jax.experimental.shard_map import shard_map

+import jax.numpy as jnp

+from ml_collections import config_flags

+import numpy as np

+import optax

+import tensorflow as tf

+

+from tensorflow.io import gfile

+

+

+config_flags.DEFINE_config_file(

+ "config", None, "Training configuration.", lock_config=True)

+

+flags.DEFINE_string("workdir", default=None, help="Work unit directory.")

+flags.DEFINE_boolean("cleanup", default=False,

+ help="Delete workdir (only) after successful completion.")

+

+# Adds jax flags to the program.

+jax.config.parse_flags_with_absl()

+# Transfer guard will fail the program whenever data is transferred implicitly

+# between a host and a device. This often catches subtle bugs that

+# cause slowdowns and memory fragmentation. Explicit transfers are done

+# with jax.device_put and jax.device_get.

+jax.config.update("jax_transfer_guard", "disallow")

+# Fixes design flaw in jax.random that may cause unnecessary d2d comms.

+jax.config.update("jax_threefry_partitionable", True)

+

+

+NamedSharding = jax.sharding.NamedSharding

+P = jax.sharding.PartitionSpec

+

+

+def main(argv):

+ del argv

+

+ # This is needed on multihost systems, but crashes on non-TPU single-host.

+ if os.environ.get("BV_JAX_INIT"):

+ jax.distributed.initialize()

+

+ # Make sure TF does not touch GPUs.

+ tf.config.set_visible_devices([], "GPU")

+

+ config = flags.FLAGS.config

+

+################################################################################

+# #

+# Set up logging #

+# #

+################################################################################

+

+ # Set up work directory and print welcome message.

+ workdir = flags.FLAGS.workdir

+ logging.info(

+ f"\u001b[33mHello from process {jax.process_index()} holding "

+ f"{jax.local_device_count()}/{jax.device_count()} devices and "

+ f"writing to workdir {workdir}.\u001b[0m")

+ logging.info(f"The config:\n{config}")

+

+ save_ckpt_path = None

+ if workdir: # Always create if requested, even if we may not write into it.

+ gfile.makedirs(workdir)

+ save_ckpt_path = os.path.join(workdir, "checkpoint.bv")

+

+ # The pool is used to perform misc operations such as logging asynchronously.

+ pool = multiprocessing.pool.ThreadPool(1)

+

+ # Here we register preprocessing ops from modules listed on `pp_modules`.

+ for m in config.get("pp_modules", ["ops_general", "ops_image", "ops_text"]):

+ importlib.import_module(f"big_vision.pp.{m}")

+

+ # Set up logging and experiment manager.

+ xid, wid = -1, -1

+ fillin = lambda s: s

+ def info(s, *a):

+ logging.info("\u001b[33mNOTE\u001b[0m: " + s, *a)

+ def write_note(note):

+ if jax.process_index() == 0:

+ info("%s", note)

+

+ mw = u.BigVisionMetricWriter(xid, wid, workdir, config)

+

+ # Allow for things like timings as early as possible!

+ u.chrono.inform(measure=mw.measure, write_note=write_note)

+

+################################################################################

+# #

+# Set up Mesh #

+# #

+################################################################################

+

+ # We rely on jax mesh_utils to organize devices, such that communication

+ # speed is the fastest for the last dimension, second fastest for the

+ # penultimate dimension, etc.

+ config_mesh = config.get("mesh", [("data", jax.device_count())])

+

+ # Sharding rules with default

+ sharding_rules = config.get("sharding_rules", [("act_batch", "data")])

+

+ write_note("Creating device mesh...")

+ mesh = u.create_device_mesh(

+ config_mesh,

+ allow_split_physical_axes=config.get("mesh_allow_split_physical_axes",

+ False))

+ repl_sharding = jax.sharding.NamedSharding(mesh, P())

+

+ # Consistent device order is important to ensure correctness of various train

+ # loop components, such as input pipeline, update step, evaluators. The

+ # order prescribed by the `devices_flat` variable should be used throughout

+ # the program.

+ devices_flat = mesh.devices.flatten()

+

+################################################################################

+# #

+# Input Pipeline #

+# #

+################################################################################

+

+ write_note("Initializing train dataset...")

+ batch_size = config.input.batch_size

+ if batch_size % jax.device_count() != 0:

+ raise ValueError(f"Batch size ({batch_size}) must "

+ f"be divisible by device number ({jax.device_count()})")

+ info("Global batch size %d on %d hosts results in %d local batch size. With "

+ "%d dev per host (%d dev total), that's a %d per-device batch size.",

+ batch_size, jax.process_count(), batch_size // jax.process_count(),

+ jax.local_device_count(), jax.device_count(),

+ batch_size // jax.device_count())

+

+ train_ds, ntrain_img = input_pipeline.training(config.input)

+

+ total_steps = u.steps("total", config, ntrain_img, batch_size)

+ def get_steps(name, default=ValueError, cfg=config):

+ return u.steps(name, cfg, ntrain_img, batch_size, total_steps, default)

+

+ u.chrono.inform(total_steps=total_steps, global_bs=batch_size,

+ steps_per_epoch=ntrain_img / batch_size)

+

+ info("Running for %d steps, that means %f epochs",

+ total_steps, total_steps * batch_size / ntrain_img)

+

+ # Start input pipeline as early as possible.

+ n_prefetch = config.get("prefetch_to_device", 1)

+ train_iter = input_pipeline.start_global(train_ds, devices_flat, n_prefetch)

+

+################################################################################

+# #

+# Create Model & Optimizer #

+# #

+################################################################################

+

+ write_note("Creating model...")

+ model_mod = importlib.import_module(f"big_vision.models.{config.model_name}")

+ model = model_mod.Model(

+ num_classes=config.num_classes, **config.get("model", {}))

+

+ def init(rng):

+ batch = jax.tree.map(lambda x: jnp.zeros(x.shape, x.dtype.as_numpy_dtype),

+ train_ds.element_spec)

+ params = model.init(rng, batch["image"])["params"]

+

+ # Set bias in the head to a low value, such that loss is small initially.

+ if "init_head_bias" in config:

+ params["head"]["bias"] = jnp.full_like(params["head"]["bias"],

+ config["init_head_bias"])

+

+ return params

+

+ # This seed makes the Jax part of things (like model init) deterministic.

+ # However, full training still won't be deterministic, for example due to the

+ # tf.data pipeline not being deterministic even if we would set TF seed.

+ # See (internal link) for a fun read on what it takes.

+ rng = jax.random.PRNGKey(u.put_cpu(config.get("seed", 0)))

+

+ write_note("Inferring parameter shapes...")

+ rng, rng_init = jax.random.split(rng)

+ params_shape = jax.eval_shape(init, rng_init)

+

+ write_note("Inferring optimizer state shapes...")

+ tx, sched_fns = bv_optax.make(config, nn.unbox(params_shape), sched_kw=dict(

+ total_steps=total_steps, batch_size=batch_size, data_size=ntrain_img))

+ opt_shape = jax.eval_shape(tx.init, params_shape)

+ # We jit this, such that the arrays are created on the CPU, not device[0].

+ sched_fns_cpu = [u.jit_cpu()(sched_fn) for sched_fn in sched_fns]

+

+ if jax.process_index() == 0:

+ num_params = sum(np.prod(p.shape) for p in jax.tree.leaves(params_shape))

+ mw.measure("num_params", num_params)

+

+################################################################################

+# #

+# Shard & Transfer #

+# #

+################################################################################

+

+ write_note("Inferring shardings...")

+ train_state_shape = {"params": params_shape, "opt": opt_shape}

+

+ strategy = config.get("sharding_strategy", [(".*", "replicate")])

+ with nn.logical_axis_rules(sharding_rules):

+ train_state_sharding = bv_sharding.infer_sharding(

+ train_state_shape, strategy=strategy, mesh=mesh)

+

+ write_note("Transferring train_state to devices...")

+ # RNG is always replicated

+ rng_init = u.reshard(rng_init, repl_sharding)

+

+ # Parameters and the optimizer are now global (distributed) jax arrays.

+ params = jax.jit(init, out_shardings=train_state_sharding["params"])(rng_init)

+ opt = jax.jit(tx.init, out_shardings=train_state_sharding["opt"])(params)

+

+ rng, rng_loop = jax.random.split(rng, 2)

+ rng_loop = u.reshard(rng_loop, repl_sharding)

+ del rng # not used anymore, so delete it.

+

+ # At this point we have everything we need to form a train state. It contains

+ # all the parameters that are passed and updated by the main training step.

+ # From here on, we have no need for Flax AxisMetadata (such as partitioning).

+ train_state = nn.unbox({"params": params, "opt": opt})

+ del params, opt # Delete to avoid memory leak or accidental reuse.

+

+ write_note("Logging parameter overview...")

+ parameter_overview.log_parameter_overview(

+ train_state["params"], msg="Init params",

+ include_stats="global", jax_logging_process=0)

+

+################################################################################

+# #

+# Update Step #

+# #

+################################################################################

+

+ @functools.partial(

+ jax.jit,

+ donate_argnums=(0,),

+ out_shardings=(train_state_sharding, repl_sharding))

+ def update_fn(train_state, rng, batch):

+ """Update step."""

+

+ images, labels = batch["image"], batch["labels"]

+

+ step_count = bv_optax.get_count(train_state["opt"], jittable=True)

+ rng = jax.random.fold_in(rng, step_count)

+

+ if config.get("mixup") and config.mixup.p:

+ # The shard_map below makes mixup run on every device independently and

+ # thus avoids unnecessary communication.

+ sharded_mixup_fn = shard_map(

+ u.get_mixup(rng, config.mixup.p),

+ mesh=jax.sharding.Mesh(devices_flat, ("data",)),

+ in_specs=P("data"), out_specs=(P(), P("data"), P("data")))

+ rng, (images, labels), _ = sharded_mixup_fn(images, labels)

+

+ # Get device-specific loss rng.

+ rng, rng_model = jax.random.split(rng, 2)

+

+ def loss_fn(params):

+ logits, _ = model.apply(

+ {"params": params}, images,

+ train=True, rngs={"dropout": rng_model})

+ return getattr(u, config.get("loss", "sigmoid_xent"))(

+ logits=logits, labels=labels)

+

+ params, opt = train_state["params"], train_state["opt"]

+ loss, grads = jax.value_and_grad(loss_fn)(params)

+ updates, opt = tx.update(grads, opt, params)

+ params = optax.apply_updates(params, updates)

+

+ measurements = {"training_loss": loss}

+ gs = jax.tree.leaves(bv_optax.replace_frozen(config.schedule, grads, 0.))

+ measurements["l2_grads"] = jnp.sqrt(sum([jnp.sum(g * g) for g in gs]))

+ ps = jax.tree.leaves(params)

+ measurements["l2_params"] = jnp.sqrt(sum([jnp.sum(p * p) for p in ps]))

+ us = jax.tree.leaves(updates)

+ measurements["l2_updates"] = jnp.sqrt(sum([jnp.sum(u * u) for u in us]))

+

+ return {"params": params, "opt": opt}, measurements

+

+################################################################################

+# #

+# Load Checkpoint #

+# #

+################################################################################

+

+ # Decide how to initialize training. The order is important.

+ # 1. Always resume from the existing checkpoint, e.g. resume a finetune job.

+ # 2. Resume from a previous checkpoint, e.g. start a cooldown training job.

+ # 3. Initialize model from something, e.g. start a fine-tuning job.

+ # 4. Train from scratch.

+ resume_ckpt_path = None

+ if save_ckpt_path and gfile.exists(f"{save_ckpt_path}-LAST"):

+ resume_ckpt_path = save_ckpt_path

+ elif config.get("resume"):

+ resume_ckpt_path = fillin(config.resume)

+

+ ckpt_mngr = None

+ if save_ckpt_path or resume_ckpt_path:

+ ckpt_mngr = array_serial.GlobalAsyncCheckpointManager()

+

+ if resume_ckpt_path:

+ write_note(f"Resuming training from checkpoint {resume_ckpt_path}...")

+ jax.tree.map(lambda x: x.delete(), train_state)

+ del train_state

+ shardings = {

+ **train_state_sharding,

+ "chrono": jax.tree.map(lambda _: repl_sharding,

+ u.chrono.save()),

+ }

+ loaded = u.load_checkpoint_ts(

+ resume_ckpt_path, tree=shardings, shardings=shardings)

+ train_state = {key: loaded[key] for key in train_state_sharding.keys()}

+

+ u.chrono.load(jax.device_get(loaded["chrono"]))

+ del loaded

+ elif config.get("model_init"):

+ write_note(f"Initialize model from {config.model_init}...")

+ # TODO: when the `load` API is updated, pass in and request the full
L3442_PLACEHOLDER

+ train_state["params"] = model_mod.load(

+ train_state["params"], config.model_init, config.get("model"),

+ **config.get("model_load", {}))

+

+ # load is free to return params that are not correctly sharded, e.g. ViT

+ # resampling position embeddings on CPU as numpy arrays.

+ train_state["params"] = u.reshard(

+ train_state["params"], train_state_sharding["params"])

+

+ parameter_overview.log_parameter_overview(

+ train_state["params"], msg="restored params",

+ include_stats="global", jax_logging_process=0)

+

+

+################################################################################

+# #

+# Setup Evals #

+# #

+################################################################################

+

+ # We do not jit/pmap this function, because it is passed to evaluator that

+ # does it later. We output as many intermediate tensors as possible for

+ # maximal flexibility. Later `jit` will prune out things that are not needed.

+ def eval_logits_fn(train_state, batch):

+ logits, out = model.apply({"params": train_state["params"]}, batch["image"])

+ return logits, out

+

+ def eval_loss_fn(train_state, batch):

+ logits, _ = model.apply({"params": train_state["params"]}, batch["image"])

+ loss_fn = getattr(u, config.get("loss", "sigmoid_xent"))

+ return {

+ "loss": loss_fn(logits=logits, labels=batch["labels"], reduction=False)

+ }

+

+ eval_fns = {

+ "predict": eval_logits_fn,

+ "loss": eval_loss_fn,

+ }

+

+ # Only initialize evaluators when they are first needed.

+ @functools.lru_cache(maxsize=None)

+ def evaluators():

+ return eval_common.from_config(

+ config, eval_fns,

+ lambda s: write_note(f"Init evaluator: {s}…\n{u.chrono.note}"),

+ lambda key, cfg: get_steps(key, default=None, cfg=cfg),

+ devices_flat,

+ )

+

+ # At this point we need to know the current step to see whether to run evals.

+ write_note("Inferring the first step number...")

+ first_step_device = bv_optax.get_count(train_state["opt"], jittable=True)

+ first_step = int(jax.device_get(first_step_device))

+ u.chrono.inform(first_step=first_step)

+

+ # Note that training can be pre-empted during the final evaluation (i.e.

+ # just after the final checkpoint has been written to disk), in which case we

+ # still want to run the evals after restarting.

+ if first_step in (total_steps, 0):

+ write_note("Running initial or final evals...")

+ mw.step_start(first_step)

+ for (name, evaluator, _, prefix) in evaluators():

+ if config.evals[name].get("skip_first") and first_step != total_steps:

+ continue

+ write_note(f"{name} evaluation...\n{u.chrono.note}")

+ with u.chrono.log_timing(f"z/secs/eval/{name}"):

+ with mesh, nn.logical_axis_rules(sharding_rules):

+ for key, value in evaluator.run(train_state):

+ mw.measure(f"{prefix}{key}", value)

+

+################################################################################

+# #

+# Train Loop #

+# #

+################################################################################

+

+ prof = None # Keeps track of start/stop of profiler state.

+

+ write_note("Starting training loop, compiling the first step...")

+ for step, batch in zip(range(first_step + 1, total_steps + 1), train_iter):

+ mw.step_start(step)

+

+ with jax.profiler.StepTraceAnnotation("train_step", step_num=step):

+ with u.chrono.log_timing("z/secs/update0", noop=step > first_step + 1):

+ with mesh, nn.logical_axis_rules(sharding_rules):

+ train_state, measurements = update_fn(train_state, rng_loop, batch)

+

+ # On the first host, let's always profile a handful of early steps.

+ if jax.process_index() == 0:

+ prof = u.startstop_prof(prof, step, first_step, get_steps("log_training"))

+

+ # Report training progress

+ if (u.itstime(step, get_steps("log_training"), total_steps, host=0)

+ or u.chrono.warmup and jax.process_index() == 0):

+ for i, sched_fn_cpu in enumerate(sched_fns_cpu):

+ mw.measure(f"global_schedule{i if i else ''}",

+ sched_fn_cpu(u.put_cpu(step - 1)))

+ measurements = jax.device_get(measurements)

+ for name, value in measurements.items():

+ mw.measure(name, value)

+ u.chrono.tick(step)

+ for k in ("training_loss", "l2_grads", "l2_updates", "l2_params"):

+ if not np.isfinite(measurements.get(k, 0.0)):

+ raise RuntimeError(f"{k} became nan or inf somewhere within steps "

+ f"[{step - get_steps('log_training')}, {step}]")

+

+ # Checkpoint saving

+ keep_ckpt_steps = get_steps("keep_ckpt", None) or total_steps

+ if save_ckpt_path and (

+ (keep := u.itstime(step, keep_ckpt_steps, total_steps, first=False))

+ or u.itstime(step, get_steps("ckpt", None), total_steps, first=True)

+ ):

+ u.chrono.pause(wait_for=train_state)

+

+ # Copy because we add extra stuff to the checkpoint.

+ ckpt = {**train_state}

+

+ # To save chrono state correctly and safely in a multihost setup, we

+ # broadcast the state to all hosts and convert it to a global array.

+ with jax.transfer_guard("allow"):

+ chrono_ckpt = multihost_utils.broadcast_one_to_all(u.chrono.save())

+ chrono_shardings = jax.tree.map(lambda _: repl_sharding, chrono_ckpt)

+ ckpt = ckpt | {"chrono": u.reshard(chrono_ckpt, chrono_shardings)}

+

+ u.save_checkpoint_ts(ckpt_mngr, ckpt, save_ckpt_path, step, keep)

+ u.chrono.resume()

+

+ for (name, evaluator, log_steps, prefix) in evaluators():