---
tags:
- gguf-connector
widget:
- text: a cat in a hat
  output:
    url: https://raw.githubusercontent.com/calcuis/gguf-pack/master/w8g.png
- text: a raccoon in a hat
  output:
    url: https://raw.githubusercontent.com/calcuis/gguf-pack/master/w8f.png
- text: a raccoon in a hat
  output:
    url: https://raw.githubusercontent.com/calcuis/gguf-pack/master/w6a.png
- text: a dog walking in a cyber city with joy
  output:
    url: https://raw.githubusercontent.com/calcuis/gguf-pack/master/w6b.png
- text: a dog walking in a cyber city with joy
  output:
    url: https://raw.githubusercontent.com/calcuis/gguf-pack/master/w6c.png
- text: a dog walking in a cyber city with joy
  output:
    url: https://raw.githubusercontent.com/calcuis/gguf-pack/master/w8e.png
---

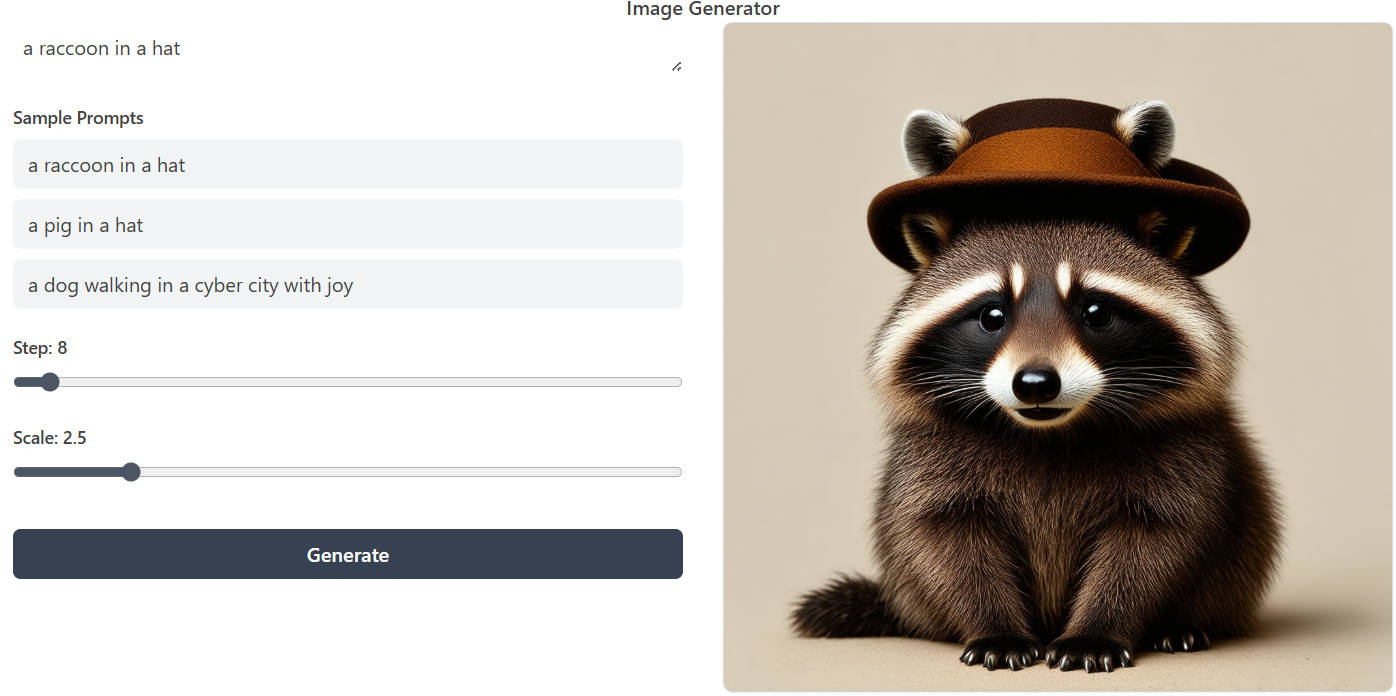

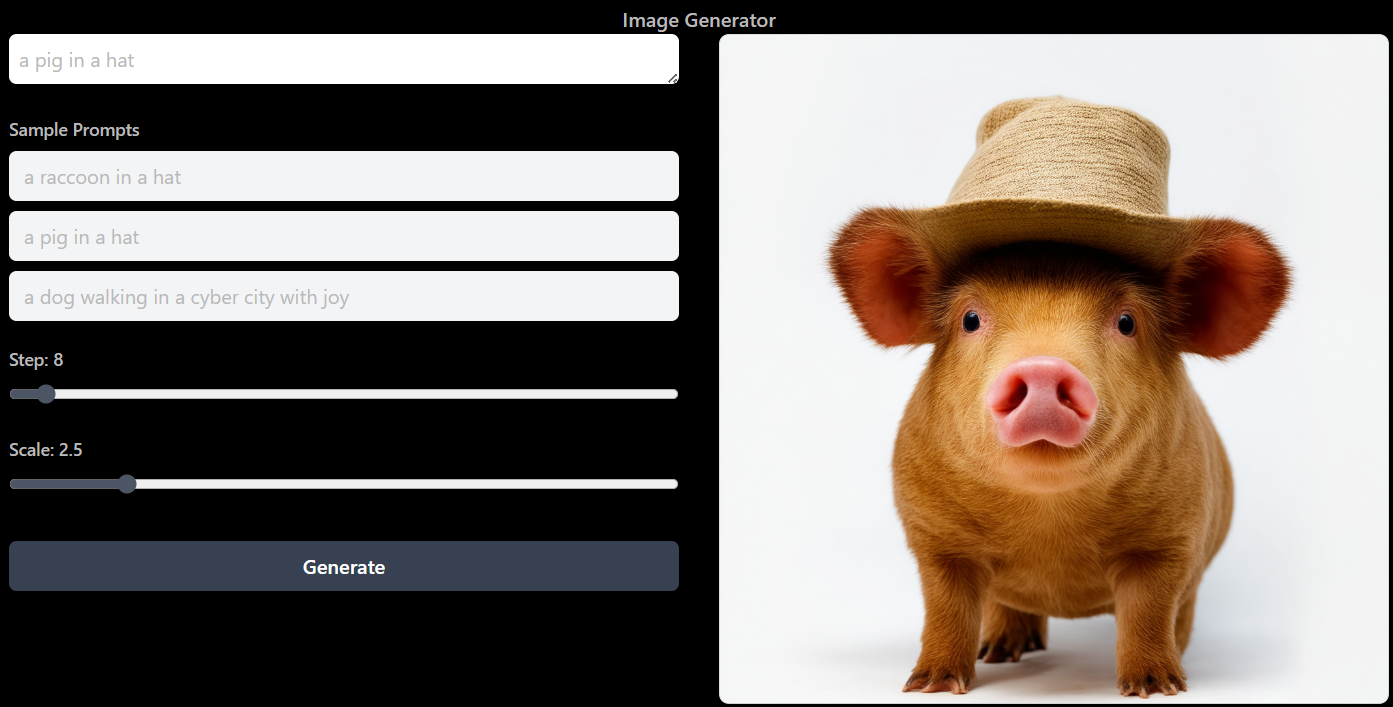

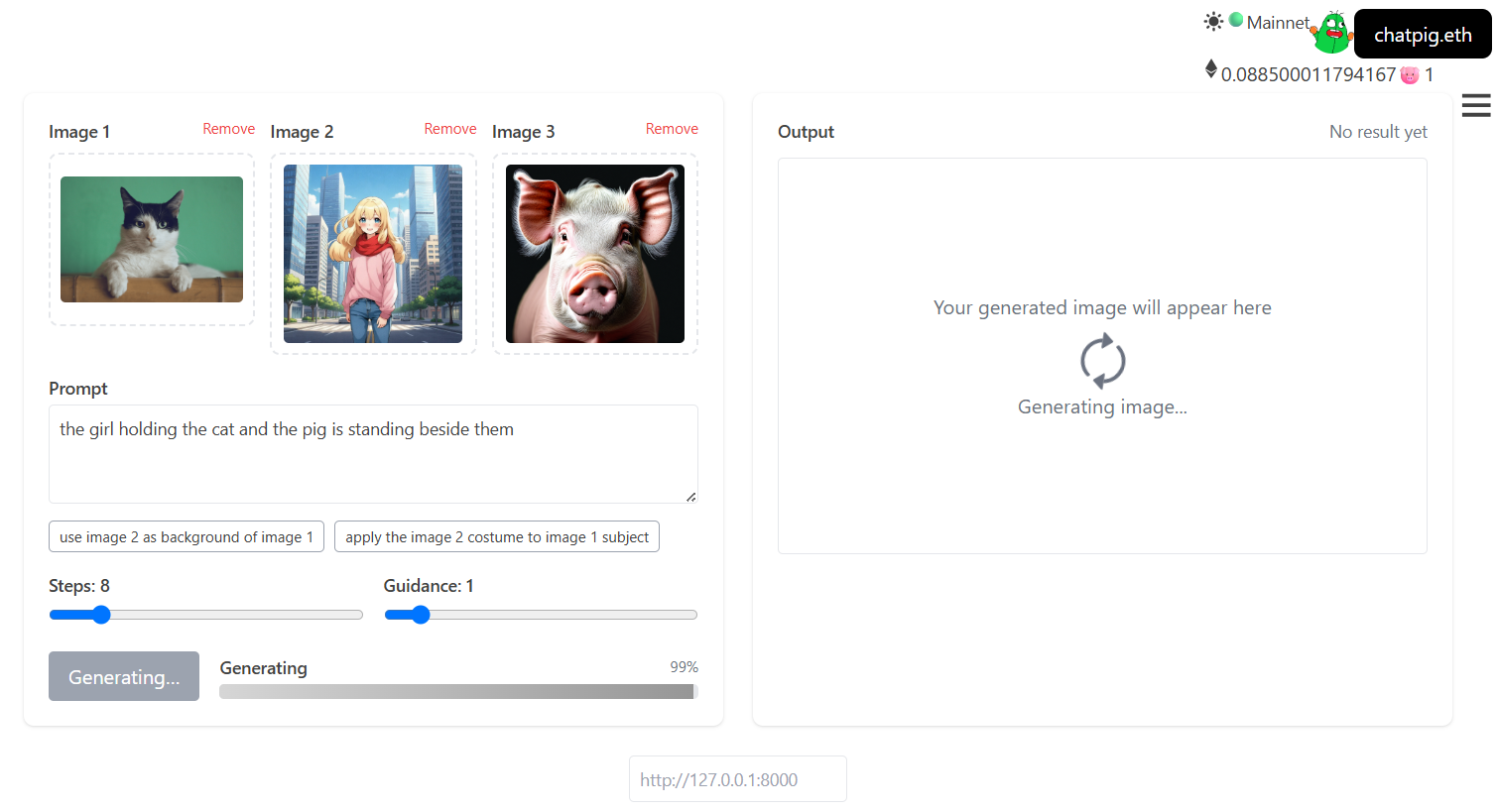

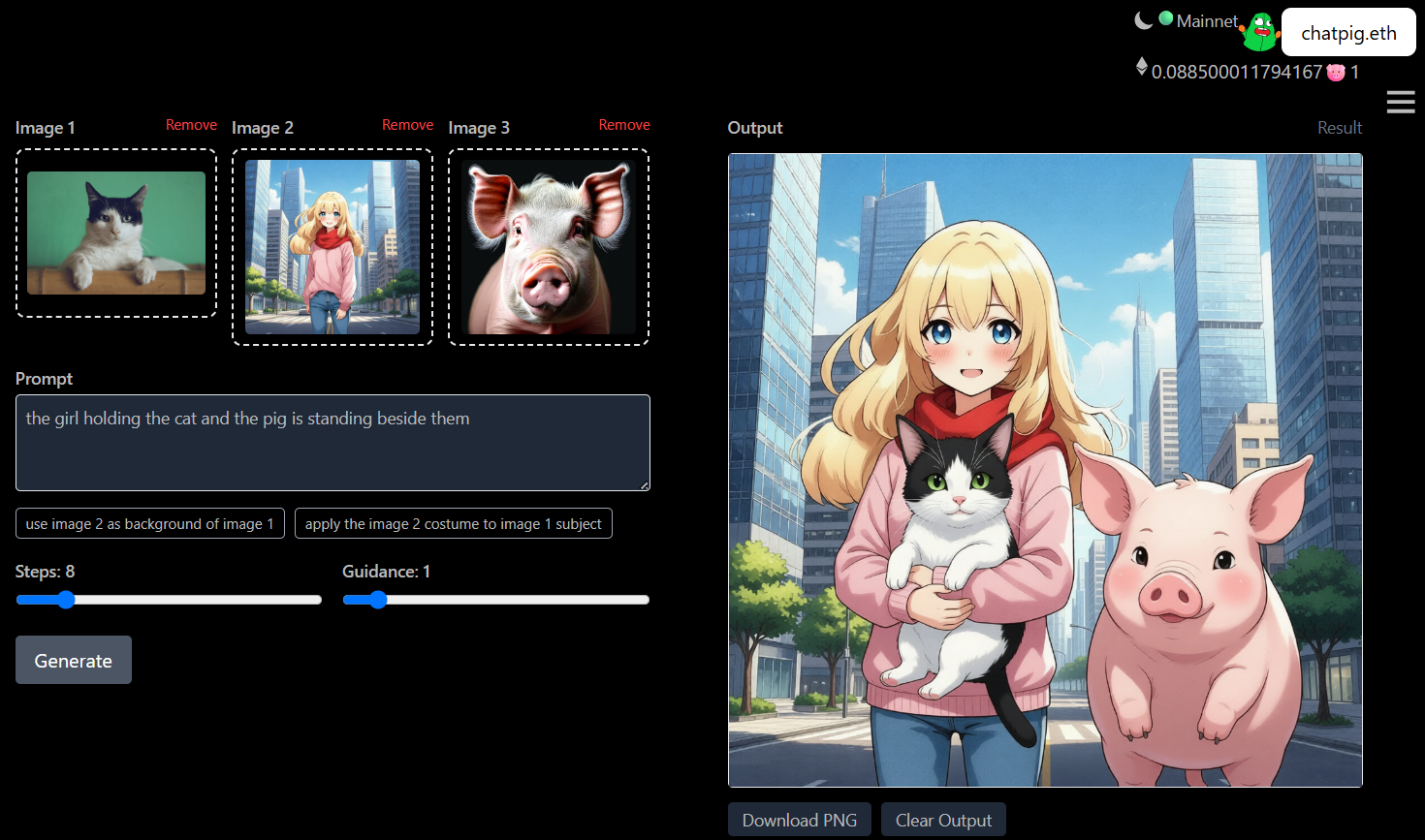

## self-hosted api

- run it with `gguf-connector`; activate the backend in a console/terminal with

```
ggc w8
```

- choose your model* file

>

>GGUF available. Select which one to use:

>

>1. sd3.5-2b-lite-iq4_nl.gguf [[1.74GB](https://huggingface.co/calcuis/sd3.5-lite-gguf/blob/main/sd3.5-2b-lite-iq4_nl.gguf)]

>2. sd3.5-2b-lite-mxfp4_moe.gguf [[2.86GB](https://huggingface.co/calcuis/sd3.5-lite-gguf/blob/main/sd3.5-2b-lite-mxfp4_moe.gguf)]

>

>Enter your choice (1 to 2): _

>

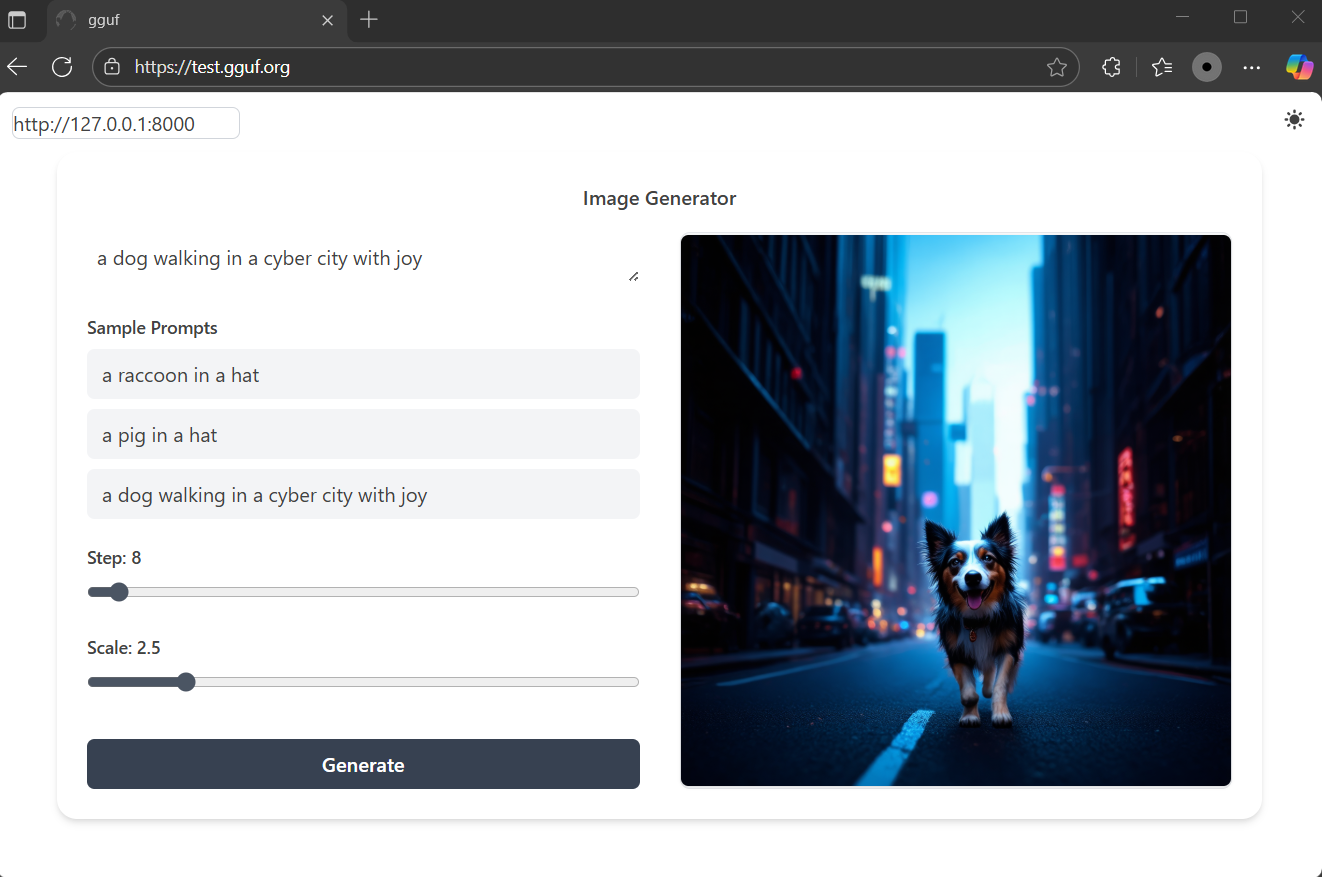

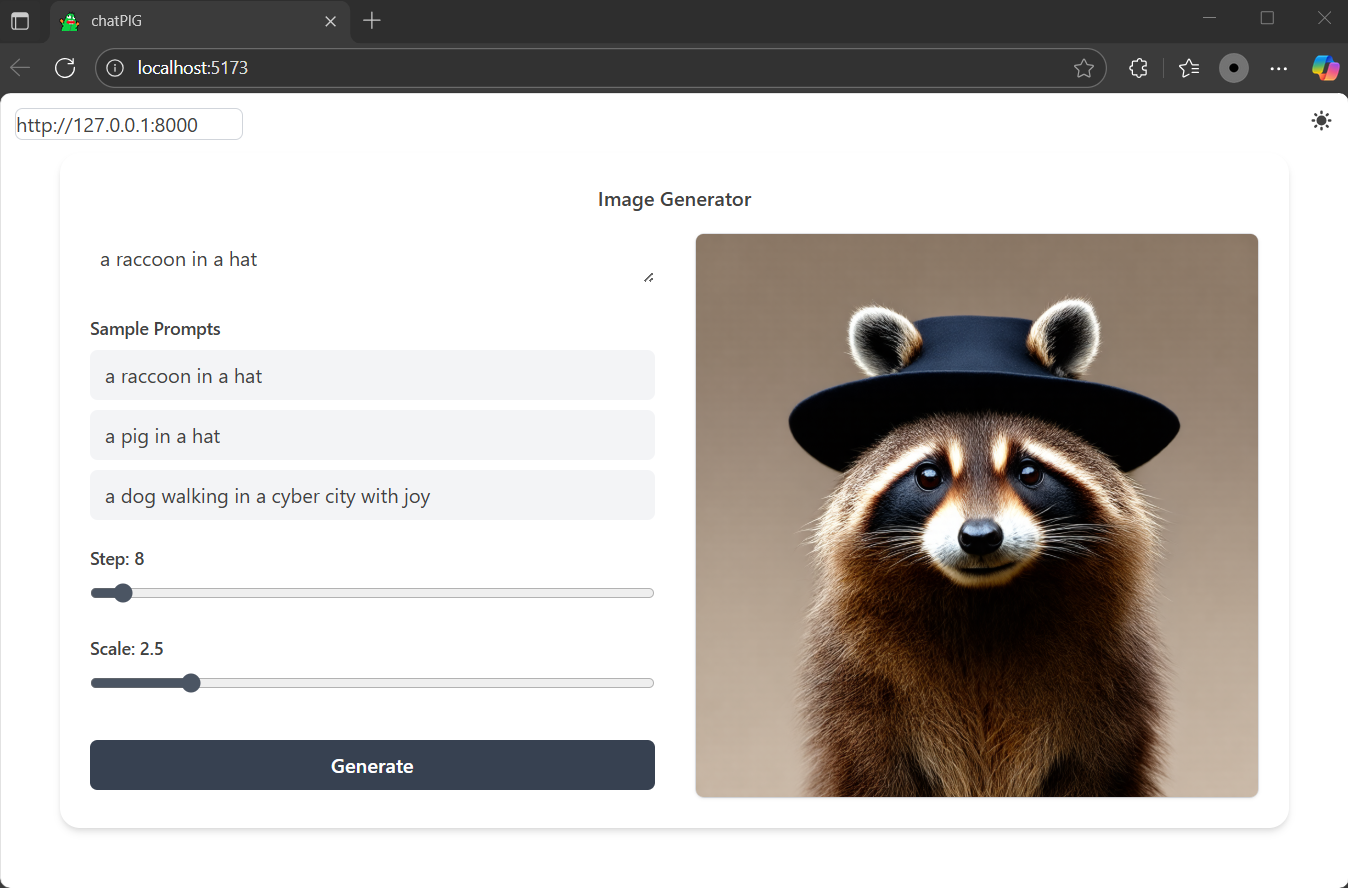

*currently accepts sd3.5 2b model gguf files; this gives the fastest experience, even on a low-tier gpu; frontend: https://test.gguf.org or localhost (see the **decentralized frontend** section below)

- or opt for the fastapi **lumina** connector

```
ggc w7
```

- choose your model* file

>

>GGUF available. Select which one to use:

>

>1. lumina2-q4_0.gguf [[1.47GB](https://huggingface.co/calcuis/lumina-gguf/blob/main/lumina2-q4_0.gguf)]

>2. lumina2-q8_0.gguf [[2.77GB](https://huggingface.co/calcuis/lumina-gguf/blob/main/lumina2-q8_0.gguf)]

>

>Enter your choice (1 to 2): _

>

*as lumina has no lite version yet, you might need to increase the step count to around 25 for better output

- or opt for the fastapi **flux** connector

```
ggc w6
```

- choose your model* file

>

>GGUF available. Select which one to use:

>

>1. flux-dev-lite-q2_k.gguf [[4.08GB](https://huggingface.co/calcuis/krea-gguf/blob/main/flux-dev-lite-q2_k.gguf)]

>2. flux-krea-lite-q2_k.gguf [[4.08GB](https://huggingface.co/calcuis/krea-gguf/blob/main/flux-krea-lite-q2_k.gguf)]

>

>Enter your choice (1 to 2): _

>

*accepts any flux model gguf; lite is recommended to reduce loading time

- flexible frontend choice (see below)
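
the model files linked in the menus above can be fetched ahead of time. The following is a minimal sketch, not part of `gguf-connector`: `blob_to_resolve` and `fetch_model` are hypothetical helper names, and it assumes the connector lists model files found in the directory it is launched from. It relies only on the Hugging Face convention that a `/blob/` page URL becomes a direct download when rewritten to `/resolve/`.

```shell
#!/bin/sh
# Hypothetical helpers; not provided by gguf-connector.

# Turn a Hugging Face "blob" page URL into its direct-download "resolve" URL.
blob_to_resolve() {
    printf '%s\n' "$1" | sed 's#/blob/#/resolve/#'
}

# Download a model file into the current directory, keeping the remote file name.
# Assumption: the backend is later started from this same directory.
fetch_model() {
    curl -L --fail -O "$(blob_to_resolve "$1")"
}

# Example (uncomment to download the 1.74GB file listed above):
# fetch_model "https://huggingface.co/calcuis/sd3.5-lite-gguf/blob/main/sd3.5-2b-lite-iq4_nl.gguf"
```

once the file is in place, start the backend from the same directory with the matching command (`ggc w8` for the sd3.5 file in the example).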

## decentralized frontend

- option 1: navigate to https://test.gguf.org

- option 2: localhost; keep the backend running, open a new terminal session, then execute

```
ggc b
```

<Gallery />

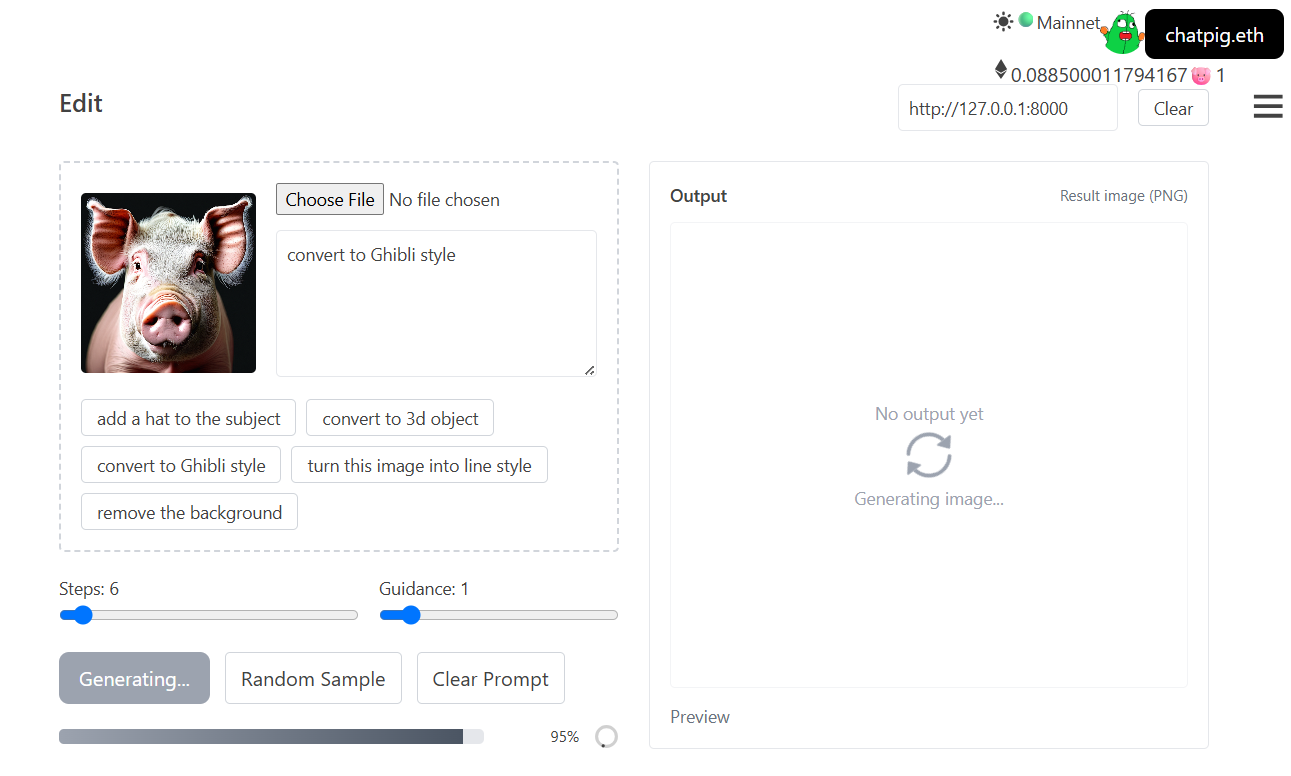

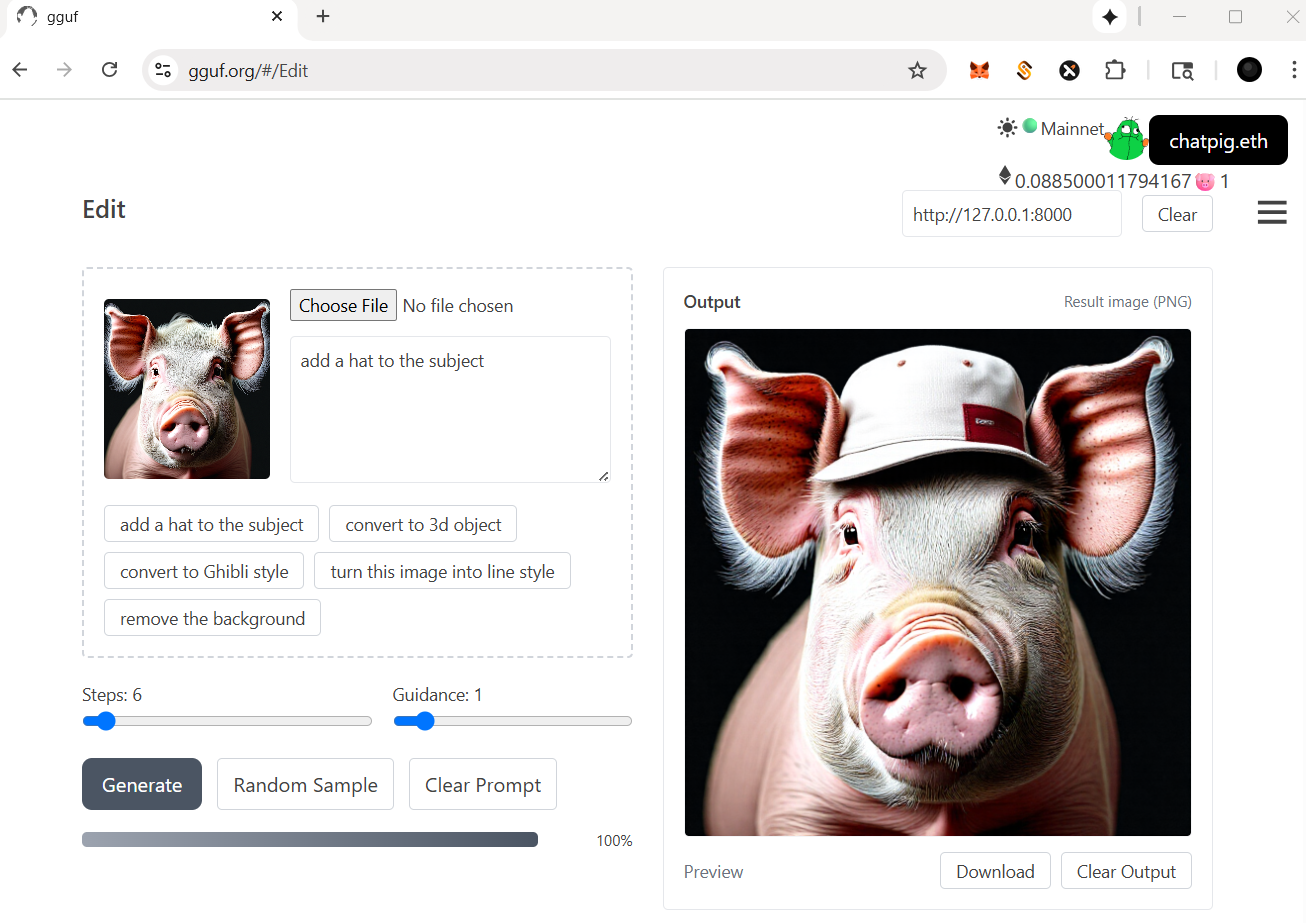

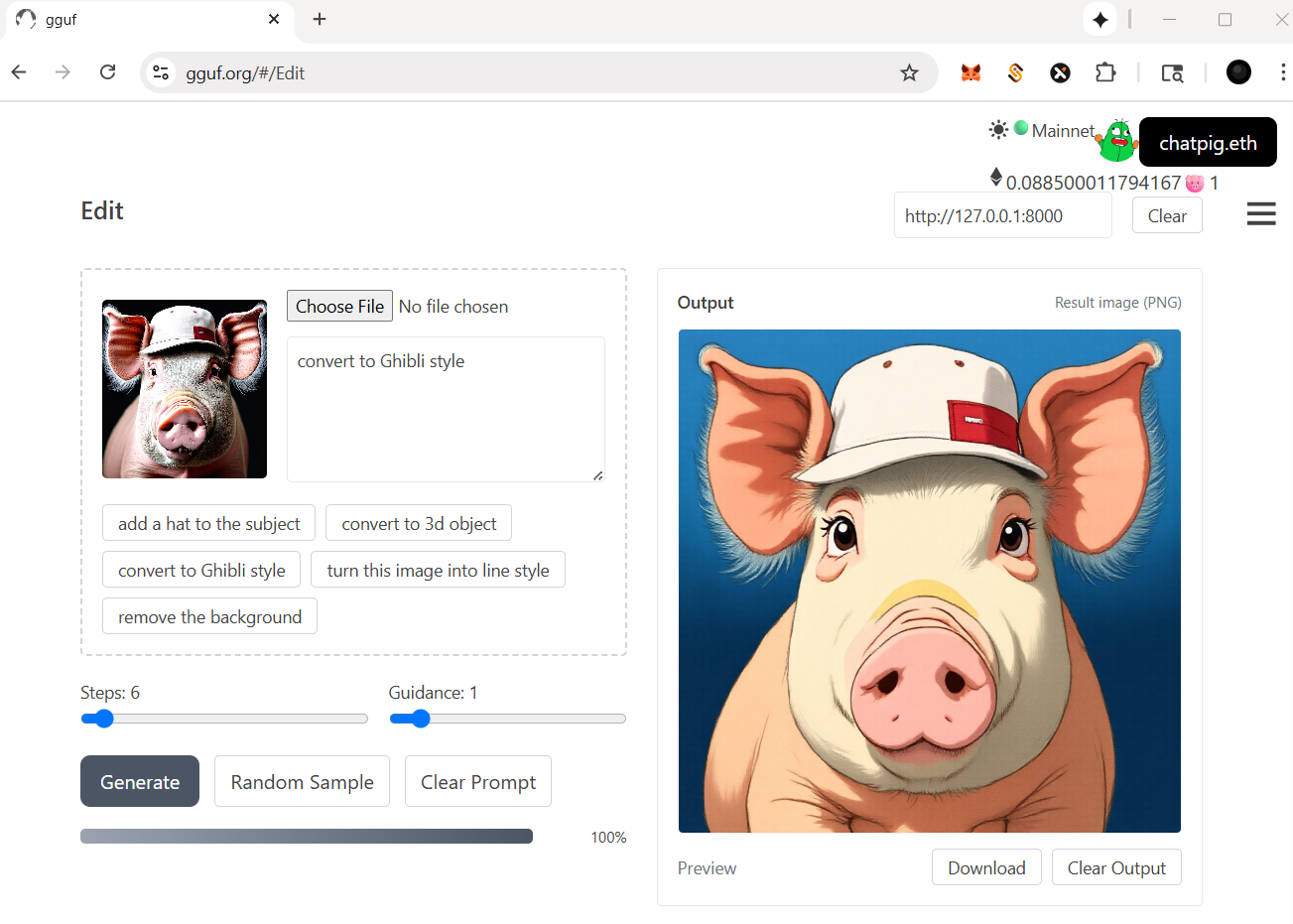

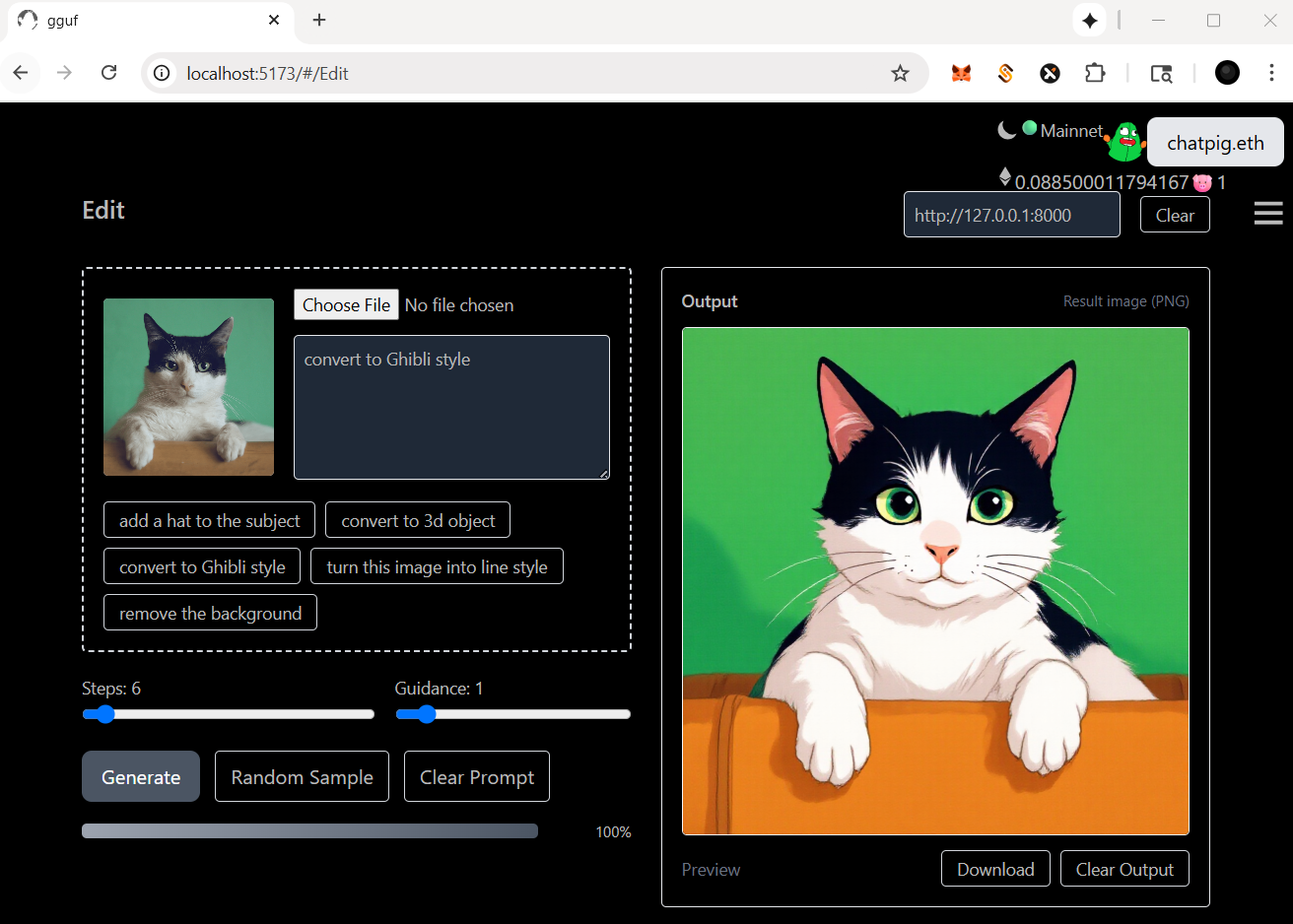

## self-hosted api (edit)

- run it with `gguf-connector`; activate the backend in a console/terminal with

```
ggc e8
```

- choose your model file

>

>GGUF available. Select which one to use:

>

>1. flux-kontext-lite-q2_k.gguf [[4.08GB](https://huggingface.co/calcuis/kontext-gguf/blob/main/flux-kontext-lite-q2_k.gguf)]

>

>Enter your choice (1 to 1): _

>

## decentralized frontend - choose `Edit` from the pulldown menu (stage 1: currently a trial exclusive to 🐷 holders)

- option 1: navigate to https://gguf.org

- option 2: localhost; keep the backend running, open a new terminal session, then execute

```
ggc a
```

## self-hosted api (plus)

- run it with `gguf-connector`; activate the backend in a console/terminal with

```
ggc e9
```

- choose your model file

>

>Safetensors available. Select which one to use:

>

>1. sketch-s9-20b-fp4.safetensors (for blackwell card [11.9GB](https://huggingface.co/calcuis/sketch/blob/main/sketch-s9-20b-fp4.safetensors))

>2. sketch-s9-20b-int4.safetensors (for non-blackwell card [11.5GB](https://huggingface.co/calcuis/sketch/blob/main/sketch-s9-20b-int4.safetensors))

>

>Enter your choice (1 to 2): _

>
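
if you are unsure which of the two files fits your card, the sketch below suggests one from the GPU's CUDA compute capability. It is an illustration only: `pick_sketch_variant` is a hypothetical helper, the threshold (major version 10 or higher counts as blackwell) is an assumption, and the `compute_cap` query field is only available in reasonably recent `nvidia-smi` builds. When no capability can be read, it falls back to the int4 file.

```shell
#!/bin/sh
# Hypothetical helper; not provided by gguf-connector.
# $1: CUDA compute capability such as "8.9" or "12.0".
pick_sketch_variant() {
    major=${1%%.*}   # keep only the part before the first dot
    # Assumption: blackwell cards report a major version of 10 or higher.
    if [ "$major" -ge 10 ] 2>/dev/null; then
        echo "sketch-s9-20b-fp4.safetensors"
    else
        echo "sketch-s9-20b-int4.safetensors"
    fi
}

# Query the first GPU; the result is empty if nvidia-smi is missing or too old.
cap=$(nvidia-smi --query-gpu=compute_cap --format=csv,noheader 2>/dev/null | head -n 1)
echo "suggested file: $(pick_sketch_variant "$cap")"
```

the suggestion is only a hint for the menu above; the menu itself still lets you choose either file.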

## decentralized frontend - choose `Plus` from the pulldown menu (stage 1: currently a trial exclusive to 🐷 holders)

- option 1: navigate to https://gguf.org

- option 2: localhost; keep the backend running, open a new terminal session, then execute

```
ggc a
```
