Create README.md


README.md

ADDED

|

@@ -0,0 +1,75 @@


| 1 |

+

---

|

| 2 |

+

base_model:

|

| 3 |

+

- Tongyi-MAI/Z-Image-Turbo

|

| 4 |

+

pipeline_tag: text-to-image

|

| 5 |

+

library_name: diffusers

|

| 6 |

+

license: apache-2.0

|

| 7 |

+

---

|

| 8 |

+

|

| 9 |

+

<h1 align="center">TwinFlow: Realizing One-step Generation on Large Models with Self-adversarial Flows</h1>

|

| 10 |

+

|

| 11 |

+

<div align="center">

|

| 12 |

+

|

| 13 |

+

[](https://zhenglin-cheng.com/twinflow)

|

| 14 |

+

[](https://huggingface.co/inclusionAI/TwinFlow)

|

| 15 |

+

[](https://huggingface.co/inclusionAI/TwinFlow-Z-Image-Turbo)

|

| 16 |

+

[](https://github.com/inclusionAI/TwinFlow)

|

| 17 |

+

<a href="https://arxiv.org/abs/2512.05150" target="_blank"><img src="https://img.shields.io/badge/Paper-b5212f.svg?logo=arxiv" height="21px"></a>

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

</div>

|

| 21 |

+

|

| 22 |

+

## News

|

| 23 |

+

|

| 24 |

+

- We release an experimental version of a faster Z-Image-Turbo!

|

| 25 |

+

- We release **TwinFlow-Qwen-Image-v1.0**! We are also working on **making Z-Image-Turbo even faster**!

|

| 26 |

+

|

| 27 |

+

## TwinFlow

|

| 28 |

+

|

| 29 |

+

Check out the 2-NFE visualization of TwinFlow-Z-Image-Turbo-exp 👇

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

|

| 34 |

+

### Overview

|

| 35 |

+

|

| 36 |

+

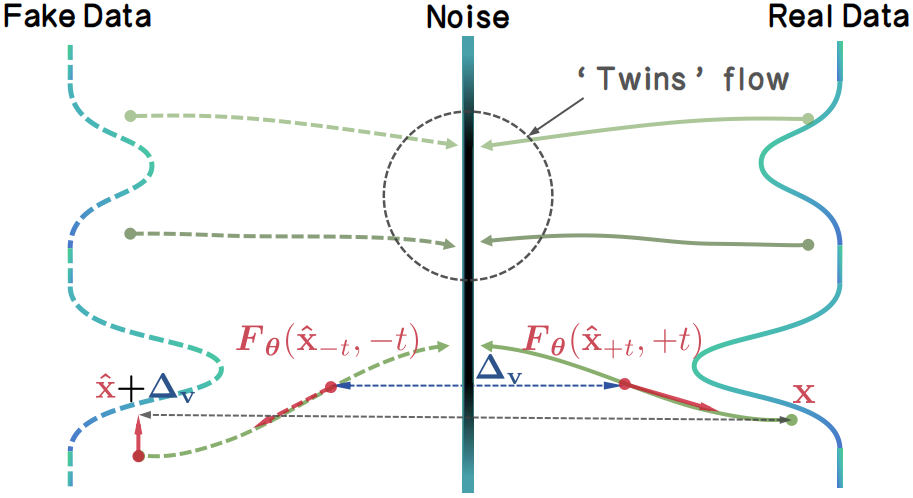

We introduce TwinFlow, a framework that realizes high-quality 1-step and few-step generation without the pipeline bloat.

|

| 37 |

+

|

| 38 |

+

Instead of relying on external discriminators or frozen teachers, TwinFlow creates an internal "twin trajectory". By extending the time interval to $t\in[-1,1]$, we utilize the negative time branch to map noise to "fake" data, creating a self-adversarial signal directly within the model.

|

| 39 |

+

|

| 40 |

+

The model can then rectify itself by minimizing the difference between the velocity fields of the real trajectory and the fake trajectory, denoted $\Delta_\mathrm{v}$. This rectification recasts distribution matching as velocity matching, which gradually transforms the model into a 1-step/few-step generator.
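
As a rough illustration of comparing velocities on the two time branches, here is a toy sketch in plain Python. Everything in it is an assumption made for illustration: `toy_velocity`, `delta_v`, and `velocity_matching_loss` are hypothetical names, the linear "velocity field" is arbitrary, and the squared-difference objective is only a stand-in. It is not the TwinFlow training loss or its implementation.

```python
# Toy illustration only: hypothetical stand-ins, not the TwinFlow implementation.

def toy_velocity(x, t):
    """Hypothetical velocity field defined on the extended interval t in [-1, 1]."""
    if not -1.0 <= t <= 1.0:
        raise ValueError("t must lie in [-1, 1]")
    # Positive and negative times use different (arbitrary) scales, so the two
    # branches disagree until they are matched.
    scale = 1.0 if t >= 0 else 0.5
    return [scale * xi for xi in x]


def delta_v(x, t):
    """Velocity at +|t| minus velocity at -|t|, evaluated at the same point x."""
    v_pos = toy_velocity(x, abs(t))
    v_neg = toy_velocity(x, -abs(t))
    return [a - b for a, b in zip(v_pos, v_neg)]


def velocity_matching_loss(x, t):
    """Mean squared entry of delta_v; zero when both branches agree."""
    d = delta_v(x, t)
    return sum(di * di for di in d) / len(d)


x = [2.0, 4.0]
print(delta_v(x, 0.5))                 # per-coordinate velocity gap
print(velocity_matching_loss(x, 0.5))  # scalar that a trainer would drive toward zero
```

In this toy, a single function serves both branches and is selected only by the sign of `t`, which mirrors the one-model idea described above; how the real model parameterizes and trains the two branches is not shown here.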

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

Key Advantages:

|

| 45 |

+

- **One-model Simplicity.** We eliminate the need for any auxiliary networks. The model learns to rectify its own flow field, acting as both the generator and the fake/real score model. No extra GPU memory is wasted on frozen teachers or discriminators during training.

|

| 46 |

+

- **Scalability on Large Models.** TwinFlow is **easy to scale to 20B full-parameter training** thanks to its one-model simplicity. In contrast, methods like VSD, SiD, and DMD/DMD2 require maintaining three separate models for distillation, which not only significantly increases memory consumption, often leading to out-of-memory (OOM) errors, but also introduces substantial complexity when scaling to large training regimes.
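
To make the memory argument concrete, the back-of-the-envelope sketch below compares the weight storage for one 20B-parameter network against three. It assumes 2 bytes per parameter (for example bf16), which is an assumption rather than something stated here, and it counts weights only: gradients, optimizer state, and activations would add to both totals. The helper name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_params, bytes_per_param=2, num_models=1):
    """Memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param * num_models / 1e9


PARAMS_20B = 20_000_000_000  # 20B parameters, as in the full-parameter setting above

one_model = weight_memory_gb(PARAMS_20B)                    # single network
three_models = weight_memory_gb(PARAMS_20B, num_models=3)   # three separate networks

print(f"one model:    {one_model:.0f} GB of weights")
print(f"three models: {three_models:.0f} GB of weights")
```

Under these assumptions the weights alone come to 40 GB for one network and 120 GB for three, before any training state is counted.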

|

| 47 |

+

|

| 48 |

+

### Inference Demo

|

| 49 |

+

|

| 50 |

+

Install the latest diffusers:

|

| 51 |

+

|

| 52 |

+

```bash

|

| 53 |

+

pip install git+https://github.com/huggingface/diffusers

|

| 54 |

+

```

|

| 55 |

+

|

| 56 |

+

Run inference demo `inference.py`:

|

| 57 |

+

|

| 58 |

+

```bash

|

| 59 |

+

python inference.py

|

| 60 |

+

```

|

| 61 |

+

|

| 62 |

+

## Citation

|

| 63 |

+

|

| 64 |

+

```bibtex

|

| 65 |

+

@article{cheng2025twinflow,

|

| 66 |

+

title={TwinFlow: Realizing One-step Generation on Large Models with Self-adversarial Flows},

|

| 67 |

+

author={Cheng, Zhenglin and Sun, Peng and Li, Jianguo and Lin, Tao},

|

| 68 |

+

journal={arXiv preprint arXiv:2512.05150},

|

| 69 |

+

year={2025}

|

| 70 |

+

}

|

| 71 |

+

```

|

| 72 |

+

|

| 73 |

+

## Acknowledgement

|

| 74 |

+

|

| 75 |

+

TwinFlow is built upon [RCGM](https://github.com/LINs-lab/RCGM) and [UCGM](https://github.com/LINs-lab/UCGM), with much support from [InclusionAI](https://github.com/inclusionAI).

|