Commit ·

68877a9

Parent(s):

Duplicate from unsloth/Qwen3-Coder-Next-GGUF

Co-authored-by: Daniel (Unsloth) <danielhanchen@users.noreply.huggingface.co>

- .gitattributes +98 -0

- BF16/Qwen3-Coder-Next-BF16-00001-of-00004.gguf +3 -0

- BF16/Qwen3-Coder-Next-BF16-00002-of-00004.gguf +3 -0

- BF16/Qwen3-Coder-Next-BF16-00003-of-00004.gguf +3 -0

- BF16/Qwen3-Coder-Next-BF16-00004-of-00004.gguf +3 -0

- Q4_1/Qwen3-Coder-Next-Q4_1-00001-of-00003.gguf +3 -0

- Q4_1/Qwen3-Coder-Next-Q4_1-00002-of-00003.gguf +3 -0

- Q4_1/Qwen3-Coder-Next-Q4_1-00003-of-00003.gguf +3 -0

- Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00001-of-00003.gguf +3 -0

- Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00002-of-00003.gguf +3 -0

- Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00003-of-00003.gguf +3 -0

- Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00001-of-00003.gguf +3 -0

- Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00002-of-00003.gguf +3 -0

- Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00003-of-00003.gguf +3 -0

- Q6_K/Qwen3-Coder-Next-Q6_K-00001-of-00003.gguf +3 -0

- Q6_K/Qwen3-Coder-Next-Q6_K-00002-of-00003.gguf +3 -0

- Q6_K/Qwen3-Coder-Next-Q6_K-00003-of-00003.gguf +3 -0

- Q8_0/Qwen3-Coder-Next-Q8_0-00001-of-00003.gguf +3 -0

- Q8_0/Qwen3-Coder-Next-Q8_0-00002-of-00003.gguf +3 -0

- Q8_0/Qwen3-Coder-Next-Q8_0-00003-of-00003.gguf +3 -0

- Qwen3-Coder-Next-IQ4_NL.gguf +3 -0

- Qwen3-Coder-Next-IQ4_XS.gguf +3 -0

- Qwen3-Coder-Next-MXFP4_MOE.gguf +3 -0

- Qwen3-Coder-Next-Q2_K.gguf +3 -0

- Qwen3-Coder-Next-Q2_K_L.gguf +3 -0

- Qwen3-Coder-Next-Q3_K_M.gguf +3 -0

- Qwen3-Coder-Next-Q3_K_S.gguf +3 -0

- Qwen3-Coder-Next-Q4_0.gguf +3 -0

- Qwen3-Coder-Next-Q4_K_M.gguf +3 -0

- Qwen3-Coder-Next-Q4_K_S.gguf +3 -0

- Qwen3-Coder-Next-UD-IQ1_M.gguf +3 -0

- Qwen3-Coder-Next-UD-IQ1_S.gguf +3 -0

- Qwen3-Coder-Next-UD-IQ2_XXS.gguf +3 -0

- Qwen3-Coder-Next-UD-IQ3_XXS.gguf +3 -0

- Qwen3-Coder-Next-UD-Q2_K_XL.gguf +3 -0

- Qwen3-Coder-Next-UD-Q3_K_XL.gguf +3 -0

- Qwen3-Coder-Next-UD-Q4_K_XL.gguf +3 -0

- Qwen3-Coder-Next-UD-TQ1_0.gguf +3 -0

- README.md +246 -0

- UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00001-of-00003.gguf +3 -0

- UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00002-of-00003.gguf +3 -0

- UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00003-of-00003.gguf +3 -0

- UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00001-of-00003.gguf +3 -0

- UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00002-of-00003.gguf +3 -0

- UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00003-of-00003.gguf +3 -0

- UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00001-of-00003.gguf +3 -0

- UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00002-of-00003.gguf +3 -0

- UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00003-of-00003.gguf +3 -0

- imatrix_unsloth.gguf_file +3 -0

.gitattributes

ADDED

|

@@ -0,0 +1,98 @@


| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

BF16/Qwen3-Coder-Next-BF16-00004-of-00004.gguf filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

BF16/Qwen3-Coder-Next-BF16-00003-of-00004.gguf filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

BF16/Qwen3-Coder-Next-BF16-00001-of-00004.gguf filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

BF16/Qwen3-Coder-Next-BF16-00002-of-00004.gguf filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

Qwen3-Coder-Next-UD-TQ1_0.gguf filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

Qwen3-Coder-Next-UD-IQ1_S.gguf filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

Qwen3-Coder-Next-UD-Q3_K_XL.gguf filter=lfs diff=lfs merge=lfs -text

|

| 43 |

+

Qwen3-Coder-Next-UD-Q2_K_XL.gguf filter=lfs diff=lfs merge=lfs -text

|

| 44 |

+

Qwen3-Coder-Next-UD-Q4_K_XL.gguf filter=lfs diff=lfs merge=lfs -text

|

| 45 |

+

Qwen3-Coder-Next-UD-IQ1_M.gguf filter=lfs diff=lfs merge=lfs -text

|

| 46 |

+

Qwen3-Coder-Next-Q4_K_M.gguf filter=lfs diff=lfs merge=lfs -text

|

| 47 |

+

Qwen3-Coder-Next-UD-IQ3_XXS.gguf filter=lfs diff=lfs merge=lfs -text

|

| 48 |

+

UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00001-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 49 |

+

UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00002-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 50 |

+

Qwen3-Coder-Next-Q2_K.gguf filter=lfs diff=lfs merge=lfs -text

|

| 51 |

+

Qwen3-Coder-Next-UD-IQ2_XXS.gguf filter=lfs diff=lfs merge=lfs -text

|

| 52 |

+

Qwen3-Coder-Next-Q3_K_M.gguf filter=lfs diff=lfs merge=lfs -text

|

| 53 |

+

Q6_K/Qwen3-Coder-Next-Q6_K-00001-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 54 |

+

Q6_K/Qwen3-Coder-Next-Q6_K-00002-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 55 |

+

Qwen3-Coder-Next-Q2_K_L.gguf filter=lfs diff=lfs merge=lfs -text

|

| 56 |

+

Qwen3-Coder-Next-IQ4_XS.gguf filter=lfs diff=lfs merge=lfs -text

|

| 57 |

+

Qwen3-Coder-Next-IQ4_NL.gguf filter=lfs diff=lfs merge=lfs -text

|

| 58 |

+

Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00001-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 59 |

+

Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00002-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 60 |

+

Qwen3-Coder-Next-Q3_K_S.gguf filter=lfs diff=lfs merge=lfs -text

|

| 61 |

+

Qwen3-Coder-Next-Q4_0.gguf filter=lfs diff=lfs merge=lfs -text

|

| 62 |

+

Qwen3-Coder-Next-Q4_K_S.gguf filter=lfs diff=lfs merge=lfs -text

|

| 63 |

+

Q4_1/Qwen3-Coder-Next-Q4_1-00001-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 64 |

+

Q4_1/Qwen3-Coder-Next-Q4_1-00002-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 65 |

+

UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00001-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 66 |

+

UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00002-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 67 |

+

Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00001-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 68 |

+

Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00002-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 69 |

+

Q8_0/Qwen3-Coder-Next-Q8_0-00001-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 70 |

+

Q8_0/Qwen3-Coder-Next-Q8_0-00002-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 71 |

+

UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00001-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 72 |

+

UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00002-of-00002.gguf filter=lfs diff=lfs merge=lfs -text

|

| 73 |

+

imatrix_unsloth.gguf_file filter=lfs diff=lfs merge=lfs -text

|

| 74 |

+

Qwen3-Coder-Next-MXFP4_MOE.gguf filter=lfs diff=lfs merge=lfs -text

|

| 75 |

+

Q6_K/Qwen3-Coder-Next-Q6_K-00001-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 76 |

+

Q6_K/Qwen3-Coder-Next-Q6_K-00002-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 77 |

+

Q6_K/Qwen3-Coder-Next-Q6_K-00003-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 78 |

+

UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00001-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 79 |

+

UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00002-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 80 |

+

UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00003-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 81 |

+

UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00001-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 82 |

+

UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00002-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 83 |

+

UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00003-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 84 |

+

Q8_0/Qwen3-Coder-Next-Q8_0-00001-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 85 |

+

Q8_0/Qwen3-Coder-Next-Q8_0-00002-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 86 |

+

Q8_0/Qwen3-Coder-Next-Q8_0-00003-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 87 |

+

Q4_1/Qwen3-Coder-Next-Q4_1-00001-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 88 |

+

Q4_1/Qwen3-Coder-Next-Q4_1-00002-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 89 |

+

Q4_1/Qwen3-Coder-Next-Q4_1-00003-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 90 |

+

UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00001-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 91 |

+

UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00002-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 92 |

+

UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00003-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 93 |

+

Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00001-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 94 |

+

Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00002-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 95 |

+

Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00003-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 96 |

+

Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00001-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 97 |

+

Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00002-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

| 98 |

+

Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00003-of-00003.gguf filter=lfs diff=lfs merge=lfs -text

|

BF16/Qwen3-Coder-Next-BF16-00001-of-00004.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:f49d3bfde5b8588f49d78610ef3a25af5c0ced0a4c4ab77bb8dca53062ba78a3

|

| 3 |

+

size 49004196032

|

BF16/Qwen3-Coder-Next-BF16-00002-of-00004.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:f3258410cc3966a4888f5565eca2bc98e791b659a7041c3da419a751a5035a9a

|

| 3 |

+

size 49426194752

|

BF16/Qwen3-Coder-Next-BF16-00003-of-00004.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:83b2f953e1bf2f162588430b0110cb0938245f3bf7d488ff8ca15790b5790d37

|

| 3 |

+

size 49447199840

|

BF16/Qwen3-Coder-Next-BF16-00004-of-00004.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:89280f8d77456c2ea0286646384bef74f96d06e4cdb9e65b7632aeb52f9ef499

|

| 3 |

+

size 11580824608
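Each `.gguf` entry in this commit is a three-line Git LFS pointer (`version`, `oid`, `size`) rather than the weights themselves. As a minimal sketch, the snippet below parses one pointer and sums the four BF16 shard sizes listed above; the helper name `parse_lfs_pointer` is hypothetical and only illustrates the format.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the key/value lines of a Git LFS pointer file (hypothetical helper)."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    hash_algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size": int(fields["size"]),  # size of the real file in bytes
    }


# Pointer content copied from BF16/Qwen3-Coder-Next-BF16-00001-of-00004.gguf above.
pointer = """version https://git-lfs.github.com/spec/v1
oid sha256:f49d3bfde5b8588f49d78610ef3a25af5c0ced0a4c4ab77bb8dca53062ba78a3
size 49004196032
"""
info = parse_lfs_pointer(pointer)

# Sizes of the four BF16 shards, as recorded in their pointers.
bf16_shard_sizes = [49004196032, 49426194752, 49447199840, 11580824608]
total_bytes = sum(bf16_shard_sizes)

print(info["hash_algo"], info["size"])
print(f"BF16 total: {total_bytes / 1e9:.1f} GB")
```

The total is roughly what 80B parameters at two bytes each would suggest, which is a quick sanity check on the shard set.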

|

Q4_1/Qwen3-Coder-Next-Q4_1-00001-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6997125c01586ef1778b90ce5f78fe51a5fa965dc719f31c7efd114e74081d36

|

| 3 |

+

size 5936320

|

Q4_1/Qwen3-Coder-Next-Q4_1-00002-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:121a419a310ff0c9545fb729a7f904ea610d8ca830a9c9b80b4c04f2404ec4ef

|

| 3 |

+

size 49715376992

|

Q4_1/Qwen3-Coder-Next-Q4_1-00003-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0ffcdbf9c258e69a140213af9ba1f1c90b4b3166f7f94ffccf04146f790ee07c

|

| 3 |

+

size 336109888

|

Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00001-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:183e233cc93de594cf343d914bb2ba21f7ae252ac4699b52b83c1200327f4b11

|

| 3 |

+

size 5936320

|

Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00002-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:bcfc3c900a2b6ed0d7d5c51397edd55261a0562a1ce64b62204c4576c3ecafd0

|

| 3 |

+

size 49604927264

|

Q5_K_M/Qwen3-Coder-Next-Q5_K_M-00003-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9b49c5c452924ca3b0864c6a32b3e5ebd14d6a063c5a89c976947347ed9a802c

|

| 3 |

+

size 7237023104

|

Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00001-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:85b3695c221fe4716b5d1397da1db7643d44dc697e03513b35c6a36aca479683

|

| 3 |

+

size 5936320

|

Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00002-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:cdd131e37d7d656897004f434ced764c230cfc962232328ab907c4e6f3d4d1e2

|

| 3 |

+

size 49756868608

|

Q5_K_S/Qwen3-Coder-Next-Q5_K_S-00003-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:086f85116ece1e651a21f937268987214a9504dd5320842f6d450f765ba9c11f

|

| 3 |

+

size 5292475552

|

Q6_K/Qwen3-Coder-Next-Q6_K-00001-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b29fc953bb49f2f7857a7112dbe32dc17b7842c9afeaa4895a431437c692df50

|

| 3 |

+

size 5936320

|

Q6_K/Qwen3-Coder-Next-Q6_K-00002-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:f28f4a3579b187ff5b2b67ae12cd7e060733775a6ccc9f2f8b59c3c17645b84b

|

| 3 |

+

size 49795838592

|

Q6_K/Qwen3-Coder-Next-Q6_K-00003-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4b08b8b13480d9ae269e77c09931dfbe45b8d002836da979d6b9078d0786ba61

|

| 3 |

+

size 15812217376

|

Q8_0/Qwen3-Coder-Next-Q8_0-00001-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:22a7b3e9ac874942f06255527a0c4b884f777097e87e53c3e53922145f3ba8ed

|

| 3 |

+

size 5936320

|

Q8_0/Qwen3-Coder-Next-Q8_0-00002-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:491753e1ed8f20a5b898ae2f47ca58bed2707b56c00a3ec7c0b681b46d953ff8

|

| 3 |

+

size 49772613984

|

Q8_0/Qwen3-Coder-Next-Q8_0-00003-of-00003.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4dced2b07a817f1e0c085fcc332b76c6e32bbab5e0c43311e0224f6249e03366

|

| 3 |

+

size 35033705312

|

Qwen3-Coder-Next-IQ4_NL.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:f18845b505e2393eb106635b3eaae37c26690497b20edfce8d457ccc0b521e76

|

| 3 |

+

size 45130344480

|

Qwen3-Coder-Next-IQ4_XS.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9039c9ebc67360f8760f32c8c72f800a74454b17c3fa287c0e2f34a55794e463

|

| 3 |

+

size 42676135968

|

Qwen3-Coder-Next-MXFP4_MOE.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:08fd6cfddf165a636debf1f928a67cec37484e82aa999a5240a11c319a4e8062

|

| 3 |

+

size 43741630240

|

Qwen3-Coder-Next-Q2_K.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2ac738bc947bc3470e37962c2ee0ab390fa953740e9873575695276980abad87

|

| 3 |

+

size 29224450080

|

Qwen3-Coder-Next-Q2_K_L.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1c77ccf43a2ebf5f4d7232d268aaa5f8cb7f7397d6576de188576007859a84e4

|

| 3 |

+

size 29297379360

|

Qwen3-Coder-Next-Q3_K_M.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b12ec74934e548b3e9cb80d05660a9a6a5af1a2aec6fbf5f9e9b4a36626aee75

|

| 3 |

+

size 38322487328

|

Qwen3-Coder-Next-Q3_K_S.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0b076707e566e68fc8e307ff2a14cd2cfa7822e60467da31793e22c0cdc9e70c

|

| 3 |

+

size 34603089952

|

Qwen3-Coder-Next-Q4_0.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e981b4171e7e1c7bd73a6928d123d8d8591e8d9d64e1a29889752c336b4022a9

|

| 3 |

+

size 45330098208

|

Qwen3-Coder-Next-Q4_K_M.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9e6032d2f3b50a60f17ce8bf5a1d85c71af9b53b89c7978020ae7c660f29b090

|

| 3 |

+

size 48528320544

|

Qwen3-Coder-Next-Q4_K_S.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:95e490ddf8d25eb911557ee1f4604ab7207e43f00a2bcf16223040ca0484cdb5

|

| 3 |

+

size 45531949088

|

Qwen3-Coder-Next-UD-IQ1_M.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:cde92c821c5afed845f19eaafe3fdd74781ca8325f95989f5a6f04f2efa85922

|

| 3 |

+

size 24155007008

|

Qwen3-Coder-Next-UD-IQ1_S.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:98c98964d9dbc8aaba3153abe2aca35f6202a867e9e3ba2568b982621815d4ce

|

| 3 |

+

size 21508749344

|

Qwen3-Coder-Next-UD-IQ2_XXS.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7b479c178312cc7d2a133e1641779c5c7074f25bf1db7e7fe37f6da27b284edb

|

| 3 |

+

size 26285897760

|

Qwen3-Coder-Next-UD-IQ3_XXS.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:539ab176968feb2b94cc38afe094064c1fab430939de2a26f2fc86744c1fec31

|

| 3 |

+

size 32706197536

|

Qwen3-Coder-Next-UD-Q2_K_XL.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a481e87afcb15e9d53442fa656b8c3b6b040da540c928495c9ba2b29637ae339

|

| 3 |

+

size 30228875296

|

Qwen3-Coder-Next-UD-Q3_K_XL.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:cfb4dacced22b5304c8d8e1aafe80ae9e330ca01e440122a9c563fde9bd5753e

|

| 3 |

+

size 35474659360

|

Qwen3-Coder-Next-UD-Q4_K_XL.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:bda283baab0b17b595a931957855b926d583094725e1add13068b0ba33c45ab5

|

| 3 |

+

size 44570617888

|

Qwen3-Coder-Next-UD-TQ1_0.gguf

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:352049921f1dc861bf33fd03f5d2c25a08f248ee808179fe63ce16307357551c

|

| 3 |

+

size 18941835296

|

README.md

ADDED

|

@@ -0,0 +1,246 @@


| 1 |

+

---

|

| 2 |

+

tags:

|

| 3 |

+

- qwen3_next

|

| 4 |

+

- unsloth

|

| 5 |

+

- qwen

|

| 6 |

+

- qwen3

|

| 7 |

+

base_model:

|

| 8 |

+

- Qwen/Qwen3-Coder-Next

|

| 9 |

+

license: apache-2.0

|

| 10 |

+

license_link: https://huggingface.co/Qwen/Qwen3-Coder-Next/blob/main/LICENSE

|

| 11 |

+

pipeline_tag: text-generation

|

| 12 |

+

---

|

| 13 |

+

<div>

|

| 14 |

+

<p style="margin-bottom: 0; margin-top: 0;">

|

| 15 |

+

<h1 style="margin-top: 0rem;">To Run Qwen3-Coder-Next locally - <a href="https://unsloth.ai/docs/models/qwen3-coder-next">Read our Guide!</a></h1>

|

| 16 |

+

</p>

|

| 17 |

+

|

| 18 |

+

<p style="margin-top: 0;margin-bottom: 0;">

|

| 19 |

+

<em><a href="https://unsloth.ai/docs/basics/unsloth-dynamic-2.0-ggufs">Unsloth Dynamic 2.0</a> achieves superior accuracy & outperforms other leading quants.</em>

|

| 20 |

+

</p>

|

| 21 |

+

<div style="margin-top: 0;display: flex; gap: 5px; align-items: center; ">

|

| 22 |

+

<a href="https://github.com/unslothai/unsloth/">

|

| 23 |

+

<img src="https://github.com/unslothai/unsloth/raw/main/images/unsloth%20new%20logo.png" width="133">

|

| 24 |

+

</a>

|

| 25 |

+

<a href="https://discord.gg/unsloth">

|

| 26 |

+

<img src="https://github.com/unslothai/unsloth/raw/main/images/Discord%20button.png" width="173">

|

| 27 |

+

</a>

|

| 28 |

+

<a href="https://unsloth.ai/docs/models/qwen3-coder-next">

|

| 29 |

+

<img src="https://raw.githubusercontent.com/unslothai/unsloth/refs/heads/main/images/documentation%20green%20button.png" width="143">

|

| 30 |

+

</a>

|

| 31 |

+

</div>

|

| 32 |

+

</div>

|

| 33 |

+

|

| 34 |

+

- **Feb 19 update**: Tool-calling should now be even better after llama.cpp fixes parsing.

|

| 35 |

+

- **Quantization benchmarks**: See third-party Aider, LiveCodeBench v6, MMLU Pro, GPQA [benchmarks for GGUFs here](https://unsloth.ai/docs/models/qwen3-coder-next#gguf-quantization-benchmarks).

|

| 36 |

+

- **Feb 4 update**: llama.cpp fixed a bug that caused Qwen to loop and produce poor outputs.<br>We updated GGUFs - please re-download and update llama.cpp for improved outputs.

|

| 37 |

+

|

| 38 |

+

## Qwen3-Coder-Next Usage Guidelines

|

| 39 |

+

- It is recommended to have >45GB unified memory or RAM/VRAM to run 4-bit quants.

|

| 40 |

+

- For best results, use any 2-bit XL quant or above (requires >30GB unified memory / combined RAM + VRAM).

|

| 41 |

+

- See how to run the model via [Claude Code & OpenAI Codex](https://unsloth.ai/docs/models/qwen3-coder-next#claude-codex).

|

| 42 |

+

- For complete detailed instructions (sampling parameters etc.), see our guide: [docs.unsloth.ai/models/qwen3-coder-next](https://unsloth.ai/docs/models/qwen3-coder-next)
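The memory guidance above can be cross-checked against the file sizes recorded in this commit's LFS pointers. The sketch below is only a rough filter: the `headroom_gb` parameter and the function name are assumptions for illustration, and real usage also depends on context length and KV cache size, which are not modeled here.

```python
# File sizes in bytes, copied from the LFS pointers in this commit.
QUANT_SIZES_BYTES = {
    "UD-Q2_K_XL": 30228875296,
    "UD-Q4_K_XL": 44570617888,
    "Q4_K_M": 48528320544,
}


def quants_that_fit(memory_gb: float, headroom_gb: float = 2.0) -> list[str]:
    """Return quants whose file size fits in memory_gb minus an assumed headroom."""
    budget_bytes = (memory_gb - headroom_gb) * 1e9
    return [name for name, size in QUANT_SIZES_BYTES.items() if size <= budget_bytes]


print(quants_that_fit(48))
print(quants_that_fit(64))
```

With the assumed 2 GB headroom, a 48 GB machine admits the 2-bit and 4-bit XL files but not `Q4_K_M`, which is in line with the ">45GB for 4-bit quants" recommendation.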

|

| 43 |

+

|

| 44 |

+

---

|

| 45 |

+

|

| 46 |

+

# Qwen3-Coder-Next

|

| 47 |

+

|

| 48 |

+

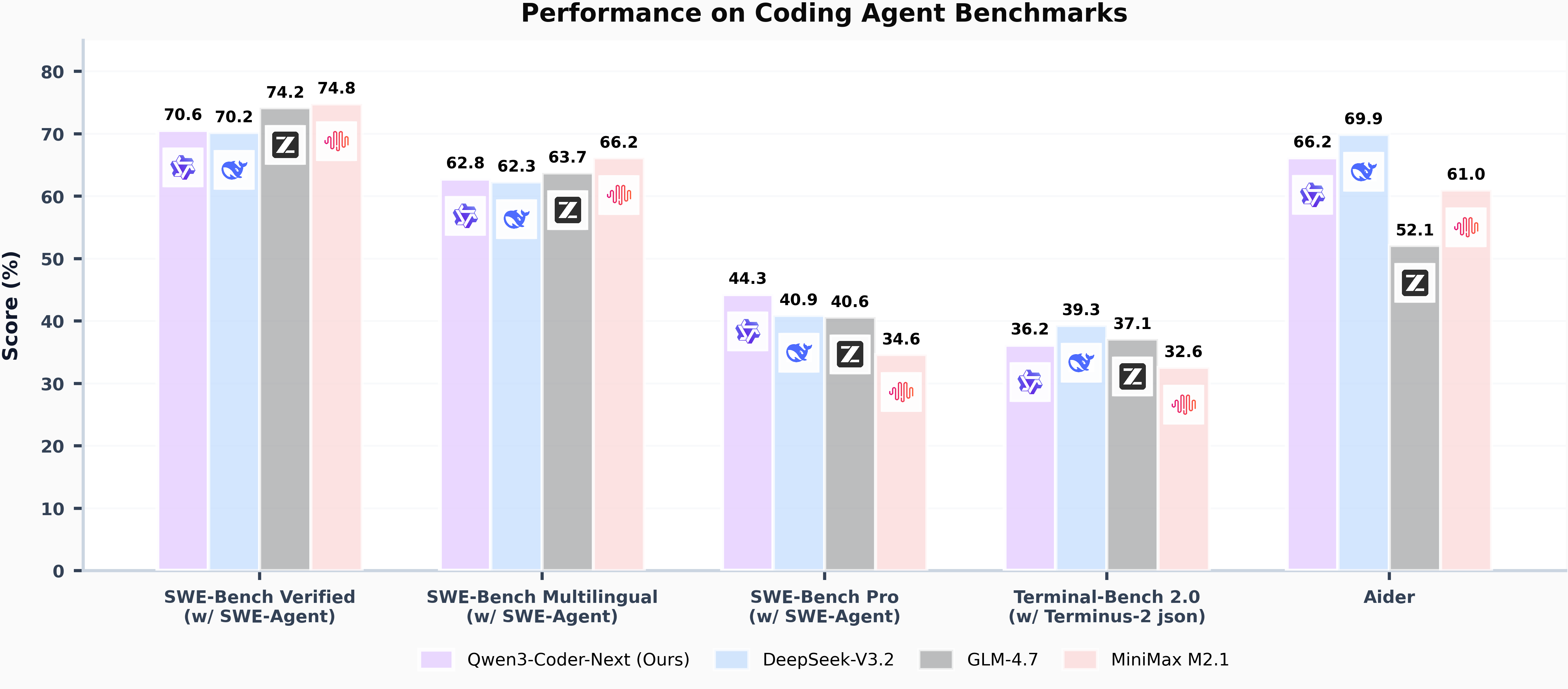

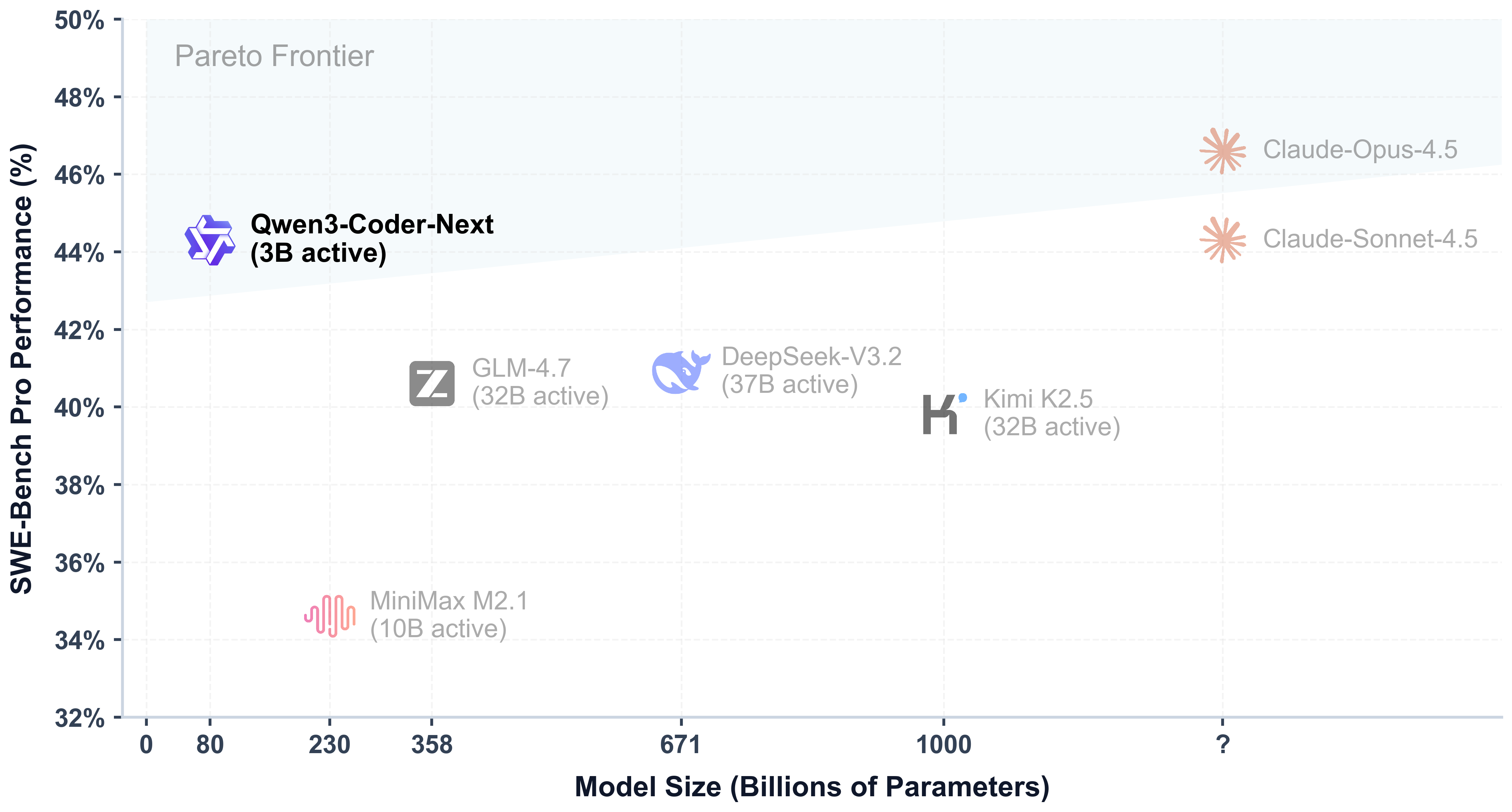

## Highlights

|

| 49 |

+

|

| 50 |

+

Today, we're announcing **Qwen3-Coder-Next**, an open-weight language model designed specifically for coding agents and local development. It features the following key enhancements:

|

| 51 |

+

|

| 52 |

+

- **Super Efficient with Significant Performance**: With only 3B activated parameters (80B total parameters), it achieves performance comparable to models with 10–20x more active parameters, making it highly cost-effective for agent deployment.

|

| 53 |

+

- **Advanced Agentic Capabilities**: Through an elaborate training recipe, it excels at long-horizon reasoning, complex tool usage, and recovery from execution failures, ensuring robust performance in dynamic coding tasks.

|

| 54 |

+

- **Versatile Integration with Real-World IDE**: Its 256k context length, combined with adaptability to various scaffold templates, enables seamless integration with different CLI/IDE platforms (e.g., Claude Code, Qwen Code, Qoder, Kilo, Trae, Cline, etc.), supporting diverse development environments.

|

| 55 |

+

|

| 56 |

+

|

| 57 |

+

|

| 58 |

+

|

| 59 |

+

|

| 60 |

+

## Model Overview

|

| 61 |

+

|

| 62 |

+

**Qwen3-Coder-Next** has the following features:

|

| 63 |

+

- Type: Causal Language Models

|

| 64 |

+

- Training Stage: Pretraining & Post-training

|

| 65 |

+

- Number of Parameters: 80B in total and 3B activated

|

| 66 |

+

- Number of Parameters (Non-Embedding): 79B

|

| 67 |

+

- Hidden Dimension: 2048

|

| 68 |

+

- Number of Layers: 48

|

| 69 |

+

- Hybrid Layout: 12 \* (3 \* (Gated DeltaNet -> MoE) -> 1 \* (Gated Attention -> MoE))

|

| 70 |

+

- Gated Attention:
  - Number of Attention Heads: 16 for Q and 2 for KV
  - Head Dimension: 256
  - Rotary Position Embedding Dimension: 64
- Gated DeltaNet:
  - Number of Linear Attention Heads: 32 for V and 16 for QK
  - Head Dimension: 128
- Mixture of Experts:
  - Number of Experts: 512
  - Number of Activated Experts: 10
  - Number of Shared Experts: 1
  - Expert Intermediate Dimension: 512

|

| 82 |

+

- Context Length: 262,144 natively
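The hybrid layout formula can be expanded to confirm that it produces the stated 48 layers. This is a minimal sketch; the layer labels and the `build_layout` function are illustrative names, not identifiers from the model's code.

```python
def build_layout(num_blocks: int = 12,
                 deltanet_per_block: int = 3,
                 attention_per_block: int = 1) -> list[str]:
    """Expand 12 * (3 * (Gated DeltaNet -> MoE) -> 1 * (Gated Attention -> MoE))."""
    layers = []
    for _ in range(num_blocks):
        layers += ["gated_deltanet+moe"] * deltanet_per_block
        layers += ["gated_attention+moe"] * attention_per_block
    return layers


layout = build_layout()
print(len(layout))                          # total layers
print(layout.count("gated_deltanet+moe"))   # linear-attention layers
print(layout.count("gated_attention+moe"))  # full-attention layers
print(layout[:4])                           # one repeating block
```

Under this reading, every fourth layer uses gated attention and the other three use Gated DeltaNet, with an MoE feed-forward stage in all of them.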

|

| 83 |

+

|

| 84 |

+

**NOTE: This model supports only non-thinking mode and does not generate ``<think></think>`` blocks in its output. Meanwhile, specifying `enable_thinking=False` is no longer required.**

|

| 85 |

+

|

| 86 |

+

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our [blog](https://qwen.ai/blog?id=qwen3-coder-next), [GitHub](https://github.com/QwenLM/Qwen3-Coder), and [Documentation](https://qwen.readthedocs.io/en/latest/).

|

| 87 |

+

|

| 88 |

+

|

| 89 |

+

## Quickstart

|

| 90 |

+

|

| 91 |

+

We advise you to use the latest version of `transformers`.

|

| 92 |

+

|

| 93 |

+

The following code snippet illustrates how to use the model to generate content based on given inputs.

|

| 94 |

+

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "Qwen/Qwen3-Coder-Next"

# load the tokenizer and the model
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)

# prepare the model input
prompt = "Write a quick sort algorithm."
messages = [
    {"role": "user", "content": prompt}
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
)
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# conduct text completion
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=65536
)
# keep only the newly generated tokens (drop the prompt tokens)
output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

content = tokenizer.decode(output_ids, skip_special_tokens=True)

print("content:", content)
```

|

| 130 |

+

|

| 131 |

+

**Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as `32,768`.**


For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.


## Deployment


For deployment, you can use the latest `sglang` or `vllm` to create an OpenAI-compatible API endpoint.


### SGLang


[SGLang](https://github.com/sgl-project/sglang) is a fast serving framework for large language models and vision language models.
SGLang can be used to launch a server with an OpenAI-compatible API service.

`sglang>=v0.5.8` is required for Qwen3-Coder-Next, which can be installed using:
```shell
pip install 'sglang[all]>=v0.5.8'
```
See [its documentation](https://docs.sglang.ai/get_started/install.html) for more details.

The following command creates an API endpoint at `http://localhost:30000/v1` with a maximum context length of 256K tokens, using tensor parallelism across 2 GPUs.
```shell
python -m sglang.launch_server --model Qwen/Qwen3-Coder-Next --port 30000 --tp-size 2 --tool-call-parser qwen3_coder
```

> [!Note]
> The default context length is 256K. If the server fails to start, consider reducing the context length to a smaller value, e.g., `--context-length 32768`.


### vLLM


[vLLM](https://github.com/vllm-project/vllm) is a high-throughput and memory-efficient inference and serving engine for LLMs.
vLLM can be used to launch a server with an OpenAI-compatible API service.

`vllm>=0.15.0` is required for Qwen3-Coder-Next, which can be installed using:
```shell
pip install 'vllm>=0.15.0'
```
See [its documentation](https://docs.vllm.ai/en/stable/getting_started/installation/index.html) for more details.

The following command creates an API endpoint at `http://localhost:8000/v1` with a maximum context length of 256K tokens, using tensor parallelism across 2 GPUs.
```shell
vllm serve Qwen/Qwen3-Coder-Next --port 8000 --tensor-parallel-size 2 --enable-auto-tool-choice --tool-call-parser qwen3_coder
```

> [!Note]
> The default context length is 256K. If the server fails to start, consider reducing the context length to a smaller value, e.g., `--max-model-len 32768`.


## Agentic Coding


Qwen3-Coder-Next excels in tool calling capabilities.


You can define or use any tools, as in the following example.
```python
from openai import OpenAI

# Your tool implementation
# (the parameter name matches the `input_num` property declared in the schema below)
def square_the_number(input_num: float) -> float:
    return input_num ** 2

# Define Tools
tools = [
    {
        "type": "function",
        "function": {
            "name": "square_the_number",
            "description": "output the square of the number.",
            "parameters": {
                "type": "object",
                "required": ["input_num"],
                "properties": {
                    "input_num": {
                        "type": "number",
                        "description": "input_num is a number that will be squared"
                    }
                },
            }
        }
    }
]

# Define LLM
client = OpenAI(
    # Use a custom endpoint compatible with OpenAI API
    base_url="http://localhost:8000/v1",  # api_base
    api_key="EMPTY"
)

messages = [{"role": "user", "content": "square the number 1024"}]

completion = client.chat.completions.create(
    messages=messages,
    # must match the served model name; with the launch commands above
    # this defaults to the model path
    model="Qwen/Qwen3-Coder-Next",
    max_tokens=65536,
    tools=tools,
)

print(completion.choices[0])
```
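
The example above stops at printing the model's response. A minimal sketch of the next step, running the requested tool locally and wrapping its result as a `tool` message, might look like the following. `TOOL_REGISTRY` and `run_tool_call` are hypothetical helper names introduced here for illustration, and the tool's parameter is named `input_num` to match the schema.

```python
import json

def square_the_number(input_num: float) -> float:
    return input_num ** 2

# Hypothetical registry mapping tool names to local Python callables.
TOOL_REGISTRY = {"square_the_number": square_the_number}

def run_tool_call(name: str, arguments_json: str, tool_call_id: str) -> dict:
    """Execute one tool call and return a `tool` role message with the result.

    `arguments_json` is the JSON string the model produced for the call.
    Errors are reported back to the model instead of being raised.
    """
    func = TOOL_REGISTRY.get(name)
    if func is None:
        result = {"error": f"unknown tool: {name}"}
    else:
        try:
            kwargs = json.loads(arguments_json)
            result = {"result": func(**kwargs)}
        except (json.JSONDecodeError, TypeError) as exc:
            result = {"error": str(exc)}
    return {
        "role": "tool",
        "tool_call_id": tool_call_id,
        "content": json.dumps(result),
    }
```

With the OpenAI Python client, each entry of `completion.choices[0].message.tool_calls` carries an `id`, a `function.name`, and a `function.arguments` JSON string, which map onto the three parameters here. Appending the assistant message and the returned `tool` messages to `messages` and calling `client.chat.completions.create` again lets the model produce its final answer.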


## Best Practices


To achieve optimal performance, we recommend the following sampling parameters: `temperature=1.0`, `top_p=0.95`, `top_k=40`.
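
As a rough sketch, these settings could be passed to either interface used earlier in this README. The helper names below are hypothetical. Sending `top_k` through `extra_body` relies on the assumption that the serving backend accepts it as an extra request field, since `top_k` is not a standard OpenAI parameter.

```python
# Recommended sampling settings from the paragraph above.
SAMPLING = {"temperature": 1.0, "top_p": 0.95, "top_k": 40}

def generate_kwargs(max_new_tokens: int = 65536) -> dict:
    """Keyword arguments for `model.generate` in transformers.

    `do_sample=True` is needed for temperature/top_p/top_k to take effect.
    """
    return {"do_sample": True, "max_new_tokens": max_new_tokens, **SAMPLING}

def chat_completion_kwargs(max_tokens: int = 65536) -> dict:
    """Keyword arguments for `client.chat.completions.create`."""
    return {
        "temperature": SAMPLING["temperature"],
        "top_p": SAMPLING["top_p"],
        "max_tokens": max_tokens,
        # Assumption: the server reads top_k from the extra request body.
        "extra_body": {"top_k": SAMPLING["top_k"]},
    }
```

For example, `model.generate(**model_inputs, **generate_kwargs())` or `client.chat.completions.create(model=..., messages=messages, **chat_completion_kwargs())`.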


## Citation


If you find our work helpful, feel free to cite us.

```
@techreport{qwen_qwen3_coder_next_tech_report,
  title = {Qwen3-Coder-Next Technical Report},
  author = {{Qwen Team}},
  url = {https://github.com/QwenLM/Qwen3-Coder/blob/main/qwen3_coder_next_tech_report.pdf},
  note = {Accessed: 2026-02-03}
}
```

UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00001-of-00003.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:183e233cc93de594cf343d914bb2ba21f7ae252ac4699b52b83c1200327f4b11
size 5936320

UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00002-of-00003.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:74f215a6f67175799dfa8dbd8a23a42fefda706fae9c791f0cd887670e5d481d
size 49699283136

UD-Q5_K_XL/Qwen3-Coder-Next-UD-Q5_K_XL-00003-of-00003.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:a45b96260b23e0e893b1136e4085c543d5d114ac4576e6935a1005d9d10ef104
size 7142454240

UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00001-of-00003.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:b29fc953bb49f2f7857a7112dbe32dc17b7842c9afeaa4895a431437c692df50
size 5936320

UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00002-of-00003.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:79d3ff1945594f4bb2656d1f94b63f08166a80b1d17e620da6f8bbedd9c02a4d
size 49862115456

UD-Q6_K_XL/Qwen3-Coder-Next-UD-Q6_K_XL-00003-of-00003.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:e3fa402a998a9700c71654f50751e16b6cd4dcd8515e88b271490af37c5e6115
size 18722638912

UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00001-of-00003.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:22a7b3e9ac874942f06255527a0c4b884f777097e87e53c3e53922145f3ba8ed
size 5936320

UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00002-of-00003.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:b222065b1809a41bac05a7a0c79b75142b916f9bf71b9d80efd26fbf34a08bc3
size 49950429152

UD-Q8_K_XL/Qwen3-Coder-Next-UD-Q8_K_XL-00003-of-00003.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:97089ba1b4b587b1a335d043b3667b4aa466d7531791f2ae9a7fe236042d41d9
size 43399982304

imatrix_unsloth.gguf_file

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:071cacd3e65aefe55510a4bb1fea1d4a0a7d9cfc9f439c9896a877d243a0eb53
size 457007872
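
Each added file above is a Git LFS pointer: three `key value` lines giving the spec version, the content hash, and the size in bytes of the real file. A small sketch of reading such a pointer and totalling the size of a sharded quantization could look like this. The function name `parse_lfs_pointer` is an illustrative assumption, and the shard sizes are copied from the UD-Q5_K_XL entries in this commit.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the `key value` lines of a Git LFS pointer file."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)  # e.g. "sha256:<hex digest>"
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),  # size of the real file, in bytes
    }

pointer = parse_lfs_pointer(
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:183e233cc93de594cf343d914bb2ba21f7ae252ac4699b52b83c1200327f4b11\n"
    "size 5936320\n"
)

# Sizes in bytes of the three UD-Q5_K_XL shards listed in this commit.
shard_sizes = [5936320, 49699283136, 7142454240]
total_bytes = sum(shard_sizes)
print(f"UD-Q5_K_XL download size: {total_bytes / 2**30:.2f} GiB")
```

The same sum over any quantization's shards gives a quick estimate of the disk space needed before downloading.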