Update README.md


README.md

CHANGED

|

@@ -14,7 +14,7 @@ tags:

|

|

| 14 |

|

| 15 |

# Model Card for Model ID

|

| 16 |

|

| 17 |

-

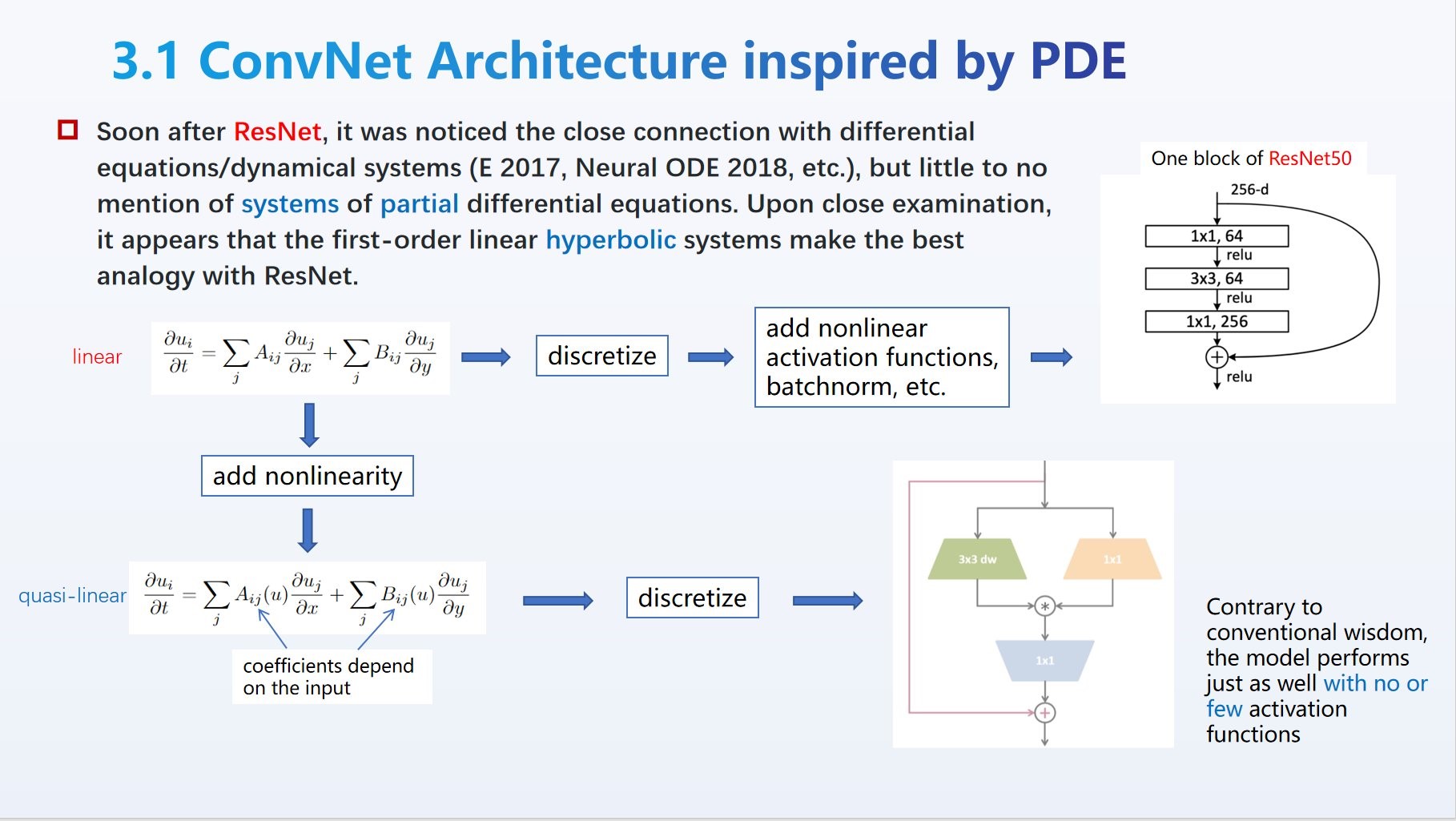

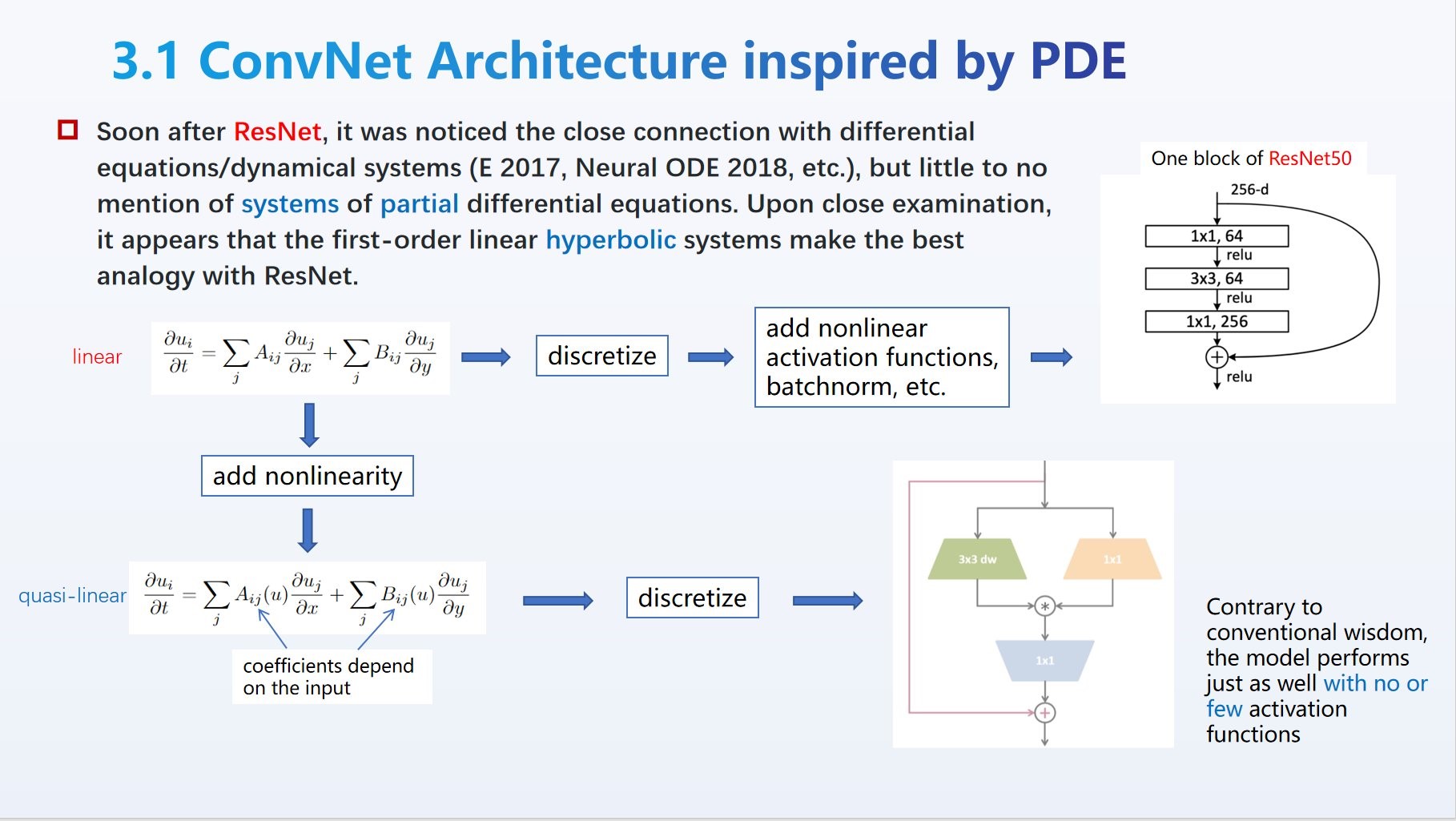

Based on a class of partial differential equations called **quasi-linear hyperbolic systems** [[Liu et al, 2023](https://github.com/liuyao12/ConvNets-PDE-perspective)], the QLNet

|

| 18 |

|

| 19 |

|

| 20 |

|

|

@@ -24,13 +24,13 @@ One notable feature is that the architecture (trained or not) admits a *continuo

|

|

| 24 |

FAQ (as the author imagines):

|

| 25 |

|

| 26 |

- Q: Who needs another ConvNet, when the SOTA for ImageNet-1k is now in the low 80s with models of comparable size?

|

| 27 |

-

- A: Aside from

|

| 28 |

- Q: Multiplication is too simple, someone must have tried it?

|

| 29 |

- A: Perhaps. My bet is that whoever tried it soon found that the model failed to train with standard ReLU. Without the belief in the underlying PDE perspective, maybe it wasn't pushed to its limit.

|

| 30 |

- Q: Is it not similar to attention in Transformer?

|

| 31 |

- A: It is, indeed. It's natural to wonder if the activation functions in the Transformer could be removed (or reduced) while still achieving comparable performance.

|

| 32 |

- Q: If the weight/parameter space has a symmetry (other than permutations), perhaps there's redundancy in the weights.

|

| 33 |

-

- A: The transformation in

|

| 34 |

|

| 35 |

*This modelcard aims to be a base template for new models. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/modelcard_template.md?plain=1).*

|

| 36 |

|

|

|

|

| 14 |

|

| 15 |

# Model Card for Model ID

|

| 16 |

|

| 17 |

+

Based on a class of partial differential equations called **quasi-linear hyperbolic systems** [[Liu et al, 2023](https://github.com/liuyao12/ConvNets-PDE-perspective)], the QLNet breaks into uncharted waters of the ConvNet model space, marked by the use of (element-wise) multiplication in lieu of ReLU as the primary nonlinearity. It achieves performance comparable to ResNet50 on ImageNet-1k (acc=**78.61**), demonstrating that it has the same level of capacity/expressivity, and deserves more analysis and study (hyperparameter tuning, optimizer, etc.) by the academic community.
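
To make "multiplication in lieu of ReLU" concrete, here is a minimal pure-Python sketch. It is not the QLNet block itself: the names `mult_layer`, `A`, `B`, and the toy weights are hypothetical, and real layers would be convolutions rather than small dense matrices. It only illustrates that the element-wise product of two linear maps is already nonlinear, with no activation function involved.

```python
def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def relu_layer(W, x):
    """Conventional layer: a linear map followed by ReLU."""
    return [max(0.0, v) for v in matvec(W, x)]


def mult_layer(A, B, x):
    """Hypothetical multiplicative layer: two linear maps of the same
    input, combined by element-wise multiplication (no activation)."""
    return [a * b for a, b in zip(matvec(A, x), matvec(B, x))]


# Toy weights and input, chosen only for illustration.
A = [[1.0, 2.0], [0.0, -1.0]]
B = [[0.5, 0.0], [1.0, 1.0]]
x = [1.0, -2.0]

y = mult_layer(A, B, x)
y_doubled = mult_layer(A, B, [2.0 * v for v in x])

# Doubling the input quadruples the output, so the map is not linear.
print(y, y_doubled)
```

In this toy setting the layer is a quadratic function of its input, which is one way to see where the nonlinearity comes from when ReLU is absent.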

|

| 18 |

|

| 19 |

|

| 20 |

|

|

|

|

| 24 |

FAQ (as the author imagines):

|

| 25 |

|

| 26 |

- Q: Who needs another ConvNet, when the SOTA for ImageNet-1k is now in the low 80s with models of comparable size?

|

| 27 |

+

- A: Aside from the lack of resources to perform extensive experiments, the real answer is that the new symmetry has the potential to be exploited (e.g., symmetry-aware optimization). The non-activation nonlinearity does have more "naturalness" (coordinate independence) that is innate in many equations in mathematics and physics. Activation is but a legacy from the early days of models inspired by *biological* neural networks.

|

| 28 |

- Q: Multiplication is too simple, someone must have tried it?

|

| 29 |

- A: Perhaps. My bet is that whoever tried it soon found that the model failed to train with standard ReLU. Without the belief in the underlying PDE perspective, maybe it wasn't pushed to its limit.

|

| 30 |

- Q: Is it not similar to attention in Transformer?

|

| 31 |

- A: It is, indeed. It's natural to wonder if the activation functions in the Transformer could be removed (or reduced) while still achieving comparable performance.

|

| 32 |

- Q: If the weight/parameter space has a symmetry (other than permutations), perhaps there's redundancy in the weights.

|

| 33 |

+

- A: The transformation in our demo can indeed be used to reduce the weights from the get-go. However, there are variants of the model that admit a much larger symmetry. It is also related to the phenomenon of "flat minima" found empirically in some conventional neural networks.
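
As a rough illustration of how a continuous symmetry in weight space can arise with a multiplicative nonlinearity, the sketch below uses a simple per-channel rescaling. This is an assumption chosen for clarity and is not necessarily the transformation used in the demo mentioned above; `mult_layer`, `rescale`, and the toy weights are hypothetical names and values.

```python
import math


def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def mult_layer(A, B, x):
    """Hypothetical multiplicative layer: element-wise product of two linear maps."""
    return [a * b for a, b in zip(matvec(A, x), matvec(B, x))]


def rescale(A, B, scales):
    """Scale row i of A by scales[i] and row i of B by 1 / scales[i]."""
    A2 = [[s * w for w in row] for row, s in zip(A, scales)]
    B2 = [[w / s for w in row] for row, s in zip(B, scales)]
    return A2, B2


# Toy weights and input, chosen only for illustration.
A = [[1.0, 2.0], [0.0, -1.0]]
B = [[0.5, 0.0], [1.0, 1.0]]
x = [1.0, -2.0]

# Any nonzero scales give different weights but the same function.
A2, B2 = rescale(A, B, [3.0, 0.25])
out_before = mult_layer(A, B, x)
out_after = mult_layer(A2, B2, x)
same_output = all(
    math.isclose(p, q, rel_tol=1e-12, abs_tol=1e-12)
    for p, q in zip(out_before, out_after)
)
print(same_output)
```

Because the scales vary continuously, the weights that compute one and the same function form a continuous family. Fixing a convention (for example, normalizing each row of `A`) removes that freedom, which is one sense in which such a symmetry points to redundant parameters.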

|

| 34 |

|

| 35 |

*This modelcard aims to be a base template for new models. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/modelcard_template.md?plain=1).*

|

| 36 |

|