
+

+

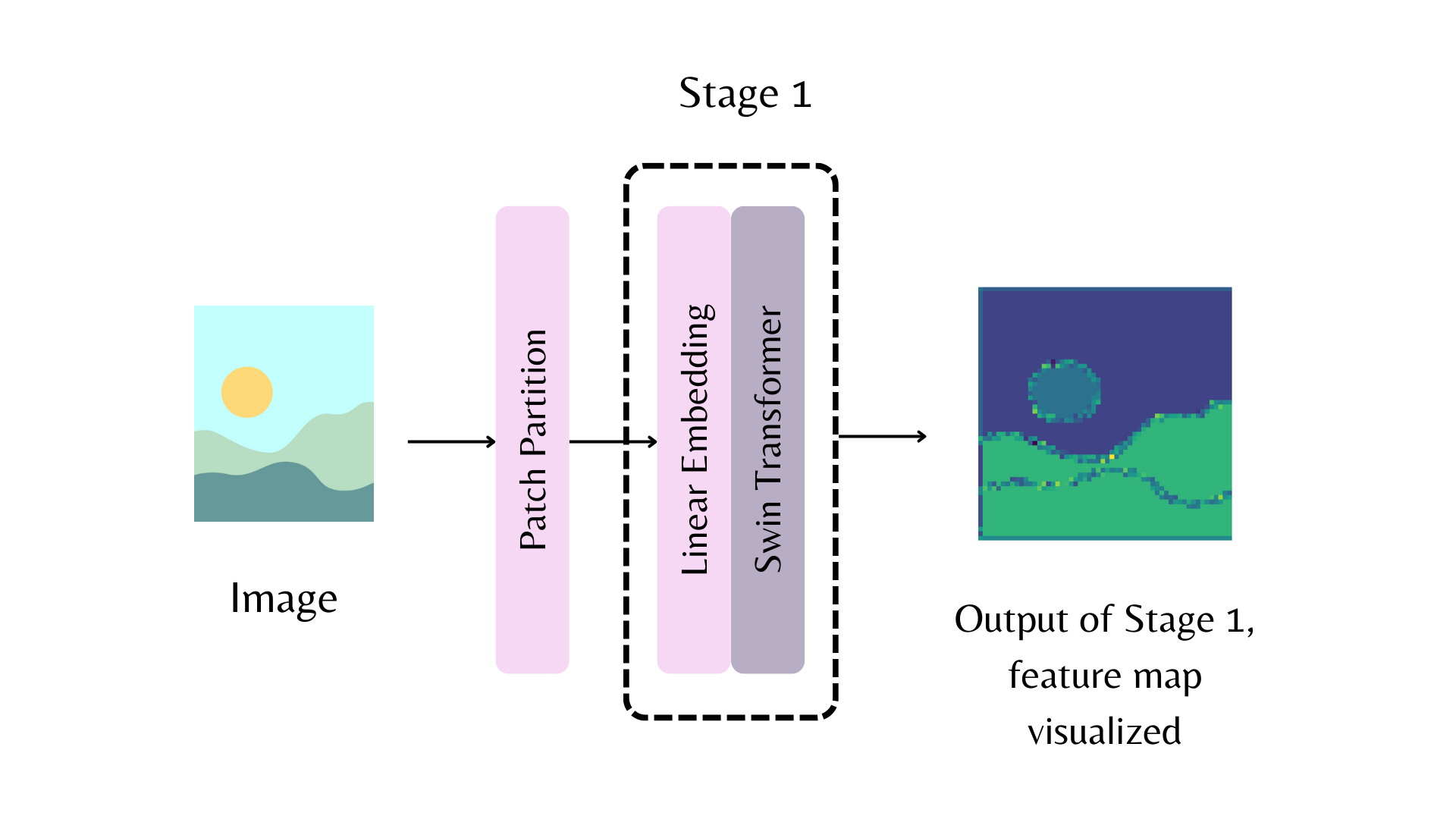

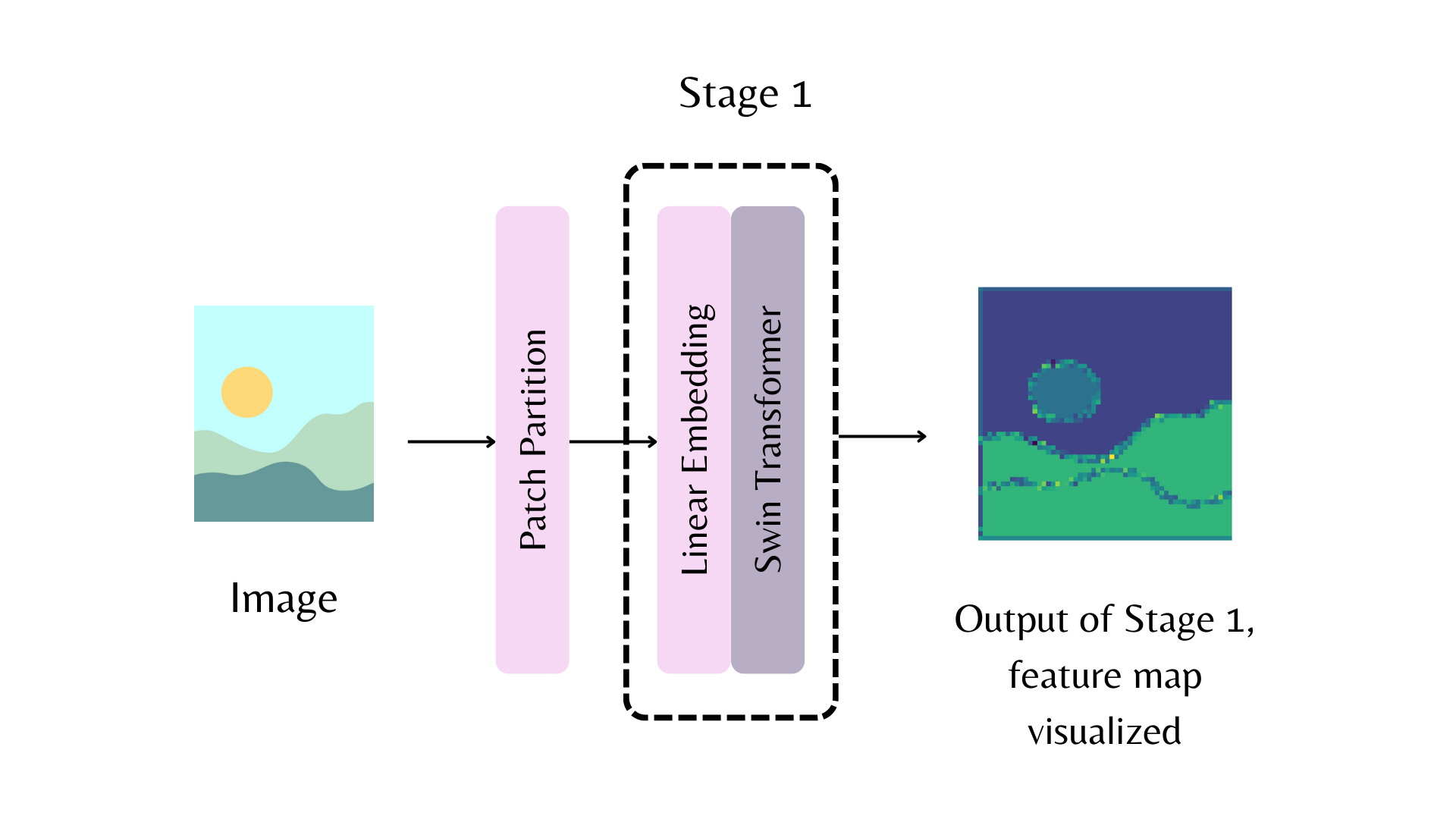

+Load a backbone with [`~PretrainedConfig.from_pretrained`] and use the `out_indices` parameter to determine which layer, given by the index, to extract a feature map from.

+

+```py

+from transformers import AutoBackbone

+

+model = AutoBackbone.from_pretrained("microsoft/swin-tiny-patch4-window7-224", out_indices=(1,))

+```

+

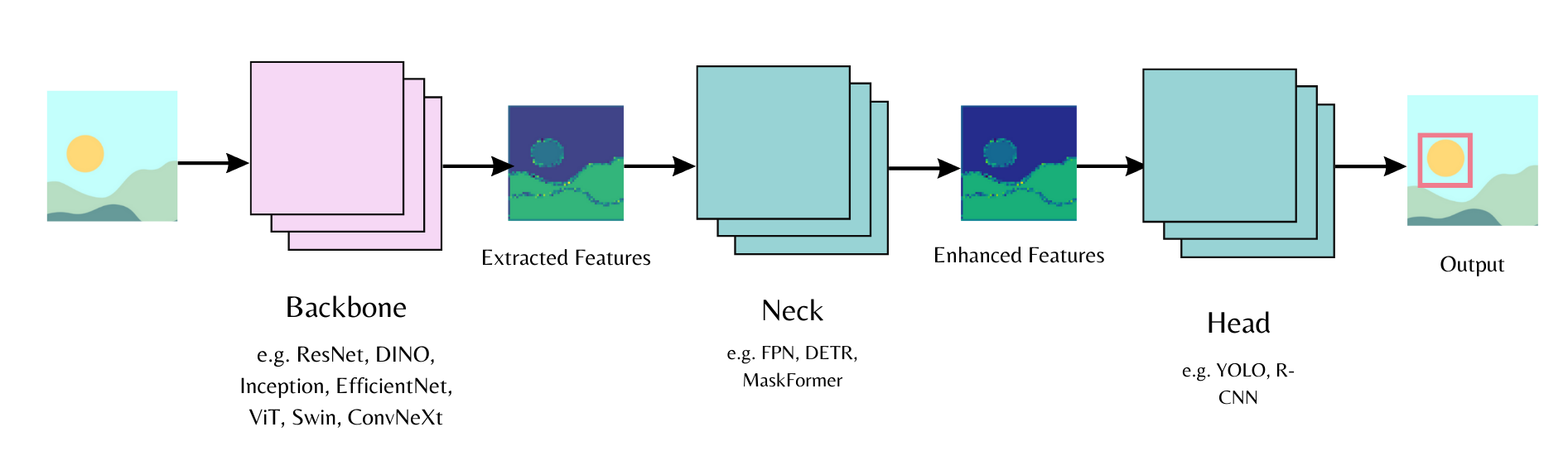

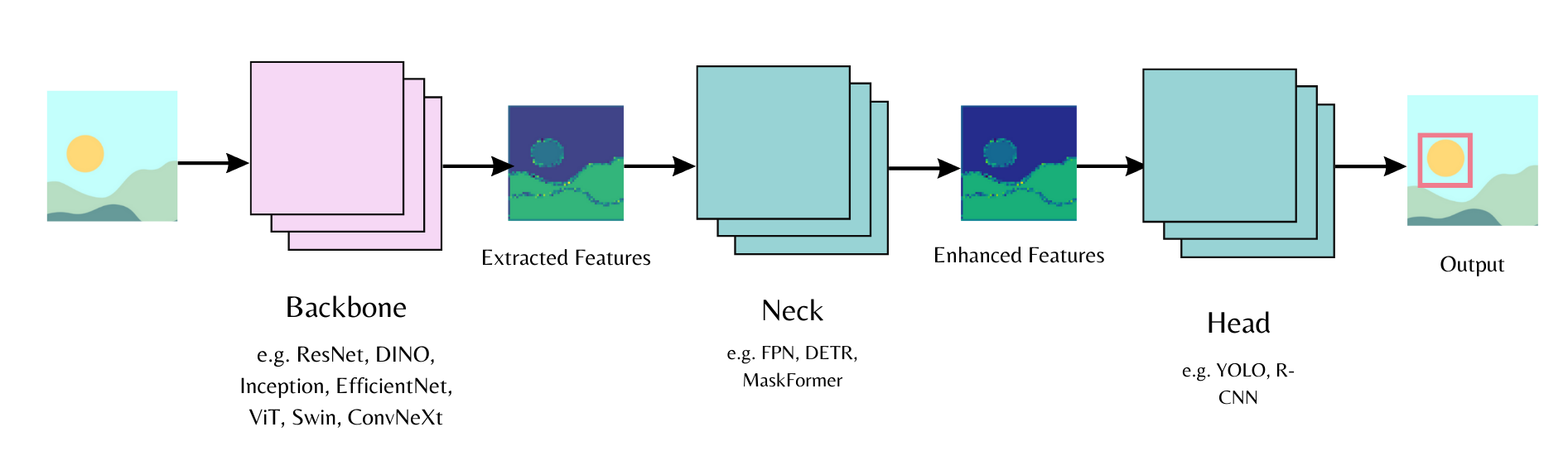

+This guide describes the backbone class, backbones from the [timm](https://hf.co/docs/timm/index) library, and how to extract features with them.

+

+## Backbone classes

+

+There are two backbone classes.

+

+- [`~transformers.utils.BackboneMixin`] allows you to load a backbone and includes functions for extracting the feature maps and indices.

+- [`~transformers.utils.BackboneConfigMixin`] allows you to set the feature map and indices of a backbone configuration.

+

+Refer to the [Backbone](./main_classes/backbones) API documentation to check which models support a backbone.

+

+There are two ways to load a Transformers backbone, [`AutoBackbone`] and a model-specific backbone class.

+


+

+

+```py

+from transformers import AutoBackbone

+

+model = AutoBackbone.from_pretrained("microsoft/swin-tiny-patch4-window7-224", out_indices=(1,))

+```

+


+☐ If your pull request addresses an issue, please mention the issue number in the pull
+request description to make sure they are linked (and people viewing the issue know you
+are working on it).
+
+☐ To indicate a work in progress, please prefix the title with `[WIP]`. These are
+useful to avoid duplicated work, and to differentiate it from PRs ready to be merged.
+
+☐ Make sure existing tests pass.
+
+☐ If adding a new feature, also add tests for it.
+
+   - If you are adding a new model, make sure you use
+     `ModelTester.all_model_classes = (MyModel, MyModelWithLMHead,...)` to trigger the common tests.
+   - If you are adding new `@slow` tests, make sure they pass using
+     `RUN_SLOW=1 python -m pytest tests/models/my_new_model/test_my_new_model.py`.
+   - If you are adding a new tokenizer, write tests and make sure
+     `RUN_SLOW=1 python -m pytest tests/models/{your_model_name}/test_tokenization_{your_model_name}.py` passes.
+   - CircleCI does not run the slow tests, but GitHub Actions does every night!
+
+☐ All public methods must have informative docstrings (see
+[`modeling_bert.py`](https://github.com/huggingface/transformers/blob/main/src/transformers/models/bert/modeling_bert.py)
+for an example).
+
+☐ Due to the rapidly growing repository, don't add any images, videos and other
+non-text files that'll significantly weigh down the repository. Instead, use a Hub
+repository such as [`hf-internal-testing`](https://huggingface.co/hf-internal-testing)
+to host these files and reference them by URL. We recommend placing documentation-related
+images in the following repository:
+[huggingface/documentation-images](https://huggingface.co/datasets/huggingface/documentation-images).
+You can open a PR on this dataset repository and ask a Hugging Face member to merge it.
+
+For more information about the checks run on a pull request, take a look at our [Checks on a Pull Request](https://huggingface.co/docs/transformers/pr_checks) guide.
+
+### Tests
+
+An extensive test suite is included to test the library behavior and several examples. Library tests can be found in
+the [tests](https://github.com/huggingface/transformers/tree/main/tests) folder and examples tests in the
+[examples](https://github.com/huggingface/transformers/tree/main/examples) folder.
+
+We like `pytest` and `pytest-xdist` because they run the tests faster. From the root of the
+repository, specify a *path to a subfolder or a test file* to run the test:
+
+```bash
+python -m pytest -n auto --dist=loadfile -s -v ./tests/models/my_new_model
+```
+
+Similarly, for the `examples` directory, specify a *path to a subfolder or test file* to run the test. For example, the following command tests the text classification subfolder in the PyTorch `examples` directory:
+
+```bash
+pip install -r examples/xxx/requirements.txt  # only needed the first time
+python -m pytest -n auto --dist=loadfile -s -v ./examples/pytorch/text-classification
+```
+
+In fact, this is how our `make test` and `make test-examples` commands are implemented (not including the `pip install`)!
+
+You can also specify a smaller set of tests in order to test only the feature
+you're working on.
+
+By default, slow tests are skipped but you can set the `RUN_SLOW` environment variable to
+`yes` to run them. This will download many gigabytes of models so make sure you
+have enough disk space, a good internet connection or a lot of patience!


+

+

+You can launch the CLI with arbitrary `generate` flags, using the format `arg_1=value_1 arg_2=value_2 ...`.

+

+```bash

+transformers chat Qwen/Qwen2.5-0.5B-Instruct do_sample=False max_new_tokens=10

+```

+

+For a full list of options, run the command below.

+

+```bash

+transformers chat -h

+```

+

+The chat is implemented on top of the [AutoClass](./model_doc/auto), using tooling from [text generation](./llm_tutorial) and [chat](./chat_templating). It uses the `transformers serve` CLI under the hood ([docs](./serving.md#serve-cli)).

+

+

+## TextGenerationPipeline

+

+[`TextGenerationPipeline`] is a high-level text generation class with a "chat mode". Chat mode is enabled when a conversational model is detected and the chat prompt is [properly formatted](./llm_tutorial#wrong-prompt-format).

+

+To start, build a chat history with the following two roles.

+

+- `system` describes how the model should behave and respond when you're chatting with it. This role isn't supported by all chat models.

+- `user` is where you enter your first message to the model.

+

+```py

+chat = [

+ {"role": "system", "content": "You are a sassy, wise-cracking robot as imagined by Hollywood circa 1986."},

+ {"role": "user", "content": "Hey, can you tell me any fun things to do in New York?"}

+]

+```

+

+Create the [`TextGenerationPipeline`] and pass `chat` to it. For large models, setting [device_map="auto"](./models#big-model-inference) helps load the model quicker and automatically places it on the fastest device available. Changing the data type to [torch.bfloat16](./models#model-data-type) also helps save memory.

+

+```py

+import torch

+from transformers import pipeline

+

+pipeline = pipeline(task="text-generation", model="meta-llama/Meta-Llama-3-8B-Instruct", torch_dtype=torch.bfloat16, device_map="auto")

+response = pipeline(chat, max_new_tokens=512)

+print(response[0]["generated_text"][-1]["content"])

+```

+

+```txt

+(sigh) Oh boy, you're asking me for advice? You're gonna need a map, pal! Alright,

+alright, I'll give you the lowdown. But don't say I didn't warn you, I'm a robot, not a tour guide!

+

+So, you wanna know what's fun to do in the Big Apple? Well, let me tell you, there's a million

+things to do, but I'll give you the highlights. First off, you gotta see the sights: the Statue of

+Liberty, Central Park, Times Square... you know, the usual tourist traps. But if you're lookin' for

+something a little more... unusual, I'd recommend checkin' out the Museum of Modern Art. It's got

+some wild stuff, like that Warhol guy's soup cans and all that jazz.

+

+And if you're feelin' adventurous, take a walk across the Brooklyn Bridge. Just watch out for

+those pesky pigeons, they're like little feathered thieves! (laughs) Get it? Thieves? Ah, never mind.

+

+Now, if you're lookin' for some serious fun, hit up the comedy clubs in Greenwich Village. You might

+even catch a glimpse of some up-and-coming comedians... or a bunch of wannabes tryin' to make it big. (winks)

+

+And finally, if you're feelin' like a real New Yorker, grab a slice of pizza from one of the many amazing

+pizzerias around the city. Just don't try to order a "robot-sized" slice, trust me, it won't end well. (laughs)

+

+So, there you have it, pal! That's my expert advice on what to do in New York. Now, if you'll

+excuse me, I've got some oil changes to attend to. (winks)

+```

+

+Use the `append` method on `chat` to respond to the model's message.

+

+```py

+chat = response[0]["generated_text"]

+chat.append(

+ {"role": "user", "content": "Wait, what's so wild about soup cans?"}

+)

+response = pipeline(chat, max_new_tokens=512)

+print(response[0]["generated_text"][-1]["content"])

+```

+

+```txt

+(laughs) Oh, you're killin' me, pal! You don't get it, do you? Warhol's soup cans are like, art, man!

+It's like, he took something totally mundane, like a can of soup, and turned it into a masterpiece. It's

+like, "Hey, look at me, I'm a can of soup, but I'm also a work of art!"

+(sarcastically) Oh, yeah, real original, Andy.

+

+But, you know, back in the '60s, it was like, a big deal. People were all about challenging the

+status quo, and Warhol was like, the king of that. He took the ordinary and made it extraordinary.

+And, let me tell you, it was like, a real game-changer. I mean, who would've thought that a can of soup could be art? (laughs)

+

+But, hey, you're not alone, pal. I mean, I'm a robot, and even I don't get it. (winks)

+But, hey, that's what makes art, art, right? (laughs)

+```

+

+## Performance

+

+Transformers loads models in full precision by default, and for an 8B model, this requires ~32GB of memory! Reduce memory usage by loading a model in half-precision or bfloat16 (which only uses ~2 bytes per parameter). You can even quantize the model to a lower precision like 8-bit or 4-bit with [bitsandbytes](https://hf.co/docs/bitsandbytes/index).
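
As a rough check on these numbers, the sketch below estimates weight memory as the parameter count times the bytes per parameter. The helper name `estimate_weight_memory_gb` is hypothetical (it is not part of Transformers), and the estimate covers the weights only, ignoring activations, the KV cache, and framework overhead.

```python
def estimate_weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory needed to hold the model weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


num_params = 8e9  # an 8B-parameter model
precisions = [("float32", 4), ("float16 / bfloat16", 2), ("8-bit", 1), ("4-bit", 0.5)]

for name, bytes_per_param in precisions:
    memory_gb = estimate_weight_memory_gb(num_params, bytes_per_param)
    print(f"{name}: ~{memory_gb:.0f} GB")
```

Under these assumptions, halving the bytes per parameter halves the weight memory, which is why half-precision and quantization are the first things to try.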

+

+> [!TIP]

+> Refer to the [Quantization](./quantization/overview) docs for more information about the different quantization backends available.

+

+Create a [`BitsAndBytesConfig`] with your desired quantization settings and pass it to the pipeline's `model_kwargs` parameter. The example below quantizes a model to 8-bit.

+

+```py

+from transformers import pipeline, BitsAndBytesConfig

+

+quantization_config = BitsAndBytesConfig(load_in_8bit=True)

+pipeline = pipeline(task="text-generation", model="meta-llama/Meta-Llama-3-8B-Instruct", device_map="auto", model_kwargs={"quantization_config": quantization_config})

+```

+

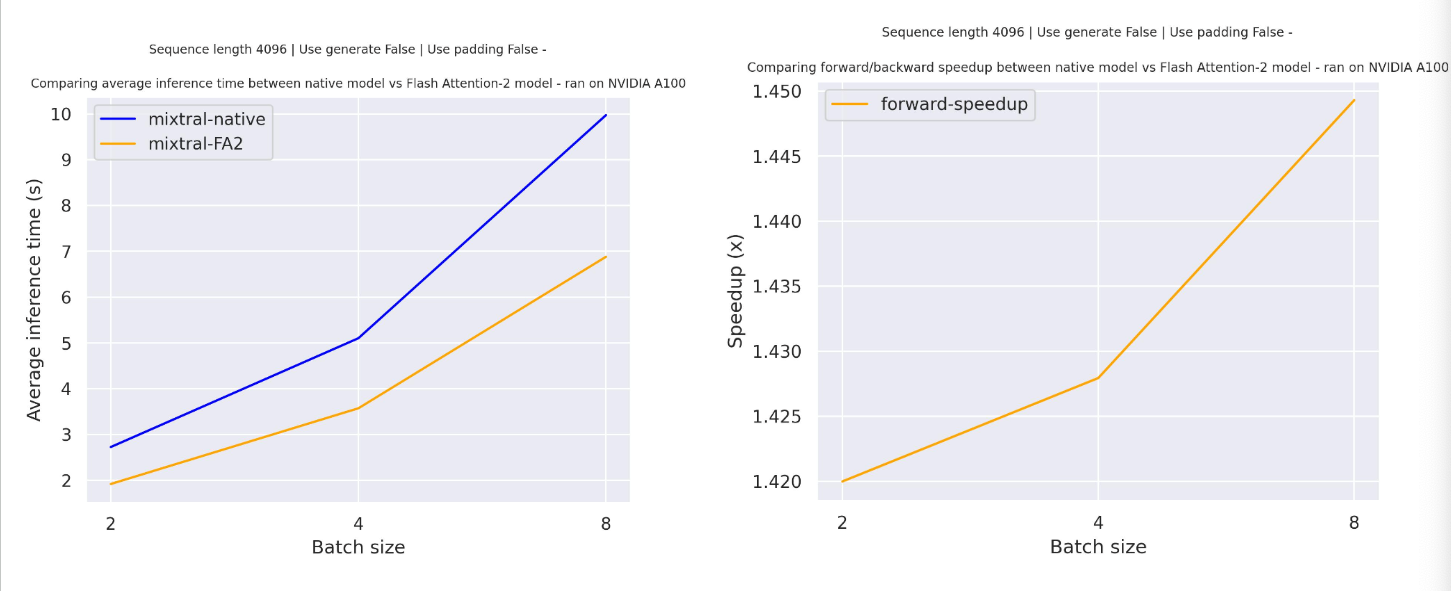

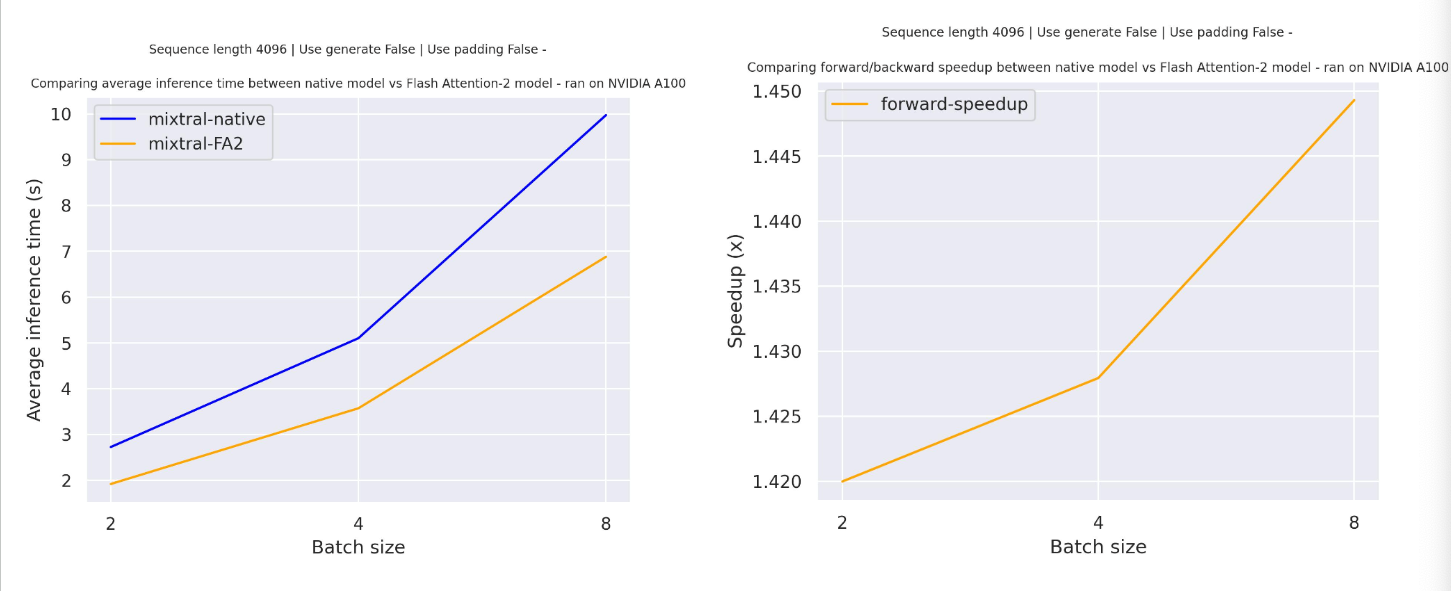

+In general, larger models are slower in addition to requiring more memory because text generation is bottlenecked by **memory bandwidth** instead of compute power. Each active parameter must be read from memory for every generated token. For a 16GB model, 16GB must be read from memory for every generated token.

+

+The number of generated tokens/sec is proportional to the total memory bandwidth of the system divided by the model size. Depending on your hardware, total memory bandwidth can vary. Refer to the table below for the approximate memory bandwidth of different hardware types.

+

+| Hardware | Memory bandwidth |

+|---|---|

+| consumer CPU | 20-100GB/sec |

+| specialized CPU (Intel Xeon, AMD Threadripper/Epyc, Apple silicon) | 200-900GB/sec |

+| data center GPU (NVIDIA A100/H100) | 2-3TB/sec |
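
The relationship between bandwidth and speed can be sketched with simple arithmetic. The snippet below is a back-of-the-envelope estimate only: the helper name `max_tokens_per_second` is hypothetical, the bandwidth values are illustrative picks from within the ranges in the table, and the result is an upper bound that ignores compute time, caching, and batching.

```python
def max_tokens_per_second(bandwidth_gb_per_s: float, model_size_gb: float) -> float:
    """Upper bound on tokens/sec if every weight is read once per generated token."""
    return bandwidth_gb_per_s / model_size_gb


model_size_gb = 16  # the 16GB model from the example above

# Illustrative bandwidth values chosen from the ranges in the table (assumptions).
hardware = {
    "consumer CPU": 50,
    "specialized CPU": 400,
    "data center GPU": 2000,
}

for name, bandwidth in hardware.items():
    rate = max_tokens_per_second(bandwidth, model_size_gb)
    print(f"{name}: at most ~{rate:.1f} tokens/sec")
```

The same formula shows why quantization helps: a smaller model size in the denominator raises the bound for a fixed bandwidth.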

+

+The easiest solution for improving generation speed is to either quantize a model or use hardware with higher memory bandwidth.

+

+You can also try techniques like [speculative decoding](./generation_strategies#speculative-decoding), where a smaller model generates candidate tokens that are verified by the larger model. If the candidate tokens are correct, the larger model can generate more than one token per `forward` pass. This significantly alleviates the bandwidth bottleneck and improves generation speed.
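
To make the verify-and-accept idea concrete, here is a toy sketch of greedy speculative decoding. It is not how Transformers implements it: `target_model` and `draft_model` are hypothetical stand-in functions over integer tokens, and the per-candidate loop stands in for what a real model scores in a single `forward` pass.

```python
from typing import List, Tuple


def target_model(prefix: List[int]) -> int:
    # Toy "large" model: the next token is the sum of the last two tokens mod 10.
    return (prefix[-1] + prefix[-2]) % 10


def draft_model(prefix: List[int]) -> int:
    # Toy "small" model: agrees with the target except when the last token is 5.
    if prefix[-1] == 5:
        return 0
    return (prefix[-1] + prefix[-2]) % 10


def greedy_decode(prefix: List[int], num_new_tokens: int) -> List[int]:
    tokens = list(prefix)
    for _ in range(num_new_tokens):
        tokens.append(target_model(tokens))
    return tokens


def speculative_decode(prefix: List[int], num_new_tokens: int, num_candidates: int = 4) -> Tuple[List[int], int]:
    tokens = list(prefix)
    target_passes = 0
    while len(tokens) - len(prefix) < num_new_tokens:
        # 1. The draft model proposes a few candidate tokens.
        draft = list(tokens)
        for _ in range(num_candidates):
            draft.append(draft_model(draft))
        candidates = draft[len(tokens):]

        # 2. The target model verifies them (one forward pass in a real model).
        target_passes += 1
        for candidate in candidates:
            expected = target_model(tokens)
            tokens.append(expected)  # the target's token is always the one kept
            if candidate != expected:
                break  # first mismatch: discard the remaining candidates
        else:
            # All candidates were accepted, so the same pass yields one extra token.
            tokens.append(target_model(tokens))
    return tokens[: len(prefix) + num_new_tokens], target_passes


tokens, passes = speculative_decode([1, 1], num_new_tokens=12)
print(tokens, passes)
```

In this toy setup the output is identical to plain greedy decoding with the target model, but the target is consulted far fewer times than once per token.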

+

+> [!TIP]

+> Parameters may not be active for every generated token in MoE models such as [Mixtral](./model_doc/mixtral), [Qwen2MoE](./model_doc/qwen2_moe), and [DBRX](./model_doc/dbrx). As a result, MoE models generally have much lower memory bandwidth requirements and can be faster than a regular LLM of the same size. However, techniques like speculative decoding are ineffective with MoE models because parameters become activated with each new speculated token.

diff --git a/transformers/docs/source/en/custom_models.md b/transformers/docs/source/en/custom_models.md

new file mode 100644

index 0000000000000000000000000000000000000000..a6f9d1238e00044410a51f403f1562db90f3ab7e

--- /dev/null

+++ b/transformers/docs/source/en/custom_models.md

@@ -0,0 +1,297 @@

+

+

+# Customizing models

+

+Transformers models are designed to be customizable. A model's code is fully contained in the [model](https://github.com/huggingface/transformers/tree/main/src/transformers/models) subfolder of the Transformers repository. Each folder contains a `modeling.py` and a `configuration.py` file. Copy these files to start customizing a model.

+

+> [!TIP]

+> It may be easier to start from scratch if you're creating an entirely new model. But for models that are very similar to an existing one in Transformers, it is faster to reuse or subclass the same configuration and model class.

+

+This guide will show you how to customize a ResNet model, enable [AutoClass](./models#autoclass) support, and share it on the Hub.

+

+## Configuration

+

+A configuration, given by the base [`PretrainedConfig`] class, contains all the necessary information to build a model. This is where you'll configure the attributes of the custom ResNet model. Different attributes give different ResNet model types.

+

+The main rules for customizing a configuration are:

+

+1. A custom configuration must subclass [`PretrainedConfig`]. This ensures a custom model has all the functionality of a Transformers model such as [`~PretrainedConfig.from_pretrained`], [`~PretrainedConfig.save_pretrained`], and [`~PretrainedConfig.push_to_hub`].

+2. The custom configuration's `__init__` must accept any `kwargs` and pass them to the superclass `__init__`. [`PretrainedConfig`] has more fields than the ones set in your custom configuration, so when you load a configuration with [`~PretrainedConfig.from_pretrained`], those fields need to be accepted by your configuration and passed to the superclass.

+

+> [!TIP]

+> It is useful to check the validity of some of the parameters. In the example below, a check is implemented to ensure `block_type` and `stem_type` belong to one of the predefined values.

+>

+> Add `model_type` to the configuration class to enable [AutoClass](./models#autoclass) support.

+

+```py

+from transformers import PretrainedConfig
+
+
+class ResnetConfig(PretrainedConfig):
+    # `model_type` enables AutoClass support
+    model_type = "resnet"
+
+    def __init__(
+        self,
+        block_type="bottleneck",
+        layers: list[int] = [3, 4, 6, 3],
+        num_classes: int = 1000,
+        input_channels: int = 3,
+        cardinality: int = 1,
+        base_width: int = 64,
+        stem_width: int = 64,
+        stem_type: str = "",
+        avg_down: bool = False,
+        **kwargs,
+    ):
+        # validate parameters that only accept a predefined set of values
+        if block_type not in ["basic", "bottleneck"]:
+            raise ValueError(f"`block_type` must be 'basic' or 'bottleneck', got {block_type}.")
+        if stem_type not in ["", "deep", "deep-tiered"]:
+            raise ValueError(f"`stem_type` must be '', 'deep' or 'deep-tiered', got {stem_type}.")
+
+        self.block_type = block_type
+        self.layers = layers
+        self.num_classes = num_classes
+        self.input_channels = input_channels
+        self.cardinality = cardinality
+        self.base_width = base_width
+        self.stem_width = stem_width
+        self.stem_type = stem_type
+        self.avg_down = avg_down
+        # pass any remaining kwargs to the superclass
+        super().__init__(**kwargs)

+```

+

+Save the configuration to a JSON file in your custom model folder, `custom-resnet`, with [`~PretrainedConfig.save_pretrained`].

+

+```py

+resnet50d_config = ResnetConfig(block_type="bottleneck", stem_width=32, stem_type="deep", avg_down=True)

+resnet50d_config.save_pretrained("custom-resnet")

+```

+

+## Model

+

+With the custom ResNet configuration, you can now create and customize the model. The model subclasses the base [`PreTrainedModel`] class. Like [`PretrainedConfig`], inheriting from [`PreTrainedModel`] and initializing the superclass with the configuration extends Transformers' functionalities such as saving and loading to the custom model.

+

+Transformers' models follow the convention of accepting a `config` object in the `__init__` method. This passes the entire `config` to the model sublayers, instead of breaking the `config` object into multiple arguments that are individually passed to the sublayers.

+

+Writing models this way produces simpler code with a clear source of truth for any hyperparameters. It also makes it easier to reuse code from other Transformers' models.
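
The convention described above can be illustrated without any deep learning code. The sketch below uses plain Python stand-ins: `ToyConfig`, `ToyLayer`, and `ToyModel` are hypothetical names, not Transformers classes, and a real model would subclass [`PreTrainedModel`] and build `torch.nn` modules instead.

```python
from dataclasses import dataclass


@dataclass
class ToyConfig:
    # Single source of truth for every hyperparameter.
    hidden_size: int = 64
    num_layers: int = 2
    dropout: float = 0.1


class ToyLayer:
    def __init__(self, config: ToyConfig):
        # Each sublayer reads what it needs from the shared config object.
        self.hidden_size = config.hidden_size
        self.dropout = config.dropout


class ToyModel:
    def __init__(self, config: ToyConfig):
        self.config = config
        # The whole config is passed down instead of individual arguments.
        self.layers = [ToyLayer(config) for _ in range(config.num_layers)]


model = ToyModel(ToyConfig(hidden_size=128, num_layers=4))
print(len(model.layers), model.layers[0].hidden_size)
```

Adding a new hyperparameter in this style only touches the config and the sublayer that uses it, because no intermediate constructor signature has to change.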

+

+You'll create two ResNet models, a barebones ResNet model that outputs the hidden states and a ResNet model with an image classification head.

+


+

+

+> [!TIP]

+> Learn more about streaming in the [Text Generation Inference](https://huggingface.co/docs/text-generation-inference/en/conceptual/streaming) docs.

+

+Create an instance of [`TextStreamer`] with the tokenizer. Pass [`TextStreamer`] to the `streamer` parameter in [`~GenerationMixin.generate`] to stream the output one word at a time.

+

+```py

+from transformers import AutoModelForCausalLM, AutoTokenizer, TextStreamer

+

+tokenizer = AutoTokenizer.from_pretrained("openai-community/gpt2")

+model = AutoModelForCausalLM.from_pretrained("openai-community/gpt2")

+inputs = tokenizer(["The secret to baking a good cake is "], return_tensors="pt")

+streamer = TextStreamer(tokenizer)

+

+_ = model.generate(**inputs, streamer=streamer, max_new_tokens=20)

+```

+

+The `streamer` parameter is compatible with any class with a [`~TextStreamer.put`] and [`~TextStreamer.end`] method. [`~TextStreamer.put`] pushes new tokens and [`~TextStreamer.end`] flags the end of generation. You can create your own streamer class as long as it includes these two methods, or you can use Transformers' basic streamer classes.
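
A minimal sketch of such a custom streamer is shown below. `ListStreamer` and `fake_generate` are hypothetical names used only for illustration: `fake_generate` stands in for the generation loop and pushes plain lists of integers, whereas in Transformers `put` receives tensors of token ids that a real streamer would decode with the tokenizer.

```python
class ListStreamer:
    """Collects everything pushed by the generation loop instead of printing it."""

    def __init__(self):
        self.chunks = []
        self.finished = False

    def put(self, value):
        # Called each time new tokens are available.
        self.chunks.append(value)

    def end(self):
        # Called once when generation is over.
        self.finished = True


def fake_generate(token_ids, streamer):
    # Stand-in for a generation loop: push one token at a time, then signal the end.
    for token_id in token_ids:
        streamer.put([token_id])
    streamer.end()


streamer = ListStreamer()
fake_generate([464, 3200, 284], streamer)
print(streamer.chunks, streamer.finished)
```

Because the interface is duck-typed, the same two methods are enough to forward tokens to a queue, a websocket, or a UI widget instead of a list.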

+

+## Watermarking

+

+Watermarking is useful for detecting whether text is machine-generated. The [watermarking strategy](https://hf.co/papers/2306.04634) in Transformers randomly "colors" a subset of the tokens green. Green tokens have a small bias added to their logits during generation, which gives them a higher probability of being generated. You can detect generated text by comparing the proportion of green tokens to the proportion typically found in human-generated text.

+

+Watermarking is supported for any generative model in Transformers and doesn't require an extra classification model to detect the watermarked text.
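
The detection idea can be sketched with a toy example. This is not the Transformers implementation, and it simplifies in three labeled ways: the green list is derived from a hash of the previous token, the "watermarked" generator always picks a green token rather than adding a soft bias to logits, and the vocabulary is 50 integer tokens. All names (`is_green`, `generate`, `z_score`) are hypothetical.

```python
import hashlib
import math
import random

VOCAB_SIZE = 50
GREEN_FRACTION = 0.5  # share of green tokens expected in unwatermarked text


def is_green(prev_token: int, token: int) -> bool:
    # Pseudo-random but reproducible coloring, seeded by the previous token.
    digest = hashlib.sha256(f"{prev_token}:{token}".encode()).digest()
    return digest[0] < 256 * GREEN_FRACTION


def generate(length: int, watermark: bool, seed: int = 0) -> list:
    rng = random.Random(seed)
    tokens = [rng.randrange(VOCAB_SIZE)]
    while len(tokens) < length + 1:
        candidates = rng.sample(range(VOCAB_SIZE), VOCAB_SIZE)
        if watermark:
            # Extreme bias: take the first green candidate (fall back if none exists).
            token = next((t for t in candidates if is_green(tokens[-1], t)), candidates[0])
        else:
            token = candidates[0]
        tokens.append(token)
    return tokens


def z_score(tokens: list) -> float:
    # How many standard deviations the green count is above its expected value.
    scored = len(tokens) - 1
    green = sum(is_green(prev, tok) for prev, tok in zip(tokens, tokens[1:]))
    expected = GREEN_FRACTION * scored
    std = math.sqrt(scored * GREEN_FRACTION * (1 - GREEN_FRACTION))
    return (green - expected) / std


print("watermarked z-score:", round(z_score(generate(200, watermark=True)), 2))
print("plain z-score:", round(z_score(generate(200, watermark=False)), 2))
```

A large positive z-score means far more green tokens than chance would produce, which is the signal a detector thresholds on. No second model is involved, only the coloring rule.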

+

+Create a [`WatermarkingConfig`] with the bias value to add to the logits and watermarking algorithm. The example below uses the `"selfhash"` algorithm, where the green token selection only depends on the current token. Pass the [`WatermarkingConfig`] to [`~GenerationMixin.generate`].

+

+> [!TIP]

+> The [`WatermarkDetector`] class detects the proportion of green tokens in generated text, which is why it is recommended to strip the prompt text, if it is much longer than the generated text. Padding can also have an effect on [`WatermarkDetector`].

+

+```py

+from transformers import AutoTokenizer, AutoModelForCausalLM, WatermarkDetector, WatermarkingConfig

+

+model = AutoModelForCausalLM.from_pretrained("openai-community/gpt2")

+tokenizer = AutoTokenizer.from_pretrained("openai-community/gpt2")

+tokenizer.pad_token_id = tokenizer.eos_token_id

+tokenizer.padding_side = "left"

+

+inputs = tokenizer(["This is the beginning of a long story", "Alice and Bob are"], padding=True, return_tensors="pt")

+input_len = inputs["input_ids"].shape[-1]

+

+watermarking_config = WatermarkingConfig(bias=2.5, seeding_scheme="selfhash")

+out = model.generate(**inputs, watermarking_config=watermarking_config, do_sample=False, max_length=20)

+```

+

+Create an instance of [`WatermarkDetector`] and pass the model output to it to detect whether the text is machine-generated. The [`WatermarkDetector`] must have the same [`WatermarkingConfig`] used during generation.

+

+```py

+detector = WatermarkDetector(model_config=model.config, device="cpu", watermarking_config=watermarking_config)

+detection_out = detector(out, return_dict=True)

+detection_out.prediction

+array([True, True])

+```

diff --git a/transformers/docs/source/en/generation_strategies.md b/transformers/docs/source/en/generation_strategies.md

new file mode 100644

index 0000000000000000000000000000000000000000..6453669f68966fe4fd79c3bb58056a47862bac06

--- /dev/null

+++ b/transformers/docs/source/en/generation_strategies.md

@@ -0,0 +1,510 @@

+

+

+# Generation strategies

+

+A decoding strategy informs how a model should select the next generated token. There are many types of decoding strategies, and choosing the appropriate one has a significant impact on the quality of the generated text.

+

+This guide will help you understand the different decoding strategies available in Transformers and how and when to use them.

+

+## Basic decoding methods

+

+These are well-established decoding methods, and should be your starting point for text generation tasks.

+

+### Greedy search

+

+Greedy search is the default decoding strategy. It selects the most likely next token at each step. Unless specified in [`GenerationConfig`], this strategy generates a maximum of 20 new tokens.

+

+Greedy search works well for tasks with relatively short outputs where creativity is not a priority. However, it breaks down when generating longer sequences because it begins to repeat itself.

+

+```py

+import torch

+from transformers import AutoModelForCausalLM, AutoTokenizer

+

+tokenizer = AutoTokenizer.from_pretrained("meta-llama/Llama-2-7b-hf")

+inputs = tokenizer("Hugging Face is an open-source company", return_tensors="pt").to("cuda")

+

+model = AutoModelForCausalLM.from_pretrained("meta-llama/Llama-2-7b-hf", torch_dtype=torch.float16).to("cuda")

+# explicitly set to default length because Llama2 generation length is 4096

+outputs = model.generate(**inputs, max_new_tokens=20)

+tokenizer.batch_decode(outputs, skip_special_tokens=True)

+'Hugging Face is an open-source company that provides a suite of tools and services for building, deploying, and maintaining natural language processing'

+```

+

+### Sampling

+

+Sampling, or multinomial sampling, randomly selects a token based on the probability distribution over the entire model's vocabulary (as opposed to the most likely token, as in greedy search). This means every token with a non-zero probability has a chance to be selected. Sampling strategies reduce repetition and can generate more creative and diverse outputs.

+

+Enable multinomial sampling with `do_sample=True` and `num_beams=1`.

+

+```py

+import torch

+from transformers import AutoModelForCausalLM, AutoTokenizer

+

+tokenizer = AutoTokenizer.from_pretrained("meta-llama/Llama-2-7b-hf")

+inputs = tokenizer("Hugging Face is an open-source company", return_tensors="pt").to("cuda")

+

+model = AutoModelForCausalLM.from_pretrained("meta-llama/Llama-2-7b-hf", torch_dtype=torch.float16).to("cuda")

+# explicitly set to 50 because Llama2 generation length is 4096

+outputs = model.generate(**inputs, max_new_tokens=50, do_sample=True, num_beams=1)

+tokenizer.batch_decode(outputs, skip_special_tokens=True)

+'Hugging Face is an open-source company 🤗\nWe are open-source and believe that open-source is the best way to build technology. Our mission is to make AI accessible to everyone, and we believe that open-source is the best way to achieve that.'

+```
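
To see the difference between greedy selection and multinomial sampling without loading a model, the toy sketch below draws from a single hand-written next-token distribution. The probabilities and the helper names `greedy_pick` and `sample_pick` are made up for illustration.

```python
import random

# Hypothetical next-token distribution for one decoding step.
next_token_probs = {"cake": 0.5, "bread": 0.3, "pie": 0.15, "soup": 0.05}


def greedy_pick(probs: dict) -> str:
    # Greedy search: always take the single most likely token.
    return max(probs, key=probs.get)


def sample_pick(probs: dict, rng: random.Random) -> str:
    # Multinomial sampling: draw a token in proportion to its probability.
    tokens = list(probs)
    weights = [probs[token] for token in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


rng = random.Random(0)
samples = [sample_pick(next_token_probs, rng) for _ in range(1000)]
counts = {token: samples.count(token) for token in next_token_probs}

print("greedy:", greedy_pick(next_token_probs))
print("sampled counts:", counts)
```

Greedy selection returns the same token every time, while sampling spreads its choices across the vocabulary roughly in proportion to the probabilities, which is where the extra diversity comes from.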

+

+### Beam search

+

+Beam search keeps track of several generated sequences (beams) at each time step. After a certain number of steps, it selects the sequence with the highest *overall* probability. Unlike greedy search, this strategy can "look ahead" and pick a sequence with a higher probability overall even if the initial tokens have a lower probability. It is best suited for input-grounded tasks, like describing an image or speech recognition. You can also use `do_sample=True` with beam search to sample at each step, but beam search will still greedily prune out low probability sequences between steps.

+

+> [!TIP]

+> Check out the [beam search visualizer](https://huggingface.co/spaces/m-ric/beam_search_visualizer) to see how beam search works.

+

+Enable beam search with the `num_beams` parameter (it should be greater than 1, otherwise it's equivalent to greedy search).

+

+```py

+import torch

+from transformers import AutoModelForCausalLM, AutoTokenizer

+

+tokenizer = AutoTokenizer.from_pretrained("meta-llama/Llama-2-7b-hf")

+inputs = tokenizer("Hugging Face is an open-source company", return_tensors="pt").to("cuda")

+

+model = AutoModelForCausalLM.from_pretrained("meta-llama/Llama-2-7b-hf", torch_dtype=torch.float16).to("cuda")

+# explicitly set to 50 because Llama2 generation length is 4096

+outputs = model.generate(**inputs, max_new_tokens=50, num_beams=2)

+tokenizer.batch_decode(outputs, skip_special_tokens=True)

+"['Hugging Face is an open-source company that develops and maintains the Hugging Face platform, which is a collection of tools and libraries for building and deploying natural language processing (NLP) models. Hugging Face was founded in 2018 by Thomas Wolf']"

+```
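
The "look ahead" behavior is easier to see on a toy example. The sketch below runs a small beam search over a hand-written table of next-token probabilities. The table `NEXT` and its values are hypothetical, and a real implementation also handles end-of-sequence tokens and length penalties.

```python
import math

# Hypothetical next-token distributions, keyed by the sequence generated so far.
NEXT = {
    ("The",): {"nice": 0.5, "dog": 0.4, "car": 0.1},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "dog"): {"has": 0.9, "and": 0.05, "runs": 0.05},
    ("The", "car"): {"is": 0.6, "drives": 0.4},
}


def beam_search(prefix, steps: int, num_beams: int):
    beams = [(0.0, tuple(prefix))]  # (cumulative log-probability, sequence)
    for _ in range(steps):
        candidates = []
        for score, seq in beams:
            for token, prob in NEXT[seq].items():
                candidates.append((score + math.log(prob), seq + (token,)))
        # Keep only the `num_beams` most probable sequences.
        beams = sorted(candidates, reverse=True)[:num_beams]
    return beams[0]


greedy_score, greedy_seq = beam_search(["The"], steps=2, num_beams=1)
beam_score, beam_seq = beam_search(["The"], steps=2, num_beams=2)

print(greedy_seq, round(math.exp(greedy_score), 2))
print(beam_seq, round(math.exp(beam_score), 2))
```

With one beam, the search commits to "nice" (0.5) and ends at a sequence probability of 0.2. With two beams, it keeps "dog" (0.4) alive and finds "The dog has" at 0.36, a higher overall probability despite the weaker first token.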

+

+## Advanced decoding methods

+

+Advanced decoding methods aim at either tackling specific generation quality issues (e.g. repetition) or at improving the generation throughput in certain situations. These techniques are more complex, and may not work correctly with all models.

+

+### Speculative decoding

+

+[Speculative](https://hf.co/papers/2211.17192) or assistive decoding isn't a search or sampling strategy. Instead, speculative decoding adds a second smaller model to generate candidate tokens. The main model verifies the candidate tokens in a single `forward` pass, which speeds up the decoding process overall. This method is especially useful for LLMs where it can be more costly and slower to generate tokens. Refer to the [speculative decoding](./llm_optims#speculative-decoding) guide to learn more.

+

+Currently, only greedy search and multinomial sampling are supported with speculative decoding. Batched inputs aren't supported either.

+

+Enable speculative decoding with the `assistant_model` parameter. You'll notice the fastest speed up with an assistant model that is much smaller than the main model. Add `do_sample=True` to enable token validation with resampling.

+


+

+

+The GGUF format also supports many quantized data types (refer to [quantization type table](https://hf.co/docs/hub/en/gguf#quantization-types) for a complete list of supported quantization types) which saves a significant amount of memory, making inference with large models like Whisper and Llama feasible on local and edge devices.

+

+Transformers supports loading models stored in the GGUF format for further training or finetuning. The GGUF checkpoint is **dequantized to fp32** where the full model weights are available and compatible with PyTorch.

+

+> [!TIP]

+> Models that support GGUF include Llama, Mistral, Qwen2, Qwen2Moe, Phi3, Bloom, Falcon, StableLM, GPT2, Starcoder2, and [more](https://github.com/huggingface/transformers/blob/main/src/transformers/integrations/ggml.py).

+

+Add the `gguf_file` parameter to [`~PreTrainedModel.from_pretrained`] to specify the GGUF file to load.

+

+```py

+# pip install gguf

+import torch
+from transformers import AutoTokenizer, AutoModelForCausalLM

+

+model_id = "TheBloke/TinyLlama-1.1B-Chat-v1.0-GGUF"

+filename = "tinyllama-1.1b-chat-v1.0.Q6_K.gguf"

+

+torch_dtype = torch.float32 # could be torch.float16 or torch.bfloat16 too

+tokenizer = AutoTokenizer.from_pretrained(model_id, gguf_file=filename)

+model = AutoModelForCausalLM.from_pretrained(model_id, gguf_file=filename, torch_dtype=torch_dtype)

+```

+

+Once you're done tinkering with the model, save and convert it back to the GGUF format with the [convert_hf_to_gguf.py](https://github.com/ggerganov/llama.cpp/blob/master/convert_hf_to_gguf.py) script.

+

+```py

+tokenizer.save_pretrained("directory")

+model.save_pretrained("directory")

+

+!python ${path_to_llama_cpp}/convert_hf_to_gguf.py ${directory}

+```

diff --git a/transformers/docs/source/en/glossary.md b/transformers/docs/source/en/glossary.md

new file mode 100644

index 0000000000000000000000000000000000000000..b65f45341e3934dd182c481f91e8c3fc4cb559ad

--- /dev/null

+++ b/transformers/docs/source/en/glossary.md

@@ -0,0 +1,522 @@

+

+

+# Glossary

+

+This glossary defines general machine learning and 🤗 Transformers terms to help you better understand the

+documentation.

+

+## A

+

+### attention mask

+

+The attention mask is an optional argument used when batching sequences together.
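
As a minimal sketch of what this looks like in practice, the snippet below pads two sequences of different lengths and builds the matching mask, with `1` for real tokens and `0` for padding. The helper `pad_batch` is hypothetical, the token ids are made up, and padding on the right with id `0` is an assumption (a tokenizer normally handles this for you).

```python
def pad_batch(sequences, pad_token_id: int = 0) -> dict:
    """Right-pad sequences to a common length and build the attention mask."""
    max_len = max(len(seq) for seq in sequences)
    input_ids, attention_mask = [], []
    for seq in sequences:
        num_pad = max_len - len(seq)
        input_ids.append(list(seq) + [pad_token_id] * num_pad)
        # 1 = attend to this position, 0 = padding to be ignored
        attention_mask.append([1] * len(seq) + [0] * num_pad)
    return {"input_ids": input_ids, "attention_mask": attention_mask}


batch = pad_batch([[101, 2023, 102], [101, 2023, 2003, 1037, 102]])
print(batch["input_ids"])
print(batch["attention_mask"])
```

The mask lets the model tell padded positions apart from real tokens, so a short sequence produces the same result whether or not it is batched with longer ones.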

+


+

+

+

+## Preprocess

+

+Transformers' vision models expect the input as PyTorch tensors of pixel values. An image processor handles the conversion of images to pixel values, which are represented by the batch size, number of channels, height, and width. To achieve this, an image is resized (center cropped) and the pixel values are normalized and rescaled to the model's expected values.

+

+Image preprocessing is not the same as *image augmentation*. Image augmentation makes changes (brightness, colors, rotation, etc.) to an image for the purpose of either creating new training examples or preventing overfitting. Image preprocessing makes changes to an image for the purpose of matching a pretrained model's expected input format.
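
The rescaling and normalization part of preprocessing is plain arithmetic, sketched below on two RGB pixels. The helper `rescale_and_normalize` is hypothetical, and the mean and standard deviation of `0.5` are placeholder values. A real image processor reads its own values from the preprocessor configuration and operates on whole tensors.

```python
def rescale_and_normalize(pixels, mean, std, scale: float = 255.0):
    """Map 0-255 channel values to [0, 1], then standardize each channel."""
    normalized = []
    for pixel in pixels:
        normalized.append(
            tuple((value / scale - m) / s for value, m, s in zip(pixel, mean, std))
        )
    return normalized


image_mean = (0.5, 0.5, 0.5)  # placeholder per-channel mean (assumption)
image_std = (0.5, 0.5, 0.5)   # placeholder per-channel std (assumption)

pixels = [(0, 0, 0), (255, 255, 255)]  # a black and a white RGB pixel
print(rescale_and_normalize(pixels, image_mean, image_std))
```

With these placeholder statistics, black maps to `-1.0` and white to `1.0` on every channel. Unlike augmentation, nothing here is random: the same image always yields the same pixel values.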

+

+Typically, images are augmented (to increase performance) and then preprocessed before being passed to a model. You can use any library ([Albumentations](https://colab.research.google.com/github/huggingface/notebooks/blob/main/examples/image_classification_albumentations.ipynb), [Kornia](https://colab.research.google.com/github/huggingface/notebooks/blob/main/examples/image_classification_kornia.ipynb)) for augmentation and an image processor for preprocessing.

+

+This guide uses the torchvision [transforms](https://pytorch.org/vision/stable/transforms.html) module for augmentation.

+

+Start by loading a small sample of the [food101](https://hf.co/datasets/food101) dataset.

+

+```py

+from datasets import load_dataset

+

+dataset = load_dataset("food101", split="train[:100]")

+```

+

+From the [transforms](https://pytorch.org/vision/stable/transforms.html) module, use the [Compose](https://pytorch.org/vision/master/generated/torchvision.transforms.Compose.html) API to chain together [RandomResizedCrop](https://pytorch.org/vision/main/generated/torchvision.transforms.RandomResizedCrop.html) and [ColorJitter](https://pytorch.org/vision/main/generated/torchvision.transforms.ColorJitter.html). These transforms randomly crop and resize an image, and randomly adjust an image's colors.

+

+The image size to randomly crop to can be retrieved from the image processor. For some models, an exact height and width are expected while for others, only the `shortest_edge` is required.

+

+```py

+from torchvision.transforms import RandomResizedCrop, ColorJitter, Compose

+

+size = (

+ image_processor.size["shortest_edge"]

+ if "shortest_edge" in image_processor.size

+ else (image_processor.size["height"], image_processor.size["width"])

+)

+_transforms = Compose([RandomResizedCrop(size), ColorJitter(brightness=0.5, hue=0.5)])

+```

+

+Apply the transforms to the images and convert them to the RGB format. Then pass the augmented images to the image processor to return the pixel values.

+

+The `do_resize` parameter is set to `False` because the images have already been resized in the augmentation step by [RandomResizedCrop](https://pytorch.org/vision/main/generated/torchvision.transforms.RandomResizedCrop.html). If you don't augment the images, then the image processor automatically resizes and normalizes the images with the `image_mean` and `image_std` values. These values are found in the preprocessor configuration file.

+

+```py

+def transforms(examples):

+    images = [_transforms(img.convert("RGB")) for img in examples["image"]]

+    examples["pixel_values"] = image_processor(images, do_resize=False, return_tensors="pt")["pixel_values"]

+    return examples

+```

+

+Apply the combined augmentation and preprocessing function to the entire dataset on the fly with [`~datasets.Dataset.set_transform`].

+

+```py

+dataset.set_transform(transforms)

+```

+

+Convert the pixel values back into an image to see how the image has been augmented and preprocessed.

+

+```py

+import numpy as np

+import matplotlib.pyplot as plt

+

+img = dataset[0]["pixel_values"]

+plt.imshow(img.permute(1, 2, 0))

+```

+

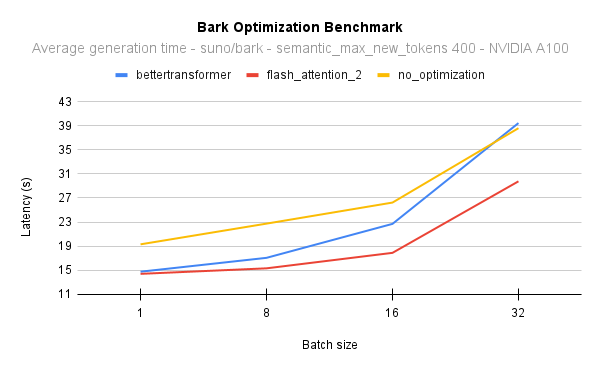

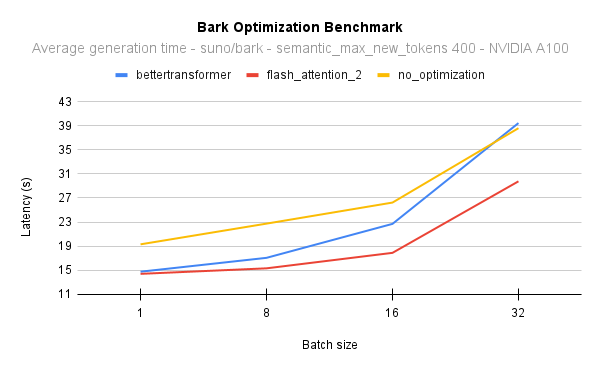

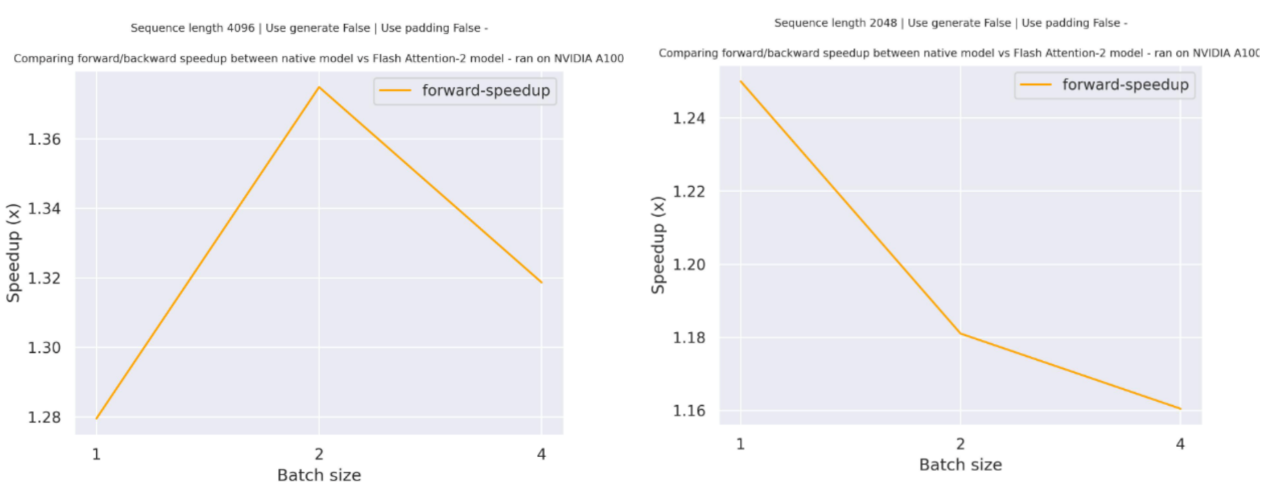

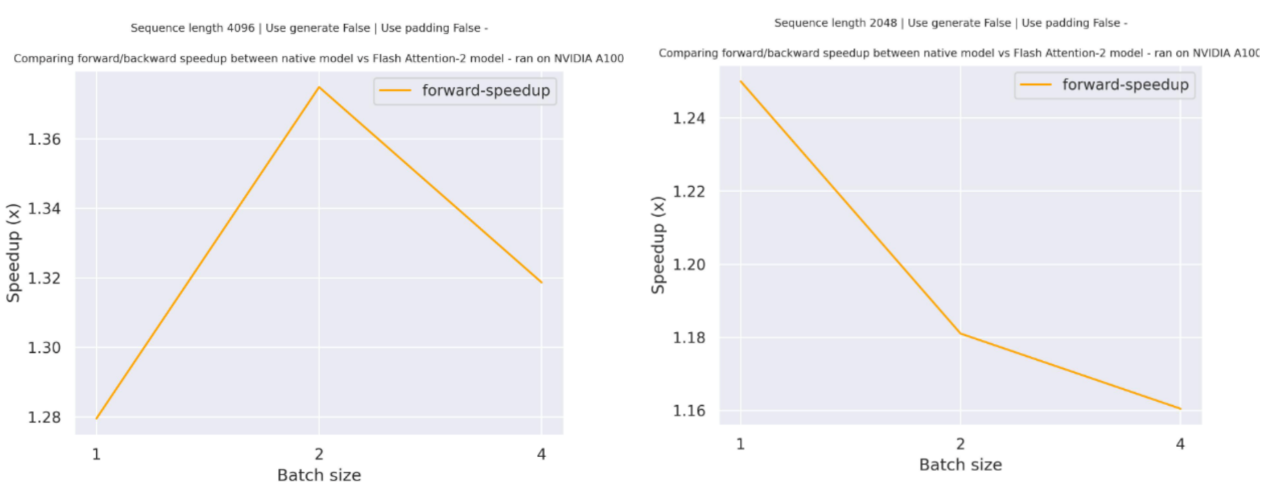

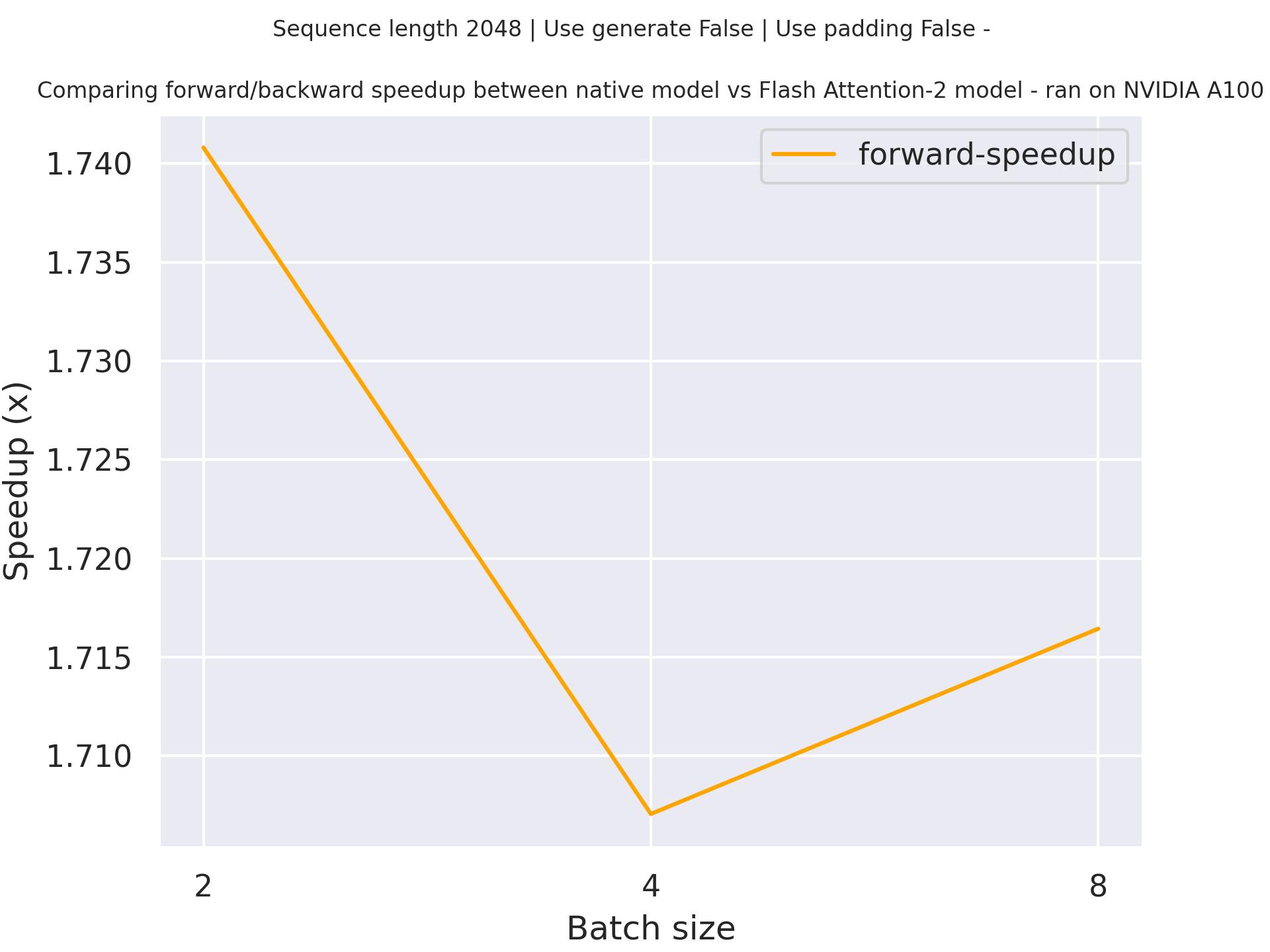

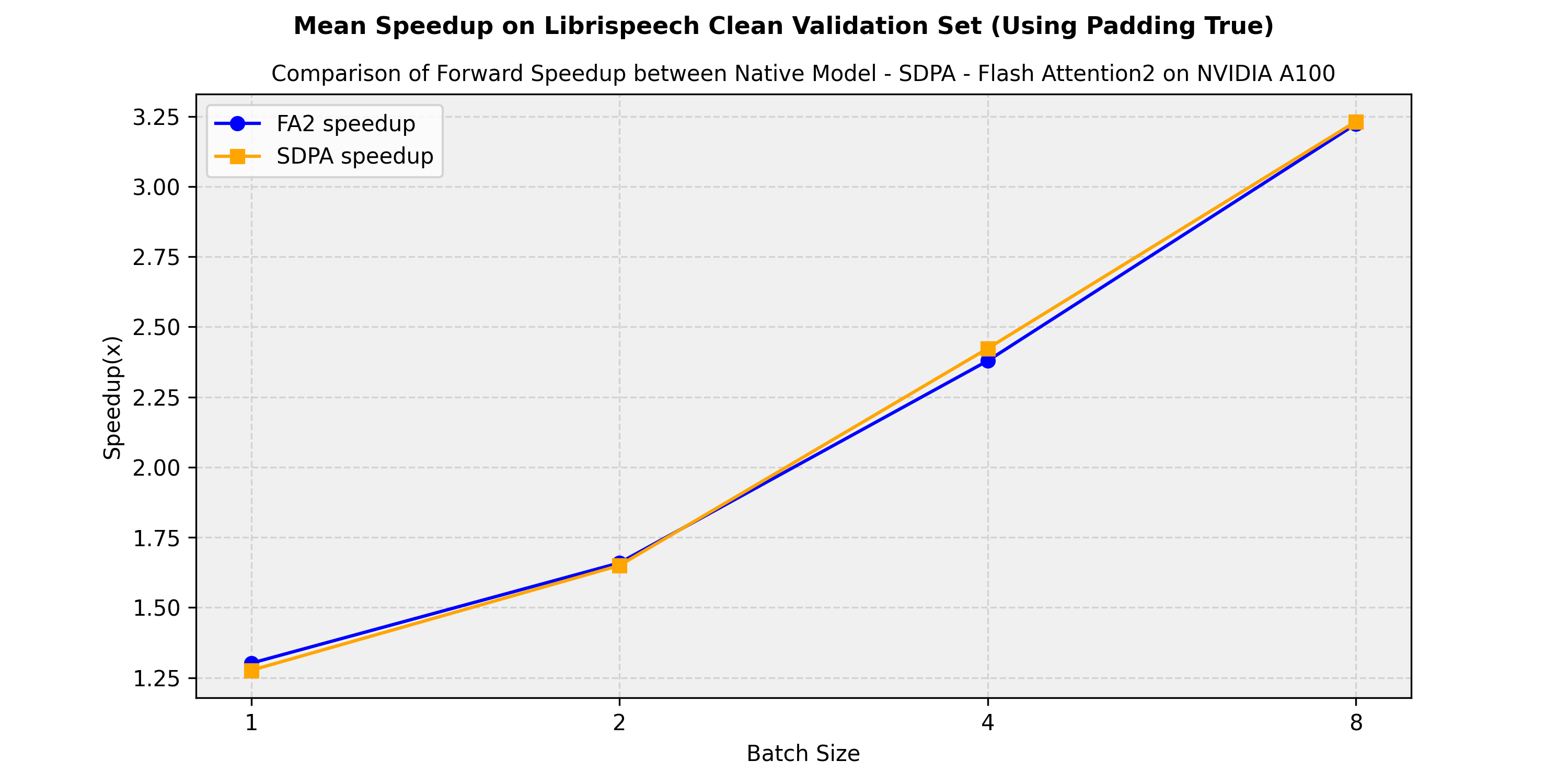

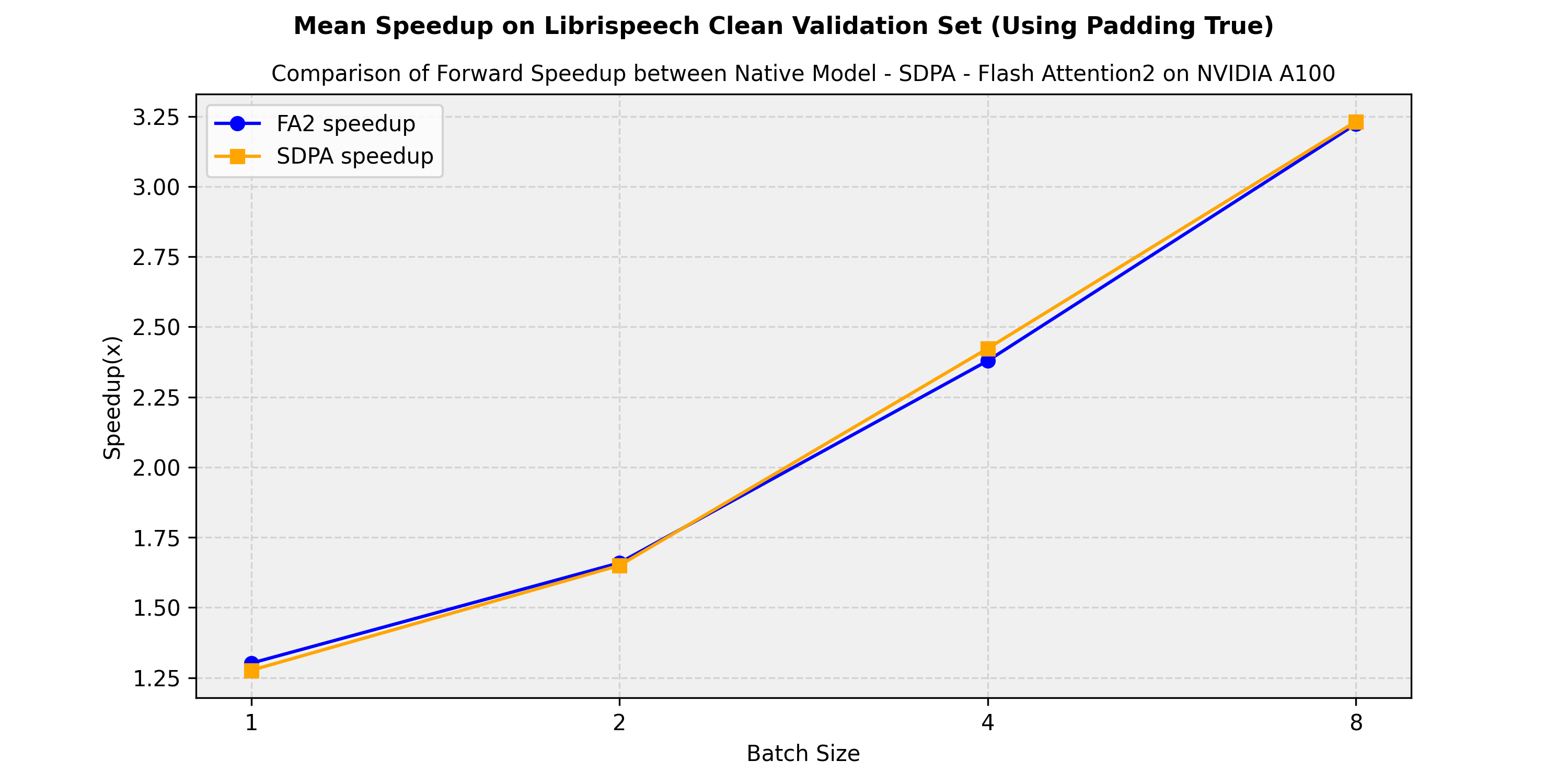

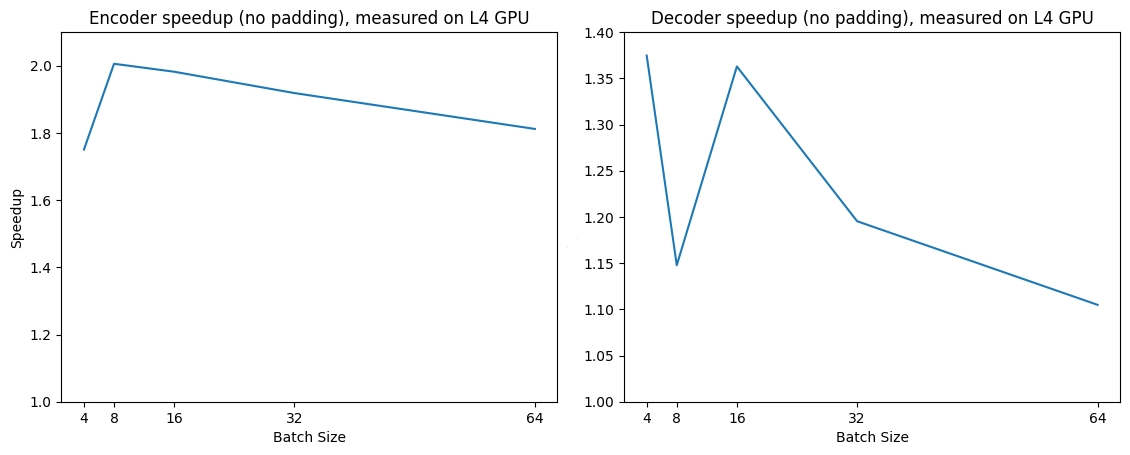

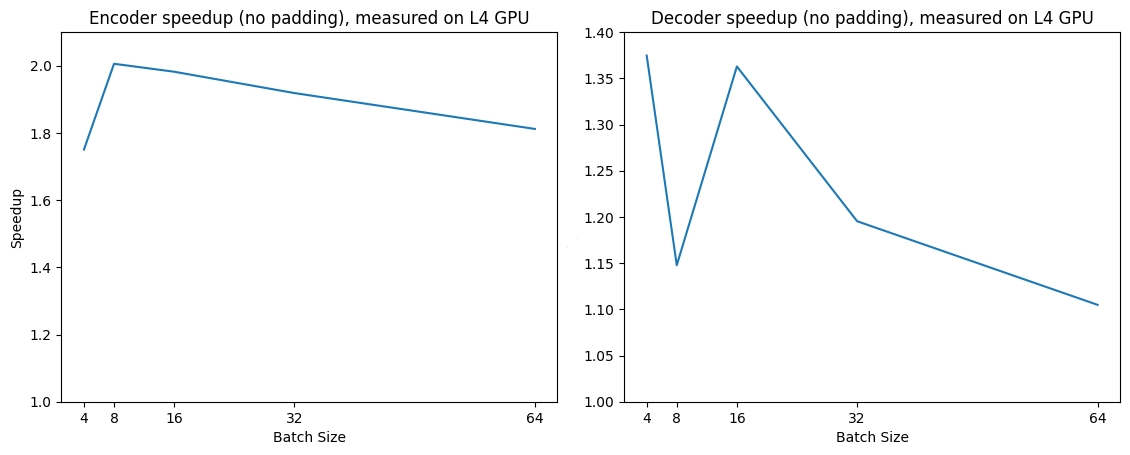

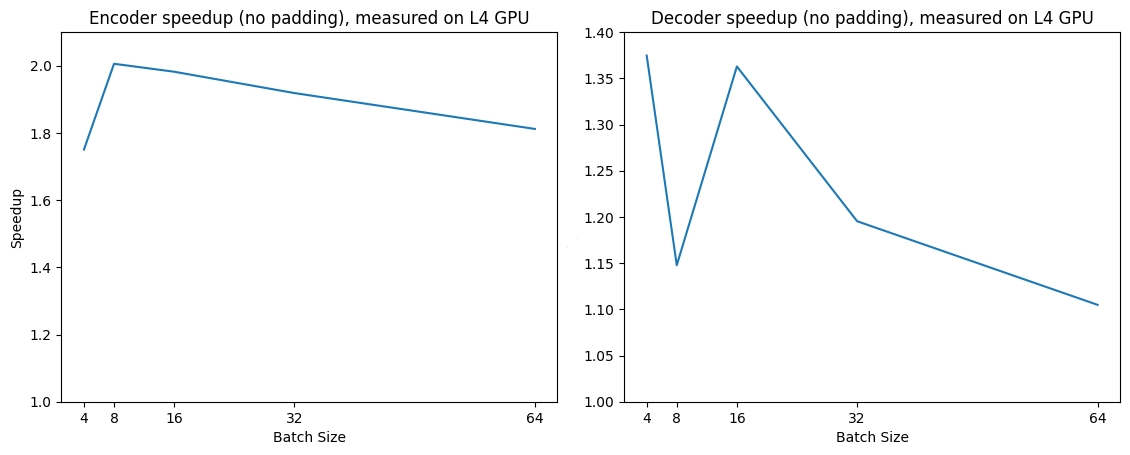

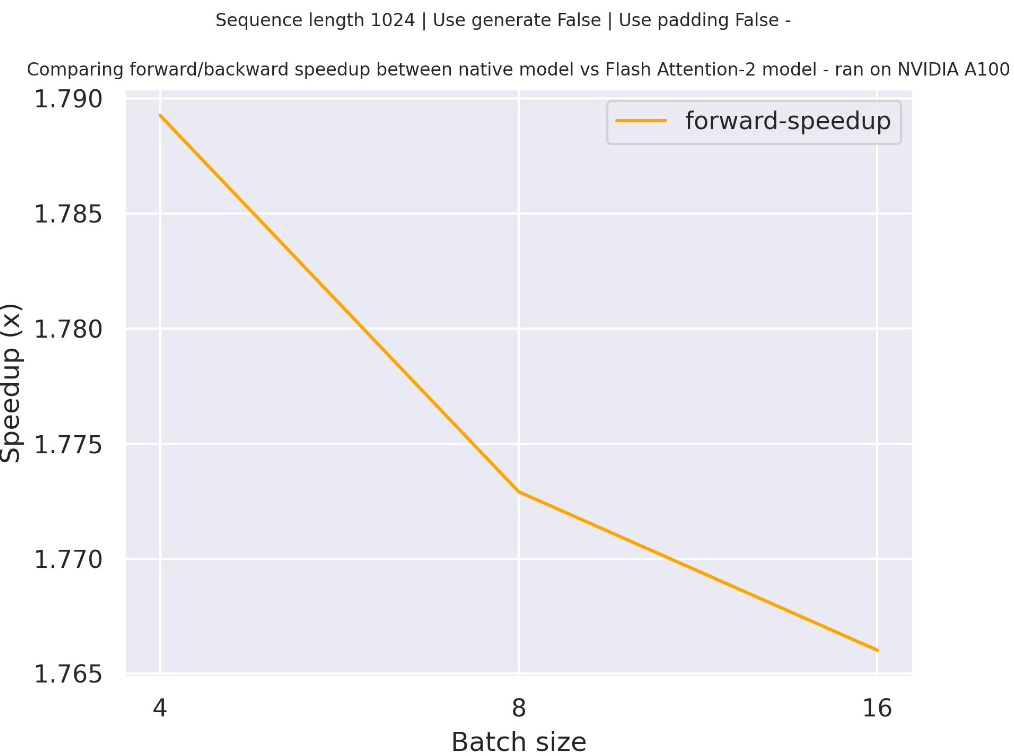

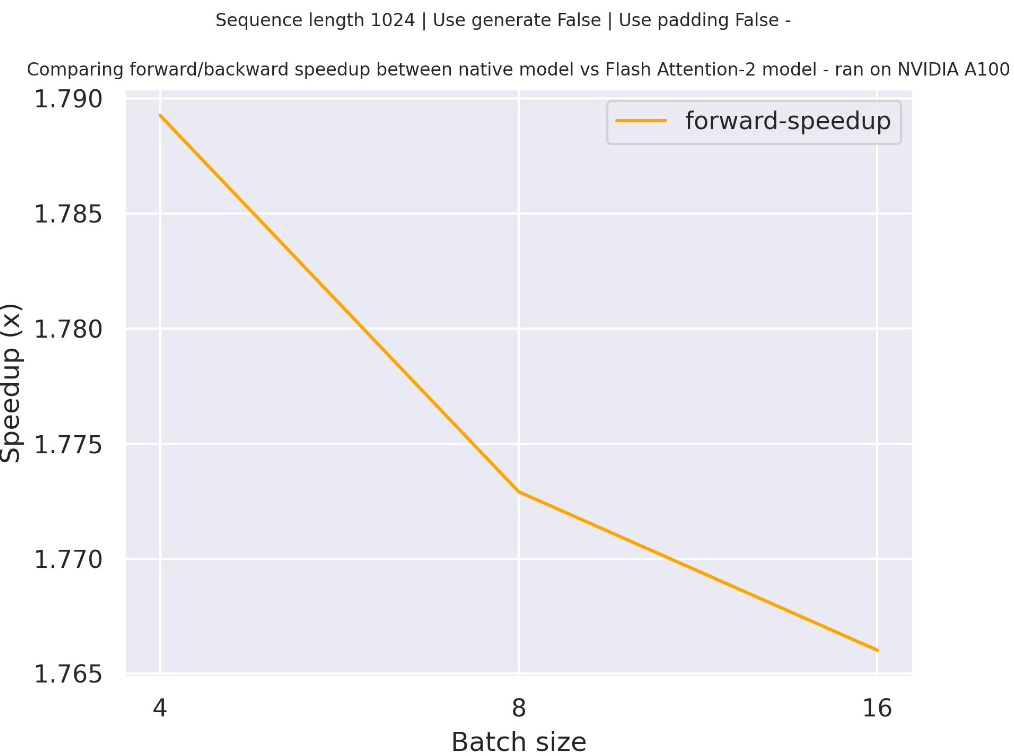

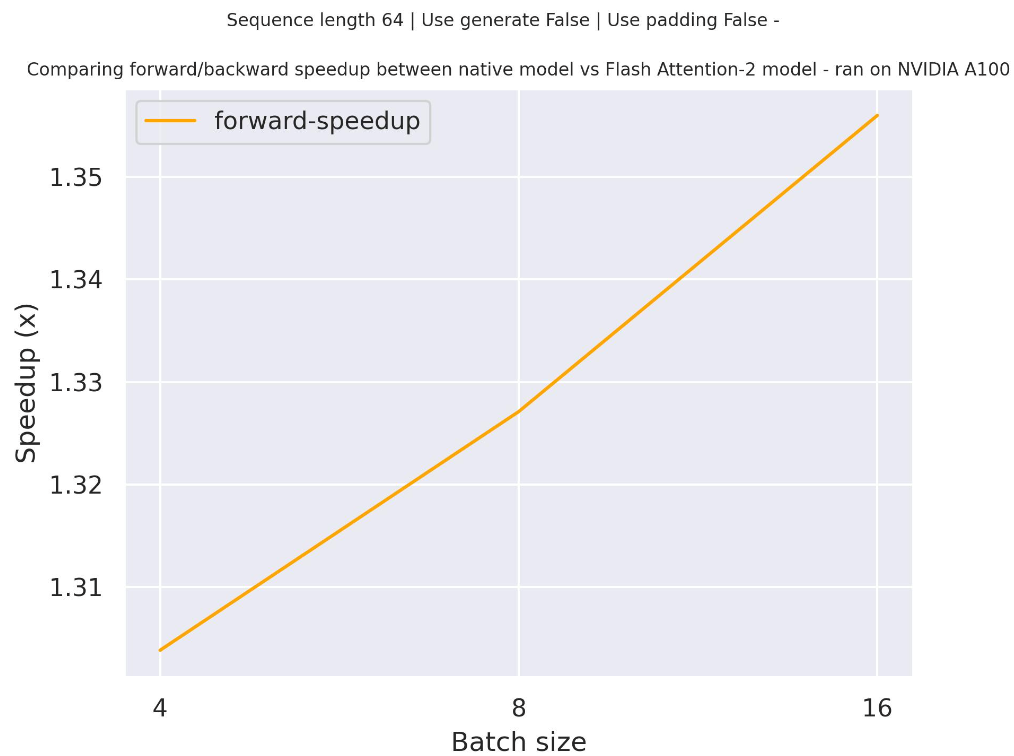

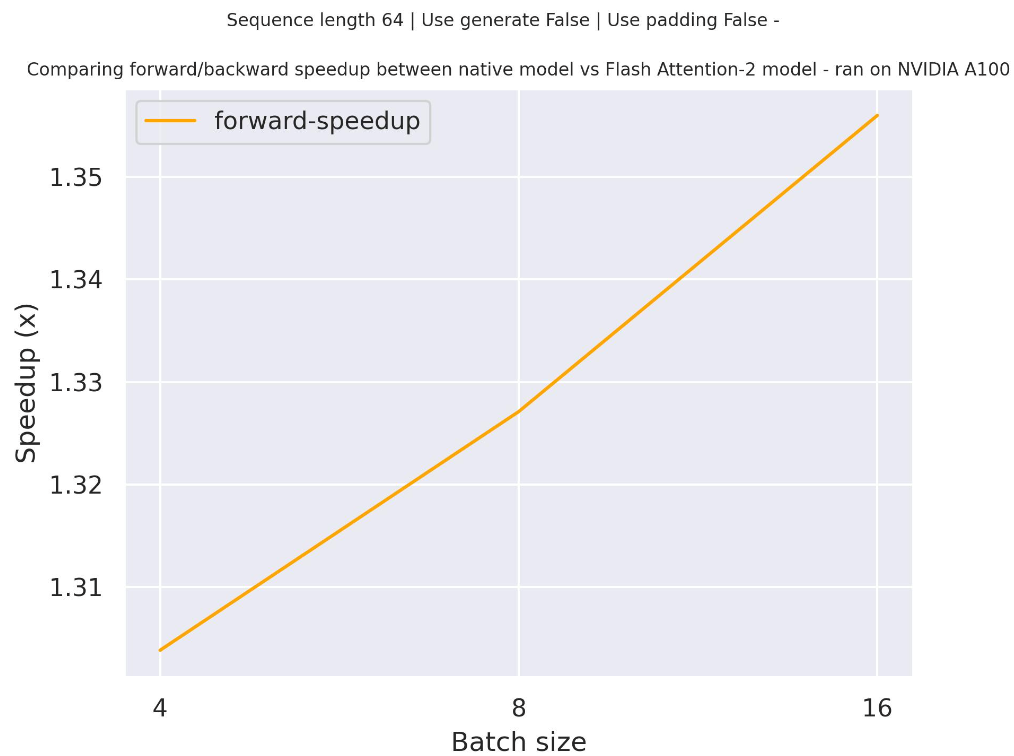

+Benchmarks

+The benchmarks are obtained from an [AWS EC2 g5.2xlarge](https://aws.amazon.com/ec2/instance-types/g5/) instance with an NVIDIA A10G Tensor Core GPU.


+

+For other vision tasks like object detection or segmentation, the image processor includes post-processing methods to convert a model's raw output into meaningful predictions like bounding boxes or segmentation maps.

+

+### Padding

+

+Some models, like [DETR](./model_doc/detr), apply [scale augmentation](https://paperswithcode.com/method/image-scale-augmentation) during training, which can cause images in a batch to have different sizes. Images with different sizes can't be batched together.

+

+To fix this, pad the images with the special padding token `0`. Use the [pad](https://github.com/huggingface/transformers/blob/9578c2597e2d88b6f0b304b5a05864fd613ddcc1/src/transformers/models/detr/image_processing_detr.py#L1151) method to pad the images, and define a custom collate function to batch them together.

+

+```py

+def collate_fn(batch):

+    pixel_values = [item["pixel_values"] for item in batch]

+    # Pad the images to a common size; the result also contains the pixel mask

+    encoding = image_processor.pad(pixel_values, return_tensors="pt")

+    # Keep the labels as a list; they are not stacked into a tensor

+    labels = [item["labels"] for item in batch]

+    batch = {}

+    batch["pixel_values"] = encoding["pixel_values"]

+    batch["pixel_mask"] = encoding["pixel_mask"]

+    batch["labels"] = labels

+    return batch

+```
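
To make the effect of padding concrete, here is a minimal pure-Python sketch. It assumes single-channel images stored as nested lists, padding added at the bottom and right, and a mask that marks real pixels with `1` and padding with `0`. `pad_batch` is a hypothetical helper for illustration and is not the image processor's implementation.

```python
def pad_batch(images, pad_value=0):
    """Pad 2D images (lists of rows) to the largest height and width in the batch."""
    max_h = max(len(img) for img in images)
    max_w = max(len(img[0]) for img in images)
    padded, masks = [], []
    for img in images:
        h, w = len(img), len(img[0])
        # Extend each row on the right, then append full padding rows at the bottom.
        rows = [row + [pad_value] * (max_w - w) for row in img]
        rows += [[pad_value] * max_w for _ in range(max_h - h)]
        # The mask follows the same layout: 1 for real pixels, 0 for padding.
        mask = [[1] * w + [0] * (max_w - w) for _ in range(h)]
        mask += [[0] * max_w for _ in range(max_h - h)]
        padded.append(rows)
        masks.append(mask)
    return padded, masks


small = [[1, 2], [3, 4]]  # 2 x 2 image
wide = [[5, 6, 7]]        # 1 x 3 image
pixel_values, pixel_mask = pad_batch([small, wide])
print(pixel_values)
print(pixel_mask)
```

After padding, every image in the batch has the same height and width, so the batch can be stacked into one tensor while the mask records which pixels are real.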

diff --git a/transformers/docs/source/en/index.md b/transformers/docs/source/en/index.md

new file mode 100644

index 0000000000000000000000000000000000000000..ab0677b5a54e0e061039c63942195bf333fe79f4

--- /dev/null

+++ b/transformers/docs/source/en/index.md

@@ -0,0 +1,64 @@

+

+

+# Transformers

+

+


+

+Transformers acts as the model-definition framework for state-of-the-art machine learning models in text, computer

+vision, audio, video, and multimodal models, for both inference and training.

+

+It centralizes the model definition so that this definition is agreed upon across the ecosystem. `transformers` is the

+pivot across frameworks: if a model definition is supported, it will be compatible with the majority of training

+frameworks (Axolotl, Unsloth, DeepSpeed, FSDP, PyTorch-Lightning, ...), inference engines (vLLM, SGLang, TGI, ...),

+and adjacent modeling libraries (llama.cpp, mlx, ...) which leverage the model definition from `transformers`.

+

+We pledge to help support new state-of-the-art models and democratize their usage by having their model definition be

+simple, customizable, and efficient.

+

+There are over 1M Transformers [model checkpoints](https://huggingface.co/models?library=transformers&sort=trending) on the [Hugging Face Hub](https://huggingface.com/models) you can use.

+

+Explore the [Hub](https://huggingface.com/) today to find a model and use Transformers to help you get started right away.

+

+## Features

+

+Transformers provides everything you need for inference or training with state-of-the-art pretrained models. Some of the main features include:

+

+- [Pipeline](./pipeline_tutorial): Simple and optimized inference class for many machine learning tasks like text generation, image segmentation, automatic speech recognition, document question answering, and more.

+- [Trainer](./trainer): A comprehensive trainer that supports features such as mixed precision, torch.compile, and FlashAttention for training and distributed training for PyTorch models.

+- [generate](./llm_tutorial): Fast text generation with large language models (LLMs) and vision language models (VLMs), including support for streaming and multiple decoding strategies.

+

+## Design

+

+> [!TIP]

+> Read our [Philosophy](./philosophy) to learn more about Transformers' design principles.

+

+Transformers is designed for developers and machine learning engineers and researchers. Its main design principles are:

+

+1. Fast and easy to use: Every model is implemented from only three main classes (configuration, model, and preprocessor) and can be quickly used for inference or training with [`Pipeline`] or [`Trainer`].

+2. Pretrained models: Reduce your carbon footprint, compute cost and time by using a pretrained model instead of training an entirely new one. Each pretrained model is reproduced as closely as possible to the original model and offers state-of-the-art performance.

+

+

+

+  +

+

+

+

+

+## Learn

+

+If you're new to Transformers or want to learn more about transformer models, we recommend starting with the [LLM course](https://huggingface.co/learn/llm-course/chapter1/1?fw=pt). This comprehensive course covers everything from the fundamentals of how transformer models work to practical applications across various tasks. You'll learn the complete workflow, from curating high-quality datasets to fine-tuning large language models and implementing reasoning capabilities. The course contains both theoretical and hands-on exercises to build a solid foundational knowledge of transformer models as you learn.

\ No newline at end of file

diff --git a/transformers/docs/source/en/installation.md b/transformers/docs/source/en/installation.md

new file mode 100644

index 0000000000000000000000000000000000000000..911c84858f9ef6ce9c92f4ef9996e0e19209fdc2

--- /dev/null

+++ b/transformers/docs/source/en/installation.md

@@ -0,0 +1,223 @@

+

+

+# Installation

+

+Transformers works with [PyTorch](https://pytorch.org/get-started/locally/), [TensorFlow 2.0](https://www.tensorflow.org/install/pip), and [Flax](https://flax.readthedocs.io/en/latest/). It has been tested on Python 3.9+, PyTorch 2.1+, TensorFlow 2.6+, and Flax 0.4.1+.
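
A quick way to compare an environment against these minimums is to print the interpreter and package versions. The sketch below uses only the standard library; the Python minimum comes from the sentence above, `installed_version` is a hypothetical helper, and the distribution names `torch`, `tensorflow`, and `flax` are assumed to be the names the frameworks are installed under.

```python
import sys
from importlib import metadata

MIN_PYTHON = (3, 9)  # minimum Python version quoted in the paragraph above


def installed_version(package):
    """Return the installed version string, or None if the package is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


python_ok = sys.version_info >= MIN_PYTHON
report = {name: installed_version(name) for name in ("torch", "tensorflow", "flax")}

print(f"Python >= 3.9: {python_ok}")
for name, version in report.items():
    print(f"{name}: {version or 'not installed'}")
```

Only one of the three frameworks needs to be present, so a `not installed` entry is not an error by itself.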

+

+## Virtual environment

+

+A virtual environment helps manage different projects and avoids compatibility issues between dependencies. Take a look at the [Install packages in a virtual environment using pip and venv](https://packaging.python.org/en/latest/guides/installing-using-pip-and-virtual-environments/) guide if you're unfamiliar with Python virtual environments.

+


+

+These benchmarks were run on an [AWS EC2 g5.2xlarge instance](https://aws.amazon.com/ec2/instance-types/g5/), utilizing an NVIDIA A10G Tensor Core GPU.

+

+

+## ImageProcessingMixin

+

+[[autodoc]] image_processing_utils.ImageProcessingMixin

+ - from_pretrained

+ - save_pretrained

+

+## BatchFeature

+

+[[autodoc]] BatchFeature

+

+## BaseImageProcessor

+

+[[autodoc]] image_processing_utils.BaseImageProcessor

+

+

+## BaseImageProcessorFast

+

+[[autodoc]] image_processing_utils_fast.BaseImageProcessorFast

diff --git a/transformers/docs/source/en/main_classes/keras_callbacks.md b/transformers/docs/source/en/main_classes/keras_callbacks.md

new file mode 100644

index 0000000000000000000000000000000000000000..c9932300dbc56986f107650a474a03233dcc3ae6

--- /dev/null

+++ b/transformers/docs/source/en/main_classes/keras_callbacks.md

@@ -0,0 +1,28 @@

+

+

+# Keras callbacks

+

+When training a Transformers model with Keras, there are some library-specific callbacks available to automate common

+tasks:

+

+## KerasMetricCallback

+

+[[autodoc]] KerasMetricCallback

+

+## PushToHubCallback

+

+[[autodoc]] PushToHubCallback

diff --git a/transformers/docs/source/en/main_classes/logging.md b/transformers/docs/source/en/main_classes/logging.md

new file mode 100644

index 0000000000000000000000000000000000000000..5cbdf9ae27ed1ce61b1a45556c569c7b9eb4b628

--- /dev/null

+++ b/transformers/docs/source/en/main_classes/logging.md

@@ -0,0 +1,119 @@

+

+

+# Logging

+

+🤗 Transformers has a centralized logging system, so that you can set up the verbosity of the library easily.

+

+Currently the default verbosity of the library is `WARNING`.

+

+To change the level of verbosity, just use one of the direct setters. For instance, here is how to change the verbosity

+to the INFO level.

+

+```python

+import transformers

+

+transformers.logging.set_verbosity_info()

+```

+

+You can also use the environment variable `TRANSFORMERS_VERBOSITY` to override the default verbosity. You can set it

+to one of the following: `debug`, `info`, `warning`, `error`, `critical`, `fatal`. For example:

+

+```bash

+TRANSFORMERS_VERBOSITY=error ./myprogram.py

+```
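
To illustrate how an environment variable like this can be turned into a numeric log level, here is a minimal sketch built on Python's standard `logging` module. `resolve_verbosity` is a hypothetical helper and not the library's own code; falling back to `WARNING` for unknown values is an assumption of this sketch.

```python
import logging
import os

# Level names listed in the paragraph above.
ALLOWED = ("debug", "info", "warning", "error", "critical", "fatal")


def resolve_verbosity(environ=os.environ, default=logging.WARNING):
    """Map TRANSFORMERS_VERBOSITY to a numeric level, falling back to `default`."""
    raw = environ.get("TRANSFORMERS_VERBOSITY")
    if raw is None:
        return default
    name = raw.strip().lower()
    if name not in ALLOWED:
        return default  # assumption: unknown values keep the default level
    return getattr(logging, name.upper())


print(resolve_verbosity({"TRANSFORMERS_VERBOSITY": "error"}))
print(resolve_verbosity({}))
```

Passing a plain dictionary instead of `os.environ` keeps the helper easy to try without changing the real environment.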

+

+Additionally, some `warnings` can be disabled by setting the environment variable

+`TRANSFORMERS_NO_ADVISORY_WARNINGS` to a true value, like *1*. This will disable any warning that is logged using

+[`logger.warning_advice`]. For example:

+

+```bash

+TRANSFORMERS_NO_ADVISORY_WARNINGS=1 ./myprogram.py

+```

+

+Here is an example of how to use the same logger as the library in your own module or script:

+

+```python

+from transformers.utils import logging

+

+logging.set_verbosity_info()

+logger = logging.get_logger("transformers")

+logger.info("INFO")

+logger.warning("WARN")

+```

+

+

+All the methods of this logging module are documented below. The main ones are

+[`logging.get_verbosity`], which returns the current level of verbosity in the logger, and

+[`logging.set_verbosity`], which sets the verbosity to the level of your choice. In order (from the least

+verbose to the most verbose), those levels (with their corresponding int values in parentheses) are:

+

+- `transformers.logging.CRITICAL` or `transformers.logging.FATAL` (int value, 50): only report the most

+ critical errors.

+- `transformers.logging.ERROR` (int value, 40): only report errors.

+- `transformers.logging.WARNING` or `transformers.logging.WARN` (int value, 30): only report errors and

+ warnings. This is the default level used by the library.

+- `transformers.logging.INFO` (int value, 20): report errors, warnings, and basic information.

+- `transformers.logging.DEBUG` (int value, 10): report all information.

+

+By default, `tqdm` progress bars are displayed during model download. Use [`logging.disable_progress_bar`] and [`logging.enable_progress_bar`] to hide or show them.

+

+## `logging` vs `warnings`

+

+Python has two logging systems that are often used in conjunction: `logging`, which is explained above, and `warnings`,

+which allows further classification of warnings in specific buckets, e.g., `FutureWarning` for a feature or path

+that has already been deprecated and `DeprecationWarning` to indicate an upcoming deprecation.

+

+We use both in the `transformers` library. We leverage and adapt `logging`'s `captureWarnings` method to allow

+management of these warning messages by the verbosity setters above.
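
The interaction between the two systems can be seen with the standard library alone. The sketch below uses Python's built-in `logging.captureWarnings`, not the adapted version in `transformers`, to route a `FutureWarning` into the `py.warnings` logger. `ListHandler` and the warning text are made up for illustration.

```python
import logging
import warnings


class ListHandler(logging.Handler):
    """Collect log records in memory so they can be inspected."""

    def __init__(self):
        super().__init__()
        self.records = []

    def emit(self, record):
        self.records.append(record)


handler = ListHandler()
warnings_logger = logging.getLogger("py.warnings")  # logger used by captureWarnings
warnings_logger.setLevel(logging.WARNING)
warnings_logger.addHandler(handler)

logging.captureWarnings(True)  # redirect warnings.warn(...) to the logging system
try:
    with warnings.catch_warnings():
        warnings.simplefilter("always")  # make sure the warning is not filtered out
        warnings.warn("`old_arg` will be removed", FutureWarning)
finally:
    logging.captureWarnings(False)  # restore the default warnings behavior
    warnings_logger.removeHandler(handler)

messages = [record.getMessage() for record in handler.records]
print(messages)
```

Once warnings flow through a logger, the logger's level decides whether they are emitted, which is what makes it possible to manage them with verbosity settings.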

+

+What does that mean for developers of the library? We should respect the following heuristics:

+- `warnings` should be favored for developers of the library and libraries dependent on `transformers`

+- `logging` should be used for end-users of the library using it in every-day projects

+

+See reference of the `captureWarnings` method below.

+

+[[autodoc]] logging.captureWarnings

+

+## Base setters

+

+[[autodoc]] logging.set_verbosity_error

+

+[[autodoc]] logging.set_verbosity_warning

+

+[[autodoc]] logging.set_verbosity_info

+

+[[autodoc]] logging.set_verbosity_debug

+

+## Other functions

+

+[[autodoc]] logging.get_verbosity

+

+[[autodoc]] logging.set_verbosity

+

+[[autodoc]] logging.get_logger

+

+[[autodoc]] logging.enable_default_handler

+

+[[autodoc]] logging.disable_default_handler

+

+[[autodoc]] logging.enable_explicit_format

+

+[[autodoc]] logging.reset_format

+

+[[autodoc]] logging.enable_progress_bar

+

+[[autodoc]] logging.disable_progress_bar

diff --git a/transformers/docs/source/en/main_classes/model.md b/transformers/docs/source/en/main_classes/model.md

new file mode 100644

index 0000000000000000000000000000000000000000..15345a7b2af3fb0db28358e9904bf83df4aff272

--- /dev/null

+++ b/transformers/docs/source/en/main_classes/model.md

@@ -0,0 +1,73 @@

+

+

+# Models

+

+The base classes [`PreTrainedModel`], [`TFPreTrainedModel`], and

+[`FlaxPreTrainedModel`] implement the common methods for loading/saving a model either from a local

+file or directory, or from a pretrained model configuration provided by the library (downloaded from HuggingFace's AWS

+S3 repository).

+

+[`PreTrainedModel`] and [`TFPreTrainedModel`] also implement a few methods which

+are common among all the models to:

+

+- resize the input token embeddings when new tokens are added to the vocabulary

+- prune the attention heads of the model.

+

+The other methods that are common to each model are defined in [`~modeling_utils.ModuleUtilsMixin`]

+(for the PyTorch models) and [`~modeling_tf_utils.TFModelUtilsMixin`] (for the TensorFlow models) or

+for text generation, [`~generation.GenerationMixin`] (for the PyTorch models),

+[`~generation.TFGenerationMixin`] (for the TensorFlow models) and

+[`~generation.FlaxGenerationMixin`] (for the Flax/JAX models).

+

+

+## PreTrainedModel

+

+[[autodoc]] PreTrainedModel

+ - push_to_hub

+ - all

+

+Custom models should also define a `_supports_assign_param_buffer` attribute, which determines whether superfast init can be applied

+to that model. A failing `test_save_and_load_from_pretrained` test is a sign that your model needs this attribute. If so,

+set this to `False`.

+

+## ModuleUtilsMixin

+

+[[autodoc]] modeling_utils.ModuleUtilsMixin

+

+## TFPreTrainedModel

+

+[[autodoc]] TFPreTrainedModel

+ - push_to_hub

+ - all

+

+## TFModelUtilsMixin

+

+[[autodoc]] modeling_tf_utils.TFModelUtilsMixin

+

+## FlaxPreTrainedModel

+

+[[autodoc]] FlaxPreTrainedModel

+ - push_to_hub

+ - all

+

+## Pushing to the Hub

+

+[[autodoc]] utils.PushToHubMixin

+

+## Sharded checkpoints

+

+[[autodoc]] modeling_utils.load_sharded_checkpoint

diff --git a/transformers/docs/source/en/main_classes/onnx.md b/transformers/docs/source/en/main_classes/onnx.md

new file mode 100644

index 0000000000000000000000000000000000000000..81d31c97e88dde23f3807cbcbc05820c3f06a48d

--- /dev/null

+++ b/transformers/docs/source/en/main_classes/onnx.md

@@ -0,0 +1,54 @@

+

+

+# Exporting 🤗 Transformers models to ONNX

+

+🤗 Transformers provides a `transformers.onnx` package that enables you to

+convert model checkpoints to an ONNX graph by leveraging configuration objects.

+

+See the [guide](../serialization) on exporting 🤗 Transformers models for more

+details.

+

+## ONNX Configurations

+

+We provide three abstract classes that you should inherit from, depending on the

+type of model architecture you wish to export:

+

+* Encoder-based models inherit from [`~onnx.config.OnnxConfig`]

+* Decoder-based models inherit from [`~onnx.config.OnnxConfigWithPast`]

+* Encoder-decoder models inherit from [`~onnx.config.OnnxSeq2SeqConfigWithPast`]

+

+### OnnxConfig

+

+[[autodoc]] onnx.config.OnnxConfig

+

+### OnnxConfigWithPast

+

+[[autodoc]] onnx.config.OnnxConfigWithPast

+

+### OnnxSeq2SeqConfigWithPast

+

+[[autodoc]] onnx.config.OnnxSeq2SeqConfigWithPast

+

+## ONNX Features

+

+Each ONNX configuration is associated with a set of _features_ that enable you

+to export models for different types of topologies or tasks.

+

+### FeaturesManager

+

+[[autodoc]] onnx.features.FeaturesManager

+

diff --git a/transformers/docs/source/en/main_classes/optimizer_schedules.md b/transformers/docs/source/en/main_classes/optimizer_schedules.md

new file mode 100644

index 0000000000000000000000000000000000000000..24c978e6fe3ced5eb39e1e3f0e1f956f36c83247

--- /dev/null

+++ b/transformers/docs/source/en/main_classes/optimizer_schedules.md

@@ -0,0 +1,76 @@

+

+

+# Optimization

+

+The `.optimization` module provides:

+

+- an optimizer with weight decay fixed that can be used to fine-tune models,

+- several schedules in the form of schedule objects that inherit from `_LRSchedule`, and

+- a gradient accumulation class to accumulate the gradients of multiple batches.
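
As a rough illustration of what a warmup schedule computes, the sketch below implements a linear warmup followed by a linear decay as a plain function that returns a multiplier for the base learning rate. `linear_warmup_decay` is a hypothetical helper written for this example, and the step counts and base learning rate are made up. In PyTorch, a multiplier function of this kind is typically passed to `torch.optim.lr_scheduler.LambdaLR`.

```python
def linear_warmup_decay(step, num_warmup_steps, num_training_steps):
    """Return the multiplier in [0, 1] applied to the base learning rate at `step`."""
    if step < num_warmup_steps:
        # Warmup: ramp linearly from 0 up to 1.
        return step / max(1, num_warmup_steps)
    # Decay: fall linearly from 1 down to 0 at the last training step.
    remaining = num_training_steps - step
    return max(0.0, remaining / max(1, num_training_steps - num_warmup_steps))


base_lr = 5e-5  # illustrative value
schedule = [base_lr * linear_warmup_decay(s, 2, 10) for s in range(11)]
print(schedule)
```

The learning rate peaks at the end of warmup (step 2 here) and reaches zero at the final step.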

+

+

+## AdaFactor (PyTorch)

+

+[[autodoc]] Adafactor

+

+## AdamWeightDecay (TensorFlow)

+

+[[autodoc]] AdamWeightDecay

+

+[[autodoc]] create_optimizer

+

+## Schedules

+

+### Learning Rate Schedules (PyTorch)

+

+[[autodoc]] SchedulerType

+

+[[autodoc]] get_scheduler

+

+[[autodoc]] get_constant_schedule

+

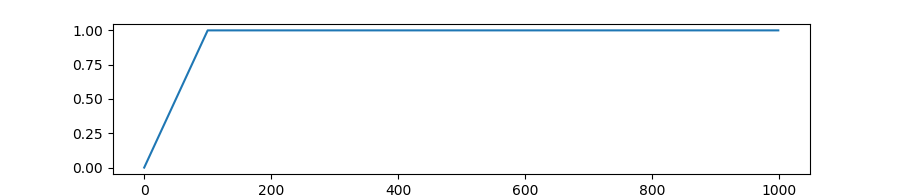

+[[autodoc]] get_constant_schedule_with_warmup

+

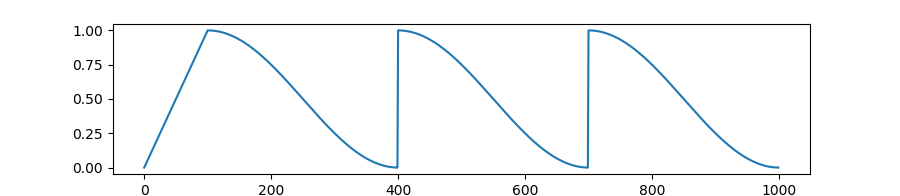

+ +

+ +

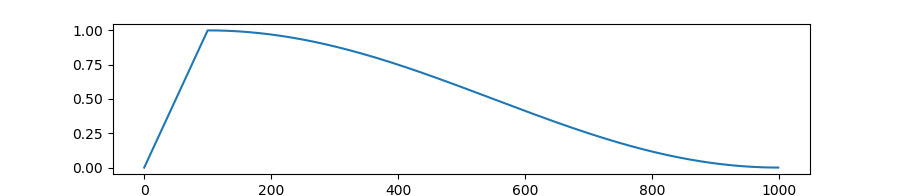

+[[autodoc]] get_cosine_schedule_with_warmup


+

+ +

+[[autodoc]] get_cosine_with_hard_restarts_schedule_with_warmup


+

+ +

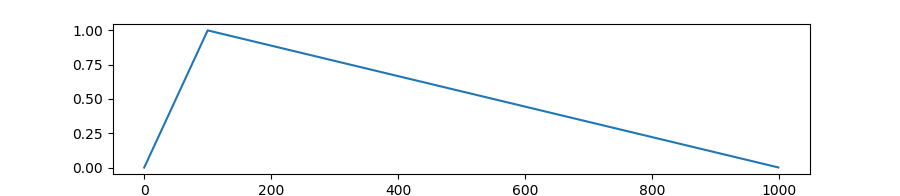

+[[autodoc]] get_linear_schedule_with_warmup


+

+ +

+[[autodoc]] get_polynomial_decay_schedule_with_warmup

+

+[[autodoc]] get_inverse_sqrt_schedule

+

+[[autodoc]] get_wsd_schedule

+

+### Warmup (TensorFlow)

+

+[[autodoc]] WarmUp

+

+## Gradient Strategies

+

+### GradientAccumulator (TensorFlow)

+

+[[autodoc]] GradientAccumulator

diff --git a/transformers/docs/source/en/main_classes/output.md b/transformers/docs/source/en/main_classes/output.md

new file mode 100644

index 0000000000000000000000000000000000000000..300213d4513ebbf832e22828ac8be3164d5b95e6

--- /dev/null

+++ b/transformers/docs/source/en/main_classes/output.md

@@ -0,0 +1,321 @@

+

+

+# Model outputs

+

+All models have outputs that are instances of subclasses of [`~utils.ModelOutput`]. Those are

+data structures containing all the information returned by the model, but that can also be used as tuples or

+dictionaries.

+

+Let's see how this looks in an example:

+

+```python

+from transformers import BertTokenizer, BertForSequenceClassification

+import torch

+

+tokenizer = BertTokenizer.from_pretrained("google-bert/bert-base-uncased")

+model = BertForSequenceClassification.from_pretrained("google-bert/bert-base-uncased")

+

+inputs = tokenizer("Hello, my dog is cute", return_tensors="pt")

+labels = torch.tensor([1]).unsqueeze(0) # Batch size 1

+outputs = model(**inputs, labels=labels)

+```

+

+The `outputs` object is a [`~modeling_outputs.SequenceClassifierOutput`]. As the documentation of that class

+below shows, it has an optional `loss`, a `logits`, an optional `hidden_states`, and

+an optional `attentions` attribute. Here we have the `loss` since we passed along `labels`, but we don't have

+`hidden_states` and `attentions` because we didn't pass `output_hidden_states=True` or

+`output_attentions=True`.
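
The idea of an output object that also behaves like a tuple or a dictionary can be sketched with a small dataclass. `MiniOutput` below is a hypothetical stand-in written for illustration and is not how `ModelOutput` is implemented; it only mirrors the behavior that attributes set to `None` are skipped when the object is indexed like a tuple.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class MiniOutput:
    loss: Optional[float] = None
    logits: Optional[list] = None
    hidden_states: Optional[tuple] = None

    def _present(self):
        # Keep only the fields that were actually set, in declaration order.
        return {f.name: getattr(self, f.name) for f in fields(self) if getattr(self, f.name) is not None}

    def to_tuple(self):
        return tuple(self._present().values())

    def __getitem__(self, key):
        if isinstance(key, str):
            return self._present()[key]  # dictionary-style access
        return self.to_tuple()[key]      # tuple-style access (int or slice)


with_loss = MiniOutput(loss=0.7, logits=[0.1, 0.9])
without_loss = MiniOutput(logits=[0.1, 0.9])

print(with_loss.loss, with_loss["logits"], with_loss[0])
print(without_loss[0])
```

Because missing fields are skipped, position `0` is the loss when labels were provided and the logits otherwise, which is why attribute or key access is the safer choice.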

+

+

+

+

+

+

+

+# ALBERT

+

+[ALBERT](https://huggingface.co/papers/1909.11942) is designed to address memory limitations of scaling and training of [BERT](./bert). It adds two parameter reduction techniques. The first, factorized embedding parametrization, splits the larger vocabulary embedding matrix into two smaller matrices so you can grow the hidden size without adding a lot more parameters. The second, cross-layer parameter sharing, allows layers to share parameters, which keeps the number of learnable parameters lower.

+

+ALBERT was created to address problems like GPU/TPU memory limitations, longer training times, and unexpected model degradation in BERT. ALBERT uses two parameter-reduction techniques to lower memory consumption and increase the training speed of BERT:

+

+- **Factorized embedding parameterization:** The large vocabulary embedding matrix is decomposed into two smaller matrices, reducing memory consumption.

+- **Cross-layer parameter sharing:** Instead of learning separate parameters for each transformer layer, ALBERT shares parameters across layers, further reducing the number of learnable weights.
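
The saving from factorizing the embedding matrix is easy to check with arithmetic. The sketch below assumes an illustrative vocabulary of 30,000 tokens, a hidden size of 768, and an embedding size of 128; these numbers are assumptions chosen for the example, not values read from a checkpoint configuration.

```python
vocab_size = 30_000   # assumed, illustrative vocabulary size
hidden_size = 768     # assumed hidden size
embedding_size = 128  # assumed (smaller) embedding size

# One large matrix: vocabulary x hidden size.
direct = vocab_size * hidden_size

# Two smaller matrices: vocabulary x embedding size, then embedding size x hidden size.
factorized = vocab_size * embedding_size + embedding_size * hidden_size

print(f"direct:     {direct:,} parameters")
print(f"factorized: {factorized:,} parameters")
print(f"reduction:  {direct / factorized:.2f}x")
```

The first term of the factorized count no longer depends on the hidden size, which is why the hidden size can grow without the embedding parameters growing at the same rate.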

+

+ALBERT uses absolute position embeddings (like BERT), so inputs should be padded on the right. The embedding size is 128, while BERT uses 768. ALBERT can process a maximum of 512 tokens at a time.

+

+You can find all the original ALBERT checkpoints under the [ALBERT community](https://huggingface.co/albert) organization.

+

+> [!TIP]

+> Click on the ALBERT models in the right sidebar for more examples of how to apply ALBERT to different language tasks.

+

+The example below demonstrates how to predict the `[MASK]` token with [`Pipeline`], [`AutoModel`], and from the command line.

+

+


+

+

+

+# ALIGN

+

+[ALIGN](https://huggingface.co/papers/2102.05918) is pretrained on a noisy 1.8 billion alt‑text and image pair dataset to show that scale can make up for the noise. It uses a dual‑encoder architecture, [EfficientNet](./efficientnet) for images and [BERT](./bert) for text, and a contrastive loss to align similar image–text embeddings together while pushing different embeddings apart. Once trained, ALIGN can encode any image and candidate captions into a shared vector space for zero‑shot retrieval or classification without requiring extra labels. This scale‑first approach reduces dataset curation costs and powers state‑of‑the‑art image–text retrieval and zero‑shot ImageNet classification.

+

+You can find all the original ALIGN checkpoints under the [Kakao Brain](https://huggingface.co/kakaobrain?search_models=align) organization.

+

+> [!TIP]

+> Click on the ALIGN models in the right sidebar for more examples of how to apply ALIGN to different vision and text related tasks.

+

+The example below demonstrates zero-shot image classification with [`Pipeline`] or the [`AutoModel`] class.

+

+


+

+

+

+

+```python

+import torch

+import requests

+from PIL import Image

+from transformers import AltCLIPModel, AltCLIPProcessor

+

+model = AltCLIPModel.from_pretrained("BAAI/AltCLIP", torch_dtype=torch.bfloat16)

+processor = AltCLIPProcessor.from_pretrained("BAAI/AltCLIP")

+

+url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/pipeline-cat-chonk.jpeg"

+image = Image.open(requests.get(url, stream=True).raw)

+

+inputs = processor(text=["a photo of a cat", "a photo of a dog"], images=image, return_tensors="pt", padding=True)

+

+outputs = model(**inputs)

+logits_per_image = outputs.logits_per_image # this is the image-text similarity score

+probs = logits_per_image.softmax(dim=1) # we can take the softmax to get the label probabilities

+

+labels = ["a photo of a cat", "a photo of a dog"]

+for label, prob in zip(labels, probs[0]):

+ print(f"{label}: {prob.item():.4f}")

+```
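
The last step of the example, turning image-text similarity scores into label probabilities, is a softmax. Here is a minimal pure-Python sketch of that step; the logit values are made up for illustration and are not real model outputs.

```python
import math


def softmax(logits):
    """Convert a list of scores into probabilities that sum to 1."""
    shift = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


labels = ["a photo of a cat", "a photo of a dog"]
logits_per_image = [24.5, 19.0]  # made-up similarity scores
probs = softmax(logits_per_image)

for label, prob in zip(labels, probs):
    print(f"{label}: {prob:.4f}")
```

The label with the highest similarity score always receives the highest probability, and a larger gap between scores gives a more confident prediction.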

+

+

+

+

+Quantization reduces the memory burden of large models by representing the weights in a lower precision. Refer to the [Quantization](../quantization/overview) overview for more available quantization backends.

+

+The example below uses [torchao](../quantization/torchao) to only quantize the weights to int4.

+

+```python

+# !pip install torchao

+import torch

+import requests

+from PIL import Image

+from transformers import AltCLIPModel, AltCLIPProcessor, TorchAoConfig

+

+model = AltCLIPModel.from_pretrained(

+ "BAAI/AltCLIP",

+ quantization_config=TorchAoConfig("int4_weight_only", group_size=128),

+ torch_dtype=torch.bfloat16,

+)

+

+processor = AltCLIPProcessor.from_pretrained("BAAI/AltCLIP")

+

+url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/pipeline-cat-chonk.jpeg"

+image = Image.open(requests.get(url, stream=True).raw)

+

+inputs = processor(text=["a photo of a cat", "a photo of a dog"], images=image, return_tensors="pt", padding=True)

+

+outputs = model(**inputs)

+logits_per_image = outputs.logits_per_image # this is the image-text similarity score

+probs = logits_per_image.softmax(dim=1) # we can take the softmax to get the label probabilities

+

+labels = ["a photo of a cat", "a photo of a dog"]

+for label, prob in zip(labels, probs[0]):

+ print(f"{label}: {prob.item():.4f}")

+```

+

+## Notes

+

+- AltCLIP uses bidirectional attention instead of causal attention and it uses the `[CLS]` token in XLM-R to represent a text embedding.

+- Use [`CLIPImageProcessor`] to resize (or rescale) and normalize images for the model.

+- [`AltCLIPProcessor`] combines [`CLIPImageProcessor`] and [`XLMRobertaTokenizer`] into a single instance to encode text and prepare images.

+

+## AltCLIPConfig

+[[autodoc]] AltCLIPConfig

+

+## AltCLIPTextConfig

+[[autodoc]] AltCLIPTextConfig

+

+## AltCLIPVisionConfig

+[[autodoc]] AltCLIPVisionConfig

+

+## AltCLIPModel

+[[autodoc]] AltCLIPModel

+

+## AltCLIPTextModel

+[[autodoc]] AltCLIPTextModel

+

+## AltCLIPVisionModel

+[[autodoc]] AltCLIPVisionModel

+

+## AltCLIPProcessor

+[[autodoc]] AltCLIPProcessor

diff --git a/transformers/docs/source/en/model_doc/arcee.md b/transformers/docs/source/en/model_doc/arcee.md

new file mode 100644

index 0000000000000000000000000000000000000000..520e9a05bf266f96153e9aa18533d44e7668844e

--- /dev/null

+++ b/transformers/docs/source/en/model_doc/arcee.md

@@ -0,0 +1,104 @@

+

+

+

+

+

+```py

+import torch

+from transformers import pipeline

+

+pipeline = pipeline(

+ task="text-generation",

+ model="arcee-ai/AFM-4.5B",

+ torch_dtype=torch.float16,

+ device=0

+)

+

+output = pipeline("The key innovation in Arcee is")

+print(output[0]["generated_text"])

+```

+

+

+

+

+```py

+import torch

+from transformers import AutoTokenizer, ArceeForCausalLM

+

+tokenizer = AutoTokenizer.from_pretrained("arcee-ai/AFM-4.5B")

+model = ArceeForCausalLM.from_pretrained(

+ "arcee-ai/AFM-4.5B",

+ torch_dtype=torch.float16,

+ device_map="auto"

+)

+

+inputs = tokenizer("The key innovation in Arcee is", return_tensors="pt")

+with torch.no_grad():

+ outputs = model.generate(**inputs, max_new_tokens=50)

+print(tokenizer.decode(outputs[0], skip_special_tokens=True))

+```

+

+

+

+

+## ArceeConfig

+

+[[autodoc]] ArceeConfig

+

+## ArceeModel

+

+[[autodoc]] ArceeModel

+ - forward

+

+## ArceeForCausalLM

+

+[[autodoc]] ArceeForCausalLM

+ - forward

+

+## ArceeForSequenceClassification

+

+[[autodoc]] ArceeForSequenceClassification

+ - forward

+

+## ArceeForQuestionAnswering

+

+[[autodoc]] ArceeForQuestionAnswering

+ - forward

+

+## ArceeForTokenClassification

+

+[[autodoc]] ArceeForTokenClassification

+ - forward

\ No newline at end of file

diff --git a/transformers/docs/source/en/model_doc/aria.md b/transformers/docs/source/en/model_doc/aria.md

new file mode 100644

index 0000000000000000000000000000000000000000..1c974bf5e26637bb1031e78110ac3b8efe2430c5

--- /dev/null

+++ b/transformers/docs/source/en/model_doc/aria.md

@@ -0,0 +1,176 @@

+

+

+

+

+

+```python

+import torch

+from transformers import pipeline

+

+pipeline = pipeline(

+ "image-to-text",

+ model="rhymes-ai/Aria",

+ device=0,

+ torch_dtype=torch.bfloat16

+)

+pipeline(

+ "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/pipeline-cat-chonk.jpeg",

+ text="What is shown in this image?"

+)

+```

+

+

+

+

+```python

+import torch

+from transformers import AutoModelForCausalLM, AutoProcessor

+

+model = AutoModelForCausalLM.from_pretrained(

+ "rhymes-ai/Aria",

+ device_map="auto",

+ torch_dtype=torch.bfloat16,

+ attn_implementation="sdpa"

+)

+

+processor = AutoProcessor.from_pretrained("rhymes-ai/Aria")

+

+messages = [

+ {

+ "role": "user", "content": [

+ {"type": "image", "url": "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/pipeline-cat-chonk.jpeg"},

+ {"type": "text", "text": "What is shown in this image?"},

+ ]

+ },

+]

+

+inputs = processor.apply_chat_template(messages, add_generation_prompt=True, tokenize=True, return_dict=True, return_tensors="pt")

+inputs = inputs.to(model.device, torch.bfloat16)

+

+output = model.generate(

+ **inputs,

+ max_new_tokens=15,

+ stop_strings=["<|im_end|>"],

+ tokenizer=processor.tokenizer,

+ do_sample=True,

+ temperature=0.9,

+)

+output_ids = output[0][inputs["input_ids"].shape[1]:]

+response = processor.decode(output_ids, skip_special_tokens=True)

+print(response)

+```

+

+

+

+

+Quantization reduces the memory burden of large models by representing the weights in a lower precision. Refer to the [Quantization](../quantization/overview) overview for more available quantization backends.

+

+The example below uses [torchao](../quantization/torchao) to only quantize the weights to int4 and the [rhymes-ai/Aria-sequential_mlp](https://huggingface.co/rhymes-ai/Aria-sequential_mlp) checkpoint. This checkpoint replaces grouped GEMM with `torch.nn.Linear` layers for easier quantization.

+

+```py

+# pip install torchao

+import torch

+from transformers import TorchAoConfig, AutoModelForCausalLM, AutoProcessor

+

+quantization_config = TorchAoConfig("int4_weight_only", group_size=128)

+model = AutoModelForCausalLM.from_pretrained(

+ "rhymes-ai/Aria-sequential_mlp",

+ torch_dtype=torch.bfloat16,

+ device_map="auto",

+ quantization_config=quantization_config

+)

+processor = AutoProcessor.from_pretrained(

+ "rhymes-ai/Aria-sequential_mlp",

+)

+

+messages = [

+ {

+ "role": "user", "content": [

+ {"type": "image", "url": "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/pipeline-cat-chonk.jpeg"},

+ {"type": "text", "text": "What is shown in this image?"},

+ ]

+ },

+]

+

+inputs = processor.apply_chat_template(messages, add_generation_prompt=True, tokenize=True, return_dict=True, return_tensors="pt")

+inputs = inputs.to(model.device, torch.bfloat16)

+

+output = model.generate(

+ **inputs,

+ max_new_tokens=15,

+ stop_strings=["<|im_end|>"],

+ tokenizer=processor.tokenizer,

+ do_sample=True,

+ temperature=0.9,

+)

+output_ids = output[0][inputs["input_ids"].shape[1]:]

+response = processor.decode(output_ids, skip_special_tokens=True)

+print(response)

+```

+

+

+## AriaImageProcessor

+

+[[autodoc]] AriaImageProcessor

+

+## AriaProcessor

+

+[[autodoc]] AriaProcessor

+

+## AriaTextConfig

+

+[[autodoc]] AriaTextConfig

+

+## AriaConfig

+

+[[autodoc]] AriaConfig

+

+## AriaTextModel

+

+[[autodoc]] AriaTextModel

+

+## AriaModel

+

+[[autodoc]] AriaModel

+

+## AriaTextForCausalLM

+

+[[autodoc]] AriaTextForCausalLM

+

+## AriaForConditionalGeneration

+

+[[autodoc]] AriaForConditionalGeneration

+ - forward

diff --git a/transformers/docs/source/en/model_doc/audio-spectrogram-transformer.md b/transformers/docs/source/en/model_doc/audio-spectrogram-transformer.md

new file mode 100644

index 0000000000000000000000000000000000000000..46544de1f61b83eab50d793009ac8a8674e43846

--- /dev/null

+++ b/transformers/docs/source/en/model_doc/audio-spectrogram-transformer.md

@@ -0,0 +1,109 @@

+

+

+# Audio Spectrogram Transformer

+

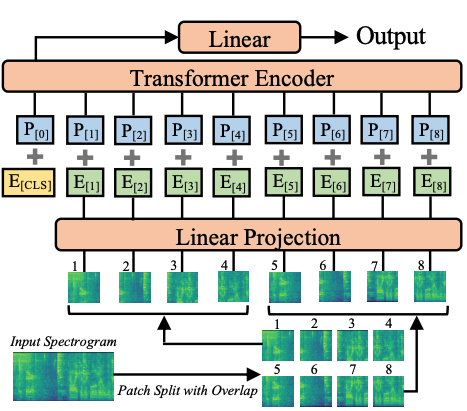

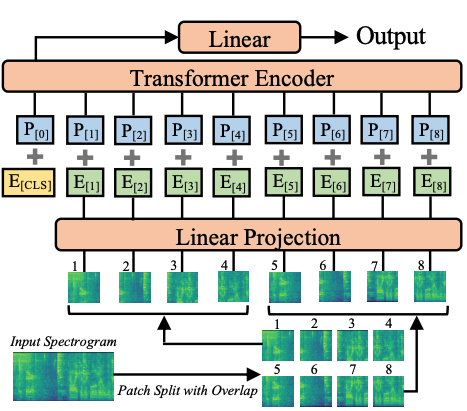

+ +

+ Audio Spectrogram Transformer architecture. Taken from the original paper.

+

+This model was contributed by [nielsr](https://huggingface.co/nielsr).

+The original code can be found [here](https://github.com/YuanGongND/ast).

+

+## Usage tips

+

+- When fine-tuning the Audio Spectrogram Transformer (AST) on your own dataset, normalize the input so that it has a mean of 0 and a

+standard deviation of 0.5. [`ASTFeatureExtractor`] takes care of this. Note that it uses the AudioSet

+mean and std by default. You can check [`ast/src/get_norm_stats.py`](https://github.com/YuanGongND/ast/blob/master/src/get_norm_stats.py) to see how

+the authors compute the stats for a downstream dataset.

+- The AST needs a low learning rate (the authors use a learning rate 10 times smaller than the one for their CNN model proposed in the

+[PSLA paper](https://huggingface.co/papers/2102.01243)) and converges quickly, so search for a suitable learning rate and learning rate scheduler for your task.
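
The normalization described in the first tip can be sketched in plain Python. This is a minimal illustration, not the code in `get_norm_stats.py`: the helper names `compute_norm_stats` and `normalize` and the sample values are hypothetical. It assumes the convention of subtracting the dataset mean and dividing by twice the dataset standard deviation, which is what yields a standard deviation of 0.5.

```python
import statistics


def compute_norm_stats(values):
    """Return the dataset-level mean and population standard deviation."""
    return statistics.fmean(values), statistics.pstdev(values)


def normalize(values, mean, std):
    """Shift to zero mean and scale so the standard deviation becomes 0.5."""
    return [(v - mean) / (2 * std) for v in values]


# Hypothetical log-mel filterbank values standing in for a downstream dataset.
fbank_values = [-10.0, -4.0, -6.0, 0.0]

mean, std = compute_norm_stats(fbank_values)
normalized = normalize(fbank_values, mean, std)

print(f"dataset mean={mean:.4f}, std={std:.4f}")
print(f"normalized mean={statistics.fmean(normalized):.4f}, "
      f"std={statistics.pstdev(normalized):.4f}")
```

In practice you would compute the two statistics over the spectrograms of your whole training set and pass them to [`ASTFeatureExtractor`] through its `mean` and `std` arguments instead of relying on the AudioSet defaults.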

+

+### Using Scaled Dot Product Attention (SDPA)

+

+PyTorch includes a native scaled dot-product attention (SDPA) operator as part of `torch.nn.functional`. This function

+encompasses several implementations that can be applied depending on the inputs and the hardware in use. See the

+[official documentation](https://pytorch.org/docs/stable/generated/torch.nn.functional.scaled_dot_product_attention.html)

+or the [GPU Inference](https://huggingface.co/docs/transformers/main/en/perf_infer_gpu_one#pytorch-scaled-dot-product-attention)

+page for more information.

+

+SDPA is used by default for `torch>=2.1.1` when an implementation is available, but you may also set

+`attn_implementation="sdpa"` in `from_pretrained()` to explicitly request SDPA to be used.

+

+```py

+import torch

+from transformers import ASTForAudioClassification

+

+model = ASTForAudioClassification.from_pretrained("MIT/ast-finetuned-audioset-10-10-0.4593", attn_implementation="sdpa", torch_dtype=torch.float16)

+...

+```

+

+For the best speedups, we recommend loading the model in half-precision (e.g. `torch.float16` or `torch.bfloat16`).

+

+On a local benchmark (A100-40GB, PyTorch 2.3.0, Ubuntu 22.04) with `float32` and the `MIT/ast-finetuned-audioset-10-10-0.4593` model, we saw the following speedups during inference.

+

+| Batch size | Average inference time (ms), eager mode | Average inference time (ms), SDPA mode | Speedup, eager time / SDPA time (x) |

+|--------------|-------------------------------------------|-------------------------------------------|------------------------------|

+| 1 | 27 | 6 | 4.5 |

+| 2 | 12 | 6 | 2 |

+| 4 | 21 | 8 | 2.62 |

+| 8 | 40 | 14 | 2.86 |
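
The speedup column can be reproduced from the two timing columns. The short sketch below assumes the speedup is the eager-mode time divided by the SDPA time; the dictionary and function names are illustrative only, and the numbers are copied from the table.

```python
# Average inference times in milliseconds, copied from the table above:
# batch size -> (eager mode, SDPA mode)
timings_ms = {1: (27, 6), 2: (12, 6), 4: (21, 8), 8: (40, 14)}


def speedup(eager_ms, sdpa_ms):
    """How many times faster SDPA is than eager attention."""
    return eager_ms / sdpa_ms


speedups = {
    batch_size: speedup(eager_ms, sdpa_ms)
    for batch_size, (eager_ms, sdpa_ms) in timings_ms.items()
}

for batch_size, value in speedups.items():
    print(f"batch size {batch_size}: {value:.2f}x")
```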

+

+## Resources

+

+A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with the Audio Spectrogram Transformer.

+

+

+


+

+If your `NewModelConfig` is a subclass of [`~transformers.PretrainedConfig`], make sure its

+`model_type` attribute is set to the same key you use when registering the config (here `"new-model"`).

+

+Likewise, if your `NewModel` is a subclass of [`PreTrainedModel`], make sure its

+`config_class` attribute is set to the same class you use when registering the model (here

+`NewModelConfig`).
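
To see why both attributes have to agree with what you register, here is a toy stand-in for a registry, written in plain Python. It is only a sketch and not the Transformers implementation: `ToyConfig`, `ToyModel`, `register_config`, `register_model` and the two registry dictionaries are hypothetical names, and the consistency checks are an assumption about the kind of validation a registry would perform.

```python
class ToyConfig:
    """Hypothetical stand-in for a configuration base class."""
    model_type = ""


class ToyModel:
    """Hypothetical stand-in for a model base class."""
    config_class = None


class NewModelConfig(ToyConfig):
    model_type = "new-model"  # must equal the key used at registration


class NewModel(ToyModel):
    config_class = NewModelConfig  # must be the class used at registration


CONFIG_REGISTRY = {}
MODEL_REGISTRY = {}


def register_config(model_type, config_class):
    """Map a model type string to a config class, rejecting mismatches."""
    if config_class.model_type != model_type:
        raise ValueError(
            f"model_type {config_class.model_type!r} does not match key {model_type!r}"
        )
    CONFIG_REGISTRY[model_type] = config_class


def register_model(config_class, model_class):
    """Map a config class to a model class, rejecting mismatches."""
    if model_class.config_class is not config_class:
        raise ValueError("config_class does not match the registered config")
    MODEL_REGISTRY[config_class] = model_class


register_config("new-model", NewModelConfig)
register_model(NewModelConfig, NewModel)

# Resolving a model from its type string now goes through both mappings.
resolved_model = MODEL_REGISTRY[CONFIG_REGISTRY["new-model"]]
print(resolved_model.__name__)
```

If either attribute disagrees with the registration call, the lookup chain from type string to config to model no longer resolves consistently, which is why the sketch raises a `ValueError` in that case.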

+

+

+

+## AutoConfig

+

+[[autodoc]] AutoConfig

+

+## AutoTokenizer

+

+[[autodoc]] AutoTokenizer

+

+## AutoFeatureExtractor

+

+[[autodoc]] AutoFeatureExtractor

+