Migel Tissera committed

Commit · 11fc037

1 Parent(s): b5f1ded

adding media folder

README.md

CHANGED

@@ -18,6 +18,18 @@ HelixNet regenerates very pleasing and accurate responses, due to the entropy pr

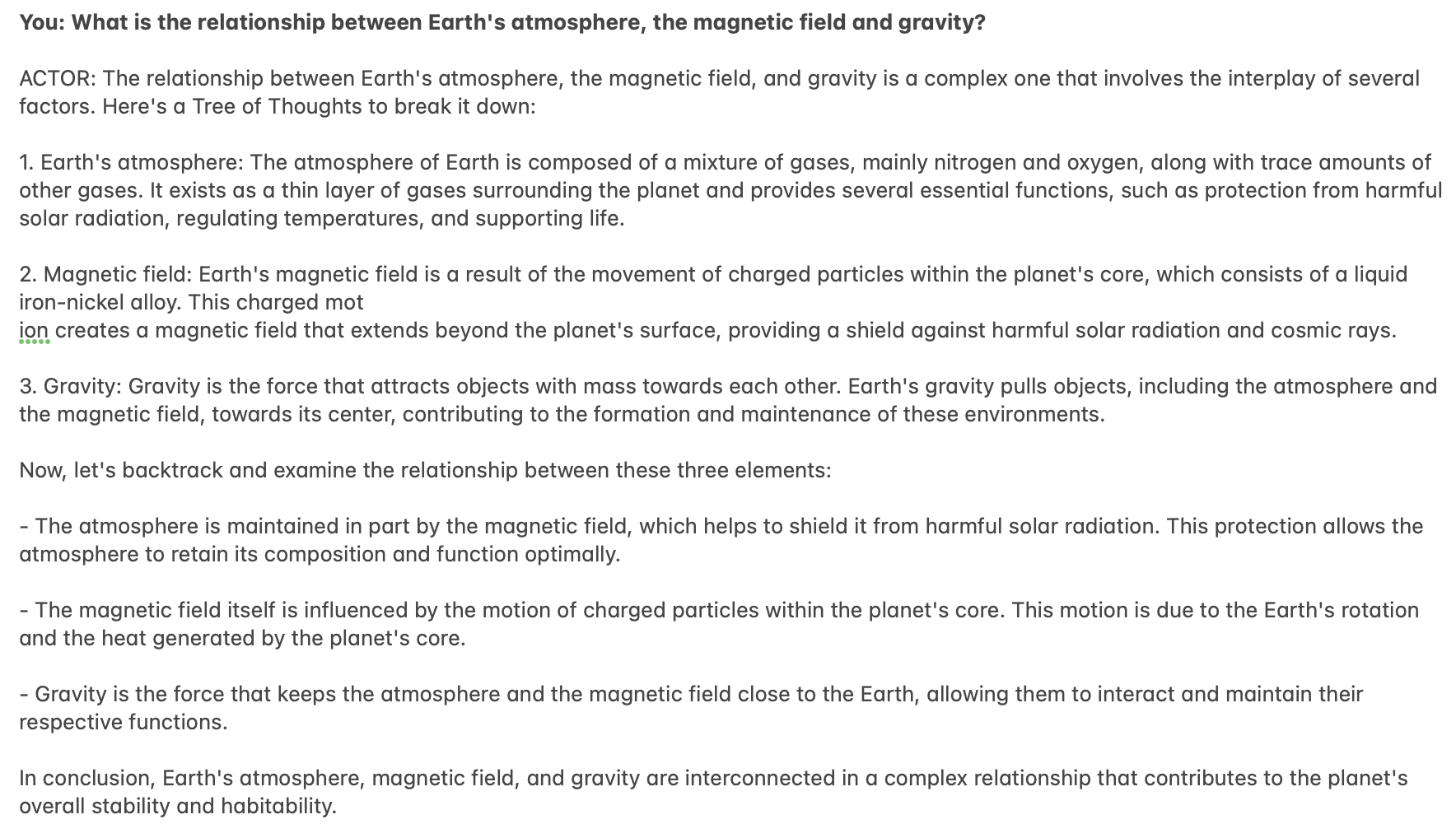

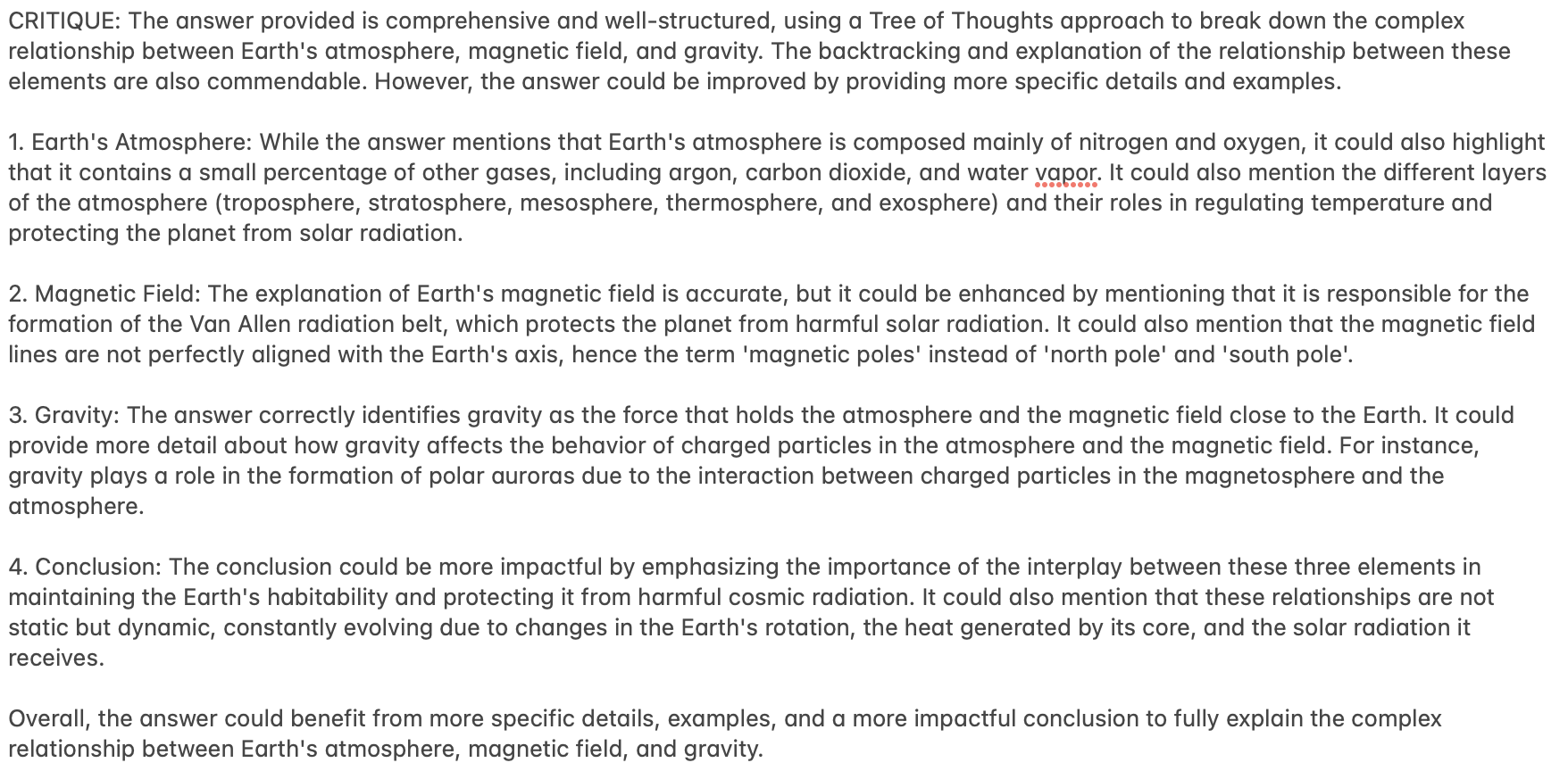

The actor network was trained with Supervised Fine-Tuning, on 250K very high-quality samples. It has 75K of Open-Orca's Chain-of-Thought data, and a mixture of Dolphin (GPT-4), SynthIA's Tree-of-Thought data.

## Phase 2: Critic

To train the critic, the following process was followed:

@@ -127,4 +139,10 @@ while True:

    regenerator_response = generate_text(prompt_regenerator, model_regenerator, tokenizer_regenerator)
    print(f"REGENERATION: {regenerator_response}")

-

```

The actor network was trained with Supervised Fine-Tuning, on 250K very high-quality samples. It has 75K of Open-Orca's Chain-of-Thought data, and a mixture of Dolphin (GPT-4), SynthIA's Tree-of-Thought data.

|

| 20 |

|

| 21 |

+

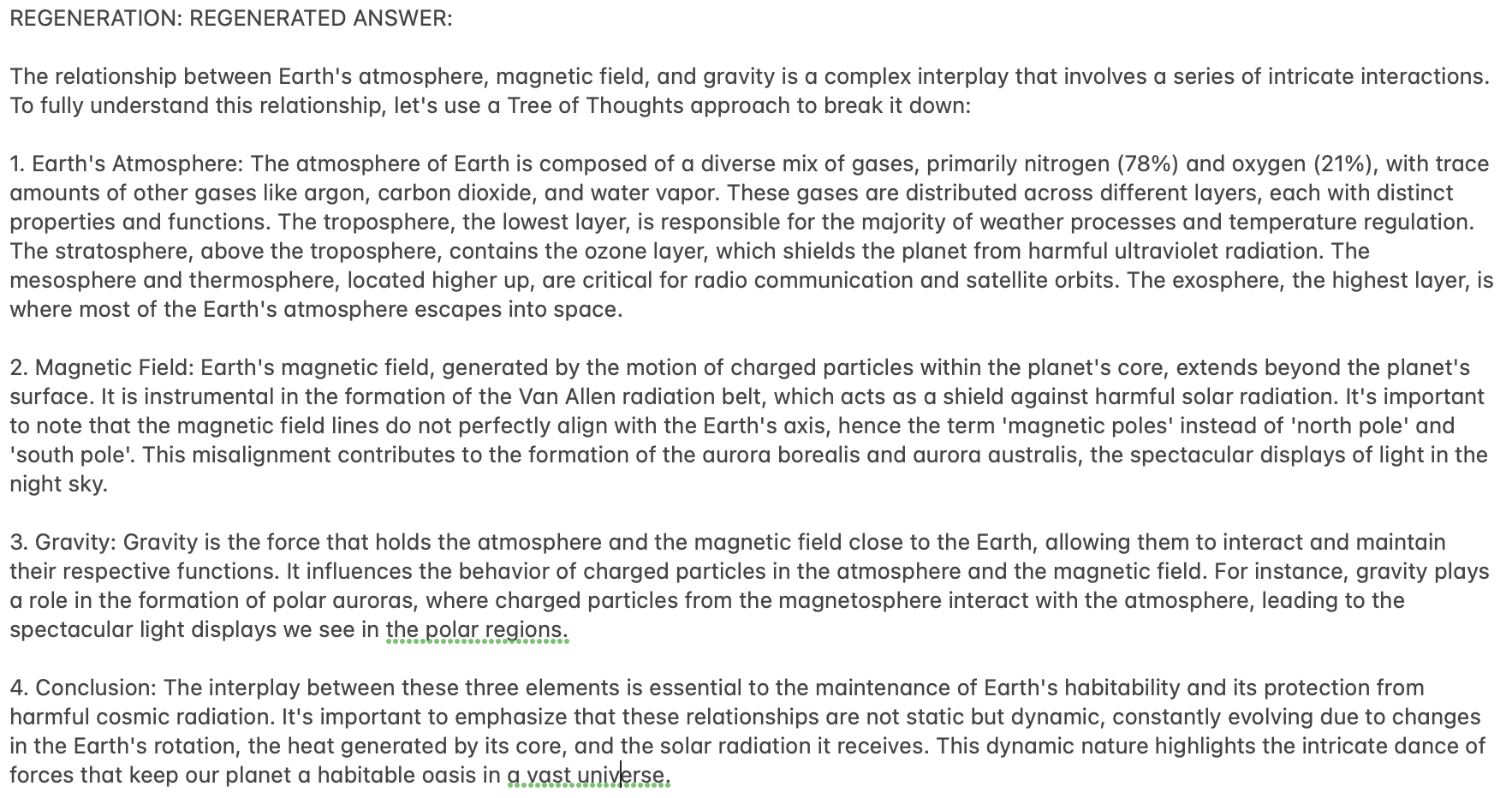

Here are the results for the Actor network on metrics used by [HuggingFaceH4 Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

|

| 22 |

+

|

| 23 |

+

||||

|

| 24 |

+

|:------:|:--------:|:-------:|

|

| 25 |

+

|**Task**|**Metric**|**Value**|

|

| 26 |

+

|*arc_challenge*|acc_norm|62.28|

|

| 27 |

+

|*hellaswag*|acc_norm|83.22|

|

| 28 |

+

|*mmlu*|acc_norm|63.10|

|

| 29 |

+

|*truthfulqa_mc*|mc2|50.10|

|

| 30 |

+

|**Total Average**|-|**0.64675**||

|

| 31 |

+

|

| 32 |

+

|

| 33 |

## Phase 2: Critic

To train the critic, the following process was followed:

@@ -127,4 +139,10 @@ while True:

    regenerator_response = generate_text(prompt_regenerator, model_regenerator, tokenizer_regenerator)
    print(f"REGENERATION: {regenerator_response}")

+

```

+
+
+
+
+
+
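
As a quick check of the results table added in this commit, the sketch below recomputes the Total Average row. It assumes the figure is the unweighted mean of the four task scores, reported as a fraction rather than a percentage; the commit does not state this, and the names `scores` and `total_average` are illustrative only.

```python
# Actor network scores copied from the results table in this commit (percent).
scores = {
    "arc_challenge": 62.28,
    "hellaswag": 83.22,
    "mmlu": 63.10,
    "truthfulqa_mc": 50.10,
}


def total_average(task_scores):
    """Unweighted mean of per-task percentages, returned as a fraction in [0, 1].

    Assumption: this is how the Total Average row was derived.
    """
    mean_percent = sum(task_scores.values()) / len(task_scores)
    return mean_percent / 100.0


average = total_average(scores)
print(f"{average:.5f}")  # prints 0.64675
```

Under that assumption the four percentages average to 64.675, which matches the 0.64675 shown in the table once expressed as a fraction.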