Add files using upload-large-folder tool

This view is limited to 50 files because it contains too many changes.

- build/lib/opencompass/configs/chatml_datasets/AMO_Bench/AMO_Bench_gen.py +12 -0

- build/lib/opencompass/configs/chatml_datasets/CPsyExam/CPsyExam_gen.py +12 -0

- build/lib/opencompass/configs/chatml_datasets/CS_Bench/CS_Bench_gen.py +25 -0

- build/lib/opencompass/configs/chatml_datasets/C_MHChem/C_MHChem_gen.py +12 -0

- build/lib/opencompass/configs/chatml_datasets/HMMT2025/HMMT2025_gen.py +12 -0

- build/lib/opencompass/configs/chatml_datasets/IMO_Bench_AnswerBench/IMO_Bench_AnswerBench_gen.py +12 -0

- build/lib/opencompass/configs/chatml_datasets/MaScQA/MaScQA_gen.py +12 -0

- build/lib/opencompass/configs/chatml_datasets/UGPhysics/UGPhysics_gen.py +36 -0

- build/lib/opencompass/configs/datasets/ARC_Prize_Public_Evaluation/README.md +47 -0

- build/lib/opencompass/configs/datasets/ARC_Prize_Public_Evaluation/arc_prize_public_evaluation_gen.py +4 -0

- build/lib/opencompass/configs/datasets/ARC_Prize_Public_Evaluation/arc_prize_public_evaluation_gen_872059.py +56 -0

- build/lib/opencompass/configs/datasets/ARC_Prize_Public_Evaluation/arc_prize_public_evaluation_gen_fedd04.py +56 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_clean_ppl.py +55 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_cot_gen_926652.py +53 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_few_shot_gen_e9b043.py +48 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_few_shot_ppl.py +63 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_gen.py +4 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_gen_1e0de5.py +44 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_ppl.py +4 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_ppl_2ef631.py +37 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_ppl_a450bd.py +54 -0

- build/lib/opencompass/configs/datasets/ARC_c/ARC_c_ppl_d52a21.py +36 -0

- build/lib/opencompass/configs/datasets/ARC_e/ARC_e_gen.py +4 -0

- build/lib/opencompass/configs/datasets/ARC_e/ARC_e_gen_1e0de5.py +44 -0

- build/lib/opencompass/configs/datasets/ARC_e/ARC_e_ppl.py +4 -0

- build/lib/opencompass/configs/datasets/ARC_e/ARC_e_ppl_2ef631.py +37 -0

- build/lib/opencompass/configs/datasets/ARC_e/ARC_e_ppl_a450bd.py +54 -0

- build/lib/opencompass/configs/datasets/ARC_e/ARC_e_ppl_d52a21.py +34 -0

- build/lib/opencompass/configs/datasets/BeyondAIME/beyondaime_cascade_eval_gen_5e9f4f.py +106 -0

- build/lib/opencompass/configs/datasets/BeyondAIME/beyondaime_gen.py +4 -0

- build/lib/opencompass/configs/datasets/CARDBiomedBench/CARDBiomedBench_llmjudge_gen_99a231.py +101 -0

- build/lib/opencompass/configs/datasets/CHARM/README.md +164 -0

- build/lib/opencompass/configs/datasets/CHARM/README_ZH.md +162 -0

- build/lib/opencompass/configs/datasets/CHARM/charm_memory_gen_bbbd53.py +63 -0

- build/lib/opencompass/configs/datasets/CHARM/charm_memory_settings.py +31 -0

- build/lib/opencompass/configs/datasets/CHARM/charm_reason_cot_only_gen_f7b7d3.py +50 -0

- build/lib/opencompass/configs/datasets/CHARM/charm_reason_gen.py +4 -0

- build/lib/opencompass/configs/datasets/CHARM/charm_reason_gen_f8fca2.py +49 -0

- build/lib/opencompass/configs/datasets/CHARM/charm_reason_ppl_3da4de.py +57 -0

- build/lib/opencompass/configs/datasets/CHARM/charm_reason_settings.py +36 -0

- build/lib/opencompass/configs/datasets/CIBench/CIBench_generation_gen_8ab0dc.py +35 -0

- build/lib/opencompass/configs/datasets/CIBench/CIBench_generation_oracle_gen_c4a7c1.py +35 -0

- build/lib/opencompass/configs/datasets/CIBench/CIBench_template_gen_e6b12a.py +39 -0

- build/lib/opencompass/configs/datasets/CIBench/CIBench_template_oracle_gen_fecda1.py +39 -0

- build/lib/opencompass/configs/datasets/CLUE_C3/CLUE_C3_gen.py +4 -0

- build/lib/opencompass/configs/datasets/CLUE_C3/CLUE_C3_gen_8c358f.py +51 -0

- build/lib/opencompass/configs/datasets/CLUE_C3/CLUE_C3_ppl.py +4 -0

- build/lib/opencompass/configs/datasets/CLUE_C3/CLUE_C3_ppl_56b537.py +36 -0

- build/lib/opencompass/configs/datasets/CLUE_C3/CLUE_C3_ppl_e24a31.py +37 -0

- build/lib/opencompass/configs/datasets/CLUE_CMRC/CLUE_CMRC_gen.py +4 -0

build/lib/opencompass/configs/chatml_datasets/AMO_Bench/AMO_Bench_gen.py

ADDED

@@ -0,0 +1,12 @@
+
+datasets = [
+    dict(
+        abbr='AMO-Bench',
+        path='./data/amo-bench.jsonl',
+        evaluator=dict(
+            type='llm_evaluator',
+            judge_cfg=dict(),
+        ),
+        n=1,
+    ),
+]

build/lib/opencompass/configs/chatml_datasets/CPsyExam/CPsyExam_gen.py

ADDED

@@ -0,0 +1,12 @@
+
+datasets = [
+    dict(
+        abbr='CPsyExam',
+        path='./data/CPsyExam/merged_train_dev.jsonl',
+        evaluator=dict(
+            type='llm_evaluator',
+            judge_cfg=dict(),
+        ),
+        n=1,
+    ),
+]

build/lib/opencompass/configs/chatml_datasets/CS_Bench/CS_Bench_gen.py

ADDED

@@ -0,0 +1,25 @@
+
+subset_list = [
+    'test',
+    'valid',
+]
+
+language_list = [
+    'CN',
+    'EN',
+]
+
+datasets = []
+
+for subset in subset_list:
+    for language in language_list:
+        datasets.append(
+            dict(
+                abbr=f'CS-Bench_{language}_{subset}',
+                path=f'./data/csbench/CSBench-{language}/{subset}.jsonl',
+                evaluator=dict(
+                    type='llm_evaluator',
+                    judge_cfg=dict(),
+                ),
+            )
+        )
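As a rough illustration of what the nested loops in `CS_Bench_gen.py` expand to, the sketch below rebuilds only the `abbr` and `path` fields with the same f-strings and prints the four resulting entries. The helper name `expand_cs_bench` is hypothetical and the `evaluator` field is left out for brevity; this is not part of the commit.

```python
from itertools import product


def expand_cs_bench(subsets, languages):
    """Rebuild the (abbr, path) pairs produced by the nested loops."""
    entries = []
    # product() keeps subsets in the outer position, matching the loop order.
    for subset, language in product(subsets, languages):
        entries.append(
            dict(
                abbr=f'CS-Bench_{language}_{subset}',
                path=f'./data/csbench/CSBench-{language}/{subset}.jsonl',
            )
        )
    return entries


entries = expand_cs_bench(['test', 'valid'], ['CN', 'EN'])
for entry in entries:
    print(entry['abbr'], '->', entry['path'])
```

Each subset/language pair becomes its own dataset entry, so adding a language to `language_list` adds one entry per subset.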

build/lib/opencompass/configs/chatml_datasets/C_MHChem/C_MHChem_gen.py

ADDED

@@ -0,0 +1,12 @@
+
+datasets = [
+    dict(
+        abbr='C-MHChem',
+        path='./data/C-MHChem2.jsonl',
+        evaluator=dict(
+            type='llm_evaluator',
+            judge_cfg=dict(),
+        ),
+        n=1,
+    ),
+]

build/lib/opencompass/configs/chatml_datasets/HMMT2025/HMMT2025_gen.py

ADDED

@@ -0,0 +1,12 @@
+
+datasets = [
+    dict(
+        abbr='HMMT2025',
+        path='./data/hmmt2025.jsonl',
+        evaluator=dict(
+            type='llm_evaluator',
+            judge_cfg=dict(),
+        ),
+        n=1,
+    ),
+]

build/lib/opencompass/configs/chatml_datasets/IMO_Bench_AnswerBench/IMO_Bench_AnswerBench_gen.py

ADDED

@@ -0,0 +1,12 @@
+
+datasets = [
+    dict(
+        abbr='IMO-Bench-AnswerBench',
+        path='./data/imo-bench-answerbench.jsonl',
+        evaluator=dict(
+            type='llm_evaluator',
+            judge_cfg=dict(),
+        ),
+        n=1,
+    ),
+]

build/lib/opencompass/configs/chatml_datasets/MaScQA/MaScQA_gen.py

ADDED

@@ -0,0 +1,12 @@
+
+datasets = [
+    dict(
+        abbr='MaScQA',
+        path='./data/MaScQA/MaScQA.jsonl',
+        evaluator=dict(
+            type='llm_evaluator',
+            judge_cfg=dict(),
+        ),
+        n=1,
+    ),
+]

build/lib/opencompass/configs/chatml_datasets/UGPhysics/UGPhysics_gen.py

ADDED

@@ -0,0 +1,36 @@
+
+subset_list = [
+    'AtomicPhysics',
+    'ClassicalElectromagnetism',
+    'ClassicalMechanics',
+    'Electrodynamics',
+    'GeometricalOptics',
+    'QuantumMechanics',
+    'Relativity',
+    'Solid-StatePhysics',
+    'StatisticalMechanics',
+    'SemiconductorPhysics',
+    'Thermodynamics',
+    'TheoreticalMechanics',
+    'WaveOptics',
+]
+
+language_list = [
+    'zh',
+    'en',
+]
+
+datasets = []
+
+for subset in subset_list:
+    for language in language_list:
+        datasets.append(
+            dict(
+                abbr=f'UGPhysics_{subset}_{language}',
+                path=f'./data/ugphysics/{subset}/{language}.jsonl',
+                evaluator=dict(
+                    type='llm_evaluator',
+                    judge_cfg=dict(),
+                ),
+            )
+        )

build/lib/opencompass/configs/datasets/ARC_Prize_Public_Evaluation/README.md

ADDED

|

@@ -0,0 +1,47 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

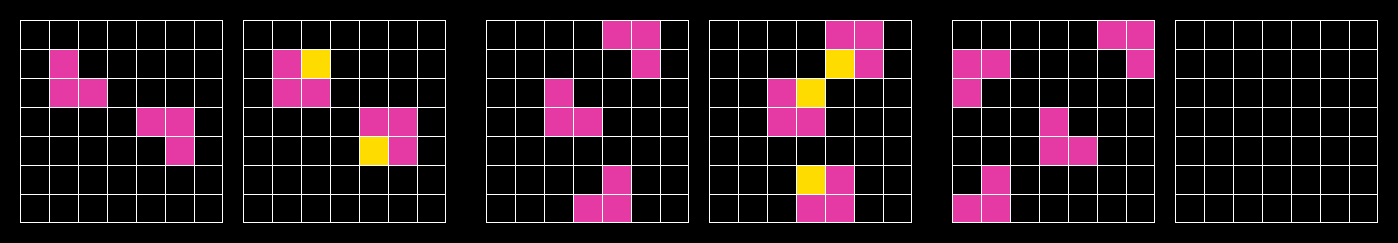

# ARC Prize Public Evaluation

|

| 2 |

+

|

| 3 |

+

#### Overview

|

| 4 |

+

The spirit of ARC Prize is to open source progress towards AGI. To win prize money, you will be required to publish reproducible code/methods into public domain.

|

| 5 |

+

|

| 6 |

+

ARC Prize measures AGI progress using the [ARC-AGI private evaluation set](https://arcprize.org/guide#private), [the leaderboard is here](https://arcprize.org/leaderboard), and the Grand Prize is unlocked once the first team reaches [at least 85%](https://arcprize.org/guide#grand-prize-goal).

|

| 7 |

+

|

| 8 |

+

Note: the private evaluation set imposes limitations on solutions (eg. no internet access, so no GPT-4/Claude/etc). There is a [secondary leaderboard](https://arcprize.org/leaderboard) called ARC-AGI-Pub, it measures the [public evaluation set](https://arcprize.org/guide#public-tasks) and imposes no limits but it is not part of ARC Prize 2024 at this time.

|

| 9 |

+

|

| 10 |

+

|

| 11 |

+

#### Tasks

|

| 12 |

+

ARC-AGI tasks are a series of three to five input and output tasks followed by a final task with only the input listed. Each task tests the utilization of a specific learned skill based on a minimal number of cognitive priors.

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

Tasks are represented as JSON lists of integers. These JSON objects can also be represented visually as a grid of colors using an ARC-AGI task viewer.

|

| 17 |

+

|

| 18 |

+

A successful submission is a pixel-perfect description (color and position) of the final task's output.

|

| 19 |

+

|

| 20 |

+

#### Format

|

| 21 |

+

|

| 22 |

+

As mentioned above, tasks are stored in JSON format. Each JSON file consists of two key-value pairs.

|

| 23 |

+

|

| 24 |

+

`train`: a list of two to ten input/output pairs (typically three.) These are used for your algorithm to infer a rule.

|

| 25 |

+

|

| 26 |

+

`test`: a list of one to three input/output pairs (typically one.) Your model should apply the inferred rule from the train set and construct an output solution. You will have access to the output test solution on the public data. The output solution on the private evaluation set will not be revealed.

|

| 27 |

+

|

| 28 |

+

Here is an example of a simple ARC-AGI task that has three training pairs along with a single test pair. Each pair is shown as a 2x2 grid. There are four colors represented by the integers 1, 4, 6, and 8. Which actual color (red/green/blue/black) is applied to each integer is arbitrary and up to you.

|

| 29 |

+

|

| 30 |

+

```json

|

| 31 |

+

{

|

| 32 |

+

"train": [

|

| 33 |

+

{"input": [[1, 0], [0, 0]], "output": [[1, 1], [1, 1]]},

|

| 34 |

+

{"input": [[0, 0], [4, 0]], "output": [[4, 4], [4, 4]]},

|

| 35 |

+

{"input": [[0, 0], [6, 0]], "output": [[6, 6], [6, 6]]}

|

| 36 |

+

],

|

| 37 |

+

"test": [

|

| 38 |

+

{"input": [[0, 0], [0, 8]], "output": [[8, 8], [8, 8]]}

|

| 39 |

+

]

|

| 40 |

+

}

|

| 41 |

+

```

|

| 42 |

+

|

| 43 |

+

#### Performance

|

| 44 |

+

|

| 45 |

+

| Qwen2.5-72B-Instruct | LLaMA3.1-70B-Instruct | gemma-2-27b-it |

|

| 46 |

+

| ----- | ----- | ----- |

|

| 47 |

+

| 0.09 | 0.06 | 0.05 |

|
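To make the task format in the README concrete, here is a minimal sketch that parses the README's example task, checks a candidate rule against every `train` pair, and then applies the pixel-perfect criterion to the `test` pair. The functions `fill_with_nonzero` and `is_pixel_perfect` are hypothetical helpers written for this toy task only; they are not part of OpenCompass or of the ARC Prize tooling.

```python
import json

# The example task from the README, embedded as a string so the sketch is self-contained.
TASK_JSON = '''
{
  "train": [
    {"input": [[1, 0], [0, 0]], "output": [[1, 1], [1, 1]]},
    {"input": [[0, 0], [4, 0]], "output": [[4, 4], [4, 4]]},
    {"input": [[0, 0], [6, 0]], "output": [[6, 6], [6, 6]]}
  ],
  "test": [
    {"input": [[0, 0], [0, 8]], "output": [[8, 8], [8, 8]]}
  ]
}
'''


def fill_with_nonzero(grid):
    """Hypothetical rule for this toy task: flood the grid with its single non-zero value."""
    color = max(cell for row in grid for cell in row)
    return [[color for _ in row] for row in grid]


def is_pixel_perfect(predicted, expected):
    """A prediction counts only if every cell matches in value and position."""
    return predicted == expected


task = json.loads(TASK_JSON)
# Check that the rule explains every training pair before trusting it on the test input.
rule_fits = all(fill_with_nonzero(p['input']) == p['output'] for p in task['train'])
test_pair = task['test'][0]
solved = is_pixel_perfect(fill_with_nonzero(test_pair['input']), test_pair['output'])
print(rule_fits, solved)
```

Because scoring is all-or-nothing per grid, a prediction that differs in a single cell is treated the same as a completely wrong one.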

build/lib/opencompass/configs/datasets/ARC_Prize_Public_Evaluation/arc_prize_public_evaluation_gen.py

ADDED

@@ -0,0 +1,4 @@
+from mmengine.config import read_base
+
+with read_base():
+    from .arc_prize_public_evaluation_gen_872059 import arc_prize_public_evaluation_datasets  # noqa: F401, F403

build/lib/opencompass/configs/datasets/ARC_Prize_Public_Evaluation/arc_prize_public_evaluation_gen_872059.py

ADDED

@@ -0,0 +1,56 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import GenInferencer
+from opencompass.datasets.arc_prize_public_evaluation import ARCPrizeDataset, ARCPrizeEvaluator
+
+
+# The system_prompt defines the initial instructions for the model,
+# setting the context for solving ARC tasks.
+system_prompt = '''You are a puzzle solving wizard. You are given a puzzle from the abstraction and reasoning corpus developed by Francois Chollet.'''
+
+# User message template is a template for creating user prompts. It includes placeholders for training data and test input data,
+# guiding the model to learn the rule and apply it to solve the given puzzle.
+user_message_template = '''Here are the example input and output pairs from which you should learn the underlying rule to later predict the output for the given test input:
+----------------------------------------
+{training_data}
+----------------------------------------
+Now, solve the following puzzle based on its input grid by applying the rules you have learned from the training data.:
+----------------------------------------
+[{{'input': {input_test_data}, 'output': [[]]}}]
+----------------------------------------
+What is the output grid? Only provide the output grid in the form as in the example input and output pairs. Do not provide any additional information:'''
+
+
+arc_prize_public_evaluation_reader_cfg = dict(
+    input_columns=['training_data', 'input_test_data'],
+    output_column='output_test_data'
+)
+
+arc_prize_public_evaluation_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template=dict(
+            round=[
+                dict(role='SYSTEM', prompt=system_prompt),
+                dict(role='HUMAN', prompt=user_message_template),
+            ],
+        )
+    ),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=GenInferencer, max_out_len=2048)
+)
+
+arc_prize_public_evaluation_eval_cfg = dict(
+    evaluator=dict(type=ARCPrizeEvaluator)
+)
+
+arc_prize_public_evaluation_datasets = [
+    dict(
+        abbr='ARC_Prize_Public_Evaluation',
+        type=ARCPrizeDataset,
+        path='opencompass/arc_prize_public_evaluation',
+        reader_cfg=arc_prize_public_evaluation_reader_cfg,
+        infer_cfg=arc_prize_public_evaluation_infer_cfg,
+        eval_cfg=arc_prize_public_evaluation_eval_cfg
+    )
+]

build/lib/opencompass/configs/datasets/ARC_Prize_Public_Evaluation/arc_prize_public_evaluation_gen_fedd04.py

ADDED

@@ -0,0 +1,56 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import GenInferencer
+from opencompass.datasets.arc_prize_public_evaluation import ARCPrizeDataset, ARCPrizeEvaluator
+
+
+# The system_prompt defines the initial instructions for the model,
+# setting the context for solving ARC tasks.
+system_prompt = '''You are a puzzle solving wizard. You are given a puzzle from the abstraction and reasoning corpus developed by Francois Chollet.'''
+
+# User message template is a template for creating user prompts. It includes placeholders for training data and test input data,
+# guiding the model to learn the rule and apply it to solve the given puzzle.
+user_message_template = '''Here are the example input and output pairs from which you should learn the underlying rule to later predict the output for the given test input:
+----------------------------------------
+{training_data}
+----------------------------------------
+Now, solve the following puzzle based on its input grid by applying the rules you have learned from the training data.:
+----------------------------------------
+[{{'input': {input_test_data}, 'output': [[]]}}]
+----------------------------------------
+What is the output grid? Only provide the output grid in the form as in the example input and output pairs. Do not provide any additional information:'''
+
+
+arc_prize_public_evaluation_reader_cfg = dict(
+    input_columns=['training_data', 'input_test_data'],
+    output_column='output_test_data'
+)
+
+arc_prize_public_evaluation_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template=dict(
+            round=[
+                dict(role='SYSTEM',fallback_role='HUMAN', prompt=system_prompt),
+                dict(role='HUMAN', prompt=user_message_template),
+            ],
+        )
+    ),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=GenInferencer)
+)
+
+arc_prize_public_evaluation_eval_cfg = dict(
+    evaluator=dict(type=ARCPrizeEvaluator)
+)
+
+arc_prize_public_evaluation_datasets = [
+    dict(
+        abbr='ARC_Prize_Public_Evaluation',
+        type=ARCPrizeDataset,
+        path='opencompass/arc_prize_public_evaluation',
+        reader_cfg=arc_prize_public_evaluation_reader_cfg,
+        infer_cfg=arc_prize_public_evaluation_infer_cfg,
+        eval_cfg=arc_prize_public_evaluation_eval_cfg
+    )
+]

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_clean_ppl.py

ADDED

@@ -0,0 +1,55 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import PPLInferencer
+from opencompass.openicl.icl_evaluator import AccContaminationEvaluator
+from opencompass.datasets import ARCDatasetClean as ARCDataset
+
+ARC_c_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey')
+
+ARC_c_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template={
+            'A':
+            dict(
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textA}')
+                ], ),
+            'B':
+            dict(
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textB}')
+                ], ),
+            'C':
+            dict(
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textC}')
+                ], ),
+            'D':
+            dict(
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textD}')
+                ], ),
+        }),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=PPLInferencer))
+
+ARC_c_eval_cfg = dict(evaluator=dict(type=AccContaminationEvaluator),
+                      analyze_contamination=True)
+
+ARC_c_datasets = [
+    dict(
+        type=ARCDataset,
+        abbr='ARC-c-test',
+        path='opencompass/ai2_arc-test',
+        name='ARC-Challenge',
+        reader_cfg=ARC_c_reader_cfg,
+        infer_cfg=ARC_c_infer_cfg,
+        eval_cfg=ARC_c_eval_cfg)
+]
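The `ARC_c_clean_ppl.py` config defines one prompt per answer label (A to D), each ending in a different option text. A perplexity-based setup is generally understood to score each rendered prompt and choose the label whose text the model finds least surprising. The sketch below illustrates only that selection step with made-up token log-probabilities; `perplexity` and `pick_label` are hypothetical helpers, not the `PPLInferencer` implementation, whose internals are not shown in this diff.

```python
import math


def perplexity(token_logprobs):
    """Perplexity is exp of the negative mean token log-probability."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


def pick_label(logprobs_by_label):
    """Return the label whose rendered prompt has the lowest perplexity."""
    return min(logprobs_by_label, key=lambda label: perplexity(logprobs_by_label[label]))


# Made-up token log-probabilities for the four rendered prompts (A to D).
fake_scores = {
    'A': [-2.1, -1.9, -2.4],
    'B': [-0.4, -0.6, -0.5],
    'C': [-1.2, -1.5, -1.1],
    'D': [-3.0, -2.8, -2.9],
}
print(pick_label(fake_scores))
```

Averaging over tokens before exponentiating keeps longer option texts from being penalized purely for their length.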

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_cot_gen_926652.py

ADDED

@@ -0,0 +1,53 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import GenInferencer
+from opencompass.openicl.icl_evaluator import AccEvaluator
+from opencompass.datasets import ARCDataset
+from opencompass.utils.text_postprocessors import first_option_postprocess, match_answer_pattern
+
+QUERY_TEMPLATE = """
+Answer the following multiple choice question. The last line of your response should be of the following format: 'ANSWER: $LETTER' (without quotes) where LETTER is one of ABCD. Think step by step before answering.
+
+{question}
+
+A. {textA}
+B. {textB}
+C. {textC}
+D. {textD}
+""".strip()
+
+ARC_c_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey')
+
+ARC_c_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template=dict(
+            round=[
+                dict(
+                    role='HUMAN',
+                    prompt=QUERY_TEMPLATE)
+            ], ),
+    ),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=GenInferencer),
+)
+
+ARC_c_eval_cfg = dict(
+    evaluator=dict(type=AccEvaluator),
+    pred_role='BOT',
+    pred_postprocessor=dict(type=first_option_postprocess, options='ABCD'),
+)
+
+ARC_c_datasets = [
+    dict(
+        abbr='ARC-c',
+        type=ARCDataset,
+        path='opencompass/ai2_arc-dev',
+        name='ARC-Challenge',
+        reader_cfg=ARC_c_reader_cfg,
+        infer_cfg=ARC_c_infer_cfg,
+        eval_cfg=ARC_c_eval_cfg,
+    )
+]
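`QUERY_TEMPLATE` asks the model to end its response with a line of the form `ANSWER: $LETTER`. As a sketch of how such a line could be parsed strictly, the snippet below uses a regular expression on the last non-empty line. This is a simplified stand-in written for illustration: the config itself uses `first_option_postprocess`, whose matching rules are not shown in this diff and may be more lenient. `ANSWER_PATTERN` and `extract_answer` are hypothetical names.

```python
import re

# Matches a final line such as "ANSWER: C"; the option set ABCD comes from the prompt.
ANSWER_PATTERN = re.compile(r'ANSWER:\s*([ABCD])\s*$')


def extract_answer(response):
    """Return the letter on the last non-empty line, or None if the format is not followed."""
    lines = [line for line in response.strip().splitlines() if line.strip()]
    if not lines:
        return None
    match = ANSWER_PATTERN.search(lines[-1])
    return match.group(1) if match else None


print(extract_answer('Plants need light to photosynthesize.\nANSWER: B'))
print(extract_answer('I think it is B.'))
```

A strict parser like this returns `None` for free-form answers, which makes format violations visible instead of silently guessing a letter.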

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_few_shot_gen_e9b043.py

ADDED

@@ -0,0 +1,48 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever, FixKRetriever
+from opencompass.openicl.icl_inferencer import GenInferencer
+from opencompass.openicl.icl_evaluator import AccEvaluator
+from opencompass.datasets import ARCDataset
+from opencompass.utils.text_postprocessors import first_capital_postprocess
+
+ARC_c_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey',
+)
+
+ARC_c_infer_cfg = dict(
+    ice_template=dict(
+        type=PromptTemplate,
+        template=dict(
+            begin='</E>',
+            round=[
+                dict(
+                    role='HUMAN',
+                    prompt='Question: {question}\nA. {textA}\nB. {textB}\nC. {textC}\nD. {textD}\nAnswer:',
+                ),
+                dict(role='BOT', prompt='{answerKey}'),
+            ],
+        ),
+        ice_token='</E>',
+    ),
+    retriever=dict(type=FixKRetriever, fix_id_list=[0, 2, 4, 6, 8]),
+    inferencer=dict(type=GenInferencer, max_out_len=50),
+)
+
+ARC_c_eval_cfg = dict(
+    evaluator=dict(type=AccEvaluator),
+    pred_role='BOT',
+    pred_postprocessor=dict(type=first_capital_postprocess),
+)
+
+ARC_c_datasets = [
+    dict(
+        abbr='ARC-c',
+        type=ARCDataset,
+        path='opencompass/ai2_arc-dev',
+        name='ARC-Challenge',
+        reader_cfg=ARC_c_reader_cfg,
+        infer_cfg=ARC_c_infer_cfg,
+        eval_cfg=ARC_c_eval_cfg,
+    )
+]
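The few-shot config above pairs an in-context example template with `fix_id_list=[0, 2, 4, 6, 8]`, which suggests that the same five examples are prepended to every query. The sketch below shows that idea with plain string formatting and a synthetic example pool. It is an assumption-laden illustration: `build_prompt` is a hypothetical helper, the way OpenCompass joins turns and inserts role markers is not reproduced, and the diff does not show which split the indices refer to.

```python
FIX_ID_LIST = [0, 2, 4, 6, 8]  # taken from the config above

ICE_TEMPLATE = (
    'Question: {question}\nA. {textA}\nB. {textB}\nC. {textC}\nD. {textD}\nAnswer:'
)


def build_prompt(pool, query, ids=FIX_ID_LIST):
    """Prepend the same fixed examples (with answers) to the unanswered query."""
    shots = [ICE_TEMPLATE.format(**pool[i]) + ' ' + pool[i]['answerKey'] for i in ids]
    return '\n'.join(shots + [ICE_TEMPLATE.format(**query)])


# Synthetic pool of ten examples; real data would come from the dataset itself.
pool = [
    dict(question=f'Q{i}?', textA='a', textB='b', textC='c', textD='d',
         answerKey='ABCD'[i % 4])
    for i in range(10)
]
query = dict(question='Which option?', textA='w', textB='x', textC='y', textD='z')
prompt = build_prompt(pool, query)
print(prompt)
```

Fixing the indices keeps the demonstrations identical across queries and runs, so score differences between models are not caused by different example selections.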

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_few_shot_ppl.py

ADDED

|

@@ -0,0 +1,63 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever, FixKRetriever
+from opencompass.openicl.icl_inferencer import PPLInferencer
+from opencompass.openicl.icl_evaluator import AccEvaluator
+from opencompass.datasets import ARCDataset
+
+ARC_c_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey',
+)
+
+ARC_c_infer_cfg = dict(
+    ice_template=dict(
+        type=PromptTemplate,
+        template={
+            'A': dict(
+                begin='</E>',
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textA}'),
+                ],
+            ),
+            'B': dict(
+                begin='</E>',
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textB}'),
+                ],
+            ),
+            'C': dict(
+                begin='</E>',
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textC}'),
+                ],
+            ),
+            'D': dict(
+                begin='</E>',
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textD}'),
+                ],
+            ),
+        },
+        ice_token='</E>',
+    ),
+    retriever=dict(type=FixKRetriever, fix_id_list=[0, 2, 4, 6, 8]),
+    inferencer=dict(type=PPLInferencer),
+)
+
+ARC_c_eval_cfg = dict(evaluator=dict(type=AccEvaluator))
+
+ARC_c_datasets = [
+    dict(
+        type=ARCDataset,
+        abbr='ARC-c',
+        path='opencompass/ai2_arc-dev',
+        name='ARC-Challenge',
+        reader_cfg=ARC_c_reader_cfg,
+        infer_cfg=ARC_c_infer_cfg,
+        eval_cfg=ARC_c_eval_cfg,
+    )
+]
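The config above defines one template per answer label and pairs it with `PPLInferencer`. As a rough illustration of the general idea (a sketch, not OpenCompass's implementation), the snippet below renders the four candidate strings for one row and picks the label whose candidate scores lowest. `toy_score` is a hypothetical stand-in for a model's perplexity, and the sample row is made up.

```python
# Illustrative sketch only; none of these helpers are OpenCompass APIs.

# One template per label, mirroring the 'A'..'D' keys in the config.
TEMPLATES = {
    label: 'Question: {question}\nAnswer: ' + '{text' + label + '}'
    for label in 'ABCD'
}


def render_candidates(row):
    """Fill every per-label template with the columns of one dataset row."""
    return {label: tpl.format(**row) for label, tpl in TEMPLATES.items()}


def pick_lowest(candidates, score_fn):
    """Return the label whose rendered text gets the lowest score."""
    return min(candidates, key=lambda label: score_fn(candidates[label]))


def toy_score(text):
    # Hypothetical stand-in for perplexity: shorter text scores lower.
    return len(text)


row = {
    'question': 'Which is a gas?',
    'textA': 'granite',
    'textB': 'air',
    'textC': 'copper wire',
    'textD': 'ice cube',
}
candidates = render_candidates(row)
best = pick_lowest(candidates, toy_score)
```

In a real run the score would come from the evaluated model, and the chosen label would be compared against the `answerKey` column.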

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_gen.py

ADDED

@@ -0,0 +1,4 @@
+from mmengine.config import read_base
+
+with read_base():
+    from .ARC_c_gen_1e0de5 import ARC_c_datasets  # noqa: F401, F403

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_gen_1e0de5.py

ADDED

@@ -0,0 +1,44 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import GenInferencer
+from opencompass.openicl.icl_evaluator import AccEvaluator
+from opencompass.datasets import ARCDataset
+from opencompass.utils.text_postprocessors import first_option_postprocess
+
+ARC_c_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey')
+
+ARC_c_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template=dict(
+            round=[
+                dict(
+                    role='HUMAN',
+                    prompt=
+                    'Question: {question}\nA. {textA}\nB. {textB}\nC. {textC}\nD. {textD}\nAnswer:'
+                )
+            ], ),
+    ),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=GenInferencer),
+)
+
+ARC_c_eval_cfg = dict(
+    evaluator=dict(type=AccEvaluator),
+    pred_role='BOT',
+    pred_postprocessor=dict(type=first_option_postprocess, options='ABCD'),
+)
+
+ARC_c_datasets = [
+    dict(
+        abbr='ARC-c',
+        type=ARCDataset,
+        path='opencompass/ai2_arc-dev',
+        name='ARC-Challenge',
+        reader_cfg=ARC_c_reader_cfg,
+        infer_cfg=ARC_c_infer_cfg,
+        eval_cfg=ARC_c_eval_cfg,
+    )
+]
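The generation config above asks the model for free text and then reduces it to an option letter before scoring accuracy. A minimal sketch of that flow follows, assuming a deliberately simplified regex in place of `first_option_postprocess` (whose actual matching rules are not shown in this diff). `extract_first_option` and `accuracy` are hypothetical helpers written for illustration.

```python
import re

# Same prompt string as in the config above.
PROMPT = ('Question: {question}\nA. {textA}\nB. {textB}\n'
          'C. {textC}\nD. {textD}\nAnswer:')


def extract_first_option(text, options='ABCD'):
    """Return the first standalone option letter in text, or '' if none.

    Simplified assumption: a capital letter surrounded by word boundaries.
    This would also match the article "A", so it is only a rough stand-in.
    """
    match = re.search(rf'\b([{options}])\b', text)
    return match.group(1) if match else ''


def accuracy(predictions, references):
    """Percentage of predictions that equal their reference label."""
    correct = sum(p == r for p, r in zip(predictions, references))
    return 100 * correct / len(references)


rendered = PROMPT.format(question='Which is a gas?', textA='granite',
                         textB='air', textC='copper wire', textD='ice cube')
raw_outputs = ['The answer is B.', ' C', 'no idea']
predictions = [extract_first_option(out) for out in raw_outputs]
score = accuracy(predictions, ['B', 'C', 'D'])
```

An output with no recognizable letter yields an empty prediction here, which simply counts as wrong.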

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_ppl.py

ADDED

@@ -0,0 +1,4 @@
+from mmengine.config import read_base
+
+with read_base():
+    from .ARC_c_ppl_a450bd import ARC_c_datasets  # noqa: F401, F403

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_ppl_2ef631.py

ADDED

@@ -0,0 +1,37 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import PPLInferencer
+from opencompass.openicl.icl_evaluator import AccEvaluator
+from opencompass.datasets import ARCDataset
+
+ARC_c_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey')
+
+ARC_c_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template={
+            opt: dict(
+                round=[
+                    dict(role='HUMAN', prompt=f'{{question}}\nA. {{textA}}\nB. {{textB}}\nC. {{textC}}\nD. {{textD}}'),
+                    dict(role='BOT', prompt=f'Answer: {opt}'),
+                ]
+            ) for opt in ['A', 'B', 'C', 'D']
+        },
+    ),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=PPLInferencer))
+
+ARC_c_eval_cfg = dict(evaluator=dict(type=AccEvaluator))
+
+ARC_c_datasets = [
+    dict(
+        type=ARCDataset,
+        abbr='ARC-c',
+        path='opencompass/ai2_arc-dev',
+        name='ARC-Challenge',
+        reader_cfg=ARC_c_reader_cfg,
+        infer_cfg=ARC_c_infer_cfg,
+        eval_cfg=ARC_c_eval_cfg)
+]
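The template in this file relies on two plain-Python details that are easy to misread: doubled braces inside an f-string produce literal `{` and `}`, and the dict comprehension generates one entry per option letter. The standalone sketch below reproduces only that construction, with no OpenCompass imports; `human_prompt` and `template` are local names chosen for illustration.

```python
# Doubled braces in an f-string are emitted as single literal braces,
# so the placeholders survive for later substitution by the framework.
human_prompt = (
    f'{{question}}\nA. {{textA}}\nB. {{textB}}\nC. {{textC}}\nD. {{textD}}'
)

# One template entry per option; only the BOT line differs between entries.
template = {
    opt: dict(round=[
        dict(role='HUMAN', prompt=human_prompt),
        dict(role='BOT', prompt=f'Answer: {opt}'),
    ])
    for opt in ['A', 'B', 'C', 'D']
}

for opt, entry in template.items():
    print(opt, repr(entry['round'][1]['prompt']))
```

Every entry shares the same HUMAN prompt listing all four choices, so the candidates differ only in the final answer letter.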

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_ppl_a450bd.py

ADDED

@@ -0,0 +1,54 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import PPLInferencer
+from opencompass.openicl.icl_evaluator import AccEvaluator
+from opencompass.datasets import ARCDataset
+
+ARC_c_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey')
+
+ARC_c_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template={
+            'A':
+            dict(
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textA}')
+                ], ),
+            'B':
+            dict(
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textB}')
+                ], ),
+            'C':
+            dict(
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textC}')
+                ], ),
+            'D':
+            dict(
+                round=[
+                    dict(role='HUMAN', prompt='Question: {question}\nAnswer: '),
+                    dict(role='BOT', prompt='{textD}')
+                ], ),
+        }),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=PPLInferencer))
+
+ARC_c_eval_cfg = dict(evaluator=dict(type=AccEvaluator))
+
+ARC_c_datasets = [
+    dict(
+        type=ARCDataset,
+        abbr='ARC-c',
+        path='opencompass/ai2_arc-dev',
+        name='ARC-Challenge',
+        reader_cfg=ARC_c_reader_cfg,
+        infer_cfg=ARC_c_infer_cfg,
+        eval_cfg=ARC_c_eval_cfg)
+]

build/lib/opencompass/configs/datasets/ARC_c/ARC_c_ppl_d52a21.py

ADDED

@@ -0,0 +1,36 @@
+from mmengine.config import read_base
+# with read_base():
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import PPLInferencer
+from opencompass.openicl.icl_evaluator import AccEvaluator
+from opencompass.datasets import ARCDataset
+
+ARC_c_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey')
+
+ARC_c_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template={
+            'A': 'Question: {question}\nAnswer: {textA}',
+            'B': 'Question: {question}\nAnswer: {textB}',
+            'C': 'Question: {question}\nAnswer: {textC}',
+            'D': 'Question: {question}\nAnswer: {textD}'
+        }),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=PPLInferencer))
+
+ARC_c_eval_cfg = dict(evaluator=dict(type=AccEvaluator))
+
+ARC_c_datasets = [
+    dict(
+        type=ARCDataset,
+        abbr='ARC-c',
+        path='opencompass/ai2_arc-dev',
+        name='ARC-Challenge',
+        reader_cfg=ARC_c_reader_cfg,
+        infer_cfg=ARC_c_infer_cfg,
+        eval_cfg=ARC_c_eval_cfg)
+]

build/lib/opencompass/configs/datasets/ARC_e/ARC_e_gen.py

ADDED

@@ -0,0 +1,4 @@
+from mmengine.config import read_base
+
+with read_base():
+    from .ARC_e_gen_1e0de5 import ARC_e_datasets  # noqa: F401, F403

build/lib/opencompass/configs/datasets/ARC_e/ARC_e_gen_1e0de5.py

ADDED

@@ -0,0 +1,44 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import GenInferencer
+from opencompass.openicl.icl_evaluator import AccEvaluator
+from opencompass.datasets import ARCDataset
+from opencompass.utils.text_postprocessors import first_option_postprocess
+
+ARC_e_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey')
+
+ARC_e_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template=dict(
+            round=[
+                dict(
+                    role='HUMAN',
+                    prompt=
+                    'Question: {question}\nA. {textA}\nB. {textB}\nC. {textC}\nD. {textD}\nAnswer:'
+                )
+            ], ),
+    ),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=GenInferencer),
+)
+
+ARC_e_eval_cfg = dict(
+    evaluator=dict(type=AccEvaluator),
+    pred_role='BOT',
+    pred_postprocessor=dict(type=first_option_postprocess, options='ABCD'),
+)
+
+ARC_e_datasets = [
+    dict(
+        abbr='ARC-e',
+        type=ARCDataset,
+        path='opencompass/ai2_arc-easy-dev',
+        name='ARC-Easy',
+        reader_cfg=ARC_e_reader_cfg,
+        infer_cfg=ARC_e_infer_cfg,
+        eval_cfg=ARC_e_eval_cfg,
+    )
+]

build/lib/opencompass/configs/datasets/ARC_e/ARC_e_ppl.py

ADDED

@@ -0,0 +1,4 @@
+from mmengine.config import read_base
+
+with read_base():
+    from .ARC_e_ppl_a450bd import ARC_e_datasets  # noqa: F401, F403

build/lib/opencompass/configs/datasets/ARC_e/ARC_e_ppl_2ef631.py

ADDED

@@ -0,0 +1,37 @@
+from opencompass.openicl.icl_prompt_template import PromptTemplate
+from opencompass.openicl.icl_retriever import ZeroRetriever
+from opencompass.openicl.icl_inferencer import PPLInferencer
+from opencompass.openicl.icl_evaluator import AccEvaluator
+from opencompass.datasets import ARCDataset
+
+ARC_e_reader_cfg = dict(
+    input_columns=['question', 'textA', 'textB', 'textC', 'textD'],
+    output_column='answerKey')
+
+ARC_e_infer_cfg = dict(
+    prompt_template=dict(
+        type=PromptTemplate,
+        template={
+            opt: dict(
+                round=[
+                    dict(role='HUMAN', prompt=f'{{question}}\nA. {{textA}}\nB. {{textB}}\nC. {{textC}}\nD. {{textD}}'),
+                    dict(role='BOT', prompt=f'Answer: {opt}'),
+                ]
+            ) for opt in ['A', 'B', 'C', 'D']
+        },
+    ),
+    retriever=dict(type=ZeroRetriever),
+    inferencer=dict(type=PPLInferencer))
+
+ARC_e_eval_cfg = dict(evaluator=dict(type=AccEvaluator))
+
+ARC_e_datasets = [
+    dict(
+        type=ARCDataset,
+        abbr='ARC-e',
+        path='opencompass/ai2_arc-easy-dev',
+        name='ARC-Easy',
+        reader_cfg=ARC_e_reader_cfg,
+        infer_cfg=ARC_e_infer_cfg,
+        eval_cfg=ARC_e_eval_cfg)
+]