Add files using upload-large-folder tool

This view is limited to 50 files because it contains too many changes.

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/chunk_h_bwd.py +173 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/chunk_h_fwd.py +173 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/chunk_o_bwd.py +428 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/chunk_o_fwd.py +123 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/fused_recurrent.py +273 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/naive.py +96 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/wy_fast_bwd.py +164 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/wy_fast_fwd.py +284 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/iplr/__init__.py +7 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/iplr/chunk.py +500 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/iplr/fused_recurrent.py +452 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/iplr/naive.py +69 -0

- build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/iplr/wy_fast.py +300 -0

- docs/en/.readthedocs.yaml +17 -0

- docs/en/Makefile +20 -0

- docs/en/_static/css/readthedocs.css +62 -0

- docs/en/_static/image/logo.svg +79 -0

- docs/en/_static/image/logo_icon.svg +31 -0

- docs/en/_static/js/custom.js +20 -0

- docs/en/_templates/404.html +18 -0

- docs/en/_templates/autosummary/class.rst +13 -0

- docs/en/_templates/callable.rst +14 -0

- docs/en/advanced_guides/accelerator_intro.md +142 -0

- docs/en/advanced_guides/circular_eval.md +113 -0

- docs/en/advanced_guides/code_eval.md +104 -0

- docs/en/advanced_guides/code_eval_service.md +224 -0

- docs/en/advanced_guides/contamination_eval.md +124 -0

- docs/en/advanced_guides/custom_dataset.md +267 -0

- docs/en/advanced_guides/evaluation_lightllm.md +71 -0

- docs/en/advanced_guides/evaluation_lmdeploy.md +88 -0

- docs/en/advanced_guides/llm_judge.md +370 -0

- docs/en/advanced_guides/longeval.md +169 -0

- docs/en/advanced_guides/math_verify.md +190 -0

- docs/en/advanced_guides/needleinahaystack_eval.md +138 -0

- docs/en/advanced_guides/new_dataset.md +105 -0

- docs/en/advanced_guides/new_model.md +73 -0

- docs/en/advanced_guides/objective_judgelm_evaluation.md +186 -0

- docs/en/advanced_guides/persistence.md +65 -0

- docs/en/advanced_guides/prompt_attack.md +108 -0

- docs/en/advanced_guides/subjective_evaluation.md +171 -0

- docs/en/conf.py +234 -0

- docs/en/docutils.conf +2 -0

- docs/en/get_started/faq.md +128 -0

- docs/en/get_started/installation.md +142 -0

- docs/en/get_started/quick_start.md +300 -0

- docs/en/index.rst +99 -0

- docs/en/notes/academic.md +106 -0

- docs/en/notes/contribution_guide.md +158 -0

- docs/en/notes/news.md +40 -0

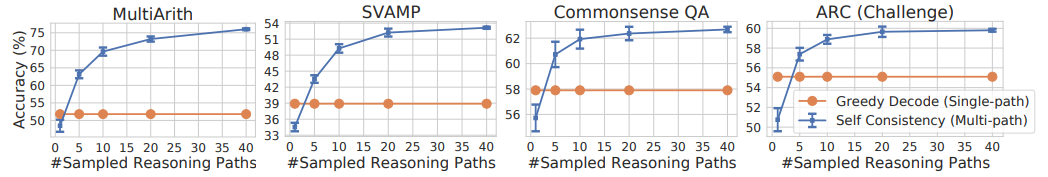

- docs/en/prompt/chain_of_thought.md +127 -0

build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/chunk_h_bwd.py

ADDED

@@ -0,0 +1,173 @@
+# -*- coding: utf-8 -*-
+# Copyright (c) 2023-2025, Songlin Yang, Yu Zhang
+
+from typing import Optional, Tuple
+
+import torch
+import triton
+import triton.language as tl
+
+from ....ops.utils import prepare_chunk_indices, prepare_chunk_offsets
+from ....ops.utils.op import exp
+from ....utils import check_shared_mem, use_cuda_graph
+
+
+@triton.heuristics({
+    'USE_FINAL_STATE_GRADIENT': lambda args: args['dht'] is not None,
+    'USE_INITIAL_STATE': lambda args: args['dh0'] is not None,
+    'IS_VARLEN': lambda args: args['cu_seqlens'] is not None,
+})
+@triton.autotune(
+    configs=[
+        triton.Config({}, num_warps=num_warps, num_stages=num_stages)
+        for num_warps in [2, 4, 8, 16, 32]
+        for num_stages in [2, 3, 4]
+    ],
+    key=['BT', 'BK', 'BV', "V"],
+    use_cuda_graph=use_cuda_graph,
+)
+@triton.jit(do_not_specialize=['T'])
+def chunk_dplr_bwd_kernel_dhu(
+    qg,
+    bg,
+    w,
+    gk,
+    dht,
+    dh0,
+    do,
+    dh,
+    dv,
+    dv2,
+    cu_seqlens,
+    chunk_offsets,
+    T,
+    H: tl.constexpr,
+    K: tl.constexpr,
+    V: tl.constexpr,
+    BT: tl.constexpr,
+    BC: tl.constexpr,
+    BK: tl.constexpr,
+    BV: tl.constexpr,
+    USE_FINAL_STATE_GRADIENT: tl.constexpr,
+    USE_INITIAL_STATE: tl.constexpr,
+    IS_VARLEN: tl.constexpr,
+):
+    i_k, i_v, i_nh = tl.program_id(0), tl.program_id(1), tl.program_id(2)
+    i_n, i_h = i_nh // H, i_nh % H
+    if IS_VARLEN:
+        bos, eos = tl.load(cu_seqlens + i_n).to(tl.int32), tl.load(cu_seqlens + i_n + 1).to(tl.int32)
+        T = eos - bos
+        NT = tl.cdiv(T, BT)
+        boh = tl.load(chunk_offsets + i_n).to(tl.int32)
+    else:
+        bos, eos = i_n * T, i_n * T + T
+        NT = tl.cdiv(T, BT)
+        boh = i_n * NT
+
+    # [BK, BV]
+    b_dh = tl.zeros([BK, BV], dtype=tl.float32)
+    if USE_FINAL_STATE_GRADIENT:
+        p_dht = tl.make_block_ptr(dht + i_nh * K*V, (K, V), (V, 1), (i_k * BK, i_v * BV), (BK, BV), (1, 0))
+        b_dh += tl.load(p_dht, boundary_check=(0, 1))
+
+    mask_k = tl.arange(0, BK) < K
+    for i_t in range(NT - 1, -1, -1):
+        p_dh = tl.make_block_ptr(dh + ((boh+i_t) * H + i_h) * K*V, (K, V), (V, 1), (i_k * BK, i_v * BV), (BK, BV), (1, 0))
+        tl.store(p_dh, b_dh.to(p_dh.dtype.element_ty), boundary_check=(0, 1))
+        b_dh_tmp = tl.zeros([BK, BV], dtype=tl.float32)
+        for i_c in range(tl.cdiv(BT, BC) - 1, -1, -1):
+            p_qg = tl.make_block_ptr(qg+(bos*H+i_h)*K, (K, T), (1, H*K), (i_k * BK, i_t * BT + i_c * BC), (BK, BC), (0, 1))
+            p_bg = tl.make_block_ptr(bg+(bos*H+i_h)*K, (T, K), (H*K, 1), (i_t * BT + i_c * BC, i_k * BK), (BC, BK), (1, 0))
+            p_w = tl.make_block_ptr(w+(bos*H+i_h)*K, (K, T), (1, H*K), (i_k * BK, i_t * BT + i_c * BC), (BK, BC), (0, 1))
+            p_dv = tl.make_block_ptr(dv+(bos*H+i_h)*V, (T, V), (H*V, 1), (i_t*BT + i_c * BC, i_v * BV), (BC, BV), (1, 0))
+            p_do = tl.make_block_ptr(do+(bos*H+i_h)*V, (T, V), (H*V, 1), (i_t*BT + i_c * BC, i_v * BV), (BC, BV), (1, 0))
+            p_dv2 = tl.make_block_ptr(dv2+(bos*H+i_h)*V, (T, V), (H*V, 1), (i_t*BT + i_c * BC, i_v * BV), (BC, BV), (1, 0))
+            # [BK, BT]
+            b_qg = tl.load(p_qg, boundary_check=(0, 1))
+            # [BT, BK]
+            b_bg = tl.load(p_bg, boundary_check=(0, 1))
+            b_w = tl.load(p_w, boundary_check=(0, 1))
+            # [BT, V]
+            b_do = tl.load(p_do, boundary_check=(0, 1))
+            b_dv = tl.load(p_dv, boundary_check=(0, 1))
+            b_dv2 = b_dv + tl.dot(b_bg, b_dh.to(b_bg.dtype))
+            tl.store(p_dv2, b_dv2.to(p_dv.dtype.element_ty), boundary_check=(0, 1))
+            # [BK, BV]
+            b_dh_tmp += tl.dot(b_qg, b_do.to(b_qg.dtype))
+            b_dh_tmp += tl.dot(b_w, b_dv2.to(b_qg.dtype))
+        last_idx = min((i_t + 1) * BT, T) - 1
+        bg_last = tl.load(gk + ((bos + last_idx) * H + i_h) * K + tl.arange(0, BK), mask=mask_k)
+        b_dh *= exp(bg_last)[:, None]
+        b_dh += b_dh_tmp
+
+    if USE_INITIAL_STATE:
+        p_dh0 = tl.make_block_ptr(dh0 + i_nh * K*V, (K, V), (V, 1), (i_k * BK, i_v * BV), (BK, BV), (1, 0))
+        tl.store(p_dh0, b_dh.to(p_dh0.dtype.element_ty), boundary_check=(0, 1))
+
+
+def chunk_dplr_bwd_dhu(
+    qg: torch.Tensor,
+    bg: torch.Tensor,
+    w: torch.Tensor,
+    gk: torch.Tensor,
+    h0: torch.Tensor,
+    dht: Optional[torch.Tensor],
+    do: torch.Tensor,
+    dv: torch.Tensor,
+    cu_seqlens: Optional[torch.LongTensor] = None,
+    chunk_size: int = 64
+) -> Tuple[torch.Tensor, torch.Tensor, torch.Tensor]:
+    B, T, H, K, V = *qg.shape, do.shape[-1]
+    BT = min(chunk_size, max(triton.next_power_of_2(T), 16))
+    BK = triton.next_power_of_2(K)
+    assert BK <= 256, "current kernel does not support head dimension being larger than 256."
+    # H100
+    if check_shared_mem('hopper', qg.device.index):
+        BV = 64
+        BC = 64 if K <= 128 else 32
+    elif check_shared_mem('ampere', qg.device.index):  # A100
+        BV = 32
+        BC = 32
+    else:  # Etc: 4090
+        BV = 16
+        BC = 16
+
+    chunk_indices = prepare_chunk_indices(cu_seqlens, BT) if cu_seqlens is not None else None
+    # N: the actual number of sequences in the batch with either equal or variable lengths
+    if cu_seqlens is None:
+        N, NT, chunk_offsets = B, triton.cdiv(T, BT), None
+    else:
+        N, NT, chunk_offsets = len(cu_seqlens) - 1, len(chunk_indices), prepare_chunk_offsets(cu_seqlens, BT)
+
+    BC = min(BT, BC)
+    NK, NV = triton.cdiv(K, BK), triton.cdiv(V, BV)
+    assert NK == 1, 'NK > 1 is not supported because it involves time-consuming synchronization'
+
+    dh = qg.new_empty(B, NT, H, K, V)
+    dh0 = torch.empty_like(h0, dtype=torch.float32) if h0 is not None else None
+    dv2 = torch.zeros_like(dv)
+
+    grid = (NK, NV, N * H)
+    chunk_dplr_bwd_kernel_dhu[grid](
+        qg=qg,
+        bg=bg,
+        w=w,
+        gk=gk,
+        dht=dht,
+        dh0=dh0,
+        do=do,
+        dh=dh,
+        dv=dv,
+        dv2=dv2,
+        cu_seqlens=cu_seqlens,
+        chunk_offsets=chunk_offsets,
+        T=T,
+        H=H,
+        K=K,
+        V=V,
+        BT=BT,
+        BC=BC,
+        BK=BK,
+        BV=BV,
+    )
+    return dh, dh0, dv2
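The host-side wrapper `chunk_dplr_bwd_dhu` derives its tile sizes and launch grid from a handful of integer formulas. The pure-Python sketch below mirrors that arithmetic so it can be checked without a GPU. The names `launch_params` and `chunk_offsets` are hypothetical and not part of this commit. `BV` and `BC` are taken as arguments because the wrapper chooses them from the device's shared-memory size, which the sketch does not model. The behaviour shown for `prepare_chunk_offsets` (a prefix sum of per-sequence chunk counts) is an assumption inferred from how the kernel indexes `chunk_offsets`, not something this diff confirms.

```python
def cdiv(a: int, b: int) -> int:
    """Ceiling division for positive integers."""
    return (a + b - 1) // b


def next_power_of_2(n: int) -> int:
    """Smallest power of two that is >= n, for n >= 1."""
    return 1 << (n - 1).bit_length()


def launch_params(B, T, H, K, V, BV, BC, chunk_size=64):
    """Mirror the wrapper's tile-size and grid arithmetic (equal-length batch)."""
    BT = min(chunk_size, max(next_power_of_2(T), 16))  # time-chunk size
    BK = next_power_of_2(K)                            # whole head dim in one tile
    assert BK <= 256, "head dimension larger than 256 is not supported"
    NT = cdiv(T, BT)                                   # chunks per sequence
    BC = min(BT, BC)                                   # sub-chunk never exceeds chunk
    NK, NV = cdiv(K, BK), cdiv(V, BV)
    assert NK == 1, "NK > 1 is not supported"
    return {"BT": BT, "BK": BK, "BC": BC, "NT": NT, "grid": (NK, NV, B * H)}


def chunk_offsets(cu_seqlens, BT):
    """ASSUMED behaviour of prepare_chunk_offsets: index of each sequence's first chunk."""
    offsets = [0]
    for start, end in zip(cu_seqlens, cu_seqlens[1:]):
        offsets.append(offsets[-1] + cdiv(end - start, BT))
    return offsets
```

For example, `launch_params(B=2, T=100, H=4, K=64, V=128, BV=32, BC=32)` gives `BT=64`, `NT=2` and a grid of `(1, 4, 8)`: one program per value tile per (sequence, head) pair, each looping over its chunks serially.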

build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/chunk_h_fwd.py

ADDED

@@ -0,0 +1,173 @@
+# -*- coding: utf-8 -*-
+# Copyright (c) 2023-2025, Songlin Yang, Yu Zhang
+
+from typing import Optional, Tuple
+
+import torch
+import triton
+import triton.language as tl
+
+from ....ops.utils import prepare_chunk_indices, prepare_chunk_offsets
+from ....ops.utils.op import exp
+from ....utils import check_shared_mem, use_cuda_graph
+
+
+@triton.heuristics({
+    'USE_INITIAL_STATE': lambda args: args['h0'] is not None,
+    'STORE_FINAL_STATE': lambda args: args['ht'] is not None,
+    'IS_VARLEN': lambda args: args['cu_seqlens'] is not None,
+})
+@triton.autotune(
+    configs=[
+        triton.Config({}, num_warps=num_warps, num_stages=num_stages)
+        for num_warps in [2, 4, 8, 16, 32]
+        for num_stages in [2, 3, 4]
+    ],
+    key=['BT', 'BK', 'BV'],
+    use_cuda_graph=use_cuda_graph,
+)
+@triton.jit(do_not_specialize=['T'])
+def chunk_dplr_fwd_kernel_h(
+    kg,
+    v,
+    w,
+    bg,
+    u,
+    v_new,
+    gk,
+    h,
+    h0,
+    ht,
+    cu_seqlens,
+    chunk_offsets,
+    T,
+    H: tl.constexpr,
+    K: tl.constexpr,
+    V: tl.constexpr,
+    BT: tl.constexpr,
+    BC: tl.constexpr,
+    BK: tl.constexpr,
+    BV: tl.constexpr,
+    USE_INITIAL_STATE: tl.constexpr,
+    STORE_FINAL_STATE: tl.constexpr,
+    IS_VARLEN: tl.constexpr,
+):
+    i_k, i_v, i_nh = tl.program_id(0), tl.program_id(1), tl.program_id(2)
+    i_n, i_h = i_nh // H, i_nh % H
+    if IS_VARLEN:
+        bos, eos = tl.load(cu_seqlens + i_n).to(tl.int32), tl.load(cu_seqlens + i_n + 1).to(tl.int32)
+        T = eos - bos
+        NT = tl.cdiv(T, BT)
+        boh = tl.load(chunk_offsets + i_n).to(tl.int32)
+    else:
+        bos, eos = i_n * T, i_n * T + T
+        NT = tl.cdiv(T, BT)
+        boh = i_n * NT
+    o_k = i_k * BK + tl.arange(0, BK)
+
+    # [BK, BV]
+    b_h = tl.zeros([BK, BV], dtype=tl.float32)
+    if USE_INITIAL_STATE:
+        p_h0 = tl.make_block_ptr(h0 + i_nh * K*V, (K, V), (V, 1), (i_k * BK, i_v * BV), (BK, BV), (1, 0))
+        b_h = tl.load(p_h0, boundary_check=(0, 1)).to(tl.float32)
+
+    for i_t in range(NT):
+        p_h = tl.make_block_ptr(h + ((boh + i_t) * H + i_h) * K*V, (K, V), (V, 1), (i_k * BK, i_v * BV), (BK, BV), (1, 0))
+        tl.store(p_h, b_h.to(p_h.dtype.element_ty), boundary_check=(0, 1))
+
+        b_hc = tl.zeros([BK, BV], dtype=tl.float32)
+        # Since all of DK must be kept in SRAM, we face a severe SRAM memory burden; sub-chunking alleviates it.
+        for i_c in range(tl.cdiv(min(BT, T - i_t * BT), BC)):
+            p_kg = tl.make_block_ptr(kg+(bos*H+i_h)*K, (K, T), (1, H*K), (i_k * BK, i_t * BT + i_c * BC), (BK, BC), (0, 1))
+            p_bg = tl.make_block_ptr(bg+(bos*H+i_h)*K, (K, T), (1, H*K), (i_k * BK, i_t * BT + i_c * BC), (BK, BC), (0, 1))
+            p_w = tl.make_block_ptr(w+(bos*H+i_h)*K, (T, K), (H*K, 1), (i_t * BT + i_c * BC, i_k * BK), (BC, BK), (1, 0))
+            p_v = tl.make_block_ptr(v+(bos*H+i_h)*V, (T, V), (H*V, 1), (i_t * BT + i_c * BC, i_v * BV), (BC, BV), (1, 0))
+            p_u = tl.make_block_ptr(u+(bos*H+i_h)*V, (T, V), (H*V, 1), (i_t * BT + i_c * BC, i_v * BV), (BC, BV), (1, 0))
+            p_v_new = tl.make_block_ptr(v_new+(bos*H+i_h)*V, (T, V), (H*V, 1), (i_t*BT+i_c*BC, i_v * BV), (BC, BV), (1, 0))
+            # [BK, BC]
+            b_kg = tl.load(p_kg, boundary_check=(0, 1))
+            b_v = tl.load(p_v, boundary_check=(0, 1))
+            b_w = tl.load(p_w, boundary_check=(0, 1))
+            b_bg = tl.load(p_bg, boundary_check=(0, 1))
+            b_v2 = tl.dot(b_w, b_h.to(b_w.dtype)) + tl.load(p_u, boundary_check=(0, 1))
+            b_hc += tl.dot(b_kg, b_v)
+            b_hc += tl.dot(b_bg.to(b_hc.dtype), b_v2)
+            tl.store(p_v_new, b_v2.to(p_v_new.dtype.element_ty), boundary_check=(0, 1))
+
+        last_idx = min((i_t + 1) * BT, T) - 1
+        b_g_last = tl.load(gk + (bos + last_idx) * H*K + i_h * K + o_k, mask=o_k < K).to(tl.float32)
+        b_h *= exp(b_g_last[:, None])
+        b_h += b_hc
+
+    if STORE_FINAL_STATE:
+        p_ht = tl.make_block_ptr(ht + i_nh * K*V, (K, V), (V, 1), (i_k * BK, i_v * BV), (BK, BV), (1, 0))
+        tl.store(p_ht, b_h.to(p_ht.dtype.element_ty, fp_downcast_rounding="rtne"), boundary_check=(0, 1))
+
+
+def chunk_dplr_fwd_h(
+    kg: torch.Tensor,
+    v: torch.Tensor,
+    w: torch.Tensor,
+    u: torch.Tensor,
+    bg: torch.Tensor,
+    gk: torch.Tensor,
+    initial_state: Optional[torch.Tensor] = None,
+    output_final_state: bool = False,
+    cu_seqlens: Optional[torch.LongTensor] = None,
+    chunk_size: int = 64
+) -> Tuple[torch.Tensor, torch.Tensor]:
+    B, T, H, K, V = *kg.shape, u.shape[-1]
+    BT = min(chunk_size, max(triton.next_power_of_2(T), 16))
+
+    chunk_indices = prepare_chunk_indices(cu_seqlens, BT) if cu_seqlens is not None else None
+    # N: the actual number of sequences in the batch with either equal or variable lengths
+    if cu_seqlens is None:
+        N, NT, chunk_offsets = B, triton.cdiv(T, BT), None
+    else:
+        N, NT, chunk_offsets = len(cu_seqlens) - 1, len(chunk_indices), prepare_chunk_offsets(cu_seqlens, BT)
+    BK = triton.next_power_of_2(K)
+    assert BK <= 256, "current kernel does not support head dimension larger than 256."
+    # H100 can have larger block size
+
+    if check_shared_mem('hopper', kg.device.index):
+        BV = 64
+        BC = 64 if K <= 128 else 32
+    elif check_shared_mem('ampere', kg.device.index):  # A100
+        BV = 32
+        BC = 32
+    else:
+        BV = 16
+        BC = 16
+
+    BC = min(BT, BC)
+    NK = triton.cdiv(K, BK)
+    NV = triton.cdiv(V, BV)
+    assert NK == 1, 'NK > 1 is not supported because it involves time-consuming synchronization'
+
+    h = kg.new_empty(B, NT, H, K, V)
+    final_state = kg.new_empty(N, H, K, V, dtype=torch.float32) if output_final_state else None
+    v_new = torch.empty_like(u)
+    grid = (NK, NV, N * H)
+    chunk_dplr_fwd_kernel_h[grid](
+        kg=kg,
+        v=v,
+        w=w,
+        bg=bg,
+        u=u,
+        v_new=v_new,
+        h=h,
+        gk=gk,
+        h0=initial_state,
+        ht=final_state,
+        cu_seqlens=cu_seqlens,
+        chunk_offsets=chunk_offsets,
+        T=T,
+        H=H,
+        K=K,
+        V=V,
+        BT=BT,
+        BC=BC,
+        BK=BK,
+        BV=BV,
+    )
+    return h, v_new, final_state
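The per-chunk state update in `chunk_dplr_fwd_kernel_h` can be followed more easily in plain Python. The sketch below is a reference for a single (sequence, head) pair using nested lists; the names `chunk_state_reference`, `matmul` and `transpose` are hypothetical and not part of this commit. It assumes, as the kernel does, that `kg`, `bg`, `w` and `u` arrive already gated and prepared by earlier steps, and it makes no attempt to reproduce dtype casts or boundary masking.

```python
import math


def matmul(a, b):
    """Dense product of two non-empty row-major nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a):
    return [list(row) for row in zip(*a)]


def chunk_state_reference(kg, v, w, u, bg, gk, BT, BC, h0=None):
    """kg, w, bg, gk are T x K; v, u are T x V. Returns (h_per_chunk, v_new, final_state)."""
    T, K, V = len(kg), len(kg[0]), len(v[0])
    h = [row[:] for row in h0] if h0 is not None else [[0.0] * V for _ in range(K)]
    h_chunks, v_new = [], []
    for start in range(0, T, BT):
        end = min(start + BT, T)
        h_chunks.append([row[:] for row in h])       # state seen by this chunk
        hc = [[0.0] * V for _ in range(K)]           # update accumulated over sub-chunks
        for s in range(start, end, BC):
            e = min(s + BC, end)
            wh = matmul(w[s:e], h)                   # uses the chunk-start state
            v2 = [[wh[i][j] + u[s + i][j] for j in range(V)] for i in range(e - s)]
            upd_k = matmul(transpose(kg[s:e]), v[s:e])
            upd_b = matmul(transpose(bg[s:e]), v2)
            hc = [[hc[i][j] + upd_k[i][j] + upd_b[i][j] for j in range(V)]
                  for i in range(K)]
            v_new.extend(v2)
        decay = [math.exp(g) for g in gk[end - 1]]   # gate at the chunk's last position
        h = [[h[i][j] * decay[i] + hc[i][j] for j in range(V)] for i in range(K)]
    return h_chunks, v_new, h
```

Because every sub-chunk reads the same chunk-start state, changing `BC` only regroups a sum and leaves the result unchanged. This is why the wrapper can pick `BC` purely from shared-memory limits. Changing `BT`, by contrast, changes where the state is refreshed and so changes the result for the same inputs.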

build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/chunk_o_bwd.py

ADDED

@@ -0,0 +1,428 @@
+# -*- coding: utf-8 -*-
+# Copyright (c) 2023-2025, Songlin Yang, Yu Zhang
+
+from typing import Optional, Tuple
+
+import torch
+import triton
+import triton.language as tl
+
+from ....ops.utils import prepare_chunk_indices
+from ....ops.utils.op import exp
+from ....utils import check_shared_mem, use_cuda_graph
+
+BK_LIST = [32, 64, 128] if check_shared_mem() else [16, 32]
+
+
+@triton.heuristics({
+    'IS_VARLEN': lambda args: args['cu_seqlens'] is not None
+})
+@triton.autotune(
+    configs=[
+        triton.Config({}, num_warps=num_warps, num_stages=num_stages)
+        for num_warps in [2, 4, 8, 16, 32]
+        for num_stages in [2, 3, 4]
+    ],
+    key=['BV', 'BT'],
+    use_cuda_graph=use_cuda_graph,
+)
+@triton.jit(do_not_specialize=['T'])
+def chunk_dplr_bwd_kernel_dAu(
+    v,
+    do,
+    v_new,
+    A_qb,
+    dA_qk,
+    dA_qb,
+    dv_new,
+    cu_seqlens,
+    chunk_indices,
+    scale: tl.constexpr,
+    T,
+    H: tl.constexpr,
+    V: tl.constexpr,
+    BT: tl.constexpr,
+    BV: tl.constexpr,
+    IS_VARLEN: tl.constexpr,
+):
+    i_t, i_bh = tl.program_id(0), tl.program_id(1)
+    i_b, i_h = i_bh // H, i_bh % H
+    if IS_VARLEN:
+        i_n, i_t = tl.load(chunk_indices + i_t * 2).to(tl.int32), tl.load(chunk_indices + i_t * 2 + 1).to(tl.int32)
+        bos, eos = tl.load(cu_seqlens + i_n).to(tl.int32), tl.load(cu_seqlens + i_n + 1).to(tl.int32)
+    else:
+        bos, eos = i_b * T, i_b * T + T
+    T = eos - bos
+
+    b_dA_qk = tl.zeros([BT, BT], dtype=tl.float32)
+    b_dA_qb = tl.zeros([BT, BT], dtype=tl.float32)
+
+    p_A_qb = tl.make_block_ptr(A_qb + (bos * H + i_h) * BT, (T, BT), (H*BT, 1), (i_t * BT, 0), (BT, BT), (1, 0))
+
+    b_A_qb = tl.load(p_A_qb, boundary_check=(0, 1))
+    # causal mask
+    b_A_qb = tl.where(tl.arange(0, BT)[:, None] >= tl.arange(0, BT)[None, :], b_A_qb, 0.).to(b_A_qb.dtype)
+
+    for i_v in range(tl.cdiv(V, BV)):
+        p_do = tl.make_block_ptr(do + (bos*H + i_h) * V, (T, V), (H*V, 1), (i_t * BT, i_v * BV), (BT, BV), (1, 0))
+        p_v = tl.make_block_ptr(v + (bos*H + i_h) * V, (V, T), (1, H*V), (i_v * BV, i_t * BT), (BV, BT), (0, 1))
+        p_v_new = tl.make_block_ptr(v_new + (bos*H + i_h) * V, (V, T), (1, H*V), (i_v * BV, i_t * BT), (BV, BT), (0, 1))
+        p_dv_new = tl.make_block_ptr(dv_new + (bos*H + i_h) * V, (T, V), (H*V, 1), (i_t * BT, i_v * BV), (BT, BV), (1, 0))
+        b_v = tl.load(p_v, boundary_check=(0, 1))
+        b_do = tl.load(p_do, boundary_check=(0, 1))
+        b_v_new = tl.load(p_v_new, boundary_check=(0, 1))
+        b_dA_qk += tl.dot(b_do, b_v)
+        b_dA_qb += tl.dot(b_do, b_v_new)
+        b_dv_new = tl.dot(tl.trans(b_A_qb), b_do)
+        # for recurrent
+        tl.store(p_dv_new, b_dv_new.to(p_dv_new.dtype.element_ty), boundary_check=(0, 1))
+
+    p_dA_qk = tl.make_block_ptr(dA_qk + (bos * H + i_h) * BT, (T, BT), (H*BT, 1), (i_t * BT, 0), (BT, BT), (1, 0))
+    p_dA_qb = tl.make_block_ptr(dA_qb + (bos * H + i_h) * BT, (T, BT), (H*BT, 1), (i_t * BT, 0), (BT, BT), (1, 0))
+    m_s = tl.arange(0, BT)[:, None] >= tl.arange(0, BT)[None, :]
+    b_dA_qk = tl.where(m_s, b_dA_qk * scale, 0.)
+    tl.store(p_dA_qk, b_dA_qk.to(p_dA_qk.dtype.element_ty), boundary_check=(0, 1))
+    b_dA_qb = tl.where(m_s, b_dA_qb * scale, 0.)
+    tl.store(p_dA_qb, b_dA_qb.to(p_dA_qb.dtype.element_ty), boundary_check=(0, 1))
+
+
+@triton.heuristics({
+    'IS_VARLEN': lambda args: args['cu_seqlens'] is not None,
+})
+@triton.autotune(
+    configs=[
+        triton.Config({}, num_warps=num_warps, num_stages=num_stages)
+        for num_warps in [2, 4, 8, 16, 32]
+        for num_stages in [2, 3, 4]
+    ],
+    key=['BT', 'BK', 'BV'],
+    use_cuda_graph=use_cuda_graph,
+)
+@triton.jit
+def chunk_dplr_bwd_o_kernel(
+    v,
+    v_new,
+    h,
+    do,
+    dh,
+    dk,
+    db,
+    w,
+    dq,
+    dv,
+    dw,
+    gk,
+    dgk_last,
+    k,
+    b,
+    cu_seqlens,
+    chunk_indices,
+    T,
+    H: tl.constexpr,
+    K: tl.constexpr,
+    V: tl.constexpr,
+    BT: tl.constexpr,
+    BK: tl.constexpr,
+    BV: tl.constexpr,
+    IS_VARLEN: tl.constexpr,
+):
+    i_k, i_t, i_bh = tl.program_id(0), tl.program_id(1), tl.program_id(2)
+    i_b, i_h = i_bh // H, i_bh % H
+
+    if IS_VARLEN:
+        i_tg = i_t
+        i_n, i_t = tl.load(chunk_indices + i_t * 2).to(tl.int32), tl.load(chunk_indices + i_t * 2 + 1).to(tl.int32)
+        bos, eos = tl.load(cu_seqlens + i_n).to(tl.int32), tl.load(cu_seqlens + i_n + 1).to(tl.int32)
+        T = eos - bos
+        NT = tl.cdiv(T, BT)
+    else:
+        NT = tl.cdiv(T, BT)
+        i_tg = i_b * NT + i_t
+        bos, eos = i_b * T, i_b * T + T
+
+    # offset calculation
+    v += (bos * H + i_h) * V
+    v_new += (bos * H + i_h) * V
+    do += (bos * H + i_h) * V
+    h += (i_tg * H + i_h) * K * V
+    dh += (i_tg * H + i_h) * K * V
+    dk += (bos * H + i_h) * K
+    k += (bos * H + i_h) * K
+    db += (bos * H + i_h) * K
+    b += (bos * H + i_h) * K
+    dw += (bos * H + i_h) * K
+    dv += (bos * H + i_h) * V
+    dq += (bos * H + i_h) * K
+    w += (bos * H + i_h) * K
+
+    dgk_last += (i_tg * H + i_h) * K
+    gk += (bos * H + i_h) * K
+
+    stride_qk = H*K
+    stride_vo = H*V
+
+    b_dq = tl.zeros([BT, BK], dtype=tl.float32)
+    b_dk = tl.zeros([BT, BK], dtype=tl.float32)
+    b_dw = tl.zeros([BT, BK], dtype=tl.float32)
+    b_db = tl.zeros([BT, BK], dtype=tl.float32)
+    b_dgk_last = tl.zeros([BK], dtype=tl.float32)
+
+    for i_v in range(tl.cdiv(V, BV)):
+        p_v = tl.make_block_ptr(v, (T, V), (stride_vo, 1), (i_t * BT, i_v * BV), (BT, BV), (1, 0))
+        p_v_new = tl.make_block_ptr(v_new, (T, V), (stride_vo, 1), (i_t * BT, i_v * BV), (BT, BV), (1, 0))
+        p_do = tl.make_block_ptr(do, (T, V), (stride_vo, 1), (i_t * BT, i_v * BV), (BT, BV), (1, 0))
+        p_h = tl.make_block_ptr(h, (V, K), (1, V), (i_v * BV, i_k * BK), (BV, BK), (0, 1))
+        p_dh = tl.make_block_ptr(dh, (V, K), (1, V), (i_v * BV, i_k * BK), (BV, BK), (0, 1))
+        # [BT, BV]
+        b_v = tl.load(p_v, boundary_check=(0, 1))

|

| 178 |

+

b_v_new = tl.load(p_v_new, boundary_check=(0, 1))

|

| 179 |

+

b_do = tl.load(p_do, boundary_check=(0, 1))

|

| 180 |

+

# [BV, BK]

|

| 181 |

+

b_h = tl.load(p_h, boundary_check=(0, 1))

|

| 182 |

+

b_dh = tl.load(p_dh, boundary_check=(0, 1))

|

| 183 |

+

b_dgk_last += tl.sum((b_h * b_dh).to(tl.float32), axis=0)

|

| 184 |

+

|

| 185 |

+

# [BT, BV] @ [BV, BK] -> [BT, BK]

|

| 186 |

+

b_dq += tl.dot(b_do, b_h.to(b_do.dtype))

|

| 187 |

+

# [BT, BV] @ [BV, BK] -> [BT, BK]

|

| 188 |

+

b_dk += tl.dot(b_v, b_dh.to(b_v.dtype))

|

| 189 |

+

b_db += tl.dot(b_v_new, b_dh.to(b_v_new.dtype))

|

| 190 |

+

p_dv = tl.make_block_ptr(dv, (T, V), (stride_vo, 1), (i_t * BT, i_v * BV), (BT, BV), (1, 0))

|

| 191 |

+

b_dv = tl.load(p_dv, boundary_check=(0, 1))

|

| 192 |

+

b_dw += tl.dot(b_dv.to(b_v.dtype), b_h.to(b_v.dtype))

|

| 193 |

+

|

| 194 |

+

m_k = (i_k*BK+tl.arange(0, BK)) < K

|

| 195 |

+

last_idx = min(i_t * BT + BT, T) - 1

|

| 196 |

+

b_gk_last = tl.load(gk + last_idx * stride_qk + i_k*BK + tl.arange(0, BK), mask=m_k, other=float('-inf'))

|

| 197 |

+

b_dgk_last *= exp(b_gk_last)

|

| 198 |

+

p_k = tl.make_block_ptr(k, (T, K), (stride_qk, 1), (i_t * BT, i_k * BK), (BT, BK), (1, 0))

|

| 199 |

+

p_b = tl.make_block_ptr(b, (T, K), (stride_qk, 1), (i_t * BT, i_k * BK), (BT, BK), (1, 0))

|

| 200 |

+

b_k = tl.load(p_k, boundary_check=(0, 1))

|

| 201 |

+

b_b = tl.load(p_b, boundary_check=(0, 1))

|

| 202 |

+

b_dgk_last += tl.sum(b_k * b_dk, axis=0)

|

| 203 |

+

b_dgk_last += tl.sum(b_b * b_db, axis=0)

|

| 204 |

+

tl.store(dgk_last + tl.arange(0, BK) + i_k * BK, b_dgk_last, mask=m_k)

|

| 205 |

+

|

| 206 |

+

p_dw = tl.make_block_ptr(dw, (T, K), (stride_qk, 1), (i_t * BT, i_k * BK), (BT, BK), (1, 0))

|

| 207 |

+

p_dk = tl.make_block_ptr(dk, (T, K), (stride_qk, 1), (i_t * BT, i_k * BK), (BT, BK), (1, 0))

|

| 208 |

+

p_db = tl.make_block_ptr(db, (T, K), (stride_qk, 1), (i_t * BT, i_k * BK), (BT, BK), (1, 0))

|

| 209 |

+

p_dq = tl.make_block_ptr(dq, (T, K), (stride_qk, 1), (i_t * BT, i_k * BK), (BT, BK), (1, 0))

|

| 210 |

+

tl.store(p_dw, b_dw.to(p_dw.dtype.element_ty), boundary_check=(0, 1))

|

| 211 |

+

tl.store(p_dk, b_dk.to(p_dk.dtype.element_ty), boundary_check=(0, 1))

|

| 212 |

+

tl.store(p_db, b_db.to(p_db.dtype.element_ty), boundary_check=(0, 1))

|

| 213 |

+

tl.store(p_dq, b_dq.to(p_dq.dtype.element_ty), boundary_check=(0, 1))
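
For one chunk and one head, the accumulations in `chunk_dplr_bwd_o_kernel` reduce to a handful of dense matrix products. The NumPy sketch below is a reading of the kernel body, not the authors' reference implementation; the function name `bwd_o_chunk_reference`, the assumption that `h` and `dh` are stored as `[K, V]`, and the dense (untiled) shapes are assumptions made for illustration.

```python
import numpy as np


def bwd_o_chunk_reference(do, v, v_new, dv, h, dh, k, b, gk_last):
    """Dense single-chunk, single-head sketch of the kernel's math.

    Assumed shapes: do, v, v_new, dv: [BT, V]; h, dh: [K, V];
    k, b: [BT, K]; gk_last: [K] (log-decay at the chunk's last position).
    """
    dq = do @ h.T        # [BT, V] @ [V, K] -> [BT, K]
    dk = v @ dh.T        # [BT, V] @ [V, K] -> [BT, K]
    db = v_new @ dh.T    # [BT, V] @ [V, K] -> [BT, K]
    dw = dv @ h.T        # [BT, V] @ [V, K] -> [BT, K]
    # Reduce h * dh over the value dimension, then apply the last-position decay.
    dgk_last = (h * dh).sum(axis=1) * np.exp(gk_last)
    # Add the per-key contributions from k * dk and b * db, summed over time.
    dgk_last = dgk_last + (k * dk).sum(axis=0) + (b * db).sum(axis=0)
    return dq, dk, db, dw, dgk_last


rng = np.random.default_rng(0)
BT, K, V = 4, 3, 5
do, v, v_new, dv = (rng.standard_normal((BT, V)) for _ in range(4))
h, dh = (rng.standard_normal((K, V)) for _ in range(2))
k, b = (rng.standard_normal((BT, K)) for _ in range(2))
gk_last = -rng.random(K)
dq, dk, db, dw, dgk_last = bwd_o_chunk_reference(do, v, v_new, dv, h, dh, k, b, gk_last)
```

A dense reference like this is mainly useful for unit-testing a tiled kernel on small shapes, where boundary handling is easy to inspect.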

|

| 214 |

+

|

| 215 |

+

|

| 216 |

+

@triton.heuristics({

|

| 217 |

+

'IS_VARLEN': lambda args: args['cu_seqlens'] is not None,

|

| 218 |

+

})

|

| 219 |

+

@triton.autotune(

|

| 220 |

+

configs=[

|

| 221 |

+

triton.Config({'BK': BK, 'BV': BV}, num_warps=num_warps, num_stages=num_stages)

|

| 222 |

+

for num_warps in [2, 4, 8, 16, 32]

|

| 223 |

+

for num_stages in [2, 3, 4]

|

| 224 |

+

for BK in BK_LIST

|

| 225 |

+

for BV in BK_LIST

|

| 226 |

+

],

|

| 227 |

+

key=['BT'],

|

| 228 |

+

use_cuda_graph=use_cuda_graph,

|

| 229 |

+

)

|

| 230 |

+

@triton.jit

|

| 231 |

+

def chunk_dplr_bwd_kernel_dv(

|

| 232 |

+

A_qk,

|

| 233 |

+

kg,

|

| 234 |

+

do,

|

| 235 |

+

dv,

|

| 236 |

+

dh,

|

| 237 |

+

cu_seqlens,

|

| 238 |

+

chunk_indices,

|

| 239 |

+

T,

|

| 240 |

+

H: tl.constexpr,

|

| 241 |

+

K: tl.constexpr,

|

| 242 |

+

V: tl.constexpr,

|

| 243 |

+

BT: tl.constexpr,

|

| 244 |

+

BK: tl.constexpr,

|

| 245 |

+

BV: tl.constexpr,

|

| 246 |

+

IS_VARLEN: tl.constexpr,

|

| 247 |

+

):

|

| 248 |

+

i_v, i_t, i_bh = tl.program_id(0), tl.program_id(1), tl.program_id(2)

|

| 249 |

+

i_b, i_h = i_bh // H, i_bh % H

|

| 250 |

+

if IS_VARLEN:

|

| 251 |

+

i_tg = i_t

|

| 252 |

+

i_n, i_t = tl.load(chunk_indices + i_t * 2).to(tl.int32), tl.load(chunk_indices + i_t * 2 + 1).to(tl.int32)

|

| 253 |

+

bos, eos = tl.load(cu_seqlens + i_n).to(tl.int32), tl.load(cu_seqlens + i_n + 1).to(tl.int32)

|

| 254 |

+

T = eos - bos

|

| 255 |

+

NT = tl.cdiv(T, BT)

|

| 256 |

+

else:

|

| 257 |

+

NT = tl.cdiv(T, BT)

|

| 258 |

+

i_tg = i_b * NT + i_t

|

| 259 |

+

bos, eos = i_b * T, i_b * T + T

|

| 260 |

+

|

| 261 |

+

b_dv = tl.zeros([BT, BV], dtype=tl.float32)

|

| 262 |

+

|

| 263 |

+

# offset calculation

|

| 264 |

+

A_qk += (bos * H + i_h) * BT

|

| 265 |

+

do += (bos * H + i_h) * V

|

| 266 |

+

dv += (bos * H + i_h) * V

|

| 267 |

+

kg += (bos * H + i_h) * K

|

| 268 |

+

dh += (i_tg * H + i_h) * K*V

|

| 269 |

+

|

| 270 |

+

stride_qk = H*K

|

| 271 |

+

stride_vo = H*V

|

| 272 |

+

stride_A = H*BT

|

| 273 |

+

|

| 274 |

+

for i_k in range(tl.cdiv(K, BK)):

|

| 275 |

+

p_dh = tl.make_block_ptr(dh, (K, V), (V, 1), (i_k * BK, i_v * BV), (BK, BV), (1, 0))

|

| 276 |

+

p_kg = tl.make_block_ptr(kg, (T, K), (stride_qk, 1), (i_t * BT, i_k * BK), (BT, BK), (1, 0))

|

| 277 |

+

b_dh = tl.load(p_dh, boundary_check=(0, 1))

|

| 278 |

+

b_kg = tl.load(p_kg, boundary_check=(0, 1))

|

| 279 |

+

b_dv += tl.dot(b_kg, b_dh.to(b_kg.dtype))

|

| 280 |

+

|

| 281 |

+

p_Aqk = tl.make_block_ptr(A_qk, (BT, T), (1, stride_A), (0, i_t * BT), (BT, BT), (0, 1))

|

| 282 |

+

b_A = tl.where(tl.arange(0, BT)[:, None] <= tl.arange(0, BT)[None, :], tl.load(p_Aqk, boundary_check=(0, 1)), 0)

|

| 283 |

+

p_do = tl.make_block_ptr(do, (T, V), (stride_vo, 1), (i_t * BT, i_v * BV), (BT, BV), (1, 0))

|

| 284 |

+

p_dv = tl.make_block_ptr(dv, (T, V), (stride_vo, 1), (i_t * BT, i_v * BV), (BT, BV), (1, 0))

|

| 285 |

+

b_do = tl.load(p_do, boundary_check=(0, 1))

|

| 286 |

+

b_dv += tl.dot(b_A.to(b_do.dtype), b_do)

|

| 287 |

+

tl.store(p_dv, b_dv.to(p_dv.dtype.element_ty), boundary_check=(0, 1))
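
`chunk_dplr_bwd_kernel_dv` sums an inter-chunk term (`kg @ dh`) and an intra-chunk term in which `A_qk` is loaded transposed and masked with `row <= col`. A small NumPy sketch of that computation for one chunk and one head is given below; the function name `bwd_dv_chunk_reference` and the dense shapes are assumptions made for illustration.

```python
import numpy as np


def bwd_dv_chunk_reference(A_qk, kg, do, dh):
    """Dense single-chunk sketch.

    Assumed shapes: A_qk: [BT, BT], kg: [BT, K], do: [BT, V], dh: [K, V].
    """
    # Inter-chunk contribution through the state gradient.
    dv_inter = kg @ dh
    # Intra-chunk contribution: the transposed load with a `row <= col` mask
    # is equivalent to transposing the lower-triangular part of A_qk.
    dv_intra = np.tril(A_qk).T @ do
    return dv_inter + dv_intra


rng = np.random.default_rng(1)
BT, K, V = 4, 3, 5
A_qk = rng.standard_normal((BT, BT))
kg = rng.standard_normal((BT, K))
do = rng.standard_normal((BT, V))
dh = rng.standard_normal((K, V))
dv_ref = bwd_dv_chunk_reference(A_qk, kg, do, dh)
```

Written out per position, row `i` of the intra-chunk term collects `A_qk[j, i] * do[j]` for all `j >= i`, so each value position only receives gradient from outputs at the same or later positions.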

|

| 288 |

+

|

| 289 |

+

|

| 290 |

+

def chunk_dplr_bwd_dv(

|

| 291 |

+

A_qk: torch.Tensor,

|

| 292 |

+

kg: torch.Tensor,

|

| 293 |

+

do: torch.Tensor,

|

| 294 |

+

dh: torch.Tensor,

|

| 295 |

+

cu_seqlens: Optional[torch.LongTensor] = None,

|

| 296 |

+

chunk_size: int = 64

|

| 297 |

+

) -> torch.Tensor:

|

| 298 |

+

B, T, H, K, V = *kg.shape, do.shape[-1]

|

| 299 |

+

BT = min(chunk_size, max(16, triton.next_power_of_2(T)))

|

| 300 |

+

|

| 301 |

+

chunk_indices = prepare_chunk_indices(cu_seqlens, BT) if cu_seqlens is not None else None

|

| 302 |

+

NT = triton.cdiv(T, BT) if cu_seqlens is None else len(chunk_indices)

|

| 303 |

+

|

| 304 |

+

dv = torch.empty_like(do)

|

| 305 |

+

|

| 306 |

+

def grid(meta): return (triton.cdiv(V, meta['BV']), NT, B * H)

|

| 307 |

+

chunk_dplr_bwd_kernel_dv[grid](

|

| 308 |

+

A_qk=A_qk,

|

| 309 |

+

kg=kg,

|

| 310 |

+

do=do,

|

| 311 |

+

dv=dv,

|

| 312 |

+

dh=dh,

|

| 313 |

+

cu_seqlens=cu_seqlens,

|

| 314 |

+

chunk_indices=chunk_indices,

|

| 315 |

+

T=T,

|

| 316 |

+

H=H,

|

| 317 |

+

K=K,

|

| 318 |

+

V=V,

|

| 319 |

+

BT=BT,

|

| 320 |

+

)

|

| 321 |

+

return dv
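
The wrapper above picks the chunk length `BT` from `chunk_size` and `T`, and in the variable-length case launches one program per entry of `chunk_indices`. The pure-Python sketch below illustrates both steps. `next_power_of_2` is a stand-in for `triton.next_power_of_2`, and `chunk_index_pairs` is a hypothetical re-creation of what `prepare_chunk_indices` appears to produce, inferred from how the kernel reads `(i_n, i_t)` pairs; the real helper may differ.

```python
from typing import List, Tuple


def next_power_of_2(n: int) -> int:
    """Smallest power of two >= n, for n >= 1 (stand-in for triton.next_power_of_2)."""
    return 1 << (n - 1).bit_length()


def choose_bt(T: int, chunk_size: int = 64) -> int:
    """Chunk length: at least 16, at most chunk_size."""
    return min(chunk_size, max(16, next_power_of_2(T)))


def chunk_index_pairs(cu_seqlens: List[int], bt: int) -> List[Tuple[int, int]]:
    """(sequence index, chunk index within that sequence) for every chunk.

    Assumed layout, inferred from the kernel's loads of chunk_indices.
    """
    pairs = []
    for i_n in range(len(cu_seqlens) - 1):
        length = cu_seqlens[i_n + 1] - cu_seqlens[i_n]
        n_chunks = -(-length // bt)  # ceiling division
        pairs.extend((i_n, i_t) for i_t in range(n_chunks))
    return pairs


bt_short = choose_bt(10)
bt_long = choose_bt(100)
pairs = chunk_index_pairs([0, 100, 130], 64)
```

With two packed sequences of lengths 100 and 30 and `BT = 64`, the grid's time dimension has three programs: two for the first sequence and one for the second.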

|

| 322 |

+

|

| 323 |

+

|

| 324 |

+

def chunk_dplr_bwd_o(

|

| 325 |

+

k: torch.Tensor,

|

| 326 |

+

b: torch.Tensor,

|

| 327 |

+

v: torch.Tensor,

|

| 328 |

+

v_new: torch.Tensor,

|

| 329 |

+

gk: torch.Tensor,

|

| 330 |

+

do: torch.Tensor,

|

| 331 |

+

h: torch.Tensor,

|

| 332 |

+

dh: torch.Tensor,

|

| 333 |

+

dv: torch.Tensor,

|

| 334 |

+

w: torch.Tensor,

|

| 335 |

+

cu_seqlens: Optional[torch.LongTensor] = None,

|

| 336 |

+

chunk_size: int = 64,

|

| 337 |

+

scale: float = 1.0,

|

| 338 |

+

) -> Tuple[torch.Tensor, torch.Tensor, torch.Tensor, torch.Tensor, torch.Tensor]:

|

| 339 |

+

|

| 340 |

+

B, T, H, K, V = *w.shape, v.shape[-1]

|

| 341 |

+

|

| 342 |

+

BT = min(chunk_size, max(16, triton.next_power_of_2(T)))

|

| 343 |

+

chunk_indices = prepare_chunk_indices(cu_seqlens, BT) if cu_seqlens is not None else None

|

| 344 |

+

NT = triton.cdiv(T, BT) if cu_seqlens is None else len(chunk_indices)

|

| 345 |

+

|

| 346 |

+

BK = min(triton.next_power_of_2(K), 64) if check_shared_mem() else min(triton.next_power_of_2(K), 32)

|

| 347 |

+

BV = min(triton.next_power_of_2(V), 64) if check_shared_mem() else min(triton.next_power_of_2(V), 32)

|

| 348 |

+

NK = triton.cdiv(K, BK)

|

| 349 |

+

dq = torch.empty_like(k)

|

| 350 |

+

dk = torch.empty_like(k)

|

| 351 |

+

dw = torch.empty_like(w)

|

| 352 |

+

db = torch.empty_like(b)

|

| 353 |

+

grid = (NK, NT, B * H)

|

| 354 |

+

|

| 355 |

+

dgk_last = torch.empty(B, NT, H, K, dtype=torch.float, device=w.device)

|

| 356 |

+

|

| 357 |

+

chunk_dplr_bwd_o_kernel[grid](

|

| 358 |

+

k=k,

|

| 359 |

+

b=b,

|

| 360 |

+

v=v,

|

| 361 |

+

v_new=v_new,

|

| 362 |

+

h=h,

|

| 363 |

+

do=do,

|

| 364 |

+

dh=dh,

|

| 365 |

+

dq=dq,

|

| 366 |

+

dk=dk,

|

| 367 |

+

db=db,

|

| 368 |

+

dgk_last=dgk_last,

|

| 369 |

+

w=w,

|

| 370 |

+

dv=dv,

|

| 371 |

+

dw=dw,

|

| 372 |

+

gk=gk,

|

| 373 |

+

cu_seqlens=cu_seqlens,

|

| 374 |

+

chunk_indices=chunk_indices,

|

| 375 |

+

T=T,

|

| 376 |

+

H=H,

|

| 377 |

+

K=K,

|

| 378 |

+

V=V,

|

| 379 |

+

BT=BT,

|

| 380 |

+

BK=BK,

|

| 381 |

+

BV=BV,

|

| 382 |

+

)

|

| 383 |

+

return dq, dk, dw, db, dgk_last

|

| 384 |

+

|

| 385 |

+

|

| 386 |

+

def chunk_dplr_bwd_dAu(

|

| 387 |

+

v: torch.Tensor,

|

| 388 |

+

v_new: torch.Tensor,

|

| 389 |

+

do: torch.Tensor,

|

| 390 |

+

A_qb: torch.Tensor,

|

| 391 |

+

scale: float,

|

| 392 |

+

cu_seqlens: Optional[torch.LongTensor] = None,

|

| 393 |

+

chunk_size: int = 64

|

| 394 |

+

) -> Tuple[torch.Tensor, torch.Tensor, torch.Tensor]:

|

| 395 |

+

B, T, H, V = v.shape

|

| 396 |

+

BT = min(chunk_size, max(16, triton.next_power_of_2(T)))

|

| 397 |

+

chunk_indices = prepare_chunk_indices(cu_seqlens, BT) if cu_seqlens is not None else None

|

| 398 |

+

NT = triton.cdiv(T, BT) if cu_seqlens is None else len(chunk_indices)

|

| 399 |

+

|

| 400 |

+

if check_shared_mem('ampere'): # A100

|

| 401 |

+

BV = min(triton.next_power_of_2(V), 128)

|

| 402 |

+

elif check_shared_mem('ada'): # 4090

|

| 403 |

+

BV = min(triton.next_power_of_2(V), 64)

|

| 404 |

+

else:

|

| 405 |

+

BV = min(triton.next_power_of_2(V), 32)

|

| 406 |

+

|

| 407 |

+

grid = (NT, B * H)

|

| 408 |

+

dA_qk = torch.empty(B, T, H, BT, dtype=torch.float, device=v.device)

|

| 409 |

+

dA_qb = torch.empty(B, T, H, BT, dtype=torch.float, device=v.device)

|

| 410 |

+

dv_new = torch.empty_like(v_new)

|

| 411 |

+

chunk_dplr_bwd_kernel_dAu[grid](

|

| 412 |

+

v=v,

|

| 413 |

+

do=do,

|

| 414 |

+

v_new=v_new,

|

| 415 |

+

A_qb=A_qb,

|

| 416 |

+

dA_qk=dA_qk,

|

| 417 |

+

dA_qb=dA_qb,

|

| 418 |

+

dv_new=dv_new,

|

| 419 |

+

cu_seqlens=cu_seqlens,

|

| 420 |

+

chunk_indices=chunk_indices,

|

| 421 |

+

scale=scale,

|

| 422 |

+

T=T,

|

| 423 |

+

H=H,

|

| 424 |

+

V=V,

|

| 425 |

+

BT=BT,

|

| 426 |

+

BV=BV,

|

| 427 |

+

)

|

| 428 |

+

return dv_new, dA_qk, dA_qb

|

build/lib/opencompass/tasks/fla2/ops/generalized_delta_rule/dplr/chunk_o_fwd.py

ADDED

|

@@ -0,0 +1,123 @@

|

| 1 |

+