File size: 8,183 Bytes

0772518 96e3aeb 0772518 96e3aeb ae8e8c3 96e3aeb ae8e8c3 96e3aeb ae8e8c3 96e3aeb | 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 | ---

license: other

license_link: LICENSE.md

tags:

- lighting-estimation

- hdr

- environment-map

- diffusion

- pytorch

- video

- transformer

- lora

---

## Model description

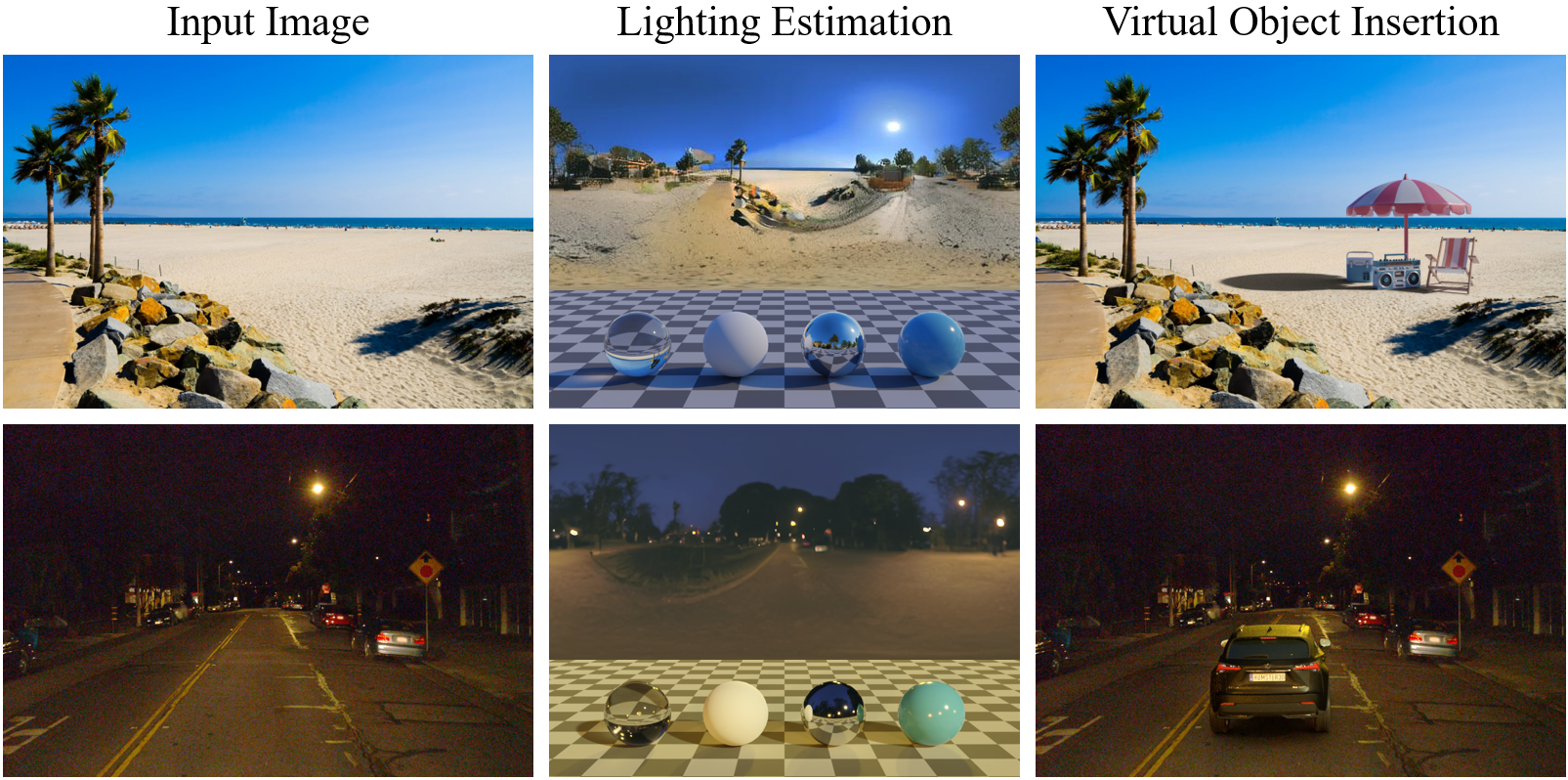

LuxDiT is a generative lighting estimation model that predicts high-quality HDR environment maps from visual input. It produces accurate lighting while preserving scene semantics, enabling realistic virtual object insertion under diverse lighting conditions. This model is ready for non-commercial use.

- **Checkpoint**: Video model (image and video-finetuned)

- **LoRA**: Included for real-scene generalization

- **Paper**: [LuxDiT: Lighting Estimation with Video Diffusion Transformer](https://arxiv.org/abs/2509.03680)

- **Project page**: https://research.nvidia.com/labs/toronto-ai/LuxDiT/

**Use case:** LuxDiT supports studies and prototyping in video lighting estimation. This release is an open-source implementation of our research paper, intended for AI research, development, and benchmarking for lighting estimation research.

### Model architecture

- **Architecture type:** Transformer (based on [CogVideoX](https://github.com/THUDM/CogVideoX))

- **Parameters:** 5B

- **Input:** RGB video frames; shape `[batch_size, num_frames, height, width, 3]`; recommended resolution 480×720

- **Output:** RGB video frames (dual tonemapped LDR and log); output resolution 256×512; use the HDR merger to obtain `.exr` HDR envmaps

### Software and hardware

- **Runtime:** Python and PyTorch

- **Supported hardware:** NVIDIA Ampere (e.g. A100 GPUs)

- **Operating system:** Linux

## How to use

### Download from Hugging Face

From the [LuxDiT repository](https://github.com/nv-tlabs/LuxDiT) root:

```bash

python download_weights.py --repo_id nvidia/LuxDiT

```

This saves the checkpoint to `checkpoints/LuxDiT` by default. Use `--local_dir` to override.

### Image Inference: synthetic images (in-domain)

```bash

DIT_PATH=checkpoints/LuxDiT/luxdit_image

INPUT_DIR=examples/input_demo/synthetic_images

OUTPUT_DIR=test_output/synthetic_images

python inference_luxdit.py \

--config configs/luxdit_base.yaml \

--transformer_path $DIT_PATH \

--input_dir $INPUT_DIR \

--output_dir $OUTPUT_DIR \

--resolution 480 720 \

--guidance_scale 2.5 \

--num_inference_steps 50 \

--seed 33

python hdr_merger.py \

--model_path checkpoints/hdr_merge_mlp \

--input_dir $OUTPUT_DIR/ldr_log \

--output_dir $OUTPUT_DIR/hdr

```

### Image Inference: real scenes (with LoRA)

Use the LoRA adapter in this checkpoint for better generalization to real photos:

```bash

DIT_PATH=checkpoints/LuxDiT/luxdit_image

LORA_PATH=checkpoints/luxdit_image/lora

INPUT_DIR=examples/input_demo/scene_images

OUTPUT_DIR=test_output/scene_images

python inference_luxdit.py \

--config configs/luxdit_base.yaml \

--transformer_path $DIT_PATH \

--lora_dir $LORA_PATH \

--lora_scale 0.8 \

--input_dir $INPUT_DIR \

--output_dir $OUTPUT_DIR \

--resolution 480 720 \

--guidance_scale 2.5 \

--num_inference_steps 50 \

--seed 33

python hdr_merger.py \

--input_dir $OUTPUT_DIR/ldr_log \

--output_dir $OUTPUT_DIR/hdr

```

Adjust `lora_scale` (e.g. 0.0–1.0) to control how much the input scene is merged into the estimated envmap.

### Video Inference: synthetic videos (in-domain)

Requires `--data_type video`:

```bash

DIT_PATH=checkpoints/LuxDiT/luxdit_video

INPUT_DIR=examples/input_demo/synthetic_videos

OUTPUT_DIR=test_output/synthetic_videos

python inference_luxdit.py \

--config configs/luxdit_base.yaml \

--transformer_path $DIT_PATH \

--input_dir $INPUT_DIR \

--output_dir $OUTPUT_DIR \

--resolution 480 720 \

--guidance_scale 2.5 \

--num_inference_steps 40 \

--seed 33 \

--data_type video

python hdr_merger.py \

--input_dir $OUTPUT_DIR/ldr_log \

--output_dir $OUTPUT_DIR/hdr

```

### Video Inference: real scenes from video (with LoRA)

Use the LoRA adapter in this checkpoint for better generalization to real video:

```bash

DIT_PATH=checkpoints/LuxDiT/luxdit_video

LORA_PATH=checkpoints/LuxDiT/luxdit_video/lora

INPUT_DIR=examples/input_demo/scene_videos

OUTPUT_DIR=test_output/scene_videos

python inference_luxdit.py \

--config configs/luxdit_base.yaml \

--transformer_path $DIT_PATH \

--lora_dir $LORA_PATH \

--lora_scale 0.8 \

--input_dir $INPUT_DIR \

--output_dir $OUTPUT_DIR \

--resolution 480 720 \

--guidance_scale 2.5 \

--num_inference_steps 40 \

--seed 33 \

--data_type video

python hdr_merger.py \

--input_dir $OUTPUT_DIR/ldr_log \

--output_dir $OUTPUT_DIR/hdr

```

Adjust `lora_scale` (e.g. 0.0–1.0) to control how much the input scene is merged into the estimated envmap.

### Inference: object video scan with camera poses

For multi-view object captures, you can optionally provide camera poses (`--camera_pose_file`, OpenCV format) to align the estimated envmap to a canonical layout:

```bash

DIT_PATH=checkpoints/LuxDiT/luxdit_video

LORA_PATH=checkpoints/LuxDiT/luxdit_video/lora

INPUT_DIR=examples/input_demo/object_scans/antman

OUTPUT_DIR=test_output/object_scans/antman

CAM_FILE=examples/input_demo/object_scans/antman/antman.camera.json

python inference_luxdit.py \

--config configs/luxdit_base.yaml \

--transformer_path $DIT_PATH \

--lora_dir $LORA_PATH \

--lora_scale 0.0 \

--input_dir $INPUT_DIR \

--output_dir $OUTPUT_DIR \

--resolution 512 512 \

--guidance_scale 2.5 \

--num_inference_steps 40 \

--seed 33 \

--data_type video \

--camera_pose_file $CAM_FILE

python hdr_merger.py \

--input_dir $OUTPUT_DIR/ldr_log \

--output_dir $OUTPUT_DIR/hdr

```

## Related checkpoints

| Checkpoint | Description |

|-------------|-------------|

| [luxdit_image](https://huggingface.co/nvidia/LuxDiT/tree/main/luxdit_image) | Image-finetuned, with LoRA for real scenes |

| [luxdit_video](https://huggingface.co/nvidia/LuxDiT/tree/main/luxdit_video) | Video-finetuned, with LoRA for real scenes |

For video inputs and object scans, use **luxdit_video** instead.

## Training data

This checkpoint is video-finetuned on the **SyntheticScenes** dataset:

- **Modality:** Video

- **Scale:** ~108,000 rendered videos; each video has 57 frames at 704×1280 resolution

- **Collection:** Synthetic data generated with an OptiX-based physically based path tracer

- **Labels:** Produced by the renderer (no manual labeling)

- **Per sample:** Input RGB (LDR) video and HDR environment lighting

Testing and evaluation use held-out 10% splits of the same dataset.

## Output format

The model outputs dual tonemapped environment maps (LDR and log); use the HDR merger to get `.exr` HDR envmaps. By default, the camera pose of the input image defines the world frame. See the [main README](https://github.com/NVIDIA/LuxDiT#output-format) for the exact layout of LDR/log vs merged HDR.

## Ethical considerations

NVIDIA believes Trustworthy AI is a shared responsibility. When using this model in accordance with the terms of service, ensure it meets requirements for your use case and addresses potential misuse. You are responsible for having proper rights and permissions for all input image and video content; if content includes people, personal health information, or intellectual property, generated outputs will not blur or preserve proportions of subjects. Users are responsible for model inputs and outputs and for implementing appropriate guardrails and safety mechanisms before deployment. To report model quality, risk, security vulnerabilities, or other concerns, see [NVIDIA AI Concerns](https://app.intigriti.com/programs/nvidia/nvidiavdp/detail).

## License

NVIDIA OneWay Noncommercial License. See the [LICENSE](LICENSE.md) in the LuxDiT repository.

## Citation

```bibtex

@article{liang2025luxdit,

title={Luxdit: Lighting estimation with video diffusion transformer},

author={Liang, Ruofan and He, Kai and Gojcic, Zan and Gilitschenski, Igor and Fidler, Sanja and Vijaykumar, Nandita and Wang, Zian},

journal={arXiv preprint arXiv:2509.03680},

year={2025}

}

```

|