Commit c3c4a5f · Parent(s): 4e683e9

Create README.md

README.md ADDED

@@ -0,0 +1,192 @@


# Custom Training with YOLOv7 🔥

## Some Important Links

- [Model Inference🤖](https://huggingface.co/spaces/owaiskha9654/Custom_Yolov7)
- [**🚀Training Yolov7 on Kaggle**](https://www.kaggle.com/code/owaiskhan9654/training-yolov7-on-kaggle-on-custom-dataset)
- [Weights & Biases 🐝](https://wandb.ai/owaiskhan9515/YOLOR)
- [HuggingFace 🤗 Model Repo](https://huggingface.co/owaiskha9654/Yolov7_Custom_Object_Detection)

## Contact Information

- **Name** - Owais Ahmad
- **Phone** - +91-9515884381
- **Email** - owaiskhan9654@gmail.com
- **Portfolio** - https://owaiskhan9654.github.io/

# Objective

## To showcase custom object detection on the given dataset: training a model and running inference with the newly launched YOLOv7.

# Data Acquisition

The goal of this task is to train a model that can localize and classify each instance of **Person** and **Car** as accurately as possible.

- [Link to the Downloadable Dataset](https://www.kaggle.com/datasets/owaiskhan9654/car-person-v2-roboflow)

```python
from IPython.display import Markdown, display

# Render the dataset's Roboflow README file as Markdown
display(Markdown(filename="../input/Car-Person-v2-Roboflow/README.roboflow.txt"))
```

|

| 34 |

+

|

| 35 |

+

# Custom Training with YOLOv7 🔥

|

| 36 |

+

|

| 37 |

+

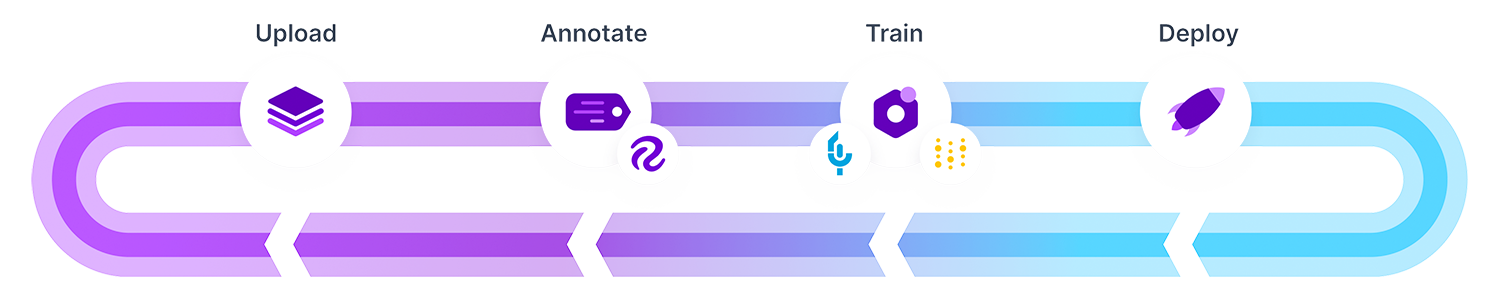

In this Notebook, I have processed the images with RoboFlow because in COCO formatted dataset was having different dimensions of image and Also data set was not splitted into different Format.

|

| 38 |

+

To train a custom YOLOv7 model we need to recognize the objects in the dataset. To do so I have taken the following steps:

|

| 39 |

+

|

| 40 |

+

* Export the dataset to YOLOv7

|

| 41 |

+

* Train YOLOv7 to recognize the objects in our dataset

|

| 42 |

+

* Evaluate our YOLOv7 model's performance

|

| 43 |

+

* Run test inference to view performance of YOLOv7 model at work

# 📦 [YOLOv7](https://github.com/WongKinYiu/yolov7)
<div align=left><img src="https://raw.githubusercontent.com/WongKinYiu/yolov7/main/figure/performance.png" width=800>

**Image Credit** - [WongKinYiu](https://github.com/WongKinYiu/yolov7)
</div>

# Step 1: Install Requirements

```python
# Download the YOLOv7 repository and install its requirements
!git clone https://github.com/WongKinYiu/yolov7
%cd yolov7
!pip install -qr requirements.txt
!pip install -q roboflow
```

# **Downloading the YOLOv7 starting checkpoint**

```python
!wget "https://github.com/WongKinYiu/yolov7/releases/download/v0.1/yolov7.pt"
```

```python
import os
import glob
import wandb
import torch
from roboflow import Roboflow
from kaggle_secrets import UserSecretsClient
from IPython.display import Image, clear_output, display  # to display images

print(f"Setup complete. Using torch {torch.__version__} ({torch.cuda.get_device_properties(0).name if torch.cuda.is_available() else 'CPU'})")
```

<img src="https://camo.githubusercontent.com/dd842f7b0be57140e68b2ab9cb007992acd131c48284eaf6b1aca758bfea358b/68747470733a2f2f692e696d6775722e636f6d2f52557469567a482e706e67">

> I will be integrating W&B for visualizations, logging artifacts, and comparing different models!
>
> [YOLOv7-Car-Person-Custom](https://wandb.ai/owaiskhan9515/YOLOR)

```python
try:
    # Read the W&B API key from Kaggle Secrets (label: wandb_api)
    user_secrets = UserSecretsClient()
    wandb_api_key = user_secrets.get_secret("wandb_api")
    wandb.login(key=wandb_api_key)
    anonymous = None
except Exception:
    # Fall back to an anonymous W&B session when the secret is unavailable
    wandb.login(anonymous='must')
    print('To use your W&B account,\nGo to Add-ons -> Secrets and provide your W&B access token. Use the Label name as wandb_api. \nGet your W&B access token from here: https://wandb.ai/authorize')

wandb.init(project="YOLOv7", name="7. YOLOv7-Car-Person-Custom-Run-7")
```

|

| 107 |

+

|

| 108 |

+

# Step 2: Assemble Our Dataset

|

| 109 |

+

|

| 110 |

+

|

| 111 |

+

|

| 112 |

+

|

| 113 |

+

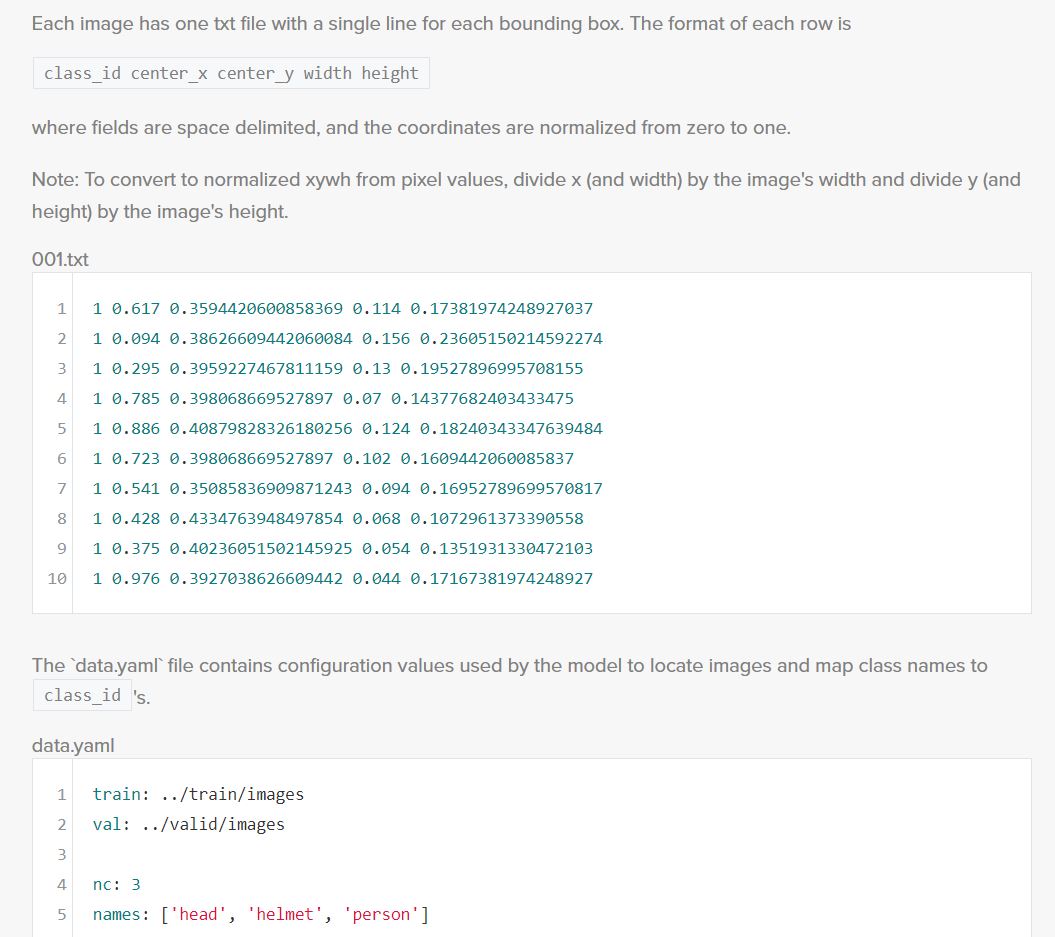

In order to train our custom model, we need to assemble a dataset of representative images with bounding box annotations around the objects that we want to detect. And we need our dataset to be in YOLOv7 format.

|

| 114 |

+

|

| 115 |

+

In Roboflow, We can choose between two paths:

|

| 116 |

+

|

| 117 |

+

* Convert an existing Coco dataset to YOLOv7 format. In Roboflow it supports over [30 formats object detection formats](https://roboflow.com/formats) for conversion.

|

| 118 |

+

* Uploading only these raw images and annotate them in Roboflow with [Roboflow Annotate](https://docs.roboflow.com/annotate).
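
To make the COCO-to-YOLO conversion mentioned above concrete, here is a minimal sketch of the bounding-box arithmetic. It assumes the usual conventions: COCO boxes are `[x_min, y_min, width, height]` in pixels, and YOLO label files store `class x_center y_center width height` normalized by the image size. The function names and the sample numbers are hypothetical, and Roboflow performs this step for you when you export.

```python
def coco_to_yolo(bbox, img_width, img_height):
    """Convert a COCO box [x_min, y_min, width, height] in pixels to
    YOLO (x_center, y_center, width, height), normalized to 0..1."""
    x_min, y_min, box_w, box_h = bbox
    x_center = (x_min + box_w / 2) / img_width
    y_center = (y_min + box_h / 2) / img_height
    return x_center, y_center, box_w / img_width, box_h / img_height


def yolo_label_line(class_id, bbox, img_width, img_height):
    """Format one annotation as a line of a YOLO .txt label file."""
    values = coco_to_yolo(bbox, img_width, img_height)
    return f"{class_id} " + " ".join(f"{v:.6f}" for v in values)


# Hypothetical example: a 100x200 px box at (50, 100) in a 640x480 image
print(yolo_label_line(0, [50, 100, 100, 200], 640, 480))
# prints: 0 0.156250 0.416667 0.156250 0.416667
```

Each image gets one `.txt` file with one such line per object, which is why the image dimensions are needed at conversion time.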

|

| 119 |

+

|

| 120 |

+

|

| 121 |

+

|

| 122 |

+

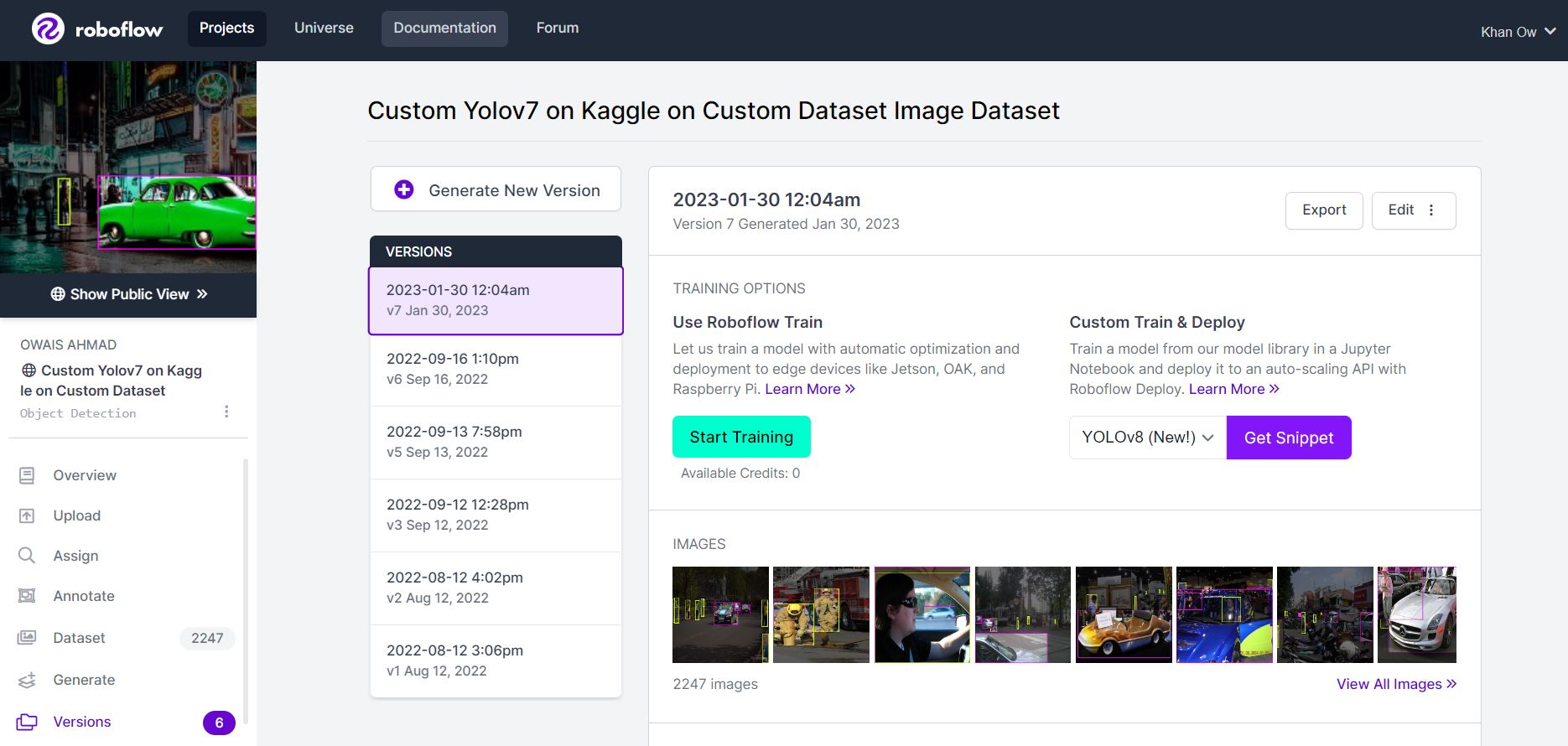

# Version v7 Jan 30, 2023 Looks like this.

|

| 123 |

+

|

| 124 |

+

|

| 125 |

+

|

| 126 |

+

|

| 127 |

+

### Since paid credits are required to train the model on RoboFlow I have used Kaggle Free resources to train it here

|

| 128 |

+

|

| 129 |

+

|

| 130 |

+

|

| 131 |

+

### Note you can import any other data from other sources. Just remember to keep in the Yolov7 Pytorch form accept
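
If you bring data from another source, the training script still needs a dataset config file. The sketch below writes a minimal `data.yaml` under a few assumptions that are not taken from this notebook: the folder layout `train/images`, `valid/images`, `test/images`, the helper name `write_data_yaml`, and the class order `Car`, `Person`. Check these against your own export before training.

```python
import tempfile
from pathlib import Path


def write_data_yaml(dataset_dir, class_names, out_name="data.yaml"):
    """Write a minimal YOLO-style dataset config and return its path."""
    root = Path(dataset_dir)
    names = ", ".join(f"'{n}'" for n in class_names)
    lines = [
        f"train: {(root / 'train' / 'images').as_posix()}",
        f"val: {(root / 'valid' / 'images').as_posix()}",
        f"test: {(root / 'test' / 'images').as_posix()}",
        f"nc: {len(class_names)}",  # number of classes
        f"names: [{names}]",        # index in this list = class id in the labels
    ]
    out_path = root / out_name
    out_path.parent.mkdir(parents=True, exist_ok=True)
    out_path.write_text("\n".join(lines) + "\n")
    return out_path


# Hypothetical usage in a throwaway directory
with tempfile.TemporaryDirectory() as tmp:
    print(write_data_yaml(tmp, ["Car", "Person"]).read_text())
```

The order of `names` must match the class ids used in the label files, otherwise predictions will be reported under the wrong class.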

```python
# Read the Roboflow API key from Kaggle Secrets (label: roboflow_api)
user_secrets = UserSecretsClient()
roboflow_api_key = user_secrets.get_secret("roboflow_api")
```
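
`kaggle_secrets` is only importable inside Kaggle, so the cell above fails elsewhere. As a possible workaround, the sketch below falls back to an environment variable. The helper name `get_api_key` and the variable name `ROBOFLOW_API_KEY` are assumptions for illustration, not something this notebook or Roboflow defines.

```python
import os


def get_api_key(secret_label, env_var):
    """Return an API key from Kaggle Secrets, or from an environment
    variable when the code is not running on Kaggle."""
    try:
        from kaggle_secrets import UserSecretsClient  # only available on Kaggle
        return UserSecretsClient().get_secret(secret_label)
    except Exception:
        # Covers both a missing kaggle_secrets module and a missing secret
        return os.environ.get(env_var)


# Hypothetical usage: returns None if neither source provides a key
roboflow_api_key = get_api_key("roboflow_api", "ROBOFLOW_API_KEY")
```

Keeping the key out of the notebook source either way avoids committing it by accident.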

```python
# Download version 2 of the Roboflow project in YOLOv7 format
rf = Roboflow(api_key=roboflow_api_key)
project = rf.workspace("owais-ahmad").project("custom-yolov7-on-kaggle-on-custom-dataset-rakiq")
dataset = project.version(2).download("yolov7")
```

# Step 3: Training a Custom YOLOv7 Model from Pretrained Weights

Here, I am able to pass a number of arguments:
- **img:** define the input image size
- **batch:** determine the batch size
- **epochs:** define the number of training epochs. (Note: 3000+ epochs are common here, but since I am using free compute resources I am setting it to only 30!)
- **data:** Our dataset location is saved in the `./yolov7/Custom-Yolov7-on-Kaggle-on-Custom-Dataset-2` folder.
- **weights:** specify a path to the weights to start transfer learning from. Here I have chosen a generic COCO-pretrained checkpoint.
- **cache:** cache images for faster training

```python
!python train.py --batch 16 --cfg cfg/training/yolov7.yaml --epochs 30 --data {dataset.location}/data.yaml --weights 'yolov7.pt' --device 0
```
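
The later cells read weights from `runs/train/exp`. If you train more than once, the run folder name may differ (as far as I know, repeated runs get suffixed folders such as `exp2`), so a hardcoded path can point at an older run. Below is a small sketch that picks the most recently modified `best.pt`. The helper name `latest_best_weights` is hypothetical, and the `runs/train/<run>/weights/best.pt` layout is assumed from the paths used in this notebook.

```python
import glob
import os


def latest_best_weights(runs_dir="runs/train"):
    """Return the most recently modified best.pt under runs_dir, or None."""
    candidates = glob.glob(os.path.join(runs_dir, "*", "weights", "best.pt"))
    if not candidates:
        return None
    return max(candidates, key=os.path.getmtime)


# Prints None when no training run has produced weights yet
print(latest_best_weights())
```

The returned path can then be passed to `--weights` instead of the fixed `runs/train/exp/weights/best.pt`.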

# Run Inference With Trained Weights
Testing inference with the trained checkpoint on the contents of the `./Custom-Yolov7-on-Kaggle-on-Custom-Dataset-2/test/images` folder downloaded from Roboflow.

```python
!python detect.py --weights runs/train/exp/weights/best.pt --img 416 --conf 0.75 --source ./Custom-Yolov7-on-Kaggle-on-Custom-Dataset-2/test/images
```
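
The `--conf 0.75` flag sets the confidence threshold: predictions scoring below it are dropped. The sketch below illustrates only that idea on made-up data. It is a simplification, since the real script applies the threshold inside its non-maximum suppression step, and the dictionary layout and function name here are hypothetical.

```python
def filter_by_confidence(detections, conf_threshold=0.75):
    """Keep only detections whose confidence is above conf_threshold."""
    return [d for d in detections if d["confidence"] > conf_threshold]


# Hypothetical detections for one image
detections = [
    {"label": "Person", "confidence": 0.91},
    {"label": "Car", "confidence": 0.62},
    {"label": "Car", "confidence": 0.80},
]

kept = filter_by_confidence(detections)
print([d["label"] for d in kept])
# prints: ['Person', 'Car']
```

A threshold as high as 0.75 favors precision. Lowering it would surface more objects at the cost of more false positives.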

# Display inference on ALL test images

```python
# Show the first 10 annotated images written by detect.py
for image_path in glob.glob('runs/detect/exp/*.jpg')[0:10]:
    display(Image(filename=image_path))
```

```python
# Load the best checkpoint saved during training
model = torch.load('runs/train/exp/weights/best.pt')
```

# Conclusion and Next Steps

Now this trained custom YOLOv7 model can be used to recognize **Person** and **Car** instances in any given image.

To improve the model's performance, I might iterate further on the dataset's coverage, annotation quality, and image quality. The original authors of **YOLOv7** provide this guide for [model performance improvement](https://github.com/WongKinYiu/yolov7).

To deploy our model to an application, we can [export the model to deployment destinations](https://github.com/WongKinYiu/yolov7/issues).

Once our model is in production, I will continually iterate and improve on the dataset and model via [active learning](https://blog.roboflow.com/what-is-active-learning/).