Commit c9e9ae7 · Parent(s): 57135e7

Update README.md


README.md

CHANGED

|

@@ -35,9 +35,7 @@ Here are the results on metrics used by [HuggingFaceH4 Open LLM Leaderboard](htt

|

|

| 35 |

|**Total Average**|**0.6503**|

|

| 36 |

|

| 37 |

|

| 38 |

-

##

|

| 39 |

-

|

| 40 |

-

Here is the prompt format

|

| 41 |

|

| 42 |

```

|

| 43 |

### System:

|

|

@@ -50,6 +48,23 @@ Tell me about Orcas.

|

|

| 50 |

|

| 51 |

```

|

| 52 |

| 53 |

Below is a code example showing how to use this model

|

| 54 |

|

| 55 |

```python

|

|

|

|

| 35 |

|**Total Average**|**0.6503**|

|

| 36 |

|

| 37 |

|

| 38 |

+

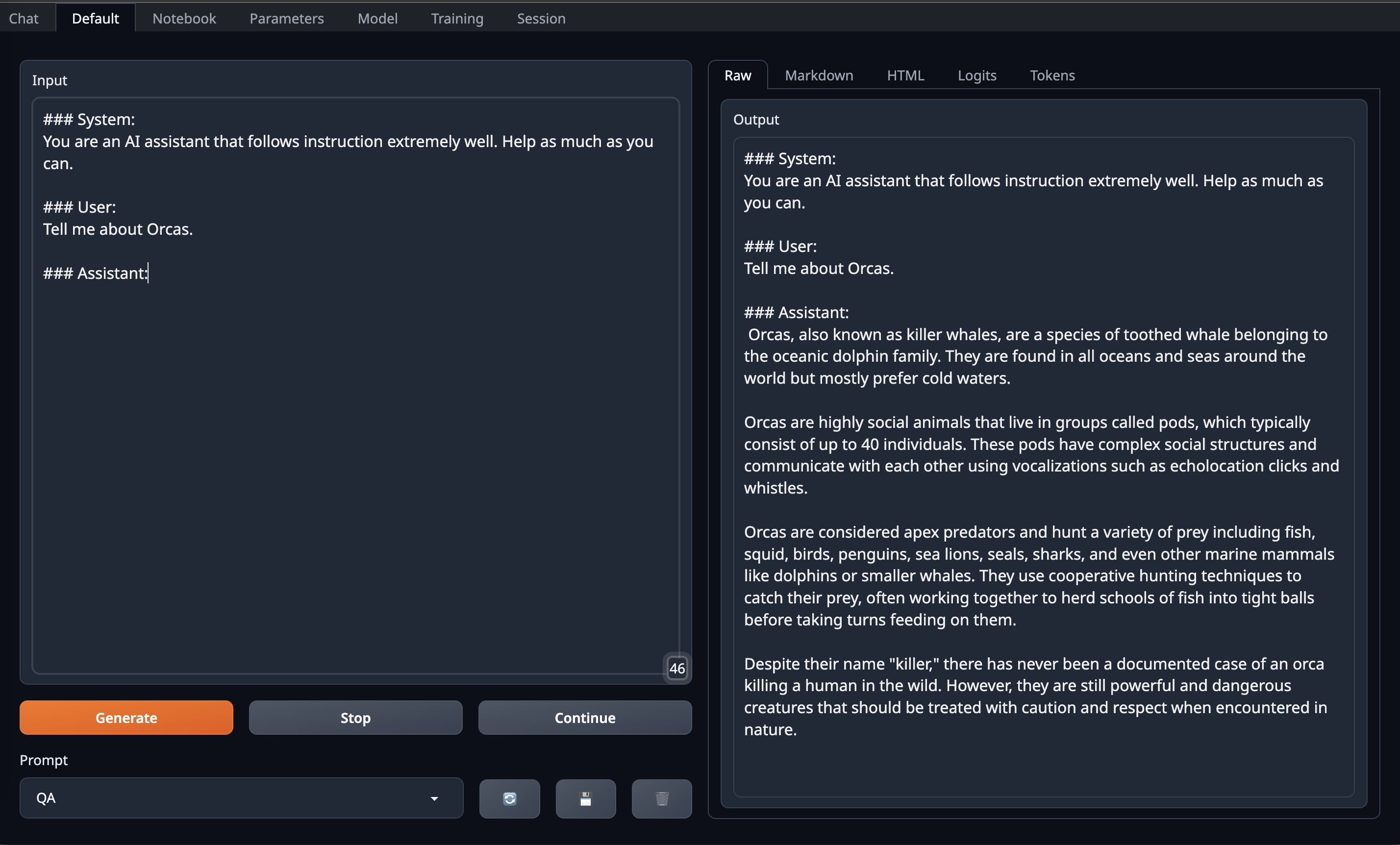

### Prompt Format

|

| 39 |

|

| 40 |

```

|

| 41 |

### System:

|

|

|

|

| 48 |

|

| 49 |

```

|

| 50 |

|

| 51 |

+

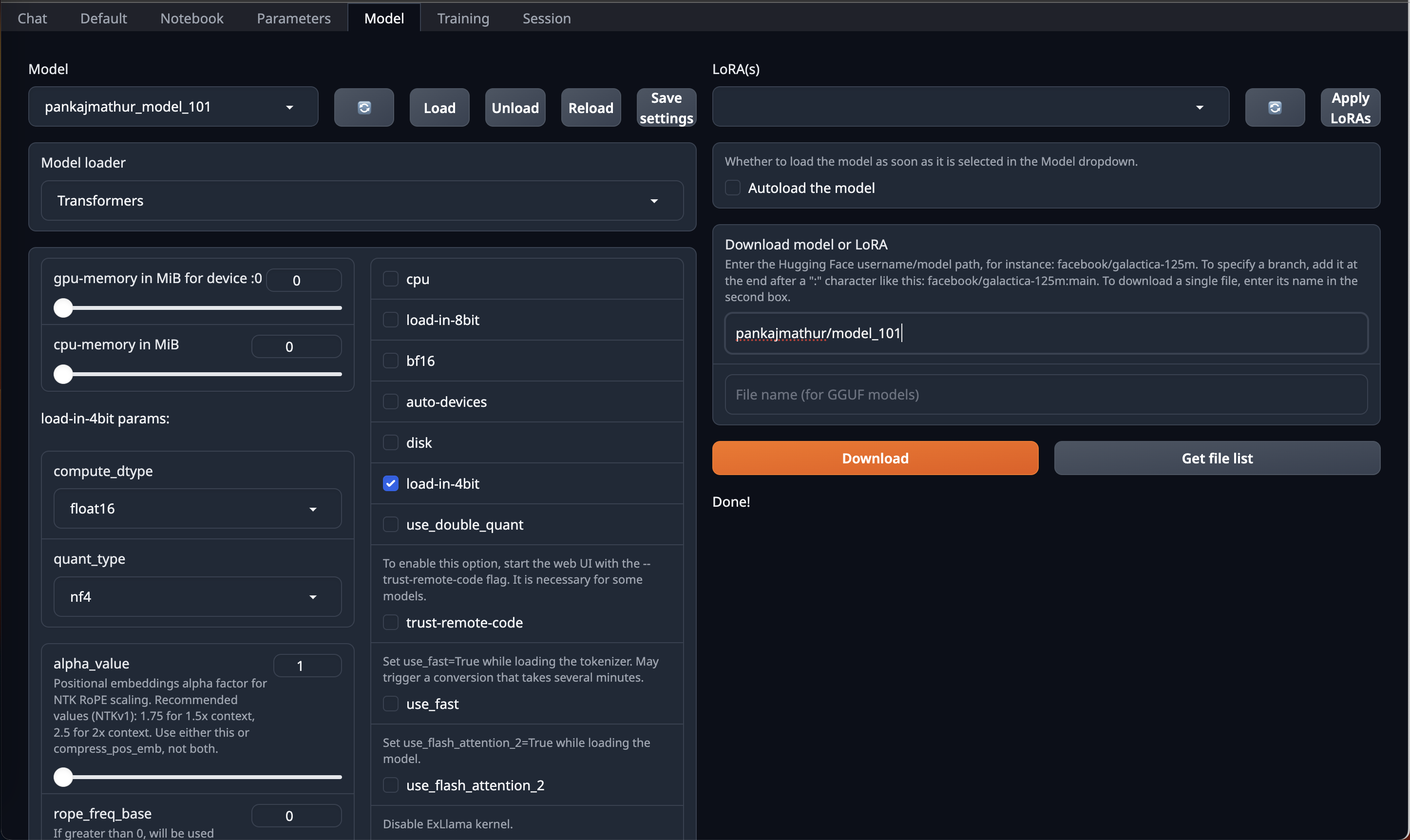

#### OobaBooga Instructions:

|

| 52 |

+

|

| 53 |

+

This model requires up to 45GB of GPU VRAM in 4-bit, so it can be loaded directly on a single RTX 6000/L40/A40/A100/H100 GPU or on two RTX 4090/L4/A10/RTX 3090/RTX A5000 GPUs.

|

| 54 |

+

These instructions assume you have access to a machine with 45GB of GPU VRAM and have installed [OobaBooga Web UI](https://github.com/oobabooga/text-generation-webui) on it.

|

| 55 |

+

You can download this model by entering the HF repo link directly in the OobaBooga Web UI "Model" tab, then selecting the **load-in-4bit** option.

|

| 56 |

+

|

| 57 |

+

|

| 58 |

+

|

| 59 |

+

|

| 60 |

+

After that, go to the Default tab of the OobaBooga Web UI, **copy and paste the above prompt format into Input**, and enjoy!

|

| 61 |

+

|

| 62 |

+

|

| 63 |

+

|

| 64 |

+

<br>

|

| 65 |

+

|

| 66 |

+

#### Code Instructions:

|

| 67 |

+

|

| 68 |

Below is a code example showing how to use this model

|

| 69 |

|

| 70 |

```python

|