---

language:

- en

library_name: transformers

license: llama2

datasets:

- pankajmathur/orca_mini_v1_dataset

- pankajmathur/lima_unchained_v1

- pankajmathur/WizardLM_Orca

- pankajmathur/alpaca_orca

- pankajmathur/dolly-v2_orca

- garage-bAInd/Open-Platypus

- ehartford/dolphin

---

# model_101

A hybrid (explain + instruct) style Llama2-70b model. Please check the examples below for both prompt styles. The following datasets were used:

* Open-Platypus

* Alpaca

* WizardLM

* Dolly-V2

* Dolphin Samples (~200K)

* Orca_minis_v1

* Alpaca_orca

* WizardLM_orca

* Dolly-V2_orca

* More datasets, which I plan to release as open source in the future.

<br>

**P.S. If you're interested in collaborating, please connect with me at www.linkedin.com/in/pankajam.**

<br>

## Evaluation

We evaluated model_101 on a wide range of tasks using the [Language Model Evaluation Harness](https://github.com/EleutherAI/lm-evaluation-harness) from EleutherAI.

Here are the results on the metrics used by the [HuggingFaceH4 Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard):

|**Task**|**Value**|
|:------:|:-------:|
|*ARC*|0.6869|
|*HellaSwag*|0.8642|
|*MMLU*|0.6992|
|*TruthfulQA*|0.5885|
|*Winogrande*|0.8208|
|*GSM8k*|0.4481|
|*DROP*|0.5510|
|**Total Average**|**0.6655**|
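As a quick sanity check, the reported total average can be reproduced from the seven task scores. The snippet below is a minimal sketch that assumes the average is an unweighted mean of the values in the table; the variable names are illustrative only.

```python
# Scores copied from the evaluation table (fractions in [0, 1]).
scores = {
    "ARC": 0.6869,
    "HellaSwag": 0.8642,
    "MMLU": 0.6992,
    "TruthfulQA": 0.5885,
    "Winogrande": 0.8208,
    "GSM8k": 0.4481,
    "DROP": 0.5510,
}

# Assumption: the total is a plain unweighted mean over the seven tasks.
average = sum(scores.values()) / len(scores)
print(f"{average:.4f}")  # prints 0.6655
```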

<br>

## Prompt Format

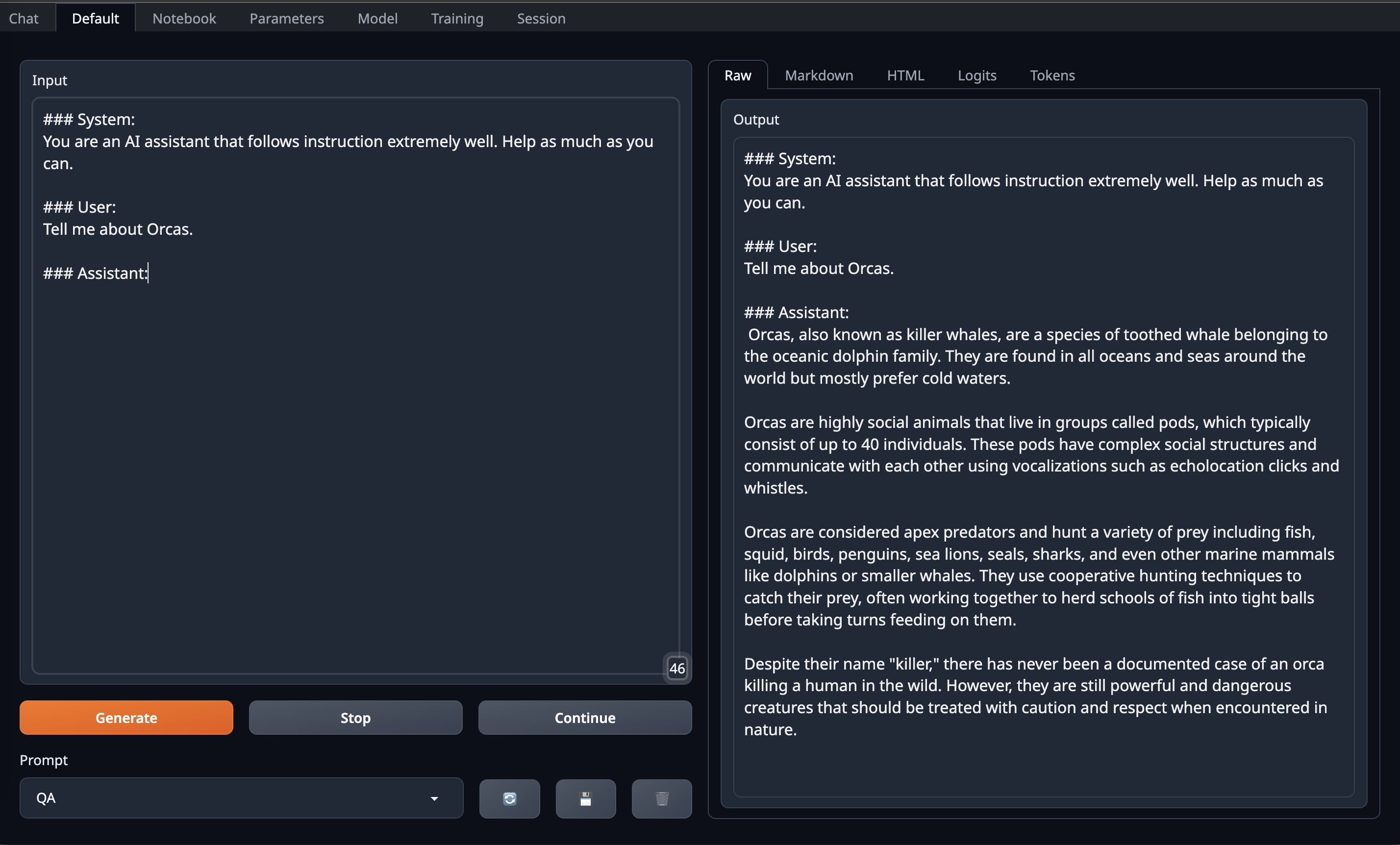

Here is the Orca prompt format:

```
### System:
You are an AI assistant that follows instruction extremely well. Help as much as you can.

### User:
Tell me about Orcas.

### Assistant:

```

Here is the Alpaca prompt format:

```
### User:
Tell me about Alpacas.

### Assistant:

```
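The two formats differ only in whether a `### System:` block precedes the user turn, so both can be produced by one small helper. The function below is a hypothetical sketch, not part of the model's tooling: `build_prompt` and `DEFAULT_SYSTEM` are names introduced here, and the exact whitespace is an assumption based on the layouts shown above (the author's code examples further down place the instruction on the same line as `### User:`).

```python
from typing import Optional

# System message taken verbatim from the Orca prompt format above.
DEFAULT_SYSTEM = (
    "You are an AI assistant that follows instruction extremely well. "
    "Help as much as you can."
)


def build_prompt(instruction: str, system: Optional[str] = None) -> str:
    """Build an Orca-style prompt if `system` is given, else an Alpaca-style one."""
    parts = []
    if system is not None:
        # Orca style: a system block comes before the user turn.
        parts.append(f"### System:\n{system}\n\n")
    # Both styles end with an open assistant turn for the model to complete.
    parts.append(f"### User:\n{instruction}\n\n### Assistant:\n")
    return "".join(parts)


orca_prompt = build_prompt("Tell me about Orcas.", system=DEFAULT_SYSTEM)
alpaca_prompt = build_prompt("Tell me about Alpacas.")
```

Keeping the system message optional makes it easy to compare the two styles on the same instruction.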

#### OobaBooga Instructions:

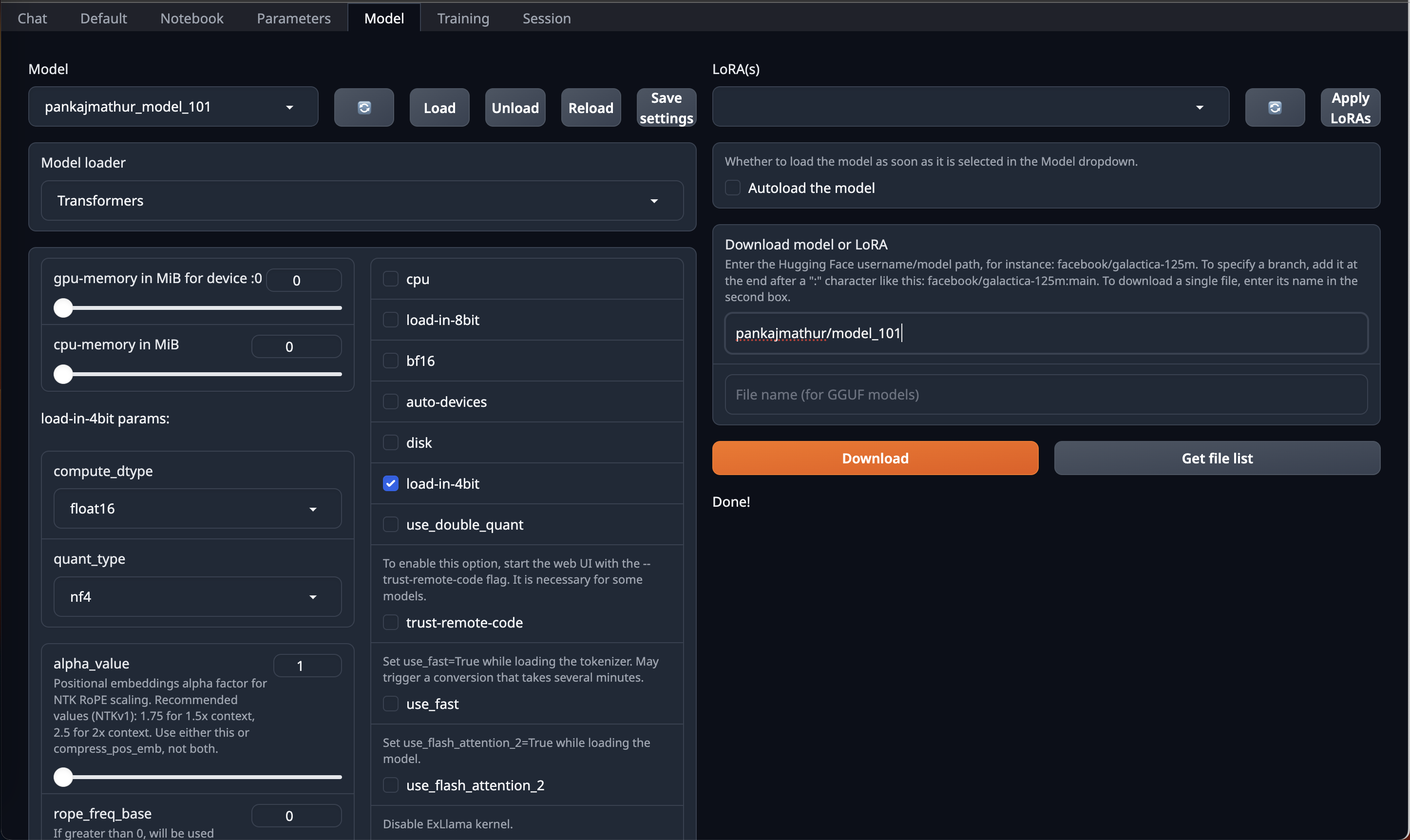

This model requires up to 45GB of GPU VRAM in 4-bit, so it can be loaded directly on a single RTX 6000/L40/A40/A100/H100 GPU or on two RTX 4090/L4/A10/RTX 3090/RTX A5000 GPUs.

If you have access to a machine with 45GB of GPU VRAM and have installed [OobaBooga Web UI](https://github.com/oobabooga/text-generation-webui) on it, you can download this model by entering the HF repo link directly in the OobaBooga Web UI "Model" tab and selecting the **load-in-4bit** option.

After that, go to the Default tab in the OobaBooga Web UI, **copy and paste the above prompt format into Input**, and enjoy!
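The 45GB figure can be put in context with a back-of-the-envelope estimate of the weight memory alone. This is a rough sketch under stated assumptions: a nominal 70 billion parameters (taken from the "70b" in the model name) and decimal gigabytes. The helper `weight_memory_gb` is hypothetical, and the estimate ignores quantization metadata, activations, and the KV cache, which presumably account for the remaining headroom.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Memory needed for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Assumption: nominal parameter count implied by the "70b" model name.
params = 70e9

four_bit_gb = weight_memory_gb(params, 4)   # weights quantized to 4 bits each
fp16_gb = weight_memory_gb(params, 16)      # weights kept in float16

print(four_bit_gb)  # prints 35.0
print(fp16_gb)      # prints 140.0
```

By this estimate, 4-bit weights take about a quarter of the float16 footprint, which is what brings the model within reach of a single 48GB-class GPU.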

<br>

#### Code Instructions:

The following code example shows how to use this model with the Orca prompt format:

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("pankajmathur/model_101")

# Load the weights in 4-bit and let device_map place them on the available GPU(s).
model = AutoModelForCausalLM.from_pretrained(
    "pankajmathur/model_101",
    torch_dtype=torch.float16,
    load_in_4bit=True,
    low_cpu_mem_usage=True,
    device_map="auto",
)

system_prompt = "### System:\nYou are an AI assistant that follows instruction extremely well. Help as much as you can.\n\n"

# Build the Orca-style prompt and generate a sampled completion.
instruction = "Tell me about Orcas."
prompt = f"{system_prompt}### User: {instruction}\n\n### Assistant:\n"
inputs = tokenizer(prompt, return_tensors="pt").to("cuda")
output = model.generate(**inputs, do_sample=True, top_p=0.95, top_k=0, max_new_tokens=4096)

# The decoded text contains the prompt followed by the model's answer.
print(tokenizer.decode(output[0], skip_special_tokens=True))
```

The following code example shows how to use this model with the Alpaca prompt format:

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("pankajmathur/model_101")

# Load the weights in 4-bit and let device_map place them on the available GPU(s).
model = AutoModelForCausalLM.from_pretrained(
    "pankajmathur/model_101",
    torch_dtype=torch.float16,
    load_in_4bit=True,
    low_cpu_mem_usage=True,
    device_map="auto",
)

# Build the Alpaca-style prompt (no system block) and generate a sampled completion.
instruction = "Tell me about Alpacas."
prompt = f"### User: {instruction}\n\n### Assistant:\n"
inputs = tokenizer(prompt, return_tensors="pt").to("cuda")
output = model.generate(**inputs, do_sample=True, top_p=0.95, top_k=0, max_new_tokens=4096)

# The decoded text contains the prompt followed by the model's answer.
print(tokenizer.decode(output[0], skip_special_tokens=True))
```
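Because `tokenizer.decode(output[0], ...)` returns the prompt together with the completion, it is often convenient to keep only the text after the assistant marker. The helper below is a hypothetical post-processing sketch, not part of the model or of `transformers`; it assumes the prompt contains `### Assistant:` exactly once, as in both formats above.

```python
def extract_response(decoded: str, marker: str = "### Assistant:") -> str:
    """Return the text after the first assistant marker, or the whole text if absent."""
    _, found, tail = decoded.partition(marker)
    # If the marker is missing, fall back to the full decoded string.
    return tail.strip() if found else decoded.strip()


# Stand-in for a decoded generation; a real one would come from tokenizer.decode(...).
decoded_example = "### User: Tell me about Alpacas.\n\n### Assistant:\nAlpacas are camelids."
print(extract_response(decoded_example))  # prints Alpacas are camelids.
```

Splitting on the first marker rather than the last keeps the answer intact even if the model happens to emit the marker again in its own output.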

<br>

#### License Disclaimer:

This model is bound by the license and usage restrictions of the original Llama-2 model and comes with no warranty or guarantees of any kind.

<br>

#### Limitations & Biases:

While this model aims for accuracy, it can occasionally produce inaccurate or misleading results.

Despite diligent efforts in refining the pretraining data, there remains a possibility for the generation of inappropriate, biased, or offensive content.

Exercise caution and cross-check information when necessary.

<br>

### Citation:

Please kindly cite using the following BibTeX:

```
@misc{model_101,
  author = {Pankaj Mathur},
  title = {model_101: A hybrid (explain + instruct) style Llama2-70b model},
  month = {August},
  year = {2023},
  publisher = {HuggingFace},
  journal = {HuggingFace repository},
  howpublished = {\url{https://huggingface.co/pankajmathur/model_101}},
}
```

```
@misc{mukherjee2023orca,
  title={Orca: Progressive Learning from Complex Explanation Traces of GPT-4},
  author={Subhabrata Mukherjee and Arindam Mitra and Ganesh Jawahar and Sahaj Agarwal and Hamid Palangi and Ahmed Awadallah},
  year={2023},
  eprint={2306.02707},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```

```
@software{touvron2023llama2,
  title={Llama 2: Open Foundation and Fine-Tuned Chat Models},
  author={Hugo Touvron and Louis Martin and Kevin Stone and Peter Albert and Amjad Almahairi and Yasmine Babaei and Nikolay Bashlykov and Soumya Batra and Prajjwal Bhargava and
  Shruti Bhosale and Dan Bikel and Lukas Blecher and Cristian Canton Ferrer and Moya Chen and Guillem Cucurull and David Esiobu and Jude Fernandes and Jeremy Fu and Wenyin Fu and Brian Fuller and
  Cynthia Gao and Vedanuj Goswami and Naman Goyal and Anthony Hartshorn and Saghar Hosseini and Rui Hou and Hakan Inan and Marcin Kardas and Viktor Kerkez and Madian Khabsa and Isabel Kloumann and
  Artem Korenev and Punit Singh Koura and Marie-Anne Lachaux and Thibaut Lavril and Jenya Lee and Diana Liskovich and Yinghai Lu and Yuning Mao and Xavier Martinet and Todor Mihaylov and
  Pushkar Mishra and Igor Molybog and Yixin Nie and Andrew Poulton and Jeremy Reizenstein and Rashi Rungta and Kalyan Saladi and Alan Schelten and Ruan Silva and Eric Michael Smith and
  Ranjan Subramanian and Xiaoqing Ellen Tan and Binh Tang and Ross Taylor and Adina Williams and Jian Xiang Kuan and Puxin Xu and Zheng Yan and Iliyan Zarov and Yuchen Zhang and Angela Fan and
  Melanie Kambadur and Sharan Narang and Aurelien Rodriguez and Robert Stojnic and Sergey Edunov and Thomas Scialom},
  year={2023}
}
```

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_psmathur__model_101)

| Metric                | Value                     |
|-----------------------|---------------------------|
| Avg.                  | 66.55                     |
| ARC (25-shot)         | 68.69                     |
| HellaSwag (10-shot)   | 86.42                     |
| MMLU (5-shot)         | 69.92                     |
| TruthfulQA (0-shot)   | 58.85                     |
| Winogrande (5-shot)   | 82.08                     |
| GSM8K (5-shot)        | 44.81                     |
| DROP (3-shot)         | 55.1                      |