v0.45.0

See https://github.com/quic/ai-hub-models/releases/v0.45.0 for changelog.

- LICENSE +1 -0

- README.md +236 -0

- precompiled/qualcomm-qcm6690/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-qcm6690/InternImage_w8a8.onnx.zip +3 -0

- precompiled/qualcomm-qcm6690/tool-versions.yaml +4 -0

- precompiled/qualcomm-qcs6490/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-qcs6490/InternImage_w8a8.onnx.zip +3 -0

- precompiled/qualcomm-qcs6490/tool-versions.yaml +4 -0

- precompiled/qualcomm-qcs8275-proxy/InternImage_float.bin +3 -0

- precompiled/qualcomm-qcs8275-proxy/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-qcs8275-proxy/tool-versions.yaml +3 -0

- precompiled/qualcomm-qcs8450-proxy/InternImage_float.bin +3 -0

- precompiled/qualcomm-qcs8450-proxy/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-qcs8450-proxy/tool-versions.yaml +3 -0

- precompiled/qualcomm-qcs8550-proxy/InternImage_float.bin +3 -0

- precompiled/qualcomm-qcs8550-proxy/InternImage_float.onnx.zip +3 -0

- precompiled/qualcomm-qcs8550-proxy/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-qcs8550-proxy/InternImage_w8a8.onnx.zip +3 -0

- precompiled/qualcomm-qcs8550-proxy/tool-versions.yaml +4 -0

- precompiled/qualcomm-qcs9075-proxy/InternImage_float.bin +3 -0

- precompiled/qualcomm-qcs9075-proxy/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-qcs9075-proxy/tool-versions.yaml +3 -0

- precompiled/qualcomm-snapdragon-7gen4/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-snapdragon-7gen4/InternImage_w8a8.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-7gen4/tool-versions.yaml +4 -0

- precompiled/qualcomm-snapdragon-8-elite-for-galaxy/InternImage_float.bin +3 -0

- precompiled/qualcomm-snapdragon-8-elite-for-galaxy/InternImage_float.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-8-elite-for-galaxy/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-snapdragon-8-elite-for-galaxy/InternImage_w8a8.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-8-elite-for-galaxy/tool-versions.yaml +4 -0

- precompiled/qualcomm-snapdragon-8-elite-gen5/InternImage_float.bin +3 -0

- precompiled/qualcomm-snapdragon-8-elite-gen5/InternImage_float.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-8-elite-gen5/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-snapdragon-8-elite-gen5/InternImage_w8a8.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-8-elite-gen5/tool-versions.yaml +4 -0

- precompiled/qualcomm-snapdragon-8gen3/InternImage_float.bin +3 -0

- precompiled/qualcomm-snapdragon-8gen3/InternImage_float.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-8gen3/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-snapdragon-8gen3/InternImage_w8a8.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-8gen3/tool-versions.yaml +4 -0

- precompiled/qualcomm-snapdragon-x-elite/InternImage_float.bin +3 -0

- precompiled/qualcomm-snapdragon-x-elite/InternImage_float.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-x-elite/InternImage_w8a8.bin +3 -0

- precompiled/qualcomm-snapdragon-x-elite/InternImage_w8a8.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-x-elite/tool-versions.yaml +4 -0

LICENSE

ADDED

|

@@ -0,0 +1 @@

| 1 |

+

The license of the original trained model can be found at https://github.com/OpenGVLab/InternImage/tree/master?tab=MIT-1-ov-file.

|

README.md

ADDED

|

@@ -0,0 +1,236 @@

| 1 |

+

---

|

| 2 |

+

library_name: pytorch

|

| 3 |

+

license: other

|

| 4 |

+

tags:

|

| 5 |

+

- bu_auto

|

| 6 |

+

- android

|

| 7 |

+

pipeline_tag: image-classification

|

| 8 |

+

|

| 9 |

+

---

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

|

| 13 |

+

# InternImage: Optimized for Mobile Deployment

|

| 14 |

+

## InternImage Large-Scale Vision Foundation Model

|

| 15 |

+

|

| 16 |

+

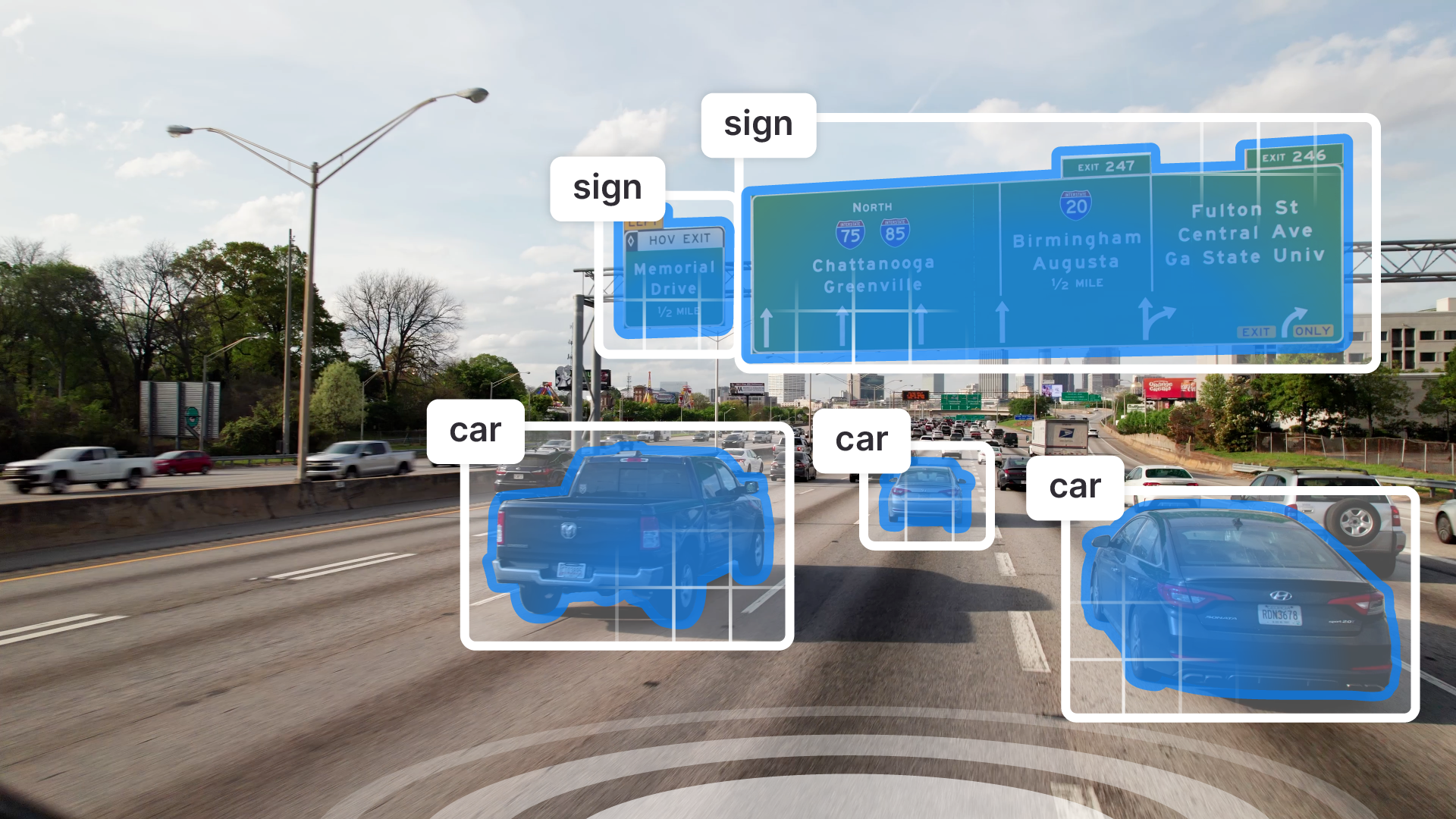

InternImage employs DCNv3 as its core operator to equip the model with the dynamic and effective receptive fields required for downstream tasks like object detection and segmentation, while enabling adaptive spatial aggregation.

|

| 17 |

+

|

| 18 |

+

This repository provides scripts to run InternImage on Qualcomm® devices.

|

| 19 |

+

More details on model performance across various devices can be found

|

| 20 |

+

[here](https://aihub.qualcomm.com/models/internimage).

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

### Model Details

|

| 25 |

+

|

| 26 |

+

- **Model Type:** Image classification

|

| 27 |

+

- **Model Stats:**

|

| 28 |

+

- Model checkpoint: internimage_t_1k_224

|

| 29 |

+

- Input resolution: 1x3x224x224

|

| 30 |

+

- Number of parameters: 30.6M

|

| 31 |

+

- Model size (float): 117 MB

|
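
As a rough sanity check on the stats above, the float model size can be estimated from the parameter count. This is only a sketch: it assumes float32 weights (4 bytes each) and that the reported "MB" means mebibytes, neither of which is stated in the model card. The helper name `estimated_size_mib` is illustrative.

```python
import math

# Values copied from the Model Stats list above.
NUM_PARAMS = 30.6e6              # "Number of parameters: 30.6M"
INPUT_SHAPE = (1, 3, 224, 224)   # "Input resolution: 1x3x224x224"

# Assumption: weights are stored as float32, i.e. 4 bytes per parameter.
BYTES_PER_FLOAT32 = 4


def estimated_size_mib(num_params: float, bytes_per_param: int) -> float:
    """Estimate weight storage in MiB (2**20 bytes)."""
    return num_params * bytes_per_param / 2**20


size_mib = estimated_size_mib(NUM_PARAMS, BYTES_PER_FLOAT32)
n_input = math.prod(INPUT_SHAPE)  # number of values in one input tensor

print(f"estimated float size: {size_mib:.1f} MiB")
print(f"input elements per image: {n_input}")
```

Under those assumptions the estimate lands close to the reported 117 MB, which suggests the float size is dominated by the weights themselves.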

| 32 |

+

|

| 33 |

+

| Model | Precision | Device | Chipset | Target Runtime | Inference Time (ms) | Peak Memory Range (MB) | Primary Compute Unit | Target Model |

|

| 34 |

+

|---|---|---|---|---|---|---|---|---|

|

| 35 |

+

| InternImage | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_CONTEXT_BINARY | 95.662 ms | 1 - 9 MB | NPU | Use Export Script |

|

| 36 |

+

| InternImage | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_CONTEXT_BINARY | 60.046 ms | 1 - 11 MB | NPU | Use Export Script |

|

| 37 |

+

| InternImage | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_CONTEXT_BINARY | 50.336 ms | 1 - 3 MB | NPU | Use Export Script |

|

| 38 |

+

| InternImage | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | PRECOMPILED_QNN_ONNX | 50.286 ms | 0 - 81 MB | NPU | Use Export Script |

|

| 39 |

+

| InternImage | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_CONTEXT_BINARY | 52.454 ms | 1 - 10 MB | NPU | Use Export Script |

|

| 40 |

+

| InternImage | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_CONTEXT_BINARY | 35.017 ms | 1 - 19 MB | NPU | Use Export Script |

|

| 41 |

+

| InternImage | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | PRECOMPILED_QNN_ONNX | 34.782 ms | 3 - 10 MB | NPU | Use Export Script |

|

| 42 |

+

| InternImage | float | Samsung Galaxy S25 | Snapdragon® 8 Elite For Galaxy Mobile | QNN_CONTEXT_BINARY | 25.639 ms | 0 - 17 MB | NPU | Use Export Script |

|

| 43 |

+

| InternImage | float | Samsung Galaxy S25 | Snapdragon® 8 Elite For Galaxy Mobile | PRECOMPILED_QNN_ONNX | 26.287 ms | 1 - 9 MB | NPU | Use Export Script |

|

| 44 |

+

| InternImage | float | Snapdragon 8 Elite Gen 5 QRD | Snapdragon® 8 Elite Gen 5 Mobile | QNN_CONTEXT_BINARY | 20.074 ms | 0 - 11 MB | NPU | Use Export Script |

|

| 45 |

+

| InternImage | float | Snapdragon 8 Elite Gen 5 QRD | Snapdragon® 8 Elite Gen 5 Mobile | PRECOMPILED_QNN_ONNX | 20.279 ms | 1 - 11 MB | NPU | Use Export Script |

|

| 46 |

+

| InternImage | float | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_CONTEXT_BINARY | 52.693 ms | 1 - 1 MB | NPU | Use Export Script |

|

| 47 |

+

| InternImage | float | Snapdragon X Elite CRD | Snapdragon® X Elite | PRECOMPILED_QNN_ONNX | 52.506 ms | 66 - 66 MB | NPU | Use Export Script |

|

| 48 |

+

| InternImage | w8a8 | Dragonwing Q-6690 MTP | Qualcomm® QCM6690 | QNN_CONTEXT_BINARY | 146.519 ms | 0 - 6 MB | NPU | Use Export Script |

|

| 49 |

+

| InternImage | w8a8 | Dragonwing Q-6690 MTP | Qualcomm® QCM6690 | PRECOMPILED_QNN_ONNX | 146.541 ms | 0 - 6 MB | NPU | Use Export Script |

|

| 50 |

+

| InternImage | w8a8 | Dragonwing RB3 Gen 2 Vision Kit | Qualcomm® QCS6490 | QNN_CONTEXT_BINARY | 86.241 ms | 0 - 2 MB | NPU | Use Export Script |

|

| 51 |

+

| InternImage | w8a8 | Dragonwing RB3 Gen 2 Vision Kit | Qualcomm® QCS6490 | PRECOMPILED_QNN_ONNX | 85.806 ms | 0 - 3 MB | NPU | Use Export Script |

|

| 52 |

+

| InternImage | w8a8 | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_CONTEXT_BINARY | 82.426 ms | 0 - 8 MB | NPU | Use Export Script |

|

| 53 |

+

| InternImage | w8a8 | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_CONTEXT_BINARY | 48.108 ms | 1 - 9 MB | NPU | Use Export Script |

|

| 54 |

+

| InternImage | w8a8 | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_CONTEXT_BINARY | 47.613 ms | 0 - 2 MB | NPU | Use Export Script |

|

| 55 |

+

| InternImage | w8a8 | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | PRECOMPILED_QNN_ONNX | 47.113 ms | 0 - 41 MB | NPU | Use Export Script |

|

| 56 |

+

| InternImage | w8a8 | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_CONTEXT_BINARY | 222.035 ms | 0 - 7 MB | NPU | Use Export Script |

|

| 57 |

+

| InternImage | w8a8 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_CONTEXT_BINARY | 32.489 ms | 0 - 7 MB | NPU | Use Export Script |

|

| 58 |

+

| InternImage | w8a8 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | PRECOMPILED_QNN_ONNX | 32.397 ms | 0 - 7 MB | NPU | Use Export Script |

|

| 59 |

+

| InternImage | w8a8 | Samsung Galaxy S25 | Snapdragon® 8 Elite For Galaxy Mobile | QNN_CONTEXT_BINARY | 22.486 ms | 0 - 13 MB | NPU | Use Export Script |

|

| 60 |

+

| InternImage | w8a8 | Samsung Galaxy S25 | Snapdragon® 8 Elite For Galaxy Mobile | PRECOMPILED_QNN_ONNX | 23.461 ms | 0 - 8 MB | NPU | Use Export Script |

|

| 61 |

+

| InternImage | w8a8 | Snapdragon 7 Gen 4 QRD | Snapdragon® 7 Gen 4 Mobile | QNN_CONTEXT_BINARY | 45.092 ms | 6 - 13 MB | NPU | Use Export Script |

|

| 62 |

+

| InternImage | w8a8 | Snapdragon 7 Gen 4 QRD | Snapdragon® 7 Gen 4 Mobile | PRECOMPILED_QNN_ONNX | 45.137 ms | 6 - 13 MB | NPU | Use Export Script |

|

| 63 |

+

| InternImage | w8a8 | Snapdragon 8 Elite Gen 5 QRD | Snapdragon® 8 Elite Gen 5 Mobile | QNN_CONTEXT_BINARY | 17.335 ms | 0 - 9 MB | NPU | Use Export Script |

|

| 64 |

+

| InternImage | w8a8 | Snapdragon 8 Elite Gen 5 QRD | Snapdragon® 8 Elite Gen 5 Mobile | PRECOMPILED_QNN_ONNX | 17.872 ms | 0 - 11 MB | NPU | Use Export Script |

|

| 65 |

+

| InternImage | w8a8 | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_CONTEXT_BINARY | 48.496 ms | 0 - 0 MB | NPU | Use Export Script |

|

| 66 |

+

| InternImage | w8a8 | Snapdragon X Elite CRD | Snapdragon® X Elite | PRECOMPILED_QNN_ONNX | 47.849 ms | 39 - 39 MB | NPU | Use Export Script |

|
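
To make the table easier to compare, the sketch below converts a few latencies copied from the `QNN_CONTEXT_BINARY` rows above into a float-to-w8a8 speedup and an approximate throughput. The dictionary layout and helper names are illustrative assumptions, not part of `qai-hub-models`, and the throughput figure assumes back-to-back single-image inference with no other overhead.

```python
# Latencies in milliseconds, copied from the QNN_CONTEXT_BINARY rows above.
# The dict layout and helper names are illustrative, not a library API.
LATENCY_MS = {
    "Samsung Galaxy S24": {"float": 35.017, "w8a8": 32.489},
    "Samsung Galaxy S25": {"float": 25.639, "w8a8": 22.486},
    "Snapdragon 8 Elite Gen 5 QRD": {"float": 20.074, "w8a8": 17.335},
}


def speedup(float_ms: float, quant_ms: float) -> float:
    """Ratio of float latency to quantized latency (>1 means w8a8 is faster)."""
    return float_ms / quant_ms


def throughput_per_second(latency_ms: float) -> float:
    """Inferences per second, assuming back-to-back runs and no other overhead."""
    return 1000.0 / latency_ms


for device, times in LATENCY_MS.items():
    ratio = speedup(times["float"], times["w8a8"])
    rate = throughput_per_second(times["w8a8"])
    print(f"{device}: w8a8 is {ratio:.2f}x faster, ~{rate:.1f} inferences/s")
```

Note that not every row follows this pattern: on the QCS9075 (Proxy) the w8a8 context binary is reported as slower than the float one, so it is worth checking the specific target rather than assuming quantization always helps.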

| 67 |

+

|

| 68 |

+

|

| 69 |

+

|

| 70 |

+

|

| 71 |

+

## Installation

|

| 72 |

+

|

| 73 |

+

|

| 74 |

+

Install the package via pip:

|

| 75 |

+

```bash

|

| 76 |

+

pip install qai-hub-models

|

| 77 |

+

```

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

## Configure Qualcomm® AI Hub Workbench to run this model on a cloud-hosted device

|

| 81 |

+

|

| 82 |

+

Sign in to [Qualcomm® AI Hub Workbench](https://workbench.aihub.qualcomm.com/) with your

|

| 83 |

+

Qualcomm® ID. Once signed in, navigate to `Account -> Settings -> API Token`.

|

| 84 |

+

|

| 85 |

+

With this API token, you can configure your client to run models on

|

| 86 |

+

cloud-hosted devices.

|

| 87 |

+

```bash

|

| 88 |

+

qai-hub configure --api_token API_TOKEN

|

| 89 |

+

```

|

| 90 |

+

Navigate to [docs](https://workbench.aihub.qualcomm.com/docs/) for more information.

|

| 91 |

+

|

| 92 |

+

|

| 93 |

+

|

| 94 |

+

## Demo off target

|

| 95 |

+

|

| 96 |

+

The package contains a simple end-to-end demo that downloads pre-trained

|

| 97 |

+

weights and runs this model on a sample input.

|

| 98 |

+

|

| 99 |

+

```bash

|

| 100 |

+

python -m qai_hub_models.models.internimage.demo

|

| 101 |

+

```

|

| 102 |

+

|

| 103 |

+

The above demo runs a reference implementation of pre-processing, model

|

| 104 |

+

inference, and post-processing.

|

| 105 |

+

|

| 106 |

+

**NOTE**: If you are running in a Jupyter Notebook or Google Colab-like

|

| 107 |

+

environment, please add the following to your cell (instead of the above).

|

| 108 |

+

```

|

| 109 |

+

%run -m qai_hub_models.models.internimage.demo

|

| 110 |

+

```

|

| 111 |

+

|

| 112 |

+

|

| 113 |

+

### Run model on a cloud-hosted device

|

| 114 |

+

|

| 115 |

+

In addition to the demo, you can also run the model on a cloud-hosted Qualcomm®

|

| 116 |

+

device. This script does the following:

|

| 117 |

+

* Checks on-device performance on a cloud-hosted device.

|

| 118 |

+

* Downloads compiled assets that can be deployed on-device for Android.

|

| 119 |

+

* Checks accuracy between PyTorch and on-device outputs.

|

| 120 |

+

|

| 121 |

+

```bash

|

| 122 |

+

python -m qai_hub_models.models.internimage.export

|

| 123 |

+

```

|

| 124 |

+

|

| 125 |

+

|

| 126 |

+

|

| 127 |

+

## How does this work?

|

| 128 |

+

|

| 129 |

+

This [export script](https://aihub.qualcomm.com/models/internimage/qai_hub_models/models/InternImage/export.py)

|

| 130 |

+

leverages [Qualcomm® AI Hub](https://aihub.qualcomm.com/) to optimize, validate, and deploy this model

|

| 131 |

+

on-device. Let's go through each step below in detail:

|

| 132 |

+

|

| 133 |

+

Step 1: **Compile model for on-device deployment**

|

| 134 |

+

|

| 135 |

+

To compile a PyTorch model for on-device deployment, we first trace the model

|

| 136 |

+

in memory using `torch.jit.trace` and then call the `submit_compile_job` API.

|

| 137 |

+

|

| 138 |

+

```python

|

| 139 |

+

import torch

|

| 140 |

+

|

| 141 |

+

import qai_hub as hub

|

| 142 |

+

from qai_hub_models.models.internimage import Model

|

| 143 |

+

|

| 144 |

+

# Load the model

|

| 145 |

+

torch_model = Model.from_pretrained()

|

| 146 |

+

|

| 147 |

+

# Device

|

| 148 |

+

device = hub.Device("Samsung Galaxy S25")

|

| 149 |

+

|

| 150 |

+

# Trace model

|

| 151 |

+

input_shape = torch_model.get_input_spec()

|

| 152 |

+

sample_inputs = torch_model.sample_inputs()

|

| 153 |

+

|

| 154 |

+

pt_model = torch.jit.trace(torch_model, [torch.tensor(data[0]) for _, data in sample_inputs.items()])

|

| 155 |

+

|

| 156 |

+

# Compile model on a specific device

|

| 157 |

+

compile_job = hub.submit_compile_job(

|

| 158 |

+

    model=pt_model,

|

| 159 |

+

    device=device,

|

| 160 |

+

    input_specs=torch_model.get_input_spec(),

|

| 161 |

+

)

|

| 162 |

+

|

| 163 |

+

# Get target model to run on-device

|

| 164 |

+

target_model = compile_job.get_target_model()

|

| 165 |

+

|

| 166 |

+

```

|

| 167 |

+

|

| 168 |

+

|

| 169 |

+

Step 2: **Performance profiling on cloud-hosted device**

|

| 170 |

+

|

| 171 |

+

After compiling the model in step 1, you can profile it on-device using the

|

| 172 |

+

`target_model`. Note that this script runs the model on a device automatically

|

| 173 |

+

provisioned in the cloud. Once the job is submitted, you can navigate to a

|

| 174 |

+

provided job URL to view a variety of on-device performance metrics.

|

| 175 |

+

```python

|

| 176 |

+

profile_job = hub.submit_profile_job(

|

| 177 |

+

    model=target_model,

|

| 178 |

+

    device=device,

|

| 179 |

+

)

|

| 180 |

+

|

| 181 |

+

```

|

| 182 |

+

|

| 183 |

+

Step 3: **Verify on-device accuracy**

|

| 184 |

+

|

| 185 |

+

To verify the accuracy of the model on-device, you can run on-device inference

|

| 186 |

+

on sample input data on the same cloud-hosted device.

|

| 187 |

+

```python

|

| 188 |

+

input_data = torch_model.sample_inputs()

|

| 189 |

+

inference_job = hub.submit_inference_job(

|

| 190 |

+

    model=target_model,

|

| 191 |

+

    device=device,

|

| 192 |

+

    inputs=input_data,

|

| 193 |

+

)

|

| 194 |

+

on_device_output = inference_job.download_output_data()

|

| 195 |

+

|

| 196 |

+

```

|

| 197 |

+

With the output of the model, you can compute metrics such as PSNR or relative error, or

|

| 198 |

+

spot-check the output against the expected output.

|
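
The comparison described above can be sketched in plain Python. This assumes the reference (PyTorch) and on-device outputs have already been flattened into equal-length sequences of floats. `psnr` and `max_relative_error` are hypothetical helper names, and using the largest absolute reference value as the PSNR peak is just one possible convention.

```python
import math


def psnr(reference, candidate, eps=1e-12):
    """Peak signal-to-noise ratio in dB, with max |reference| as the peak."""
    if len(reference) != len(candidate):
        raise ValueError("outputs must have the same length")
    mse = sum((r - c) ** 2 for r, c in zip(reference, candidate)) / len(reference)
    if mse == 0.0:
        return math.inf  # identical outputs
    peak = max(max(abs(r) for r in reference), eps)
    return 10.0 * math.log10(peak ** 2 / mse)


def max_relative_error(reference, candidate, eps=1e-12):
    """Largest element-wise |r - c| / (|r| + eps)."""
    return max(abs(r - c) / (abs(r) + eps) for r, c in zip(reference, candidate))


# Made-up values standing in for PyTorch vs. on-device logits.
torch_logits = [2.0, -1.0, 0.5, 4.0]
device_logits = [2.01, -0.99, 0.5, 3.98]

print(f"PSNR: {psnr(torch_logits, device_logits):.1f} dB")
print(f"max relative error: {max_relative_error(torch_logits, device_logits):.4f}")
```

What counts as an acceptable PSNR or relative error depends on the task. For a classifier, checking that the top-1 class agrees between the two outputs is often a useful additional spot check.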

| 199 |

+

|

| 200 |

+

**Note**: This on-device profiling and inference requires access to Qualcomm®

|

| 201 |

+

AI Hub Workbench. [Sign up for access](https://myaccount.qualcomm.com/signup).

|

| 202 |

+

|

| 203 |

+

|

| 204 |

+

|

| 205 |

+

|

| 206 |

+

## Deploying compiled model to Android

|

| 207 |

+

|

| 208 |

+

|

| 209 |

+

The models can be deployed using multiple runtimes:

|

| 210 |

+

- TensorFlow Lite (`.tflite` export): [This

|

| 211 |

+

tutorial](https://www.tensorflow.org/lite/android/quickstart) provides a

|

| 212 |

+

guide to deploy the .tflite model in an Android application.

|

| 213 |

+

|

| 214 |

+

|

| 215 |

+

- QNN (`.so` export): This [sample

|

| 216 |

+

app](https://docs.qualcomm.com/bundle/publicresource/topics/80-63442-50/sample_app.html)

|

| 217 |

+

provides instructions on how to use the `.so` shared library in an Android application.

|

| 218 |

+

|

| 219 |

+

|

| 220 |

+

## View on Qualcomm® AI Hub

|

| 221 |

+

Get more details on InternImage's performance across various devices [here](https://aihub.qualcomm.com/models/internimage).

|

| 222 |

+

Explore all available models on [Qualcomm® AI Hub](https://aihub.qualcomm.com/).

|

| 223 |

+

|

| 224 |

+

|

| 225 |

+

## License

|

| 226 |

+

* The license for the original implementation of InternImage can be found

|

| 227 |

+

[here](https://github.com/OpenGVLab/InternImage/tree/master?tab=MIT-1-ov-file).

|

| 228 |

+

|

| 229 |

+

|

| 230 |

+

|

| 231 |

+

|

| 232 |

+

## Community

|

| 233 |

+

* Join [our AI Hub Slack community](https://aihub.qualcomm.com/community/slack) to collaborate, post questions and learn more about on-device AI.

|

| 234 |

+

* For questions or feedback, please [reach out to us](mailto:ai-hub-support@qti.qualcomm.com).

|

| 235 |

+

|

| 236 |

+

|

precompiled/qualcomm-qcm6690/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1dc5b59d016699e21982ef8a6fad4c48eb36618806a90d5ed8560c32309a7433

|

| 3 |

+

size 41750528

|
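
The three lines above are a Git LFS pointer rather than the binary itself: they record the spec version, the SHA-256 digest (`oid`), and the byte size of the real file. The sketch below shows one way a downloaded binary could be checked against such a pointer. `parse_lfs_pointer` and `matches_pointer` are hypothetical helper names, and the pointer text is copied from this commit.

```python
import hashlib


def parse_lfs_pointer(text: str) -> dict:
    """Parse the 'key value' lines of a Git LFS pointer into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        if not line.strip():
            continue  # tolerate blank lines between entries
        key, _, value = line.strip().partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(data: bytes, pointer: dict) -> bool:
    """Check that raw file bytes match the pointer's size and SHA-256 digest."""
    if pointer["algo"] != "sha256":
        raise ValueError(f"unsupported hash algorithm: {pointer['algo']}")
    if len(data) != pointer["size"]:
        return False
    return hashlib.sha256(data).hexdigest() == pointer["digest"]


# Pointer text copied from the InternImage_w8a8.bin entry above.
POINTER_TEXT = """version https://git-lfs.github.com/spec/v1
oid sha256:1dc5b59d016699e21982ef8a6fad4c48eb36618806a90d5ed8560c32309a7433
size 41750528
"""

pointer = parse_lfs_pointer(POINTER_TEXT)
print(pointer["algo"], pointer["size"])
```

For files of this size, reading the whole binary into memory is usually fine; for much larger assets, hashing in chunks with `hashlib.sha256().update(...)` would be the more careful choice.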

precompiled/qualcomm-qcm6690/InternImage_w8a8.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0e0bce7c614dbac7d5d91acda0778d67d62ea415f8abe4f66a856fd2d617f0a9

|

| 3 |

+

size 30702816

|

precompiled/qualcomm-qcm6690/tool-versions.yaml

ADDED

|

@@ -0,0 +1,4 @@

| 1 |

+

tool_versions:

|

| 2 |

+

precompiled_qnn_onnx:

|

| 3 |

+

qairt: 2.37.1.250807093845_124904

|

| 4 |

+

onnx_runtime: 1.23.0

|
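
Each `tool-versions.yaml` records the toolchain the precompiled assets were built with. When deploying, it can help to compare the recorded QAIRT version with the runtime available on the target. The sketch below assumes the version string starts with a dotted `major.minor.patch` prefix followed by a build identifier. That matches the strings shown in this commit but is not a documented format, and `qairt_release` is a hypothetical helper.

```python
def qairt_release(version: str) -> tuple:
    """Return (major, minor, patch) from a QAIRT version string.

    Assumes the first three dot-separated fields are integers; anything
    after them (build stamp, suffixes such as '-auto') is ignored.
    """
    parts = version.split(".")
    if len(parts) < 3:
        raise ValueError(f"unexpected version format: {version!r}")
    return tuple(int(p) for p in parts[:3])


# Both strings are copied from tool-versions.yaml files in this commit.
ONNX_ASSET_QAIRT = "2.37.1.250807093845_124904"        # precompiled_qnn_onnx
CONTEXT_BINARY_QAIRT = "2.41.0.251128145156_191518"    # qnn_context_binary

onnx_release = qairt_release(ONNX_ASSET_QAIRT)
binary_release = qairt_release(CONTEXT_BINARY_QAIRT)

print("precompiled_qnn_onnx built with QAIRT", onnx_release)
print("qnn_context_binary built with QAIRT", binary_release)
print("same release:", onnx_release == binary_release)
```

Whether an asset built with one QAIRT release loads under a different one is not stated here, so treat a mismatch as a prompt to check the runtime documentation rather than as a definite error.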

precompiled/qualcomm-qcs6490/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ce1bfb0199c2b1d934e74ca128f2123b9098e9874a4a1ca48624dbe680686799

|

| 3 |

+

size 43220992

|

precompiled/qualcomm-qcs6490/InternImage_w8a8.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:24f1ffe9929ba1c2385aff43dbf620227f732749229faeb836a6423303123bf2

|

| 3 |

+

size 30827011

|

precompiled/qualcomm-qcs6490/tool-versions.yaml

ADDED

|

@@ -0,0 +1,4 @@

| 1 |

+

tool_versions:

|

| 2 |

+

precompiled_qnn_onnx:

|

| 3 |

+

qairt: 2.37.1.250807093845_124904

|

| 4 |

+

onnx_runtime: 1.23.0

|

precompiled/qualcomm-qcs8275-proxy/InternImage_float.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a4458d9de46a112e906d1d8dd42550ad76a0d2dee3ea056c2342517a7c7a11a0

|

| 3 |

+

size 69480448

|

precompiled/qualcomm-qcs8275-proxy/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4b02a2c5a02b88ab47a891c2b0f3002185aafbe579c4234e7ecda5cb78e44b2b

|

| 3 |

+

size 39874560

|

precompiled/qualcomm-qcs8275-proxy/tool-versions.yaml

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

tool_versions:

|

| 2 |

+

qnn_context_binary:

|

| 3 |

+

qairt: 2.41.0.251128145156_191518-auto

|

precompiled/qualcomm-qcs8450-proxy/InternImage_float.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9c1f24b64f8542f4adc3b0d15f5f2c08d8f256bd9bf72a5f21a54fe986958a5a

|

| 3 |

+

size 71995392

|

precompiled/qualcomm-qcs8450-proxy/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c9ef10a9261f782a331da90d355ef025a4916d6d87ea8b4e071ffb7416a1ccca

|

| 3 |

+

size 41635840

|

precompiled/qualcomm-qcs8450-proxy/tool-versions.yaml

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

tool_versions:

|

| 2 |

+

qnn_context_binary:

|

| 3 |

+

qairt: 2.41.0.251128145156_191518

|

precompiled/qualcomm-qcs8550-proxy/InternImage_float.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:952854e524ba6b41a6d1257e2fbd18965fa066b4aad134e1ea753b4ce8ab0fae

|

| 3 |

+

size 69505024

|

precompiled/qualcomm-qcs8550-proxy/InternImage_float.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:436e05d6711c145558223137882016057349007c19eafcc815baaf1015688671

|

| 3 |

+

size 58274418

|

precompiled/qualcomm-qcs8550-proxy/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:19e7157a380771522c249b345c8f84c391f7d826734694b99b5f6725e3161600

|

| 3 |

+

size 39882752

|

precompiled/qualcomm-qcs8550-proxy/InternImage_w8a8.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c3e98c3b1492949f2c1a713f03d0b28dcf169af14c760bc962f9d203e4d171f0

|

| 3 |

+

size 29656729

|

precompiled/qualcomm-qcs8550-proxy/tool-versions.yaml

ADDED

|

@@ -0,0 +1,4 @@

| 1 |

+

tool_versions:

|

| 2 |

+

precompiled_qnn_onnx:

|

| 3 |

+

qairt: 2.37.1.250807093845_124904

|

| 4 |

+

onnx_runtime: 1.23.0

|

precompiled/qualcomm-qcs9075-proxy/InternImage_float.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c089a9745c744b83a80479c3c318eb015b35484ceef16eb26c4d5e6767fbe57e

|

| 3 |

+

size 69509120

|

precompiled/qualcomm-qcs9075-proxy/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:dd737f1586503a3d139e7e3eca55692ebbd99dd677c25d1dc731f006c04dea5c

|

| 3 |

+

size 39882752

|

precompiled/qualcomm-qcs9075-proxy/tool-versions.yaml

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

tool_versions:

|

| 2 |

+

qnn_context_binary:

|

| 3 |

+

qairt: 2.41.0.251128145156_191518-auto

|

precompiled/qualcomm-snapdragon-7gen4/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:02275d583957c57a91d39a6683bfb228823bebb1835f6a295e7bfb9d342dfbd3

|

| 3 |

+

size 40075264

|

precompiled/qualcomm-snapdragon-7gen4/InternImage_w8a8.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9a545167f7cc6523294d6fd0da33c2bed649e49c89344e21e54f6e2531395e69

|

| 3 |

+

size 29842048

|

precompiled/qualcomm-snapdragon-7gen4/tool-versions.yaml

ADDED

|

@@ -0,0 +1,4 @@

| 1 |

+

tool_versions:

|

| 2 |

+

precompiled_qnn_onnx:

|

| 3 |

+

qairt: 2.37.1.250807093845_124904

|

| 4 |

+

onnx_runtime: 1.23.0

|

precompiled/qualcomm-snapdragon-8-elite-for-galaxy/InternImage_float.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:132239744d865c26a3b8ae5c03e130faea0a4e5d7371b4bcf93bb100343fa01f

|

| 3 |

+

size 69332992

|

precompiled/qualcomm-snapdragon-8-elite-for-galaxy/InternImage_float.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8c58a505b18bfd1cc064390d30806499a396461c639a9c094907a17e166d9071

|

| 3 |

+

size 58166902

|

precompiled/qualcomm-snapdragon-8-elite-for-galaxy/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7ef0ac1d61dddc0b40982b04a24292171d725c517f77881c62c8a545acf5e232

|

| 3 |

+

size 39866368

|

precompiled/qualcomm-snapdragon-8-elite-for-galaxy/InternImage_w8a8.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:58bc5dd3af7f24daf35057316c0cd3b5d3eaf49759f21bb2defd8dad42b594d1

|

| 3 |

+

size 29614453

|

precompiled/qualcomm-snapdragon-8-elite-for-galaxy/tool-versions.yaml

ADDED

|

@@ -0,0 +1,4 @@

| 1 |

+

tool_versions:

|

| 2 |

+

precompiled_qnn_onnx:

|

| 3 |

+

qairt: 2.37.1.250807093845_124904

|

| 4 |

+

onnx_runtime: 1.23.0

|

precompiled/qualcomm-snapdragon-8-elite-gen5/InternImage_float.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2495aa4ad100a66642c3840e6fe7c234eaca29f7397ae270a3913812364f1451

|

| 3 |

+

size 69349376

|

precompiled/qualcomm-snapdragon-8-elite-gen5/InternImage_float.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:88325d05772110ee3a99c64a50e76d737724472bf49d55188f40c68f282ba8ba

|

| 3 |

+

size 58132741

|

precompiled/qualcomm-snapdragon-8-elite-gen5/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4dfaacfe7b0b70e6ec4f95288f0437c448019f72231f5b3b21ca8e194371d194

|

| 3 |

+

size 39866368

|

precompiled/qualcomm-snapdragon-8-elite-gen5/InternImage_w8a8.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8409227fff16c5ae99790cbbf7afbf8683f99e851911435d4796f99f2ab0aced

|

| 3 |

+

size 29604166

|

precompiled/qualcomm-snapdragon-8-elite-gen5/tool-versions.yaml

ADDED

|

@@ -0,0 +1,4 @@

| 1 |

+

tool_versions:

|

| 2 |

+

precompiled_qnn_onnx:

|

| 3 |

+

qairt: 2.37.1.250807093845_124904

|

| 4 |

+

onnx_runtime: 1.23.0

|

precompiled/qualcomm-snapdragon-8gen3/InternImage_float.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:47148a2d97082cc84aec6287b5c5ed6e8dd8649b9b055ff67b6f6c8cbf95beb9

|

| 3 |

+

size 69476352

|

precompiled/qualcomm-snapdragon-8gen3/InternImage_float.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7ba949fd0a0e70cbd72bc693b9eda802ef855d14e04787e9eaba103e5765a22f

|

| 3 |

+

size 58273385

|

precompiled/qualcomm-snapdragon-8gen3/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1e832f7ef2714e9d7257db4f1e84599dc6de8d8ec6f27ea7f46e4d5dbfa6cd26

|

| 3 |

+

size 39878656

|

precompiled/qualcomm-snapdragon-8gen3/InternImage_w8a8.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:84a1f6ac9838d6523ba82089d7e4757518c84ebe5456eb58c321a41727df6e10

|

| 3 |

+

size 29673111

|

precompiled/qualcomm-snapdragon-8gen3/tool-versions.yaml

ADDED

|

@@ -0,0 +1,4 @@

| 1 |

+

tool_versions:

|

| 2 |

+

precompiled_qnn_onnx:

|

| 3 |

+

qairt: 2.37.1.250807093845_124904

|

| 4 |

+

onnx_runtime: 1.23.0

|

precompiled/qualcomm-snapdragon-x-elite/InternImage_float.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e0588561b5b43b61f782c6bb2d1efd4dcf94057f2144af669c72f6aa8311958c

|

| 3 |

+

size 69505024

|

precompiled/qualcomm-snapdragon-x-elite/InternImage_float.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:bd597dd7ebfeace8e797b9a71a2381aadd5e4f5c24ab56e71d62aa0595c283bf

|

| 3 |

+

size 58273621

|

precompiled/qualcomm-snapdragon-x-elite/InternImage_w8a8.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:746d6dfe0c56c6af0da97c1ada64b626a516c8ddff9eca8c6dd92aca76d360d4

|

| 3 |

+

size 39882752

|

precompiled/qualcomm-snapdragon-x-elite/InternImage_w8a8.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:cc9bd1c0faa4d1d12284ce58cbbce5c43c7f28027a8e546aba557c8554de3b73

|

| 3 |

+

size 29658836

|

precompiled/qualcomm-snapdragon-x-elite/tool-versions.yaml

ADDED

|

@@ -0,0 +1,4 @@

| 1 |

+

tool_versions:

|

| 2 |

+

precompiled_qnn_onnx:

|

| 3 |

+

qairt: 2.37.1.250807093845_124904

|

| 4 |

+

onnx_runtime: 1.23.0

|