v0.37.0

See https://github.com/quic/ai-hub-models/releases/v0.37.0 for changelog.

- .gitattributes +2 -0

- DEPLOYMENT_MODEL_LICENSE.pdf +3 -0

- LICENSE +2 -0

- README.md +262 -0

- Sequencer2D_float.dlc +3 -0

- Sequencer2D_float.onnx.zip +3 -0

- Sequencer2D_float.tflite +3 -0

- Sequencer2D_w8a8.onnx.zip +3 -0

- Sequencer2D_w8a8.tflite +3 -0

- precompiled/qualcomm-snapdragon-x-elite/Sequencer2D_float.bin +3 -0

- precompiled/qualcomm-snapdragon-x-elite/Sequencer2D_float.onnx.zip +3 -0

- precompiled/qualcomm-snapdragon-x-elite/tool-versions.yaml +3 -0

- tool-versions.yaml +4 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,5 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

DEPLOYMENT_MODEL_LICENSE.pdf filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

Sequencer2D_float.dlc filter=lfs diff=lfs merge=lfs -text

|
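The `.gitattributes` hunk above routes matching paths through Git LFS. As a rough illustration, the sketch below checks file names against only the patterns visible in this hunk. The helper name `tracked_by_lfs` is hypothetical, and `fnmatchcase` on the base name is an approximation of gitattributes matching, not Git's actual implementation. Patterns defined earlier in the file (outside this hunk) are not included, so a `False` result here does not prove a file is stored outside LFS.

```python
from fnmatch import fnmatchcase

# Patterns copied from the hunk above; earlier lines of .gitattributes are not shown here.
LFS_PATTERNS = [
    "*.zip",
    "*.zst",
    "*tfevents*",
    "DEPLOYMENT_MODEL_LICENSE.pdf",
    "Sequencer2D_float.dlc",
]


def tracked_by_lfs(path: str, patterns=LFS_PATTERNS) -> bool:
    """Approximate check: slash-free patterns are matched against the base name."""
    name = path.rsplit("/", 1)[-1]
    return any(fnmatchcase(name, pattern) for pattern in patterns)


for candidate in [
    "Sequencer2D_float.onnx.zip",
    "precompiled/qualcomm-snapdragon-x-elite/Sequencer2D_float.onnx.zip",
    "README.md",
]:
    print(candidate, tracked_by_lfs(candidate))
```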

DEPLOYMENT_MODEL_LICENSE.pdf

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4409f93b0e82531303b3e10f52f1fdfb56467a25f05b7441c6bbd8bb8a64b42c

|

| 3 |

+

size 109629

|
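The three added lines above are a Git LFS pointer: the repository stores only the spec version, a SHA-256 object ID, and the size in bytes, while the real file lives in LFS storage. A minimal sketch of how such a pointer could be parsed and used to check a downloaded file follows. The function names `parse_lfs_pointer` and `matches_pointer` are hypothetical helpers written for this illustration, not part of Git LFS or any Qualcomm tooling.

```python
import hashlib


def parse_lfs_pointer(text: str) -> dict:
    """Split each 'key value' line of a pointer file into a dictionary."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path: str, pointer: dict) -> bool:
    """Compare a local file's size and SHA-256 digest with the pointer."""
    digest = hashlib.sha256()
    size = 0
    with open(path, "rb") as handle:
        # Read in 1 MiB chunks so large model files are not loaded at once.
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
            size += len(chunk)
    return size == pointer["size"] and digest.hexdigest() == pointer["digest"]


pointer = parse_lfs_pointer(
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:4409f93b0e82531303b3e10f52f1fdfb56467a25f05b7441c6bbd8bb8a64b42c\n"
    "size 109629\n"
)
print(pointer["algo"], pointer["size"])
```

Calling `matches_pointer("DEPLOYMENT_MODEL_LICENSE.pdf", pointer)` on a locally downloaded copy would then report whether the file matches the committed pointer.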

LICENSE

ADDED

|

@@ -0,0 +1,2 @@

|

| 1 |

+

The license of the original trained model can be found at https://github.com/facebookresearch/LeViT?tab=Apache-2.0-1-ov-file.

|

| 2 |

+

The license for the deployable model files (.tflite, .onnx, .dlc, .bin, etc.) can be found in DEPLOYMENT_MODEL_LICENSE.pdf.

|

README.md

ADDED

|

@@ -0,0 +1,262 @@

|

| 1 |

+

---

|

| 2 |

+

library_name: pytorch

|

| 3 |

+

license: other

|

| 4 |

+

tags:

|

| 5 |

+

- android

|

| 6 |

+

pipeline_tag: image-classification

|

| 7 |

+

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

# Sequencer2D: Optimized for Mobile Deployment

|

| 13 |

+

## ImageNet classifier and general-purpose backbone

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

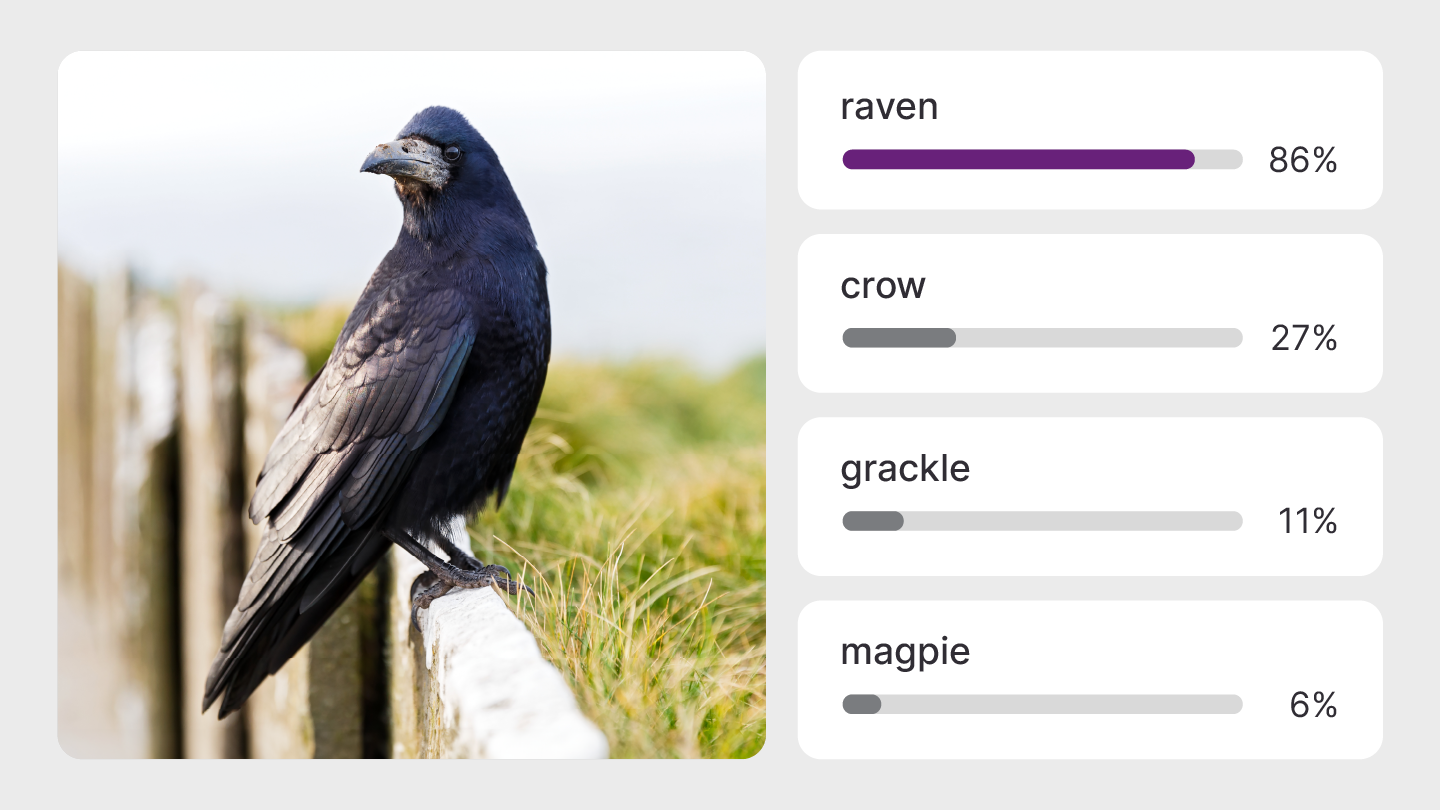

Sequencer2D is an LSTM-based vision model that can classify images from the ImageNet dataset.

|

| 17 |

+

|

| 18 |

+

This model is an implementation of Sequencer2D found [here](https://github.com/okojoalg/sequencer).

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

This repository provides scripts to run Sequencer2D on Qualcomm® devices.

|

| 22 |

+

More details on model performance across various devices can be found

|

| 23 |

+

[here](https://aihub.qualcomm.com/models/sequencer2d).

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

### Model Details

|

| 28 |

+

|

| 29 |

+

- **Model Type:** Image classification

|

| 30 |

+

- **Model Stats:**

|

| 31 |

+

- Model checkpoint: sequencer2d_s

|

| 32 |

+

- Input resolution: 224x224

|

| 33 |

+

- Number of parameters: 27.6M

|

| 34 |

+

- Model size (float): 106 MB

|

| 35 |

+

- Model size (w8a8): 69.1 MB

|

| 36 |

+

|

| 37 |

+

| Model | Precision | Device | Chipset | Target Runtime | Inference Time (ms) | Peak Memory Range (MB) | Primary Compute Unit | Target Model |

|

| 38 |

+

|---|---|---|---|---|---|---|---|---|

|

| 39 |

+

| Sequencer2D | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | TFLITE | 116.219 ms | 0 - 538 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.tflite) |

|

| 40 |

+

| Sequencer2D | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_DLC | 89.881 ms | 1 - 594 MB | NPU | [Sequencer2D.dlc](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.dlc) |

|

| 41 |

+

| Sequencer2D | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | TFLITE | 57.652 ms | 0 - 416 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.tflite) |

|

| 42 |

+

| Sequencer2D | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_DLC | 68.099 ms | 0 - 433 MB | NPU | [Sequencer2D.dlc](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.dlc) |

|

| 43 |

+

| Sequencer2D | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | TFLITE | 59.971 ms | 0 - 80 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.tflite) |

|

| 44 |

+

| Sequencer2D | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_DLC | 42.334 ms | 0 - 89 MB | NPU | [Sequencer2D.dlc](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.dlc) |

|

| 45 |

+

| Sequencer2D | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | ONNX | 61.945 ms | 0 - 50 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.onnx.zip) |

|

| 46 |

+

| Sequencer2D | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | TFLITE | 61.928 ms | 0 - 539 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.tflite) |

|

| 47 |

+

| Sequencer2D | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_DLC | 44.001 ms | 0 - 587 MB | NPU | [Sequencer2D.dlc](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.dlc) |

|

| 48 |

+

| Sequencer2D | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | TFLITE | 60.11 ms | 0 - 76 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.tflite) |

|

| 49 |

+

| Sequencer2D | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | QNN_DLC | 41.816 ms | 0 - 87 MB | NPU | [Sequencer2D.dlc](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.dlc) |

|

| 50 |

+

| Sequencer2D | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | ONNX | 63.251 ms | 0 - 38 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.onnx.zip) |

|

| 51 |

+

| Sequencer2D | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | TFLITE | 44.179 ms | 0 - 544 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.tflite) |

|

| 52 |

+

| Sequencer2D | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_DLC | 30.463 ms | 1 - 1026 MB | NPU | [Sequencer2D.dlc](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.dlc) |

|

| 53 |

+

| Sequencer2D | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | ONNX | 53.55 ms | 7 - 32 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.onnx.zip) |

|

| 54 |

+

| Sequencer2D | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | TFLITE | 45.568 ms | 0 - 547 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.tflite) |

|

| 55 |

+

| Sequencer2D | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | QNN_DLC | 22.335 ms | 1 - 582 MB | NPU | [Sequencer2D.dlc](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.dlc) |

|

| 56 |

+

| Sequencer2D | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | ONNX | 54.391 ms | 8 - 32 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.onnx.zip) |

|

| 57 |

+

| Sequencer2D | float | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_DLC | 44.533 ms | 469 - 469 MB | NPU | [Sequencer2D.dlc](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.dlc) |

|

| 58 |

+

| Sequencer2D | float | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 25.592 ms | 3 - 3 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D.onnx.zip) |

|

| 59 |

+

| Sequencer2D | w8a8 | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | TFLITE | 82.161 ms | 0 - 491 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.tflite) |

|

| 60 |

+

| Sequencer2D | w8a8 | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | TFLITE | 40.075 ms | 0 - 387 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.tflite) |

|

| 61 |

+

| Sequencer2D | w8a8 | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | TFLITE | 43.482 ms | 0 - 65 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.tflite) |

|

| 62 |

+

| Sequencer2D | w8a8 | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | ONNX | 62.202 ms | 127 - 255 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.onnx.zip) |

|

| 63 |

+

| Sequencer2D | w8a8 | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | TFLITE | 45.82 ms | 0 - 489 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.tflite) |

|

| 64 |

+

| Sequencer2D | w8a8 | RB3 Gen 2 (Proxy) | Qualcomm® QCS6490 (Proxy) | ONNX | 261.522 ms | 19 - 40 MB | CPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.onnx.zip) |

|

| 65 |

+

| Sequencer2D | w8a8 | RB5 (Proxy) | Qualcomm® QCS8250 (Proxy) | ONNX | 271.335 ms | 16 - 48 MB | CPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.onnx.zip) |

|

| 66 |

+

| Sequencer2D | w8a8 | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | TFLITE | 43.548 ms | 0 - 63 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.tflite) |

|

| 67 |

+

| Sequencer2D | w8a8 | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | ONNX | 57.675 ms | 123 - 254 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.onnx.zip) |

|

| 68 |

+

| Sequencer2D | w8a8 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | TFLITE | 32.596 ms | 0 - 497 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.tflite) |

|

| 69 |

+

| Sequencer2D | w8a8 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | ONNX | 55.021 ms | 161 - 2609 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.onnx.zip) |

|

| 70 |

+

| Sequencer2D | w8a8 | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | TFLITE | 31.918 ms | 0 - 487 MB | NPU | [Sequencer2D.tflite](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.tflite) |

|

| 71 |

+

| Sequencer2D | w8a8 | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | ONNX | 36.684 ms | 163 - 1165 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.onnx.zip) |

|

| 72 |

+

| Sequencer2D | w8a8 | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 52.765 ms | 232 - 232 MB | NPU | [Sequencer2D.onnx.zip](https://huggingface.co/qualcomm/Sequencer2D/blob/main/Sequencer2D_w8a8.onnx.zip) |

|
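To make the table easier to compare, here is a small sketch that picks the fastest runtime for one device and computes its speedup over another. The latencies are copied from the float-precision Snapdragon 8 Elite QRD rows above. The helper names `fastest_runtime` and `speedup` are hypothetical and exist only for this illustration.

```python
# Float-precision inference times (ms) from the Snapdragon 8 Elite QRD rows above.
latency_ms = {"TFLITE": 45.568, "QNN_DLC": 22.335, "ONNX": 54.391}


def fastest_runtime(latencies: dict) -> str:
    """Return the runtime name with the lowest inference time."""
    return min(latencies, key=latencies.get)


def speedup(latencies: dict, baseline: str, candidate: str) -> float:
    """Ratio of baseline latency to candidate latency (greater than 1 means faster)."""
    return latencies[baseline] / latencies[candidate]


best = fastest_runtime(latency_ms)
print(best, round(speedup(latency_ms, "TFLITE", best), 2))
```

The same two helpers can be applied to any other device by swapping in that device's rows.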

| 73 |

+

|

| 74 |

+

|

| 75 |

+

|

| 76 |

+

|

| 77 |

+

## Installation

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

Install the package via pip:

|

| 81 |

+

```bash

|

| 82 |

+

pip install "qai-hub-models[sequencer2d]"

|

| 83 |

+

```

|

| 84 |

+

|

| 85 |

+

|

| 86 |

+

## Configure Qualcomm® AI Hub to run this model on a cloud-hosted device

|

| 87 |

+

|

| 88 |

+

Sign in to [Qualcomm® AI Hub](https://app.aihub.qualcomm.com/) with your

|

| 89 |

+

Qualcomm® ID. Once signed in, navigate to `Account -> Settings -> API Token`.

|

| 90 |

+

|

| 91 |

+

With this API token, you can configure your client to run models on

|

| 92 |

+

cloud-hosted devices.

|

| 93 |

+

```bash

|

| 94 |

+

qai-hub configure --api_token API_TOKEN

|

| 95 |

+

```

|

| 96 |

+

Navigate to [docs](https://app.aihub.qualcomm.com/docs/) for more information.

|

| 97 |

+

|

| 98 |

+

|

| 99 |

+

|

| 100 |

+

## Demo off target

|

| 101 |

+

|

| 102 |

+

The package contains a simple end-to-end demo that downloads pre-trained

|

| 103 |

+

weights and runs this model on a sample input.

|

| 104 |

+

|

| 105 |

+

```bash

|

| 106 |

+

python -m qai_hub_models.models.sequencer2d.demo

|

| 107 |

+

```

|

| 108 |

+

|

| 109 |

+

The above demo runs a reference implementation of pre-processing, model

|

| 110 |

+

inference, and post-processing.

|

| 111 |

+

|

| 112 |

+

**NOTE**: If you are running in a Jupyter Notebook or Google Colab-like

|

| 113 |

+

environment, please add the following to your cell (instead of the above).

|

| 114 |

+

```

|

| 115 |

+

%run -m qai_hub_models.models.sequencer2d.demo

|

| 116 |

+

```

|

| 117 |

+

|

| 118 |

+

|

| 119 |

+

### Run model on a cloud-hosted device

|

| 120 |

+

|

| 121 |

+

In addition to the demo, you can also run the model on a cloud-hosted Qualcomm®

|

| 122 |

+

device. This script does the following:

|

| 123 |

+

* Runs a performance check on a cloud-hosted device.

|

| 124 |

+

* Downloads compiled assets that can be deployed on-device for Android.

|

| 125 |

+

* Checks accuracy between PyTorch and on-device outputs.

|

| 126 |

+

|

| 127 |

+

```bash

|

| 128 |

+

python -m qai_hub_models.models.sequencer2d.export

|

| 129 |

+

```

|

| 130 |

+

|

| 131 |

+

|

| 132 |

+

|

| 133 |

+

## How does this work?

|

| 134 |

+

|

| 135 |

+

This [export script](https://aihub.qualcomm.com/models/sequencer2d/qai_hub_models/models/Sequencer2D/export.py)

|

| 136 |

+

leverages [Qualcomm® AI Hub](https://aihub.qualcomm.com/) to optimize, validate, and deploy this model

|

| 137 |

+

on-device. Let's go through each step below in detail:

|

| 138 |

+

|

| 139 |

+

Step 1: **Compile model for on-device deployment**

|

| 140 |

+

|

| 141 |

+

To compile a PyTorch model for on-device deployment, we first trace the model

|

| 142 |

+

in memory using `torch.jit.trace` and then call the `submit_compile_job` API.

|

| 143 |

+

|

| 144 |

+

```python

|

| 145 |

+

import torch

|

| 146 |

+

|

| 147 |

+

import qai_hub as hub

|

| 148 |

+

from qai_hub_models.models.sequencer2d import Model

|

| 149 |

+

|

| 150 |

+

# Load the model

|

| 151 |

+

torch_model = Model.from_pretrained()

|

| 152 |

+

|

| 153 |

+

# Device

|

| 154 |

+

device = hub.Device("Samsung Galaxy S24")

|

| 155 |

+

|

| 156 |

+

# Trace model

|

| 157 |

+

input_shape = torch_model.get_input_spec()

|

| 158 |

+

sample_inputs = torch_model.sample_inputs()

|

| 159 |

+

|

| 160 |

+

pt_model = torch.jit.trace(torch_model, [torch.tensor(data[0]) for _, data in sample_inputs.items()])

|

| 161 |

+

|

| 162 |

+

# Compile model on a specific device

|

| 163 |

+

compile_job = hub.submit_compile_job(

|

| 164 |

+

model=pt_model,

|

| 165 |

+

device=device,

|

| 166 |

+

input_specs=torch_model.get_input_spec(),

|

| 167 |

+

)

|

| 168 |

+

|

| 169 |

+

# Get target model to run on-device

|

| 170 |

+

target_model = compile_job.get_target_model()

|

| 171 |

+

|

| 172 |

+

```

|

| 173 |

+

|

| 174 |

+

|

| 175 |

+

Step 2: **Performance profiling on cloud-hosted device**

|

| 176 |

+

|

| 177 |

+

After compiling the model in step 1, it can be profiled on-device using the

|

| 178 |

+

`target_model`. Note that this script runs the model on a device automatically

|

| 179 |

+

provisioned in the cloud. Once the job is submitted, you can navigate to a

|

| 180 |

+

provided job URL to view a variety of on-device performance metrics.

|

| 181 |

+

```python

|

| 182 |

+

profile_job = hub.submit_profile_job(

|

| 183 |

+

model=target_model,

|

| 184 |

+

device=device,

|

| 185 |

+

)

|

| 186 |

+

|

| 187 |

+

```

|

| 188 |

+

|

| 189 |

+

Step 3: **Verify on-device accuracy**

|

| 190 |

+

|

| 191 |

+

To verify the accuracy of the model on-device, you can run on-device inference

|

| 192 |

+

on sample input data on the same cloud-hosted device.

|

| 193 |

+

```python

|

| 194 |

+

input_data = torch_model.sample_inputs()

|

| 195 |

+

inference_job = hub.submit_inference_job(

|

| 196 |

+

model=target_model,

|

| 197 |

+

device=device,

|

| 198 |

+

inputs=input_data,

|

| 199 |

+

)

|

| 200 |

+

on_device_output = inference_job.download_output_data()

|

| 201 |

+

|

| 202 |

+

```

|

| 203 |

+

With the output of the model, you can compute metrics such as PSNR or relative error, or

|

| 204 |

+

spot-check the output against the expected output.

|
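The comparison described above can be sketched in plain Python. This is a minimal illustration, assuming the PyTorch reference output and the on-device output have each been flattened into a list of floats of equal length. The helper names `psnr` and `max_relative_error` are hypothetical and are not part of `qai_hub`. The peak value used for PSNR defaults to the reference's value range, which is one common convention among several.

```python
import math


def psnr(reference, candidate, data_range=None):
    """Peak signal-to-noise ratio in dB between two equal-length sequences."""
    if len(reference) != len(candidate):
        raise ValueError("inputs must have the same length")
    mse = sum((r - c) ** 2 for r, c in zip(reference, candidate)) / len(reference)
    if mse == 0:
        return math.inf  # identical outputs
    if data_range is None:
        # Assumption: use the spread of the reference values as the peak.
        data_range = max(reference) - min(reference)
    if data_range <= 0:
        raise ValueError("data_range must be positive")
    return 10 * math.log10(data_range ** 2 / mse)


def max_relative_error(reference, candidate, eps=1e-12):
    """Largest element-wise |r - c| / |r|, with eps guarding division by zero."""
    return max(abs(r - c) / max(abs(r), eps) for r, c in zip(reference, candidate))


reference = [0.0, 1.0]
candidate = [0.0, 0.9]
print(psnr(reference, candidate), max_relative_error(reference, candidate))
```

A higher PSNR and a lower relative error both indicate closer agreement between the two outputs; what counts as acceptable depends on the application.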

| 205 |

+

|

| 206 |

+

**Note**: On-device profiling and inference require access to Qualcomm®

|

| 207 |

+

AI Hub. [Sign up for access](https://myaccount.qualcomm.com/signup).

|

| 208 |

+

|

| 209 |

+

|

| 210 |

+

|

| 211 |

+

## Run demo on a cloud-hosted device

|

| 212 |

+

|

| 213 |

+

You can also run the demo on-device.

|

| 214 |

+

|

| 215 |

+

```bash

|

| 216 |

+

python -m qai_hub_models.models.sequencer2d.demo --eval-mode on-device

|

| 217 |

+

```

|

| 218 |

+

|

| 219 |

+

**NOTE**: If you are running in a Jupyter Notebook or Google Colab-like

|

| 220 |

+

environment, please add the following to your cell (instead of the above).

|

| 221 |

+

```

|

| 222 |

+

%run -m qai_hub_models.models.sequencer2d.demo -- --eval-mode on-device

|

| 223 |

+

```

|

| 224 |

+

|

| 225 |

+

|

| 226 |

+

## Deploying compiled model to Android

|

| 227 |

+

|

| 228 |

+

|

| 229 |

+

The models can be deployed using multiple runtimes:

|

| 230 |

+

- TensorFlow Lite (`.tflite` export): [This

|

| 231 |

+

tutorial](https://www.tensorflow.org/lite/android/quickstart) provides a

|

| 232 |

+

guide to deploy the .tflite model in an Android application.

|

| 233 |

+

|

| 234 |

+

|

| 235 |

+

- QNN (`.so` export): This [sample

|

| 236 |

+

app](https://docs.qualcomm.com/bundle/publicresource/topics/80-63442-50/sample_app.html)

|

| 237 |

+

provides instructions on how to use the `.so` shared library in an Android application.

|

| 238 |

+

|

| 239 |

+

|

| 240 |

+

## View on Qualcomm® AI Hub

|

| 241 |

+

Get more details on Sequencer2D's performance across various devices [here](https://aihub.qualcomm.com/models/sequencer2d).

|

| 242 |

+

Explore all available models on [Qualcomm® AI Hub](https://aihub.qualcomm.com/).

|

| 243 |

+

|

| 244 |

+

|

| 245 |

+

## License

|

| 246 |

+

* The license for the original implementation of Sequencer2D can be found

|

| 247 |

+

[here](https://github.com/facebookresearch/LeViT?tab=Apache-2.0-1-ov-file).

|

| 248 |

+

* The license for the compiled assets for on-device deployment can be found [here](https://qaihub-public-assets.s3.us-west-2.amazonaws.com/qai-hub-models/Qualcomm+AI+Hub+Proprietary+License.pdf)

|

| 249 |

+

|

| 250 |

+

|

| 251 |

+

|

| 252 |

+

## References

|

| 253 |

+

* [Sequencer: Deep LSTM for Image Classification](https://arxiv.org/abs/2205.01972)

|

| 254 |

+

* [Source Model Implementation](https://github.com/okojoalg/sequencer)

|

| 255 |

+

|

| 256 |

+

|

| 257 |

+

|

| 258 |

+

## Community

|

| 259 |

+

* Join [our AI Hub Slack community](https://aihub.qualcomm.com/community/slack) to collaborate, post questions and learn more about on-device AI.

|

| 260 |

+

* For questions or feedback, please [reach out to us](mailto:ai-hub-support@qti.qualcomm.com).

|

| 261 |

+

|

| 262 |

+

|

Sequencer2D_float.dlc

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ff97dc2a87bf0dd53a43998215d2339cd6657c055d789cc8eefd2a4e6ade71a5

|

| 3 |

+

size 123433100

|

Sequencer2D_float.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:aaf3cf9a79f086c8931d81e6c91512bf9c242d86877bb221ccdd70b51ba76a6d

|

| 3 |

+

size 102956250

|

Sequencer2D_float.tflite

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c599d8d8bfcd01d80c2fbd42075148f62e23700ade6ecd317b5bb7cf1d9c954f

|

| 3 |

+

size 114050624

|

Sequencer2D_w8a8.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c10c83e34090c0dd145b42a34a72657acc8ba1a3c74dc987313ad85c15d7e93e

|

| 3 |

+

size 72442797

|

Sequencer2D_w8a8.tflite

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:90d42a4992e36950dd4ac68bc31d4693f56dca6c29fcd8403d529fde53454fe2

|

| 3 |

+

size 65551016

|

precompiled/qualcomm-snapdragon-x-elite/Sequencer2D_float.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:cf9ddab8109edf3ee71d5483ffd8307591799b3cdf206507589cf07c743d4fae

|

| 3 |

+

size 66490368

|

precompiled/qualcomm-snapdragon-x-elite/Sequencer2D_float.onnx.zip

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:41523ce15d512408ad21b3744be17f5207f24d45f23d74d4f719a563c6d71fb5

|

| 3 |

+

size 54937161

|

precompiled/qualcomm-snapdragon-x-elite/tool-versions.yaml

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

tool_versions:

|

| 2 |

+

precompiled_qnn_onnx:

|

| 3 |

+

qairt: 2.36.4.250725200057_123280

|

tool-versions.yaml

ADDED

|

@@ -0,0 +1,4 @@

|

| 1 |

+

tool_versions:

|

| 2 |

+

onnx:

|

| 3 |

+

qairt: 2.36.4.250725200057_123280

|

| 4 |

+

onnx_runtime: 1.22.2

|