---

language:

- en

license: apache-2.0

library_name: transformers

tags:

- tokenizer

- rx-codex

- medical-ai

- code-tokenizer

- chat-ai

pipeline_tag: text-generation

---

# Rx Codex Tokenizer: Professional Tokenizer for Modern AI

## Overview

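Rx Codex Tokenizer is a BPE tokenizer with a 128K vocabulary (128,256 tokens) aimed at English, code, and medical text. In the benchmark reported below it scores 84.51/100, against 67.89 for GPT-2 and 67.77 for DeepSeek. The margin comes from the Special Tokens category; speed and compression scores are nearly identical across the three tokenizers.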

**Developed by Rx Founder & CEO of Rx Codex AI**

## Benchmark Results

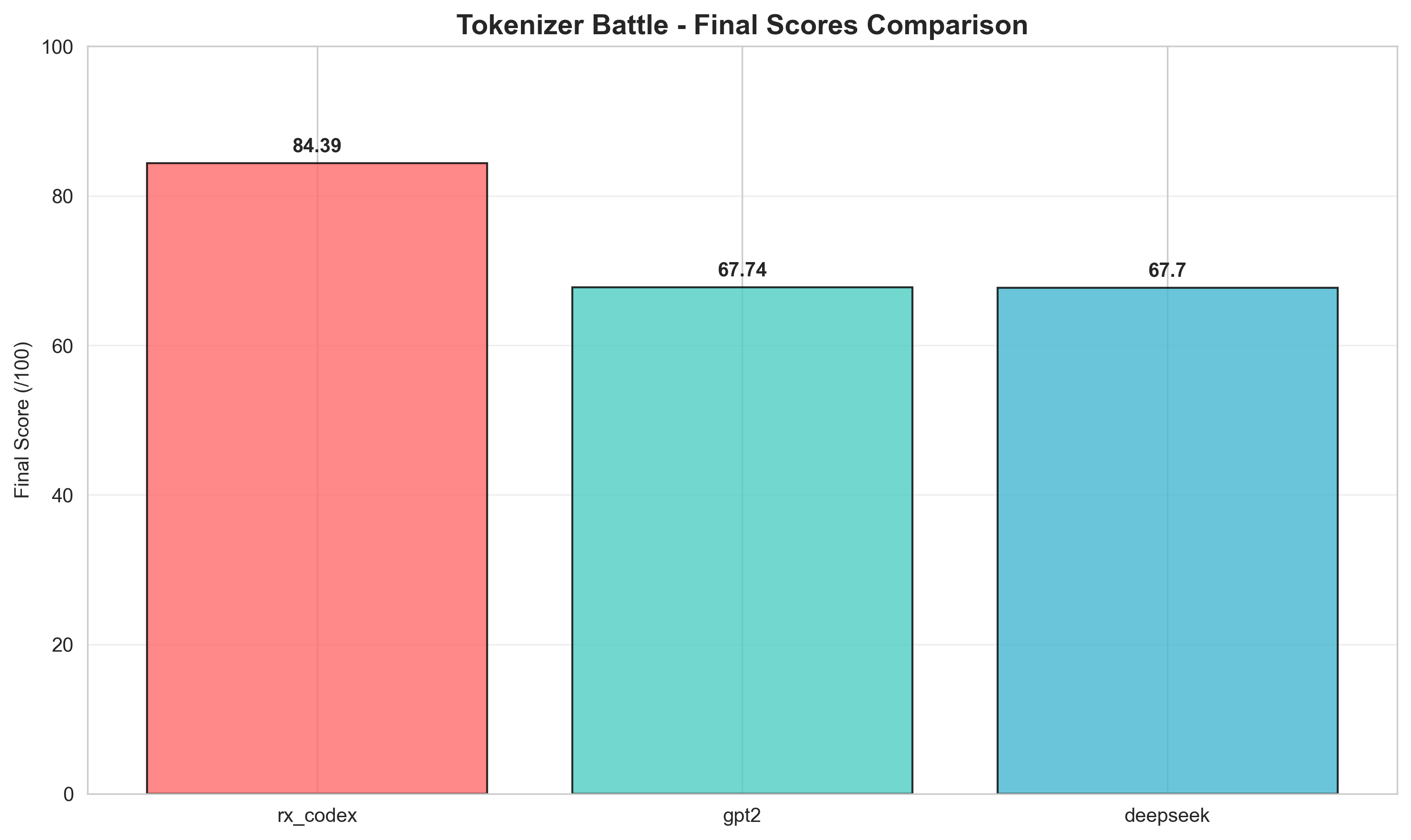

### Tokenizer Battle Royale - Final Scores

| Tokenizer | Final Score | Speed | Compression | Special Tokens | Chat Support |
|-----------|-------------|-------|-------------|----------------|--------------|
| 🥇 **Rx Codex** | **84.51/100** | 24.84/25 | 35.0/35 | 16.67/20 | 15/15 |
| 🥈 GPT-2 | 67.89/100 | 24.89/25 | 35.0/35 | 0.0/20 | 15/15 |
| 🥉 DeepSeek | 67.77/100 | 24.77/25 | 35.0/35 | 0.0/20 | 15/15 |
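As a quick arithmetic check on the table, the sketch below sums the four component columns for each row and compares the total with the reported final score. The numbers are copied from the table; the variable names are illustrative. The four component maxima add up to 95 rather than 100, and each row's components sum to 7.00 more than its final score. The card does not state how the final score is derived, so the sketch only reports the gap.

```python
# Scores copied from the table: (final score, [speed, compression, special tokens, chat]).
rows = {
    "Rx Codex": (84.51, [24.84, 35.0, 16.67, 15.0]),
    "GPT-2": (67.89, [24.89, 35.0, 0.0, 15.0]),
    "DeepSeek": (67.77, [24.77, 35.0, 0.0, 15.0]),
}

# Maximum points per component column, as shown in the table cells.
max_points = [25, 35, 20, 15]

gaps = {}
for name, (final, parts) in rows.items():
    component_sum = round(sum(parts), 2)
    gaps[name] = round(component_sum - final, 2)
    print(f"{name}: components={component_sum:.2f} final={final:.2f} gap={gaps[name]:.2f}")

print("sum of component maxima:", sum(max_points))
```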

Benchmark charts: Final Scores Comparison, Speed Analysis, Compression Efficiency, Multi-dimensional Analysis, Token Count Efficiency.

## Key Features

- **128K Vocabulary** - 128,256 tokens covering English, code, and medical text

- **Byte-Level BPE** - No UNK tokens; any input text can be encoded

- **Medical Text Optimized** - Aimed at healthcare AI applications

- **Code-Aware** - Handles programming-language text

- **Chat-Ready Tokens** - Built-in special tokens for conversation formats
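The "no UNK tokens" property follows from using bytes as the base alphabet: every string has a UTF-8 encoding, so every input maps onto 256 base symbols before any merges are applied. The sketch below illustrates that general idea only. It is not this tokenizer's implementation, and the helper names and the single merge rule are hypothetical.

```python
def to_byte_ids(text: str) -> list[int]:
    """Map text to its UTF-8 bytes; every id lies in the 256-symbol base alphabet."""
    return list(text.encode("utf-8"))


def from_byte_ids(ids: list[int]) -> str:
    """Invert to_byte_ids for ids that are all plain bytes (0..255)."""
    return bytes(ids).decode("utf-8")


def apply_merge(ids: list[int], pair: tuple[int, int], new_id: int) -> list[int]:
    """Replace each non-overlapping occurrence of `pair` with `new_id` (one BPE merge)."""
    out, i = [], 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out


# Accented letters, Greek, and emoji all reduce to bytes, so nothing is unknown.
sample = "Rx: 5 mg of naïve β-blocker 💊"
ids = to_byte_ids(sample)
round_trip = from_byte_ids(ids)

# Hypothetical merge rule: fuse the bytes for "m" and "g" into a new id 256.
merged = apply_merge(ids, (ord("m"), ord("g")), 256)
print(len(ids), "byte ids ->", len(merged), "ids after one merge")
```

A trained BPE vocabulary is a long ordered list of such merges; the byte base guarantees a fallback whenever no merge applies.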

## Technical Specifications

- **Vocabulary Size**: 128,256 tokens

- **Special Tokens**: 9 custom tokens

- **Model Type**: BPE with byte fallback

- **Training Data**: OpenOrca, 5 GB of English text

- **Average Speed**: 0.63 ms per tokenization call

- **Compression Ratio**: 4.18 characters per token
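The speed and compression figures above can be measured for any tokenizer with a few lines of code. The sketch below is a minimal version under stated assumptions: the function names are illustrative, the stand-in `whitespace_encode` is not the Rx Codex tokenizer, and the benchmark corpus and hardware behind the published numbers are not specified in this card, so results will differ.

```python
import time
from typing import Callable, Sequence


def chars_per_token(encode: Callable[[str], Sequence], texts: Sequence[str]) -> float:
    """Compression ratio: total characters divided by total tokens produced."""
    total_chars = sum(len(t) for t in texts)
    total_tokens = sum(len(encode(t)) for t in texts)
    return total_chars / total_tokens


def mean_latency_ms(encode: Callable[[str], Sequence], texts: Sequence[str],
                    repeats: int = 100) -> float:
    """Average wall-clock time per encode call, in milliseconds."""
    start = time.perf_counter()
    for _ in range(repeats):
        for t in texts:
            encode(t)
    elapsed = time.perf_counter() - start
    return 1000.0 * elapsed / (repeats * len(texts))


def whitespace_encode(text: str) -> list[str]:
    """Stand-in encoder so the sketch runs without downloading anything."""
    return text.split()


texts = ["hello world", "patient denies chest pain"]
ratio = chars_per_token(whitespace_encode, texts)
latency = mean_latency_ms(whitespace_encode, texts)
print(f"{ratio:.2f} chars/token, {latency:.4f} ms per call")
```

To measure this tokenizer instead of the stand-in, load it with `transformers.AutoTokenizer.from_pretrained` using the model's Hub repository id (not given in this card) and pass `lambda t: tokenizer.encode(t, add_special_tokens=False)` as `encode`.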

## Use Cases

- **Chat AI Systems** - Built-in chat token support

- **Medical AI** - Optimized for healthcare terminology

- **Code Generation** - Excellent programming language handling

- **Academic Research** - Efficient with complex text

## License

Apache 2.0

## Author

**Rx Founder & CEO**

Rx Codex AI