Upload README.md with huggingface_hub

README.md

@@ -67,6 +67,12 @@ tags:

| 67 |

|

| 68 |

## Quantized GGUF Models

|

| 69 |

|

| 70 |

+

Using formats of different precisions will yield results of varying quality.

|

| 71 |

+

|

| 72 |

+

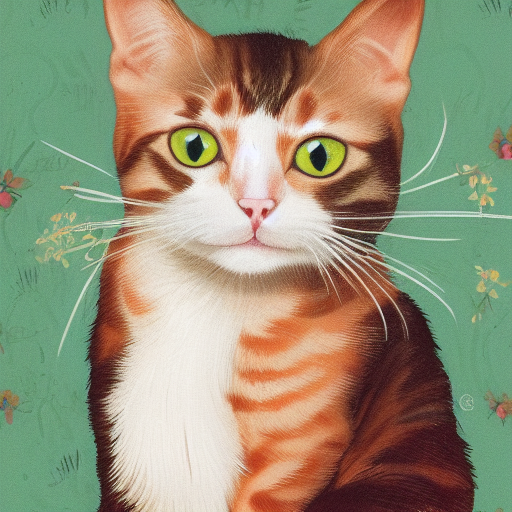

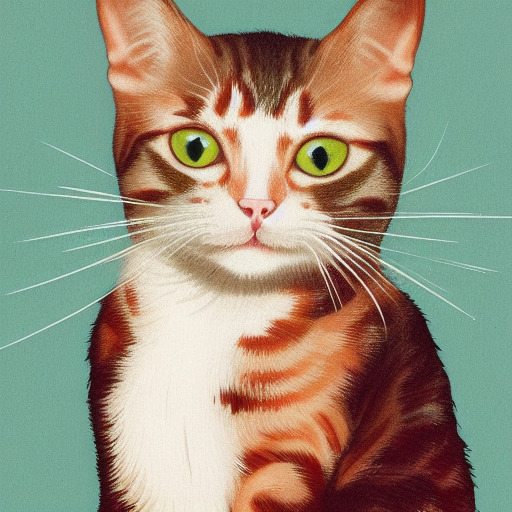

| f32 | f16 |q8_0 |q5_0 |q5_1 |q4_0 |q4_1 |

|

| 73 |

+

| ---- |---- |---- |---- |---- |---- |---- |

|

| 74 |

+

|  | | | | | | |

|

| 75 |

+

|

| 76 |

| Name | Quant method | Bits | Size | Use case |

|

| 77 |

| ---- | ---- | ---- | ---- | ----- |

|

| 78 |

| [v2-1_768-nonema-pruned-Q4_0.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q4_0.gguf) | Q4_0 | 2 | 1.70 GB | |

|