Upload Spark-Somya-TTS model

- .gitattributes +1 -0

- BiCodec/config.yaml +60 -0

- BiCodec/model.safetensors +3 -0

- README.md +148 -3

- added_tokens.json +0 -0

- chat_template.jinja +54 -0

- config.json +55 -0

- config.yaml +7 -0

- generation_config.json +9 -0

- merges.txt +0 -0

- model.safetensors +3 -0

- special_tokens_map.json +31 -0

- tokenizer.json +3 -0

- tokenizer_config.json +0 -0

- vocab.json +0 -0

- wav2vec2-large-xlsr-53/README.md +29 -0

- wav2vec2-large-xlsr-53/config.json +83 -0

- wav2vec2-large-xlsr-53/preprocessor_config.json +9 -0

- wav2vec2-large-xlsr-53/pytorch_model.bin +3 -0

.gitattributes

CHANGED

```diff
@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+tokenizer.json filter=lfs diff=lfs merge=lfs -text
```

BiCodec/config.yaml

ADDED

```diff
@@ -0,0 +1,60 @@
+audio_tokenizer:
+  mel_params:
+    sample_rate: 16000
+    n_fft: 1024
+    win_length: 640
+    hop_length: 320
+    mel_fmin: 10
+    mel_fmax: null
+    num_mels: 128
+
+  encoder:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 12
+    out_channels: 1024
+    sample_ratios: [1,1]
+
+  decoder:
+    input_channel: 1024
+    channels: 1536
+    rates: [8, 5, 4, 2]
+    kernel_sizes: [16,11,8,4]
+
+  quantizer:
+    input_dim: 1024
+    codebook_size: 8192
+    codebook_dim: 8
+    commitment: 0.25
+    codebook_loss_weight: 2.0
+    use_l2_normlize: True
+    threshold_ema_dead_code: 0.2
+
+  speaker_encoder:
+    input_dim: 128
+    out_dim: 1024
+    latent_dim: 128
+    token_num: 32
+    fsq_levels: [4, 4, 4, 4, 4, 4]
+    fsq_num_quantizers: 1
+
+  prenet:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 12
+    out_channels: 1024
+    condition_dim: 1024
+    sample_ratios: [1,1]
+    use_tanh_at_final: False
+
+  postnet:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 6
+    out_channels: 1024
+    use_tanh_at_final: False
+
+
```
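The `mel_params`, `decoder.rates` and `speaker_encoder` values in this config fit together arithmetically. The sketch below only restates numbers from the file and works through that reading:

- At `sample_rate: 16000`, a `hop_length` of 320 samples gives 50 latent frames per second.
- The decoder `rates` multiply to the same 320. My assumption is that these are the upsampling factors mapping one latent frame back to 320 waveform samples.
- Six FSQ levels of 4 give 4096 possible values for each of the 32 global tokens.

The helper functions are illustrative and are not part of Spark-TTS.

```python
from math import prod

# Values copied from BiCodec/config.yaml in this commit.
SAMPLE_RATE = 16000
HOP_LENGTH = 320
WIN_LENGTH = 640
DECODER_RATES = [8, 5, 4, 2]
SEMANTIC_CODEBOOK_SIZE = 8192
FSQ_LEVELS = [4, 4, 4, 4, 4, 4]
GLOBAL_TOKEN_NUM = 32


def frames_per_second(sample_rate: int, hop_length: int) -> float:
    """Latent (semantic-token) frames produced per second of audio."""
    return sample_rate / hop_length


def seconds_for_tokens(num_tokens: int, sample_rate: int = SAMPLE_RATE,
                       hop_length: int = HOP_LENGTH) -> float:
    """Approximate audio duration covered by a run of semantic tokens."""
    return num_tokens * hop_length / sample_rate


fps = frames_per_second(SAMPLE_RATE, HOP_LENGTH)   # 50.0 tokens per second
upsample = prod(DECODER_RATES)                     # 8*5*4*2 = 320 (assumed upsampling factor)
window_ms = 1000 * WIN_LENGTH / SAMPLE_RATE        # 40.0 ms analysis window
global_codebook = prod(FSQ_LEVELS)                 # 4**6 = 4096 values per global token

print(f"semantic tokens per second: {fps}")
print(f"decoder upsampling factor: {upsample} (hop_length = {HOP_LENGTH})")
print(f"mel window: {window_ms} ms")
print(f"global tokens: {GLOBAL_TOKEN_NUM} x {global_codebook} values; "
      f"semantic codebook: {SEMANTIC_CODEBOOK_SIZE}")
# Upper bound on audio length if every one of the README's
# max_new_tokens=2048 generated tokens were a semantic token.
print(f"2048 semantic tokens ~ {seconds_for_tokens(2048)} s of audio")
```

One consequence is that the README's `max_new_tokens=2048` caps a single generation at roughly 41 seconds of speech, and less once control tokens are counted.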

BiCodec/model.safetensors

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec
+size 625518756
```
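`BiCodec/model.safetensors` is committed as a Git LFS pointer. Its three `key value` lines (`version`, `oid`, `size`) stand in for a 625,518,756-byte weight file held in LFS storage.

Below is a minimal sketch for parsing such a pointer and checking a downloaded file against it. `parse_lfs_pointer` and `verify_against_pointer` are hypothetical helpers written for this note, and they use only the standard library.

```python
import hashlib
import os


def parse_lfs_pointer(text: str) -> dict:
    """Parse a Git LFS v1 pointer into {'version', 'oid_algo', 'oid', 'size'}."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "oid_algo": algo,
        "oid": digest,
        "size": int(fields["size"]),
    }


def verify_against_pointer(path: str, pointer: dict, chunk_size: int = 1 << 20) -> bool:
    """Return True if the file at `path` matches the pointer's size and sha256 digest."""
    if pointer["oid_algo"] != "sha256":
        raise ValueError(f"unsupported oid algorithm: {pointer['oid_algo']}")
    if os.path.getsize(path) != pointer["size"]:
        return False
    h = hashlib.sha256()
    with open(path, "rb") as f:
        # Stream in chunks so multi-GB checkpoints need not fit in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest() == pointer["oid"]


BICODEC_POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec
size 625518756
"""

ptr = parse_lfs_pointer(BICODEC_POINTER)
print(ptr["size"])  # 625518756
```

After `snapshot_download`, the resolved file could be checked like this: `verify_against_pointer(os.path.join(model_dir, "BiCodec", "model.safetensors"), ptr)`.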

README.md

CHANGED

```diff
@@ -1,3 +1,148 @@
----
-license:
-
+---
+license: apache-2.0
+language:
+- hi
+- kn
+- ta
+- bn
+- gu
+- te
+- mr
+- en
+tags:
+- text-to-speech
+- tts
+- indic
+- zero-shot
+- voice-cloning
+pipeline_tag: text-to-speech
+---
+
+# Spark-Somya-TTS
+
+Zero-shot voice cloning TTS model for Indic languages, fine-tuned from Spark-TTS-0.5B.
+
+## Supported Languages
+
+- Hindi (hi)
+- Kannada (kn)
+- Tamil (ta)
+- Bengali (bn)
+- Gujarati (gu)
+- Telugu (te)
+- Marathi (mr)
+- English (en)
+
+## Quick Start
+
+### Installation
+
+```bash
+pip install torch transformers huggingface_hub unsloth soundfile librosa numpy
+```
+
+### Download Model
+
+```python
+from huggingface_hub import snapshot_download
+
+model_dir = snapshot_download("somyalab/Spark_somya_TTS")
+```
+
+### Inference
+
+```python
+import torch
+import numpy as np
+import soundfile as sf
+from unsloth import FastLanguageModel
+
+# Load model
+model, tokenizer = FastLanguageModel.from_pretrained(
+    model_name=model_dir,
+    max_seq_length=2048,
+    dtype=torch.bfloat16,
+    load_in_4bit=False,
+)
+FastLanguageModel.for_inference(model)
+
+# Load audio tokenizer (BiCodec)
+import sys
+sys.path.insert(0, model_dir)
+from sparktts.models.audio_tokenizer import BiCodecTokenizer
+
+audio_tokenizer = BiCodecTokenizer(model_dir, "cuda")
+
+# Reference audio for voice cloning
+import librosa
+ref_audio, ref_sr = librosa.load("reference_voice.wav", sr=None)
+ref_global_tokens, _ = audio_tokenizer.tokenize_audio(ref_audio, ref_sr)
+
+# Generate speech
+text = "नमस्ते, यह एक परीक्षण है।"
+
+prompt = "".join([
+    "<|task_tts|>",
+    "<|start_content|>",
+    text,
+    "<|end_content|>",
+    "<|start_global_token|>",
+    ref_global_tokens,
+    "<|end_global_token|>",
+    "<|start_semantic_token|>",
+])
+
+inputs = tokenizer([prompt], return_tensors="pt").to("cuda")
+outputs = model.generate(
+    **inputs,
+    max_new_tokens=2048,
+    do_sample=True,
+    temperature=0.7,
+)
+
+# Decode to audio
+generated_ids = outputs[:, inputs.input_ids.shape[1]:]
+generated_tokens = tokenizer.convert_ids_to_tokens(generated_ids[0].tolist())
+
+# Extract semantic token IDs
+semantic_ids = []
+for t in generated_tokens:
+    if t.startswith("<|bicodec_semantic_") and t.endswith("|>"):
+        semantic_ids.append(int(t[18:-2]))
+
+# Detokenize to waveform
+import re
+global_matches = re.findall(r"<\|bicodec_global_(\d+)\|>", ref_global_tokens)
+global_ids = torch.tensor([int(t) for t in global_matches]).unsqueeze(0).unsqueeze(0)
+semantic_ids = torch.tensor(semantic_ids).unsqueeze(0)
+
+wav = audio_tokenizer.detokenize(
+    global_ids.to("cuda").squeeze(0),
+    semantic_ids.to("cuda"),
+)
+
+sf.write("output.wav", wav, 16000)
+```
+
+## Model Architecture
+
+- Base: Qwen2ForCausalLM (0.5B parameters)
+- Fine-tuned for Indic languages with extended tokenizer
+- Uses BiCodec for audio tokenization/detokenization
+
+## Citation
+
+If you use this model, please cite:
+
+```bibtex
+@misc{spark-somya-tts,
+  title={Spark-Somya-TTS},
+  author={Somya Lab},
+  year={2025},
+  url={https://huggingface.co/somyalab/Spark_somya_TTS}
+}
+```
+
+## License
+
+Apache 2.0
```
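In the README's inference example, `int(t[18:-2])` slices one character too early. The prefix `<|bicodec_semantic_` is 19 characters long, so for a token such as `<|bicodec_semantic_123|>` the slice yields `_123`, and `int("_123")` raises `ValueError`. Matching with a regex avoids hard-coding the offset.

The plain-Python sketch below (no torch) assumes token strings of the form `<|bicodec_semantic_N|>` and `<|bicodec_global_N|>`, as in the README. `format_global_tokens` is a hypothetical helper showing how integer global IDs could be turned into the string form the README's prompt concatenates.

```python
import re

SEMANTIC_RE = re.compile(r"<\|bicodec_semantic_(\d+)\|>")
GLOBAL_RE = re.compile(r"<\|bicodec_global_(\d+)\|>")


def extract_ids(pattern: re.Pattern, text: str) -> list[int]:
    """Return the integer IDs of every token in `text` matching `pattern`."""
    return [int(m) for m in pattern.findall(text)]


def format_global_tokens(ids: list[int]) -> str:
    """Hypothetical helper: integer global IDs -> '<|bicodec_global_N|>...' string."""
    return "".join(f"<|bicodec_global_{i}|>" for i in ids)


# The slice offset must be len(prefix) == 19, not 18.
token = "<|bicodec_semantic_123|>"
prefix = "<|bicodec_semantic_"
print(repr(token[18:-2]))            # '_123'  (int() rejects this)
print(int(token[len(prefix):-2]))    # 123

# Joining the token list lets one regex pick out the semantic IDs and skip other tokens.
generated_tokens = ["<|bicodec_semantic_5|>", "<|bicodec_semantic_8191|>", "<|im_end|>"]
semantic_ids = extract_ids(SEMANTIC_RE, "".join(generated_tokens))
print(semantic_ids)                  # [5, 8191]

ref_global_tokens = format_global_tokens([0, 17, 4095])
print(extract_ids(GLOBAL_RE, ref_global_tokens))  # [0, 17, 4095]
```

The resulting list can then go through `torch.tensor(semantic_ids).unsqueeze(0)` exactly as in the README.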

added_tokens.json

ADDED

The diff for this file is too large to render.

chat_template.jinja

ADDED

```diff
@@ -0,0 +1,54 @@
+{%- if tools %}
+    {{- '<|im_start|>system\n' }}
+    {%- if messages[0]['role'] == 'system' %}
+        {{- messages[0]['content'] }}
+    {%- else %}
+        {{- 'You are Qwen, created by Alibaba Cloud. You are a helpful assistant.' }}
+    {%- endif %}
+    {{- "\n\n# Tools\n\nYou may call one or more functions to assist with the user query.\n\nYou are provided with function signatures within <tools></tools> XML tags:\n<tools>" }}
+    {%- for tool in tools %}
+        {{- "\n" }}
+        {{- tool | tojson }}
+    {%- endfor %}
+    {{- "\n</tools>\n\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\n<tool_call>\n{\"name\": <function-name>, \"arguments\": <args-json-object>}\n</tool_call><|im_end|>\n" }}
+{%- else %}
+    {%- if messages[0]['role'] == 'system' %}
+        {{- '<|im_start|>system\n' + messages[0]['content'] + '<|im_end|>\n' }}
+    {%- else %}
+        {{- '<|im_start|>system\nYou are Qwen, created by Alibaba Cloud. You are a helpful assistant.<|im_end|>\n' }}
+    {%- endif %}
+{%- endif %}
+{%- for message in messages %}
+    {%- if (message.role == "user") or (message.role == "system" and not loop.first) or (message.role == "assistant" and not message.tool_calls) %}
+        {{- '<|im_start|>' + message.role + '\n' + message.content + '<|im_end|>' + '\n' }}
+    {%- elif message.role == "assistant" %}
+        {{- '<|im_start|>' + message.role }}
+        {%- if message.content %}
+            {{- '\n' + message.content }}
+        {%- endif %}
+        {%- for tool_call in message.tool_calls %}
+            {%- if tool_call.function is defined %}
+                {%- set tool_call = tool_call.function %}
+            {%- endif %}
+            {{- '\n<tool_call>\n{"name": "' }}
+            {{- tool_call.name }}
+            {{- '", "arguments": ' }}
+            {{- tool_call.arguments | tojson }}
+            {{- '}\n</tool_call>' }}
+        {%- endfor %}
+        {{- '<|im_end|>\n' }}
+    {%- elif message.role == "tool" %}
+        {%- if (loop.index0 == 0) or (messages[loop.index0 - 1].role != "tool") %}
+            {{- '<|im_start|>user' }}
+        {%- endif %}
+        {{- '\n<tool_response>\n' }}
+        {{- message.content }}
+        {{- '\n</tool_response>' }}
+        {%- if loop.last or (messages[loop.index0 + 1].role != "tool") %}
+            {{- '<|im_end|>\n' }}
+        {%- endif %}
+    {%- endif %}
+{%- endfor %}
+{%- if add_generation_prompt %}
+    {{- '<|im_start|>assistant\n' }}
+{%- endif %}
```
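This appears to be the stock Qwen2.5 ChatML template, with its default "You are Qwen" system prompt. The README's TTS example does not use it; it concatenates control tokens such as `<|task_tts|>` into a raw prompt string instead.

For reference, the sketch below reproduces what the template's no-tools branch emits for plain system, user and assistant text messages. `render_chatml` is a hypothetical re-implementation for illustration only and deliberately does not handle tool calls. In practice, `tokenizer.apply_chat_template` renders the Jinja file itself.

```python
DEFAULT_SYSTEM = "You are Qwen, created by Alibaba Cloud. You are a helpful assistant."


def render_chatml(messages: list[dict], add_generation_prompt: bool = False) -> str:
    """Mimic the template's no-tools branch for system/user/assistant text messages."""
    out = []
    # A leading system message replaces the default system prompt.
    if messages and messages[0]["role"] == "system":
        out.append(f"<|im_start|>system\n{messages[0]['content']}<|im_end|>\n")
        rest = messages[1:]
    else:
        out.append(f"<|im_start|>system\n{DEFAULT_SYSTEM}<|im_end|>\n")
        rest = messages
    for m in rest:
        if m["role"] not in ("user", "assistant", "system"):
            raise ValueError(f"role not handled by this sketch: {m['role']}")
        out.append(f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n")
    if add_generation_prompt:
        out.append("<|im_start|>assistant\n")
    return "".join(out)


print(render_chatml([{"role": "user", "content": "hi"}], add_generation_prompt=True))
```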

config.json

ADDED

```diff
@@ -0,0 +1,55 @@
+{
+  "architectures": [
+    "Qwen2ForCausalLM"
+  ],
+  "attention_dropout": 0.0,
+  "dtype": "bfloat16",
+  "eos_token_id": 151645,
+  "hidden_act": "silu",
+  "hidden_size": 896,
+  "initializer_range": 0.02,
+  "intermediate_size": 4864,
+  "layer_types": [
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention",
+    "full_attention"
+  ],
+  "max_position_embeddings": 32768,
+  "max_window_layers": 21,
+  "model_type": "qwen2",
+  "num_attention_heads": 14,
+  "num_hidden_layers": 24,
+  "num_key_value_heads": 2,
+  "pad_token_id": 151643,
+  "rms_norm_eps": 1e-06,
+  "rope_scaling": null,
+  "rope_theta": 1000000.0,
+  "sliding_window": null,
+  "tie_word_embeddings": true,
+  "transformers_version": "4.57.3",
+  "unsloth_version": "2026.1.2",
+  "use_cache": true,
+  "use_sliding_window": false,
+  "vocab_size": 174868
+}
```
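The attention settings above imply grouped-query attention: 14 query heads share 2 key/value heads. The short sketch below derives three quantities from these values:

- the per-head size,
- the query-to-KV head ratio,
- an estimate of the bf16 KV-cache footprint.

The cache estimate assumes a plain, unquantized cache holding one key and one value tensor per layer. That is an assumption about the runtime, not something this config states.

```python
# Values copied from config.json in this commit.
hidden_size = 896
num_attention_heads = 14
num_key_value_heads = 2
num_hidden_layers = 24
bytes_per_value = 2  # "dtype": "bfloat16"

head_dim = hidden_size // num_attention_heads                     # 64
queries_per_kv_head = num_attention_heads // num_key_value_heads  # 7 query heads per KV head
kv_width = num_key_value_heads * head_dim                         # 128: output width of k_proj / v_proj

# One key and one value vector per layer, per token (assumed plain cache).
kv_bytes_per_token = 2 * num_hidden_layers * kv_width * bytes_per_value


def kv_cache_mib(seq_len: int) -> float:
    """Approximate KV-cache size in MiB for one sequence of `seq_len` tokens."""
    return seq_len * kv_bytes_per_token / 2**20


print(head_dim, queries_per_kv_head, kv_width)  # 64 7 128
print(kv_bytes_per_token)                       # 12288 bytes (12 KiB) per token
print(kv_cache_mib(2048))                       # 24.0, at the README's max_seq_length=2048
```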

config.yaml

ADDED

```diff
@@ -0,0 +1,7 @@
+highpass_cutoff_freq: 40
+sample_rate: 16000
+segment_duration: 2.4 # (s)
+max_val_duration: 12 # (s)
+latent_hop_length: 320
+ref_segment_duration: 6
+volume_normalize: true
```
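At 16 kHz these durations translate directly into sample and frame counts. For example, `ref_segment_duration: 6` with `latent_hop_length: 320` is 96,000 samples, or 300 latent frames.

The sketch below shows one way a reference clip could be cut to that length: round down to a whole number of hops, and repeat audio that is too short. The repeat-then-truncate behavior is an assumption, not something this config specifies. `ref_clip` is a hypothetical helper that works on a plain list of samples.

```python
# Values copied from config.yaml in this commit.
SAMPLE_RATE = 16000
LATENT_HOP_LENGTH = 320
REF_SEGMENT_DURATION = 6      # seconds
SEGMENT_DURATION = 2.4        # seconds
MAX_VAL_DURATION = 12         # seconds


def hop_aligned_length(seconds: float) -> int:
    """Samples in `seconds`, rounded down to a multiple of the latent hop."""
    samples = round(SAMPLE_RATE * seconds)
    return samples // LATENT_HOP_LENGTH * LATENT_HOP_LENGTH


def ref_clip(wav: list[float], seconds: float = REF_SEGMENT_DURATION) -> list[float]:
    """Hypothetical: repeat a short clip until long enough, then truncate to a hop-aligned length."""
    if not wav:
        raise ValueError("empty reference audio")
    target = hop_aligned_length(seconds)
    repeats = target // len(wav) + 1
    return (wav * repeats)[:target]


for name, sec in [("ref_segment", REF_SEGMENT_DURATION),
                  ("segment", SEGMENT_DURATION),
                  ("max_val", MAX_VAL_DURATION)]:
    n = hop_aligned_length(sec)
    print(name, n, "samples,", n // LATENT_HOP_LENGTH, "frames")
# ref_segment 96000 samples, 300 frames
# segment 38400 samples, 120 frames
# max_val 192000 samples, 600 frames
```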

generation_config.json

ADDED

```diff
@@ -0,0 +1,9 @@
+{
+  "_from_model_config": true,
+  "eos_token_id": [
+    151645
+  ],
+  "max_length": 32768,
+  "pad_token_id": 151643,
+  "transformers_version": "4.57.3"
+}
```

merges.txt

ADDED

The diff for this file is too large to render.

model.safetensors

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:c497b93d43965e57a78af5595f4a5a3a85075cb0e20f981e45f99cb7eb8e67f3
+size 1029191992
```
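The size of `model.safetensors` (1,029,191,992 bytes) can be cross-checked against `config.json`. The count below rests on two assumptions, neither stated in this commit:

- the standard Qwen2 layer layout: biases on the q/k/v projections only, a gated SiLU MLP, and two RMSNorms per layer;
- a single stored copy of the tied embedding (`tie_word_embeddings: true`).

Under those assumptions the sketch counts 514,579,840 parameters. At 2 bytes each in bfloat16, that accounts for all but 32,312 bytes of the file, which would plausibly be the safetensors header.

```python
def qwen2_param_count(vocab_size: int, hidden: int, intermediate: int,
                      layers: int, heads: int, kv_heads: int,
                      tied_embeddings: bool = True) -> int:
    """Parameter count for a Qwen2-style decoder (assumed layout, see lead-in)."""
    head_dim = hidden // heads
    kv_width = kv_heads * head_dim
    attn = hidden * hidden + hidden              # q_proj weight + bias
    attn += 2 * (hidden * kv_width + kv_width)   # k_proj and v_proj weights + biases
    attn += hidden * hidden                      # o_proj (no bias)
    mlp = 3 * hidden * intermediate              # gate_proj, up_proj, down_proj
    norms = 2 * hidden                           # input and post-attention RMSNorm
    per_layer = attn + mlp + norms
    embed = vocab_size * hidden
    lm_head = 0 if tied_embeddings else vocab_size * hidden
    return embed + layers * per_layer + hidden + lm_head  # "+ hidden" is the final RMSNorm


# Values from config.json in this commit.
params = qwen2_param_count(vocab_size=174868, hidden=896, intermediate=4864,
                           layers=24, heads=14, kv_heads=2)
file_size = 1029191992                 # "size" in the model.safetensors LFS pointer
header_bytes = file_size - 2 * params  # bf16 = 2 bytes per parameter

print(params)        # 514579840
print(header_bytes)  # 32312
```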

special_tokens_map.json

ADDED

```diff
@@ -0,0 +1,31 @@
+{
+  "additional_special_tokens": [
+    "<|im_start|>",
+    "<|im_end|>",
+    "<|object_ref_start|>",
+    "<|object_ref_end|>",
+    "<|box_start|>",
+    "<|box_end|>",
+    "<|quad_start|>",
+    "<|quad_end|>",
+    "<|vision_start|>",
+    "<|vision_end|>",
+    "<|vision_pad|>",
+    "<|image_pad|>",
+    "<|video_pad|>"
+  ],
+  "eos_token": {
+    "content": "<|im_end|>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": {
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  }
+}
```

tokenizer.json

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:a900c59389404c6b6d1c599ca05a91f9fd6349b57ad2751821e5e049d3df6f72
+size 15962899
```

tokenizer_config.json

ADDED

The diff for this file is too large to render.

vocab.json

ADDED

The diff for this file is too large to render.

wav2vec2-large-xlsr-53/README.md

ADDED

|

@@ -0,0 +1,29 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

language: multilingual

|

| 3 |

+

datasets:

|

| 4 |

+

- common_voice

|

| 5 |

+

tags:

|

| 6 |

+

- speech

|

| 7 |

+

license: apache-2.0

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

# Wav2Vec2-XLSR-53

|

| 11 |

+

|

| 12 |

+

[Facebook's XLSR-Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

|

| 13 |

+

|

| 14 |

+

The base model pretrained on 16kHz sampled speech audio. When using the model make sure that your speech input is also sampled at 16Khz. Note that this model should be fine-tuned on a downstream task, like Automatic Speech Recognition. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for more information.

|

| 15 |

+

|

| 16 |

+

[Paper](https://arxiv.org/abs/2006.13979)

|

| 17 |

+

|

| 18 |

+

Authors: Alexis Conneau, Alexei Baevski, Ronan Collobert, Abdelrahman Mohamed, Michael Auli

|

| 19 |

+

|

| 20 |

+

**Abstract**

|

| 21 |

+

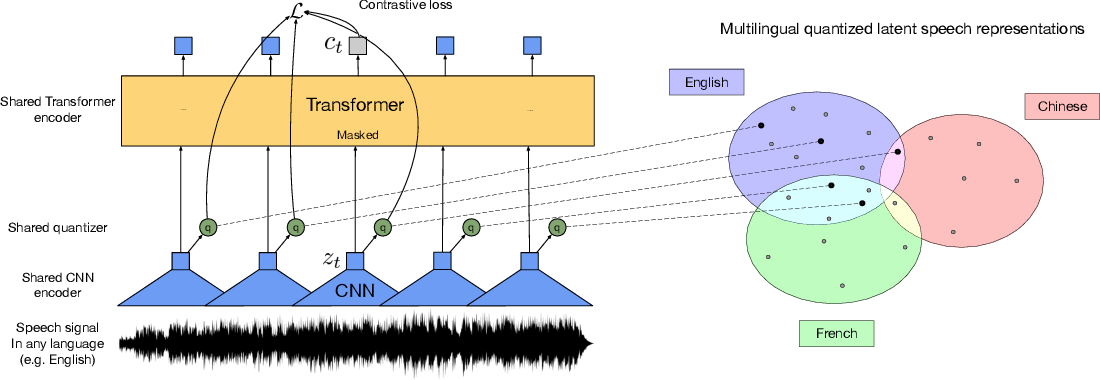

This paper presents XLSR which learns cross-lingual speech representations by pretraining a single model from the raw waveform of speech in multiple languages. We build on wav2vec 2.0 which is trained by solving a contrastive task over masked latent speech representations and jointly learns a quantization of the latents shared across languages. The resulting model is fine-tuned on labeled data and experiments show that cross-lingual pretraining significantly outperforms monolingual pretraining. On the CommonVoice benchmark, XLSR shows a relative phoneme error rate reduction of 72% compared to the best known results. On BABEL, our approach improves word error rate by 16% relative compared to a comparable system. Our approach enables a single multilingual speech recognition model which is competitive to strong individual models. Analysis shows that the latent discrete speech representations are shared across languages with increased sharing for related languages. We hope to catalyze research in low-resource speech understanding by releasing XLSR-53, a large model pretrained in 53 languages.

|

| 22 |

+

|

| 23 |

+

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

|

| 24 |

+

|

| 25 |

+

# Usage

|

| 26 |

+

|

| 27 |

+

See [this notebook](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLSR_Wav2Vec2_on_Turkish_ASR_with_%F0%9F%A4%97_Transformers.ipynb) for more information on how to fine-tune the model.

|

| 28 |

+

|

| 29 |

+

|

wav2vec2-large-xlsr-53/config.json

ADDED

```diff
@@ -0,0 +1,83 @@
+{
+  "activation_dropout": 0.0,
+  "apply_spec_augment": true,
+  "architectures": [
+    "Wav2Vec2ForPreTraining"
+  ],
+  "attention_dropout": 0.1,
+  "bos_token_id": 1,
+  "codevector_dim": 768,
+  "contrastive_logits_temperature": 0.1,
+  "conv_bias": true,
+  "conv_dim": [
+    512,
+    512,
+    512,
+    512,
+    512,
+    512,
+    512
+  ],
+  "conv_kernel": [
+    10,
+    3,
+    3,
+    3,
+    3,
+    2,
+    2
+  ],
+  "conv_stride": [
+    5,
+    2,
+    2,
+    2,
+    2,
+    2,
+    2
+  ],
+  "ctc_loss_reduction": "sum",
+  "ctc_zero_infinity": false,
+  "diversity_loss_weight": 0.1,
+  "do_stable_layer_norm": true,
+  "eos_token_id": 2,
+  "feat_extract_activation": "gelu",
+  "feat_extract_dropout": 0.0,
+  "feat_extract_norm": "layer",
+  "feat_proj_dropout": 0.1,
+  "feat_quantizer_dropout": 0.0,
+  "final_dropout": 0.0,
+  "gradient_checkpointing": false,
+  "hidden_act": "gelu",
+  "hidden_dropout": 0.1,
+  "hidden_size": 1024,
+  "initializer_range": 0.02,
+  "intermediate_size": 4096,
+  "layer_norm_eps": 1e-05,
+  "layerdrop": 0.1,
+  "mask_channel_length": 10,
+  "mask_channel_min_space": 1,
+  "mask_channel_other": 0.0,
+  "mask_channel_prob": 0.0,
+  "mask_channel_selection": "static",
+  "mask_feature_length": 10,
+  "mask_feature_prob": 0.0,
+  "mask_time_length": 10,
+  "mask_time_min_space": 1,
+  "mask_time_other": 0.0,
+  "mask_time_prob": 0.075,
+  "mask_time_selection": "static",
+  "model_type": "wav2vec2",
+  "num_attention_heads": 16,
+  "num_codevector_groups": 2,
+  "num_codevectors_per_group": 320,
+  "num_conv_pos_embedding_groups": 16,
+  "num_conv_pos_embeddings": 128,
+  "num_feat_extract_layers": 7,
+  "num_hidden_layers": 24,
+  "num_negatives": 100,
+  "pad_token_id": 0,
+  "proj_codevector_dim": 768,
+  "transformers_version": "4.7.0.dev0",
+  "vocab_size": 32
+}
```
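The `conv_kernel` and `conv_stride` lists define wav2vec2's convolutional feature encoder. Multiplying the strides gives 320 samples (20 ms at 16 kHz) between output frames, the same hop as BiCodec's `hop_length: 320`. The receptive field works out to 400 samples (25 ms).

The sketch below computes both values, plus the frame count for a given input length. It assumes unpadded 1-D convolutions with dilation 1, so each layer's output length is `floor((L - k) / s) + 1`.

```python
from math import prod

# Values copied from wav2vec2-large-xlsr-53/config.json in this commit.
CONV_KERNEL = [10, 3, 3, 3, 3, 2, 2]
CONV_STRIDE = [5, 2, 2, 2, 2, 2, 2]


def receptive_field(kernels: list[int], strides: list[int]) -> int:
    """Input samples seen by one output frame of a stack of 1-D convolutions."""
    field, jump = 1, 1
    for k, s in zip(kernels, strides):
        field += (k - 1) * jump  # each layer widens the field by (k-1) steps of the current jump
        jump *= s                # spacing between adjacent outputs, in input samples
    return field


def num_frames(num_samples: int, kernels: list[int], strides: list[int]) -> int:
    """Output frames for an unpadded conv stack; 0 if the input is too short."""
    length = num_samples
    for k, s in zip(kernels, strides):
        if length < k:
            return 0
        length = (length - k) // s + 1
    return length


hop = prod(CONV_STRIDE)
print(hop, receptive_field(CONV_KERNEL, CONV_STRIDE))  # 320 400
print(num_frames(16000, CONV_KERNEL, CONV_STRIDE))     # 49 frames for 1 s of 16 kHz audio
```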

wav2vec2-large-xlsr-53/preprocessor_config.json

ADDED

```diff
@@ -0,0 +1,9 @@
+{
+  "do_normalize": true,
+  "feature_extractor_type": "Wav2Vec2FeatureExtractor",
+  "feature_size": 1,
+  "padding_side": "right",
+  "padding_value": 0,
+  "return_attention_mask": true,
+  "sampling_rate": 16000
+}
```
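With `do_normalize: true`, the feature extractor standardizes each utterance to zero mean and unit variance before it reaches wav2vec2. `sampling_rate: 16000` means input should already be resampled to 16 kHz.

The sketch below reproduces that normalization in plain Python. Two details are assumptions rather than something this config states:

- the small epsilon in the denominator (assumed to match the extractor's), which guards against silent, zero-variance input;
- the helper name `zero_mean_unit_var`, which is hypothetical.

```python
import math


def zero_mean_unit_var(samples: list[float], eps: float = 1e-7) -> list[float]:
    """Standardize one utterance: subtract the mean, divide by sqrt(variance + eps)."""
    n = len(samples)
    if n == 0:
        return []
    mean = sum(samples) / n
    var = sum((x - mean) ** 2 for x in samples) / n  # population variance
    scale = math.sqrt(var + eps)
    return [(x - mean) / scale for x in samples]


wav = [0.0, 0.5, 1.0, 0.5]
norm = zero_mean_unit_var(wav)
print([round(x, 4) for x in norm])  # [-1.4142, 0.0, 1.4142, 0.0]
```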

wav2vec2-large-xlsr-53/pytorch_model.bin

ADDED

```diff
@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:314340227371a608f71adcd5f0de5933824fe77e55822aa4b24dba9c1c364dcb
+size 1269737156
```