---

title: CV Model Comparison In PyTorch

emoji: 📊

colorFrom: indigo

colorTo: gray

sdk: gradio

sdk_version: 6.8.0

app_file: app.py

pinned: false

license: mit

short_description: PyTorch CV models comparison.

models:

- AIOmarRehan/PyTorch_Unified_CNN_Model

datasets:

- AIOmarRehan/Vehicles

---

# PyTorch Model Comparison: From Custom CNNs to Advanced Transfer Learning

---

## Overview

This project compares **three computer vision approaches in PyTorch** on a vehicle classification task:

1. Custom CNN (trained from scratch)

2. Vision Transformer (DeiT-Tiny)

3. Xception with two-phase transfer learning

The goal is to answer a practical question:

> On small or moderately sized datasets, should you train from scratch or use transfer learning?

In these experiments, **transfer learning generalized better and trained more reliably** than training from scratch, especially when data and compute are limited.

---

## Architectures Compared

### Custom CNN (From Scratch)

A traditional convolutional network built manually with Conv → ReLU → Pooling blocks and fully connected layers.

**Philosophy:** Full architectural control, no pre-training.

Minimal structure:

```python

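import torch
import torch.nn as nn


class CustomCNN(nn.Module):
    def __init__(self, num_classes):
        super().__init__()
        # Two Conv -> ReLU -> MaxPool blocks; each pool halves the spatial size.
        self.features = nn.Sequential(
            nn.Conv2d(3, 32, 3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2),
        )
        # 64 * 56 * 56 assumes 224x224 inputs (224 -> 112 -> 56).
        self.classifier = nn.Sequential(
            nn.Linear(64 * 56 * 56, 256),
            nn.ReLU(),
            nn.Dropout(0.5),
            nn.Linear(256, num_classes),
        )

    def forward(self, x):
        # Forward pass added so the sketch runs end to end.
        x = self.features(x)
        x = torch.flatten(x, 1)
        return self.classifier(x)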

```
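The `64 * 56 * 56` input size of the first `nn.Linear` layer presumably assumes 224x224 inputs. The README does not state the input resolution, so treat that as an assumption. Each 3x3 convolution with `padding=1` preserves the spatial size and each `MaxPool2d(2)` halves it, giving 224 → 112 → 56. A small pure-Python sketch of that arithmetic, using hypothetical helper names:

```python
def conv2d_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer (floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1


def flattened_features(input_size=224):
    """Trace the two Conv -> ReLU -> MaxPool blocks of the CNN above."""
    size = input_size
    channels = 3
    for out_channels in (32, 64):
        size = conv2d_out(size, kernel=3, padding=1)  # Conv2d(..., 3, padding=1)
        size = conv2d_out(size, kernel=2, stride=2)   # MaxPool2d(2)
        channels = out_channels
    return channels * size * size


print(flattened_features(224))  # 200704, i.e. 64 * 56 * 56
```

With a different input resolution, the `in_features` of the first linear layer has to change accordingly.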

**Reality on small datasets:**

* Slower convergence

* Higher variance

* Larger generalization gap

---

### Vision Transformer (DeiT-Tiny)

The model is loaded from Hugging Face's pre-trained DeiT-Tiny checkpoint:

```python

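from transformers import AutoModelForImageClassification

# num_classes: number of vehicle categories in the dataset.
# ignore_mismatched_sizes=True lets a new head replace the 1000-class ImageNet head.
model = AutoModelForImageClassification.from_pretrained(
    "facebook/deit-tiny-patch16-224",
    num_labels=num_classes,
    ignore_mismatched_sizes=True,
)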

```

Trained with the Hugging Face `Trainer` API.
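By default, `Trainer` evaluation reports the loss; task metrics such as accuracy come from a user-supplied `compute_metrics` callable. The README does not show the one used here, so the following is a minimal, hypothetical sketch that computes accuracy from the logits and labels `Trainer` passes in. It is written in plain Python so it can be checked on nested lists.

```python
def argmax(row):
    """Index of the largest value in a 1-D sequence of scores."""
    return max(range(len(row)), key=lambda i: row[i])


def compute_metrics(eval_pred):
    """Accuracy from (logits, labels); hypothetical helper, not from the source."""
    logits, labels = eval_pred
    predictions = [argmax(row) for row in logits]
    correct = sum(int(p == int(y)) for p, y in zip(predictions, labels))
    return {"accuracy": correct / len(predictions)}


# Toy check with three samples and two classes.
toy_logits = [[2.0, 0.1], [0.3, 1.5], [0.9, 0.2]]
toy_labels = [0, 1, 1]
print(compute_metrics((toy_logits, toy_labels)))
```

It would be passed to the trainer as `Trainer(..., compute_metrics=compute_metrics)`.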

**Advantages:**

* Stable convergence

* Lightweight

* Easy deployment

* Good performance-to-efficiency ratio

---

### Xception (Two-Phase Transfer Learning)

Implemented using `timm`.

### Phase 1 - Train Classifier Head Only

```python

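import timm
import torch.nn as nn

model = timm.create_model("xception", pretrained=True)

# Freeze the entire pre-trained backbone.
for param in model.parameters():
    param.requires_grad = False

# Replace the classifier head; newly created layers are trainable by default.
in_features = model.fc.in_features
model.fc = nn.Sequential(
    nn.Linear(in_features, 512),
    nn.ReLU(),
    nn.Dropout(0.5),
    nn.Linear(512, num_classes),
)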

```

### Phase 2 - Fine-Tune Selected Layers

```python

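# Unfreeze only the parameters whose names contain "block14" or "fc";
# the exact layer names depend on the model definition.
for name, param in model.named_parameters():
    if "block14" in name or "fc" in name:
        param.requires_grad = True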

```

A lower learning rate is used during fine-tuning so that the pre-trained weights are adjusted gradually rather than overwritten.
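Because Phase 2 selects layers by substring, a pattern that matches no parameter name fails silently and leaves those layers frozen. The sketch below isolates the matching logic in plain Python so it can be checked before training. The parameter names in the example are made up for illustration and should be replaced with the names from `model.named_parameters()`.

```python
def select_trainable(param_names, patterns):
    """Return the names that contain at least one pattern.

    Raises ValueError if a pattern matches nothing, since that usually
    means the layer name is wrong for the model at hand.
    """
    selected = []
    for pattern in patterns:
        matches = [name for name in param_names if pattern in name]
        if not matches:
            raise ValueError(f"pattern {pattern!r} matched no parameters")
        selected.extend(m for m in matches if m not in selected)
    return selected


# Hypothetical parameter names, for illustration only.
names = [
    "conv1.weight",
    "block13.rep.0.weight",
    "block14.rep.0.weight",
    "fc.0.weight",
    "fc.3.bias",
]
print(select_trainable(names, ["block14", "fc"]))
```

Running a check like this against the real parameter names confirms that the intended layers are the ones being unfrozen.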

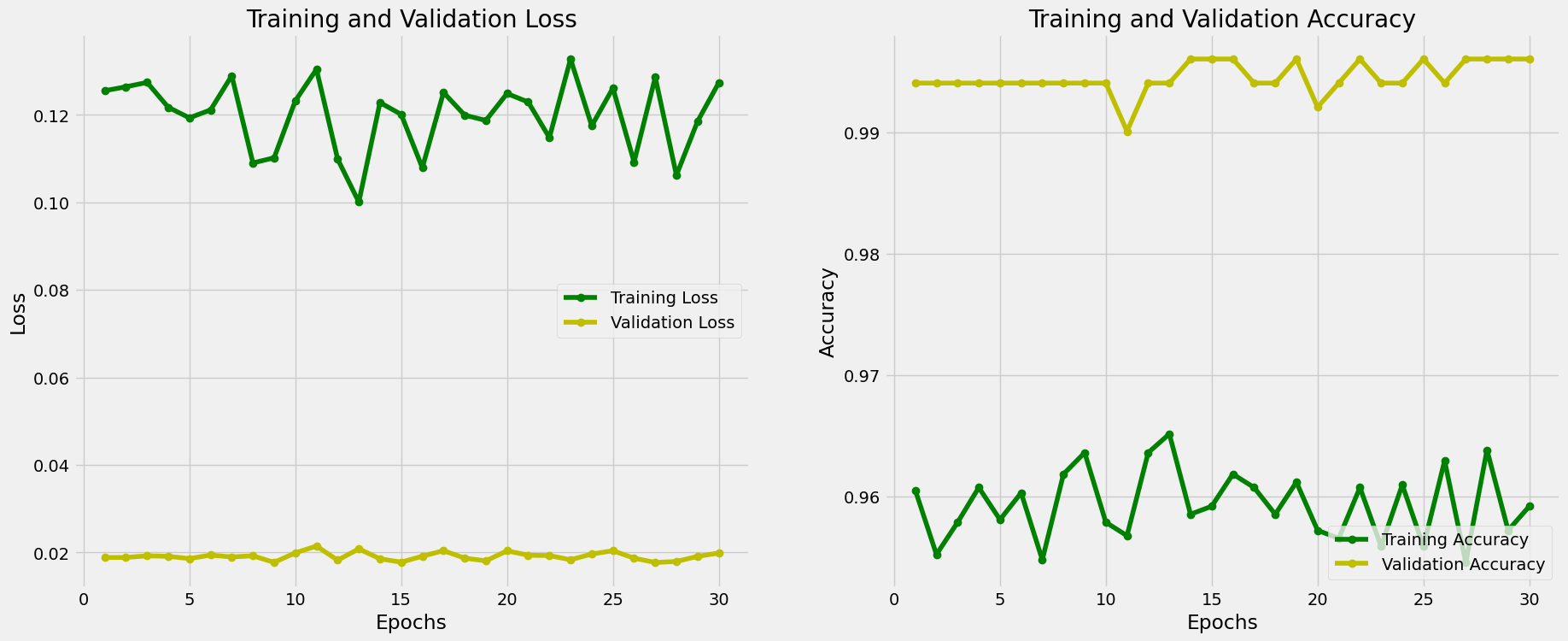

**Result:**

- Smoothest training curves

- Lowest validation loss

- Highest test accuracy

- Strongest performance on unseen internet images

---

## Comparative Results

| Model | Validation Performance | Generalization | Stability |

| ---------- | ---------------------- | -------------- | ----------- |

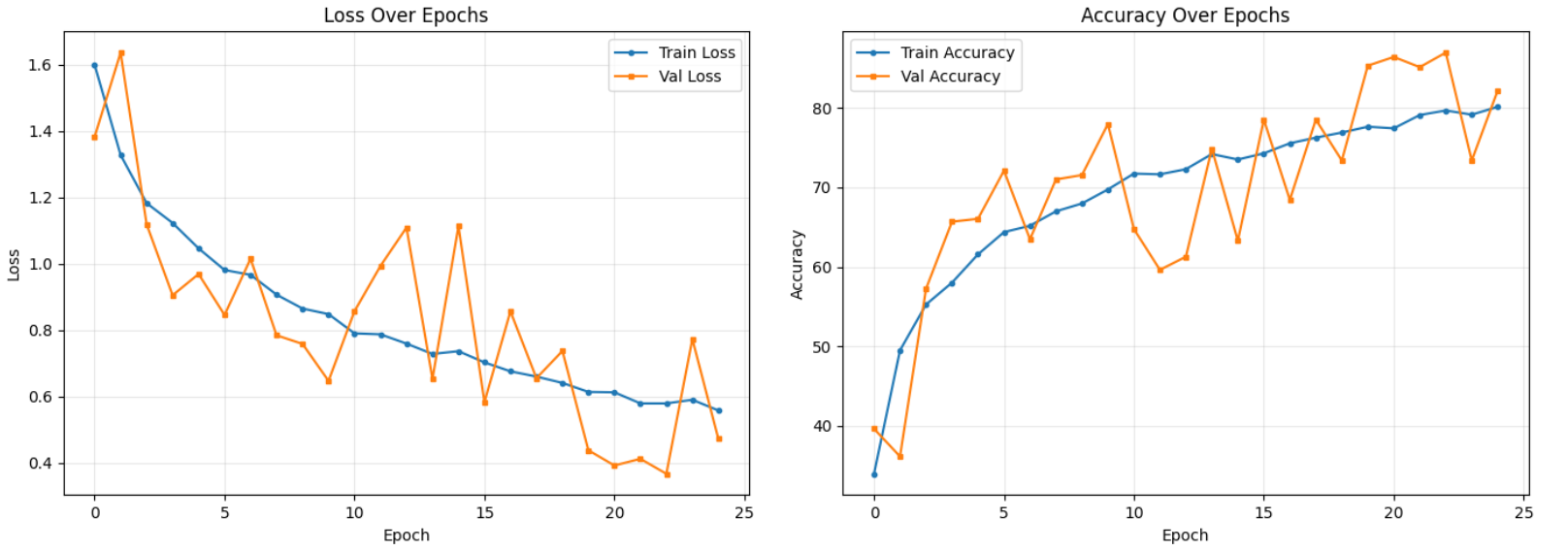

| Custom CNN | High variance | Weak | Unstable |

| DeiT-Tiny | Strong | Good | Stable |

| Xception | Best | Excellent | Very Stable |

### Key Insight

> High validation accuracy does NOT guarantee real-world reliability.

The custom CNN achieved strong validation scores (~87%) but struggled more under distribution shift.

Xception consistently generalized better.

---

## Experimental Visualizations

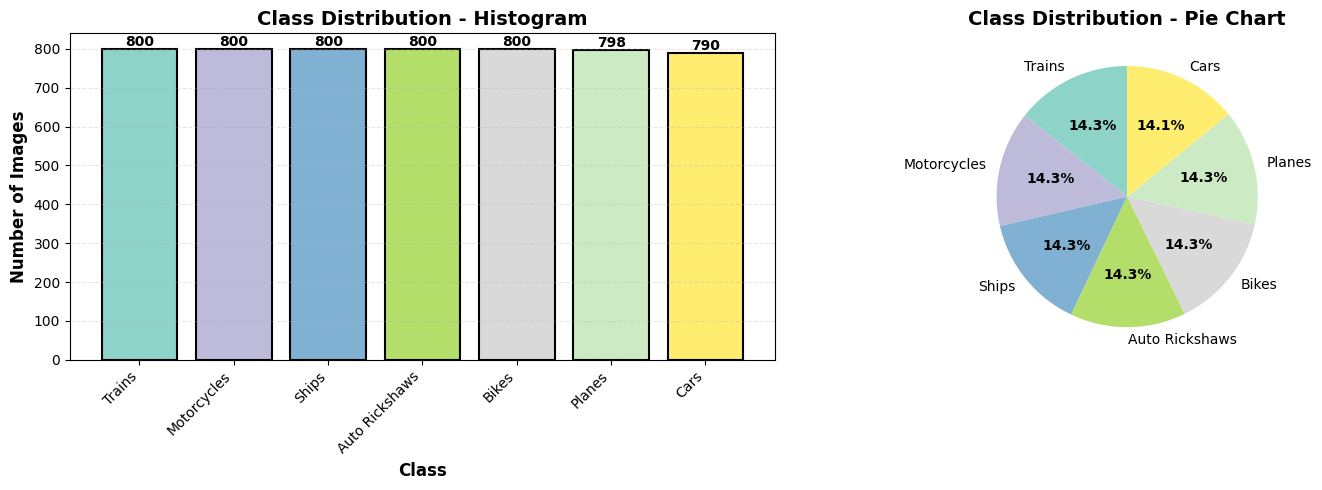

### Dataset Distribution Across All Three Models:

---

### Xception Model:

### Custom CNN Model:

---

### Confusion Matrices: Custom CNN vs. Xception

| **Custom CNN** | **Xception** |
|------------|----------|
| <img src="https://files.catbox.moe/aulaxo.webp" width="100%"> | <img src="https://files.catbox.moe/gy6yno.webp" width="100%"> |

---

## Example Test Results (Custom CNN)

```

Test Accuracy: 87.89%

Macro Avg:
  Precision: 0.8852
  Recall:    0.8794
  F1-Score:  0.8789

```

Despite these solid metrics, the custom CNN's performance dropped more noticeably than Xception's on unseen real-world images.
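The macro averages reported above weight every class equally: precision, recall, and F1 are computed per class and then averaged without regard to class size. A minimal pure-Python sketch of that computation, using a made-up three-class label set rather than the project's data:

```python
def macro_metrics(y_true, y_pred):
    """Macro-averaged precision, recall and F1 over all observed classes."""
    classes = sorted(set(y_true) | set(y_pred))
    precisions, recalls, f1s = [], [], []
    for c in classes:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == c and p == c)
        fp = sum(1 for t, p in pairs if t != c and p == c)
        fn = sum(1 for t, p in pairs if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1s.append(f1)
    n = len(classes)
    return sum(precisions) / n, sum(recalls) / n, sum(f1s) / n


# Made-up labels for illustration; not the project's predictions.
y_true = ["car", "car", "bus", "bus", "bike", "bike"]
y_pred = ["car", "bus", "bus", "bus", "bike", "car"]
precision, recall, f1 = macro_metrics(y_true, y_pred)
print(f"precision={precision:.4f} recall={recall:.4f} f1={f1:.4f}")
```

Because macro F1 is the mean of per-class F1 scores, it can fall below both the macro precision and the macro recall, as it does in the results above.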

---

## Deployment

### Run Locally

```bash

pip install -r requirements.txt
python app.py

```

Access at:

```

http://localhost:7860

```
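The contents of `app.py` are not shown here. As a hedged illustration of one step such an app typically needs, the sketch below turns raw model logits into the `{label: confidence}` dictionary that Gradio's `Label` output component can display. The class names and scores are placeholders, not the project's actual labels.

```python
import math


def logits_to_confidences(logits, class_names):
    """Softmax the raw scores and pair each probability with its class name."""
    if len(logits) != len(class_names):
        raise ValueError("logits and class_names must have the same length")
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return {name: e / total for name, e in zip(class_names, exps)}


# Hypothetical class names and scores, for illustration only.
scores = logits_to_confidences([2.0, 0.5, -1.0], ["car", "bus", "bike"])
print(max(scores, key=scores.get))
```

In a real app the logits would come from the model's forward pass on the uploaded image.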

---

## When to Use Each Approach

### Use Custom CNN if:

* Domain is highly specialized

* Pre-trained features don’t apply

* You need full architectural control

### Use Transfer Learning (e.g. DeiT or Xception) if:

* You want fast experimentation

* Efficiency matters

* You prefer high-level APIs

* You want best accuracy

* You care about generalization

* You need production-grade reliability

---

## Final Conclusion

On small or moderately sized datasets:

> Transfer learning isn’t an optimization - it’s a necessity.

Training from scratch forces the model to learn both general visual features and task-specific knowledge simultaneously.

Pre-trained models already understand edges, textures, and spatial structure.

Your dataset only needs to teach classification boundaries.

For most real-world tasks:

* Start with transfer learning

* Fine-tune carefully

* Only train from scratch if absolutely necessary

---

## Results

<p align="center">

<a href="https://files.catbox.moe/ss5ohr.mp4">

<img src="https://files.catbox.moe/3x5mp7.webp" width="400">

</a>

</p>