Delete open_clip

This view is limited to 50 files because it contains too many changes.


- open_clip/.github/workflows/ci.yml +0 -141

- open_clip/.github/workflows/clear-cache.yml +0 -29

- open_clip/.github/workflows/python-publish.yml +0 -37

- open_clip/.gitignore +0 -153

- open_clip/CITATION.cff +0 -33

- open_clip/HISTORY.md +0 -176

- open_clip/LICENSE +0 -23

- open_clip/MANIFEST.in +0 -3

- open_clip/Makefile +0 -12

- open_clip/README.md +0 -798

- open_clip/docs/CLIP.png +0 -0

- open_clip/docs/Interacting_with_open_clip.ipynb +0 -0

- open_clip/docs/Interacting_with_open_coca.ipynb +0 -118

- open_clip/docs/clip_conceptual_captions.md +0 -13

- open_clip/docs/clip_loss.png +0 -0

- open_clip/docs/clip_recall.png +0 -0

- open_clip/docs/clip_val_loss.png +0 -0

- open_clip/docs/clip_zeroshot.png +0 -0

- open_clip/docs/effective_robustness.png +0 -3

- open_clip/docs/laion2b_clip_zeroshot_b32.png +0 -0

- open_clip/docs/laion_clip_zeroshot.png +0 -0

- open_clip/docs/laion_clip_zeroshot_b16.png +0 -0

- open_clip/docs/laion_clip_zeroshot_b16_plus_240.png +0 -0

- open_clip/docs/laion_clip_zeroshot_l14.png +0 -0

- open_clip/docs/laion_openai_compare_b32.jpg +0 -0

- open_clip/docs/scaling.png +0 -0

- open_clip/docs/script_examples/stability_example.sh +0 -60

- open_clip/pytest.ini +0 -3

- open_clip/requirements-test.txt +0 -4

- open_clip/requirements-training.txt +0 -12

- open_clip/requirements.txt +0 -9

- open_clip/setup.py +0 -61

- open_clip/src/open_clip/__init__.py +0 -15

- open_clip/src/open_clip/bpe_simple_vocab_16e6.txt.gz +0 -3

- open_clip/src/open_clip/coca_model.py +0 -458

- open_clip/src/open_clip/constants.py +0 -2

- open_clip/src/open_clip/factory.py +0 -366

- open_clip/src/open_clip/generation_utils.py +0 -0

- open_clip/src/open_clip/hf_configs.py +0 -56

- open_clip/src/open_clip/hf_model.py +0 -193

- open_clip/src/open_clip/loss.py +0 -212

- open_clip/src/open_clip/model.py +0 -448

- open_clip/src/open_clip/model_configs/RN101-quickgelu.json +0 -22

- open_clip/src/open_clip/model_configs/RN101.json +0 -21

- open_clip/src/open_clip/model_configs/RN50-quickgelu.json +0 -22

- open_clip/src/open_clip/model_configs/RN50.json +0 -21

- open_clip/src/open_clip/model_configs/RN50x16.json +0 -21

- open_clip/src/open_clip/model_configs/RN50x4.json +0 -21

- open_clip/src/open_clip/model_configs/RN50x64.json +0 -21

- open_clip/src/open_clip/model_configs/ViT-B-16-plus-240.json +0 -16

open_clip/.github/workflows/ci.yml

DELETED

```diff
@@ -1,141 +0,0 @@
-name: Continuous integration
-
-on:
-  push:
-    branches:
-      - main
-    paths-ignore:
-      - '**.md'
-      - 'CITATION.cff'
-      - 'LICENSE'
-      - '.gitignore'
-      - 'docs/**'
-  pull_request:
-    branches:
-      - main
-    paths-ignore:
-      - '**.md'
-      - 'CITATION.cff'
-      - 'LICENSE'
-      - '.gitignore'
-      - 'docs/**'
-  workflow_dispatch:
-    inputs:
-      manual_revision_reference:
-        required: false
-        type: string
-      manual_revision_test:
-        required: false
-        type: string
-
-env:
-  REVISION_REFERENCE: v2.8.2
-  #9d31b2ec4df6d8228f370ff20c8267ec6ba39383 earliest compatible v2.7.0 + pretrained_hf param
-
-jobs:
-  Tests:
-    strategy:
-      matrix:
-        os: [ ubuntu-latest ] #, macos-latest ]
-        python: [ 3.8 ]
-        job_num: [ 4 ]
-        job: [ 1, 2, 3, 4 ]
-    runs-on: ${{ matrix.os }}
-    steps:
-      - uses: actions/checkout@v3
-        with:
-          fetch-depth: 0
-          ref: ${{ inputs.manual_revision_test }}
-      - name: Set up Python ${{ matrix.python }}
-        id: pythonsetup
-        uses: actions/setup-python@v4
-        with:
-          python-version: ${{ matrix.python }}
-      - name: Venv cache
-        id: venv-cache
-        uses: actions/cache@v3
-        with:
-          path: .env
-          key: venv-${{ matrix.os }}-${{ steps.pythonsetup.outputs.python-version }}-${{ hashFiles('requirements*') }}
-      - name: Pytest durations cache
-        uses: actions/cache@v3
-        with:
-          path: .test_durations
-          key: test_durations-${{ matrix.os }}-${{ steps.pythonsetup.outputs.python-version }}-${{ matrix.job }}-${{ github.run_id }}
-          restore-keys: test_durations-0-
-      - name: Setup
-        if: steps.venv-cache.outputs.cache-hit != 'true'
-        run: |
-          python3 -m venv .env
-          source .env/bin/activate
-          make install
-          make install-test
-          make install-training
-      - name: Prepare test data
-        run: |
-          source .env/bin/activate
-          python -m pytest \
-            --quiet --co \
-            --splitting-algorithm least_duration \
-            --splits ${{ matrix.job_num }} \
-            --group ${{ matrix.job }} \
-            -m regression_test \
-            tests \
-          | head -n -2 | grep -Po 'test_inference_with_data\[\K[^]]*(?=-False]|-True])' \
-          > models_gh_runner.txt
-          if [ -n "${{ inputs.manual_revision_reference }}" ]; then
-            REVISION_REFERENCE=${{ inputs.manual_revision_reference }}
-          fi
-          python tests/util_test.py \
-            --save_model_list models_gh_runner.txt \
-            --model_list models_gh_runner.txt \
-            --git_revision $REVISION_REFERENCE
-      - name: Unit tests
-        run: |
-          source .env/bin/activate
-          touch .test_durations
-          cp .test_durations durations_1
-          mv .test_durations durations_2
-          python -m pytest \
-            -x -s -v \
-            --splitting-algorithm least_duration \
-            --splits ${{ matrix.job_num }} \
-            --group ${{ matrix.job }} \
-            --store-durations \
-            --durations-path durations_1 \
-            --clean-durations \
-            -m "not regression_test" \
-            tests
-          OPEN_CLIP_TEST_REG_MODELS=models_gh_runner.txt python -m pytest \
-            -x -s -v \
-            --store-durations \
-            --durations-path durations_2 \
-            --clean-durations \
-            -m "regression_test" \
-            tests
-          jq -s -S 'add' durations_* > .test_durations
-      - name: Collect pytest durations
-        uses: actions/upload-artifact@v3
-        with:
-          name: pytest_durations_${{ matrix.os }}-${{ matrix.python }}-${{ matrix.job }}
-          path: .test_durations
-
-  Collect:
-    needs: Tests
-    runs-on: ubuntu-latest
-    steps:
-      - name: Cache
-        uses: actions/cache@v3
-        with:
-          path: .test_durations
-          key: test_durations-0-${{ github.run_id }}
-      - name: Collect
-        uses: actions/download-artifact@v3
-        with:
-          path: artifacts
-      - name: Consolidate
-        run: |
-          jq -n -S \
-            'reduce (inputs | to_entries[]) as {$key, $value} ({}; .[$key] += $value)' \
-            artifacts/pytest_durations_*/.test_durations > .test_durations
-
```
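The deleted `ci.yml` relies on three compact shell filters. The Python below is only a rough sketch of what they compute.

- `models_from_collection` mirrors the `grep -Po` model-ID extraction. Python's `re` has no `\K`, so a capture group stands in for it.
- `merge_last_wins` mirrors `jq -s -S 'add'`: a shallow merge in which a later durations file overrides an earlier one for the same key.
- `merge_summing` mirrors the `Consolidate` step's `reduce`, which adds together values that share a key.

All function names and sample test IDs are hypothetical. The sketch assumes each durations file has already been parsed into a dict that maps test IDs to seconds.

```python
import re

# Same pattern as the workflow's grep call, with a capture group in place of \K.
# The lookahead still requires the ID to end in "-False]" or "-True]".
_MODEL_ID = re.compile(r"test_inference_with_data\[([^\]]*)(?=-False\]|-True\])")


def models_from_collection(lines):
    """Return model IDs found in `pytest --co --quiet` output lines.

    Lines that do not match (e.g. the summary lines `head -n -2` drops)
    are simply skipped.
    """
    models = []
    for line in lines:
        match = _MODEL_ID.search(line)
        if match:
            models.append(match.group(1))
    return models


def merge_last_wins(*dicts):
    """Like `jq -s -S 'add'` on objects: later values replace earlier ones."""
    merged = {}
    for d in dicts:
        merged.update(d)
    # -S makes jq emit keys in sorted order; sort here to match.
    return dict(sorted(merged.items()))


def merge_summing(*dicts):
    """Like the Consolidate reduce: `.[$key] += $value` sums shared keys."""
    totals = {}
    for d in dicts:
        for key, value in d.items():
            # jq treats a missing key as null, and null + x == x, hence the 0 default.
            totals[key] = totals.get(key, 0) + value
    return dict(sorted(totals.items()))
```

The two merges differ only when durations files share a test ID. `add` keeps the last file's value, while the `reduce` form accumulates values across all artifacts.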

open_clip/.github/workflows/clear-cache.yml

DELETED

```diff
@@ -1,29 +0,0 @@
-name: Clear cache
-
-on:
-  workflow_dispatch:
-
-permissions:
-  actions: write
-
-jobs:
-  clear-cache:
-    runs-on: ubuntu-latest
-    steps:
-      - name: Clear cache
-        uses: actions/github-script@v6
-        with:
-          script: |
-            const caches = await github.rest.actions.getActionsCacheList({
-              owner: context.repo.owner,
-              repo: context.repo.repo,
-            })
-            for (const cache of caches.data.actions_caches) {
-              console.log(cache)
-              await github.rest.actions.deleteActionsCacheById({
-                owner: context.repo.owner,
-                repo: context.repo.repo,
-                cache_id: cache.id,
-              })
-            }
-
```

open_clip/.github/workflows/python-publish.yml

DELETED

```diff
@@ -1,37 +0,0 @@
-name: Release
-
-on:
-  push:
-    branches:
-      - main
-jobs:
-  deploy:
-    runs-on: ubuntu-latest
-    steps:
-      - uses: actions/checkout@v2
-      - uses: actions-ecosystem/action-regex-match@v2
-        id: regex-match
-        with:
-          text: ${{ github.event.head_commit.message }}
-          regex: '^Release ([^ ]+)'
-      - name: Set up Python
-        uses: actions/setup-python@v2
-        with:
-          python-version: '3.8'
-      - name: Install dependencies
-        run: |
-          python -m pip install --upgrade pip
-          pip install setuptools wheel twine
-      - name: Release
-        if: ${{ steps.regex-match.outputs.match != '' }}
-        uses: softprops/action-gh-release@v1
-        with:
-          tag_name: v${{ steps.regex-match.outputs.group1 }}
-      - name: Build and publish
-        if: ${{ steps.regex-match.outputs.match != '' }}
-        env:
-          TWINE_USERNAME: __token__
-          TWINE_PASSWORD: ${{ secrets.PYPI_PASSWORD }}
-        run: |
-          python setup.py sdist bdist_wheel
-          twine upload dist/*
```
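The deleted release workflow publishes only when the head commit message matches `^Release ([^ ]+)`. It then tags the release as `v` plus the first capture group. The Python below is a minimal sketch of that gating logic. It assumes the regex action applies the pattern with no flags (so `^` anchors at the start of the whole message) and that its `group1` output is the first capture group. `release_tag` is a hypothetical name, not part of any workflow or library.

```python
import re
from typing import Optional

# Pattern copied from the workflow's `regex` input; no MULTILINE flag,
# so "^" only matches at the very start of the commit message.
RELEASE_PATTERN = re.compile(r"^Release ([^ ]+)")


def release_tag(commit_message: str) -> Optional[str]:
    """Return the tag the workflow would create, or None when it would skip."""
    match = RELEASE_PATTERN.search(commit_message)
    if match is None:
        # Corresponds to `steps.regex-match.outputs.match == ''`.
        return None
    return "v" + match.group(1)
```

Note that `[^ ]` excludes only the space character, not newlines. A message such as `Release 2.9.1` followed by a blank line and a body therefore captures text past the version. The sketch mirrors that behaviour rather than correcting it.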

open_clip/.gitignore

DELETED

```diff
@@ -1,153 +0,0 @@
-logs/
-wandb/
-models/
-features/
-results/
-
-tests/data/
-*.pt
-
-# Byte-compiled / optimized / DLL files
-__pycache__/
-*.py[cod]
-*$py.class
-
-# C extensions
-*.so
-
-# Distribution / packaging
-.Python
-build/
-develop-eggs/
-dist/
-downloads/
-eggs/
-.eggs/
-lib/
-lib64/
-parts/
-sdist/
-var/
-wheels/
-pip-wheel-metadata/
-share/python-wheels/
-*.egg-info/
-.installed.cfg
-*.egg
-MANIFEST
-
-# PyInstaller
-# Usually these files are written by a python script from a template
-# before PyInstaller builds the exe, so as to inject date/other infos into it.
-*.manifest
-*.spec
-
-# Installer logs
-pip-log.txt
-pip-delete-this-directory.txt
-
-# Unit test / coverage reports
-htmlcov/
-.tox/
-.nox/
-.coverage
-.coverage.*
-.cache
-nosetests.xml
-coverage.xml
-*.cover
-*.py,cover
-.hypothesis/
-.pytest_cache/
-
-# Translations
-*.mo
-*.pot
-
-# Django stuff:
-*.log
-local_settings.py
-db.sqlite3
-db.sqlite3-journal
-
-# Flask stuff:
-instance/
-.webassets-cache
-
-# Scrapy stuff:
-.scrapy
-
-# Sphinx documentation
-docs/_build/
-
-# PyBuilder
-target/
-
-# Jupyter Notebook
-.ipynb_checkpoints
-
-# IPython
-profile_default/
-ipython_config.py
-
-# pyenv
-.python-version
-
-# pipenv
-# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.
-# However, in case of collaboration, if having platform-specific dependencies or dependencies
-# having no cross-platform support, pipenv may install dependencies that don't work, or not
-# install all needed dependencies.
-#Pipfile.lock
-
-# PEP 582; used by e.g. github.com/David-OConnor/pyflow
-__pypackages__/
-
-# Celery stuff
-celerybeat-schedule
-celerybeat.pid
-
-# SageMath parsed files
-*.sage.py
-
-# Environments
-.env
-.venv
-env/
-venv/
-ENV/
-env.bak/
-venv.bak/
-
-# Spyder project settings
-.spyderproject
-.spyproject
-
-# Rope project settings
-.ropeproject
-
-# mkdocs documentation
-/site
-
-# mypy
-.mypy_cache/
-.dmypy.json
-dmypy.json
-
-# Pyre type checker
-.pyre/
-sync.sh
-gpu1sync.sh
-.idea
-*.pdf
-**/._*
-**/*DS_*
-**.jsonl
-src/sbatch
-src/misc
-.vscode
-src/debug
-core.*
-
-# Allow
-!src/evaluation/misc/results_dbs/*
```

open_clip/CITATION.cff

DELETED

```diff
@@ -1,33 +0,0 @@
-cff-version: 1.1.0
-message: If you use this software, please cite it as below.
-authors:
-  - family-names: Ilharco
-    given-names: Gabriel
-  - family-names: Wortsman
-    given-names: Mitchell
-  - family-names: Wightman
-    given-names: Ross
-  - family-names: Gordon
-    given-names: Cade
-  - family-names: Carlini
-    given-names: Nicholas
-  - family-names: Taori
-    given-names: Rohan
-  - family-names: Dave
-    given-names: Achal
-  - family-names: Shankar
-    given-names: Vaishaal
-  - family-names: Namkoong
-    given-names: Hongseok
-  - family-names: Miller
-    given-names: John
-  - family-names: Hajishirzi
-    given-names: Hannaneh
-  - family-names: Farhadi
-    given-names: Ali
-  - family-names: Schmidt
-    given-names: Ludwig
-title: OpenCLIP
-version: v0.1
-doi: 10.5281/zenodo.5143773
-date-released: 2021-07-28
```

open_clip/HISTORY.md

DELETED

```diff
@@ -1,176 +0,0 @@
-## 2.18.0
-
-* Enable int8 inference without `.weight` attribute
-
-## 2.17.2
-
-* Update push_to_hf_hub
-
-## 2.17.0
-
-* Add int8 support
-* Update notebook demo
-* Refactor zero-shot classification code
-
-## 2.16.2
-
-* Fixes for context_length and vocab_size attributes
-
-## 2.16.1
-
-* Fixes for context_length and vocab_size attributes
-* Fix --train-num-samples logic
-* Add HF BERT configs for PubMed CLIP model
-
-## 2.16.0
-
-* Add improved g-14 weights
-* Update protobuf version
-
-## 2.15.0
-
-* Add convnext_xxlarge weights
-* Fixed import in readme
-* Add samples per second per gpu logging
-* Fix slurm example
-
-## 2.14.0
-
-* Move dataset mixtures logic to shard level
-* Fix CoCa accum-grad training
-* Safer transformers import guard
-* get_labels refactoring
-
-## 2.13.0
-
-* Add support for dataset mixtures with different sampling weights
-* Make transformers optional again
-
-## 2.12.0
-
-* Updated convnext configs for consistency
-* Added input_patchnorm option
-* Clean and improve CoCa generation
-* Support model distillation
-* Add ConvNeXt-Large 320x320 fine-tune weights
-
-## 2.11.1
-
-* Make transformers optional
-* Add MSCOCO CoCa finetunes to pretrained models
-
-## 2.11.0
-
-* coca support and weights
-* ConvNeXt-Large weights
-
-## 2.10.1
-
-* `hf-hub:org/model_id` support for loading models w/ config and weights in Hugging Face Hub
-
-## 2.10.0
-
-* Added a ViT-bigG-14 model.
-* Added an up-to-date example slurm script for large training jobs.
-* Added a option to sync logs and checkpoints to S3 during training.
-* New options for LR schedulers, constant and constant with cooldown
-* Fix wandb autoresuming when resume is not set
-* ConvNeXt `base` & `base_w` pretrained models added
-* `timm-` model prefix removed from configs
-* `timm` augmentation + regularization (dropout / drop-path) supported
-
-## 2.9.3
-
-* Fix wandb collapsing multiple parallel runs into a single one
-
-## 2.9.2
-
-* Fix braceexpand memory explosion for complex webdataset urls
-
-## 2.9.1
-
-* Fix release
-
-## 2.9.0
-
-* Add training feature to auto-resume from the latest checkpoint on restart via `--resume latest`
-* Allow webp in webdataset
-* Fix logging for number of samples when using gradient accumulation
-* Add model configs for convnext xxlarge
-
-## 2.8.2
-
-* wrapped patchdropout in a torch.nn.Module
-
-## 2.8.1
-
-* relax protobuf dependency
-* override the default patch dropout value in 'vision_cfg'
-
-## 2.8.0
-
-* better support for HF models
-* add support for gradient accumulation
-* CI fixes
-* add support for patch dropout
-* add convnext configs
-
-
-## 2.7.0
-
-* add multilingual H/14 xlm roberta large
-
-## 2.6.1
-
-* fix setup.py _read_reqs
-
-## 2.6.0
-
-* Make openclip training usable from pypi.
-* Add xlm roberta large vit h 14 config.
-
-## 2.5.0
-
-* pretrained B/32 xlm roberta base: first multilingual clip trained on laion5B
-* pretrained B/32 roberta base: first clip trained using an HF text encoder
-
-## 2.4.1
-
-* Add missing hf_tokenizer_name in CLIPTextCfg.
-
-## 2.4.0
-
-* Fix #211, missing RN50x64 config. Fix type of dropout param for ResNet models
-* Bring back LayerNorm impl that casts to input for non bf16/fp16
-* zero_shot.py: set correct tokenizer based on args
-* training/params.py: remove hf params and get them from model config
-
-## 2.3.1
-
-* Implement grad checkpointing for hf model.
-* custom_text: True if hf_model_name is set
-* Disable hf tokenizer parallelism
-
-## 2.3.0
-
-* Generalizable Text Transformer with HuggingFace Models (@iejMac)
-
-## 2.2.0
-
-* Support for custom text tower
-* Add checksum verification for pretrained model weights
-
-## 2.1.0
-
-* lot including sota models, bfloat16 option, better loading, better metrics
-
-## 1.2.0
-
-* ViT-B/32 trained on Laion2B-en
-* add missing openai RN50x64 model
-
-## 1.1.1
-
-* ViT-B/16+
-* Add grad checkpointing support
-* more robust data loader
```

open_clip/LICENSE

DELETED

```diff
@@ -1,23 +0,0 @@
-Copyright (c) 2012-2021 Gabriel Ilharco, Mitchell Wortsman,
-Nicholas Carlini, Rohan Taori, Achal Dave, Vaishaal Shankar,
-John Miller, Hongseok Namkoong, Hannaneh Hajishirzi, Ali Farhadi,
-Ludwig Schmidt
-
-Permission is hereby granted, free of charge, to any person obtaining
-a copy of this software and associated documentation files (the
-"Software"), to deal in the Software without restriction, including
-without limitation the rights to use, copy, modify, merge, publish,
-distribute, sublicense, and/or sell copies of the Software, and to
-permit persons to whom the Software is furnished to do so, subject to
-the following conditions:
-
-The above copyright notice and this permission notice shall be
-included in all copies or substantial portions of the Software.
-
-THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND,
-EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF
-MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND
-NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE
-LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION
-OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION
-WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.
```

open_clip/MANIFEST.in

DELETED

```diff
@@ -1,3 +0,0 @@
-include src/open_clip/bpe_simple_vocab_16e6.txt.gz
-include src/open_clip/model_configs/*.json
-
```

open_clip/Makefile

DELETED

```diff
@@ -1,12 +0,0 @@
-install: ## [Local development] Upgrade pip, install requirements, install package.
-	python -m pip install -U pip
-	python -m pip install -e .
-
-install-training:
-	python -m pip install -r requirements-training.txt
-
-install-test: ## [Local development] Install test requirements
-	python -m pip install -r requirements-test.txt
-
-test: ## [Local development] Run unit tests
-	python -m pytest -x -s -v tests
```

open_clip/README.md

DELETED

|

@@ -1,798 +0,0 @@

|

|

| 1 |

-

# OpenCLIP

|

| 2 |

-

|

| 3 |

-

[[Paper]](https://arxiv.org/abs/2212.07143) [[Clip Colab]](https://colab.research.google.com/github/mlfoundations/open_clip/blob/master/docs/Interacting_with_open_clip.ipynb) [[Coca Colab]](https://colab.research.google.com/github/mlfoundations/open_clip/blob/master/docs/Interacting_with_open_coca.ipynb)

|

| 4 |

-

[](https://pypi.python.org/pypi/open_clip_torch)

|

| 5 |

-

|

| 6 |

-

Welcome to an open source implementation of OpenAI's [CLIP](https://arxiv.org/abs/2103.00020) (Contrastive Language-Image Pre-training).

|

| 7 |

-

|

| 8 |

-

The goal of this repository is to enable training models with contrastive image-text supervision, and to investigate their properties such as robustness to distribution shift. Our starting point is an implementation of CLIP that matches the accuracy of the original CLIP models when trained on the same dataset.

|

| 9 |

-

Specifically, a ResNet-50 model trained with our codebase on OpenAI's [15 million image subset of YFCC](https://github.com/openai/CLIP/blob/main/data/yfcc100m.md) achieves **32.7%** top-1 accuracy on ImageNet. OpenAI's CLIP model reaches **31.3%** when trained on the same subset of YFCC. For ease of experimentation, we also provide code for training on the 3 million images in the [Conceptual Captions](https://ai.google.com/research/ConceptualCaptions/download) dataset, where a ResNet-50x4 trained with our codebase reaches 22.2% top-1 ImageNet accuracy.

|

| 10 |

-

|

| 11 |

-

We further this with a replication study on a dataset of comparable size to OpenAI's, [LAION-400M](https://arxiv.org/abs/2111.02114), and with the larger [LAION-2B](https://laion.ai/blog/laion-5b/) superset. In addition, we study scaling behavior in a paper on [reproducible scaling laws for contrastive language-image learning](https://arxiv.org/abs/2212.07143).

We have trained the following ViT CLIP models (accuracies are zero-shot top-1 on ImageNet-1k):

* ViT-B/32 on LAION-400M with an accuracy of **62.9%**, comparable to OpenAI's **63.2%**
* ViT-B/32 on LAION-2B with an accuracy of **66.6%**
* ViT-B/16 on LAION-400M with an accuracy of **67.1%**, lower than OpenAI's **68.3%** (as measured here; 68.6% in the paper)
* ViT-B/16+ 240x240 (~50% more FLOPs than B/16 224x224) on LAION-400M with an accuracy of **69.2%**
* ViT-B/16 on LAION-2B with an accuracy of **70.2%**
* ViT-L/14 on LAION-400M with an accuracy of **72.77%**, vs OpenAI's **75.5%** (as measured here; 75.3% in the paper)
* ViT-L/14 on LAION-2B with an accuracy of **75.3%**, vs OpenAI's **75.5%** (as measured here; 75.3% in the paper)
* CoCa ViT-L/14 on LAION-2B with an accuracy of **75.5%** (currently only 13B samples seen) vs. CLIP ViT-L/14 at 73.1% (on the same dataset and samples seen)
* ViT-H/14 on LAION-2B with an accuracy of **78.0%**, the second-best ImageNet-1k zero-shot accuracy among released open-source weights thus far
* ViT-g/14 on LAION-2B with an accuracy of **76.6%**, trained on a reduced 12B-samples-seen schedule (the same number of samples seen as the LAION-400M models)
* ViT-g/14 on LAION-2B with an accuracy of **78.5%**, trained on the full 34B-samples-seen schedule
* ViT-G/14 on LAION-2B with an accuracy of **80.1%**, the best ImageNet-1k zero-shot accuracy among released open-source weights thus far

And the following ConvNeXt CLIP models:

* ConvNext-Base @ 224x224 on LAION-400M with an ImageNet-1k zero-shot top-1 of **66.3%**
* ConvNext-Base (W) @ 256x256 on LAION-2B with an ImageNet-1k zero-shot top-1 of **70.8%**
* ConvNext-Base (W) @ 256x256 w/ augreg (extra augmentation + regularization) on LAION-2B with a top-1 of **71.5%**
* ConvNext-Base (W) @ 256x256 on LAION-A (900M sample aesthetic subset of 2B) with a top-1 of **71.0%**
* ConvNext-Base (W) @ 320x320 on LAION-A with a top-1 of **71.7%** (eval at 384x384 is **71.0%**)
* ConvNext-Base (W) @ 320x320 w/ augreg on LAION-A with a top-1 of **71.3%** (eval at 384x384 is **72.2%**)
* ConvNext-Large (D) @ 256x256 w/ augreg on LAION-2B with a top-1 of **75.9%**
* ConvNext-Large (D) @ 320x320, a fine-tune of the 256x256 weights above for ~2.5B more samples on LAION-2B, with a top-1 of **76.6%**
* ConvNext-Large (D) @ 320x320, a soup of 3 fine-tunes of the 256x256 weights above on LAION-2B, with a top-1 of **76.9%**
* ConvNext-XXLarge @ 256x256, original run, with a top-1 of **79.1%**
* ConvNext-XXLarge @ 256x256, rewind of the last 10%, with a top-1 of **79.3%**
* ConvNext-XXLarge @ 256x256, soup of original + rewind, with a top-1 of **79.4%**

Model cards with additional model-specific details can be found on the Hugging Face Hub under the OpenCLIP library tag: https://huggingface.co/models?library=open_clip

As we describe in more detail [below](#why-are-low-accuracy-clip-models-interesting), CLIP models in a medium accuracy regime already allow us to draw conclusions about the robustness of larger CLIP models, since the models follow [reliable scaling laws](https://arxiv.org/abs/2107.04649).

This codebase is a work in progress, and we invite all to contribute to making it more accessible and useful. In the future, we plan to add support for TPU training and release larger models. We hope this codebase facilitates and promotes further research in contrastive image-text learning. Please submit an issue or send an email if you have any other requests or suggestions.

Note that portions of `src/open_clip/` modelling and tokenizer code are adaptations of OpenAI's official [repository](https://github.com/openai/CLIP).

|

| 48 |

-

|

| 49 |

-

## Approach

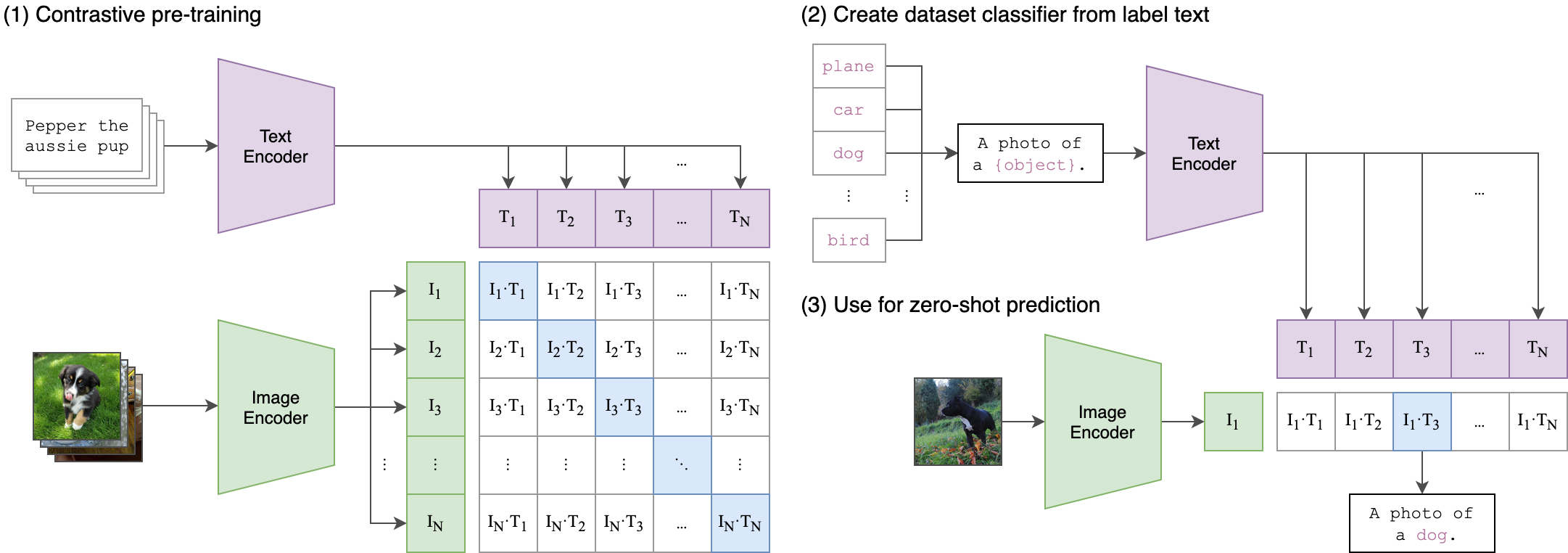

|

| 50 |

-

|

| 51 |

-

|  |

|

| 52 |

-

|:--:|

|

| 53 |

-

| Image Credit: https://github.com/openai/CLIP |

## Usage

```
pip install open_clip_torch
```

```python
import torch
from PIL import Image
import open_clip

model, _, preprocess = open_clip.create_model_and_transforms('ViT-B-32', pretrained='laion2b_s34b_b79k')
tokenizer = open_clip.get_tokenizer('ViT-B-32')

image = preprocess(Image.open("CLIP.png")).unsqueeze(0)
text = tokenizer(["a diagram", "a dog", "a cat"])

with torch.no_grad(), torch.cuda.amp.autocast():
    image_features = model.encode_image(image)
    text_features = model.encode_text(text)
    image_features /= image_features.norm(dim=-1, keepdim=True)
    text_features /= text_features.norm(dim=-1, keepdim=True)

    text_probs = (100.0 * image_features @ text_features.T).softmax(dim=-1)

print("Label probs:", text_probs)  # prints: [[1., 0., 0.]]
```

See also this [[Clip Colab]](https://colab.research.google.com/github/mlfoundations/open_clip/blob/master/docs/Interacting_with_open_clip.ipynb).
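
The final step of the snippet above turns embeddings into label probabilities. Both sets of features are L2-normalized, their dot products (cosine similarities) are multiplied by 100, and a softmax is taken over the candidate captions. The dependency-free sketch below reproduces only that arithmetic. It uses small made-up vectors in place of real `encode_image`/`encode_text` outputs, and `l2_normalize` and `zero_shot_probs` are hypothetical helpers, not part of open_clip.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length (mirrors `x /= x.norm(dim=-1, keepdim=True)`)."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec]

def zero_shot_probs(image_emb, text_embs, scale=100.0):
    """Softmax over scaled cosine similarities between one image and several captions."""
    img = l2_normalize(image_emb)
    logits = []
    for t in text_embs:
        txt = l2_normalize(t)
        logits.append(scale * sum(a * b for a, b in zip(img, txt)))
    # Subtract the max logit before exponentiating for numerical stability.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Toy 3-d "embeddings": the image points roughly along the first caption's direction.
image = [0.9, 0.1, 0.0]
captions = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
print([round(p, 3) for p in zero_shot_probs(image, captions)])
```

The snippet hardcodes a scale factor of 100.0, which is large. As a result, even a moderate gap in cosine similarity produces a nearly one-hot distribution, consistent with the `[[1., 0., 0.]]` noted in the snippet's comment.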

To compute billions of embeddings efficiently, you can use [clip-retrieval](https://github.com/rom1504/clip-retrieval), which has OpenCLIP support.

## Fine-tuning on classification tasks

This repository is focused on training CLIP models. To fine-tune a *trained* zero-shot model on a downstream classification task such as ImageNet, please see [our other repository: WiSE-FT](https://github.com/mlfoundations/wise-ft). The [WiSE-FT repository](https://github.com/mlfoundations/wise-ft) contains code for our paper on [Robust Fine-tuning of Zero-shot Models](https://arxiv.org/abs/2109.01903), in which we introduce a technique for fine-tuning zero-shot models while preserving robustness under distribution shift.

## Data

To download datasets as webdataset, we recommend [img2dataset](https://github.com/rom1504/img2dataset).

### Conceptual Captions

See the [cc3m img2dataset example](https://github.com/rom1504/img2dataset/blob/main/dataset_examples/cc3m.md).

### YFCC and other datasets

In addition to specifying the training data via CSV files as mentioned above, our codebase also supports [webdataset](https://github.com/webdataset/webdataset), which is recommended for larger-scale datasets. The expected format is a series of `.tar` files. Each of these `.tar` files should contain two files for each training example, one for the image and one for the corresponding text. Both files should have the same name but different extensions. For instance, `shard_001.tar` could contain files such as `abc.jpg` and `abc.txt`. You can learn more about `webdataset` at [https://github.com/webdataset/webdataset](https://github.com/webdataset/webdataset). We use `.tar` files with 1,000 data points each, which we create using [tarp](https://github.com/webdataset/tarp).
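
The README creates its shards with tarp. If that tool is not available, the same layout can be sketched with Python's standard `tarfile` module: each example is stored as paired `<key>.jpg`/`<key>.txt` members, with a fixed number of examples per shard. This is an illustrative alternative, not the authors' tooling. `write_shards`, the `shard_000.tar` naming, and the in-memory byte payloads are assumptions made for the example.

```python
import io
import os
import tarfile

def _add_bytes(tar, name, data):
    """Add an in-memory payload to an open tar archive under `name`."""
    info = tarfile.TarInfo(name=name)
    info.size = len(data)
    tar.addfile(info, io.BytesIO(data))

def write_shards(examples, out_dir, per_shard=1000):
    """Write (key, image_bytes, caption) triples into webdataset-style .tar shards.

    Each example becomes two adjacent members sharing a basename, e.g. `abc.jpg`
    and `abc.txt`. Returns the list of shard paths written.
    """
    os.makedirs(out_dir, exist_ok=True)
    examples = list(examples)  # materialized for simplicity in this sketch
    paths = []
    for start in range(0, len(examples), per_shard):
        path = os.path.join(out_dir, f"shard_{start // per_shard:03d}.tar")
        with tarfile.open(path, "w") as tar:
            for key, image_bytes, caption in examples[start:start + per_shard]:
                _add_bytes(tar, f"{key}.jpg", image_bytes)
                _add_bytes(tar, f"{key}.txt", caption.encode("utf-8"))
        paths.append(path)
    return paths
```

For example, 2,500 examples with the default `per_shard=1000` produce three shards. The last shard holds the remaining 500 examples.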

You can download the YFCC dataset from [Multimedia Commons](http://mmcommons.org/). Similar to OpenAI, we used a subset of YFCC to reach the aforementioned accuracy numbers. The indices of images in this subset are in [OpenAI's CLIP repository](https://github.com/openai/CLIP/blob/main/data/yfcc100m.md).

## Training CLIP

### Install

We advise you to first create a virtual environment with:

```
python3 -m venv .env
source .env/bin/activate
pip install -U pip
```

You can then install OpenCLIP for training with `pip install 'open_clip_torch[training]'`.

#### Development

If you want to make changes to contribute code, you can clone OpenCLIP, then run `make install` in the `open_clip` folder (after creating a virtualenv).

Install PyTorch with pip as described at https://pytorch.org/get-started/locally/.

You may run `make install-training` to install the training dependencies.

#### Testing

Tests can be run with `make install-test`, then `make test`.

Use `python -m pytest -x -s -v tests -k "training"` to run a specific test.

Running regression tests against a specific git revision or tag:

1. Generate testing data

```sh
python tests/util_test.py --model RN50 RN101 --save_model_list models.txt --git_revision 9d31b2ec4df6d8228f370ff20c8267ec6ba39383
```

**_WARNING_: This will invoke git and modify your working tree, but will reset it to the current state after data has been generated! \
Don't modify your working tree while test data is being generated this way.**

2. Run regression tests

```sh
OPEN_CLIP_TEST_REG_MODELS=models.txt python -m pytest -x -s -v -m regression_test
```

### Sample single-process running code:

```bash
python -m training.main \
    --save-frequency 1 \
    --zeroshot-frequency 1 \
    --report-to tensorboard \
    --train-data="/path/to/train_data.csv" \
    --val-data="/path/to/validation_data.csv" \
    --csv-img-key filepath \
    --csv-caption-key title \
    --imagenet-val=/path/to/imagenet/root/val/ \
    --warmup 10000 \
    --batch-size=128 \
    --lr=1e-3 \
    --wd=0.1 \
    --epochs=30 \
    --workers=8 \
    --model RN50
```
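
The command above reads CSV files whose image-path and caption columns are named by `--csv-img-key` and `--csv-caption-key` (here `filepath` and `title`). Below is a minimal sketch of producing such a file with the standard `csv` module. The `write_caption_csv` helper is hypothetical. The delimiter is a parameter because the separator the loader expects should be checked against your version of the training options; the tab default is an assumption.

```python
import csv

def write_caption_csv(path, rows, img_key="filepath", caption_key="title", delimiter="\t"):
    """Write (image_path, caption) pairs to a CSV whose header uses the given column names.

    `img_key`/`caption_key` should match the values passed to --csv-img-key and
    --csv-caption-key. The tab default for `delimiter` is an assumption; adjust it to
    whatever separator your training setup expects.
    """
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f, delimiter=delimiter)
        writer.writerow([img_key, caption_key])
        for image_path, caption in rows:
            writer.writerow([image_path, caption])
```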

Note: `imagenet-val` is the path to the *validation* set of ImageNet for zero-shot evaluation, not the training set!
You can remove this argument if you do not want to perform zero-shot evaluation on ImageNet throughout training. Note that the `val` folder should contain subfolders. If it does not, please use [this script](https://raw.githubusercontent.com/soumith/imagenetloader.torch/master/valprep.sh).
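
Zero-shot evaluation expects the ImageNet `val` folder to be split into subfolders, so a quick layout check before launching a run can prevent a failed job. The helper below is hypothetical and uses only the standard library. It checks the folder layout only; it does not verify that the subfolder names match ImageNet class IDs.

```python
import os

IMAGE_EXTENSIONS = (".jpeg", ".jpg", ".png")

def val_folder_has_class_subfolders(val_dir):
    """Return True if `val_dir` has at least one subdirectory and no loose image files.

    A flat folder of images (the layout the valprep.sh script is meant to fix)
    returns False.
    """
    with os.scandir(val_dir) as it:
        entries = list(it)
    has_subdirs = any(e.is_dir() for e in entries)
    loose_images = any(e.is_file() and e.name.lower().endswith(IMAGE_EXTENSIONS) for e in entries)
    return has_subdirs and not loose_images
```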

### Multi-GPU and Beyond

This code has been battle-tested on up to 1024 A100s and offers a variety of solutions
for distributed training. We include native support for SLURM clusters.

As the number of devices used to train increases, so does the space complexity of
the logit matrix. Using a naïve all-gather scheme, space complexity will be
`O(n^2)`. Instead, complexity may become effectively linear if the flags
`--gather-with-grad` and `--local-loss` are used. This alteration produces numerical
results identical to those of the naïve method.
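
To make the scaling concrete, take a per-GPU batch `b` on `W` GPUs, so the global batch is `N = b * W`. If every GPU builds the full `N x N` logit matrix from all gathered features, per-GPU logit memory grows quadratically in `W`. If each GPU instead scores only its local `b` samples against all `N` gathered features, it holds a `b x N` matrix, which grows linearly in `W`. The helper below counts matrix entries under those two assumptions. It is back-of-the-envelope arithmetic, not a measurement of open_clip's actual memory use.

```python
def logit_entries_per_gpu(per_gpu_batch, num_gpus, local_loss):
    """Count entries in one image-to-text logit matrix held by a single GPU.

    Naive all-gather: every GPU builds the full (N x N) matrix, N = per_gpu_batch * num_gpus.
    Local loss: each GPU scores only its own samples against all N gathered features (b x N).
    """
    global_batch = per_gpu_batch * num_gpus
    rows = per_gpu_batch if local_loss else global_batch
    return rows * global_batch

for gpus in (8, 64, 1024):
    naive = logit_entries_per_gpu(256, gpus, local_loss=False)
    local = logit_entries_per_gpu(256, gpus, local_loss=True)
    # fp32 logits take 4 bytes per entry; 2**30 bytes = 1 GiB
    print(f"{gpus:5d} GPUs: naive {naive * 4 / 2**30:8.2f} GiB, local {local * 4 / 2**30:6.3f} GiB")
```

With 256 samples per GPU on 1024 GPUs, the naive matrix alone is 256 GiB in fp32. The local variant is 0.25 GiB.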

#### Epochs

For larger datasets (e.g. LAION-2B), we recommend setting `--train-num-samples` to a lower value than the full epoch, for example `--train-num-samples 135646078` for 1/16 of an epoch, in conjunction with `--dataset-resampled` to sample with replacement. This produces more frequent checkpoints, so the model can be evaluated more often.
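
In that example, 16 × 135,646,078 = 2,170,337,248, so the implied full pass is about 2.17B samples, and each "epoch" covers 1/16 of it. With `--dataset-resampled`, the total samples seen is then roughly `--epochs` × `--train-num-samples`. The sketch below does that bookkeeping. The helper names are illustrative, and the 34B target is borrowed from the ViT-g/14 schedule listed earlier.

```python
import math

def samples_per_epoch(dataset_size, fraction):
    """Value for --train-num-samples when each 'epoch' should cover `fraction` of the data."""
    return int(dataset_size * fraction)

def epochs_for_target(samples_seen_target, train_num_samples):
    """Number of such epochs needed to reach a total samples-seen budget (rounded up)."""
    return math.ceil(samples_seen_target / train_num_samples)

full_pass = 16 * 135_646_078            # the full epoch implied by the README example
per_epoch = samples_per_epoch(full_pass, 1 / 16)
print(per_epoch)                         # 135646078
print(epochs_for_target(34_000_000_000, per_epoch))  # 251
```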

#### Patch Dropout

<a href="https://arxiv.org/abs/2212.00794">Recent research</a> has shown that one can drop out half to three-quarters of the visual tokens, leading to up to 2-3x faster training without loss of accuracy.

You can set this in your visual transformer config with the key `patch_dropout`.

In the paper, they also fine-tuned without patch dropout at the end. You can do this with the command-line argument `--force-patch-dropout 0.`
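
Conceptually, patch dropout keeps a random subset of the image patch tokens during training and always keeps the class token. Below is a pure-Python sketch of that selection on a list of tokens. It assumes the class token sits at position 0 and that the dropout value is the fraction of patches dropped. It illustrates the idea only; it is not open_clip's implementation, which operates on batched tensors.

```python
import random

def patch_dropout(tokens, drop_prob, rng=None, keep_first=True):
    """Randomly keep roughly a (1 - drop_prob) fraction of the patch tokens.

    If keep_first is True, tokens[0] is treated as the class token and is never dropped.
    Surviving patches keep their original relative order.
    """
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    rng = rng or random.Random()
    tokens = list(tokens)
    head, patches = (tokens[:1], tokens[1:]) if keep_first else ([], tokens)
    if not patches:
        return tokens
    # Keep at least one patch so the image is never reduced to the class token alone.
    num_keep = max(1, int(len(patches) * (1.0 - drop_prob)))
    kept_idx = sorted(rng.sample(range(len(patches)), num_keep))
    return head + [patches[i] for i in kept_idx]
```

For example, a 224x224 input with 16x16 patches gives 196 patch tokens. With `drop_prob=0.5`, 98 patches plus the class token survive, roughly halving the sequence the transformer processes.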

#### Multiple data sources

OpenCLIP supports using multiple data sources by separating the different data paths with `::`.
For instance, to train on CC12M and on LAION, one might use `--train-data '/data/cc12m/cc12m-train-{0000..2175}.tar::/data/LAION-400M/{00000..41455}.tar'`.
Using `--dataset-resampled` is recommended for these cases.

By default, the expected number of samples the model sees from each source is proportional to the size of that source.
For instance, when training on one data source with size 400M and one with size 10M, the first source is sampled 40x more often in expectation.

We also support different weightings of the data sources through the `--train-data-upsampling-factors` flag.
For instance, using `--train-data-upsampling-factors=1::1` in the above scenario is equivalent to not using the flag, and `--train-data-upsampling-factors=1::2` is equivalent to upsampling the second data source by a factor of 2.
If you want to sample from data sources with the same frequency, the upsampling factors should be inversely proportional to the sizes of the data sources.
For instance, if dataset `A` has 1000 samples and dataset `B` has 100 samples, you can use `--train-data-upsampling-factors=0.001::0.01` (or analogously, `--train-data-upsampling-factors=1::10`).
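
The weighting rule above amounts to this: the probability of drawing from source *i* is proportional to `size_i * factor_i`. The sketch below reproduces the README's examples. `source_weights` and `parse_factors` are hypothetical helpers, not open_clip functions. They model only the expectation, not the actual shard-level resampling.

```python
def source_weights(sizes, factors=None):
    """Normalized probability of drawing the next sample from each data source.

    With no factors, sources are weighted by size; factors (as in
    --train-data-upsampling-factors=a::b) multiply each source's weight.
    """
    if factors is None:
        factors = [1.0] * len(sizes)
    if len(factors) != len(sizes):
        raise ValueError("need one upsampling factor per data source")
    raw = [s * f for s, f in zip(sizes, factors)]
    total = sum(raw)
    return [r / total for r in raw]

def parse_factors(flag_value):
    """Split a '::'-separated factor string such as '1::10' into floats."""
    return [float(x) for x in flag_value.split("::")]
```

For instance, `source_weights([1000, 100], parse_factors("1::10"))` gives equal weights. This matches the README's statement that the factors should be inversely proportional to the dataset sizes.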

#### Single-Node

We make use of `torchrun` to launch distributed jobs. The following launches a
job on a node with 4 GPUs:

```bash
cd open_clip/src
torchrun --nproc_per_node 4 -m training.main \
    --train-data '/data/cc12m/cc12m-train-{0000..2175}.tar' \
    --train-num-samples 10968539 \
    --dataset-type webdataset \
    --batch-size 320 \
    --precision amp \
    --workers 4 \
    --imagenet-val /data/imagenet/validation/
```
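
`torchrun` starts one process per GPU (`--nproc_per_node 4` above) and tells each process its place in the job through environment variables such as `RANK`, `LOCAL_RANK` and `WORLD_SIZE`. The sketch below reads those variables, falling back to single-process values, and computes the effective global batch. It assumes `--batch-size` is a per-process (per-GPU) value. The helper names are illustrative and not taken from open_clip's training code.

```python
import os

def dist_env(environ=None):
    """Read the rank/world-size variables torchrun sets, defaulting to a single process."""
    env = os.environ if environ is None else environ
    return {
        "rank": int(env.get("RANK", 0)),
        "local_rank": int(env.get("LOCAL_RANK", 0)),
        "world_size": int(env.get("WORLD_SIZE", 1)),
    }

def global_batch_size(per_gpu_batch, world_size):
    """Total samples per optimizer step across all processes (assumes a per-GPU batch size)."""
    return per_gpu_batch * world_size

info = dist_env()
print(info, global_batch_size(320, info["world_size"]))
```

Under that assumption, the 4-GPU command above processes 320 × 4 = 1,280 samples per step.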

#### Multi-Node

The same script above works, as long as users include information about the number
of nodes and the host node.

```bash
cd open_clip/src
torchrun --nproc_per_node=4 \
    --rdzv_endpoint=$HOST_NODE_ADDR \
    -m training.main \
    --train-data '/data/cc12m/cc12m-train-{0000..2175}.tar' \
    --train-num-samples 10968539 \
    --dataset-type webdataset \
    --batch-size 320 \
    --precision amp \
    --workers 4 \
    --imagenet-val /data/imagenet/validation/
```

#### SLURM

This is likely the easiest solution to use. The following script was used to
train our largest models:

```bash
#!/bin/bash -x
#SBATCH --nodes=32
#SBATCH --gres=gpu:4
#SBATCH --ntasks-per-node=4
#SBATCH --cpus-per-task=6
#SBATCH --wait-all-nodes=1
#SBATCH --job-name=open_clip
#SBATCH --account=ACCOUNT_NAME
#SBATCH --partition PARTITION_NAME

eval "$(/path/to/conda/bin/conda shell.bash hook)" # init conda
conda activate open_clip
export CUDA_VISIBLE_DEVICES=0,1,2,3
export MASTER_PORT=12802

master_addr=$(scontrol show hostnames "$SLURM_JOB_NODELIST" | head -n 1)
export MASTER_ADDR=$master_addr

cd /shared/open_clip
export PYTHONPATH="$PYTHONPATH:$PWD/src"
srun --cpu_bind=v --accel-bind=gn python -u src/training/main.py \
    --save-frequency 1 \
    --report-to tensorboard \
    --train-data="/data/LAION-400M/{00000..41455}.tar" \