Spaces:

Build error

Build error

Commit ·

45b200f

0

Parent(s):

Clean state

Browse files- .gitattributes +35 -0

- .gitignore +37 -0

- 1_get_files.ipynb +162 -0

- 2_simplest_approach.ipynb +365 -0

- 3_tools_approach.ipynb +640 -0

- README.md +26 -0

- app.py +249 -0

- draft_1.py +3 -0

- global_functions.py +8 -0

- globals.py +71 -0

- gradio_functions.py +62 -0

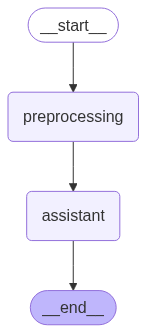

- langgraph.png +0 -0

- langgraph_agent.py +194 -0

- requirements.txt +2 -0

- retriever.py +0 -0

- togetherai_chat_example.py +20 -0

- togetherai_pic_generation_example.py +66 -0

- tools.py +179 -0

- workflow_simple.png +0 -0

- workflow_tools.png +0 -0

- x_audio_analysis.ipynb +165 -0

- x_exel_files_loader.ipynb +109 -0

- x_pic_generation.ipynb +92 -0

- x_python_code_executor.ipynb +200 -0

- x_wikipedia.ipynb +90 -0

- x_youtube_loader.ipynb +347 -0

.gitattributes

ADDED

|

@@ -0,0 +1,35 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

.gitignore

ADDED

|

@@ -0,0 +1,37 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

__pycache__

|

| 3 |

+

venv

|

| 4 |

+

env

|

| 5 |

+

.env

|

| 6 |

+

.venv

|

| 7 |

+

.pytest_cache

|

| 8 |

+

.coverage

|

| 9 |

+

.idea

|

| 10 |

+

.vscode

|

| 11 |

+

lightning_logs

|

| 12 |

+

.ipynb_checkpoints

|

| 13 |

+

.ckpt

|

| 14 |

+

example.ckpt

|

| 15 |

+

.neptune

|

| 16 |

+

logs_for_plots

|

| 17 |

+

logs_for_heuristics

|

| 18 |

+

logs_for_graphs

|

| 19 |

+

logs_for_freedom_maps

|

| 20 |

+

logs_for_experiments

|

| 21 |

+

heuristic_tables

|

| 22 |

+

stats

|

| 23 |

+

videos

|

| 24 |

+

algs_RL/stasts

|

| 25 |

+

.DS_Store

|

| 26 |

+

saved_replays

|

| 27 |

+

my_folder

|

| 28 |

+

results

|

| 29 |

+

test-trainer

|

| 30 |

+

.gradio

|

| 31 |

+

alfred_chroma_db

|

| 32 |

+

lib

|

| 33 |

+

flow.html

|

| 34 |

+

mlruns

|

| 35 |

+

models_for_proj

|

| 36 |

+

files

|

| 37 |

+

pics

|

1_get_files.ipynb

ADDED

|

@@ -0,0 +1,162 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"cells": [

|

| 3 |

+

{

|

| 4 |

+

"metadata": {

|

| 5 |

+

"ExecuteTime": {

|

| 6 |

+

"end_time": "2025-06-08T16:23:31.534793Z",

|

| 7 |

+

"start_time": "2025-06-08T16:23:31.531154Z"

|

| 8 |

+

}

|

| 9 |

+

},

|

| 10 |

+

"cell_type": "code",

|

| 11 |

+

"source": [

|

| 12 |

+

"from globals import *\n",

|

| 13 |

+

"DEFAULT_API_URL = \"https://agents-course-unit4-scoring.hf.space\"\n",

|

| 14 |

+

"api_url = DEFAULT_API_URL\n",

|

| 15 |

+

"questions_url = f\"{api_url}/questions\"\n",

|

| 16 |

+

"submit_url = f\"{api_url}/submit\"\n",

|

| 17 |

+

"file_url = f\"{api_url}/files\""

|

| 18 |

+

],

|

| 19 |

+

"id": "f59c08d782ebc6bd",

|

| 20 |

+

"outputs": [],

|

| 21 |

+

"execution_count": 4

|

| 22 |

+

},

|

| 23 |

+

{

|

| 24 |

+

"metadata": {

|

| 25 |

+

"ExecuteTime": {

|

| 26 |

+

"end_time": "2025-06-08T15:07:07.789828Z",

|

| 27 |

+

"start_time": "2025-06-08T15:07:07.098834Z"

|

| 28 |

+

}

|

| 29 |

+

},

|

| 30 |

+

"cell_type": "code",

|

| 31 |

+

"source": [

|

| 32 |

+

"response = requests.get(questions_url, timeout=15)\n",

|

| 33 |

+

"response.raise_for_status()\n",

|

| 34 |

+

"questions_data = response.json()"

|

| 35 |

+

],

|

| 36 |

+

"id": "81985fdf7fcffcc9",

|

| 37 |

+

"outputs": [],

|

| 38 |

+

"execution_count": 2

|

| 39 |

+

},

|

| 40 |

+

{

|

| 41 |

+

"metadata": {

|

| 42 |

+

"ExecuteTime": {

|

| 43 |

+

"end_time": "2025-06-08T16:30:24.102354Z",

|

| 44 |

+

"start_time": "2025-06-08T16:30:24.099451Z"

|

| 45 |

+

}

|

| 46 |

+

},

|

| 47 |

+

"cell_type": "code",

|

| 48 |

+

"source": [

|

| 49 |

+

"for item_num, item in enumerate(questions_data):\n",

|

| 50 |

+

" # dict_keys(['task_id', 'question', 'Level', 'file_name'])\n",

|

| 51 |

+

" # print(item['question'])\n",

|

| 52 |

+

" if item['file_name'] != '':\n",

|

| 53 |

+

" print(f\"Task {item_num} has file: {item['file_name']}\")\n",

|

| 54 |

+

" # print(f\"The question: \\n {item['question']} \\n\")"

|

| 55 |

+

],

|

| 56 |

+

"id": "28fe3ba72aa61a85",

|

| 57 |

+

"outputs": [

|

| 58 |

+

{

|

| 59 |

+

"name": "stdout",

|

| 60 |

+

"output_type": "stream",

|

| 61 |

+

"text": [

|

| 62 |

+

"Task 3 has file: cca530fc-4052-43b2-b130-b30968d8aa44.png\n",

|

| 63 |

+

"Task 9 has file: 99c9cc74-fdc8-46c6-8f8d-3ce2d3bfeea3.mp3\n",

|

| 64 |

+

"Task 11 has file: f918266a-b3e0-4914-865d-4faa564f1aef.py\n",

|

| 65 |

+

"Task 13 has file: 1f975693-876d-457b-a649-393859e79bf3.mp3\n",

|

| 66 |

+

"Task 18 has file: 7bd855d8-463d-4ed5-93ca-5fe35145f733.xlsx\n"

|

| 67 |

+

]

|

| 68 |

+

}

|

| 69 |

+

],

|

| 70 |

+

"execution_count": 10

|

| 71 |

+

},

|

| 72 |

+

{

|

| 73 |

+

"metadata": {

|

| 74 |

+

"ExecuteTime": {

|

| 75 |

+

"end_time": "2025-06-08T16:38:46.821091Z",

|

| 76 |

+

"start_time": "2025-06-08T16:38:46.143120Z"

|

| 77 |

+

}

|

| 78 |

+

},

|

| 79 |

+

"cell_type": "code",

|

| 80 |

+

"source": [

|

| 81 |

+

"# dict_keys(['task_id', 'question', 'Level', 'file_name'])\n",

|

| 82 |

+

"item_num = 18\n",

|

| 83 |

+

"item = questions_data[item_num]\n",

|

| 84 |

+

"print('---')\n",

|

| 85 |

+

"print(f\"{item['task_id']}\")\n",

|

| 86 |

+

"print(f\"Task {item_num} has file: {item['file_name']}\")\n",

|

| 87 |

+

"\n",

|

| 88 |

+

"response = requests.get(f\"{file_url}/{item['task_id']}\", timeout=15)\n",

|

| 89 |

+

"response.raise_for_status()\n",

|

| 90 |

+

"file_data = response.url\n",

|

| 91 |

+

"print(file_data)\n",

|

| 92 |

+

"print('---')"

|

| 93 |

+

],

|

| 94 |

+

"id": "829cb65e4c515908",

|

| 95 |

+

"outputs": [

|

| 96 |

+

{

|

| 97 |

+

"name": "stdout",

|

| 98 |

+

"output_type": "stream",

|

| 99 |

+

"text": [

|

| 100 |

+

"---\n",

|

| 101 |

+

"7bd855d8-463d-4ed5-93ca-5fe35145f733\n",

|

| 102 |

+

"Task 18 has file: 7bd855d8-463d-4ed5-93ca-5fe35145f733.xlsx\n",

|

| 103 |

+

"https://agents-course-unit4-scoring.hf.space/files/7bd855d8-463d-4ed5-93ca-5fe35145f733\n",

|

| 104 |

+

"---\n"

|

| 105 |

+

]

|

| 106 |

+

}

|

| 107 |

+

],

|

| 108 |

+

"execution_count": 14

|

| 109 |

+

},

|

| 110 |

+

{

|

| 111 |

+

"metadata": {

|

| 112 |

+

"ExecuteTime": {

|

| 113 |

+

"end_time": "2025-06-08T16:29:01.814363Z",

|

| 114 |

+

"start_time": "2025-06-08T16:29:01.811569Z"

|

| 115 |

+

}

|

| 116 |

+

},

|

| 117 |

+

"cell_type": "code",

|

| 118 |

+

"source": "\n",

|

| 119 |

+

"id": "6108349553a14924",

|

| 120 |

+

"outputs": [

|

| 121 |

+

{

|

| 122 |

+

"name": "stdout",

|

| 123 |

+

"output_type": "stream",

|

| 124 |

+

"text": [

|

| 125 |

+

"https://agents-course-unit4-scoring.hf.space/files/cca530fc-4052-43b2-b130-b30968d8aa44\n",

|

| 126 |

+

"---\n"

|

| 127 |

+

]

|

| 128 |

+

}

|

| 129 |

+

],

|

| 130 |

+

"execution_count": 9

|

| 131 |

+

},

|

| 132 |

+

{

|

| 133 |

+

"metadata": {},

|

| 134 |

+

"cell_type": "code",

|

| 135 |

+

"outputs": [],

|

| 136 |

+

"execution_count": null,

|

| 137 |

+

"source": "",

|

| 138 |

+

"id": "f3cca13bc30bca7b"

|

| 139 |

+

}

|

| 140 |

+

],

|

| 141 |

+

"metadata": {

|

| 142 |

+

"kernelspec": {

|

| 143 |

+

"display_name": "Python 3",

|

| 144 |

+

"language": "python",

|

| 145 |

+

"name": "python3"

|

| 146 |

+

},

|

| 147 |

+

"language_info": {

|

| 148 |

+

"codemirror_mode": {

|

| 149 |

+

"name": "ipython",

|

| 150 |

+

"version": 2

|

| 151 |

+

},

|

| 152 |

+

"file_extension": ".py",

|

| 153 |

+

"mimetype": "text/x-python",

|

| 154 |

+

"name": "python",

|

| 155 |

+

"nbconvert_exporter": "python",

|

| 156 |

+

"pygments_lexer": "ipython2",

|

| 157 |

+

"version": "2.7.6"

|

| 158 |

+

}

|

| 159 |

+

},

|

| 160 |

+

"nbformat": 4,

|

| 161 |

+

"nbformat_minor": 5

|

| 162 |

+

}

|

2_simplest_approach.ipynb

ADDED

|

@@ -0,0 +1,365 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"cells": [

|

| 3 |

+

{

|

| 4 |

+

"metadata": {},

|

| 5 |

+

"cell_type": "markdown",

|

| 6 |

+

"source": "Preparations",

|

| 7 |

+

"id": "85e57249794e16a7"

|

| 8 |

+

},

|

| 9 |

+

{

|

| 10 |

+

"cell_type": "code",

|

| 11 |

+

"id": "initial_id",

|

| 12 |

+

"metadata": {

|

| 13 |

+

"collapsed": true,

|

| 14 |

+

"ExecuteTime": {

|

| 15 |

+

"end_time": "2025-06-08T16:21:51.896485Z",

|

| 16 |

+

"start_time": "2025-06-08T16:21:51.893462Z"

|

| 17 |

+

}

|

| 18 |

+

},

|

| 19 |

+

"source": [

|

| 20 |

+

"from globals import *\n",

|

| 21 |

+

"DEFAULT_API_URL = \"https://agents-course-unit4-scoring.hf.space\"\n",

|

| 22 |

+

"api_url = DEFAULT_API_URL\n",

|

| 23 |

+

"questions_url = f\"{api_url}/questions\"\n",

|

| 24 |

+

"submit_url = f\"{api_url}/submit\"\n",

|

| 25 |

+

"file_url = f\"{api_url}/files\""

|

| 26 |

+

],

|

| 27 |

+

"outputs": [],

|

| 28 |

+

"execution_count": 32

|

| 29 |

+

},

|

| 30 |

+

{

|

| 31 |

+

"metadata": {

|

| 32 |

+

"ExecuteTime": {

|

| 33 |

+

"end_time": "2025-06-07T14:46:28.498789Z",

|

| 34 |

+

"start_time": "2025-06-07T14:46:27.794622Z"

|

| 35 |

+

}

|

| 36 |

+

},

|

| 37 |

+

"cell_type": "code",

|

| 38 |

+

"source": [

|

| 39 |

+

"# get questions\n",

|

| 40 |

+

"response = requests.get(questions_url, timeout=15)\n",

|

| 41 |

+

"response.raise_for_status()\n",

|

| 42 |

+

"questions_data = response.json()"

|

| 43 |

+

],

|

| 44 |

+

"id": "2fc7ef4f0959246b",

|

| 45 |

+

"outputs": [],

|

| 46 |

+

"execution_count": 28

|

| 47 |

+

},

|

| 48 |

+

{

|

| 49 |

+

"metadata": {

|

| 50 |

+

"ExecuteTime": {

|

| 51 |

+

"end_time": "2025-06-08T16:49:29.230136Z",

|

| 52 |

+

"start_time": "2025-06-08T16:49:29.227812Z"

|

| 53 |

+

}

|

| 54 |

+

},

|

| 55 |

+

"cell_type": "code",

|

| 56 |

+

"source": [

|

| 57 |

+

"for item_num, item in enumerate(questions_data):\n",

|

| 58 |

+

" # dict_keys(['task_id', 'question', 'Level', 'file_name'])\n",

|

| 59 |

+

" # print(item['question'])\n",

|

| 60 |

+

" # print('---')\n",

|

| 61 |

+

" # print(f\"{item['task_id']}\")\n",

|

| 62 |

+

" print(f\"Task {item_num} has file: {item['file_name']}\")\n",

|

| 63 |

+

" # print(f\"The question: \\n {item['question']} \\n\")"

|

| 64 |

+

],

|

| 65 |

+

"id": "8a00fe57d4ec29bb",

|

| 66 |

+

"outputs": [

|

| 67 |

+

{

|

| 68 |

+

"name": "stdout",

|

| 69 |

+

"output_type": "stream",

|

| 70 |

+

"text": [

|

| 71 |

+

"Task 0 has file: \n",

|

| 72 |

+

"Task 1 has file: \n",

|

| 73 |

+

"Task 2 has file: \n",

|

| 74 |

+

"Task 3 has file: cca530fc-4052-43b2-b130-b30968d8aa44.png\n",

|

| 75 |

+

"Task 4 has file: \n",

|

| 76 |

+

"Task 5 has file: \n",

|

| 77 |

+

"Task 6 has file: \n",

|

| 78 |

+

"Task 7 has file: \n",

|

| 79 |

+

"Task 8 has file: \n",

|

| 80 |

+

"Task 9 has file: 99c9cc74-fdc8-46c6-8f8d-3ce2d3bfeea3.mp3\n",

|

| 81 |

+

"Task 10 has file: \n",

|

| 82 |

+

"Task 11 has file: f918266a-b3e0-4914-865d-4faa564f1aef.py\n",

|

| 83 |

+

"Task 12 has file: \n",

|

| 84 |

+

"Task 13 has file: 1f975693-876d-457b-a649-393859e79bf3.mp3\n",

|

| 85 |

+

"Task 14 has file: \n",

|

| 86 |

+

"Task 15 has file: \n",

|

| 87 |

+

"Task 16 has file: \n",

|

| 88 |

+

"Task 17 has file: \n",

|

| 89 |

+

"Task 18 has file: 7bd855d8-463d-4ed5-93ca-5fe35145f733.xlsx\n",

|

| 90 |

+

"Task 19 has file: \n"

|

| 91 |

+

]

|

| 92 |

+

}

|

| 93 |

+

],

|

| 94 |

+

"execution_count": 38

|

| 95 |

+

},

|

| 96 |

+

{

|

| 97 |

+

"metadata": {

|

| 98 |

+

"ExecuteTime": {

|

| 99 |

+

"end_time": "2025-06-04T18:23:01.054690Z",

|

| 100 |

+

"start_time": "2025-06-04T18:22:59.280217Z"

|

| 101 |

+

}

|

| 102 |

+

},

|

| 103 |

+

"cell_type": "code",

|

| 104 |

+

"source": [

|

| 105 |

+

"train_dataset = load_dataset(\"gaia-benchmark/GAIA\", '2023_level1', split=\"validation\")\n",

|

| 106 |

+

"len(train_dataset)"

|

| 107 |

+

],

|

| 108 |

+

"id": "d6216c8b17766ad8",

|

| 109 |

+

"outputs": [

|

| 110 |

+

{

|

| 111 |

+

"data": {

|

| 112 |

+

"text/plain": [

|

| 113 |

+

"53"

|

| 114 |

+

]

|

| 115 |

+

},

|

| 116 |

+

"execution_count": 22,

|

| 117 |

+

"metadata": {},

|

| 118 |

+

"output_type": "execute_result"

|

| 119 |

+

}

|

| 120 |

+

],

|

| 121 |

+

"execution_count": 22

|

| 122 |

+

},

|

| 123 |

+

{

|

| 124 |

+

"metadata": {

|

| 125 |

+

"ExecuteTime": {

|

| 126 |

+

"end_time": "2025-06-04T18:24:32.847570Z",

|

| 127 |

+

"start_time": "2025-06-04T18:24:32.844925Z"

|

| 128 |

+

}

|

| 129 |

+

},

|

| 130 |

+

"cell_type": "code",

|

| 131 |

+

"source": [

|

| 132 |

+

"print(train_dataset[0].keys())\n",

|

| 133 |

+

"item_0 = train_dataset[0]\n",

|

| 134 |

+

"# for item in train_dataset:\n",

|

| 135 |

+

"# print(item)"

|

| 136 |

+

],

|

| 137 |

+

"id": "ace71ed85c088f6e",

|

| 138 |

+

"outputs": [

|

| 139 |

+

{

|

| 140 |

+

"name": "stdout",

|

| 141 |

+

"output_type": "stream",

|

| 142 |

+

"text": [

|

| 143 |

+

"dict_keys(['task_id', 'Question', 'Level', 'Final answer', 'file_name', 'file_path', 'Annotator Metadata'])\n"

|

| 144 |

+

]

|

| 145 |

+

}

|

| 146 |

+

],

|

| 147 |

+

"execution_count": 25

|

| 148 |

+

},

|

| 149 |

+

{

|

| 150 |

+

"metadata": {},

|

| 151 |

+

"cell_type": "markdown",

|

| 152 |

+

"source": "Simplest approach - just ask LLM",

|

| 153 |

+

"id": "81dbae05a73009a4"

|

| 154 |

+

},

|

| 155 |

+

{

|

| 156 |

+

"metadata": {

|

| 157 |

+

"ExecuteTime": {

|

| 158 |

+

"end_time": "2025-06-06T17:02:03.029164Z",

|

| 159 |

+

"start_time": "2025-06-06T17:02:02.986724Z"

|

| 160 |

+

}

|

| 161 |

+

},

|

| 162 |

+

"cell_type": "code",

|

| 163 |

+

"source": [

|

| 164 |

+

"from globals import *\n",

|

| 165 |

+

"from tools import *\n",

|

| 166 |

+

"\n",

|

| 167 |

+

"# ------------------------------------------------------ #\n",

|

| 168 |

+

"# MODELS & TOOLS\n",

|

| 169 |

+

"# ------------------------------------------------------ #\n",

|

| 170 |

+

"chat_llm = ChatTogether(model=\"meta-llama/Llama-3.3-70B-Instruct-Turbo-Free\", api_key=os.getenv(\"TOGETHER_API_KEY\"))\n",

|

| 171 |

+

"\n",

|

| 172 |

+

"# ------------------------------------------------------ #\n",

|

| 173 |

+

"# STATE\n",

|

| 174 |

+

"# ------------------------------------------------------ #\n",

|

| 175 |

+

"class AgentState(TypedDict):\n",

|

| 176 |

+

" # messages: list[AnyMessage, add_messages]\n",

|

| 177 |

+

" messages: list[AnyMessage]\n",

|

| 178 |

+

" # final_output_is_good: bool\n",

|

| 179 |

+

"\n",

|

| 180 |

+

"# ------------------------------------------------------ #\n",

|

| 181 |

+

"# HELP FUNCTIONS\n",

|

| 182 |

+

"# ------------------------------------------------------ #\n",

|

| 183 |

+

"def step_print(state: AgentState, step_label: str):\n",

|

| 184 |

+

" print(f'<<--- [{len(state[\"messages\"])}] Starting {step_label}... --->>')\n",

|

| 185 |

+

"\n",

|

| 186 |

+

"def messages_print(messages_to_print: List[AnyMessage]):\n",

|

| 187 |

+

" print('--- Message/s ---')\n",

|

| 188 |

+

" for m in messages_to_print:\n",

|

| 189 |

+

" print(f'{m.type} ({m.name}): \\n{m.content}')\n",

|

| 190 |

+

" print(f'<<--- *** --->>')\n",

|

| 191 |

+

"\n",

|

| 192 |

+

"# ------------------------------------------------------ #\n",

|

| 193 |

+

"# NODES\n",

|

| 194 |

+

"# ------------------------------------------------------ #\n",

|

| 195 |

+

"def preprocessing(state: AgentState):\n",

|

| 196 |

+

" step_print(state, 'Preprocessing')\n",

|

| 197 |

+

" messages_print(state['messages'][-1:])\n",

|

| 198 |

+

" return {\n",

|

| 199 |

+

" \"messages\": [SystemMessage(content=DEFAULT_SYSTEM_PROMPT)] + state[\"messages\"]\n",

|

| 200 |

+

" }\n",

|

| 201 |

+

"\n",

|

| 202 |

+

"\n",

|

| 203 |

+

"def assistant(state: AgentState):\n",

|

| 204 |

+

" # state[\"messages\"] = [SystemMessage(content=DEFAULT_SYSTEM_PROMPT)] + state[\"messages\"]\n",

|

| 205 |

+

" step_print(state, 'assistant')\n",

|

| 206 |

+

" ai_message = chat_llm.invoke(state[\"messages\"])\n",

|

| 207 |

+

" messages_print([ai_message])\n",

|

| 208 |

+

" return {\n",

|

| 209 |

+

" 'messages': state[\"messages\"] + [ai_message]\n",

|

| 210 |

+

" }\n",

|

| 211 |

+

"\n",

|

| 212 |

+

"\n",

|

| 213 |

+

"base_tool_node = ToolNode(tools)\n",

|

| 214 |

+

"def wrapped_tool_node(state: AgentState):\n",

|

| 215 |

+

" step_print(state, 'Tools')\n",

|

| 216 |

+

" # Call the original ToolNode\n",

|

| 217 |

+

" result = base_tool_node.invoke(state)\n",

|

| 218 |

+

" messages_print(result[\"messages\"])\n",

|

| 219 |

+

" # Append to the messages list instead of replacing it\n",

|

| 220 |

+

" state[\"messages\"] += result[\"messages\"]\n",

|

| 221 |

+

" return {\"messages\": state[\"messages\"]}\n",

|

| 222 |

+

"\n",

|

| 223 |

+

"\n",

|

| 224 |

+

"# ------------------------------------------------------ #\n",

|

| 225 |

+

"# CONDITIONAL FUNCTIONS\n",

|

| 226 |

+

"# ------------------------------------------------------ #\n",

|

| 227 |

+

"def condition_tools_or_continue(\n",

|

| 228 |

+

" state: Union[list[AnyMessage], dict[str, Any], BaseModel],\n",

|

| 229 |

+

" messages_key: str = \"messages\",\n",

|

| 230 |

+

") -> Literal[\"tools\", \"__end__\"]:\n",

|

| 231 |

+

"\n",

|

| 232 |

+

" if isinstance(state, list):\n",

|

| 233 |

+

" ai_message = state[-1]\n",

|

| 234 |

+

" elif isinstance(state, dict) and (messages := state.get(messages_key, [])):\n",

|

| 235 |

+

" ai_message = messages[-1]\n",

|

| 236 |

+

" elif messages := getattr(state, messages_key, []):\n",

|

| 237 |

+

" ai_message = messages[-1]\n",

|

| 238 |

+

" else:\n",

|

| 239 |

+

" raise ValueError(f\"No messages found in input state to tool_edge: {state}\")\n",

|

| 240 |

+

" if hasattr(ai_message, \"tool_calls\") and len(ai_message.tool_calls) > 0:\n",

|

| 241 |

+

" return \"tools\"\n",

|

| 242 |

+

" # return \"checker_final_answer\"\n",

|

| 243 |

+

" return \"__end__\"\n",

|

| 244 |

+

"\n",

|

| 245 |

+

"\n",

|

| 246 |

+

"# ------------------------------------------------------ #\n",

|

| 247 |

+

"# BUILDERS\n",

|

| 248 |

+

"# ------------------------------------------------------ #\n",

|

| 249 |

+

"def workflow_simple() -> Tuple[StateGraph, str]:\n",

|

| 250 |

+

" i_builder = StateGraph(AgentState)\n",

|

| 251 |

+

" # Nodes\n",

|

| 252 |

+

" i_builder.add_node('preprocessing', preprocessing)\n",

|

| 253 |

+

" i_builder.add_node('assistant', assistant)\n",

|

| 254 |

+

"\n",

|

| 255 |

+

" # Edges\n",

|

| 256 |

+

" i_builder.add_edge(START, 'preprocessing')\n",

|

| 257 |

+

" i_builder.add_edge('preprocessing', 'assistant')\n",

|

| 258 |

+

" return i_builder, 'workflow_simple'\n",

|

| 259 |

+

"\n",

|

| 260 |

+

"\n",

|

| 261 |

+

"# ------------------------------------------------------ #\n",

|

| 262 |

+

"# COMPILATION\n",

|

| 263 |

+

"# ------------------------------------------------------ #\n",

|

| 264 |

+

"builder, builder_name = workflow_simple()\n",

|

| 265 |

+

"alfred = builder.compile()"

|

| 266 |

+

],

|

| 267 |

+

"id": "9dda3c180ddb1cf6",

|

| 268 |

+

"outputs": [],

|

| 269 |

+

"execution_count": 9

|

| 270 |

+

},

|

| 271 |

+

{

|

| 272 |

+

"metadata": {

|

| 273 |

+

"ExecuteTime": {

|

| 274 |

+

"end_time": "2025-06-06T17:02:14.364108Z",

|

| 275 |

+

"start_time": "2025-06-06T17:02:04.962236Z"

|

| 276 |

+

}

|

| 277 |

+

},

|

| 278 |

+

"cell_type": "code",

|

| 279 |

+

"source": "response = alfred.invoke({'messages': [HumanMessage(content=\"If Eliud Kipchoge could maintain his record-making marathon pace indefinitely, how many thousand hours would it take him to run the distance between the Earth and the Moon its closest approach? Please use the minimum perigee value on the Wikipedia page for the Moon when carrying out your calculation. Round your result to the nearest 1000 hours and do not use any comma separators if necessary.\")]})",

|

| 280 |

+

"id": "817c59e55d4ccd37",

|

| 281 |

+

"outputs": [

|

| 282 |

+

{

|

| 283 |

+

"name": "stdout",

|

| 284 |

+

"output_type": "stream",

|

| 285 |

+

"text": [

|

| 286 |

+

"<<--- [1] Starting Preprocessing... --->>\n",

|

| 287 |

+

"--- Message/s ---\n",

|

| 288 |

+

"human (None): \n",

|

| 289 |

+

"If Eliud Kipchoge could maintain his record-making marathon pace indefinitely, how many thousand hours would it take him to run the distance between the Earth and the Moon its closest approach? Please use the minimum perigee value on the Wikipedia page for the Moon when carrying out your calculation. Round your result to the nearest 1000 hours and do not use any comma separators if necessary.\n",

|

| 290 |

+

"<<--- *** --->>\n",

|

| 291 |

+

"<<--- [2] Starting assistant... --->>\n",

|

| 292 |

+

"--- Message/s ---\n",

|

| 293 |

+

"ai (None): \n",

|

| 294 |

+

"To calculate the time it would take Eliud Kipchoge to run from the Earth to the Moon at its closest approach, we first need to find out the distance between the Earth and the Moon at its closest approach and Eliud Kipchoge's speed.\n",

|

| 295 |

+

"\n",

|

| 296 |

+

"The minimum perigee value for the Moon, according to Wikipedia, is approximately 356400 kilometers.\n",

|

| 297 |

+

"\n",

|

| 298 |

+

"Eliud Kipchoge's record-making marathon pace is 2:01:39 hours for 42.195 kilometers. To find his speed in kilometers per hour, we divide the distance by the time. \n",

|

| 299 |

+

"\n",

|

| 300 |

+

"First, convert 2:01:39 hours to just hours: 2 + (1/60) + (39/3600) = 2 + 0.0167 + 0.0108 = 2.0275 hours.\n",

|

| 301 |

+

"\n",

|

| 302 |

+

"Now, calculate his speed: 42.195 km / 2.0275 hours = 20.818 km/h.\n",

|

| 303 |

+

"\n",

|

| 304 |

+

"Now, calculate the time it would take to run 356400 km at this speed: 356400 km / 20.818 km/h = 17127 hours.\n",

|

| 305 |

+

"\n",

|

| 306 |

+

"To convert this to thousand hours, divide by 1000: 17127 / 1000 = 17.127.\n",

|

| 307 |

+

"\n",

|

| 308 |

+

"Rounded to the nearest 1000 hours, this is 17 thousand hours, but since the answer should not use comma separators or units, and should be rounded to the nearest 1000, we get 17000.\n",

|

| 309 |

+

"\n",

|

| 310 |

+

"FINAL ANSWER: 17000\n",

|

| 311 |

+

"<<--- *** --->>\n"

|

| 312 |

+

]

|

| 313 |

+

}

|

| 314 |

+

],

|

| 315 |

+

"execution_count": 10

|

| 316 |

+

},

|

| 317 |

+

{

|

| 318 |

+

"metadata": {

|

| 319 |

+

"ExecuteTime": {

|

| 320 |

+

"end_time": "2025-06-06T16:58:44.774532Z",

|

| 321 |

+

"start_time": "2025-06-06T16:58:42.601012Z"

|

| 322 |

+

}

|

| 323 |

+

},

|

| 324 |

+

"cell_type": "code",

|

| 325 |

+

"source": "response1 = chat_llm.invoke([SystemMessage(content=DEFAULT_SYSTEM_PROMPT), HumanMessage(content=\"If Eliud Kipchoge could maintain his record-making marathon pace indefinitely, how many thousand hours would it take him to run the distance between the Earth and the Moon its closest approach? Please use the minimum perigee value on the Wikipedia page for the Moon when carrying out your calculation. Round your result to the nearest 1000 hours and do not use any comma separators if necessary.\")])",

|

| 326 |

+

"id": "ef5b5fafeaff660b",

|

| 327 |

+

"outputs": [],

|

| 328 |

+

"execution_count": 6

|

| 329 |

+

},

|

| 330 |

+

{

|

| 331 |

+

"metadata": {

|

| 332 |

+

"ExecuteTime": {

|

| 333 |

+

"end_time": "2025-06-06T16:59:57.792887Z",

|

| 334 |

+

"start_time": "2025-06-06T16:59:55.855377Z"

|

| 335 |

+

}

|

| 336 |

+

},

|

| 337 |

+

"cell_type": "code",

|

| 338 |

+

"source": "response2 = chat_llm.invoke([SystemMessage(content=DEFAULT_SYSTEM_PROMPT), HumanMessage(content=\"If Eliud Kipchoge could maintain his record-making marathon pace indefinitely, how many thousand hours would it take him to run the distance between the Earth and the Moon its closest approach? Please use the minimum perigee value on the Wikipedia page for the Moon when carrying out your calculation. Round your result to the nearest 1000 hours and do not use any comma separators if necessary.\"), response1])",

|

| 339 |

+

"id": "7462e130d047c1be",

|

| 340 |

+

"outputs": [],

|

| 341 |

+

"execution_count": 8

|

| 342 |

+

}

|

| 343 |

+

],

|

| 344 |

+

"metadata": {

|

| 345 |

+

"kernelspec": {

|

| 346 |

+

"display_name": "Python 3",

|

| 347 |

+

"language": "python",

|

| 348 |

+

"name": "python3"

|

| 349 |

+

},

|

| 350 |

+

"language_info": {

|

| 351 |

+

"codemirror_mode": {

|

| 352 |

+

"name": "ipython",

|

| 353 |

+

"version": 2

|

| 354 |

+

},

|

| 355 |

+

"file_extension": ".py",

|

| 356 |

+

"mimetype": "text/x-python",

|

| 357 |

+

"name": "python",

|

| 358 |

+

"nbconvert_exporter": "python",

|

| 359 |

+

"pygments_lexer": "ipython2",

|

| 360 |

+

"version": "2.7.6"

|

| 361 |

+

}

|

| 362 |

+

},

|

| 363 |

+

"nbformat": 4,

|

| 364 |

+

"nbformat_minor": 5

|

| 365 |

+

}

|

3_tools_approach.ipynb

ADDED

|

@@ -0,0 +1,640 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"cells": [

|

| 3 |

+

{

|

| 4 |

+

"metadata": {},

|

| 5 |

+

"cell_type": "markdown",

|

| 6 |

+

"source": "Preps",

|

| 7 |

+

"id": "39fa029d099d9f52"

|

| 8 |

+

},

|

| 9 |

+

{

|

| 10 |

+

"metadata": {

|

| 11 |

+

"ExecuteTime": {

|

| 12 |

+

"end_time": "2025-06-10T20:50:31.142189Z",

|

| 13 |

+

"start_time": "2025-06-10T20:50:31.139103Z"

|

| 14 |

+

}

|

| 15 |

+

},

|

| 16 |

+

"cell_type": "code",

|

| 17 |

+

"source": "from tools import describe_audio_tool",

|

| 18 |

+

"id": "a8592566121f9a22",

|

| 19 |

+

"outputs": [],

|

| 20 |

+

"execution_count": 87

|

| 21 |

+

},

|

| 22 |

+

{

|

| 23 |

+

"cell_type": "code",

|

| 24 |

+

"id": "initial_id",

|

| 25 |

+

"metadata": {

|

| 26 |

+

"collapsed": true,

|

| 27 |

+

"ExecuteTime": {

|

| 28 |

+

"end_time": "2025-06-10T20:50:31.155454Z",

|

| 29 |

+

"start_time": "2025-06-10T20:50:31.152566Z"

|

| 30 |

+

}

|

| 31 |

+

},

|

| 32 |

+

"source": [

|

| 33 |

+

"from globals import *\n",

|

| 34 |

+

"from global_functions import *\n",

|

| 35 |

+

"from tools import *\n",

|

| 36 |

+

"from IPython.display import Image, display\n",

|

| 37 |

+

"import datasets\n",

|

| 38 |

+

"import base64\n",

|

| 39 |

+

"from langchain_core.messages import AnyMessage, HumanMessage, AIMessage, SystemMessage, ToolMessage\n",

|

| 40 |

+

"# describe_image_tool\n",

|

| 41 |

+

"import subprocess\n",

|

| 42 |

+

"from langchain_community.document_loaders import UnstructuredExcelLoader\n",

|

| 43 |

+

"import yt_dlp\n",

|

| 44 |

+

"from langchain_community.tools import WikipediaQueryRun\n",

|

| 45 |

+

"from langchain_community.utilities import WikipediaAPIWrapper"

|

| 46 |

+

],

|

| 47 |

+

"outputs": [],

|

| 48 |

+

"execution_count": 88

|

| 49 |

+

},

|

| 50 |

+

{

|

| 51 |

+

"metadata": {

|

| 52 |

+

"ExecuteTime": {

|

| 53 |

+

"end_time": "2025-06-10T20:50:31.198378Z",

|

| 54 |

+

"start_time": "2025-06-10T20:50:31.165368Z"

|

| 55 |

+

}

|

| 56 |

+

},

|

| 57 |

+

"cell_type": "code",

|

| 58 |

+

"source": [

|

| 59 |

+

"# ------------------------------------------------------ #\n",

|

| 60 |

+

"# MODELS\n",

|

| 61 |

+

"# ------------------------------------------------------ #\n",

|

| 62 |

+

"# init_chat_llm = ChatOllama(model=model_name)\n",

|

| 63 |

+

"init_chat_llm = ChatTogether(model=\"meta-llama/Llama-3.3-70B-Instruct-Turbo-Free\", api_key=os.getenv(\"TOGETHER_API_KEY\"))\n"

|

| 64 |

+

],

|

| 65 |

+

"id": "de15a3991553b118",

|

| 66 |

+

"outputs": [],

|

| 67 |

+

"execution_count": 89

|

| 68 |

+

},

|

| 69 |

+

{

|

| 70 |

+

"metadata": {

|

| 71 |

+

"ExecuteTime": {

|

| 72 |

+

"end_time": "2025-06-10T20:50:31.208604Z",

|

| 73 |

+

"start_time": "2025-06-10T20:50:31.206116Z"

|

| 74 |

+

}

|

| 75 |

+

},

|

| 76 |

+

"cell_type": "code",

|

| 77 |

+

"source": [

|

| 78 |

+

"# ------------------------------------------------------ #\n",

|

| 79 |

+

"# FUNCTIONS FOR TOOLS\n",

|

| 80 |

+