diff --git a/.dockerignore b/.dockerignore

new file mode 100644

index 0000000000000000000000000000000000000000..8771911bd9b6ef9f6390b603a5ce8a5242958c4b

--- /dev/null

+++ b/.dockerignore

@@ -0,0 +1,6 @@

+.git

+.env

+.claude

+__pycache__

+*.pyc

+outputs/

diff --git a/.env.example b/.env.example

new file mode 100644

index 0000000000000000000000000000000000000000..632ee4515aefc80e66060d18d7bf26f8a80bdead

--- /dev/null

+++ b/.env.example

@@ -0,0 +1,10 @@

+# Required

+GEMINI_API_KEY=your_gemini_api_key

+SUPABASE_URL=your_supabase_project_url

+SUPABASE_ANON_KEY=your_supabase_anon_key

+

+# Optional (auto-detected on Linux, only needed for custom installs)

+# TESSERACT_CMD=/usr/bin/tesseract

+# POPPLER_PATH=/usr/bin

+# PORT=7860

+# FLASK_DEBUG=false

diff --git a/.gitattributes b/.gitattributes

index a6344aac8c09253b3b630fb776ae94478aa0275b..6b61c5fd0906fef9908d8e77e7b3094b94ce67d9 100644

--- a/.gitattributes

+++ b/.gitattributes

@@ -1,35 +1,44 @@

-*.7z filter=lfs diff=lfs merge=lfs -text

-*.arrow filter=lfs diff=lfs merge=lfs -text

-*.bin filter=lfs diff=lfs merge=lfs -text

-*.bz2 filter=lfs diff=lfs merge=lfs -text

-*.ckpt filter=lfs diff=lfs merge=lfs -text

-*.ftz filter=lfs diff=lfs merge=lfs -text

-*.gz filter=lfs diff=lfs merge=lfs -text

-*.h5 filter=lfs diff=lfs merge=lfs -text

-*.joblib filter=lfs diff=lfs merge=lfs -text

-*.lfs.* filter=lfs diff=lfs merge=lfs -text

-*.mlmodel filter=lfs diff=lfs merge=lfs -text

-*.model filter=lfs diff=lfs merge=lfs -text

-*.msgpack filter=lfs diff=lfs merge=lfs -text

-*.npy filter=lfs diff=lfs merge=lfs -text

-*.npz filter=lfs diff=lfs merge=lfs -text

-*.onnx filter=lfs diff=lfs merge=lfs -text

-*.ot filter=lfs diff=lfs merge=lfs -text

-*.parquet filter=lfs diff=lfs merge=lfs -text

-*.pb filter=lfs diff=lfs merge=lfs -text

-*.pickle filter=lfs diff=lfs merge=lfs -text

-*.pkl filter=lfs diff=lfs merge=lfs -text

-*.pt filter=lfs diff=lfs merge=lfs -text

-*.pth filter=lfs diff=lfs merge=lfs -text

-*.rar filter=lfs diff=lfs merge=lfs -text

-*.safetensors filter=lfs diff=lfs merge=lfs -text

-saved_model/**/* filter=lfs diff=lfs merge=lfs -text

-*.tar.* filter=lfs diff=lfs merge=lfs -text

-*.tar filter=lfs diff=lfs merge=lfs -text

-*.tflite filter=lfs diff=lfs merge=lfs -text

-*.tgz filter=lfs diff=lfs merge=lfs -text

-*.wasm filter=lfs diff=lfs merge=lfs -text

-*.xz filter=lfs diff=lfs merge=lfs -text

-*.zip filter=lfs diff=lfs merge=lfs -text

-*.zst filter=lfs diff=lfs merge=lfs -text

-*tfevents* filter=lfs diff=lfs merge=lfs -text

+# Auto detect text files and perform LF normalization

+* text=auto

+Images/OCRSheet.jpg filter=lfs diff=lfs merge=lfs -text

+Images/OcrSheetMarked.jpg filter=lfs diff=lfs merge=lfs -text

+Images/omr_answer_key.pdf filter=lfs diff=lfs merge=lfs -text

+Images/omr_answer_key.png filter=lfs diff=lfs merge=lfs -text

+Images/OMRSheet.jpg filter=lfs diff=lfs merge=lfs -text

+Images/OMRSheet2.jpg filter=lfs diff=lfs merge=lfs -text

+Images/OMRSheet3.jpg filter=lfs diff=lfs merge=lfs -text

+Images/OMRTest.jpg filter=lfs diff=lfs merge=lfs -text

+Images/question_paper.png filter=lfs diff=lfs merge=lfs -text

+Images/test.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/inputs/OMRImage.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/outputs/CheckedOMRs/OcrSheetMarked.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/outputs/CheckedOMRs/OMRImage.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/outputs/CheckedOMRs/OMRSheet.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/outputs/Images/CheckedOMRs/OMRSheet.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/answer-key/weighted-answers/images/adrian_omr.png filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/Sandeep-1507/omr-1.png filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/Sandeep-1507/omr-2.png filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/Sandeep-1507/omr-3.png filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/Shamanth/omr_sheet_01.png filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/UmarFarootAPS/scans/scan-type-1.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/UmarFarootAPS/scans/scan-type-2.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/UPSC-mock/answer_key.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/UPSC-mock/scan-angles/angle-1.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/UPSC-mock/scan-angles/angle-2.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/community/UPSC-mock/scan-angles/angle-3.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample1/MobileCamera/sheet1.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample2/AdrianSample/adrian_omr.png filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample3/colored-thick-sheet/rgb-100-gsm.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample3/xeroxed-thin-sheet/grayscale-80-gsm.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample4/IMG_20201116_143512.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample4/IMG_20201116_150717658.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample4/IMG_20201116_150750830.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample5/ScanBatch1/camscanner-1.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample5/ScanBatch2/camscanner-2.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample6/doc-scans/sample_roll_01.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample6/doc-scans/sample_roll_02.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample6/doc-scans/sample_roll_03.jpg filter=lfs diff=lfs merge=lfs -text

+OMRChecker/samples/sample6/reference.png filter=lfs diff=lfs merge=lfs -text

+OMRChecker/src/tests/test_samples/sample2/sample.jpg filter=lfs diff=lfs merge=lfs -text

+output/test_ocr_res_img.jpg filter=lfs diff=lfs merge=lfs -text

+output/test_preprocessed_img.jpg filter=lfs diff=lfs merge=lfs -text

diff --git a/.gitignore b/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..c0d9bca9ec94a13bea3f3ab28d790fae28fab60f

--- /dev/null

+++ b/.gitignore

@@ -0,0 +1,188 @@

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+share/python-wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+*.py,cover

+.hypothesis/

+.pytest_cache/

+cover/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+db.sqlite3-journal

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+

+# PyBuilder

+.pybuilder/

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# IPython

+profile_default/

+ipython_config.py

+

+# pyenv

+# For a library or package, you might want to ignore these files since the code is

+# intended to run in multiple environments; otherwise, check them in:

+# .python-version

+

+# pipenv

+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

+# However, in case of collaboration, if having platform-specific dependencies or dependencies

+# having no cross-platform support, pipenv may install dependencies that don't work, or not

+# install all needed dependencies.

+#Pipfile.lock

+

+# UV

+# Similar to Pipfile.lock, it is generally recommended to include uv.lock in version control.

+# This is especially recommended for binary packages to ensure reproducibility, and is more

+# commonly ignored for libraries.

+#uv.lock

+

+# poetry

+# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

+# This is especially recommended for binary packages to ensure reproducibility, and is more

+# commonly ignored for libraries.

+# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

+#poetry.lock

+

+# pdm

+# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

+#pdm.lock

+# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

+# in version control.

+# https://pdm.fming.dev/latest/usage/project/#working-with-version-control

+.pdm.toml

+.pdm-python

+.pdm-build/

+

+# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

+__pypackages__/

+

+# Celery stuff

+celerybeat-schedule

+celerybeat.pid

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

+

+# pytype static type analyzer

+.pytype/

+

+# Cython debug symbols

+cython_debug/

+

+# PyCharm

+# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

+# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

+# and can be added to the global gitignore or merged into this file. For a more nuclear

+# option (not recommended) you can uncomment the following to ignore the entire idea folder.

+#.idea/

+

+# App outputs

+outputs/

+.claude/

+

+# Temp/debug images

+Images/debug_*.png

+

+# Ruff stuff:

+.ruff_cache/

+

+# PyPI configuration file

+.pypirc

+

+# Cursor

+# Cursor is an AI-powered code editor.`.cursorignore` specifies files/directories to

+# exclude from AI features like autocomplete and code analysis. Recommended for sensitive data

+# refer to https://docs.cursor.com/context/ignore-files

+.cursorignore

+.cursorindexingignore

\ No newline at end of file

diff --git a/Dockerfile b/Dockerfile

new file mode 100644

index 0000000000000000000000000000000000000000..5161358c714a9d7a803d0d1e061582f88f339358

--- /dev/null

+++ b/Dockerfile

@@ -0,0 +1,30 @@

+FROM python:3.11-slim

+

+# System deps: tesseract, poppler, opencv libs

+RUN apt-get update && apt-get install -y --no-install-recommends \

+ tesseract-ocr \

+ poppler-utils \

+ libgl1 \

+ libglib2.0-0 \

+ && rm -rf /var/lib/apt/lists/*

+

+WORKDIR /app

+

+# Install CPU-only PyTorch first (avoids ~4GB of NVIDIA CUDA libs)

+RUN pip install --no-cache-dir torch torchvision --index-url https://download.pytorch.org/whl/cpu

+

+# Install remaining Python deps

+COPY requirements.txt .

+RUN pip install --no-cache-dir -r requirements.txt

+

+# Copy application code

+COPY . .

+

+# Create required directories

+RUN mkdir -p OMRChecker/inputs outputs/Results outputs/CheckedOMRs outputs/Manual outputs/Evaluation Images

+

+# HF Spaces expects port 7860

+ENV PORT=7860

+EXPOSE 7860

+

+CMD ["python", "app.py"]

diff --git a/Images/GK_Question_Paper_A4.pdf b/Images/GK_Question_Paper_A4.pdf

new file mode 100644

index 0000000000000000000000000000000000000000..12edfc5cdbfb2cfbef01e6624510cfea5ac342da

Binary files /dev/null and b/Images/GK_Question_Paper_A4.pdf differ

diff --git a/Images/OCR2.jpg b/Images/OCR2.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..d235818d3997a061dde34a148b53b56947acd61b

Binary files /dev/null and b/Images/OCR2.jpg differ

diff --git a/Images/OCRSheet.jpg b/Images/OCRSheet.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..c0f7e5ce9292cf397fa5dd921dc926968529c444

--- /dev/null

+++ b/Images/OCRSheet.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:76d3a863bc910ffa2de222ead17eb7021999e594c9fd5a6a0116dc3ed7149fff

+size 112779

diff --git a/Images/OCRTest.pdf b/Images/OCRTest.pdf

new file mode 100644

index 0000000000000000000000000000000000000000..5e464937d24356e93f2457e463659deec9b21605

Binary files /dev/null and b/Images/OCRTest.pdf differ

diff --git a/Images/OMRSheet.jpg b/Images/OMRSheet.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..c9e3dcccfc8af7c60becb3a4cc25f650861db98e

--- /dev/null

+++ b/Images/OMRSheet.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:4439b6a479cb9fea92de9656b900a528052616ae8e8962a6c33b8e81ed7f327a

+size 267398

diff --git a/Images/OMRSheet2.jpg b/Images/OMRSheet2.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..42fcf6eb88cc072090a6296e2faa9e335963715c

--- /dev/null

+++ b/Images/OMRSheet2.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:4c6661dce53a4ff8292ee218a2acf5f0aa921c3f337cc0117bda6c71f487735b

+size 232847

diff --git a/Images/OMRSheet3.jpg b/Images/OMRSheet3.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..aa0600c4643d69ea5302f406486f5aaef764a7b2

--- /dev/null

+++ b/Images/OMRSheet3.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:2072f72607f9e9363cba21b700c86e5dffd3fce0cf2d7e2d1288c23b5066b257

+size 237950

diff --git a/Images/OMRTest.jpg b/Images/OMRTest.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..97e9257fa2472bf70f02de39c51f999c39f5ec8a

--- /dev/null

+++ b/Images/OMRTest.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:daaa90fa7b3fe40fbf1dd0fee2941de0c0d138290f1d32523979b95bcd253907

+size 273119

diff --git a/Images/OcrSheetMarked.jpg b/Images/OcrSheetMarked.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..87ec203468051d0ce456111cd85b5d117e141f10

--- /dev/null

+++ b/Images/OcrSheetMarked.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:328a515ffb5367799509a284fc85bca8114508337db76049e86c9c8415b96221

+size 221191

diff --git a/Images/omr_answer_key.pdf b/Images/omr_answer_key.pdf

new file mode 100644

index 0000000000000000000000000000000000000000..e769a97a4d0fd07a11655997a915b9892c3085f2

--- /dev/null

+++ b/Images/omr_answer_key.pdf

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:879c8f7aa369a6f0cbc65f9a5279a18ae42a35d541ba53e70b140178e83acf3f

+size 105714

diff --git a/Images/omr_answer_key.png b/Images/omr_answer_key.png

new file mode 100644

index 0000000000000000000000000000000000000000..c9e3dcccfc8af7c60becb3a4cc25f650861db98e

--- /dev/null

+++ b/Images/omr_answer_key.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:4439b6a479cb9fea92de9656b900a528052616ae8e8962a6c33b8e81ed7f327a

+size 267398

diff --git a/Images/question.png b/Images/question.png

new file mode 100644

index 0000000000000000000000000000000000000000..4d10d743f3d7f5fb533a86ff4e0c1a287a8e75c0

Binary files /dev/null and b/Images/question.png differ

diff --git a/Images/question_paper.pdf b/Images/question_paper.pdf

new file mode 100644

index 0000000000000000000000000000000000000000..5e464937d24356e93f2457e463659deec9b21605

Binary files /dev/null and b/Images/question_paper.pdf differ

diff --git a/Images/question_paper.png b/Images/question_paper.png

new file mode 100644

index 0000000000000000000000000000000000000000..a5a2f9cf04c0bb5f790a542f3480199bf24670c3

--- /dev/null

+++ b/Images/question_paper.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:ebf1ff49a9b76cf2259890ec30c7c369f60c900331d06bc81d096e2d3efbe134

+size 199992

diff --git a/Images/test.jpg b/Images/test.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..3344b5f30842cb22944fede561995c526e3bc3d7

--- /dev/null

+++ b/Images/test.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:dc40095b38d1a4e8248d464ba1e14d943e6ce2eaf5a0173316c4ce3bcbfabf5e

+size 331992

diff --git a/OMRChecker/.pre-commit-config.yaml b/OMRChecker/.pre-commit-config.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..1fe9847c20f354b81701b994f9589c0e37488464

--- /dev/null

+++ b/OMRChecker/.pre-commit-config.yaml

@@ -0,0 +1,59 @@

+exclude: "__snapshots__/.*$"

+default_install_hook_types: [pre-commit, pre-push]

+repos:

+ - repo: https://github.com/pre-commit/pre-commit-hooks

+ rev: v4.4.0

+ hooks:

+ - id: check-yaml

+ stages: [commit]

+ - id: check-added-large-files

+ args: ['--maxkb=300']

+ fail_fast: false

+ stages: [commit]

+ - id: pretty-format-json

+ args: ['--autofix', '--no-sort-keys']

+ - id: end-of-file-fixer

+ exclude_types: ["csv", "json"]

+ stages: [commit]

+ - id: trailing-whitespace

+ stages: [commit]

+ - repo: https://github.com/pycqa/isort

+ rev: 5.12.0

+ hooks:

+ - id: isort

+ args: ["--profile", "black"]

+ stages: [commit]

+ - repo: https://github.com/psf/black

+ rev: 23.3.0

+ hooks:

+ - id: black

+ fail_fast: true

+ stages: [commit]

+ - repo: https://github.com/pycqa/flake8

+ rev: 6.0.0

+ hooks:

+ - id: flake8

+ args:

+ - "--ignore=E501,W503,E203,E741,F541" # Line too long, Line break occurred before a binary operator, Whitespace before ':'

+ fail_fast: true

+ stages: [commit]

+ - repo: local

+ hooks:

+ - id: pytest-on-commit

+ name: Running single sample test

+ entry: python3 -m pytest -rfpsxEX --disable-warnings --verbose -k sample1

+ language: system

+ pass_filenames: false

+ always_run: true

+ fail_fast: true

+ stages: [commit]

+ - repo: local

+ hooks:

+ - id: pytest-on-push

+ name: Running all tests before push...

+ entry: python3 -m pytest -rfpsxEX --disable-warnings --verbose --durations=3

+ language: system

+ pass_filenames: false

+ always_run: true

+ fail_fast: true

+ stages: [push]

diff --git a/OMRChecker/.pylintrc b/OMRChecker/.pylintrc

new file mode 100644

index 0000000000000000000000000000000000000000..369568f5560bc52faf695ad91c6df93c568195f4

--- /dev/null

+++ b/OMRChecker/.pylintrc

@@ -0,0 +1,43 @@

+[BASIC]

+# Regular expression matching correct variable names. Overrides variable-naming-style.

+# snake_case with single letter regex -

+variable-rgx=[a-z0-9_]{1,30}$

+

+# Good variable names which should always be accepted, separated by a comma.

+good-names=x,y,pt

+

+[MESSAGES CONTROL]

+

+# Disable the message, report, category or checker with the given id(s). You

+# can either give multiple identifiers separated by comma (,) or put this

+# option multiple times (only on the command line, not in the configuration

+# file where it should appear only once). You can also use "--disable=all" to

+# disable everything first and then reenable specific checks. For example, if

+# you want to run only the similarities checker, you can use "--disable=all

+# --enable=similarities". If you want to run only the classes checker, but have

+# no Warning level messages displayed, use "--disable=all --enable=classes

+# --disable=W".

+disable=import-error,

+ unresolved-import,

+ too-few-public-methods,

+ missing-docstring,

+ relative-beyond-top-level,

+ too-many-instance-attributes,

+ bad-continuation,

+ no-member

+

+# Note: bad-continuation is a false positive showing bug in pylint

+# https://github.com/psf/black/issues/48

+

+

+[REPORTS]

+# Set the output format. Available formats are text, parseable, colorized, json

+# and msvs (visual studio). You can also give a reporter class, e.g.

+# mypackage.mymodule.MyReporterClass.

+output-format=text

+

+# Tells whether to display a full report or only the messages.

+reports=no

+

+# Activate the evaluation score.

+score=yes

diff --git a/OMRChecker/CODE_OF_CONDUCT.md b/OMRChecker/CODE_OF_CONDUCT.md

new file mode 100644

index 0000000000000000000000000000000000000000..4a84b4a0ddccf4a14ef9b5c0b65f6eafdb5549cc

--- /dev/null

+++ b/OMRChecker/CODE_OF_CONDUCT.md

@@ -0,0 +1,133 @@

+

+# Contributor Covenant Code of Conduct

+

+## Our Pledge

+

+We as members, contributors, and leaders pledge to make participation in our

+community a harassment-free experience for everyone, regardless of age, body

+size, visible or invisible disability, ethnicity, sex characteristics, gender

+identity and expression, level of experience, education, socio-economic status,

+nationality, personal appearance, race, caste, color, religion, or sexual

+identity and orientation.

+

+We pledge to act and interact in ways that contribute to an open, welcoming,

+diverse, inclusive, and healthy community.

+

+## Our Standards

+

+Examples of behavior that contributes to a positive environment for our

+community include:

+

+* Demonstrating empathy and kindness toward other people

+* Being respectful of differing opinions, viewpoints, and experiences

+* Giving and gracefully accepting constructive feedback

+* Accepting responsibility and apologizing to those affected by our mistakes,

+ and learning from the experience

+* Focusing on what is best not just for us as individuals, but for the overall

+ community

+

+Examples of unacceptable behavior include:

+

+* The use of sexualized language or imagery, and sexual attention or advances of

+ any kind

+* Trolling, insulting or derogatory comments, and personal or political attacks

+* Public or private harassment

+* Publishing others' private information, such as a physical or email address,

+ without their explicit permission

+* Other conduct which could reasonably be considered inappropriate in a

+ professional setting

+

+## Enforcement Responsibilities

+

+Community leaders are responsible for clarifying and enforcing our standards of

+acceptable behavior and will take appropriate and fair corrective action in

+response to any behavior that they deem inappropriate, threatening, offensive,

+or harmful.

+

+Community leaders have the right and responsibility to remove, edit, or reject

+comments, commits, code, wiki edits, issues, and other contributions that are

+not aligned to this Code of Conduct, and will communicate reasons for moderation

+decisions when appropriate.

+

+## Scope

+

+This Code of Conduct applies within all community spaces, and also applies when

+an individual is officially representing the community in public spaces.

+Examples of representing our community include using an official email address,

+posting via an official social media account, or acting as an appointed

+representative at an online or offline event.

+

+## Enforcement

+

+Instances of abusive, harassing, or otherwise unacceptable behavior may be

+reported to the community leaders responsible for enforcement at

+[INSERT CONTACT METHOD].

+All complaints will be reviewed and investigated promptly and fairly.

+

+All community leaders are obligated to respect the privacy and security of the

+reporter of any incident.

+

+## Enforcement Guidelines

+

+Community leaders will follow these Community Impact Guidelines in determining

+the consequences for any action they deem in violation of this Code of Conduct:

+

+### 1. Correction

+

+**Community Impact**: Use of inappropriate language or other behavior deemed

+unprofessional or unwelcome in the community.

+

+**Consequence**: A private, written warning from community leaders, providing

+clarity around the nature of the violation and an explanation of why the

+behavior was inappropriate. A public apology may be requested.

+

+### 2. Warning

+

+**Community Impact**: A violation through a single incident or series of

+actions.

+

+**Consequence**: A warning with consequences for continued behavior. No

+interaction with the people involved, including unsolicited interaction with

+those enforcing the Code of Conduct, for a specified period of time. This

+includes avoiding interactions in community spaces as well as external channels

+like social media. Violating these terms may lead to a temporary or permanent

+ban.

+

+### 3. Temporary Ban

+

+**Community Impact**: A serious violation of community standards, including

+sustained inappropriate behavior.

+

+**Consequence**: A temporary ban from any sort of interaction or public

+communication with the community for a specified period of time. No public or

+private interaction with the people involved, including unsolicited interaction

+with those enforcing the Code of Conduct, is allowed during this period.

+Violating these terms may lead to a permanent ban.

+

+### 4. Permanent Ban

+

+**Community Impact**: Demonstrating a pattern of violation of community

+standards, including sustained inappropriate behavior, harassment of an

+individual, or aggression toward or disparagement of classes of individuals.

+

+**Consequence**: A permanent ban from any sort of public interaction within the

+community.

+

+## Attribution

+

+This Code of Conduct is adapted from the [Contributor Covenant][homepage],

+version 2.1, available at

+[https://www.contributor-covenant.org/version/2/1/code_of_conduct.html][v2.1].

+

+Community Impact Guidelines were inspired by

+[Mozilla's code of conduct enforcement ladder][Mozilla CoC].

+

+For answers to common questions about this code of conduct, see the FAQ at

+[https://www.contributor-covenant.org/faq][FAQ]. Translations are available at

+[https://www.contributor-covenant.org/translations][translations].

+

+[homepage]: https://www.contributor-covenant.org

+[v2.1]: https://www.contributor-covenant.org/version/2/1/code_of_conduct.html

+[Mozilla CoC]: https://github.com/mozilla/diversity

+[FAQ]: https://www.contributor-covenant.org/faq

+[translations]: https://www.contributor-covenant.org/translations

diff --git a/OMRChecker/CONTRIBUTING.md b/OMRChecker/CONTRIBUTING.md

new file mode 100644

index 0000000000000000000000000000000000000000..c649a048362ecfe9ac4e260feac8b0f3f8447a94

--- /dev/null

+++ b/OMRChecker/CONTRIBUTING.md

@@ -0,0 +1,32 @@

+# How to contribute

+So you want to write code and get it landed in the official OMRChecker repository?

+First, fork our repository into your own GitHub account, and create a local clone of it as described in the installation instructions.

+The latter will be used to get new features implemented or bugs fixed.

+

+Once done and you have the code locally on the disk, you can get started. We advise you to not work directly on the master branch,

+but to create a separate branch for each issue you are working on. That way you can easily switch between different work,

+and you can update each one for the latest changes on the upstream master individually.

+

+

+# Writing Code

+For writing the code just follow the [Pep8 Python style](https://peps.python.org/pep-0008/) guide, If there is something unclear about the style, just look at existing code which might help you to understand it better.

+

+Also, try to use commits with [conventional messages](https://www.conventionalcommits.org/en/v1.0.0/#summary).

+

+

+# Code Formatting

+Before committing your code, make sure to run the following command to format your code according to the PEP8 style guide:

+```.sh

+pip install -r requirements.dev.txt && pre-commit install

+```

+

+Run `pre-commit` before committing your changes:

+```.sh

+git add .

+pre-commit run -a

+```

+

+# Where to contribute from

+

+- You can pickup any open [issues](https://github.com/Udayraj123/OMRChecker/issues) to solve.

+- You can also check out the [ideas list](https://github.com/users/Udayraj123/projects/2/views/1)

diff --git a/OMRChecker/Contributors.md b/OMRChecker/Contributors.md

new file mode 100644

index 0000000000000000000000000000000000000000..b7a428a77223e4e227c9b74c3e54eb43444f9017

--- /dev/null

+++ b/OMRChecker/Contributors.md

@@ -0,0 +1,22 @@

+# Contributors

+

+- [Udayraj123](https://github.com/Udayraj123)

+- [leongwaikay](https://github.com/leongwaikay)

+- [deepakgouda](https://github.com/deepakgouda)

+- [apurva91](https://github.com/apurva91)

+- [sparsh2706](https://github.com/sparsh2706)

+- [namit2saxena](https://github.com/namit2saxena)

+- [Harsh-Kapoorr](https://github.com/Harsh-Kapoorr)

+- [Sandeep-1507](https://github.com/Sandeep-1507)

+- [SpyzzVVarun](https://github.com/SpyzzVVarun)

+- [asc249](https://github.com/asc249)

+- [05Alston](https://github.com/05Alston)

+- [Antibodyy](https://github.com/Antibodyy)

+- [infinity1729](https://github.com/infinity1729)

+- [Rohan-G](https://github.com/Rohan-G)

+- [UjjwalMahar](https://github.com/UjjwalMahar)

+- [Kurtsley](https://github.com/Kurtsley)

+- [gaursagar21](https://github.com/gaursagar21)

+- [aayushibansal2001](https://github.com/aayushibansal2001)

+- [ShamanthVallem](https://github.com/ShamanthVallem)

+- [rudrapsc](https://github.com/rudrapsc)

diff --git a/OMRChecker/LICENSE b/OMRChecker/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..33cdce9d8e013750ea0e519ffc9a03b2d14d4d0c

--- /dev/null

+++ b/OMRChecker/LICENSE

@@ -0,0 +1,22 @@

+MIT License

+

+Copyright (c) 2024-present Udayraj Deshmukh and other contributors

+

+Permission is hereby granted, free of charge, to any person obtaining

+a copy of this software and associated documentation files (the

+"Software"), to deal in the Software without restriction, including

+without limitation the rights to use, copy, modify, merge, publish,

+distribute, sublicense, and/or sell copies of the Software, and to

+permit persons to whom the Software is furnished to do so, subject to

+the following conditions:

+

+The above copyright notice and this permission notice shall be

+included in all copies or substantial portions of the Software.

+

+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND,

+EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF

+MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND

+NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE

+LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION

+OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION

+WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

diff --git a/OMRChecker/README.md b/OMRChecker/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..fba176b81f7766e0aacd394b79d0db5573015512

--- /dev/null

+++ b/OMRChecker/README.md

@@ -0,0 +1,359 @@

+# OMR Checker

+

+Read OMR sheets fast and accurately using a scanner 🖨 or your phone 🤳.

+

+## What is OMR?

+

+OMR stands for Optical Mark Recognition, used to detect and interpret human-marked data on documents. OMR refers to the process of reading and evaluating OMR sheets, commonly used in exams, surveys, and other forms.

+

+#### **Quick Links**

+

+- [Installation](#getting-started)

+- [User Guide](https://github.com/Udayraj123/OMRChecker/wiki)

+- [Contributor Guide](https://github.com/Udayraj123/OMRChecker/blob/master/CONTRIBUTING.md)

+- [Project Ideas List](https://github.com/users/Udayraj123/projects/2/views/1)

+

+

+

+[](https://github.com/Udayraj123/OMRChecker/pull/new/master)

+[](https://github.com/Udayraj123/OMRChecker/pulls?q=is%3Aclosed)

+[](https://GitHub.com/Udayraj123/OMRChecker/issues?q=is%3Aissue+is%3Aclosed)

+[](https://github.com/Udayraj123/OMRChecker/issues/5)

+

+

+

+[](https://GitHub.com/Udayraj123/OMRChecker/stargazers/)

+[](https://discord.gg/qFv2Vqf)

+

+

+

+## 🎯 Features

+

+A full-fledged OMR checking software that can read and evaluate OMR sheets scanned at any angle and having any color.

+

+| Specs ![]() |

|   ![]() |

+| :--------------------- | :--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

+| 💯 **Accurate** | Currently nearly 100% accurate on good quality document scans; and about 90% accurate on mobile images. |

+| 💪🏿 **Robust** | Supports low resolution, xeroxed sheets. See [**Robustness**](https://github.com/Udayraj123/OMRChecker/wiki/Robustness) for more. |

+| ⏩ **Fast** | Current processing speed without any optimization is 200 OMRs/minute. |

+| ✅ **Customizable** | [Easily apply](https://github.com/Udayraj123/OMRChecker/wiki/User-Guide) to custom OMR layouts, surveys, etc. |

+| 📊 **Visually Rich** | [Get insights](https://github.com/Udayraj123/OMRChecker/wiki/Rich-Visuals) to configure and debug easily. |

+| 🎈 **Lightweight** | Very minimal core code size. |

+| 🏫 **Large Scale** | Tested on a large scale at [Technothlon](https://en.wikipedia.org/wiki/Technothlon). |

+| 👩🏿💻 **Dev Friendly** | [Pylinted](http://pylint.pycqa.org/) and [Black formatted](https://github.com/psf/black) code. Also has a [developer community](https://discord.gg/qFv2Vqf) on discord. |

+

+Note: For solving interesting challenges, developers can check out [**TODOs**](https://github.com/Udayraj123/OMRChecker/wiki/TODOs).

+

+See the complete guide and details at [Project Wiki](https://github.com/Udayraj123/OMRChecker/wiki/).

+

+

+

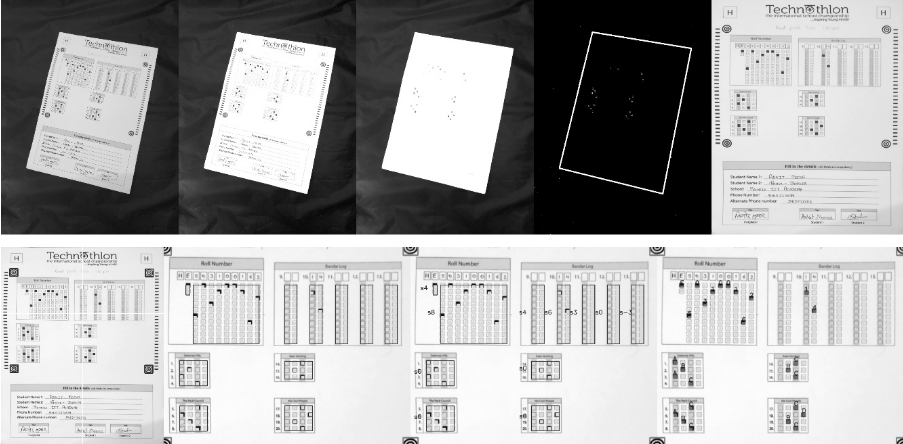

+## 💡 What can OMRChecker do for me?

+

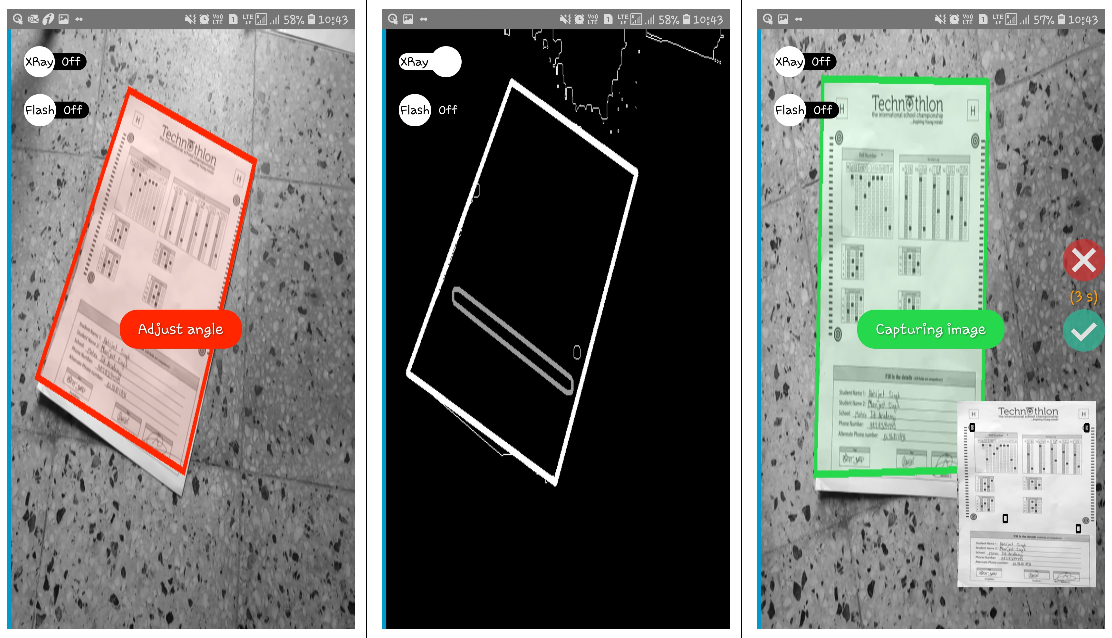

+Once you configure the OMR layout, just throw images of the sheets at the software; and you'll get back the marked responses in an excel sheet!

+

+Images can be taken from various angles as shown below-

+

+

|

+| :--------------------- | :--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

+| 💯 **Accurate** | Currently nearly 100% accurate on good quality document scans; and about 90% accurate on mobile images. |

+| 💪🏿 **Robust** | Supports low resolution, xeroxed sheets. See [**Robustness**](https://github.com/Udayraj123/OMRChecker/wiki/Robustness) for more. |

+| ⏩ **Fast** | Current processing speed without any optimization is 200 OMRs/minute. |

+| ✅ **Customizable** | [Easily apply](https://github.com/Udayraj123/OMRChecker/wiki/User-Guide) to custom OMR layouts, surveys, etc. |

+| 📊 **Visually Rich** | [Get insights](https://github.com/Udayraj123/OMRChecker/wiki/Rich-Visuals) to configure and debug easily. |

+| 🎈 **Lightweight** | Very minimal core code size. |

+| 🏫 **Large Scale** | Tested on a large scale at [Technothlon](https://en.wikipedia.org/wiki/Technothlon). |

+| 👩🏿💻 **Dev Friendly** | [Pylinted](http://pylint.pycqa.org/) and [Black formatted](https://github.com/psf/black) code. Also has a [developer community](https://discord.gg/qFv2Vqf) on discord. |

+

+Note: For solving interesting challenges, developers can check out [**TODOs**](https://github.com/Udayraj123/OMRChecker/wiki/TODOs).

+

+See the complete guide and details at [Project Wiki](https://github.com/Udayraj123/OMRChecker/wiki/).

+

+

+

+## 💡 What can OMRChecker do for me?

+

+Once you configure the OMR layout, just throw images of the sheets at the software; and you'll get back the marked responses in an excel sheet!

+

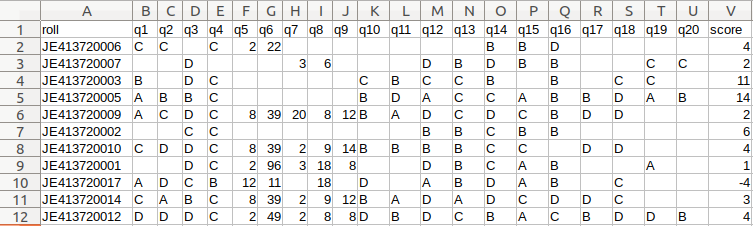

+Images can be taken from various angles as shown below-

+

+

+  +

+

+

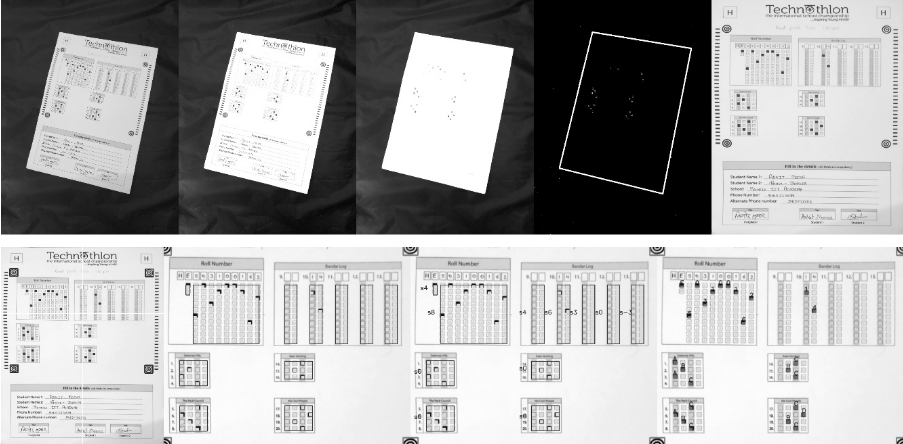

+### Code in action on images taken by scanner:

+

+

+  +

+

+

+

+

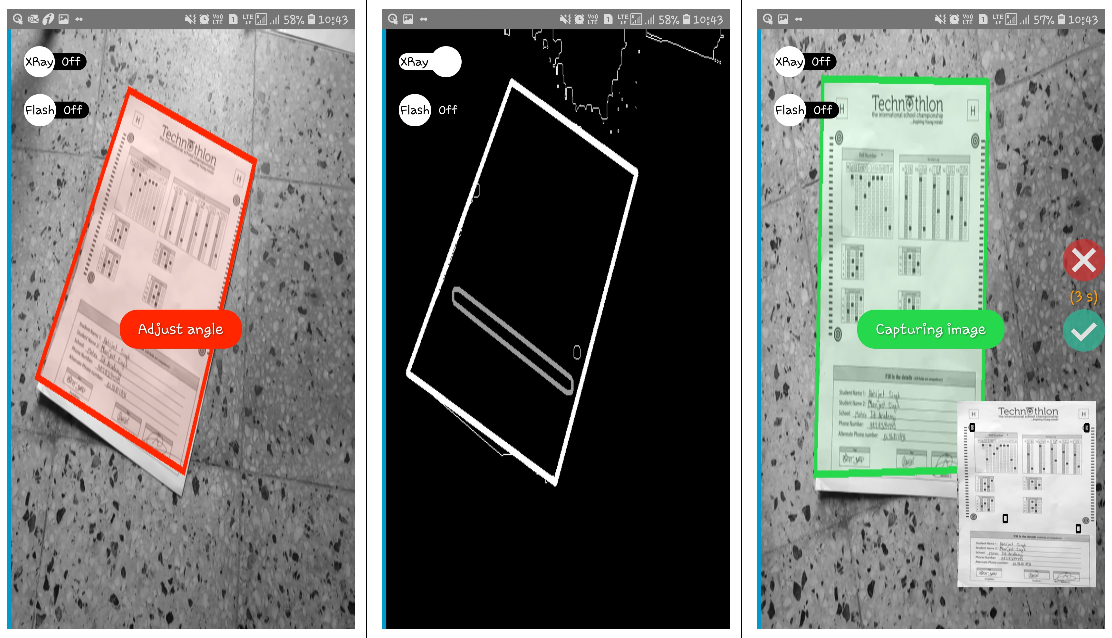

+### Code in action on images taken by a mobile phone:

+

+

+  +

+

+

+## Visuals

+

+### Processing steps

+

+See step-by-step processing of any OMR sheet:

+

+

+

+  +

+

+

+

+ *Note: This image is generated by the code itself!*

+

+

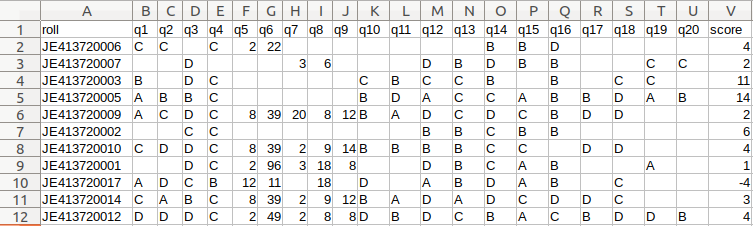

+### Output

+

+Get a CSV sheet containing the detected responses and evaluated scores:

+

+

+

+  +

+

+

+

+

+We now support [colored outputs](https://github.com/Udayraj123/OMRChecker/wiki/%5Bv2%5D-About-Evaluation) as well. Here's a sample output on another image -

+

+

+  +

+

+

+

+

+#### There are many more visuals in the wiki. Check them out [here!](https://github.com/Udayraj123/OMRChecker/wiki/Rich-Visuals)

+

+## Getting started

+

+

+

+**Operating system:** OSX or Linux is recommended although Windows is also supported.

+

+### 1. Install global dependencies

+

+

+

+To check if python3 and pip is already installed:

+

+```bash

+python3 --version

+python3 -m pip --version

+```

+

+

+ Install Python3

+

+To install python3 follow instructions [here](https://www.python.org/downloads/)

+

+To install pip - follow instructions [here](https://pip.pypa.io/en/stable/installation/)

+

+

+

+Install OpenCV

+

+**Any installation method is fine.**

+

+Recommended:

+

+```bash

+python3 -m pip install --user --upgrade pip

+python3 -m pip install --user opencv-python

+python3 -m pip install --user opencv-contrib-python

+```

+

+More details on pip install openCV [here](https://www.pyimagesearch.com/2018/09/19/pip-install-opencv/).

+

+

+

+

+

+Extra steps(for Linux users only)

+

+Installing missing libraries(if any):

+

+On a fresh computer, some of the libraries may get missing in event after a successful pip install. Install them using following commands[(ref)](https://www.pyimagesearch.com/2018/05/28/ubuntu-18-04-how-to-install-opencv/):

+

+```bash

+sudo apt-get install -y build-essential cmake unzip pkg-config

+sudo apt-get install -y libjpeg-dev libpng-dev libtiff-dev

+sudo apt-get install -y libavcodec-dev libavformat-dev libswscale-dev libv4l-dev

+sudo apt-get install -y libatlas-base-dev gfortran

+```

+

+

+

+### 2. Install project dependencies

+

+Clone the repo

+

+```bash

+git clone https://github.com/Udayraj123/OMRChecker

+cd OMRChecker/

+```

+

+Install pip requirements

+

+```bash

+python3 -m pip install --user -r requirements.txt

+```

+

+_**Note:** If you face a distutils error in pip, use `--ignore-installed` flag in above command._

+

+

+

+### 3. Run the code

+

+1. First copy and examine the sample data to know how to structure your inputs:

+ ```bash

+ cp -r ./samples/sample1 inputs/

+ # Note: you may remove previous inputs (if any) with `mv inputs/* ~/.trash`

+ # Change the number N in sampleN to see more examples

+ ```

+2. Run OMRChecker:

+ ```bash

+ python3 main.py

+ ```

+

+Alternatively you can also use `python3 main.py -i ./samples/sample1`.

+

+Each example in the samples folder demonstrates different ways in which OMRChecker can be used.

+

+### Common Issues

+

+

+

+ 1. [Windows] ERROR: Could not open requirements file

+

+Command: python3 -m pip install --user -r requirements.txt

+

+ Link to Solution: #54

+

+

+

+2. [Linux] ERROR: No module named pip

+

+Command: python3 -m pip install --user --upgrade pip

+

+ Link to Solution: #70

+

+

+## OMRChecker for custom OMR Sheets

+

+1. First, [create your own template.json](https://github.com/Udayraj123/OMRChecker/wiki/User-Guide).

+2. Configure the tuning parameters.

+3. Run OMRChecker with appropriate arguments (See full usage).

+

+

+## Full Usage

+

+```

+python3 main.py [--setLayout] [--inputDir dir1] [--outputDir dir1]

+```

+

+Explanation for the arguments:

+

+`--setLayout`: Set up OMR template layout - modify your json file and run again until the template is set.

+

+`--inputDir`: Specify an input directory.

+

+`--outputDir`: Specify an output directory.

+

+

+

+ Deprecation logs

+

+

+- The old `--noCropping` flag has been replaced with the 'CropPage' plugin in "preProcessors" of the template.json(see [samples](https://github.com/Udayraj123/OMRChecker/tree/master/samples)).

+- The `--autoAlign` flag is deprecated due to low performance on a generic OMR sheet

+- The `--template` flag is deprecated and instead it's recommended to keep the template file at the parent folder containing folders of different images

+

+

+

+

+## FAQ

+

+

+

+Why is this software free?

+

+

+This project was born out of a student-led organization called as [Technothlon](https://technothlon.techniche.org.in). It is a logic-based international school championship organized by students of IIT Guwahati. Being a non-profit organization, and after seeing it work fabulously at such a large scale we decided to share this tool with the world. The OMR checking processes still involves so much tediousness which we aim to reduce dramatically.

+

+We believe in the power of open source! Currently, OMRChecker is in an intermediate stage where only developers can use it. We hope to see it become more user-friendly as well as robust from exposure to different inputs from you all!

+

+[](https://github.com/ellerbrock/open-source-badges/)

+

+

+

+

+

+Can I use this code in my (public) work?

+

+

+OMRChecker can be forked and modified. You are encouraged to play with it and we would love to see your own projects in action!

+

+It is published under the [MIT license](https://github.com/Udayraj123/OMRChecker/blob/master/LICENSE).

+

+

+

+

+

+What are the ways to contribute?

+

+

+

+

+- Join the developer community on [Discord](https://discord.gg/qFv2Vqf) to fix [issues](https://github.com/Udayraj123/OMRChecker/issues) with OMRChecker.

+

+- If this project saved you large costs on OMR Software licenses, or saved efforts to make one. Consider donating an amount of your choice(donate section).

+

+

+

+

+

+

+

+## Credits

+

+_A Huge thanks to:_

+_**Adrian Rosebrock** for his exemplary blog:_ https://pyimagesearch.com

+

+_**Harrison Kinsley** aka sentdex for his [video tutorials](https://www.youtube.com/watch?v=Z78zbnLlPUA&list=PLQVvvaa0QuDdttJXlLtAJxJetJcqmqlQq) and many other resources._

+

+_**Satya Mallic** for his resourceful blog:_ https://www.learnopencv.com

+

+_And to other amazing people from all over the globe who've made significant improvements in this project._

+

+_Thank you!_

+

+

+

+## Related Projects

+

+Here's a snapshot of the [Android OMR Helper App (archived)](https://github.com/Udayraj123/AndroidOMRHelper):

+

+

+

+  +

+

+

+

+

+## Stargazers over time

+

+[](https://starchart.cc/Udayraj123/OMRChecker)

+

+---

+

+Made with ❤️ by Awesome Contributors

+

+

+  +

+

+---

+

+### License

+

+[](https://github.com/Udayraj123/OMRChecker/blob/master/LICENSE)

+

+For more details see [LICENSE](https://github.com/Udayraj123/OMRChecker/blob/master/LICENSE).

+

+### Donate

+

+

+

+

+---

+

+### License

+

+[](https://github.com/Udayraj123/OMRChecker/blob/master/LICENSE)

+

+For more details see [LICENSE](https://github.com/Udayraj123/OMRChecker/blob/master/LICENSE).

+

+### Donate

+

+ [](https://www.paypal.me/Udayraj123/500)

+

+_Find OMRChecker on_ [**_Product Hunt_**](https://www.producthunt.com/posts/omr-checker/) **|** [**_Reddit_**](https://www.reddit.com/r/computervision/comments/ccbj6f/omrchecker_grade_exams_using_python_and_opencv/) **|** [**Discord**](https://discord.gg/qFv2Vqf) **|** [**Linkedin**](https://www.linkedin.com/pulse/open-source-talks-udayraj-udayraj-deshmukh/) **|** [**goodfirstissue.dev**](https://goodfirstissue.dev/language/python) **|** [**codepeak.tech**](https://www.codepeak.tech/) **|** [**fossoverflow.dev**](https://fossoverflow.dev/projects) **|** [**Interview on Console by CodeSee**](https://console.substack.com/p/console-140) **|** [**Open Source Hub**](https://opensourcehub.io/udayraj123/omrchecker)

+

+

+

diff --git a/OMRChecker/docs/assets/colored_output.jpg b/OMRChecker/docs/assets/colored_output.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..3cafa473b9a1134f845858be4cf0e98f3bd3bb7c

Binary files /dev/null and b/OMRChecker/docs/assets/colored_output.jpg differ

diff --git a/OMRChecker/inputs/OMRImage.jpg b/OMRChecker/inputs/OMRImage.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..c9e3dcccfc8af7c60becb3a4cc25f650861db98e

--- /dev/null

+++ b/OMRChecker/inputs/OMRImage.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:4439b6a479cb9fea92de9656b900a528052616ae8e8962a6c33b8e81ed7f327a

+size 267398

diff --git a/OMRChecker/inputs/template.json b/OMRChecker/inputs/template.json

new file mode 100644

index 0000000000000000000000000000000000000000..823fe2e58a4182ef04d1fff99ecf146b5ec53aae

--- /dev/null

+++ b/OMRChecker/inputs/template.json

@@ -0,0 +1,37 @@

+{

+ "pageDimensions": [

+ 1122,

+ 1600

+ ],

+ "bubbleDimensions": [

+ 48,

+ 50

+ ],

+ "fieldBlocks": {

+ "q01block": {

+ "origin": [

+ 100,

+ 175

+ ],

+ "bubblesGap": 55,

+ "labelsGap": 67,

+ "fieldLabels": [

+ "q1..10"

+ ],

+ "fieldType": "QTYPE_MCQ4"

+ },

+ "q02block": {

+ "origin": [

+ 100,

+ 845

+ ],

+ "bubblesGap": 58,

+ "labelsGap": 70,

+ "fieldLabels": [

+ "q11..20"

+ ],

+ "fieldType": "QTYPE_MCQ4"

+ }

+ }

+

+}

\ No newline at end of file

diff --git a/OMRChecker/main.py b/OMRChecker/main.py

new file mode 100644

index 0000000000000000000000000000000000000000..19e3ebd43d4be2a096cf709b04817247f9952506

--- /dev/null

+++ b/OMRChecker/main.py

@@ -0,0 +1,99 @@

+"""

+

+ OMRChecker

+

+ Author: Udayraj Deshmukh

+ Github: https://github.com/Udayraj123

+

+"""

+

+import argparse

+import sys

+from pathlib import Path

+

+from src.entry import entry_point

+from src.logger import logger

+

+

+def parse_args():

+ # construct the argument parse and parse the arguments

+ argparser = argparse.ArgumentParser()

+

+ argparser.add_argument(

+ "-i",

+ "--inputDir",

+ default=["inputs"],

+ # https://docs.python.org/3/library/argparse.html#nargs

+ nargs="*",

+ required=False,

+ type=str,

+ dest="input_paths",

+ help="Specify an input directory.",

+ )

+

+ argparser.add_argument(

+ "-d",

+ "--debug",

+ required=False,

+ dest="debug",

+ action="store_false",

+ help="Enables debugging mode for showing detailed errors",

+ )

+

+ argparser.add_argument(

+ "-o",

+ "--outputDir",

+ default="outputs",

+ required=False,

+ dest="output_dir",

+ help="Specify an output directory.",

+ )

+

+ argparser.add_argument(

+ "-a",

+ "--autoAlign",

+ required=False,

+ dest="autoAlign",

+ action="store_true",

+ help="(experimental) Enables automatic template alignment - \

+ use if the scans show slight misalignments.",

+ )

+

+ argparser.add_argument(

+ "-l",

+ "--setLayout",

+ required=False,

+ dest="setLayout",

+ action="store_true",

+ help="Set up OMR template layout - modify your json file and \

+ run again until the template is set.",

+ )

+

+ (

+ args,

+ unknown,

+ ) = argparser.parse_known_args()

+

+ args = vars(args)

+

+ if len(unknown) > 0:

+ logger.warning(f"\nError: Unknown arguments: {unknown}", unknown)

+ argparser.print_help()

+ exit(11)

+ return args

+

+

+def entry_point_for_args(args):

+ if args["debug"] is True:

+ # Disable tracebacks

+ sys.tracebacklimit = 0

+ for root in args["input_paths"]:

+ entry_point(

+ Path(root),

+ args,

+ )

+

+

+if __name__ == "__main__":

+ args = parse_args()

+ entry_point_for_args(args)

diff --git a/OMRChecker/outputs/AdrianSample/Manual/ErrorFiles.csv b/OMRChecker/outputs/AdrianSample/Manual/ErrorFiles.csv

new file mode 100644

index 0000000000000000000000000000000000000000..23529519c2c8778ff89dd2a8f5c7ce602a34a826

--- /dev/null

+++ b/OMRChecker/outputs/AdrianSample/Manual/ErrorFiles.csv

@@ -0,0 +1 @@

+"file_id","input_path","output_path","score","q1","q2","q3","q4","q5"

diff --git a/OMRChecker/outputs/AdrianSample/Manual/MultiMarkedFiles.csv b/OMRChecker/outputs/AdrianSample/Manual/MultiMarkedFiles.csv

new file mode 100644

index 0000000000000000000000000000000000000000..23529519c2c8778ff89dd2a8f5c7ce602a34a826

--- /dev/null

+++ b/OMRChecker/outputs/AdrianSample/Manual/MultiMarkedFiles.csv

@@ -0,0 +1 @@

+"file_id","input_path","output_path","score","q1","q2","q3","q4","q5"

diff --git a/OMRChecker/outputs/AdrianSample/Results/Results_07PM.csv b/OMRChecker/outputs/AdrianSample/Results/Results_07PM.csv

new file mode 100644

index 0000000000000000000000000000000000000000..23529519c2c8778ff89dd2a8f5c7ce602a34a826

--- /dev/null

+++ b/OMRChecker/outputs/AdrianSample/Results/Results_07PM.csv

@@ -0,0 +1 @@

+"file_id","input_path","output_path","score","q1","q2","q3","q4","q5"

diff --git a/OMRChecker/outputs/CheckedOMRs/OMRImage.jpg b/OMRChecker/outputs/CheckedOMRs/OMRImage.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..aa91e5cd858dfa684d288a51acb4bc1b59b838cd

--- /dev/null

+++ b/OMRChecker/outputs/CheckedOMRs/OMRImage.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:b9104889d79a25e0550e8e1bd258f1c8520ae1162e41b567d5cdfebad64c2ab4

+size 401565

diff --git a/OMRChecker/outputs/CheckedOMRs/OMRSheet.jpg b/OMRChecker/outputs/CheckedOMRs/OMRSheet.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..d8d1a2325170014745edf3503b40e7c7bda160dc

--- /dev/null

+++ b/OMRChecker/outputs/CheckedOMRs/OMRSheet.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:f16c45dda1d02bf06e33a6d219c8282c363bf3943b94960eede1efe2bc6204d9

+size 408744

diff --git a/OMRChecker/outputs/CheckedOMRs/OcrSheetMarked.jpg b/OMRChecker/outputs/CheckedOMRs/OcrSheetMarked.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..aa91e5cd858dfa684d288a51acb4bc1b59b838cd

--- /dev/null

+++ b/OMRChecker/outputs/CheckedOMRs/OcrSheetMarked.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:b9104889d79a25e0550e8e1bd258f1c8520ae1162e41b567d5cdfebad64c2ab4

+size 401565

diff --git a/OMRChecker/outputs/Images/CheckedOMRs/OMRSheet.jpg b/OMRChecker/outputs/Images/CheckedOMRs/OMRSheet.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..3a968368fa191241dda21ea0c41f5d2d840f2e52

--- /dev/null

+++ b/OMRChecker/outputs/Images/CheckedOMRs/OMRSheet.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:7e9678555042535c83ab920d0b616d4f99f9f08017629d46a15eb64d7c3abd3c

+size 407394

diff --git a/OMRChecker/outputs/Images/Manual/ErrorFiles.csv b/OMRChecker/outputs/Images/Manual/ErrorFiles.csv

new file mode 100644

index 0000000000000000000000000000000000000000..84597b76dd63112496537204330caf1a7d436621

--- /dev/null

+++ b/OMRChecker/outputs/Images/Manual/ErrorFiles.csv

@@ -0,0 +1 @@

+"file_id","input_path","output_path","score","q1","q2","q3","q4","q5","q6","q7","q8","q9","q10","q11","q12","q13","q14","q15","q16","q17","q18","q19","q20"

diff --git a/OMRChecker/outputs/Images/Manual/MultiMarkedFiles.csv b/OMRChecker/outputs/Images/Manual/MultiMarkedFiles.csv

new file mode 100644

index 0000000000000000000000000000000000000000..84597b76dd63112496537204330caf1a7d436621

--- /dev/null

+++ b/OMRChecker/outputs/Images/Manual/MultiMarkedFiles.csv

@@ -0,0 +1 @@

+"file_id","input_path","output_path","score","q1","q2","q3","q4","q5","q6","q7","q8","q9","q10","q11","q12","q13","q14","q15","q16","q17","q18","q19","q20"

diff --git a/OMRChecker/outputs/Images/Results/Results_10PM.csv b/OMRChecker/outputs/Images/Results/Results_10PM.csv

new file mode 100644

index 0000000000000000000000000000000000000000..f3b83ec4b12f9e002ca0824ee43fc41f1f9ae30a

--- /dev/null

+++ b/OMRChecker/outputs/Images/Results/Results_10PM.csv

@@ -0,0 +1,2 @@

+"file_id","input_path","output_path","score","q1","q2","q3","q4","q5","q6","q7","q8","q9","q10","q11","q12","q13","q14","q15","q16","q17","q18","q19","q20"

+"OMRSheet.jpg","inputs\Images\OMRSheet.jpg","outputs\Images\CheckedOMRs\OMRSheet.jpg","0","C","D","A","C","B","C","C","D","D","B","B","B","D","C","C","ABCD","B","ABCD","C","ABCD"

diff --git a/OMRChecker/outputs/Manual/ErrorFiles.csv b/OMRChecker/outputs/Manual/ErrorFiles.csv

new file mode 100644

index 0000000000000000000000000000000000000000..a9bb85c601677eefe49c6a75b39d5bd404506322

--- /dev/null

+++ b/OMRChecker/outputs/Manual/ErrorFiles.csv

@@ -0,0 +1,3 @@

+"file_id","input_path","output_path","score","q1","q2","q3","q4","q5","q6","q7","q8","q9","q10","q11","q12","q13","q14","q15","q16","q17","q18","q19","q20"

+"OMRSheet.jpg","inputs\OMRSheet.jpg","outputs\Manual\ErrorFiles\OMRSheet.jpg","NA","","","","","","","","","","","","","","","","","","","",""

+"OMRSheet.jpg","inputs\OMRSheet.jpg","outputs\Manual\ErrorFiles\OMRSheet.jpg","NA","","","","","","","","","","","","","","","","","","","",""

diff --git a/OMRChecker/outputs/Manual/MultiMarkedFiles.csv b/OMRChecker/outputs/Manual/MultiMarkedFiles.csv

new file mode 100644

index 0000000000000000000000000000000000000000..84597b76dd63112496537204330caf1a7d436621

--- /dev/null

+++ b/OMRChecker/outputs/Manual/MultiMarkedFiles.csv

@@ -0,0 +1 @@

+"file_id","input_path","output_path","score","q1","q2","q3","q4","q5","q6","q7","q8","q9","q10","q11","q12","q13","q14","q15","q16","q17","q18","q19","q20"

diff --git a/OMRChecker/outputs/MobileCamera/Manual/ErrorFiles.csv b/OMRChecker/outputs/MobileCamera/Manual/ErrorFiles.csv

new file mode 100644

index 0000000000000000000000000000000000000000..16614444af8b0e2c2eb65aeabd26d58f85ddce32

--- /dev/null

+++ b/OMRChecker/outputs/MobileCamera/Manual/ErrorFiles.csv

@@ -0,0 +1 @@

+"file_id","input_path","output_path","score","Roll","q1","q2","q3","q4","q5","q6","q7","q8","q9","q10","q11","q12","q13","q14","q15","q16","q17","q18","q19","q20"

diff --git a/OMRChecker/outputs/MobileCamera/Manual/MultiMarkedFiles.csv b/OMRChecker/outputs/MobileCamera/Manual/MultiMarkedFiles.csv

new file mode 100644

index 0000000000000000000000000000000000000000..16614444af8b0e2c2eb65aeabd26d58f85ddce32

--- /dev/null

+++ b/OMRChecker/outputs/MobileCamera/Manual/MultiMarkedFiles.csv

@@ -0,0 +1 @@

+"file_id","input_path","output_path","score","Roll","q1","q2","q3","q4","q5","q6","q7","q8","q9","q10","q11","q12","q13","q14","q15","q16","q17","q18","q19","q20"

diff --git a/OMRChecker/outputs/MobileCamera/Results/Results_09PM.csv b/OMRChecker/outputs/MobileCamera/Results/Results_09PM.csv

new file mode 100644

index 0000000000000000000000000000000000000000..16614444af8b0e2c2eb65aeabd26d58f85ddce32

--- /dev/null

+++ b/OMRChecker/outputs/MobileCamera/Results/Results_09PM.csv

@@ -0,0 +1 @@

+"file_id","input_path","output_path","score","Roll","q1","q2","q3","q4","q5","q6","q7","q8","q9","q10","q11","q12","q13","q14","q15","q16","q17","q18","q19","q20"

diff --git a/OMRChecker/pyproject.toml b/OMRChecker/pyproject.toml

new file mode 100644

index 0000000000000000000000000000000000000000..efa55e8fc8bf1623d8569abd406fef4d81270291

--- /dev/null

+++ b/OMRChecker/pyproject.toml

@@ -0,0 +1,18 @@

+[tool.black]

+exclude = '''

+(

+ /(

+ \.eggs # exclude a few common directories in the

+ | \.git # root of the project

+ | \.venv

+ | _build

+ | build

+ | dist

+ )/

+ | foo.py # also separately exclude a file named foo.py in

+ # the root of the project

+)

+'''

+include = '\.pyi?$'

+line-length = 88

+target-version = ['py37']

diff --git a/OMRChecker/pytest.ini b/OMRChecker/pytest.ini

new file mode 100644

index 0000000000000000000000000000000000000000..417c4a2dd810294aa9afaa9b4f73464fc9808c96

--- /dev/null

+++ b/OMRChecker/pytest.ini

@@ -0,0 +1,6 @@

+# pytest.ini

+[pytest]

+minversion = 7.0

+addopts = -qq --capture=no

+testpaths =

+ src/tests

diff --git a/OMRChecker/requirements.dev.txt b/OMRChecker/requirements.dev.txt

new file mode 100644

index 0000000000000000000000000000000000000000..fb31b5f80558481a3f2a5edbb741b363a1bd1089

--- /dev/null

+++ b/OMRChecker/requirements.dev.txt

@@ -0,0 +1,7 @@

+-r requirements.txt

+flake8>=6.0.0

+freezegun>=1.2.2

+pre-commit>=3.3.3

+pytest-mock>=3.11.1

+pytest>=7.4.0

+syrupy>=4.0.4

diff --git a/OMRChecker/requirements.txt b/OMRChecker/requirements.txt

new file mode 100644

index 0000000000000000000000000000000000000000..d0c3af875835b492a48ef689c3dc9335eb7cdca7

--- /dev/null

+++ b/OMRChecker/requirements.txt

@@ -0,0 +1,8 @@

+deepmerge>=1.1.0

+dotmap>=1.3.30

+jsonschema>=4.17.3

+matplotlib>=3.7.1

+numpy>=1.25.0

+pandas>=2.0.2

+rich>=13.4.2

+screeninfo>=0.8.1

diff --git a/OMRChecker/samples/answer-key/using-csv/adrian_omr.png b/OMRChecker/samples/answer-key/using-csv/adrian_omr.png

new file mode 100644

index 0000000000000000000000000000000000000000..d8db0994df2dcfaeb67ff667abd2edb55b47f927

Binary files /dev/null and b/OMRChecker/samples/answer-key/using-csv/adrian_omr.png differ

diff --git a/OMRChecker/samples/answer-key/using-csv/answer_key.csv b/OMRChecker/samples/answer-key/using-csv/answer_key.csv

new file mode 100644

index 0000000000000000000000000000000000000000..acf09be611a66dfaeb7127e7249eadfbba6b0881

--- /dev/null

+++ b/OMRChecker/samples/answer-key/using-csv/answer_key.csv

@@ -0,0 +1,5 @@

+q1,C

+q2,E

+q3,A

+q4,B

+q5,B

\ No newline at end of file

diff --git a/OMRChecker/samples/answer-key/using-csv/evaluation.json b/OMRChecker/samples/answer-key/using-csv/evaluation.json

new file mode 100644

index 0000000000000000000000000000000000000000..69b0ef8c94df580b6190a41bb1eeacba73323762

--- /dev/null

+++ b/OMRChecker/samples/answer-key/using-csv/evaluation.json

@@ -0,0 +1,14 @@

+{

+ "source_type": "csv",

+ "options": {

+ "answer_key_csv_path": "answer_key.csv",

+ "should_explain_scoring": true

+ },

+ "marking_schemes": {

+ "DEFAULT": {

+ "correct": "1",

+ "incorrect": "0",

+ "unmarked": "0"

+ }

+ }

+}

diff --git a/OMRChecker/samples/answer-key/using-csv/template.json b/OMRChecker/samples/answer-key/using-csv/template.json

new file mode 100644

index 0000000000000000000000000000000000000000..25db408b27c65a7ec5b570507403b9e439a26452

--- /dev/null

+++ b/OMRChecker/samples/answer-key/using-csv/template.json

@@ -0,0 +1,35 @@

+{

+ "pageDimensions": [

+ 300,

+ 400

+ ],

+ "bubbleDimensions": [

+ 25,

+ 25

+ ],

+ "preProcessors": [

+ {

+ "name": "CropPage",

+ "options": {

+ "morphKernel": [

+ 10,

+ 10

+ ]

+ }

+ }

+ ],

+ "fieldBlocks": {

+ "MCQ_Block_1": {

+ "fieldType": "QTYPE_MCQ5",

+ "origin": [

+ 65,

+ 60

+ ],

+ "fieldLabels": [

+ "q1..5"

+ ],

+ "labelsGap": 52,

+ "bubblesGap": 41

+ }

+ }

+}

diff --git a/OMRChecker/samples/answer-key/weighted-answers/evaluation.json b/OMRChecker/samples/answer-key/weighted-answers/evaluation.json

new file mode 100644

index 0000000000000000000000000000000000000000..09508b18e3490efcd68d6cb0b57a03d6e203f2ca

--- /dev/null

+++ b/OMRChecker/samples/answer-key/weighted-answers/evaluation.json

@@ -0,0 +1,35 @@

+{

+ "source_type": "custom",

+ "options": {

+ "questions_in_order": [

+ "q1..5"

+ ],

+ "answers_in_order": [

+ "C",

+ "E",

+ [

+ "A",

+ "C"

+ ],

+ [

+ [

+ "B",

+ 2

+ ],

+ [

+ "C",

+ "3/2"

+ ]

+ ],

+ "C"

+ ],

+ "should_explain_scoring": true

+ },

+ "marking_schemes": {

+ "DEFAULT": {

+ "correct": "3",

+ "incorrect": "-1",

+ "unmarked": "0"

+ }

+ }

+}

diff --git a/OMRChecker/samples/answer-key/weighted-answers/images/adrian_omr.png b/OMRChecker/samples/answer-key/weighted-answers/images/adrian_omr.png

new file mode 100644

index 0000000000000000000000000000000000000000..215ef154d463851576758b4eeababd196359e9ac

--- /dev/null

+++ b/OMRChecker/samples/answer-key/weighted-answers/images/adrian_omr.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:85b11881d4b3a62002dfb411a2a4fa1b5ee3816d4d26e07924681e29c3fe68e4

+size 105167

diff --git a/OMRChecker/samples/answer-key/weighted-answers/images/adrian_omr_2.png b/OMRChecker/samples/answer-key/weighted-answers/images/adrian_omr_2.png

new file mode 100644

index 0000000000000000000000000000000000000000..d8db0994df2dcfaeb67ff667abd2edb55b47f927

Binary files /dev/null and b/OMRChecker/samples/answer-key/weighted-answers/images/adrian_omr_2.png differ

diff --git a/OMRChecker/samples/answer-key/weighted-answers/template.json b/OMRChecker/samples/answer-key/weighted-answers/template.json

new file mode 100644

index 0000000000000000000000000000000000000000..25db408b27c65a7ec5b570507403b9e439a26452

--- /dev/null

+++ b/OMRChecker/samples/answer-key/weighted-answers/template.json

@@ -0,0 +1,35 @@

+{

+ "pageDimensions": [

+ 300,

+ 400

+ ],

+ "bubbleDimensions": [

+ 25,

+ 25

+ ],

+ "preProcessors": [

+ {

+ "name": "CropPage",

+ "options": {

+ "morphKernel": [

+ 10,

+ 10

+ ]

+ }

+ }

+ ],

+ "fieldBlocks": {

+ "MCQ_Block_1": {

+ "fieldType": "QTYPE_MCQ5",

+ "origin": [

+ 65,

+ 60

+ ],

+ "fieldLabels": [

+ "q1..5"

+ ],

+ "labelsGap": 52,

+ "bubblesGap": 41

+ }

+ }

+}

diff --git a/OMRChecker/samples/community/Antibodyy/simple_omr_sheet.jpg b/OMRChecker/samples/community/Antibodyy/simple_omr_sheet.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..661d5f4fa73d7eea57e73837cf3862a25b9efcc3

Binary files /dev/null and b/OMRChecker/samples/community/Antibodyy/simple_omr_sheet.jpg differ

diff --git a/OMRChecker/samples/community/Antibodyy/template.json b/OMRChecker/samples/community/Antibodyy/template.json

new file mode 100644

index 0000000000000000000000000000000000000000..55e016e8eb402156d62f6553067b68abb37377ee

--- /dev/null

+++ b/OMRChecker/samples/community/Antibodyy/template.json

@@ -0,0 +1,35 @@

+{

+ "pageDimensions": [

+ 299,

+ 398

+ ],

+ "bubbleDimensions": [

+ 42,

+ 42

+ ],

+ "fieldBlocks": {

+ "MCQBlock1": {

+ "fieldType": "QTYPE_MCQ5",

+ "origin": [

+ 65,

+ 79

+ ],

+ "bubblesGap": 43,

+ "labelsGap": 50,

+ "fieldLabels": [

+ "q1..6"

+ ]

+ }

+ },

+ "preProcessors": [

+ {

+ "name": "CropPage",

+ "options": {

+ "morphKernel": [

+ 10,

+ 10

+ ]

+ }

+ }

+ ]

+}

diff --git a/OMRChecker/samples/community/Sandeep-1507/omr-1.png b/OMRChecker/samples/community/Sandeep-1507/omr-1.png

new file mode 100644

index 0000000000000000000000000000000000000000..5ed00d194a806d58c3db1dab7831796df7d4aaef

--- /dev/null

+++ b/OMRChecker/samples/community/Sandeep-1507/omr-1.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:ed3024e5b709fbbdb1bcbef35dcfa50203ddb811f74b26e657d3f1b86c7e8c94

+size 381247

diff --git a/OMRChecker/samples/community/Sandeep-1507/omr-2.png b/OMRChecker/samples/community/Sandeep-1507/omr-2.png

new file mode 100644

index 0000000000000000000000000000000000000000..d250db2c94980defb7ab78f9114ca56c1f223024

--- /dev/null

+++ b/OMRChecker/samples/community/Sandeep-1507/omr-2.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:571109a06a09b792694a0ecac329283da199ac4495b1f10ffb7e5925c76a75c9

+size 130973

diff --git a/OMRChecker/samples/community/Sandeep-1507/omr-3.png b/OMRChecker/samples/community/Sandeep-1507/omr-3.png

new file mode 100644

index 0000000000000000000000000000000000000000..c414be1c6fa93808d3419715e2e610884e233926

--- /dev/null

+++ b/OMRChecker/samples/community/Sandeep-1507/omr-3.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:3976c705b45d889b06acc4bf82b27f129ad55c4875ee2aa6eb01aecf5a0250f6

+size 144032

diff --git a/OMRChecker/samples/community/Sandeep-1507/template.json b/OMRChecker/samples/community/Sandeep-1507/template.json

new file mode 100644

index 0000000000000000000000000000000000000000..bdb929ccd1a61c3ae54df4cdd6a8cf4281369733

--- /dev/null

+++ b/OMRChecker/samples/community/Sandeep-1507/template.json

@@ -0,0 +1,234 @@

+{

+ "pageDimensions": [

+ 1189,

+ 1682

+ ],

+ "bubbleDimensions": [

+ 15,

+ 15

+ ],

+ "preProcessors": [

+ {

+ "name": "GaussianBlur",

+ "options": {

+ "kSize": [

+ 3,

+ 3

+ ],

+ "sigmaX": 0

+ }

+ }

+ ],

+ "customLabels": {

+ "Booklet_No": [

+ "b1..7"

+ ]

+ },

+ "fieldBlocks": {

+ "Booklet_No": {

+ "fieldType": "QTYPE_INT",

+ "origin": [

+ 112,

+ 530

+ ],

+ "fieldLabels": [

+ "b1..7"

+ ],

+ "emptyValue": "no",

+ "bubblesGap": 28,

+ "labelsGap": 26.5

+ },

+ "MCQBlock1a1": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q1..10"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 476,

+ 100

+ ]

+ },

+ "MCQBlock1a2": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q11..20"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 476,

+ 370

+ ]

+ },

+ "MCQBlock1a3": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q21..35"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 476,

+ 638

+ ]

+ },

+ "MCQBlock2a1": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q51..60"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 645,

+ 100

+ ]

+ },

+ "MCQBlock2a2": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q61..70"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 645,

+ 370

+ ]

+ },

+ "MCQBlock2a3": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q71..85"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 645,

+ 638

+ ]

+ },

+ "MCQBlock3a1": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q101..110"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 815,

+ 100

+ ]

+ },

+ "MCQBlock3a2": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q111..120"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 815,

+ 370

+ ]

+ },

+ "MCQBlock3a3": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q121..135"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 815,

+ 638

+ ]

+ },

+ "MCQBlock4a1": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q151..160"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 983,

+ 100

+ ]

+ },

+ "MCQBlock4a2": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q161..170"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 983,

+ 370

+ ]

+ },

+ "MCQBlock4a3": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q171..185"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 983,

+ 638

+ ]

+ },

+ "MCQBlock1a": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q36..50"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 480,

+ 1061

+ ]

+ },

+ "MCQBlock2a": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q86..100"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 648,

+ 1061

+ ]

+ },

+ "MCQBlock3a": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q136..150"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.7,

+ "origin": [

+ 815,

+ 1061

+ ]

+ },

+ "MCQBlock4a": {

+ "fieldType": "QTYPE_MCQ4",

+ "fieldLabels": [

+ "q186..200"

+ ],

+ "bubblesGap": 28.7,

+ "labelsGap": 26.6,

+ "origin": [

+ 986,

+ 1061

+ ]

+ }

+ }

+}

diff --git a/OMRChecker/samples/community/Shamanth/omr_sheet_01.png b/OMRChecker/samples/community/Shamanth/omr_sheet_01.png

new file mode 100644

index 0000000000000000000000000000000000000000..7c317db90f80fdd8d3dcfbbadcb1f9b764e619e3

--- /dev/null

+++ b/OMRChecker/samples/community/Shamanth/omr_sheet_01.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:9dd72b86ce9c6544f4a628187b4021df5746e9579222e7598c4d88846f2fbeb3

+size 107647

diff --git a/OMRChecker/samples/community/Shamanth/template.json b/OMRChecker/samples/community/Shamanth/template.json

new file mode 100644

index 0000000000000000000000000000000000000000..7d8c34d5e4b7af5ca94415b5096c82300156bcbc

--- /dev/null

+++ b/OMRChecker/samples/community/Shamanth/template.json

@@ -0,0 +1,25 @@

+{

+ "pageDimensions": [

+ 300,

+ 400

+ ],

+ "bubbleDimensions": [

+ 20,

+ 20

+ ],

+ "fieldBlocks": {

+ "MCQBlock1": {

+ "fieldType": "QTYPE_MCQ4",

+ "origin": [

+ 78,

+ 41

+ ],

+ "fieldLabels": [

+ "q21..28"

+ ],

+ "bubblesGap": 56,

+ "labelsGap": 46

+ }

+ },

+ "preProcessors": []

+}

diff --git a/OMRChecker/samples/community/UPSC-mock/answer_key.jpg b/OMRChecker/samples/community/UPSC-mock/answer_key.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..9d39b263e8fe5c23e391ad39bd0905efbf976038

--- /dev/null

+++ b/OMRChecker/samples/community/UPSC-mock/answer_key.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:13bb31818680ca8497ee4c640a15d4acab2048cf11c973d758c8c75fd71c73be

+size 291737

diff --git a/OMRChecker/samples/community/UPSC-mock/config.json b/OMRChecker/samples/community/UPSC-mock/config.json

new file mode 100644

index 0000000000000000000000000000000000000000..388060a33b04db4b550d0efecafd32aa277355d5

--- /dev/null

+++ b/OMRChecker/samples/community/UPSC-mock/config.json