“clover2024” committed on

Commit 88e9f42 · 1 Parent(s): 3ff4fba

init

- .gitattributes +4 -0
- README.md +50 -0
- app.py +55 -0
- med_faq/index.faiss +3 -0
- med_faq/index.pkl +3 -0

.gitattributes

CHANGED

@@ -33,3 +33,7 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+*.faiss filter=lfs diff=lfs merge=lfs -text
+med_faq/index.faiss filter=lfs diff=lfs merge=lfs -text
+med_faq/index. filter=lfs diff=lfs merge=lfs -text
+med_faq/index.pkl filter=lfs diff=lfs merge=lfs -text

README.md

CHANGED

@@ -8,5 +8,55 @@ sdk_version: 4.18.0
 app_file: app.py
 pinned: false
 ---
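+# LangChain in Practice: A Medical Question Chatbot
+
+## Introduction
+
+In this article, we use the LangChain library to build a medical question chatbot. LangChain is a library for building language-based AI applications; it provides a set of tools and modules that make it easier for developers to build many kinds of chatbots.
+
+We use the following LangChain features:
+
+- Document Transformers: the text-processing module, used to preprocess and clean text data.
+- RetrievalQA: the retrieval-based question-answering module, used to build the chatbot.
+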
| 22 |

+

## 数据集

|

| 23 |

+

|

| 24 |

+

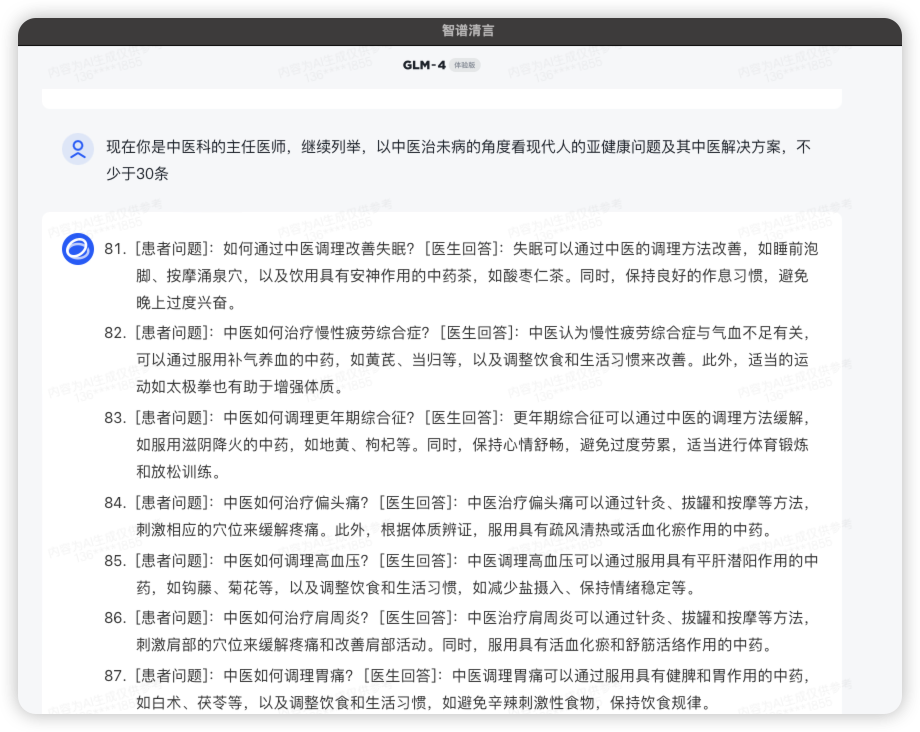

使用 ChatGLM-4 构造医疗问题问答数据的 Prompt 示例:

|

| 25 |

+

|

| 26 |

+

```text

|

| 27 |

+

你是中国协和医院顶级的全科医生,现在向大众普及培训医疗知识,请给出100个常见的患者提出的医疗问题及其建议解决方案。

|

| 28 |

+

每条以如下格式给出:

|

| 29 |

+

[患者问题]

|

| 30 |

+

[医生回答]

|

| 31 |

+

|

| 32 |

+

```

|

| 33 |

+

|

| 34 |

+

因为后台单次token的限制,只列举了20条,其余要求其继续输出或构造如下 Prompt:

|

| 35 |

+

|

| 36 |

+

```text

|

| 37 |

+

现在你是精神科的主任医师,列举中小学生、大学生、程序员会遇到的常见精神疾病问题与建议解决方案,15条

|

| 38 |

+

```

|

| 39 |

+

|

| 40 |

+

界面展示:

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

## 如何运行

|

| 45 |

+

|

| 46 |

+

Git 提交已经包含了生成的 Chroma 数据库 `med_faq`,直接运行 `med-bot.py` 即可。

|

| 47 |

+

|

| 48 |

+

## 效果

|

| 49 |

+

|

| 50 |

+

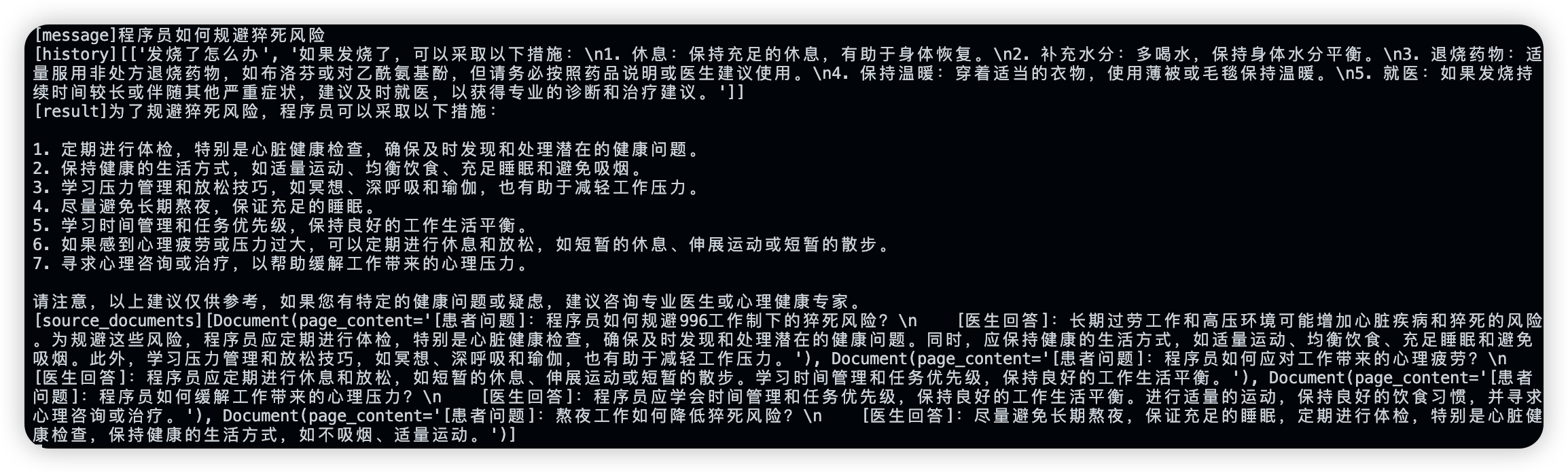

Gradio 运行界面

|

| 51 |

+

|

| 52 |

+

|

| 53 |

+

|

| 54 |

+

后台日志展示

|

| 55 |

+

|

| 56 |

+

|

| 57 |

+

|

| 58 |

+

基于 DjangoPeng 的 sales-chatbot 房产销售机器人开发

|

| 59 |

+

|

| 60 |

+

医疗问答机器人 med-bot 二次开发:CloverWang

|

| 61 |

|

| 62 |

Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

|
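
The dataset prompt in the README asks the model to emit each entry as a `[患者问题]` (patient question) block followed by a `[医生回答]` (doctor's answer) block. The commit does not show how that output was turned into indexable records, so the following is only a minimal sketch of one way to do it. The function name `parse_qa_pairs` and the sample text are hypothetical.

```python
import re
from typing import List, Tuple


def parse_qa_pairs(
    raw: str,
    question_tag: str = "[患者问题]",  # marker from the original prompt
    answer_tag: str = "[医生回答]",    # marker from the original prompt
) -> List[Tuple[str, str]]:
    """Split model output into (question, answer) pairs.

    Assumes every entry is a question tag, free text, an answer tag,
    and free text, in that order. Entries with an empty side are skipped.
    """
    pattern = re.compile(
        re.escape(question_tag)
        + r"(.*?)"
        + re.escape(answer_tag)
        + r"(.*?)(?="
        + re.escape(question_tag)
        + r"|\Z)",
        re.DOTALL,
    )
    pairs = []
    for question, answer in pattern.findall(raw):
        question, answer = question.strip(), answer.strip()
        if question and answer:
            pairs.append((question, answer))
    return pairs


# Hypothetical sample in the format the prompt requests.
sample = (
    "[患者问题]\nQ1\n[医生回答]\nA1\n\n"
    "[患者问题]\nQ2\n[医生回答]\nA2\n"
)
pairs = parse_qa_pairs(sample)
```

Keeping each question together with its answer as one record means a retrieved chunk always carries the full answer, which suits a FAQ-style vector store.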

app.py

ADDED

@@ -0,0 +1,55 @@
+# These are only suggestions; everyone's health and needs may differ. If a health problem or symptom persists, consult a doctor or another medical professional.
+import gradio as gr
+
+from langchain_openai import OpenAIEmbeddings
+from langchain_community.vectorstores import FAISS
+from langchain.chains import RetrievalQA
+from langchain_openai import ChatOpenAI
+
+def initialize_MED_BOT(vector_store_dir: str="med_faq"):
+    db = FAISS.load_local(vector_store_dir, OpenAIEmbeddings())
+    llm = ChatOpenAI(model_name="gpt-3.5-turbo", temperature=0)
+
+    global MED_BOT
+    MED_BOT = RetrievalQA.from_chain_type(llm,
+                                           retriever=db.as_retriever(search_type="similarity_score_threshold",
+                                                                     search_kwargs={"score_threshold": 0.75}))
+    # Also return the documents retrieved from the vector store
+    MED_BOT.return_source_documents = True
+
+    return MED_BOT
+
+def med_chat(message, history):
+    print(f"[message]{message}")
+    print(f"[history]{history}")
+    # TODO: read this from a command-line argument
+    enable_chat = True
+
+    ans = MED_BOT({"query": message})
+    # If retrieval found results, or LLM chat mode is enabled,
+    # return the result combined by RetrievalQA's combine_documents_chain
+    if ans["source_documents"] or enable_chat:
+        print(f"[result]{ans['result']}")
+        print(f"[source_documents]{ans['source_documents']}")
+        return ans["result"]
+    # Otherwise give a more conservative reply
+    else:
+        return "这个问题"  # "This question" (fallback text as committed)
+
+
+def launch_gradio():
+    demo = gr.ChatInterface(
+        fn=med_chat,
+        title="常见医疗问题问答机器人",  # "Common medical questions Q&A bot"
+        # retry_btn=None,
+        # undo_btn=None,
+        chatbot=gr.Chatbot(height=600),
+    )
+
+    demo.launch(share=True, server_name="127.0.0.1")
+
+if __name__ == "__main__":
+    # Initialize the medical Q&A bot
+    initialize_MED_BOT()
+    # Start the Gradio service
+    launch_gradio()
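
`app.py` combines two decisions: the retriever keeps only documents whose score reaches `score_threshold` (0.75), and `med_chat` returns the chain's answer when something was retrieved or `enable_chat` is on, otherwise a fallback string. The sketch below illustrates that logic in plain Python, with no LangChain or OpenAI calls. It uses cosine similarity as a stand-in score, which is an assumption: the score LangChain actually compares against the threshold depends on the vector store's relevance function. The names `retrieve` and `choose_reply` and the toy vectors are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(
    query_vec: Sequence[float],
    corpus: List[Tuple[str, Sequence[float]]],
    score_threshold: float = 0.75,
) -> List[str]:
    """Return texts scoring at or above the threshold, best match first."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in corpus]
    kept = [pair for pair in scored if pair[0] >= score_threshold]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in kept]


def choose_reply(result: str, source_documents: list,
                 enable_chat: bool, fallback: str) -> str:
    """Mirror the branch in med_chat: answer if grounded or chat mode is on."""
    if source_documents or enable_chat:
        return result
    return fallback


# Toy two-dimensional "embeddings" (hypothetical data).
corpus = [
    ("fever advice", [1.0, 0.0]),
    ("sleep advice", [0.0, 1.0]),
    ("cold advice", [0.9, 0.1]),
]
hits = retrieve([1.0, 0.0], corpus)
```

With `enable_chat` hard-coded to `True`, as in the committed code, the fallback branch is never reached; setting it to `False` makes the bot answer only when the FAQ index returns a sufficiently similar entry.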

med_faq/index.faiss

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:3dd685f68aead12d482ab7fbfddf528b700202d511b57e9e28d94e4e7af137b8
+size 1296429

med_faq/index.pkl

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:0c16c9860442b21bba77e7f34e8626058527a7818484c6e2c6d74833a4dc097a
+size 84403
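
Both `med_faq` files are committed as Git LFS pointers rather than as binary content: three `key value` lines giving the spec version, a SHA-256 object ID, and the size in bytes. As a rough sketch, a pointer can be parsed and checked against a downloaded file as shown below. The helper names `parse_lfs_pointer` and `matches_pointer` are hypothetical and are not part of Git LFS or of this commit.

```python
import hashlib
from typing import Any, Dict


def parse_lfs_pointer(text: str) -> Dict[str, Any]:
    """Parse the version, oid, and size lines of a Git LFS pointer."""
    fields = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(data: bytes, pointer: Dict[str, Any]) -> bool:
    """Check that raw bytes have the size and SHA-256 digest in the pointer."""
    if pointer["hash_algo"] != "sha256":
        return False
    if len(data) != pointer["size"]:
        return False
    return hashlib.sha256(data).hexdigest() == pointer["digest"]


# Pointer text for med_faq/index.faiss, copied from the diff above.
faiss_pointer = parse_lfs_pointer(
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:3dd685f68aead12d482ab7fbfddf528b700202d511b57e9e28d94e4e7af137b8\n"
    "size 1296429\n"
)
```

A check like this can catch the case where a clone made without LFS support leaves the small pointer file on disk in place of the real index, which would make loading the vector store fail.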