+

+  +

+  +

+ +

+ +

+ +

++  +

+ |

+

| Source Video | +Output Video | +

|---|---|

|

+ raccoon playing a guitar

+ +  +

+ |

+

+ a panda, playing a guitar, sitting in a pink boat, in the ocean, mountains in background, realistic, high quality

+ +  +

+ |

+

+  +

+ |

+

+  +

+ |

+

+  +

+ |

+

+  +

+ |

+ ||

+  +

+ |

+

+  +

+ |

+

| Source Video | +Output Video | +

|---|---|

|

+ raccoon playing a guitar

+ +  +

+ |

+

+ panda playing a guitar

+ +  +

+ |

+

|

+ closeup of margot robbie, fireworks in the background, high quality

+ +  +

+ |

+

+ closeup of tony stark, robert downey jr, fireworks

+ +  +

+ |

+

| Source Video | +Output Video | +

|---|---|

|

+ anime girl, dancing

+ +  +

+ |

+

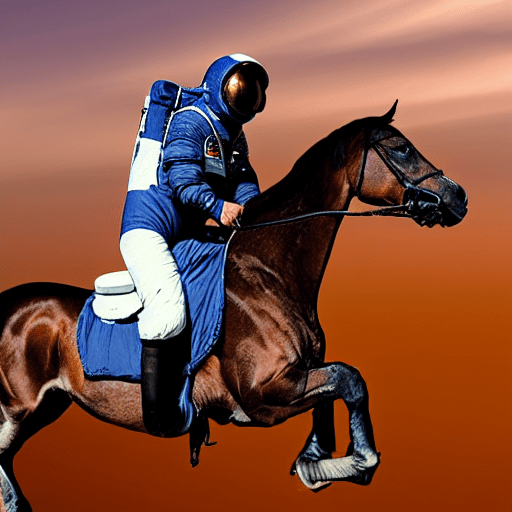

+ astronaut in space, dancing

+ +  +

+ |

+

+  +

+ |

+

+  +

+ |

+

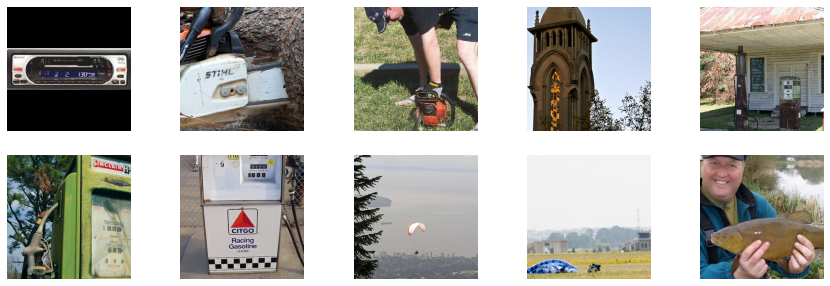

| Without FreeInit enabled | +With FreeInit enabled | +

|---|---|

|

+ panda playing a guitar

+ +  +

+ |

+

+ panda playing a guitar

+ +  +

+ |

+

+  +

+ |

+

+  +

+ |

+

+  +

+ |

+

+ +Pipelines do not offer any training functionality. You'll notice PyTorch's autograd is disabled by decorating the [`~DiffusionPipeline.__call__`] method with a [`torch.no_grad`](https://pytorch.org/docs/stable/generated/torch.no_grad.html) decorator because pipelines should not be used for training. If you're interested in training, please take a look at the [Training](../../training/overview) guides instead! + +

+  +

+ |

+

+  +

+ |

+

| + Pipeline + | ++ Supported tasks + | ++ 🤗 Space + | +

|---|---|---|

| + StableDiffusion + | +text-to-image | + +

+ |

+

| + StableDiffusionImg2Img + | +image-to-image | + +

+ |

+

| + StableDiffusionInpaint + | +inpainting | + +

+ |

+

| + StableDiffusionDepth2Img + | +depth-to-image | + +

+ |

+

| + StableDiffusionImageVariation + | +image variation | + +

+ |

+

| + StableDiffusionPipelineSafe + | +filtered text-to-image | + +

+ |

+

| + StableDiffusion2 + | +text-to-image, inpainting, depth-to-image, super-resolution | + +

+ |

+

| + StableDiffusionXL + | +text-to-image, image-to-image | + +

+ |

+

| + StableDiffusionLatentUpscale + | +super-resolution | + +

+ |

+

| + StableDiffusionUpscale + | +super-resolution | +|

| + StableDiffusionLDM3D + | +text-to-rgb, text-to-depth, text-to-pano | + +

+ |

+

| + StableDiffusionUpscaleLDM3D + | +ldm3d super-resolution | +

+ +Check out the [Stability AI](https://huggingface.co/stabilityai) Hub organization for the [base](https://huggingface.co/stabilityai/stable-video-diffusion-img2vid) and [extended frame](https://huggingface.co/stabilityai/stable-video-diffusion-img2vid-xt) checkpoints! + +

+  +

+ |

+ +  +

+ |

+

+  +

+ |

+

| Project Name | +Description | +

|---|---|

| dream-textures | +Stable Diffusion built-in to Blender | +

| HiDiffusion | +Increases the resolution and speed of your diffusion model by only adding a single line of code | +

| IC-Light | +IC-Light is a project to manipulate the illumination of images | +

| InstantID | +InstantID : Zero-shot Identity-Preserving Generation in Seconds | +

| IOPaint | +Image inpainting tool powered by SOTA AI Model. Remove any unwanted object, defect, people from your pictures or erase and replace(powered by stable diffusion) any thing on your pictures. | +

| Kohya | +Gradio GUI for Kohya's Stable Diffusion trainers | +

| MagicAnimate | +MagicAnimate: Temporally Consistent Human Image Animation using Diffusion Model | +

| OOTDiffusion | +Outfitting Fusion based Latent Diffusion for Controllable Virtual Try-on | +

| SD.Next | +SD.Next: Advanced Implementation of Stable Diffusion and other Diffusion-based generative image models | +

| stable-dreamfusion | +Text-to-3D & Image-to-3D & Mesh Exportation with NeRF + Diffusion | +

| StoryDiffusion | +StoryDiffusion can create a magic story by generating consistent images and videos. | +

| StreamDiffusion | +A Pipeline-Level Solution for Real-Time Interactive Generation | +

| Stable Diffusion Server | +A server configured for Inpainting/Generation/img2img with one stable diffusion model | +

+

+Whichever way you choose to contribute, we strive to be part of an open, welcoming, and kind community. Please, read our [code of conduct](https://github.com/huggingface/diffusers/blob/main/CODE_OF_CONDUCT.md) and be mindful to respect it during your interactions. We also recommend you become familiar with the [ethical guidelines](https://huggingface.co/docs/diffusers/conceptual/ethical_guidelines) that guide our project and ask you to adhere to the same principles of transparency and responsibility.

+

+We enormously value feedback from the community, so please do not be afraid to speak up if you believe you have valuable feedback that can help improve the library - every message, comment, issue, and pull request (PR) is read and considered.

+

+## Overview

+

+You can contribute in many ways ranging from answering questions on issues and discussions to adding new diffusion models to the core library.

+

+In the following, we give an overview of different ways to contribute, ranked by difficulty in ascending order. All of them are valuable to the community.

+

+* 1. Asking and answering questions on [the Diffusers discussion forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers) or on [Discord](https://discord.gg/G7tWnz98XR).

+* 2. Opening new issues on [the GitHub Issues tab](https://github.com/huggingface/diffusers/issues/new/choose) or new discussions on [the GitHub Discussions tab](https://github.com/huggingface/diffusers/discussions/new/choose).

+* 3. Answering issues on [the GitHub Issues tab](https://github.com/huggingface/diffusers/issues) or discussions on [the GitHub Discussions tab](https://github.com/huggingface/diffusers/discussions).

+* 4. Fix a simple issue, marked by the "Good first issue" label, see [here](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22).

+* 5. Contribute to the [documentation](https://github.com/huggingface/diffusers/tree/main/docs/source).

+* 6. Contribute a [Community Pipeline](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3Acommunity-examples).

+* 7. Contribute to the [examples](https://github.com/huggingface/diffusers/tree/main/examples).

+* 8. Fix a more difficult issue, marked by the "Good second issue" label, see [here](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22Good+second+issue%22).

+* 9. Add a new pipeline, model, or scheduler, see ["New Pipeline/Model"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+pipeline%2Fmodel%22) and ["New scheduler"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+scheduler%22) issues. For this contribution, please have a look at [Design Philosophy](https://github.com/huggingface/diffusers/blob/main/PHILOSOPHY.md).

+

+As said before, **all contributions are valuable to the community**.

+In the following, we will explain each contribution a bit more in detail.

+

+For all contributions 4 - 9, you will need to open a PR. It is explained in detail how to do so in [Opening a pull request](#how-to-open-a-pr).

+

+### 1. Asking and answering questions on the Diffusers discussion forum or on the Diffusers Discord

+

+Any question or comment related to the Diffusers library can be asked on the [discussion forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/) or on [Discord](https://discord.gg/G7tWnz98XR). Such questions and comments include (but are not limited to):

+- Reports of training or inference experiments in an attempt to share knowledge

+- Presentation of personal projects

+- Questions to non-official training examples

+- Project proposals

+- General feedback

+- Paper summaries

+- Asking for help on personal projects that build on top of the Diffusers library

+- General questions

+- Ethical questions regarding diffusion models

+- ...

+

+Every question that is asked on the forum or on Discord actively encourages the community to publicly

+share knowledge and might very well help a beginner in the future who has the same question you're

+having. Please do pose any questions you might have.

+In the same spirit, you are of immense help to the community by answering such questions because this way you are publicly documenting knowledge for everybody to learn from.

+

+**Please** keep in mind that the more effort you put into asking or answering a question, the higher

+the quality of the publicly documented knowledge. In the same way, well-posed and well-answered questions create a high-quality knowledge database accessible to everybody, while badly posed questions or answers reduce the overall quality of the public knowledge database.

+In short, a high quality question or answer is *precise*, *concise*, *relevant*, *easy-to-understand*, *accessible*, and *well-formatted/well-posed*. For more information, please have a look through the [How to write a good issue](#how-to-write-a-good-issue) section.

+

+**NOTE about channels**:

+[*The forum*](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) is much better indexed by search engines, such as Google. Posts are ranked by popularity rather than chronologically. Hence, it's easier to look up questions and answers that we posted some time ago.

+In addition, questions and answers posted in the forum can easily be linked to.

+In contrast, *Discord* has a chat-like format that invites fast back-and-forth communication.

+While it will most likely take less time for you to get an answer to your question on Discord, your

+question won't be visible anymore over time. Also, it's much harder to find information that was posted a while back on Discord. We therefore strongly recommend using the forum for high-quality questions and answers in an attempt to create long-lasting knowledge for the community. If discussions on Discord lead to very interesting answers and conclusions, we recommend posting the results on the forum to make the information more available for future readers.

+

+### 2. Opening new issues on the GitHub issues tab

+

+The 🧨 Diffusers library is robust and reliable thanks to the users who notify us of

+the problems they encounter. So thank you for reporting an issue.

+

+Remember, GitHub issues are reserved for technical questions directly related to the Diffusers library, bug reports, feature requests, or feedback on the library design.

+

+In a nutshell, this means that everything that is **not** related to the **code of the Diffusers library** (including the documentation) should **not** be asked on GitHub, but rather on either the [forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) or [Discord](https://discord.gg/G7tWnz98XR).

+

+**Please consider the following guidelines when opening a new issue**:

+- Make sure you have searched whether your issue has already been asked before (use the search bar on GitHub under Issues).

+- Please never report a new issue on another (related) issue. If another issue is highly related, please

+open a new issue nevertheless and link to the related issue.

+- Make sure your issue is written in English. Please use one of the great, free online translation services, such as [DeepL](https://www.deepl.com/translator) to translate from your native language to English if you are not comfortable in English.

+- Check whether your issue might be solved by updating to the newest Diffusers version. Before posting your issue, please make sure that `python -c "import diffusers; print(diffusers.__version__)"` is higher or matches the latest Diffusers version.

+- Remember that the more effort you put into opening a new issue, the higher the quality of your answer will be and the better the overall quality of the Diffusers issues.

+

+New issues usually include the following.

+

+#### 2.1. Reproducible, minimal bug reports

+

+A bug report should always have a reproducible code snippet and be as minimal and concise as possible.

+This means in more detail:

+- Narrow the bug down as much as you can, **do not just dump your whole code file**.

+- Format your code.

+- Do not include any external libraries except for Diffusers depending on them.

+- **Always** provide all necessary information about your environment; for this, you can run: `diffusers-cli env` in your shell and copy-paste the displayed information to the issue.

+- Explain the issue. If the reader doesn't know what the issue is and why it is an issue, (s)he cannot solve it.

+- **Always** make sure the reader can reproduce your issue with as little effort as possible. If your code snippet cannot be run because of missing libraries or undefined variables, the reader cannot help you. Make sure your reproducible code snippet is as minimal as possible and can be copy-pasted into a simple Python shell.

+- If in order to reproduce your issue a model and/or dataset is required, make sure the reader has access to that model or dataset. You can always upload your model or dataset to the [Hub](https://huggingface.co) to make it easily downloadable. Try to keep your model and dataset as small as possible, to make the reproduction of your issue as effortless as possible.

+

+For more information, please have a look through the [How to write a good issue](#how-to-write-a-good-issue) section.

+

+You can open a bug report [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=bug&projects=&template=bug-report.yml).

+

+#### 2.2. Feature requests

+

+A world-class feature request addresses the following points:

+

+1. Motivation first:

+* Is it related to a problem/frustration with the library? If so, please explain

+why. Providing a code snippet that demonstrates the problem is best.

+* Is it related to something you would need for a project? We'd love to hear

+about it!

+* Is it something you worked on and think could benefit the community?

+Awesome! Tell us what problem it solved for you.

+2. Write a *full paragraph* describing the feature;

+3. Provide a **code snippet** that demonstrates its future use;

+4. In case this is related to a paper, please attach a link;

+5. Attach any additional information (drawings, screenshots, etc.) you think may help.

+

+You can open a feature request [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feature_request.md&title=).

+

+#### 2.3 Feedback

+

+Feedback about the library design and why it is good or not good helps the core maintainers immensely to build a user-friendly library. To understand the philosophy behind the current design philosophy, please have a look [here](https://huggingface.co/docs/diffusers/conceptual/philosophy). If you feel like a certain design choice does not fit with the current design philosophy, please explain why and how it should be changed. If a certain design choice follows the design philosophy too much, hence restricting use cases, explain why and how it should be changed.

+If a certain design choice is very useful for you, please also leave a note as this is great feedback for future design decisions.

+

+You can open an issue about feedback [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feedback.md&title=).

+

+#### 2.4 Technical questions

+

+Technical questions are mainly about why certain code of the library was written in a certain way, or what a certain part of the code does. Please make sure to link to the code in question and please provide details on

+why this part of the code is difficult to understand.

+

+You can open an issue about a technical question [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=bug&template=bug-report.yml).

+

+#### 2.5 Proposal to add a new model, scheduler, or pipeline

+

+If the diffusion model community released a new model, pipeline, or scheduler that you would like to see in the Diffusers library, please provide the following information:

+

+* Short description of the diffusion pipeline, model, or scheduler and link to the paper or public release.

+* Link to any of its open-source implementation(s).

+* Link to the model weights if they are available.

+

+If you are willing to contribute to the model yourself, let us know so we can best guide you. Also, don't forget

+to tag the original author of the component (model, scheduler, pipeline, etc.) by GitHub handle if you can find it.

+

+You can open a request for a model/pipeline/scheduler [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=New+model%2Fpipeline%2Fscheduler&template=new-model-addition.yml).

+

+### 3. Answering issues on the GitHub issues tab

+

+Answering issues on GitHub might require some technical knowledge of Diffusers, but we encourage everybody to give it a try even if you are not 100% certain that your answer is correct.

+Some tips to give a high-quality answer to an issue:

+- Be as concise and minimal as possible.

+- Stay on topic. An answer to the issue should concern the issue and only the issue.

+- Provide links to code, papers, or other sources that prove or encourage your point.

+- Answer in code. If a simple code snippet is the answer to the issue or shows how the issue can be solved, please provide a fully reproducible code snippet.

+

+Also, many issues tend to be simply off-topic, duplicates of other issues, or irrelevant. It is of great

+help to the maintainers if you can answer such issues, encouraging the author of the issue to be

+more precise, provide the link to a duplicated issue or redirect them to [the forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) or [Discord](https://discord.gg/G7tWnz98XR).

+

+If you have verified that the issued bug report is correct and requires a correction in the source code,

+please have a look at the next sections.

+

+For all of the following contributions, you will need to open a PR. It is explained in detail how to do so in the [Opening a pull request](#how-to-open-a-pr) section.

+

+### 4. Fixing a "Good first issue"

+

+*Good first issues* are marked by the [Good first issue](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22) label. Usually, the issue already

+explains how a potential solution should look so that it is easier to fix.

+If the issue hasn't been closed and you would like to try to fix this issue, you can just leave a message "I would like to try this issue.". There are usually three scenarios:

+- a.) The issue description already proposes a fix. In this case and if the solution makes sense to you, you can open a PR or draft PR to fix it.

+- b.) The issue description does not propose a fix. In this case, you can ask what a proposed fix could look like and someone from the Diffusers team should answer shortly. If you have a good idea of how to fix it, feel free to directly open a PR.

+- c.) There is already an open PR to fix the issue, but the issue hasn't been closed yet. If the PR has gone stale, you can simply open a new PR and link to the stale PR. PRs often go stale if the original contributor who wanted to fix the issue suddenly cannot find the time anymore to proceed. This often happens in open-source and is very normal. In this case, the community will be very happy if you give it a new try and leverage the knowledge of the existing PR. If there is already a PR and it is active, you can help the author by giving suggestions, reviewing the PR or even asking whether you can contribute to the PR.

+

+

+### 5. Contribute to the documentation

+

+A good library **always** has good documentation! The official documentation is often one of the first points of contact for new users of the library, and therefore contributing to the documentation is a **highly

+valuable contribution**.

+

+Contributing to the library can have many forms:

+

+- Correcting spelling or grammatical errors.

+- Correct incorrect formatting of the docstring. If you see that the official documentation is weirdly displayed or a link is broken, we would be very happy if you take some time to correct it.

+- Correct the shape or dimensions of a docstring input or output tensor.

+- Clarify documentation that is hard to understand or incorrect.

+- Update outdated code examples.

+- Translating the documentation to another language.

+

+Anything displayed on [the official Diffusers doc page](https://huggingface.co/docs/diffusers/index) is part of the official documentation and can be corrected, adjusted in the respective [documentation source](https://github.com/huggingface/diffusers/tree/main/docs/source).

+

+Please have a look at [this page](https://github.com/huggingface/diffusers/tree/main/docs) on how to verify changes made to the documentation locally.

+

+### 6. Contribute a community pipeline

+

+> [!TIP]

+> Read the [Community pipelines](../using-diffusers/custom_pipeline_overview#community-pipelines) guide to learn more about the difference between a GitHub and Hugging Face Hub community pipeline. If you're interested in why we have community pipelines, take a look at GitHub Issue [#841](https://github.com/huggingface/diffusers/issues/841) (basically, we can't maintain all the possible ways diffusion models can be used for inference but we also don't want to prevent the community from building them).

+

+Contributing a community pipeline is a great way to share your creativity and work with the community. It lets you build on top of the [`DiffusionPipeline`] so that anyone can load and use it by setting the `custom_pipeline` parameter. This section will walk you through how to create a simple pipeline where the UNet only does a single forward pass and calls the scheduler once (a "one-step" pipeline).

+

+1. Create a one_step_unet.py file for your community pipeline. This file can contain whatever package you want to use as long as it's installed by the user. Make sure you only have one pipeline class that inherits from [`DiffusionPipeline`] to load model weights and the scheduler configuration from the Hub. Add a UNet and scheduler to the `__init__` function.

+

+ You should also add the `register_modules` function to ensure your pipeline and its components can be saved with [`~DiffusionPipeline.save_pretrained`].

+

+```py

+from diffusers import DiffusionPipeline

+import torch

+

+class UnetSchedulerOneForwardPipeline(DiffusionPipeline):

+ def __init__(self, unet, scheduler):

+ super().__init__()

+

+ self.register_modules(unet=unet, scheduler=scheduler)

+```

+

+1. In the forward pass (which we recommend defining as `__call__`), you can add any feature you'd like. For the "one-step" pipeline, create a random image and call the UNet and scheduler once by setting `timestep=1`.

+

+```py

+ from diffusers import DiffusionPipeline

+ import torch

+

+ class UnetSchedulerOneForwardPipeline(DiffusionPipeline):

+ def __init__(self, unet, scheduler):

+ super().__init__()

+

+ self.register_modules(unet=unet, scheduler=scheduler)

+

+ def __call__(self):

+ image = torch.randn(

+ (1, self.unet.config.in_channels, self.unet.config.sample_size, self.unet.config.sample_size),

+ )

+ timestep = 1

+

+ model_output = self.unet(image, timestep).sample

+ scheduler_output = self.scheduler.step(model_output, timestep, image).prev_sample

+

+ return scheduler_output

+```

+

+Now you can run the pipeline by passing a UNet and scheduler to it or load pretrained weights if the pipeline structure is identical.

+

+```py

+from diffusers import DDPMScheduler, UNet2DModel

+

+scheduler = DDPMScheduler()

+unet = UNet2DModel()

+

+pipeline = UnetSchedulerOneForwardPipeline(unet=unet, scheduler=scheduler)

+output = pipeline()

+# load pretrained weights

+pipeline = UnetSchedulerOneForwardPipeline.from_pretrained("google/ddpm-cifar10-32", use_safetensors=True)

+output = pipeline()

+```

+

+You can either share your pipeline as a GitHub community pipeline or Hub community pipeline.

+

+

+

+Whichever way you choose to contribute, we strive to be part of an open, welcoming, and kind community. Please, read our [code of conduct](https://github.com/huggingface/diffusers/blob/main/CODE_OF_CONDUCT.md) and be mindful to respect it during your interactions. We also recommend you become familiar with the [ethical guidelines](https://huggingface.co/docs/diffusers/conceptual/ethical_guidelines) that guide our project and ask you to adhere to the same principles of transparency and responsibility.

+

+We enormously value feedback from the community, so please do not be afraid to speak up if you believe you have valuable feedback that can help improve the library - every message, comment, issue, and pull request (PR) is read and considered.

+

+## Overview

+

+You can contribute in many ways ranging from answering questions on issues and discussions to adding new diffusion models to the core library.

+

+In the following, we give an overview of different ways to contribute, ranked by difficulty in ascending order. All of them are valuable to the community.

+

+* 1. Asking and answering questions on [the Diffusers discussion forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers) or on [Discord](https://discord.gg/G7tWnz98XR).

+* 2. Opening new issues on [the GitHub Issues tab](https://github.com/huggingface/diffusers/issues/new/choose) or new discussions on [the GitHub Discussions tab](https://github.com/huggingface/diffusers/discussions/new/choose).

+* 3. Answering issues on [the GitHub Issues tab](https://github.com/huggingface/diffusers/issues) or discussions on [the GitHub Discussions tab](https://github.com/huggingface/diffusers/discussions).

+* 4. Fix a simple issue, marked by the "Good first issue" label, see [here](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22).

+* 5. Contribute to the [documentation](https://github.com/huggingface/diffusers/tree/main/docs/source).

+* 6. Contribute a [Community Pipeline](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3Acommunity-examples).

+* 7. Contribute to the [examples](https://github.com/huggingface/diffusers/tree/main/examples).

+* 8. Fix a more difficult issue, marked by the "Good second issue" label, see [here](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22Good+second+issue%22).

+* 9. Add a new pipeline, model, or scheduler, see ["New Pipeline/Model"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+pipeline%2Fmodel%22) and ["New scheduler"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+scheduler%22) issues. For this contribution, please have a look at [Design Philosophy](https://github.com/huggingface/diffusers/blob/main/PHILOSOPHY.md).

+

+As said before, **all contributions are valuable to the community**.

+In the following, we will explain each contribution a bit more in detail.

+

+For all contributions 4 - 9, you will need to open a PR. It is explained in detail how to do so in [Opening a pull request](#how-to-open-a-pr).

+

+### 1. Asking and answering questions on the Diffusers discussion forum or on the Diffusers Discord

+

+Any question or comment related to the Diffusers library can be asked on the [discussion forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/) or on [Discord](https://discord.gg/G7tWnz98XR). Such questions and comments include (but are not limited to):

+- Reports of training or inference experiments in an attempt to share knowledge

+- Presentation of personal projects

+- Questions to non-official training examples

+- Project proposals

+- General feedback

+- Paper summaries

+- Asking for help on personal projects that build on top of the Diffusers library

+- General questions

+- Ethical questions regarding diffusion models

+- ...

+

+Every question that is asked on the forum or on Discord actively encourages the community to publicly

+share knowledge and might very well help a beginner in the future who has the same question you're

+having. Please do pose any questions you might have.

+In the same spirit, you are of immense help to the community by answering such questions because this way you are publicly documenting knowledge for everybody to learn from.

+

+**Please** keep in mind that the more effort you put into asking or answering a question, the higher

+the quality of the publicly documented knowledge. In the same way, well-posed and well-answered questions create a high-quality knowledge database accessible to everybody, while badly posed questions or answers reduce the overall quality of the public knowledge database.

+In short, a high quality question or answer is *precise*, *concise*, *relevant*, *easy-to-understand*, *accessible*, and *well-formatted/well-posed*. For more information, please have a look through the [How to write a good issue](#how-to-write-a-good-issue) section.

+

+**NOTE about channels**:

+[*The forum*](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) is much better indexed by search engines, such as Google. Posts are ranked by popularity rather than chronologically. Hence, it's easier to look up questions and answers that we posted some time ago.

+In addition, questions and answers posted in the forum can easily be linked to.

+In contrast, *Discord* has a chat-like format that invites fast back-and-forth communication.

+While it will most likely take less time for you to get an answer to your question on Discord, your

+question won't be visible anymore over time. Also, it's much harder to find information that was posted a while back on Discord. We therefore strongly recommend using the forum for high-quality questions and answers in an attempt to create long-lasting knowledge for the community. If discussions on Discord lead to very interesting answers and conclusions, we recommend posting the results on the forum to make the information more available for future readers.

+

+### 2. Opening new issues on the GitHub issues tab

+

+The 🧨 Diffusers library is robust and reliable thanks to the users who notify us of

+the problems they encounter. So thank you for reporting an issue.

+

+Remember, GitHub issues are reserved for technical questions directly related to the Diffusers library, bug reports, feature requests, or feedback on the library design.

+

+In a nutshell, this means that everything that is **not** related to the **code of the Diffusers library** (including the documentation) should **not** be asked on GitHub, but rather on either the [forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) or [Discord](https://discord.gg/G7tWnz98XR).

+

+**Please consider the following guidelines when opening a new issue**:

+- Make sure you have searched whether your issue has already been asked before (use the search bar on GitHub under Issues).

+- Please never report a new issue on another (related) issue. If another issue is highly related, please

+open a new issue nevertheless and link to the related issue.

+- Make sure your issue is written in English. Please use one of the great, free online translation services, such as [DeepL](https://www.deepl.com/translator) to translate from your native language to English if you are not comfortable in English.

+- Check whether your issue might be solved by updating to the newest Diffusers version. Before posting your issue, please make sure that `python -c "import diffusers; print(diffusers.__version__)"` is higher or matches the latest Diffusers version.

+- Remember that the more effort you put into opening a new issue, the higher the quality of your answer will be and the better the overall quality of the Diffusers issues.

+

+New issues usually include the following.

+

+#### 2.1. Reproducible, minimal bug reports

+

+A bug report should always have a reproducible code snippet and be as minimal and concise as possible.

+This means in more detail:

+- Narrow the bug down as much as you can, **do not just dump your whole code file**.

+- Format your code.

+- Do not include any external libraries except for Diffusers depending on them.

+- **Always** provide all necessary information about your environment; for this, you can run: `diffusers-cli env` in your shell and copy-paste the displayed information to the issue.

+- Explain the issue. If the reader doesn't know what the issue is and why it is an issue, (s)he cannot solve it.

+- **Always** make sure the reader can reproduce your issue with as little effort as possible. If your code snippet cannot be run because of missing libraries or undefined variables, the reader cannot help you. Make sure your reproducible code snippet is as minimal as possible and can be copy-pasted into a simple Python shell.

+- If in order to reproduce your issue a model and/or dataset is required, make sure the reader has access to that model or dataset. You can always upload your model or dataset to the [Hub](https://huggingface.co) to make it easily downloadable. Try to keep your model and dataset as small as possible, to make the reproduction of your issue as effortless as possible.

+

+For more information, please have a look through the [How to write a good issue](#how-to-write-a-good-issue) section.

+

+You can open a bug report [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=bug&projects=&template=bug-report.yml).

+

+#### 2.2. Feature requests

+

+A world-class feature request addresses the following points:

+

+1. Motivation first:

+* Is it related to a problem/frustration with the library? If so, please explain

+why. Providing a code snippet that demonstrates the problem is best.

+* Is it related to something you would need for a project? We'd love to hear

+about it!

+* Is it something you worked on and think could benefit the community?

+Awesome! Tell us what problem it solved for you.

+2. Write a *full paragraph* describing the feature;

+3. Provide a **code snippet** that demonstrates its future use;

+4. In case this is related to a paper, please attach a link;

+5. Attach any additional information (drawings, screenshots, etc.) you think may help.

+

+You can open a feature request [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feature_request.md&title=).

+

+#### 2.3 Feedback

+

+Feedback about the library design and why it is good or not good helps the core maintainers immensely to build a user-friendly library. To understand the philosophy behind the current design philosophy, please have a look [here](https://huggingface.co/docs/diffusers/conceptual/philosophy). If you feel like a certain design choice does not fit with the current design philosophy, please explain why and how it should be changed. If a certain design choice follows the design philosophy too much, hence restricting use cases, explain why and how it should be changed.

+If a certain design choice is very useful for you, please also leave a note as this is great feedback for future design decisions.

+

+You can open an issue about feedback [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=&template=feedback.md&title=).

+

+#### 2.4 Technical questions

+

+Technical questions are mainly about why certain code of the library was written in a certain way, or what a certain part of the code does. Please make sure to link to the code in question and please provide details on

+why this part of the code is difficult to understand.

+

+You can open an issue about a technical question [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=bug&template=bug-report.yml).

+

+#### 2.5 Proposal to add a new model, scheduler, or pipeline

+

+If the diffusion model community released a new model, pipeline, or scheduler that you would like to see in the Diffusers library, please provide the following information:

+

+* Short description of the diffusion pipeline, model, or scheduler and link to the paper or public release.

+* Link to any of its open-source implementation(s).

+* Link to the model weights if they are available.

+

+If you are willing to contribute to the model yourself, let us know so we can best guide you. Also, don't forget

+to tag the original author of the component (model, scheduler, pipeline, etc.) by GitHub handle if you can find it.

+

+You can open a request for a model/pipeline/scheduler [here](https://github.com/huggingface/diffusers/issues/new?assignees=&labels=New+model%2Fpipeline%2Fscheduler&template=new-model-addition.yml).

+

+### 3. Answering issues on the GitHub issues tab

+

+Answering issues on GitHub might require some technical knowledge of Diffusers, but we encourage everybody to give it a try even if you are not 100% certain that your answer is correct.

+Some tips to give a high-quality answer to an issue:

+- Be as concise and minimal as possible.

+- Stay on topic. An answer to the issue should concern the issue and only the issue.

+- Provide links to code, papers, or other sources that prove or encourage your point.

+- Answer in code. If a simple code snippet is the answer to the issue or shows how the issue can be solved, please provide a fully reproducible code snippet.

+

+Also, many issues tend to be simply off-topic, duplicates of other issues, or irrelevant. It is of great

+help to the maintainers if you can answer such issues, encouraging the author of the issue to be

+more precise, provide the link to a duplicated issue or redirect them to [the forum](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) or [Discord](https://discord.gg/G7tWnz98XR).

+

+If you have verified that the issued bug report is correct and requires a correction in the source code,

+please have a look at the next sections.

+

+For all of the following contributions, you will need to open a PR. It is explained in detail how to do so in the [Opening a pull request](#how-to-open-a-pr) section.

+

+### 4. Fixing a "Good first issue"

+

+*Good first issues* are marked by the [Good first issue](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22) label. Usually, the issue already

+explains how a potential solution should look so that it is easier to fix.

+If the issue hasn't been closed and you would like to try to fix this issue, you can just leave a message "I would like to try this issue.". There are usually three scenarios:

+- a.) The issue description already proposes a fix. In this case and if the solution makes sense to you, you can open a PR or draft PR to fix it.

+- b.) The issue description does not propose a fix. In this case, you can ask what a proposed fix could look like and someone from the Diffusers team should answer shortly. If you have a good idea of how to fix it, feel free to directly open a PR.

+- c.) There is already an open PR to fix the issue, but the issue hasn't been closed yet. If the PR has gone stale, you can simply open a new PR and link to the stale PR. PRs often go stale if the original contributor who wanted to fix the issue suddenly cannot find the time anymore to proceed. This often happens in open-source and is very normal. In this case, the community will be very happy if you give it a new try and leverage the knowledge of the existing PR. If there is already a PR and it is active, you can help the author by giving suggestions, reviewing the PR or even asking whether you can contribute to the PR.

+

+

+### 5. Contribute to the documentation

+

+A good library **always** has good documentation! The official documentation is often one of the first points of contact for new users of the library, and therefore contributing to the documentation is a **highly

+valuable contribution**.

+

+Contributing to the library can have many forms:

+

+- Correcting spelling or grammatical errors.

+- Correct incorrect formatting of the docstring. If you see that the official documentation is weirdly displayed or a link is broken, we would be very happy if you take some time to correct it.

+- Correct the shape or dimensions of a docstring input or output tensor.

+- Clarify documentation that is hard to understand or incorrect.

+- Update outdated code examples.

+- Translating the documentation to another language.

+

+Anything displayed on [the official Diffusers doc page](https://huggingface.co/docs/diffusers/index) is part of the official documentation and can be corrected, adjusted in the respective [documentation source](https://github.com/huggingface/diffusers/tree/main/docs/source).

+

+Please have a look at [this page](https://github.com/huggingface/diffusers/tree/main/docs) on how to verify changes made to the documentation locally.

+

+### 6. Contribute a community pipeline

+

+> [!TIP]

+> Read the [Community pipelines](../using-diffusers/custom_pipeline_overview#community-pipelines) guide to learn more about the difference between a GitHub and Hugging Face Hub community pipeline. If you're interested in why we have community pipelines, take a look at GitHub Issue [#841](https://github.com/huggingface/diffusers/issues/841) (basically, we can't maintain all the possible ways diffusion models can be used for inference but we also don't want to prevent the community from building them).

+

+Contributing a community pipeline is a great way to share your creativity and work with the community. It lets you build on top of the [`DiffusionPipeline`] so that anyone can load and use it by setting the `custom_pipeline` parameter. This section will walk you through how to create a simple pipeline where the UNet only does a single forward pass and calls the scheduler once (a "one-step" pipeline).

+

+1. Create a one_step_unet.py file for your community pipeline. This file can contain whatever package you want to use as long as it's installed by the user. Make sure you only have one pipeline class that inherits from [`DiffusionPipeline`] to load model weights and the scheduler configuration from the Hub. Add a UNet and scheduler to the `__init__` function.

+

+ You should also add the `register_modules` function to ensure your pipeline and its components can be saved with [`~DiffusionPipeline.save_pretrained`].

+

+```py

+from diffusers import DiffusionPipeline

+import torch

+

+class UnetSchedulerOneForwardPipeline(DiffusionPipeline):

+ def __init__(self, unet, scheduler):

+ super().__init__()

+

+ self.register_modules(unet=unet, scheduler=scheduler)

+```

+

+1. In the forward pass (which we recommend defining as `__call__`), you can add any feature you'd like. For the "one-step" pipeline, create a random image and call the UNet and scheduler once by setting `timestep=1`.

+

+```py

+ from diffusers import DiffusionPipeline

+ import torch

+

+ class UnetSchedulerOneForwardPipeline(DiffusionPipeline):

+ def __init__(self, unet, scheduler):

+ super().__init__()

+

+ self.register_modules(unet=unet, scheduler=scheduler)

+

+ def __call__(self):

+ image = torch.randn(

+ (1, self.unet.config.in_channels, self.unet.config.sample_size, self.unet.config.sample_size),

+ )

+ timestep = 1

+

+ model_output = self.unet(image, timestep).sample

+ scheduler_output = self.scheduler.step(model_output, timestep, image).prev_sample

+

+ return scheduler_output

+```

+

+Now you can run the pipeline by passing a UNet and scheduler to it or load pretrained weights if the pipeline structure is identical.

+

+```py

+from diffusers import DDPMScheduler, UNet2DModel

+

+scheduler = DDPMScheduler()

+unet = UNet2DModel()

+

+pipeline = UnetSchedulerOneForwardPipeline(unet=unet, scheduler=scheduler)

+output = pipeline()

+# load pretrained weights

+pipeline = UnetSchedulerOneForwardPipeline.from_pretrained("google/ddpm-cifar10-32", use_safetensors=True)

+output = pipeline()

+```

+

+You can either share your pipeline as a GitHub community pipeline or Hub community pipeline.

+

+

+

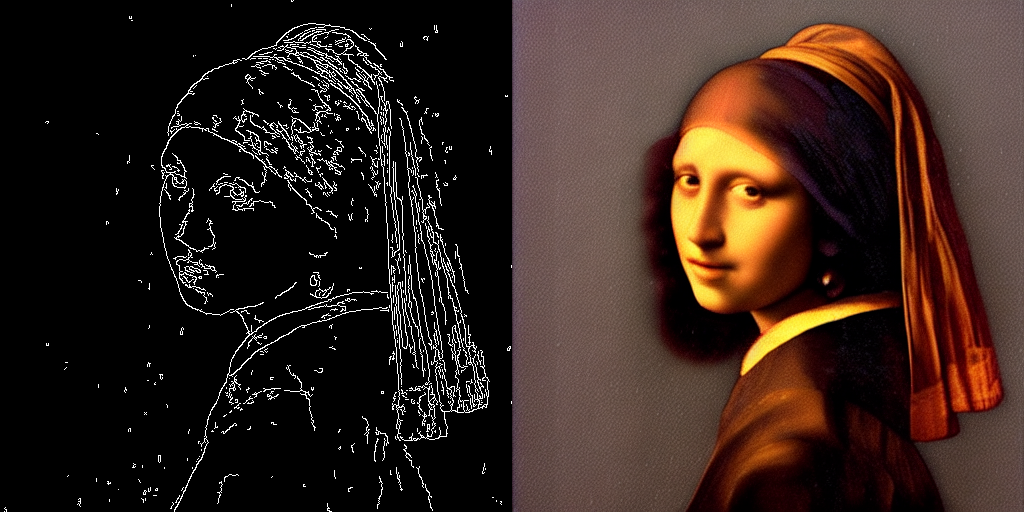

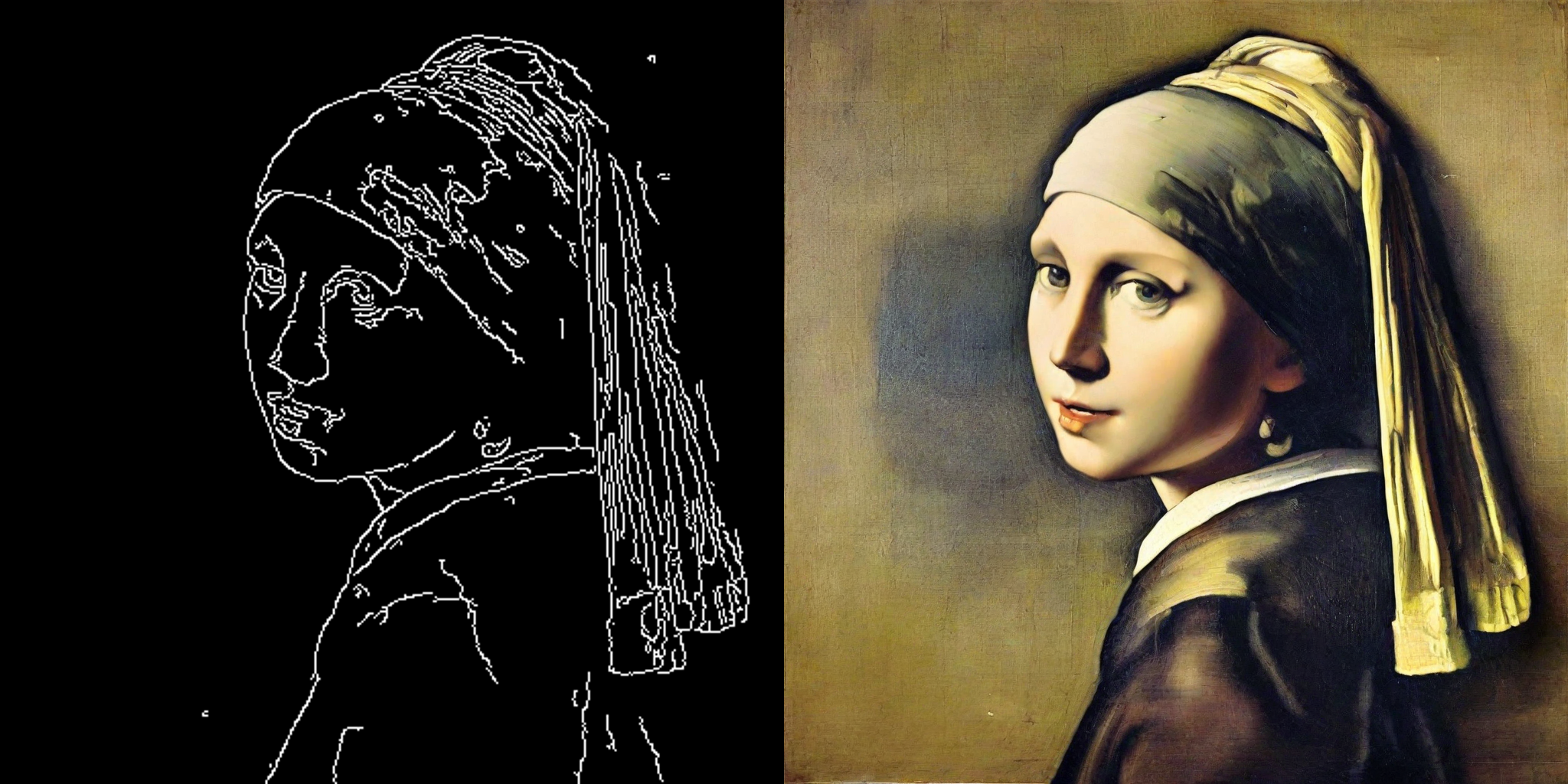

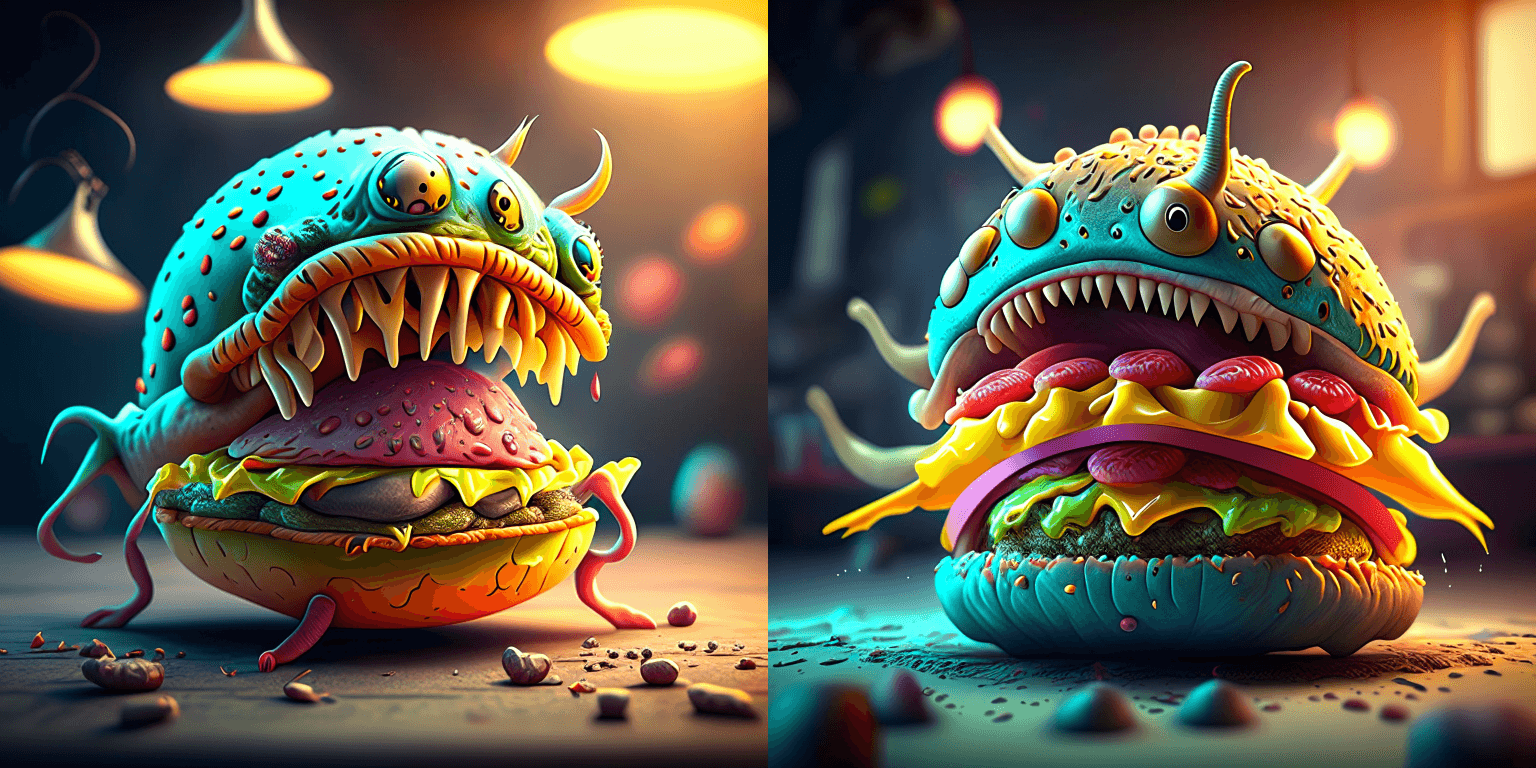

+ Real images.

+

+

+ Fake images.

+

+

+  +

+

+

Learn the fundamental skills you need to start generating outputs, build your own diffusion system, and train a diffusion model. We recommend starting here if you're using 🤗 Diffusers for the first time!

+ +Practical guides for helping you load pipelines, models, and schedulers. You'll also learn how to use pipelines for specific tasks, control how outputs are generated, optimize for inference speed, and different training techniques.

+ +Understand why the library was designed the way it was, and learn more about the ethical guidelines and safety implementations for using the library.

+ +Technical descriptions of how 🤗 Diffusers classes and methods work.

+ + +

+ +

+  +

+  +

+  +

+ +

+ +

+no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 21.66 | 23.13 | 44.03 | 49.74 | +| SD - img2img | 21.81 | 22.40 | 43.92 | 46.32 | +| SD - inpaint | 22.24 | 23.23 | 43.76 | 49.25 | +| SD - controlnet | 15.02 | 15.82 | 32.13 | 36.08 | +| IF | 20.21 /

13.84 /

24.00 | 20.12 /

13.70 /

24.03 | ❌ | 97.34 /

27.23 /

111.66 | +| SDXL - txt2img | 8.64 | 9.9 | - | - | + +### A100 (batch size: 4) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 11.6 | 13.12 | 14.62 | 17.27 | +| SD - img2img | 11.47 | 13.06 | 14.66 | 17.25 | +| SD - inpaint | 11.67 | 13.31 | 14.88 | 17.48 | +| SD - controlnet | 8.28 | 9.38 | 10.51 | 12.41 | +| IF | 25.02 | 18.04 | ❌ | 48.47 | +| SDXL - txt2img | 2.44 | 2.74 | - | - | + +### A100 (batch size: 16) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 3.04 | 3.6 | 3.83 | 4.68 | +| SD - img2img | 2.98 | 3.58 | 3.83 | 4.67 | +| SD - inpaint | 3.04 | 3.66 | 3.9 | 4.76 | +| SD - controlnet | 2.15 | 2.58 | 2.74 | 3.35 | +| IF | 8.78 | 9.82 | ❌ | 16.77 | +| SDXL - txt2img | 0.64 | 0.72 | - | - | + +### V100 (batch size: 1) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 18.99 | 19.14 | 20.95 | 22.17 | +| SD - img2img | 18.56 | 19.18 | 20.95 | 22.11 | +| SD - inpaint | 19.14 | 19.06 | 21.08 | 22.20 | +| SD - controlnet | 13.48 | 13.93 | 15.18 | 15.88 | +| IF | 20.01 /

9.08 /

23.34 | 19.79 /

8.98 /

24.10 | ❌ | 55.75 /

11.57 /

57.67 | + +### V100 (batch size: 4) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 5.96 | 5.89 | 6.83 | 6.86 | +| SD - img2img | 5.90 | 5.91 | 6.81 | 6.82 | +| SD - inpaint | 5.99 | 6.03 | 6.93 | 6.95 | +| SD - controlnet | 4.26 | 4.29 | 4.92 | 4.93 | +| IF | 15.41 | 14.76 | ❌ | 22.95 | + +### V100 (batch size: 16) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 1.66 | 1.66 | 1.92 | 1.90 | +| SD - img2img | 1.65 | 1.65 | 1.91 | 1.89 | +| SD - inpaint | 1.69 | 1.69 | 1.95 | 1.93 | +| SD - controlnet | 1.19 | 1.19 | OOM after warmup | 1.36 | +| IF | 5.43 | 5.29 | ❌ | 7.06 | + +### T4 (batch size: 1) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 6.9 | 6.95 | 7.3 | 7.56 | +| SD - img2img | 6.84 | 6.99 | 7.04 | 7.55 | +| SD - inpaint | 6.91 | 6.7 | 7.01 | 7.37 | +| SD - controlnet | 4.89 | 4.86 | 5.35 | 5.48 | +| IF | 17.42 /

2.47 /

18.52 | 16.96 /

2.45 /

18.69 | ❌ | 24.63 /

2.47 /

23.39 | +| SDXL - txt2img | 1.15 | 1.16 | - | - | + +### T4 (batch size: 4) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 1.79 | 1.79 | 2.03 | 1.99 | +| SD - img2img | 1.77 | 1.77 | 2.05 | 2.04 | +| SD - inpaint | 1.81 | 1.82 | 2.09 | 2.09 | +| SD - controlnet | 1.34 | 1.27 | 1.47 | 1.46 | +| IF | 5.79 | 5.61 | ❌ | 7.39 | +| SDXL - txt2img | 0.288 | 0.289 | - | - | + +### T4 (batch size: 16) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 2.34s | 2.30s | OOM after 2nd iteration | 1.99s | +| SD - img2img | 2.35s | 2.31s | OOM after warmup | 2.00s | +| SD - inpaint | 2.30s | 2.26s | OOM after 2nd iteration | 1.95s | +| SD - controlnet | OOM after 2nd iteration | OOM after 2nd iteration | OOM after warmup | OOM after warmup | +| IF * | 1.44 | 1.44 | ❌ | 1.94 | +| SDXL - txt2img | OOM | OOM | - | - | + +### RTX 3090 (batch size: 1) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 22.56 | 22.84 | 23.84 | 25.69 | +| SD - img2img | 22.25 | 22.61 | 24.1 | 25.83 | +| SD - inpaint | 22.22 | 22.54 | 24.26 | 26.02 | +| SD - controlnet | 16.03 | 16.33 | 17.38 | 18.56 | +| IF | 27.08 /

9.07 /

31.23 | 26.75 /

8.92 /

31.47 | ❌ | 68.08 /

11.16 /

65.29 | + +### RTX 3090 (batch size: 4) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 6.46 | 6.35 | 7.29 | 7.3 | +| SD - img2img | 6.33 | 6.27 | 7.31 | 7.26 | +| SD - inpaint | 6.47 | 6.4 | 7.44 | 7.39 | +| SD - controlnet | 4.59 | 4.54 | 5.27 | 5.26 | +| IF | 16.81 | 16.62 | ❌ | 21.57 | + +### RTX 3090 (batch size: 16) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 1.7 | 1.69 | 1.93 | 1.91 | +| SD - img2img | 1.68 | 1.67 | 1.93 | 1.9 | +| SD - inpaint | 1.72 | 1.71 | 1.97 | 1.94 | +| SD - controlnet | 1.23 | 1.22 | 1.4 | 1.38 | +| IF | 5.01 | 5.00 | ❌ | 6.33 | + +### RTX 4090 (batch size: 1) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 40.5 | 41.89 | 44.65 | 49.81 | +| SD - img2img | 40.39 | 41.95 | 44.46 | 49.8 | +| SD - inpaint | 40.51 | 41.88 | 44.58 | 49.72 | +| SD - controlnet | 29.27 | 30.29 | 32.26 | 36.03 | +| IF | 69.71 /

18.78 /

85.49 | 69.13 /

18.80 /

85.56 | ❌ | 124.60 /

26.37 /

138.79 | +| SDXL - txt2img | 6.8 | 8.18 | - | - | + +### RTX 4090 (batch size: 4) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 12.62 | 12.84 | 15.32 | 15.59 | +| SD - img2img | 12.61 | 12,.79 | 15.35 | 15.66 | +| SD - inpaint | 12.65 | 12.81 | 15.3 | 15.58 | +| SD - controlnet | 9.1 | 9.25 | 11.03 | 11.22 | +| IF | 31.88 | 31.14 | ❌ | 43.92 | +| SDXL - txt2img | 2.19 | 2.35 | - | - | + +### RTX 4090 (batch size: 16) + +| **Pipeline** | **torch 2.0 -

no compile** | **torch nightly -

no compile** | **torch 2.0 -

compile** | **torch nightly -

compile** | +|:---:|:---:|:---:|:---:|:---:| +| SD - txt2img | 3.17 | 3.2 | 3.84 | 3.85 | +| SD - img2img | 3.16 | 3.2 | 3.84 | 3.85 | +| SD - inpaint | 3.17 | 3.2 | 3.85 | 3.85 | +| SD - controlnet | 2.23 | 2.3 | 2.7 | 2.75 | +| IF | 9.26 | 9.2 | ❌ | 13.31 | +| SDXL - txt2img | 0.52 | 0.53 | - | - | + +## Notes + +* Follow this [PR](https://github.com/huggingface/diffusers/pull/3313) for more details on the environment used for conducting the benchmarks. +* For the DeepFloyd IF pipeline where batch sizes > 1, we only used a batch size of > 1 in the first IF pipeline for text-to-image generation and NOT for upscaling. That means the two upscaling pipelines received a batch size of 1. + +*Thanks to [Horace He](https://github.com/Chillee) from the PyTorch team for their support in improving our support of `torch.compile()` in Diffusers.* diff --git a/diffusers/docs/source/en/optimization/xdit.md b/diffusers/docs/source/en/optimization/xdit.md new file mode 100644 index 0000000000000000000000000000000000000000..eab87f1c17bb14d431b4daa53bcb4aad0b5cac07 --- /dev/null +++ b/diffusers/docs/source/en/optimization/xdit.md @@ -0,0 +1,122 @@ +# xDiT + +[xDiT](https://github.com/xdit-project/xDiT) is an inference engine designed for the large scale parallel deployment of Diffusion Transformers (DiTs). xDiT provides a suite of efficient parallel approaches for Diffusion Models, as well as GPU kernel accelerations. + +There are four parallel methods supported in xDiT, including [Unified Sequence Parallelism](https://arxiv.org/abs/2405.07719), [PipeFusion](https://arxiv.org/abs/2405.14430), CFG parallelism and data parallelism. The four parallel methods in xDiT can be configured in a hybrid manner, optimizing communication patterns to best suit the underlying network hardware. + +Optimization orthogonal to parallelization focuses on accelerating single GPU performance. In addition to utilizing well-known Attention optimization libraries, we leverage compilation acceleration technologies such as torch.compile and onediff. + +The overview of xDiT is shown as follows. + +

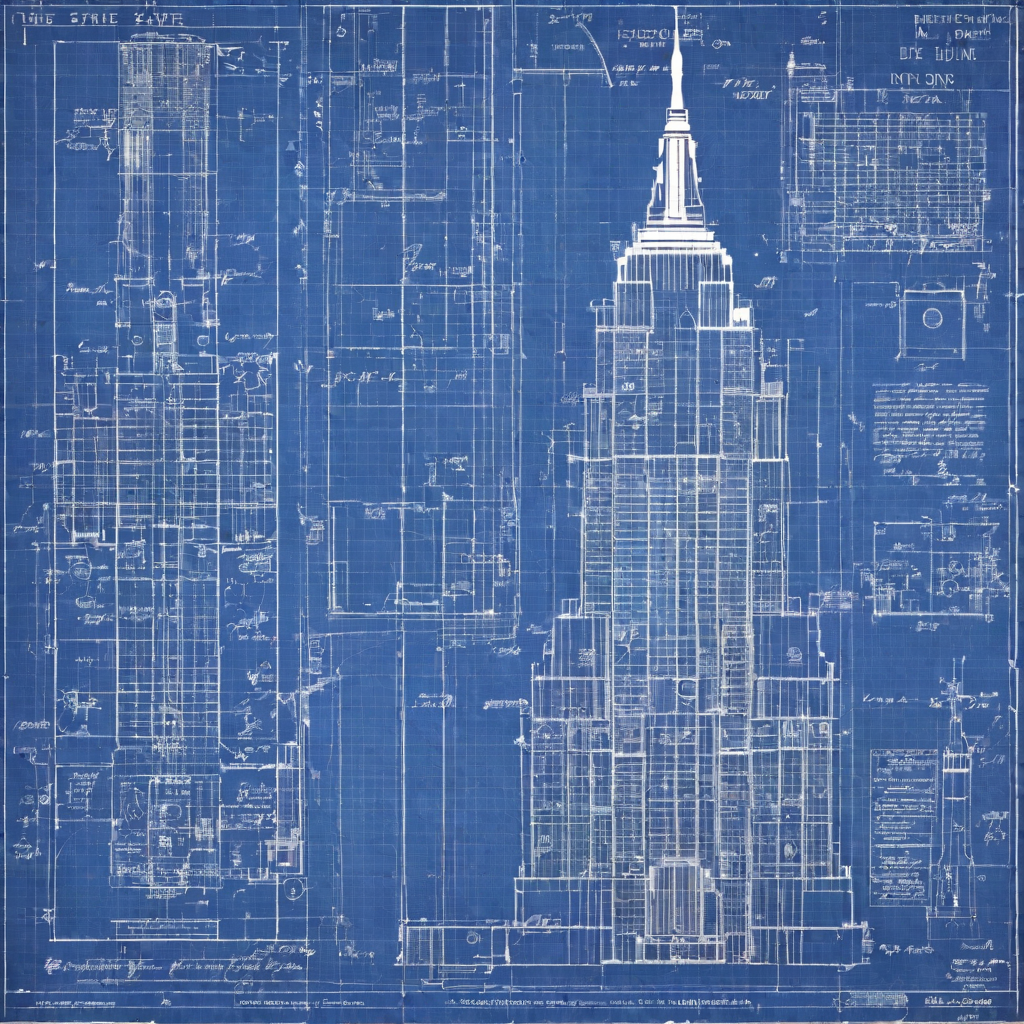

+

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

++ +To monitor training progress with Weights and Biases, add the `--report_to=wandb` parameter to the training command and specify a validation image with `--val_image_url` and a validation prompt with `--validation_prompt`. This can be really useful for debugging the model. + +

+

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+  +

+  +

+  +

+  +

+ +

+  +

+  +

+  +

+  +

+ fine-tuning** | **Comments** | +| :-------------------------------------------------: | :----------------: | :-------------------------------------: | :---------------------------------------------------------------------------------------------: | +| [InstructPix2Pix](#instruct-pix2pix) | ✅ | ❌ | Can additionally be

fine-tuned for better

performance on specific

edit instructions. | +| [Pix2Pix Zero](#pix2pix-zero) | ✅ | ❌ | | +| [Attend and Excite](#attend-and-excite) | ✅ | ❌ | | +| [Semantic Guidance](#semantic-guidance-sega) | ✅ | ❌ | | +| [Self-attention Guidance](#self-attention-guidance-sag) | ✅ | ❌ | | +| [Depth2Image](#depth2image) | ✅ | ❌ | | +| [MultiDiffusion Panorama](#multidiffusion-panorama) | ✅ | ❌ | | +| [DreamBooth](#dreambooth) | ❌ | ✅ | | +| [Textual Inversion](#textual-inversion) | ❌ | ✅ | | +| [ControlNet](#controlnet) | ✅ | ❌ | A ControlNet can be

trained/fine-tuned on

a custom conditioning. | +| [Prompt Weighting](#prompt-weighting) | ✅ | ❌ | | +| [Custom Diffusion](#custom-diffusion) | ❌ | ✅ | | +| [Model Editing](#model-editing) | ✅ | ❌ | | +| [DiffEdit](#diffedit) | ✅ | ❌ | | +| [T2I-Adapter](#t2i-adapter) | ✅ | ❌ | | +| [Fabric](#fabric) | ✅ | ❌ | | +## InstructPix2Pix + +[Paper](https://arxiv.org/abs/2211.09800) + +[InstructPix2Pix](../api/pipelines/pix2pix) is fine-tuned from Stable Diffusion to support editing input images. It takes as inputs an image and a prompt describing an edit, and it outputs the edited image. +InstructPix2Pix has been explicitly trained to work well with [InstructGPT](https://openai.com/blog/instruction-following/)-like prompts. + +## Pix2Pix Zero + +[Paper](https://arxiv.org/abs/2302.03027) + +[Pix2Pix Zero](../api/pipelines/pix2pix_zero) allows modifying an image so that one concept or subject is translated to another one while preserving general image semantics. + +The denoising process is guided from one conceptual embedding towards another conceptual embedding. The intermediate latents are optimized during the denoising process to push the attention maps towards reference attention maps. The reference attention maps are from the denoising process of the input image and are used to encourage semantic preservation. + +Pix2Pix Zero can be used both to edit synthetic images as well as real images. + +- To edit synthetic images, one first generates an image given a caption. + Next, we generate image captions for the concept that shall be edited and for the new target concept. We can use a model like [Flan-T5](https://huggingface.co/docs/transformers/model_doc/flan-t5) for this purpose. Then, "mean" prompt embeddings for both the source and target concepts are created via the text encoder. Finally, the pix2pix-zero algorithm is used to edit the synthetic image. +- To edit a real image, one first generates an image caption using a model like [BLIP](https://huggingface.co/docs/transformers/model_doc/blip). Then one applies DDIM inversion on the prompt and image to generate "inverse" latents. Similar to before, "mean" prompt embeddings for both source and target concepts are created and finally the pix2pix-zero algorithm in combination with the "inverse" latents is used to edit the image. + +

+

+  +

+  +

+ +

+  +

+  +

+  +

+  +

+ +

+  +

+  +

+  +

+  +

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  |

|  |

diff --git a/diffusers/docs/source/en/using-diffusers/diffedit.md b/diffusers/docs/source/en/using-diffusers/diffedit.md

new file mode 100644

index 0000000000000000000000000000000000000000..f7e19fd3200102aa12fd004a94ae22a8c2029d5b

--- /dev/null

+++ b/diffusers/docs/source/en/using-diffusers/diffedit.md

@@ -0,0 +1,285 @@

+

+

+# DiffEdit

+

+[[open-in-colab]]

+

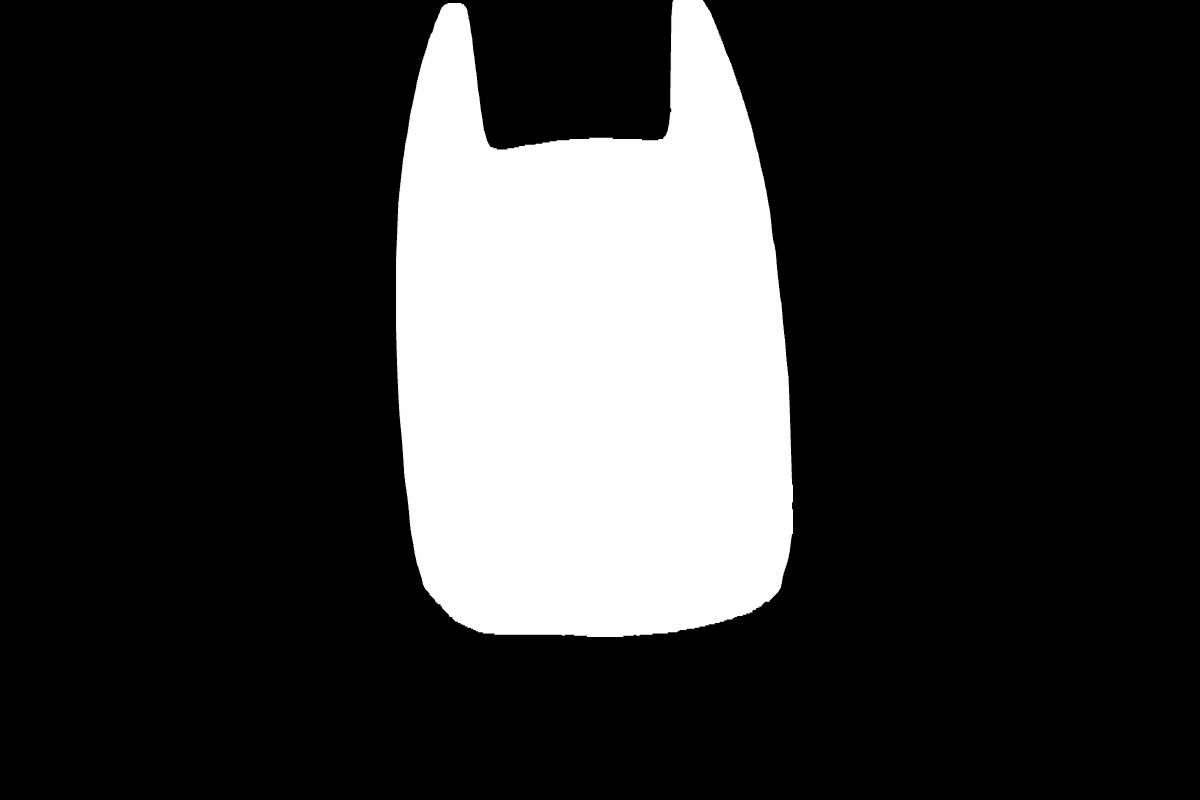

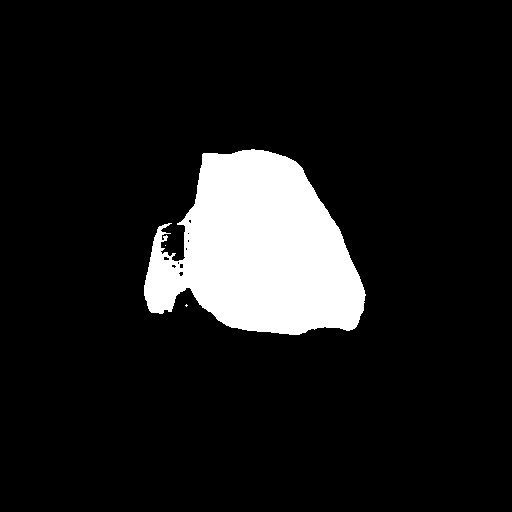

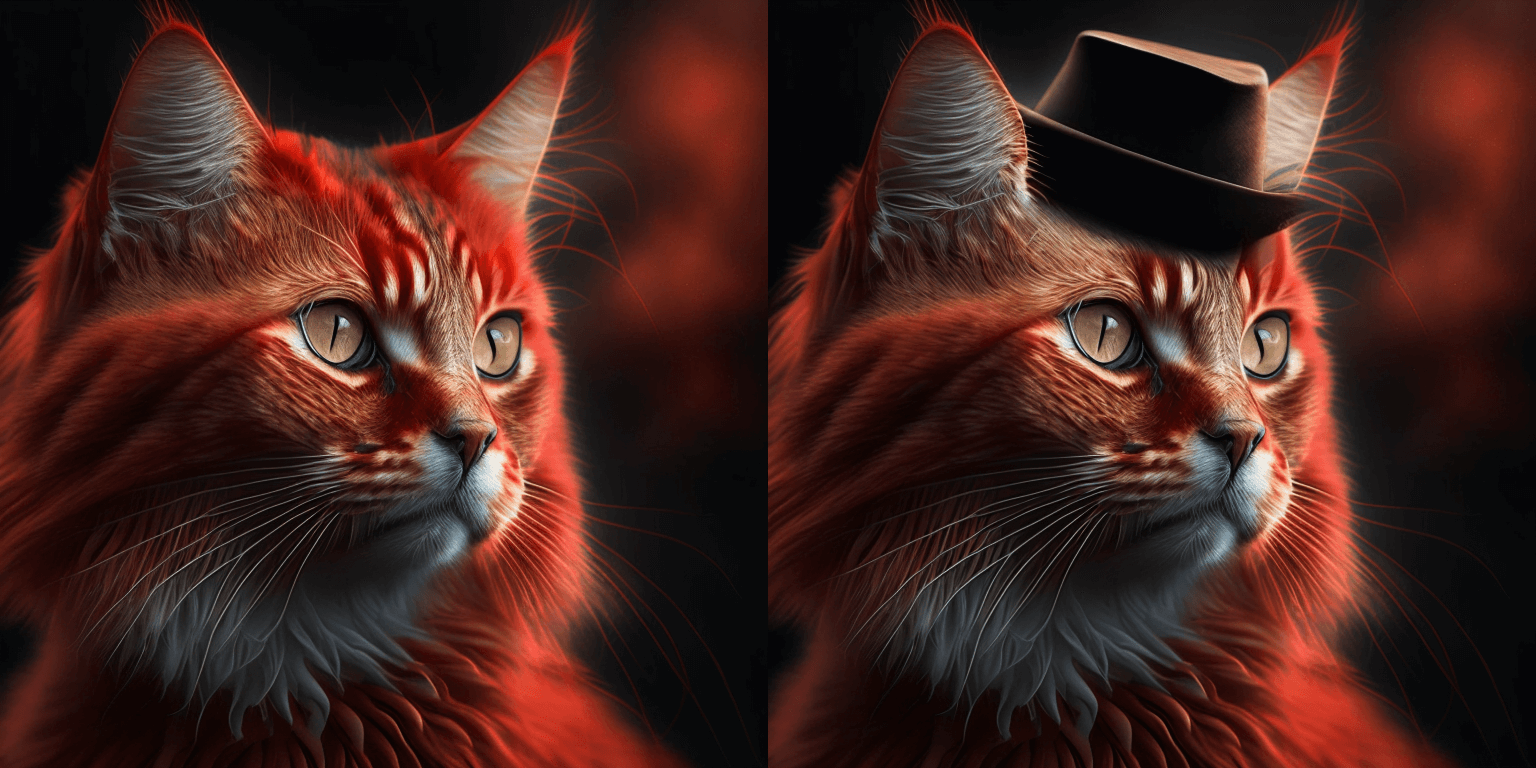

+Image editing typically requires providing a mask of the area to be edited. DiffEdit automatically generates the mask for you based on a text query, making it easier overall to create a mask without image editing software. The DiffEdit algorithm works in three steps:

+

+1. the diffusion model denoises an image conditioned on some query text and reference text which produces different noise estimates for different areas of the image; the difference is used to infer a mask to identify which area of the image needs to be changed to match the query text

+2. the input image is encoded into latent space with DDIM

+3. the latents are decoded with the diffusion model conditioned on the text query, using the mask as a guide such that pixels outside the mask remain the same as in the input image

+

+This guide will show you how to use DiffEdit to edit images without manually creating a mask.

+

+Before you begin, make sure you have the following libraries installed:

+

+```py

+# uncomment to install the necessary libraries in Colab

+#!pip install -q diffusers transformers accelerate

+```

+

+The [`StableDiffusionDiffEditPipeline`] requires an image mask and a set of partially inverted latents. The image mask is generated from the [`~StableDiffusionDiffEditPipeline.generate_mask`] function, and includes two parameters, `source_prompt` and `target_prompt`. These parameters determine what to edit in the image. For example, if you want to change a bowl of *fruits* to a bowl of *pears*, then:

+

+```py

+source_prompt = "a bowl of fruits"

+target_prompt = "a bowl of pears"

+```

+

+The partially inverted latents are generated from the [`~StableDiffusionDiffEditPipeline.invert`] function, and it is generally a good idea to include a `prompt` or *caption* describing the image to help guide the inverse latent sampling process. The caption can often be your `source_prompt`, but feel free to experiment with other text descriptions!

+

+Let's load the pipeline, scheduler, inverse scheduler, and enable some optimizations to reduce memory usage:

+

+```py

+import torch

+from diffusers import DDIMScheduler, DDIMInverseScheduler, StableDiffusionDiffEditPipeline

+

+pipeline = StableDiffusionDiffEditPipeline.from_pretrained(

+ "stabilityai/stable-diffusion-2-1",

+ torch_dtype=torch.float16,

+ safety_checker=None,

+ use_safetensors=True,

+)

+pipeline.scheduler = DDIMScheduler.from_config(pipeline.scheduler.config)

+pipeline.inverse_scheduler = DDIMInverseScheduler.from_config(pipeline.scheduler.config)

+pipeline.enable_model_cpu_offload()

+pipeline.enable_vae_slicing()

+```

+

+Load the image to edit:

+

+```py

+from diffusers.utils import load_image, make_image_grid

+

+img_url = "https://github.com/Xiang-cd/DiffEdit-stable-diffusion/raw/main/assets/origin.png"

+raw_image = load_image(img_url).resize((768, 768))

+raw_image

+```

+

+Use the [`~StableDiffusionDiffEditPipeline.generate_mask`] function to generate the image mask. You'll need to pass it the `source_prompt` and `target_prompt` to specify what to edit in the image:

+

+```py

+from PIL import Image

+

+source_prompt = "a bowl of fruits"

+target_prompt = "a basket of pears"

+mask_image = pipeline.generate_mask(

+ image=raw_image,

+ source_prompt=source_prompt,

+ target_prompt=target_prompt,

+)

+Image.fromarray((mask_image.squeeze()*255).astype("uint8"), "L").resize((768, 768))

+```

+

+Next, create the inverted latents and pass it a caption describing the image:

+

+```py

+inv_latents = pipeline.invert(prompt=source_prompt, image=raw_image).latents

+```

+

+Finally, pass the image mask and inverted latents to the pipeline. The `target_prompt` becomes the `prompt` now, and the `source_prompt` is used as the `negative_prompt`:

+

+```py

+output_image = pipeline(

+ prompt=target_prompt,

+ mask_image=mask_image,

+ image_latents=inv_latents,

+ negative_prompt=source_prompt,

+).images[0]

+mask_image = Image.fromarray((mask_image.squeeze()*255).astype("uint8"), "L").resize((768, 768))

+make_image_grid([raw_image, mask_image, output_image], rows=1, cols=3)

+```

+

+

|

diff --git a/diffusers/docs/source/en/using-diffusers/diffedit.md b/diffusers/docs/source/en/using-diffusers/diffedit.md

new file mode 100644

index 0000000000000000000000000000000000000000..f7e19fd3200102aa12fd004a94ae22a8c2029d5b

--- /dev/null

+++ b/diffusers/docs/source/en/using-diffusers/diffedit.md

@@ -0,0 +1,285 @@

+

+

+# DiffEdit

+

+[[open-in-colab]]

+

+Image editing typically requires providing a mask of the area to be edited. DiffEdit automatically generates the mask for you based on a text query, making it easier overall to create a mask without image editing software. The DiffEdit algorithm works in three steps:

+

+1. the diffusion model denoises an image conditioned on some query text and reference text which produces different noise estimates for different areas of the image; the difference is used to infer a mask to identify which area of the image needs to be changed to match the query text

+2. the input image is encoded into latent space with DDIM

+3. the latents are decoded with the diffusion model conditioned on the text query, using the mask as a guide such that pixels outside the mask remain the same as in the input image

+

+This guide will show you how to use DiffEdit to edit images without manually creating a mask.

+

+Before you begin, make sure you have the following libraries installed:

+

+```py

+# uncomment to install the necessary libraries in Colab

+#!pip install -q diffusers transformers accelerate

+```

+

+The [`StableDiffusionDiffEditPipeline`] requires an image mask and a set of partially inverted latents. The image mask is generated from the [`~StableDiffusionDiffEditPipeline.generate_mask`] function, and includes two parameters, `source_prompt` and `target_prompt`. These parameters determine what to edit in the image. For example, if you want to change a bowl of *fruits* to a bowl of *pears*, then:

+

+```py

+source_prompt = "a bowl of fruits"

+target_prompt = "a bowl of pears"

+```

+

+The partially inverted latents are generated from the [`~StableDiffusionDiffEditPipeline.invert`] function, and it is generally a good idea to include a `prompt` or *caption* describing the image to help guide the inverse latent sampling process. The caption can often be your `source_prompt`, but feel free to experiment with other text descriptions!

+

+Let's load the pipeline, scheduler, inverse scheduler, and enable some optimizations to reduce memory usage:

+

+```py

+import torch

+from diffusers import DDIMScheduler, DDIMInverseScheduler, StableDiffusionDiffEditPipeline

+

+pipeline = StableDiffusionDiffEditPipeline.from_pretrained(

+ "stabilityai/stable-diffusion-2-1",

+ torch_dtype=torch.float16,

+ safety_checker=None,

+ use_safetensors=True,

+)

+pipeline.scheduler = DDIMScheduler.from_config(pipeline.scheduler.config)

+pipeline.inverse_scheduler = DDIMInverseScheduler.from_config(pipeline.scheduler.config)

+pipeline.enable_model_cpu_offload()

+pipeline.enable_vae_slicing()

+```

+

+Load the image to edit:

+

+```py

+from diffusers.utils import load_image, make_image_grid

+

+img_url = "https://github.com/Xiang-cd/DiffEdit-stable-diffusion/raw/main/assets/origin.png"

+raw_image = load_image(img_url).resize((768, 768))

+raw_image

+```

+

+Use the [`~StableDiffusionDiffEditPipeline.generate_mask`] function to generate the image mask. You'll need to pass it the `source_prompt` and `target_prompt` to specify what to edit in the image:

+

+```py

+from PIL import Image

+

+source_prompt = "a bowl of fruits"

+target_prompt = "a basket of pears"

+mask_image = pipeline.generate_mask(

+ image=raw_image,

+ source_prompt=source_prompt,

+ target_prompt=target_prompt,

+)

+Image.fromarray((mask_image.squeeze()*255).astype("uint8"), "L").resize((768, 768))

+```

+

+Next, create the inverted latents and pass it a caption describing the image:

+

+```py

+inv_latents = pipeline.invert(prompt=source_prompt, image=raw_image).latents

+```

+

+Finally, pass the image mask and inverted latents to the pipeline. The `target_prompt` becomes the `prompt` now, and the `source_prompt` is used as the `negative_prompt`:

+

+```py

+output_image = pipeline(

+ prompt=target_prompt,

+ mask_image=mask_image,

+ image_latents=inv_latents,

+ negative_prompt=source_prompt,

+).images[0]

+mask_image = Image.fromarray((mask_image.squeeze()*255).astype("uint8"), "L").resize((768, 768))

+make_image_grid([raw_image, mask_image, output_image], rows=1, cols=3)

+```

+

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+ +

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+ +

+  +

+  +

+ +

+  +

+  +

+  +

+ + +Kandinsky 3 has a more concise architecture and it doesn't require a prior model. This means it's usage is identical to other diffusion models like [Stable Diffusion XL](sdxl). + +

+

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+  +

+  +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+ +

+ +

+  +

+  +

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+ +

+ +

+  +

+  +

+  +

+  +

+  +

+ +

+ +

+ +

+ +

+  +

+  +

+  +

+  +

+ +

+  +

+  +

+ +

+ +

+  +

+  +

+ +

+  +

+  +

+  +

+  +

+ +

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+  +

+  +

+  +

+  +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+  +

+  +

+ +

+

+

+  +

+

+

出力の生成、独自の拡散システムの構築、拡散モデルのトレーニングを開始するために必要な基本的なスキルを学ぶことができます。初めて 🤗Diffusersを使用する場合は、ここから始めることをおすすめします!

+ +パイプライン、モデル、スケジューラの読み込みに役立つ実践的なガイドです。また、特定のタスクにパイプラインを使用する方法、出力の生成方法を制御する方法、生成速度を最適化する方法、さまざまなトレーニング手法についても学ぶことができます。

+ +ライブラリがなぜこのように設計されたのかを理解し、ライブラリを利用する際の倫理的ガイドラインや安全対策について詳しく学べます。

+ +🤗 Diffusersのクラスとメソッドがどのように機能するかについての技術的な説明です。

+ + +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+어떤 방식으로든 기여하려는 경우, 우리는 개방적이고 환영하며 친근한 커뮤니티의 일부가 되기 위해 노력하고 있습니다. 우리의 [행동 강령](https://github.com/huggingface/diffusers/blob/main/CODE_OF_CONDUCT.md)을 읽고 상호 작용 중에 이를 존중하도록 주의해주시기 바랍니다. 또한 프로젝트를 안내하는 [윤리 지침](https://huggingface.co/docs/diffusers/conceptual/ethical_guidelines)에 익숙해지고 동일한 투명성과 책임성의 원칙을 준수해주시기를 부탁드립니다.

+

+우리는 커뮤니티로부터의 피드백을 매우 중요하게 생각하므로, 라이브러리를 개선하는 데 도움이 될 가치 있는 피드백이 있다고 생각되면 망설이지 말고 의견을 제시해주세요 - 모든 메시지, 댓글, 이슈, Pull Request(PR)는 읽히고 고려됩니다.

+

+## 개요 [[overview]]

+

+이슈에 있는 질문에 답변하는 것에서부터 코어 라이브러리에 새로운 diffusion 모델을 추가하는 것까지 다양한 방법으로 기여를 할 수 있습니다.

+

+이어지는 부분에서 우리는 다양한 방법의 기여에 대한 개요를 난이도에 따라 오름차순으로 정리하였습니다. 모든 기여는 커뮤니티에게 가치가 있습니다.

+

+1. [Diffusers 토론 포럼](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers)이나 [Discord](https://discord.gg/G7tWnz98XR)에서 질문에 대답하거나 질문을 할 수 있습니다.

+2. [GitHub Issues 탭](https://github.com/huggingface/diffusers/issues/new/choose)에서 새로운 이슈를 열 수 있습니다.

+3. [GitHub Issues 탭](https://github.com/huggingface/diffusers/issues)에서 이슈에 대답할 수 있습니다.

+4. "Good first issue" 라벨이 지정된 간단한 이슈를 수정할 수 있습니다. [여기](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22)를 참조하세요.

+5. [문서](https://github.com/huggingface/diffusers/tree/main/docs/source)에 기여할 수 있습니다.

+6. [Community Pipeline](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3Acommunity-examples)에 기여할 수 있습니다.

+7. [예제](https://github.com/huggingface/diffusers/tree/main/examples)에 기여할 수 있습니다.

+8. "Good second issue" 라벨이 지정된 어려운 이슈를 수정할 수 있습니다. [여기](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22Good+second+issue%22)를 참조하세요.

+9. 새로운 파이프라인, 모델 또는 스케줄러를 추가할 수 있습니다. ["새로운 파이프라인/모델"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+pipeline%2Fmodel%22) 및 ["새로운 스케줄러"](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+scheduler%22) 이슈를 참조하세요. 이 기여에 대해서는 [디자인 철학](https://github.com/huggingface/diffusers/blob/main/PHILOSOPHY.md)을 확인해주세요.

+

+앞서 말한 대로, **모든 기여는 커뮤니티에게 가치가 있습니다**. 이어지는 부분에서 각 기여에 대해 조금 더 자세히 설명하겠습니다.

+

+4부터 9까지의 모든 기여에는 Pull Request을 열어야 합니다. [Pull Request 열기](#how-to-open-a-pr)에서 자세히 설명되어 있습니다.

+

+### 1. Diffusers 토론 포럼이나 Diffusers Discord에서 질문하고 답변하기 [[1-asking-and-answering-questions-on-the-diffusers-discussion-forum-or-on-the-diffusers-discord]]

+

+Diffusers 라이브러리와 관련된 모든 질문이나 의견은 [토론 포럼](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63)이나 [Discord](https://discord.gg/G7tWnz98XR)에서 할 수 있습니다. 이러한 질문과 의견에는 다음과 같은 내용이 포함됩니다(하지만 이에 국한되지는 않습니다):

+- 지식을 공유하기 위해서 훈련 또는 추론 실험에 대한 결과 보고

+- 개인 프로젝트 소개

+- 비공식 훈련 예제에 대한 질문

+- 프로젝트 제안

+- 일반적인 피드백

+- 논문 요약

+- Diffusers 라이브러리를 기반으로 하는 개인 프로젝트에 대한 도움 요청

+- 일반적인 질문

+- Diffusion 모델에 대한 윤리적 질문

+- ...

+

+포럼이나 Discord에서 질문을 하면 커뮤니티가 지식을 공개적으로 공유하도록 장려되며, 향후 동일한 질문을 가진 초보자에게도 도움이 될 수 있습니다. 따라서 궁금한 질문은 언제든지 하시기 바랍니다.

+또한, 이러한 질문에 답변하는 것은 커뮤니티에게 매우 큰 도움이 됩니다. 왜냐하면 이렇게 하면 모두가 학습할 수 있는 공개적인 지식을 문서화하기 때문입니다.

+

+**주의**하십시오. 질문이나 답변에 투자하는 노력이 많을수록 공개적으로 문서화된 지식의 품질이 높아집니다. 마찬가지로, 잘 정의되고 잘 답변된 질문은 모두에게 접근 가능한 고품질 지식 데이터베이스를 만들어줍니다. 반면에 잘못된 질문이나 답변은 공개 지식 데이터베이스의 전반적인 품질을 낮출 수 있습니다.

+간단히 말해서, 고품질의 질문이나 답변은 *명확하고 간결하며 관련성이 있으며 이해하기 쉽고 접근 가능하며 잘 형식화되어 있어야* 합니다. 자세한 내용은 [좋은 이슈 작성 방법](#how-to-write-a-good-issue) 섹션을 참조하십시오.

+

+**채널에 대한 참고사항**:

+[*포럼*](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63)은 구글과 같은 검색 엔진에서 더 잘 색인화됩니다. 게시물은 인기에 따라 순위가 매겨지며, 시간순으로 정렬되지 않습니다. 따라서 이전에 게시한 질문과 답변을 쉽게 찾을 수 있습니다.

+또한, 포럼에 게시된 질문과 답변은 쉽게 링크할 수 있습니다.

+반면 *Discord*는 채팅 형식으로 되어 있어 빠른 대화를 유도합니다.

+질문에 대한 답변을 빠르게 받을 수는 있겠지만, 시간이 지나면 질문이 더 이상 보이지 않습니다. 또한, Discord에서 이전에 게시된 정보를 찾는 것은 훨씬 어렵습니다. 따라서 포럼을 사용하여 고품질의 질문과 답변을 하여 커뮤니티를 위한 오래 지속되는 지식을 만들기를 권장합니다. Discord에서의 토론이 매우 흥미로운 답변과 결론을 이끌어내는 경우, 해당 정보를 포럼에 게시하여 향후 독자들에게 더 쉽게 액세스할 수 있도록 권장합니다.