Commit 5fe15cc · Parents: 0

Duplicate from dog/fastapi-document-qa

Co-authored-by: Dr. President Admiral Roar Dreezy <dog@users.noreply.huggingface.co>

- .gitattributes +34 -0

- Dockerfile +31 -0

- README.md +11 -0

- main.py +34 -0

- requirements.txt +8 -0

- use_api_in_python.png +0 -0

.gitattributes

ADDED

@@ -0,0 +1,34 @@
+*.7z filter=lfs diff=lfs merge=lfs -text
+*.arrow filter=lfs diff=lfs merge=lfs -text
+*.bin filter=lfs diff=lfs merge=lfs -text
+*.bz2 filter=lfs diff=lfs merge=lfs -text
+*.ckpt filter=lfs diff=lfs merge=lfs -text
+*.ftz filter=lfs diff=lfs merge=lfs -text
+*.gz filter=lfs diff=lfs merge=lfs -text
+*.h5 filter=lfs diff=lfs merge=lfs -text
+*.joblib filter=lfs diff=lfs merge=lfs -text
+*.lfs.* filter=lfs diff=lfs merge=lfs -text
+*.mlmodel filter=lfs diff=lfs merge=lfs -text
+*.model filter=lfs diff=lfs merge=lfs -text
+*.msgpack filter=lfs diff=lfs merge=lfs -text
+*.npy filter=lfs diff=lfs merge=lfs -text
+*.npz filter=lfs diff=lfs merge=lfs -text
+*.onnx filter=lfs diff=lfs merge=lfs -text
+*.ot filter=lfs diff=lfs merge=lfs -text
+*.parquet filter=lfs diff=lfs merge=lfs -text
+*.pb filter=lfs diff=lfs merge=lfs -text
+*.pickle filter=lfs diff=lfs merge=lfs -text
+*.pkl filter=lfs diff=lfs merge=lfs -text
+*.pt filter=lfs diff=lfs merge=lfs -text
+*.pth filter=lfs diff=lfs merge=lfs -text
+*.rar filter=lfs diff=lfs merge=lfs -text
+*.safetensors filter=lfs diff=lfs merge=lfs -text
+saved_model/**/* filter=lfs diff=lfs merge=lfs -text
+*.tar.* filter=lfs diff=lfs merge=lfs -text
+*.tflite filter=lfs diff=lfs merge=lfs -text
+*.tgz filter=lfs diff=lfs merge=lfs -text
+*.wasm filter=lfs diff=lfs merge=lfs -text
+*.xz filter=lfs diff=lfs merge=lfs -text
+*.zip filter=lfs diff=lfs merge=lfs -text
+*.zst filter=lfs diff=lfs merge=lfs -text
+*tfevents* filter=lfs diff=lfs merge=lfs -text
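
To make the effect of these `.gitattributes` rules concrete, the sketch below approximates which paths they would route through Git LFS. It is a rough illustration only: it uses Python's `fnmatch` rather than Git's own pattern matcher, it covers only a subset of the 34 patterns, and the helper name `is_lfs_tracked` is hypothetical.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# A subset of the patterns added in .gitattributes (not the full list).
LFS_PATTERNS = [
    "*.bin",
    "*.gz",
    "*.safetensors",
    "*.tar.*",
    "*tfevents*",
    "saved_model/**/*",
]


def is_lfs_tracked(path: str) -> bool:
    """Approximate whether `path` (relative to the repo root) matches an LFS rule."""
    posix = PurePosixPath(path)
    for pattern in LFS_PATTERNS:
        if "/" in pattern:
            # Approximation: treat "dir/**/*" as "anything below dir/".
            top_dir = pattern.split("/**/")[0]
            if len(posix.parts) > 1 and posix.parts[0] == top_dir:
                return True
        elif fnmatchcase(posix.name, pattern):
            # Patterns without a slash are compared against the file name only.
            return True
    return False
```

Under these assumptions, `is_lfs_tracked("model.safetensors")` is true and `is_lfs_tracked("main.py")` is false. To check a real repository, `git check-attr filter -- <path>` gives the authoritative answer.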

Dockerfile

ADDED

@@ -0,0 +1,31 @@
+# Use the official Python 3.9 image
+FROM python:3.9
+
+RUN apt-get update && apt-get install -y \
+    tesseract-ocr-all \
+    && rm -rf /var/lib/apt/lists/*
+
+# Set the working directory to /code
+WORKDIR /code
+
+# Copy the current directory contents into the container at /code
+COPY ./requirements.txt /code/requirements.txt
+
+# Install requirements.txt
+RUN pip install --no-cache-dir --upgrade -r /code/requirements.txt
+
+# Set up a new user named "user" with user ID 1000
+RUN useradd -m -u 1000 user
+# Switch to the "user" user
+USER user
+# Set home to the user's home directory
+ENV HOME=/home/user \
+    PATH=/home/user/.local/bin:$PATH
+
+# Set the working directory to the user's home directory
+WORKDIR $HOME/app
+
+# Copy the current directory contents into the container at $HOME/app setting the owner to the user
+COPY --chown=user . $HOME/app
+
+CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "7860"]

README.md

ADDED

@@ -0,0 +1,11 @@
+---
+title: Fastapi Document Qa
+emoji: 🐠
+colorFrom: gray
+colorTo: yellow
+sdk: docker
+pinned: false
+duplicated_from: dog/fastapi-document-qa
+---
+
+Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

main.py

ADDED

@@ -0,0 +1,34 @@
+from base64 import b64decode, b64encode
+from io import BytesIO
+
+from fastapi import FastAPI, File, Form
+from PIL import Image
+from transformers import pipeline
+
+
+description = """
+## DocQA with 🤗 transformers, FastAPI, and Docker
+
+This app shows how to do Document Question Answering using
+FastAPI in a Docker Space 🚀
+Check out the docs for the `/predict` endpoint below to try it out!
+"""
+
+# NOTE - we configure docs_url to serve the interactive Docs at the root path
+# of the app. This way, we can use the docs as a landing page for the app on Spaces.
+app = FastAPI(docs_url="/", description=description)
+
+pipe = pipeline("document-question-answering", model="impira/layoutlm-document-qa")
+
+
+@app.post("/predict")
+def predict(image_file: bytes = File(...), question: str = Form(...)):
+    """
+    Using the document-question-answering pipeline from `transformers`, take
+    a given input document (image) and a question about it, and return the
+    predicted answer. The model used is available on the hub at:
+    [`impira/layoutlm-document-qa`](https://huggingface.co/impira/layoutlm-document-qa).
+    """
+    image = Image.open(BytesIO(image_file))
+    output = pipe(image, question)
+    return output
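
The `/predict` endpoint in `main.py` takes a multipart form with an `image_file` upload and a `question` text field. As a sketch of what a client might send, the snippet below builds such a request using only the Python standard library. The helper names (`encode_multipart`, `build_predict_request`, `ask`, `best_answer`), the upload file name, and the base URL are assumptions for illustration; the real URL depends on where the Space is hosted. The response shape assumed in `best_answer` (dicts carrying `answer` and `score` keys) is likewise an assumption about the pipeline output, not something this commit states.

```python
import json
import uuid
from urllib.request import Request, urlopen


def encode_multipart(fields, files):
    """Encode text fields and file uploads as multipart/form-data.

    fields: dict of name -> str
    files:  dict of name -> (filename, data_bytes, content_type)
    Returns (body_bytes, content_type_header_value).
    """
    boundary = uuid.uuid4().hex
    parts = []
    for name, value in fields.items():
        parts.append(
            (
                f"--{boundary}\r\n"
                f'Content-Disposition: form-data; name="{name}"\r\n\r\n'
                f"{value}\r\n"
            ).encode("utf-8")
        )
    for name, (filename, data, content_type) in files.items():
        header = (
            f"--{boundary}\r\n"
            f'Content-Disposition: form-data; name="{name}"; filename="{filename}"\r\n'
            f"Content-Type: {content_type}\r\n\r\n"
        ).encode("utf-8")
        parts.append(header + data + b"\r\n")
    parts.append(f"--{boundary}--\r\n".encode("utf-8"))
    return b"".join(parts), f"multipart/form-data; boundary={boundary}"


def build_predict_request(base_url, image_bytes, question):
    """Build (but do not send) a POST request for the /predict endpoint."""
    body, content_type = encode_multipart(
        fields={"question": question},
        # "document.png" and "image/png" are placeholder values.
        files={"image_file": ("document.png", image_bytes, "image/png")},
    )
    return Request(
        base_url.rstrip("/") + "/predict",
        data=body,
        headers={"Content-Type": content_type},
        method="POST",
    )


def best_answer(predictions):
    """Pick the highest-scoring answer (assumes dicts with 'answer' and 'score')."""
    if isinstance(predictions, dict):
        predictions = [predictions]
    return max(predictions, key=lambda p: p["score"])["answer"]


def ask(base_url, image_bytes, question):
    """Send the request to a running instance and return the top answer."""
    with urlopen(build_predict_request(base_url, image_bytes, question)) as resp:
        return best_answer(json.loads(resp.read()))
```

With the container running locally on the port set in the Dockerfile, a call might look like `ask("http://localhost:7860", open("invoice.png", "rb").read(), "What is the invoice number?")`, where the file name and question are placeholders.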

requirements.txt

ADDED

@@ -0,0 +1,8 @@
+fastapi==0.74.*
+requests==2.27.*
+uvicorn[standard]==0.17.*
+sentencepiece==0.1.*
+torch==1.11.*
+transformers[vision]==4.*
+pytesseract==0.3.10
+python-multipart==0.0.6
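
Most of the pins in `requirements.txt` end in `.*`, which under PEP 440 matches any version whose leading release components equal the given prefix. The sketch below illustrates that rule for plain numeric versions only. It ignores pre-release, post-release, and local version handling, and the function name `matches_pin` is hypothetical; for real dependency resolution, rely on pip itself.

```python
def _release(text: str) -> list:
    """Parse a plain dotted version such as '0.74.1' into [0, 74, 1]."""
    return [int(part) for part in text.split(".")]


def _strip_trailing_zeros(release: list) -> list:
    """Drop trailing zeros so that 1.0 and 1.0.0 compare equal."""
    trimmed = list(release)
    while len(trimmed) > 1 and trimmed[-1] == 0:
        trimmed.pop()
    return trimmed


def matches_pin(version: str, pin: str) -> bool:
    """Return True if a plain numeric `version` satisfies an '==' pin."""
    if not pin.startswith("=="):
        raise ValueError("only '==' pins are handled in this sketch")
    spec = pin[2:]
    release = _release(version)
    if spec.endswith(".*"):
        prefix = _release(spec[:-2])
        # Pad the candidate with zeros so '4' is treated like '4.0'.
        release = release + [0] * (len(prefix) - len(release))
        return release[: len(prefix)] == prefix
    # Exact pin: compare release components, ignoring trailing zeros.
    return _strip_trailing_zeros(release) == _strip_trailing_zeros(_release(spec))
```

Under these simplifications, `fastapi==0.74.*` accepts `0.74.1` but not `0.75.0`, while `pytesseract==0.3.10` accepts only that exact release.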

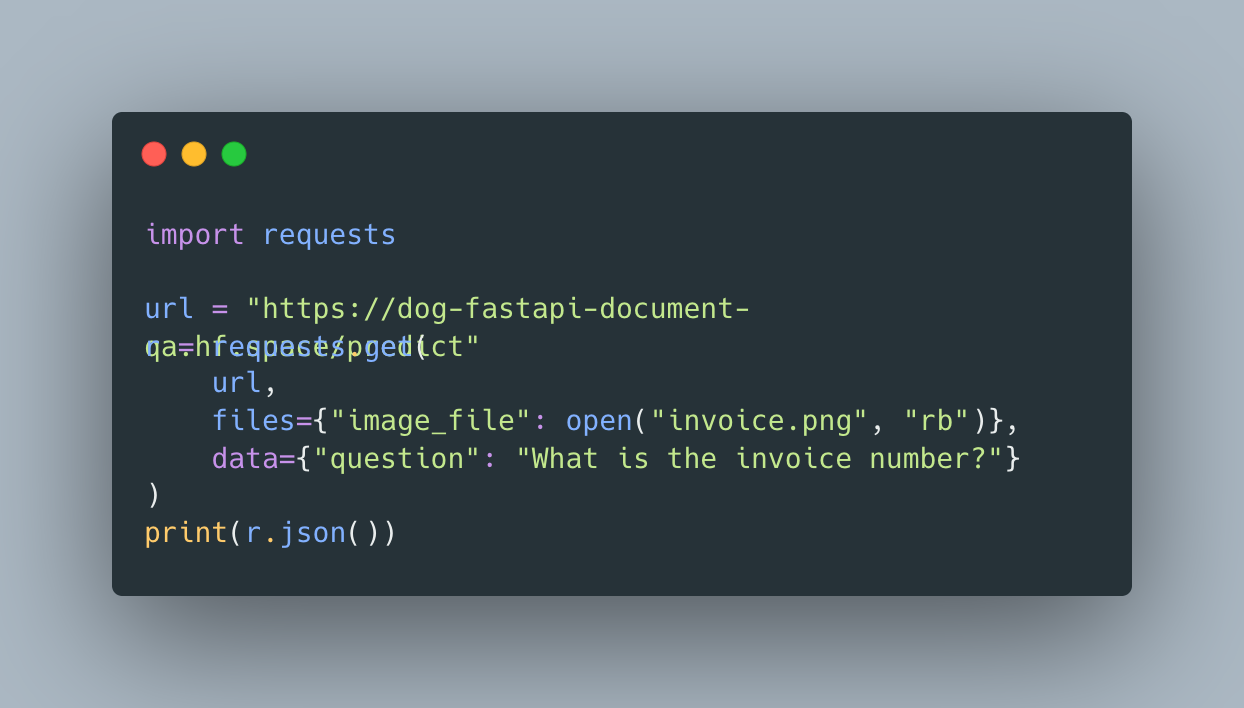

use_api_in_python.png

ADDED

|