Upload folder using huggingface_hub

- .gitattributes +2 -0

- .gitignore +12 -0

- .gradio/certificate.pem +31 -0

- README.md +91 -7

- app.py +8 -0

- chatinterface.png +0 -0

- chatinterface_with_customization.png +3 -0

- composition.py +10 -0

- custom_app.py +10 -0

- hyperbolic-animated.gif +3 -0

- hyperbolic-gradio.png +0 -0

- hyperbolic_gradio/__init__.py +82 -0

- pyproject.toml +34 -0

- requirements.txt +2 -0

- space.yaml +3 -0

.gitattributes

CHANGED

@@ -33,3 +33,5 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+chatinterface_with_customization.png filter=lfs diff=lfs merge=lfs -text
+hyperbolic-animated.gif filter=lfs diff=lfs merge=lfs -text

.gitignore

ADDED

@@ -0,0 +1,12 @@
+# Python cache files
+__pycache__/
+*.pyc
+
+# Virtual environment
+env/
+.venv/
+
+# Package artifacts
+dist/
+build/
+*.egg-info/

.gradio/certificate.pem

ADDED

@@ -0,0 +1,31 @@
+-----BEGIN CERTIFICATE-----
+MIIFazCCA1OgAwIBAgIRAIIQz7DSQONZRGPgu2OCiwAwDQYJKoZIhvcNAQELBQAw
+TzELMAkGA1UEBhMCVVMxKTAnBgNVBAoTIEludGVybmV0IFNlY3VyaXR5IFJlc2Vh
+cmNoIEdyb3VwMRUwEwYDVQQDEwxJU1JHIFJvb3QgWDEwHhcNMTUwNjA0MTEwNDM4
+WhcNMzUwNjA0MTEwNDM4WjBPMQswCQYDVQQGEwJVUzEpMCcGA1UEChMgSW50ZXJu
+ZXQgU2VjdXJpdHkgUmVzZWFyY2ggR3JvdXAxFTATBgNVBAMTDElTUkcgUm9vdCBY
+MTCCAiIwDQYJKoZIhvcNAQEBBQADggIPADCCAgoCggIBAK3oJHP0FDfzm54rVygc
+h77ct984kIxuPOZXoHj3dcKi/vVqbvYATyjb3miGbESTtrFj/RQSa78f0uoxmyF+
+0TM8ukj13Xnfs7j/EvEhmkvBioZxaUpmZmyPfjxwv60pIgbz5MDmgK7iS4+3mX6U
+A5/TR5d8mUgjU+g4rk8Kb4Mu0UlXjIB0ttov0DiNewNwIRt18jA8+o+u3dpjq+sW
+T8KOEUt+zwvo/7V3LvSye0rgTBIlDHCNAymg4VMk7BPZ7hm/ELNKjD+Jo2FR3qyH
+B5T0Y3HsLuJvW5iB4YlcNHlsdu87kGJ55tukmi8mxdAQ4Q7e2RCOFvu396j3x+UC
+B5iPNgiV5+I3lg02dZ77DnKxHZu8A/lJBdiB3QW0KtZB6awBdpUKD9jf1b0SHzUv
+KBds0pjBqAlkd25HN7rOrFleaJ1/ctaJxQZBKT5ZPt0m9STJEadao0xAH0ahmbWn
+OlFuhjuefXKnEgV4We0+UXgVCwOPjdAvBbI+e0ocS3MFEvzG6uBQE3xDk3SzynTn
+jh8BCNAw1FtxNrQHusEwMFxIt4I7mKZ9YIqioymCzLq9gwQbooMDQaHWBfEbwrbw
+qHyGO0aoSCqI3Haadr8faqU9GY/rOPNk3sgrDQoo//fb4hVC1CLQJ13hef4Y53CI
+rU7m2Ys6xt0nUW7/vGT1M0NPAgMBAAGjQjBAMA4GA1UdDwEB/wQEAwIBBjAPBgNV
+HRMBAf8EBTADAQH/MB0GA1UdDgQWBBR5tFnme7bl5AFzgAiIyBpY9umbbjANBgkq
+hkiG9w0BAQsFAAOCAgEAVR9YqbyyqFDQDLHYGmkgJykIrGF1XIpu+ILlaS/V9lZL
+ubhzEFnTIZd+50xx+7LSYK05qAvqFyFWhfFQDlnrzuBZ6brJFe+GnY+EgPbk6ZGQ
+3BebYhtF8GaV0nxvwuo77x/Py9auJ/GpsMiu/X1+mvoiBOv/2X/qkSsisRcOj/KK
+NFtY2PwByVS5uCbMiogziUwthDyC3+6WVwW6LLv3xLfHTjuCvjHIInNzktHCgKQ5
+ORAzI4JMPJ+GslWYHb4phowim57iaztXOoJwTdwJx4nLCgdNbOhdjsnvzqvHu7Ur
+TkXWStAmzOVyyghqpZXjFaH3pO3JLF+l+/+sKAIuvtd7u+Nxe5AW0wdeRlN8NwdC
+jNPElpzVmbUq4JUagEiuTDkHzsxHpFKVK7q4+63SM1N95R1NbdWhscdCb+ZAJzVc
+oyi3B43njTOQ5yOf+1CceWxG1bQVs5ZufpsMljq4Ui0/1lvh+wjChP4kqKOJ2qxq
+4RgqsahDYVvTH9w7jXbyLeiNdd8XM2w9U/t7y0Ff/9yi0GE44Za4rF2LN9d11TPA
+mRGunUHBcnWEvgJBQl9nJEiU0Zsnvgc/ubhPgXRR4Xq37Z0j4r7g1SgEEzwxA57d
+emyPxgcYxn/eR44/KJ4EBs+lVDR3veyJm+kXQ99b21/+jh5Xos1AnX5iItreGCc=
+-----END CERTIFICATE-----

README.md

CHANGED

|

@@ -1,12 +1,96 @@

|

|

| 1 |

---

|

| 2 |

-

title:

|

| 3 |

-

|

| 4 |

-

colorFrom: green

|

| 5 |

-

colorTo: gray

|

| 6 |

sdk: gradio

|

| 7 |

sdk_version: 5.14.0

|

| 8 |

-

app_file: app.py

|

| 9 |

-

pinned: false

|

| 10 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 11 |

|

| 12 |

-

|

|

|

|

|

|

| 1 |

---

|

| 2 |

+

title: chatbotappgradio

|

| 3 |

+

app_file: app.py

|

|

|

|

|

|

|

| 4 |

sdk: gradio

|

| 5 |

sdk_version: 5.14.0

|

|

|

|

|

|

|

| 6 |

---

|

| 7 |

+

# `hyperbolic-gradio`

|

| 8 |

+

|

| 9 |

+

is a Python package that makes it very easy for developers to create machine learning apps that are powered by Hyperbolic AI's API.

|

| 10 |

+

|

| 11 |

+

# Installation

|

| 12 |

+

|

| 13 |

+

You can install `hyperbolic-gradio` directly using pip:

|

| 14 |

+

|

| 15 |

+

```bash

|

| 16 |

+

pip install hyperbolic-gradio

|

| 17 |

+

```

|

| 18 |

+

|

| 19 |

+

That's it!

|

| 20 |

+

|

| 21 |

+

# Basic Usage

|

| 22 |

+

|

| 23 |

+

Just like if you were to use the `hyperbolic` API, you should first save your Hyperbolic API key to this environment variable:

|

| 24 |

+

|

| 25 |

+

```

|

| 26 |

+

export HYPERBOLIC_API_KEY=<your token>

|

| 27 |

+

```

|

| 28 |

+

|

| 29 |

+

Then in a Python file, write:

|

| 30 |

+

|

| 31 |

+

```python

|

| 32 |

+

import gradio as gr

|

| 33 |

+

import hyperbolic_gradio

|

| 34 |

+

|

| 35 |

+

gr.load(

|

| 36 |

+

name='meta-llama/Meta-Llama-3-70B-Instruct',

|

| 37 |

+

src=hyperbolic_gradio.registry,

|

| 38 |

+

).launch()

|

| 39 |

+

```

|

| 40 |

+

|

| 41 |

+

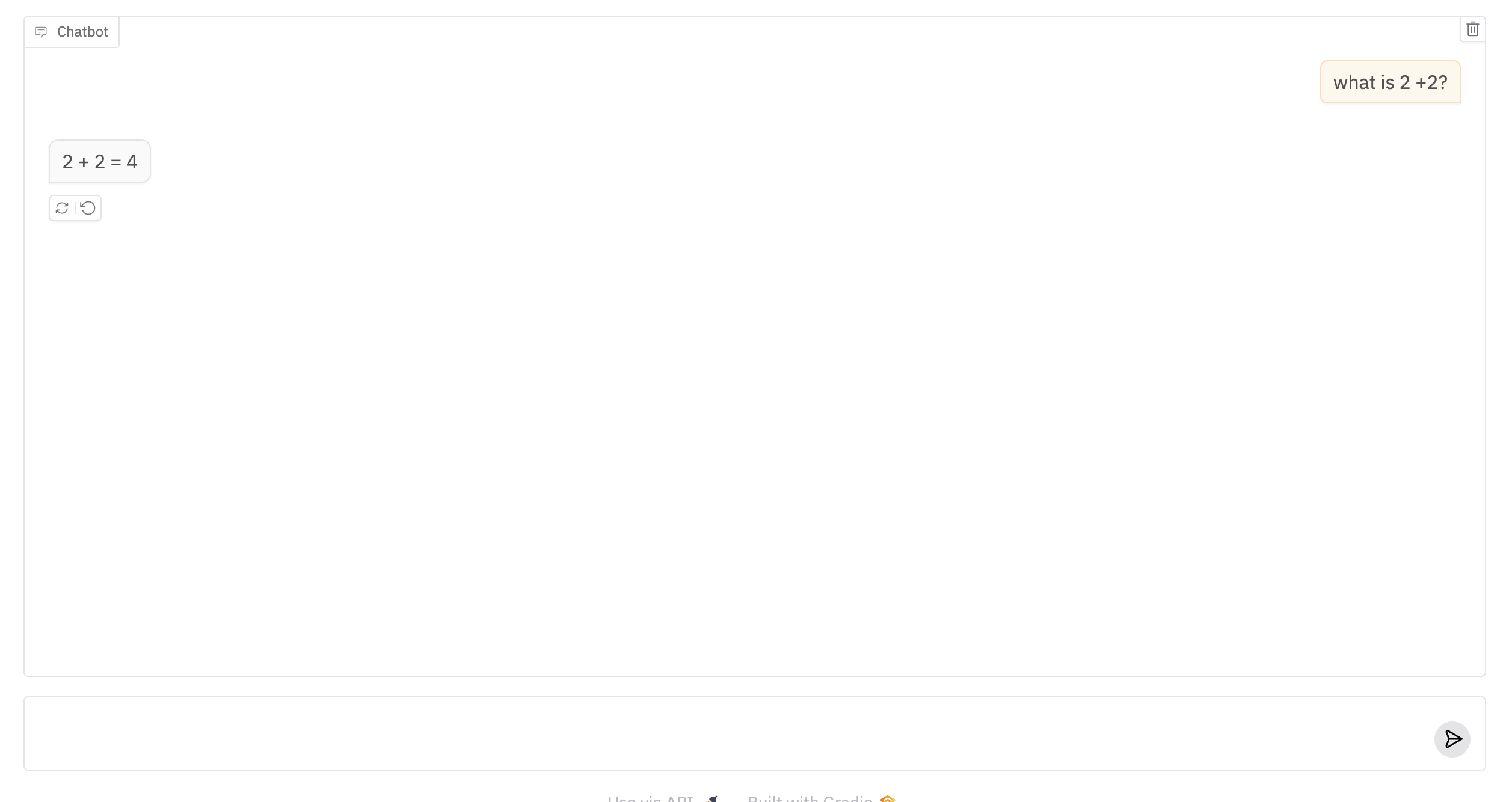

Run the Python file, and you should see a Gradio Interface connected to the model on Hyperbolic AI!

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

# Customization

|

| 46 |

+

|

| 47 |

+

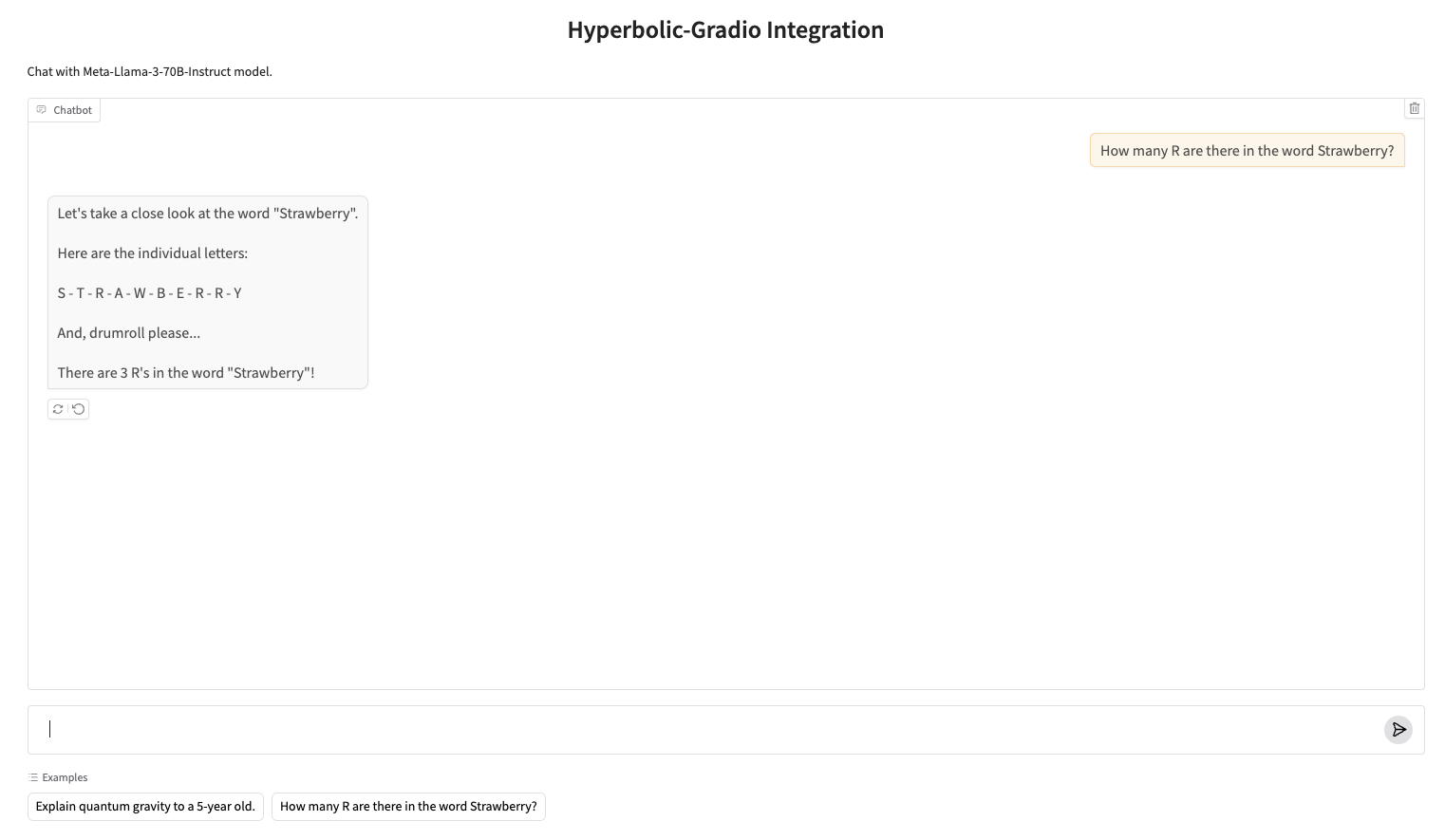

Once you can create a Gradio UI from an Hyperbolic API endpoint, you can customize it by setting your own input and output components, or any other arguments to `gr.Interface`. For example, the screenshot below was generated with:

|

| 48 |

+

|

| 49 |

+

```py

|

| 50 |

+

import gradio as gr

|

| 51 |

+

import hyperbolic_gradio

|

| 52 |

+

|

| 53 |

+

gr.load(

|

| 54 |

+

name='meta-llama/Meta-Llama-3-70B-Instruct',

|

| 55 |

+

src=hyperbolic_gradio.registry,

|

| 56 |

+

title='Hyperbolic-Gradio Integration',

|

| 57 |

+

description="Chat with Meta-Llama-3-70B-Instruct model.",

|

| 58 |

+

examples=["Explain quantum gravity to a 5-year old.", "How many R are there in the word Strawberry?"]

|

| 59 |

+

).launch()

|

| 60 |

+

```

|

| 61 |

+

|

| 62 |

+

|

| 63 |

+

# Composition

|

| 64 |

+

|

| 65 |

+

Or use your loaded Interface within larger Gradio Web UIs, e.g.

|

| 66 |

+

|

| 67 |

+

```python

|

| 68 |

+

import gradio as gr

|

| 69 |

+

import hyperbolic_gradio

|

| 70 |

+

|

| 71 |

+

with gr.Blocks() as demo:

|

| 72 |

+

with gr.Tab("Meta-Llama-3-70B-Instruct"):

|

| 73 |

+

gr.load('meta-llama/Meta-Llama-3-70B-Instruct', src=hyperbolic_gradio.registry)

|

| 74 |

+

with gr.Tab("Llama-3.2-3B-Instruct"):

|

| 75 |

+

gr.load('meta-llama/Llama-3.2-3B-Instruct', src=hyperbolic_gradio.registry)

|

| 76 |

+

|

| 77 |

+

demo.launch()

|

| 78 |

+

```

|

| 79 |

+

|

| 80 |

+

# Under the Hood

|

| 81 |

+

|

| 82 |

+

The `hyperbolic-gradio` Python library has two dependencies: `hyperbolic` and `gradio`. It defines a "registry" function `hyperbolic_gradio.registry`, which takes in a model name and returns a Gradio app.

|

| 83 |

+

|

| 84 |

+

# Supported Models in Hyperbolic AI

|

| 85 |

+

|

| 86 |

+

All chat API models supported by Hyperbolic AI are compatible with this integration. For a comprehensive list of available models and their specifications, please refer to the [Hyperbolic AI Models documentation](https://platform.hyperbolic.ai/docs/models).

|

| 87 |

+

|

| 88 |

+

-------

|

| 89 |

+

|

| 90 |

+

Note: if you are getting a 401 authentication error, then the Hyperbolic API Client is not able to get the API token from the environment variable. This happened to me as well, in which case save it in your Python session, like this:

|

| 91 |

+

|

| 92 |

+

```py

|

| 93 |

+

import os

|

| 94 |

|

| 95 |

+

os.environ["HYPERBOLIC_API_KEY"] = ...

|

| 96 |

+

```

|
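The README's closing note and the `registry` function in `hyperbolic_gradio/__init__.py` both rely on the same lookup order: an explicitly passed token first, then the `HYPERBOLIC_API_KEY` environment variable. The sketch below isolates that order so it can be checked without starting an app. The helper name `resolve_api_key` and its `env` parameter are hypothetical and are not part of the package.

```python
import os
from typing import Mapping, Optional


def resolve_api_key(token: Optional[str] = None,
                    env: Mapping[str, str] = os.environ) -> str:
    """Return the explicit token if given, else HYPERBOLIC_API_KEY from env.

    Hypothetical helper: it mirrors the `token or os.environ.get(...)`
    lookup shown in the diff, but is not an API of hyperbolic-gradio.
    """
    api_key = token or env.get("HYPERBOLIC_API_KEY")
    if not api_key:
        raise ValueError(
            "API key is not set. Pass a token or set HYPERBOLIC_API_KEY."
        )
    return api_key


if __name__ == "__main__":
    # An explicit token takes precedence over the environment value.
    print(resolve_api_key("explicit-key", {"HYPERBOLIC_API_KEY": "env-key"}))
```

Passing the environment as a parameter is only a convenience for testing; in a real session the default `os.environ` would be used.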

app.py

ADDED

@@ -0,0 +1,8 @@
+import gradio as gr
+import hyperbolic_gradio
+
+gr.load(
+    name='meta-llama/Meta-Llama-3-70B-Instruct',
+    src=hyperbolic_gradio.registry,
+    type="messages"
+).launch()
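`app.py` passes `type="messages"`, while the `preprocess` function in `hyperbolic_gradio/__init__.py` unpacks every history entry as a `(user, assistant)` pair. Assuming Gradio's messages format delivers history as a list of dicts with `role` and `content` keys (possibly alongside other keys), that unpacking would likely fail once a conversation has history. The following is a minimal sketch of a converter that accepts either shape; the name `to_chat_messages` is hypothetical and this is not the package's own code.

```python
from typing import Any, Dict, List, Sequence, Union

# A history entry is either a messages-format dict or a (user, assistant) pair.
Turn = Union[Dict[str, Any], Sequence[str]]


def to_chat_messages(message: str, history: List[Turn]) -> List[Dict[str, str]]:
    """Build an OpenAI-style message list from either history format."""
    messages: List[Dict[str, str]] = []
    for turn in history:
        if isinstance(turn, dict):
            # Messages format: keep only the keys the chat API needs.
            messages.append({"role": turn["role"], "content": turn["content"]})
        else:
            # Pair format: one user message followed by one assistant reply.
            user_msg, assistant_msg = turn
            messages.append({"role": "user", "content": user_msg})
            messages.append({"role": "assistant", "content": assistant_msg})
    # The new user message always goes last.
    messages.append({"role": "user", "content": message})
    return messages
```

Both history shapes produce the same output here, so a function like this could stand in for `preprocess` regardless of which `type` the caller chooses.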

chatinterface.png

ADDED

chatinterface_with_customization.png

ADDED

composition.py

ADDED

@@ -0,0 +1,10 @@
+import gradio as gr
+import hyperbolic_gradio
+
+with gr.Blocks() as demo:
+    with gr.Tab("Meta-Llama-3-70B-Instruct"):
+        gr.load('meta-llama/Meta-Llama-3-70B-Instruct', src=hyperbolic_gradio.registry)
+    with gr.Tab("Llama-3.2-3B-Instruct"):
+        gr.load('meta-llama/Llama-3.2-3B-Instruct', src=hyperbolic_gradio.registry)
+
+demo.launch()

custom_app.py

ADDED

@@ -0,0 +1,10 @@
+import gradio as gr
+import hyperbolic_gradio
+
+gr.load(
+    name='meta-llama/Meta-Llama-3-70B-Instruct',
+    src=hyperbolic_gradio.registry,
+    title='Hyperbolic-Gradio Integration',
+    description="Chat with Meta-Llama-3-70B-Instruct model.",
+    examples=["Explain quantum gravity to a 5-year old.", "How many R are there in the word Strawberry?"]
+).launch()

hyperbolic-animated.gif

ADDED

hyperbolic-gradio.png

ADDED

hyperbolic_gradio/__init__.py

ADDED

@@ -0,0 +1,82 @@
+import os
+from openai import OpenAI
+import gradio as gr
+from typing import Callable
+
+__version__ = "0.0.5"
+
+
+def get_fn(model_name: str, preprocess: Callable, postprocess: Callable, api_key: str, base_url: str = None):
+    def fn(message, history):
+        inputs = preprocess(message, history)
+        client = OpenAI(
+            api_key=api_key,
+            base_url=base_url or "https://api.hyperbolic.xyz/v1"
+        )
+        completion = client.chat.completions.create(
+            model=model_name,
+            messages=[
+                *inputs["messages"]
+            ],
+            stream=True,
+            temperature=0.7,
+            max_tokens=1024,
+        )
+        response_text = ""
+        for chunk in completion:
+            delta = chunk.choices[0].delta.content or ""
+            response_text += delta
+            yield postprocess(response_text)
+
+    return fn
+
+
+def get_interface_args(pipeline):
+    if pipeline == "chat":
+        inputs = None
+        outputs = None
+
+        def preprocess(message, history):
+            messages = []
+            for user_msg, assistant_msg in history:
+                messages.append({"role": "user", "content": user_msg})
+                messages.append({"role": "assistant", "content": assistant_msg})
+            messages.append({"role": "user", "content": message})
+            return {"messages": messages}
+
+        postprocess = lambda x: x  # No post-processing needed
+    else:
+        # Add other pipeline types when they will be needed
+        raise ValueError(f"Unsupported pipeline type: {pipeline}")
+    return inputs, outputs, preprocess, postprocess
+
+
+def get_pipeline(model_name):
+    # Determine the pipeline type based on the model name
+    # For simplicity, assuming all models are chat models at the moment
+    return "chat"
+
+
+def registry(name: str, token: str | None = None, base_url: str | None = None, **kwargs):
+    """
+    Create a Gradio Interface for a model on Hyperbolic.
+
+    Parameters:
+        - name (str): The name of the model.
+        - token (str, optional): The Hyperbolic API key. If not provided, will look for HYPERBOLIC_API_KEY env variable.
+        - base_url (str, optional): The base URL for the Hyperbolic API.
+    """
+    api_key = token or os.environ.get("HYPERBOLIC_API_KEY")
+    if not api_key:
+        raise ValueError("API key is not set. Please provide a token or set HYPERBOLIC_API_KEY environment variable.")
+
+    pipeline = get_pipeline(name)
+    inputs, outputs, preprocess, postprocess = get_interface_args(pipeline)
+    fn = get_fn(name, preprocess, postprocess, api_key, base_url)
+
+    if pipeline == "chat":
+        interface = gr.ChatInterface(fn=fn, **kwargs)
+    else:
+        interface = gr.Interface(fn=fn, inputs=inputs, outputs=outputs, **kwargs)
+
+    return interface
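The inner `fn` in `get_fn` is a generator: for every streamed chunk it appends the new delta to `response_text` and yields the whole text so far, which lets the chat UI redraw a growing reply. The sketch below reproduces only that accumulation step with stand-in chunk objects, so it runs without an API key or network access. `accumulate_stream` and `fake_chunk` are hypothetical names, and the chunk shape (`choices[0].delta.content`) is assumed from the attribute access in the diff.

```python
from types import SimpleNamespace
from typing import Iterable, Iterator, Optional


def accumulate_stream(chunks: Iterable) -> Iterator[str]:
    """Yield the cumulative response text after each streamed chunk."""
    response_text = ""
    for chunk in chunks:
        # A chunk's content can be None (for example on the final chunk),
        # so fall back to an empty string before concatenating.
        delta = chunk.choices[0].delta.content or ""
        response_text += delta
        yield response_text


def fake_chunk(content: Optional[str]) -> SimpleNamespace:
    """Build a stand-in object with the same attribute path as a stream chunk."""
    delta = SimpleNamespace(content=content)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])


if __name__ == "__main__":
    stream = [fake_chunk("He"), fake_chunk("llo"), fake_chunk(None)]
    for partial in accumulate_stream(stream):
        print(partial)
```

Yielding the cumulative text rather than each delta means every yielded value is a complete replacement for the message shown so far.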

pyproject.toml

ADDED

@@ -0,0 +1,34 @@
+[build-system]
+requires = ["hatchling"]
+build-backend = "hatchling.build"
+
+[project]
+name = "hyperbolic-gradio"
+version = "0.0.5"
+description = "A Python package for creating Gradio applications with Hyperbolic AI models"
+authors = [
+    { name = "AK", email = "ahsen.khaliq@gmail.com" },
+    { name = "YuchenJin", email = "yuchenj@cs.washington.edu"}
+]
+readme = "README.md"
+requires-python = ">=3.10"
+classifiers = [
+    "Programming Language :: Python :: 3",
+    "License :: OSI Approved :: MIT License",
+    "Operating System :: OS Independent",
+]
+dependencies = [
+    "gradio>=5.5.0",
+    "openai",
+]
+
+[project.urls]
+homepage = "https://github.com/HyperbolicLabs/hyperbolic-gradio"
+repository = "https://github.com/HyperbolicLabs/hyperbolic-gradio"
+
+[project.optional-dependencies]
+dev = ["pytest"]
+
+[tool.hatch.build.targets.wheel]
+packages = ["hyperbolic_gradio"]
+

requirements.txt

ADDED

@@ -0,0 +1,2 @@
+openai
+gradio==5.5.0

space.yaml

ADDED

@@ -0,0 +1,3 @@
+sdk: gradio
+hardware:
+  accelerator: GPU