-

-

-

- - Watch the Demo Video -

-

-  -

-

-

-

- Watch the Demo Video

-

-  -

-

-  -

-

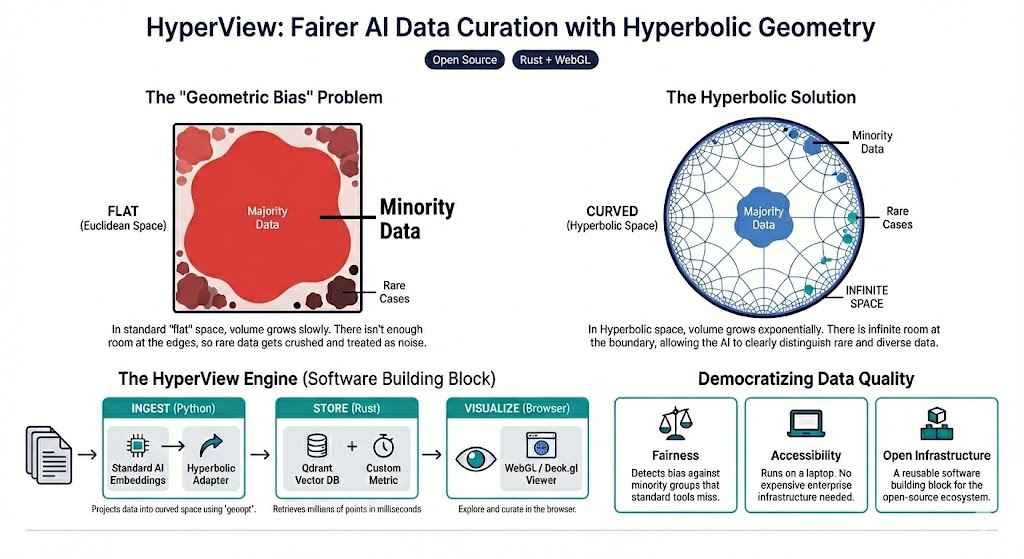

- Drag to Pan. Experience the "infinite" space. - Notice how the red "Rare" points expand and separate as you bring them towards the center. -

-- Make sure the HyperView backend is running on port 6262. -

-HyperView is running in Colab. " - f"" - "Open HyperView in a new tab.

" - ) - ) - display(HTML(f"{app_url}

")) - return - except Exception: - # Fall through to the generic notebook behavior. - pass - - # Default: open in a new browser tab (works well for Jupyter). - try: - from IPython.display import HTML, Javascript, display - - display( - HTML( - "HyperView is running. " - f"Open in a new tab." - "