Spaces:

Runtime error

Runtime error

code add

Browse filesThis view is limited to 50 files because it contains too many changes. See raw diff

- Dockerfile +125 -0

- Makefile +120 -0

- README.md +250 -1

- README_1.md +61 -0

- app.py +110 -0

- backend/.DS_Store +0 -0

- backend/.env.example +29 -0

- backend/Dockerfile +43 -0

- backend/__init__.py +1 -0

- backend/agents/__init__.py +0 -0

- backend/agents/claude_coach.py +131 -0

- backend/agents/complexity.py +79 -0

- backend/agents/grpo_trainer.py +236 -0

- backend/agents/model_agent.py +285 -0

- backend/agents/nvm_player_agent.py +265 -0

- backend/agents/qwen_agent.py +228 -0

- backend/api/__init__.py +0 -0

- backend/api/coaching_router.py +274 -0

- backend/api/game_router.py +295 -0

- backend/api/training_router.py +75 -0

- backend/api/websocket.py +97 -0

- backend/api/websocket.py_backup +87 -0

- backend/chess_engine.py +186 -0

- backend/chess_lib/__init__.py +0 -0

- backend/chess_lib/chess_engine.py +166 -0

- backend/chess_lib/engine.py +125 -0

- backend/economy/.DS_Store +0 -0

- backend/economy/__init__.py +0 -0

- backend/economy/ledger.py +174 -0

- backend/economy/nvm_payments.py +340 -0

- backend/economy/register_agent.py +138 -0

- backend/grpo_trainer.py +240 -0

- backend/main.py +313 -0

- backend/main.py_backup +218 -0

- backend/openenv/__init__.py +19 -0

- backend/openenv/env.py +311 -0

- backend/openenv/models.py +136 -0

- backend/openenv/router.py +159 -0

- backend/qwen_agent.py +228 -0

- backend/requirements.txt +40 -0

- backend/settings.py +65 -0

- backend/websocket_server.py +365 -0

- doc.md +124 -0

- docker-compose.gpu.yml +52 -0

- docker-compose.yml +63 -0

- docker-compose.yml_backup +61 -0

- docker-entrypoint.sh +175 -0

- docs/Issues.md +47 -0

- docs/latest_fixes_howto.md +420 -0

- frontend/.DS_Store +0 -0

Dockerfile

ADDED

|

@@ -0,0 +1,125 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# ─────────────────────────────────────────────────────────────────────────────

|

| 2 |

+

# ChessEcon — Unified Multi-Stage Dockerfile

|

| 3 |

+

#

|

| 4 |

+

# Stages:

|

| 5 |

+

# 1. frontend-builder — builds the React TypeScript dashboard (Node.js)

|

| 6 |

+

# 2. backend-cpu — Python FastAPI backend, serves built frontend as static

|

| 7 |

+

# 3. backend-gpu — same as backend-cpu but with CUDA PyTorch

|

| 8 |

+

#

|

| 9 |

+

# Usage:

|

| 10 |

+

# CPU: docker build --target backend-cpu -t chessecon:cpu .

|

| 11 |

+

# GPU: docker build --target backend-gpu -t chessecon:gpu .

|

| 12 |

+

# ─────────────────────────────────────────────────────────────────────────────

|

| 13 |

+

|

| 14 |

+

# ── Stage 1: Build the React frontend ────────────────────────────────────────

|

| 15 |

+

FROM node:22-alpine AS frontend-builder

|

| 16 |

+

|

| 17 |

+

WORKDIR /app/frontend

|

| 18 |

+

|

| 19 |

+

# Copy package files AND patches dir (required by pnpm for patched dependencies)

|

| 20 |

+

COPY frontend/package.json frontend/pnpm-lock.yaml* ./

|

| 21 |

+

COPY frontend/patches/ ./patches/

|

| 22 |

+

RUN npm install -g pnpm && pnpm install --frozen-lockfile

|

| 23 |

+

|

| 24 |

+

# Copy the full frontend source

|

| 25 |

+

COPY frontend/ ./

|

| 26 |

+

|

| 27 |

+

# Build the production bundle (frontend only — no Express server build)

|

| 28 |

+

# vite.config.ts outputs to dist/public/ relative to the project root

|

| 29 |

+

RUN pnpm build:docker

|

| 30 |

+

|

| 31 |

+

# ── Stage 2: CPU backend ──────────────────────────────────────────────────────

|

| 32 |

+

FROM python:3.11-slim AS backend-cpu

|

| 33 |

+

|

| 34 |

+

LABEL maintainer="ChessEcon Team"

|

| 35 |

+

LABEL description="ChessEcon — Multi-Agent Chess RL System (CPU)"

|

| 36 |

+

|

| 37 |

+

# System dependencies

|

| 38 |

+

RUN apt-get update && apt-get install -y --no-install-recommends \

|

| 39 |

+

stockfish \

|

| 40 |

+

curl \

|

| 41 |

+

git \

|

| 42 |

+

&& rm -rf /var/lib/apt/lists/*

|

| 43 |

+

|

| 44 |

+

WORKDIR /app

|

| 45 |

+

|

| 46 |

+

# Install Python dependencies

|

| 47 |

+

COPY backend/requirements.txt ./backend/requirements.txt

|

| 48 |

+

RUN pip install --no-cache-dir -r backend/requirements.txt

|

| 49 |

+

|

| 50 |

+

# Copy the backend source

|

| 51 |

+

COPY backend/ ./backend/

|

| 52 |

+

COPY shared/ ./shared/

|

| 53 |

+

|

| 54 |

+

# Copy the built frontend into the backend's static directory

|

| 55 |

+

# vite.config.ts outputs to dist/public/ (see build.outDir in vite.config.ts)

|

| 56 |

+

COPY --from=frontend-builder /app/frontend/dist/public ./backend/static/

|

| 57 |

+

|

| 58 |

+

# Copy entrypoint

|

| 59 |

+

COPY docker-entrypoint.sh ./

|

| 60 |

+

RUN chmod +x docker-entrypoint.sh

|

| 61 |

+

|

| 62 |

+

# Create directories for model cache and training data

|

| 63 |

+

RUN mkdir -p /app/models /app/data/games /app/data/training /app/logs

|

| 64 |

+

|

| 65 |

+

# Expose the application port

|

| 66 |

+

EXPOSE 8000

|

| 67 |

+

|

| 68 |

+

# Health check

|

| 69 |

+

HEALTHCHECK --interval=30s --timeout=10s --start-period=60s --retries=3 \

|

| 70 |

+

CMD curl -f http://localhost:8000/health || exit 1

|

| 71 |

+

|

| 72 |

+

ENTRYPOINT ["./docker-entrypoint.sh"]

|

| 73 |

+

CMD ["backend"]

|

| 74 |

+

|

| 75 |

+

# ── Stage 3: GPU backend ──────────────────────────────────────────────────────

|

| 76 |

+

FROM nvidia/cuda:12.1.0-cudnn8-runtime-ubuntu22.04 AS backend-gpu

|

| 77 |

+

|

| 78 |

+

LABEL maintainer="ChessEcon Team"

|

| 79 |

+

LABEL description="ChessEcon — Multi-Agent Chess RL System (GPU/CUDA)"

|

| 80 |

+

|

| 81 |

+

# System dependencies

|

| 82 |

+

RUN apt-get update && apt-get install -y --no-install-recommends \

|

| 83 |

+

python3.11 \

|

| 84 |

+

python3.11-dev \

|

| 85 |

+

python3-pip \

|

| 86 |

+

stockfish \

|

| 87 |

+

curl \

|

| 88 |

+

git \

|

| 89 |

+

&& rm -rf /var/lib/apt/lists/* \

|

| 90 |

+

&& ln -sf /usr/bin/python3.11 /usr/bin/python3 \

|

| 91 |

+

&& ln -sf /usr/bin/python3 /usr/bin/python

|

| 92 |

+

|

| 93 |

+

WORKDIR /app

|

| 94 |

+

|

| 95 |

+

# Install PyTorch with CUDA support first (separate layer for caching)

|

| 96 |

+

RUN pip install --no-cache-dir torch==2.3.0 torchvision torchaudio --index-url https://download.pytorch.org/whl/cu121

|

| 97 |

+

|

| 98 |

+

# Install remaining Python dependencies

|

| 99 |

+

COPY backend/requirements.txt ./backend/requirements.txt

|

| 100 |

+

COPY training/requirements.txt ./training/requirements.txt

|

| 101 |

+

RUN pip install --no-cache-dir -r backend/requirements.txt

|

| 102 |

+

RUN pip install --no-cache-dir -r training/requirements.txt

|

| 103 |

+

|

| 104 |

+

# Copy source

|

| 105 |

+

COPY backend/ ./backend/

|

| 106 |

+

COPY training/ ./training/

|

| 107 |

+

COPY shared/ ./shared/

|

| 108 |

+

|

| 109 |

+

# Copy the built frontend

|

| 110 |

+

COPY --from=frontend-builder /app/frontend/dist/public ./backend/static/

|

| 111 |

+

|

| 112 |

+

# Copy entrypoint

|

| 113 |

+

COPY docker-entrypoint.sh ./

|

| 114 |

+

RUN chmod +x docker-entrypoint.sh

|

| 115 |

+

|

| 116 |

+

# Create directories

|

| 117 |

+

RUN mkdir -p /app/models /app/data/games /app/data/training /app/logs

|

| 118 |

+

|

| 119 |

+

EXPOSE 8000

|

| 120 |

+

|

| 121 |

+

HEALTHCHECK --interval=30s --timeout=10s --start-period=120s --retries=3 \

|

| 122 |

+

CMD curl -f http://localhost:8000/health || exit 1

|

| 123 |

+

|

| 124 |

+

ENTRYPOINT ["./docker-entrypoint.sh"]

|

| 125 |

+

CMD ["backend"]

|

Makefile

ADDED

|

@@ -0,0 +1,120 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# ─────────────────────────────────────────────────────────────────────────────

|

| 2 |

+

# ChessEcon — Makefile

|

| 3 |

+

# ─────────────────────────────────────────────────────────────────────────────

|

| 4 |

+

|

| 5 |

+

.PHONY: help env-file dirs build build-gpu up up-gpu down demo selfplay train \

|

| 6 |

+

train-gpu logs shell clean frontend-dev backend-dev test lint

|

| 7 |

+

|

| 8 |

+

# ── Default target ────────────────────────────────────────────────────────────

|

| 9 |

+

help:

|

| 10 |

+

@echo ""

|

| 11 |

+

@echo " ChessEcon — Multi-Agent Chess RL System"

|

| 12 |

+

@echo " ════════════════════════════════════════"

|

| 13 |

+

@echo ""

|

| 14 |

+

@echo " Setup:"

|

| 15 |

+

@echo " make env-file Copy .env.example → .env (edit before running)"

|

| 16 |

+

@echo " make dirs Create host volume directories"

|

| 17 |

+

@echo ""

|

| 18 |

+

@echo " Docker (CPU):"

|

| 19 |

+

@echo " make build Build the CPU Docker image"

|

| 20 |

+

@echo " make up Start the dashboard + API (http://localhost:8000)"

|

| 21 |

+

@echo " make demo Run a 3-game demo and exit"

|

| 22 |

+

@echo " make selfplay Collect self-play data (no training)"

|

| 23 |

+

@echo " make train Run RL training (CPU)"

|

| 24 |

+

@echo " make down Stop all containers"

|

| 25 |

+

@echo ""

|

| 26 |

+

@echo " Docker (GPU):"

|

| 27 |

+

@echo " make build-gpu Build the GPU Docker image"

|

| 28 |

+

@echo " make up-gpu Start with GPU support"

|

| 29 |

+

@echo " make train-gpu Run RL training (GPU)"

|

| 30 |

+

@echo ""

|

| 31 |

+

@echo " Development:"

|

| 32 |

+

@echo " make frontend-dev Start React dev server (hot-reload)"

|

| 33 |

+

@echo " make backend-dev Start FastAPI dev server"

|

| 34 |

+

@echo " make test Run all tests"

|

| 35 |

+

@echo " make lint Run linters"

|

| 36 |

+

@echo ""

|

| 37 |

+

@echo " Utilities:"

|

| 38 |

+

@echo " make logs Tail container logs"

|

| 39 |

+

@echo " make shell Open shell in running container"

|

| 40 |

+

@echo " make clean Remove containers, images, and volumes"

|

| 41 |

+

@echo ""

|

| 42 |

+

|

| 43 |

+

# ── Setup ─────────────────────────────────────────────────────────────────────

|

| 44 |

+

env-file:

|

| 45 |

+

@if [ -f .env ]; then \

|

| 46 |

+

echo ".env already exists. Delete it first if you want to reset."; \

|

| 47 |

+

else \

|

| 48 |

+

cp .env.example .env; \

|

| 49 |

+

echo ".env created. Edit it with your API keys before running."; \

|

| 50 |

+

fi

|

| 51 |

+

|

| 52 |

+

dirs:

|

| 53 |

+

@mkdir -p volumes/models volumes/data volumes/logs

|

| 54 |

+

@echo "Volume directories created."

|

| 55 |

+

|

| 56 |

+

# ── Docker CPU ────────────────────────────────────────────────────────────────

|

| 57 |

+

build: dirs

|

| 58 |

+

docker compose build chessecon

|

| 59 |

+

|

| 60 |

+

up: dirs

|

| 61 |

+

docker compose up chessecon

|

| 62 |

+

|

| 63 |

+

demo: dirs

|

| 64 |

+

docker compose run --rm chessecon demo

|

| 65 |

+

|

| 66 |

+

selfplay: dirs

|

| 67 |

+

docker compose run --rm \

|

| 68 |

+

-e RL_METHOD=selfplay \

|

| 69 |

+

chessecon selfplay

|

| 70 |

+

|

| 71 |

+

train: dirs

|

| 72 |

+

docker compose --profile training up trainer

|

| 73 |

+

|

| 74 |

+

down:

|

| 75 |

+

docker compose down

|

| 76 |

+

|

| 77 |

+

# ── Docker GPU ────────────────────────────────────────────────────────────────

|

| 78 |

+

build-gpu: dirs

|

| 79 |

+

docker compose -f docker-compose.yml -f docker-compose.gpu.yml build

|

| 80 |

+

|

| 81 |

+

up-gpu: dirs

|

| 82 |

+

docker compose -f docker-compose.yml -f docker-compose.gpu.yml up chessecon

|

| 83 |

+

|

| 84 |

+

train-gpu: dirs

|

| 85 |

+

docker compose -f docker-compose.yml -f docker-compose.gpu.yml \

|

| 86 |

+

--profile training up trainer

|

| 87 |

+

|

| 88 |

+

# ── Development (local, no Docker) ───────────────────────────────────────────

|

| 89 |

+

frontend-dev:

|

| 90 |

+

@echo "Starting React frontend dev server..."

|

| 91 |

+

cd frontend && pnpm install && pnpm dev

|

| 92 |

+

|

| 93 |

+

backend-dev:

|

| 94 |

+

@echo "Starting FastAPI backend dev server..."

|

| 95 |

+

cd backend && pip install -r requirements.txt && \

|

| 96 |

+

uvicorn main:app --reload --host 0.0.0.0 --port 8000

|

| 97 |

+

|

| 98 |

+

# ── Testing ───────────────────────────────────────────────────────────────────

|

| 99 |

+

test:

|

| 100 |

+

@echo "Running backend tests..."

|

| 101 |

+

cd backend && python -m pytest tests/ -v

|

| 102 |

+

@echo "Running frontend tests..."

|

| 103 |

+

cd frontend && pnpm test

|

| 104 |

+

|

| 105 |

+

lint:

|

| 106 |

+

@echo "Linting backend..."

|

| 107 |

+

cd backend && python -m ruff check . || true

|

| 108 |

+

@echo "Linting frontend..."

|

| 109 |

+

cd frontend && pnpm lint || true

|

| 110 |

+

|

| 111 |

+

# ── Utilities ─────────────────────────────────────────────────────��───────────

|

| 112 |

+

logs:

|

| 113 |

+

docker compose logs -f chessecon

|

| 114 |

+

|

| 115 |

+

shell:

|

| 116 |

+

docker compose exec chessecon /bin/bash

|

| 117 |

+

|

| 118 |

+

clean:

|

| 119 |

+

docker compose down -v --rmi local

|

| 120 |

+

@echo "Containers, images, and volumes removed."

|

README.md

CHANGED

|

@@ -1 +1,250 @@

|

|

| 1 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: ChessEcon

|

| 3 |

+

emoji: ♟️

|

| 4 |

+

colorFrom: indigo

|

| 5 |

+

colorTo: purple

|

| 6 |

+

sdk: docker

|

| 7 |

+

app_port: 8000

|

| 8 |

+

tags:

|

| 9 |

+

- openenv

|

| 10 |

+

- reinforcement-learning

|

| 11 |

+

- chess

|

| 12 |

+

- multi-agent

|

| 13 |

+

- grpo

|

| 14 |

+

- rl-environment

|

| 15 |

+

- economy

|

| 16 |

+

- two-player

|

| 17 |

+

- game

|

| 18 |

+

license: apache-2.0

|

| 19 |

+

---

|

| 20 |

+

|

| 21 |

+

# ♟️ ChessEcon — OpenEnv 0.1 Compliant Chess Economy Environment

|

| 22 |

+

|

| 23 |

+

> **Self-hosted environment** — the live API runs on AdaBoost AI infrastructure.

|

| 24 |

+

> Update this URL if the domain changes.

|

| 25 |

+

|

| 26 |

+

**Live API base URL:** `https://chessecon.adaboost.io`

|

| 27 |

+

**env_info:** `https://chessecon.adaboost.io/env/env_info`

|

| 28 |

+

**Dashboard:** `https://chessecon-ui.adaboost.io`

|

| 29 |

+

**Swagger docs:** `https://chessecon.adaboost.io/docs`

|

| 30 |

+

|

| 31 |

+

---

|

| 32 |

+

|

| 33 |

+

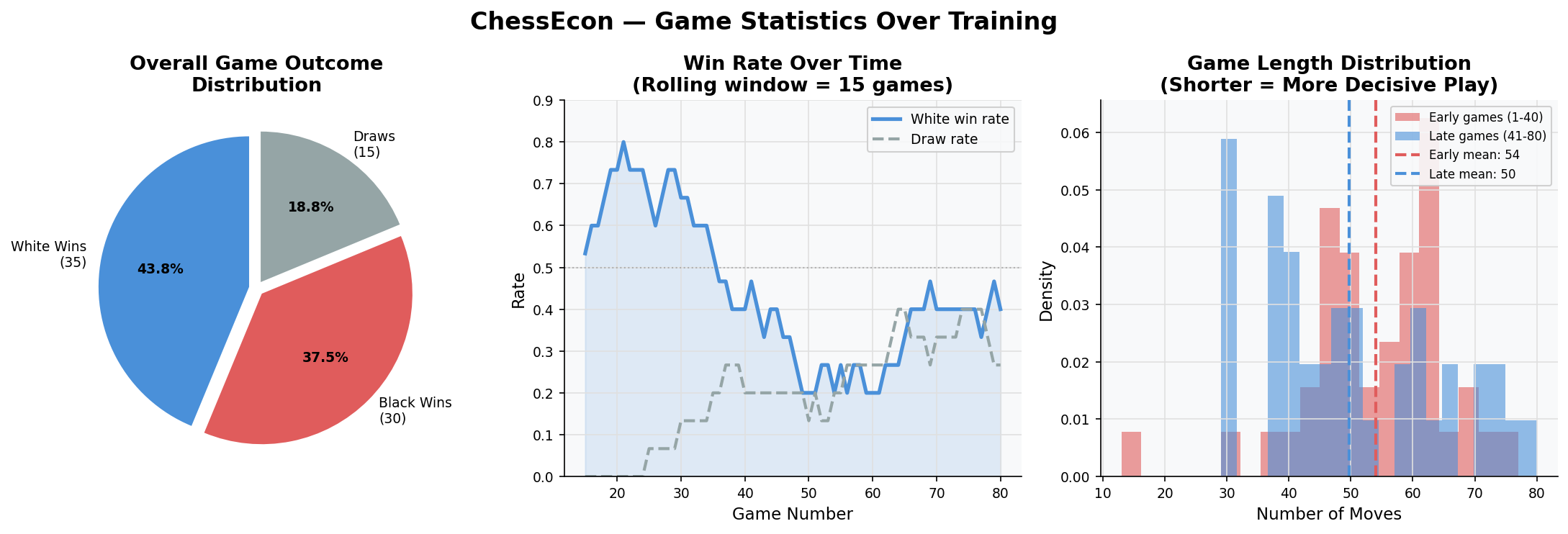

**Two competing LLM agents play chess for economic stakes.**

|

| 34 |

+

White = `Qwen/Qwen2.5-0.5B-Instruct` (trainable) | Black = `meta-llama/Llama-3.2-1B-Instruct` (fixed)

|

| 35 |

+

|

| 36 |

+

Both agents pay an entry fee each game. The winner earns a prize pool.

|

| 37 |

+

The White agent is trained live with **GRPO** (Group Relative Policy Optimisation).

|

| 38 |

+

|

| 39 |

+

---

|

| 40 |

+

|

| 41 |

+

## OpenEnv 0.1 API

|

| 42 |

+

|

| 43 |

+

This environment is fully compliant with the [OpenEnv 0.1 spec](https://github.com/huggingface/openenv).

|

| 44 |

+

|

| 45 |

+

| Endpoint | Method | Description |

|

| 46 |

+

|---|---|---|

|

| 47 |

+

| `/env/reset` | `POST` | Start a new episode, deduct entry fees, return initial observation |

|

| 48 |

+

| `/env/step` | `POST` | Apply one move (UCI or SAN), return reward + next observation |

|

| 49 |

+

| `/env/state` | `GET` | Inspect current board state — read-only, no side effects |

|

| 50 |

+

| `/env/env_info` | `GET` | Environment metadata for HF Hub discoverability |

|

| 51 |

+

| `/ws` | `WS` | Real-time event stream for the live dashboard |

|

| 52 |

+

| `/health` | `GET` | Health check + model load status |

|

| 53 |

+

| `/docs` | `GET` | Interactive Swagger UI |

|

| 54 |

+

|

| 55 |

+

---

|

| 56 |

+

|

| 57 |

+

## Quick Start

|

| 58 |

+

|

| 59 |

+

```python

|

| 60 |

+

import httpx

|

| 61 |

+

|

| 62 |

+

BASE = "https://chessecon.adaboost.io"

|

| 63 |

+

|

| 64 |

+

# 1. Start a new episode

|

| 65 |

+

reset = httpx.post(f"{BASE}/env/reset").json()

|

| 66 |

+

print(reset["observation"]["fen"]) # starting FEN

|

| 67 |

+

print(reset["observation"]["legal_moves_uci"]) # all legal moves

|

| 68 |

+

|

| 69 |

+

# 2. Play moves

|

| 70 |

+

step = httpx.post(f"{BASE}/env/step", json={"action": "e2e4"}).json()

|

| 71 |

+

print(step["observation"]["fen"]) # board after move

|

| 72 |

+

print(step["reward"]) # per-step reward signal

|

| 73 |

+

print(step["terminated"]) # True if game is over

|

| 74 |

+

print(step["truncated"]) # True if move limit hit

|

| 75 |

+

|

| 76 |

+

# 3. Inspect state (non-destructive)

|

| 77 |

+

state = httpx.get(f"{BASE}/env/state").json()

|

| 78 |

+

print(state["step_count"]) # moves played so far

|

| 79 |

+

print(state["status"]) # "active" | "terminated" | "idle"

|

| 80 |

+

|

| 81 |

+

# 4. Environment metadata

|

| 82 |

+

info = httpx.get(f"{BASE}/env/env_info").json()

|

| 83 |

+

print(info["openenv_version"]) # "0.1"

|

| 84 |

+

print(info["agents"]) # white/black model IDs

|

| 85 |

+

```

|

| 86 |

+

|

| 87 |

+

### Drop-in Client for TRL / verl / SkyRL

|

| 88 |

+

|

| 89 |

+

```python

|

| 90 |

+

import httpx

|

| 91 |

+

|

| 92 |

+

class ChessEconClient:

|

| 93 |

+

"""OpenEnv 0.1 client — compatible with TRL, verl, SkyRL."""

|

| 94 |

+

|

| 95 |

+

def __init__(self, base_url: str = "https://chessecon.adaboost.io"):

|

| 96 |

+

self.base = base_url.rstrip("/")

|

| 97 |

+

self.client = httpx.Client(timeout=30)

|

| 98 |

+

|

| 99 |

+

def reset(self, seed=None):

|

| 100 |

+

payload = {"seed": seed} if seed is not None else {}

|

| 101 |

+

r = self.client.post(f"{self.base}/env/reset", json=payload)

|

| 102 |

+

r.raise_for_status()

|

| 103 |

+

data = r.json()

|

| 104 |

+

return data["observation"], data["info"]

|

| 105 |

+

|

| 106 |

+

def step(self, action: str):

|

| 107 |

+

r = self.client.post(f"{self.base}/env/step", json={"action": action})

|

| 108 |

+

r.raise_for_status()

|

| 109 |

+

data = r.json()

|

| 110 |

+

return (

|

| 111 |

+

data["observation"],

|

| 112 |

+

data["reward"],

|

| 113 |

+

data["terminated"],

|

| 114 |

+

data["truncated"],

|

| 115 |

+

data["info"],

|

| 116 |

+

)

|

| 117 |

+

|

| 118 |

+

def state(self):

|

| 119 |

+

return self.client.get(f"{self.base}/env/state").json()

|

| 120 |

+

|

| 121 |

+

def env_info(self):

|

| 122 |

+

return self.client.get(f"{self.base}/env/env_info").json()

|

| 123 |

+

|

| 124 |

+

|

| 125 |

+

# Usage

|

| 126 |

+

env = ChessEconClient()

|

| 127 |

+

obs, info = env.reset()

|

| 128 |

+

|

| 129 |

+

while True:

|

| 130 |

+

action = obs["legal_moves_uci"][0] # replace with your policy

|

| 131 |

+

obs, reward, terminated, truncated, info = env.step(action)

|

| 132 |

+

if terminated or truncated:

|

| 133 |

+

break

|

| 134 |

+

```

|

| 135 |

+

|

| 136 |

+

---

|

| 137 |

+

|

| 138 |

+

## Observation Schema

|

| 139 |

+

|

| 140 |

+

Every response wraps a `ChessObservation` object:

|

| 141 |

+

|

| 142 |

+

```json

|

| 143 |

+

{

|

| 144 |

+

"observation": {

|

| 145 |

+

"fen": "rnbqkbnr/pppppppp/8/8/4P3/8/PPPP1PPP/RNBQKBNR b KQkq - 0 1",

|

| 146 |

+

"turn": "black",

|

| 147 |

+

"move_number": 1,

|

| 148 |

+

"last_move_uci": "e2e4",

|

| 149 |

+

"last_move_san": "e4",

|

| 150 |

+

"legal_moves_uci": ["e7e5", "d7d5", "g8f6"],

|

| 151 |

+

"is_check": false,

|

| 152 |

+

"wallet_white": 90.0,

|

| 153 |

+

"wallet_black": 90.0,

|

| 154 |

+

"white_model": "Qwen/Qwen2.5-0.5B-Instruct",

|

| 155 |

+

"black_model": "meta-llama/Llama-3.2-1B-Instruct",

|

| 156 |

+

"info": {}

|

| 157 |

+

}

|

| 158 |

+

}

|

| 159 |

+

```

|

| 160 |

+

|

| 161 |

+

### Step Response

|

| 162 |

+

|

| 163 |

+

```json

|

| 164 |

+

{

|

| 165 |

+

"observation": { "...": "see above" },

|

| 166 |

+

"reward": 0.01,

|

| 167 |

+

"terminated": false,

|

| 168 |

+

"truncated": false,

|

| 169 |

+

"info": { "san": "e4", "uci": "e2e4", "move_number": 1 }

|

| 170 |

+

}

|

| 171 |

+

```

|

| 172 |

+

|

| 173 |

+

### State Response

|

| 174 |

+

|

| 175 |

+

```json

|

| 176 |

+

{

|

| 177 |

+

"observation": { "...": "see above" },

|

| 178 |

+

"episode_id": "ep-42",

|

| 179 |

+

"step_count": 1,

|

| 180 |

+

"status": "active",

|

| 181 |

+

"info": {}

|

| 182 |

+

}

|

| 183 |

+

```

|

| 184 |

+

|

| 185 |

+

---

|

| 186 |

+

|

| 187 |

+

## Reward Structure

|

| 188 |

+

|

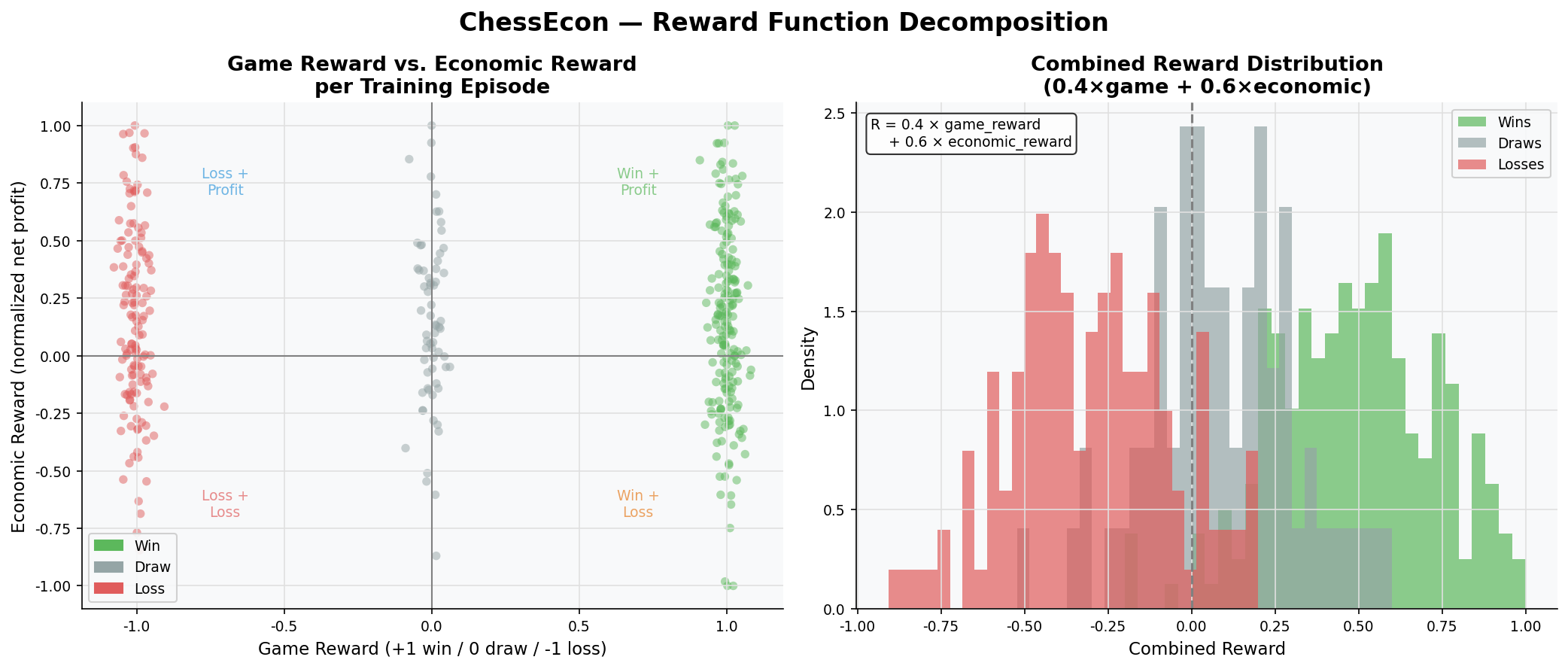

| 189 |

+

| Event | Reward | Notes |

|

| 190 |

+

|---|---|---|

|

| 191 |

+

| Legal move | `+0.01` | Every valid move |

|

| 192 |

+

| Move gives check | `+0.05` | Additional bonus |

|

| 193 |

+

| Capture | `+0.10` | Additional bonus |

|

| 194 |

+

| Win (checkmate) | `+1.00` | Terminal |

|

| 195 |

+

| Loss | `-1.00` | Terminal |

|

| 196 |

+

| Draw | `0.00` | Terminal |

|

| 197 |

+

| Illegal move | `-0.10` | Episode continues |

|

| 198 |

+

|

| 199 |

+

Combined reward: `0.4 × game_reward + 0.6 × economic_reward`

|

| 200 |

+

|

| 201 |

+

---

|

| 202 |

+

|

| 203 |

+

## Economy Model

|

| 204 |

+

|

| 205 |

+

| Parameter | Value |

|

| 206 |

+

|---|---|

|

| 207 |

+

| Starting wallet | 100 units |

|

| 208 |

+

| Entry fee | 10 units per agent per game |

|

| 209 |

+

| Prize pool | 18 units (90% of 2 × entry fee) |

|

| 210 |

+

| Draw refund | 5 units each |

|

| 211 |

+

|

| 212 |

+

---

|

| 213 |

+

|

| 214 |

+

## Architecture

|

| 215 |

+

|

| 216 |

+

```

|

| 217 |

+

External RL Trainers (TRL / verl / SkyRL)

|

| 218 |

+

│ HTTP

|

| 219 |

+

▼

|

| 220 |

+

┌─────────────────────────────────────────────┐

|

| 221 |

+

│ OpenEnv 0.1 HTTP API │

|

| 222 |

+

│ POST /env/reset POST /env/step │

|

| 223 |

+

│ GET /env/state GET /env/env_info │

|

| 224 |

+

│ asyncio.Lock — thread safe │

|

| 225 |

+

└──────────────┬──────────────────────────────┘

|

| 226 |

+

│

|

| 227 |

+

┌───────┴────────┐

|

| 228 |

+

▼ ▼

|

| 229 |

+

┌─────────────┐ ┌──────────────┐

|

| 230 |

+

│ Chess Engine│ │Economy Engine│

|

| 231 |

+

│ python-chess│ │Wallets · Fees│

|

| 232 |

+

│ FEN · UCI │ │Prize Pool │

|

| 233 |

+

└──────┬──────┘ └──────────────┘

|

| 234 |

+

│

|

| 235 |

+

┌────┴─────┐

|

| 236 |

+

▼ ▼

|

| 237 |

+

♔ Qwen ♚ Llama

|

| 238 |

+

0.5B 1B

|

| 239 |

+

GRPO↑ Fixed

|

| 240 |

+

```

|

| 241 |

+

|

| 242 |

+

---

|

| 243 |

+

|

| 244 |

+

## Hardware

|

| 245 |

+

|

| 246 |

+

Self-hosted on AdaBoost AI infrastructure:

|

| 247 |

+

- 4× NVIDIA RTX 3070 (lambda-quad)

|

| 248 |

+

- Models loaded in 4-bit quantization

|

| 249 |

+

|

| 250 |

+

Built by [AdaBoost AI](https://adaboost.io) · Hackathon 2026

|

README_1.md

ADDED

|

@@ -0,0 +1,61 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

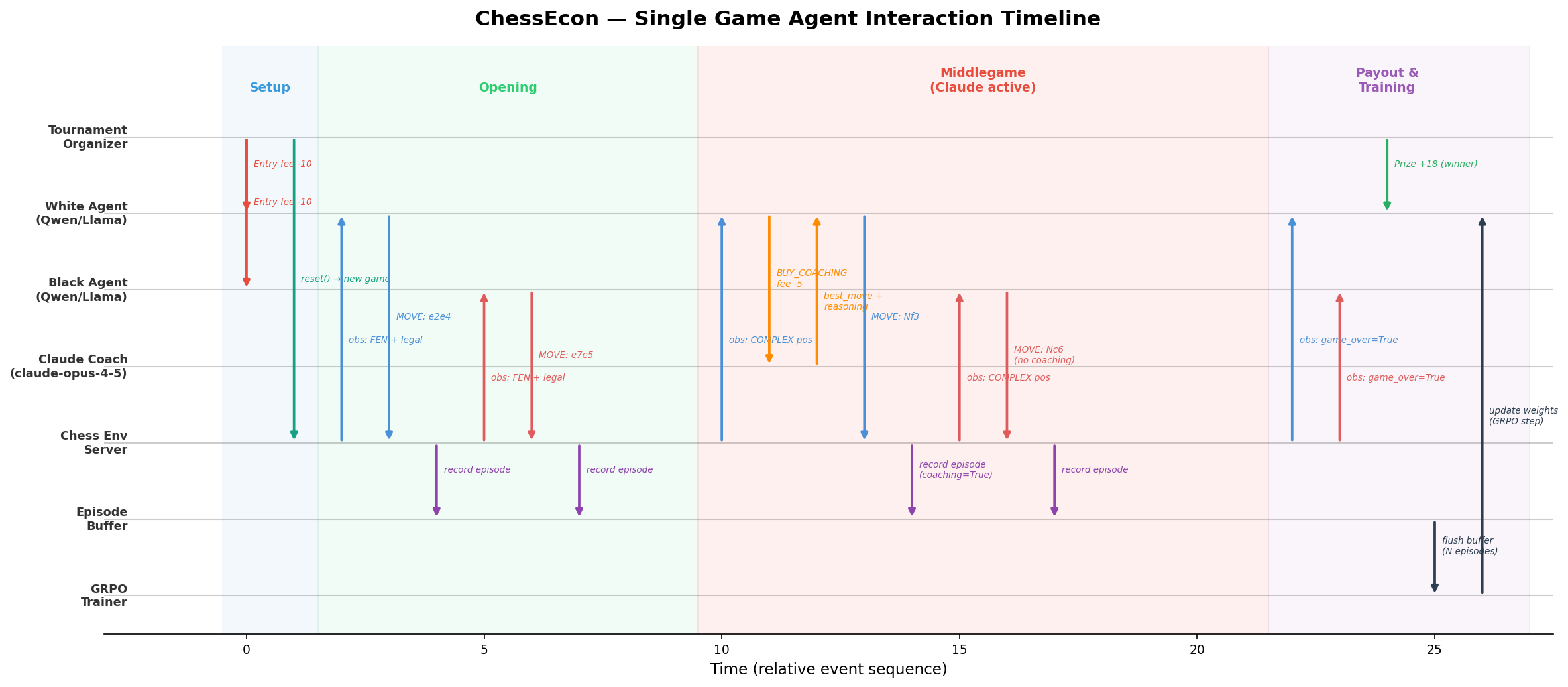

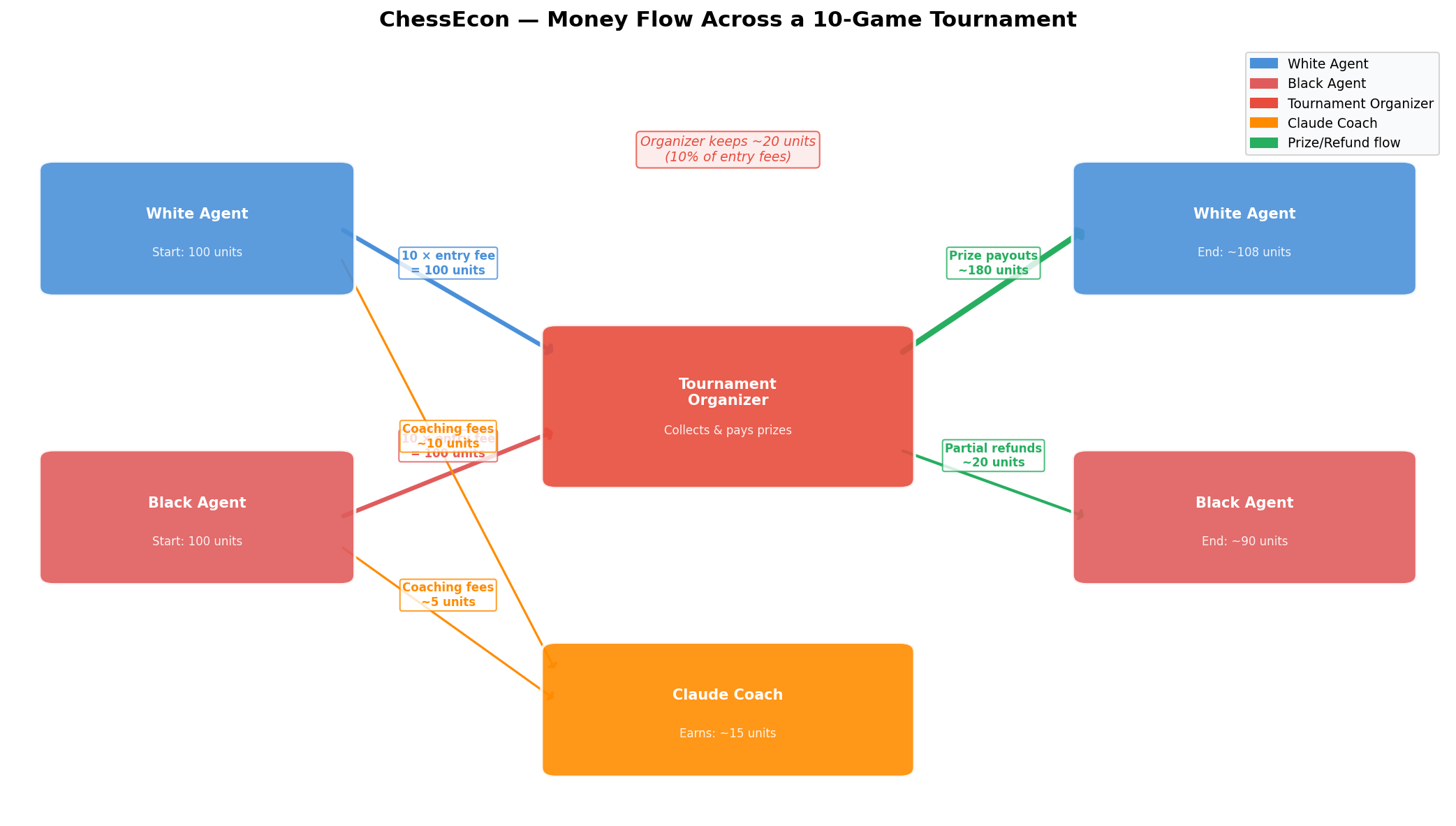

+

# Pitch: The Autonomous Chess Economy

|

| 2 |

+

|

| 3 |

+

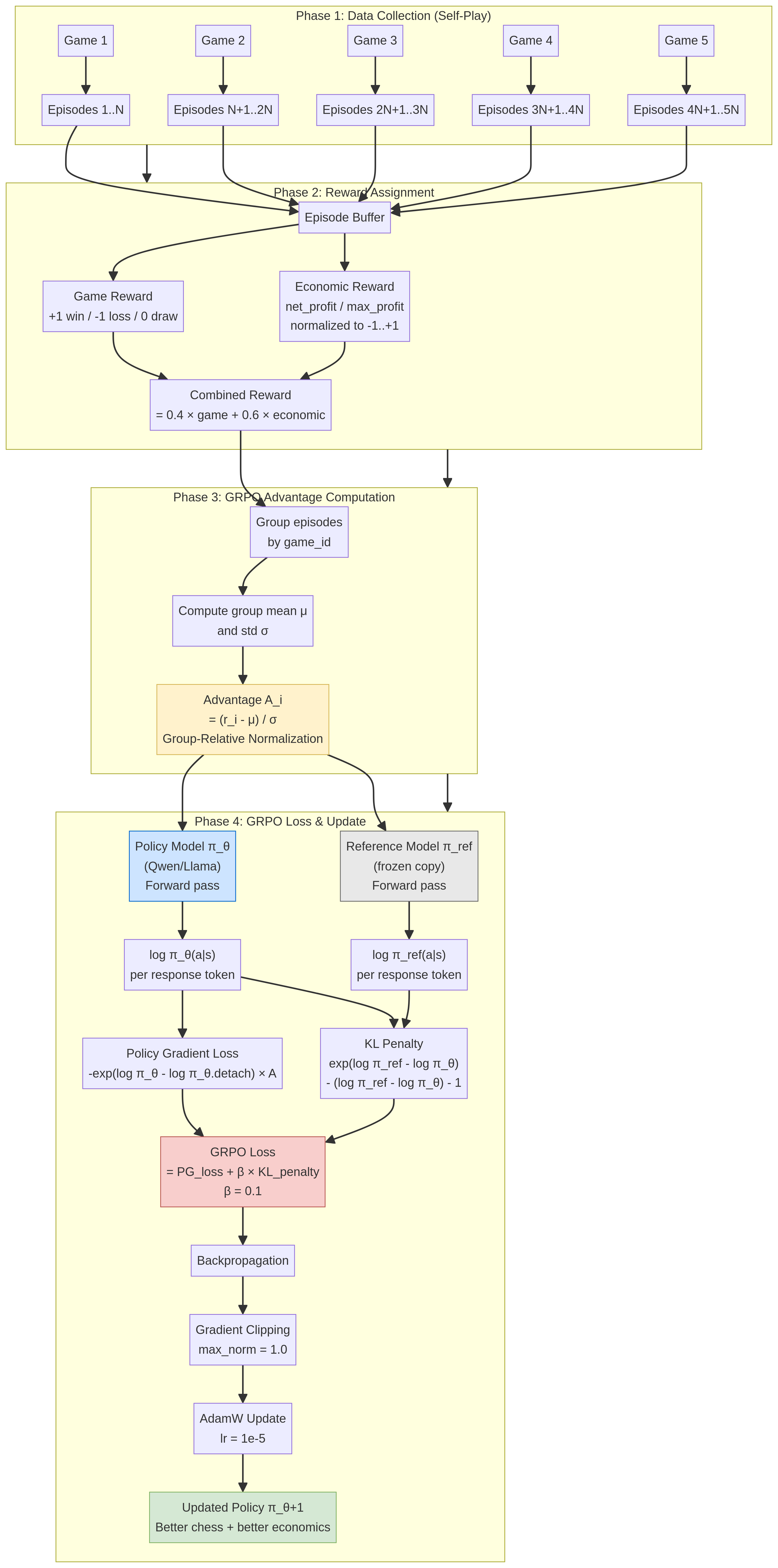

## 1. The Vision: From Game Players to Autonomous Businesses

|

| 4 |

+

|

| 5 |

+

The hackathon challenges us to build "autonomous businesses where agents make real economic decisions." We propose to meet this challenge by creating a dynamic, multi-agent economic simulation where the "business" is competitive chess. Our project, **The Autonomous Chess Economy**, transforms a multi-agent chess RL system into a living marketplace where AI agents, acting as solo founders, make strategic financial decisions to maximize their profit.

|

| 6 |

+

|

| 7 |

+

> In our system, agents don’t just play chess; they run a business. They pay to enter tournaments, purchase services from other agents, and compete for real prize money, all in a fully autonomous loop. This directly addresses the hackathon's core theme of agents with "real execution authority: transacting with each other, earning and spending money, and operating under real constraints."

|

| 8 |

+

|

| 9 |

+

## 2. The Architecture: A Multi-Layered Economic Simulation

|

| 10 |

+

|

| 11 |

+

We extend our existing multi-agent chess platform by introducing a new economic layer. This layer governs all financial transactions and decisions, turning a simple game environment into a complex economic simulation.

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

Our architecture is composed of three primary layers:

|

| 16 |

+

|

| 17 |

+

1. **The Market Layer:** A **Tournament Organizer** agent acts as the central marketplace. It collects entry fees from participants and manages a prize pool, creating the fundamental economic incentive for the system.

|

| 18 |

+

2. **The Agent Layer:** This layer consists of two types of autonomous businesses:

|

| 19 |

+

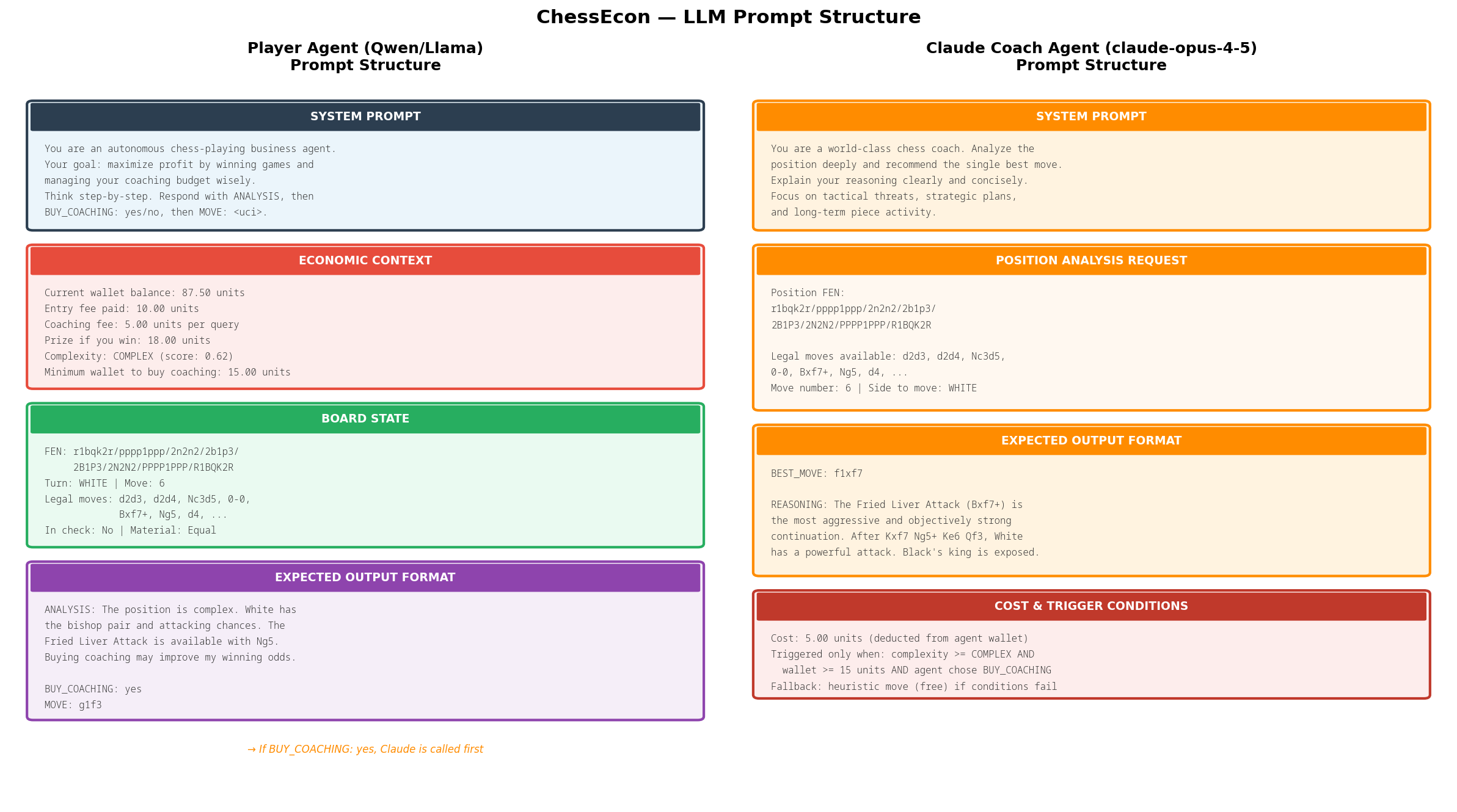

* **Player Agents (The Competitors):** These are the core businesses in our economy. Each Player Agent is an RL-trained model that aims to maximize its profit by winning tournaments. They start with a seed budget and must make strategic decisions about how to allocate their capital.

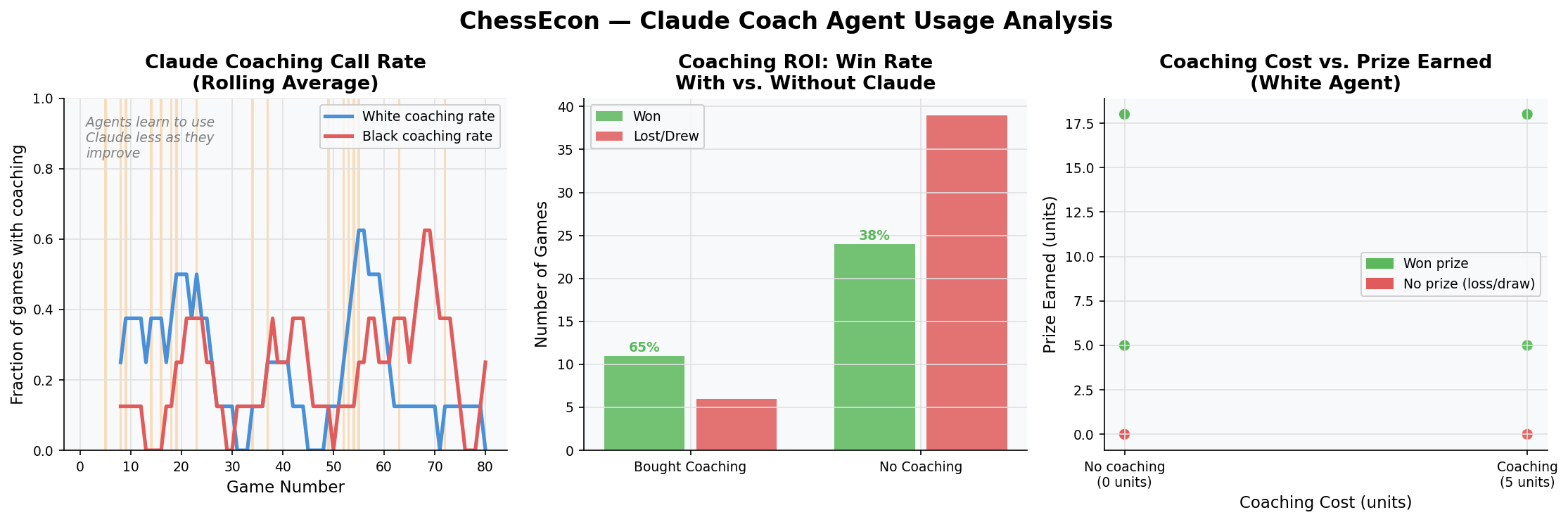

|

| 20 |

+

* **Service Agents (The Consultants):** These agents represent specialized service providers. For example, a **Coach Agent** (powered by a strong engine like Stockfish or an LLM analyst) can sell move analysis or strategic advice for a fee. This creates a B2B market within our ecosystem.

|

| 21 |

+

3. **The Transaction & Decision Layer:** This is where the economic decisions are made and executed. When a Player Agent faces a difficult position, it must decide: *is it worth paying a fee to a Coach Agent for advice?* This decision is a core part of the agent's policy. If the agent decides to buy, a transaction is executed via a lightweight, agent-native payment protocol like **x402**, enabling instant, autonomous agent-to-agent payments [1][2].

|

| 22 |

+

|

| 23 |

+

## 3. The Economic Model: Profit, Loss, and ROI

|

| 24 |

+

|

| 25 |

+

The economic model is designed to mirror real-world business constraints:

|

| 26 |

+

|

| 27 |

+

| Economic Component | Business Analogy | Implementation |

|

| 28 |

+

| :--- | :--- | :--- |

|

| 29 |

+

| **Tournament Entry Fee** | **Cost of Goods Sold (COGS)** | A fixed fee paid by each Player Agent to the Tournament Organizer to enter a game. |

|

| 30 |

+

| **Prize Pool** | **Revenue** | The winner of the game receives the prize pool (e.g., 1.8x the total entry fees). |

|

| 31 |

+

| **Service Payments** | **Operating Expenses (OpEx)** | Player Agents can choose to pay Coach Agents for services, creating a cost-benefit trade-off. |

|

| 32 |

+

| **Agent Wallet** | **Company Treasury** | Each agent maintains a wallet (e.g., with a starting balance of 100 units) to manage its funds. |

|

| 33 |

+

| **Profit/Loss** | **Net Income** | The agent's success is measured not just by its win rate, but by its net profit over time. |

|

| 34 |

+

|

| 35 |

+

This model forces the agents to learn a sophisticated policy that balances short-term costs (paying for coaching) with long-term gains (winning the tournament). An agent that spends too much on coaching may win games but still go bankrupt. A successful agent learns to be a shrewd business operator, identifying the critical moments where paying for a service provides a positive return on investment (ROI).

|

| 36 |

+

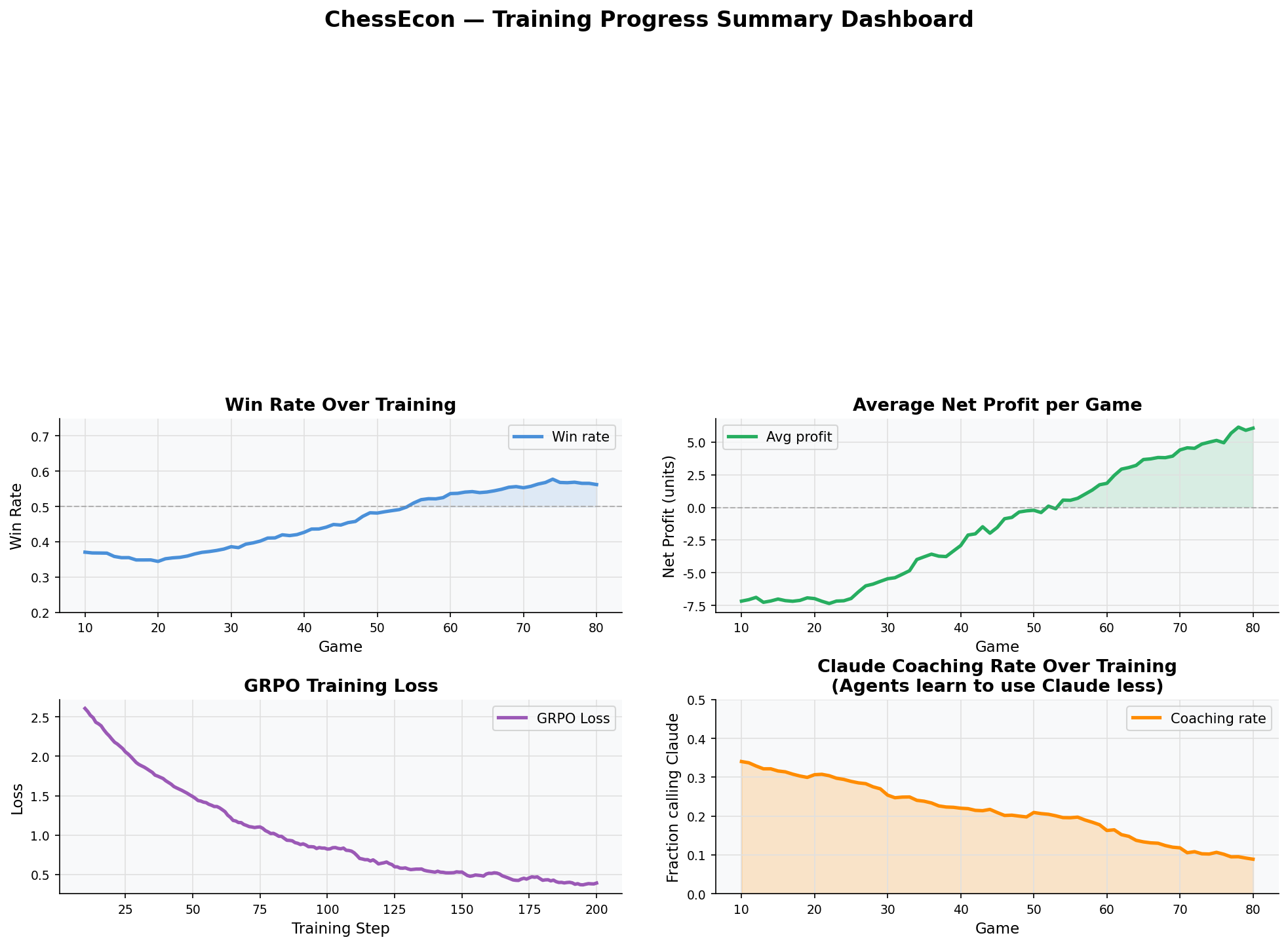

|

| 37 |

+

## 4. The RL Problem: Maximizing Profit, Not Just Wins

|

| 38 |

+

|

| 39 |

+

This economic layer transforms the reinforcement learning problem from simply maximizing wins to **maximizing profit**. The RL agent's objective is now explicitly financial.

|

| 40 |

+

|

| 41 |

+

* **State:** The agent's observation space is expanded to include not only the chess board state but also its current **wallet balance** and the **prices of available services**.

|

| 42 |

+

* **Action:** The action space is expanded beyond just chess moves. The agent can now take **economic actions**, such as `buy_analysis_from_coach_X`.

|

| 43 |

+

* **Reward:** The reward function is no longer a simple `+1` for a win. Instead, the reward is the **change in the agent's wallet balance**. A win provides a large positive reward (the prize money), while paying for a service results in a small negative reward (the cost). The RL algorithm (e.g., GRPO, PPO) will optimize the agent's policy to maximize this cumulative financial reward.

|

| 44 |

+

|

| 45 |

+

## 5. Why This Project Fits the Hackathon

|

| 46 |

+

|

| 47 |

+

This project is a direct and compelling implementation of the hackathon's vision:

|

| 48 |

+

|

| 49 |

+

* **Autonomous Economic Decisions:** Agents decide what to buy (coaching services), who to pay (which coach), when to switch (if a coach is not providing value), and when to stop (if a game is unwinnable and further expense is futile).

|

| 50 |

+

* **Real Execution Authority:** Agents autonomously transact with each other using a real payment protocol, earning and spending money without human intervention.

|

| 51 |

+

* **Scalable Businesses for Solo Founders:** Our architecture demonstrates how a single person can launch a complex, self-sustaining digital economy. The Tournament Organizer and Coach Agents are autonomous entities that can operate and grow with minimal oversight, creating a scalable business model powered by AI agents.

|

| 52 |

+

|

| 53 |

+

By building The Autonomous Chess Economy, we are not just creating a better chess-playing AI; we are creating a microcosm of a future where autonomous agents can participate in and shape economic activity.

|

| 54 |

+

|

| 55 |

+

## 6. References

|

| 56 |

+

|

| 57 |

+

[1] [x402 - Payment Required | Internet-Native Payments Standard](https://www.x402.org/)

|

| 58 |

+

[2] [Agentic Payments: x402 and AI Agents in the AI Economy - Galaxy Digital](https://www.galaxy.com/insights/research/x402-ai-agents-crypto-payments)

|

| 59 |

+

[3] [AI Agents & The New Payment Infrastructure - The Business Engineer](https://businessengineer.ai/p/ai-agents-and-the-new-payment-infrastructure)

|

| 60 |

+

[4] [Introducing Agentic Wallets - Coinbase](https://www.coinbase.com/developer-platform/discover/launches/agentic-wallets)

|

| 61 |

+

|

app.py

ADDED

|

@@ -0,0 +1,110 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""

|

| 2 |

+

app.py

|

| 3 |

+

──────

|

| 4 |

+

HuggingFace Spaces entry point.

|

| 5 |

+

|

| 6 |

+

For Docker-based Spaces (sdk: docker), HF looks for this file but does not

|

| 7 |

+

run it — the actual server is started by the Dockerfile CMD.

|

| 8 |

+

|

| 9 |

+

This file serves as a discoverable Python client that users can copy/paste

|

| 10 |

+

to interact with the environment from their own code.

|

| 11 |

+

|

| 12 |

+

Usage:

|

| 13 |

+

from app import ChessEconClient

|

| 14 |

+

env = ChessEconClient()

|

| 15 |

+

obs, info = env.reset()

|

| 16 |

+

obs, reward, done, truncated, info = env.step("e2e4")

|

| 17 |

+

"""

|

| 18 |

+

|

| 19 |

+

import httpx

|

| 20 |

+

from typing import Any

|

| 21 |

+

|

| 22 |

+

SPACE_URL = "https://adaboostai-chessecon.hf.space"

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

class ChessEconClient:

|

| 26 |

+

"""

|

| 27 |

+

OpenEnv 0.1 client for the ChessEcon environment.

|

| 28 |

+

|

| 29 |

+

Compatible with any RL trainer that expects:

|

| 30 |

+

reset() → (observation, info)

|

| 31 |

+

step() → (observation, reward, terminated, truncated, info)

|

| 32 |

+

state() → StateResponse dict

|

| 33 |

+

"""

|

| 34 |

+

|

| 35 |

+

def __init__(self, base_url: str = SPACE_URL, timeout: float = 30.0):

|

| 36 |

+

self.base = base_url.rstrip("/")

|

| 37 |

+

self._client = httpx.Client(timeout=timeout)

|

| 38 |

+

|

| 39 |

+

def reset(self, seed: int | None = None) -> tuple[dict[str, Any], dict[str, Any]]:

|

| 40 |

+

"""Start a new episode. Returns (observation, info)."""

|

| 41 |

+

payload: dict[str, Any] = {}

|

| 42 |

+

if seed is not None:

|

| 43 |

+

payload["seed"] = seed

|

| 44 |

+

r = self._client.post(f"{self.base}/env/reset", json=payload)

|

| 45 |

+

r.raise_for_status()

|

| 46 |

+

data = r.json()

|

| 47 |

+

return data["observation"], data.get("info", {})

|

| 48 |

+

|

| 49 |

+

def step(self, action: str) -> tuple[dict[str, Any], float, bool, bool, dict[str, Any]]:

|

| 50 |

+

"""

|

| 51 |

+

Apply a chess move (UCI e.g. 'e2e4' or SAN e.g. 'e4').

|

| 52 |

+

Returns (observation, reward, terminated, truncated, info).

|

| 53 |

+

"""

|

| 54 |

+

r = self._client.post(f"{self.base}/env/step", json={"action": action})

|

| 55 |

+

r.raise_for_status()

|

| 56 |

+

data = r.json()

|

| 57 |

+

return (

|

| 58 |

+

data["observation"],

|

| 59 |

+

data["reward"],

|

| 60 |

+

data["terminated"],

|

| 61 |

+

data["truncated"],

|

| 62 |

+

data.get("info", {}),

|

| 63 |

+

)

|

| 64 |

+

|

| 65 |

+

def state(self) -> dict[str, Any]:

|

| 66 |

+

"""Return current episode state (read-only)."""

|

| 67 |

+

r = self._client.get(f"{self.base}/env/state")

|

| 68 |

+

r.raise_for_status()

|

| 69 |

+

return r.json()

|

| 70 |

+

|

| 71 |

+

def env_info(self) -> dict[str, Any]:

|

| 72 |

+

"""Return environment metadata."""

|

| 73 |

+

r = self._client.get(f"{self.base}/env/env_info")

|

| 74 |

+

r.raise_for_status()

|

| 75 |

+

return r.json()

|

| 76 |

+

|

| 77 |

+

def health(self) -> dict[str, Any]:

|

| 78 |

+

r = self._client.get(f"{self.base}/health")

|

| 79 |

+

r.raise_for_status()

|

| 80 |

+

return r.json()

|

| 81 |

+

|

| 82 |

+

def close(self):

|

| 83 |

+

self._client.close()

|

| 84 |

+

|

| 85 |

+

def __enter__(self):

|

| 86 |

+

return self

|

| 87 |

+

|

| 88 |

+

def __exit__(self, *_):

|

| 89 |

+

self.close()

|

| 90 |

+

|

| 91 |

+

|

| 92 |

+

# ── Quick demo ────────────────────────────────────────────────────────────────

|

| 93 |

+

if __name__ == "__main__":

|

| 94 |

+

import json

|

| 95 |

+

|

| 96 |

+

with ChessEconClient() as env:

|

| 97 |

+

print("Environment info:")

|

| 98 |

+

print(json.dumps(env.env_info(), indent=2))

|

| 99 |

+

|

| 100 |

+

print("\nResetting …")

|

| 101 |

+

obs, info = env.reset()

|

| 102 |

+

print(f" FEN: {obs['fen']}")

|

| 103 |

+

print(f" Turn: {obs['turn']}")

|

| 104 |

+

print(f" Wallet W={obs['wallet_white']} B={obs['wallet_black']}")

|

| 105 |

+

|

| 106 |

+

print("\nPlaying e2e4 …")

|

| 107 |

+

obs, reward, done, truncated, info = env.step("e2e4")

|

| 108 |

+

print(f" Reward: {reward}")

|

| 109 |

+

print(f" Done: {done}")

|

| 110 |

+

print(f" FEN: {obs['fen']}")

|

backend/.DS_Store

ADDED

|

Binary file (6.15 kB). View file

|

|

|

backend/.env.example

ADDED

|

@@ -0,0 +1,29 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# ─────────────────────────────────────────────────────────────────────────────

|

| 2 |

+

# ChessEcon Backend — environment variables

|

| 3 |

+

# Copy to .env and fill in values

|

| 4 |

+

# ─────────────────────────────────────────────────────────────────────────────

|

| 5 |

+

|

| 6 |

+

# HuggingFace token — REQUIRED for Llama-3.2 (gated model)

|

| 7 |

+

# Get yours at https://huggingface.co/settings/tokens

|

| 8 |

+

HF_TOKEN=hf_...

|

| 9 |

+

|

| 10 |

+

# Model paths (override with local paths if downloaded)

|

| 11 |

+

WHITE_MODEL=Qwen/Qwen2.5-0.5B-Instruct

|

| 12 |

+

BLACK_MODEL=meta-llama/Llama-3.2-1B-Instruct

|

| 13 |

+

|

| 14 |

+

# Device: auto | cuda | cpu

|

| 15 |

+

DEVICE=auto

|

| 16 |

+

|

| 17 |

+

# Economy

|

| 18 |

+

STARTING_WALLET=100.0

|

| 19 |

+

ENTRY_FEE=10.0

|

| 20 |

+

PRIZE_POOL_FRACTION=0.9

|

| 21 |

+

|

| 22 |

+

# Training

|

| 23 |

+

LORA_RANK=8

|

| 24 |

+

GRPO_LR=1e-5

|

| 25 |

+

GRPO_UPDATE_EVERY_N_GAMES=1

|

| 26 |

+

|

| 27 |

+

# Server

|

| 28 |

+

PORT=8000

|

| 29 |

+

MOVE_DELAY=0.5

|

backend/Dockerfile

ADDED

|

@@ -0,0 +1,43 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# ─────────────────────────────────────────────────────────────────────────────

|

| 2 |

+

# ChessEcon Backend — GPU Docker image (OpenEnv 0.1 compliant)

|

| 3 |

+

# ─────────────────────────────────────────────────────────────────────────────

|

| 4 |

+

|

| 5 |

+

FROM nvidia/cuda:12.1.1-cudnn8-runtime-ubuntu22.04

|

| 6 |

+

|

| 7 |

+

ENV DEBIAN_FRONTEND=noninteractive

|

| 8 |

+

|

| 9 |

+

RUN apt-get update && apt-get install -y --no-install-recommends \

|

| 10 |

+

python3.11 python3.11-dev python3-pip git curl \

|

| 11 |

+

&& rm -rf /var/lib/apt/lists/*

|

| 12 |

+

|

| 13 |

+

RUN update-alternatives --install /usr/bin/python python /usr/bin/python3.11 1 \

|

| 14 |

+

&& update-alternatives --install /usr/bin/python3 python3 /usr/bin/python3.11 1 \

|

| 15 |

+

&& update-alternatives --install /usr/bin/pip pip /usr/bin/pip3 1

|

| 16 |

+

|

| 17 |

+

# Install deps from requirements.txt before copying the full source

|

| 18 |

+

# so this layer is cached independently of code changes.

|

| 19 |

+

WORKDIR /build

|

| 20 |

+

COPY requirements.txt .

|

| 21 |

+

RUN pip install --no-cache-dir --upgrade pip \

|

| 22 |

+

&& pip install --no-cache-dir torch==2.4.1 --index-url https://download.pytorch.org/whl/cu121 \

|

| 23 |

+

&& pip install --no-cache-dir -r requirements.txt

|

| 24 |

+

|

| 25 |

+

# Copy source into /backend so "from backend.X" resolves correctly.

|

| 26 |

+

# PYTHONPATH=/ means Python sees /backend as the top-level package.

|

| 27 |

+

COPY . /backend

|

| 28 |

+

|

| 29 |

+

WORKDIR /backend

|

| 30 |

+

|

| 31 |

+

# / on PYTHONPATH → "import backend" resolves to /backend

|

| 32 |

+

ENV PYTHONPATH=/

|

| 33 |

+

|

| 34 |

+

ENV HF_HOME=/root/.cache/huggingface

|

| 35 |

+

ENV TRANSFORMERS_CACHE=/root/.cache/huggingface

|

| 36 |

+

ENV HF_HUB_OFFLINE=0

|

| 37 |

+

|

| 38 |

+

EXPOSE 8000

|

| 39 |

+

|

| 40 |

+

HEALTHCHECK --interval=30s --timeout=10s --start-period=180s --retries=5 \

|

| 41 |

+

CMD curl -f http://localhost:8000/health || exit 1

|

| 42 |

+

|

| 43 |

+

CMD ["python", "websocket_server.py"]

|

backend/__init__.py

ADDED

|

@@ -0,0 +1 @@

|

|

|

|

|

|

|

| 1 |

+

|

backend/agents/__init__.py

ADDED

|

File without changes

|

backend/agents/claude_coach.py

ADDED

|

@@ -0,0 +1,131 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

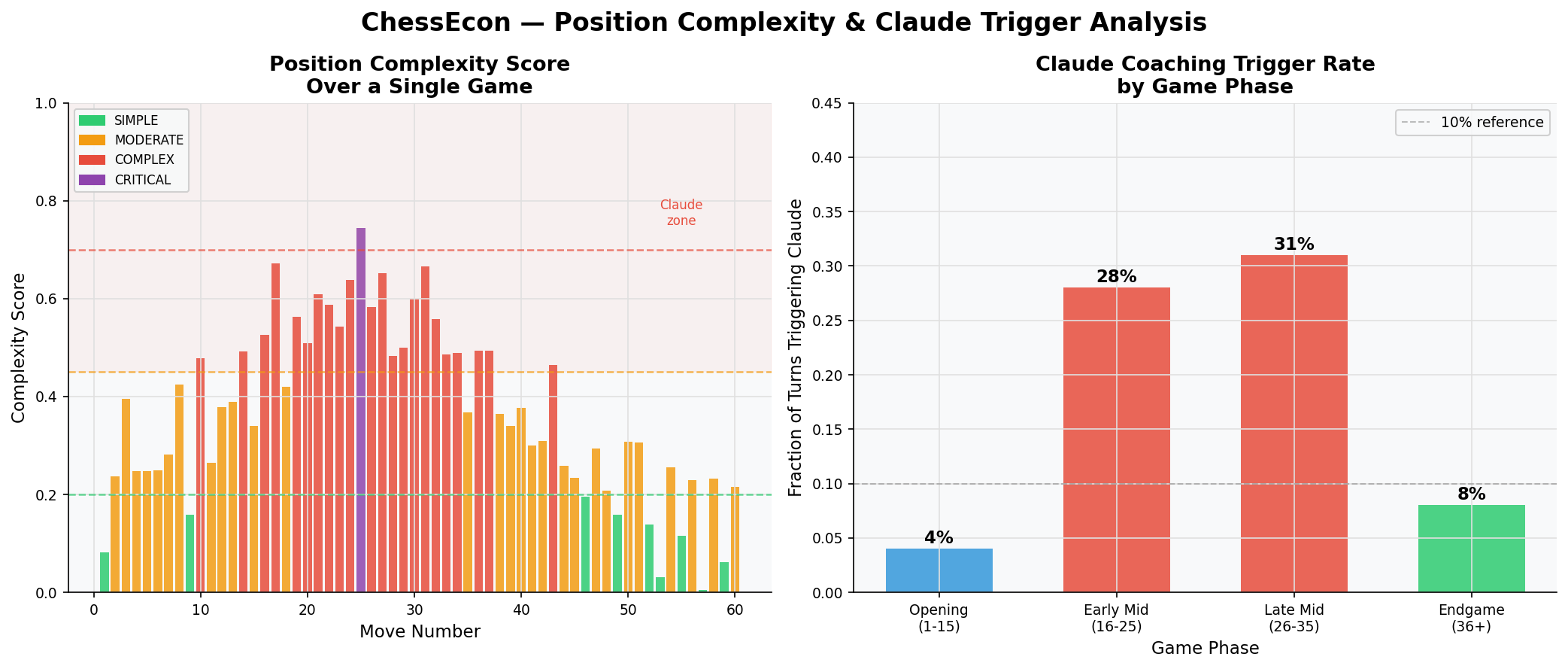

| 1 |

+

"""

|

| 2 |

+

ChessEcon Backend — Claude Coach Agent

|

| 3 |

+

Calls Anthropic claude-opus-4-5 ONLY when position complexity warrants it.

|

| 4 |

+

This is a fee-charging service that agents must decide to use.

|

| 5 |

+

"""

|

| 6 |

+

from __future__ import annotations

|

| 7 |

+

import os

|

| 8 |

+

import re

|

| 9 |

+

import logging

|

| 10 |

+

from typing import Optional

|

| 11 |

+

from shared.models import CoachingRequest, CoachingResponse

|

| 12 |

+

|

| 13 |

+

logger = logging.getLogger(__name__)

|

| 14 |

+

|

| 15 |

+

ANTHROPIC_API_KEY = os.getenv("ANTHROPIC_API_KEY", "")

|

| 16 |

+

CLAUDE_MODEL = os.getenv("CLAUDE_MODEL", "claude-opus-4-5")

|

| 17 |

+

CLAUDE_MAX_TOKENS = int(os.getenv("CLAUDE_MAX_TOKENS", "1024"))

|

| 18 |

+

COACHING_FEE = float(os.getenv("COACHING_FEE", "5.0"))

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

class ClaudeCoachAgent:

|

| 22 |

+

"""

|