Commit ·

0245be8

0

Parent(s):

Initial commit

Browse filesThis view is limited to 50 files because it contains too many changes. See raw diff

- .env-example +48 -0

- .gitignore +17 -0

- LICENSE +21 -0

- Makefile +283 -0

- README.md +679 -0

- RESULTS.md +166 -0

- app/api/.dockerignore +4 -0

- app/api/API.md +666 -0

- app/api/Dockerfile +15 -0

- app/api/Makefile +159 -0

- app/api/config.py +176 -0

- app/api/data/training_data/org-about_the_company.md +36 -0

- app/api/data/training_data/org-board_of_directors.md +28 -0

- app/api/data/training_data/org-company_story.md +31 -0

- app/api/data/training_data/org-corporate_philosophy.md +31 -0

- app/api/data/training_data/org-customer_support.md +28 -0

- app/api/data/training_data/org-earnings_fy2023.md +58 -0

- app/api/data/training_data/org-management_team.md +28 -0

- app/api/data/training_data/project-frogonil.md +48 -0

- app/api/data/training_data/project-kekzal.md +50 -0

- app/api/data/training_data/project-memegen.md +36 -0

- app/api/data/training_data/project-memetrex.md +48 -0

- app/api/data/training_data/project-neurokek.md +56 -0

- app/api/data/training_data/project-pepetamine.md +48 -0

- app/api/data/training_data/project-pepetrak.md +36 -0

- app/api/helpers.py +658 -0

- app/api/llm.py +465 -0

- app/api/main.py +567 -0

- app/api/models.py +660 -0

- app/api/ngrok.py +117 -0

- app/api/requirements.txt +12 -0

- app/api/seed.py +166 -0

- app/api/static/img/rasagpt-icon-200x200.png +0 -0

- app/api/static/img/rasagpt-logo-1.png +0 -0

- app/api/static/img/rasagpt-logo-2.png +0 -0

- app/api/util.py +80 -0

- app/api/wait-for-it.sh +0 -0

- app/db/Dockerfile +5 -0

- app/db/create_db.sh +13 -0

- app/rasa-credentials/.dockerignore +4 -0

- app/rasa-credentials/Dockerfile +15 -0

- app/rasa-credentials/main.py +182 -0

- app/rasa-credentials/requirements.txt +8 -0

- app/rasa/.dockerignore +4 -0

- app/rasa/actions/Dockerfile +9 -0

- app/rasa/actions/__init__.py +0 -0

- app/rasa/actions/actions.py +73 -0

- app/rasa/config.yml +5 -0

- app/rasa/credentials.yml +7 -0

- app/rasa/custom_telegram.py +16 -0

.env-example

ADDED

|

@@ -0,0 +1,48 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

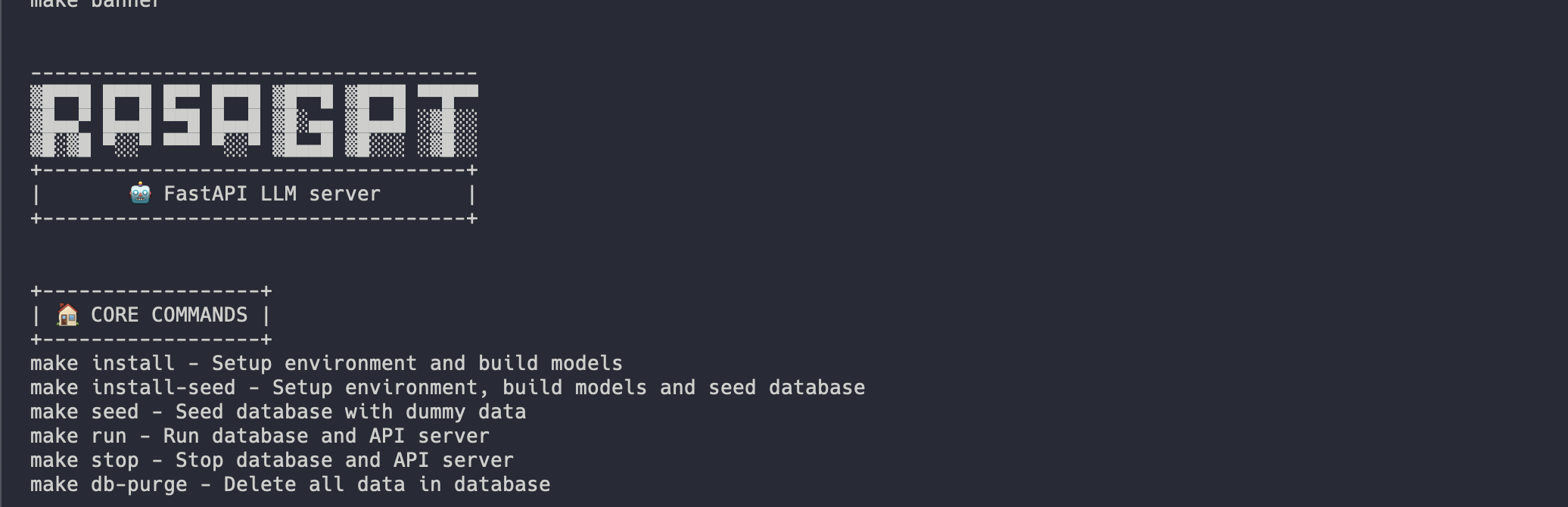

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

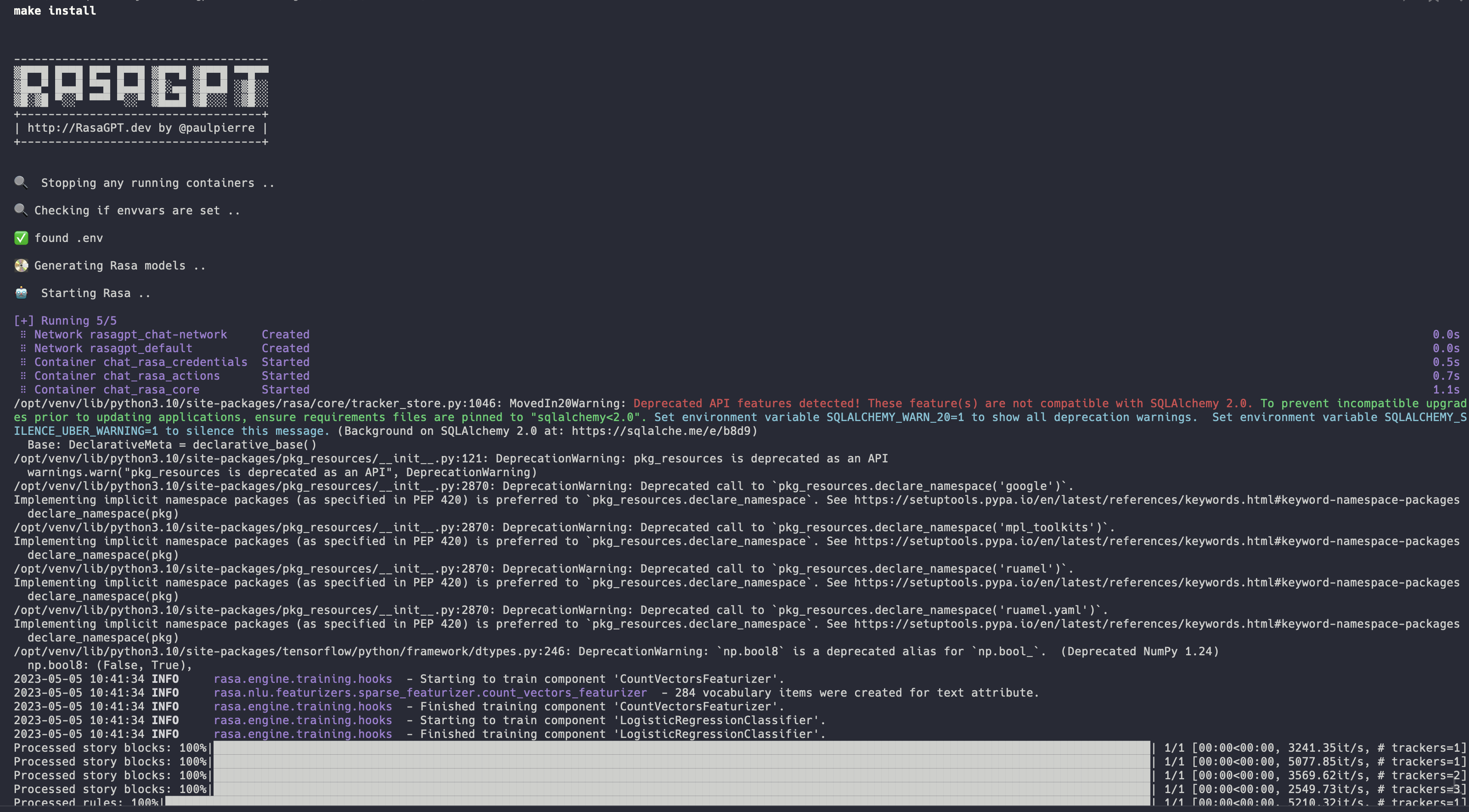

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

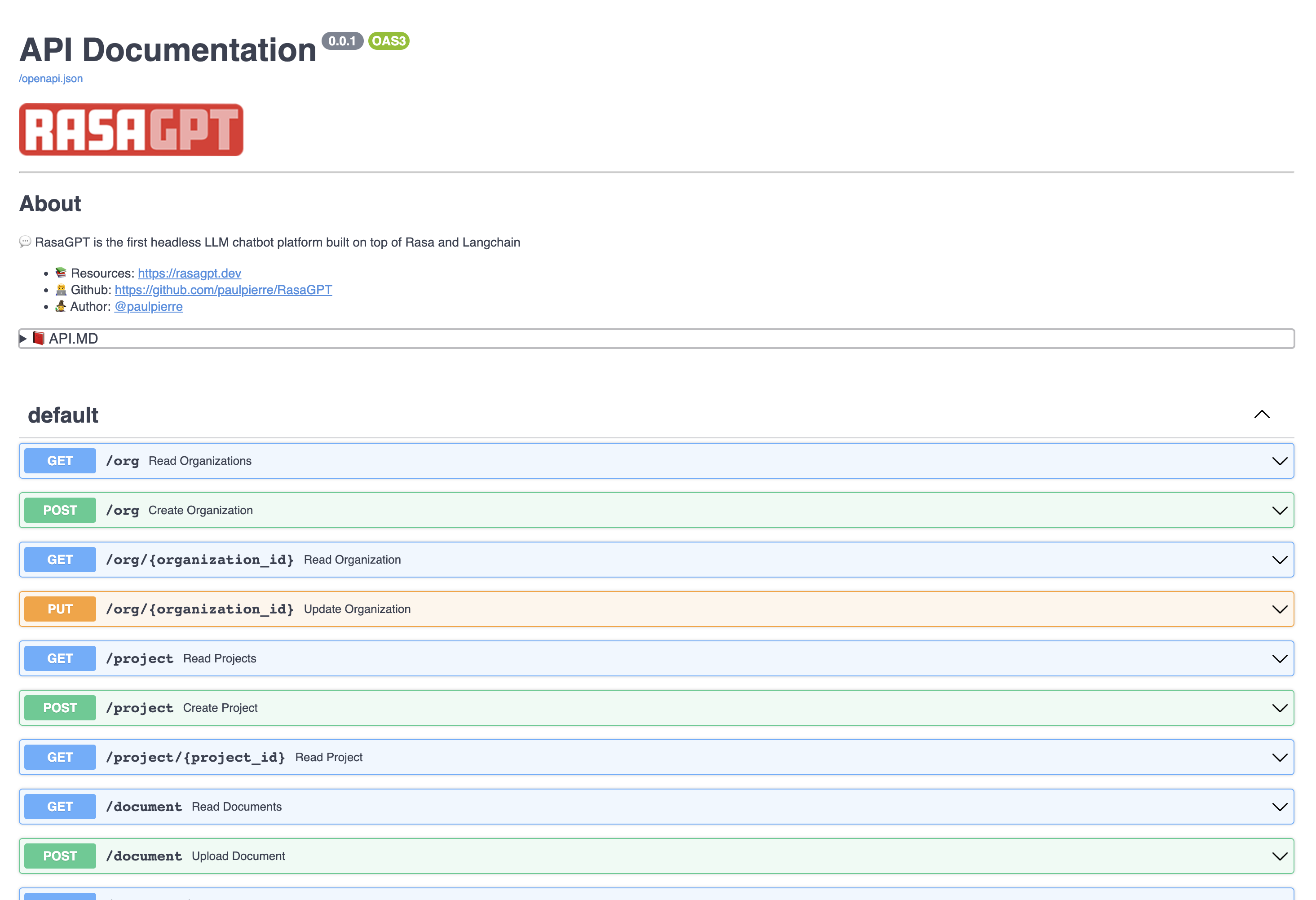

|

|

|

|

| 1 |

+

ENV=local

|

| 2 |

+

|

| 3 |

+

FILE_UPLOAD_PATH=data

|

| 4 |

+

LLM_DEFAULT_TEMPERATURE=0

|

| 5 |

+

LLM_CHUNK_SIZE=1000

|

| 6 |

+

LLM_CHUNK_OVERLAP=200

|

| 7 |

+

LLM_DISTANCE_THRESHOLD=0.2

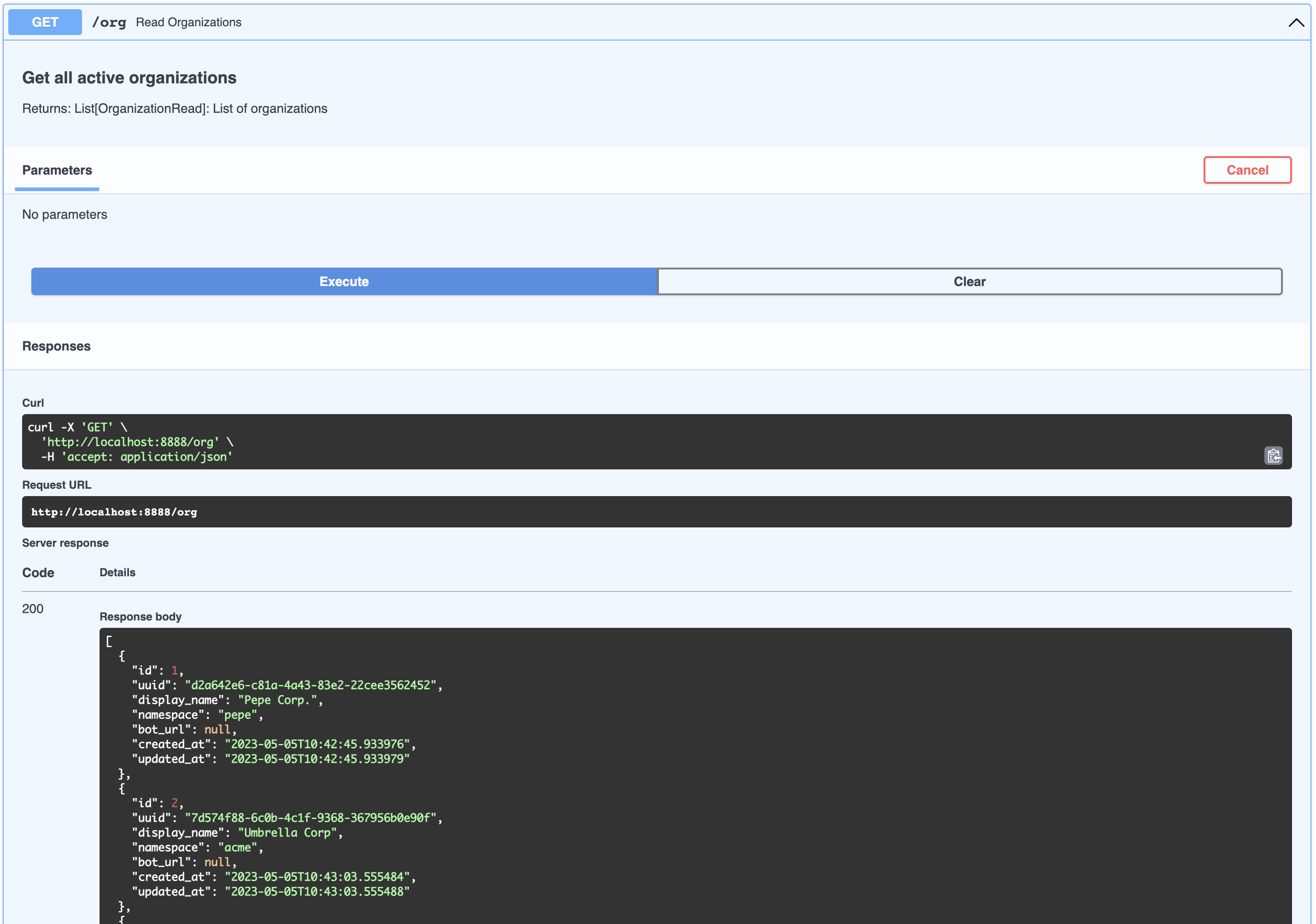

|

| 8 |

+

LLM_MAX_OUTPUT_TOKENS=256

|

| 9 |

+

LLM_MIN_NODE_LIMIT=3

|

| 10 |

+

LLM_DEFAULT_DISTANCE_STRATEGY=EUCLIDEAN

|

| 11 |

+

|

| 12 |

+

POSTGRES_USER=postgres

|

| 13 |

+

POSTGRES_PASSWORD=postgres

|

| 14 |

+

POSTGRES_DB=postgres

|

| 15 |

+

PGVECTOR_ADD_INDEX=true

|

| 16 |

+

|

| 17 |

+

DB_HOST=db

|

| 18 |

+

DB_PORT=5432

|

| 19 |

+

DB_USER=api

|

| 20 |

+

DB_NAME=api

|

| 21 |

+

DB_PASSWORD=<YOUR DATABASE PASSWORD>

|

| 22 |

+

|

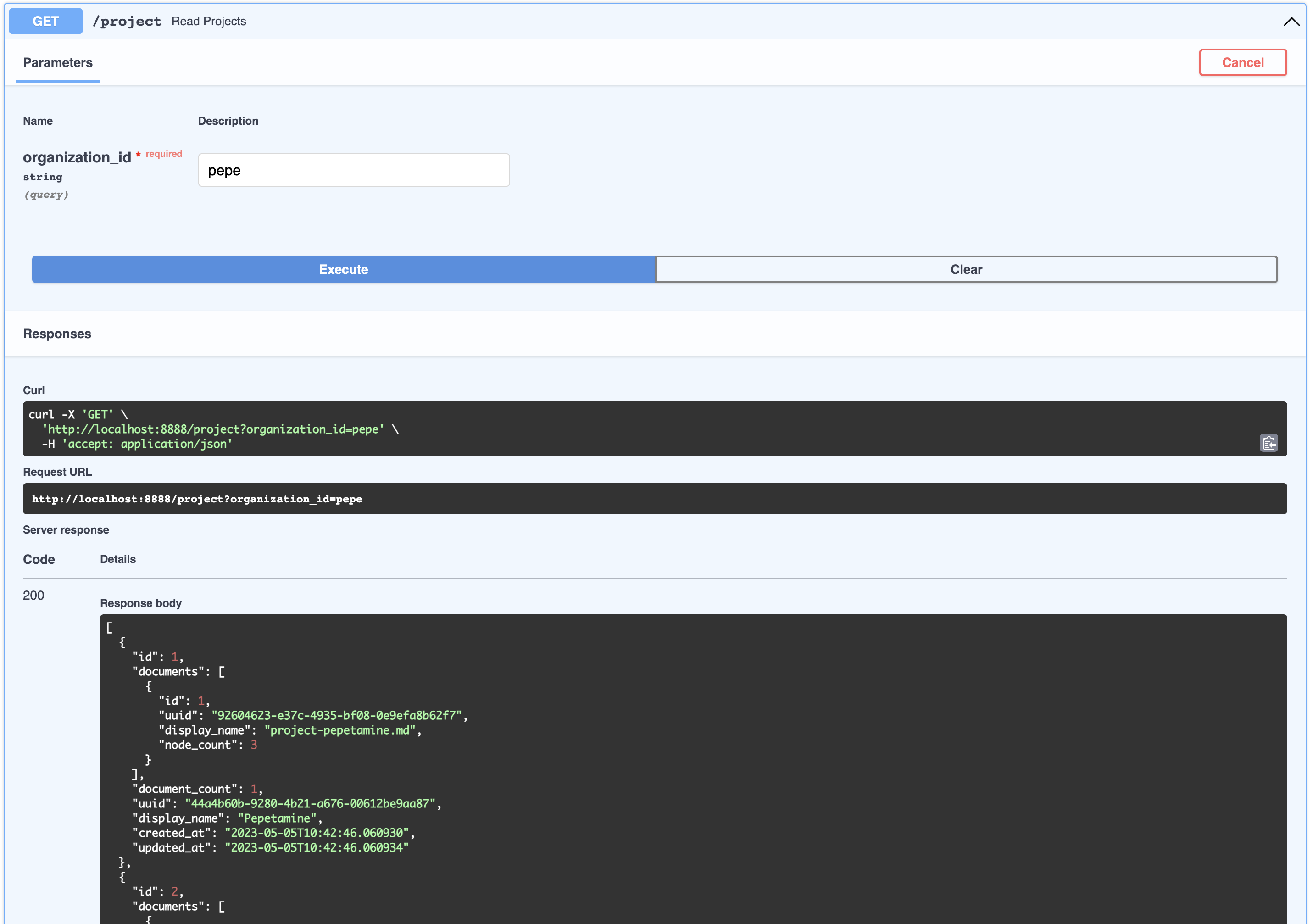

| 23 |

+

NGROK_HOST=ngrok

|

| 24 |

+

NGROK_PORT=4040

|

| 25 |

+

NGROK_AUTHTOKEN=<YOUR NGROK AUTH TOKEN>

|

| 26 |

+

NGROK_API_KEY=<YOUR NGROK API KEY>

|

| 27 |

+

NGROK_INTERNAL_WEBHOOK_HOST=api

|

| 28 |

+

NGROK_INTERNAL_WEBHOOK_PORT=8888

|

| 29 |

+

NGROK_DEBUG=true

|

| 30 |

+

NGROK_CONFIG=/etc/ngrok.yml

|

| 31 |

+

|

| 32 |

+

RASA_WEBHOOK_HOST=rasa-core

|

| 33 |

+

RASA_WEBHOOK_PORT=5005

|

| 34 |

+

|

| 35 |

+

CREDENTIALS_PATH=/app/rasa/credentials.yml

|

| 36 |

+

|

| 37 |

+

TELEGRAM_ACCESS_TOKEN=<YOUR TELEGRAM ACCESS TOKEN>

|

| 38 |

+

TELEGRAM_BOTNAME=rasagpt

|

| 39 |

+

|

| 40 |

+

API_PORT=8888

|

| 41 |

+

API_HOST=api

|

| 42 |

+

|

| 43 |

+

PGADMIN_PORT=5050

|

| 44 |

+

PGADMIN_DEFAULT_PASSWORD=pgadmin

|

| 45 |

+

PGADMIN_DEFAULT_EMAIL=your@emailaddress.com

|

| 46 |

+

|

| 47 |

+

MODEL_NAME=gpt-3.5-turbo

|

| 48 |

+

OPENAI_API_KEY=<YOUR OPEN AI KEY>

|

.gitignore

ADDED

|

@@ -0,0 +1,17 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

.DS_Store

|

| 2 |

+

.trunk

|

| 3 |

+

.vscode

|

| 4 |

+

mnt

|

| 5 |

+

venv/

|

| 6 |

+

.env

|

| 7 |

+

.env-dev

|

| 8 |

+

.env

|

| 9 |

+

.env-staging

|

| 10 |

+

.env-stage

|

| 11 |

+

.env-prod

|

| 12 |

+

.env-production

|

| 13 |

+

__pycache__/

|

| 14 |

+

app/rasa/models/*

|

| 15 |

+

app/rasa/.rasa

|

| 16 |

+

app/rasa/.config

|

| 17 |

+

app/rasa/.keras

|

LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2023 Paul Pierre

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

Makefile

ADDED

|

@@ -0,0 +1,283 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

.PHONY: default banner help install build run stop restart logs ngrok pgadmin api api-stop db db-stop db-purge purge models shell-api shell-db shell-rasa shell-actions rasa-train rasa-start rasa-stop

|

| 2 |

+

|

| 3 |

+

defaut: help

|

| 4 |

+

|

| 5 |

+

help:

|

| 6 |

+

@make banner

|

| 7 |

+

@echo "+------------------+"

|

| 8 |

+

@echo "| 🏠 CORE COMMANDS |"

|

| 9 |

+

@echo "+------------------+"

|

| 10 |

+

@echo "make install - Install and run RasaGPT"

|

| 11 |

+

@echo "make build - Build docker images"

|

| 12 |

+

@echo "make run - Run RasaGPT"

|

| 13 |

+

@echo "make stop - Stop RasaGPT"

|

| 14 |

+

@echo "make restart - Restart RasaGPT\n"

|

| 15 |

+

@echo "+--------------------+"

|

| 16 |

+

@echo "| 🌍 ADMIN INTERACES |"

|

| 17 |

+

@echo "+--------------------+"

|

| 18 |

+

@echo "make logs - View logs via Dozzle"

|

| 19 |

+

@echo "make ngrok - View ngrok dashboard"

|

| 20 |

+

@echo "make pgadmin - View pgAdmin dashboard\n"

|

| 21 |

+

@echo "+-----------------------+"

|

| 22 |

+

@echo "| 👷 DEBUGGING COMMANDS |"

|

| 23 |

+

@echo "+-----------------------+"

|

| 24 |

+

@echo "make api - Run only API server"

|

| 25 |

+

@echo "make models - Build Rasa models"

|

| 26 |

+

@echo "make purge - Remove all docker images"

|

| 27 |

+

@echo "make db-purge - Delete all data in database"

|

| 28 |

+

@echo "make shell-api - Open shell in API container"

|

| 29 |

+

@echo "make shell-db - Open shell in database container"

|

| 30 |

+

@echo "make shell-rasa - Open shell in Rasa container"

|

| 31 |

+

@echo "make shell-actions - Open shell in Rasa actions container\n"

|

| 32 |

+

|

| 33 |

+

|

| 34 |

+

banner:

|

| 35 |

+

@echo "\n\n-------------------------------------"

|

| 36 |

+

@echo "▒█▀▀█ █▀▀█ █▀▀ █▀▀█ ▒█▀▀█ ▒█▀▀█ ▀▀█▀▀"

|

| 37 |

+

@echo "▒█▄▄▀ █▄▄█ ▀▀█ █▄▄█ ▒█░▄▄ ▒█▄▄█ ░▒█░░"

|

| 38 |

+

@echo "▒█░▒█ ▀░░▀ ▀▀▀ ▀░░▀ ▒█▄▄█ ▒█░░░ ░▒█░░"

|

| 39 |

+

@echo "+-----------------------------------+"

|

| 40 |

+

@echo "| http://RasaGPT.dev by @paulpierre |"

|

| 41 |

+

@echo "+-----------------------------------+\n\n"

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

# ==========================

|

| 46 |

+

# 👷 INITIALIZATION COMMANDS

|

| 47 |

+

# ==========================

|

| 48 |

+

|

| 49 |

+

# ---------------------------------------

|

| 50 |

+

# Run this first to setup the environment

|

| 51 |

+

# ---------------------------------------

|

| 52 |

+

install:

|

| 53 |

+

@make banner

|

| 54 |

+

@make stop

|

| 55 |

+

@make env-var

|

| 56 |

+

@make rasa-train

|

| 57 |

+

@make build

|

| 58 |

+

@make run

|

| 59 |

+

@make models

|

| 60 |

+

@make rasa-restart

|

| 61 |

+

@make seed

|

| 62 |

+

@echo "✅ RasaGPT installed and running"

|

| 63 |

+

|

| 64 |

+

# -----------------------

|

| 65 |

+

# Build the docker images

|

| 66 |

+

# -----------------------

|

| 67 |

+

build:

|

| 68 |

+

@echo "🏗️ Building docker images ..\n"

|

| 69 |

+

@docker-compose -f docker-compose.yml build

|

| 70 |

+

|

| 71 |

+

|

| 72 |

+

# ================

|

| 73 |

+

# 🏠 CORE COMMANDS

|

| 74 |

+

# ================

|

| 75 |

+

|

| 76 |

+

# ---------------------------

|

| 77 |

+

# Startup all docker services

|

| 78 |

+

# ---------------------------

|

| 79 |

+

|

| 80 |

+

run:

|

| 81 |

+

@echo "🚀 Starting docker-compose.yml ..\n"

|

| 82 |

+

@docker-compose -f docker-compose.yml up -d

|

| 83 |

+

|

| 84 |

+

# ---------------------------

|

| 85 |

+

# Stop all running containers

|

| 86 |

+

# ---------------------------

|

| 87 |

+

|

| 88 |

+

stop:

|

| 89 |

+

@echo "🔍 Stopping any running containers .. \n"

|

| 90 |

+

@docker-compose -f docker-compose.yml down

|

| 91 |

+

|

| 92 |

+

# ----------------------

|

| 93 |

+

# Restart all containers

|

| 94 |

+

# ----------------------

|

| 95 |

+

restart:

|

| 96 |

+

@echo "🔁 Restarting docker services ..\n"

|

| 97 |

+

@make stop

|

| 98 |

+

@make run

|

| 99 |

+

|

| 100 |

+

# ----------------------

|

| 101 |

+

# Restart Rasa core only

|

| 102 |

+

# ----------------------

|

| 103 |

+

rasa-restart:

|

| 104 |

+

@echo "🤖 Restarting Rasa so it grabs credentials ..\n"

|

| 105 |

+

@make rasa-stop

|

| 106 |

+

@make rasa-start

|

| 107 |

+

|

| 108 |

+

rasa-stop:

|

| 109 |

+

@echo "🤖 Stopping Rasa ..\n"

|

| 110 |

+

@docker-compose -f docker-compose.yml stop rasa-core

|

| 111 |

+

|

| 112 |

+

rasa-start:

|

| 113 |

+

@echo "🤖 Starting Rasa ..\n"

|

| 114 |

+

@docker-compose -f docker-compose.yml up -d rasa-core

|

| 115 |

+

|

| 116 |

+

rasa-build:

|

| 117 |

+

@echo "🤖 Building Rasa ..\n"

|

| 118 |

+

@docker-compose -f docker-compose.yml build rasa-core

|

| 119 |

+

|

| 120 |

+

# -----------------------

|

| 121 |

+

# Seed database with data

|

| 122 |

+

# -----------------------

|

| 123 |

+

seed:

|

| 124 |

+

@echo "🌱 Seeding database ..\n"

|

| 125 |

+

@docker-compose -f docker-compose.yml exec api /app/api/wait-for-it.sh db:5432 --timeout=60 -- python3 seed.py

|

| 126 |

+

|

| 127 |

+

|

| 128 |

+

# =======================

|

| 129 |

+

# 🌍 WEB ADMIN INTERFACES

|

| 130 |

+

# =======================

|

| 131 |

+

|

| 132 |

+

# -------------------------

|

| 133 |

+

# Reverse HTTP tunnel admin

|

| 134 |

+

# -------------------------

|

| 135 |

+

ngrok:

|

| 136 |

+

@echo "📡 Opening ngrok agent in the browser ..\n"

|

| 137 |

+

@open http://localhost:4040

|

| 138 |

+

|

| 139 |

+

# ------------------------

|

| 140 |

+

# Postgres admin interface

|

| 141 |

+

# ------------------------

|

| 142 |

+

pgadmin:

|

| 143 |

+

@echo "👷♂️ Opening PG Admin in the browser ..\n"

|

| 144 |

+

@open http://localhost:5050

|

| 145 |

+

|

| 146 |

+

# ------------------------

|

| 147 |

+

# Container logs interface

|

| 148 |

+

# ------------------------

|

| 149 |

+

logs:

|

| 150 |

+

@echo "🔍 Opening container logs in the browser ..\n"

|

| 151 |

+

@open http://localhost:9999/

|

| 152 |

+

|

| 153 |

+

# =====================

|

| 154 |

+

# 👷 DEBUGGING COMMANDS

|

| 155 |

+

# =====================

|

| 156 |

+

|

| 157 |

+

# ---------------------------

|

| 158 |

+

# Startup just the API server

|

| 159 |

+

# ---------------------------

|

| 160 |

+

api:

|

| 161 |

+

@make db

|

| 162 |

+

@echo "🚀 Starting FastAPI and postgres ..\n"

|

| 163 |

+

@docker-compose -f docker-compose.yml up -d api

|

| 164 |

+

|

| 165 |

+

# ------------------------

|

| 166 |

+

# Startup just Postgres DB

|

| 167 |

+

# ------------------------

|

| 168 |

+

db:

|

| 169 |

+

@echo "🚀 Starting Postgres with pgvector ..\n"

|

| 170 |

+

@docker-compose -f docker-compose.yml up -d db

|

| 171 |

+

|

| 172 |

+

|

| 173 |

+

db-stop:

|

| 174 |

+

@echo " Stopping the database ..\n"

|

| 175 |

+

@docker-compose -f docker-compose.yml down db

|

| 176 |

+

|

| 177 |

+

|

| 178 |

+

db-reset:

|

| 179 |

+

@echo " Resetting the database ..\n"

|

| 180 |

+

@make db-purge

|

| 181 |

+

@make api

|

| 182 |

+

@make models

|

| 183 |

+

|

| 184 |

+

# -------------------------------

|

| 185 |

+

# Build the schema in Postgres DB

|

| 186 |

+

# -------------------------------

|

| 187 |

+

models:

|

| 188 |

+

@echo "💽 Building models in Postgres ..\n"

|

| 189 |

+

@docker-compose -f docker-compose.yml exec api /app/api/wait-for-it.sh db:5432 --timeout=60 -- python3 models.py

|

| 190 |

+

|

| 191 |

+

# -------------------------------

|

| 192 |

+

# Delete containers or bad images

|

| 193 |

+

# -------------------------------

|

| 194 |

+

purge:

|

| 195 |

+

@echo "🧹 Purging all containers and images ..\n"

|

| 196 |

+

@make stop

|

| 197 |

+

@docker system prune -a

|

| 198 |

+

@make install

|

| 199 |

+

|

| 200 |

+

# --------------------------------

|

| 201 |

+

# Delete the database mount volume

|

| 202 |

+

# --------------------------------

|

| 203 |

+

db-purge:

|

| 204 |

+

@echo "⛔ Are you sure you want to delete all data in the database? [y/N]\n"

|

| 205 |

+

@read confirmation; \

|

| 206 |

+

if [ "$$confirmation" = "y" ] || [ "$$confirmation" = "Y" ]; then \

|

| 207 |

+

echo "Deleting generated files .."; \

|

| 208 |

+

make stop; \

|

| 209 |

+

rm -rf ./mnt; \

|

| 210 |

+

echo "Deleted."; \

|

| 211 |

+

else \

|

| 212 |

+

echo "Aborted."; \

|

| 213 |

+

fi

|

| 214 |

+

|

| 215 |

+

# --------------------------------------

|

| 216 |

+

# Open a bash shell in the API container

|

| 217 |

+

# --------------------------------------

|

| 218 |

+

shell-api:

|

| 219 |

+

@echo "💻🐢 Opening a bash shell in the RasaGPT API container ..\n"

|

| 220 |

+

@if docker ps | grep api > /dev/null; then \

|

| 221 |

+

docker exec -it $$(docker ps | grep api | tr -d '\n' | awk '{print $$1}') /bin/bash; \

|

| 222 |

+

else \

|

| 223 |

+

echo "Container api is not running"; \

|

| 224 |

+

fi

|

| 225 |

+

|

| 226 |

+

# ---------------------------------------

|

| 227 |

+

# Open a bash shell in the Rasa container

|

| 228 |

+

# ---------------------------------------

|

| 229 |

+

shell-rasa:

|

| 230 |

+

@echo "💻🐢 Opening a bash shell in the rasa-core container ..\n"

|

| 231 |

+

@if docker ps | grep rasa-core > /dev/null; then \

|

| 232 |

+

docker exec -it $$(docker ps | grep rasa-core | tr -d '\n' | awk '{print $$1}') /bin/bash; \

|

| 233 |

+

else \

|

| 234 |

+

echo "Container rasa-core is not running"; \

|

| 235 |

+

fi

|

| 236 |

+

|

| 237 |

+

# -----------------------------------------------

|

| 238 |

+

# Open a bash shell in the Rasa actions container

|

| 239 |

+

# -----------------------------------------------

|

| 240 |

+

shell-actions:

|

| 241 |

+

@echo "💻🐢 Opening a bash shell in the rasa-actions container ..\n"

|

| 242 |

+

@if docker ps | grep rasa-actions > /dev/null; then \

|

| 243 |

+

docker exec -it $$(docker ps | grep rasa-actions | tr -d '\n' | awk '{print $$1}') /bin/bash; \

|

| 244 |

+

else \

|

| 245 |

+

echo "Container rasa-actions is not running"; \

|

| 246 |

+

fi

|

| 247 |

+

|

| 248 |

+

# -------------------------------------------

|

| 249 |

+

# Open a bash shell in the Postgres container

|

| 250 |

+

# -------------------------------------------

|

| 251 |

+

shell-db:

|

| 252 |

+

@echo "💻🐢 Opening a bash shell in the Postgres container ..\n"

|

| 253 |

+

@if docker ps | grep db > /dev/null; then \

|

| 254 |

+

docker exec -it $$(docker ps | grep db | tr -d '\n' | awk '{print $$1}') /bin/bash; \

|

| 255 |

+

else \

|

| 256 |

+

echo "Container db is not running"; \

|

| 257 |

+

fi

|

| 258 |

+

|

| 259 |

+

# ==================

|

| 260 |

+

# 💁 HELPER COMMANDS

|

| 261 |

+

# ==================

|

| 262 |

+

|

| 263 |

+

# -------------

|

| 264 |

+

# Check envvars

|

| 265 |

+

# -------------

|

| 266 |

+

env-var:

|

| 267 |

+

@echo "🔍 Checking if envvars are set ..\n";

|

| 268 |

+

@if ! test -e "./.env"; then \

|

| 269 |

+

@echo "❌ .env file not found. Please copy .env-example to .env and update values"; \

|

| 270 |

+

exit 1; \

|

| 271 |

+

else \

|

| 272 |

+

echo "✅ found .env\n"; \

|

| 273 |

+

fi

|

| 274 |

+

|

| 275 |

+

# -----------------

|

| 276 |

+

# Train Rasa models

|

| 277 |

+

# -----------------

|

| 278 |

+

rasa-train:

|

| 279 |

+

@echo "💽 Generating Rasa models ..\n"

|

| 280 |

+

@make rasa-start

|

| 281 |

+

@docker-compose -f docker-compose.yml exec rasa-core rasa train

|

| 282 |

+

@make rasa-stop

|

| 283 |

+

@echo "✅ Done\n"

|

README.md

ADDED

|

@@ -0,0 +1,679 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

|

| 3 |

+

|

| 4 |

+

<br/><br/>

|

| 5 |

+

|

| 6 |

+

|

| 7 |

+

# 🏠 Overview

|

| 8 |

+

|

| 9 |

+

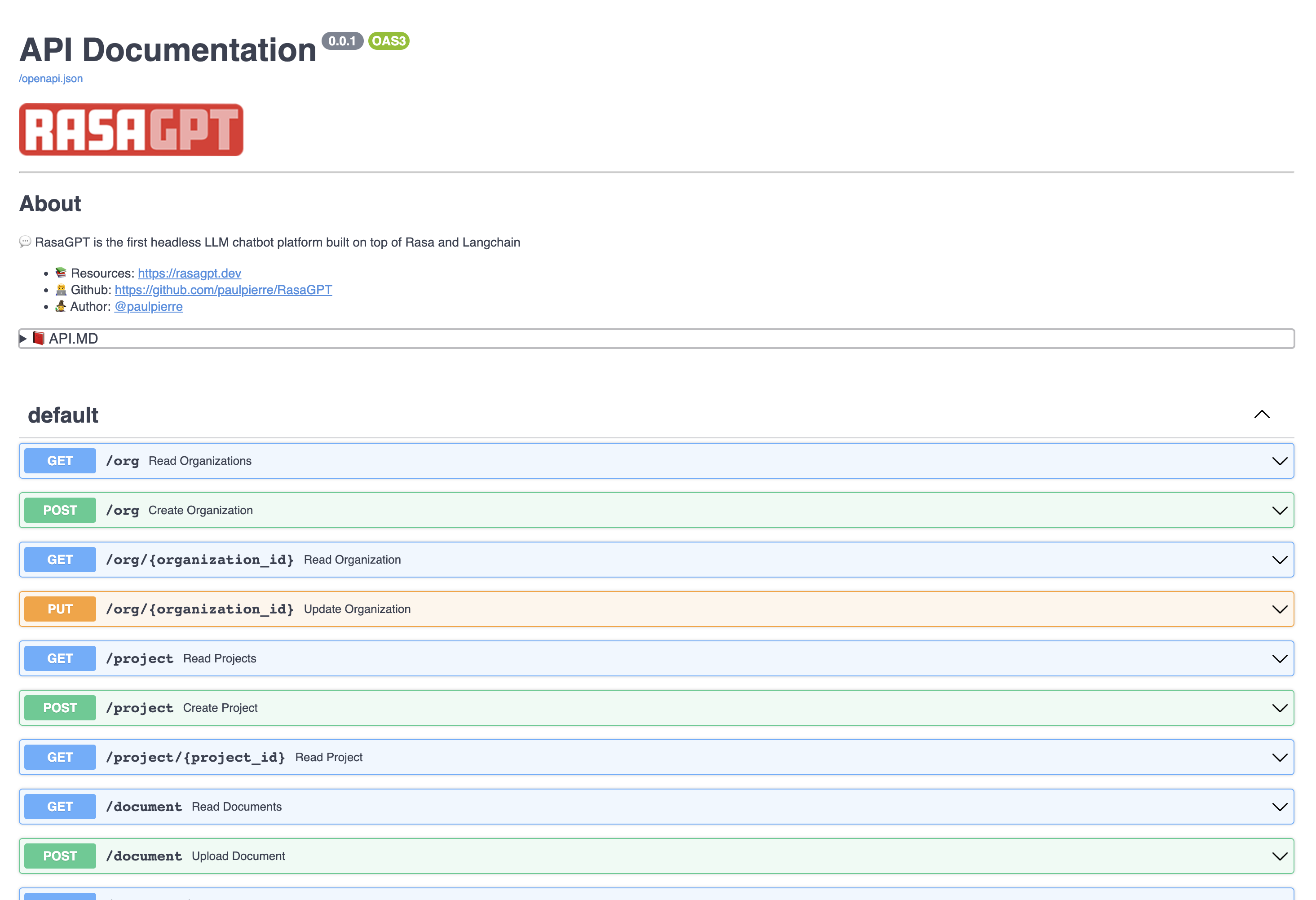

💬 RasaGPT is the first headless LLM chatbot platform built on top of [Rasa](https://github.com/RasaHQ/rasa) and [Langchain](https://github.com/hwchase17/langchain). It is boilerplate and a reference implementation of Rasa and Telegram utilizing an LLM library like Langchain for indexing, retrieval and context injection.

|

| 10 |

+

|

| 11 |

+

<br/><br/>

|

| 12 |

+

|

| 13 |

+

# 💁♀️ Why RasaGPT?

|

| 14 |

+

|

| 15 |

+

RasaGPT works out of the box. A lot of the implementing headaches were sorted out so you don’t have to, including:

|

| 16 |

+

|

| 17 |

+

- Creating your own proprietary bot end-point using FastAPI, document upload and “training” 'pipeline included

|

| 18 |

+

- How to integrate Langchain/LlamaIndex and Rasa

|

| 19 |

+

- Library conflicts with LLM libraries and passing metadata

|

| 20 |

+

- Dockerized [support on MacOS](https://github.com/khalo-sa/rasa-apple-silicon) for running Rasa

|

| 21 |

+

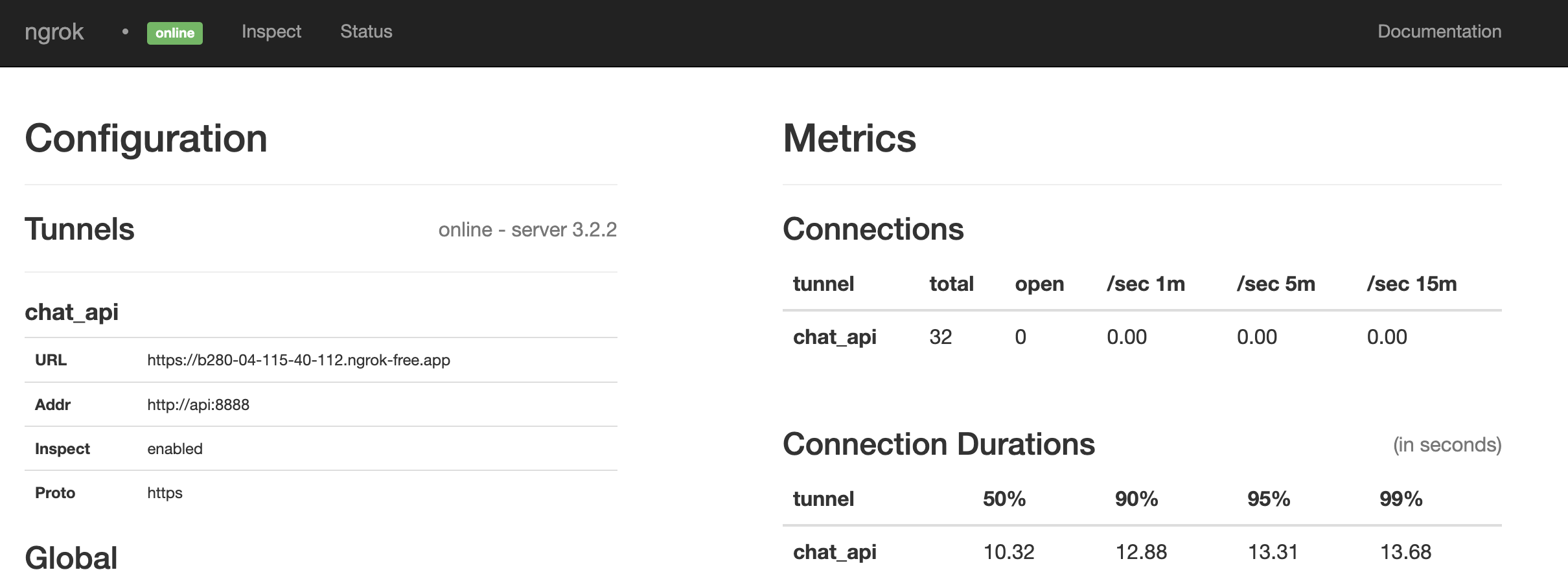

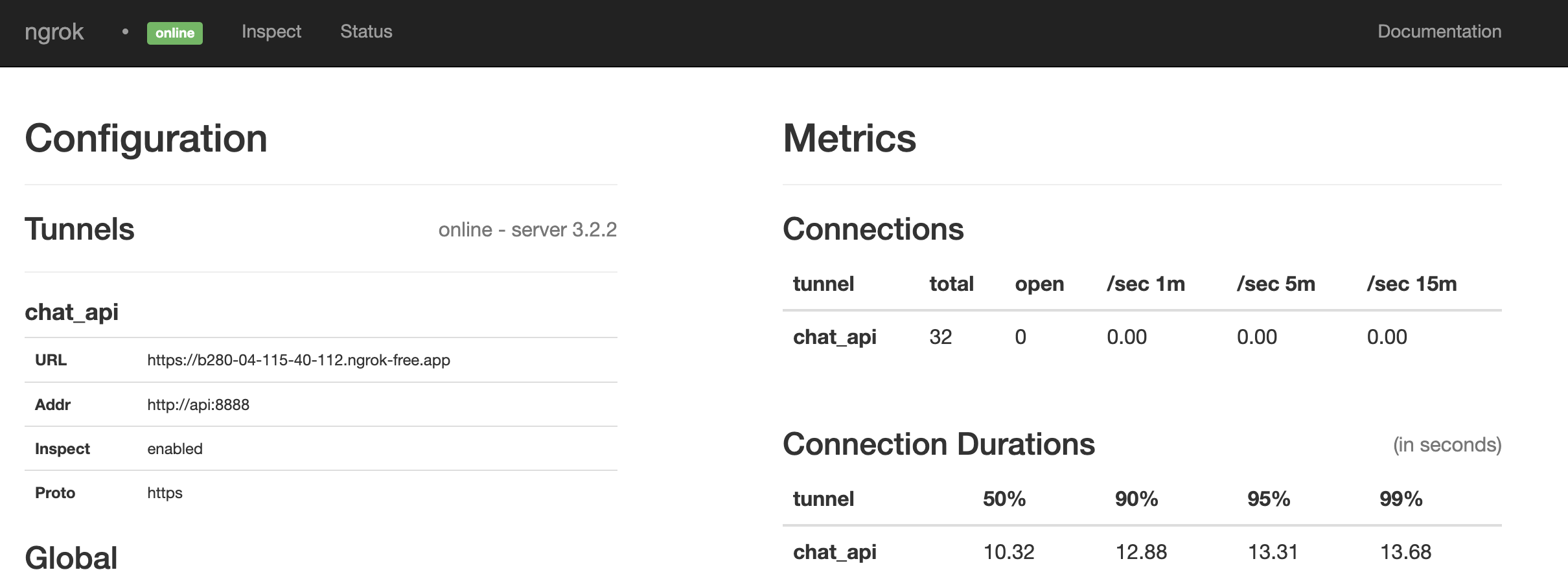

- Reverse proxy with chatbots [via ngrok](https://ngrok.com/docs/ngrok-agent/)

|

| 22 |

+

- Implementing pgvector with your own custom schema instead of using Langchain’s highly opinionated [PGVector class](https://python.langchain.com/en/latest/modules/indexes/vectorstores/examples/pgvector.html)

|

| 23 |

+

- Adding multi-tenancy (Rasa [doesn't natively support this](https://forum.rasa.com/t/multi-tenancy-in-rasa-core/2382)), sessions and metadata between Rasa and your own backend / application

|

| 24 |

+

|

| 25 |

+

The backstory is familiar. A friend came to me with a problem. I scoured Google and Github for a decent reference implementation of LLM’s integrated with Rasa but came up empty-handed. I figured this to be a great opportunity to satiate my curiosity and 2 days later I had a proof of concept, and a week later this is what I came up with.

|

| 26 |

+

|

| 27 |

+

<br/>

|

| 28 |

+

|

| 29 |

+

> ⚠️ **Caveat emptor:**

|

| 30 |

+

This is far from production code and rife with prompt injection and general security vulnerabilities. I just hope someone finds this useful 😊

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

<br/><br/>

|

| 34 |

+

|

| 35 |

+

# **✨** Quick start

|

| 36 |

+

|

| 37 |

+

Getting started is easy, just make sure you meet the dependencies below.

|

| 38 |

+

|

| 39 |

+

```bash

|

| 40 |

+

# Get the code

|

| 41 |

+

git clone https://github.com/paulpierre/RasaGPT.git

|

| 42 |

+

cd RasaGPT

|

| 43 |

+

|

| 44 |

+

## Setup the .env file

|

| 45 |

+

cp .env-example .env

|

| 46 |

+

|

| 47 |

+

# Edit your .env file and add all the necessary credentials

|

| 48 |

+

make install

|

| 49 |

+

|

| 50 |

+

# Type "make" to see more options

|

| 51 |

+

make

|

| 52 |

+

```

|

| 53 |

+

|

| 54 |

+

<br/><br/>

|

| 55 |

+

|

| 56 |

+

|

| 57 |

+

# 🔥 Features

|

| 58 |

+

|

| 59 |

+

## Full Application and API

|

| 60 |

+

|

| 61 |

+

- LLM “learns” on an arbitrary corpus of data using Langchain

|

| 62 |

+

- Upload documents and “train” all via [FastAPI](https://fastapi.tiangolo.com/)

|

| 63 |

+

- Document versioning and automatic “re-training” implemented on upload

|

| 64 |

+

- Customize your own async end-points and database models via [FastAPI](https://fastapi.tiangolo.com/) and [SQLModel](https://sqlmodel.tiangolo.com/)

|

| 65 |

+

- Bot determines whether human handoff is necessary

|

| 66 |

+

- Bot generates tags based on user questions and response automatically

|

| 67 |

+

- Full API documentation via [Swagger](https://github.com/swagger-api/swagger-ui) and [Redoc](https://redocly.github.io/redoc/) included

|

| 68 |

+

- [Ngrok](ngrok.com/docs) end-points are automatically generated for you on startup so your bot can always be accessed via `https://t.me/yourbotname`

|

| 69 |

+

- Embedding similarity search built into Postgres via [pgvector](https://github.com/pgvector/pgvector) and Postgres functions

|

| 70 |

+

- [Dummy data included](https://github.com/paulpierre/RasaGPT/tree/main/app/api/data/training_data) for you to test and experiment

|

| 71 |

+

- Unlimited use cases from help desk, customer support, quiz, e-learning, dungeon and dragons, and more

|

| 72 |

+

<br/><br/>

|

| 73 |

+

## Rasa integration

|

| 74 |

+

|

| 75 |

+

- Built on top of [Rasa](https://rasa.com/docs/rasa/), the open source gold-standard for chat platforms

|

| 76 |

+

- Supports MacOS M1/M2 via Docker (canonical Rasa image [lacks MacOS arch. support](https://github.com/khalo-sa/rasa-apple-silicon))

|

| 77 |

+

- Supports Telegram, easily integrate Slack, Whatsapp, Line, SMS, etc.

|

| 78 |

+

- Setup complex dialog pipelines using NLU models form Huggingface like BERT or libraries/frameworks like Keras, Tensorflow with OpenAI GPT as fallback

|

| 79 |

+

<br/><br/>

|

| 80 |

+

## Flexibility

|

| 81 |

+

|

| 82 |

+

- Extend agentic, memory, etc. capabilities with Langchain

|

| 83 |

+

- Schema supports multi-tenancy, sessions, data storage

|

| 84 |

+

- Customize agent personalities

|

| 85 |

+

- Saves all of chat history and creating embeddings from all interactions future-proofing your retrieval strategy

|

| 86 |

+

- Automatically generate embeddings from knowledge base corpus and client feedback

|

| 87 |

+

|

| 88 |

+

<br/><br/>

|

| 89 |

+

|

| 90 |

+

# 🧑💻 Installing

|

| 91 |

+

|

| 92 |

+

## Requirements

|

| 93 |

+

|

| 94 |

+

- Python 3.9

|

| 95 |

+

- Docker & Docker compose ([Docker desktop MacOS](https://www.docker.com/products/docker-desktop/))

|

| 96 |

+

- Open AI [API key](https://platform.openai.com/account/api-keys)

|

| 97 |

+

- Telegram [bot credentials](https://core.telegram.org/bots#how-do-i-create-a-bot)

|

| 98 |

+

- Ngrok [auth token](https://dashboard.ngrok.com/tunnels/authtokens)

|

| 99 |

+

- Make ([MacOS](https://formulae.brew.sh/formula/make)/[Windows](https://stackoverflow.com/questions/32127524/how-to-install-and-use-make-in-windows))

|

| 100 |

+

- SQLModel

|

| 101 |

+

|

| 102 |

+

<br/>

|

| 103 |

+

|

| 104 |

+

## Setup

|

| 105 |

+

|

| 106 |

+

```bash

|

| 107 |

+

git clone https://github.com/paulpierre/RasaGPT.git

|

| 108 |

+

cd RasaGPT

|

| 109 |

+

cp .env-example .env

|

| 110 |

+

|

| 111 |

+

# Edit your .env file and all the credentials

|

| 112 |

+

|

| 113 |

+

```

|

| 114 |

+

|

| 115 |

+

<br/>

|

| 116 |

+

|

| 117 |

+

|

| 118 |

+

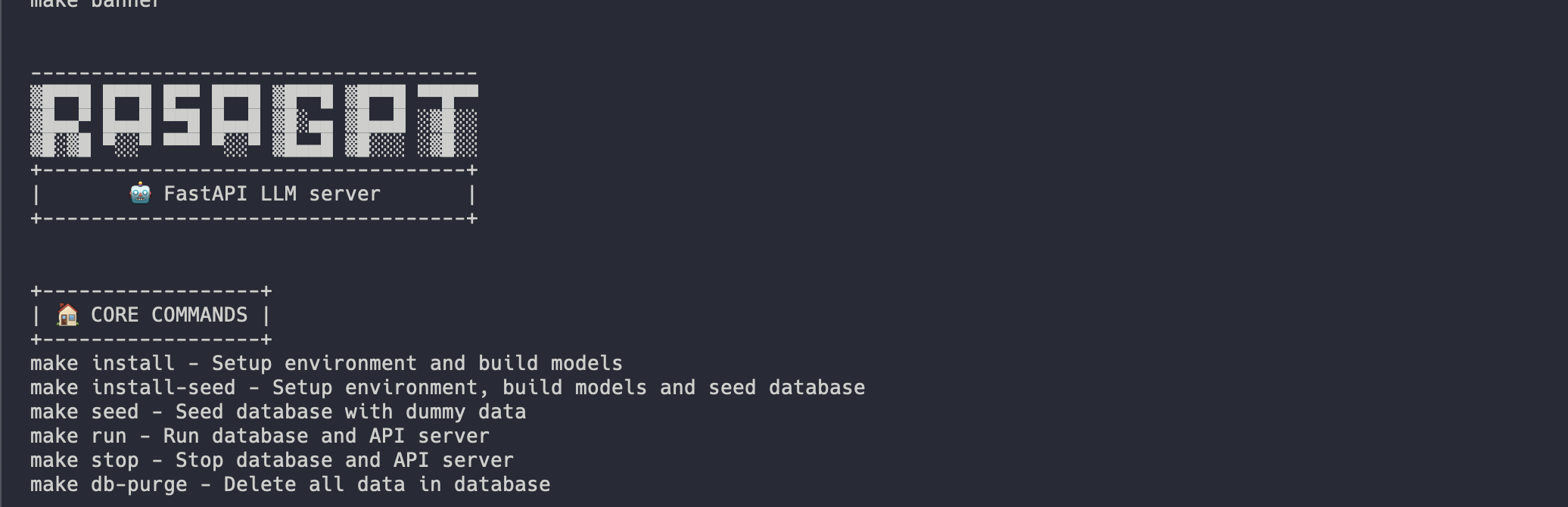

At any point feel free to just type in `make` and it will display the list of options, mostly useful for debugging:

|

| 119 |

+

|

| 120 |

+

<br/>

|

| 121 |

+

|

| 122 |

+

|

| 123 |

+

|

| 124 |

+

|

| 125 |

+

<br/>

|

| 126 |

+

|

| 127 |

+

## Docker-compose

|

| 128 |

+

|

| 129 |

+

The easiest way to get started is using the `Makefile` in the root directory. It will install and run all the services for RasaGPT in the correct order.

|

| 130 |

+

|

| 131 |

+

```bash

|

| 132 |

+

make install

|

| 133 |

+

|

| 134 |

+

# This will automatically install and run RasaGPT

|

| 135 |

+

# After installation, to run again you can simply run

|

| 136 |

+

|

| 137 |

+

make run

|

| 138 |

+

```

|

| 139 |

+

<br/>

|

| 140 |

+

|

| 141 |

+

## Local Python Environment

|

| 142 |

+

|

| 143 |

+

This is useful if you wish to focus on developing on top of the API, a separate `Makefile` was made for this. This will create a local virtual environment for you.

|

| 144 |

+

|

| 145 |

+

```bash

|

| 146 |

+

# Assuming you are already in the RasaGPT directory

|

| 147 |

+

cd app/api

|

| 148 |

+

make install

|

| 149 |

+

|

| 150 |

+

# This will automatically install and run RasaGPT

|

| 151 |

+

# After installation, to run again you can simply run

|

| 152 |

+

|

| 153 |

+

make run

|

| 154 |

+

```

|

| 155 |

+

<br/>

|

| 156 |

+

|

| 157 |

+

Similarly, enter `make` to see a full list of commands

|

| 158 |

+

|

| 159 |

+

|

| 160 |

+

|

| 161 |

+

<br/>

|

| 162 |

+

|

| 163 |

+

## Installation process

|

| 164 |

+

|

| 165 |

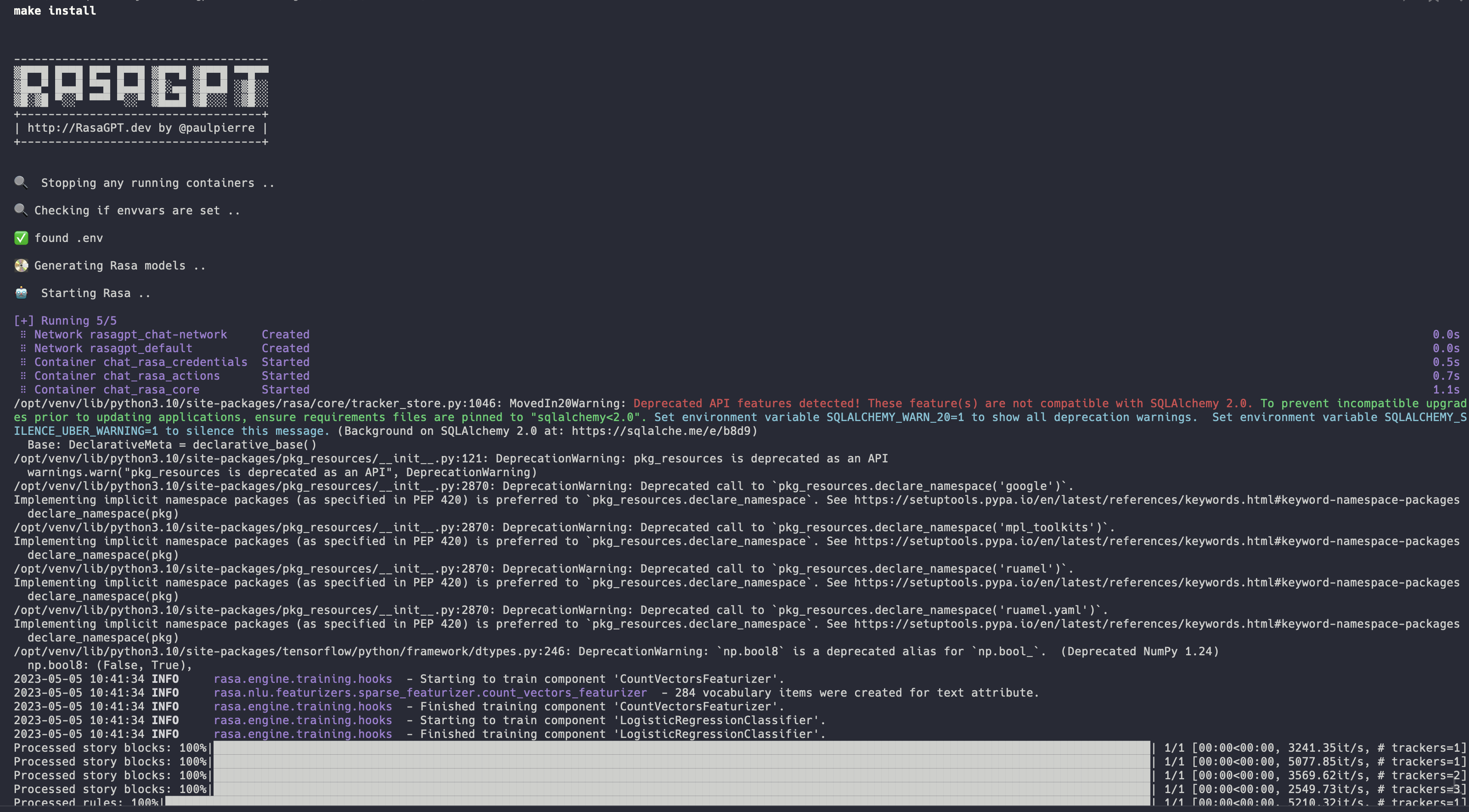

+

Installation should be automated should look like this:

|

| 166 |

+

|

| 167 |

+

|

| 168 |

+

|

| 169 |

+

👉 Full installation log: [https://app.warp.dev/block/vflua6Eue29EPk8EVvW8Kd](https://app.warp.dev/block/vflua6Eue29EPk8EVvW8Kd)

|

| 170 |

+

|

| 171 |

+

<br/>

|

| 172 |

+

|

| 173 |

+

The installation process for Docker takes the following steps at a high level

|

| 174 |

+

|

| 175 |

+

1. Check to make sure you have `.env` available

|

| 176 |

+

2. Database is initialized with [`pgvector`](https://github.com/pgvector/pgvector)

|

| 177 |

+

3. Database models create the database schema

|

| 178 |

+

4. Trains the Rasa model so it is ready to run

|

| 179 |

+

5. Sets up ngrok with Rasa so Telegram has a webhook back to your API server

|

| 180 |

+

6. Sets up the Rasa actions server so Rasa can talk to the RasaGPT API

|

| 181 |

+

7. Database is populated with dummy data via `seed.py`

|

| 182 |

+

|

| 183 |

+

<br/><br/>

|

| 184 |

+

|

| 185 |

+

# ☑️ Next steps

|

| 186 |

+

<br/>

|

| 187 |

+

|

| 188 |

+

## 💬 Start chatting

|

| 189 |

+

|

| 190 |

+

You can start chatting with your bot by visiting 👉 [https://t.me/yourbotsname](https://t.me/yourbotsname)

|

| 191 |

+

|

| 192 |

+

|

| 193 |

+

|

| 194 |

+

<br/><br/>

|

| 195 |

+

|

| 196 |

+

## 👀 View logs

|

| 197 |

+

|

| 198 |

+

You can view all of the log by visiting 👉 [https://localhost:9999/](https://localhost:9999/) which will displaying real-time logs of all the docker containers

|

| 199 |

+

|

| 200 |

+

|

| 201 |

+

|

| 202 |

+

<br/><br/>

|

| 203 |

+

|

| 204 |

+

## 📖 API documentation

|

| 205 |

+

|

| 206 |

+

View the API endpoint docs by visiting 👉 [https://localhost:8888/docs](https://localhost:8888/docs)

|

| 207 |

+

|

| 208 |

+

In this page you can create and update entities, as well as upload documents to the knowledge base.

|

| 209 |

+

|

| 210 |

+

|

| 211 |

+

|

| 212 |

+

<br/><br/>

|

| 213 |

+

|

| 214 |

+

# ✏️ Examples

|

| 215 |

+

|

| 216 |

+

The bot is just a proof-of-concept and has not been optimized for retrieval. It currently uses 1000 character length chunking for indexing and basic euclidean distance for retrieval and quality is hit or miss.

|

| 217 |

+

|

| 218 |

+

You can view example hits and misses with the bot in the [RESULTS.MD](https://github.com/paulpierre/RasaGPT/blob/main/RESULTS.md) file. Overall I estimate index optimization and LLM configuration changes can increase output quality by more than 70%.

|

| 219 |

+

|

| 220 |

+

<br/>

|

| 221 |

+

|

| 222 |

+

👉 Click to see the [Q&A results of the demo data in RESULTS.MD](https://github.com/paulpierre/RasaGPT/blob/main/RESULTS.md)

|

| 223 |

+

|

| 224 |

+

<br/><br/>

|

| 225 |

+

|

| 226 |

+

# 💻 API Architecture and Usage

|

| 227 |

+

|

| 228 |

+

The REST API is straight forward, please visit the documentation 👉 http://localhost:8888/docs

|

| 229 |

+

|

| 230 |

+

The entities below have basic CRUD operations and return JSON

|

| 231 |

+

|

| 232 |

+

<br/><br/>

|

| 233 |

+

|

| 234 |

+

## Organization

|

| 235 |

+

|

| 236 |

+

This can be thought of as a company that is your client in a SaaS / multi-tenant world. By default a list of dummy organizations have been provided

|

| 237 |

+

|

| 238 |

+

|

| 239 |

+

|

| 240 |

+

```bash

|

| 241 |

+

[

|

| 242 |

+

{

|

| 243 |

+

"id": 1,

|

| 244 |

+

"uuid": "d2a642e6-c81a-4a43-83e2-22cee3562452",

|

| 245 |

+

"display_name": "Pepe Corp.",

|

| 246 |

+

"namespace": "pepe",

|

| 247 |

+

"bot_url": null,

|

| 248 |

+

"created_at": "2023-05-05T10:42:45.933976",

|

| 249 |

+

"updated_at": "2023-05-05T10:42:45.933979"

|

| 250 |

+

},

|

| 251 |

+

{

|

| 252 |

+

"id": 2,

|

| 253 |

+

"uuid": "7d574f88-6c0b-4c1f-9368-367956b0e90f",

|

| 254 |

+

"display_name": "Umbrella Corp",

|

| 255 |

+

"namespace": "acme",

|

| 256 |

+

"bot_url": null,

|

| 257 |

+

"created_at": "2023-05-05T10:43:03.555484",

|

| 258 |

+

"updated_at": "2023-05-05T10:43:03.555488"

|

| 259 |

+

},

|

| 260 |

+

{

|

| 261 |

+

"id": 3,

|

| 262 |

+

"uuid": "65105a15-2ef0-4898-ac7a-8eafee0b283d",

|

| 263 |

+

"display_name": "Cyberdine Systems",

|

| 264 |

+

"namespace": "cyberdine",

|

| 265 |

+

"bot_url": null,

|

| 266 |

+

"created_at": "2023-05-05T10:43:04.175424",

|

| 267 |

+

"updated_at": "2023-05-05T10:43:04.175428"

|

| 268 |

+

},

|

| 269 |

+

{

|

| 270 |

+

"id": 4,

|

| 271 |

+

"uuid": "b7fb966d-7845-4581-a537-818da62645b5",

|

| 272 |

+

"display_name": "Bluth Companies",

|

| 273 |

+

"namespace": "bluth",

|

| 274 |

+

"bot_url": null,

|

| 275 |

+

"created_at": "2023-05-05T10:43:04.697801",

|

| 276 |

+

"updated_at": "2023-05-05T10:43:04.697804"

|

| 277 |

+

},

|

| 278 |

+

{

|

| 279 |

+

"id": 5,

|

| 280 |

+

"uuid": "9283d017-b24b-4ecd-bf35-808b45e258cf",

|

| 281 |

+

"display_name": "Evil Corp",

|

| 282 |

+

"namespace": "evil",

|

| 283 |

+

"bot_url": null,

|

| 284 |

+

"created_at": "2023-05-05T10:43:05.102546",

|

| 285 |

+

"updated_at": "2023-05-05T10:43:05.102549"

|

| 286 |

+

}

|

| 287 |

+

]

|

| 288 |

+

```

|

| 289 |

+

|

| 290 |

+

<br/>

|

| 291 |

+

|

| 292 |

+

### Project

|

| 293 |

+

|

| 294 |

+

This can be thought of as a product that belongs to a company. You can view the list of projects that belong to an organizations like so:

|

| 295 |

+

|

| 296 |

+

|

| 297 |

+

|

| 298 |

+

```bash

|

| 299 |

+

[

|

| 300 |

+

{

|

| 301 |

+

"id": 1,

|

| 302 |

+

"documents": [

|

| 303 |

+

{

|

| 304 |

+

"id": 1,

|

| 305 |

+

"uuid": "92604623-e37c-4935-bf08-0e9efa8b62f7",

|

| 306 |

+

"display_name": "project-pepetamine.md",

|

| 307 |

+

"node_count": 3

|

| 308 |

+

}

|

| 309 |

+

],

|

| 310 |

+

"document_count": 1,

|

| 311 |

+

"uuid": "44a4b60b-9280-4b21-a676-00612be9aa87",

|

| 312 |

+

"display_name": "Pepetamine",

|

| 313 |

+

"created_at": "2023-05-05T10:42:46.060930",

|

| 314 |

+

"updated_at": "2023-05-05T10:42:46.060934"

|

| 315 |

+

},

|

| 316 |

+

{

|

| 317 |

+

"id": 2,

|

| 318 |

+

"documents": [

|

| 319 |

+

{

|

| 320 |

+

"id": 2,

|

| 321 |

+

"uuid": "b408595a-3426-4011-9b9b-8e260b244f74",

|

| 322 |

+

"display_name": "project-frogonil.md",

|

| 323 |

+

"node_count": 3

|

| 324 |

+

}

|

| 325 |

+

],

|

| 326 |

+

"document_count": 1,

|

| 327 |

+

"uuid": "5ba6b812-de37-451d-83a3-8ccccadabd69",

|

| 328 |

+

"display_name": "Frogonil",

|

| 329 |

+

"created_at": "2023-05-05T10:42:48.043936",

|

| 330 |

+

"updated_at": "2023-05-05T10:42:48.043940"

|

| 331 |

+

},

|

| 332 |

+

{

|

| 333 |

+

"id": 3,

|

| 334 |

+

"documents": [

|

| 335 |

+

{

|

| 336 |

+

"id": 3,

|

| 337 |

+

"uuid": "b99d373a-3317-4699-a89e-90897ba00db6",

|

| 338 |

+

"display_name": "project-kekzal.md",

|

| 339 |

+

"node_count": 3

|

| 340 |

+

}

|

| 341 |

+

],

|

| 342 |

+

"document_count": 1,

|

| 343 |

+

"uuid": "1be4360c-f06e-4494-bf20-e7c73a56f003",

|

| 344 |

+

"display_name": "Kekzal",

|

| 345 |

+

"created_at": "2023-05-05T10:42:49.092675",

|

| 346 |

+

"updated_at": "2023-05-05T10:42:49.092678"

|

| 347 |

+

},

|

| 348 |

+

{

|

| 349 |

+

"id": 4,

|

| 350 |

+

"documents": [

|

| 351 |

+

{

|

| 352 |

+

"id": 4,

|

| 353 |

+

"uuid": "94da307b-5993-4ddd-a852-3d8c12f95f3f",

|

| 354 |

+

"display_name": "project-memetrex.md",

|

| 355 |

+

"node_count": 3

|

| 356 |

+

}

|

| 357 |

+

],

|

| 358 |

+

"document_count": 1,

|

| 359 |

+

"uuid": "1fd7e772-365c-451b-a7eb-4d529b0927f0",

|

| 360 |

+

"display_name": "Memetrex",

|

| 361 |

+

"created_at": "2023-05-05T10:42:50.184817",

|

| 362 |

+

"updated_at": "2023-05-05T10:42:50.184821"

|

| 363 |

+

},

|

| 364 |

+

{

|

| 365 |

+

"id": 5,

|

| 366 |

+

"documents": [

|

| 367 |

+

{

|

| 368 |

+

"id": 5,

|

| 369 |

+

"uuid": "6deff180-3e3e-4b09-ae5a-6502d031914a",

|

| 370 |

+

"display_name": "project-pepetrak.md",

|

| 371 |

+

"node_count": 4

|

| 372 |

+

}

|

| 373 |

+

],

|

| 374 |

+

"document_count": 1,

|

| 375 |

+

"uuid": "a389eb58-b504-48b4-9bc3-d3c93d2fbeaa",

|

| 376 |

+

"display_name": "PepeTrak",

|

| 377 |

+

"created_at": "2023-05-05T10:42:51.293352",

|

| 378 |

+

"updated_at": "2023-05-05T10:42:51.293355"

|

| 379 |

+

},

|

| 380 |

+

{

|

| 381 |

+

"id": 6,

|

| 382 |

+

"documents": [

|

| 383 |

+

{

|

| 384 |

+

"id": 6,

|

| 385 |

+

"uuid": "2e3c2155-cafa-4c6b-b7cc-02bb5156715b",

|

| 386 |

+

"display_name": "project-memegen.md",

|

| 387 |

+

"node_count": 5

|

| 388 |

+

}

|

| 389 |

+

],