+

+

+

+

+

+## Introduction

+

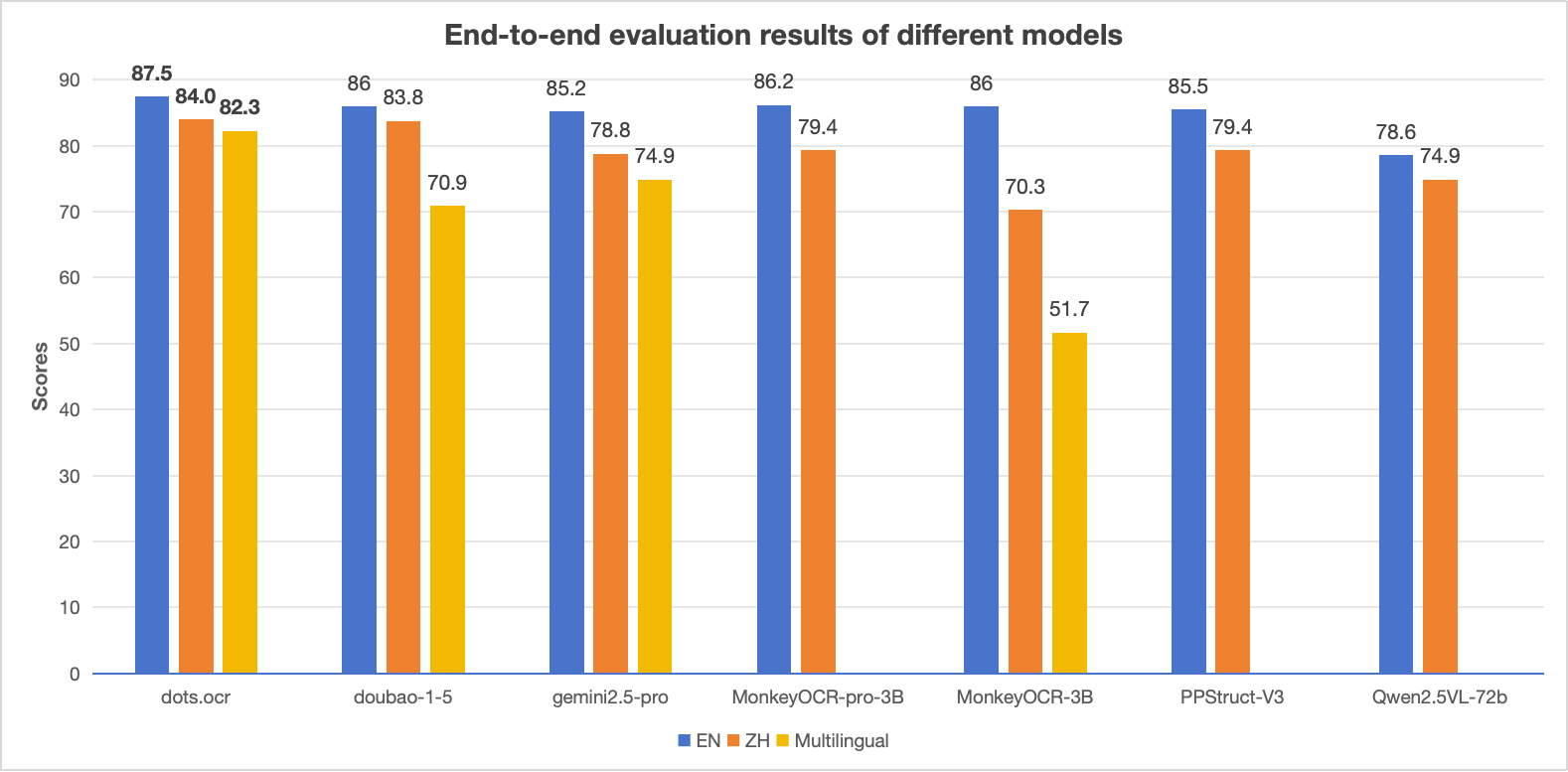

+**dots.ocr** is a powerful, multilingual document parser that unifies layout detection and content recognition within a single vision-language model while maintaining good reading order. Despite its compact 1.7B-parameter LLM foundation, it achieves state-of-the-art(SOTA) performance.

+

+1. **Powerful Performance:** **dots.ocr** achieves SOTA performance for text, tables, and reading order on [OmniDocBench](https://github.com/opendatalab/OmniDocBench), while delivering formula recognition results comparable to much larger models like Doubao-1.5 and gemini2.5-pro.

+2. **Multilingual Support:** **dots.ocr** demonstrates robust parsing capabilities for low-resource languages, achieving decisive advantages across both layout detection and content recognition on our in-house multilingual documents benchmark.

+3. **Unified and Simple Architecture:** By leveraging a single vision-language model, **dots.ocr** offers a significantly more streamlined architecture than conventional methods that rely on complex, multi-model pipelines. Switching between tasks is accomplished simply by altering the input prompt, proving that a VLM can achieve competitive detection results compared to traditional detection models like DocLayout-YOLO.

+4. **Efficient and Fast Performance:** Built upon a compact 1.7B LLM, **dots.ocr** provides faster inference speeds than many other high-performing models based on larger foundations.

+

+

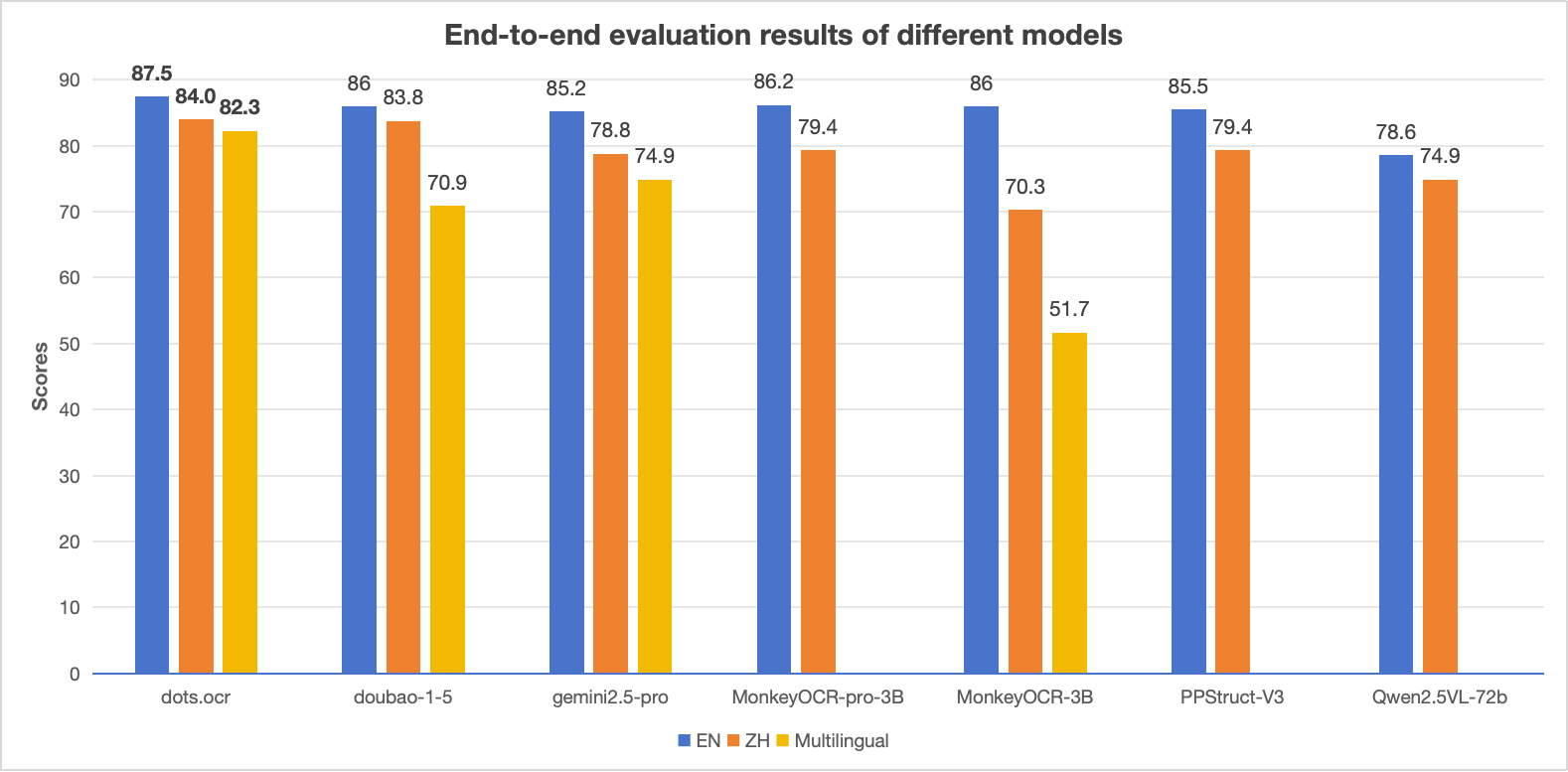

+### Performance Comparison: dots.ocr vs. Competing Models

+

+  +

+

+ +

+dots.ocr: Multilingual Document Layout Parsing in a Single Vision-Language Model +

+ +[](https://github.com/rednote-hilab/dots.ocr/blob/master/assets/blog.md) +[](https://huggingface.co/rednote-hilab/dots.ocr) + + + + + +

+> **Notes:**

+> - The EN, ZH metrics are the end2end evaluation results of [OmniDocBench](https://github.com/opendatalab/OmniDocBench), and Multilingual metric is the end2end evaluation results of dots.ocr-bench.

+

+

+## News

+* ```2025.07.30 ``` 🚀 We release [dots.ocr](https://github.com/rednote-hilab/dots.ocr), — a multilingual documents parsing model based on 1.7b llm, with SOTA performance.

+

+

+

+## Benchmark Results

+

+### 1. OmniDocBench

+

+#### The end-to-end evaluation results of different tasks.

+

+

+

+> **Notes:**

+> - The EN, ZH metrics are the end2end evaluation results of [OmniDocBench](https://github.com/opendatalab/OmniDocBench), and Multilingual metric is the end2end evaluation results of dots.ocr-bench.

+

+

+## News

+* ```2025.07.30 ``` 🚀 We release [dots.ocr](https://github.com/rednote-hilab/dots.ocr), — a multilingual documents parsing model based on 1.7b llm, with SOTA performance.

+

+

+

+## Benchmark Results

+

+### 1. OmniDocBench

+

+#### The end-to-end evaluation results of different tasks.

+

+| Model Type |

+Methods | +OverallEdit↓ | +TextEdit↓ | +FormulaEdit↓ | +TableTEDS↑ | +TableEdit↓ | +Read OrderEdit↓ | +||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| EN | +ZH | +EN | +ZH | +EN | +ZH | +EN | +ZH | +EN | +ZH | +EN | +ZH | +||

| Pipeline Tools |

+MinerU | +0.150 | +0.357 | +0.061 | +0.215 | +0.278 | +0.577 | +78.6 | +62.1 | +0.180 | +0.344 | +0.079 | +0.292 | +

| Marker | +0.336 | +0.556 | +0.080 | +0.315 | +0.530 | +0.883 | +67.6 | +49.2 | +0.619 | +0.685 | +0.114 | +0.340 | +|

| Mathpix | +0.191 | +0.365 | +0.105 | +0.384 | +0.306 | +0.454 | +77.0 | +67.1 | +0.243 | +0.320 | +0.108 | +0.304 | +|

| Docling | +0.589 | +0.909 | +0.416 | +0.987 | +0.999 | +1 | +61.3 | +25.0 | +0.627 | +0.810 | +0.313 | +0.837 | +|

| Pix2Text | +0.320 | +0.528 | +0.138 | +0.356 | +0.276 | +0.611 | +73.6 | +66.2 | +0.584 | +0.645 | +0.281 | +0.499 | +|

| Unstructured | +0.586 | +0.716 | +0.198 | +0.481 | +0.999 | +1 | +0 | +0.06 | +1 | +0.998 | +0.145 | +0.387 | +|

| OpenParse | +0.646 | +0.814 | +0.681 | +0.974 | +0.996 | +1 | +64.8 | +27.5 | +0.284 | +0.639 | +0.595 | +0.641 | +|

| PPStruct-V3 | +0.145 | +0.206 | +0.058 | +0.088 | +0.295 | +0.535 | +- | +- | +0.159 | +0.109 | +0.069 | +0.091 | +|

| Expert VLMs |

+GOT-OCR | +0.287 | +0.411 | +0.189 | +0.315 | +0.360 | +0.528 | +53.2 | +47.2 | +0.459 | +0.520 | +0.141 | +0.280 | +

| Nougat | +0.452 | +0.973 | +0.365 | +0.998 | +0.488 | +0.941 | +39.9 | +0 | +0.572 | +1.000 | +0.382 | +0.954 | +|

| Mistral OCR | +0.268 | +0.439 | +0.072 | +0.325 | +0.318 | +0.495 | +75.8 | +63.6 | +0.600 | +0.650 | +0.083 | +0.284 | +|

| OLMOCR-sglang | +0.326 | +0.469 | +0.097 | +0.293 | +0.455 | +0.655 | +68.1 | +61.3 | +0.608 | +0.652 | +0.145 | +0.277 | +|

| SmolDocling-256M | +0.493 | +0.816 | +0.262 | +0.838 | +0.753 | +0.997 | +44.9 | +16.5 | +0.729 | +0.907 | +0.227 | +0.522 | +|

| Dolphin | +0.206 | +0.306 | +0.107 | +0.197 | +0.447 | +0.580 | +77.3 | +67.2 | +0.180 | +0.285 | +0.091 | +0.162 | +|

| MinerU 2 | +0.139 | +0.240 | +0.047 | +0.109 | +0.297 | +0.536 | +82.5 | +79.0 | +0.141 | +0.195 | +0.069< | +0.118 | +|

| OCRFlux | +0.195 | +0.281 | +0.064 | +0.183 | +0.379 | +0.613 | +71.6 | +81.3 | +0.253 | +0.139 | +0.086 | +0.187 | +|

| MonkeyOCR-pro-3B | +0.138 | +0.206 | +0.067 | +0.107 | +0.246 | +0.421 | +81.5 | +87.5 | +0.139 | +0.111 | +0.100 | +0.185 | +|

| General VLMs |

+GPT4o | +0.233 | +0.399 | +0.144 | +0.409 | +0.425 | +0.606 | +72.0 | +62.9 | +0.234 | +0.329 | +0.128 | +0.251 | +

| Qwen2-VL-72B | +0.252 | +0.327 | +0.096 | +0.218 | +0.404 | +0.487 | +76.8 | +76.4 | +0.387 | +0.408 | +0.119 | +0.193 | +|

| Qwen2.5-VL-72B | +0.214 | +0.261 | +0.092 | +0.18 | +0.315 | +0.434 | +82.9 | +83.9 | +0.341 | +0.262 | +0.106 | +0.168 | +|

| Gemini2.5-Pro | +0.148 | +0.212 | +0.055 | +0.168 | +0.356 | +0.439 | +85.8 | +86.4 | +0.13 | +0.119 | +0.049 | +0.121 | +|

| doubao-1-5-thinking-vision-pro-250428 | +0.140 | +0.162 | +0.043 | +0.085 | +0.295 | +0.384 | +83.3 | +89.3 | +0.165 | +0.085 | +0.058 | +0.094 | +|

| Expert VLMs | +dots.ocr | +0.125 | +0.160 | +0.032 | +0.066 | +0.329 | +0.416 | +88.6 | +89.0 | +0.099 | +0.092 | +0.040 | +0.067 | +

| Model Type |

+Models | +Book | +Slides | +Financial Report |

+Textbook | +Exam Paper |

+Magazine | +Academic Papers |

+Notes | +Newspaper | +Overall | +

|---|---|---|---|---|---|---|---|---|---|---|---|

| Pipeline Tools |

+MinerU | +0.055 | +0.124 | +0.033 | +0.102 | +0.159 | +0.072 | +0.025 | +0.984 | +0.171 | +0.206 | +

| Marker | +0.074 | +0.340 | +0.089 | +0.319 | +0.452 | +0.153 | +0.059 | +0.651 | +0.192 | +0.274 | +|

| Mathpix | +0.131 | +0.220 | +0.202 | +0.216 | +0.278 | +0.147 | +0.091 | +0.634 | +0.690 | +0.300 | +|

| Expert VLMs |

+GOT-OCR | +0.111 | +0.222 | +0.067 | +0.132 | +0.204 | +0.198 | +0.179 | +0.388 | +0.771 | +0.267 | +

| Nougat | +0.734 | +0.958 | +1.000 | +0.820 | +0.930 | +0.830 | +0.214 | +0.991 | +0.871 | +0.806 | +|

| Dolphin | +0.091 | +0.131 | +0.057 | +0.146 | +0.231 | +0.121 | +0.074 | +0.363 | +0.307 | +0.177 | +|

| OCRFlux | +0.068 | +0.125 | +0.092 | +0.102 | +0.119 | +0.083 | +0.047 | +0.223 | +0.536 | +0.149 | +|

| MonkeyOCR-pro-3B | +0.084 | +0.129 | +0.060 | +0.090 | +0.107 | +0.073 | +0.050 | +0.171 | +0.107 | +0.100 | +|

| General VLMs |

+GPT4o | +0.157 | +0.163 | +0.348 | +0.187 | +0.281 | +0.173 | +0.146 | +0.607 | +0.751 | +0.316 | +

| Qwen2.5-VL-7B | +0.148 | +0.053 | +0.111 | +0.137 | +0.189 | +0.117 | +0.134 | +0.204 | +0.706 | +0.205 | +|

| InternVL3-8B | +0.163 | +0.056 | +0.107 | +0.109 | +0.129 | +0.100 | +0.159 | +0.150 | +0.681 | +0.188 | +|

| doubao-1-5-thinking-vision-pro-250428 | +0.048 | +0.048 | +0.024 | +0.062 | +0.085 | +0.051 | +0.039 | +0.096 | +0.181 | +0.073 | +|

| Expert VLMs | +dots.ocr | +0.031 | +0.047 | +0.011 | +0.082 | +0.079 | +0.028 | +0.029 | +0.109 | +0.056 | +0.055 | +

| Methods | +OverallEdit↓ | +TextEdit↓ | +FormulaEdit↓ | +TableTEDS↑ | +TableEdit↓ | +Read OrderEdit↓ | +MonkeyOCR-3B | +0.483 | +0.445 | +0.627 | +50.93 | +0.452 | +0.409 | + +

|---|---|---|---|---|---|---|

| doubao-1-5-thinking-vision-pro-250428 | +0.291 | +0.226 | +0.440 | +71.2 | +0.260 | +0.238 | +

| doubao-1-6 | +0.299 | +0.270 | +0.417 | +71.0 | +0.258 | +0.253 | +

| Gemini2.5-Pro | +0.251 | +0.163 | +0.402 | +77.1 | +0.236 | +0.202 | +

| dots.ocr | +0.177 | +0.075 | +0.297 | +79.2 | +0.186 | +0.152 | +

| Method | +F1@IoU=.50:.05:.95↑ | +F1@IoU=.50↑ | +||||||||

|---|---|---|---|---|---|---|---|---|---|---|

| Overall | +Text | +Formula | +Table | +Picture | +Overall | +Text | +Formula | +Table | +Picture | +DocLayout-YOLO-DocStructBench | +0.733 | +0.694 | +0.480 | +0.803 | +0.619 | +0.806 | +0.779 | +0.620 | +0.858 | +0.678 | + + +

| dots.ocr-parse all | +0.831 | +0.801 | +0.654 | +0.838 | +0.748 | +0.922 | +0.909 | +0.770 | +0.888 | +0.831 | +

| dots.ocr-detection only | +0.845 | +0.816 | +0.716 | +0.875 | +0.765 | +0.930 | +0.917 | +0.832 | +0.918 | +0.843 | +

| Model | +ArXiv | +Old Scans Math |

+Tables | +Old Scans | +Headers and Footers |

+Multi column |

+Long Tiny Text |

+Base | +Overall | +

|---|---|---|---|---|---|---|---|---|---|

| GOT OCR | +52.7 | +52.0 | +0.2 | +22.1 | +93.6 | +42.0 | +29.9 | +94.0 | +48.3 ± 1.1 | +

| Marker | +76.0 | +57.9 | +57.6 | +27.8 | +84.9 | +72.9 | +84.6 | +99.1 | +70.1 ± 1.1 | +

| MinerU | +75.4 | +47.4 | +60.9 | +17.3 | +96.6 | +59.0 | +39.1 | +96.6 | +61.5 ± 1.1 | +

| Mistral OCR | +77.2 | +67.5 | +60.6 | +29.3 | +93.6 | +71.3 | +77.1 | +99.4 | +72.0 ± 1.1 | +

| Nanonets OCR | +67.0 | +68.6 | +77.7 | +39.5 | +40.7 | +69.9 | +53.4 | +99.3 | +64.5 ± 1.1 | +

| GPT-4o (No Anchor) |

+51.5 | +75.5 | +69.1 | +40.9 | +94.2 | +68.9 | +54.1 | +96.7 | +68.9 ± 1.1 | +

| GPT-4o (Anchored) |

+53.5 | +74.5 | +70.0 | +40.7 | +93.8 | +69.3 | +60.6 | +96.8 | +69.9 ± 1.1 | +

| Gemini Flash 2 (No Anchor) |

+32.1 | +56.3 | +61.4 | +27.8 | +48.0 | +58.7 | +84.4 | +94.0 | +57.8 ± 1.1 | +

| Gemini Flash 2 (Anchored) |

+54.5 | +56.1 | +72.1 | +34.2 | +64.7 | +61.5 | +71.5 | +95.6 | +63.8 ± 1.2 | +

| Qwen 2 VL (No Anchor) |

+19.7 | +31.7 | +24.2 | +17.1 | +88.9 | +8.3 | +6.8 | +55.5 | +31.5 ± 0.9 | +

| Qwen 2.5 VL (No Anchor) |

+63.1 | +65.7 | +67.3 | +38.6 | +73.6 | +68.3 | +49.1 | +98.3 | +65.5 ± 1.2 | +

| olmOCR v0.1.75 (No Anchor) |

+71.5 | +71.4 | +71.4 | +42.8 | +94.1 | +77.7 | +71.0 | +97.8 | +74.7 ± 1.1 | +

| olmOCR v0.1.75 (Anchored) |

+74.9 | +71.2 | +71.0 | +42.2 | +94.5 | +78.3 | +73.3 | +98.3 | +75.5 ± 1.0 | +

| MonkeyOCR-pro-3B | +83.8 | +68.8 | +74.6 | +36.1 | +91.2 | +76.6 | +80.1 | +95.3 | +75.8 ± 1.0 | +

| dots.ocr | +82.1 | +64.2 | +88.3 | +40.9 | +94.1 | +82.4 | +81.2 | +99.5 | +79.1 ± 1.0 | +

+

+

+### Hugginface inference with CPU

+Please refer to [CPU inference](https://github.com/rednote-hilab/dots.ocr/issues/1#issuecomment-3148962536)

+

+

+## 3. Document Parse

+**Based on vLLM server**, you can parse an image or a pdf file using the following commands:

+```bash

+

+# Parse all layout info, both detection and recognition

+# Parse a single image

+python3 dots_ocr/parser.py demo/demo_image1.jpg

+# Parse a single PDF

+python3 dots_ocr/parser.py demo/demo_pdf1.pdf --num_thread 64 # try bigger num_threads for pdf with a large number of pages

+

+# Layout detection only

+python3 dots_ocr/parser.py demo/demo_image1.jpg --prompt prompt_layout_only_en

+

+# Parse text only, except Page-header and Page-footer

+python3 dots_ocr/parser.py demo/demo_image1.jpg --prompt prompt_ocr

+

+# Parse layout info by bbox

+python3 dots_ocr/parser.py demo/demo_image1.jpg --prompt prompt_grounding_ocr --bbox 163 241 1536 705

+

+```

+**Based on Transformers**, you can parse an image or a pdf file using the same commands above, just add `--use_hf true`.

+

+> Notice: transformers is slower than vllm, if you want to use demo/* with transformers,just add `use_hf=True` in `DotsOCRParser(..,use_hf=True)`

+

+Hugginface inference details

+ +```python +import torch +from transformers import AutoModelForCausalLM, AutoProcessor, AutoTokenizer +from qwen_vl_utils import process_vision_info +from dots_ocr.utils import dict_promptmode_to_prompt + +model_path = "./weights/DotsOCR" +model = AutoModelForCausalLM.from_pretrained( + model_path, + attn_implementation="flash_attention_2", + torch_dtype=torch.bfloat16, + device_map="auto", + trust_remote_code=True +) +processor = AutoProcessor.from_pretrained(model_path, trust_remote_code=True) + +image_path = "demo/demo_image1.jpg" +prompt = """Please output the layout information from the PDF image, including each layout element's bbox, its category, and the corresponding text content within the bbox. + +1. Bbox format: [x1, y1, x2, y2] + +2. Layout Categories: The possible categories are ['Caption', 'Footnote', 'Formula', 'List-item', 'Page-footer', 'Page-header', 'Picture', 'Section-header', 'Table', 'Text', 'Title']. + +3. Text Extraction & Formatting Rules: + - Picture: For the 'Picture' category, the text field should be omitted. + - Formula: Format its text as LaTeX. + - Table: Format its text as HTML. + - All Others (Text, Title, etc.): Format their text as Markdown. + +4. Constraints: + - The output text must be the original text from the image, with no translation. + - All layout elements must be sorted according to human reading order. + +5. Final Output: The entire output must be a single JSON object. +""" + +messages = [ + { + "role": "user", + "content": [ + { + "type": "image", + "image": image_path + }, + {"type": "text", "text": prompt} + ] + } + ] + +# Preparation for inference +text = processor.apply_chat_template( + messages, + tokenize=False, + add_generation_prompt=True +) +image_inputs, video_inputs = process_vision_info(messages) +inputs = processor( + text=[text], + images=image_inputs, + videos=video_inputs, + padding=True, + return_tensors="pt", +) + +inputs = inputs.to("cuda") + +# Inference: Generation of the output +generated_ids = model.generate(**inputs, max_new_tokens=24000) +generated_ids_trimmed = [ + out_ids[len(in_ids) :] for in_ids, out_ids in zip(inputs.input_ids, generated_ids) +] +output_text = processor.batch_decode( + generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False +) +print(output_text) + +``` + +

+

+

+## 4. Demo

+You can run the demo with the following command, or try directly at [live demo](https://dotsocr.xiaohongshu.com/)

+```bash

+python demo/demo_gradio.py

+```

+

+We also provide a demo for grounding ocr:

+```bash

+python demo/demo_gradio_annotion.py

+```

+

+

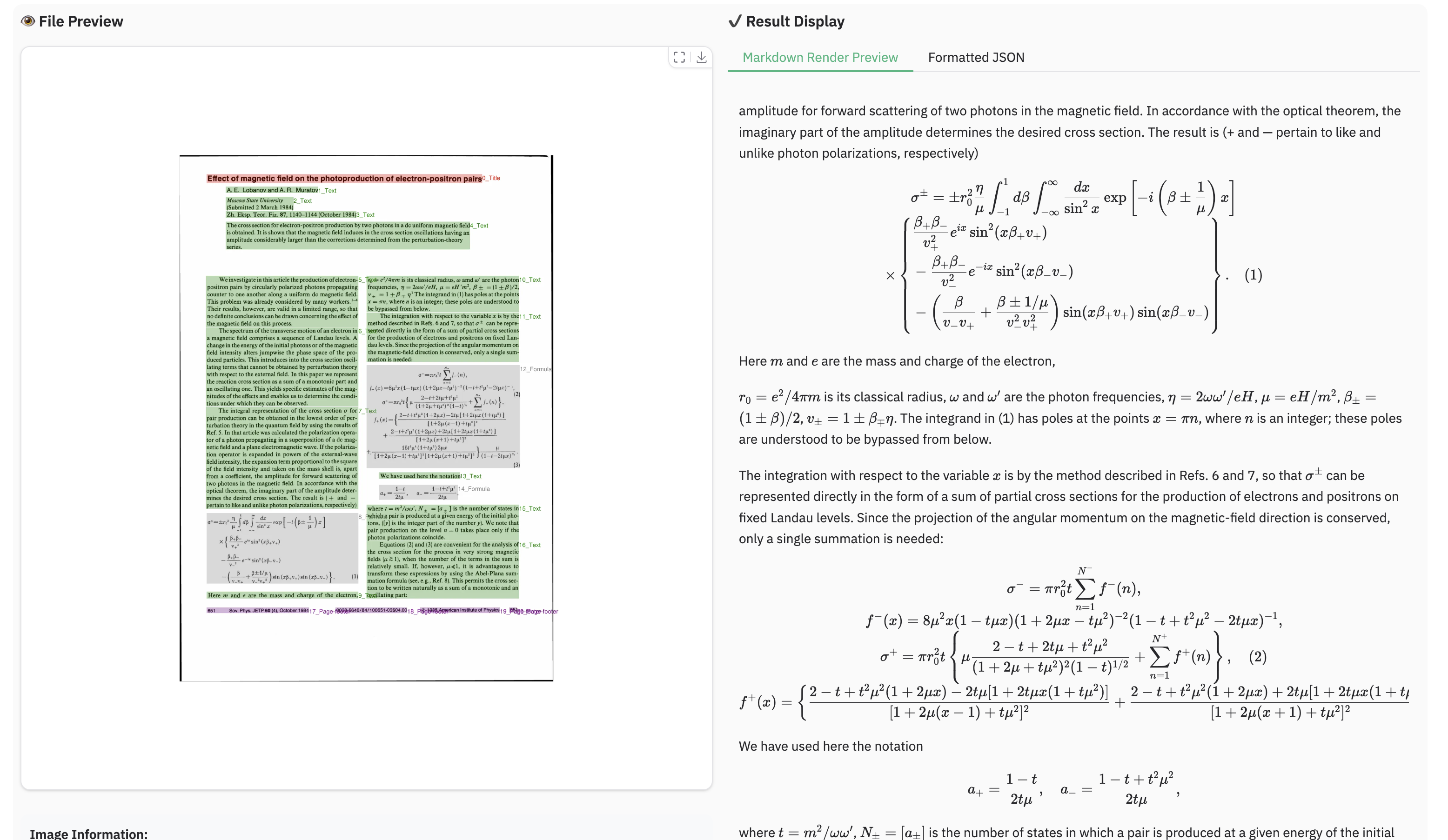

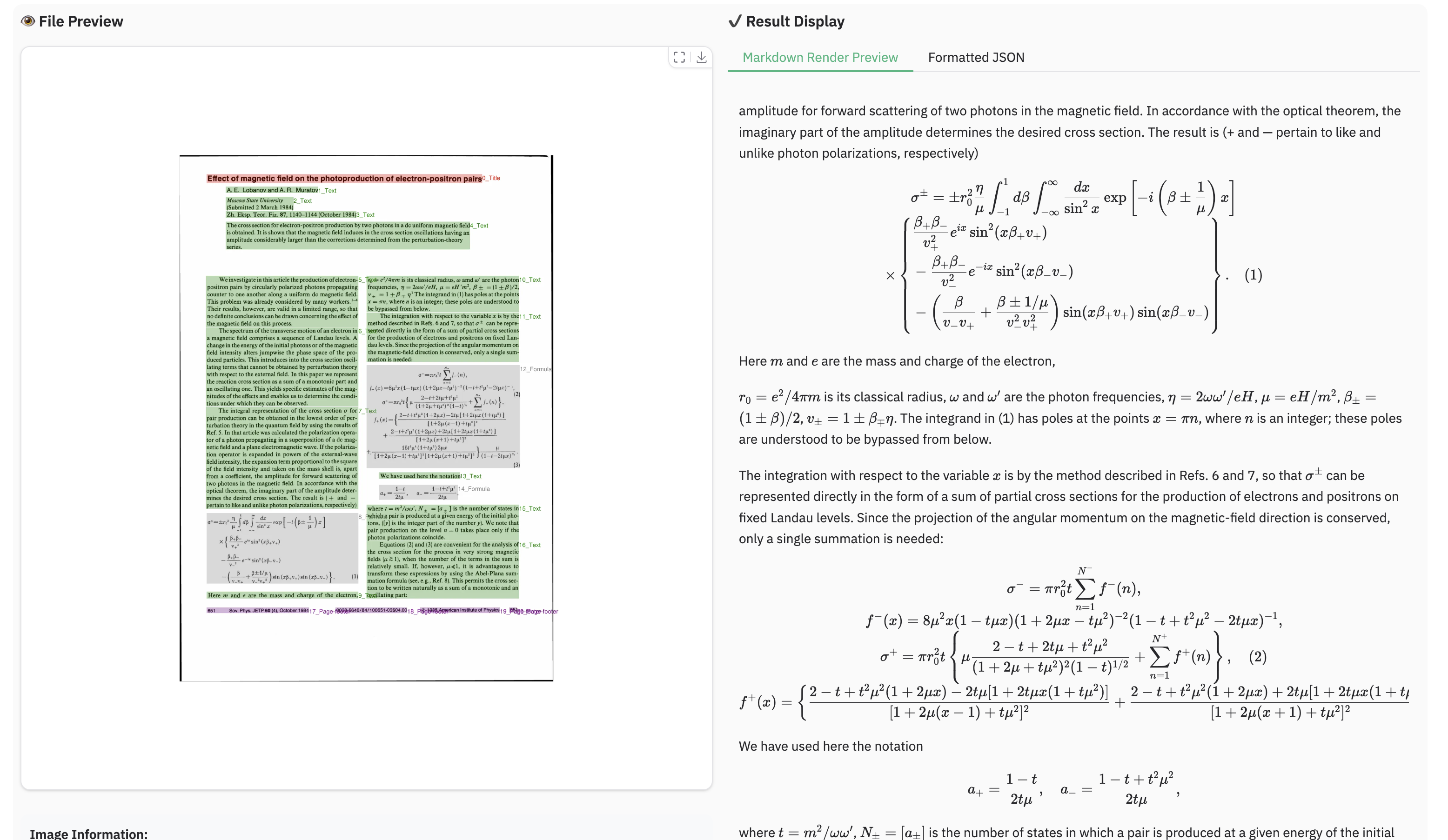

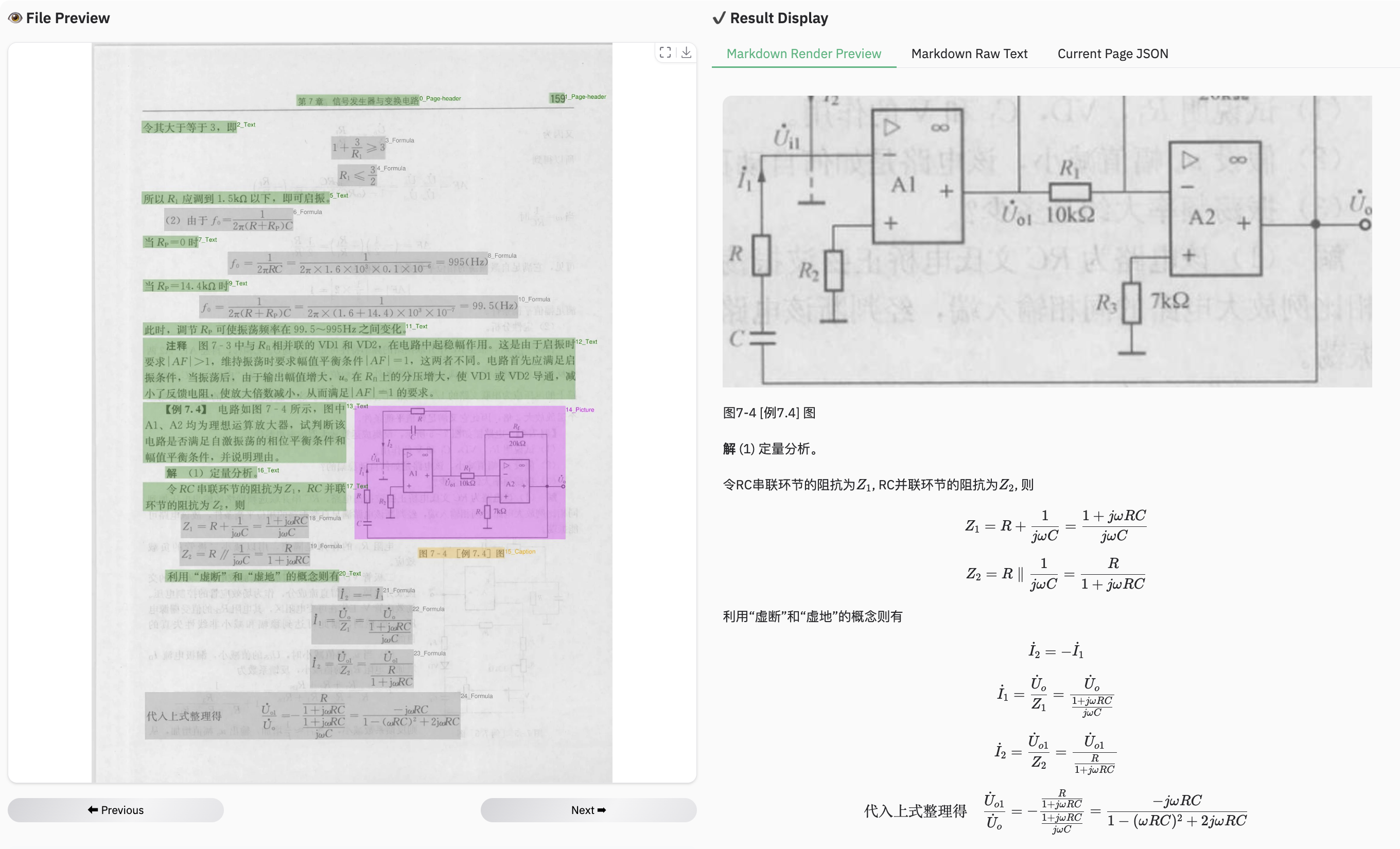

+### Example for formula document

+Output Results

+ +1. **Structured Layout Data** (`demo_image1.json`): A JSON file containing the detected layout elements, including their bounding boxes, categories, and extracted text. +2. **Processed Markdown File** (`demo_image1.md`): A Markdown file generated from the concatenated text of all detected cells. + * An additional version, `demo_image1_nohf.md`, is also provided, which excludes page headers and footers for compatibility with benchmarks like Omnidocbench and olmOCR-bench. +3. **Layout Visualization** (`demo_image1.jpg`): The original image with the detected layout bounding boxes drawn on it. + + +

+ +

+ +

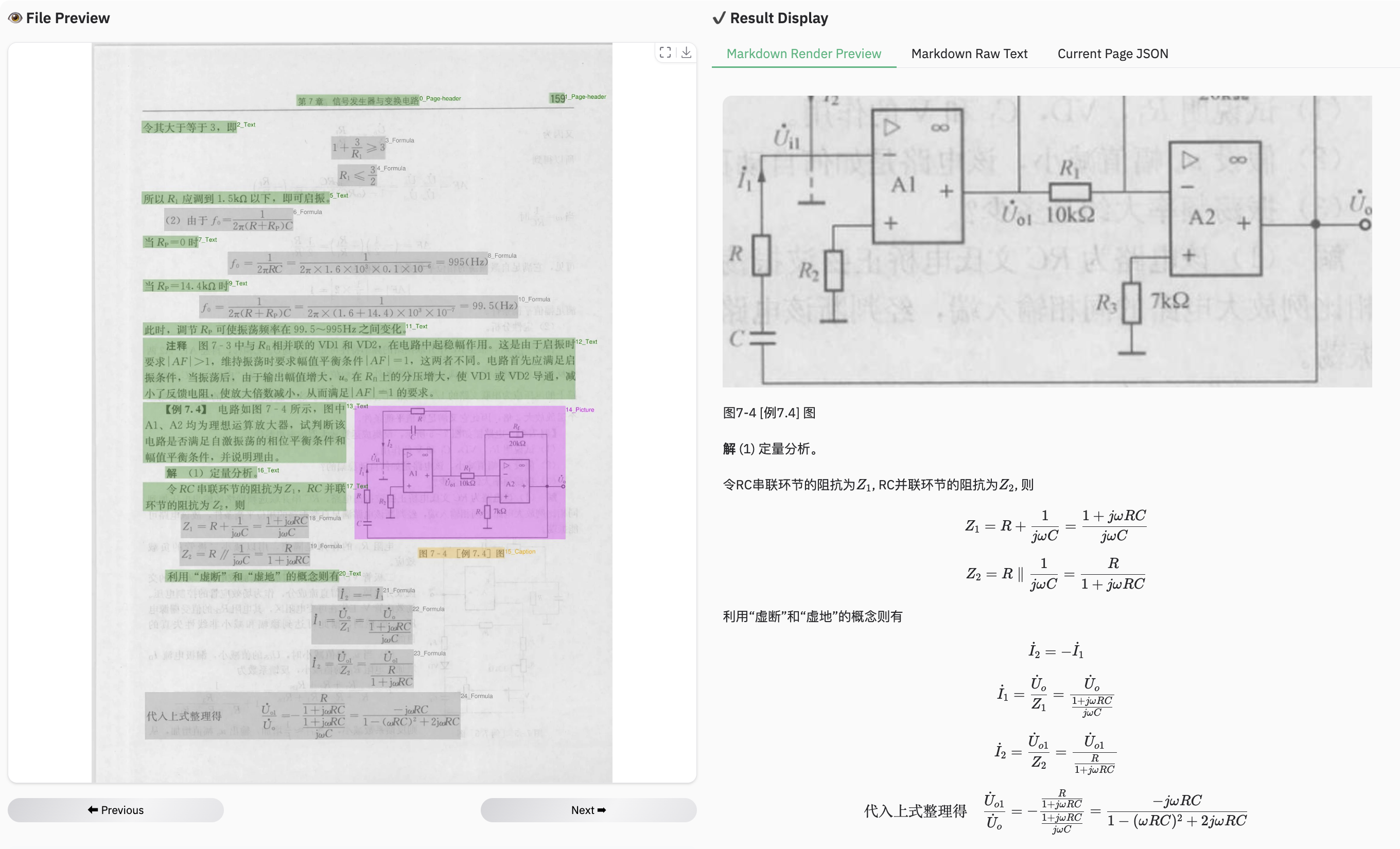

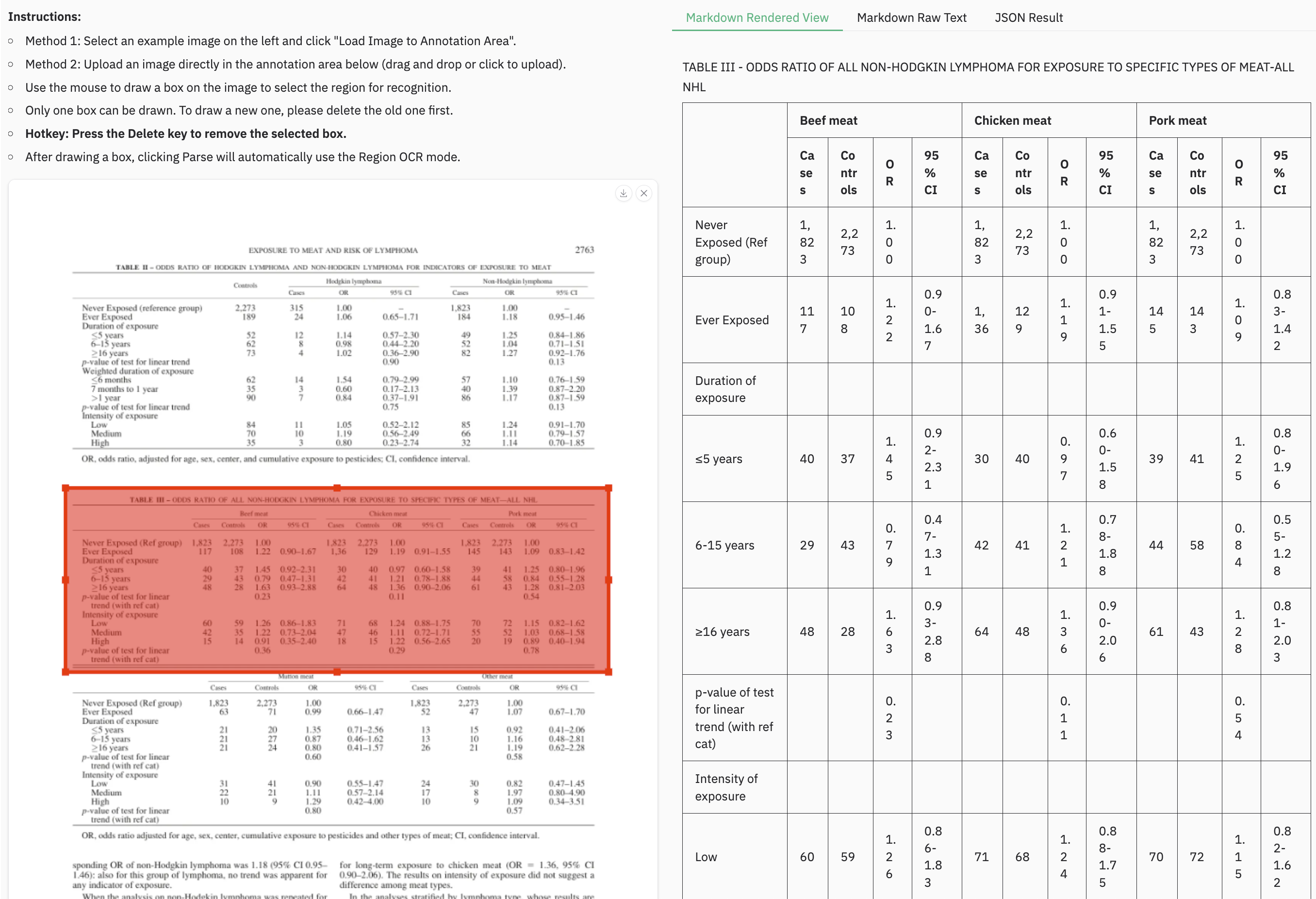

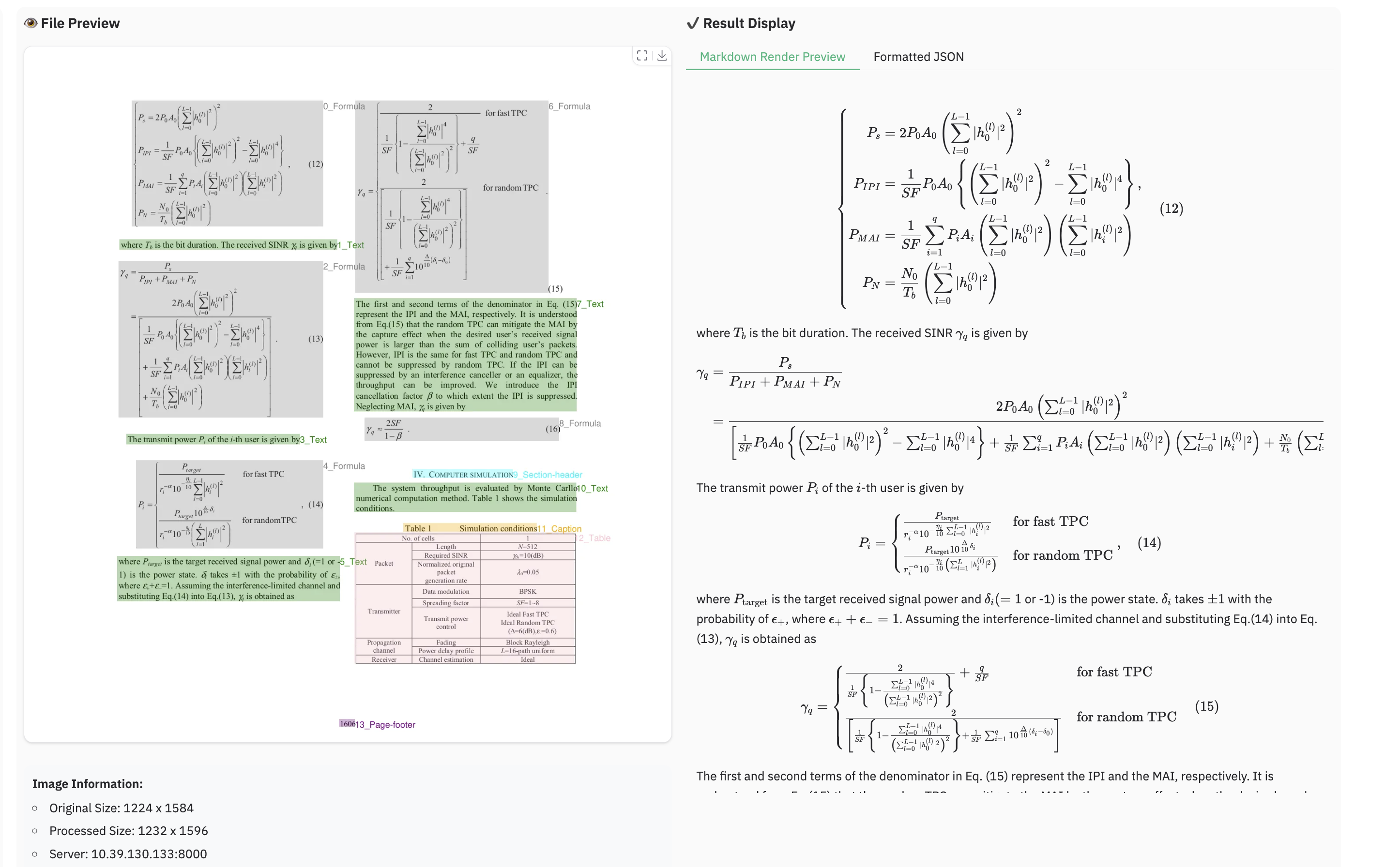

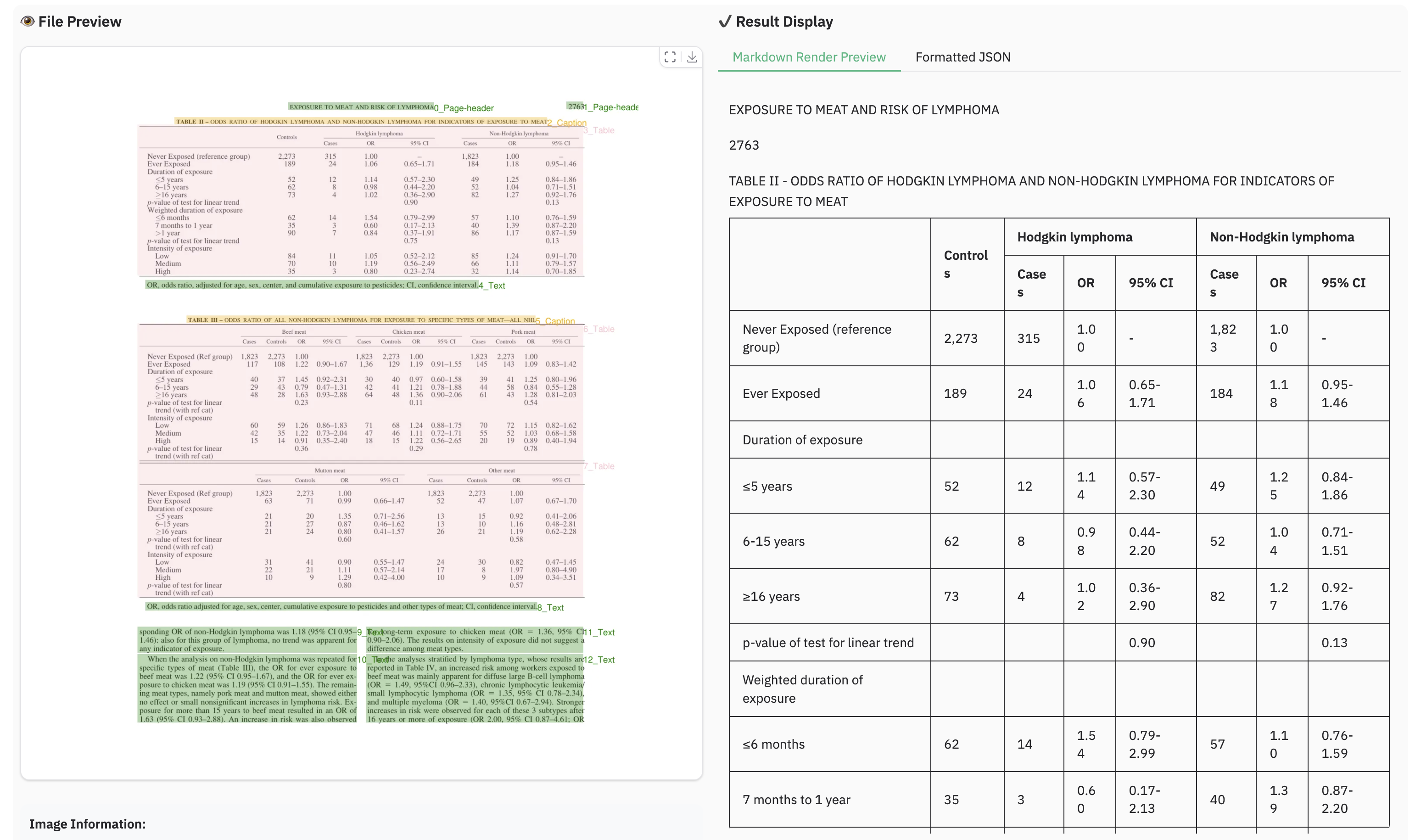

+### Example for table document

+

+

+### Example for table document

+ +

+ +

+ +

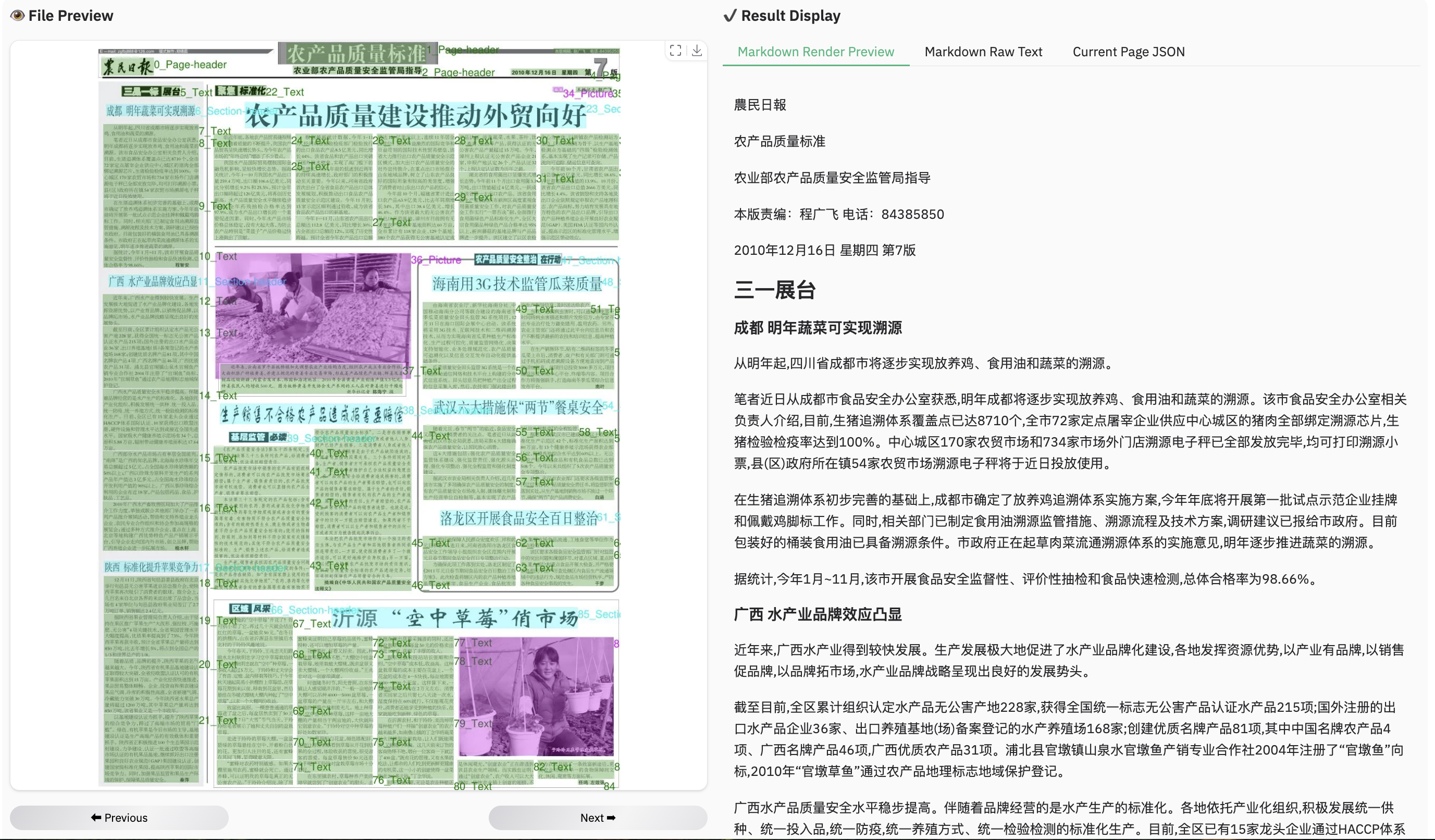

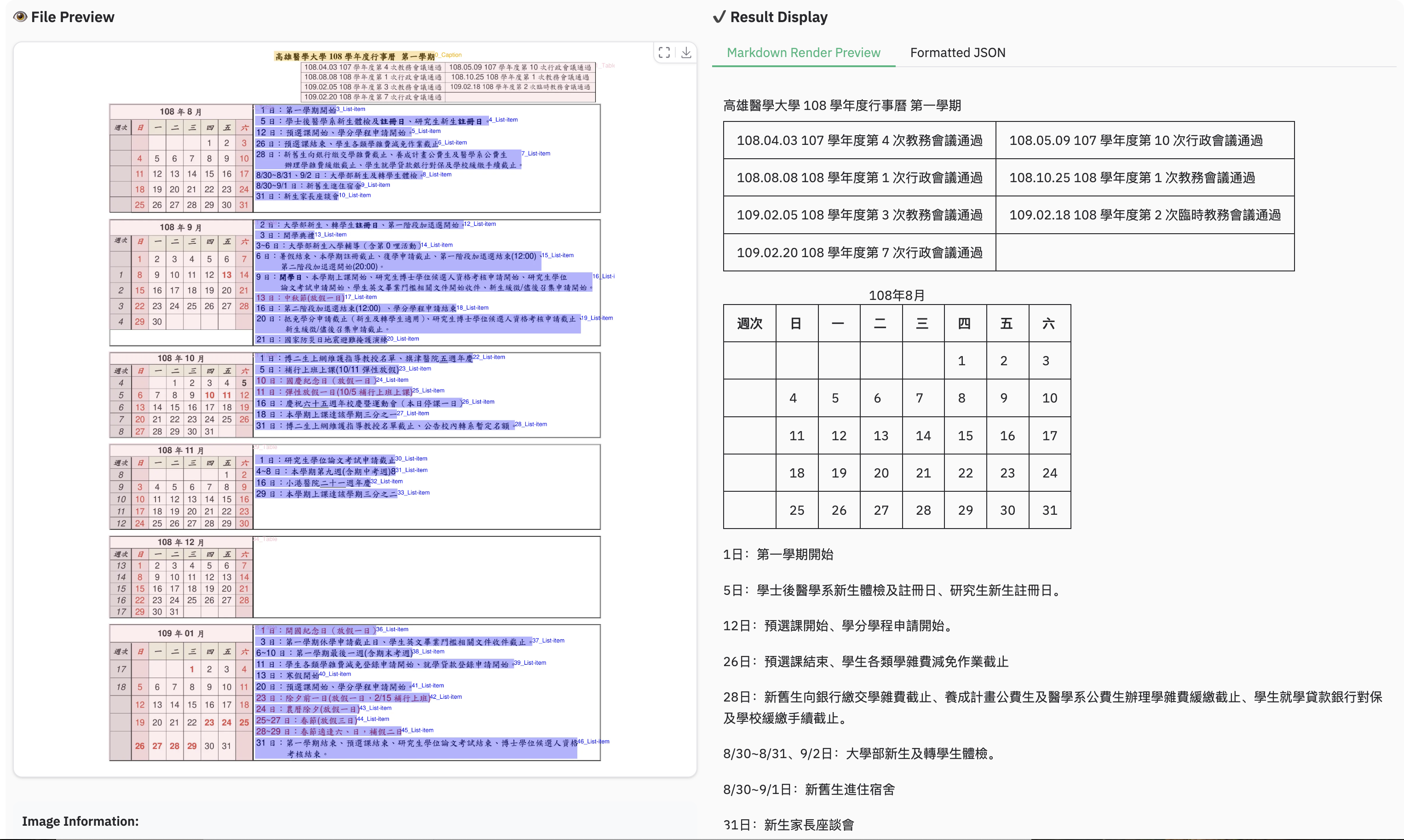

+### Example for multilingual document

+

+

+### Example for multilingual document

+ +

+ +

+ +

+ +

+ +

+### Example for reading order

+

+

+### Example for reading order

+ +

+### Example for grounding ocr

+

+

+### Example for grounding ocr

+ +

+

+## Acknowledgments

+We would like to thank [Qwen2.5-VL](https://github.com/QwenLM/Qwen2.5-VL), [aimv2](https://github.com/apple/ml-aim), [MonkeyOCR](https://github.com/Yuliang-Liu/MonkeyOCR),

+[OmniDocBench](https://github.com/opendatalab/OmniDocBench), [PyMuPDF](https://github.com/pymupdf/PyMuPDF), for providing code and models.

+

+We also thank [DocLayNet](https://github.com/DS4SD/DocLayNet), [M6Doc](https://github.com/HCIILAB/M6Doc), [CDLA](https://github.com/buptlihang/CDLA), [D4LA](https://github.com/AlibabaResearch/AdvancedLiterateMachinery) for providing valuable datasets.

+

+## Limitation & Future Work

+

+- **Complex Document Elements:**

+ - **Table&Formula**: dots.ocr is not yet perfect for high-complexity tables and formula extraction.

+ - **Picture**: Pictures in documents are currently not parsed.

+

+- **Parsing Failures:** The model may fail to parse under certain conditions:

+ - When the character-to-pixel ratio is excessively high. Try enlarging the image or increasing the PDF parsing DPI (a setting of 200 is recommended). However, please note that the model performs optimally on images with a resolution under 11289600 pixels.

+ - Continuous special characters, such as ellipses (`...`) and underscores (`_`), may cause the prediction output to repeat endlessly. In such scenarios, consider using alternative prompts like `prompt_layout_only_en`, `prompt_ocr`, or `prompt_grounding_ocr` ([details here](https://github.com/rednote-hilab/dots.ocr/blob/master/dots_ocr/utils/prompts.py)).

+

+- **Performance Bottleneck:** Despite its 1.7B parameter LLM foundation, **dots.ocr** is not yet optimized for high-throughput processing of large PDF volumes.

+

+We are committed to achieving more accurate table and formula parsing, as well as enhancing the model's OCR capabilities for broader generalization, all while aiming for **a more powerful, more efficient model**. Furthermore, we are actively considering the development of **a more general-purpose perception model** based on Vision-Language Models (VLMs), which would integrate general detection, image captioning, and OCR tasks into a unified framework. **Parsing the content of the pictures in the documents** is also a key priority for our future work.

+We believe that collaboration is the key to tackling these exciting challenges. If you are passionate about advancing the frontiers of document intelligence and are interested in contributing to these future endeavors, we would love to hear from you. Please reach out to us via email at: [yanqing4@xiaohongshu.com].

diff --git a/assets/blog.md b/assets/blog.md

new file mode 100644

index 0000000000000000000000000000000000000000..f276bba5ac44c425fbe8b5a9d4735cabb874509e

--- /dev/null

+++ b/assets/blog.md

@@ -0,0 +1,1044 @@

+

+

+

+## Acknowledgments

+We would like to thank [Qwen2.5-VL](https://github.com/QwenLM/Qwen2.5-VL), [aimv2](https://github.com/apple/ml-aim), [MonkeyOCR](https://github.com/Yuliang-Liu/MonkeyOCR),

+[OmniDocBench](https://github.com/opendatalab/OmniDocBench), [PyMuPDF](https://github.com/pymupdf/PyMuPDF), for providing code and models.

+

+We also thank [DocLayNet](https://github.com/DS4SD/DocLayNet), [M6Doc](https://github.com/HCIILAB/M6Doc), [CDLA](https://github.com/buptlihang/CDLA), [D4LA](https://github.com/AlibabaResearch/AdvancedLiterateMachinery) for providing valuable datasets.

+

+## Limitation & Future Work

+

+- **Complex Document Elements:**

+ - **Table&Formula**: dots.ocr is not yet perfect for high-complexity tables and formula extraction.

+ - **Picture**: Pictures in documents are currently not parsed.

+

+- **Parsing Failures:** The model may fail to parse under certain conditions:

+ - When the character-to-pixel ratio is excessively high. Try enlarging the image or increasing the PDF parsing DPI (a setting of 200 is recommended). However, please note that the model performs optimally on images with a resolution under 11289600 pixels.

+ - Continuous special characters, such as ellipses (`...`) and underscores (`_`), may cause the prediction output to repeat endlessly. In such scenarios, consider using alternative prompts like `prompt_layout_only_en`, `prompt_ocr`, or `prompt_grounding_ocr` ([details here](https://github.com/rednote-hilab/dots.ocr/blob/master/dots_ocr/utils/prompts.py)).

+

+- **Performance Bottleneck:** Despite its 1.7B parameter LLM foundation, **dots.ocr** is not yet optimized for high-throughput processing of large PDF volumes.

+

+We are committed to achieving more accurate table and formula parsing, as well as enhancing the model's OCR capabilities for broader generalization, all while aiming for **a more powerful, more efficient model**. Furthermore, we are actively considering the development of **a more general-purpose perception model** based on Vision-Language Models (VLMs), which would integrate general detection, image captioning, and OCR tasks into a unified framework. **Parsing the content of the pictures in the documents** is also a key priority for our future work.

+We believe that collaboration is the key to tackling these exciting challenges. If you are passionate about advancing the frontiers of document intelligence and are interested in contributing to these future endeavors, we would love to hear from you. Please reach out to us via email at: [yanqing4@xiaohongshu.com].

diff --git a/assets/blog.md b/assets/blog.md

new file mode 100644

index 0000000000000000000000000000000000000000..f276bba5ac44c425fbe8b5a9d4735cabb874509e

--- /dev/null

+++ b/assets/blog.md

@@ -0,0 +1,1044 @@

++dots.ocr: Multilingual Document Layout Parsing in a Single Vision-Language Model +

+ + +## Introduction + +**dots.ocr** is a powerful, multilingual document parser that unifies layout detection and content recognition within a single vision-language model while maintaining good reading order. Despite its compact 1.7B-parameter LLM foundation, it achieves state-of-the-art(SOTA) performance. + +1. **Powerful Performance:** **dots.ocr** achieves SOTA performance for text, tables, and reading order on [OmniDocBench](https://github.com/opendatalab/OmniDocBench), while delivering formula recognition results comparable to much larger models like Doubao-1.5 and gemini2.5-pro. +2. **Multilingual Support:** **dots.ocr** demonstrates robust parsing capabilities for low-resource languages, achieving decisive advantages across both layout detection and content recognition on our in-house multilingual documents benchmark. +3. **Unified and Simple Architecture:** By leveraging a single vision-language model, **dots.ocr** offers a significantly more streamlined architecture than conventional methods that rely on complex, multi-model pipelines. Switching between tasks is accomplished simply by altering the input prompt, proving that a VLM can achieve competitive detection results compared to traditional detection models like DocLayout-YOLO. +4. **Efficient and Fast Performance:** Built upon a compact 1.7B LLM, **dots.ocr** provides faster inference speeds than many other high-performing models based on larger foundations. + + +### Performance Comparison on Document Parsing Benchmarks + +

+> **Notes:**

+> - The EN, ZH metrics are the end2end evaluation results of [OmniDocBench](https://github.com/opendatalab/OmniDocBench), and Multilingual metric is the end2end evaluation results of dots.ocr-bench.

+

+

+## Show Case

+### Example for formula document

+

+

+> **Notes:**

+> - The EN, ZH metrics are the end2end evaluation results of [OmniDocBench](https://github.com/opendatalab/OmniDocBench), and Multilingual metric is the end2end evaluation results of dots.ocr-bench.

+

+

+## Show Case

+### Example for formula document

+ +

+ +

+ +

+### Example for table document

+

+

+### Example for table document

+ +

+ +

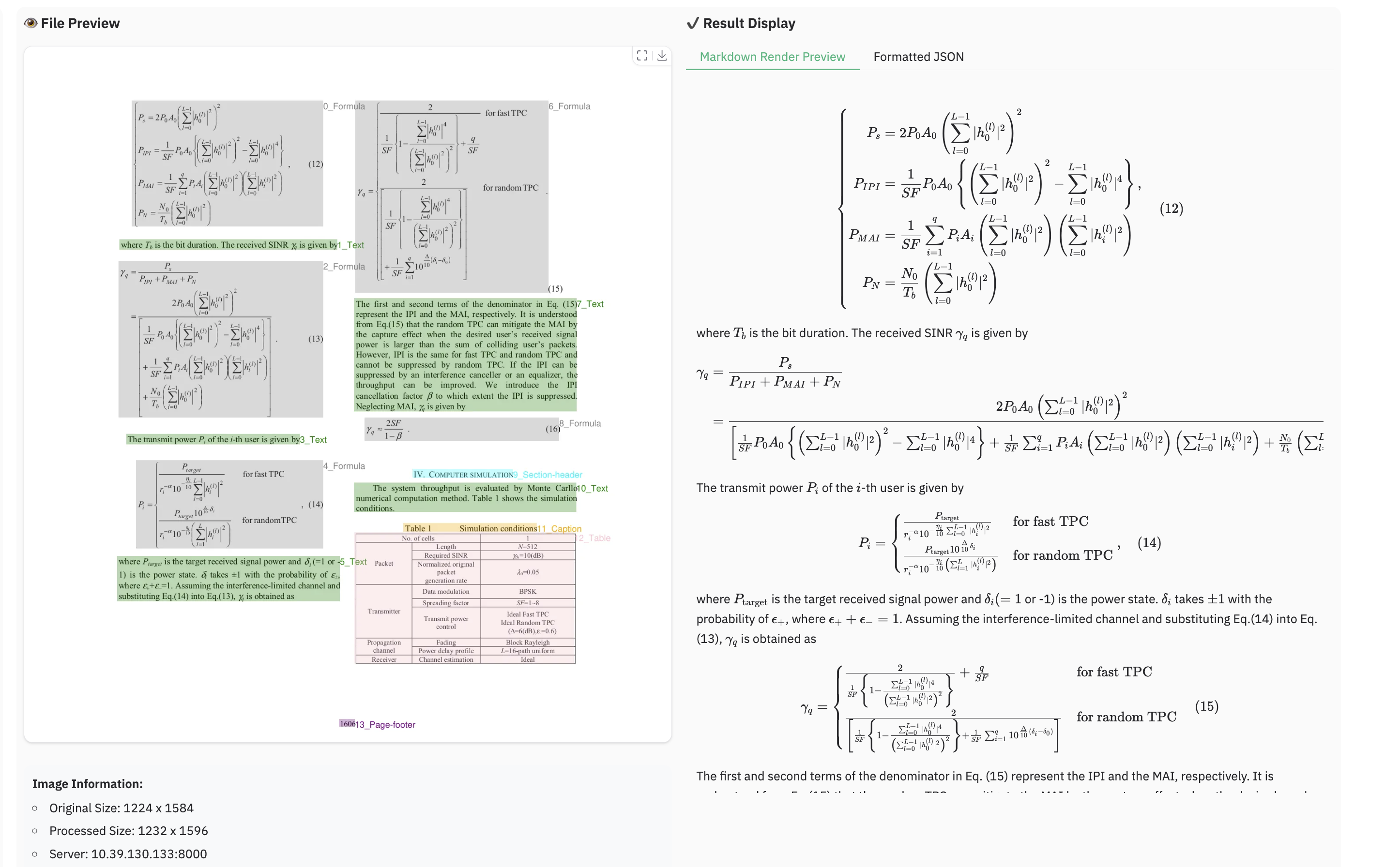

+ +

+### Example for multilingual document

+

+

+### Example for multilingual document

+ +

+ +

+ +

+ +

+ +

+### Example for reading order

+

+

+### Example for reading order

+ +

+### Example for grounding ocr

+

+

+### Example for grounding ocr

+ +

+

+

+## Benchmark Results

+

+### 1. OmniDocBench

+

+#### The end-to-end evaluation results of different tasks.

+

+

+

+

+

+## Benchmark Results

+

+### 1. OmniDocBench

+

+#### The end-to-end evaluation results of different tasks.

+

+| Model Type |

+Methods | +OverallEdit↓ | +TextEdit↓ | +FormulaEdit↓ | +TableTEDS↑ | +TableEdit↓ | +Read OrderEdit↓ | +||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| EN | +ZH | +EN | +ZH | +EN | +ZH | +EN | +ZH | +EN | +ZH | +EN | +ZH | +||

| Pipeline Tools |

+MinerU | +0.150 | +0.357 | +0.061 | +0.215 | +0.278 | +0.577 | +78.6 | +62.1 | +0.180 | +0.344 | +0.079 | +0.292 | +

| Marker | +0.336 | +0.556 | +0.080 | +0.315 | +0.530 | +0.883 | +67.6 | +49.2 | +0.619 | +0.685 | +0.114 | +0.340 | +|

| Mathpix | +0.191 | +0.365 | +0.105 | +0.384 | +0.306 | +0.454 | +77.0 | +67.1 | +0.243 | +0.320 | +0.108 | +0.304 | +|

| Docling | +0.589 | +0.909 | +0.416 | +0.987 | +0.999 | +1 | +61.3 | +25.0 | +0.627 | +0.810 | +0.313 | +0.837 | +|

| Pix2Text | +0.320 | +0.528 | +0.138 | +0.356 | +0.276 | +0.611 | +73.6 | +66.2 | +0.584 | +0.645 | +0.281 | +0.499 | +|

| Unstructured | +0.586 | +0.716 | +0.198 | +0.481 | +0.999 | +1 | +0 | +0.06 | +1 | +0.998 | +0.145 | +0.387 | +|

| OpenParse | +0.646 | +0.814 | +0.681 | +0.974 | +0.996 | +1 | +64.8 | +27.5 | +0.284 | +0.639 | +0.595 | +0.641 | +|

| PPStruct-V3 | +0.145 | +0.206 | +0.058 | +0.088 | +0.295 | +0.535 | +- | +- | +0.159 | +0.109 | +0.069 | +0.091 | +|

| Expert VLMs |

+GOT-OCR | +0.287 | +0.411 | +0.189 | +0.315 | +0.360 | +0.528 | +53.2 | +47.2 | +0.459 | +0.520 | +0.141 | +0.280 | +

| Nougat | +0.452 | +0.973 | +0.365 | +0.998 | +0.488 | +0.941 | +39.9 | +0 | +0.572 | +1.000 | +0.382 | +0.954 | +|

| Mistral OCR | +0.268 | +0.439 | +0.072 | +0.325 | +0.318 | +0.495 | +75.8 | +63.6 | +0.600 | +0.650 | +0.083 | +0.284 | +|

| OLMOCR-sglang | +0.326 | +0.469 | +0.097 | +0.293 | +0.455 | +0.655 | +68.1 | +61.3 | +0.608 | +0.652 | +0.145 | +0.277 | +|

| SmolDocling-256M | +0.493 | +0.816 | +0.262 | +0.838 | +0.753 | +0.997 | +44.9 | +16.5 | +0.729 | +0.907 | +0.227 | +0.522 | +|

| Dolphin | +0.206 | +0.306 | +0.107 | +0.197 | +0.447 | +0.580 | +77.3 | +67.2 | +0.180 | +0.285 | +0.091 | +0.162 | +|

| MinerU 2 | +0.139 | +0.240 | +0.047 | +0.109 | +0.297 | +0.536 | +82.5 | +79.0 | +0.141 | +0.195 | +0.069< | +0.118 | +|

| OCRFlux | +0.195 | +0.281 | +0.064 | +0.183 | +0.379 | +0.613 | +71.6 | +81.3 | +0.253 | +0.139 | +0.086 | +0.187 | +|

| MonkeyOCR-pro-3B | +0.138 | +0.206 | +0.067 | +0.107 | +0.246 | +0.421 | +81.5 | +87.5 | +0.139 | +0.111 | +0.100 | +0.185 | +|

| General VLMs |

+GPT4o | +0.233 | +0.399 | +0.144 | +0.409 | +0.425 | +0.606 | +72.0 | +62.9 | +0.234 | +0.329 | +0.128 | +0.251 | +

| Qwen2-VL-72B | +0.252 | +0.327 | +0.096 | +0.218 | +0.404 | +0.487 | +76.8 | +76.4 | +0.387 | +0.408 | +0.119 | +0.193 | +|

| Qwen2.5-VL-72B | +0.214 | +0.261 | +0.092 | +0.18 | +0.315 | +0.434 | +82.9 | +83.9 | +0.341 | +0.262 | +0.106 | +0.168 | +|

| Gemini2.5-Pro | +0.148 | +0.212 | +0.055 | +0.168 | +0.356 | +0.439 | +85.8 | +86.4 | +0.13 | +0.119 | +0.049 | +0.121 | +|

| doubao-1-5-thinking-vision-pro-250428 | +0.140 | +0.162 | +0.043 | +0.085 | +0.295 | +0.384 | +83.3 | +89.3 | +0.165 | +0.085 | +0.058 | +0.094 | +|

| Expert VLMs | +dots.ocr | +0.125 | +0.160 | +0.032 | +0.066 | +0.329 | +0.416 | +88.6 | +89.0 | +0.099 | +0.092 | +0.040 | +0.067 | +

| Model Type |

+Models | +Book | +Slides | +Financial Report |

+Textbook | +Exam Paper |

+Magazine | +Academic Papers |

+Notes | +Newspaper | +Overall | +

|---|---|---|---|---|---|---|---|---|---|---|---|

| Pipeline Tools |

+MinerU | +0.055 | +0.124 | +0.033 | +0.102 | +0.159 | +0.072 | +0.025 | +0.984 | +0.171 | +0.206 | +

| Marker | +0.074 | +0.340 | +0.089 | +0.319 | +0.452 | +0.153 | +0.059 | +0.651 | +0.192 | +0.274 | +|

| Mathpix | +0.131 | +0.220 | +0.202 | +0.216 | +0.278 | +0.147 | +0.091 | +0.634 | +0.690 | +0.300 | +|

| Expert VLMs |

+GOT-OCR | +0.111 | +0.222 | +0.067 | +0.132 | +0.204 | +0.198 | +0.179 | +0.388 | +0.771 | +0.267 | +

| Nougat | +0.734 | +0.958 | +1.000 | +0.820 | +0.930 | +0.830 | +0.214 | +0.991 | +0.871 | +0.806 | +|

| Dolphin | +0.091 | +0.131 | +0.057 | +0.146 | +0.231 | +0.121 | +0.074 | +0.363 | +0.307 | +0.177 | +|

| OCRFlux | +0.068 | +0.125 | +0.092 | +0.102 | +0.119 | +0.083 | +0.047 | +0.223 | +0.536 | +0.149 | +|

| MonkeyOCR-pro-3B | +0.084 | +0.129 | +0.060 | +0.090 | +0.107 | +0.073 | +0.050 | +0.171 | +0.107 | +0.100 | +|

| General VLMs |

+GPT4o | +0.157 | +0.163 | +0.348 | +0.187 | +0.281 | +0.173 | +0.146 | +0.607 | +0.751 | +0.316 | +

| Qwen2.5-VL-7B | +0.148 | +0.053 | +0.111 | +0.137 | +0.189 | +0.117 | +0.134 | +0.204 | +0.706 | +0.205 | +|

| InternVL3-8B | +0.163 | +0.056 | +0.107 | +0.109 | +0.129 | +0.100 | +0.159 | +0.150 | +0.681 | +0.188 | +|

| doubao-1-5-thinking-vision-pro-250428 | +0.048 | +0.048 | +0.024 | +0.062 | +0.085 | +0.051 | +0.039 | +0.096 | +0.181 | +0.073 | +|

| Expert VLMs | +dots.ocr | +0.031 | +0.047 | +0.011 | +0.082 | +0.079 | +0.028 | +0.029 | +0.109 | +0.056 | +0.055 | +

| Methods | +OverallEdit↓ | +TextEdit↓ | +FormulaEdit↓ | +TableTEDS↑ | +TableEdit↓ | +Read OrderEdit↓ | +MonkeyOCR-3B | +0.483 | +0.445 | +0.627 | +50.93 | +0.452 | +0.409 | + +

|---|---|---|---|---|---|---|

| doubao-1-5-thinking-vision-pro-250428 | +0.291 | +0.226 | +0.440 | +71.2 | +0.260 | +0.238 | +

| doubao-1-6 | +0.299 | +0.270 | +0.417 | +71.0 | +0.258 | +0.253 | +

| Gemini2.5-Pro | +0.251 | +0.163 | +0.402 | +77.1 | +0.236 | +0.202 | +

| dots.ocr | +0.177 | +0.075 | +0.297 | +79.2 | +0.186 | +0.152 | +

| Method | +F1@IoU=.50:.05:.95↑ | +F1@IoU=.50↑ | +||||||||

|---|---|---|---|---|---|---|---|---|---|---|

| Overall | +Text | +Formula | +Table | +Picture | +Overall | +Text | +Formula | +Table | +Picture | +DocLayout-YOLO-DocStructBench | +0.733 | +0.694 | +0.480 | +0.803 | +0.619 | +0.806 | +0.779 | +0.620 | +0.858 | +0.678 | + + +

| dots.ocr-parse all | +0.831 | +0.801 | +0.654 | +0.838 | +0.748 | +0.922 | +0.909 | +0.770 | +0.888 | +0.831 | +

| dots.ocr-detection only | +0.845 | +0.816 | +0.716 | +0.875 | +0.765 | +0.930 | +0.917 | +0.832 | +0.918 | +0.843 | +

| Model | +ArXiv | +Old Scans Math |

+Tables | +Old Scans | +Headers and Footers |

+Multi column |

+Long Tiny Text |

+Base | +Overall | +

|---|---|---|---|---|---|---|---|---|---|

| GOT OCR | +52.7 | +52.0 | +0.2 | +22.1 | +93.6 | +42.0 | +29.9 | +94.0 | +48.3 ± 1.1 | +

| Marker | +76.0 | +57.9 | +57.6 | +27.8 | +84.9 | +72.9 | +84.6 | +99.1 | +70.1 ± 1.1 | +

| MinerU | +75.4 | +47.4 | +60.9 | +17.3 | +96.6 | +59.0 | +39.1 | +96.6 | +61.5 ± 1.1 | +

| Mistral OCR | +77.2 | +67.5 | +60.6 | +29.3 | +93.6 | +71.3 | +77.1 | +99.4 | +72.0 ± 1.1 | +

| Nanonets OCR | +67.0 | +68.6 | +77.7 | +39.5 | +40.7 | +69.9 | +53.4 | +99.3 | +64.5 ± 1.1 | +

| GPT-4o (No Anchor) |

+51.5 | +75.5 | +69.1 | +40.9 | +94.2 | +68.9 | +54.1 | +96.7 | +68.9 ± 1.1 | +

| GPT-4o (Anchored) |

+53.5 | +74.5 | +70.0 | +40.7 | +93.8 | +69.3 | +60.6 | +96.8 | +69.9 ± 1.1 | +

| Gemini Flash 2 (No Anchor) |

+32.1 | +56.3 | +61.4 | +27.8 | +48.0 | +58.7 | +84.4 | +94.0 | +57.8 ± 1.1 | +

| Gemini Flash 2 (Anchored) |

+54.5 | +56.1 | +72.1 | +34.2 | +64.7 | +61.5 | +71.5 | +95.6 | +63.8 ± 1.2 | +

| Qwen 2 VL (No Anchor) |

+19.7 | +31.7 | +24.2 | +17.1 | +88.9 | +8.3 | +6.8 | +55.5 | +31.5 ± 0.9 | +

| Qwen 2.5 VL (No Anchor) |

+63.1 | +65.7 | +67.3 | +38.6 | +73.6 | +68.3 | +49.1 | +98.3 | +65.5 ± 1.2 | +

| olmOCR v0.1.75 (No Anchor) |

+71.5 | +71.4 | +71.4 | +42.8 | +94.1 | +77.7 | +71.0 | +97.8 | +74.7 ± 1.1 | +

| olmOCR v0.1.75 (Anchored) |

+74.9 | +71.2 | +71.0 | +42.2 | +94.5 | +78.3 | +73.3 | +98.3 | +75.5 ± 1.0 | +

| MonkeyOCR-pro-3B | +83.8 | +68.8 | +74.6 | +36.1 | +91.2 | +76.6 | +80.1 | +95.3 | +75.8 ± 1.0 | +

| dots.ocr | +82.1 | +64.2 | +88.3 | +40.9 | +94.1 | +82.4 | +81.2 | +99.5 | +79.1 ± 1.0 | +

0 / 0

", session_state

+

+ file_ext = os.path.splitext(file_path)[1].lower()

+

+ try:

+ if file_ext == '.pdf':

+ pages = load_images_from_pdf(file_path)

+ pdf_cache["file_type"] = "pdf"

+ elif file_ext in ['.jpg', '.jpeg', '.png']:

+ image = Image.open(file_path)

+ pages = [image]

+ pdf_cache["file_type"] = "image"

+ else:

+ return None, "Unsupported file format

", session_state

+ except Exception as e:

+ return None, f"PDF loading failed: {str(e)}

", session_state

+

+ pdf_cache["images"] = pages

+ pdf_cache["current_page"] = 0

+ pdf_cache["total_pages"] = len(pages)

+ pdf_cache["is_parsed"] = False

+ pdf_cache["results"] = []

+

+ return pages[0], f"1 / {len(pages)}

", session_state

+

+def turn_page(direction, session_state):

+ """Page turning function"""

+ pdf_cache = session_state['pdf_cache']

+

+ if not pdf_cache["images"]:

+ return None, "0 / 0

", "", session_state

+

+ if direction == "prev":

+ pdf_cache["current_page"] = max(0, pdf_cache["current_page"] - 1)

+ elif direction == "next":

+ pdf_cache["current_page"] = min(pdf_cache["total_pages"] - 1, pdf_cache["current_page"] + 1)

+

+ index = pdf_cache["current_page"]

+ current_image = pdf_cache["images"][index] # Use the original image by default

+ page_info = f"{index + 1} / {pdf_cache['total_pages']}

"

+

+ current_json = ""

+ if pdf_cache["is_parsed"] and index < len(pdf_cache["results"]):

+ result = pdf_cache["results"][index]

+ if 'cells_data' in result and result['cells_data']:

+ try:

+ current_json = json.dumps(result['cells_data'], ensure_ascii=False, indent=2)

+ except:

+ current_json = str(result.get('cells_data', ''))

+ if 'layout_image' in result and result['layout_image']:

+ current_image = result['layout_image']

+

+ return current_image, page_info, current_json, session_state

+

+def get_test_images():

+ """Gets the list of test images"""

+ test_images = []

+ test_dir = current_config['test_images_dir']

+ if os.path.exists(test_dir):

+ test_images = [os.path.join(test_dir, name) for name in os.listdir(test_dir)

+ if name.lower().endswith(('.png', '.jpg', '.jpeg', '.pdf'))]

+ return test_images

+

+def create_temp_session_dir():

+ """Creates a unique temporary directory for each processing request"""

+ session_id = uuid.uuid4().hex[:8]

+ temp_dir = os.path.join(tempfile.gettempdir(), f"dots_ocr_demo_{session_id}")

+ os.makedirs(temp_dir, exist_ok=True)

+ return temp_dir, session_id

+

+def parse_image_with_high_level_api(parser, image, prompt_mode, fitz_preprocess=False):

+ """

+ Processes using the high-level API parse_image from DotsOCRParser

+ """

+ # Create a temporary session directory

+ temp_dir, session_id = create_temp_session_dir()

+

+ try:

+ # Save the PIL Image as a temporary file

+ temp_image_path = os.path.join(temp_dir, f"input_{session_id}.png")

+ image.save(temp_image_path, "PNG")

+

+ # Use the high-level API parse_image

+ filename = f"demo_{session_id}"

+ results = parser.parse_image(

+ input_path=image,

+ filename=filename,

+ prompt_mode=prompt_mode,

+ save_dir=temp_dir,

+ fitz_preprocess=fitz_preprocess

+ )

+

+ # Parse the results

+ if not results:

+ raise ValueError("No results returned from parser")

+

+ result = results[0] # parse_image returns a list with a single result

+

+ layout_image = None

+ if 'layout_image_path' in result and os.path.exists(result['layout_image_path']):

+ layout_image = Image.open(result['layout_image_path'])

+

+ cells_data = None

+ if 'layout_info_path' in result and os.path.exists(result['layout_info_path']):

+ with open(result['layout_info_path'], 'r', encoding='utf-8') as f:

+ cells_data = json.load(f)

+

+ md_content = None

+ if 'md_content_path' in result and os.path.exists(result['md_content_path']):

+ with open(result['md_content_path'], 'r', encoding='utf-8') as f:

+ md_content = f.read()

+

+ return {

+ 'layout_image': layout_image,

+ 'cells_data': cells_data,

+ 'md_content': md_content,

+ 'filtered': result.get('filtered', False),

+ 'temp_dir': temp_dir,

+ 'session_id': session_id,

+ 'result_paths': result,

+ 'input_width': result.get('input_width', 0),

+ 'input_height': result.get('input_height', 0),

+ }

+ except Exception as e:

+ if os.path.exists(temp_dir):

+ shutil.rmtree(temp_dir, ignore_errors=True)

+ raise e

+

+def parse_pdf_with_high_level_api(parser, pdf_path, prompt_mode):

+ """

+ Processes using the high-level API parse_pdf from DotsOCRParser

+ """

+ # Create a temporary session directory

+ temp_dir, session_id = create_temp_session_dir()

+

+ try:

+ # Use the high-level API parse_pdf

+ filename = f"demo_{session_id}"

+ results = parser.parse_pdf(

+ input_path=pdf_path,

+ filename=filename,

+ prompt_mode=prompt_mode,

+ save_dir=temp_dir

+ )

+

+ # Parse the results

+ if not results:

+ raise ValueError("No results returned from parser")

+

+ # Handle multi-page results

+ parsed_results = []

+ all_md_content = []

+ all_cells_data = []

+

+ for i, result in enumerate(results):

+ page_result = {

+ 'page_no': result.get('page_no', i),

+ 'layout_image': None,

+ 'cells_data': None,

+ 'md_content': None,

+ 'filtered': False

+ }

+

+ # Read the layout image

+ if 'layout_image_path' in result and os.path.exists(result['layout_image_path']):

+ page_result['layout_image'] = Image.open(result['layout_image_path'])

+

+ # Read the JSON data

+ if 'layout_info_path' in result and os.path.exists(result['layout_info_path']):

+ with open(result['layout_info_path'], 'r', encoding='utf-8') as f:

+ page_result['cells_data'] = json.load(f)

+ all_cells_data.extend(page_result['cells_data'])

+

+ # Read the Markdown content

+ if 'md_content_path' in result and os.path.exists(result['md_content_path']):

+ with open(result['md_content_path'], 'r', encoding='utf-8') as f:

+ page_content = f.read()

+ page_result['md_content'] = page_content

+ all_md_content.append(page_content)

+ page_result['filtered'] = result.get('filtered', False)

+ parsed_results.append(page_result)

+

+ combined_md = "\n\n---\n\n".join(all_md_content) if all_md_content else ""

+ return {

+ 'parsed_results': parsed_results,

+ 'combined_md_content': combined_md,

+ 'combined_cells_data': all_cells_data,

+ 'temp_dir': temp_dir,

+ 'session_id': session_id,

+ 'total_pages': len(results)

+ }

+

+ except Exception as e:

+ if os.path.exists(temp_dir):

+ shutil.rmtree(temp_dir, ignore_errors=True)

+ raise e

+

+# ==================== Core Processing Function ====================

+def process_image_inference(session_state, test_image_input, file_input,

+ prompt_mode, server_ip, server_port, min_pixels, max_pixels,

+ fitz_preprocess=False

+ ):

+ """Core function to handle image/PDF inference"""

+ # Use session_state instead of global variables

+ processing_results = session_state['processing_results']

+ pdf_cache = session_state['pdf_cache']

+

+ if processing_results.get('temp_dir') and os.path.exists(processing_results['temp_dir']):

+ try:

+ shutil.rmtree(processing_results['temp_dir'], ignore_errors=True)

+ except Exception as e:

+ print(f"Failed to clean up previous temporary directory: {e}")

+

+ # Reset processing results for the current session

+ session_state['processing_results'] = get_initial_session_state()['processing_results']

+ processing_results = session_state['processing_results']

+

+ current_config.update({

+ 'ip': server_ip,

+ 'port_vllm': server_port,

+ 'min_pixels': min_pixels,

+ 'max_pixels': max_pixels

+ })

+

+ # Update parser configuration

+ dots_parser.ip = server_ip

+ dots_parser.port = server_port

+ dots_parser.min_pixels = min_pixels

+ dots_parser.max_pixels = max_pixels

+

+ input_file_path = file_input if file_input else test_image_input

+

+ if not input_file_path:

+ return None, "Please upload image/PDF file or select test image", "", "", gr.update(value=None), None, "", session_state

+

+ file_ext = os.path.splitext(input_file_path)[1].lower()

+

+ try:

+ if file_ext == '.pdf':

+ # MINIMAL CHANGE: The `process_pdf_file` function is now inlined and uses session_state.

+ preview_image, page_info, session_state = load_file_for_preview(input_file_path, session_state)

+ pdf_result = parse_pdf_with_high_level_api(dots_parser, input_file_path, prompt_mode)

+

+ session_state['pdf_cache']["is_parsed"] = True

+ session_state['pdf_cache']["results"] = pdf_result['parsed_results']

+

+ processing_results.update({

+ 'markdown_content': pdf_result['combined_md_content'],

+ 'cells_data': pdf_result['combined_cells_data'],

+ 'temp_dir': pdf_result['temp_dir'],

+ 'session_id': pdf_result['session_id'],

+ 'pdf_results': pdf_result['parsed_results']

+ })

+

+ total_elements = len(pdf_result['combined_cells_data'])

+ info_text = f"**PDF Information:**\n- Total Pages: {pdf_result['total_pages']}\n- Server: {current_config['ip']}:{current_config['port_vllm']}\n- Total Detected Elements: {total_elements}\n- Session ID: {pdf_result['session_id']}"

+

+ current_page_layout_image = preview_image

+ current_page_json = ""

+ if session_state['pdf_cache']["results"]:

+ first_result = session_state['pdf_cache']["results"][0]

+ if 'layout_image' in first_result and first_result['layout_image']:

+ current_page_layout_image = first_result['layout_image']

+ if first_result.get('cells_data'):

+ try:

+ current_page_json = json.dumps(first_result['cells_data'], ensure_ascii=False, indent=2)

+ except:

+ current_page_json = str(first_result['cells_data'])

+

+ download_zip_path = None

+ if pdf_result['temp_dir']:

+ download_zip_path = os.path.join(pdf_result['temp_dir'], f"layout_results_{pdf_result['session_id']}.zip")

+ with zipfile.ZipFile(download_zip_path, 'w', zipfile.ZIP_DEFLATED) as zipf:

+ for root, _, files in os.walk(pdf_result['temp_dir']):

+ for file in files:

+ if not file.endswith('.zip'): zipf.write(os.path.join(root, file), os.path.relpath(os.path.join(root, file), pdf_result['temp_dir']))

+

+ return (

+ current_page_layout_image, info_text, pdf_result['combined_md_content'] or "No markdown content generated",

+ pdf_result['combined_md_content'] or "No markdown content generated",

+ gr.update(value=download_zip_path, visible=bool(download_zip_path)), page_info, current_page_json, session_state

+ )

+

+ else: # Image processing

+ image = read_image_v2(input_file_path)

+ session_state['pdf_cache'] = get_initial_session_state()['pdf_cache']

+

+ original_image = image

+ parse_result = parse_image_with_high_level_api(dots_parser, image, prompt_mode, fitz_preprocess)

+

+ if parse_result['filtered']:

+ info_text = f"**Image Information:**\n- Original Size: {original_image.width} x {original_image.height}\n- Processing: JSON parsing failed, using cleaned text output\n- Server: {current_config['ip']}:{current_config['port_vllm']}\n- Session ID: {parse_result['session_id']}"

+ processing_results.update({

+ 'original_image': original_image, 'markdown_content': parse_result['md_content'],

+ 'temp_dir': parse_result['temp_dir'], 'session_id': parse_result['session_id'],

+ 'result_paths': parse_result['result_paths']

+ })

+ return original_image, info_text, parse_result['md_content'], parse_result['md_content'], gr.update(visible=False), None, "", session_state

+

+ md_content_raw = parse_result['md_content'] or "No markdown content generated"

+ processing_results.update({

+ 'original_image': original_image, 'layout_result': parse_result['layout_image'],

+ 'markdown_content': parse_result['md_content'], 'cells_data': parse_result['cells_data'],

+ 'temp_dir': parse_result['temp_dir'], 'session_id': parse_result['session_id'],

+ 'result_paths': parse_result['result_paths']

+ })

+

+ num_elements = len(parse_result['cells_data']) if parse_result['cells_data'] else 0

+ info_text = f"**Image Information:**\n- Original Size: {original_image.width} x {original_image.height}\n- Model Input Size: {parse_result['input_width']} x {parse_result['input_height']}\n- Server: {current_config['ip']}:{current_config['port_vllm']}\n- Detected {num_elements} layout elements\n- Session ID: {parse_result['session_id']}"

+

+ current_json = json.dumps(parse_result['cells_data'], ensure_ascii=False, indent=2) if parse_result['cells_data'] else ""

+

+ download_zip_path = None

+ if parse_result['temp_dir']:

+ download_zip_path = os.path.join(parse_result['temp_dir'], f"layout_results_{parse_result['session_id']}.zip")

+ with zipfile.ZipFile(download_zip_path, 'w', zipfile.ZIP_DEFLATED) as zipf:

+ for root, _, files in os.walk(parse_result['temp_dir']):

+ for file in files:

+ if not file.endswith('.zip'): zipf.write(os.path.join(root, file), os.path.relpath(os.path.join(root, file), parse_result['temp_dir']))

+

+ return (

+ parse_result['layout_image'], info_text, parse_result['md_content'] or "No markdown content generated",

+ md_content_raw, gr.update(value=download_zip_path, visible=bool(download_zip_path)),

+ None, current_json, session_state

+ )

+ except Exception as e:

+ import traceback

+ traceback.print_exc()

+ return None, f"Error during processing: {e}", "", "", gr.update(value=None), None, "", session_state

+

+# MINIMAL CHANGE: Functions now take `session_state` as an argument.

+def clear_all_data(session_state):

+ """Clears all data"""

+ processing_results = session_state['processing_results']

+

+ if processing_results.get('temp_dir') and os.path.exists(processing_results['temp_dir']):

+ try:

+ shutil.rmtree(processing_results['temp_dir'], ignore_errors=True)

+ except Exception as e:

+ print(f"Failed to clean up temporary directory: {e}")

+

+ # Reset the session state by returning a new initial state

+ new_session_state = get_initial_session_state()

+

+ return (

+ None, # Clear file input

+ "", # Clear test image selection

+ None, # Clear result image

+ "Waiting for processing results...", # Reset info display

+ "## Waiting for processing results...", # Reset Markdown display

+ "🕐 Waiting for parsing result...", # Clear raw Markdown text

+ gr.update(visible=False), # Hide download button

+ "0 / 0

", # Reset page info

+ "🕐 Waiting for parsing result...", # Clear current page JSON

+ new_session_state

+ )

+

+def update_prompt_display(prompt_mode):

+ """Updates the prompt display content"""

+ return dict_promptmode_to_prompt[prompt_mode]

+

+# ==================== Gradio Interface ====================

+def create_gradio_interface():

+ """Creates the Gradio interface"""

+

+ # CSS styles, matching the reference style

+ css = """

+

+ #parse_button {

+ background: #FF576D !important; /* !important 确保覆盖主题默认样式 */

+ border-color: #FF576D !important;

+ }

+ /* 鼠标悬停时的颜色 */

+ #parse_button:hover {

+ background: #F72C49 !important;

+ border-color: #F72C49 !important;

+ }

+

+ #page_info_html {

+ display: flex;

+ align-items: center;

+ justify-content: center;

+ height: 100%;

+ margin: 0 12px;

+ }

+

+ #page_info_box {

+ padding: 8px 20px;

+ font-size: 16px;

+ border: 1px solid #bbb;

+ border-radius: 8px;

+ background-color: #f8f8f8;

+ text-align: center;

+ min-width: 80px;

+ box-shadow: 0 1px 3px rgba(0,0,0,0.1);

+ }

+

+ #markdown_output {

+ min-height: 800px;

+ overflow: auto;

+ }

+

+ footer {

+ visibility: hidden;

+ }

+

+ #info_box {

+ padding: 10px;

+ background-color: #f8f9fa;

+ border-radius: 8px;

+ border: 1px solid #dee2e6;

+ margin: 10px 0;

+ font-size: 14px;

+ }

+

+ #result_image {

+ border-radius: 8px;

+ }

+

+ #markdown_tabs {

+ height: 100%;

+ }

+ """

+

+ with gr.Blocks(theme="ocean", css=css, title='dots.ocr') as demo:

+ session_state = gr.State(value=get_initial_session_state())

+

+ # Title

+ gr.HTML("""

+

+

+ 🔍 dots.ocr

+

+ Supports image/PDF layout analysis and structured output

+

+ """)

+

+ with gr.Row():

+ # Left side: Input and Configuration

+ with gr.Column(scale=1, elem_id="left-panel"):

+ gr.Markdown("### 📥 Upload & Select")

+ file_input = gr.File(

+ label="Upload PDF/Image",

+ type="filepath",

+ file_types=[".pdf", ".jpg", ".jpeg", ".png"],

+ )

+

+ test_images = get_test_images()

+ test_image_input = gr.Dropdown(

+ label="Or Select an Example",

+ choices=[""] + test_images,

+ value="",

+ )

+

+ gr.Markdown("### ⚙️ Prompt & Actions")

+ prompt_mode = gr.Dropdown(

+ label="Select Prompt",

+ choices=["prompt_layout_all_en", "prompt_layout_only_en", "prompt_ocr"],

+ value="prompt_layout_all_en",

+ )

+

+ # Display current prompt content

+ prompt_display = gr.Textbox(

+ label="Current Prompt Content",

+ value=dict_promptmode_to_prompt[list(dict_promptmode_to_prompt.keys())[0]],

+ lines=4,

+ max_lines=8,

+ interactive=False,

+ show_copy_button=True

+ )

+

+ with gr.Row():

+ process_btn = gr.Button("🔍 Parse", variant="primary", scale=2, elem_id="parse_button")

+ clear_btn = gr.Button("🗑️ Clear", variant="secondary", scale=1)

+

+ with gr.Accordion("🛠️ Advanced Configuration", open=False):

+ fitz_preprocess = gr.Checkbox(

+ label="Enable fitz_preprocess for images",

+ value=True,

+ info="Processes image via a PDF-like pipeline (image->pdf->200dpi image). Recommended if your image DPI is low."

+ )

+ with gr.Row():

+ server_ip = gr.Textbox(label="Server IP", value=DEFAULT_CONFIG['ip'])

+ server_port = gr.Number(label="Port", value=DEFAULT_CONFIG['port_vllm'], precision=0)

+ with gr.Row():

+ min_pixels = gr.Number(label="Min Pixels", value=DEFAULT_CONFIG['min_pixels'], precision=0)

+ max_pixels = gr.Number(label="Max Pixels", value=DEFAULT_CONFIG['max_pixels'], precision=0)

+ # Right side: Result Display

+ with gr.Column(scale=6, variant="compact"):

+ with gr.Row():

+ # Result Image

+ with gr.Column(scale=3):

+ gr.Markdown("### 👁️ File Preview")

+ result_image = gr.Image(

+ label="Layout Preview",

+ visible=True,

+ height=800,

+ show_label=False

+ )

+

+ # Page navigation (shown during PDF preview)

+ with gr.Row():

+ prev_btn = gr.Button("⬅ Previous", size="sm")

+ page_info = gr.HTML(

+ value="0 / 0

",

+ elem_id="page_info_html"

+ )

+ next_btn = gr.Button("Next ➡", size="sm")

+

+ # Info Display

+ info_display = gr.Markdown(

+ "Waiting for processing results...",

+ elem_id="info_box"

+ )

+

+ # Markdown Result

+ with gr.Column(scale=3):

+ gr.Markdown("### ✔️ Result Display")

+

+ with gr.Tabs(elem_id="markdown_tabs"):

+ with gr.TabItem("Markdown Render Preview"):

+ md_output = gr.Markdown(

+ "## Please click the parse button to parse or select for single-task recognition...",

+ max_height=600,

+ latex_delimiters=[

+ {"left": "$$", "right": "$$", "display": True},

+ {"left": "$", "right": "$", "display": False}

+ ],

+ show_copy_button=False,

+ elem_id="markdown_output"

+ )

+

+ with gr.TabItem("Markdown Raw Text"):

+ md_raw_output = gr.Textbox(

+ value="🕐 Waiting for parsing result...",

+ label="Markdown Raw Text",

+ max_lines=100,

+ lines=38,

+ show_copy_button=True,

+ elem_id="markdown_output",

+ show_label=False

+ )

+

+ with gr.TabItem("Current Page JSON"):

+ current_page_json = gr.Textbox(

+ value="🕐 Waiting for parsing result...",

+ label="Current Page JSON",

+ max_lines=100,

+ lines=38,

+ show_copy_button=True,

+ elem_id="markdown_output",

+ show_label=False

+ )

+

+ # Download Button

+ with gr.Row():

+ download_btn = gr.DownloadButton(

+ "⬇️ Download Results",

+ visible=False

+ )

+

+ # When the prompt mode changes, update the display content

+ prompt_mode.change(

+ fn=update_prompt_display,

+ inputs=prompt_mode,

+ outputs=prompt_display,

+ )

+

+ # Show preview on file upload

+ file_input.upload(

+ # fn=lambda file_data, state: load_file_for_preview(file_data, state),

+ fn=load_file_for_preview,

+ inputs=[file_input, session_state],

+ outputs=[result_image, page_info, session_state]

+ )

+

+ # Also handle test image selection

+ test_image_input.change(

+ # fn=lambda path, state: load_file_for_preview(path, state),

+ fn=load_file_for_preview,

+ inputs=[test_image_input, session_state],

+ outputs=[result_image, page_info, session_state]

+ )

+

+ prev_btn.click(

+ fn=lambda s: turn_page("prev", s),

+ inputs=[session_state],

+ outputs=[result_image, page_info, current_page_json, session_state]

+ )

+

+ next_btn.click(

+ fn=lambda s: turn_page("next", s),

+ inputs=[session_state],

+ outputs=[result_image, page_info, current_page_json, session_state]

+ )

+

+ process_btn.click(

+ fn=process_image_inference,

+ inputs=[

+ session_state, test_image_input, file_input,

+ prompt_mode, server_ip, server_port, min_pixels, max_pixels,

+ fitz_preprocess

+ ],

+ outputs=[

+ result_image, info_display, md_output, md_raw_output,

+ download_btn, page_info, current_page_json, session_state

+ ]

+ )

+

+ clear_btn.click(

+ fn=clear_all_data,

+ inputs=[session_state],

+ outputs=[

+ file_input, test_image_input,

+ result_image, info_display, md_output, md_raw_output,

+ download_btn, page_info, current_page_json, session_state

+ ]

+ )

+

+ return demo

+

+# ==================== Main Program ====================

+if __name__ == "__main__":

+ import sys

+ port = int(sys.argv[1])

+ demo = create_gradio_interface()

+ demo.queue().launch(

+ server_name="0.0.0.0",

+ server_port=port,

+ debug=True

+ )

diff --git a/demo/demo_gradio_annotion.py b/demo/demo_gradio_annotion.py

new file mode 100644

index 0000000000000000000000000000000000000000..a9c695c44fee122224dbabab2078bef3be84ceb0

--- /dev/null

+++ b/demo/demo_gradio_annotion.py

@@ -0,0 +1,666 @@

+"""

+Layout Inference Web Application with Gradio - Annotation Version

+

+A Gradio-based layout inference tool that supports image uploads and multiple backend inference engines.

+This version adds an image annotation feature, allowing users to draw bounding boxes on an image and send both the image and the boxes to the model.

+"""

+

+import gradio as gr

+import json

+import os

+import io

+import tempfile

+import base64

+import zipfile

+import uuid

+import re

+from pathlib import Path

+from PIL import Image

+import requests

+from gradio_image_annotation import image_annotator

+

+# Local utility imports

+from dots_ocr.utils import dict_promptmode_to_prompt

+from dots_ocr.utils.consts import MIN_PIXELS, MAX_PIXELS

+from dots_ocr.utils.demo_utils.display import read_image

+from dots_ocr.utils.doc_utils import load_images_from_pdf

+

+# Add DotsOCRParser import

+from dots_ocr.parser import DotsOCRParser

+

+# ==================== Configuration ====================

+DEFAULT_CONFIG = {

+ 'ip': "127.0.0.1",

+ 'port_vllm': 8000,

+ 'min_pixels': MIN_PIXELS,

+ 'max_pixels': MAX_PIXELS,

+ 'test_images_dir': "./assets/showcase_origin",

+}

+

+# ==================== Global Variables ====================

+# Store the current configuration

+current_config = DEFAULT_CONFIG.copy()

+

+# Create a DotsOCRParser instance

+dots_parser = DotsOCRParser(

+ ip=DEFAULT_CONFIG['ip'],

+ port=DEFAULT_CONFIG['port_vllm'],

+ dpi=200,

+ min_pixels=DEFAULT_CONFIG['min_pixels'],

+ max_pixels=DEFAULT_CONFIG['max_pixels']

+)

+

+# Store processing results

+processing_results = {

+ 'original_image': None,

+ 'processed_image': None,

+ 'layout_result': None,

+ 'markdown_content': None,

+ 'cells_data': None,

+ 'temp_dir': None,

+ 'session_id': None,

+ 'result_paths': None,

+ 'annotation_data': None # Store annotation data

+}

+

+# ==================== Utility Functions ====================

+def read_image_v2(img):

+ """Reads an image, supporting URLs and local paths."""

+ if isinstance(img, str) and img.startswith(("http://", "https://")):

+ with requests.get(img, stream=True) as response:

+ response.raise_for_status()

+ img = Image.open(io.BytesIO(response.content))

+ elif isinstance(img, str):

+ img, _, _ = read_image(img, use_native=True)

+ elif isinstance(img, Image.Image):

+ pass

+ else:

+ raise ValueError(f"Invalid image type: {type(img)}")

+ return img

+

+def get_test_images():

+ """Gets the list of test images."""

+ test_images = []

+ test_dir = current_config['test_images_dir']

+ if os.path.exists(test_dir):

+ test_images = [os.path.join(test_dir, name) for name in os.listdir(test_dir)

+ if name.lower().endswith(('.png', '.jpg', '.jpeg'))]

+ return test_images

+

+def create_temp_session_dir():

+ """Creates a unique temporary directory for each processing request."""

+ session_id = uuid.uuid4().hex[:8]

+ temp_dir = os.path.join(tempfile.gettempdir(), f"dots_ocr_demo_{session_id}")

+ os.makedirs(temp_dir, exist_ok=True)

+ return temp_dir, session_id

+

+def parse_image_with_bbox(parser, image, prompt_mode, bbox=None, fitz_preprocess=False):

+ """

+ Processes an image using DotsOCRParser, with support for the bbox parameter.

+ """

+ # Create a temporary session directory

+ temp_dir, session_id = create_temp_session_dir()

+

+ try:

+ # Save the PIL Image to a temporary file

+ temp_image_path = os.path.join(temp_dir, f"input_{session_id}.png")

+ image.save(temp_image_path, "PNG")

+

+ # Use the high-level parse_image interface, passing the bbox parameter

+ filename = f"demo_{session_id}"

+ results = parser.parse_image(

+ input_path=temp_image_path,

+ filename=filename,

+ prompt_mode=prompt_mode,

+ save_dir=temp_dir,

+ bbox=bbox,

+ fitz_preprocess=fitz_preprocess

+ )

+

+ # Parse the results

+ if not results:

+ raise ValueError("No results returned from parser")

+

+ result = results[0] # parse_image returns a list with a single result

+

+ # Read the result files

+ layout_image = None

+ cells_data = None

+ md_content = None

+ filtered = False

+

+ # Read the layout image

+ if 'layout_image_path' in result and os.path.exists(result['layout_image_path']):

+ layout_image = Image.open(result['layout_image_path'])

+

+ # Read the JSON data

+ if 'layout_info_path' in result and os.path.exists(result['layout_info_path']):

+ with open(result['layout_info_path'], 'r', encoding='utf-8') as f:

+ cells_data = json.load(f)

+

+ # Read the Markdown content

+ if 'md_content_path' in result and os.path.exists(result['md_content_path']):

+ with open(result['md_content_path'], 'r', encoding='utf-8') as f:

+ md_content = f.read()

+

+ # Check for the original response file (if JSON parsing fails)

+ if 'filtered' in result:

+ filtered = result['filtered']

+

+ return {

+ 'layout_image': layout_image,

+ 'cells_data': cells_data,

+ 'md_content': md_content,

+ 'filtered': filtered,

+ 'temp_dir': temp_dir,

+ 'session_id': session_id,

+ 'result_paths': result

+ }

+

+ except Exception as e:

+ # Clean up the temporary directory on error

+ import shutil

+ if os.path.exists(temp_dir):

+ shutil.rmtree(temp_dir, ignore_errors=True)

+ raise e

+

+def process_annotation_data(annotation_data):

+ """Processes annotation data, converting it to the format required by the model."""

+ if not annotation_data or not annotation_data.get('boxes'):

+ return None, None

+

+ # Get image and box data

+ image = annotation_data.get('image')

+ boxes = annotation_data.get('boxes', [])

+

+ if not boxes:

+ return image, None

+

+ # Ensure the image is in PIL Image format

+ if image is not None:

+ import numpy as np

+ if isinstance(image, np.ndarray):

+ image = Image.fromarray(image)

+ elif not isinstance(image, Image.Image):

+ # If it's another format, try to convert it

+ try:

+ image = Image.open(image) if isinstance(image, str) else Image.fromarray(image)

+ except Exception as e:

+ print(f"Image format conversion failed: {e}")

+ return None, None

+

+ # Get the coordinate information of the box (only one box)

+ box = boxes[0]

+ bbox = [box['xmin'], box['ymin'], box['xmax'], box['ymax']]

+

+ return image, bbox

+

+# ==================== Core Processing Function ====================

+def process_image_inference_with_annotation(annotation_data, test_image_input,

+ prompt_mode, server_ip, server_port, min_pixels, max_pixels,

+ fitz_preprocess=False

+ ):

+ """Core function for image inference, supporting annotation data."""

+ global current_config, processing_results, dots_parser

+

+ # First, clean up previous processing results

+ if processing_results.get('temp_dir') and os.path.exists(processing_results['temp_dir']):

+ import shutil

+ try:

+ shutil.rmtree(processing_results['temp_dir'], ignore_errors=True)

+ except Exception as e:

+ print(f"Failed to clean up previous temporary directory: {e}")

+

+ # Reset processing results

+ processing_results = {

+ 'original_image': None,

+ 'processed_image': None,

+ 'layout_result': None,

+ 'markdown_content': None,

+ 'cells_data': None,

+ 'temp_dir': None,

+ 'session_id': None,

+ 'result_paths': None,

+ 'annotation_data': annotation_data

+ }

+

+ # Update configuration

+ current_config.update({

+ 'ip': server_ip,

+ 'port_vllm': server_port,

+ 'min_pixels': min_pixels,

+ 'max_pixels': max_pixels

+ })

+

+ # Update parser configuration

+ dots_parser.ip = server_ip

+ dots_parser.port = server_port

+ dots_parser.min_pixels = min_pixels

+ dots_parser.max_pixels = max_pixels

+

+ # Determine the input source and process annotation data

+ image = None

+ bbox = None

+

+ # Prioritize processing annotation data

+ if annotation_data and annotation_data.get('image') is not None:

+ image, bbox = process_annotation_data(annotation_data)

+ if image is not None:

+ # If there's a bbox, force the use of 'prompt_grounding_ocr' mode

+ assert bbox is not None

+ prompt_mode = "prompt_grounding_ocr"

+

+ # If there's no annotation data, check the test image input

+ if image is None and test_image_input and test_image_input != "":

+ try:

+ image = read_image_v2(test_image_input)

+ except Exception as e:

+ return None, f"Failed to read test image: {e}", "", "", gr.update(value=None), ""

+

+ if image is None:

+ return None, "Please select a test image or add an image in the annotation component", "", "", gr.update(value=None), ""

+ if bbox is None:

+ return "Please select a bounding box by mouse", "Please select a bounding box by mouse", "", "", gr.update(value=None)

+

+ try:

+ # Process using DotsOCRParser, passing the bbox parameter

+ original_image = image

+ parse_result = parse_image_with_bbox(dots_parser, image, prompt_mode, bbox, fitz_preprocess)

+

+ # Extract parsing results

+ layout_image = parse_result['layout_image']

+ cells_data = parse_result['cells_data']

+ md_content = parse_result['md_content']

+ filtered = parse_result['filtered']

+

+ # Store the results

+ processing_results.update({

+ 'original_image': original_image,

+ 'processed_image': None,

+ 'layout_result': layout_image,

+ 'markdown_content': md_content,

+ 'cells_data': cells_data,

+ 'temp_dir': parse_result['temp_dir'],

+ 'session_id': parse_result['session_id'],

+ 'result_paths': parse_result['result_paths'],

+ 'annotation_data': annotation_data

+ })

+

+ # Handle the case where parsing fails

+ if filtered:

+ info_text = f"""

+**Image Information:**

+- Original Dimensions: {original_image.width} x {original_image.height}

+- Processing Mode: {'Region OCR' if bbox else 'Full Image OCR'}

+- Processing Status: JSON parsing failed, using cleaned text output

+- Server: {current_config['ip']}:{current_config['port_vllm']}

+- Session ID: {parse_result['session_id']}

+- Box Coordinates: {bbox if bbox else 'None'}

+ """

+

+ return (

+ md_content or "No markdown content generated",

+ info_text,

+ md_content or "No markdown content generated",

+ md_content or "No markdown content generated",

+ gr.update(visible=False),

+ ""

+ )

+

+ # Handle the case where JSON parsing succeeds

+ num_elements = len(cells_data) if cells_data else 0

+ info_text = f"""

+**Image Information:**

+- Original Dimensions: {original_image.width} x {original_image.height}

+- Processing Mode: {'Region OCR' if bbox else 'Full Image OCR'}

+- Server: {current_config['ip']}:{current_config['port_vllm']}

+- Detected {num_elements} layout elements

+- Session ID: {parse_result['session_id']}

+- Box Coordinates: {bbox if bbox else 'None'}

+ """

+

+ # Current page JSON output

+ current_json = ""

+ if cells_data:

+ try:

+ current_json = json.dumps(cells_data, ensure_ascii=False, indent=2)

+ except:

+ current_json = str(cells_data)

+

+ # Create a downloadable ZIP file

+ download_zip_path = None

+ if parse_result['temp_dir']:

+ download_zip_path = os.path.join(parse_result['temp_dir'], f"layout_results_{parse_result['session_id']}.zip")

+ try:

+ with zipfile.ZipFile(download_zip_path, 'w', zipfile.ZIP_DEFLATED) as zipf:

+ for root, dirs, files in os.walk(parse_result['temp_dir']):

+ for file in files:

+ if file.endswith('.zip'):

+ continue

+ file_path = os.path.join(root, file)

+ arcname = os.path.relpath(file_path, parse_result['temp_dir'])

+ zipf.write(file_path, arcname)

+ except Exception as e:

+ print(f"Failed to create download ZIP: {e}")

+ download_zip_path = None

+

+ return (

+ md_content or "No markdown content generated",

+ info_text,

+ md_content or "No markdown content generated",

+ md_content or "No markdown content generated",

+ gr.update(value=download_zip_path, visible=True) if download_zip_path else gr.update(visible=False),

+ current_json

+ )

+

+ except Exception as e:

+ return f"An error occurred during processing: {e}", f"An error occurred during processing: {e}", "", "", gr.update(value=None), ""

+

+def load_image_to_annotator(test_image_input):

+ """Loads an image into the annotation component."""

+ image = None

+

+ # Check the test image input

+ if test_image_input and test_image_input != "":

+ try:

+ image = read_image_v2(test_image_input)

+ except Exception as e:

+ return None

+

+ if image is None:

+ return None

+

+ # Return the format required by the annotation component

+ return {

+ "image": image,

+ "boxes": []

+ }

+

+def clear_all_data():

+ """Clears all data."""

+ global processing_results

+

+ # Clean up the temporary directory

+ if processing_results.get('temp_dir') and os.path.exists(processing_results['temp_dir']):

+ import shutil

+ try:

+ shutil.rmtree(processing_results['temp_dir'], ignore_errors=True)

+ except Exception as e:

+ print(f"Failed to clean up temporary directory: {e}")

+

+ # Reset processing results

+ processing_results = {

+ 'original_image': None,

+ 'processed_image': None,

+ 'layout_result': None,

+ 'markdown_content': None,

+ 'cells_data': None,

+ 'temp_dir': None,

+ 'session_id': None,

+ 'result_paths': None,

+ 'annotation_data': None

+ }

+

+ return (

+ "", # Clear test image selection

+ None, # Clear annotation component

+ "Waiting for processing results...", # Reset info display

+ "## Waiting for processing results...", # Reset Markdown display

+ "🕐 Waiting for parsing results...", # Clear raw Markdown text

+ gr.update(visible=False), # Hide download button

+ "🕐 Waiting for parsing results..." # Clear JSON

+ )

+

+def update_prompt_display(prompt_mode):

+ """Updates the displayed prompt content."""

+ return dict_promptmode_to_prompt[prompt_mode]

+

+# ==================== Gradio Interface ====================

+def create_gradio_interface():

+ """Creates the Gradio interface."""

+

+ # CSS styling to match the reference style

+ css = """

+ footer {

+ visibility: hidden;

+ }

+

+ #info_box {

+ padding: 10px;

+ background-color: #f8f9fa;

+ border-radius: 8px;

+ border: 1px solid #dee2e6;

+ margin: 10px 0;

+ font-size: 14px;

+ }

+

+ #markdown_tabs {

+ height: 100%;

+ }

+

+ #annotation_component {

+ border-radius: 8px;

+ }

+ """

+

+ with gr.Blocks(theme="ocean", css=css, title='dots.ocr - Annotation') as demo:

+

+ # Title

+ gr.HTML("""

+

+

+ 🔍 dots.ocr - Annotation Version

+

+ Supports image annotation, drawing boxes, and sending box information to the model for OCR.

+

+ """)

+

+ with gr.Row():

+ # Left side: Input and Configuration

+ with gr.Column(scale=1, variant="compact"):

+ gr.Markdown("### 📁 Select Example")

+ test_images = get_test_images()

+ test_image_input = gr.Dropdown(

+ label="Select Example",

+ choices=[""] + test_images,

+ value="",

+ show_label=True

+ )

+

+ # Button to load image into the annotation component

+ load_btn = gr.Button("📷 Load Image to Annotation Area", variant="secondary")

+

+ prompt_mode = gr.Dropdown(

+ label="Select Prompt",

+ # choices=["prompt_layout_all_en", "prompt_layout_only_en", "prompt_ocr", "prompt_grounding_ocr"],

+ choices=["prompt_grounding_ocr"],

+ value="prompt_grounding_ocr",

+ show_label=True,

+ info="If a box is drawn, 'prompt_grounding_ocr' mode will be used automatically."

+ )

+

+ # Display the current prompt content

+ prompt_display = gr.Textbox(

+ label="Current Prompt Content",

+ # value=dict_promptmode_to_prompt[list(dict_promptmode_to_prompt.keys())[0]],

+ value=dict_promptmode_to_prompt["prompt_grounding_ocr"],

+ lines=4,

+ max_lines=8,

+ interactive=False,

+ show_copy_button=True

+ )

+

+ gr.Markdown("### ⚙️ Actions")

+ process_btn = gr.Button("🔍 Parse", variant="primary")

+ clear_btn = gr.Button("🗑️ Clear", variant="secondary")

+

+ gr.Markdown("### 🛠️ Configuration")

+

+ fitz_preprocess = gr.Checkbox(

+ label="Enable fitz_preprocess",

+ value=False,

+ info="Performs fitz preprocessing on the image input, converting the image to a PDF and then to a 200dpi image."

+ )

+

+ with gr.Row():

+ server_ip = gr.Textbox(

+ label="Server IP",

+ value=DEFAULT_CONFIG['ip']

+ )

+ server_port = gr.Number(

+ label="Port",

+ value=DEFAULT_CONFIG['port_vllm'],

+ precision=0

+ )

+

+ with gr.Row():

+ min_pixels = gr.Number(

+ label="Min Pixels",

+ value=DEFAULT_CONFIG['min_pixels'],

+ precision=0

+ )

+ max_pixels = gr.Number(

+ label="Max Pixels",

+ value=DEFAULT_CONFIG['max_pixels'],

+ precision=0

+ )

+

+ # Right side: Result Display

+ with gr.Column(scale=6, variant="compact"):

+ with gr.Row():

+ # Image Annotation Area

+ with gr.Column(scale=3):

+ gr.Markdown("### 🎯 Image Annotation Area")

+ gr.Markdown("""

+ **Instructions:**

+ - Method 1: Select an example image on the left and click "Load Image to Annotation Area".

+ - Method 2: Upload an image directly in the annotation area below (drag and drop or click to upload).

+ - Use the mouse to draw a box on the image to select the region for recognition.

+ - Only one box can be drawn. To draw a new one, please delete the old one first.

+ - **Hotkey: Press the Delete key to remove the selected box.**

+ - After drawing a box, clicking Parse will automatically use the Region OCR mode.

+ """)

+

+ annotator = image_annotator(

+ value=None,

+ label="Image Annotation",

+ height=600,

+ show_label=False,

+ elem_id="annotation_component",

+ single_box=True, # Only allow one box; a new box will replace the old one

+ box_min_size=10,

+ interactive=True,

+ disable_edit_boxes=True, # Disable the edit dialog

+ label_list=["OCR Region"], # Set the default label

+ label_colors=[(255, 0, 0)], # Set color to red

+ use_default_label=True, # Use the default label

+ image_type="pil" # Ensure it returns a PIL Image format

+ )

+

+ # Information Display

+ info_display = gr.Markdown(

+ "Waiting for processing results...",

+ elem_id="info_box"

+ )

+

+ # Result Display Area

+ with gr.Column(scale=3):

+ gr.Markdown("### ✅ Results")

+

+ with gr.Tabs(elem_id="markdown_tabs"):

+ with gr.TabItem("Markdown Rendered View"):

+ md_output = gr.Markdown(

+ "## Please upload an image and click the Parse button for recognition...",

+ label="Markdown Preview",