Spaces:

Runtime error

Runtime error

Create app.py

Browse files

app.py

ADDED

|

@@ -0,0 +1,72 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from deepsparse import Pipeline

|

| 2 |

+

import time

|

| 3 |

+

import gradio as gr

|

| 4 |

+

|

| 5 |

+

markdownn = '''

|

| 6 |

+

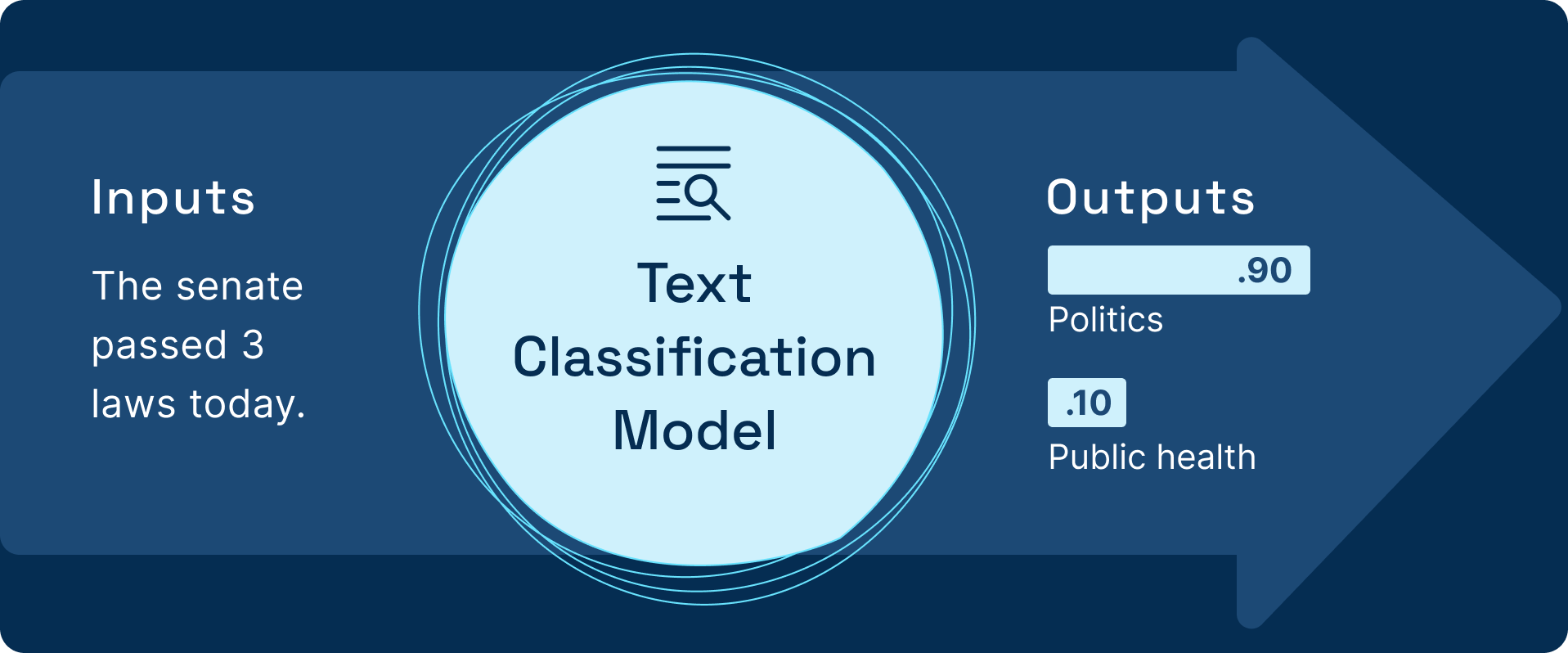

# Text Classification Pipeline with DeepSparse

|

| 7 |

+

Text Classification involves assigning a label to a given text. For example, sentiment analysis is an example of a text classification use case.

|

| 8 |

+

|

| 9 |

+

## What is DeepSparse

|

| 10 |

+

DeepSparse is an inference runtime offering GPU-class performance on CPUs and APIs to integrate ML into your application. Sparsification is a powerful technique for optimizing models for inference, reducing the compute needed with a limited accuracy tradeoff. DeepSparse is designed to take advantage of model sparsity, enabling you to deploy models with the flexibility and scalability of software on commodity CPUs with the best-in-class performance of hardware accelerators, enabling you to standardize operations and reduce infrastructure costs.

|

| 11 |

+

Similar to Hugging Face, DeepSparse provides off-the-shelf pipelines for computer vision and NLP that wrap the model with proper pre- and post-processing to run performantly on CPUs by using sparse models.

|

| 12 |

+

The text classification Pipeline, for example, wraps an NLP model with the proper preprocessing and postprocessing pipelines, such as tokenization.

|

| 13 |

+

### Inference API Example

|

| 14 |

+

Here is sample code for a text classification pipeline:

|

| 15 |

+

```

|

| 16 |

+

from deepsparse import Pipeline

|

| 17 |

+

pipeline = Pipeline.create(task="zero_shot_text_classification", model_path="zoo:nlp/text_classification/distilbert-none/pytorch/huggingface/mnli/pruned80_quant-none-vnni",model_scheme="mnli",model_config={"hypothesis_template": "This text is related to {}"},)

|

| 18 |

+

text = "The senate passed 3 laws today"

|

| 19 |

+

inference = pipeline(sequences= text,labels=['politics', 'public health', 'Europe'],)

|

| 20 |

+

print(inference)

|

| 21 |

+

```

|

| 22 |

+

## Use Case Description

|

| 23 |

+

Customer review classification is a great example of text classification in action.

|

| 24 |

+

The ability to quickly classify sentiment from customers is an added advantage for any business.

|

| 25 |

+

Therefore, whichever solution you deploy for classifying the customer reviews should deliver results in the shortest time possible.

|

| 26 |

+

By being fast the solution will process more volume, hence cheaper computational resources are utilized.

|

| 27 |

+

When deploying a text classification model, decreasing the model’s latency and increasing its throughput is critical. This is why DeepSparse Pipelines have sparse text classification models.

|

| 28 |

+

[Want to train a sparse model on your data? Checkout the documentation on sparse transfer learning](https://docs.neuralmagic.com/use-cases/natural-language-processing/question-answering)

|

| 29 |

+

'''

|

| 30 |

+

task = "zero_shot_text_classification"

|

| 31 |

+

sparse_classification_pipeline = Pipeline.create(

|

| 32 |

+

task=task,

|

| 33 |

+

model_path="zoo:nlp/text_classification/distilbert-none/pytorch/huggingface/mnli/pruned80_quant-none-vnni",

|

| 34 |

+

model_scheme="mnli",

|

| 35 |

+

model_config={"hypothesis_template": "This text is related to {}"},

|

| 36 |

+

)

|

| 37 |

+

def run_pipeline(text):

|

| 38 |

+

sparse_start = time.perf_counter()

|

| 39 |

+

sparse_output = sparse_classification_pipeline(sequences= text,labels=['politics', 'public health', 'Europe'],)

|

| 40 |

+

sparse_result = dict(sparse_output)

|

| 41 |

+

sparse_end = time.perf_counter()

|

| 42 |

+

sparse_duration = (sparse_end - sparse_start) * 1000.0

|

| 43 |

+

dict_r = {sparse_result['labels'][0]:sparse_result['scores'][0],sparse_result['labels'][1]:sparse_result['scores'][1], sparse_result['labels'][2]:sparse_result['scores'][2]}

|

| 44 |

+

return dict_r, sparse_duration

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

with gr.Blocks() as demo:

|

| 48 |

+

with gr.Row():

|

| 49 |

+

with gr.Column():

|

| 50 |

+

gr.Markdown(markdownn)

|

| 51 |

+

|

| 52 |

+

with gr.Column():

|

| 53 |

+

gr.Markdown("""

|

| 54 |

+

### Text classification demo

|

| 55 |

+

""")

|

| 56 |

+

text = gr.Text(label="Text")

|

| 57 |

+

btn = gr.Button("Submit")

|

| 58 |

+

|

| 59 |

+

sparse_answers = gr.Label(label="Sparse model answers",

|

| 60 |

+

num_top_classes=3

|

| 61 |

+

)

|

| 62 |

+

sparse_duration = gr.Number(label="Sparse Latency (ms):")

|

| 63 |

+

gr.Examples([["The senate passed 3 laws today"],["Who are you voting for in 2020?"],["Public health is very important"]],inputs=[text],)

|

| 64 |

+

|

| 65 |

+

btn.click(

|

| 66 |

+

run_pipeline,

|

| 67 |

+

inputs=[text],

|

| 68 |

+

outputs=[sparse_answers,sparse_duration],

|

| 69 |

+

)

|

| 70 |

+

|

| 71 |

+

if __name__ == "__main__":

|

| 72 |

+

demo.launch()

|