diff --git a/.coveragerc b/.coveragerc

new file mode 100644

index 0000000000000000000000000000000000000000..4fc07d951c8e95c3af76fe21a692ccc908fa4cfe

--- /dev/null

+++ b/.coveragerc

@@ -0,0 +1,56 @@

+[run]

+source = .

+omit =

+ */tests/*

+ */test/*

+ */__pycache__/*

+ */venv/*

+ */env/*

+ */build/*

+ */dist/*

+ */cdk/*

+ */docs/*

+ */example_data/*

+ */examples/*

+ */feedback/*

+ */logs/*

+ */old_code/*

+ */output/*

+ */tmp/*

+ */usage/*

+ */tld/*

+ */tesseract/*

+ */poppler/*

+ config*.py

+ setup.py

+ lambda_entrypoint.py

+ entrypoint.sh

+ cli_redact.py

+ load_dynamo_logs.py

+ load_s3_logs.py

+ *.spec

+ Dockerfile

+ *.qmd

+ *.md

+ *.txt

+ *.yml

+ *.yaml

+ *.json

+ *.csv

+ *.env

+ *.bat

+ *.ps1

+ *.sh

+

+[report]

+exclude_lines =

+ pragma: no cover

+ def __repr__

+ if self.debug:

+ if settings.DEBUG

+ raise AssertionError

+ raise NotImplementedError

+ if 0:

+ if __name__ == .__main__.:

+ class .*\bProtocol\):

+ @(abc\.)?abstractmethod

diff --git a/.dockerignore b/.dockerignore

new file mode 100644

index 0000000000000000000000000000000000000000..16691dfeaaa00c0eb8451f7c0ab2a164b20fb961

--- /dev/null

+++ b/.dockerignore

@@ -0,0 +1,40 @@

+*.url

+*.ipynb

+*.pyc

+.venv/*

+examples/*

+processing/*

+tools/__pycache__/*

+old_code/*

+tesseract/*

+poppler/*

+build/*

+dist/*

+docs/*

+build_deps/*

+user_guide/*

+cdk/config/*

+tld/*

+cdk/config/*

+cdk/cdk.out/*

+cdk/archive/*

+cdk.json

+cdk.context.json

+.quarto/*

+logs/

+output/

+input/

+feedback/

+config/

+usage/

+test/config/*

+test/feedback/*

+test/input/*

+test/logs/*

+test/output/*

+test/tmp/*

+test/usage/*

+.ruff_cache/*

+model_cache/*

+sanitized_file/*

+src/doc_redaction.egg-info/*

diff --git a/.gitattributes b/.gitattributes

new file mode 100644

index 0000000000000000000000000000000000000000..674c5a2ce45c516d0d6787bccfdc540cdd2d5791

--- /dev/null

+++ b/.gitattributes

@@ -0,0 +1,8 @@

+*.pdf filter=lfs diff=lfs merge=lfs -text

+*.jpg filter=lfs diff=lfs merge=lfs -text

+*.xls filter=lfs diff=lfs merge=lfs -text

+*.xlsx filter=lfs diff=lfs merge=lfs -text

+*.docx filter=lfs diff=lfs merge=lfs -text

+*.doc filter=lfs diff=lfs merge=lfs -text

+*.png filter=lfs diff=lfs merge=lfs -text

+*.ico filter=lfs diff=lfs merge=lfs -text

diff --git a/.github/scripts/setup_test_data.py b/.github/scripts/setup_test_data.py

new file mode 100644

index 0000000000000000000000000000000000000000..615d2269ad0075266f470d90cf8da7e4d1aab98e

--- /dev/null

+++ b/.github/scripts/setup_test_data.py

@@ -0,0 +1,311 @@

+#!/usr/bin/env python3

+"""

+Setup script for GitHub Actions test data.

+Creates dummy test files when example data is not available.

+"""

+

+import os

+import sys

+

+import pandas as pd

+

+

+def create_directories():

+ """Create necessary directories."""

+ dirs = ["example_data", "example_data/example_outputs"]

+

+ for dir_path in dirs:

+ os.makedirs(dir_path, exist_ok=True)

+ print(f"Created directory: {dir_path}")

+

+

+def create_dummy_pdf():

+ """Create dummy PDFs for testing."""

+

+ # Install reportlab if not available

+ try:

+ from reportlab.lib.pagesizes import letter

+ from reportlab.pdfgen import canvas

+ except ImportError:

+ import subprocess

+

+ subprocess.check_call(["pip", "install", "reportlab"])

+ from reportlab.lib.pagesizes import letter

+ from reportlab.pdfgen import canvas

+

+ try:

+ # Create the main test PDF

+ pdf_path = (

+ "example_data/example_of_emails_sent_to_a_professor_before_applying.pdf"

+ )

+ print(f"Creating PDF: {pdf_path}")

+ print(f"Directory exists: {os.path.exists('example_data')}")

+

+ c = canvas.Canvas(pdf_path, pagesize=letter)

+ c.drawString(100, 750, "This is a test document for redaction testing.")

+ c.drawString(100, 700, "Email: test@example.com")

+ c.drawString(100, 650, "Phone: 123-456-7890")

+ c.drawString(100, 600, "Name: John Doe")

+ c.drawString(100, 550, "Address: 123 Test Street, Test City, TC 12345")

+ c.showPage()

+

+ # Add second page

+ c.drawString(100, 750, "Second page content")

+ c.drawString(100, 700, "More test data: jane.doe@example.com")

+ c.drawString(100, 650, "Another phone: 987-654-3210")

+ c.save()

+

+ print(f"Created dummy PDF: {pdf_path}")

+

+ # Create Partnership Agreement Toolkit PDF

+ partnership_pdf_path = "example_data/Partnership-Agreement-Toolkit_0_0.pdf"

+ print(f"Creating PDF: {partnership_pdf_path}")

+ c = canvas.Canvas(partnership_pdf_path, pagesize=letter)

+ c.drawString(100, 750, "Partnership Agreement Toolkit")

+ c.drawString(100, 700, "This is a test partnership agreement document.")

+ c.drawString(100, 650, "Contact: partnership@example.com")

+ c.drawString(100, 600, "Phone: (555) 123-4567")

+ c.drawString(100, 550, "Address: 123 Partnership Street, City, State 12345")

+ c.showPage()

+

+ # Add second page

+ c.drawString(100, 750, "Page 2 - Partnership Details")

+ c.drawString(100, 700, "More partnership information here.")

+ c.drawString(100, 650, "Contact: info@partnership.org")

+ c.showPage()

+

+ # Add third page

+ c.drawString(100, 750, "Page 3 - Terms and Conditions")

+ c.drawString(100, 700, "Terms and conditions content.")

+ c.drawString(100, 650, "Legal contact: legal@partnership.org")

+ c.save()

+

+ print(f"Created dummy PDF: {partnership_pdf_path}")

+

+ # Create Graduate Job Cover Letter PDF

+ cover_letter_pdf_path = "example_data/graduate-job-example-cover-letter.pdf"

+ print(f"Creating PDF: {cover_letter_pdf_path}")

+ c = canvas.Canvas(cover_letter_pdf_path, pagesize=letter)

+ c.drawString(100, 750, "Cover Letter Example")

+ c.drawString(100, 700, "Dear Hiring Manager,")

+ c.drawString(100, 650, "I am writing to apply for the position.")

+ c.drawString(100, 600, "Contact: applicant@example.com")

+ c.drawString(100, 550, "Phone: (555) 987-6543")

+ c.drawString(100, 500, "Address: 456 Job Street, Employment City, EC 54321")

+ c.drawString(100, 450, "Sincerely,")

+ c.drawString(100, 400, "John Applicant")

+ c.save()

+

+ print(f"Created dummy PDF: {cover_letter_pdf_path}")

+

+ except ImportError:

+ print("ReportLab not available, skipping PDF creation")

+ # Create simple text files instead

+ with open(

+ "example_data/example_of_emails_sent_to_a_professor_before_applying.pdf",

+ "w",

+ ) as f:

+ f.write("This is a dummy PDF file for testing")

+

+ with open(

+ "example_data/Partnership-Agreement-Toolkit_0_0.pdf",

+ "w",

+ ) as f:

+ f.write("This is a dummy Partnership Agreement PDF file for testing")

+

+ with open(

+ "example_data/graduate-job-example-cover-letter.pdf",

+ "w",

+ ) as f:

+ f.write("This is a dummy cover letter PDF file for testing")

+

+ print("Created dummy text files instead of PDFs")

+

+

+def create_dummy_csv():

+ """Create dummy CSV files for testing."""

+ # Main CSV

+ csv_data = {

+ "Case Note": [

+ "Client visited for consultation regarding housing issues",

+ "Follow-up appointment scheduled for next week",

+ "Documentation submitted for review",

+ ],

+ "Client": ["John Smith", "Jane Doe", "Bob Johnson"],

+ "Date": ["2024-01-15", "2024-01-16", "2024-01-17"],

+ }

+ df = pd.DataFrame(csv_data)

+ df.to_csv("example_data/combined_case_notes.csv", index=False)

+ print("Created dummy CSV: example_data/combined_case_notes.csv")

+

+ # Lambeth CSV

+ lambeth_data = {

+ "text": [

+ "Lambeth 2030 vision document content",

+ "Our Future Our Lambeth strategic plan",

+ "Community engagement and development",

+ ],

+ "page": [1, 2, 3],

+ }

+ df_lambeth = pd.DataFrame(lambeth_data)

+ df_lambeth.to_csv(

+ "example_data/Lambeth_2030-Our_Future_Our_Lambeth.pdf.csv", index=False

+ )

+ print("Created dummy CSV: example_data/Lambeth_2030-Our_Future_Our_Lambeth.pdf.csv")

+

+

+def create_dummy_word_doc():

+ """Create dummy Word document."""

+ try:

+ from docx import Document

+

+ doc = Document()

+ doc.add_heading("Test Document for Redaction", 0)

+ doc.add_paragraph("This is a test document for redaction testing.")

+ doc.add_paragraph("Contact Information:")

+ doc.add_paragraph("Email: test@example.com")

+ doc.add_paragraph("Phone: 123-456-7890")

+ doc.add_paragraph("Name: John Doe")

+ doc.add_paragraph("Address: 123 Test Street, Test City, TC 12345")

+

+ doc.save("example_data/Bold minimalist professional cover letter.docx")

+ print("Created dummy Word document")

+

+ except ImportError:

+ print("python-docx not available, skipping Word document creation")

+

+

+def create_allow_deny_lists():

+ """Create dummy allow/deny lists."""

+ # Allow lists

+ allow_data = {"word": ["test", "example", "document"]}

+ pd.DataFrame(allow_data).to_csv(

+ "example_data/test_allow_list_graduate.csv", index=False

+ )

+ pd.DataFrame(allow_data).to_csv(

+ "example_data/test_allow_list_partnership.csv", index=False

+ )

+ print("Created allow lists")

+

+ # Deny lists

+ deny_data = {"word": ["sensitive", "confidential", "private"]}

+ pd.DataFrame(deny_data).to_csv(

+ "example_data/partnership_toolkit_redact_custom_deny_list.csv", index=False

+ )

+ pd.DataFrame(deny_data).to_csv(

+ "example_data/Partnership-Agreement-Toolkit_test_deny_list_para_single_spell.csv",

+ index=False,

+ )

+ print("Created deny lists")

+

+ # Whole page redaction list

+ page_data = {"page": [1, 2]}

+ pd.DataFrame(page_data).to_csv(

+ "example_data/partnership_toolkit_redact_some_pages.csv", index=False

+ )

+ print("Created whole page redaction list")

+

+

+def create_ocr_output():

+ """Create dummy OCR output CSV."""

+ ocr_data = {

+ "page": [1, 2, 3],

+ "text": [

+ "This is page 1 content with some text",

+ "This is page 2 content with different text",

+ "This is page 3 content with more text",

+ ],

+ "left": [0.1, 0.3, 0.5],

+ "top": [0.95, 0.92, 0.88],

+ "width": [0.05, 0.02, 0.02],

+ "height": [0.01, 0.02, 0.02],

+ "line": [1, 2, 3],

+ }

+ df = pd.DataFrame(ocr_data)

+ df.to_csv(

+ "example_data/example_outputs/doubled_output_joined.pdf_ocr_output.csv",

+ index=False,

+ )

+ print("Created dummy OCR output CSV")

+

+

+def create_dummy_image():

+ """Create dummy image for testing."""

+ try:

+ from PIL import Image, ImageDraw, ImageFont

+

+ img = Image.new("RGB", (800, 600), color="white")

+ draw = ImageDraw.Draw(img)

+

+ # Try to use a system font

+ try:

+ font = ImageFont.truetype(

+ "/usr/share/fonts/truetype/dejavu/DejaVuSans.ttf", 20

+ )

+ except Exception as e:

+ print(f"Error loading DejaVuSans font: {e}")

+ try:

+ font = ImageFont.truetype("/System/Library/Fonts/Arial.ttf", 20)

+ except Exception as e:

+ print(f"Error loading Arial font: {e}")

+ font = ImageFont.load_default()

+

+ # Add text to image

+ draw.text((50, 50), "Test Document for Redaction", fill="black", font=font)

+ draw.text((50, 100), "Email: test@example.com", fill="black", font=font)

+ draw.text((50, 150), "Phone: 123-456-7890", fill="black", font=font)

+ draw.text((50, 200), "Name: John Doe", fill="black", font=font)

+ draw.text((50, 250), "Address: 123 Test Street", fill="black", font=font)

+

+ img.save("example_data/example_complaint_letter.jpg")

+ print("Created dummy image")

+

+ except ImportError:

+ print("PIL not available, skipping image creation")

+

+

+def main():

+ """Main setup function."""

+ print("Setting up test data for GitHub Actions...")

+ print(f"Current working directory: {os.getcwd()}")

+ print(f"Python version: {sys.version}")

+

+ create_directories()

+ create_dummy_pdf()

+ create_dummy_csv()

+ create_dummy_word_doc()

+ create_allow_deny_lists()

+ create_ocr_output()

+ create_dummy_image()

+

+ print("\nTest data setup complete!")

+ print("Created files:")

+ for root, dirs, files in os.walk("example_data"):

+ for file in files:

+ file_path = os.path.join(root, file)

+ print(f" {file_path}")

+ # Verify the file exists and has content

+ if os.path.exists(file_path):

+ file_size = os.path.getsize(file_path)

+ print(f" Size: {file_size} bytes")

+ else:

+ print(" WARNING: File does not exist!")

+

+ # Verify critical files exist

+ critical_files = [

+ "example_data/Partnership-Agreement-Toolkit_0_0.pdf",

+ "example_data/graduate-job-example-cover-letter.pdf",

+ "example_data/example_of_emails_sent_to_a_professor_before_applying.pdf",

+ ]

+

+ print("\nVerifying critical test files:")

+ for file_path in critical_files:

+ if os.path.exists(file_path):

+ file_size = os.path.getsize(file_path)

+ print(f"✅ {file_path} exists ({file_size} bytes)")

+ else:

+ print(f"❌ {file_path} MISSING!")

+

+

+if __name__ == "__main__":

+ main()

diff --git a/.github/workflow_README.md b/.github/workflow_README.md

new file mode 100644

index 0000000000000000000000000000000000000000..19582f83810ccae7513bd8ef9a5d2b517b5c56ee

--- /dev/null

+++ b/.github/workflow_README.md

@@ -0,0 +1,183 @@

+# GitHub Actions CI/CD Setup

+

+This directory contains GitHub Actions workflows for automated testing of the CLI redaction application.

+

+## Workflows Overview

+

+### 1. **Simple Test Run** (`.github/workflows/simple-test.yml`)

+- **Purpose**: Basic test execution

+- **Triggers**: Push to main/dev, Pull requests

+- **OS**: Ubuntu Latest

+- **Python**: 3.11

+- **Features**:

+ - Installs system dependencies

+ - Sets up test data

+ - Runs CLI tests

+ - Runs pytest

+

+### 2. **Comprehensive CI/CD** (`.github/workflows/ci.yml`)

+- **Purpose**: Full CI/CD pipeline

+- **Features**:

+ - Linting (Ruff, Black)

+ - Unit tests (Python 3.10, 3.11, 3.12)

+ - Integration tests

+ - Security scanning (Safety, Bandit)

+ - Coverage reporting

+ - Package building (on main branch)

+

+### 3. **Multi-OS Testing** (`.github/workflows/multi-os-test.yml`)

+- **Purpose**: Cross-platform testing

+- **OS**: Ubuntu, macOS (Windows not included currently but may be reintroduced)

+- **Python**: 3.10, 3.11, 3.12

+- **Features**: Tests compatibility across different operating systems

+

+### 4. **Basic Test Suite** (`.github/workflows/test.yml`)

+- **Purpose**: Original test workflow

+- **Features**:

+ - Multiple Python versions

+ - System dependency installation

+ - Test data creation

+ - Coverage reporting

+

+## Setup Scripts

+

+### Test Data Setup (`.github/scripts/setup_test_data.py`)

+Creates dummy test files when example data is not available:

+- PDF documents

+- CSV files

+- Word documents

+- Images

+- Allow/deny lists

+- OCR output files

+

+## Usage

+

+### Running Tests Locally

+

+```bash

+# Install dependencies

+pip install -r requirements.txt

+pip install pytest pytest-cov

+

+# Setup test data

+python .github/scripts/setup_test_data.py

+

+# Run tests

+cd test

+python test.py

+```

+

+### GitHub Actions Triggers

+

+1. **Push to main/dev**: Runs all tests

+2. **Pull Request**: Runs tests and linting

+3. **Daily Schedule**: Runs tests at 2 AM UTC

+4. **Manual Trigger**: Can be triggered manually from GitHub

+

+## Configuration

+

+### Environment Variables

+- `PYTHON_VERSION`: Default Python version (3.11)

+- `PYTHONPATH`: Set automatically for test discovery

+

+### Caching

+- Pip dependencies are cached for faster builds

+- Cache key based on requirements.txt hash

+

+### Artifacts

+- Test results (JUnit XML)

+- Coverage reports (HTML, XML)

+- Security reports

+- Build artifacts (on main branch)

+

+## Test Data

+

+The workflows automatically create test data when example files are missing:

+

+### Required Files Created:

+- `example_data/example_of_emails_sent_to_a_professor_before_applying.pdf`

+- `example_data/combined_case_notes.csv`

+- `example_data/Bold minimalist professional cover letter.docx`

+- `example_data/example_complaint_letter.jpg`

+- `example_data/test_allow_list_*.csv`

+- `example_data/partnership_toolkit_redact_*.csv`

+- `example_data/example_outputs/doubled_output_joined.pdf_ocr_output.csv`

+

+### Dependencies Installed:

+- **System**: tesseract-ocr, poppler-utils, OpenGL libraries

+- **Python**: All requirements.txt packages + pytest, reportlab, pillow

+

+## Workflow Status

+

+### Success Criteria:

+- ✅ All tests pass

+- ✅ No linting errors

+- ✅ Security checks pass

+- ✅ Coverage meets threshold (if configured)

+

+### Failure Handling:

+- Tests are designed to skip gracefully if files are missing

+- AWS tests are expected to fail without credentials

+- System dependency failures are handled with fallbacks

+

+## Customization

+

+### Adding New Tests:

+1. Add test methods to `test/test.py`

+2. Update test data in `setup_test_data.py` if needed

+3. Tests will automatically run in all workflows

+

+### Modifying Workflows:

+1. Edit the appropriate `.yml` file

+2. Test locally first

+3. Push to trigger the workflow

+

+### Environment-Specific Settings:

+- **Ubuntu**: Full system dependencies

+- **Windows**: Python packages only

+- **macOS**: Homebrew dependencies

+

+## Troubleshooting

+

+### Common Issues:

+

+1. **Missing Dependencies**:

+ - Check system dependency installation

+ - Verify Python package versions

+

+2. **Test Failures**:

+ - Check test data creation

+ - Verify file paths

+ - Review test output logs

+

+3. **AWS Test Failures**:

+ - Expected without credentials

+ - Tests are designed to handle this gracefully

+

+4. **System Dependency Issues**:

+ - Different OS have different requirements

+ - Check the specific OS section in workflows

+

+### Debug Mode:

+Add `--verbose` or `-v` flags to pytest commands for more detailed output.

+

+## Security

+

+- Dependencies are scanned with Safety

+- Code is scanned with Bandit

+- No secrets are exposed in logs

+- Test data is temporary and cleaned up

+

+## Performance

+

+- Tests run in parallel where possible

+- Dependencies are cached

+- Only necessary system packages are installed

+- Test data is created efficiently

+

+## Monitoring

+

+- Workflow status is visible in GitHub Actions tab

+- Coverage reports are uploaded to Codecov

+- Test results are available as artifacts

+- Security reports are generated and stored

diff --git a/.github/workflows/archive_workflows/multi-os-test.yml b/.github/workflows/archive_workflows/multi-os-test.yml

new file mode 100644

index 0000000000000000000000000000000000000000..de332621c498db6694aebbbe915f2cb96b38e136

--- /dev/null

+++ b/.github/workflows/archive_workflows/multi-os-test.yml

@@ -0,0 +1,109 @@

+name: Multi-OS Test

+

+on:

+ push:

+ branches: [ main ]

+ pull_request:

+ branches: [ main ]

+

+permissions:

+ contents: read

+ actions: read

+

+jobs:

+ test:

+ runs-on: ${{ matrix.os }}

+ strategy:

+ matrix:

+ os: [ubuntu-latest, macos-latest] # windows-latest, not included as tesseract cannot be installed silently

+ python-version: ["3.11", "3.12", "3.13"]

+ exclude:

+ # Exclude some combinations to reduce CI time

+ #- os: windows-latest

+ # python-version: ["3.12", "3.13"]

+ - os: macos-latest

+ python-version: ["3.12", "3.13"]

+

+ steps:

+ - uses: actions/checkout@v6

+

+ - name: Set up Python ${{ matrix.python-version }}

+ uses: actions/setup-python@v6

+ with:

+ python-version: ${{ matrix.python-version }}

+

+ - name: Install system dependencies (Ubuntu)

+ if: matrix.os == 'ubuntu-latest'

+ run: |

+ sudo apt-get update

+ sudo apt-get install -y \

+ tesseract-ocr \

+ tesseract-ocr-eng \

+ poppler-utils \

+ libgl1-mesa-dri \

+ libglib2.0-0

+

+ - name: Install system dependencies (macOS)

+ if: matrix.os == 'macos-latest'

+ run: |

+ brew install tesseract poppler

+

+ - name: Install system dependencies (Windows)

+ if: matrix.os == 'windows-latest'

+ run: |

+ # Create tools directory

+ if (!(Test-Path "C:\tools")) {

+ mkdir C:\tools

+ }

+

+ # Download and install Tesseract

+ $tesseractUrl = "https://github.com/tesseract-ocr/tesseract/releases/download/5.5.0/tesseract-ocr-w64-setup-5.5.0.20241111.exe"

+ $tesseractInstaller = "C:\tools\tesseract-installer.exe"

+ Invoke-WebRequest -Uri $tesseractUrl -OutFile $tesseractInstaller

+

+ # Install Tesseract silently

+ Start-Process -FilePath $tesseractInstaller -ArgumentList "/S", "/D=C:\tools\tesseract" -Wait

+

+ # Download and extract Poppler

+ $popplerUrl = "https://github.com/oschwartz10612/poppler-windows/releases/download/v25.07.0-0/Release-25.07.0-0.zip"

+ $popplerZip = "C:\tools\poppler.zip"

+ Invoke-WebRequest -Uri $popplerUrl -OutFile $popplerZip

+

+ # Extract Poppler

+ Expand-Archive -Path $popplerZip -DestinationPath C:\tools\poppler -Force

+

+ # Add to PATH

+ echo "C:\tools\tesseract" >> $env:GITHUB_PATH

+ echo "C:\tools\poppler\poppler-25.07.0\Library\bin" >> $env:GITHUB_PATH

+

+ # Set environment variables for your application

+ echo "TESSERACT_FOLDER=C:\tools\tesseract" >> $env:GITHUB_ENV

+ echo "POPPLER_FOLDER=C:\tools\poppler\poppler-25.07.0\Library\bin" >> $env:GITHUB_ENV

+ echo "TESSERACT_DATA_FOLDER=C:\tools\tesseract\tessdata" >> $env:GITHUB_ENV

+

+ # Verify installation using full paths (since PATH won't be updated in current session)

+ & "C:\tools\tesseract\tesseract.exe" --version

+ & "C:\tools\poppler\poppler-25.07.0\Library\bin\pdftoppm.exe" -v

+

+ - name: Install Python dependencies

+ run: |

+ python -m pip install --upgrade pip

+ pip install -r requirements.txt

+ pip install pytest pytest-cov reportlab pillow

+

+ - name: Download spaCy model

+ run: |

+ python -m spacy download en_core_web_lg

+

+ - name: Setup test data

+ run: |

+ python .github/scripts/setup_test_data.py

+

+ - name: Run CLI tests

+ run: |

+ cd test

+ python test.py

+

+ - name: Run tests with pytest

+ run: |

+ pytest test/test.py -v --tb=short

diff --git a/.github/workflows/ci.yml b/.github/workflows/ci.yml

new file mode 100644

index 0000000000000000000000000000000000000000..dc4743c0118c4651dd836a65bb45c52cef9d8881

--- /dev/null

+++ b/.github/workflows/ci.yml

@@ -0,0 +1,260 @@

+name: CI/CD Pipeline

+

+on:

+ push:

+ branches: [ main ]

+ pull_request:

+ branches: [ main ]

+ #schedule:

+ # Run tests daily at 2 AM UTC

+ # - cron: '0 2 * * *'

+

+permissions:

+ contents: read

+ actions: read

+ pull-requests: write

+ issues: write

+

+env:

+ PYTHON_VERSION: "3.11"

+

+jobs:

+ lint:

+ runs-on: ubuntu-latest

+ steps:

+ - uses: actions/checkout@v6

+

+ - name: Set up Python

+ uses: actions/setup-python@v6

+ with:

+ python-version: ${{ env.PYTHON_VERSION }}

+

+ - name: Install dependencies

+ run: |

+ python -m pip install --upgrade pip

+ pip install ruff black

+

+ - name: Run Ruff linter

+ run: ruff check .

+

+ - name: Run Black formatter check

+ run: black --check .

+

+ test-unit:

+ runs-on: ubuntu-latest

+ strategy:

+ matrix:

+ python-version: [3.11, 3.12, 3.13]

+

+ steps:

+ - uses: actions/checkout@v6

+

+ - name: Set up Python ${{ matrix.python-version }}

+ uses: actions/setup-python@v6

+ with:

+ python-version: ${{ matrix.python-version }}

+

+ - name: Cache pip dependencies

+ uses: actions/cache@v5

+ with:

+ path: ~/.cache/pip

+ key: ${{ runner.os }}-pip-${{ hashFiles('**/requirements.txt') }}

+ restore-keys: |

+ ${{ runner.os }}-pip-

+

+ - name: Install system dependencies

+ run: |

+ sudo apt-get update

+ sudo apt-get install -y \

+ tesseract-ocr \

+ tesseract-ocr-eng \

+ poppler-utils \

+ libgl1-mesa-dri \

+ libglib2.0-0 \

+ libsm6 \

+ libxext6 \

+ libxrender-dev \

+ libgomp1

+

+ - name: Install Python dependencies

+ run: |

+ python -m pip install --upgrade pip

+ pip install -r requirements_lightweight.txt

+ pip install pytest pytest-cov pytest-html pytest-xdist reportlab pillow

+

+ - name: Download spaCy model

+ run: |

+ python -m spacy download en_core_web_lg

+

+ - name: Setup test data

+ run: |

+ python .github/scripts/setup_test_data.py

+ echo "Setup script completed. Checking results:"

+ ls -la example_data/ || echo "example_data directory not found"

+

+ - name: Verify test data files

+ run: |

+ echo "Checking if critical test files exist:"

+ ls -la example_data/

+ echo "Checking for specific PDF files:"

+ ls -la example_data/*.pdf || echo "No PDF files found"

+ echo "Checking file sizes:"

+ find example_data -name "*.pdf" -exec ls -lh {} \;

+

+ - name: Clean up problematic config files

+ run: |

+ rm -f config*.py || true

+

+ - name: Run CLI tests

+ run: |

+ cd test

+ python test.py

+

+ - name: Run tests with pytest

+ run: |

+ pytest test/test.py -v --tb=short --junitxml=test-results.xml

+

+ - name: Run tests with coverage

+ run: |

+ pytest test/test.py --cov=. --cov-config=.coveragerc --cov-report=xml --cov-report=html --cov-report=term

+

+ #- name: Upload coverage to Codecov - not necessary

+ # uses: codecov/codecov-action@v3

+ # if: matrix.python-version == '3.11'

+ # with:

+ # file: ./coverage.xml

+ # flags: unittests

+ # name: codecov-umbrella

+ # fail_ci_if_error: false

+

+ - name: Upload test results

+ uses: actions/upload-artifact@v6

+ if: always()

+ with:

+ name: test-results-python-${{ matrix.python-version }}

+ path: |

+ test-results.xml

+ htmlcov/

+ coverage.xml

+

+ test-integration:

+ runs-on: ubuntu-latest

+ needs: [lint, test-unit]

+

+ steps:

+ - uses: actions/checkout@v6

+

+ - name: Set up Python

+ uses: actions/setup-python@v6

+ with:

+ python-version: ${{ env.PYTHON_VERSION }}

+

+ - name: Install dependencies

+ run: |

+ python -m pip install --upgrade pip

+ pip install -r requirements_lightweight.txt

+ pip install pytest pytest-cov reportlab pillow

+

+ - name: Install system dependencies

+ run: |

+ sudo apt-get update

+ sudo apt-get install -y \

+ tesseract-ocr \

+ tesseract-ocr-eng \

+ poppler-utils \

+ libgl1-mesa-dri \

+ libglib2.0-0

+

+ - name: Download spaCy model

+ run: |

+ python -m spacy download en_core_web_lg

+

+ - name: Setup test data

+ run: |

+ python .github/scripts/setup_test_data.py

+ echo "Setup script completed. Checking results:"

+ ls -la example_data/ || echo "example_data directory not found"

+

+ - name: Verify test data files

+ run: |

+ echo "Checking if critical test files exist:"

+ ls -la example_data/

+ echo "Checking for specific PDF files:"

+ ls -la example_data/*.pdf || echo "No PDF files found"

+ echo "Checking file sizes:"

+ find example_data -name "*.pdf" -exec ls -lh {} \;

+

+ - name: Run integration tests

+ run: |

+ cd test

+ python demo_single_test.py

+

+ - name: Test CLI help

+ run: |

+ python cli_redact.py --help

+

+ - name: Test CLI version

+ run: |

+ python -c "import sys; print(f'Python {sys.version}')"

+

+ security:

+ runs-on: ubuntu-latest

+ steps:

+ - uses: actions/checkout@v6

+

+ - name: Set up Python

+ uses: actions/setup-python@v6

+ with:

+ python-version: ${{ env.PYTHON_VERSION }}

+

+ - name: Install dependencies

+ run: |

+ python -m pip install --upgrade pip

+ pip install safety bandit

+

+ #- name: Run safety scan - removed as now requires login

+ # run: |

+ # safety scan -r requirements.txt

+

+ - name: Run bandit security check

+ run: |

+ bandit -r . -f json -o bandit-report.json || true

+

+ - name: Upload security report

+ uses: actions/upload-artifact@v6

+ if: always()

+ with:

+ name: security-report

+ path: bandit-report.json

+

+ build:

+ runs-on: ubuntu-latest

+ needs: [lint, test-unit]

+ if: github.event_name == 'push' && github.ref == 'refs/heads/main'

+

+ steps:

+ - uses: actions/checkout@v6

+

+ - name: Set up Python

+ uses: actions/setup-python@v6

+ with:

+ python-version: ${{ env.PYTHON_VERSION }}

+

+ - name: Install build dependencies

+ run: |

+ python -m pip install --upgrade pip

+ pip install build twine

+

+ - name: Build package

+ run: |

+ python -m build

+

+ - name: Check package

+ run: |

+ twine check dist/*

+

+ - name: Upload build artifacts

+ uses: actions/upload-artifact@v6

+ with:

+ name: dist

+ path: dist/

diff --git a/.github/workflows/simple-test.yml b/.github/workflows/simple-test.yml

new file mode 100644

index 0000000000000000000000000000000000000000..6a4d430a18d030fb8a7f5491963536253422143c

--- /dev/null

+++ b/.github/workflows/simple-test.yml

@@ -0,0 +1,67 @@

+name: Simple Test Run

+

+on:

+ push:

+ branches: [ dev ]

+ pull_request:

+ branches: [ dev ]

+

+permissions:

+ contents: read

+ actions: read

+

+jobs:

+ test:

+ runs-on: ubuntu-latest

+

+ steps:

+ - uses: actions/checkout@v6

+

+ - name: Set up Python 3.12

+ uses: actions/setup-python@v6

+ with:

+ python-version: "3.12"

+

+ - name: Install system dependencies

+ run: |

+ sudo apt-get update

+ sudo apt-get install -y \

+ tesseract-ocr \

+ tesseract-ocr-eng \

+ poppler-utils \

+ libgl1-mesa-dri \

+ libglib2.0-0

+

+ - name: Install Python dependencies

+ run: |

+ python -m pip install --upgrade pip

+ pip install -r requirements_lightweight.txt

+ pip install pytest pytest-cov reportlab pillow

+

+ - name: Download spaCy model

+ run: |

+ python -m spacy download en_core_web_lg

+

+ - name: Setup test data

+ run: |

+ python .github/scripts/setup_test_data.py

+ echo "Setup script completed. Checking results:"

+ ls -la example_data/ || echo "example_data directory not found"

+

+ - name: Verify test data files

+ run: |

+ echo "Checking if critical test files exist:"

+ ls -la example_data/

+ echo "Checking for specific PDF files:"

+ ls -la example_data/*.pdf || echo "No PDF files found"

+ echo "Checking file sizes:"

+ find example_data -name "*.pdf" -exec ls -lh {} \;

+

+ - name: Run CLI tests

+ run: |

+ cd test

+ python test.py

+

+ - name: Run tests with pytest

+ run: |

+ pytest test/test.py -v --tb=short

diff --git a/.github/workflows/sync_to_hf.yml b/.github/workflows/sync_to_hf.yml

new file mode 100644

index 0000000000000000000000000000000000000000..12624f979f7fa9734c9c5824a7cd535b143e0ea8

--- /dev/null

+++ b/.github/workflows/sync_to_hf.yml

@@ -0,0 +1,53 @@

+name: Sync to Hugging Face hub

+on:

+ push:

+ branches: [main]

+

+permissions:

+ contents: read

+

+jobs:

+ sync-to-hub:

+ runs-on: ubuntu-latest

+ steps:

+ - uses: actions/checkout@v6

+ with:

+ fetch-depth: 1 # Only get the latest state

+ lfs: true # Download actual LFS files so they can be pushed

+

+ - name: Install Git LFS

+ run: git lfs install

+

+ - name: Recreate repo history (single-commit force push)

+ run: |

+ # 1. Capture the message BEFORE we delete the .git folder

+ COMMIT_MSG=$(git log -1 --pretty=%B)

+ echo "Syncing commit message: $COMMIT_MSG"

+

+ # 2. DELETE the .git folder.

+ # This turns the repo into a standard folder of files.

+ rm -rf .git

+

+ # 3. Re-initialize a brand new git repo

+ git init -b main

+ git config --global user.name "$HF_USERNAME"

+ git config --global user.email "$HF_EMAIL"

+

+ # 4. Re-install LFS (needs to be done after git init)

+ git lfs install

+

+ # 5. Add the remote

+ git remote add hf https://$HF_USERNAME:$HF_TOKEN@huggingface.co/spaces/$HF_USERNAME/$HF_REPO_ID

+

+ # 6. Add all files

+ # Since this is a fresh init, Git sees EVERY file as "New"

+ git add .

+

+ # 7. Commit and Force Push

+ git commit -m "Sync: $COMMIT_MSG"

+ git push --force hf main

+ env:

+ HF_TOKEN: ${{ secrets.HF_TOKEN }}

+ HF_USERNAME: ${{ secrets.HF_USERNAME }}

+ HF_EMAIL: ${{ secrets.HF_EMAIL }}

+ HF_REPO_ID: ${{ secrets.HF_REPO_ID }}

\ No newline at end of file

diff --git a/.github/workflows/sync_to_hf_zero_gpu.yml b/.github/workflows/sync_to_hf_zero_gpu.yml

new file mode 100644

index 0000000000000000000000000000000000000000..662b836210a1295c5a8dda0be4d998e08eda6454

--- /dev/null

+++ b/.github/workflows/sync_to_hf_zero_gpu.yml

@@ -0,0 +1,53 @@

+name: Sync to Hugging Face hub Zero GPU

+on:

+ push:

+ branches: [dev]

+

+permissions:

+ contents: read

+

+jobs:

+ sync-to-hub-zero-gpu:

+ runs-on: ubuntu-latest

+ steps:

+ - uses: actions/checkout@v6

+ with:

+ fetch-depth: 1 # Only get the latest state

+ lfs: true # Download actual LFS files so they can be pushed

+

+ - name: Install Git LFS

+ run: git lfs install

+

+ - name: Recreate repo history (single-commit force push)

+ run: |

+ # 1. Capture the message BEFORE we delete the .git folder

+ COMMIT_MSG=$(git log -1 --pretty=%B)

+ echo "Syncing commit message: $COMMIT_MSG"

+

+ # 2. DELETE the .git folder.

+ # This turns the repo into a standard folder of files.

+ rm -rf .git

+

+ # 3. Re-initialize a brand new git repo

+ git init -b main

+ git config --global user.name "$HF_USERNAME"

+ git config --global user.email "$HF_EMAIL"

+

+ # 4. Re-install LFS (needs to be done after git init)

+ git lfs install

+

+ # 5. Add the remote

+ git remote add hf https://$HF_USERNAME:$HF_TOKEN@huggingface.co/spaces/$HF_USERNAME/$HF_REPO_ID_ZERO_GPU

+

+ # 6. Add all files

+ # Since this is a fresh init, Git sees EVERY file as "New"

+ git add .

+

+ # 7. Commit and Force Push

+ git commit -m "Sync: $COMMIT_MSG"

+ git push --force hf main

+ env:

+ HF_TOKEN: ${{ secrets.HF_TOKEN }}

+ HF_USERNAME: ${{ secrets.HF_USERNAME }}

+ HF_EMAIL: ${{ secrets.HF_EMAIL }}

+ HF_REPO_ID_ZERO_GPU: ${{ secrets.HF_REPO_ID_ZERO_GPU }}

\ No newline at end of file

diff --git a/.gitignore b/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..496a491daefe5f3d15658cd2b0a38f78d57d096a

--- /dev/null

+++ b/.gitignore

@@ -0,0 +1,45 @@

+*.url

+*.ipynb

+*.pyc

+.venv/*

+examples/*

+processing/*

+input/*

+output/*

+tools/__pycache__/*

+old_code/*

+tesseract/*

+poppler/*

+build/*

+dist/*

+build_deps/*

+logs/*

+usage/*

+feedback/*

+config/*

+user_guide/*

+cdk/config/*

+cdk/cdk.out/*

+cdk/archive/*

+tld/*

+tmp/*

+docs/*

+cdk.out/*

+cdk.json

+cdk.context.json

+.quarto/*

+/.quarto/

+/_site/

+test/config/*

+test/feedback/*

+test/input/*

+test/logs/*

+test/output/*

+test/tmp/*

+test/usage/*

+.ruff_cache/*

+model_cache/*

+sanitized_file/*

+src/doc_redaction.egg-info/*

+

+**/*.quarto_ipynb

diff --git a/Dockerfile b/Dockerfile

new file mode 100644

index 0000000000000000000000000000000000000000..a4992aeb35eea541eaaf01ad1f05d67483f8d418

--- /dev/null

+++ b/Dockerfile

@@ -0,0 +1,222 @@

+# Stage 1: Build dependencies and download models

+FROM public.ecr.aws/docker/library/python:3.12.13-slim-trixie AS builder

+

+# Install system dependencies

+RUN apt-get update \

+ && apt-get upgrade -y \

+ && apt-get install -y --no-install-recommends \

+ g++ \

+ make \

+ cmake \

+ unzip \

+ libcurl4-openssl-dev \

+ git \

+ && pip install --upgrade pip \

+ && apt-get clean \

+ && rm -rf /var/lib/apt/lists/*

+

+WORKDIR /src

+

+COPY requirements_lightweight.txt .

+

+RUN pip install --verbose --no-cache-dir --target=/install -r requirements_lightweight.txt && rm requirements_lightweight.txt

+

+# Optionally install PaddleOCR if the INSTALL_PADDLEOCR environment variable is set to True. Note that GPU-enabled PaddleOCR is unlikely to work in the same environment as a GPU-enabled version of PyTorch, so it is recommended to install PaddleOCR as a CPU-only version if you want to use GPU-enabled PyTorch.

+

+ARG INSTALL_PADDLEOCR=False

+ENV INSTALL_PADDLEOCR=${INSTALL_PADDLEOCR}

+

+ARG PADDLE_GPU_ENABLED=False

+ENV PADDLE_GPU_ENABLED=${PADDLE_GPU_ENABLED}

+

+RUN if [ "$INSTALL_PADDLEOCR" = "True" ] && [ "$PADDLE_GPU_ENABLED" = "False" ]; then \

+ pip install --verbose --no-cache-dir --target=/install "protobuf<=7.34.0" && \

+ pip install --verbose --no-cache-dir --target=/install "paddlepaddle<=3.2.1" && \

+ pip install --verbose --no-cache-dir --target=/install "paddleocr<=3.3.0"; \

+elif [ "$INSTALL_PADDLEOCR" = "True" ] && [ "$PADDLE_GPU_ENABLED" = "True" ]; then \

+ pip install --verbose --no-cache-dir --target=/install "protobuf<=7.34.0" && \

+ pip install --verbose --no-cache-dir --target=/install "paddlepaddle-gpu<=3.2.1" --index-url https://www.paddlepaddle.org.cn/packages/stable/cu129/ && \

+ pip install --verbose --no-cache-dir --target=/install "paddleocr<=3.3.0"; \

+fi

+

+ARG INSTALL_VLM=False

+ENV INSTALL_VLM=${INSTALL_VLM}

+

+ARG TORCH_GPU_ENABLED=False

+ENV TORCH_GPU_ENABLED=${TORCH_GPU_ENABLED}

+

+# Optionally install VLM/LLM packages if the INSTALL_VLM environment variable is set to True.

+RUN if [ "$INSTALL_VLM" = "True" ] && [ "$TORCH_GPU_ENABLED" = "False" ]; then \

+ pip install --verbose --no-cache-dir --target=/install \

+ "torch==2.9.1+cpu" \

+ "torchvision==0.24.1+cpu" \

+ "transformers<=5.30.0" \

+ "accelerate<=1.13.0" \

+ "bitsandbytes<=0.49.2" \

+ "sentencepiece<=0.2.1" \

+ --extra-index-url https://download.pytorch.org/whl/cpu; \

+elif [ "$INSTALL_VLM" = "True" ] && [ "$TORCH_GPU_ENABLED" = "True" ]; then \

+ pip install --verbose --no-cache-dir --target=/install "torch<=2.8.0" --index-url https://download.pytorch.org/whl/cu129 && \

+ pip install --verbose --no-cache-dir --target=/install "torchvision<=0.23.0" --index-url https://download.pytorch.org/whl/cu129 && \

+ pip install --verbose --no-cache-dir --target=/install \

+ "transformers<=5.30.0" \

+ "accelerate<=1.13.0" \

+ "bitsandbytes<=0.49.2" \

+ "sentencepiece<=0.2.1" && \

+ pip install --verbose --no-cache-dir --target=/install "optimum<=2.1.0" && \

+ pip install --verbose --no-cache-dir --target=/install https://github.com/Dao-AILab/flash-attention/releases/download/v2.8.3/flash_attn-2.8.3+cu12torch2.8cxx11abiTRUE-cp312-cp312-linux_x86_64.whl && \

+ pip install --verbose --no-cache-dir --target=/install https://github.com/ModelCloud/GPTQModel/releases/download/v5.8.0/gptqmodel-5.8.0+cu128torch2.8-cp312-cp312-linux_x86_64.whl; \

+fi

+

+# ===================================================================

+# Stage 2: A common base for both Lambda and Gradio

+# ===================================================================

+FROM public.ecr.aws/docker/library/python:3.12.13-slim-trixie AS base

+

+# MUST re-declare ARGs in every stage where they are used in RUN commands

+ARG TORCH_GPU_ENABLED=False

+ARG PADDLE_GPU_ENABLED=False

+

+ENV TORCH_GPU_ENABLED=${TORCH_GPU_ENABLED}

+ENV PADDLE_GPU_ENABLED=${PADDLE_GPU_ENABLED}

+

+RUN apt-get update && apt-get install -y --no-install-recommends \

+ tesseract-ocr \

+ poppler-utils \

+ libgl1 \

+ libglib2.0-0 && \

+ if [ "$TORCH_GPU_ENABLED" = "True" ] || [ "$PADDLE_GPU_ENABLED" = "True" ]; then \

+ apt-get install -y --no-install-recommends libgomp1; \

+ fi && \

+ apt-get clean && rm -rf /var/lib/apt/lists/*

+

+ENV APP_HOME=/home/user

+

+# Set env variables for Gradio & other apps

+ENV GRADIO_TEMP_DIR=/tmp/gradio_tmp/ \

+ TLDEXTRACT_CACHE=/tmp/tld/ \

+ MPLCONFIGDIR=/tmp/matplotlib_cache/ \

+ GRADIO_OUTPUT_FOLDER=$APP_HOME/app/output/ \

+ GRADIO_INPUT_FOLDER=$APP_HOME/app/input/ \

+ FEEDBACK_LOGS_FOLDER=$APP_HOME/app/feedback/ \

+ ACCESS_LOGS_FOLDER=$APP_HOME/app/logs/ \

+ USAGE_LOGS_FOLDER=$APP_HOME/app/usage/ \

+ CONFIG_FOLDER=$APP_HOME/app/config/ \

+ XDG_CACHE_HOME=/tmp/xdg_cache/user_1000 \

+ TESSERACT_DATA_FOLDER=/usr/share/tessdata \

+ GRADIO_SERVER_NAME=0.0.0.0 \

+ GRADIO_SERVER_PORT=7860 \

+ PATH=$APP_HOME/.local/bin:$PATH \

+ PYTHONPATH=$APP_HOME/app \

+ PYTHONUNBUFFERED=1 \

+ PYTHONDONTWRITEBYTECODE=1 \

+ GRADIO_ALLOW_FLAGGING=never \

+ GRADIO_NUM_PORTS=1 \

+ GRADIO_ANALYTICS_ENABLED=False

+

+# Copy Python packages from the builder stage

+COPY --from=builder /install /usr/local/lib/python3.12/site-packages/

+COPY --from=builder /install/bin /usr/local/bin/

+

+# Reinstall protobuf into the final site-packages. Builder uses multiple `pip install --target=/install`

+# passes; that can break the `google` namespace so `google.protobuf` is missing and Paddle fails at import.

+RUN pip install --no-cache-dir "protobuf<=7.34.0"

+

+# Copy your application code and entrypoint

+COPY . ${APP_HOME}/app

+COPY entrypoint.sh ${APP_HOME}/app/entrypoint.sh

+# Fix line endings and set execute permissions

+RUN sed -i 's/\r$//' ${APP_HOME}/app/entrypoint.sh \

+ && chmod +x ${APP_HOME}/app/entrypoint.sh

+

+WORKDIR ${APP_HOME}/app

+

+# ===================================================================

+# FINAL Stage 3: The Lambda Image (runs as root for simplicity)

+# ===================================================================

+FROM base AS lambda

+# Set runtime ENV for Lambda mode

+ENV APP_MODE=lambda

+ENTRYPOINT ["/home/user/app/entrypoint.sh"]

+CMD ["lambda_entrypoint.lambda_handler"]

+

+# ===================================================================

+# FINAL Stage 4: The Gradio Image (runs as a secure, non-root user)

+# ===================================================================

+FROM base AS gradio

+# Set runtime ENV for Gradio mode

+ENV APP_MODE=gradio

+

+# Create non-root user

+RUN useradd -m -u 1000 user

+

+# Create the base application directory and set its ownership

+RUN mkdir -p ${APP_HOME}/app && chown user:user ${APP_HOME}/app

+

+# Create required sub-folders within the app directory and set their permissions

+# This ensures these specific directories are owned by 'user'

+RUN mkdir -p \

+ ${APP_HOME}/app/output \

+ ${APP_HOME}/app/input \

+ ${APP_HOME}/app/logs \

+ ${APP_HOME}/app/usage \

+ ${APP_HOME}/app/feedback \

+ ${APP_HOME}/app/config \

+ && chown user:user \

+ ${APP_HOME}/app/output \

+ ${APP_HOME}/app/input \

+ ${APP_HOME}/app/logs \

+ ${APP_HOME}/app/usage \

+ ${APP_HOME}/app/feedback \

+ ${APP_HOME}/app/config \

+ && chmod 755 \

+ ${APP_HOME}/app/output \

+ ${APP_HOME}/app/input \

+ ${APP_HOME}/app/logs \

+ ${APP_HOME}/app/usage \

+ ${APP_HOME}/app/feedback \

+ ${APP_HOME}/app/config

+

+# Now handle the /tmp and /var/tmp directories and their subdirectories, paddle, spacy, tessdata

+RUN mkdir -p /tmp/gradio_tmp /tmp/tld /tmp/matplotlib_cache /tmp /var/tmp ${XDG_CACHE_HOME} \

+ && chown user:user /tmp /var/tmp /tmp/gradio_tmp /tmp/tld /tmp/matplotlib_cache ${XDG_CACHE_HOME} \

+ && chmod 1777 /tmp /var/tmp /tmp/gradio_tmp /tmp/tld /tmp/matplotlib_cache \

+ && chmod 700 ${XDG_CACHE_HOME} \

+ && mkdir -p ${APP_HOME}/.paddlex \

+ && chown user:user ${APP_HOME}/.paddlex \

+ && chmod 755 ${APP_HOME}/.paddlex \

+ && mkdir -p ${APP_HOME}/.local/share/spacy/data \

+ && chown user:user ${APP_HOME}/.local/share/spacy/data \

+ && chmod 755 ${APP_HOME}/.local/share/spacy/data \

+ && mkdir -p /usr/share/tessdata \

+ && chown user:user /usr/share/tessdata \

+ && chmod 755 /usr/share/tessdata

+

+# Fix apply user ownership to all files in the home directory

+RUN chown -R user:user /home/user

+

+# Set permissions for Python executable

+RUN chmod 755 /usr/local/bin/python

+

+# Declare volumes (NOTE: runtime mounts will override permissions — handle with care)

+VOLUME ["/tmp/matplotlib_cache"]

+VOLUME ["/tmp/gradio_tmp"]

+VOLUME ["/tmp/tld"]

+VOLUME ["/home/user/app/output"]

+VOLUME ["/home/user/app/input"]

+VOLUME ["/home/user/app/logs"]

+VOLUME ["/home/user/app/usage"]

+VOLUME ["/home/user/app/feedback"]

+VOLUME ["/home/user/app/config"]

+VOLUME ["/home/user/.paddlex"]

+VOLUME ["/home/user/.local/share/spacy/data"]

+VOLUME ["/usr/share/tessdata"]

+VOLUME ["/tmp"]

+VOLUME ["/var/tmp"]

+

+USER user

+

+EXPOSE $GRADIO_SERVER_PORT

+

+ENTRYPOINT ["/home/user/app/entrypoint.sh"]

+CMD ["python", "app.py"]

\ No newline at end of file

diff --git a/README.md b/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..92103d802e441da25cbc509be6015e053b3f5300

--- /dev/null

+++ b/README.md

@@ -0,0 +1,1471 @@

+---

+title: Document redaction

+emoji: 📝

+colorFrom: blue

+colorTo: yellow

+sdk: docker

+app_file: app.py

+pinned: true

+license: agpl-3.0

+short_description: OCR / redact PDF documents and tabular data

+---

+# Document redaction

+

+version: 2.0.1

+

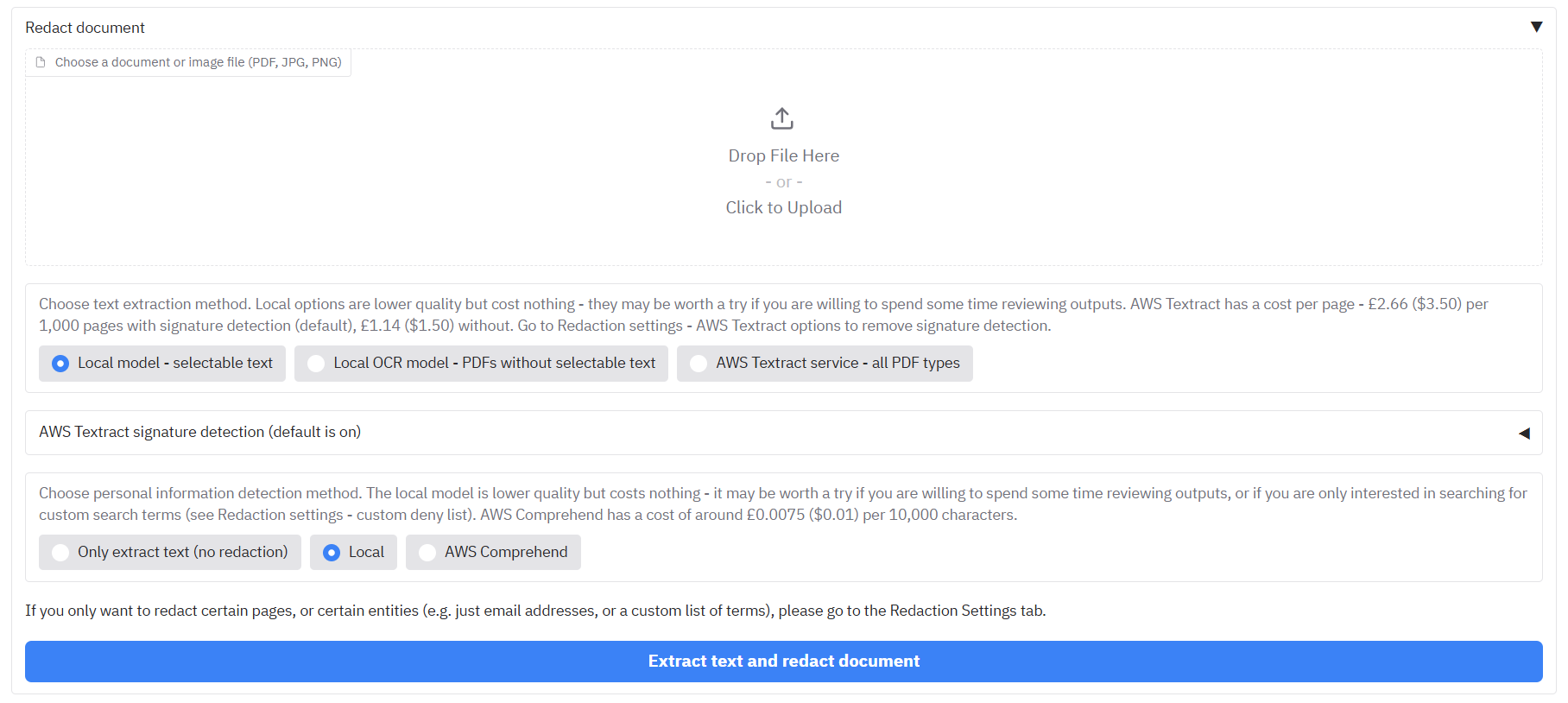

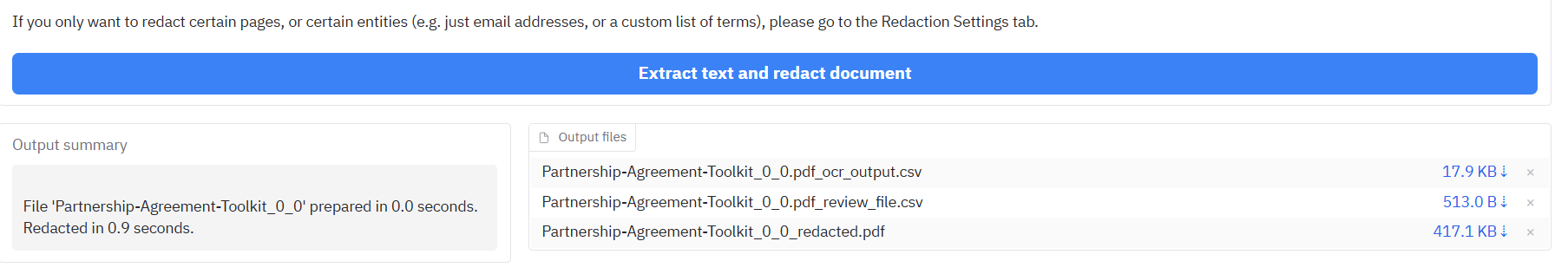

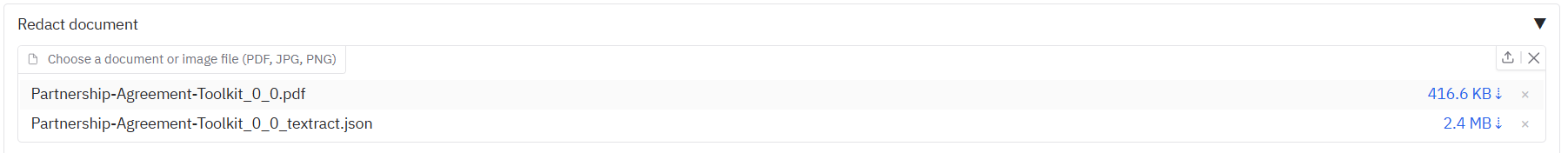

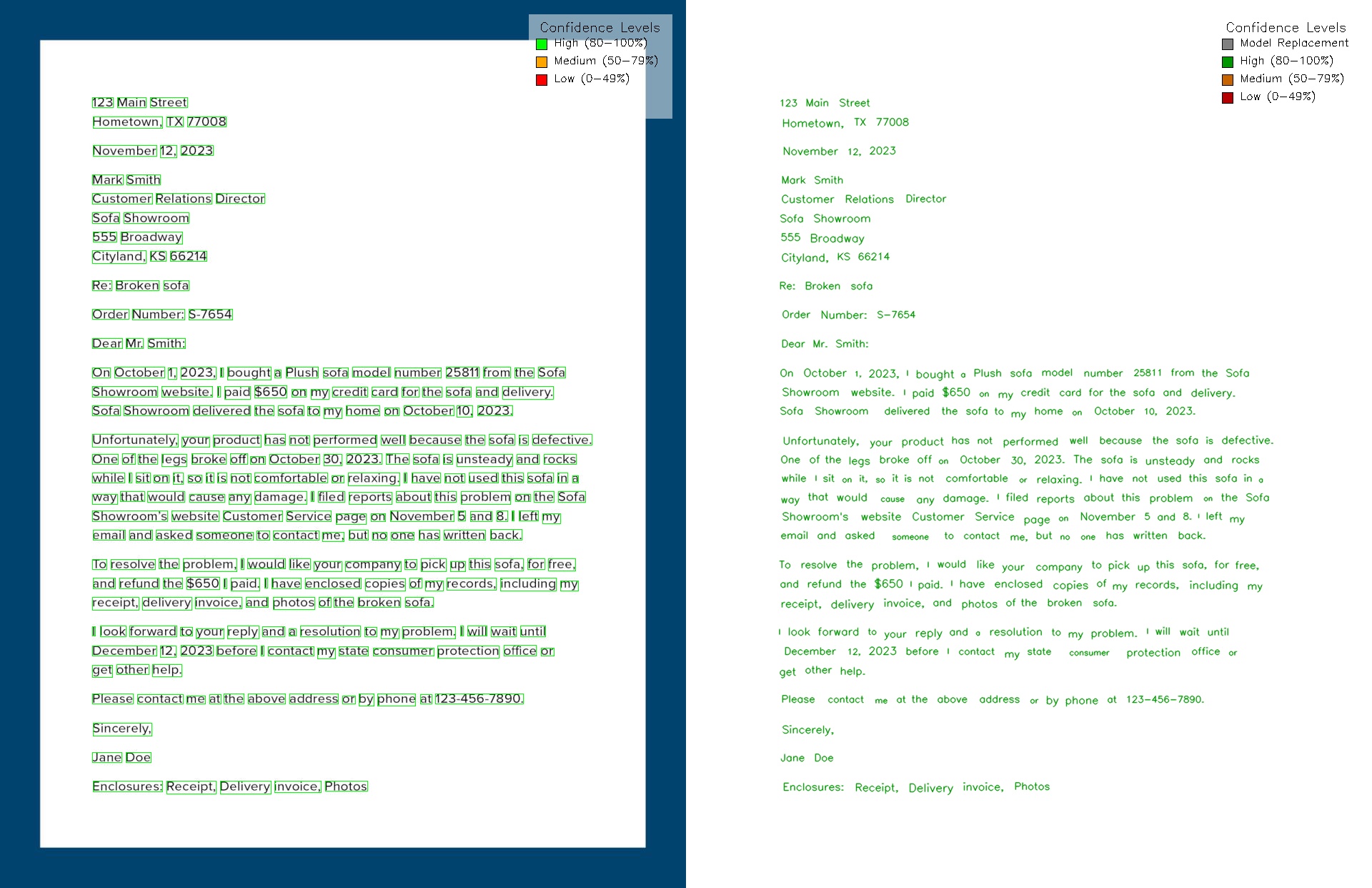

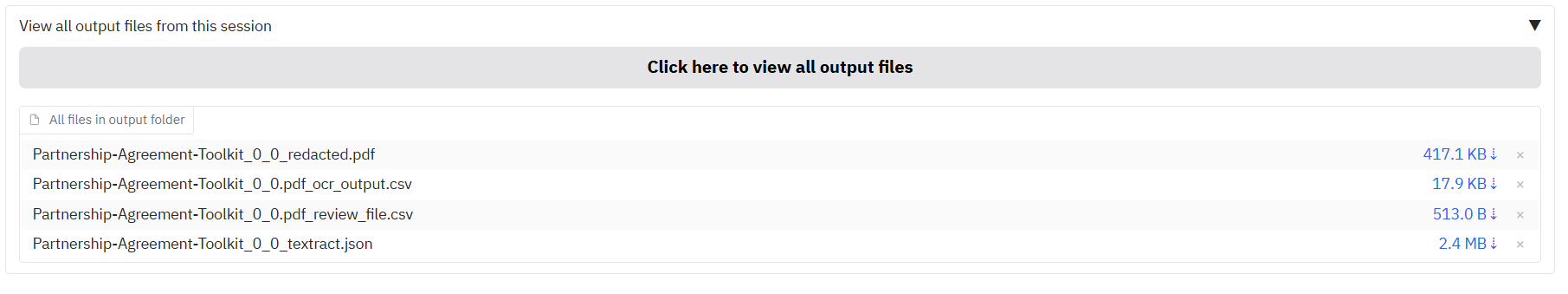

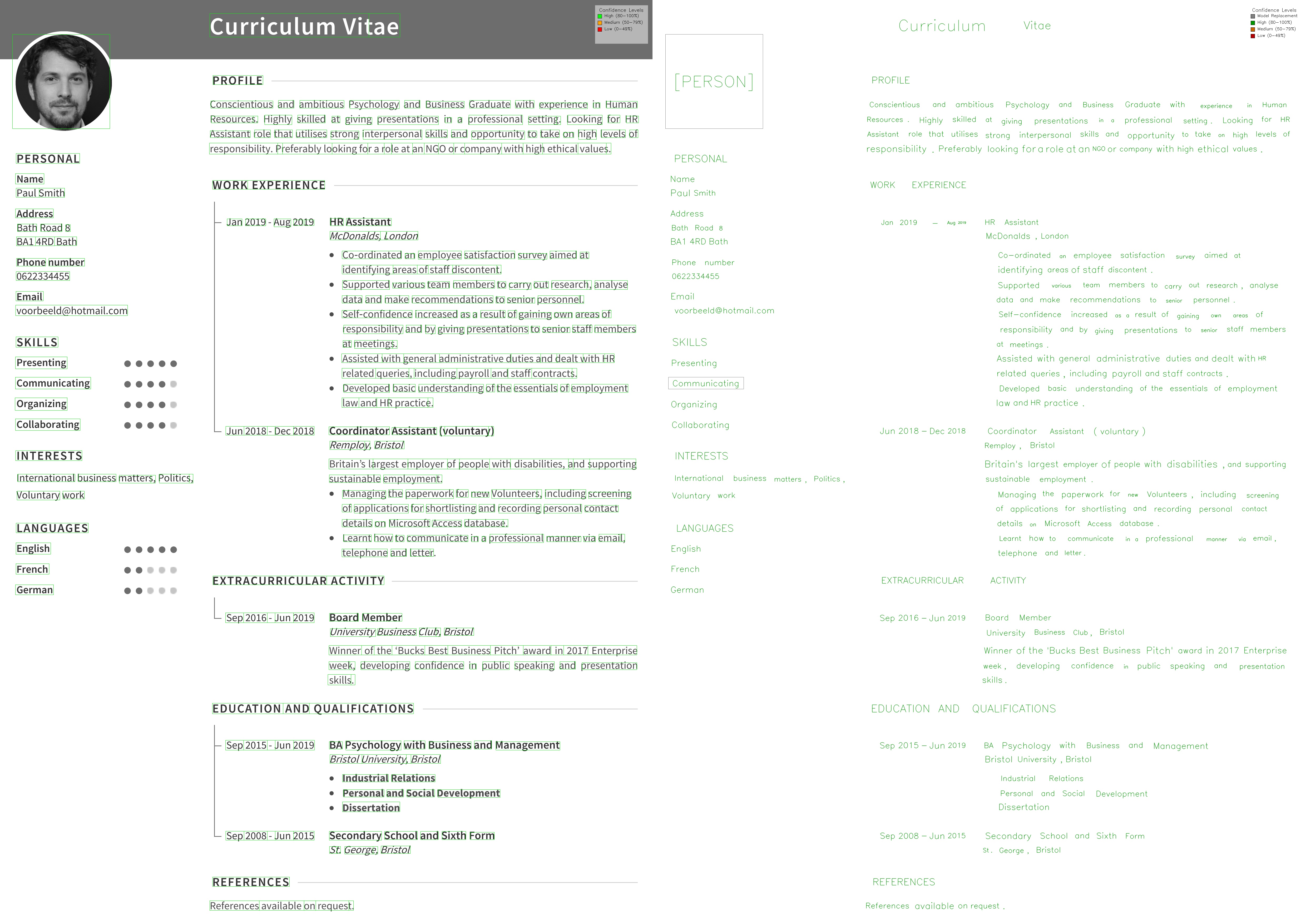

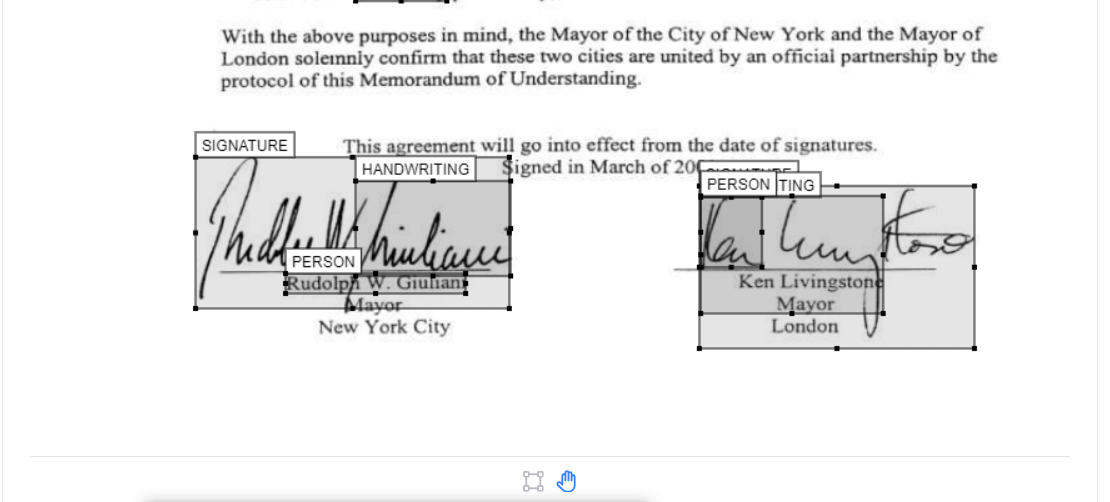

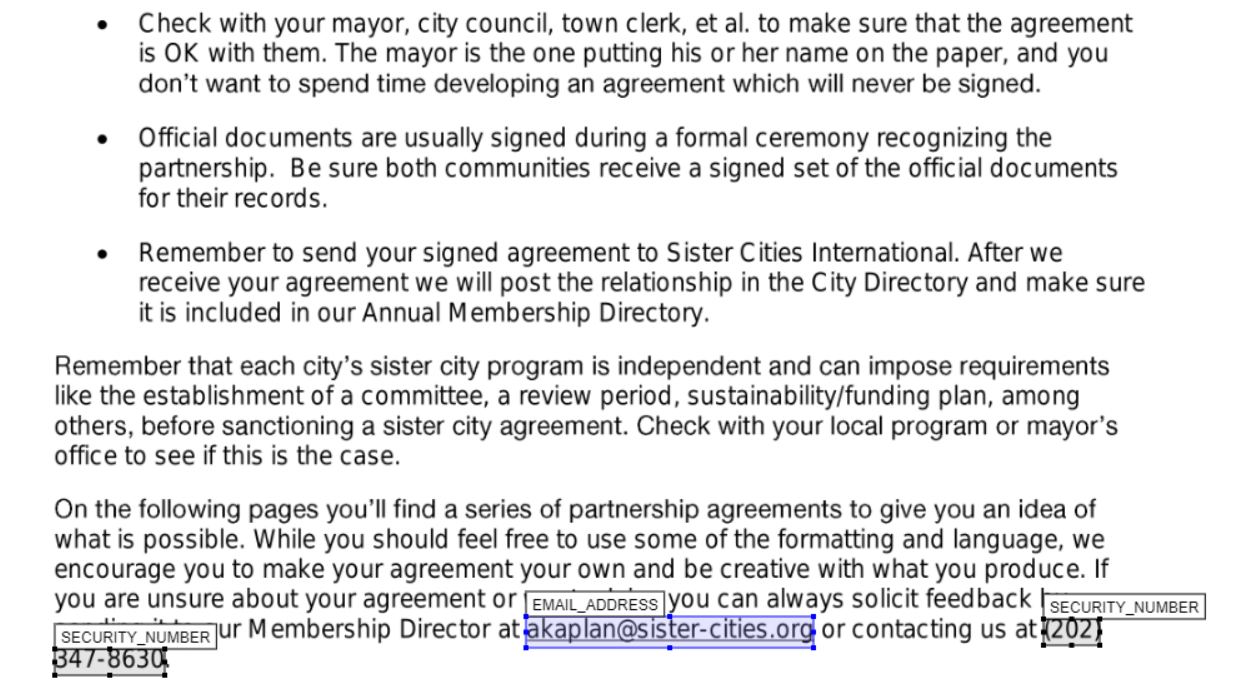

+Redact personally identifiable information (PII) from documents (PDF, PNG, JPG), Word files (DOCX), or tabular data (XLSX/CSV/Parquet). Please see the [User Guide](#user-guide) for a full walkthrough of all the features in the app.

+

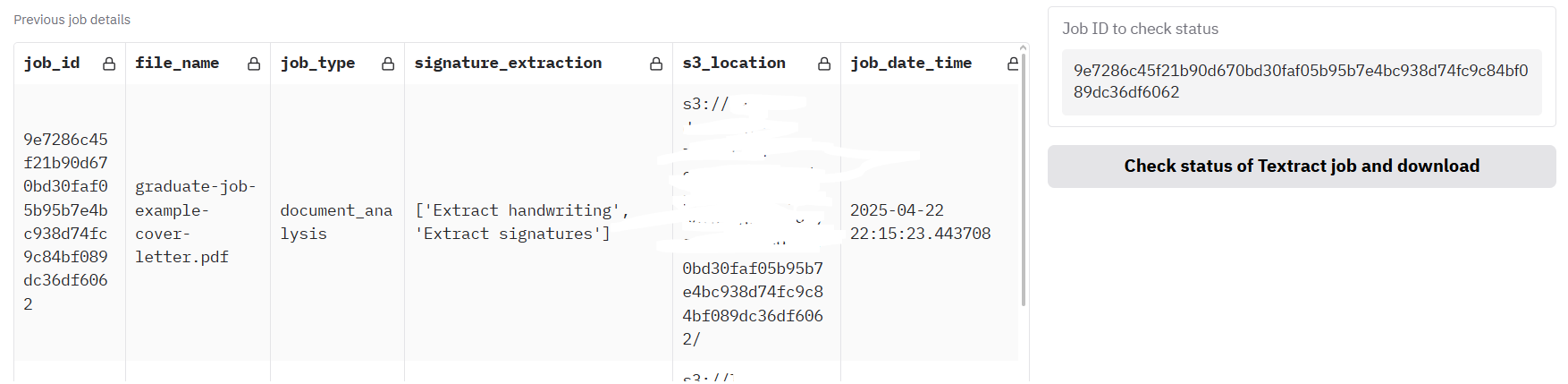

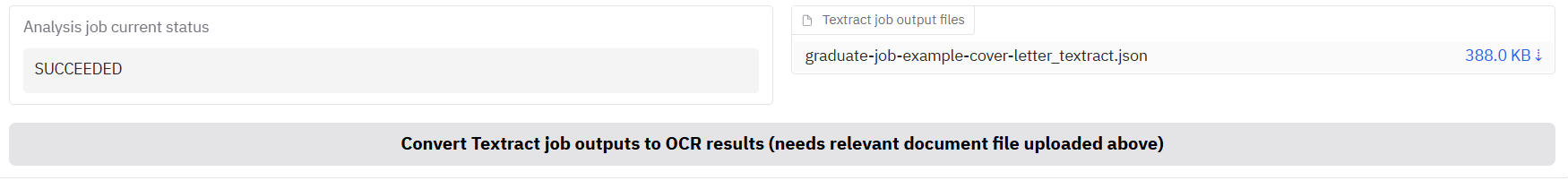

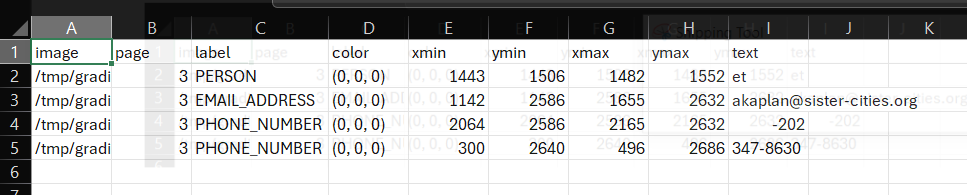

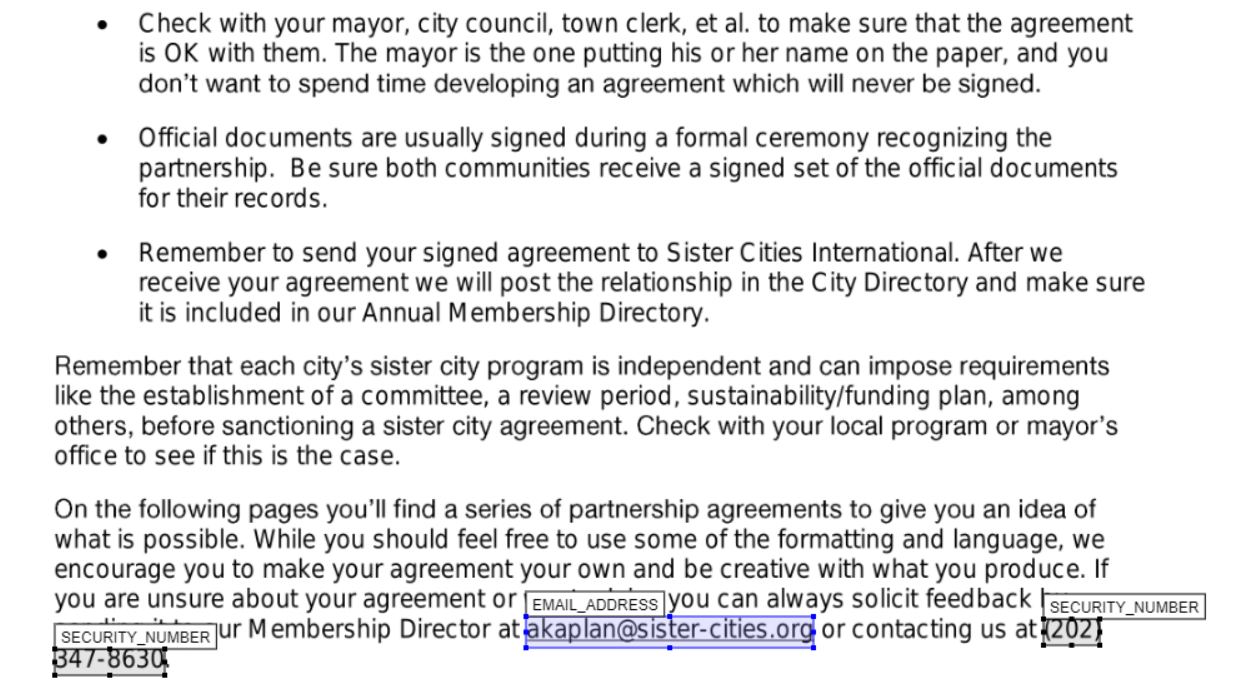

+To extract text from documents, the 'Local' options are PikePDF for PDFs with selectable text, and OCR with Tesseract. Use AWS Textract to extract more complex elements e.g. handwriting, signatures, or unclear text. PaddleOCR and VLM support is also provided (see the installation instructions below).

+

+For PII identification, 'Local' (based on spaCy) gives good results if you are looking for common names or terms, or a custom list of terms to redact (see Redaction settings). AWS Comprehend gives better results at a small cost.

+

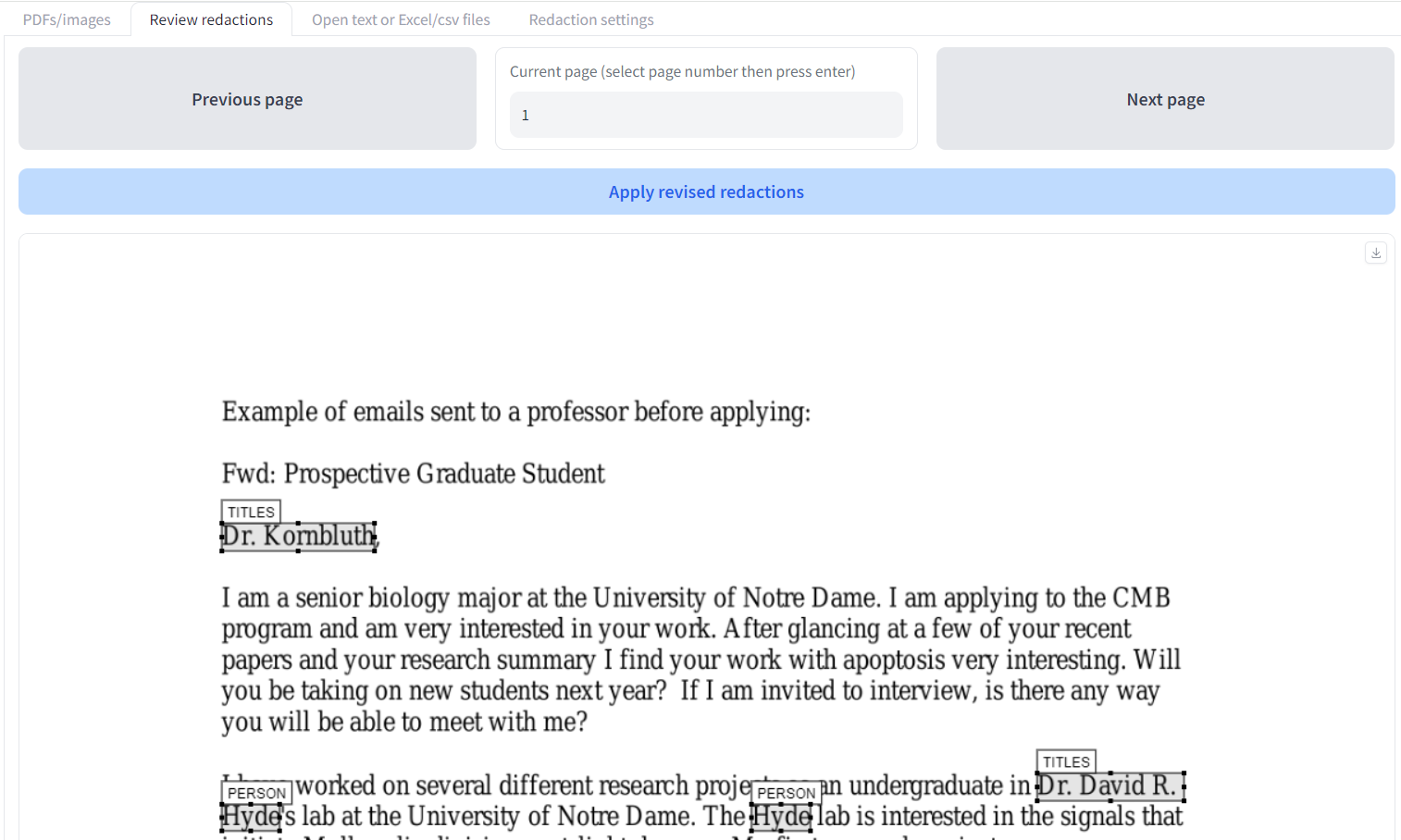

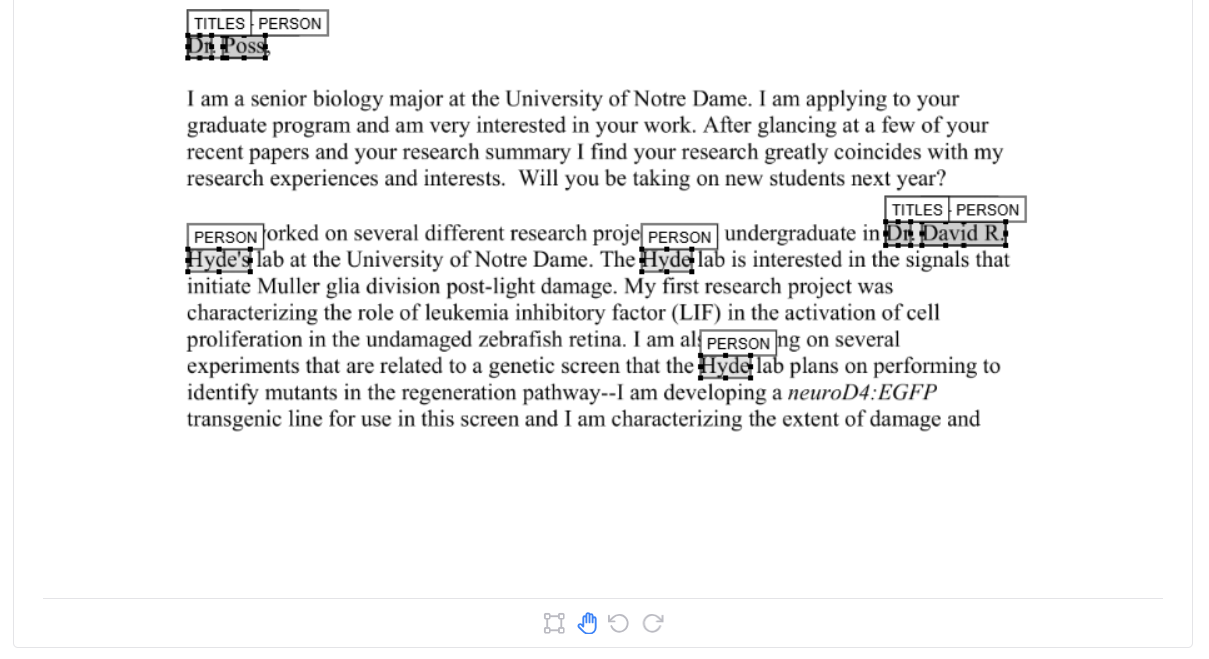

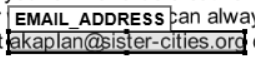

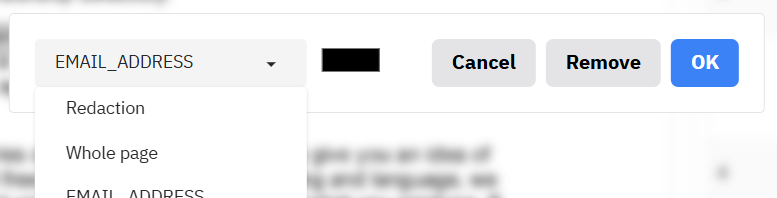

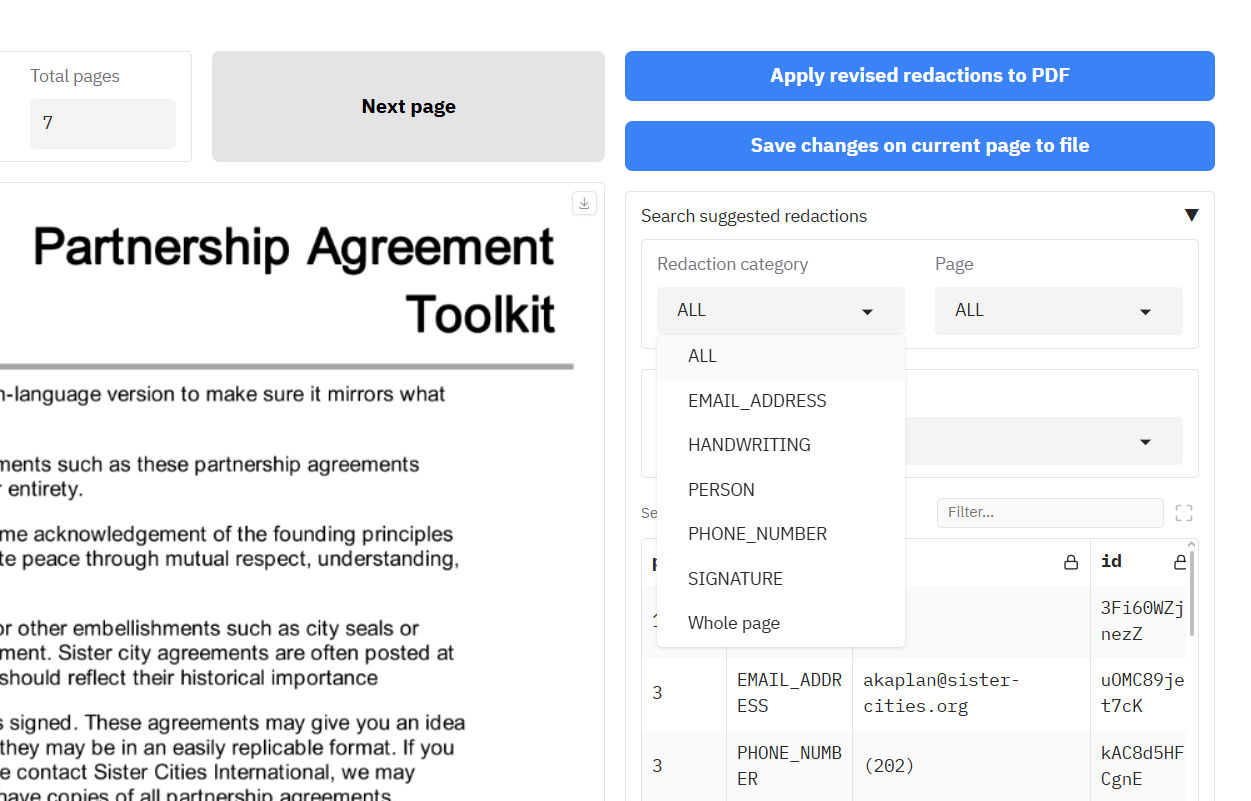

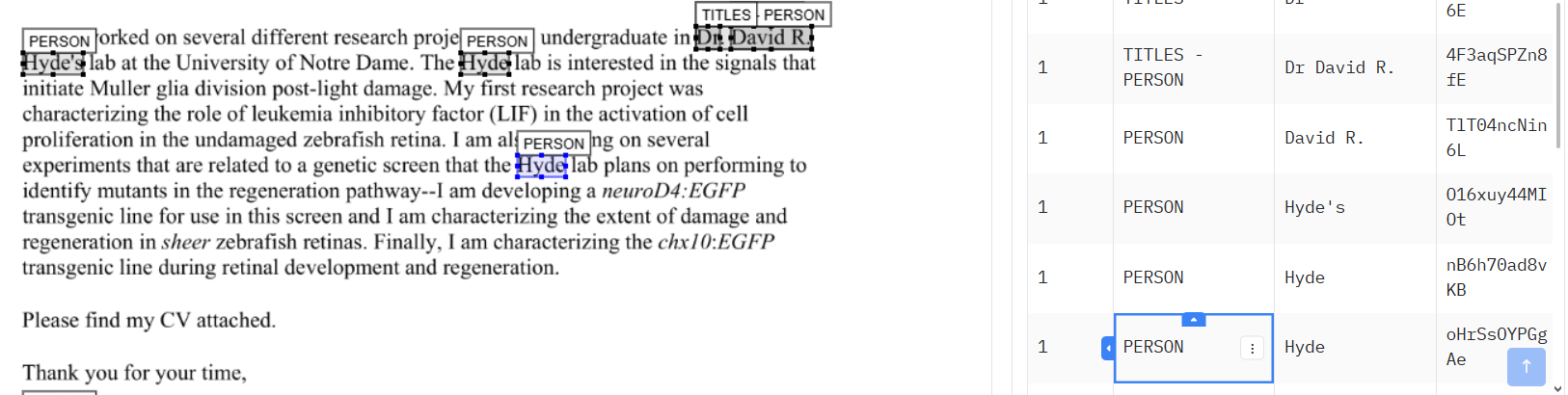

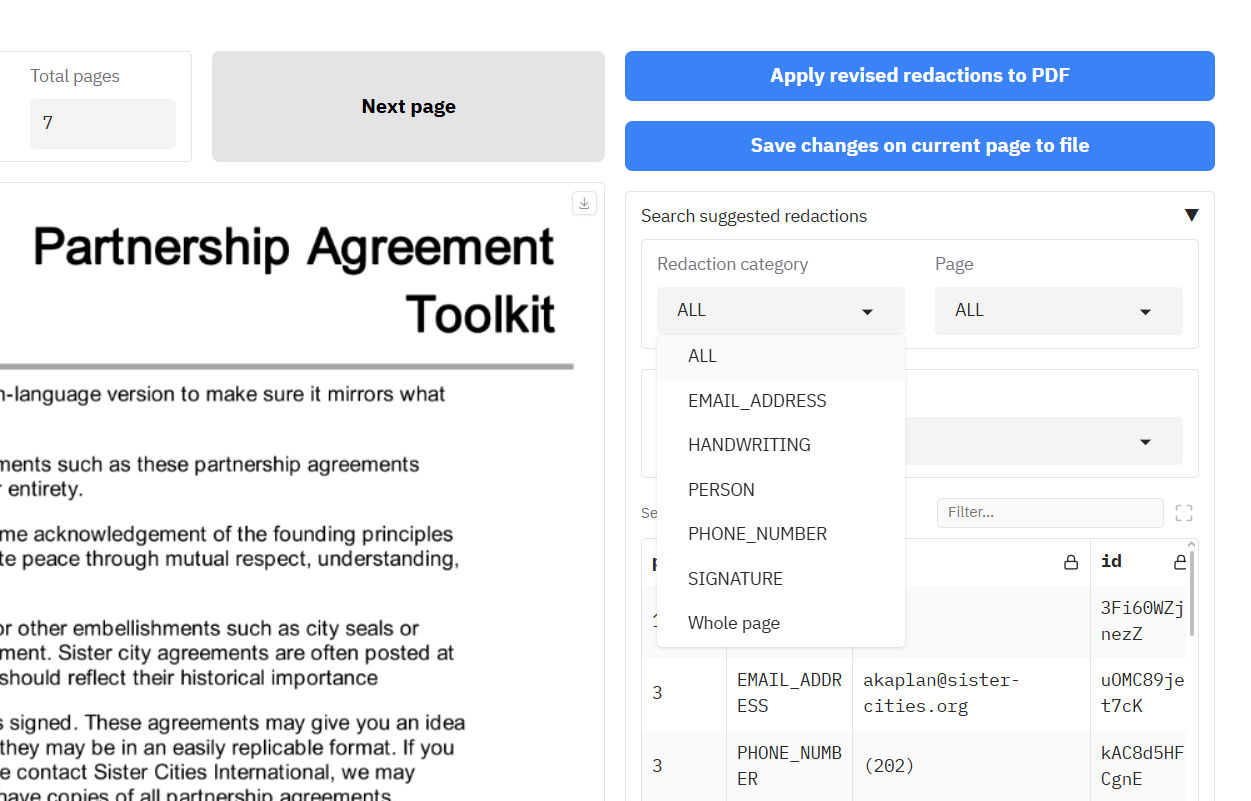

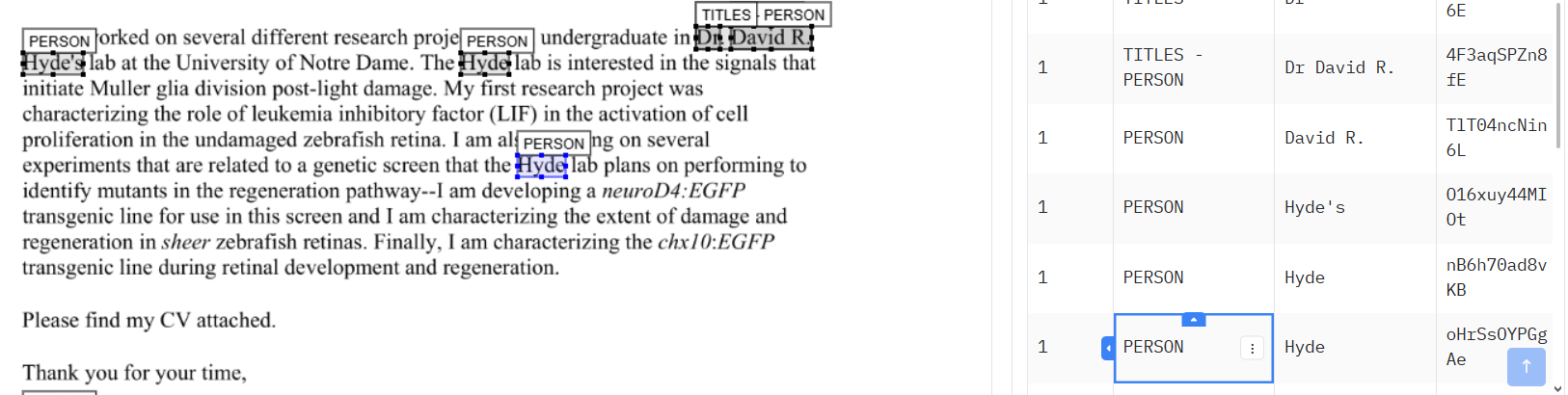

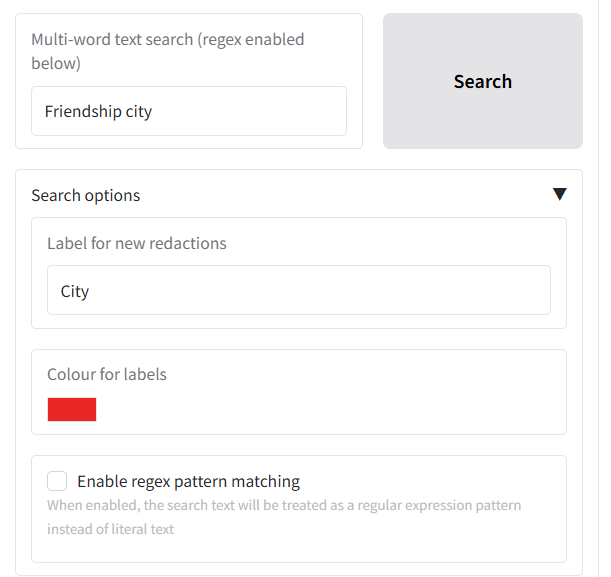

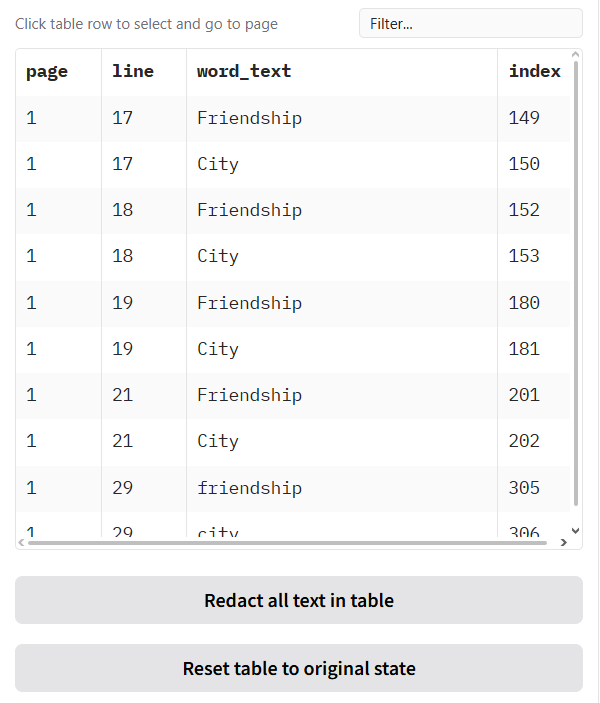

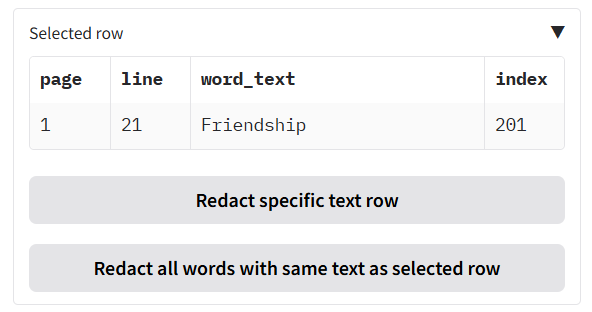

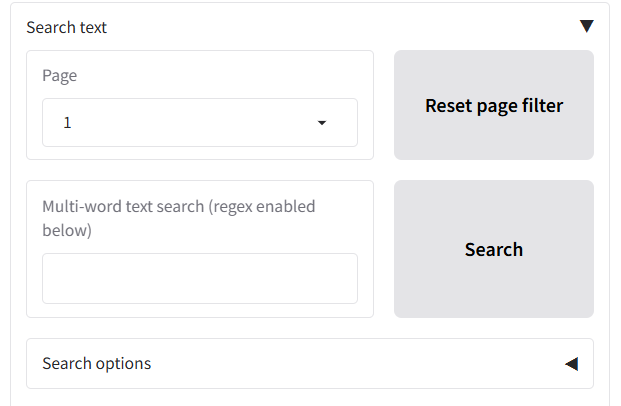

+Additional options on the 'Redaction settings' include, the type of information to redact (e.g. people, places), custom terms to include/ exclude from redaction, fuzzy matching, language settings, and whole page redaction. After redaction is complete, you can view and modify suggested redactions on the 'Review redactions' tab to quickly create a final redacted document.

+

+NOTE: The app is not 100% accurate, and it will miss some personal information. It is essential that all outputs are reviewed **by a human** before using the final outputs.

+

+---

+

+## 🚀 Quick Start - Installation and first run

+

+Follow these instructions to get the document redaction application running on your local machine.

+

+### 1. Prerequisites: System Dependencies

+

+This application relies on two external tools for OCR (Tesseract) and PDF processing (Poppler). Please install them on your system before proceeding.

+

+---

+

+

+#### **On Windows**

+

+Installation on Windows requires downloading installers and adding the programs to your system's PATH.

+

+1. **Install Tesseract OCR:**

+ * Download the installer from the official Tesseract at [UB Mannheim page](https://github.com/UB-Mannheim/tesseract/wiki) (e.g., `tesseract-ocr-w64-setup-v5.X.X...exe`).

+ * Run the installer.

+ * **IMPORTANT:** During installation, ensure you select the option to "Add Tesseract to system PATH for all users" or a similar option. This is crucial for the application to find the Tesseract executable.

+

+

+2. **Install Poppler:**

+ * Download the latest Poppler binary for Windows. A common source is the [Poppler for Windows](https://github.com/oschwartz10612/poppler-windows) GitHub releases page. Download the `.zip` file (e.g., `poppler-25.07.0-win.zip`).

+ * Extract the contents of the zip file to a permanent location on your computer, for example, `C:\Program Files\poppler\`.

+ * You must add the `bin` folder from your Poppler installation to your system's PATH environment variable.

+ * Search for "Edit the system environment variables" in the Windows Start Menu and open it.

+ * Click the "Environment Variables..." button.

+ * In the "System variables" section, find and select the `Path` variable, then click "Edit...".

+ * Click "New" and add the full path to the `bin` directory inside your Poppler folder (e.g., `C:\Program Files\poppler\poppler-24.02.0\bin`).

+ * Click OK on all windows to save the changes.

+

+ To verify, open a new Command Prompt and run `tesseract --version` and `pdftoppm -v`. If they both return version information, you have successfully installed the prerequisites.

+

+---

+

+#### **On Linux (Debian/Ubuntu)**

+

+Open your terminal and run the following command to install Tesseract and Poppler:

+

+```bash

+sudo apt-get update && sudo apt-get install -y tesseract-ocr poppler-utils

+```

+

+#### **On Linux (Fedora/CentOS/RHEL)**

+

+Open your terminal and use the `dnf` or `yum` package manager:

+

+```bash

+sudo dnf install -y tesseract poppler-utils

+```

+---

+

+

+### 2. Installation: Code and Python Packages

+

+Once the system prerequisites are installed, you can set up the Python environment.

+

+#### Step 1: Clone the Repository

+

+Open your terminal or Git Bash and clone this repository:

+```bash

+git clone https://github.com/seanpedrick-case/doc_redaction.git

+cd doc_redaction

+```

+

+#### Step 2: Create and Activate a Virtual Environment (Recommended)

+

+It is highly recommended to use a virtual environment to isolate project dependencies and avoid conflicts with other Python projects.

+

+```bash

+# Create the virtual environment

+python -m venv venv

+

+# Activate it

+# On Windows:

+.\venv\Scripts\activate

+

+# On macOS/Linux:

+source venv/bin/activate

+```

+

+#### Step 3: Install Python Dependencies

+

+##### Lightweight version (without PaddleOCR and VLM support)

+

+This project uses `pyproject.toml` to manage dependencies. You can install everything with a single pip command. This process will also download the required Spacy models and other packages directly from their URLs.

+

+```bash

+pip install .

+```

+

+Alternatively, you can install from the `requirements_lightweight.txt` file:

+```bash

+pip install -r requirements_lightweight.txt

+```

+

+##### Full version (with Paddle and VLM support)

+

+Run the following command to install the additional dependencies:

+

+```bash

+pip install .[paddle,vlm]

+```

+

+Alternatively, you can use the full `requirements.txt` file, that contains references to the PaddleOCR and related Torch/transformers dependencies (for cuda 12.9):

+```bash

+pip install -r requirements.txt

+```

+

+Note that the versions of both PaddleOCR and Torch installed by default are the CPU-only versions. If you want to install the equivalent GPU versions, you will need to run the following commands:

+```bash

+pip install paddlepaddle-gpu==3.2.1 --index-url https://www.paddlepaddle.org.cn/packages/stable/cu129/

+```

+

+**Note:** It is difficult to get paddlepaddle gpu working in an environment alongside torch. You may well need to reinstall the cpu version to ensure compatibility, and run paddlepaddle-gpu in a separate environment without torch installed. If you get errors related to .dll files following paddle gpu install, you may need to install the latest c++ redistributables. For Windows, you can find them [here](https://learn.microsoft.com/en-us/cpp/windows/latest-supported-vc-redist?view=msvc-170)

+

+```bash

+pip install torch==2.8.0 --index-url https://download.pytorch.org/whl/cu129

+pip install torchvision --index-url https://download.pytorch.org/whl/cu129

+```

+

+#### Docker installation

+

+The doc_redaction Redaction app can be installed by using the [Dockerfile](https://github.com/seanpedrick-case/doc_redaction/blob/main/Dockerfile) or Docker compose files ([llama.cpp](https://github.com/ggml-org/llama.cpp), [vLLM](https://docs.vllm.ai/en/stable/)) provided in the repo.

+

+##### Without Llama.cpp / vLLM inference server

+

+If you want a working Docker installation without GPU support, you can install from the [Dockerfile](https://github.com/seanpedrick-case/doc_redaction/blob/main/Dockerfile) in the repo. A working example of this, with the CPU version of PaddleOCR, can be found on [Hugging Face](https://huggingface.co/spaces/seanpedrickcase/document_redaction). You can adjust the INSTALL_PADDLEOCR, PADDLE_GPU_ENABLED, INSTALL_VLM, and TORCH_GPU_ENABLED config variables to adjust for PaddleOCR and Transformers packages for local VLM support. Note that GPU-enabled PaddleOCR, and GPU-enabled Transformers/Torch often don't work well together, which is one reason why a Llama.cpp/vLLM inference server Docker installation option is provided below.

+

+##### With Llama.cpp / vLLM inference server

+

+The project now has Docker and Docker compose files available to pair running the Redaction app with local inference servers powered by [llama.cpp](https://github.com/ggml-org/llama.cpp), or [vLLM](https://docs.vllm.ai/en/stable/). Llama.cpp is more flexible than vLLM for low VRAM systems, as Llama.cpp will offload to cpu/system RAM automatically rather than failing as vLLM tends to do.

+

+For Llama.cpp, you can use the [docker-compose_llama.yml](https://github.com/seanpedrick-case/doc_redaction/blob/main/docker-compose_llama.yml) file, and for vLLM, you can use the [docker-compose_vllm.yml](https://github.com/seanpedrick-case/doc_redaction/blob/main/docker-compose_vllm.yml) file. To run, Docker / Docker Desktop should be installed, and then you can run the commands suggested in the top of the files to run the servers.

+

+You will need ~40-50GB of disk space to run everything depending on the model chosen from the compose file. For the vLLM server, you will need 24 GB VRAM. For the Llama.cpp server, 24 GB VRAM is needed to run at full speed, but the n-gpu-layers and n-cpu-moe parameters in the Docker compose file can be adjusted to fit into your system. I would suggest that 8 GB VRAM is needed as a bare minimum for decent inference speed. See the [Unsloth guide](https://unsloth.ai/docs/models/qwen3.5) for more details on working with GGUF files for Qwen 3.5.

+

+### 3. Run the Application

+

+With all dependencies installed, you can now start the Gradio application.

+

+```bash

+python app.py

+```

+

+After running the command, the application will start, and you will see a local URL in your terminal (usually `http://127.0.0.1:7860`).

+

+Open this URL in your web browser to use the document redaction tool

+

+#### Command line interface

+

+If instead you want to run redactions or other app functions in CLI mode, run the following for instructions:

+

+```bash

+python cli_redact.py --help

+```

+

+---

+

+

+### 4. ⚙️ Configuration (Optional)

+

+You can customise the application's behavior by creating a configuration file. This allows you to change settings without modifying the source code, such as enabling AWS features, changing logging behavior, or pointing to local Tesseract/Poppler installations. A full overview of all the potential settings you can modify in the app_config.env file can be seen in tools/config.py, with explanation on the documentation website for [the github repo](https://seanpedrick-case.github.io/doc_redaction/)

+

+To get started:

+1. Locate the `example_config.env` file in the root of the project.

+2. Create a new file named `app_config.env` inside the `config/` directory (i.e., `config/app_config.env`).

+3. Copy the contents from `example_config.env` into your new `config/app_config.env` file.

+4. Modify the values in `config/app_config.env` to suit your needs. The application will automatically load these settings on startup.

+

+If you do not create this file, the application will run with default settings.

+

+#### Configuration Breakdown

+

+Here is an overview of the most important settings, separated by whether they are for local use or require AWS.

+

+---

+

+#### **Local & General Settings (No AWS Required)**

+

+These settings are useful for all users, regardless of whether you are using AWS.

+

+* `TESSERACT_FOLDER` / `POPPLER_FOLDER`

+ * Use these if you installed Tesseract or Poppler to a custom location on **Windows** and did not add them to the system PATH.

+ * Provide the path to the respective installation folders (for Poppler, point to the `bin` sub-directory).

+ * **Examples:** `POPPLER_FOLDER=C:/Program Files/poppler-24.02.0/bin/` `TESSERACT_FOLDER=tesseract/`

+

+* `SHOW_LANGUAGE_SELECTION=True`

+ * Set to `True` to display a language selection dropdown in the UI for OCR processing.

+

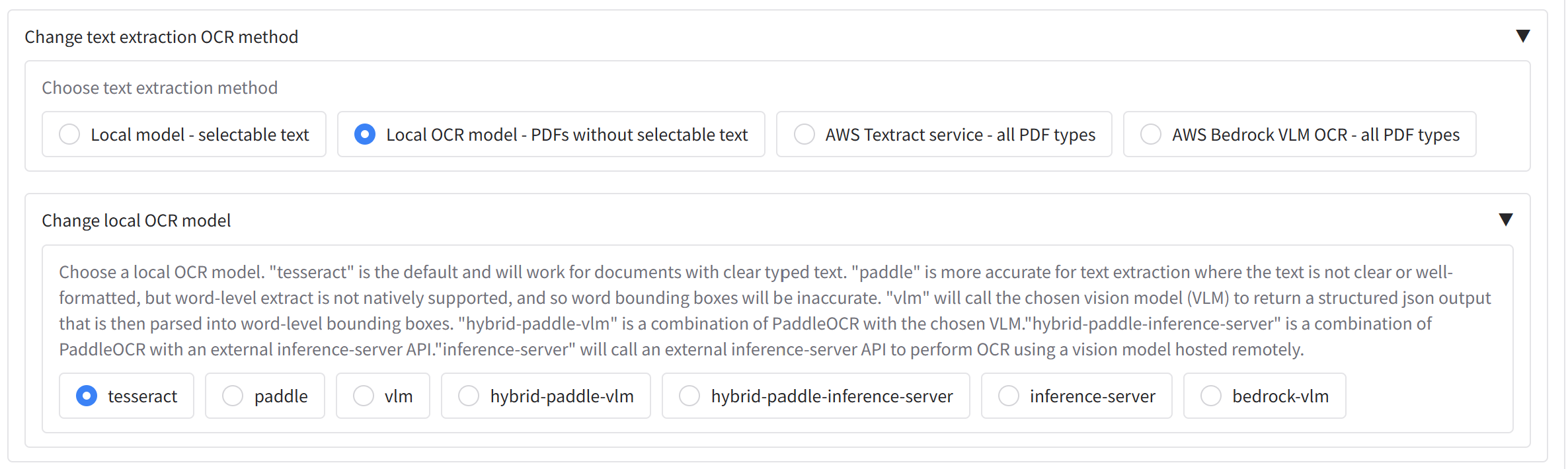

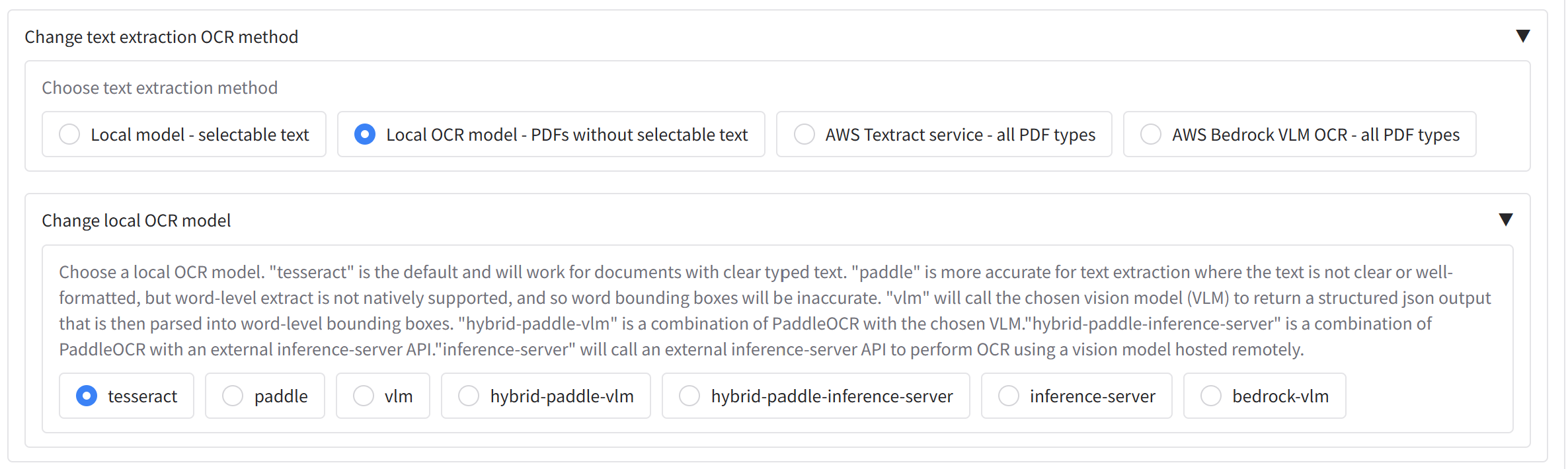

+* `DEFAULT_LOCAL_OCR_MODEL=tesseract`"

+ * Choose the backend for local OCR. Options are `tesseract`, `paddle`, or `hybrid`. "Tesseract" is the default, and is recommended. "hybrid-paddle" is a combination of the two - first pass through the redactions will be done with Tesseract, and then a second pass will be done with PaddleOCR on words with low confidence. "paddle" will only return whole line text extraction, and so will only work for OCR, not redaction.

+

+* `SESSION_OUTPUT_FOLDER=False`

+ * If `True`, redacted files will be saved in unique subfolders within the `output/` directory for each session.

+

+* `DISPLAY_FILE_NAMES_IN_LOGS=False`

+ * For privacy, file names are not recorded in usage logs by default. Set to `True` to include them.

+

+---

+

+#### **AWS-Specific Settings**

+

+These settings are only relevant if you intend to use AWS services like Textract for OCR and Comprehend for PII detection.

+

+* `RUN_AWS_FUNCTIONS=True`

+ * **This is the master switch.** You must set this to `True` to enable any AWS functionality. If it is `False`, all other AWS settings will be ignored.

+

+* **UI Options:**

+ * `SHOW_AWS_TEXT_EXTRACTION_OPTIONS=True`: Adds "AWS Textract" as an option in the text extraction dropdown.

+ * `SHOW_AWS_PII_DETECTION_OPTIONS=True`: Adds "AWS Comprehend" as an option in the PII detection dropdown.

+

+* **Core AWS Configuration:**

+ * `AWS_REGION=example-region`: Set your AWS region (e.g., `us-east-1`).

+ * `DOCUMENT_REDACTION_BUCKET=example-bucket`: The name of the S3 bucket the application will use for temporary file storage and processing.

+

+* **AWS Logging:**

+ * `SAVE_LOGS_TO_DYNAMODB=True`: If enabled, usage and feedback logs will be saved to DynamoDB tables.

+ * `ACCESS_LOG_DYNAMODB_TABLE_NAME`, `USAGE_LOG_DYNAMODB_TABLE_NAME`, etc.: Specify the names of your DynamoDB tables for logging.

+

+* **Advanced AWS Textract Features:**

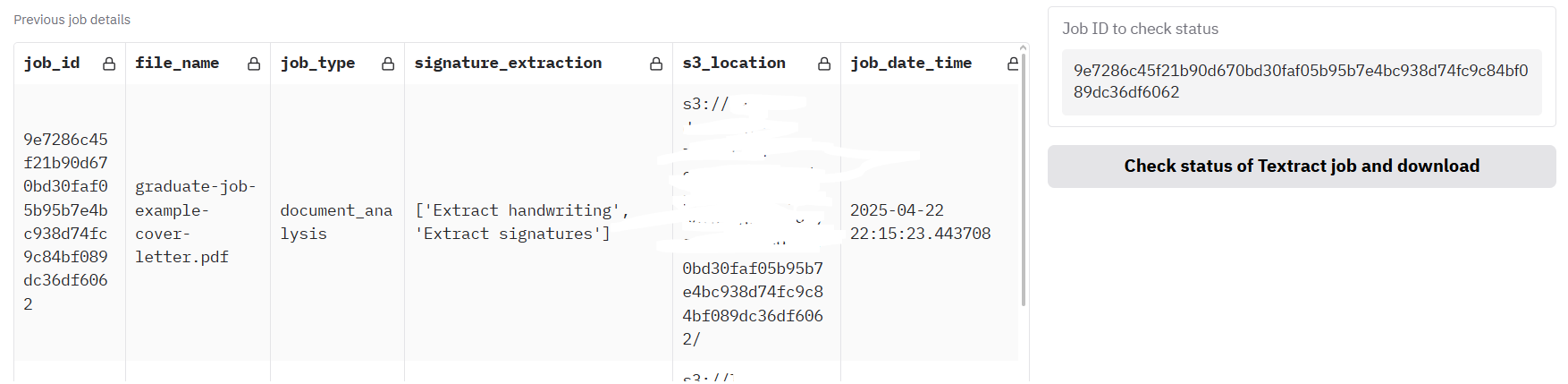

+ * `SHOW_WHOLE_DOCUMENT_TEXTRACT_CALL_OPTIONS=True`: Enables UI components for large-scale, asynchronous document processing via Textract.

+ * `TEXTRACT_WHOLE_DOCUMENT_ANALYSIS_BUCKET=example-bucket-output`: A separate S3 bucket for the final output of asynchronous Textract jobs.

+ * `LOAD_PREVIOUS_TEXTRACT_JOBS_S3=True`: If enabled, the app will try to load the status of previously submitted asynchronous jobs from S3.

+

+* **Cost Tracking (for internal accounting):**

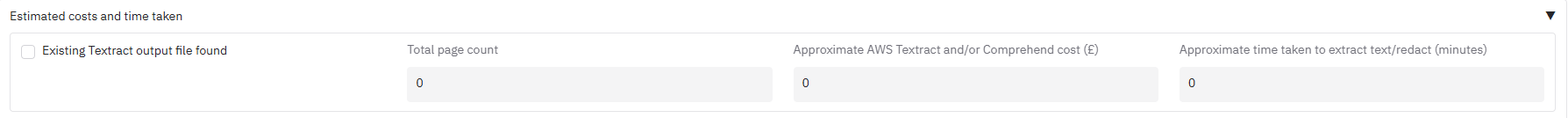

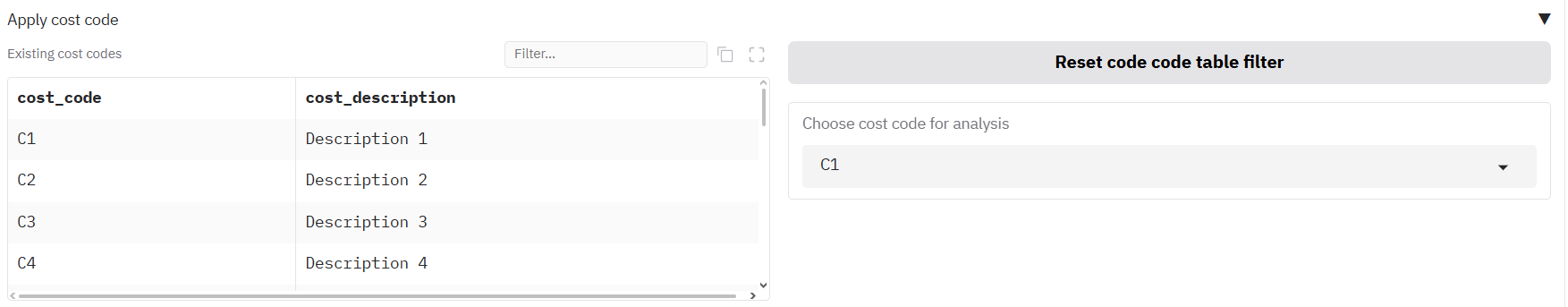

+ * `SHOW_COSTS=True`: Displays an estimated cost for AWS operations. Can be enabled even if AWS functions are off.

+ * `GET_COST_CODES=True`: Enables a dropdown for users to select a cost code before running a job.

+ * `COST_CODES_PATH=config/cost_codes.csv`: The local path to a CSV file containing your cost codes.

+ * `ENFORCE_COST_CODES=True`: Makes selecting a cost code mandatory before starting a redaction.

+

+Now you have the app installed, what follows is a guide on how to use it for basic and advanced redaction.

+

+# User guide

+

+## Table of contents

+

+### Getting Started

+- [Quickstart - Test the app with built-in examples](#quickstart---test-the-app-with-built-in-examples)

+ - [PDF document examples](#pdf-document-examples)

+ - [CSV/Excel file examples](#csvexcel-file-examples)

+- [Basic redaction](#basic-redaction)

+ - [Upload files to the app](#upload-files-to-the-app)

+ - [Text extraction](#text-extraction)

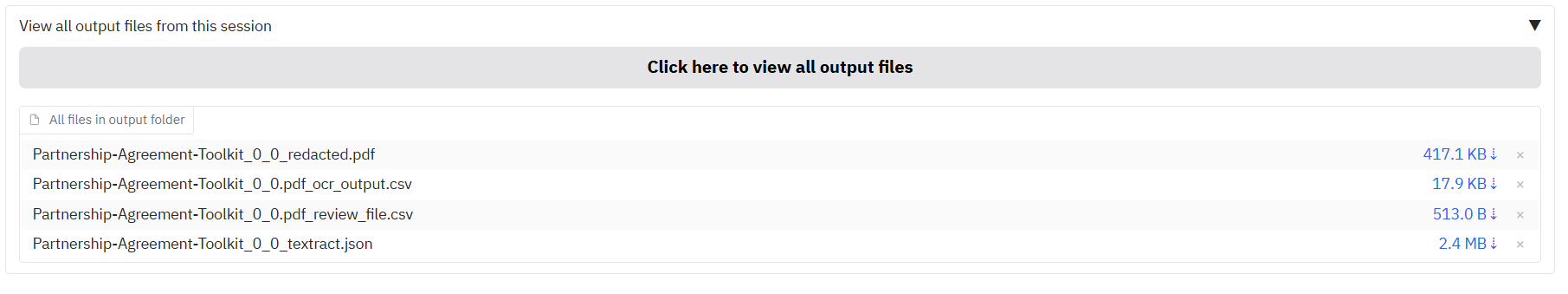

+ - [AWS Textract signature extraction](#aws-textract-signature-extraction)

+ - [PII redaction method](#pii-redaction-method)

+ - [Duplicate page redaction](#duplicate-page-redaction)

+ - [Allow list, deny list, and whole-page redaction](#allow-list-deny-list-and-whole-page-redaction)

+ - [Cost and time estimation](#cost-and-time-estimation)

+ - [Cost code selection](#cost-code-selection)

+ - [Redact only specific pages](#redact-only-specific-pages)

+ - [Run redaction](#run-redaction)

+ - [Redaction outputs](#redaction-outputs)

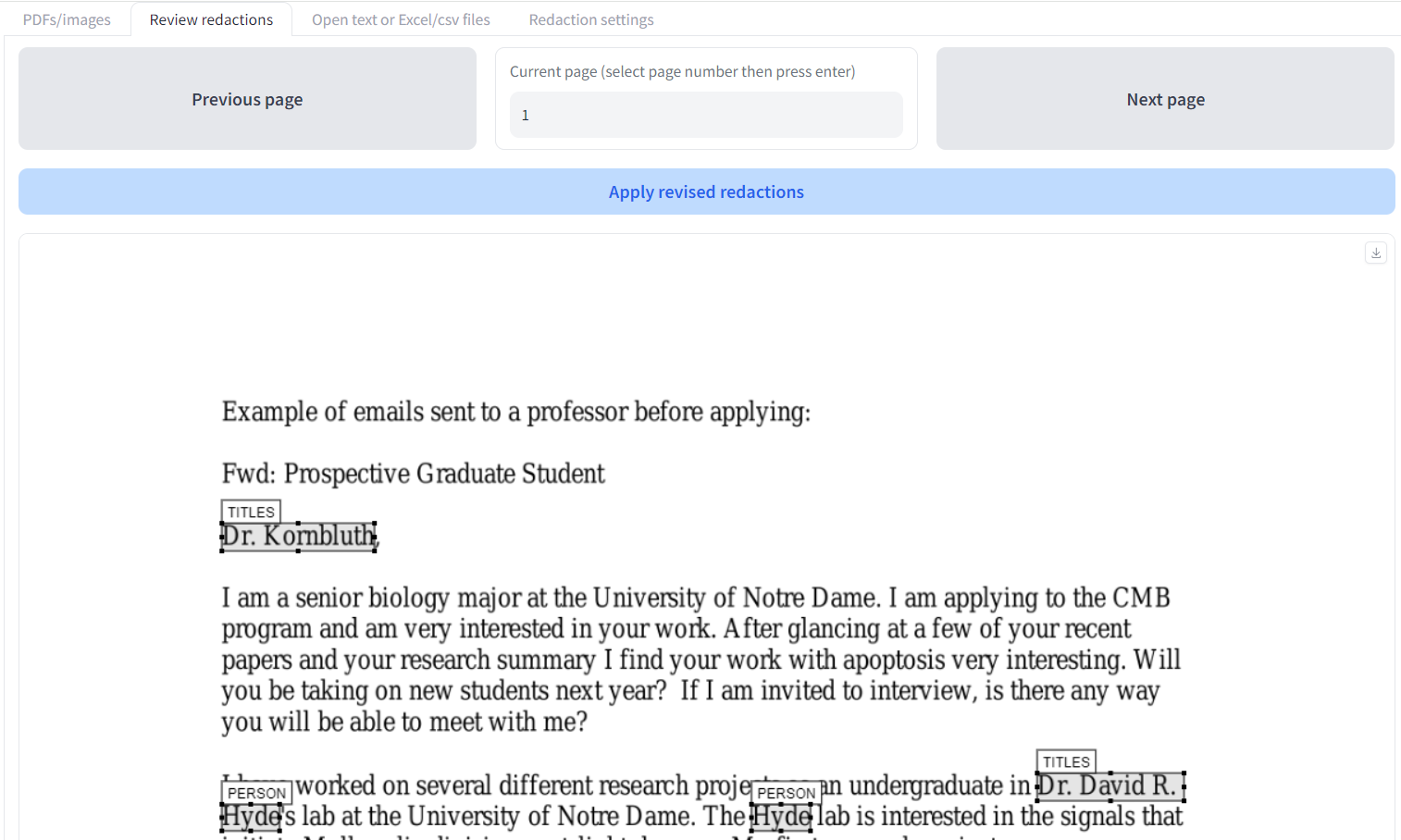

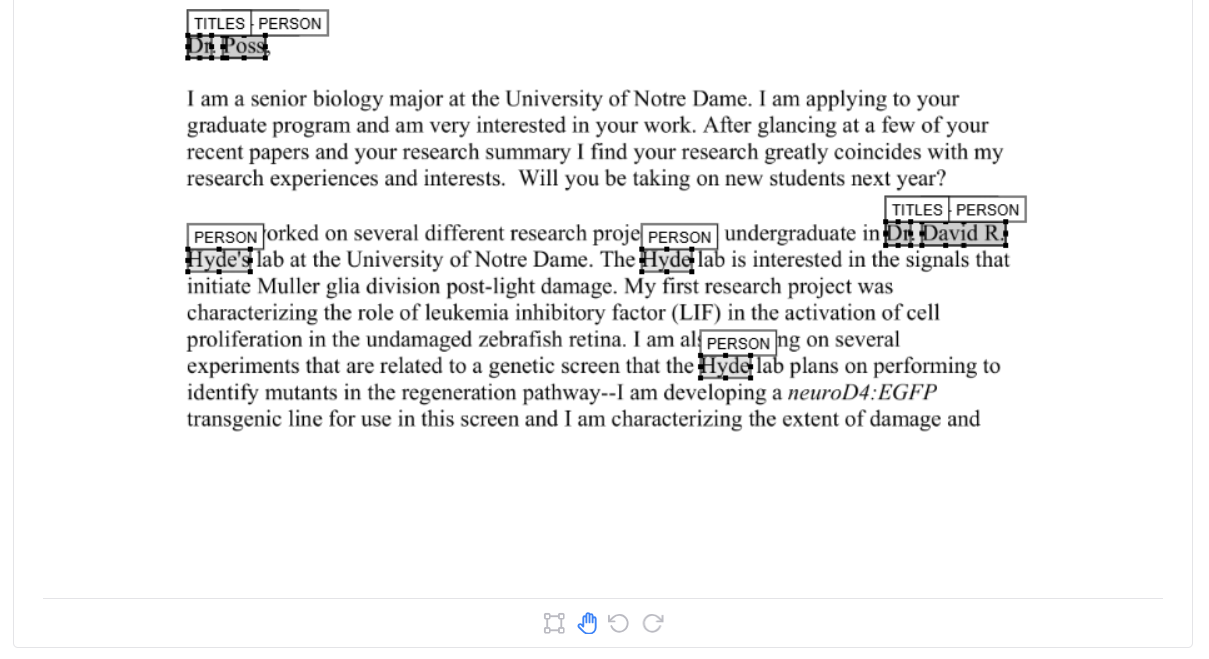

+- [Reviewing and modifying suggested redactions](#reviewing-and-modifying-suggested-redactions)

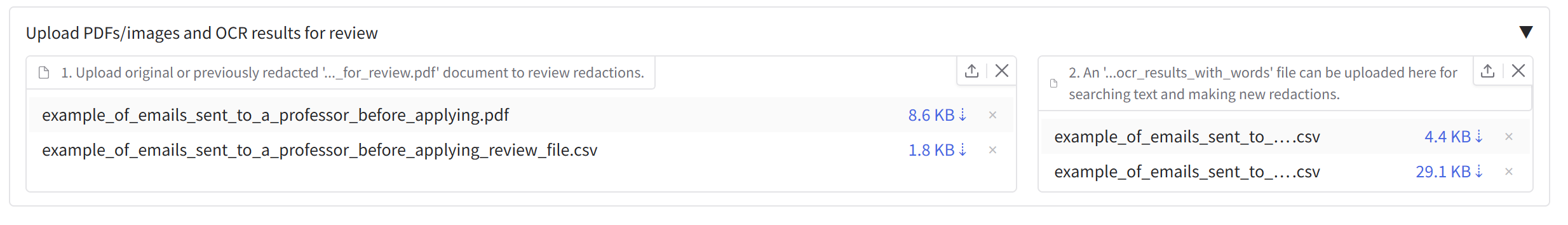

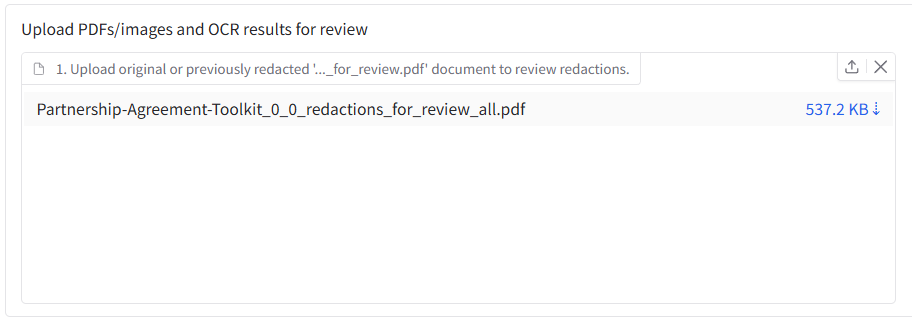

+ - [Uploading documents for review](#uploading-documents-for-review)

+ - [Page navigation](#page-navigation)

+ - [Document viewer](#document-viewer)

+ - [Modify existing redactions](#modify-existing-redactions)

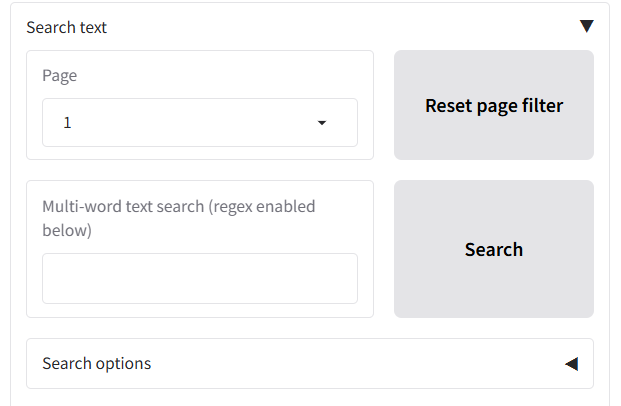

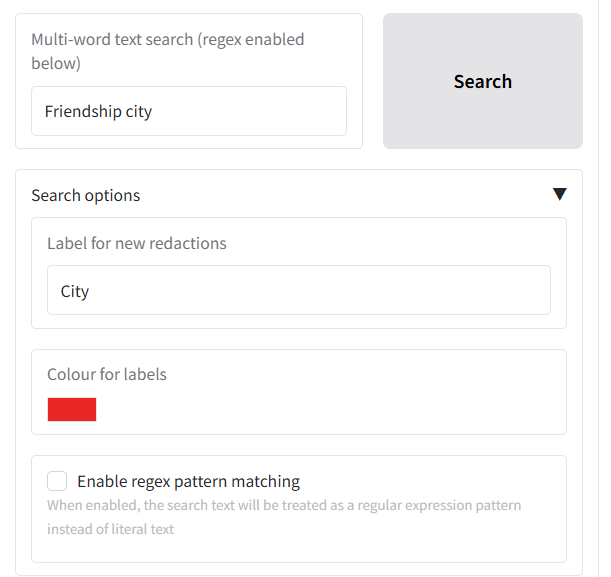

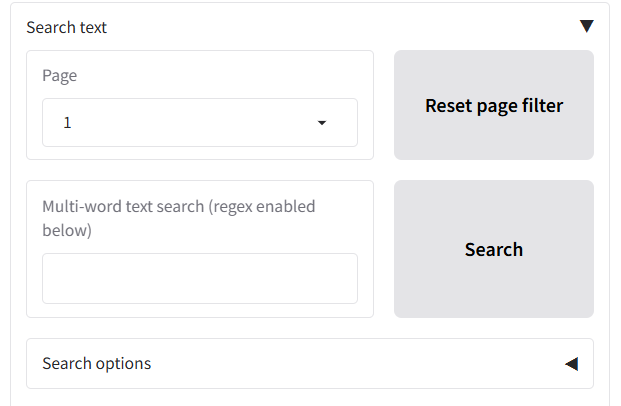

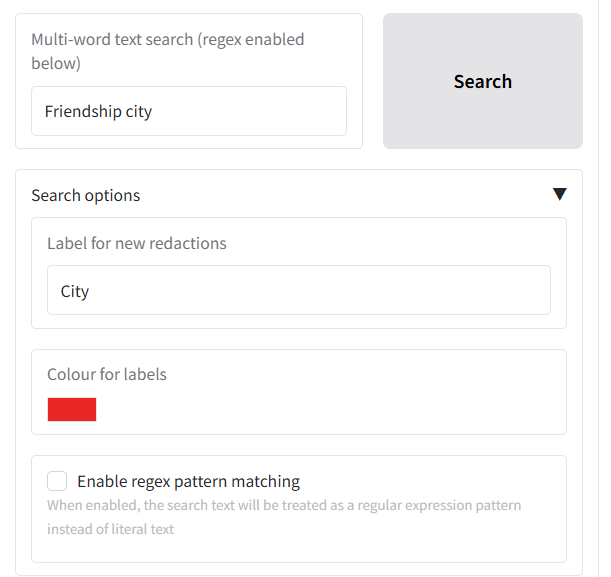

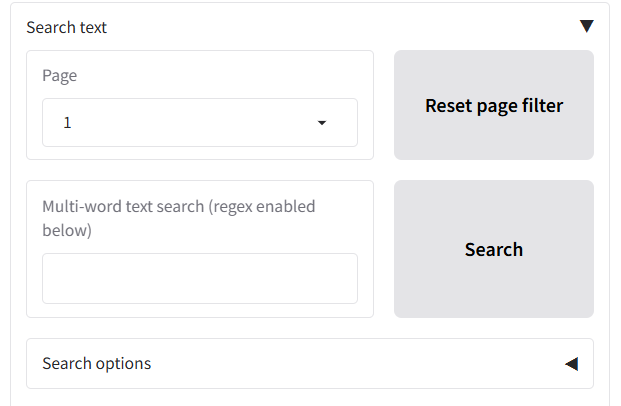

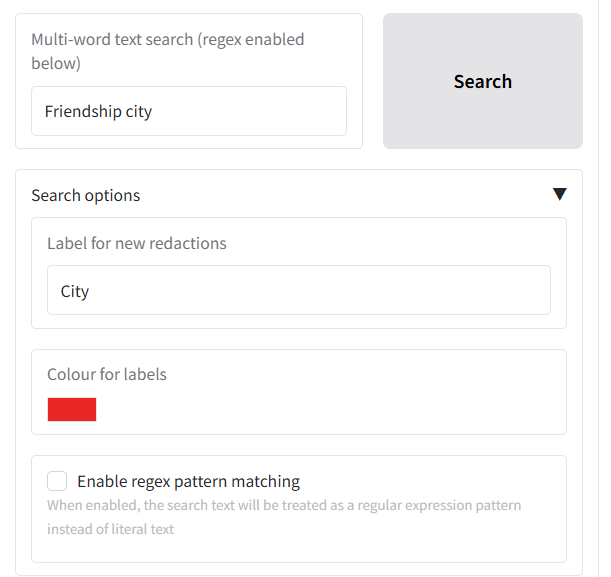

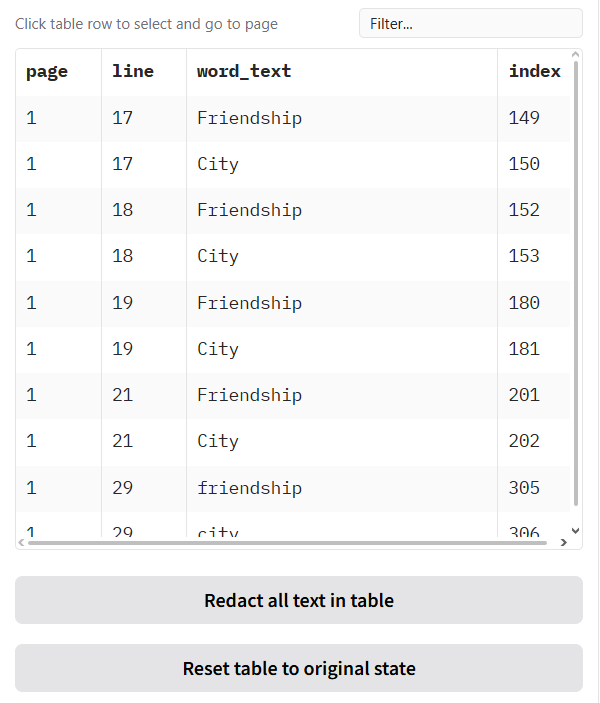

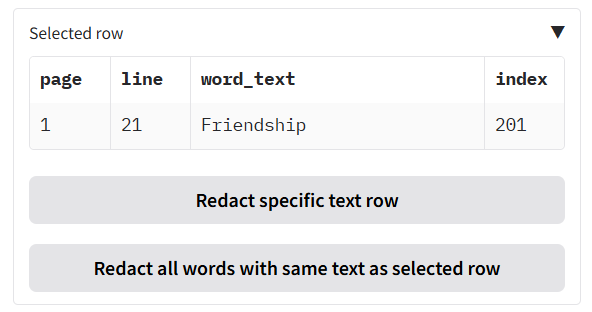

+ - [Search text and redact](#search-text-and-redact)

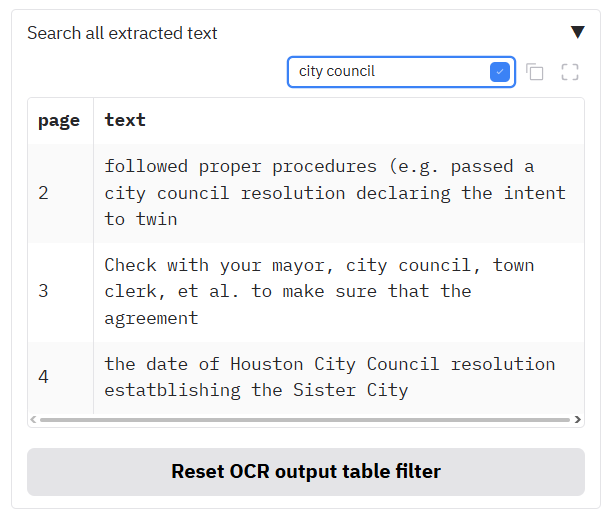

+ - [Navigating through the document using 'View text'](#navigating-through-the-document-using-view-text)

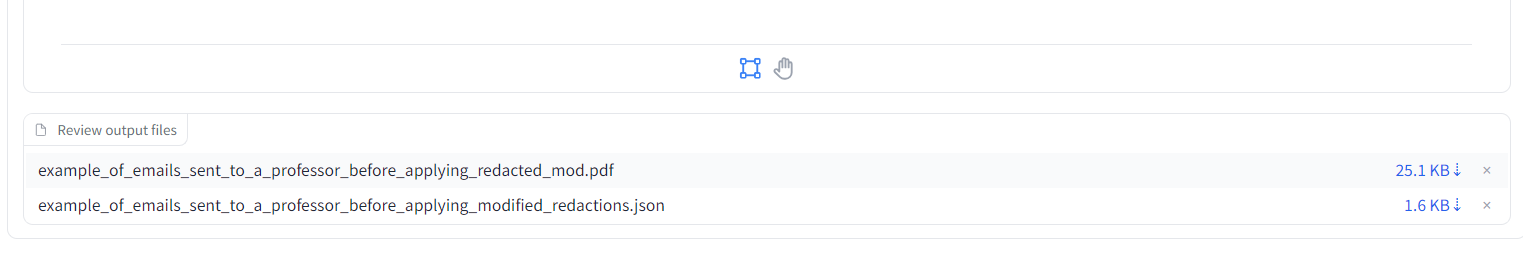

+ - [Apply revised redactions to PDF](#apply-revised-redactions-to-pdf)

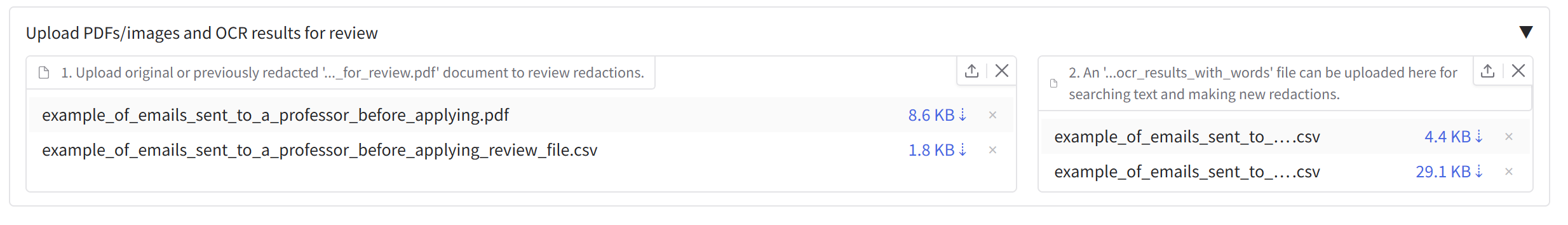

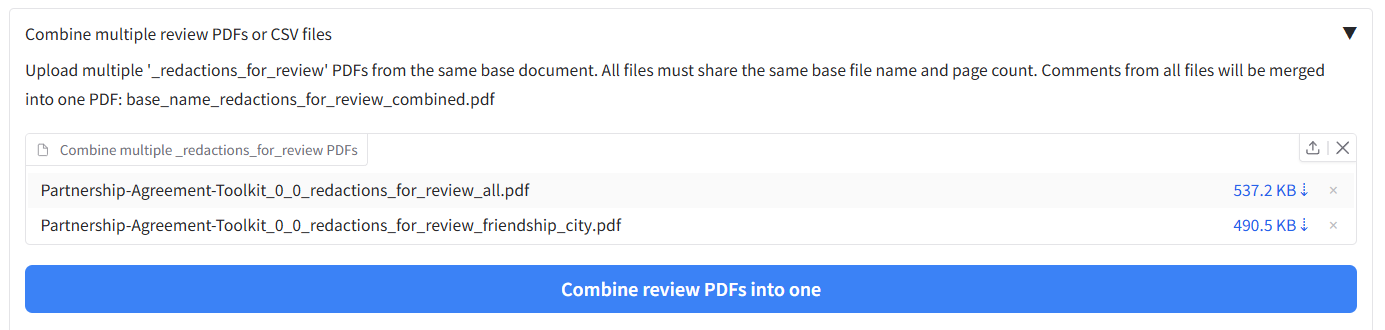

+- [Loading in previous results to continue redaction](#loading-in-previous-results-to-continue-redaction)

+ - [Loading in previous results from the redactions_for_review.pdf file](#loading-in-previous-results-from-the-redactions_for_reviewpdf-file)

+ - [Loading in OCR results to search for new redactions](#loading-in-ocr-results-to-search-for-new-redactions)

+ - [Using a previous OCR results file to skip redoing OCR for future redaction tasks](#using-a-previous-ocr-results-file-to-skip-redoing-ocr-for-future-redaction-tasks)

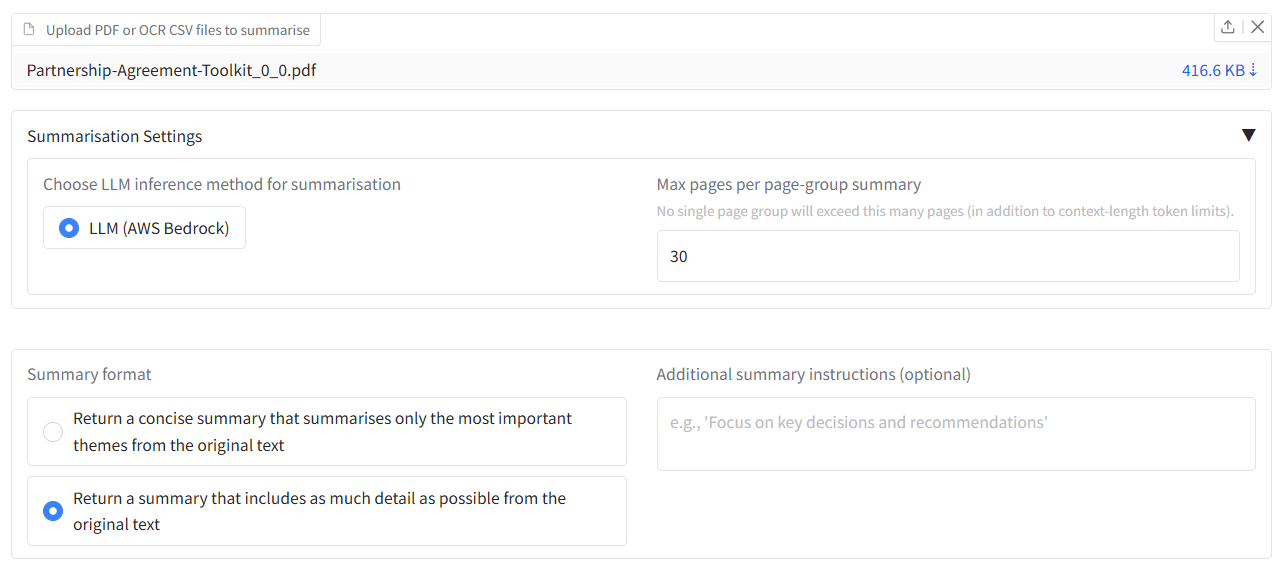

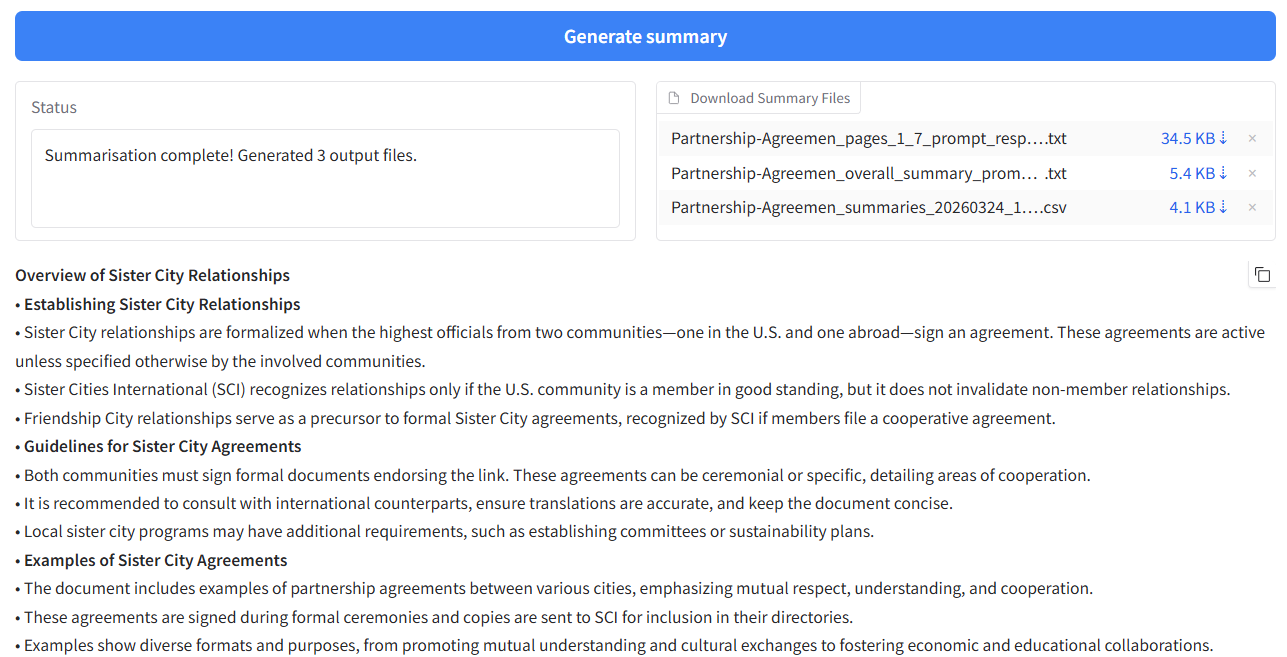

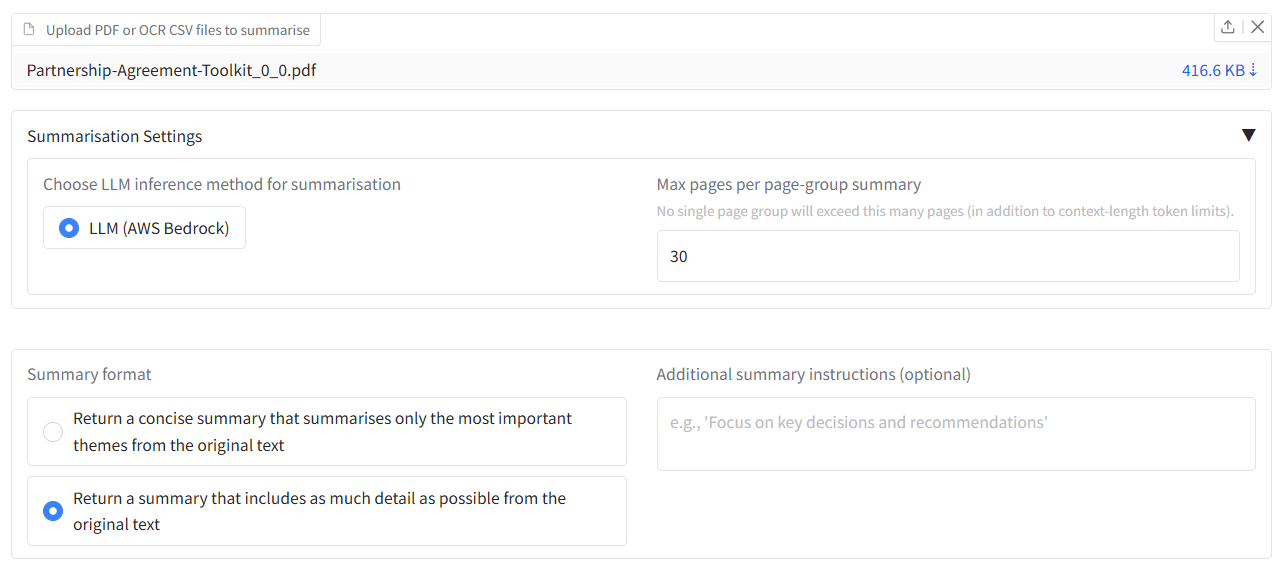

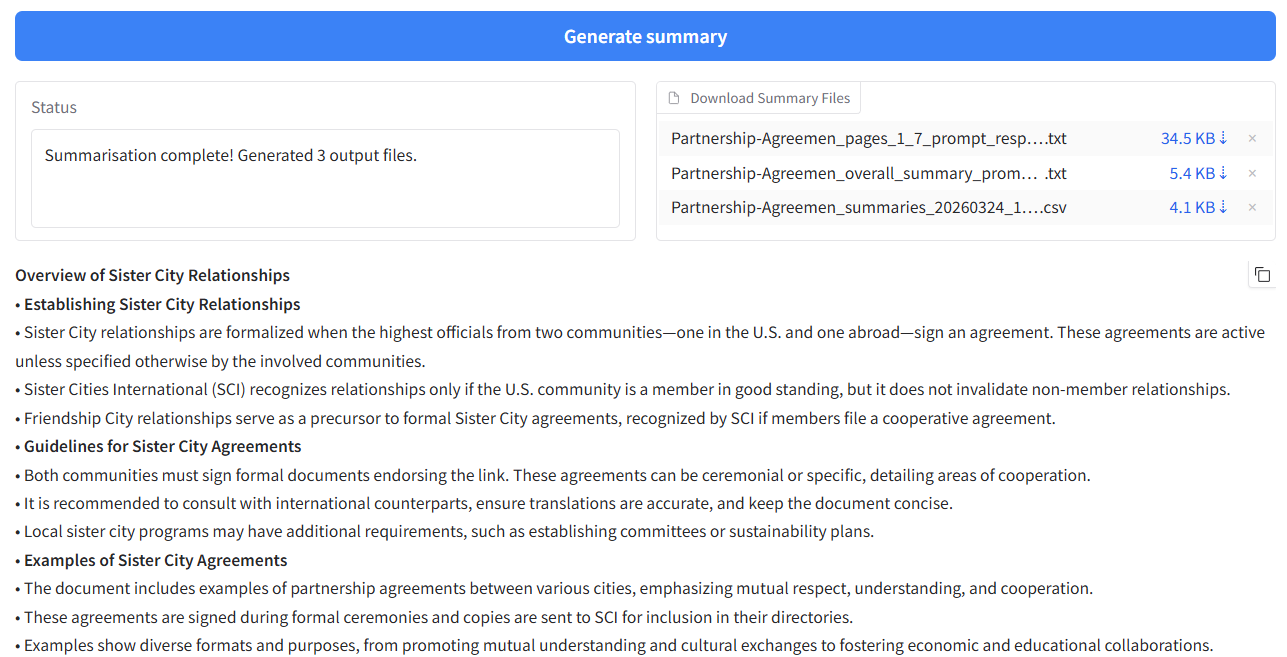

+- [Document summarisation](#document-summarisation)

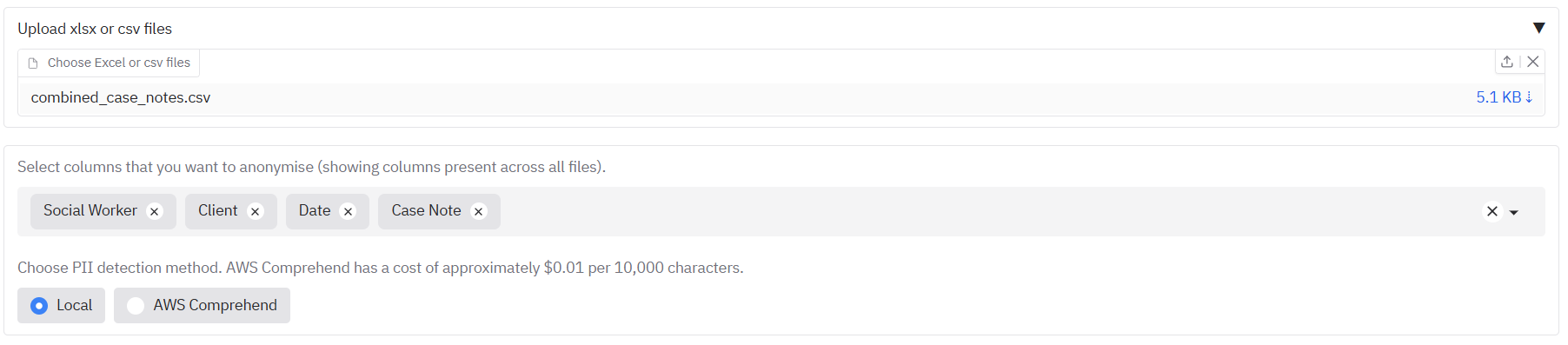

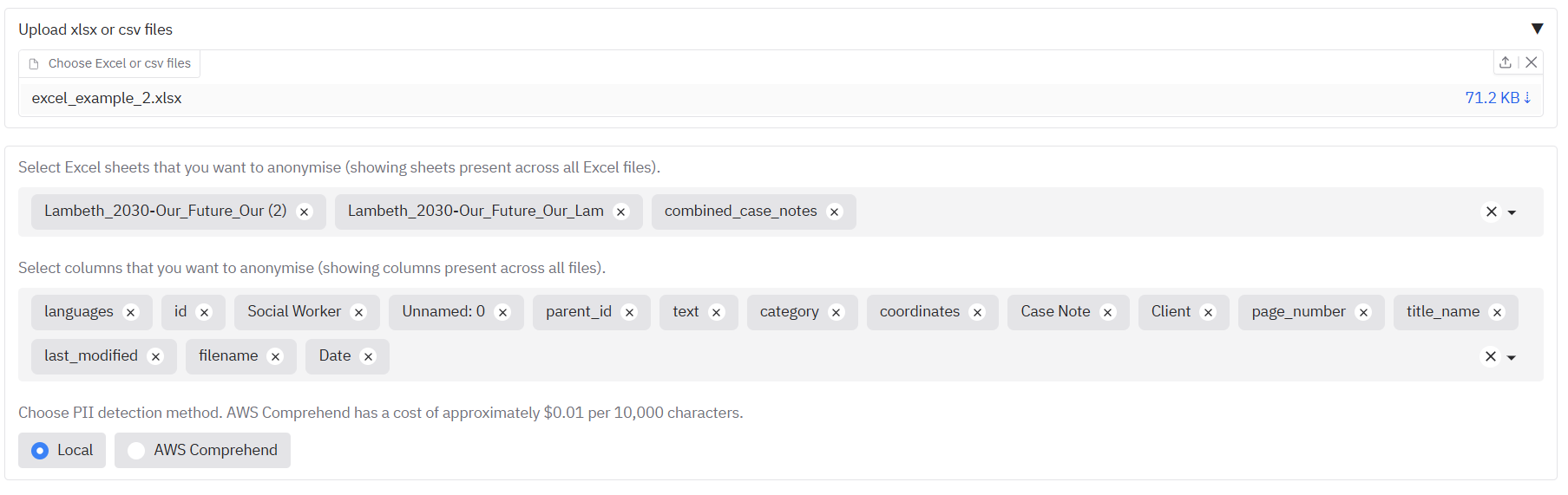

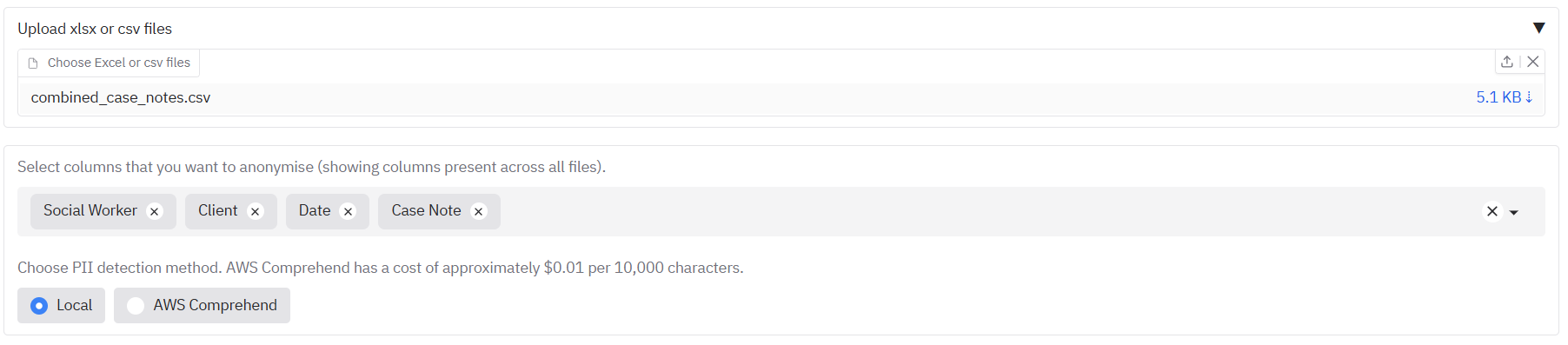

+- [Redacting Word, tabular data files (CSV/XLSX) or open text](#redacting-word-tabular-data-files-xlsxcsv-or-open-text)

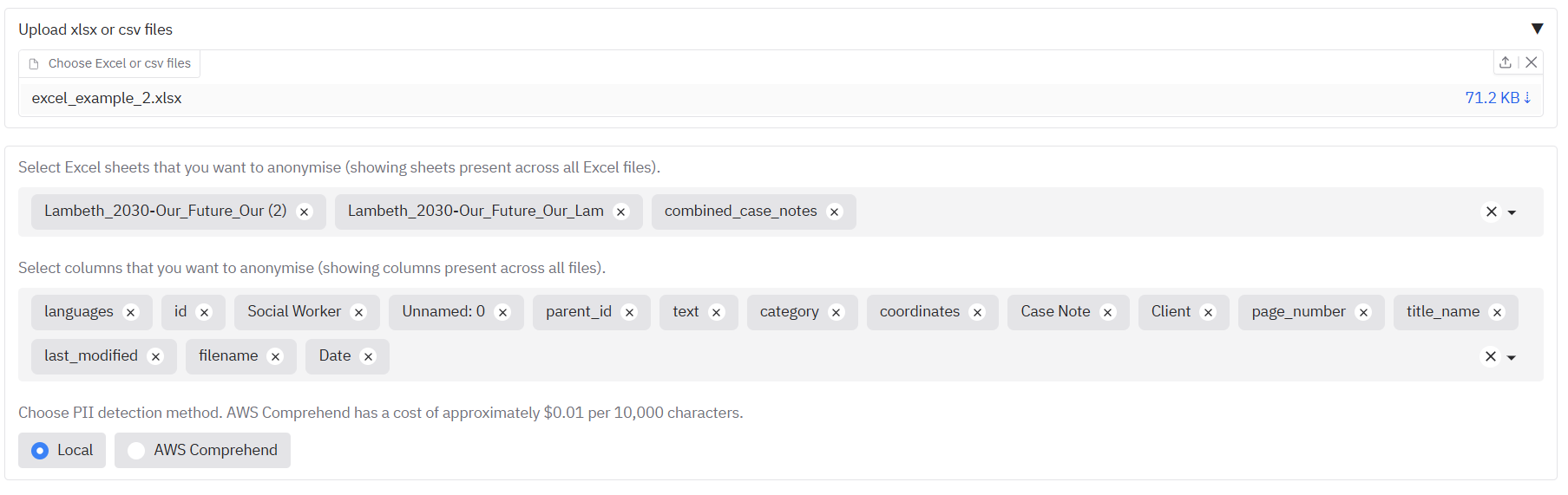

+ - [Word or tabular data files (XLSX/CSV)](#word-or-tabular-data-files-xlsxcsv)

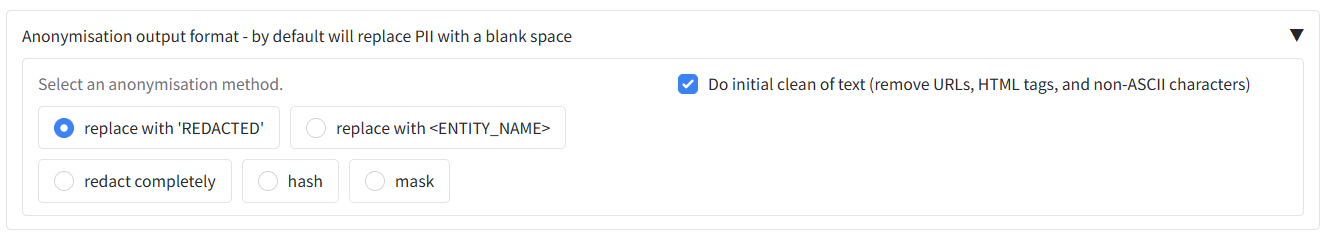

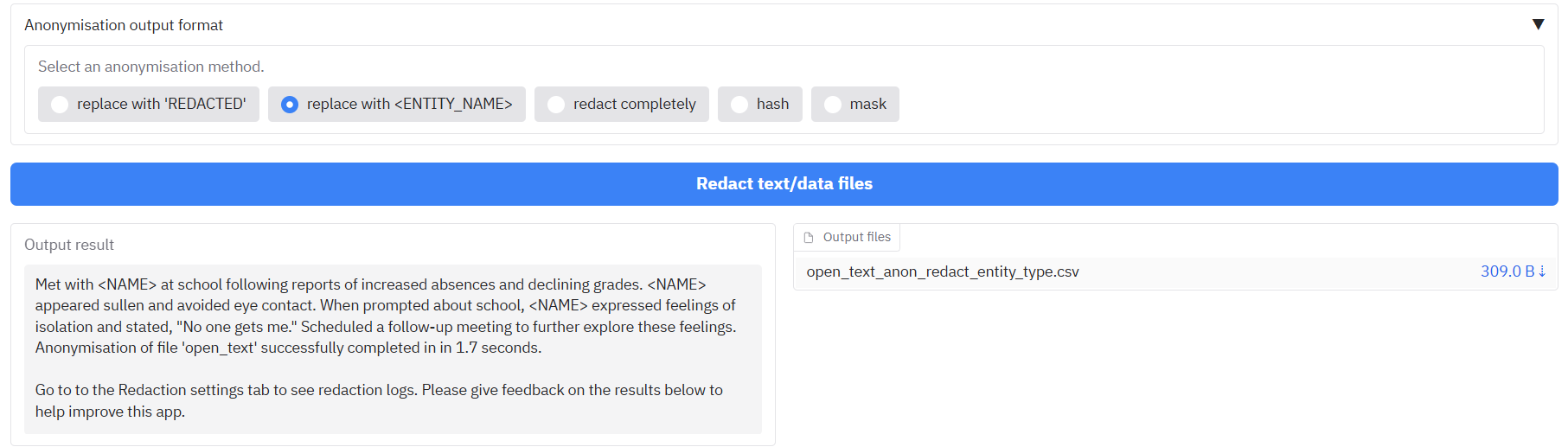

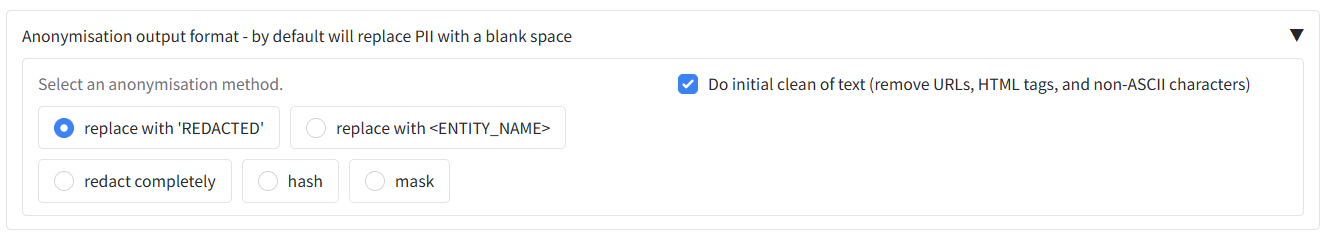

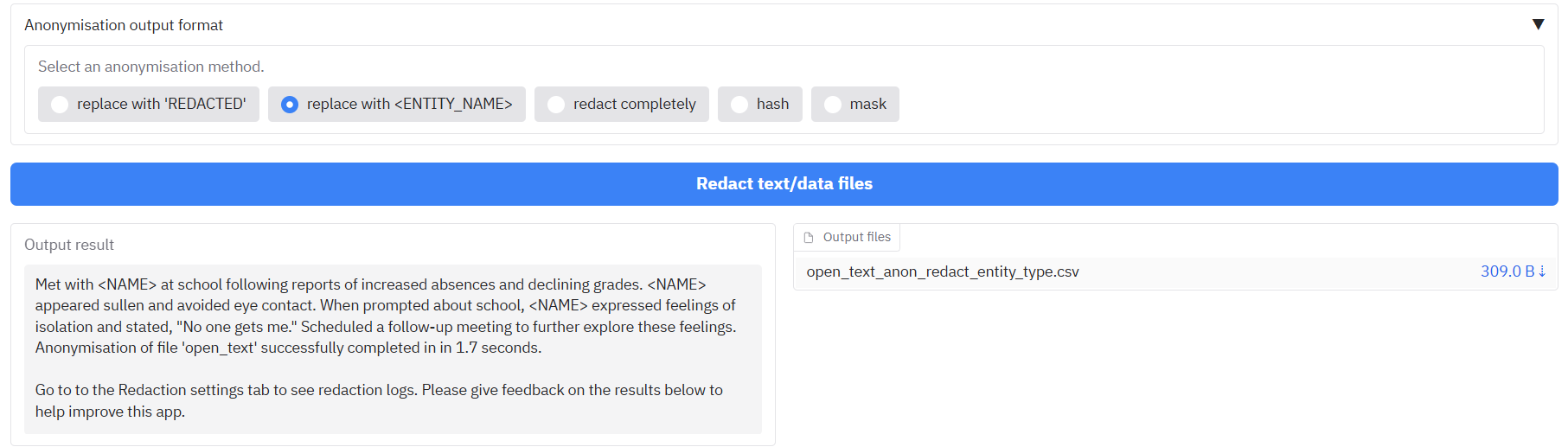

+ - [Choosing output anonymisation format](#choosing-output-anonymisation-format)

+ - [Redacting open text](#redacting-open-text)

+

+### Advanced user guide

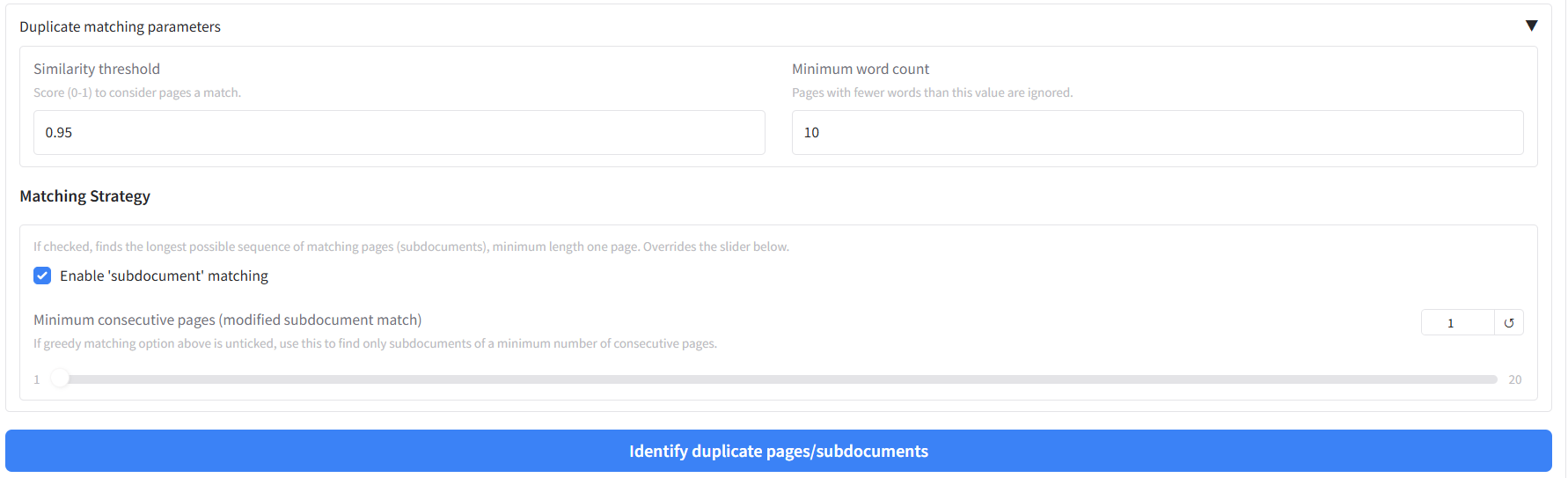

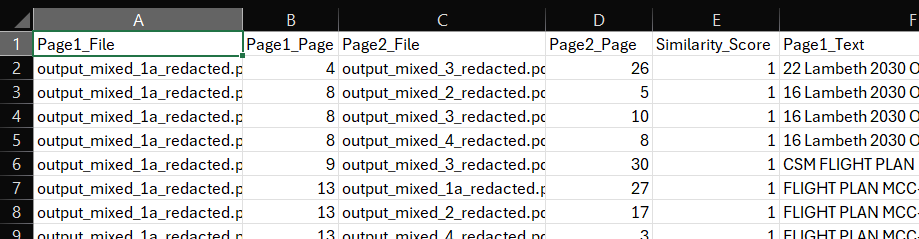

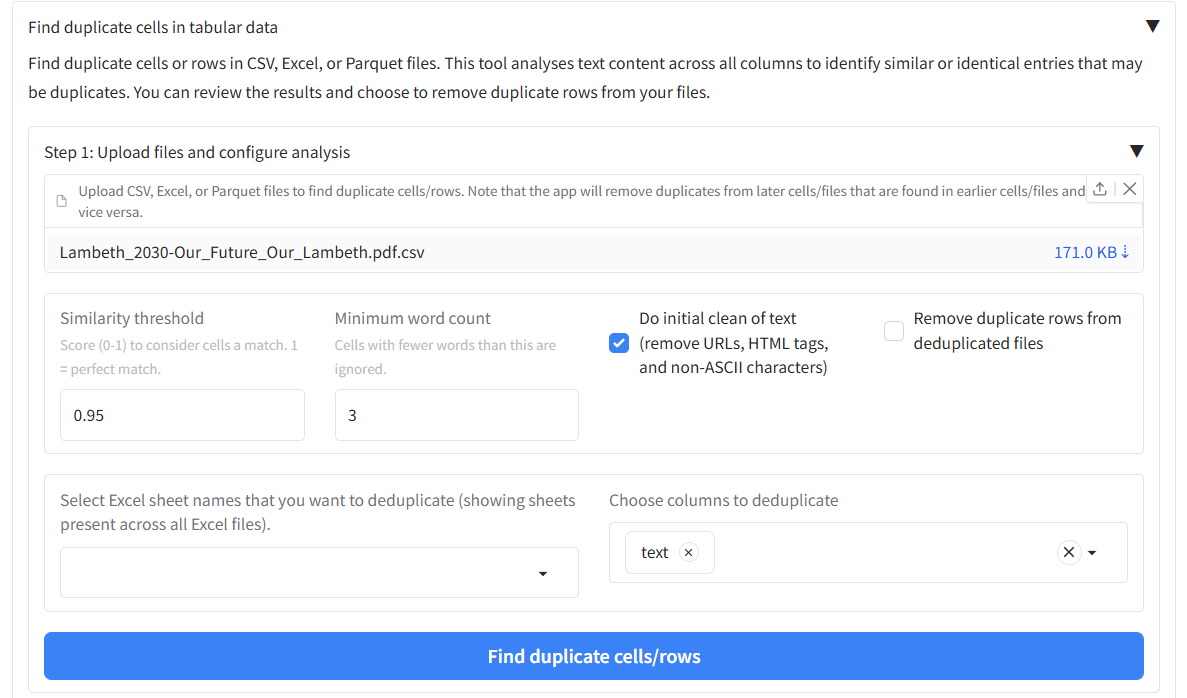

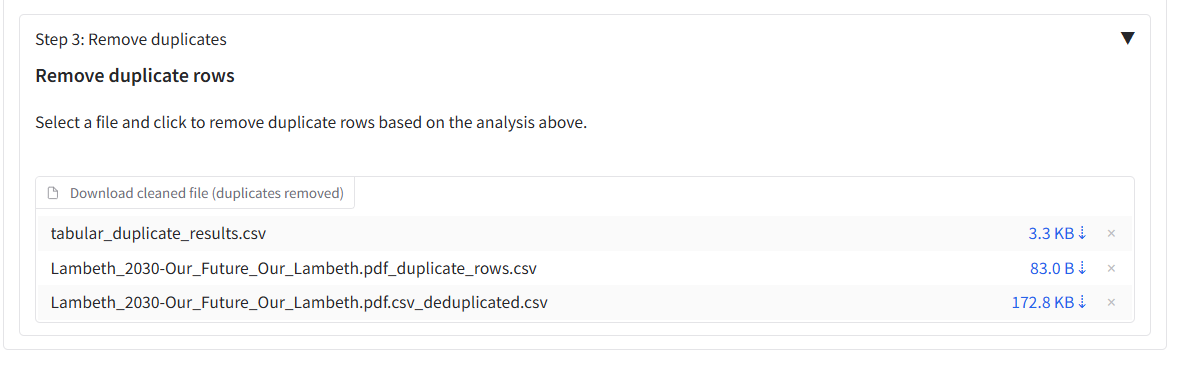

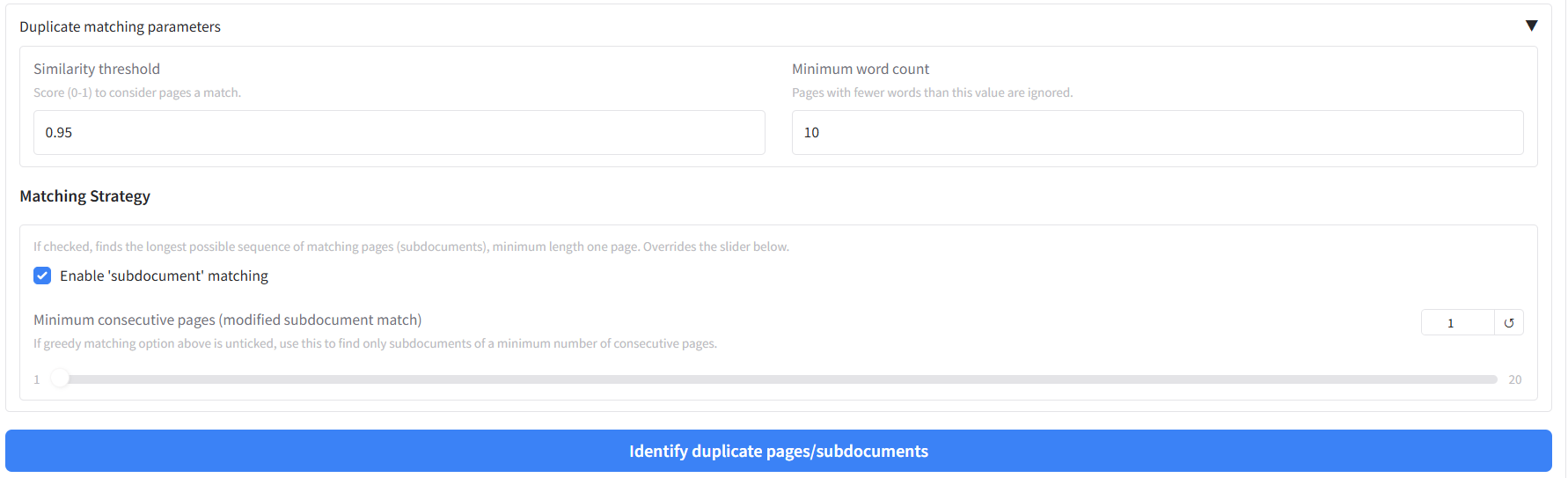

+- [Identifying and redacting duplicate pages with custom settings](#identifying-and-redacting-duplicate-pages-with-custom-settings)

+ - [Duplicate page detection in documents](#duplicate-page-detection-in-documents)

+ - [Duplicate detection in tabular data](#duplicate-detection-in-tabular-data)

+- [Export redacted document files to Adobe Acrobat](#export-redacted-document-files-to-adobe-acrobat)

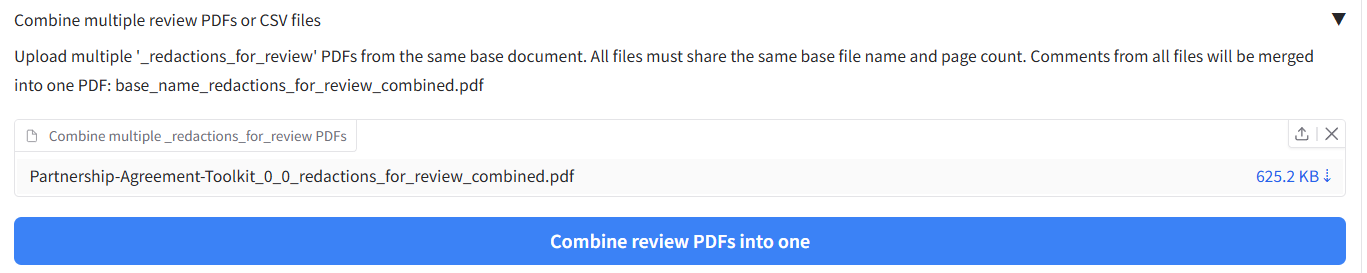

+ - [Using redactions_for_review.pdf files with Adobe Acrobat](#using-redactions_for_reviewpdf-files-with-adobe-acrobat)

+ - [Exporting comment files to Adobe Acrobat](#exporting-comment-files-to-adobe-acrobat)

+ - [Importing comment files from Adobe Acrobat](#importing-comment-files-from-adobe-acrobat)

+- [Submit documents to the AWS Textract API service for faster OCR](#submit-documents-to-the-aws-textract-api-service-for-faster-ocr)

+- [Advanced OCR settings - Efficient OCR, overwrite existing OCR](#advanced-ocr-settings---efficient-ocr-overwrite-existing-ocr)

+- [Modifying existing redaction review files](#modifying-existing-redaction-review-files)

+

+### Features for expert users/system administrators

+- [Using AWS Textract and Comprehend when not running in an AWS environment](#using-aws-textract-and-comprehend-when-not-running-in-an-aws-environment)

+- [Advanced OCR model options (inlcuding Hybrid OCR)](#advanced-ocr-options-hybrid-ocr)

+- [PII identification with LLMs](#pii-identification-with-llms)

+- [Command Line Interface (CLI)](#command-line-interface-cli)

+

+## Quickstart - Test the app with built-in examples

+

+### PDF document examples

+

+The app provides some built-in examples so you can see how it works before trying one of your own files.

+

+**For PDF/image redaction:** On the 'Redact PDFs/images' tab, you'll see a section titled "Try an example - Click on an example below and then the 'Extract text and redact document' button". Simply click on any of the available examples to load them with pre-configured settings:

+

+- **PDF with selectable text redaction** - Uses local text extraction with standard PII detection

+- **Image redaction with local OCR** - Processes an image file using OCR

+- **PDF redaction with custom entities** - Demonstrates custom entity selection (Titles, Person, Dates)

+- **PDF redaction with AWS services and signature detection** - Shows AWS Textract with signature extraction (if AWS is enabled)

+- **PDF redaction with custom deny list and whole page redaction** - Demonstrates the use of redacting specific named terms and whole pages

+

+Once you have clicked on an example, you can click the 'Extract text and redact document' button to redact the document. You can then click the 'Review and modify redactions' button below this to review and modify suggested redactions. See the 'Basic redaction' section below for more details on redacting your own documents.

+

+### CSV/Excel file examples

+

+**For tabular data:** On the 'Word or Excel/CSV files' tab, you'll find examples for both redaction and duplicate detection:

+

+- **CSV file redaction** - Shows how to redact specific columns in tabular data

+- **Word document redaction** - Demonstrates Word document processing

+- **Excel file duplicate detection** - Shows how to find duplicate rows in spreadsheet data

+

+Once you have clicked on an example, you can click the 'Redact text/data files' button directly to redact the example file. Once done, you can click the 'Review redactions' button to review and modify suggested redaction boxes.

+

+## Basic redaction

+

+The document redaction app can detect personally-identifiable information (PII) in documents. Documents can be redacted directly, or suggested redactions can be reviewed and modified using a graphical user interface. Basic document redaction can be performed quickly using the default options.

+

+**Where to work:** All of the main redaction options and the redact button are on the **'Redact PDFs/images'** tab.

+

+

+

+### Upload files to the app

+

+On the **'Redact PDFs/images'** tab, the **'Redaction settings'** accordion at the top accepts PDFs and image files (JPG, PNG) for redaction. Click on the **'Drop files here or Click to Upload'** area, or select from one of the [examples provided](#pdf-document-examples).

+

+### Text extraction

+

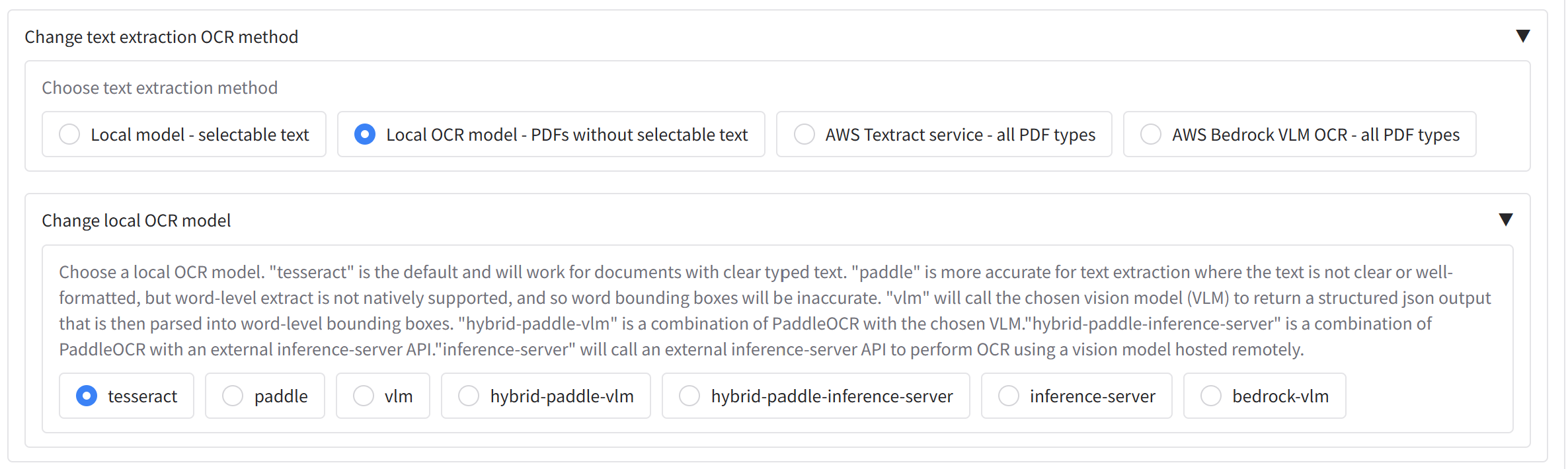

+Under **'Redaction settings'** accordion, you can see **'Change default text extraction settings'**. You may have the following options available depending on your configuration - if not, AWS Textract will likely be the default option:

+

+- **'Local model - selectable text'** - (optional) Reads text directly from PDFs that have selectable text.

+- **'Local OCR model - PDFs without selectable text'** - (optional) Uses a local OCR model to extract text from PDFs/images. Handles most typed text without selectable text but is less accurate for handwriting and signatures; use the AWS Textract option in this case.

+- **'AWS Textract service - all PDF types'** - Available when the app is configured for AWS. Textract runs in the cloud and is more capable for complex layouts, handwriting, and signatures. It incurs a (relatively small) cost per page.

+

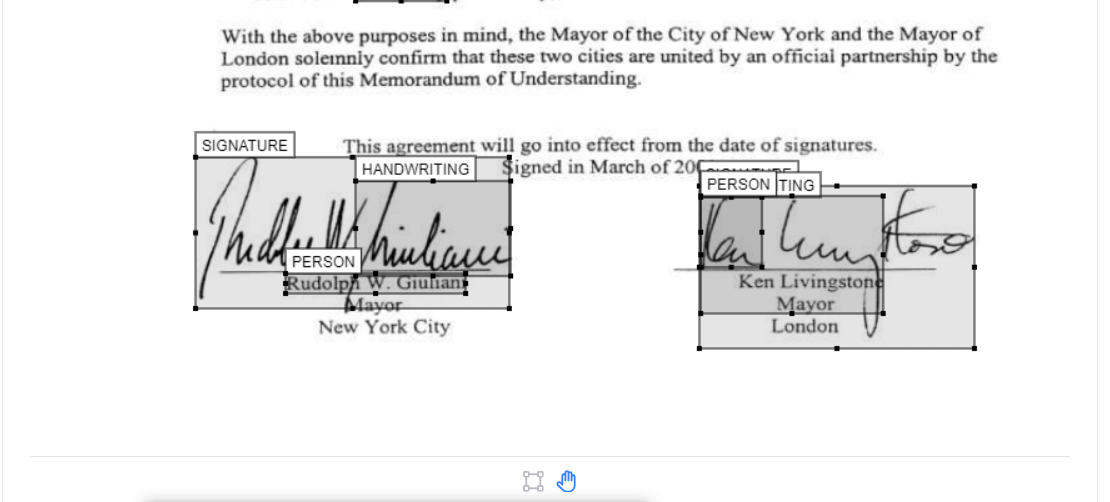

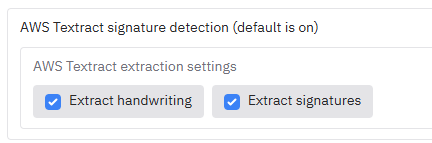

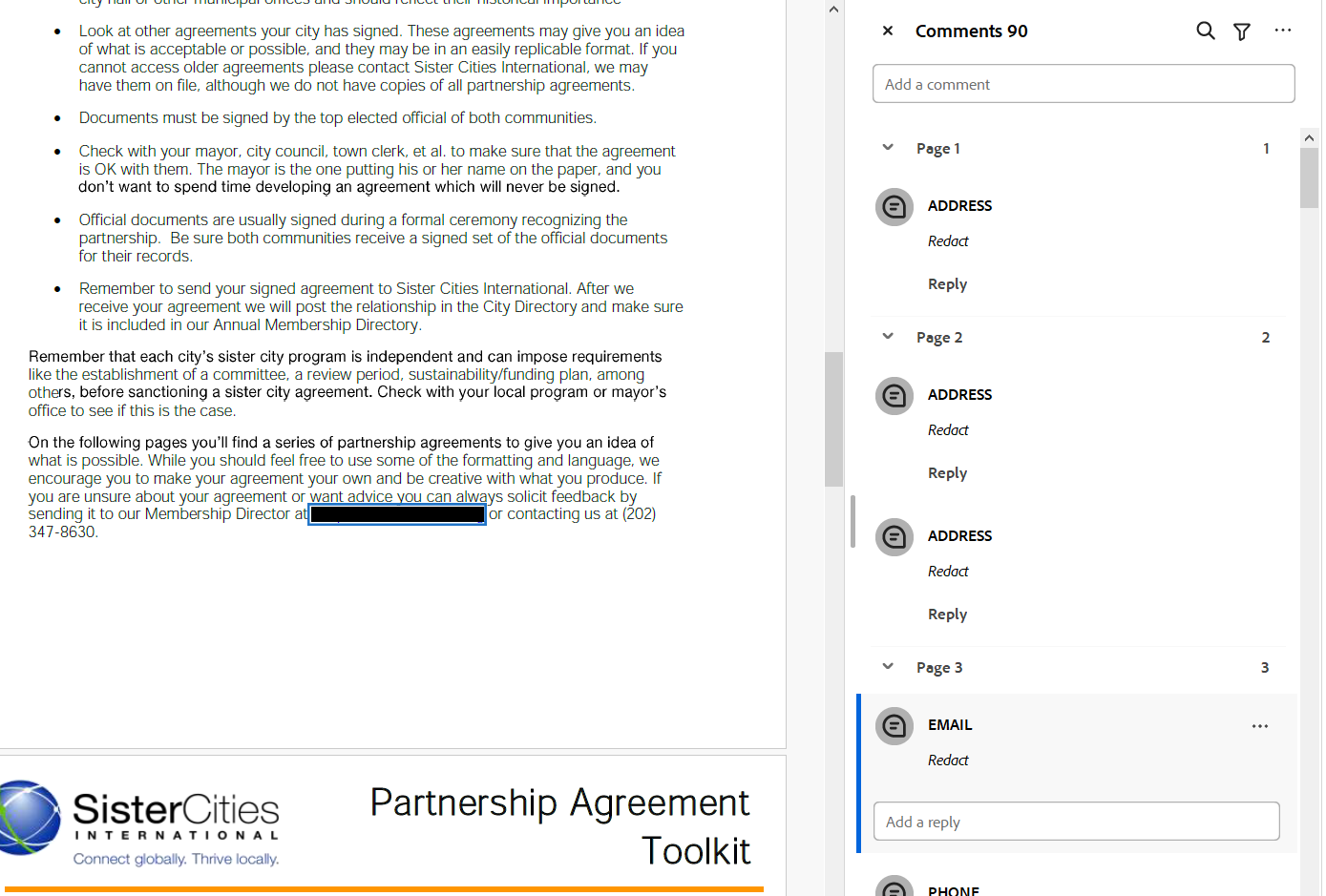

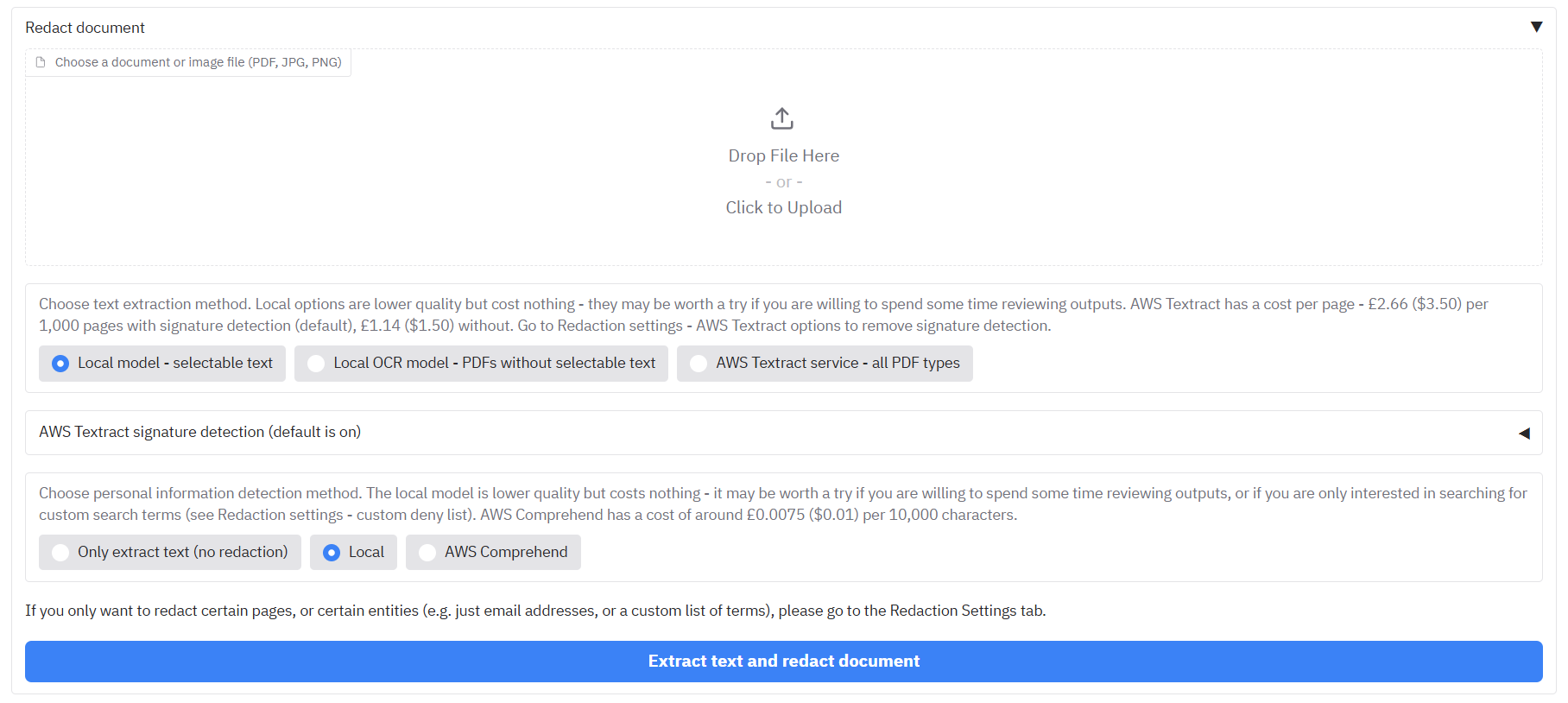

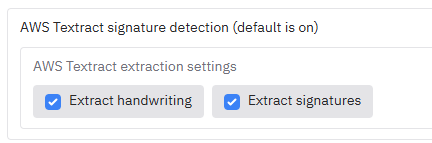

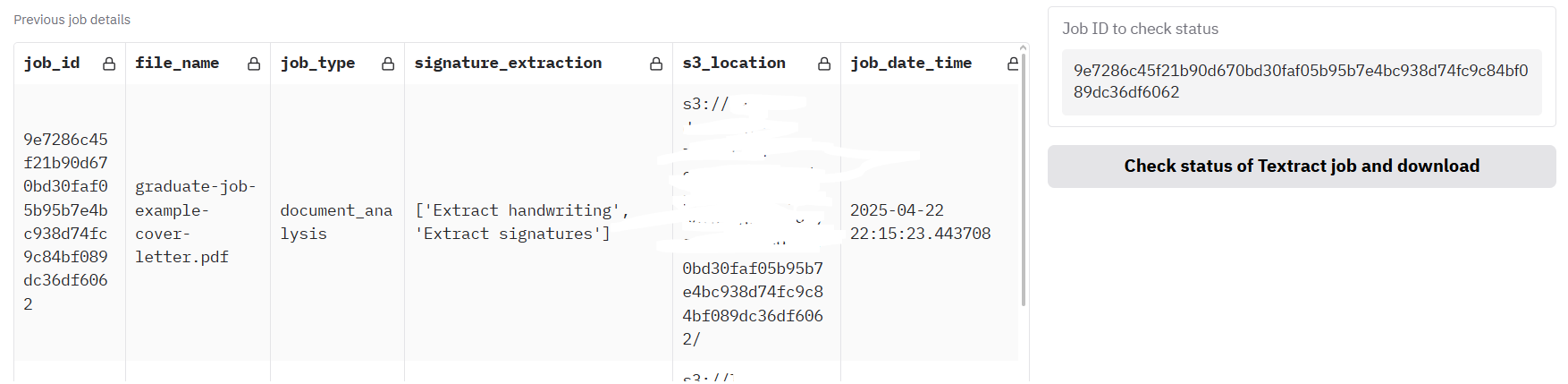

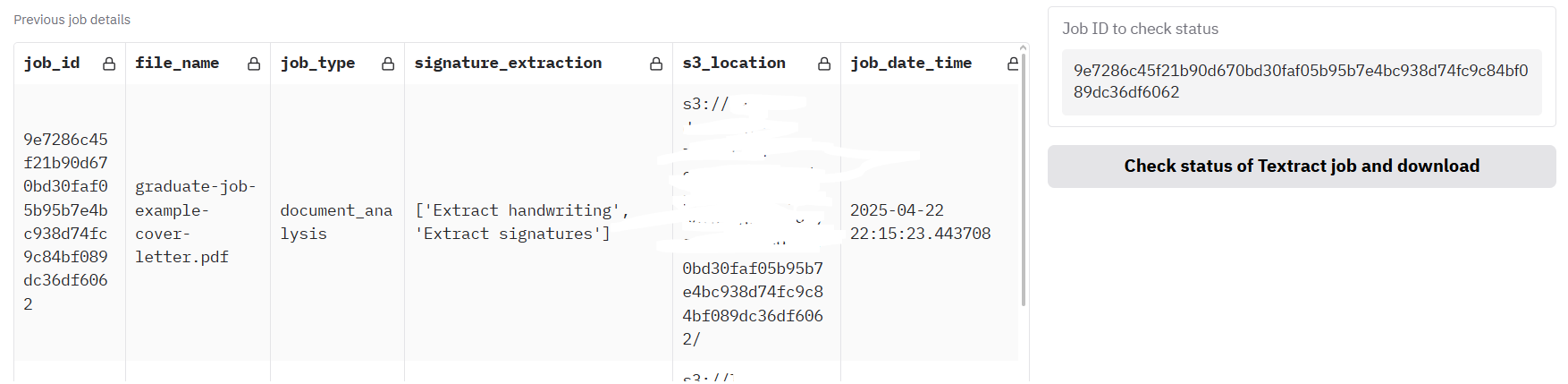

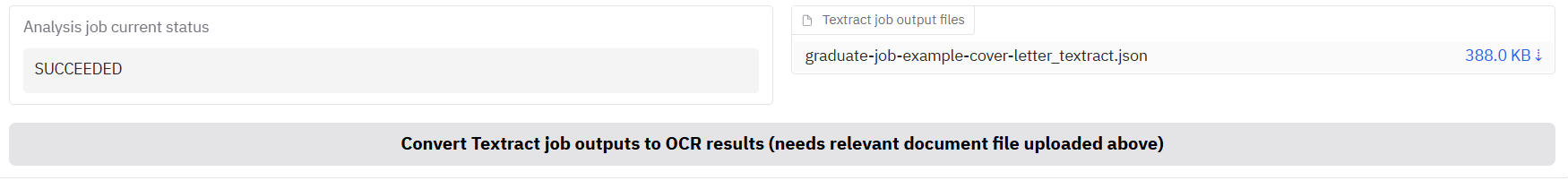

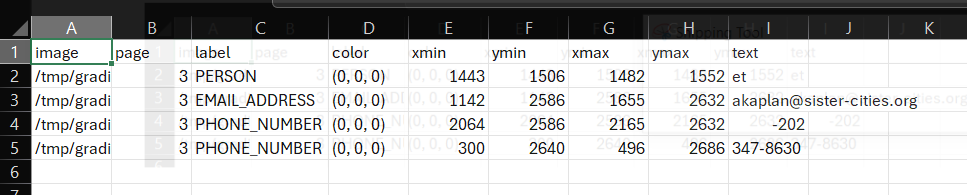

+### AWS Textract signature extraction

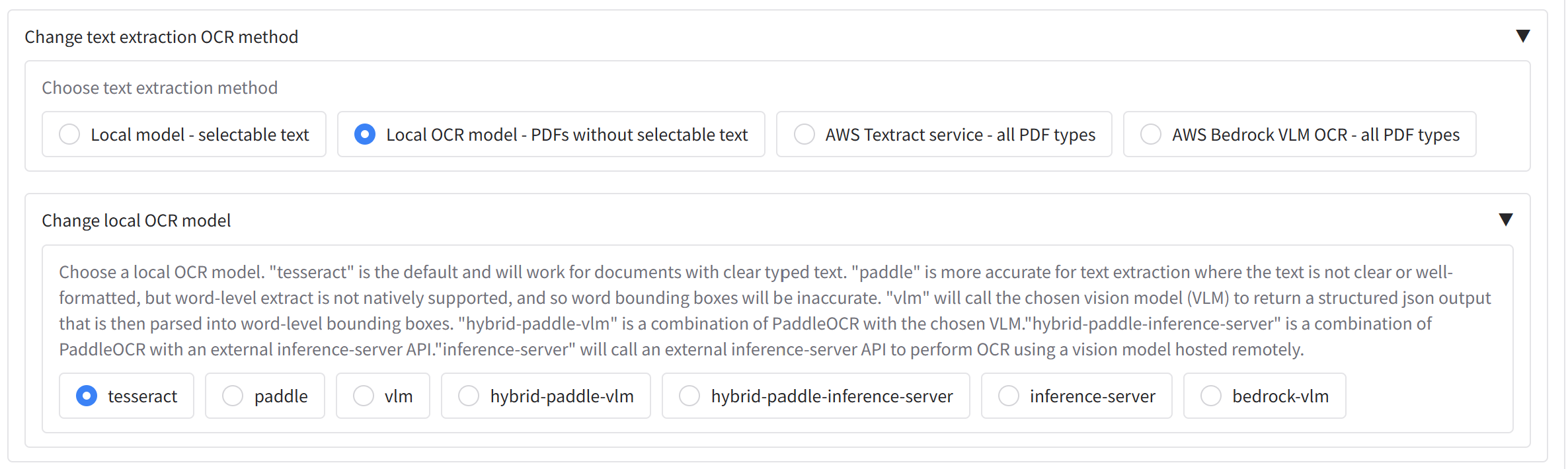

+

+

+

+If you select **'AWS Textract service - all PDF types'** as the text extraction method, an accordion **'Enable AWS Textract signature detection (default is off)'** appears. Open it to turn on handwriting and/or signature detection. Enabling signatures has a cost impact (~£2.66 ($3.50) per 1,000 pages vs ~£1.14 ($1.50) per 1,000 pages without signature detection).

+

+

+  +

+

+

+**NOTE:** Form, layout, table extraction, or face detection can be enabled if required for specific use cases; they are off by default - please contact your system administrator if you need these features.

+

+### PII redaction method

+

+Next we need to choose our model to identify personally-identifiable information (PII) in the document. Under **'Change PII identification method'** accordion (under **'Change default redaction settings'**) you will see **'Choose redaction method'**, a radio with three options:

+- **'Extract text only'** runs text extraction without redaction — useful when you only need OCR output or want to review text before redacting.

+- **'Redact all PII'** (the default) uses the chosen PII detection method to find and redact personal information across a range of standard entity types, e.g. addresses, names, dates, etc.

+- **'Redact selected terms'** will focus redaction only on the specific terms in the [custom allow/deny lists](#allow-list-deny-list-and-whole-page-redaction) below.

+

+Still under **'Change default redaction settings'**, you may see the **'Change PII identification model'** section, if enabled, which lets you choose how PII is detected. You may have the choice of the following options. If not, AWS Comprehend will likely be the default option:

+

+- **'Local'** - (optional) Uses a local model (e.g. spaCy) to detect PII at no extra cost, but with less accuracy than the alternative options.

+- **'AWS Comprehend'** - Uses AWS Comprehend for PII detection when the app is configured for AWS; typically more accurate but incurs a cost (around £0.0075 ($0.01) per 10,000 characters).

+- Other options may be available depending on the app settings (e.g. AWS Bedrock, local LLM models).

+

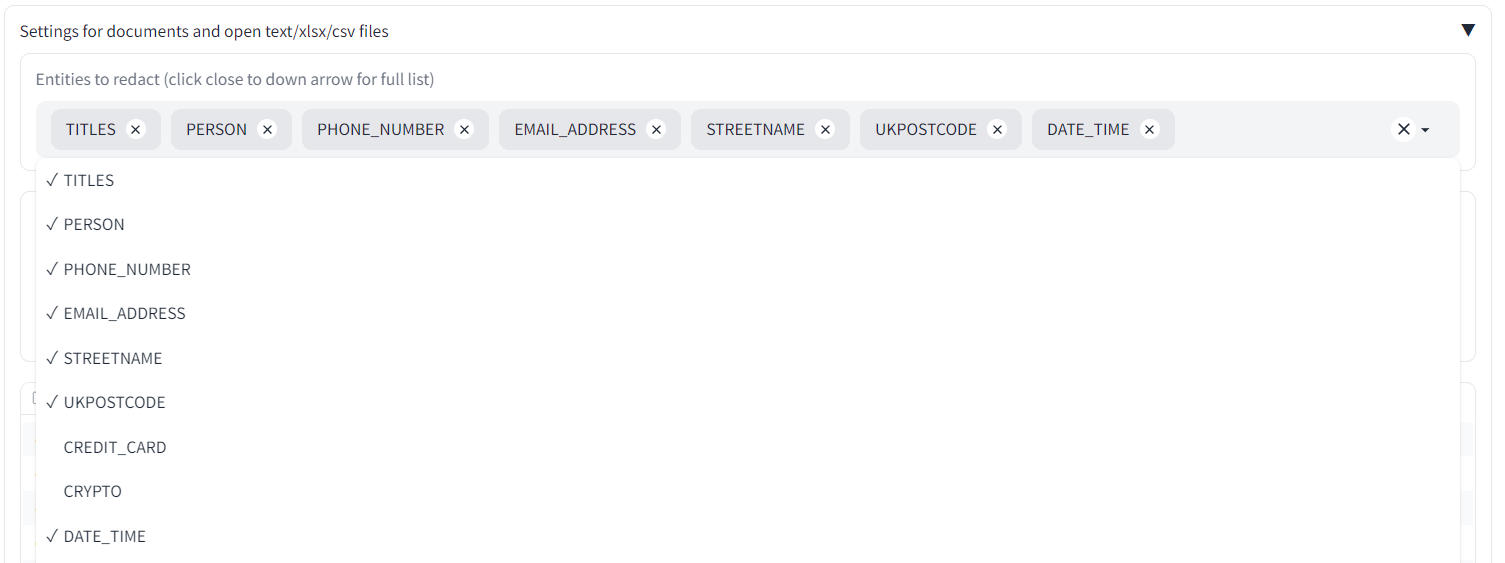

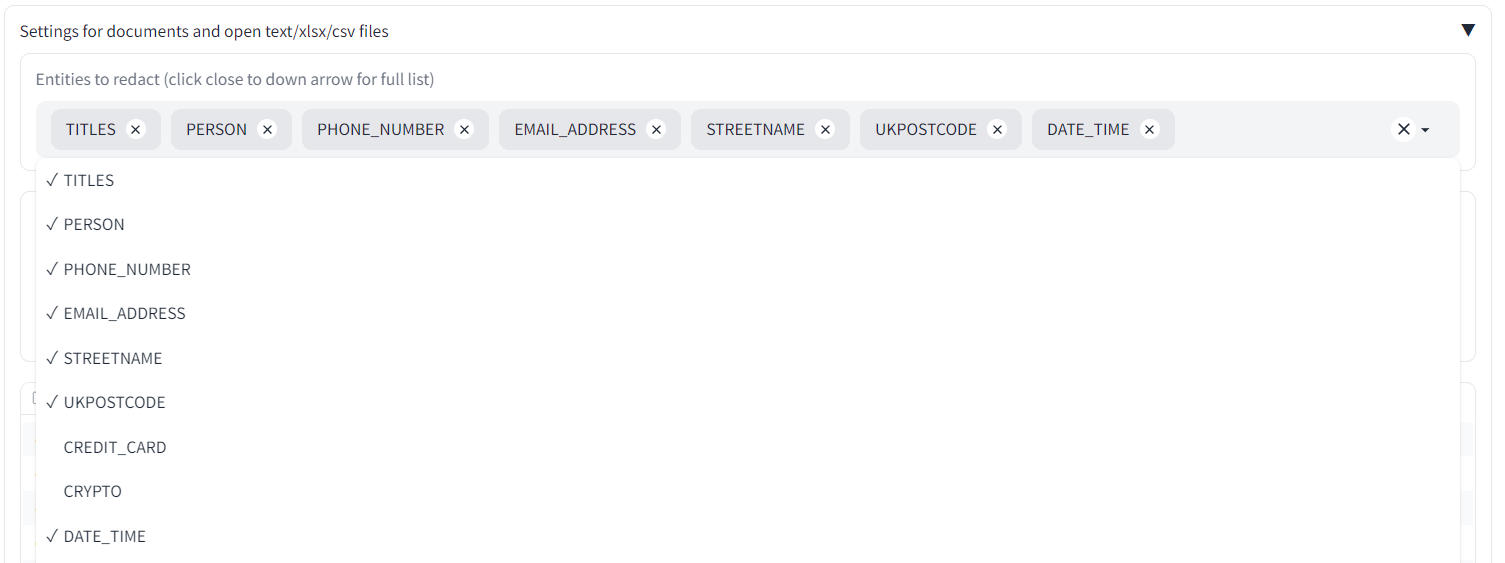

+Under **'Select entity types to redact'** you can choose which types of PII to redact (e.g. names, emails, dates). Click in the box or near the dropdown arrow to see the full list. Any entity type that remains in the box will be searched for during the redaction process.

+

+

+

+### Duplicate page redaction

+

+Alongside the 'Change PII identification method' section, you will see 'Redact duplicate pages'. If this is enabled, following the main redaction process, the app will identify pages with duplicate text in the document and redact them in the same run. If you want to modify the duplicate page detection settings, you can do so on the **Identify duplicate pages** tab - please refer to the [Identifying and redacting duplicate pages](#identifying-and-redacting-duplicate-pages) section for more details.

+

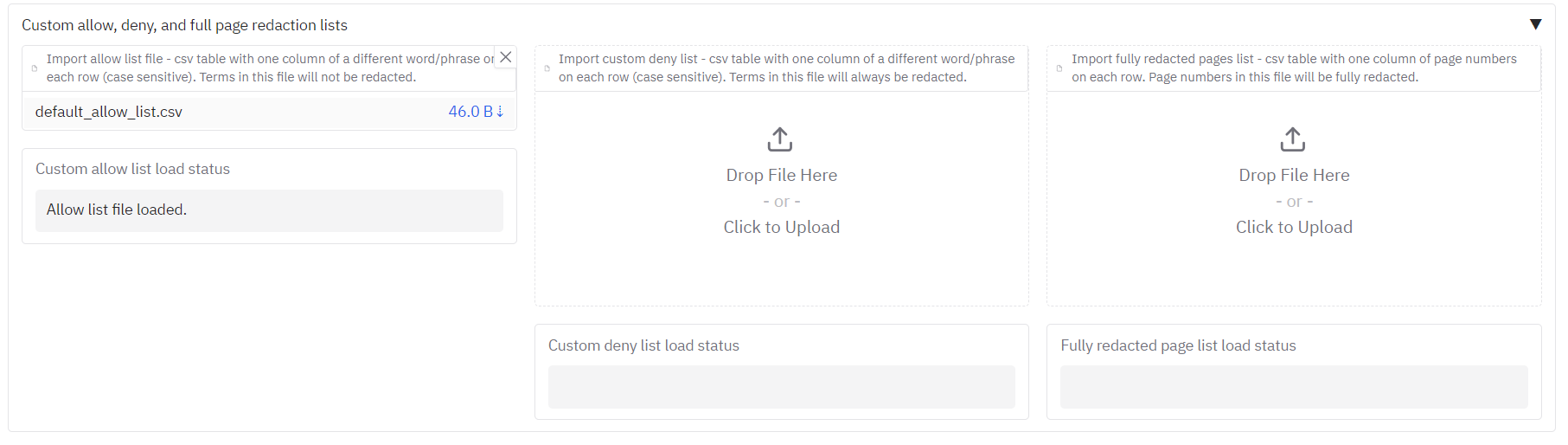

+### Allow list, deny list, and whole-page redaction

+

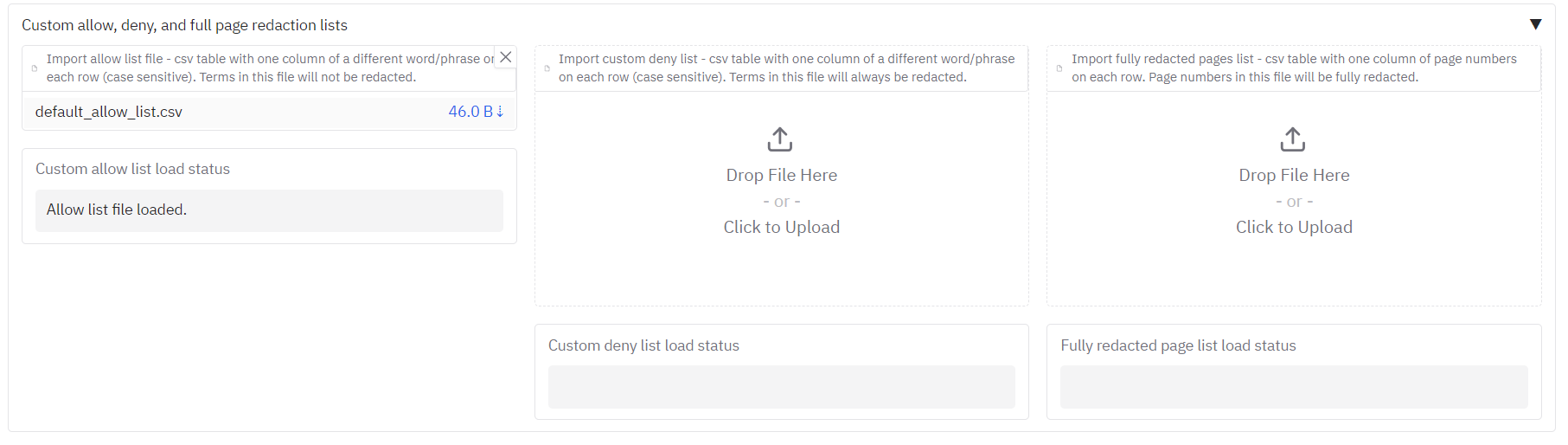

+Underneath you will see **'Terms to always include or exclude in redactions, and whole page redaction'**. Here you can:

+

+- **Allow list** – Terms that if found, will never be redacted. To use, ensure that CUSTOM is selected in the **'Select entity types to redact'** dropdown.

+- **Deny list** – Terms that if found, will always be redacted. To use, ensure that CUSTOM is selected in the **'Select entity types to redact'** dropdown.

+- **Fully redact these pages** – Page numbers that will be fully redacted with a box that covers the entire page.