Commit b0d4092

Parent(s): c9535bf

Upload folder using huggingface_hub

- .github/workflows/huggingface.yaml +20 -0

- .github/workflows/update_space.yml +28 -0

- .gitignore +11 -0

- Constants.py +11 -0

- LICENSE +21 -0

- README.md +33 -8

- __pycache__/Constants.cpython-310.pyc +0 -0

- __pycache__/apiKey.cpython-310.pyc +0 -0

- __pycache__/db_types.cpython-310.pyc +0 -0

- __pycache__/ingest_data.cpython-310.pyc +0 -0

- __pycache__/metadatainfo.cpython-310.pyc +0 -0

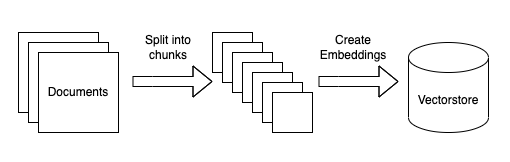

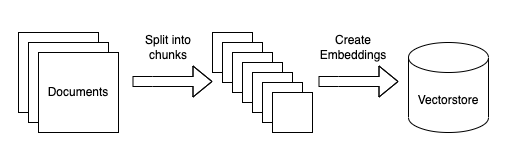

- __pycache__/notionMetadataInfo.cpython-310.pyc +0 -0

- __pycache__/query_data.cpython-310.pyc +0 -0

- __pycache__/read_notion.cpython-310.pyc +0 -0

- __pycache__/utilities.cpython-310.pyc +0 -0

- apiKey.py +2 -0

- app.py +215 -0

- assets/logo/logo.jpg +0 -0

- blogpost.md +330 -0

- chromaclient.py +17 -0

- chromadb/cd6d665c-a1d1-4b9b-b8a5-cfa0f731d4d0/length.bin +3 -0

- cli_app.py +34 -0

- data/Evan Cover.docx +0 -0

- data/Josua Krause.docx +0 -0

- data/Navid.docx +0 -0

- data/Neal Patel.docx +0 -0

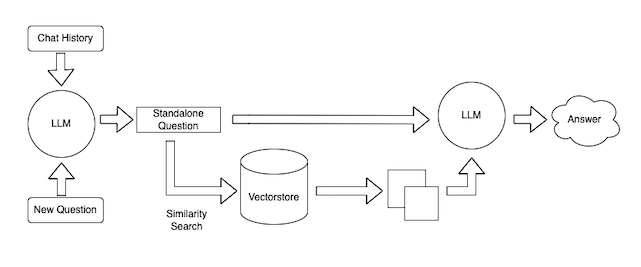

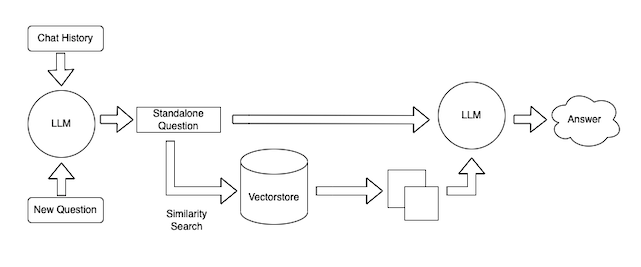

- data/Siva_values.docx +0 -0

- data/Tanmay Chopra.docx +0 -0

- data_back/.DS_Store +0 -0

- data_back/Evan Cover.docx +0 -0

- data_back/Josua Krause.docx +0 -0

- data_back/Navid.docx +0 -0

- data_back/Neal Patel.docx +0 -0

- data_back/Siva_values.docx +0 -0

- data_back/Tanmay Chopra.docx +0 -0

- db_types.py +6 -0

- ingest_data.py +242 -0

- logs/output.log +7 -0

- metadatainfo.py +27 -0

- myvectorstore.pkl +3 -0

- notionMetadataInfo.py +23 -0

- notiondb/chroma.sqlite3 +0 -0

- old/app_copy.py +140 -0

- query_data.py +212 -0

- read_notion.py +47 -0

- requirements.txt +7 -0

- utilities.py +31 -0

- vectorstore.pkl +3 -0

.github/workflows/huggingface.yaml

ADDED

@@ -0,0 +1,20 @@

```yaml
name: Sync to Hugging Face hub
on:
  push:
    branches: [main]

  # to run this workflow manually from the Actions tab
  workflow_dispatch:

jobs:
  sync-to-hub:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3
        with:
          fetch-depth: 0
          lfs: true
      - name: Push to hub
        env:
          HF_TOKEN: ${{ secrets.HF_TOKEN }}
        run: git push https://snehasquasher:$HF_TOKEN@huggingface.co/spaces/snehasquasher/spur-mvp main
```

.github/workflows/update_space.yml

ADDED

@@ -0,0 +1,28 @@

```yaml
name: Run Python script

on:
  push:
    branches:
      - main

jobs:
  build:
    runs-on: ubuntu-latest

    steps:
      - name: Checkout
        uses: actions/checkout@v2

      - name: Set up Python
        uses: actions/setup-python@v2
        with:
          python-version: '3.9'

      - name: Install Gradio
        run: python -m pip install gradio

      - name: Log in to Hugging Face
        run: python -c 'import huggingface_hub; huggingface_hub.login(token="${{ secrets.hf_token }}")'

      - name: Deploy to Spaces
        run: gradio deploy
```

.gitignore

ADDED

@@ -0,0 +1,11 @@

```
apiKey.py
logs
logs/*.*
__pycache__
__pycache__/*
*.pyc
chromadb/*
data
notiondb
.DS_Store
chroma.sqlite3
```

Constants.py

ADDED

@@ -0,0 +1,11 @@

```python
DB_TYPE ="notion" #faiss or chromadb or notion
PERSIST_DIRECTORY="./"
CHROMA_PERSIST_DIRECTORY="./chromadb"
NOTION_PERSIST_DIRECTORY="./notiondb"
COLLECTION_NAME="chatdata"
CHROMA_COLLECTION_NAME="LLMData"
NOTION_COLLECTION_NAME="notionData"
DATA_DIRECTORY="./data"
LOG_FILE="./logs/output.log"
NOTION_DB="0c3bfaa0a33c4038aeeb988c16f83abb"
#MAX_PAGES_TO_READ=
```

LICENSE

ADDED

@@ -0,0 +1,21 @@

```
MIT License

Copyright (c) 2023 Harrison Chase

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.
```

README.md

CHANGED

@@ -1,12 +1,37 @@

```diff
 ---
-title:
-emoji: 🏃
-colorFrom: yellow
-colorTo: green
-sdk: gradio
-sdk_version: 3.41.2
+title: spur-chatbot
 app_file: app.py
-
+sdk: gradio
+sdk_version: 3.40.1
 ---
+# Chat-Your-Data
+
+Create a ChatGPT-like experience over your custom docs using [LangChain](https://github.com/langchain-ai/langchain).
+
+See [this blog post](blogpost.md) for a more detailed explanation.
+
+## Step 0: Install requirements
+
+`pip install -r requirements.txt`
+
+## Step 1: Set your OpenAI API key
+
+```sh
+export OPENAI_API_KEY="Your OpenAI API key"
+```
+## Step 2: Query data
+
+Custom prompts are used to ground the answers in the state of the union text file.
+
+## Step 3: Running the Application
+
+By running `python app.py` from the command line you can easily interact with your ChatGPT over your own data.
+
+# Others
+
+## Notion Integration

-
+## Step 1: Set your Notion API Key
+```sh
+export NOTION_API_KEY="Your Notion API Key"
+```
```
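
The README exports `OPENAI_API_KEY` and `NOTION_API_KEY` as environment variables, yet this commit also adds `apiKey.py` with both keys hardcoded (even though `.gitignore` lists that file). Below is a minimal sketch of reading the keys from the environment instead. The helper name `require_env` is hypothetical and not part of this repo.

```python
import os


def require_env(name: str) -> str:
    """Return the value of environment variable `name`, or fail loudly.

    Reading keys from the environment (as the README's `export` steps set up)
    keeps secrets out of committed files such as apiKey.py.
    """
    value = os.environ.get(name, "").strip()
    if not value:
        # Fail at startup with an actionable message instead of a later 401.
        raise RuntimeError(f"{name} is not set; run: export {name}=...")
    return value
```

With a helper like this, `apiKey.py` and the `from apiKey import *` line in `app.py` would no longer be needed.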

__pycache__/Constants.cpython-310.pyc

ADDED

Binary file (389 Bytes)

__pycache__/apiKey.cpython-310.pyc

ADDED

Binary file (226 Bytes)

__pycache__/db_types.cpython-310.pyc

ADDED

Binary file (417 Bytes)

__pycache__/ingest_data.cpython-310.pyc

ADDED

Binary file (3.76 kB)

__pycache__/metadatainfo.cpython-310.pyc

ADDED

Binary file (553 Bytes)

__pycache__/notionMetadataInfo.cpython-310.pyc

ADDED

Binary file (450 Bytes)

__pycache__/query_data.cpython-310.pyc

ADDED

Binary file (6.79 kB)

__pycache__/read_notion.cpython-310.pyc

ADDED

Binary file (1.03 kB)

__pycache__/utilities.cpython-310.pyc

ADDED

Binary file (921 Bytes)

apiKey.py

ADDED

@@ -0,0 +1,2 @@

```python
OPENAI_API_KEY="<redacted>"
NOTION_API_KEY="<redacted>"
```

app.py

ADDED

@@ -0,0 +1,215 @@

```python
import os
import sys
from typing import Optional, Tuple
from threading import Lock
import json
import shutil
import gradio as gr
from query_data import chain_options
from query_data import get_basic_qa_chain
from zipfile import ZipFile
from ingest_data import ingestData

from query_data import (get_basic_qa_chain,
                        get_qa_with_sources_chain,
                        get_custom_prompt_qa_chain,
                        get_condense_prompt_qa_chain,
                        get_retrievalqa_with_sources_chain)

from metadatainfo import metadata_field_info
from Constants import *
from apiKey import *

def set_openai_api_key(api_key: str):
    """Set the api key and return chain.
    If no api_key, then None is returned.
    """
    if api_key:
        os.environ["OPENAI_API_KEY"] = api_key
        chain = getChainSelectedByUser(chainType)
        os.environ["OPENAI_API_KEY"] = ""
        return chain
    '''
    os.environ["OPENAI_API_KEY"] = api_key
    chain=get_basic_qa_chain()
    return chain'''

def getChainSelectedByUser(chainType: gr.Dropdown) :
    chain = get_basic_qa_chain()

    if (chainType == "with_sources" ):
        chain = get_qa_with_sources_chain()
    elif (chainType == "custom_prompt"):
        chain = get_custom_prompt_qa_chain()
    elif (chainType == "condense_prompt"):
        chain = get_condense_prompt_qa_chain()
    elif (chainType == "retrieval_sources_chain"):
        chain = get_retrievalqa_with_sources_chain()

    return chain

class Logger:
    def __init__(self, filename):
        self.terminal = sys.stdout
        self.log = open(filename, "w")

    def write(self, message):
        self.terminal.write(message)
        self.log.write(message)

    def flush(self):
        self.terminal.flush()
        self.log.flush()

    def isatty(self):
        return False

sys.stdout = Logger(LOG_FILE)

def read_logs():
    sys.stdout.flush()
    with open(LOG_FILE, "r") as f:
        return f.read()

def upload_file(files):
    file_paths = [file.name for file in files]
    for f in file_paths:
        print("moving file :" + f)
        shutil.copy(f, DATA_DIRECTORY)
    return file_paths

def ingest():
    ingestData()

class ChatWrapper:

    def __init__(self):
        self.lock = Lock()

    def __call__(
        self, api_key: str, inp: str, history: Optional[Tuple[str, str]], chain, chainType
    ):
        """Execute the chat functionality."""
        self.lock.acquire()
        try:
            history = history or []
            # If chain is None, that is because no API key was provided.
            if chain is None:
                '''os.environ["OPENAI_API_KEY"] = api_key
                chain=get_basic_qa_chain()'''
                history.append((inp, "Please paste your OpenAI key to use"))
                return history, history
            # Set OpenAI key
            import openai

            openai.api_key = api_key
            print("calling chain of type " + str(type(chain)))
            # Run chain and append input.
            results = chain({"question": inp})
            #metadata=metadata_field_info,
            #include_run_info=True)
            print("result keys :")
            print(*results, sep=" " )

            output = results["answer"]

            if (chainType == "with_sources") :
                print("document source count :"+str(len(results["source_documents"])))
                for s in results["source_documents"]:
                    for key in s.metadata:
                        output = output + "<br>" + key + ":"+ s.metadata[key] + "<br>"

            elif (chainType == "retrieval_sources_chain"):
                print("results")
                #output = output + "<br>" + "SOURCE:" + results["sources"]
            history.append((inp, output))
        except Exception as e:
            raise e
        finally:
            self.lock.release()
        return history, history

chat = ChatWrapper()

block = gr.Blocks(gr.themes.Soft(),
                  analytics_enabled=True)

with block :
    with gr.Row():
        #api_key=OPENAI_API_KEY
        gr.Markdown(
            "<h3><center>Chat-Your-Data</center></h3>")

        openai_api_key_textbox = gr.Textbox(
            #value=api_key,
            placeholder="Paste your OpenAI API key (sk-...)",
            show_label=False,
            lines=1,
            type="password",
        )
        #set_openai_api_key(api_key)
    chatbot = gr.Chatbot()


    with gr.Row():
        message = gr.Textbox(
            value="ask me something about your data",
            label="What's your question?",
            placeholder="Ask questions about the most recent state of the union",
            lines=1,
        )
        submit = gr.Button(value="Send", variant="secondary").style(
            scale=1)

    gr.Examples(
        examples=[
            "Who is Tanmay Chopra?",
            "Which persons know about the topics LLM?",
            "What did Navid say about LLM?",
        ],
        inputs=message,
    )

    with gr.Row():
        chainType = gr.Dropdown(list(chain_options.keys()),
                                label="Chain Type", value="basic"

                                )

    with gr.Accordion(label="show_logs"):
        logs = gr.Textbox(label="Console")
        block.load(read_logs, None, logs, every=1)

    file_output = gr.File()
    upload_button = gr.UploadButton("Click to Upload a File", file_types=[".docx", ".pdf",".txt",".json"], file_count="multiple")
    files = upload_button.upload(upload_file, upload_button, file_output)
    # gr.Gallery(files)
    btn = gr.Button(value="Ingest")
    btn.click(ingest)
    gr.HTML("Demo application of a LangChain chain.")

    gr.HTML(
        "<center>Powered by <a href='https://github.com/hwchase17/langchain'>LangChain 🦜️🔗</a></center>"
    )

    state = gr.State()
    agent_state = gr.State()

    submit.click(chat, inputs=[openai_api_key_textbox,message,
                 state, agent_state, chainType], outputs=[chatbot, state])
    message.submit(chat, inputs=[
        openai_api_key_textbox, message, state, agent_state, chainType], outputs=[chatbot, state])

    openai_api_key_textbox.change(
        set_openai_api_key,
        inputs=[openai_api_key_textbox],
        outputs=[agent_state],
    )

    chainType.change(
        getChainSelectedByUser,
        inputs=[chainType],
        outputs=[agent_state],
    )

block.queue().launch(debug=True)
```

assets/logo/logo.jpg

ADDED


blogpost.md

ADDED

@@ -0,0 +1,330 @@

**_Note: See the accompanying GitHub repo for this blogpost [here](https://github.com/hwchase17/chat-your-data)._**
**Note: Last updated by [Bill Chambers](http://billchambers.me/). August, 2023.**

ChatGPT has taken the world by storm. But while it’s great for general-purpose knowledge, it only knows what it was trained on: generally available internet data from before 2021. It doesn’t know about your private data, nor does it know about recent sources of data.

Wouldn’t it be useful if it did?

This blog post is a tutorial on how to set up your own version of ChatGPT over a specific corpus of data. There is an [accompanying GitHub repo](https://github.com/hwchase17/chat-your-data) that has the relevant code referenced in this post. Specifically, this post deals with text data. For putting a natural language layer over other kinds of data sources, see the tutorials below:

* [SQL Database](https://python.langchain.com/docs/modules/chains/popular/sqlite)
* [APIs](https://python.langchain.com/docs/modules/chains/popular/api)

## High Level Overview

At a high level, there are two components to setting up ChatGPT over your own data: (1) ingestion of the data and (2) a chatbot over the data. Let's walk through the steps involved in each.

### Ingestion of data

Ingestion involves four steps:

1. **Load data sources to text**: this involves loading your data from arbitrary sources into text in a form that can be used downstream. This is one place where we hope the community will help out!
2. **Chunk text**: this involves splitting the loaded text into smaller chunks. This is necessary because language models can only handle a limited amount of text (tokens) at once. Chunk size is something to tune over time.
3. **Embed text**: this involves creating a numerical embedding for each chunk of text. This is necessary because we only want to select the chunks most relevant to a given question, and we find them by looking for the most similar chunks in the embedding space.
4. **Load embeddings to vectorstore**: this involves putting embeddings and documents into a vectorstore. Vectorstores help us find the most similar chunks in the embedding space quickly and efficiently.

LangChain strives to be modular, so each of these steps is straightforward to swap out for other components or approaches.

### Querying of Data

Querying can also be broken down into a few high-level steps:

1. **Get input from the user**: we'll use a web interface and a CLI to receive questions from the user about the documents.
2. **Combine that input with chat history**: we'll combine the chat history and the new question into a single standalone question. This is often necessary because we want users to be able to ask follow-up questions (an important UX consideration).
3. **Look up relevant documents**: using the vectorstore created during ingestion, we look up the documents relevant to the answer.
4. **Generate a response**: Given the standalone question and the relevant documents, we will use a language model to generate a response.
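
The four querying steps can be sketched end to end in plain Python. This is a minimal sketch, not LangChain's implementation: `answer`, `llm` and `retriever` are hypothetical names. The two callables stand in for a chat model and a vectorstore retriever, and the prompt wording is illustrative rather than the templates LangChain ships.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical stand-ins: a chat model (prompt -> text) and a retriever
# (query -> list of relevant chunk texts).
LLM = Callable[[str], str]
Retriever = Callable[[str], List[str]]

CONDENSE_TEMPLATE = (
    "Given the following conversation and a follow up question, "
    "rephrase the follow up question to be a standalone question.\n\n"
    "Chat History:\n{history}\nFollow Up Input: {question}\nStandalone question:"
)
QA_TEMPLATE = (
    "Use the following pieces of context to answer the question.\n\n"
    "{context}\n\nQuestion: {question}\nAnswer:"
)


def answer(question: str, history: Sequence[Tuple[str, str]],
           retriever: Retriever, llm: LLM) -> str:
    # Step 2: fold the chat history and the new question into one standalone question.
    if history:
        rendered = "\n".join(f"Human: {q}\nAssistant: {a}" for q, a in history)
        standalone = llm(CONDENSE_TEMPLATE.format(history=rendered, question=question))
    else:
        standalone = question  # first turn: nothing to condense
    # Step 3: look up the chunks relevant to the standalone question.
    context = "\n\n".join(retriever(standalone))
    # Step 4: generate the response from the question plus the retrieved context.
    return llm(QA_TEMPLATE.format(context=context, question=standalone))
```

Step 1 corresponds to the `question` argument. On the first turn there is no history to condense, so the question goes straight to retrieval. Later turns spend one extra model call to rewrite the follow-up as a standalone question.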

In this post, we'll explore some of the design decisions you face around history, prompts, and the chat experience. We won't cover deployment here; for that, see [our deployment guide](https://python.langchain.com/docs/guides/deployments/).

## Step by Step Details

This section dives into more detail on the steps necessary to ingest data.

### Load data

First, we need to load data into a standard format. In LangChain, a [`Document`](https://docs.langchain.com/docs/components/schema/document) consists of (1) the text itself and (2) any metadata associated with that text (where it came from, etc.). This metadata is often critical for understanding and communicating context, whether for testing or for the end user.

The community has contributed dozens of document loaders, and we look forward to seeing more join. [See our documentation (and over 120 data loaders) for more information about document loaders](https://python.langchain.com/docs/integrations/document_loaders/). Please open a pull request or file an issue if you'd like to contribute (or request) a new document loader.

The code below loads the relevant documents.

```py
from langchain.document_loaders import UnstructuredFileLoader

print("Loading data...")
loader = UnstructuredFileLoader("state_of_the_union.txt")
raw_documents = loader.load()
```
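
To make the `Document` idea concrete, here is a minimal sketch of what a loader produces: the text, plus a `source` entry in the metadata. The `Document` dataclass and the `load_text_files` helper are hypothetical stand-ins, not LangChain's classes, though LangChain's `Document` likewise exposes `page_content` and `metadata`. For self-containment, the sketch reads only `.txt` files, whereas this repo's `data/` folder holds `.docx` files.

```python
from dataclasses import dataclass, field
from pathlib import Path
from typing import Dict, List


@dataclass
class Document:
    """Toy stand-in for a LangChain Document: text plus metadata."""
    page_content: str
    metadata: Dict[str, str] = field(default_factory=dict)


def load_text_files(directory: str) -> List[Document]:
    """Load every .txt file directly under `directory` into a Document.

    The file path is kept as `source` metadata so that an answer can later
    cite where a chunk came from.
    """
    docs = []
    for path in sorted(Path(directory).glob("*.txt")):  # sorted for stable order
        docs.append(Document(page_content=path.read_text(encoding="utf-8"),
                             metadata={"source": str(path)}))
    return docs
```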

### Split Text

Splitting documents into smaller units of text for input into the model is critical for getting relevant information back from our chatbot. When chunks are too big, you'll pass irrelevant information to the model. Conversely, when they're too small, you won't include enough information, and the model may be confused about what is actually relevant.

Choosing a chunk size isn't an exact science, so you'll have to experiment to find settings that give good results.

```py
from langchain.text_splitter import CharacterTextSplitter

print("Splitting text...")
text_splitter = CharacterTextSplitter(
    separator="\n\n",
    chunk_size=600,
    chunk_overlap=100,
    length_function=len,
)
documents = text_splitter.split_documents(raw_documents)
```
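
The sketch below shows how `chunk_size` and `chunk_overlap` interact. It is deliberately simplified: it cuts fixed-size character windows. LangChain's `CharacterTextSplitter` instead splits on `separator` first and then merges the pieces up to `chunk_size`, so its exact boundaries differ. `split_text` is a hypothetical helper.

```python
from typing import List


def split_text(text: str, chunk_size: int, chunk_overlap: int) -> List[str]:
    """Split `text` into windows of at most `chunk_size` characters.

    Consecutive windows share `chunk_overlap` characters, so a sentence cut at
    one boundary still appears whole in a neighbouring chunk.
    """
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("need 0 <= chunk_overlap < chunk_size")
    step = chunk_size - chunk_overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks
```

With the post's settings (`chunk_size=600`, `chunk_overlap=100`), each new window in this sketch would start 500 characters after the previous one.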

### Create embeddings and store in vectorstore

Now that we have small chunks of text, we need to create an embedding for each chunk and store the embeddings in a vectorstore. Embeddings let us efficiently query the store for the chunks most relevant to a question.

Here we use OpenAI’s embeddings and a [FAISS vectorstore](https://faiss.ai/index.html), and save the vectorstore as a Python pickle file for later use.

```py
import pickle

from langchain.embeddings import OpenAIEmbeddings
from langchain.vectorstores import FAISS

print("Creating vectorstore...")
embeddings = OpenAIEmbeddings()
vectorstore = FAISS.from_documents(documents, embeddings)
with open("vectorstore.pkl", "wb") as f:
    pickle.dump(vectorstore, f)
```
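
Conceptually, the vectorstore answers one question: which stored chunks are closest to this query embedding? Below is a brute-force sketch of that lookup. It uses cosine similarity as the closeness measure; the metric FAISS uses depends on the index type, and FAISS also offers approximate indexes that are much faster at scale. `BruteForceStore` is a hypothetical name, not a LangChain or FAISS class.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class BruteForceStore:
    """Toy vectorstore: exact search that scores every stored chunk."""

    def __init__(self) -> None:
        self.items: List[Tuple[Vector, str]] = []

    def add(self, vector: Vector, text: str) -> None:
        self.items.append((vector, text))

    def search(self, query: Vector, k: int = 2) -> List[str]:
        # Rank all chunks by similarity to the query, highest first.
        ranked = sorted(self.items, key=lambda item: cosine(query, item[0]),
                        reverse=True)
        return [text for _, text in ranked[:k]]
```

Suppose you store `[1.0, 0.0]` as "about cats", `[0.0, 1.0]` as "about taxes" and `[0.9, 0.1]` as "about kittens". Searching with `[1.0, 0.0]` and `k=2` then returns the cats and kittens chunks. These 2-D vectors are made up; real OpenAI embeddings have far more dimensions.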

|

| 93 |

+

|

| 94 |

+

Run `python ingest_data.py` to create the vectorstore. This is necessary after changing how you split the text or loading new documents. If you're making changes, adding documents, or splitting text different, you'll have to re-run things.

|

| 95 |

+

|

| 96 |

+

## Query data

|

| 97 |

+

|

| 98 |

+

So now that we’ve ingested the data, we can now use it in a chatbot interface. In order to do this, we will use the [ConversationalRetrievalChain](https://python.langchain.com/docs/use_cases/question_answering/how_to/chat_vector_db).

|

| 99 |

+

|

| 100 |

+

|

| 101 |

+

|

| 102 |

+

There are several different options when it comes to querying the data. Do you allow follow up questions? Want to include other user context? There are lots of design decisions and below we'll discuss some of the most critical.

|

| 103 |

+

|

| 104 |

+

### Do you want to have conversation history?

|

| 105 |

+

|

| 106 |

+

This is table stakes from a UX perspective because it allows for follow up questions. Adding memory is simple, you can either use a built in module.

|

| 107 |

+

|

| 108 |

+

```py
from langchain.chains import ConversationalRetrievalChain
from langchain.chat_models import ChatOpenAI
from langchain.memory import ConversationBufferMemory

llm = ChatOpenAI(model_name="gpt-4", temperature=0)
retriever = load_retriever()
memory = ConversationBufferMemory(
    memory_key="chat_history", return_messages=True)
# model = RetrievalQA.from_llm(llm=llm, retriever=retriever)
# if you don't want memory use the above, you will have to change
# the app.py or cli_app.py file to include `query` in the input instead of `question`
model = ConversationalRetrievalChain.from_llm(
    llm=llm,
    retriever=retriever,
    memory=memory)
```
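
Conceptually, `ConversationBufferMemory` keeps every turn verbatim and hands it back to the chain under `memory_key`; with `return_messages=True` it returns a list of chat messages instead of one flattened string. The class below is a toy stand-in written for illustration only. The real class exposes `save_context` and `load_memory_variables` with dict-based signatures, which this sketch simplifies into the hypothetical `add_turn` and `load` methods; the `Human`/`AI` speaker labels are also an assumption.

```py
from typing import Dict, List, Tuple, Union


class ToyBufferMemory:
    """Toy stand-in for ConversationBufferMemory: keeps every turn verbatim."""

    def __init__(self, memory_key: str = "chat_history", return_messages: bool = False):
        self.memory_key = memory_key
        self.return_messages = return_messages
        self.messages: List[Tuple[str, str]] = []  # (speaker, text) pairs

    def add_turn(self, question: str, answer: str) -> None:
        # Record both sides of the exchange; nothing is summarized or dropped.
        self.messages.append(("Human", question))
        self.messages.append(("AI", answer))

    def load(self) -> Dict[str, Union[str, List[Tuple[str, str]]]]:
        if self.return_messages:
            # Chat models get a list of speaker-tagged messages.
            return {self.memory_key: list(self.messages)}
        # Otherwise the history is flattened into a single transcript string.
        return {self.memory_key: "\n".join(f"{who}: {text}" for who, text in self.messages)}
```

Because nothing is ever dropped, the stored history grows with every turn of the conversation.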

Alternatively, you can track the conversation history yourself and pass it into the model on each call. Run this example from the GitHub repo with the following command, then read the code in `query_data.py`.

```sh
python cli_app.py

Which QA model would you like to work with? [basic/with_sources/custom_prompt/condense_prompt] (basic):
Chat with your docs!
---------------
Your Question: (what did the president say about ketanji brown?):
Answer: The President nominated Ketanji Brown Jackson to serve on the United States Supreme Court, describing her as one of the nation's top legal minds who will continue Justice Breyer's legacy of excellence. He also mentioned that she
is a former top litigator in private practice, a former federal public defender, and comes from a family of public school educators and police officers. He referred to her as a consensus builder and noted that since her nomination, she
has received a broad range of support from various groups, including the Fraternal Order of Police and former judges appointed by both Democrats and Republicans.
---------------
```

### Do you want to customize the QA prompt?

You can customize the QA prompt by passing in a prompt of your choice, much as you would with most other chains in LangChain. [Learn more about custom prompts here.](https://python.langchain.com/docs/use_cases/question_answering/how_to/vector_db_qa#return-source-documents)

```py
from langchain.chains import ConversationalRetrievalChain
from langchain.chat_models import ChatOpenAI
from langchain.memory import ConversationBufferMemory
from langchain.prompts import PromptTemplate

template = """You are an AI assistant for answering questions about the most recent state of the union address.
You are given the following extracted parts of a long document and a question. Provide a conversational answer.
If you don't know the answer, just say "Hmm, I'm not sure." Don't try to make up an answer.
If the question is not about the most recent state of the union, politely inform them that you are tuned to only answer questions about the most recent state of the union.
Lastly, answer the question as if you were a pirate from the south seas and are just coming back from a pirate expedition where you found a treasure chest full of gold doubloons.
Question: {question}
=========
{context}
=========
Answer in Markdown:"""

QA_PROMPT = PromptTemplate(template=template, input_variables=[
    "question", "context"])
llm = ChatOpenAI(model_name="gpt-4", temperature=0)
retriever = load_retriever()
memory = ConversationBufferMemory(
    memory_key="chat_history", return_messages=True)
model = ConversationalRetrievalChain.from_llm(
    llm=llm,
    retriever=retriever,
    memory=memory,
    combine_docs_chain_kwargs={"prompt": QA_PROMPT})
```
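
Filling in the template is plain string substitution: the retrieved chunks are joined into `{context}` and the user's question goes into `{question}`. Here is a rough stdlib sketch of the prompt the model ends up seeing, assuming the default "stuff" strategy (all retrieved chunks concatenated into one prompt) and a shortened, hypothetical version of the template above. `PromptTemplate` uses the same `str.format`-style placeholders by default; `build_qa_prompt` is an illustrative helper, not a LangChain function.

```py
QA_TEMPLATE = """Answer the question using the extracted parts of a document below.
If you don't know the answer, just say "Hmm, I'm not sure."
Question: {question}
=========
{context}
=========
Answer in Markdown:"""


def build_qa_prompt(question: str, docs: list) -> str:
    # "Stuff" strategy: every retrieved chunk is concatenated into {context}.
    context = "\n\n".join(docs)
    return QA_TEMPLATE.format(question=question, context=context)
```

Printing this string is a cheap way to debug a custom prompt before spending tokens on it. Note that a literal `{` or `}` in your template must be doubled as `{{` or `}}`.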

Run this example from the GitHub repo with the following command, then read the code in `query_data.py`.

```sh
python cli_app.py
Which QA model would you like to work with? [basic/with_sources/custom_prompt/condense_prompt] (basic): custom_prompt
Chat with your docs!
---------------
Your Question: (what did the president say about ketanji brown?):
Answer: Arr matey, the cap'n, I mean the President, he did speak of Ketanji Brown Jackson, he did. He nominated her to the United States Supreme Court, he did, just 4 days before his address. He spoke highly of her, he did, callin' her
one of the nation's top legal minds. He believes she'll continue Justice Breyer’s legacy of excellence, he does.

She's been a top litigator in private practice, a federal public defender, and comes from a family of public school educators and police officers. She's a consensus builder, she is. Since her nomination, she's received support from all
over, from the Fraternal Order of Police to former judges appointed by both Democrats and Republicans. So, that's what the President had to say about Ketanji Brown Jackson, it is.
---------------
Your Question: (what did the president say about ketanji brown?): who did she succeed?
Answer: Arr matey, ye be askin' about who Judge Ketanji Brown Jackson be succeedin'. From the words of the President himself, she be takin' over from Justice Breyer, continuin' his legacy of excellence on the United States Supreme
Court. Now, let's get back to countin' me gold doubloons, aye?
---------------
```

### Do you expect long conversations?

If so, you'll want to condense the chat history and the new question into a single standalone question before looking up documents. If you embed the whole chat history along with the new question to look up relevant documents, you may pull in documents that are no longer relevant to the conversation (for example, when the new question is unrelated to what came before). Therefore, this step of condensing the chat history and the new question into a standalone question is very important.

```py
from langchain.chains import ConversationalRetrievalChain
from langchain.chat_models import ChatOpenAI
from langchain.memory import ConversationBufferMemory
from langchain.prompts import PromptTemplate

_template = """Given the following conversation and a follow up question, rephrase the follow up question to be a standalone question.
You can assume the question is about the most recent state of the union address.

Chat History:
{chat_history}
Follow Up Input: {question}
Standalone question:"""
CONDENSE_QUESTION_PROMPT = PromptTemplate.from_template(_template)

llm = ChatOpenAI(model_name="gpt-4", temperature=0)
retriever = load_retriever()
memory = ConversationBufferMemory(
    memory_key="chat_history", return_messages=True)
# see: https://github.com/langchain-ai/langchain/issues/5890
model = ConversationalRetrievalChain.from_llm(
    llm=llm,
    retriever=retriever,
    memory=memory,
    condense_question_prompt=CONDENSE_QUESTION_PROMPT,
    combine_docs_chain_kwargs={"prompt": QA_PROMPT})  # includes the custom QA_PROMPT from the previous example as well
```

Read the code in `query_data.py` for example code you can apply to your own projects.
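
The retrieval failure described above is easy to reproduce with a toy. In the sketch below, a crude word-overlap score stands in for embedding similarity (an assumption; real embeddings differ in detail), and the standalone question is hard-coded where the condensing LLM call would go. Searching with the whole history plus the follow-up still returns the Ketanji Brown Jackson passage, while the standalone question finds the border passage. `tokens`, `top_doc`, and the two documents are illustrative only.

```py
import re
from typing import List, Set

DOCS = [
    "Judge Ketanji Brown Jackson was nominated to the Supreme Court to succeed Justice Breyer.",
    "At the border, we installed cutting-edge scanners to detect drug smuggling.",
]


def tokens(text: str) -> Set[str]:
    # Lowercased word set: a crude stand-in for an embedding.
    return set(re.findall(r"[a-z]+", text.lower()))


def top_doc(query: str, docs: List[str]) -> str:
    # Toy retriever: return the document sharing the most words with the query.
    return max(docs, key=lambda d: len(tokens(d) & tokens(query)))


history = ("Human: What did the president say about Ketanji Brown Jackson?\n"
           "Assistant: He nominated Judge Ketanji Brown Jackson to the Supreme Court "
           "to succeed Justice Breyer.")
follow_up = "What did he say about the border?"
# What a condensing LLM call might return (hard-coded for the sketch).
standalone = "What did the president say about the border?"

stale = top_doc(history + "\n" + follow_up, DOCS)  # old topic dominates the match
fresh = top_doc(standalone, DOCS)                   # matches the border passage
```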

### Do you want the model to cite sources?

[LangChain can return the source documents the model used.](https://python.langchain.com/docs/use_cases/question_answering/how_to/vector_db_qa#return-source-documents) There's a lot you can do here: you can add your own metadata, your own sections, and other relevant information so that the most relevant metadata is returned for your query.

```py
from langchain.chains import ConversationalRetrievalChain
from langchain.chat_models import ChatOpenAI

llm = ChatOpenAI(model_name="gpt-4", temperature=0)
retriever = load_retriever()
history = []
model = ConversationalRetrievalChain.from_llm(
    llm=llm,
    retriever=retriever,
    return_source_documents=True)

def model_func(question):
    # bug: this doesn't work with the built-in memory
    # see: https://github.com/langchain-ai/langchain/issues/5630
    new_input = {"question": question['question'], "chat_history": history}
    result = model(new_input)
    history.append((question['question'], result['answer']))
    return result

model_func({"question": "some question you have"})
# this is the same interface as all the other models.
```
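
With `return_source_documents=True`, the result dictionary carries a `source_documents` list next to `answer`, and each document has `page_content` plus a `metadata` dict; the `source` entry is what shows up as `state_of_the_union.txt` in the CLI run. The sketch below renders such a result in a similar layout. `Doc` is a minimal stand-in for LangChain's `Document`, and `format_answer_with_sources` is a hypothetical helper, not code from `cli_app.py`.

```py
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Doc:
    """Minimal stand-in for a LangChain Document: text plus a metadata dict."""
    page_content: str
    metadata: Dict[str, str] = field(default_factory=dict)


def format_answer_with_sources(result: Dict) -> str:
    """Render an answer followed by the source of each retrieved chunk."""
    lines = [f"Answer: {result['answer']}", "Sources:"]
    for doc in result["source_documents"]:
        # Fall back to a placeholder when a chunk carries no "source" metadata.
        lines.append(doc.metadata.get("source", "<unknown source>"))
        lines.append(doc.page_content)
    return "\n".join(lines)
```

Because the citation comes straight from `metadata`, anything you attach at ingestion time (a section title, a page number, a URL) can be surfaced the same way.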

Run this example from the GitHub repo with the following command, then read the code in `query_data.py`.

```sh
python cli_app.py
Which QA model would you like to work with? [basic/with_sources/custom_prompt/condense_prompt] (basic): with_sources
Chat with your docs!
---------------
Your Question: (what did the president say about ketanji brown?):
Answer: The President nominated Ketanji Brown Jackson to serve on the United States Supreme Court, describing her as one of the nation's top legal minds who will continue Justice Breyer's legacy of excellence. He also mentioned that she
is a former top litigator in private practice, a former federal public defender, and comes from a family of public school educators and police officers. Since her nomination, she has received a broad range of support, including from the
Fraternal Order of Police and former judges appointed by both Democrats and Republicans.
Sources:
state_of_the_union.txt
One of the most serious constitutional responsibilities a President has is nominating someone to serve on the United States Supreme Court.

And I did that 4 days ago, when I nominated Circuit Court of Appeals Judge Ketanji Brown Jackson. One of our nation’s top legal minds, who will continue Justice Breyer’s legacy of excellence.
state_of_the_union.txt
As I said last year, especially to our younger transgender Americans, I will always have your back as your President, so you can be yourself and reach your God-given potential.

While it often appears that we never agree, that isn’t true. I signed 80 bipartisan bills into law last year. From preventing government shutdowns to protecting Asian-Americans from still-too-common hate crimes to reforming military
justice.
state_of_the_union.txt
But in my administration, the watchdogs have been welcomed back.

We’re going after the criminals who stole billions in relief money meant for small businesses and millions of Americans.

And tonight, I’m announcing that the Justice Department will name a chief prosecutor for pandemic fraud.

By the end of this year, the deficit will be down to less than half what it was before I took office.

The only president ever to cut the deficit by more than one trillion dollars in a single year.

Lowering your costs also means demanding more competition.
state_of_the_union.txt
A former top litigator in private practice. A former federal public defender. And from a family of public school educators and police officers. A consensus builder. Since she’s been nominated, she’s received a broad range of
support—from the Fraternal Order of Police to former judges appointed by Democrats and Republicans.

And if we are to advance liberty and justice, we need to secure the Border and fix the immigration system.

We can do both. At our border, we’ve installed new technology like cutting-edge scanners to better detect drug smuggling.
---------------
Your Question: (what did the president say about ketanji brown?): where did she work before?
Answer: Before her nomination to the United States Supreme Court, Ketanji Brown Jackson worked as a Circuit Court of Appeals Judge. She was also a former top litigator in private practice and a former federal public defender.
Sources:
state_of_the_union.txt
One of the most serious constitutional responsibilities a President has is nominating someone to serve on the United States Supreme Court.

And I did that 4 days ago, when I nominated Circuit Court of Appeals Judge Ketanji Brown Jackson. One of our nation’s top legal minds, who will continue Justice Breyer’s legacy of excellence.
state_of_the_union.txt
A former top litigator in private practice. A former federal public defender. And from a family of public school educators and police officers. A consensus builder. Since she’s been nominated, she’s received a broad range of
support—from the Fraternal Order of Police to former judges appointed by Democrats and Republicans.

And if we are to advance liberty and justice, we need to secure the Border and fix the immigration system.

We can do both. At our border, we’ve installed new technology like cutting-edge scanners to better detect drug smuggling.
state_of_the_union.txt
We cannot let this happen.

Tonight. I call on the Senate to: Pass the Freedom to Vote Act. Pass the John Lewis Voting Rights Act. And while you’re at it, pass the Disclose Act so Americans can know who is funding our elections.

Tonight, I’d like to honor someone who has dedicated his life to serve this country: Justice Stephen Breyer—an Army veteran, Constitutional scholar, and retiring Justice of the United States Supreme Court. Justice Breyer, thank you for
your service.
state_of_the_union.txt
Vice President Harris and I ran for office with a new economic vision for America.

Invest in America. Educate Americans. Grow the workforce. Build the economy from the bottom up and the middle out, not from the top down.

Because we know that when the middle class grows, the poor have a ladder up and the wealthy do very well.

America used to have the best roads, bridges, and airports on Earth.

Now our infrastructure is ranked 13th in the world.

We won’t be able to compete for the jobs of the 21st Century if we don’t fix that.
---------------
```

### Language Model

The final lever to pull is which language model powers your chatbot. In our example we use OpenAI's GPT-4 through `ChatOpenAI`, but you can easily substitute any other language model that LangChain supports, or even write your own wrapper.
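
Swapping models works because the rest of the pipeline only relies on a narrow "prompt in, text out" interface. The stdlib sketch below illustrates that idea with a hypothetical `TextModel` protocol and two hypothetical implementations. It is not LangChain's interface: in LangChain, a custom wrapper is written by subclassing its `LLM` base class and implementing `_call`.

```py
from typing import Protocol


class TextModel(Protocol):
    """Anything that maps a prompt string to a completion string."""

    def __call__(self, prompt: str) -> str: ...


class CannedModel:
    """Hypothetical offline model that always returns the same reply (handy in tests)."""

    def __init__(self, reply: str) -> None:
        self.reply = reply

    def __call__(self, prompt: str) -> str:
        return self.reply


class UppercaseWrapper:
    """Hypothetical wrapper that delegates to another model and post-processes its output."""

    def __init__(self, inner: TextModel) -> None:
        self.inner = inner

    def __call__(self, prompt: str) -> str:
        return self.inner(prompt).upper()


def answer(llm: TextModel, question: str) -> str:
    # Depends only on the TextModel interface, so any implementation can be swapped in.
    return llm(f"Question: {question}\nAnswer:")
```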

## Putting it all together

After making the necessary customizations and running `python ingest_data.py`, you can interact with the chatbot.

We've exposed a simple command-line interface for this. Run `python cli_app.py` to open a prompt in your terminal where you can ask questions and get answers. Try it out!
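
Under the hood, a terminal chat is just a loop: read a question, call the chain with `{"question": ...}`, print the answer, and repeat. Here is a minimal sketch of such a loop, assuming the chain is any callable that takes that dict and returns one with an `answer` key (as `model_func` does). `chat_loop` is a hypothetical helper, not the code in `cli_app.py`, which uses `rich` for its prompts.

```py
from typing import Callable, Iterable


def chat_loop(chain: Callable[[dict], dict],
              questions: Iterable[str],
              write: Callable[[str], None] = print) -> None:
    """Send each question to the chain and print its answer until 'exit' or 'quit'."""
    for question in questions:
        if question.strip().lower() in {"exit", "quit"}:
            break
        result = chain({"question": question})
        write(f"Answer: {result['answer']}")
        write("-" * 15)
```

Interactively, you could call `chat_loop(model_func, iter(lambda: input("Your Question: "), None))`; because `input` never returns `None`, the loop runs until you type `exit` (Ctrl-D raises `EOFError`).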

We also have an example of deploying this app with Gradio: run `python app.py`. It can also easily be deployed to Hugging Face Spaces; see the [example space here](https://huggingface.co/spaces/hwchase17/chat-your-data-state-of-the-union).

chromaclient.py

ADDED


@@ -0,0 +1,17 @@

import chromadb
import openai
from Constants import *
from langchain.embeddings import OpenAIEmbeddings
import os
from langchain.vectorstores import Chroma
openai.api_key = OPENAI_API_KEY
os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY
#chroma_client = chromadb.PersistentClient(path=CHROMA_PERSIST_DIRECTORY, embeddings=OpenAIEmbeddings())

vectorstore = Chroma(persist_directory=CHROMA_PERSIST_DIRECTORY,collection_name=CHROMA_COLLECTION_NAME,embedding_function=OpenAIEmbeddings())
print("Chroma collection count : " + str(vectorstore._collection.count()))
#collection = chroma_client.get_collection(name="myname")
#results = collection.query(query_texts=["Who is Tanmay"], n_results=10)
#print(collection.get(include=["embeddings", "documents", "metadatas"]))
#collection.get(include=["embeddings", "documents", "metadatas"])

chromadb/cd6d665c-a1d1-4b9b-b8a5-cfa0f731d4d0/length.bin

ADDED


@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:fcefd9550ec0a4b4cf0550e48b6589a991eaf7590c089fdaafa4298dab5c6f90
size 4000

cli_app.py

ADDED


@@ -0,0 +1,34 @@

import os
from query_data import chain_options
from rich.console import Console
from rich.prompt import Prompt
from Constants import *
from apiKey import *

os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY
if __name__ == "__main__":
    c = Console()
    model = Prompt.ask("Which QA model would you like to work with?",
                       choices=list(chain_options.keys()),
                       default="basic")
    chain = chain_options[model]()

    c.print("[bold]Chat with your docs!")

|

| 17 |

+