Add files using upload-large-folder tool

Browse files- .gitattributes +1 -0

- LICENSE +202 -0

- README.md +355 -0

- config.json +38 -0

- config_1m.json +0 -0

- generation_config.json +13 -0

- merges.txt +0 -0

- model-00003-of-00118.safetensors +3 -0

- model-00011-of-00118.safetensors +3 -0

- model-00015-of-00118.safetensors +3 -0

- model-00016-of-00118.safetensors +3 -0

- model-00017-of-00118.safetensors +3 -0

- model-00020-of-00118.safetensors +3 -0

- model-00021-of-00118.safetensors +3 -0

- model-00027-of-00118.safetensors +3 -0

- model-00030-of-00118.safetensors +3 -0

- model-00031-of-00118.safetensors +3 -0

- model-00032-of-00118.safetensors +3 -0

- model-00035-of-00118.safetensors +3 -0

- model-00036-of-00118.safetensors +3 -0

- model-00039-of-00118.safetensors +3 -0

- model-00042-of-00118.safetensors +3 -0

- model-00044-of-00118.safetensors +3 -0

- model-00045-of-00118.safetensors +3 -0

- model-00056-of-00118.safetensors +3 -0

- model-00059-of-00118.safetensors +3 -0

- model-00063-of-00118.safetensors +3 -0

- model-00064-of-00118.safetensors +3 -0

- model-00066-of-00118.safetensors +3 -0

- model-00072-of-00118.safetensors +3 -0

- model-00075-of-00118.safetensors +3 -0

- model-00088-of-00118.safetensors +3 -0

- model-00089-of-00118.safetensors +3 -0

- model-00090-of-00118.safetensors +3 -0

- model-00092-of-00118.safetensors +3 -0

- model-00094-of-00118.safetensors +3 -0

- model-00096-of-00118.safetensors +3 -0

- model-00100-of-00118.safetensors +3 -0

- model-00101-of-00118.safetensors +3 -0

- model-00103-of-00118.safetensors +3 -0

- model-00107-of-00118.safetensors +3 -0

- model-00109-of-00118.safetensors +3 -0

- model-00111-of-00118.safetensors +3 -0

- model-00113-of-00118.safetensors +3 -0

- model-00116-of-00118.safetensors +3 -0

- model-00117-of-00118.safetensors +3 -0

- model-00118-of-00118.safetensors +3 -0

- model.safetensors.index.json +0 -0

- tokenizer_config.json +239 -0

- vocab.json +0 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

LICENSE

ADDED

|

@@ -0,0 +1,202 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

Apache License

|

| 3 |

+

Version 2.0, January 2004

|

| 4 |

+

http://www.apache.org/licenses/

|

| 5 |

+

|

| 6 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 7 |

+

|

| 8 |

+

1. Definitions.

|

| 9 |

+

|

| 10 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 11 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 12 |

+

|

| 13 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 14 |

+

the copyright owner that is granting the License.

|

| 15 |

+

|

| 16 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 17 |

+

other entities that control, are controlled by, or are under common

|

| 18 |

+

control with that entity. For the purposes of this definition,

|

| 19 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 20 |

+

direction or management of such entity, whether by contract or

|

| 21 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 22 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 23 |

+

|

| 24 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 25 |

+

exercising permissions granted by this License.

|

| 26 |

+

|

| 27 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 28 |

+

including but not limited to software source code, documentation

|

| 29 |

+

source, and configuration files.

|

| 30 |

+

|

| 31 |

+

"Object" form shall mean any form resulting from mechanical

|

| 32 |

+

transformation or translation of a Source form, including but

|

| 33 |

+

not limited to compiled object code, generated documentation,

|

| 34 |

+

and conversions to other media types.

|

| 35 |

+

|

| 36 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 37 |

+

Object form, made available under the License, as indicated by a

|

| 38 |

+

copyright notice that is included in or attached to the work

|

| 39 |

+

(an example is provided in the Appendix below).

|

| 40 |

+

|

| 41 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 42 |

+

form, that is based on (or derived from) the Work and for which the

|

| 43 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 44 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 45 |

+

of this License, Derivative Works shall not include works that remain

|

| 46 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 47 |

+

the Work and Derivative Works thereof.

|

| 48 |

+

|

| 49 |

+

"Contribution" shall mean any work of authorship, including

|

| 50 |

+

the original version of the Work and any modifications or additions

|

| 51 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 52 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 53 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 54 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 55 |

+

means any form of electronic, verbal, or written communication sent

|

| 56 |

+

to the Licensor or its representatives, including but not limited to

|

| 57 |

+

communication on electronic mailing lists, source code control systems,

|

| 58 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 59 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 60 |

+

excluding communication that is conspicuously marked or otherwise

|

| 61 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 62 |

+

|

| 63 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 64 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 65 |

+

subsequently incorporated within the Work.

|

| 66 |

+

|

| 67 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 68 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 69 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 70 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 71 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 72 |

+

Work and such Derivative Works in Source or Object form.

|

| 73 |

+

|

| 74 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 75 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 76 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 77 |

+

(except as stated in this section) patent license to make, have made,

|

| 78 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 79 |

+

where such license applies only to those patent claims licensable

|

| 80 |

+

by such Contributor that are necessarily infringed by their

|

| 81 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 82 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 83 |

+

institute patent litigation against any entity (including a

|

| 84 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 85 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 86 |

+

or contributory patent infringement, then any patent licenses

|

| 87 |

+

granted to You under this License for that Work shall terminate

|

| 88 |

+

as of the date such litigation is filed.

|

| 89 |

+

|

| 90 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 91 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 92 |

+

modifications, and in Source or Object form, provided that You

|

| 93 |

+

meet the following conditions:

|

| 94 |

+

|

| 95 |

+

(a) You must give any other recipients of the Work or

|

| 96 |

+

Derivative Works a copy of this License; and

|

| 97 |

+

|

| 98 |

+

(b) You must cause any modified files to carry prominent notices

|

| 99 |

+

stating that You changed the files; and

|

| 100 |

+

|

| 101 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 102 |

+

that You distribute, all copyright, patent, trademark, and

|

| 103 |

+

attribution notices from the Source form of the Work,

|

| 104 |

+

excluding those notices that do not pertain to any part of

|

| 105 |

+

the Derivative Works; and

|

| 106 |

+

|

| 107 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 108 |

+

distribution, then any Derivative Works that You distribute must

|

| 109 |

+

include a readable copy of the attribution notices contained

|

| 110 |

+

within such NOTICE file, excluding those notices that do not

|

| 111 |

+

pertain to any part of the Derivative Works, in at least one

|

| 112 |

+

of the following places: within a NOTICE text file distributed

|

| 113 |

+

as part of the Derivative Works; within the Source form or

|

| 114 |

+

documentation, if provided along with the Derivative Works; or,

|

| 115 |

+

within a display generated by the Derivative Works, if and

|

| 116 |

+

wherever such third-party notices normally appear. The contents

|

| 117 |

+

of the NOTICE file are for informational purposes only and

|

| 118 |

+

do not modify the License. You may add Your own attribution

|

| 119 |

+

notices within Derivative Works that You distribute, alongside

|

| 120 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 121 |

+

that such additional attribution notices cannot be construed

|

| 122 |

+

as modifying the License.

|

| 123 |

+

|

| 124 |

+

You may add Your own copyright statement to Your modifications and

|

| 125 |

+

may provide additional or different license terms and conditions

|

| 126 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 127 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 128 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 129 |

+

the conditions stated in this License.

|

| 130 |

+

|

| 131 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 132 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 133 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 134 |

+

this License, without any additional terms or conditions.

|

| 135 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 136 |

+

the terms of any separate license agreement you may have executed

|

| 137 |

+

with Licensor regarding such Contributions.

|

| 138 |

+

|

| 139 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 140 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 141 |

+

except as required for reasonable and customary use in describing the

|

| 142 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 143 |

+

|

| 144 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 145 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 146 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 147 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 148 |

+

implied, including, without limitation, any warranties or conditions

|

| 149 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 150 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 151 |

+

appropriateness of using or redistributing the Work and assume any

|

| 152 |

+

risks associated with Your exercise of permissions under this License.

|

| 153 |

+

|

| 154 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 155 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 156 |

+

unless required by applicable law (such as deliberate and grossly

|

| 157 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 158 |

+

liable to You for damages, including any direct, indirect, special,

|

| 159 |

+

incidental, or consequential damages of any character arising as a

|

| 160 |

+

result of this License or out of the use or inability to use the

|

| 161 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 162 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 163 |

+

other commercial damages or losses), even if such Contributor

|

| 164 |

+

has been advised of the possibility of such damages.

|

| 165 |

+

|

| 166 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 167 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 168 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 169 |

+

or other liability obligations and/or rights consistent with this

|

| 170 |

+

License. However, in accepting such obligations, You may act only

|

| 171 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 172 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 173 |

+

defend, and hold each Contributor harmless for any liability

|

| 174 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 175 |

+

of your accepting any such warranty or additional liability.

|

| 176 |

+

|

| 177 |

+

END OF TERMS AND CONDITIONS

|

| 178 |

+

|

| 179 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 180 |

+

|

| 181 |

+

To apply the Apache License to your work, attach the following

|

| 182 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 183 |

+

replaced with your own identifying information. (Don't include

|

| 184 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 185 |

+

comment syntax for the file format. We also recommend that a

|

| 186 |

+

file or class name and description of purpose be included on the

|

| 187 |

+

same "printed page" as the copyright notice for easier

|

| 188 |

+

identification within third-party archives.

|

| 189 |

+

|

| 190 |

+

Copyright 2024 Alibaba Cloud

|

| 191 |

+

|

| 192 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 193 |

+

you may not use this file except in compliance with the License.

|

| 194 |

+

You may obtain a copy of the License at

|

| 195 |

+

|

| 196 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 197 |

+

|

| 198 |

+

Unless required by applicable law or agreed to in writing, software

|

| 199 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 200 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 201 |

+

See the License for the specific language governing permissions and

|

| 202 |

+

limitations under the License.

|

README.md

ADDED

|

@@ -0,0 +1,355 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

library_name: transformers

|

| 3 |

+

license: apache-2.0

|

| 4 |

+

license_link: https://huggingface.co/Qwen/Qwen3-235B-A22B-Instruct-2507/blob/main/LICENSE

|

| 5 |

+

pipeline_tag: text-generation

|

| 6 |

+

---

|

| 7 |

+

|

| 8 |

+

# Qwen3-235B-A22B-Instruct-2507

|

| 9 |

+

<a href="https://chat.qwen.ai/" target="_blank" style="margin: 2px;">

|

| 10 |

+

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

|

| 11 |

+

</a>

|

| 12 |

+

|

| 13 |

+

## Highlights

|

| 14 |

+

|

| 15 |

+

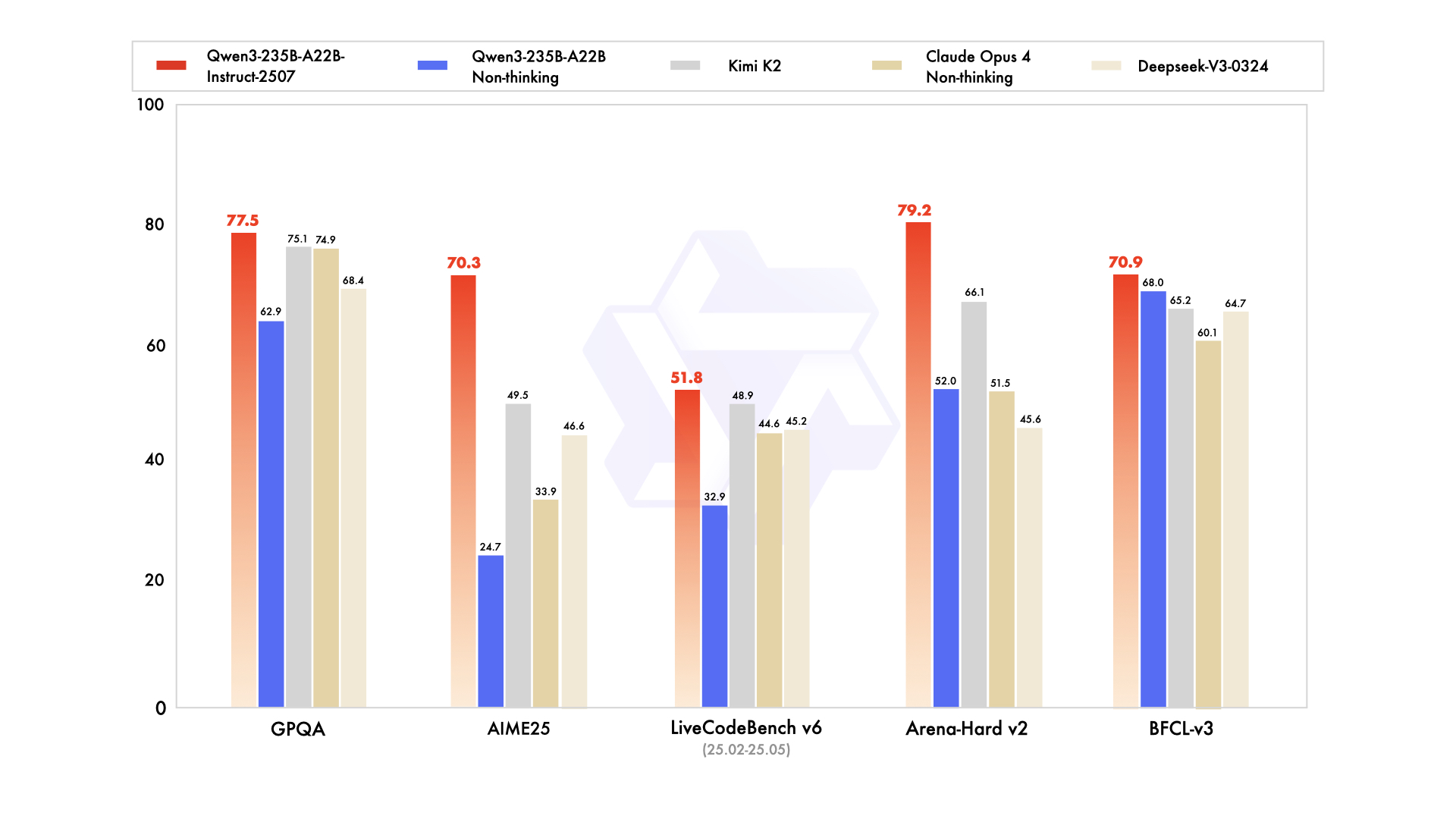

We introduce the updated version of the **Qwen3-235B-A22B non-thinking mode**, named **Qwen3-235B-A22B-Instruct-2507**, featuring the following key enhancements:

|

| 16 |

+

|

| 17 |

+

- **Significant improvements** in general capabilities, including **instruction following, logical reasoning, text comprehension, mathematics, science, coding and tool usage**.

|

| 18 |

+

- **Substantial gains** in long-tail knowledge coverage across **multiple languages**.

|

| 19 |

+

- **Markedly better alignment** with user preferences in **subjective and open-ended tasks**, enabling more helpful responses and higher-quality text generation.

|

| 20 |

+

- **Enhanced capabilities** in **256K long-context understanding**.

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

## Model Overview

|

| 26 |

+

|

| 27 |

+

**Qwen3-235B-A22B-Instruct-2507** has the following features:

|

| 28 |

+

- Type: Causal Language Models

|

| 29 |

+

- Training Stage: Pretraining & Post-training

|

| 30 |

+

- Number of Parameters: 235B in total and 22B activated

|

| 31 |

+

- Number of Paramaters (Non-Embedding): 234B

|

| 32 |

+

- Number of Layers: 94

|

| 33 |

+

- Number of Attention Heads (GQA): 64 for Q and 4 for KV

|

| 34 |

+

- Number of Experts: 128

|

| 35 |

+

- Number of Activated Experts: 8

|

| 36 |

+

- Context Length: **262,144 natively and extendable up to 1,010,000 tokens**

|

| 37 |

+

|

| 38 |

+

**NOTE: This model supports only non-thinking mode and does not generate ``<think></think>`` blocks in its output. Meanwhile, specifying `enable_thinking=False` is no longer required.**

|

| 39 |

+

|

| 40 |

+

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our [blog](https://qwenlm.github.io/blog/qwen3/), [GitHub](https://github.com/QwenLM/Qwen3), and [Documentation](https://qwen.readthedocs.io/en/latest/).

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

## Performance

|

| 44 |

+

|

| 45 |

+

| | Deepseek-V3-0324 | GPT-4o-0327 | Claude Opus 4 Non-thinking | Kimi K2 | Qwen3-235B-A22B Non-thinking | Qwen3-235B-A22B-Instruct-2507 |

|

| 46 |

+

|--- | --- | --- | --- | --- | --- | ---|

|

| 47 |

+

| **Knowledge** | | | | | | |

|

| 48 |

+

| MMLU-Pro | 81.2 | 79.8 | **86.6** | 81.1 | 75.2 | 83.0 |

|

| 49 |

+

| MMLU-Redux | 90.4 | 91.3 | **94.2** | 92.7 | 89.2 | 93.1 |

|

| 50 |

+

| GPQA | 68.4 | 66.9 | 74.9 | 75.1 | 62.9 | **77.5** |

|

| 51 |

+

| SuperGPQA | 57.3 | 51.0 | 56.5 | 57.2 | 48.2 | **62.6** |

|

| 52 |

+

| SimpleQA | 27.2 | 40.3 | 22.8 | 31.0 | 12.2 | **54.3** |

|

| 53 |

+

| CSimpleQA | 71.1 | 60.2 | 68.0 | 74.5 | 60.8 | **84.3** |

|

| 54 |

+

| **Reasoning** | | | | | | |

|

| 55 |

+

| AIME25 | 46.6 | 26.7 | 33.9 | 49.5 | 24.7 | **70.3** |

|

| 56 |

+

| HMMT25 | 27.5 | 7.9 | 15.9 | 38.8 | 10.0 | **55.4** |

|

| 57 |

+

| ARC-AGI | 9.0 | 8.8 | 30.3 | 13.3 | 4.3 | **41.8** |

|

| 58 |

+

| ZebraLogic | 83.4 | 52.6 | - | 89.0 | 37.7 | **95.0** |

|

| 59 |

+

| LiveBench 20241125 | 66.9 | 63.7 | 74.6 | **76.4** | 62.5 | 75.4 |

|

| 60 |

+

| **Coding** | | | | | | |

|

| 61 |

+

| LiveCodeBench v6 (25.02-25.05) | 45.2 | 35.8 | 44.6 | 48.9 | 32.9 | **51.8** |

|

| 62 |

+

| MultiPL-E | 82.2 | 82.7 | **88.5** | 85.7 | 79.3 | 87.9 |

|

| 63 |

+

| Aider-Polyglot | 55.1 | 45.3 | **70.7** | 59.0 | 59.6 | 57.3 |

|

| 64 |

+

| **Alignment** | | | | | | |

|

| 65 |

+

| IFEval | 82.3 | 83.9 | 87.4 | **89.8** | 83.2 | 88.7 |

|

| 66 |

+

| Arena-Hard v2* | 45.6 | 61.9 | 51.5 | 66.1 | 52.0 | **79.2** |

|

| 67 |

+

| Creative Writing v3 | 81.6 | 84.9 | 83.8 | **88.1** | 80.4 | 87.5 |

|

| 68 |

+

| WritingBench | 74.5 | 75.5 | 79.2 | **86.2** | 77.0 | 85.2 |

|

| 69 |

+

| **Agent** | | | | | | |

|

| 70 |

+

| BFCL-v3 | 64.7 | 66.5 | 60.1 | 65.2 | 68.0 | **70.9** |

|

| 71 |

+

| TAU1-Retail | 49.6 | 60.3# | **81.4** | 70.7 | 65.2 | 71.3 |

|

| 72 |

+

| TAU1-Airline | 32.0 | 42.8# | **59.6** | 53.5 | 32.0 | 44.0 |

|

| 73 |

+

| TAU2-Retail | 71.1 | 66.7# | **75.5** | 70.6 | 64.9 | 74.6 |

|

| 74 |

+

| TAU2-Airline | 36.0 | 42.0# | 55.5 | **56.5** | 36.0 | 50.0 |

|

| 75 |

+

| TAU2-Telecom | 34.0 | 29.8# | 45.2 | **65.8** | 24.6 | 32.5 |

|

| 76 |

+

| **Multilingualism** | | | | | | |

|

| 77 |

+

| MultiIF | 66.5 | 70.4 | - | 76.2 | 70.2 | **77.5** |

|

| 78 |

+

| MMLU-ProX | 75.8 | 76.2 | - | 74.5 | 73.2 | **79.4** |

|

| 79 |

+

| INCLUDE | 80.1 | **82.1** | - | 76.9 | 75.6 | 79.5 |

|

| 80 |

+

| PolyMATH | 32.2 | 25.5 | 30.0 | 44.8 | 27.0 | **50.2** |

|

| 81 |

+

|

| 82 |

+

*: For reproducibility, we report the win rates evaluated by GPT-4.1.

|

| 83 |

+

|

| 84 |

+

\#: Results were generated using GPT-4o-20241120, as access to the native function calling API of GPT-4o-0327 was unavailable.

|

| 85 |

+

|

| 86 |

+

|

| 87 |

+

## Quickstart

|

| 88 |

+

|

| 89 |

+

The code of Qwen3-MoE has been in the latest Hugging Face `transformers` and we advise you to use the latest version of `transformers`.

|

| 90 |

+

|

| 91 |

+

With `transformers<4.51.0`, you will encounter the following error:

|

| 92 |

+

```

|

| 93 |

+

KeyError: 'qwen3_moe'

|

| 94 |

+

```

|

| 95 |

+

|

| 96 |

+

The following contains a code snippet illustrating how to use the model generate content based on given inputs.

|

| 97 |

+

```python

|

| 98 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 99 |

+

|

| 100 |

+

model_name = "Qwen/Qwen3-235B-A22B-Instruct-2507"

|

| 101 |

+

|

| 102 |

+

# load the tokenizer and the model

|

| 103 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name)

|

| 104 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 105 |

+

model_name,

|

| 106 |

+

torch_dtype="auto",

|

| 107 |

+

device_map="auto"

|

| 108 |

+

)

|

| 109 |

+

|

| 110 |

+

# prepare the model input

|

| 111 |

+

prompt = "Give me a short introduction to large language model."

|

| 112 |

+

messages = [

|

| 113 |

+

{"role": "user", "content": prompt}

|

| 114 |

+

]

|

| 115 |

+

text = tokenizer.apply_chat_template(

|

| 116 |

+

messages,

|

| 117 |

+

tokenize=False,

|

| 118 |

+

add_generation_prompt=True,

|

| 119 |

+

)

|

| 120 |

+

model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

|

| 121 |

+

|

| 122 |

+

# conduct text completion

|

| 123 |

+

generated_ids = model.generate(

|

| 124 |

+

**model_inputs,

|

| 125 |

+

max_new_tokens=16384

|

| 126 |

+

)

|

| 127 |

+

output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

|

| 128 |

+

|

| 129 |

+

content = tokenizer.decode(output_ids, skip_special_tokens=True)

|

| 130 |

+

|

| 131 |

+

print("content:", content)

|

| 132 |

+

```

|

| 133 |

+

|

| 134 |

+

For deployment, you can use `sglang>=0.4.6.post1` or `vllm>=0.8.5` or to create an OpenAI-compatible API endpoint:

|

| 135 |

+

- SGLang:

|

| 136 |

+

```shell

|

| 137 |

+

python -m sglang.launch_server --model-path Qwen/Qwen3-235B-A22B-Instruct-2507 --tp 8 --context-length 262144

|

| 138 |

+

```

|

| 139 |

+

- vLLM:

|

| 140 |

+

```shell

|

| 141 |

+

vllm serve Qwen/Qwen3-235B-A22B-Instruct-2507 --tensor-parallel-size 8 --max-model-len 262144

|

| 142 |

+

```

|

| 143 |

+

|

| 144 |

+

**Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as `32,768`.**

|

| 145 |

+

|

| 146 |

+

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers have also supported Qwen3.

|

| 147 |

+

|

| 148 |

+

## Agentic Use

|

| 149 |

+

|

| 150 |

+

Qwen3 excels in tool calling capabilities. We recommend using [Qwen-Agent](https://github.com/QwenLM/Qwen-Agent) to make the best use of agentic ability of Qwen3. Qwen-Agent encapsulates tool-calling templates and tool-calling parsers internally, greatly reducing coding complexity.

|

| 151 |

+

|

| 152 |

+

To define the available tools, you can use the MCP configuration file, use the integrated tool of Qwen-Agent, or integrate other tools by yourself.

|

| 153 |

+

```python

|

| 154 |

+

from qwen_agent.agents import Assistant

|

| 155 |

+

|

| 156 |

+

# Define LLM

|

| 157 |

+

llm_cfg = {

|

| 158 |

+

'model': 'Qwen3-235B-A22B-Instruct-2507',

|

| 159 |

+

|

| 160 |

+

# Use a custom endpoint compatible with OpenAI API:

|

| 161 |

+

'model_server': 'http://localhost:8000/v1', # api_base

|

| 162 |

+

'api_key': 'EMPTY',

|

| 163 |

+

}

|

| 164 |

+

|

| 165 |

+

# Define Tools

|

| 166 |

+

tools = [

|

| 167 |

+

{'mcpServers': { # You can specify the MCP configuration file

|

| 168 |

+

'time': {

|

| 169 |

+

'command': 'uvx',

|

| 170 |

+

'args': ['mcp-server-time', '--local-timezone=Asia/Shanghai']

|

| 171 |

+

},

|

| 172 |

+

"fetch": {

|

| 173 |

+

"command": "uvx",

|

| 174 |

+

"args": ["mcp-server-fetch"]

|

| 175 |

+

}

|

| 176 |

+

}

|

| 177 |

+

},

|

| 178 |

+

'code_interpreter', # Built-in tools

|

| 179 |

+

]

|

| 180 |

+

|

| 181 |

+

# Define Agent

|

| 182 |

+

bot = Assistant(llm=llm_cfg, function_list=tools)

|

| 183 |

+

|

| 184 |

+

# Streaming generation

|

| 185 |

+

messages = [{'role': 'user', 'content': 'https://qwenlm.github.io/blog/ Introduce the latest developments of Qwen'}]

|

| 186 |

+

for responses in bot.run(messages=messages):

|

| 187 |

+

pass

|

| 188 |

+

print(responses)

|

| 189 |

+

```

|

| 190 |

+

|

| 191 |

+

## Processing Ultra-Long Texts

|

| 192 |

+

|

| 193 |

+

To support **ultra-long context processing** (up to **1 million tokens**), we integrate two key techniques:

|

| 194 |

+

|

| 195 |

+

- **[Dual Chunk Attention](https://arxiv.org/abs/2402.17463) (DCA)**: A length extrapolation method that splits long sequences into manageable chunks while preserving global coherence.

|

| 196 |

+

- **[MInference](https://arxiv.org/abs/2407.02490)**: A sparse attention mechanism that reduces computational overhead by focusing on critical token interactions.

|

| 197 |

+

|

| 198 |

+

Together, these innovations significantly improve both **generation quality** and **inference efficiency** for sequences beyond 256K tokens. On sequences approaching 1M tokens, the system achieves up to a **3× speedup** compared to standard attention implementations.

|

| 199 |

+

|

| 200 |

+

For full technical details, see the [Qwen2.5-1M Technical Report](https://arxiv.org/abs/2501.15383).

|

| 201 |

+

|

| 202 |

+

### How to Enable 1M Token Context

|

| 203 |

+

|

| 204 |

+

> [!NOTE]

|

| 205 |

+

> To effectively process a 1 million token context, users will require approximately **1000 GB** of total GPU memory. This accounts for model weights, KV-cache storage, and peak activation memory demands.

|

| 206 |

+

|

| 207 |

+

#### Step 1: Update Configuration File

|

| 208 |

+

|

| 209 |

+

Download the model and replace the content of your `config.json` with `config_1m.json`, which includes the config for length extrapolation and sparse attention.

|

| 210 |

+

|

| 211 |

+

```bash

|

| 212 |

+

export MODELNAME=Qwen3-235B-A22B-Instruct-2507

|

| 213 |

+

huggingface-cli download Qwen/${MODELNAME} --local-dir ${MODELNAME}

|

| 214 |

+

mv ${MODELNAME}/config.json ${MODELNAME}/config.json.bak

|

| 215 |

+

mv ${MODELNAME}/config_1m.json ${MODELNAME}/config.json

|

| 216 |

+

```

|

| 217 |

+

|

| 218 |

+

#### Step 2: Launch Model Server

|

| 219 |

+

|

| 220 |

+

After updating the config, proceed with either **vLLM** or **SGLang** for serving the model.

|

| 221 |

+

|

| 222 |

+

#### Option 1: Using vLLM

|

| 223 |

+

|

| 224 |

+

To run Qwen with 1M context support:

|

| 225 |

+

|

| 226 |

+

```bash

|

| 227 |

+

pip install -U vllm \

|

| 228 |

+

--torch-backend=auto \

|

| 229 |

+

--extra-index-url https://wheels.vllm.ai/nightly

|

| 230 |

+

```

|

| 231 |

+

|

| 232 |

+

Then launch the server with Dual Chunk Flash Attention enabled:

|

| 233 |

+

|

| 234 |

+

```bash

|

| 235 |

+

VLLM_ATTENTION_BACKEND=DUAL_CHUNK_FLASH_ATTN VLLM_USE_V1=0 \

|

| 236 |

+

vllm serve ./Qwen3-235B-A22B-Instruct-2507 \

|

| 237 |

+

--tensor-parallel-size 8 \

|

| 238 |

+

--max-model-len 1010000 \

|

| 239 |

+

--enable-chunked-prefill \

|

| 240 |

+

--max-num-batched-tokens 131072 \

|

| 241 |

+

--enforce-eager \

|

| 242 |

+

--max-num-seqs 1 \

|

| 243 |

+

--gpu-memory-utilization 0.85

|

| 244 |

+

```

|

| 245 |

+

|

| 246 |

+

##### Key Parameters

|

| 247 |

+

|

| 248 |

+

| Parameter | Purpose |

|

| 249 |

+

|--------|--------|

|

| 250 |

+

| `VLLM_ATTENTION_BACKEND=DUAL_CHUNK_FLASH_ATTN` | Enables the custom attention kernel for long-context efficiency |

|

| 251 |

+

| `--max-model-len 1010000` | Sets maximum context length to ~1M tokens |

|

| 252 |

+

| `--enable-chunked-prefill` | Allows chunked prefill for very long inputs (avoids OOM) |

|

| 253 |

+

| `--max-num-batched-tokens 131072` | Controls batch size during prefill; balances throughput and memory |

|

| 254 |

+

| `--enforce-eager` | Disables CUDA graph capture (required for dual chunk attention) |

|

| 255 |

+

| `--max-num-seqs 1` | Limits concurrent sequences due to extreme memory usage |

|

| 256 |

+

| `--gpu-memory-utilization 0.85` | Set the fraction of GPU memory to be used for the model executor |

|

| 257 |

+

|

| 258 |

+

#### Option 2: Using SGLang

|

| 259 |

+

|

| 260 |

+

First, clone and install the specialized branch:

|

| 261 |

+

|

| 262 |

+

```bash

|

| 263 |

+

git clone https://github.com/sgl-project/sglang.git

|

| 264 |

+

cd sglang

|

| 265 |

+

pip install -e "python[all]"

|

| 266 |

+

```

|

| 267 |

+

|

| 268 |

+

Launch the server with DCA support:

|

| 269 |

+

|

| 270 |

+

```bash

|

| 271 |

+

python3 -m sglang.launch_server \

|

| 272 |

+

--model-path ./Qwen3-235B-A22B-Instruct-2507 \

|

| 273 |

+

--context-length 1010000 \

|

| 274 |

+

--mem-frac 0.75 \

|

| 275 |

+

--attention-backend dual_chunk_flash_attn \

|

| 276 |

+

--tp 8 \

|

| 277 |

+

--chunked-prefill-size 131072

|

| 278 |

+

```

|

| 279 |

+

|

| 280 |

+

##### Key Parameters

|

| 281 |

+

|

| 282 |

+

| Parameter | Purpose |

|

| 283 |

+

|---------|--------|

|

| 284 |

+

| `--attention-backend dual_chunk_flash_attn` | Activates Dual Chunk Flash Attention |

|

| 285 |

+

| `--context-length 1010000` | Defines max input length |

|

| 286 |

+

| `--mem-frac 0.75` | The fraction of the memory used for static allocation (model weights and KV cache memory pool). Use a smaller value if you see out-of-memory errors. |

|

| 287 |

+

| `--tp 8` | Tensor parallelism size (matches model sharding) |

|

| 288 |

+

| `--chunked-prefill-size 131072` | Prefill chunk size for handling long inputs without OOM |

|

| 289 |

+

|

| 290 |

+

#### Troubleshooting:

|

| 291 |

+

|

| 292 |

+

1. Encountering the error: "The model's max sequence length (xxxxx) is larger than the maximum number of tokens that can be stored in the KV cache." or "RuntimeError: Not enough memory. Please try to increase --mem-fraction-static."

|

| 293 |

+

|

| 294 |

+

The VRAM reserved for the KV cache is insufficient.

|

| 295 |

+

- vLLM: Consider reducing the ``max_model_len`` or increasing the ``tensor_parallel_size`` and ``gpu_memory_utilization``. Alternatively, you can reduce ``max_num_batched_tokens``, although this may significantly slow down inference.

|

| 296 |

+

- SGLang: Consider reducing the ``context-length`` or increasing the ``tp`` and ``mem-frac``. Alternatively, you can reduce ``chunked-prefill-size``, although this may significantly slow down inference.

|

| 297 |

+

|

| 298 |

+

2. Encountering the error: "torch.OutOfMemoryError: CUDA out of memory."

|

| 299 |

+

|

| 300 |

+

The VRAM reserved for activation weights is insufficient. You can try lowering ``gpu_memory_utilization`` or ``mem-frac``, but be aware that this might reduce the VRAM available for the KV cache.

|

| 301 |

+

|

| 302 |

+

3. Encountering the error: "Input prompt (xxxxx tokens) + lookahead slots (0) is too long and exceeds the capacity of the block manager." or "The input (xxx xtokens) is longer than the model's context length (xxx tokens)."

|

| 303 |

+

|

| 304 |

+

The input is too lengthy. Consider using a shorter sequence or increasing the ``max_model_len`` or ``context-length``.

|

| 305 |

+

|

| 306 |

+

#### Long-Context Performance

|

| 307 |

+

|

| 308 |

+

We test the model on an 1M version of the [RULER](https://arxiv.org/abs/2404.06654) benchmark.

|

| 309 |

+

|

| 310 |

+

| Model Name | Acc avg | 4k | 8k | 16k | 32k | 64k | 96k | 128k | 192k | 256k | 384k | 512k | 640k | 768k | 896k | 1000k |

|

| 311 |

+

|---------------------------------------------|---------|------|------|------|------|------|------|------|------|------|------|------|------|------|------|-------|

|

| 312 |

+

| Qwen3-235B-A22B (Non-Thinking) | 83.9 | 97.7 | 96.1 | 97.5 | 96.1 | 94.2 | 90.3 | 88.5 | 85.0 | 82.1 | 79.2 | 74.4 | 70.0 | 71.0 | 68.5 | 68.0 |

|

| 313 |

+

| Qwen3-235B-A22B-Instruct-2507 (Full Attention) | 92.5 | 98.5 | 97.6 | 96.9 | 97.3 | 95.8 | 94.9 | 93.9 | 94.5 | 91.0 | 92.2 | 90.9 | 87.8 | 84.8 | 86.5 | 84.5 |

|

| 314 |

+

| Qwen3-235B-A22B-Instruct-2507 (Sparse Attention) | 91.7 | 98.5 | 97.2 | 97.3 | 97.7 | 96.6 | 94.6 | 92.8 | 94.3 | 90.5 | 89.7 | 89.5 | 86.4 | 83.6 | 84.2 | 82.5 |

|

| 315 |

+

|

| 316 |

+

|

| 317 |

+

* All models are evaluated with Dual Chunk Attention enabled.

|

| 318 |

+

* Since the evaluation is time-consuming, we use 260 samples for each length (13 sub-tasks, 20 samples for each).

|

| 319 |

+

|

| 320 |

+

## Best Practices

|

| 321 |

+

|

| 322 |

+

To achieve optimal performance, we recommend the following settings:

|

| 323 |

+

|

| 324 |

+

1. **Sampling Parameters**:

|

| 325 |

+

- We suggest using `Temperature=0.7`, `TopP=0.8`, `TopK=20`, and `MinP=0`.

|

| 326 |

+

- For supported frameworks, you can adjust the `presence_penalty` parameter between 0 and 2 to reduce endless repetitions. However, using a higher value may occasionally result in language mixing and a slight decrease in model performance.

|

| 327 |

+

|

| 328 |

+

2. **Adequate Output Length**: We recommend using an output length of 16,384 tokens for most queries, which is adequate for instruct models.

|

| 329 |

+

|

| 330 |

+

3. **Standardize Output Format**: We recommend using prompts to standardize model outputs when benchmarking.

|

| 331 |

+

- **Math Problems**: Include "Please reason step by step, and put your final answer within \boxed{}." in the prompt.

|

| 332 |

+

- **Multiple-Choice Questions**: Add the following JSON structure to the prompt to standardize responses: "Please show your choice in the `answer` field with only the choice letter, e.g., `"answer": "C"`."

|

| 333 |

+

|

| 334 |

+

### Citation

|

| 335 |

+

|

| 336 |

+

If you find our work helpful, feel free to give us a cite.

|

| 337 |

+

|

| 338 |

+

```

|

| 339 |

+

@misc{qwen3technicalreport,

|

| 340 |

+

title={Qwen3 Technical Report},

|

| 341 |

+

author={Qwen Team},

|

| 342 |

+

year={2025},

|

| 343 |

+

eprint={2505.09388},

|

| 344 |

+

archivePrefix={arXiv},

|

| 345 |

+

primaryClass={cs.CL},

|

| 346 |

+

url={https://arxiv.org/abs/2505.09388},

|

| 347 |

+

}

|

| 348 |

+

|

| 349 |

+

@article{qwen2.5-1m,

|

| 350 |

+

title={Qwen2.5-1M Technical Report},

|

| 351 |

+

author={An Yang and Bowen Yu and Chengyuan Li and Dayiheng Liu and Fei Huang and Haoyan Huang and Jiandong Jiang and Jianhong Tu and Jianwei Zhang and Jingren Zhou and Junyang Lin and Kai Dang and Kexin Yang and Le Yu and Mei Li and Minmin Sun and Qin Zhu and Rui Men and Tao He and Weijia Xu and Wenbiao Yin and Wenyuan Yu and Xiafei Qiu and Xingzhang Ren and Xinlong Yang and Yong Li and Zhiying Xu and Zipeng Zhang},

|

| 352 |

+

journal={arXiv preprint arXiv:2501.15383},

|

| 353 |

+

year={2025}

|

| 354 |

+

}

|

| 355 |

+

```

|

config.json

ADDED

|

@@ -0,0 +1,38 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"Qwen3MoeForCausalLM"

|

| 4 |

+

],

|

| 5 |

+

"attention_bias": false,

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"bos_token_id": 151643,

|

| 8 |

+

"decoder_sparse_step": 1,

|

| 9 |

+

"eos_token_id": 151645,

|

| 10 |

+

"head_dim": 128,

|

| 11 |

+

"hidden_act": "silu",

|

| 12 |

+

"hidden_size": 4096,

|

| 13 |

+

"initializer_range": 0.02,

|

| 14 |

+

"intermediate_size": 12288,

|

| 15 |

+

"max_position_embeddings": 262144,

|

| 16 |

+

"max_window_layers": 94,

|

| 17 |

+

"mlp_only_layers": [],

|

| 18 |

+

"model_type": "qwen3_moe",

|

| 19 |

+

"moe_intermediate_size": 1536,

|

| 20 |

+

"norm_topk_prob": true,

|

| 21 |

+

"num_attention_heads": 64,

|

| 22 |

+

"num_experts": 128,

|

| 23 |

+

"num_experts_per_tok": 8,

|

| 24 |

+

"num_hidden_layers": 94,

|

| 25 |

+

"num_key_value_heads": 4,

|

| 26 |

+

"output_router_logits": false,

|

| 27 |

+

"rms_norm_eps": 1e-06,

|

| 28 |

+

"rope_scaling": null,

|

| 29 |

+

"rope_theta": 5000000,

|

| 30 |

+

"router_aux_loss_coef": 0.001,

|

| 31 |

+

"sliding_window": null,

|

| 32 |

+

"tie_word_embeddings": false,

|

| 33 |

+

"torch_dtype": "bfloat16",

|

| 34 |

+

"transformers_version": "4.51.0",

|

| 35 |

+

"use_cache": true,

|

| 36 |

+

"use_sliding_window": false,

|

| 37 |

+

"vocab_size": 151936

|

| 38 |

+

}

|

config_1m.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

generation_config.json

ADDED

|

@@ -0,0 +1,13 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"bos_token_id": 151643,

|

| 3 |

+

"do_sample": true,

|

| 4 |

+

"eos_token_id": [

|

| 5 |

+

151645,

|

| 6 |

+

151643

|

| 7 |

+

],

|

| 8 |

+

"pad_token_id": 151643,

|

| 9 |

+

"temperature": 0.7,

|

| 10 |

+

"top_k": 20,

|

| 11 |

+

"top_p": 0.8,

|

| 12 |

+

"transformers_version": "4.51.0"

|

| 13 |

+

}

|

merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

model-00003-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7f9ec818fea58d6598e8bc0973ca2fbdddb52d26588901005366a6617e138fb2

|

| 3 |

+

size 3994081856

|

model-00011-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7025ba6dd9370fe368ea782b5c28d03695d97bc2dd148c1f4e19059cdb213aab

|

| 3 |

+

size 3994081856

|

model-00015-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:062424a25df6b24be8841882eb5d2abb957148cf09f2e9d29f865b73568834e7

|

| 3 |

+

size 3994082088

|

model-00016-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:df7b88e1c2d5ea7289af8a0880d239563f62b6ecb5e4dc0a34b20d5700a0bf48

|

| 3 |

+

size 3994082176

|

model-00017-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:410fd5557ce7eec0c40a15d09ad256680d65e28128ab0a180bd69049fbe05c2c

|

| 3 |

+

size 3994082176

|

model-00020-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:cd40c113b9e6870c9f941f0efae194fc224a689133106e7d53ad48526d11cc47

|

| 3 |

+

size 3994082088

|

model-00021-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:82bfeab2fd2d5a1e678a2d659ec0b8d0e1a231690e159ed7bd943b59a667ea33

|

| 3 |

+

size 3994082160

|

model-00027-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5e4f3de86d634b02aeee58382dcff68f405abf4afbe0617c4a56bf070c9f3c7f

|

| 3 |

+

size 3994082176

|

model-00030-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:03da01a0f239b5325e0fcf253813de2e9507faff036525df197c3634e8ea24f9

|

| 3 |

+

size 3997211632

|

model-00031-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:09667e2b0f389c0481780da715b5951e873f717f0bf03da61341c6a2475611d7

|

| 3 |

+

size 3994082160

|

model-00032-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:799b6ed83c23e2706a3bcdfd700c7a2f552f70bacb891ce58cf9d109fedaf0a8

|

| 3 |

+

size 3994082176

|

model-00035-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5b077a58a27872786ffff22bf0aeb58de25d372c3206bf7b214f1bdb60b45201

|

| 3 |

+

size 3988822680

|

model-00036-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:674872e3bb982c32268f4c237469f9dc93bac314d1de941729c0dbc6ec88a0e1

|

| 3 |

+

size 3994082152

|

model-00039-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c98c5bcbcbf22f7aefb99d9ae19d972ac55e9f247047b5b9435209a1ed18d3ed

|

| 3 |

+

size 3994082200

|

model-00042-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0d4dba05f7e8166b8d3077624686d07dd0ea7ed8746edaa29253eba8904842dc

|

| 3 |

+

size 3994082176

|

model-00044-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:27fb5f22dfaab7fa781fb4ae665c24449938c07e85c425a8d0606182c568f990

|

| 3 |

+

size 3994082192

|

model-00045-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0fa9dcbbffd6844c319cdc0ee9a4d07d3dc54cb6f5fbf79263d798a07d424122

|

| 3 |

+

size 3988822704

|

model-00056-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0176da7e69047270eb02002bc5bda382b9ece009b2485d9ec0da5420625e3c07

|

| 3 |

+

size 3994082128

|

model-00059-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9aee2021a40e85188399af11251f0225424dfdfb14a67fbd4fd1f0f48efb211f

|

| 3 |

+

size 3994082176

|

model-00063-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d9b174c1a4e0ad2061051658f4c253d7279afe07aca023fa3a041616514513d9

|

| 3 |

+

size 3994082176

|

model-00064-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:015430bc00f0b42cb74fc18701a2a4d6a47139d2121298736908bf21a028b576

|

| 3 |

+

size 3994082176

|

model-00066-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:f63f53989b4228e6ad3ee1e86f719263ddbfea90f15fab638892742d78f6f511

|

| 3 |

+

size 3994082120

|

model-00072-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c3f072e339ee962bc7c55a257a758a6af02ea7c90c6f85d329d20d53e1e72571

|

| 3 |

+

size 3994082176

|

model-00075-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9197a1e288418a449dd26d97f6b4c6319de670b918d96b99af74b6fecba7708e

|

| 3 |

+

size 3988822752

|

model-00088-of-00118.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|