Commit ·

db843e4

0

Parent(s):

Duplicate from diffusers/sdxl-instructpix2pix-768

Browse filesCo-authored-by: Sayak Paul <sayakpaul@users.noreply.huggingface.co>

This view is limited to 50 files because it contains too many changes. See raw diff

- .gitattributes +35 -0

- README.md +89 -0

- model_index.json +34 -0

- scheduler/scheduler_config.json +18 -0

- text_encoder/config.json +25 -0

- text_encoder/model.safetensors +3 -0

- text_encoder_2/config.json +25 -0

- text_encoder_2/model.safetensors +3 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +24 -0

- tokenizer/tokenizer_config.json +33 -0

- tokenizer/vocab.json +0 -0

- tokenizer_2/merges.txt +0 -0

- tokenizer_2/special_tokens_map.json +24 -0

- tokenizer_2/tokenizer_config.json +33 -0

- tokenizer_2/vocab.json +0 -0

- unet/config.json +71 -0

- unet/diffusion_pytorch_model.safetensors +3 -0

- vae/config.json +32 -0

- vae/diffusion_pytorch_model.safetensors +3 -0

- validation_images/step_10000_val_img_0.png +0 -0

- validation_images/step_10000_val_img_1.png +0 -0

- validation_images/step_10000_val_img_2.png +0 -0

- validation_images/step_10000_val_img_3.png +0 -0

- validation_images/step_1000_val_img_0.png +0 -0

- validation_images/step_1000_val_img_1.png +0 -0

- validation_images/step_1000_val_img_2.png +0 -0

- validation_images/step_1000_val_img_3.png +0 -0

- validation_images/step_100_val_img_0.png +0 -0

- validation_images/step_100_val_img_1.png +0 -0

- validation_images/step_100_val_img_2.png +0 -0

- validation_images/step_100_val_img_3.png +0 -0

- validation_images/step_10100_val_img_0.png +0 -0

- validation_images/step_10100_val_img_1.png +0 -0

- validation_images/step_10100_val_img_2.png +0 -0

- validation_images/step_10100_val_img_3.png +0 -0

- validation_images/step_10200_val_img_0.png +0 -0

- validation_images/step_10200_val_img_1.png +0 -0

- validation_images/step_10200_val_img_2.png +0 -0

- validation_images/step_10200_val_img_3.png +0 -0

- validation_images/step_10300_val_img_0.png +0 -0

- validation_images/step_10300_val_img_1.png +0 -0

- validation_images/step_10300_val_img_2.png +0 -0

- validation_images/step_10300_val_img_3.png +0 -0

- validation_images/step_10400_val_img_0.png +0 -0

- validation_images/step_10400_val_img_1.png +0 -0

- validation_images/step_10400_val_img_2.png +0 -0

- validation_images/step_10400_val_img_3.png +0 -0

- validation_images/step_10500_val_img_0.png +0 -0

- validation_images/step_10500_val_img_1.png +0 -0

.gitattributes

ADDED

|

@@ -0,0 +1,35 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,89 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: openrail++

|

| 3 |

+

base_model: stabilityai/stable-diffusion-xl-base-1.0

|

| 4 |

+

tags:

|

| 5 |

+

- stable-diffusion-xl

|

| 6 |

+

- stable-diffusion-xl-diffusers

|

| 7 |

+

- text-to-image

|

| 8 |

+

- diffusers

|

| 9 |

+

- instruct-pix2pix

|

| 10 |

+

inference: false

|

| 11 |

+

datasets:

|

| 12 |

+

- timbrooks/instructpix2pix-clip-filtered

|

| 13 |

+

---

|

| 14 |

+

|

| 15 |

+

# SDXL InstructPix2Pix (768768)

|

| 16 |

+

|

| 17 |

+

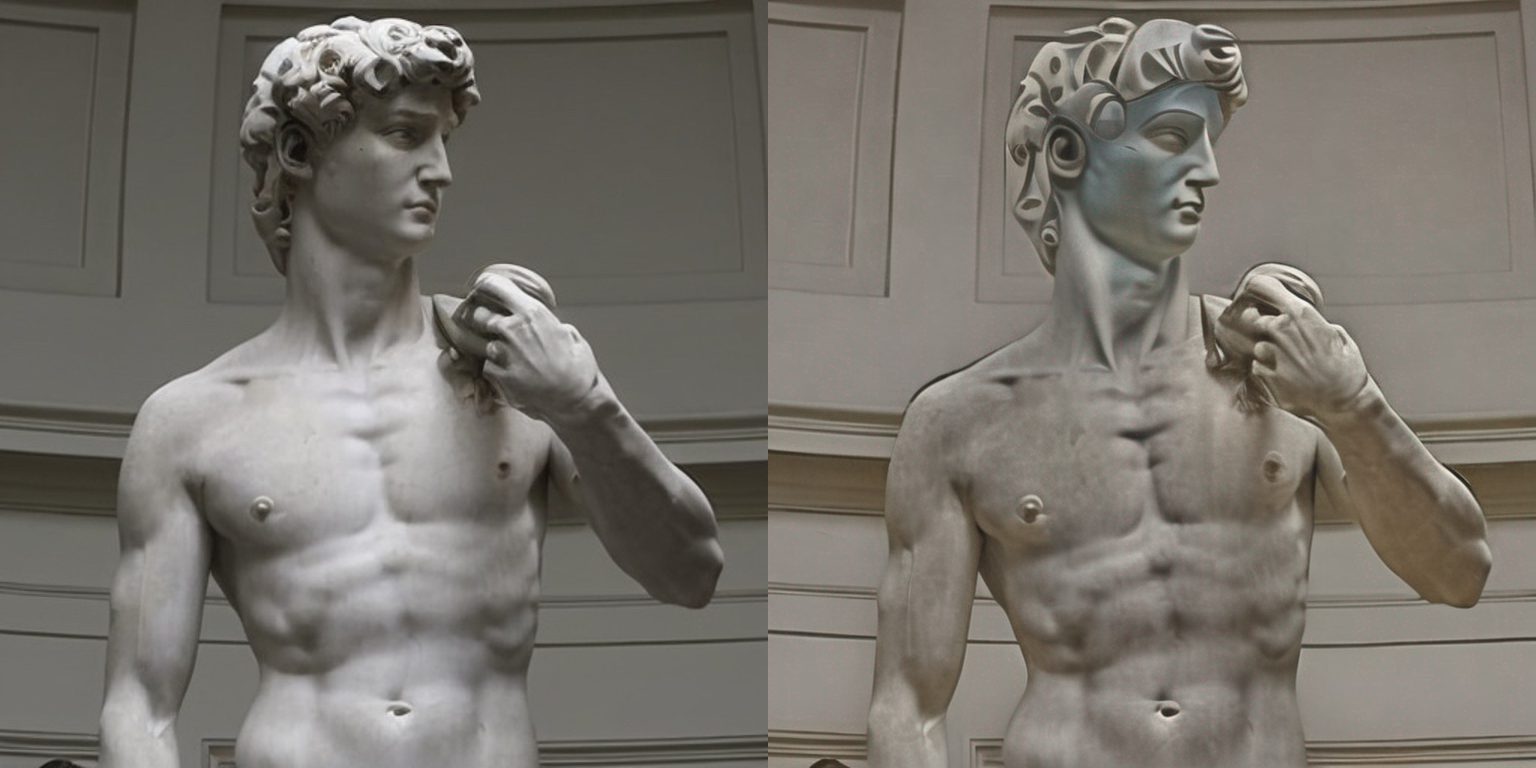

Instruction fine-tuning of [Stable Diffusion XL (SDXL)](https://hf.co/papers/2307.01952) à la [InstructPix2Pix](https://huggingface.co/papers/2211.09800). Some results below:

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

**Edit instruction**: *"Turn sky into a cloudy one"*

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

**Edit instruction**: *"Make it a picasso painting"*

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

|

| 28 |

+

**Edit instruction**: *"make the person older"*

|

| 29 |

+

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

## Usage in 🧨 diffusers

|

| 33 |

+

|

| 34 |

+

Make sure to install the libraries first:

|

| 35 |

+

|

| 36 |

+

```bash

|

| 37 |

+

pip install accelerate transformers

|

| 38 |

+

pip install git+https://github.com/huggingface/diffusers

|

| 39 |

+

```

|

| 40 |

+

|

| 41 |

+

```python

|

| 42 |

+

import torch

|

| 43 |

+

from diffusers import StableDiffusionXLInstructPix2PixPipeline

|

| 44 |

+

from diffusers.utils import load_image

|

| 45 |

+

|

| 46 |

+

resolution = 768

|

| 47 |

+

image = load_image(

|

| 48 |

+

"https://hf.co/datasets/diffusers/diffusers-images-docs/resolve/main/mountain.png"

|

| 49 |

+

).resize((resolution, resolution))

|

| 50 |

+

edit_instruction = "Turn sky into a cloudy one"

|

| 51 |

+

|

| 52 |

+

pipe = StableDiffusionXLInstructPix2PixPipeline.from_pretrained(

|

| 53 |

+

"diffusers/sdxl-instructpix2pix-768", torch_dtype=torch.float16

|

| 54 |

+

).to("cuda")

|

| 55 |

+

|

| 56 |

+

edited_image = pipe(

|

| 57 |

+

prompt=edit_instruction,

|

| 58 |

+

image=image,

|

| 59 |

+

height=resolution,

|

| 60 |

+

width=resolution,

|

| 61 |

+

guidance_scale=3.0,

|

| 62 |

+

image_guidance_scale=1.5,

|

| 63 |

+

num_inference_steps=30,

|

| 64 |

+

).images[0]

|

| 65 |

+

edited_image.save("edited_image.png")

|

| 66 |

+

```

|

| 67 |

+

|

| 68 |

+

To know more, refer to the [documentation](https://huggingface.co/docs/diffusers/main/en/api/pipelines/pix2pix).

|

| 69 |

+

|

| 70 |

+

🚨 Note that this checkpoint is experimental in nature and there's a lot of room for improvements. Please use the "Discussions" tab of this repository to open issues and discuss. 🚨

|

| 71 |

+

|

| 72 |

+

## Training

|

| 73 |

+

We fine-tuned SDXL using the InstructPix2Pix training methodology for 15000 steps using a fixed learning rate of 5e-6 on an image resolution of 768x768.

|

| 74 |

+

|

| 75 |

+

Our training scripts and other utilities can be found [here](https://github.com/sayakpaul/instructpix2pix-sdxl/tree/b9acc91d6ddf1f2aa2f9012b68216deb40e178f3) and they were built on top of our [official training script](https://huggingface.co/docs/diffusers/main/en/training/instructpix2pix).

|

| 76 |

+

|

| 77 |

+

Our training logs are available on Weights and Biases [here](https://wandb.ai/sayakpaul/instruct-pix2pix-sdxl-new/runs/sw53gxmc). Refer to this link for details on all the hyperparameters.

|

| 78 |

+

|

| 79 |

+

### Training data

|

| 80 |

+

We used this dataset: [timbrooks/instructpix2pix-clip-filtered](https://huggingface.co/datasets/timbrooks/instructpix2pix-clip-filtered).

|

| 81 |

+

|

| 82 |

+

### Compute

|

| 83 |

+

one 8xA100 machine

|

| 84 |

+

|

| 85 |

+

### Batch size

|

| 86 |

+

Data parallel with a single gpu batch size of 8 for a total batch size of 32.

|

| 87 |

+

|

| 88 |

+

### Mixed precision

|

| 89 |

+

FP16

|

model_index.json

ADDED

|

@@ -0,0 +1,34 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "StableDiffusionXLInstructPix2PixPipeline",

|

| 3 |

+

"_diffusers_version": "0.21.0.dev0",

|

| 4 |

+

"_name_or_path": "stabilityai/stable-diffusion-xl-base-1.0",

|

| 5 |

+

"force_zeros_for_empty_prompt": true,

|

| 6 |

+

"scheduler": [

|

| 7 |

+

"diffusers",

|

| 8 |

+

"EulerDiscreteScheduler"

|

| 9 |

+

],

|

| 10 |

+

"text_encoder": [

|

| 11 |

+

"transformers",

|

| 12 |

+

"CLIPTextModel"

|

| 13 |

+

],

|

| 14 |

+

"text_encoder_2": [

|

| 15 |

+

"transformers",

|

| 16 |

+

"CLIPTextModelWithProjection"

|

| 17 |

+

],

|

| 18 |

+

"tokenizer": [

|

| 19 |

+

"transformers",

|

| 20 |

+

"CLIPTokenizer"

|

| 21 |

+

],

|

| 22 |

+

"tokenizer_2": [

|

| 23 |

+

"transformers",

|

| 24 |

+

"CLIPTokenizer"

|

| 25 |

+

],

|

| 26 |

+

"unet": [

|

| 27 |

+

"diffusers",

|

| 28 |

+

"UNet2DConditionModel"

|

| 29 |

+

],

|

| 30 |

+

"vae": [

|

| 31 |

+

"diffusers",

|

| 32 |

+

"AutoencoderKL"

|

| 33 |

+

]

|

| 34 |

+

}

|

scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,18 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "EulerDiscreteScheduler",

|

| 3 |

+

"_diffusers_version": "0.21.0.dev0",

|

| 4 |

+

"beta_end": 0.012,

|

| 5 |

+

"beta_schedule": "scaled_linear",

|

| 6 |

+

"beta_start": 0.00085,

|

| 7 |

+

"clip_sample": false,

|

| 8 |

+

"interpolation_type": "linear",

|

| 9 |

+

"num_train_timesteps": 1000,

|

| 10 |

+

"prediction_type": "epsilon",

|

| 11 |

+

"sample_max_value": 1.0,

|

| 12 |

+

"set_alpha_to_one": false,

|

| 13 |

+

"skip_prk_steps": true,

|

| 14 |

+

"steps_offset": 1,

|

| 15 |

+

"timestep_spacing": "leading",

|

| 16 |

+

"trained_betas": null,

|

| 17 |

+

"use_karras_sigmas": false

|

| 18 |

+

}

|

text_encoder/config.json

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "stabilityai/stable-diffusion-xl-base-1.0",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"CLIPTextModel"

|

| 5 |

+

],

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"dropout": 0.0,

|

| 9 |

+

"eos_token_id": 2,

|

| 10 |

+

"hidden_act": "quick_gelu",

|

| 11 |

+

"hidden_size": 768,

|

| 12 |

+

"initializer_factor": 1.0,

|

| 13 |

+

"initializer_range": 0.02,

|

| 14 |

+

"intermediate_size": 3072,

|

| 15 |

+

"layer_norm_eps": 1e-05,

|

| 16 |

+

"max_position_embeddings": 77,

|

| 17 |

+

"model_type": "clip_text_model",

|

| 18 |

+

"num_attention_heads": 12,

|

| 19 |

+

"num_hidden_layers": 12,

|

| 20 |

+

"pad_token_id": 1,

|

| 21 |

+

"projection_dim": 768,

|

| 22 |

+

"torch_dtype": "float16",

|

| 23 |

+

"transformers_version": "4.31.0",

|

| 24 |

+

"vocab_size": 49408

|

| 25 |

+

}

|

text_encoder/model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:660c6f5b1abae9dc498ac2d21e1347d2abdb0cf6c0c0c8576cd796491d9a6cdd

|

| 3 |

+

size 246144152

|

text_encoder_2/config.json

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "stabilityai/stable-diffusion-xl-base-1.0",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"CLIPTextModelWithProjection"

|

| 5 |

+

],

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"dropout": 0.0,

|

| 9 |

+

"eos_token_id": 2,

|

| 10 |

+

"hidden_act": "gelu",

|

| 11 |

+

"hidden_size": 1280,

|

| 12 |

+

"initializer_factor": 1.0,

|

| 13 |

+

"initializer_range": 0.02,

|

| 14 |

+

"intermediate_size": 5120,

|

| 15 |

+

"layer_norm_eps": 1e-05,

|

| 16 |

+

"max_position_embeddings": 77,

|

| 17 |

+

"model_type": "clip_text_model",

|

| 18 |

+

"num_attention_heads": 20,

|

| 19 |

+

"num_hidden_layers": 32,

|

| 20 |

+

"pad_token_id": 1,

|

| 21 |

+

"projection_dim": 1280,

|

| 22 |

+

"torch_dtype": "float16",

|

| 23 |

+

"transformers_version": "4.31.0",

|

| 24 |

+

"vocab_size": 49408

|

| 25 |

+

}

|

text_encoder_2/model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ec310df2af79c318e24d20511b601a591ca8cd4f1fce1d8dff822a356bcdb1f4

|

| 3 |

+

size 1389382176

|

tokenizer/merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

tokenizer/special_tokens_map.json

ADDED

|

@@ -0,0 +1,24 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<|startoftext|>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": true,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "<|endoftext|>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": true,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": "<|endoftext|>",

|

| 17 |

+

"unk_token": {

|

| 18 |

+

"content": "<|endoftext|>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": true,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

}

|

| 24 |

+

}

|

tokenizer/tokenizer_config.json

ADDED

|

@@ -0,0 +1,33 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"add_prefix_space": false,

|

| 3 |

+

"bos_token": {

|

| 4 |

+

"__type": "AddedToken",

|

| 5 |

+

"content": "<|startoftext|>",

|

| 6 |

+

"lstrip": false,

|

| 7 |

+

"normalized": true,

|

| 8 |

+

"rstrip": false,

|

| 9 |

+

"single_word": false

|

| 10 |

+

},

|

| 11 |

+

"clean_up_tokenization_spaces": true,

|

| 12 |

+

"do_lower_case": true,

|

| 13 |

+

"eos_token": {

|

| 14 |

+

"__type": "AddedToken",

|

| 15 |

+

"content": "<|endoftext|>",

|

| 16 |

+

"lstrip": false,

|

| 17 |

+

"normalized": true,

|

| 18 |

+

"rstrip": false,

|

| 19 |

+

"single_word": false

|

| 20 |

+

},

|

| 21 |

+

"errors": "replace",

|

| 22 |

+

"model_max_length": 77,

|

| 23 |

+

"pad_token": "<|endoftext|>",

|

| 24 |

+

"tokenizer_class": "CLIPTokenizer",

|

| 25 |

+

"unk_token": {

|

| 26 |

+

"__type": "AddedToken",

|

| 27 |

+

"content": "<|endoftext|>",

|

| 28 |

+

"lstrip": false,

|

| 29 |

+

"normalized": true,

|

| 30 |

+

"rstrip": false,

|

| 31 |

+

"single_word": false

|

| 32 |

+

}

|

| 33 |

+

}

|

tokenizer/vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

tokenizer_2/merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

tokenizer_2/special_tokens_map.json

ADDED

|

@@ -0,0 +1,24 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<|startoftext|>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": true,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "<|endoftext|>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": true,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": "!",

|

| 17 |

+

"unk_token": {

|

| 18 |

+

"content": "<|endoftext|>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": true,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

}

|

| 24 |

+

}

|

tokenizer_2/tokenizer_config.json

ADDED

|

@@ -0,0 +1,33 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"add_prefix_space": false,

|

| 3 |

+

"bos_token": {

|

| 4 |

+

"__type": "AddedToken",

|

| 5 |

+

"content": "<|startoftext|>",

|

| 6 |

+

"lstrip": false,

|

| 7 |

+

"normalized": true,

|

| 8 |

+

"rstrip": false,

|

| 9 |

+

"single_word": false

|

| 10 |

+

},

|

| 11 |

+

"clean_up_tokenization_spaces": true,

|

| 12 |

+

"do_lower_case": true,

|

| 13 |

+

"eos_token": {

|

| 14 |

+

"__type": "AddedToken",

|

| 15 |

+

"content": "<|endoftext|>",

|

| 16 |

+

"lstrip": false,

|

| 17 |

+

"normalized": true,

|

| 18 |

+

"rstrip": false,

|

| 19 |

+

"single_word": false

|

| 20 |

+

},

|

| 21 |

+

"errors": "replace",

|

| 22 |

+

"model_max_length": 77,

|

| 23 |

+

"pad_token": "!",

|

| 24 |

+

"tokenizer_class": "CLIPTokenizer",

|

| 25 |

+

"unk_token": {

|

| 26 |

+

"__type": "AddedToken",

|

| 27 |

+

"content": "<|endoftext|>",

|

| 28 |

+

"lstrip": false,

|

| 29 |

+

"normalized": true,

|

| 30 |

+

"rstrip": false,

|

| 31 |

+

"single_word": false

|

| 32 |

+

}

|

| 33 |

+

}

|

tokenizer_2/vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

unet/config.json

ADDED

|

@@ -0,0 +1,71 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "UNet2DConditionModel",

|

| 3 |

+

"_diffusers_version": "0.21.0.dev0",

|

| 4 |

+

"_name_or_path": "stabilityai/stable-diffusion-xl-base-1.0",

|

| 5 |

+

"act_fn": "silu",

|

| 6 |

+

"addition_embed_type": "text_time",

|

| 7 |

+

"addition_embed_type_num_heads": 64,

|

| 8 |

+

"addition_time_embed_dim": 256,

|

| 9 |

+

"attention_head_dim": [

|

| 10 |

+

5,

|

| 11 |

+

10,

|

| 12 |

+

20

|

| 13 |

+

],

|

| 14 |

+

"attention_type": "default",

|

| 15 |

+

"block_out_channels": [

|

| 16 |

+

320,

|

| 17 |

+

640,

|

| 18 |

+

1280

|

| 19 |

+

],

|

| 20 |

+

"center_input_sample": false,

|

| 21 |

+

"class_embed_type": null,

|

| 22 |

+

"class_embeddings_concat": false,

|

| 23 |

+

"conv_in_kernel": 3,

|

| 24 |

+

"conv_out_kernel": 3,

|

| 25 |

+

"cross_attention_dim": 2048,

|

| 26 |

+

"cross_attention_norm": null,

|

| 27 |

+

"down_block_types": [

|

| 28 |

+

"DownBlock2D",

|

| 29 |

+

"CrossAttnDownBlock2D",

|

| 30 |

+

"CrossAttnDownBlock2D"

|

| 31 |

+

],

|

| 32 |

+

"downsample_padding": 1,

|

| 33 |

+

"dual_cross_attention": false,

|

| 34 |

+

"encoder_hid_dim": null,

|

| 35 |

+

"encoder_hid_dim_type": null,

|

| 36 |

+

"flip_sin_to_cos": true,

|

| 37 |

+

"freq_shift": 0,

|

| 38 |

+

"in_channels": 8,

|

| 39 |

+

"layers_per_block": 2,

|

| 40 |

+

"mid_block_only_cross_attention": null,

|

| 41 |

+

"mid_block_scale_factor": 1,

|

| 42 |

+

"mid_block_type": "UNetMidBlock2DCrossAttn",

|

| 43 |

+

"norm_eps": 1e-05,

|

| 44 |

+

"norm_num_groups": 32,

|

| 45 |

+

"num_attention_heads": null,

|

| 46 |

+

"num_class_embeds": null,

|

| 47 |

+

"only_cross_attention": false,

|

| 48 |

+

"out_channels": 4,

|

| 49 |

+

"projection_class_embeddings_input_dim": 2816,

|

| 50 |

+

"resnet_out_scale_factor": 1.0,

|

| 51 |

+

"resnet_skip_time_act": false,

|

| 52 |

+

"resnet_time_scale_shift": "default",

|

| 53 |

+

"sample_size": 128,

|

| 54 |

+

"time_cond_proj_dim": null,

|

| 55 |

+

"time_embedding_act_fn": null,

|

| 56 |

+

"time_embedding_dim": null,

|

| 57 |

+

"time_embedding_type": "positional",

|

| 58 |

+

"timestep_post_act": null,

|

| 59 |

+

"transformer_layers_per_block": [

|

| 60 |

+

1,

|

| 61 |

+

2,

|

| 62 |

+

10

|

| 63 |

+

],

|

| 64 |

+

"up_block_types": [

|

| 65 |

+

"CrossAttnUpBlock2D",

|

| 66 |

+

"CrossAttnUpBlock2D",

|

| 67 |

+

"UpBlock2D"

|

| 68 |

+

],

|

| 69 |

+

"upcast_attention": null,

|

| 70 |

+

"use_linear_projection": true

|

| 71 |

+

}

|

unet/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:01d538927edfdf63511a38840b9d0cd8d8dcd4e40001566b83c9104ef1a2f92f

|

| 3 |

+

size 10270123816

|

vae/config.json

ADDED

|

@@ -0,0 +1,32 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "AutoencoderKL",

|

| 3 |

+

"_diffusers_version": "0.21.0.dev0",

|

| 4 |

+

"_name_or_path": "madebyollin/sdxl-vae-fp16-fix",

|

| 5 |

+

"act_fn": "silu",

|

| 6 |

+

"block_out_channels": [

|

| 7 |

+

128,

|

| 8 |

+

256,

|

| 9 |

+

512,

|

| 10 |

+

512

|

| 11 |

+

],

|

| 12 |

+

"down_block_types": [

|

| 13 |

+

"DownEncoderBlock2D",

|

| 14 |

+

"DownEncoderBlock2D",

|

| 15 |

+

"DownEncoderBlock2D",

|

| 16 |

+

"DownEncoderBlock2D"

|

| 17 |

+

],

|

| 18 |

+

"force_upcast": false,

|

| 19 |

+

"in_channels": 3,

|

| 20 |

+

"latent_channels": 4,

|

| 21 |

+

"layers_per_block": 2,

|

| 22 |

+

"norm_num_groups": 32,

|

| 23 |

+

"out_channels": 3,

|

| 24 |

+

"sample_size": 512,

|

| 25 |

+

"scaling_factor": 0.13025,

|

| 26 |

+

"up_block_types": [

|

| 27 |

+

"UpDecoderBlock2D",

|

| 28 |

+

"UpDecoderBlock2D",

|

| 29 |

+

"UpDecoderBlock2D",

|

| 30 |

+

"UpDecoderBlock2D"

|

| 31 |

+

]

|

| 32 |

+

}

|

vae/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:98a14dc6fe8d71c83576f135a87c61a16561c9c080abba418d2cc976ee034f88

|

| 3 |

+

size 334643268

|

validation_images/step_10000_val_img_0.png

ADDED

|

validation_images/step_10000_val_img_1.png

ADDED

|

validation_images/step_10000_val_img_2.png

ADDED

|

validation_images/step_10000_val_img_3.png

ADDED

|

validation_images/step_1000_val_img_0.png

ADDED

|

validation_images/step_1000_val_img_1.png

ADDED

|

validation_images/step_1000_val_img_2.png

ADDED

|

validation_images/step_1000_val_img_3.png

ADDED

|

validation_images/step_100_val_img_0.png

ADDED

|

validation_images/step_100_val_img_1.png

ADDED

|

validation_images/step_100_val_img_2.png

ADDED

|

validation_images/step_100_val_img_3.png

ADDED

|

validation_images/step_10100_val_img_0.png

ADDED

|

validation_images/step_10100_val_img_1.png

ADDED

|

validation_images/step_10100_val_img_2.png

ADDED

|

validation_images/step_10100_val_img_3.png

ADDED

|

validation_images/step_10200_val_img_0.png

ADDED

|

validation_images/step_10200_val_img_1.png

ADDED

|

validation_images/step_10200_val_img_2.png

ADDED

|

validation_images/step_10200_val_img_3.png

ADDED

|

validation_images/step_10300_val_img_0.png

ADDED

|

validation_images/step_10300_val_img_1.png

ADDED

|

validation_images/step_10300_val_img_2.png

ADDED

|

validation_images/step_10300_val_img_3.png

ADDED

|

validation_images/step_10400_val_img_0.png

ADDED

|

validation_images/step_10400_val_img_1.png

ADDED

|

validation_images/step_10400_val_img_2.png

ADDED

|

validation_images/step_10400_val_img_3.png

ADDED

|

validation_images/step_10500_val_img_0.png

ADDED

|

validation_images/step_10500_val_img_1.png

ADDED

|