Delete sd-webui-stablesr

- sd-webui-stablesr/.gitignore +0 -7

- sd-webui-stablesr/LICENSE +0 -35

- sd-webui-stablesr/LICENSE2 +0 -437

- sd-webui-stablesr/README.md +0 -156

- sd-webui-stablesr/README_CN.md +0 -150

- sd-webui-stablesr/scripts/__pycache__/stablesr.cpython-310.pyc +0 -0

- sd-webui-stablesr/scripts/stablesr.py +0 -276

- sd-webui-stablesr/srmodule/__pycache__/attn.cpython-310.pyc +0 -0

- sd-webui-stablesr/srmodule/__pycache__/colorfix.cpython-310.pyc +0 -0

- sd-webui-stablesr/srmodule/__pycache__/spade.cpython-310.pyc +0 -0

- sd-webui-stablesr/srmodule/__pycache__/struct_cond.cpython-310.pyc +0 -0

- sd-webui-stablesr/srmodule/attn.py +0 -111

- sd-webui-stablesr/srmodule/colorfix.py +0 -114

- sd-webui-stablesr/srmodule/spade.py +0 -206

- sd-webui-stablesr/srmodule/struct_cond.py +0 -353

- sd-webui-stablesr/tools/extract_srmodule.py +0 -20

- sd-webui-stablesr/tools/extract_vaecfw.py +0 -20

sd-webui-stablesr/.gitignore

DELETED

@@ -1,7 +0,0 @@
-# meta
-.vscode/
-__pycache__/
-.DS_Store
-
-# settings
-models/*.ckpt

sd-webui-stablesr/LICENSE

DELETED

@@ -1,35 +0,0 @@
-S-Lab License 1.0
-
-Copyright 2022 S-Lab
-
-Redistribution and use for non-commercial purpose in source and
-binary forms, with or without modification, are permitted provided
-that the following conditions are met:
-
-1. Redistributions of source code must retain the above copyright
-notice, this list of conditions and the following disclaimer.
-
-2. Redistributions in binary form must reproduce the above copyright
-notice, this list of conditions and the following disclaimer in
-the documentation and/or other materials provided with the
-distribution.
-
-3. Neither the name of the copyright holder nor the names of its
-contributors may be used to endorse or promote products derived
-from this software without specific prior written permission.
-
-THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS
-"AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT
-LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR
-A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT
-HOLDER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL,
-SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT
-LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE,
-DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY
-THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT
-(INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE
-OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.
-
-In the event that redistribution and/or use for commercial purpose in
-source or binary forms, with or without modification is required,
-please contact the contributor(s) of the work.

sd-webui-stablesr/LICENSE2

DELETED

@@ -1,437 +0,0 @@
-Attribution-NonCommercial-ShareAlike 4.0 International
-
-=======================================================================
-
-Creative Commons Corporation ("Creative Commons") is not a law firm and
-does not provide legal services or legal advice. Distribution of
-Creative Commons public licenses does not create a lawyer-client or
-other relationship. Creative Commons makes its licenses and related
-information available on an "as-is" basis. Creative Commons gives no
-warranties regarding its licenses, any material licensed under their
-terms and conditions, or any related information. Creative Commons
-disclaims all liability for damages resulting from their use to the
-fullest extent possible.
-
-Using Creative Commons Public Licenses
-
-Creative Commons public licenses provide a standard set of terms and
-conditions that creators and other rights holders may use to share
-original works of authorship and other material subject to copyright
-and certain other rights specified in the public license below. The
-following considerations are for informational purposes only, are not
-exhaustive, and do not form part of our licenses.
-
-Considerations for licensors: Our public licenses are
-intended for use by those authorized to give the public
-permission to use material in ways otherwise restricted by
-copyright and certain other rights. Our licenses are
-irrevocable. Licensors should read and understand the terms
-and conditions of the license they choose before applying it.
-Licensors should also secure all rights necessary before
-applying our licenses so that the public can reuse the
-material as expected. Licensors should clearly mark any
-material not subject to the license. This includes other CC-
-licensed material, or material used under an exception or
-limitation to copyright. More considerations for licensors:
-wiki.creativecommons.org/Considerations_for_licensors
-
-Considerations for the public: By using one of our public
-licenses, a licensor grants the public permission to use the
-licensed material under specified terms and conditions. If
-the licensor's permission is not necessary for any reason--for
-example, because of any applicable exception or limitation to
-copyright--then that use is not regulated by the license. Our
-licenses grant only permissions under copyright and certain
-other rights that a licensor has authority to grant. Use of
-the licensed material may still be restricted for other
-reasons, including because others have copyright or other
-rights in the material. A licensor may make special requests,
-such as asking that all changes be marked or described.
-Although not required by our licenses, you are encouraged to
-respect those requests where reasonable. More considerations
-for the public:
-wiki.creativecommons.org/Considerations_for_licensees
-
-=======================================================================
-
-Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International
-Public License
-
-By exercising the Licensed Rights (defined below), You accept and agree
-to be bound by the terms and conditions of this Creative Commons
-Attribution-NonCommercial-ShareAlike 4.0 International Public License
-("Public License"). To the extent this Public License may be
-interpreted as a contract, You are granted the Licensed Rights in
-consideration of Your acceptance of these terms and conditions, and the
-Licensor grants You such rights in consideration of benefits the
-Licensor receives from making the Licensed Material available under
-these terms and conditions.
-
-
-Section 1 -- Definitions.
-
-a. Adapted Material means material subject to Copyright and Similar
-Rights that is derived from or based upon the Licensed Material
-and in which the Licensed Material is translated, altered,
-arranged, transformed, or otherwise modified in a manner requiring
-permission under the Copyright and Similar Rights held by the
-Licensor. For purposes of this Public License, where the Licensed
-Material is a musical work, performance, or sound recording,
-Adapted Material is always produced where the Licensed Material is
-synched in timed relation with a moving image.
-
-b. Adapter's License means the license You apply to Your Copyright
-and Similar Rights in Your contributions to Adapted Material in
-accordance with the terms and conditions of this Public License.
-
-c. BY-NC-SA Compatible License means a license listed at
-creativecommons.org/compatiblelicenses, approved by Creative
-Commons as essentially the equivalent of this Public License.
-
-d. Copyright and Similar Rights means copyright and/or similar rights
-closely related to copyright including, without limitation,
-performance, broadcast, sound recording, and Sui Generis Database
-Rights, without regard to how the rights are labeled or
-categorized. For purposes of this Public License, the rights
-specified in Section 2(b)(1)-(2) are not Copyright and Similar
-Rights.
-
-e. Effective Technological Measures means those measures that, in the
-absence of proper authority, may not be circumvented under laws
-fulfilling obligations under Article 11 of the WIPO Copyright
-Treaty adopted on December 20, 1996, and/or similar international
-agreements.
-
-f. Exceptions and Limitations means fair use, fair dealing, and/or
-any other exception or limitation to Copyright and Similar Rights
-that applies to Your use of the Licensed Material.
-
-g. License Elements means the license attributes listed in the name
-of a Creative Commons Public License. The License Elements of this
-Public License are Attribution, NonCommercial, and ShareAlike.
-
-h. Licensed Material means the artistic or literary work, database,
-or other material to which the Licensor applied this Public
-License.
-
-i. Licensed Rights means the rights granted to You subject to the
-terms and conditions of this Public License, which are limited to
-all Copyright and Similar Rights that apply to Your use of the
-Licensed Material and that the Licensor has authority to license.
-
-j. Licensor means the individual(s) or entity(ies) granting rights
-under this Public License.
-
-k. NonCommercial means not primarily intended for or directed towards
-commercial advantage or monetary compensation. For purposes of
-this Public License, the exchange of the Licensed Material for
-other material subject to Copyright and Similar Rights by digital
-file-sharing or similar means is NonCommercial provided there is
-no payment of monetary compensation in connection with the
-exchange.
-
-l. Share means to provide material to the public by any means or
-process that requires permission under the Licensed Rights, such
-as reproduction, public display, public performance, distribution,
-dissemination, communication, or importation, and to make material
-available to the public including in ways that members of the
-public may access the material from a place and at a time
-individually chosen by them.
-
-m. Sui Generis Database Rights means rights other than copyright
-resulting from Directive 96/9/EC of the European Parliament and of
-the Council of 11 March 1996 on the legal protection of databases,
-as amended and/or succeeded, as well as other essentially
-equivalent rights anywhere in the world.
-
-n. You means the individual or entity exercising the Licensed Rights
-under this Public License. Your has a corresponding meaning.
-
-
-Section 2 -- Scope.
-
-a. License grant.
-
-1. Subject to the terms and conditions of this Public License,
-the Licensor hereby grants You a worldwide, royalty-free,
-non-sublicensable, non-exclusive, irrevocable license to
-exercise the Licensed Rights in the Licensed Material to:
-
-a. reproduce and Share the Licensed Material, in whole or
-in part, for NonCommercial purposes only; and
-
-b. produce, reproduce, and Share Adapted Material for
-NonCommercial purposes only.
-
-2. Exceptions and Limitations. For the avoidance of doubt, where
-Exceptions and Limitations apply to Your use, this Public
-License does not apply, and You do not need to comply with
-its terms and conditions.
-
-3. Term. The term of this Public License is specified in Section
-6(a).
-
-4. Media and formats; technical modifications allowed. The
-Licensor authorizes You to exercise the Licensed Rights in
-all media and formats whether now known or hereafter created,
-and to make technical modifications necessary to do so. The
-Licensor waives and/or agrees not to assert any right or
-authority to forbid You from making technical modifications
-necessary to exercise the Licensed Rights, including
-technical modifications necessary to circumvent Effective
-Technological Measures. For purposes of this Public License,
-simply making modifications authorized by this Section 2(a)
-(4) never produces Adapted Material.
-
-5. Downstream recipients.
-
-a. Offer from the Licensor -- Licensed Material. Every
-recipient of the Licensed Material automatically
-receives an offer from the Licensor to exercise the
-Licensed Rights under the terms and conditions of this
-Public License.
-
-b. Additional offer from the Licensor -- Adapted Material.
-Every recipient of Adapted Material from You
-automatically receives an offer from the Licensor to
-exercise the Licensed Rights in the Adapted Material
-under the conditions of the Adapter's License You apply.
-
-c. No downstream restrictions. You may not offer or impose
-any additional or different terms or conditions on, or
-apply any Effective Technological Measures to, the
-Licensed Material if doing so restricts exercise of the
-Licensed Rights by any recipient of the Licensed
-Material.
-
-6. No endorsement. Nothing in this Public License constitutes or
-may be construed as permission to assert or imply that You
-are, or that Your use of the Licensed Material is, connected
-with, or sponsored, endorsed, or granted official status by,
-the Licensor or others designated to receive attribution as
-provided in Section 3(a)(1)(A)(i).
-
-b. Other rights.
-
-1. Moral rights, such as the right of integrity, are not
-licensed under this Public License, nor are publicity,
-privacy, and/or other similar personality rights; however, to
-the extent possible, the Licensor waives and/or agrees not to
-assert any such rights held by the Licensor to the limited
-extent necessary to allow You to exercise the Licensed
-Rights, but not otherwise.
-
-2. Patent and trademark rights are not licensed under this
-Public License.
-
-3. To the extent possible, the Licensor waives any right to
-collect royalties from You for the exercise of the Licensed
-Rights, whether directly or through a collecting society
-under any voluntary or waivable statutory or compulsory
-licensing scheme. In all other cases the Licensor expressly
-reserves any right to collect such royalties, including when
-the Licensed Material is used other than for NonCommercial
-purposes.
-
-
-Section 3 -- License Conditions.
-
-Your exercise of the Licensed Rights is expressly made subject to the
-following conditions.
-
-a. Attribution.
-
-1. If You Share the Licensed Material (including in modified
-form), You must:
-
-a. retain the following if it is supplied by the Licensor
-with the Licensed Material:
-
-i. identification of the creator(s) of the Licensed
-Material and any others designated to receive
-attribution, in any reasonable manner requested by
-the Licensor (including by pseudonym if
-designated);
-
-ii. a copyright notice;
-
-iii. a notice that refers to this Public License;
-
-iv. a notice that refers to the disclaimer of
-warranties;
-
-v. a URI or hyperlink to the Licensed Material to the
-extent reasonably practicable;
-
-b. indicate if You modified the Licensed Material and
-retain an indication of any previous modifications; and
-
-c. indicate the Licensed Material is licensed under this
-Public License, and include the text of, or the URI or
-hyperlink to, this Public License.
-
-2. You may satisfy the conditions in Section 3(a)(1) in any
-reasonable manner based on the medium, means, and context in
-which You Share the Licensed Material. For example, it may be
-reasonable to satisfy the conditions by providing a URI or
-hyperlink to a resource that includes the required
-information.
-3. If requested by the Licensor, You must remove any of the
-information required by Section 3(a)(1)(A) to the extent
-reasonably practicable.
-
-b. ShareAlike.
-
-In addition to the conditions in Section 3(a), if You Share
-Adapted Material You produce, the following conditions also apply.
-
-1. The Adapter's License You apply must be a Creative Commons
-license with the same License Elements, this version or
-later, or a BY-NC-SA Compatible License.
-
-2. You must include the text of, or the URI or hyperlink to, the
-Adapter's License You apply. You may satisfy this condition
-in any reasonable manner based on the medium, means, and
-context in which You Share Adapted Material.
-
-3. You may not offer or impose any additional or different terms
-or conditions on, or apply any Effective Technological
-Measures to, Adapted Material that restrict exercise of the
-rights granted under the Adapter's License You apply.
-
-
-Section 4 -- Sui Generis Database Rights.
-
-Where the Licensed Rights include Sui Generis Database Rights that
-apply to Your use of the Licensed Material:
-
-a. for the avoidance of doubt, Section 2(a)(1) grants You the right
-to extract, reuse, reproduce, and Share all or a substantial
-portion of the contents of the database for NonCommercial purposes
-only;
-
-b. if You include all or a substantial portion of the database
-contents in a database in which You have Sui Generis Database
-Rights, then the database in which You have Sui Generis Database
-Rights (but not its individual contents) is Adapted Material,
-including for purposes of Section 3(b); and
-
-c. You must comply with the conditions in Section 3(a) if You Share
-all or a substantial portion of the contents of the database.
-
-For the avoidance of doubt, this Section 4 supplements and does not
-replace Your obligations under this Public License where the Licensed
-Rights include other Copyright and Similar Rights.
-
-
-Section 5 -- Disclaimer of Warranties and Limitation of Liability.
-
-a. UNLESS OTHERWISE SEPARATELY UNDERTAKEN BY THE LICENSOR, TO THE
-EXTENT POSSIBLE, THE LICENSOR OFFERS THE LICENSED MATERIAL AS-IS
-AND AS-AVAILABLE, AND MAKES NO REPRESENTATIONS OR WARRANTIES OF
-ANY KIND CONCERNING THE LICENSED MATERIAL, WHETHER EXPRESS,
-IMPLIED, STATUTORY, OR OTHER. THIS INCLUDES, WITHOUT LIMITATION,
-WARRANTIES OF TITLE, MERCHANTABILITY, FITNESS FOR A PARTICULAR
-PURPOSE, NON-INFRINGEMENT, ABSENCE OF LATENT OR OTHER DEFECTS,
-ACCURACY, OR THE PRESENCE OR ABSENCE OF ERRORS, WHETHER OR NOT
-KNOWN OR DISCOVERABLE. WHERE DISCLAIMERS OF WARRANTIES ARE NOT
-ALLOWED IN FULL OR IN PART, THIS DISCLAIMER MAY NOT APPLY TO YOU.
-
-b. TO THE EXTENT POSSIBLE, IN NO EVENT WILL THE LICENSOR BE LIABLE
-TO YOU ON ANY LEGAL THEORY (INCLUDING, WITHOUT LIMITATION,
-NEGLIGENCE) OR OTHERWISE FOR ANY DIRECT, SPECIAL, INDIRECT,
-INCIDENTAL, CONSEQUENTIAL, PUNITIVE, EXEMPLARY, OR OTHER LOSSES,
-COSTS, EXPENSES, OR DAMAGES ARISING OUT OF THIS PUBLIC LICENSE OR
-USE OF THE LICENSED MATERIAL, EVEN IF THE LICENSOR HAS BEEN
-ADVISED OF THE POSSIBILITY OF SUCH LOSSES, COSTS, EXPENSES, OR
-DAMAGES. WHERE A LIMITATION OF LIABILITY IS NOT ALLOWED IN FULL OR
-IN PART, THIS LIMITATION MAY NOT APPLY TO YOU.
-
-c. The disclaimer of warranties and limitation of liability provided
-above shall be interpreted in a manner that, to the extent
-possible, most closely approximates an absolute disclaimer and
-waiver of all liability.
-
-
-Section 6 -- Term and Termination.
-
-a. This Public License applies for the term of the Copyright and
-Similar Rights licensed here. However, if You fail to comply with
-this Public License, then Your rights under this Public License
-terminate automatically.
-
-b. Where Your right to use the Licensed Material has terminated under
-Section 6(a), it reinstates:
-
-1. automatically as of the date the violation is cured, provided
-it is cured within 30 days of Your discovery of the
-violation; or
-
-2. upon express reinstatement by the Licensor.
-
-For the avoidance of doubt, this Section 6(b) does not affect any
-right the Licensor may have to seek remedies for Your violations
-of this Public License.
-
-c. For the avoidance of doubt, the Licensor may also offer the
-Licensed Material under separate terms or conditions or stop
-distributing the Licensed Material at any time; however, doing so
-will not terminate this Public License.
-
-d. Sections 1, 5, 6, 7, and 8 survive termination of this Public
-License.
-
-
-Section 7 -- Other Terms and Conditions.
-
-a. The Licensor shall not be bound by any additional or different
-terms or conditions communicated by You unless expressly agreed.
-
-b. Any arrangements, understandings, or agreements regarding the
-Licensed Material not stated herein are separate from and
-independent of the terms and conditions of this Public License.
-
-
-Section 8 -- Interpretation.
-
-a. For the avoidance of doubt, this Public License does not, and
-shall not be interpreted to, reduce, limit, restrict, or impose
-conditions on any use of the Licensed Material that could lawfully
-be made without permission under this Public License.
-
-b. To the extent possible, if any provision of this Public License is
-deemed unenforceable, it shall be automatically reformed to the
-minimum extent necessary to make it enforceable. If the provision
-cannot be reformed, it shall be severed from this Public License
-without affecting the enforceability of the remaining terms and
-conditions.
-
-c. No term or condition of this Public License will be waived and no
-failure to comply consented to unless expressly agreed to by the
-Licensor.
-
-d. Nothing in this Public License constitutes or may be interpreted
-as a limitation upon, or waiver of, any privileges and immunities
-that apply to the Licensor or You, including from the legal
-processes of any jurisdiction or authority.
-
-=======================================================================
-
-Creative Commons is not a party to its public
-licenses. Notwithstanding, Creative Commons may elect to apply one of
-its public licenses to material it publishes and in those instances
-will be considered the “Licensor.” The text of the Creative Commons
-public licenses is dedicated to the public domain under the CC0 Public
-Domain Dedication. Except for the limited purpose of indicating that
-material is shared under a Creative Commons public license or as
-otherwise permitted by the Creative Commons policies published at
-creativecommons.org/policies, Creative Commons does not authorize the
-use of the trademark "Creative Commons" or any other trademark or logo
-of Creative Commons without its prior written consent including,
-without limitation, in connection with any unauthorized modifications
-to any of its public licenses or any other arrangements,
-understandings, or agreements concerning use of licensed material. For
-the avoidance of doubt, this paragraph does not form part of the
-public licenses.
-
-Creative Commons may be contacted at creativecommons.org.

sd-webui-stablesr/README.md

DELETED

|

@@ -1,156 +0,0 @@

|

|

| 1 |

-

# StableSR for Stable Diffusion WebUI

|

| 2 |

-

|

| 3 |

-

Licensed under S-Lab License 1.0

|

| 4 |

-

|

| 5 |

-

[![CC BY-NC-SA 4.0][cc-by-nc-sa-shield]][cc-by-nc-sa]

|

| 6 |

-

|

| 7 |

-

English|[中文](README_CN.md)

|

| 8 |

-

|

| 9 |

-

- StableSR is a competitive super-resolution method originally proposed by Jianyi Wang et al.

|

| 10 |

-

- This repository is a migration of the StableSR project to the Automatic1111 WebUI.

|

| 11 |

-

|

| 12 |

-

Relevant Links

|

| 13 |

-

|

| 14 |

-

> Click to view high-quality official examples!

|

| 15 |

-

|

| 16 |

-

- [Project Page](https://iceclear.github.io/projects/stablesr/)

|

| 17 |

-

- [Official Repository](https://github.com/IceClear/StableSR)

|

| 18 |

-

- [Paper on arXiv](https://arxiv.org/abs/2305.07015)

|

| 19 |

-

|

| 20 |

-

> If you find this project useful, please give me & Jianyi Wang a star! ⭐

|

| 21 |

-

|

| 22 |

-

***

|

| 23 |

-

|

| 24 |

-

## Features

|

| 25 |

-

|

| 26 |

-

1. **High-fidelity detailed image upscaling**:

|

| 27 |

-

- Adds fine detail while preserving the facial identity of your characters.

|

| 28 |

-

- Suitable for most images (Realistic or Anime, Photography or AIGC, SD 1.5 or Midjourney images...) [Official Examples](https://iceclear.github.io/projects/stablesr/)

|

| 29 |

-

2. **Less VRAM consumption**

|

| 30 |

-

- I removed the VRAM-expensive modules in the official implementation.

|

| 31 |

-

- The remaining model is much smaller than the ControlNet Tile model and requires less VRAM.

|

| 32 |

-

- When combined with Tiled Diffusion & VAE, you can do 4k image super-resolution with limited VRAM (e.g., < 12 GB).

|

| 33 |

-

> Please be aware that SDP attention may cause out-of-memory (OOM) errors for unknown reasons. You may use xformers instead.

|

| 34 |

-

3. **Wavelet Color Fix**

|

| 35 |

-

- The official StableSR significantly changes the color of the generated image. The problem is even more prominent when upscaling in tiles.

|

| 36 |

-

- I implemented a powerful post-processing technique that effectively matches the color of the upscaled image to the original. See [Wavelet Color Fix Example](https://imgsli.com/MTgwNDg2/).

|

| 37 |

-

|

| 38 |

-

***

|

| 39 |

-

|

| 40 |

-

## Usage

|

| 41 |

-

|

| 42 |

-

### 1. Installation

|

| 43 |

-

|

| 44 |

-

⚪ Method 1: Official Market

|

| 45 |

-

|

| 46 |

-

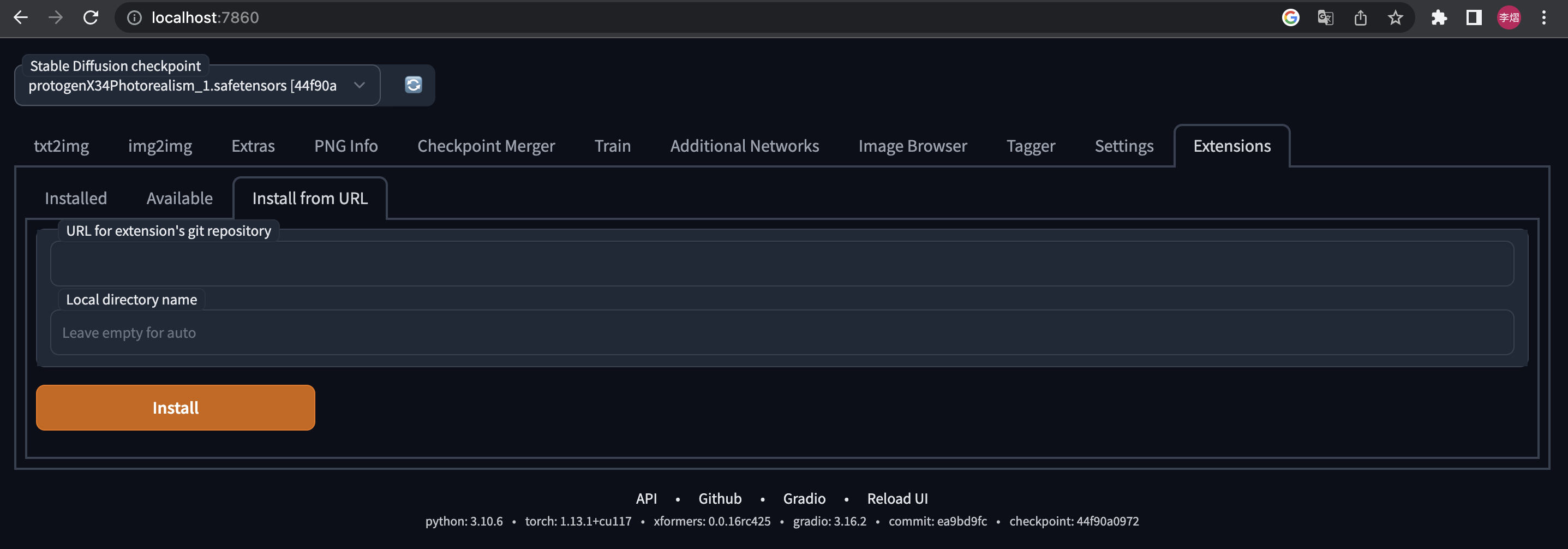

- Open Automatic1111 WebUI -> Click Tab "Extensions" -> Click Tab "Available" -> Find "StableSR" -> Click "Install"

|

| 47 |

-

|

| 48 |

-

⚪ Method 2: URL Install

|

| 49 |

-

|

| 50 |

-

- Open Automatic1111 WebUI -> Click Tab "Extensions" -> Click Tab "Install from URL" -> type in https://github.com/pkuliyi2015/sd-webui-stablesr.git -> Click "Install"

|

| 51 |

-

|

| 52 |

-

|

| 53 |

-

|

| 54 |

-

### 2. Download the main components

|

| 55 |

-

|

| 56 |

-

- You MUST use the Stable Diffusion V2.1 512 **EMA** checkpoint (~5.21GB) from StabilityAI

|

| 57 |

-

- You can download it from [HuggingFace](https://huggingface.co/stabilityai/stable-diffusion-2-1-base)

|

| 58 |

-

- Put it into stable-diffusion-webui/models/Stable-Diffusion/

|

| 59 |

-

|

| 60 |

-

> While it requires a SD2.1 checkpoint, you can still upscale ANY image (even from SD1.5 or NSFW). Your image won't be censored and the output quality won't be affected.

|

| 61 |

-

|

| 62 |

-

- Download the extracted StableSR module

|

| 63 |

-

- Official resources: [HuggingFace](https://huggingface.co/Iceclear/StableSR/resolve/main/weibu_models.zip) (~1.2 GB). Note that this is a zip file containing both the StableSR module and the VQVAE.

|

| 64 |

-

- My resources: <[GoogleDrive](https://drive.google.com/file/d/1tWjkZQhfj07sHDR4r9Ta5Fk4iMp1t3Qw/view?usp=sharing)> <[Baidu Netdisk, extraction code aguq](https://pan.baidu.com/s/1Nq_6ciGgKnTu0W14QcKKWg?pwd=aguq)>

|

| 65 |

-

- Put the StableSR module (~400MB) into your stable-diffusion-webui/extensions/sd-webui-stablesr/models/

|

| 66 |

-

|

| 67 |

-

### 3. Optional components

|

| 68 |

-

|

| 69 |

-

- Install the [Tiled Diffusion & VAE](https://github.com/pkuliyi2015/multidiffusion-upscaler-for-automatic1111) extension

|

| 70 |

-

- The original StableSR easily runs out of memory (OOM) on large images > 512.

|

| 71 |

-

- For better quality and less VRAM usage, we recommend Tiled Diffusion & VAE.

|

| 72 |

-

- Use the Official VQGAN VAE

|

| 73 |

-

- Official resources: See the link in 2.

|

| 74 |

-

- My resources: <[GoogleDrive](https://drive.google.com/file/d/1ARtDMia3_CbwNsGxxGcZ5UP75W4PeIEI/view?usp=share_link)> <[Baidu Netdisk, extraction code 83u9](https://pan.baidu.com/s/1YCYmGBethR9JZ8-eypoIiQ?pwd=83u9)>

|

| 75 |

-

- Put the VQVAE (~700MB) into your stable-diffusion-webui/models/VAE
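The three download locations above can be checked with a short script. This is a minimal sketch, not part of the extension: the function name `find_models`, the root folder name passed to it, and the list of file suffixes are assumptions made for illustration; only the relative directories come from the instructions above.

```python
from pathlib import Path

# Directories named in the instructions above, relative to the WebUI root.
EXPECTED_DIRS = {
    "SD 2.1 512 EMA checkpoint": Path("models/Stable-Diffusion"),
    "StableSR module": Path("extensions/sd-webui-stablesr/models"),
    "VQGAN VAE (optional)": Path("models/VAE"),
}
# Assumed file suffixes; adjust to whatever you actually downloaded.
MODEL_SUFFIXES = {".ckpt", ".safetensors", ".pt"}


def find_models(webui_root):
    """Return {component: [model file names]} for each expected directory."""
    root = Path(webui_root)
    found = {}
    for component, rel_dir in EXPECTED_DIRS.items():
        folder = root / rel_dir
        files = []
        if folder.is_dir():
            files = sorted(
                p.name for p in folder.iterdir() if p.suffix in MODEL_SUFFIXES
            )
        found[component] = files
    return found


if __name__ == "__main__":
    # "stable-diffusion-webui" is a placeholder for your own install path.
    for component, files in find_models("stable-diffusion-webui").items():
        status = ", ".join(files) if files else "nothing found"
        print(f"{component}: {status}")
```

An empty list for the first two components suggests a file was placed in the wrong folder; an empty list for the VAE is fine if you skipped the optional step.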

|

| 76 |

-

|

| 77 |

-

### 4. Extension Usage

|

| 78 |

-

|

| 79 |

-

- At the top of the WebUI, select the v2-1_512-ema-pruned checkpoint you downloaded.

|

| 80 |

-

- Switch to the img2img tab. Find the "Scripts" dropdown at the bottom of the page.

|

| 81 |

-

- Select the StableSR script.

|

| 82 |

-

- Click the refresh button and select the StableSR checkpoint you have downloaded.

|

| 83 |

-

- Choose a scale factor.

|

| 84 |

-

- Upload your image and start generation (can work without prompts).

|

| 85 |

-

- The Euler a sampler is recommended, with CFG Scale <= 2 and Steps >= 20.

|

| 86 |

-

- For output image size > 512, we recommend using Tiled Diffusion & VAE; otherwise, the image quality may not be ideal and the VRAM usage will be very high.

|

| 87 |

-

- Here are the official Tiled Diffusion settings:

|

| 88 |

-

- Method = Mixture of Diffusers

|

| 89 |

-

- Latent tile size = 64, Latent tile overlap = 32

|

| 90 |

-

- Latent tile batch size as large as possible before Out of Memory.

|

| 91 |

-

- Upscaler MUST be None (will not upscale here; instead, upscale in StableSR).

|

| 92 |

-

- The following figure shows the recommended settings for 24GB VRAM.

|

| 93 |

-

- For a 6GB device, **just change Tiled Diffusion Latent tile batch size to 1, Tiled VAE Encoder Tile Size to 1024, Decoder Tile Size to 128.**

|

| 94 |

-

- SDP attention optimization may lead to OOM. Please use xformers in that case.

|

| 95 |

-

- You DON'T need to change other settings in Tiled Diffusion & Tiled VAE unless you have a very deep understanding. **These params are almost optimal for StableSR.**
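To get a feel for what a latent tile size of 64 with an overlap of 32 means for a large output, the sketch below counts how many tiles cover an image. It assumes the usual factor-of-8 downsampling of the Stable Diffusion VAE and a simple sliding-window layout; the helper names `tile_starts` and `count_tiles` are hypothetical, and the Tiled Diffusion extension may place its tiles differently.

```python
import math


def tile_starts(length, tile=64, overlap=32):
    """Start offsets of tiles covering `length` latent cells along one axis."""
    if length <= tile:
        return [0]
    stride = tile - overlap
    count = math.ceil((length - overlap) / stride)
    # Clamp the last tile so that it ends exactly at the border.
    return [min(i * stride, length - tile) for i in range(count)]


def count_tiles(width_px, height_px, tile=64, overlap=32, vae_factor=8):
    """Number of latent tiles for an output image of the given pixel size."""
    cols = tile_starts(width_px // vae_factor, tile, overlap)
    rows = tile_starts(height_px // vae_factor, tile, overlap)
    return len(cols) * len(rows)


if __name__ == "__main__":
    for size in (512, 1024, 2048, 4096):
        print(size, count_tiles(size, size))
```

Under these assumptions a 512 x 512 output fits in one tile, while a 2048 x 2048 output needs 7 x 7 = 49 tiles per sampling step. That is why the latent tile batch size has such a direct effect on speed and VRAM use.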

|

| 96 |

-

|

| 97 |

-

|

| 98 |

-

|

| 99 |

-

|

| 100 |

-

### 5. Options Explained

|

| 101 |

-

|

| 102 |

-

- What is "Pure Noise"?

|

| 103 |

-

- Pure Noise refers to starting from a fully random noise tensor instead of your image. **This is the default behavior in the StableSR paper.**

|

| 104 |

-

- When enabling it, the script ignores your denoising strength and gives you much more detailed images, but also changes the color & sharpness significantly

|

| 105 |

-

- When disabling it, the script starts by adding some noise to your image. The result will not be fully detailed, even if you set denoising strength = 1 (but it may be aesthetically good). See [Comparison](https://imgsli.com/MTgwMTMx).

|

| 106 |

-

- If you disable Pure Noise, we recommend denoising strength=1
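The difference between the two starting points can be sketched with the standard DDPM forward-noising formula, x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε. This is an illustration only: the function `initial_latent` and its parameters are hypothetical, and the WebUI samplers use their own noise parameterization rather than this exact code.

```python
import numpy as np


def initial_latent(image_latent, alpha_bar_t, pure_noise, rng):
    """Return the latent that sampling starts from.

    image_latent: encoded input image, any array shape.
    alpha_bar_t:  cumulative signal coefficient at the starting timestep
                  (close to 0 at the noisiest step, 1 means no noise).
    pure_noise:   if True, ignore the image entirely.
    """
    noise = rng.standard_normal(image_latent.shape)
    if pure_noise:
        # Pure Noise: the start is fully random, no trace of the image.
        return noise
    # Otherwise: mix the image with noise (DDPM forward process).
    return np.sqrt(alpha_bar_t) * image_latent + np.sqrt(1.0 - alpha_bar_t) * noise


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    latent = np.ones((4, 64, 64))
    for flag in (True, False):
        start = initial_latent(latent, alpha_bar_t=0.25, pure_noise=flag, rng=rng)
        print(f"pure_noise={flag}: mean of starting latent = {start.mean():.3f}")
```

In the mixed case the starting latent keeps a scaled copy of the image whenever `alpha_bar_t` is above zero. One plausible reading of the behavior described above is that this residual image signal is what limits the added detail when Pure Noise is off.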

|

| 107 |

-

- What is "Color Fix"?

|

| 108 |

-

- This is to mitigate the color shift problem from StableSR and the tiling process.

|

| 109 |

-

- AdaIN simply adjusts the color statistics between the original and the outcome images. This is the official algorithm but ineffective in many cases.

|

| 110 |

-

- Wavelet decomposes the original and the outcome images into low and high frequencies, and then replaces the outcome image's low-frequency part (colors) with the original image's. This is very powerful for uneven color shifting. The algorithm is from GIMP and Krita, and it takes several seconds for each image.

|

| 111 |

-

- When enabling color fix, the original image will also show up in your preview window, but will NOT be saved automatically.
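The two color-fix ideas can be sketched in NumPy as follows. This is a simplified illustration under stated assumptions, not the extension's code: a separable box blur stands in for the wavelet low-pass filter, both images are assumed to be float arrays of identical shape (H, W, C) with the original already resized to the output size, and the function names are hypothetical.

```python
import numpy as np


def adain_color_fix(result, source, eps=1e-5):
    """Match the per-channel mean and std of `result` to those of `source`."""
    r_mean, r_std = result.mean(axis=(0, 1)), result.std(axis=(0, 1)) + eps
    s_mean, s_std = source.mean(axis=(0, 1)), source.std(axis=(0, 1)) + eps
    return (result - r_mean) / r_std * s_std + s_mean


def box_blur(img, radius):
    """Separable box blur with edge padding (stand-in for a wavelet low-pass)."""
    size = 2 * radius + 1
    kernel = np.ones(size) / size
    padded = np.pad(img, ((radius, radius), (radius, radius), (0, 0)), mode="edge")
    # Blur along the height axis, then along the width axis.
    out = np.apply_along_axis(lambda v: np.convolve(v, kernel, mode="valid"), 0, padded)
    out = np.apply_along_axis(lambda v: np.convolve(v, kernel, mode="valid"), 1, out)
    return out


def lowfreq_color_fix(result, source, radius=8):
    """Keep the high frequencies of `result`, take low frequencies from `source`."""
    high = result - box_blur(result, radius)
    low = box_blur(source, radius)
    return high + low


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    source = rng.random((32, 32, 3))
    shifted = source + 0.2  # simulate a uniform color shift
    print(np.abs(lowfreq_color_fix(shifted, source) - source).max())
    print(np.abs(adain_color_fix(shifted, source) - source).max())
```

A uniform shift like the one simulated here is undone by both functions. The low-frequency swap additionally handles shifts that vary across the image, which global statistics matching cannot correct.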

|

| 112 |

-

|

| 113 |

-

### 6. Important Notice

|

| 114 |

-

|

| 115 |

-

> Why are my results different from the official examples?

|

| 116 |

-

|

| 117 |

-

- This is neither your fault nor ours.

|

| 118 |

-

- If installed correctly, this extension has the same UNet model weights as the official StableSR.

|

| 119 |

-

- If you install the optional VQVAE, the whole model weights will be the same as the official model with fusion weights=0.

|

| 120 |

-

- However, your results will **not be as good as** the official results, because:

|

| 121 |

-

- Sampler Difference:

|

| 122 |

-

- The official repo does 100 or 200 steps of legacy DDPM sampling with a custom timestep scheduler, and samples without negative prompts.

|

| 123 |

-

- However, WebUI doesn't offer such a sampler, and it must sample with negative prompts. **This is the main difference.**

|

| 124 |

-

- VQVAE Decoder Difference:

|

| 125 |

-

- The official VQVAE Decoder takes some Encoder features as input.

|

| 126 |

-

- However, in practice, I found these features are astonishingly huge for large images. (>10G for 4k images even in float16!)

|

| 127 |

-

- Hence, **I removed the CFW component in the VAE Decoder**. As this leads to inferior fidelity in details, I will try to add it back later as an option.

|

| 128 |

-

|

| 129 |

-

***

|

| 130 |

-

## License

|

| 131 |

-

|

| 132 |

-

This project is licensed under:

|

| 133 |

-

|

| 134 |

-

- S-Lab License 1.0.

|

| 135 |

-

- [Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License][cc-by-nc-sa], due to the use of the NVIDIA SPADE module.

|

| 136 |

-

|

| 137 |

-

[![CC BY-NC-SA 4.0][cc-by-nc-sa-image]][cc-by-nc-sa]

|

| 138 |

-

|

| 139 |

-

[cc-by-nc-sa]: http://creativecommons.org/licenses/by-nc-sa/4.0/

|

| 140 |

-

[cc-by-nc-sa-image]: https://licensebuttons.net/l/by-nc-sa/4.0/88x31.png

|

| 141 |

-

[cc-by-nc-sa-shield]: https://img.shields.io/badge/License-CC%20BY--NC--SA%204.0-lightgrey.svg

|

| 142 |

-

|

| 143 |

-

### Disclaimer

|

| 144 |

-

|

| 145 |

-

- All code in this extension is for research purposes only.

|

| 146 |

-

- The commercial use of the code and checkpoint is **strictly prohibited**.

|

| 147 |

-

|

| 148 |

-

### Important Notice for Outcome Images

|

| 149 |

-

|

| 150 |

-

- Please note that the CC BY-NC-SA 4.0 license in the NVIDIA SPADE module also prohibits the commercial use of outcome images.

|

| 151 |

-

- Jianyi Wang may change the SPADE module to a commercial-friendly one but he is busy.

|

| 152 |

-

- If you wish to *speed up* his process for commercial purposes, please contact him through email: iceclearwjy@gmail.com

|

| 153 |

-

|

| 154 |

-

## Acknowledgments

|

| 155 |

-

|

| 156 |

-

I would like to thank Jianyi Wang et al. for the original StableSR method.


sd-webui-stablesr/README_CN.md

DELETED

|

@@ -1,150 +0,0 @@

|

|

| 1 |

-

# StableSR - Stable Diffusion WebUI

|

| 2 |

-

|

| 3 |

-

Licensed under S-Lab License 1.0

|

| 4 |

-

|

| 5 |

-

[![CC BY-NC-SA 4.0][cc-by-nc-sa-shield]][cc-by-nc-sa]

|

| 6 |

-

|

| 7 |

-

[English](README.md) | 中文

|

| 8 |

-

|

| 9 |

-

- StableSR is a powerful super-resolution project proposed by Jianyi Wang et al.

|

| 10 |

-

- This repository migrates the StableSR project to the Automatic1111 WebUI.

|

| 11 |

-

|

| 12 |

-

Relevant Links

|

| 13 |

-

|

| 14 |

-

> Click to view many official examples!

|

| 15 |

-

|

| 16 |

-

- [Project Page](https://iceclear.github.io/projects/stablesr/)

|

| 17 |

-

- [Official Repository](https://github.com/IceClear/StableSR)

|

| 18 |

-

- [Paper](https://arxiv.org/abs/2305.07015)

|

| 19 |

-

|

| 20 |

-

> If you find this project helpful, please star my repository and Jianyi Wang's! ⭐

|

| 21 |

-

***

|

| 22 |

-

|

| 23 |

-

## Features

|

| 24 |

-

|

| 25 |

-

1. **High-fidelity image upscaling**:

|

| 26 |

-

- Adds very fine details and textures without altering the characters' faces.

|

| 27 |

-

- Suitable for most images (realistic or anime, photography or AIGC, SD 1.5 or Midjourney images...)

|

| 28 |

-

2. **Less VRAM consumption**:

|

| 29 |

-

- I removed the VRAM-expensive modules in the official implementation.

|

| 30 |

-

- The remaining model is much smaller than the ControlNet Tile model and requires far less VRAM.

|

| 31 |

-

- When combined with Tiled Diffusion & VAE, you can upscale 4k images with limited VRAM (e.g., < 12 GB).

|

| 32 |

-

> Note that sdp may run out of VRAM for unknown reasons. Using xformers is recommended.

|

| 33 |

-

3. **Wavelet Color Fix**:

|

| 34 |

-

- The official StableSR implementation has a noticeable color shift, which becomes even more obvious when upscaling in tiles.

|

| 35 |

-

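- I implemented a powerful post-processing technique that effectively matches the color of the upscaled image to the original. See the [Wavelet Color Fix Example](https://imgsli.com/MTgwNDg2/).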

|

| 36 |

-

|

| 37 |

-

***

|

| 38 |

-

## Usage

|

| 39 |

-

|

| 40 |

-

### 1. Installation

|

| 41 |

-

|

| 42 |

-

⚪ Method 1: Official Market

|

| 43 |

-

|

| 44 |

-

- Open Automatic1111 WebUI -> Click the "Extensions" tab -> Click the "Available" tab -> Find "StableSR" -> Click "Install"

|

| 45 |

-

|

| 46 |

-

⚪ Method 2: URL Install

|

| 47 |

-

|

| 48 |

-

- Open Automatic1111 WebUI -> Click the "Extensions" tab -> Click the "Install from URL" tab -> Enter https://github.com/pkuliyi2015/sd-webui-stablesr.git -> Click "Install"

|

| 49 |

-

|

| 50 |

-

|

| 51 |

-

|

| 52 |

-

### 2. Required models

|

| 53 |

-

|

| 54 |

-

- You must use the official Stable Diffusion V2.1 512 **EMA** model (~5.21GB) from StabilityAI

|

| 55 |

-

- You can download it from [HuggingFace](https://huggingface.co/stabilityai/stable-diffusion-2-1-base)

|

| 56 |

-

- Put it into the stable-diffusion-webui/models/Stable-Diffusion/ folder

|

| 57 |

-

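> Although StableSR requires SD2.1 model weights, you can still upscale images from SD1.5. NSFW images will not be distorted by the model, and the output quality will not be affected.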

|

| 58 |

-

- Download the StableSR module

|

| 59 |

-

- Official resources: [HuggingFace](https://huggingface.co/Iceclear/StableSR/resolve/main/weibu_models.zip) (~1.2 GB). Note that this is a zip file containing both the StableSR module and the optional VQVAE component.

|

| 60 |

-

- My resources: <[GoogleDrive](https://drive.google.com/file/d/1tWjkZQhfj07sHDR4r9Ta5Fk4iMp1t3Qw/view?usp=sharing)> <[Baidu Netdisk, extraction code aguq](https://pan.baidu.com/s/1Nq_6ciGgKnTu0W14QcKKWg?pwd=aguq)>

|

| 61 |

-

- Put the StableSR module (~400 MB) into the stable-diffusion-webui/extensions/sd-webui-stablesr/models/ folder

|

| 62 |

-

|

| 63 |

-

### 3. Optional components

|

| 64 |

-

|

| 65 |

-

- Install the [Tiled Diffusion & VAE](https://github.com/pkuliyi2015/multidiffusion-upscaler-for-automatic1111) extension

|

| 66 |

-

- The original StableSR easily runs out of memory (OOM) on large images > 512.

|

| 67 |

-

- For better quality and less VRAM usage, we recommend Tiled Diffusion & VAE.

|

| 68 |

-

- Use the official VQGAN VAE

|

| 69 |

-

- Official resources: same link as in 2.

|

| 70 |

-

- My resources: <[GoogleDrive](https://drive.google.com/file/d/1ARtDMia3_CbwNsGxxGcZ5UP75W4PeIEI/view?usp=share_link)> <[Baidu Netdisk, extraction code 83u9](https://pan.baidu.com/s/1YCYmGBethR9JZ8-eypoIiQ?pwd=83u9)>

|

| 71 |

-

- Put the VQVAE (~750MB) into your stable-diffusion-webui/models/VAE

|

| 72 |

-

|

| 73 |

-

### 4. Extension Usage

|

| 74 |

-

|

| 75 |

-

- At the top of the WebUI, select the v2-1_512-ema-pruned model you downloaded.

|

| 76 |

-

- Switch to the img2img tab. Find the "Scripts" dropdown at the bottom of the page.

|

| 77 |

-

- Select the StableSR script.

|

| 78 |

-

- Click the refresh button and select the StableSR checkpoint you downloaded.

|

| 79 |

-

- Choose a scale factor.

|

| 80 |

-

- Upload your image and start generation (works without prompts).

|

| 81 |

-

- The Euler a sampler is recommended, with CFG scale <= 2 and steps >= 20.

|

| 82 |

-

- If the output image size is > 512, we recommend using Tiled Diffusion & VAE; otherwise, the image quality may not be ideal and VRAM usage will be high.

|

| 83 |

-

- Here are the officially recommended Tiled Diffusion settings.

|

| 84 |

-

- Method = Mixture of Diffusers

|

| 85 |

-

- Latent tile size = 64, Latent tile overlap = 32

|

| 86 |

-

- Make the tile batch size as large as possible, stopping just short of running out of VRAM.

|

| 87 |

-

- Upscaler **MUST** be None.

|

| 88 |

-

- The following figure shows the recommended settings for 24GB VRAM.

|

| 89 |

-

- For a 4GB device, **just change the Tiled Diffusion Latent tile batch size to 1, the Tiled VAE Encoder Tile Size to 1024, and the Decoder Tile Size to 128.**

|

| 90 |

-

- SDP attention optimization may lead to OOM (out of memory), so xformers is recommended.

|

| 91 |

-

- Unless you have a deep understanding, **DON'T** change the other settings in Tiled Diffusion & Tiled VAE. **These parameters are almost optimal for StableSR.**

|

| 92 |

-

|

| 93 |

-

|

| 94 |

-

### 5. Options Explained

|

| 95 |

-

|

| 96 |

-

- What is "Pure Noise"?

|

| 97 |

-

- Pure Noise means starting from a fully random noise tensor instead of from your image. **This is the default behavior in the StableSR paper.**

|

| 98 |

-

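- When this option is enabled, the script ignores your denoising strength setting. The output will be more detailed, but the color and sharpness will also change significantly.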

|

| 99 |

-

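- When this option is disabled, the script starts by adding some noise to your image. Even if you set the denoising strength to 1, the result will not be as detailed (but may look more harmonious). See the [Comparison](https://imgsli.com/MTgwMTMx).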

|

| 100 |

-

- If you disable Pure Noise, a denoising strength of 1 is recommended.

|

| 101 |

-

- What is "Color Fix"?

|

| 102 |

-

- It mitigates the color shift introduced by StableSR and the tiling process.

|

| 103 |

-

- AdaIN simply matches the color statistics of the original and result images. This is the official StableSR algorithm, but it often works poorly.

|

| 104 |

-

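- Wavelet decomposes the original and result images into low and high frequencies, then replaces the result image's low-frequency information (colors) with the original image's. This is very powerful for uneven color shifts. The algorithm comes from GIMP and Krita and takes several seconds per image.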

|

| 105 |

-

- When color fix is enabled, the original image also appears in your preview window but is not saved automatically.

|

| 106 |

-

|

| 107 |

-

### 6. Important Questions

|

| 108 |

-

|

| 109 |

-

> Why are my results different from the official examples?

|

| 110 |

-

|

| 111 |

-

- This is neither your fault nor ours.

|

| 112 |

-

- If installed correctly, this extension has the same UNet model weights as StableSR.

|

| 113 |

-

- If you install the optional VQVAE, the whole model weights will be the same as the official model with fusion weight 0.

|

| 114 |

-

- However, your results will **not be as good as** the official results, because:

|

| 115 |

-

- Sampler difference:

|

| 116 |

-

- The official repository runs 100 or 200 steps of legacy DDPM sampling with a custom timestep scheduler, and samples without negative prompts.

|

| 117 |

-

- However, WebUI does not offer such a sampler and must sample with negative prompts. **This is the main difference.**

|

| 118 |

-

- VQVAE decoder difference:

|

| 119 |

-

- The official VQVAE decoder takes some encoder features as input.

|

| 120 |

-

- However, in practice, I found that these features are very large for large images. (>10G for 4k images, even in float16!)

|

| 121 |

-

- Hence, **I removed the CFW component from the VAE decoder**. As this leads to lower fidelity in details, I will try to add it back as an option.

|

| 122 |

-

|

| 123 |

-

***

|

| 124 |

-

## License

|

| 125 |

-

|

| 126 |

-

This project is licensed under:

|

| 127 |

-

|

| 128 |

-

- S-Lab License 1.0.

|

| 129 |

-

- [Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License][cc-by-nc-sa], due to the use of the NVIDIA SPADE module.

|

| 130 |

-

|

| 131 |

-

[![CC BY-NC-SA 4.0][cc-by-nc-sa-image]][cc-by-nc-sa]

|

| 132 |

-

|

| 133 |

-

[cc-by-nc-sa]: http://creativecommons.org/licenses/by-nc-sa/4.0/