diff --git a/InternVL/.github/CONTRIBUTING.md b/InternVL/.github/CONTRIBUTING.md

new file mode 100644

index 0000000000000000000000000000000000000000..19668fe9e40ae1b8d91f14375ba7428c39c19edb

--- /dev/null

+++ b/InternVL/.github/CONTRIBUTING.md

@@ -0,0 +1,234 @@

+## Contributing to InternLM

+

+Welcome to the InternLM community. All kinds of contributions are welcome, including but not limited to the following.

+

+**Fix bug**

+

+You can directly post a Pull Request to fix typos in code or documents.

+

+The steps to fix a bug in the code implementation are as follows.

+

+1. If the modification involves significant changes, you should first create an issue that describes the error and how to trigger the bug. Other developers will discuss it with you and propose a proper solution.

+

+2. Post a pull request after fixing the bug and adding the corresponding unit test.

+

+**New Feature or Enhancement**

+

+1. If the modification involves significant changes, you should create an issue to discuss it with our developers and agree on a proper design.

+2. Post a Pull Request after implementing the new feature or enhancement and adding the corresponding unit test.

+

+**Document**

+

+You can directly post a pull request to fix documents. If you want to add a document, you should first create an issue to check if it is reasonable.

+

+### Pull Request Workflow

+

+If you're not familiar with Pull Requests, don't worry! The following guidance explains how to create one step by step. If you want to dive deeper into the Pull Request development model, you can refer to the [official documents](https://docs.github.com/en/github/collaborating-with-issues-and-pull-requests/about-pull-requests).

+

+#### 1. Fork and clone

+

+If you are posting a pull request for the first time, you should fork the official repository by clicking the **Fork** button in the top right corner of the GitHub page. The forked repository will then appear under your GitHub profile.

+

+Then, clone the forked repository to your local machine:

+

+```shell

+git clone git@github.com:{username}/lmdeploy.git

+```

+

+After that, add the official repository as the upstream repository:

+

+```bash

+git remote add upstream git@github.com:InternLM/lmdeploy.git

+```

+

+Check whether the remote repository has been added successfully by running `git remote -v`:

+

+```bash

+origin git@github.com:{username}/lmdeploy.git (fetch)

+origin git@github.com:{username}/lmdeploy.git (push)

+upstream git@github.com:InternLM/lmdeploy.git (fetch)

+upstream git@github.com:InternLM/lmdeploy.git (push)

+```

+

+> Here's a brief introduction to origin and upstream. When we use "git clone", an "origin" remote is created by default, pointing to the repository we cloned from. "upstream" is a remote we add ourselves to point to the target repository. Of course, if you don't like the name "upstream", you can name it as you wish. Usually, we push code to "origin". If the pushed code conflicts with the latest code in the official repository ("upstream"), we should pull the latest code from upstream to resolve the conflicts, and then push to "origin" again. The posted Pull Request will be updated automatically.

+
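As a small sketch of the remote check described above, the helper below reads the output of `git remote -v` on standard input and reports whether both an `origin` and an `upstream` remote are listed. The function name `check_remotes` is hypothetical and is not part of the repository.

```shell
# Hypothetical helper (not part of the repository): reads `git remote -v`
# output on stdin and succeeds only if both "origin" and "upstream" appear.
check_remotes() {
    # The first column of `git remote -v` is the remote name.
    remotes=$(awk '{print $1}' | sort -u)
    for name in origin upstream; do
        if ! printf '%s\n' "$remotes" | grep -qx "$name"; then
            echo "missing remote: $name"
            return 1
        fi
    done
    echo "remotes OK"
}
```

Inside a clone, it could be used as `git remote -v | check_remotes`.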

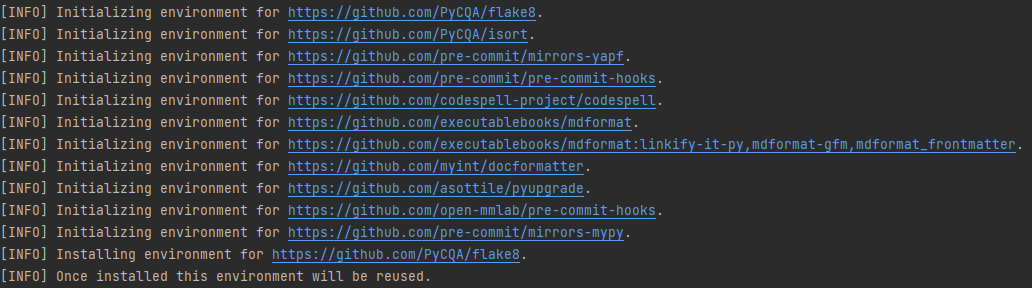

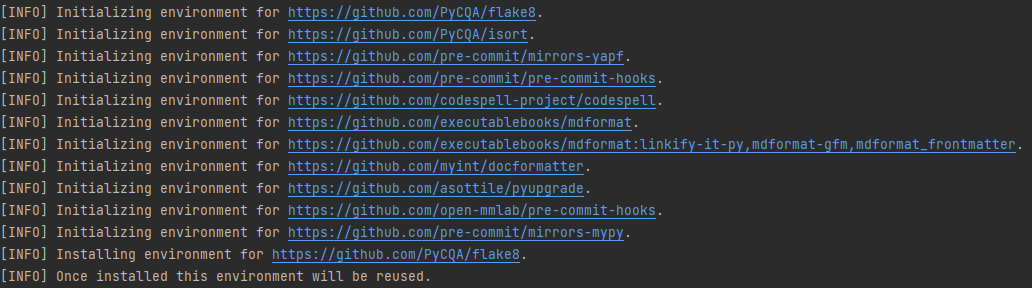

+#### 2. Configure pre-commit

+

+You should configure [pre-commit](https://pre-commit.com/#intro) in the local development environment to make sure the code style matches that of InternLM. **Note**: The following code should be executed under the lmdeploy directory.

+

+```shell

+pip install -U pre-commit

+pre-commit install

+```

+

+Check that pre-commit is configured successfully, and install the hooks defined in `.pre-commit-config.yaml`.

+

+```shell

+pre-commit run --all-files

+```

+


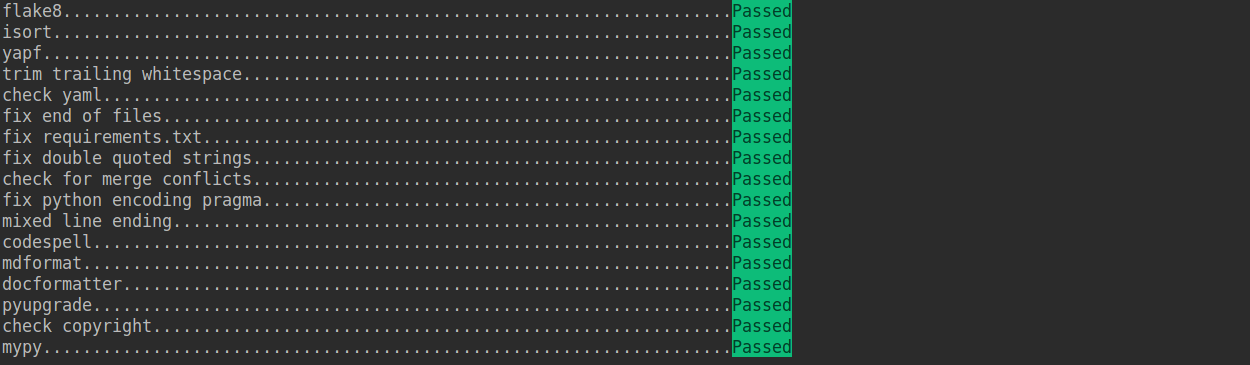

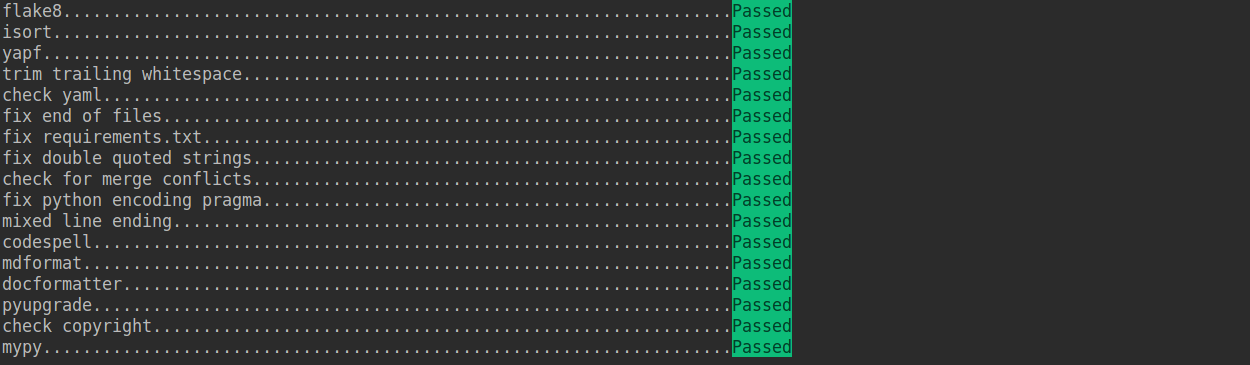


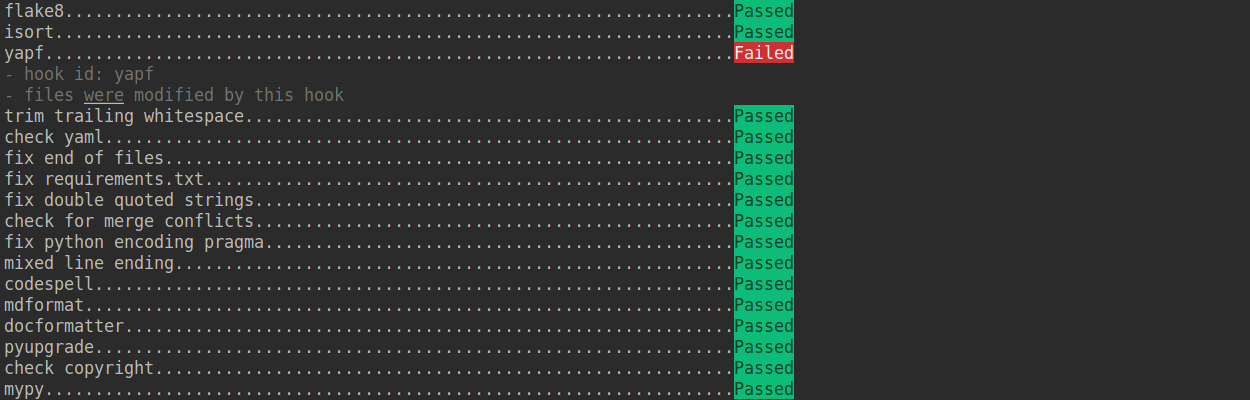


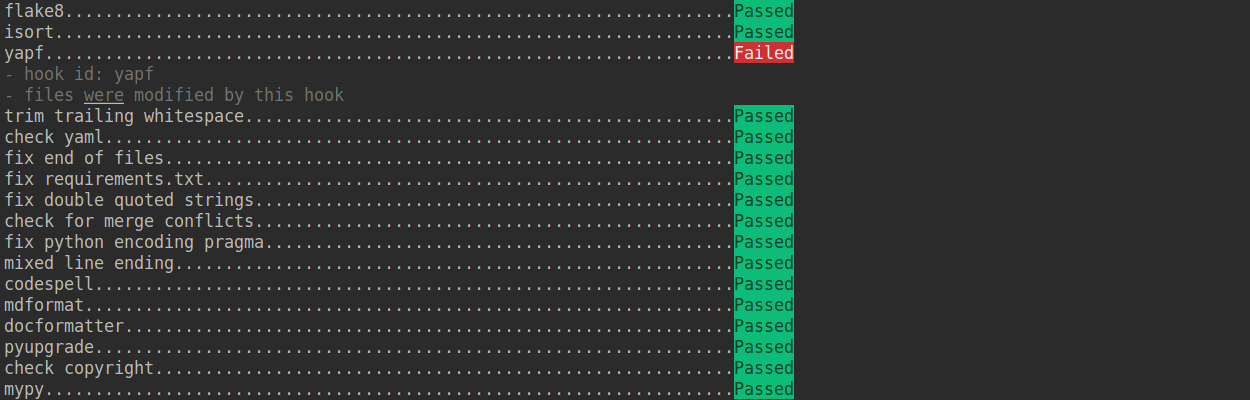

+If the installation process is interrupted, you can re-run `pre-commit run ...` to continue the installation.

+

+If the code does not conform to the code style specification, pre-commit will raise a warning and fix some of the errors automatically.

+


+If we want to commit our code while bypassing the pre-commit hook, we can use the `--no-verify` option (**only for temporary commits**).

+

+```shell

+git commit -m "xxx" --no-verify

+```

+

+#### 3. Create a development branch

+

+After configuring pre-commit, we should create a branch based on the master branch to develop the new feature or fix the bug. The suggested branch name format is `username/pr_name`:

+

+```shell

+git checkout -b yhc/refactor_contributing_doc

+```

+
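To illustrate the `username/pr_name` convention, here is a minimal sketch of a helper that composes such a branch name. The function `branch_name` and its normalization rules (lowercasing, and turning spaces and dashes into underscores) are assumptions for illustration, not project requirements.

```shell
# Hypothetical helper: builds a branch name in the `username/pr_name` form.
# Assumed normalization: lowercase the topic, and replace runs of spaces
# or dashes with a single underscore.
branch_name() {
    user=$1
    shift
    topic=$(printf '%s' "$*" | tr '[:upper:]' '[:lower:]' | sed -e 's/[ -][ -]*/_/g')
    printf '%s/%s\n' "$user" "$topic"
}
```

It could then be combined with the command above, for example `git checkout -b "$(branch_name yhc refactor contributing doc)"`.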

+In subsequent development, if the master branch of the local repository falls behind the master branch of "upstream", we need to pull from upstream to synchronize, and then run the branch creation command above:

+

+```shell

+git pull upstream master

+```

+

+#### 4. Commit the code and pass the unit test

+

+- lmdeploy introduces mypy to do static type checking to increase the robustness of the code. Therefore, we need to add Type Hints to our code and pass the mypy check. If you are not familiar with Type Hints, you can refer to [this tutorial](https://docs.python.org/3/library/typing.html).

+

+- The committed code should pass the unit tests

+

+ ```shell

+ # Pass all unit tests

+ pytest tests

+

+ # Pass the unit test of runner

+ pytest tests/test_runner/test_runner.py

+ ```

+

+ If the unit tests fail because of missing dependencies, you can install them by referring to the [guidance](#unit-test)

+

+- If the documents are modified/added, we should check the rendering result referring to [guidance](#document-rendering)

+

+#### 5. Push the code to remote

+

+We can push the local commits to the remote once they pass the unit tests and the pre-commit checks. You can associate the local branch with the remote branch by adding the `-u` option.

+

+```shell

+git push -u origin {branch_name}

+```

+

+This will allow you to use the `git push` command to push code directly next time, without having to specify a branch or the remote repository.

+

+#### 6. Create a Pull Request

+

+(1) Create a pull request in GitHub's Pull request interface

+


+(2) Modify the PR description according to the guidelines so that other developers can better understand your changes

+


+Find more details about Pull Request description in [pull request guidelines](#pr-specs).

+

+**note**

+

+(a) The Pull Request description should contain the reason for the change, the content of the change, and the impact of the change, and be associated with the relevant Issue (see [documentation](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue))

+

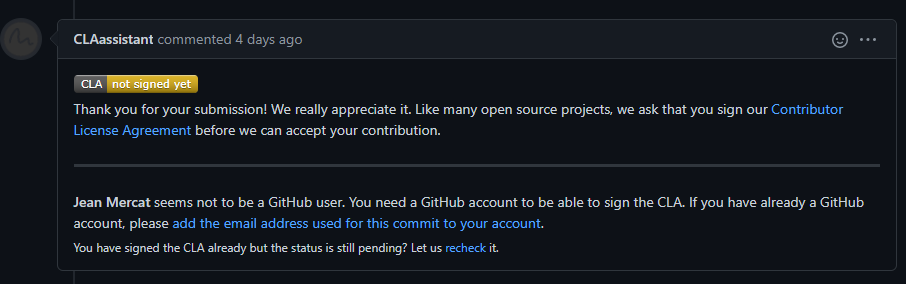

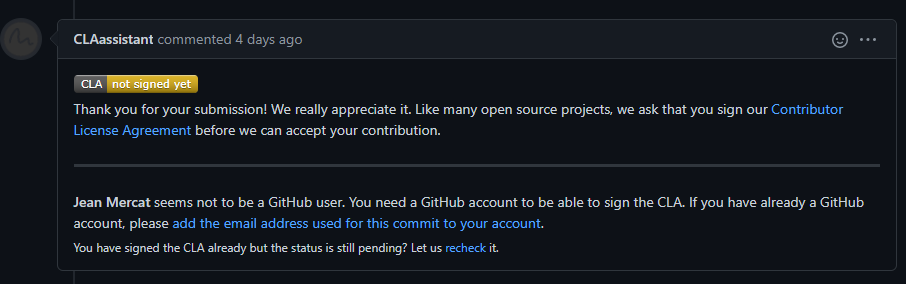

+(b) If it is your first contribution, please sign the CLA

+


+(c) Check whether the Pull Request passes the CI

+


+InternLM will run unit tests for the posted Pull Request on different platforms (Linux, Windows, Mac), based on different versions of Python, PyTorch, and CUDA, to make sure the code is correct. We can see the specific test information by clicking `Details` in the above image so that we can modify the code.

+

+(3) If the Pull Request passes the CI, then you can wait for the review from other developers. You'll modify the code based on the reviewer's comments, and repeat the steps [4](#4-commit-the-code-and-pass-the-unit-test)-[5](#5-push-the-code-to-remote) until all reviewers approve it. Then, we will merge it ASAP.

+


+#### 7. Resolve conflicts

+

+If your local branch conflicts with the latest master branch of "upstream", you'll need to resolve the conflicts. There are two ways to do this:

+

+```shell

+git fetch --all --prune

+git rebase upstream/master

+```

+

+or

+

+```shell

+git fetch --all --prune

+git merge upstream/master

+```

+

+If you are comfortable handling conflicts, you can use `rebase` to resolve them, as this keeps your commit log tidy. If you are not familiar with `rebase`, you can use `merge` instead.

+

+### Guidance

+

+#### Document rendering

+

+If the documents are modified/added, we should check the rendering result. We could install the dependencies and run the following command to render the documents and check the results:

+

+```shell

+pip install -r requirements/docs.txt

+cd docs/zh_cn/

+# or docs/en

+make html

+# check file in ./docs/zh_cn/_build/html/index.html

+```

+

+### Code style

+

+#### Python

+

+We adopt [PEP8](https://www.python.org/dev/peps/pep-0008/) as the preferred code style.

+

+We use the following tools for linting and formatting:

+

+- [flake8](https://github.com/PyCQA/flake8): A wrapper around some linter tools.

+- [isort](https://github.com/timothycrosley/isort): A Python utility to sort imports.

+- [yapf](https://github.com/google/yapf): A formatter for Python files.

+- [codespell](https://github.com/codespell-project/codespell): A Python utility to fix common misspellings in text files.

+- [mdformat](https://github.com/executablebooks/mdformat): Mdformat is an opinionated Markdown formatter that can be used to enforce a consistent style in Markdown files.

+- [docformatter](https://github.com/myint/docformatter): A formatter to format docstring.

+

+We use a [pre-commit hook](https://pre-commit.com/) that checks and formats `flake8`, `yapf`, `isort`, `trailing whitespaces`, and `markdown files`,

+fixes `end-of-files`, `double-quoted-strings`, `python-encoding-pragma`, and `mixed-line-ending`, and sorts `requirements.txt` automatically on every commit.

+The config for the pre-commit hook is stored in [.pre-commit-config](../.pre-commit-config.yaml).

+

+#### C++ and CUDA

+

+The clang-format config is stored in [.clang-format](../.clang-format). We recommend using clang-format version **11**. Please do not use older or newer versions, as they produce different formatting results, which can cause the [lint](https://github.com/InternLM/lmdeploy/blob/main/.github/workflows/lint.yml#L25) check to fail.

+

+### PR Specs

+

+1. Use [pre-commit](https://pre-commit.com) hook to avoid issues of code style

+

+2. One short-lived branch should correspond to only one PR

+

+3. Make one focused change per PR. Avoid large PRs

+

+ - Bad: Support Faster R-CNN

+ - Acceptable: Add a box head to Faster R-CNN

+ - Good: Add a parameter to box head to support custom conv-layer number

+

+4. Provide clear and meaningful commit messages

+

+5. Provide clear and meaningful PR description

+

+ - Task name should be clarified in title. The general format is: \[Prefix\] Short description of the PR (Suffix)

+ - Prefix: add new feature \[Feature\], fix bug \[Fix\], related to documents \[Docs\], in developing \[WIP\] (which will not be reviewed temporarily)

+ - Introduce main changes, results and influences on other modules in short description

+ - Associate related issues and pull requests with a milestone
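
The title format described above can be checked mechanically. The following is a minimal sketch, assuming the four prefixes listed in this section are the only accepted ones. The function name `check_pr_title` is hypothetical and is not part of the repository or its CI.

```shell
# Hypothetical check (not part of the repository): succeeds when a PR title
# starts with [Feature], [Fix], [Docs], or [WIP], followed by a space and
# a non-empty description.
check_pr_title() {
    printf '%s\n' "$1" | grep -Eq '^\[(Feature|Fix|Docs|WIP)\] [^ ].*$'
}
```

For example, `check_pr_title "[Docs] Refactor contributing doc"` succeeds, while a title without a bracketed prefix fails.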

diff --git a/InternVL/internvl_chat_llava/LICENSE b/InternVL/internvl_chat_llava/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..261eeb9e9f8b2b4b0d119366dda99c6fd7d35c64

--- /dev/null

+++ b/InternVL/internvl_chat_llava/LICENSE

@@ -0,0 +1,201 @@

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright [yyyy] [name of copyright owner]

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/InternVL/internvl_chat_llava/README.md b/InternVL/internvl_chat_llava/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..8c162ea562ae96c627500d867c06c003e8ea8bd9

--- /dev/null

+++ b/InternVL/internvl_chat_llava/README.md

@@ -0,0 +1,506 @@

+# InternVL for Multimodal Dialogue using LLaVA Codebase

+

+This folder contains the implementation of the InternVL-Chat V1.0, which corresponds to Section 4.4 of our [InternVL 1.0 paper](https://arxiv.org/pdf/2312.14238).

+

+In this part, we mainly use the [LLaVA codebase](https://github.com/haotian-liu/LLaVA) to evaluate InternVL in creating multimodal dialogue systems. Thanks for this great work.

+We have retained the original documentation of LLaVA-1.5 as a more detailed manual. In most cases, you will only need to refer to the new documentation that we have added.

+

+> Note: To unify the environment across different tasks, we have made some compatibility modifications to the LLaVA-1.5 code, allowing it to support `transformers==4.37.2` (originally locked at 4.31.0). Please note that `transformers==4.37.2` should be installed.

+

+## 🛠️ Installation

+

+First, follow the [installation guide](../INSTALLATION.md) to perform some basic installations.

+

+In addition, using this codebase requires executing the following steps:

+

+- Install other requirements:

+

+ ```bash

+ pip install --upgrade pip # enable PEP 660 support

+ pip install -e .

+ ```

+

+## 📦 Model Preparation

+

+| model name | type | download | size |

+| ----------------------- | ----------- | ---------------------------------------------------------------------- | :-----: |

+| InternViT-6B-224px | huggingface | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternViT-6B-224px) | 12 GB |

+| InternViT-6B-448px-V1-0 | huggingface | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternViT-6B-448px-V1-0) | 12 GB |

+| vicuna-7b-v1.5 | huggingface | 🤗 [HF link](https://huggingface.co/lmsys/vicuna-7b-v1.5) | 13.5 GB |

+| vicuna-13b-v1.5 | huggingface | 🤗 [HF link](https://huggingface.co/lmsys/vicuna-13b-v1.5) | 26.1 GB |

+

+Please download the above model weights and place them in the `pretrained/` folder.

+

+```sh

+cd pretrained/

+# pip install -U huggingface_hub

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternViT-6B-224px --local-dir InternViT-6B-224px

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternViT-6B-448px-V1-0 --local-dir InternViT-6B-448px

+huggingface-cli download --resume-download --local-dir-use-symlinks False lmsys/vicuna-13b-v1.5 --local-dir vicuna-13b-v1.5

+huggingface-cli download --resume-download --local-dir-use-symlinks False lmsys/vicuna-7b-v1.5 --local-dir vicuna-7b-v1.5

+```

+

+The directory structure is:

+

+```sh

+pretrained

+│── InternViT-6B-224px/

+│── InternViT-6B-448px/

+│── vicuna-13b-v1.5/

+└── vicuna-7b-v1.5/

+```

+
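Before training, it can help to confirm that the layout above is in place. The following is a minimal sketch of such a check. The function name `missing_models` is hypothetical and is not part of this codebase.

```shell
# Hypothetical helper: prints the name of every expected model directory
# that is absent from the given root, and fails if any are missing.
# Usage: missing_models ROOT NAME...
missing_models() {
    root=$1
    shift
    rc=0
    for name in "$@"; do
        if [ ! -d "$root/$name" ]; then
            echo "$name"
            rc=1
        fi
    done
    return "$rc"
}
```

With the directory names listed above, a call could look like `missing_models pretrained InternViT-6B-224px InternViT-6B-448px vicuna-13b-v1.5 vicuna-7b-v1.5`.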

+## 🔥 Training

+

+- InternViT-6B-224px + Vicuna-7B:

+

+```shell

+# pretrain

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/pretrain_internvit6b_224to336_vicuna7b.sh

+# finetune

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/finetune_internvit6b_224to336_vicuna7b.sh

+```

+

+- InternViT-6B-224px + Vicuna-13B:

+

+```shell

+# pretrain

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/pretrain_internvit6b_224to336_vicuna13b.sh

+# finetune

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/finetune_internvit6b_224to336_vicuna13b.sh

+```

+

+- InternViT-6B-448px + Vicuna-7B:

+

+```shell

+# pretrain

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/pretrain_internvit6b_448_vicuna7b.sh

+# finetune

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/finetune_internvit6b_448_vicuna7b.sh

+```

+

+- InternViT-6B-448px + Vicuna-13B:

+

+```shell

+# pretrain

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/pretrain_internvit6b_448_vicuna13b.sh

+# finetune

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/finetune_internvit6b_448_vicuna13b.sh

+```

+
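The script names above follow a regular pattern: stage, encoder resolution, then LLM size. As a sketch under that assumption, the helper below builds the script path from those three values. The function name `train_script` is hypothetical, and the mapping of `224` to `224to336` is inferred only from the file names shown above.

```shell
# Hypothetical helper: maps (stage, encoder resolution, LLM size) to the
# script path, following the naming pattern of the commands above.
train_script() {
    stage=$1   # pretrain or finetune
    res=$2     # 224 or 448 (InternViT-6B checkpoint resolution)
    llm=$3     # 7b or 13b
    case $res in
        224) tag=224to336 ;;
        448) tag=448 ;;
        *) echo "unsupported resolution: $res" >&2; return 1 ;;
    esac
    echo "scripts_internvl/${stage}_internvit6b_${tag}_vicuna${llm}.sh"
}
```

A call such as `CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh "$(train_script finetune 448 13b)"` would then match the last command listed above.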

+## 🤗 Model Zoo

+

+| method | vision encoder | LLM | res. | VQAv2 | GQA | VizWiz | SQA | TextVQA | POPE | MME | MMB | MMBCN | MMVet | Download |

+| ----------------- | :------------: | :---: | :--: | :---: | :--: | :----: | :--: | :-----: | :--: | :----: | :--: | :--------------: | :---: | :----------------------------------------------------------------------------------: |

+| LLaVA-1.5 | CLIP-L-336px | V-7B | 336 | 78.5 | 62.0 | 50.0 | 66.8 | 58.2 | 85.9 | 1510.7 | 64.3 | 58.3 | 30.5 | 🤗 [HF link](https://huggingface.co/liuhaotian/llava-v1.5-7b) |

+| LLaVA-1.5 | CLIP-L-336px | V-13B | 336 | 80.0 | 63.3 | 53.6 | 71.6 | 61.3 | 85.9 | 1531.3 | 67.7 | 63.6 | 35.4 | 🤗 [HF link](https://huggingface.co/liuhaotian/llava-v1.5-13b) |

+| InternVL-Chat-1.0 | IViT-6B-224px | V-7B | 336 | 79.3 | 62.9 | 52.5 | 66.2 | 57.0 | 86.4 | 1525.1 | 64.6 | 57.6 | 31.2 | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-7B) |

+| InternVL-Chat-1.0 | IViT-6B-224px | V-13B | 336 | 80.2 | 63.9 | 54.6 | 70.1 | 58.7 | 87.1 | 1546.9 | 66.5 | 61.9 | 33.7 | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B) |

+| InternVL-Chat-1.0 | IViT-6B-448px | V-13B | 448 | 82.0 | 64.1 | 60.1 | 71.6 | 64.8 | 87.2 | 1579.0 | 68.2 | 64.0 | 36.7 | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B-448px) |

+

+Please download the above model weights and place them in the `pretrained/` folder.

+

+```shell

+cd pretrained/

+# pip install -U huggingface_hub

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-7B --local-dir InternVL-Chat-ViT-6B-Vicuna-7B

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B --local-dir InternVL-Chat-ViT-6B-Vicuna-13B

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B-448px --local-dir InternVL-Chat-ViT-6B-Vicuna-13B-448px

+

+```

+

+The directory structure is:

+

+```

+pretrained

+│── InternViT-6B-224px/

+│── InternViT-6B-448px/

+│── vicuna-13b-v1.5/

+│── vicuna-7b-v1.5/

+│── InternVL-Chat-ViT-6B-Vicuna-7B/

+│── InternVL-Chat-ViT-6B-Vicuna-13B/

+└── InternVL-Chat-ViT-6B-Vicuna-13B-448px/

+```

+

+## 🖥️ Demo

+

+The method for deploying the demo is consistent with LLaVA-1.5. You only need to change the model path. The specific steps are as follows:

+

+**Launch a controller**

+

+```shell

+python -m llava.serve.controller --host 0.0.0.0 --port 10000

+```

+

+**Launch a gradio web server**

+

+```shell

+python -m llava.serve.gradio_web_server --controller http://localhost:10000 --model-list-mode reload --port 10038

+```

+

+**Launch a model worker**

+

+```shell

+# OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-7B

+python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40000 --worker http://localhost:40000 --model-path ./pretrained/InternVL-Chat-ViT-6B-Vicuna-7B

+# OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B

+python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40001 --worker http://localhost:40001 --model-path ./pretrained/InternVL-Chat-ViT-6B-Vicuna-13B

+```

+

+After completing the above steps, you can access the web demo at `http://localhost:10038` and see the following page. Note that the models deployed here are `InternVL-Chat-ViT-6B-Vicuna-7B` and `InternVL-Chat-ViT-6B-Vicuna-13B`, which are the two models of our InternVL 1.0. The only difference from LLaVA-1.5 is that the CLIP-ViT-300M has been replaced with our InternViT-6B.

+

+If you need a more effective MLLM, please check out our InternVL2 series models.

+For more details on deploying the demo, please refer to [here](#gradio-web-ui).

+

+

+

+## 💡 Testing

+

+The method for testing the model remains the same as LLaVA-1.5; you just need to change the path of the script. Our scripts are located in `scripts_internvl/`.

+

+For example, testing `MME` using a single GPU:

+

+```shell

+sh scripts_internvl/eval/mme.sh pretrained/InternVL-Chat-ViT-6B-Vicuna-7B/

+```

+

+______________________________________________________________________

+

+## 🌋 LLaVA: Large Language and Vision Assistant

+

+*Visual instruction tuning towards large language and vision models with GPT-4 level capabilities.*

+

+\[[Project Page](https://llava-vl.github.io/)\] \[[Demo](https://llava.hliu.cc/)\] \[[Data](https://github.com/haotian-liu/LLaVA/blob/main/docs/Data.md)\] \[[Model Zoo](https://github.com/haotian-liu/LLaVA/blob/main/docs/MODEL_ZOO.md)\]

+

+🤝Community Contributions: \[[llama.cpp](https://github.com/ggerganov/llama.cpp/pull/3436)\] \[[Colab](https://github.com/camenduru/LLaVA-colab)\] \[[🤗Space](https://huggingface.co/spaces/badayvedat/LLaVA)\]

+

+**Improved Baselines with Visual Instruction Tuning** \[[Paper](https://arxiv.org/abs/2310.03744)\]

+

+#### 7. Resolve conflicts

+

+If your local branch conflicts with the latest master branch of "upstream", you'll need to resolve the conflicts. There are two ways to do this:

+

+```shell

+git fetch --all --prune

+git rebase upstream/master

+```

+

+or

+

+```shell

+git fetch --all --prune

+git merge upstream/master

+```

+

+If you are comfortable resolving conflicts, prefer `rebase`, which keeps your commit history tidy. If you are not familiar with `rebase`, use `merge` instead.
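The rebase path can be rehearsed in a throwaway repository before touching a real branch. The sketch below is illustrative only: the function name `demo_rebase_conflict`, the branch `my-feature`, and the file `notes.txt` are made up, and a local `master` stands in for `upstream/master`.

```shell
# Illustrative sketch: create a conflict in a temporary repository and
# resolve it with rebase. All names here are hypothetical.
demo_rebase_conflict() (
  set -e
  repo="$(mktemp -d)"
  cd "$repo"
  git init -q
  git symbolic-ref HEAD refs/heads/master   # make sure the first branch is "master"
  git config user.email "dev@example.com"
  git config user.name "Dev"
  git config commit.gpgsign false

  echo "base" > notes.txt
  git add notes.txt
  git commit -q -m "base"

  # Your branch and the stand-in for upstream both edit the same line.
  git checkout -q -b my-feature
  echo "feature change" > notes.txt
  git commit -q -am "feature change"
  git checkout -q master
  echo "upstream change" > notes.txt
  git commit -q -am "upstream change"

  # The rebase stops at the conflicting commit.
  git checkout -q my-feature
  git rebase master >/dev/null 2>&1 || echo "conflict detected"

  # Resolve: fix the file, stage it, and let the rebase continue.
  echo "feature change on top of upstream change" > notes.txt
  git add notes.txt
  GIT_EDITOR=true git rebase --continue >/dev/null 2>&1

  # Show the resulting linear history, newest commit first.
  git log --format=%s
  cd /
  rm -rf "$repo"
)
```

If a rebase goes wrong midway, `git rebase --abort` returns the branch to its state before the rebase started.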

+

+### Guidance

+

+#### Document rendering

+

+If documents are modified or added, check how they render. Install the dependencies and run the following commands to build the documents and inspect the result:

+

+```shell

+pip install -r requirements/docs.txt

+cd docs/zh_cn/

+# or docs/en

+make html

+# check file in ./docs/zh_cn/_build/html/index.html

+```

+

+### Code style

+

+#### Python

+

+We adopt [PEP8](https://www.python.org/dev/peps/pep-0008/) as the preferred code style.

+

+We use the following tools for linting and formatting:

+

+- [flake8](https://github.com/PyCQA/flake8): A wrapper around some linter tools.

+- [isort](https://github.com/timothycrosley/isort): A Python utility to sort imports.

+- [yapf](https://github.com/google/yapf): A formatter for Python files.

+- [codespell](https://github.com/codespell-project/codespell): A Python utility to fix common misspellings in text files.

+- [mdformat](https://github.com/executablebooks/mdformat): Mdformat is an opinionated Markdown formatter that can be used to enforce a consistent style in Markdown files.

+- [docformatter](https://github.com/myint/docformatter): A formatter for docstrings.

+

+We use a [pre-commit hook](https://pre-commit.com/) that checks and formats `flake8`, `yapf`, `isort`, `trailing whitespaces`, and `markdown files`,

+fixes `end-of-files`, `double-quoted-strings`, `python-encoding-pragma`, and `mixed-line-ending`, and sorts `requirements.txt` automatically on every commit.

+The config for the pre-commit hook is stored in [.pre-commit-config](../.pre-commit-config.yaml).

+

+#### C++ and CUDA

+

+The clang-format config is stored in [.clang-format](../.clang-format). We recommend clang-format version **11**. Please do not use older or newer versions, as they produce different formatting results, which can cause the [lint](https://github.com/InternLM/lmdeploy/blob/main/.github/workflows/lint.yml#L25) check to fail.

+

+### PR Specs

+

+1. Use the [pre-commit](https://pre-commit.com) hook to avoid code style issues

+

+2. Each short-lived branch should correspond to exactly one PR

+

+3. Make one focused change per PR. Avoid large PRs

+

+ - Bad: Support Faster R-CNN

+ - Acceptable: Add a box head to Faster R-CNN

+ - Good: Add a parameter to box head to support custom conv-layer number

+

+4. Provide clear and meaningful commit messages

+

+5. Provide a clear and meaningful PR description

+

+ - State the task clearly in the title. The general format is: \[Prefix\] Short description of the PR (Suffix)

+ - Prefix: new feature \[Feature\], bug fix \[Fix\], documentation \[Docs\], work in progress \[WIP\] (which will not be reviewed for the time being)

+ - Summarize the main changes, results, and effects on other modules in the description

+ - Associate related issues and pull requests with a milestone
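
The title convention above can be checked mechanically before opening a PR. This is a minimal sketch, assuming a hypothetical helper named `check_pr_title`; it is not part of this repository, and it only checks that a title starts with one of the listed prefixes followed by a description.

```shell
# Hypothetical helper (not part of this repository): succeeds when a PR title
# starts with one of the documented prefixes followed by a non-empty description.
check_pr_title() {
  case "$1" in
    "[Feature] "?*|"[Fix] "?*|"[Docs] "?*|"[WIP] "?*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example: report whether a candidate title follows the convention.
if check_pr_title "[Fix] Handle empty image list"; then
  echo "title ok"
else
  echo "title does not follow the [Prefix] convention"
fi
```

The optional `(Suffix)` part is not validated here, since the text above does not define its allowed values.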

diff --git a/InternVL/internvl_chat_llava/LICENSE b/InternVL/internvl_chat_llava/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..261eeb9e9f8b2b4b0d119366dda99c6fd7d35c64

--- /dev/null

+++ b/InternVL/internvl_chat_llava/LICENSE

@@ -0,0 +1,201 @@

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright [yyyy] [name of copyright owner]

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/InternVL/internvl_chat_llava/README.md b/InternVL/internvl_chat_llava/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..8c162ea562ae96c627500d867c06c003e8ea8bd9

--- /dev/null

+++ b/InternVL/internvl_chat_llava/README.md

@@ -0,0 +1,506 @@

+# InternVL for Multimodal Dialogue using LLaVA Codebase

+

+This folder contains the implementation of InternVL-Chat V1.0, which corresponds to Section 4.4 of our [InternVL 1.0 paper](https://arxiv.org/pdf/2312.14238).

+

+In this part, we mainly use the [LLaVA codebase](https://github.com/haotian-liu/LLaVA) to evaluate InternVL in creating multimodal dialogue systems. Thanks for this great work.

+We have retained the original documentation of LLaVA-1.5 as a more detailed manual. In most cases, you will only need to refer to the new documentation that we have added.

+

+> Note: To unify the environment across different tasks, we have made some compatibility modifications to the LLaVA-1.5 code, allowing it to support `transformers==4.37.2` (originally locked at 4.31.0). Please note that `transformers==4.37.2` should be installed.
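
A quick check can catch a version mismatch before training or evaluation starts. This is a minimal sketch: `check_transformers_version` is a hypothetical helper, not something shipped with this codebase, and it assumes `python` on the `PATH` is the interpreter of the environment you intend to use.

```shell
# Sketch: compare the installed transformers version with the one the note asks for.
# check_transformers_version is a hypothetical helper, not part of the codebase.
check_transformers_version() {
  expected="4.37.2"
  # Use the first argument if given; otherwise ask Python for the installed version.
  actual="${1:-$(python -c 'import transformers; print(transformers.__version__)' 2>/dev/null || true)}"
  if [ "$actual" = "$expected" ]; then
    echo "ok: transformers==$actual"
  else
    echo "mismatch: found '${actual:-none}', expected $expected"
    return 1
  fi
}
```

Calling `check_transformers_version` with no argument inspects the active environment; passing a version string checks that string instead.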

+

+## 🛠️ Installation

+

+First, follow the [installation guide](../INSTALLATION.md) to perform some basic installations.

+

+In addition, using this codebase requires executing the following steps:

+

+- Install other requirements:

+

+ ```bash

+ pip install --upgrade pip # enable PEP 660 support

+ pip install -e .

+ ```

+

+## 📦 Model Preparation

+

+| model name | type | download | size |

+| ----------------------- | ----------- | ---------------------------------------------------------------------- | :-----: |

+| InternViT-6B-224px | huggingface | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternViT-6B-224px) | 12 GB |

+| InternViT-6B-448px-V1-0 | huggingface | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternViT-6B-448px-V1-0) | 12 GB |

+| vicuna-7b-v1.5 | huggingface | 🤗 [HF link](https://huggingface.co/lmsys/vicuna-7b-v1.5) | 13.5 GB |

+| vicuna-13b-v1.5 | huggingface | 🤗 [HF link](https://huggingface.co/lmsys/vicuna-13b-v1.5) | 26.1 GB |

+

+Please download the above model weights and place them in the `pretrained/` folder.

+

+```sh

+cd pretrained/

+# pip install -U huggingface_hub

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternViT-6B-224px --local-dir InternViT-6B-224px

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternViT-6B-448px-V1-0 --local-dir InternViT-6B-448px

+huggingface-cli download --resume-download --local-dir-use-symlinks False lmsys/vicuna-13b-v1.5 --local-dir vicuna-13b-v1.5

+huggingface-cli download --resume-download --local-dir-use-symlinks False lmsys/vicuna-7b-v1.5 --local-dir vicuna-7b-v1.5

+```

+

+The directory structure is:

+

+```sh

+pretrained

+│── InternViT-6B-224px/

+│── InternViT-6B-448px/

+│── vicuna-13b-v1.5/

+└── vicuna-7b-v1.5/

+```
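
Before training, it can help to confirm that the layout matches the tree above. The following is a minimal sketch: `check_pretrained` is a hypothetical helper, not a script shipped with this codebase, and the folder names are taken from the tree.

```shell
# Sketch: report which of the expected model folders are missing.
# check_pretrained is a hypothetical helper; pass the root folder as the
# first argument (defaults to ./pretrained).
check_pretrained() {
  root="${1:-pretrained}"
  missing=0
  for d in InternViT-6B-224px InternViT-6B-448px vicuna-13b-v1.5 vicuna-7b-v1.5; do
    if [ ! -d "$root/$d" ]; then
      echo "missing: $root/$d"
      missing=1
    fi
  done
  return "$missing"
}
```

The function prints one line per missing folder and returns a non-zero status if any are absent, so it can guard a training command with `check_pretrained && ...`.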

+

+## 🔥 Training

+

+- InternViT-6B-224px + Vicuna-7B:

+

+```shell

+# pretrain

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/pretrain_internvit6b_224to336_vicuna7b.sh

+# finetune

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/finetune_internvit6b_224to336_vicuna7b.sh

+```

+

+- InternViT-6B-224px + Vicuna-13B:

+

+```shell

+# pretrain

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/pretrain_internvit6b_224to336_vicuna13b.sh

+# finetune

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/finetune_internvit6b_224to336_vicuna13b.sh

+```

+

+- InternViT-6B-448px + Vicuna-7B:

+

+```shell

+# pretrain

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/pretrain_internvit6b_448_vicuna7b.sh

+# finetune

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/finetune_internvit6b_448_vicuna7b.sh

+```

+

+- InternViT-6B-448px + Vicuna-13B:

+

+```shell

+# pretrain

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/pretrain_internvit6b_448_vicuna13b.sh

+# finetune

+CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 sh scripts_internvl/finetune_internvit6b_448_vicuna13b.sh

+```
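
Each combination above lists a pretrain script followed by a finetune script. The sketch below chains the two, assuming the finetune stage should start only after pretraining exits successfully; `run_stages` is a hypothetical wrapper, not part of `scripts_internvl/`.

```shell
# Sketch: run the given scripts in order and stop at the first failure.
# run_stages is a hypothetical wrapper; the script paths are passed as arguments.
run_stages() {
  for script in "$@"; do
    echo "running: $script"
    sh "$script" || { echo "failed: $script"; return 1; }
  done
}

# Usage with paths from the list above:
# export CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7
# run_stages scripts_internvl/pretrain_internvit6b_448_vicuna7b.sh \
#            scripts_internvl/finetune_internvit6b_448_vicuna7b.sh
```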

+

+## 🤗 Model Zoo

+

+| method | vision encoder | LLM | res. | VQAv2 | GQA | VizWiz | SQA | TextVQA | POPE | MME | MMB | MMBCN | MMVet | Download |

+| ----------------- | :------------: | :---: | :--: | :---: | :--: | :----: | :--: | :-----: | :--: | :----: | :--: | :--------------: | :---: | :----------------------------------------------------------------------------------: |

+| LLaVA-1.5 | CLIP-L-336px | V-7B | 336 | 78.5 | 62.0 | 50.0 | 66.8 | 58.2 | 85.9 | 1510.7 | 64.3 | 58.3 | 30.5 | 🤗 [HF link](https://huggingface.co/liuhaotian/llava-v1.5-7b) |

+| LLaVA-1.5 | CLIP-L-336px | V-13B | 336 | 80.0 | 63.3 | 53.6 | 71.6 | 61.3 | 85.9 | 1531.3 | 67.7 | 63.6 | 35.4 | 🤗 [HF link](https://huggingface.co/liuhaotian/llava-v1.5-13b) |

+| InternVL-Chat-1.0 | IViT-6B-224px | V-7B | 336 | 79.3 | 62.9 | 52.5 | 66.2 | 57.0 | 86.4 | 1525.1 | 64.6 | 57.6 | 31.2 | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-7B) |

+| InternVL-Chat-1.0 | IViT-6B-224px | V-13B | 336 | 80.2 | 63.9 | 54.6 | 70.1 | 58.7 | 87.1 | 1546.9 | 66.5 | 61.9 | 33.7 | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B) |

+| InternVL-Chat-1.0 | IViT-6B-448px | V-13B | 448 | 82.0 | 64.1 | 60.1 | 71.6 | 64.8 | 87.2 | 1579.0 | 68.2 | 64.0 | 36.7 | 🤗 [HF link](https://huggingface.co/OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B-448px) |

+

+Please download the above model weights and place them in the `pretrained/` folder.

+

+```shell

+cd pretrained/

+# pip install -U huggingface_hub

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-7B --local-dir InternVL-Chat-ViT-6B-Vicuna-7B

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B --local-dir InternVL-Chat-ViT-6B-Vicuna-13B

+huggingface-cli download --resume-download --local-dir-use-symlinks False OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B-448px --local-dir InternVL-Chat-ViT-6B-Vicuna-13B-448px

+

+```

+

+The directory structure is:

+

+```

+pretrained

+│── InternViT-6B-224px/

+│── InternViT-6B-448px/

+│── vicuna-13b-v1.5/

+│── vicuna-7b-v1.5/

+│── InternVL-Chat-ViT-6B-Vicuna-7B/

+│── InternVL-Chat-ViT-6B-Vicuna-13B/

+└── InternVL-Chat-ViT-6B-Vicuna-13B-448px/

+```

+

+## 🖥️ Demo

+

+The method for deploying the demo is consistent with LLaVA-1.5. You only need to change the model path. The specific steps are as follows:

+

+**Launch a controller**

+

+```shell

+python -m llava.serve.controller --host 0.0.0.0 --port 10000

+```

+

+**Launch a gradio web server**

+

+```shell

+python -m llava.serve.gradio_web_server --controller http://localhost:10000 --model-list-mode reload --port 10038

+```

+

+**Launch a model worker**

+

+```shell

+# OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-7B

+python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40000 --worker http://localhost:40000 --model-path ./pretrained/InternVL-Chat-ViT-6B-Vicuna-7B

+# OpenGVLab/InternVL-Chat-ViT-6B-Vicuna-13B

+python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40001 --worker http://localhost:40001 --model-path ./pretrained/InternVL-Chat-ViT-6B-Vicuna-13B

+```

+

+After completing the above steps, you can access the web demo at `http://localhost:10038` and see the following page. Note that the models deployed here are `InternVL-Chat-ViT-6B-Vicuna-7B` and `InternVL-Chat-ViT-6B-Vicuna-13B`, which are the two models of our InternVL 1.0. The only difference from LLaVA-1.5 is that the CLIP-ViT-300M has been replaced with our InternViT-6B.

+

+If you need a more effective MLLM, please check out our InternVL2 series models.

+For more details on deploying the demo, please refer to [here](#gradio-web-ui).
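
In both worker commands above, the value of `--port` matches the port in the `--worker` URL, and only the port and the model folder differ between the two. The sketch below captures that pattern; `worker_cmd` is a hypothetical helper, and the flags are copied from the commands above rather than from any additional documentation.

```shell
# Sketch: print the model-worker command for one checkpoint.
# worker_cmd is a hypothetical helper; the flags are copied from the commands above.
worker_cmd() {
  model="$1"   # folder name under ./pretrained
  port="$2"    # used for both --port and the --worker URL
  echo "python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port $port --worker http://localhost:$port --model-path ./pretrained/$model"
}

# Print the command for the 13B checkpoint on port 40001.
worker_cmd InternVL-Chat-ViT-6B-Vicuna-13B 40001
```

Printing the command instead of running it makes it easy to review before launching each worker in its own terminal.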

+

+

+

+## 💡 Testing

+

+The method for testing the model remains the same as LLaVA-1.5; you just need to change the path of the script. Our scripts are located in `scripts_internvl/`.

+

+For example, testing `MME` using a single GPU:

+

+```shell

+sh scripts_internvl/eval/mme.sh pretrained/InternVL-Chat-ViT-6B-Vicuna-7B/

+```

+

+______________________________________________________________________

+

+## 🌋 LLaVA: Large Language and Vision Assistant

+

+*Visual instruction tuning towards large language and vision models with GPT-4 level capabilities.*

+

+\[[Project Page](https://llava-vl.github.io/)\] \[[Demo](https://llava.hliu.cc/)\] \[[Data](https://github.com/haotian-liu/LLaVA/blob/main/docs/Data.md)\] \[[Model Zoo](https://github.com/haotian-liu/LLaVA/blob/main/docs/MODEL_ZOO.md)\]

+

+🤝Community Contributions: \[[llama.cpp](https://github.com/ggerganov/llama.cpp/pull/3436)\] \[[Colab](https://github.com/camenduru/LLaVA-colab)\] \[[🤗Space](https://huggingface.co/spaces/badayvedat/LLaVA)\]

+

+**Improved Baselines with Visual Instruction Tuning** \[[Paper](https://arxiv.org/abs/2310.03744)\]

+[Haotian Liu](https://hliu.cc), [Chunyuan Li](https://chunyuan.li/), [Yuheng Li](https://yuheng-li.github.io/), [Yong Jae Lee](https://pages.cs.wisc.edu/~yongjaelee/)

+

+**Visual Instruction Tuning** (NeurIPS 2023, **Oral**) \[[Paper](https://arxiv.org/abs/2304.08485)\]

+[Haotian Liu\*](https://hliu.cc), [Chunyuan Li\*](https://chunyuan.li/), [Qingyang Wu](https://scholar.google.ca/citations?user=HDiw-TsAAAAJ&hl=en/), [Yong Jae Lee](https://pages.cs.wisc.edu/~yongjaelee/) (\*Equal Contribution)

+

+### Release

+

+- \[10/12\] 🔥 Check out the Korean LLaVA (Ko-LLaVA), created by ETRI, who has generously supported our research! \[[🤗 Demo](https://huggingface.co/spaces/etri-vilab/Ko-LLaVA)\]

+

+- \[10/12\] LLaVA is now supported in [llama.cpp](https://github.com/ggerganov/llama.cpp/pull/3436) with 4-bit / 5-bit quantization support!

+

+- \[10/11\] The training data and scripts of LLaVA-1.5 are released [here](https://github.com/haotian-liu/LLaVA#train), and evaluation scripts are released [here](https://github.com/haotian-liu/LLaVA/blob/main/docs/Evaluation.md)!

+

+- \[10/5\] 🔥 LLaVA-1.5 is out! It achieves SoTA on 11 benchmarks with only simple modifications to the original LLaVA, uses only publicly available data, completes training in ~1 day on a single 8-A100 node, and surpasses methods like Qwen-VL-Chat that use billion-scale data. Check out the [technical report](https://arxiv.org/abs/2310.03744), and explore the [demo](https://llava.hliu.cc/)! Models are available in [Model Zoo](https://github.com/haotian-liu/LLaVA/blob/main/docs/MODEL_ZOO.md).

+

+- \[9/26\] LLaVA is improved with reinforcement learning from human feedback (RLHF) to improve fact grounding and reduce hallucination. Check out the new SFT and RLHF checkpoints at project [\[LLaVA-RLHF\]](https://llava-rlhf.github.io/).

+

+- \[9/22\] [LLaVA](https://arxiv.org/abs/2304.08485) is accepted by NeurIPS 2023 as **oral presentation**, and [LLaVA-Med](https://arxiv.org/abs/2306.00890) is accepted by NeurIPS 2023 Datasets and Benchmarks Track as **spotlight presentation**.

+

+- \[9/20\] We summarize our empirical study of training 33B and 65B LLaVA models in a [note](https://arxiv.org/abs/2309.09958). Further, if you are interested in the comprehensive review, evolution and trend of multimodal foundation models, please check out our recent survey paper ["Multimodal Foundation Models: From Specialists to General-Purpose Assistants"](https://arxiv.org/abs/2309.10020).

+


+- \[7/19\] 🔥 We release a major upgrade, including support for LLaMA-2, LoRA training, 4-/8-bit inference, higher resolution (336x336), and a lot more. We release [LLaVA Bench](https://github.com/haotian-liu/LLaVA/blob/main/docs/LLaVA_Bench.md) for benchmarking open-ended visual chat with results from Bard and Bing-Chat. We also support and verify training with RTX 3090 and RTX A6000. Check out [LLaVA-from-LLaMA-2](https://github.com/haotian-liu/LLaVA/blob/main/docs/LLaVA_from_LLaMA2.md), and our [model zoo](https://github.com/haotian-liu/LLaVA/blob/main/docs/MODEL_ZOO.md)!

+

+- \[6/26\] [CVPR 2023 Tutorial](https://vlp-tutorial.github.io/) on **Large Multimodal Models: Towards Building and Surpassing Multimodal GPT-4**! Please check out \[[Slides](https://datarelease.blob.core.windows.net/tutorial/vision_foundation_models_2023/slides/Chunyuan_cvpr2023_tutorial_lmm.pdf)\] \[[Notes](https://arxiv.org/abs/2306.14895)\] \[[YouTube](https://youtu.be/mkI7EPD1vp8)\] \[[Bilibili](https://www.bilibili.com/video/BV1Ng4y1T7v3/)\].

+

+- \[6/11\] We released the preview for the most requested feature: DeepSpeed and LoRA support! Please see the documentation [here](./docs/LoRA.md).

+

+- \[6/1\] We released **LLaVA-Med: Large Language and Vision Assistant for Biomedicine**, a step towards building biomedical domain large language and vision models with GPT-4 level capabilities. Check out the [paper](https://arxiv.org/abs/2306.00890) and [page](https://github.com/microsoft/LLaVA-Med).

+

+- \[5/6\] We are releasing [LLaVA-Lightning-MPT-7B-preview](https://huggingface.co/liuhaotian/LLaVA-Lightning-MPT-7B-preview), based on MPT-7B-Chat! See [here](#LLaVA-MPT-7b) for more details.

+

+- \[5/2\] 🔥 We are releasing LLaVA-Lightning! Train a lite, multimodal GPT-4 with just $40 in 3 hours! See [here](#train-llava-lightning) for more details.

+

+- \[4/27\] Thanks to the community effort, LLaVA-13B with 4-bit quantization allows you to run on a GPU with as few as 12GB VRAM! Try it out [here](https://github.com/oobabooga/text-generation-webui/tree/main/extensions/llava).

+

+- \[4/17\] 🔥 We released **LLaVA: Large Language and Vision Assistant**. We propose visual instruction tuning, towards building large language and vision models with GPT-4 level capabilities. Check out the [paper](https://arxiv.org/abs/2304.08485) and [demo](https://llava.hliu.cc/).

+

+

+

+[Code License](https://github.com/tatsu-lab/stanford_alpaca/blob/main/LICENSE)

+[Data License](https://github.com/tatsu-lab/stanford_alpaca/blob/main/DATA_LICENSE)

+**Usage and License Notices**: The data and checkpoints are intended and licensed for research use only. They are also restricted to uses that follow the license agreements of LLaMA, Vicuna and GPT-4. The dataset is CC BY NC 4.0 (allowing only non-commercial use), and models trained using the dataset should not be used outside of research purposes.

+

+### Contents

+

+- [Install](#install)

+- [LLaVA Weights](#llava-weights)

+- [Demo](#demo)

+- [Model Zoo](https://github.com/haotian-liu/LLaVA/blob/main/docs/MODEL_ZOO.md)

+- [Dataset](https://github.com/haotian-liu/LLaVA/blob/main/docs/Data.md)

+- [Train](#train)

+- [Evaluation](#evaluation)

+

+### Install

+

+1. Clone this repository and navigate to the LLaVA folder

+

+ ```bash

+ git clone https://github.com/haotian-liu/LLaVA.git

+ cd LLaVA

+ ```

+

+2. Install Package

+

+ ```Shell

+ conda create -n llava python=3.10 -y

+ conda activate llava

+ pip install --upgrade pip # enable PEP 660 support

+ pip install -e .

+ ```

+

+3. Install additional packages for training cases

+

+ ```Shell

+ pip install ninja

+ pip install flash-attn --no-build-isolation

+ ```

+

+#### Upgrade to latest code base

+

+```Shell

+git pull

+pip uninstall transformers

+pip install -e .

+```

+

+### LLaVA Weights

+

+Please check out our [Model Zoo](https://github.com/haotian-liu/LLaVA/blob/main/docs/MODEL_ZOO.md) for all public LLaVA checkpoints and for instructions on how to use the weights.

+

+### Demo

+

+To run our demo, you need to prepare LLaVA checkpoints locally. Please follow the instructions [here](#llava-weights) to download the checkpoints.

+

+#### Gradio Web UI

+

+To launch a Gradio demo locally, please run the following commands one by one. If you plan to launch multiple model workers to compare between different checkpoints, you only need to launch the controller and the web server *ONCE*.

+

+##### Launch a controller

+

+```Shell

+python -m llava.serve.controller --host 0.0.0.0 --port 10000

+```

+

+##### Launch a Gradio web server

+

+```Shell

+python -m llava.serve.gradio_web_server --controller http://localhost:10000 --model-list-mode reload

+```

+

+You just launched the Gradio web interface. You can now open it with the URL printed on the screen. You may notice that the model list is empty. Do not worry: no model worker has been launched yet, and the list updates automatically once you launch one.

+

+##### Launch a model worker

+

+This is the actual *worker* that performs the inference on the GPU. Each worker is responsible for a single model specified in `--model-path`.

+

+```Shell

+python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40000 --worker http://localhost:40000 --model-path liuhaotian/llava-v1.5-13b

+```

+

+Wait until the process finishes loading the model and you see "Uvicorn running on ...". Now, refresh your Gradio web UI, and you will see the model you just launched in the model list.

+

+You can launch as many workers as you want, and compare between different model checkpoints in the same Gradio interface. Please keep the `--controller` the same, and modify the `--port` and `--worker` to a different port number for each worker.

+

+```Shell

+python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port <port> --worker http://localhost:<port> --model-path <model-path>

+```
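
When several workers run side by side, their commands differ only in `--port`, `--worker`, and `--model-path`. The following Python sketch builds those command lines. The helper names `worker_command` and `worker_commands` and the choice of consecutive ports are assumptions for illustration, not part of LLaVA; only flags shown above are used.

```python
import shlex


def worker_command(model_path, port, controller="http://localhost:10000"):
    """Build the argument list for one model worker (hypothetical helper)."""
    return [
        "python", "-m", "llava.serve.model_worker",
        "--host", "0.0.0.0",
        "--controller", controller,              # same controller for every worker
        "--port", str(port),                     # unique port per worker
        "--worker", f"http://localhost:{port}",  # must match the port above
        "--model-path", model_path,
    ]


def worker_commands(model_paths, first_port=40000):
    """Assign consecutive ports, starting at first_port, to each checkpoint."""
    return [
        worker_command(path, first_port + offset)
        for offset, path in enumerate(model_paths)
    ]


if __name__ == "__main__":
    checkpoints = ["liuhaotian/llava-v1.5-13b", "liuhaotian/llava-v1.5-7b"]
    for cmd in worker_commands(checkpoints):
        # Print each command so it can be pasted into its own terminal.
        print(shlex.join(cmd))
```

Each printed command can be run in a separate terminal. The controller and the web server still only need to be started once.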

+

+If you are using an Apple device with an M1 or M2 chip, you can specify the mps device by using the `--device` flag: `--device mps`.

+

+##### Launch a model worker (Multiple GPUs, when GPU VRAM \<= 24GB)

+

+If your GPU has 24GB of VRAM or less (e.g., RTX 3090, RTX 4090, etc.), you may try running it with multiple GPUs. Our latest code base will automatically try to use multiple GPUs if you have more than one GPU. You can specify which GPUs to use with `CUDA_VISIBLE_DEVICES`. Below is an example of running with the first two GPUs.

+

+```Shell

+CUDA_VISIBLE_DEVICES=0,1 python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40000 --worker http://localhost:40000 --model-path liuhaotian/llava-v1.5-13b

+```
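
The same GPU selection can be applied when a worker is launched from a Python script, by setting `CUDA_VISIBLE_DEVICES` in the child process environment. This is a minimal sketch; the helper name `env_with_gpus` is an assumption for illustration and is not part of LLaVA.

```python
import os


def env_with_gpus(gpu_ids, base_env=None):
    """Return a copy of the environment restricted to the given GPU indices."""
    env = dict(os.environ if base_env is None else base_env)
    env["CUDA_VISIBLE_DEVICES"] = ",".join(str(i) for i in gpu_ids)
    return env


if __name__ == "__main__":
    env = env_with_gpus([0, 1])
    print(env["CUDA_VISIBLE_DEVICES"])
    # Pass env=env to subprocess.run(...) together with the model worker
    # command shown above to start the worker on the first two GPUs.
```

Copying the environment rather than mutating `os.environ` keeps the restriction local to the launched worker.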

+

+##### Launch a model worker (4-bit, 8-bit inference, quantized)

+

+You can launch the model worker with 4-bit or 8-bit quantization, which reduces the GPU memory footprint and can make inference possible on a GPU with as little as 12GB of VRAM. Note that quantized inference may be less accurate than the full-precision model. Simply append `--load-4bit` or `--load-8bit` to the **model worker** command that you are executing. Below is an example of running with 4-bit quantization.

+

+```Shell

+python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40000 --worker http://localhost:40000 --model-path liuhaotian/llava-v1.5-13b --load-4bit

+```
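
As a rough back-of-the-envelope check on why quantization helps, the memory needed for the weights alone scales with the number of bits per parameter. The sketch below is an estimate under stated assumptions: the parameter counts are taken nominally from the model names (7B, 13B), and activations, the KV cache, the vision encoder, and quantization overhead are all ignored, so actual usage will be higher.

```python
def weight_memory_gib(num_params, bits_per_param):
    """Approximate memory for the weights only, in GiB."""
    return num_params * bits_per_param / 8 / 1024**3


if __name__ == "__main__":
    # Nominal parameter counts (assumption): 7e9 and 13e9.
    for name, params in [("7B", 7e9), ("13B", 13e9)]:
        for bits in (16, 8, 4):
            gib = weight_memory_gib(params, bits)
            print(f"{name} @ {bits}-bit: ~{gib:.1f} GiB")
```

Under these assumptions, a 13B model needs roughly 6 GiB for 4-bit weights, which is consistent with the 12GB figure quoted above once runtime overhead is added.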

+

+##### Launch a model worker (LoRA weights, unmerged)

+

+You can launch the model worker with LoRA weights, without merging them with the base checkpoint, to save disk space. Loading takes longer, but the inference speed is the same as with the merged checkpoints. Unmerged LoRA checkpoints do not have `lora-merge` in the model name, and are usually much smaller (less than 1GB) than the merged checkpoints (13GB for 7B, and 25GB for 13B).

+

+To load unmerged LoRA weights, you simply need to pass an additional argument `--model-base`, which is the base LLM that was used to train the LoRA weights. You can check the base LLM for each set of LoRA weights in the [model zoo](https://github.com/haotian-liu/LLaVA/blob/main/docs/MODEL_ZOO.md).

+

+```Shell

+python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40000 --worker http://localhost:40000 --model-path liuhaotian/llava-v1-0719-336px-lora-vicuna-13b-v1.3 --model-base lmsys/vicuna-13b-v1.3

+```

+

+#### CLI Inference

+

+Chat about images using LLaVA without the Gradio interface. The CLI also supports multiple GPUs and 4-bit and 8-bit quantized inference. With 4-bit quantization, our LLaVA-1.5-7B uses less than 8GB VRAM on a single GPU.

+

+```Shell

+python -m llava.serve.cli \

+ --model-path liuhaotian/llava-v1.5-7b \

+ --image-file "https://llava-vl.github.io/static/images/view.jpg" \

+ --load-4bit

+```

+

+### Train

+

+*Below is the latest training configuration for LLaVA v1.5. For legacy models, please refer to the README of [this version](https://github.com/haotian-liu/LLaVA/tree/v1.0.1) for now. We'll add them in a separate doc later.*

+

+LLaVA training consists of two stages: (1) feature alignment stage: use our 558K subset of the LAION-CC-SBU dataset to connect a *frozen pretrained* vision encoder to a *frozen LLM*; (2) visual instruction tuning stage: use 150K GPT-generated multimodal instruction-following data, plus around 515K VQA data from academic-oriented tasks, to teach the model to follow multimodal instructions.

+

+LLaVA is trained on 8 A100 GPUs with 80GB memory. To train on fewer GPUs, you can reduce the `per_device_train_batch_size` and increase the `gradient_accumulation_steps` accordingly. Always keep the global batch size the same: `per_device_train_batch_size` x `gradient_accumulation_steps` x `num_gpus`.
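
The rule above can be sketched as a small calculation: given a target global batch size, the accumulation steps follow from the per-device batch size and the number of GPUs. The function name and the numbers below are hypothetical and only illustrate the arithmetic; take the actual global batch size from the hyperparameter tables.

```python
def gradient_accumulation_steps(global_batch_size, per_device_batch_size, num_gpus):
    """Return the accumulation steps that preserve the global batch size."""
    per_step = per_device_batch_size * num_gpus
    if global_batch_size % per_step != 0:
        raise ValueError(
            f"global batch size {global_batch_size} is not divisible by "
            f"{per_device_batch_size} x {num_gpus}"
        )
    return global_batch_size // per_step


if __name__ == "__main__":
    target = 128  # hypothetical global batch size, for illustration only
    # 8 GPUs x 16 samples each already reach the target: 1 accumulation step.
    print(gradient_accumulation_steps(target, per_device_batch_size=16, num_gpus=8))
    # 4 GPUs x 8 samples each need 4 accumulation steps to reach the target.
    print(gradient_accumulation_steps(target, per_device_batch_size=8, num_gpus=4))
```

Raising an error when the numbers do not divide evenly makes it obvious when a chosen configuration would silently change the global batch size.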

+

+#### Hyperparameters

+

+We use a similar set of hyperparameters as Vicuna in finetuning. The hyperparameters used in both pretraining and finetuning are provided below.

+

+1. Pretraining

+

+| Hyperparameter | Global Batch Size | Learning rate | Epochs | Max length | Weight decay |