Upload folder using huggingface_hub

Browse files- .gitattributes +1 -0

- BIAS.md +4 -0

- EXPLAINABILITY.md +13 -0

- PRIVACY.md +10 -0

- README.md +277 -0

- SAFETY.md +7 -0

- added_tokens.json +24 -0

- chat_template.jinja +20 -0

- config.json +95 -0

- generation_config.json +6 -0

- merges.txt +0 -0

- model-00001-of-00007.safetensors +3 -0

- model-00002-of-00007.safetensors +3 -0

- model-00003-of-00007.safetensors +3 -0

- model-00004-of-00007.safetensors +3 -0

- model-00005-of-00007.safetensors +3 -0

- model-00006-of-00007.safetensors +3 -0

- model-00007-of-00007.safetensors +3 -0

- model.safetensors.index.json +778 -0

- special_tokens_map.json +31 -0

- tokenizer.json +3 -0

- tokenizer_config.json +209 -0

- vocab.json +0 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

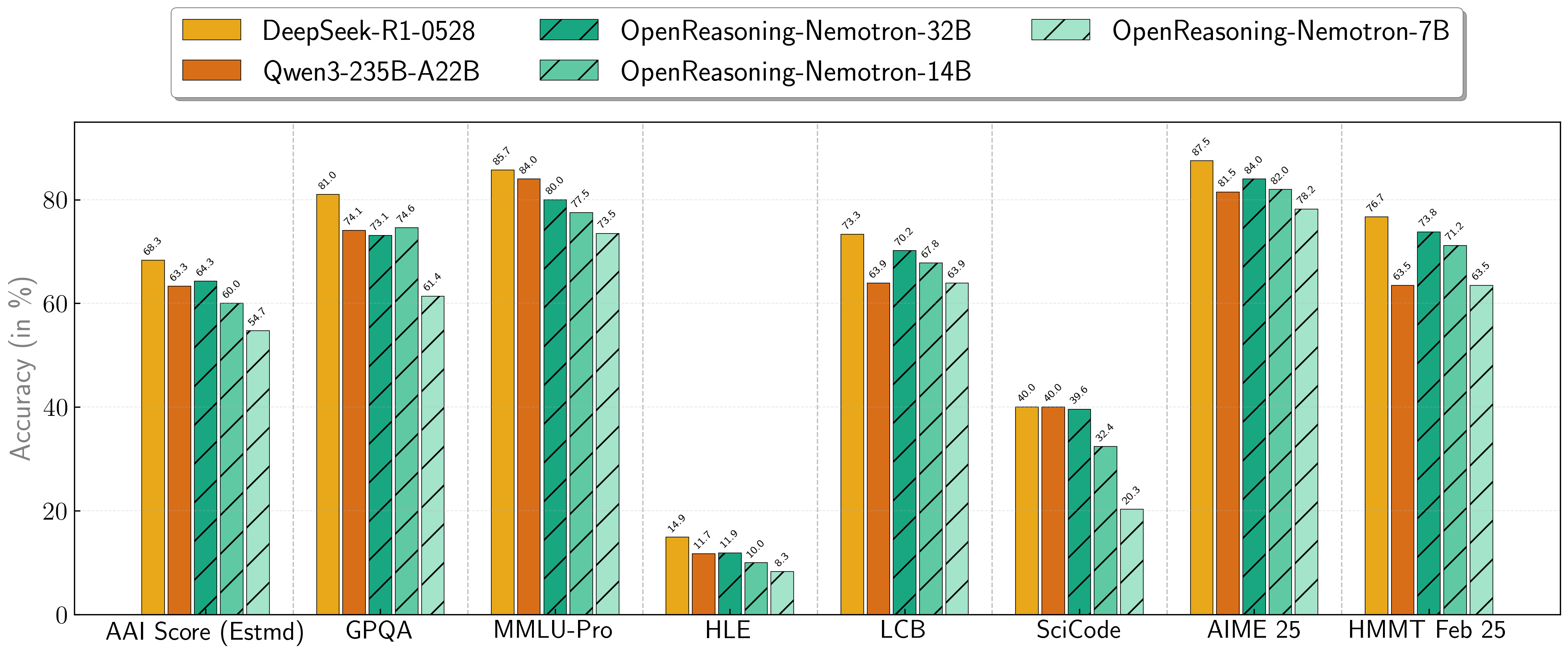

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

BIAS.md

ADDED

|

@@ -0,0 +1,4 @@

|

|

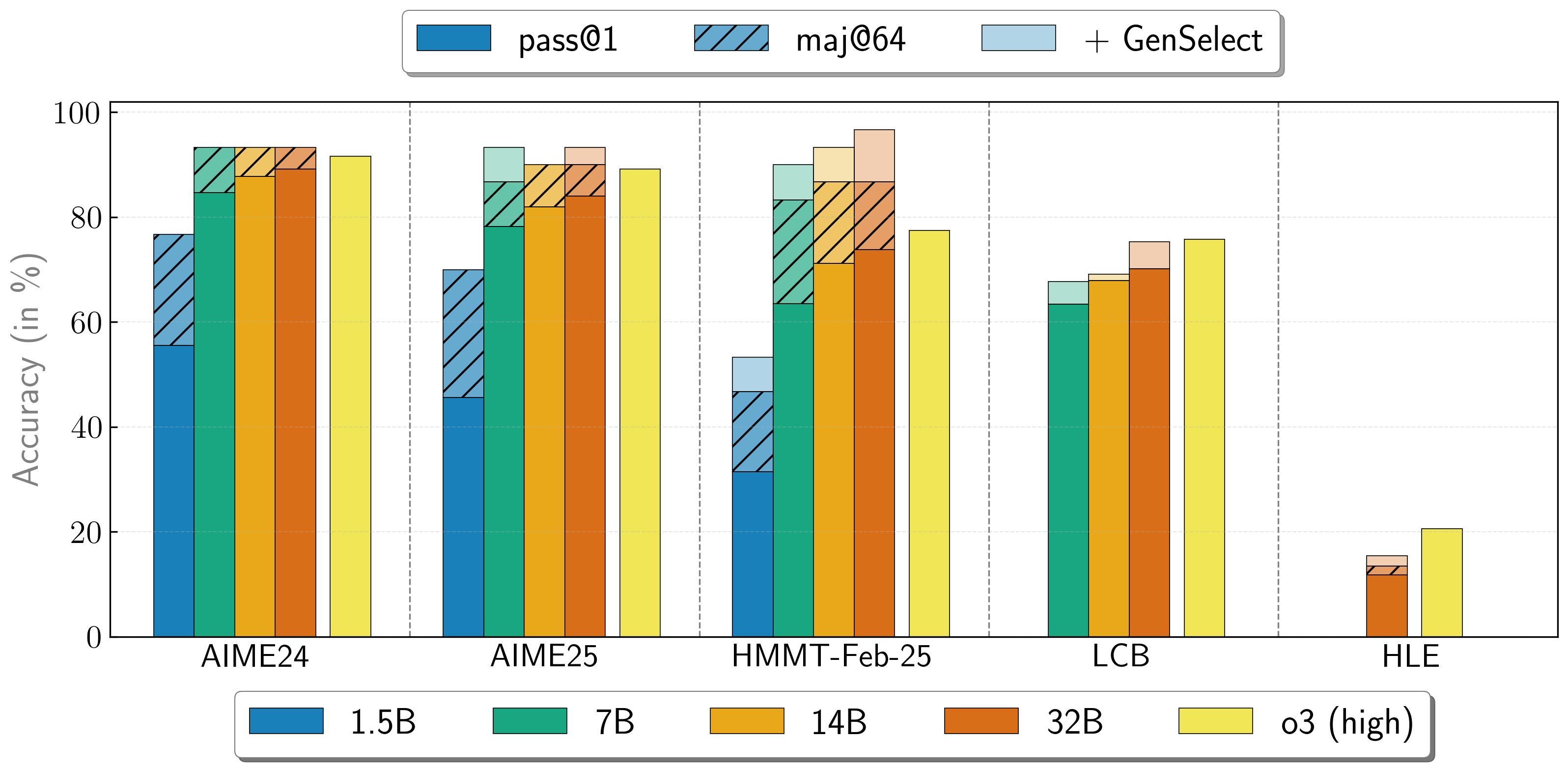

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Field | Response

|

| 2 |

+

:---------------------------------------------------------------------------------------------------|:---------------

|

| 3 |

+

Participation considerations from adversely impacted groups [protected classes](https://www.senate.ca.gov/content/protected-classes) in model design and testing: | None

|

| 4 |

+

Measures taken to mitigate against unwanted bias: | None

|

EXPLAINABILITY.md

ADDED

|

@@ -0,0 +1,13 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Field | Response

|

| 2 |

+

:------------------------------------------------------------------------------------------------------|:---------------------------------------------------------------------------------

|

| 3 |

+

Intended Task/Domain: | Reasoning for Math, Code Science Solution Generation

|

| 4 |

+

Model Type: | Transformer

|

| 5 |

+

Intended Users: | Solving competitive programming questions and evaluation for benchmark comparison.

|

| 6 |

+

Output: | Text

|

| 7 |

+

Describe how the model works: | The model generates a reasoning trace and responds with a final solution in response to a user prompting a programming question.

|

| 8 |

+

Name the adversely impacted groups this has been tested to deliver comparable outcomes regardless of: | Not Applicable

|

| 9 |

+

Technical Limitations & Mitigation: | This model is not applicable for Software Engineering tasks. It primarily should be used for competitive coding challenges that require optimized code solutions that can operate in appropriate space and time complexity.

|

| 10 |

+

Verified to have met prescribed NVIDIA quality standards: | Yes

|

| 11 |

+

Performance Metrics: | Pass@1 score

|

| 12 |

+

Potential Known Risks: | The model may provide incorrect code solutions that fail to solve the problem. The model may enter a feedback loop and constantly generate reasoning tokens without generating the final solution.

|

| 13 |

+

Licensing: | [CC-BY-4.0](https://creativecommons.org/licenses/by/4.0/deed.en/)

|

PRIVACY.md

ADDED

|

@@ -0,0 +1,10 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Field | Response

|

| 2 |

+

:----------------------------------------------------------------------------------------------------------------------------------|:-----------------------------------------------

|

| 3 |

+

Generatable or reverse engineerable personal data? | No

|

| 4 |

+

Personal data used to create this model? | No

|

| 5 |

+

How often is the dataset reviewed? | Before Release

|

| 6 |

+

Is there provenance for all datasets used in training? | Yes

|

| 7 |

+

Does data labeling (annotation, metadata) comply with privacy laws? | Yes

|

| 8 |

+

Is data compliant with data subject requests for data correction or removal, if such a request was made? | No, not possible with externally-sourced data.

|

| 9 |

+

Applicable Privacy Policy | https://www.nvidia.com/en-us/about-nvidia/privacy-policy/

|

| 10 |

+

|

README.md

ADDED

|

@@ -0,0 +1,277 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: cc-by-4.0

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

base_model:

|

| 6 |

+

- nvidia/OpenReasoning-Nemotron-32B

|

| 7 |

+

pipeline_tag: text-generation

|

| 8 |

+

library_name: transformers

|

| 9 |

+

tags:

|

| 10 |

+

- nvidia

|

| 11 |

+

- unsloth

|

| 12 |

+

- code

|

| 13 |

+

---

|

| 14 |

+

> [!NOTE]

|

| 15 |

+

> Includes Unsloth **chat template fixes**! <br> For `llama.cpp`, use `--jinja`

|

| 16 |

+

>

|

| 17 |

+

|

| 18 |

+

<div>

|

| 19 |

+

<p style="margin-top: 0;margin-bottom: 0;">

|

| 20 |

+

<em><a href="https://docs.unsloth.ai/basics/unsloth-dynamic-v2.0-gguf">Unsloth Dynamic 2.0</a> achieves superior accuracy & outperforms other leading quants.</em>

|

| 21 |

+

</p>

|

| 22 |

+

<div style="display: flex; gap: 5px; align-items: center; ">

|

| 23 |

+

<a href="https://github.com/unslothai/unsloth/">

|

| 24 |

+

<img src="https://github.com/unslothai/unsloth/raw/main/images/unsloth%20new%20logo.png" width="133">

|

| 25 |

+

</a>

|

| 26 |

+

<a href="https://discord.gg/unsloth">

|

| 27 |

+

<img src="https://github.com/unslothai/unsloth/raw/main/images/Discord%20button.png" width="173">

|

| 28 |

+

</a>

|

| 29 |

+

<a href="https://docs.unsloth.ai/">

|

| 30 |

+

<img src="https://raw.githubusercontent.com/unslothai/unsloth/refs/heads/main/images/documentation%20green%20button.png" width="143">

|

| 31 |

+

</a>

|

| 32 |

+

</div>

|

| 33 |

+

</div>

|

| 34 |

+

|

| 35 |

+

# OpenReasoning-Nemotron-32B Overview

|

| 36 |

+

|

| 37 |

+

## Description: <br>

|

| 38 |

+

OpenReasoning-Nemotron-32B is a large language model (LLM) which is a derivative of Qwen2.5-32B-Instruct (AKA the reference model). It is a reasoning model that is post-trained for reasoning about math, code and science solution generation. We evaluated this model with up to 64K output tokens. The OpenReasoning model is available in the following sizes: 1.5B, 7B and 14B and 32B. <br>

|

| 39 |

+

|

| 40 |

+

This model is ready for commercial/non-commercial research use. <br>

|

| 41 |

+

|

| 42 |

+

### License/Terms of Use: <br>

|

| 43 |

+

GOVERNING TERMS: Use of the models listed above are governed by the [Creative Commons Attribution 4.0 International License (CC-BY-4.0)](https://creativecommons.org/licenses/by/4.0/legalcode.en). ADDITIONAL INFORMATION: [Apache 2.0 License](https://huggingface.co/Qwen/Qwen2.5-32B-Instruct/blob/main/LICENSE)

|

| 44 |

+

|

| 45 |

+

## Scores on Reasoning Benchmarks

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

|

| 49 |

+

Our models demonstrate exceptional performance across a suite of challenging reasoning benchmarks. The 7B, 14B, and 32B models consistently set new state-of-the-art records for their size classes.

|

| 50 |

+

|

| 51 |

+

| **Model** | **AritificalAnalysisIndex*** | **GPQA** | **MMLU-PRO** | **HLE** | **LiveCodeBench*** | **SciCode** | **AIME24** | **AIME25** | **HMMT FEB 25** |

|

| 52 |

+

| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |

|

| 53 |

+

| **1.5B**| 31.0 | 31.6 | 47.5 | 5.5 | 28.6 | 2.2 | 55.5 | 45.6 | 31.5 |

|

| 54 |

+

| **7B** | 54.7 | 61.1 | 71.9 | 8.3 | 63.3 | 16.2 | 84.7 | 78.2 | 63.5 |

|

| 55 |

+

| **14B** | 60.9 | 71.6 | 77.5 | 10.1 | 67.8 | 23.5 | 87.8 | 82.0 | 71.2 |

|

| 56 |

+

| **32B** | 64.3 | 73.1 | 80.0 | 11.9 | 70.2 | 28.5 | 89.2 | 84.0 | 73.8 |

|

| 57 |

+

|

| 58 |

+

\* This is our estimation of the Artificial Analysis Intelligence Index, not an official score.

|

| 59 |

+

|

| 60 |

+

\* LiveCodeBench version 6, date range 2408-2505.

|

| 61 |

+

|

| 62 |

+

## Combining the work of multiple agents

|

| 63 |

+

OpenReasoning-Nemotron models can be used in a "heavy" mode by starting multiple parallel generations and combining them together via [generative solution selection (GenSelect)](https://arxiv.org/abs/2504.16891). To add this "skill" we follow the original GenSelect training pipeline except we do not train on the selection summary but use the full reasoning trace of DeepSeek R1 0528 671B instead. We only train models to select the best solution for math problems but surprisingly find that this capability directly generalizes to code and science questions! With this "heavy" GenSelect inference mode, OpenReasoning-Nemotron-32B model surpasses O3 (High) on math and coding benchmarks.

|

| 64 |

+

|

| 65 |

+

|

| 66 |

+

|

| 67 |

+

| **Model** | **Pass@1 (Avg@64)** | **Majority@64** | **GenSelect** |

|

| 68 |

+

| :--- | :--- | :--- | :--- |

|

| 69 |

+

| **1.5B** | | | |

|

| 70 |

+

| **AIME24** | 55.5 | 76.7 | 76.7 |

|

| 71 |

+

| **AIME25** | 45.6 | 70.0 | 70.0 |

|

| 72 |

+

| **HMMT Feb 25** | 31.5 | 46.7 | 53.3 |

|

| 73 |

+

| **7B** | | | |

|

| 74 |

+

| **AIME24** | 84.7 | 93.3 | 93.3 |

|

| 75 |

+

| **AIME25** | 78.2 | 86.7 | 93.3 |

|

| 76 |

+

| **HMMT Feb 25** | 63.5 | 83.3 | 90.0 |

|

| 77 |

+

| **LCB v6 2408-2505** | 63.4 | n/a | 67.7 |

|

| 78 |

+

| **14B** | | | |

|

| 79 |

+

| **AIME24** | 87.8 | 93.3 | 93.3 |

|

| 80 |

+

| **AIME25** | 82.0 | 90.0 | 90.0 |

|

| 81 |

+

| **HMMT Feb 25** | 71.2 | 86.7 | 93.3 |

|

| 82 |

+

| **LCB v6 2408-2505** | 67.9 | n/a | 69.1 |

|

| 83 |

+

| **32B** | | | |

|

| 84 |

+

| **AIME24** | 89.2 | 93.3 | 93.3 |

|

| 85 |

+

| **AIME25** | 84.0 | 90.0 | 93.3 |

|

| 86 |

+

| **HMMT Feb 25** | 73.8 | 86.7 | 96.7 |

|

| 87 |

+

| **LCB v6 2408-2505** | 70.2 | n/a | 75.3 |

|

| 88 |

+

| **HLE** | 11.8 | 13.4 | 15.5 |

|

| 89 |

+

|

| 90 |

+

|

| 91 |

+

## How to use the models?

|

| 92 |

+

|

| 93 |

+

To run inference on coding problems:

|

| 94 |

+

|

| 95 |

+

````python

|

| 96 |

+

import transformers

|

| 97 |

+

import torch

|

| 98 |

+

model_id = "nvidia/OpenReasoning-Nemotron-32B"

|

| 99 |

+

pipeline = transformers.pipeline(

|

| 100 |

+

"text-generation",

|

| 101 |

+

model=model_id,

|

| 102 |

+

model_kwargs={"torch_dtype": torch.bfloat16},

|

| 103 |

+

device_map="auto",

|

| 104 |

+

)

|

| 105 |

+

# Code generation prompt

|

| 106 |

+

prompt = """You are a helpful and harmless assistant. You should think step-by-step before responding to the instruction below.

|

| 107 |

+

Please use python programming language only.

|

| 108 |

+

You must use ```python for just the final solution code block with the following format:

|

| 109 |

+

```python

|

| 110 |

+

# Your code here

|

| 111 |

+

```

|

| 112 |

+

{user}

|

| 113 |

+

"""

|

| 114 |

+

|

| 115 |

+

# Math generation prompt

|

| 116 |

+

# prompt = """Solve the following math problem. Make sure to put the answer (and only answer) inside \\boxed{}.

|

| 117 |

+

#

|

| 118 |

+

# {user}

|

| 119 |

+

# """

|

| 120 |

+

|

| 121 |

+

# Science generation prompt

|

| 122 |

+

# You can refer to prompts here -

|

| 123 |

+

# https://github.com/NVIDIA/NeMo-Skills/blob/main/nemo_skills/prompt/config/generic/hle.yaml (HLE)

|

| 124 |

+

# https://github.com/NVIDIA/NeMo-Skills/blob/main/nemo_skills/prompt/config/eval/aai/mcq-4choices-boxed.yaml (for GPQA)

|

| 125 |

+

# https://github.com/NVIDIA/NeMo-Skills/blob/main/nemo_skills/prompt/config/eval/aai/mcq-10choices-boxed.yaml (MMLU-Pro)

|

| 126 |

+

|

| 127 |

+

messages = [

|

| 128 |

+

{

|

| 129 |

+

"role": "user",

|

| 130 |

+

"content": prompt.format(user="Write a program to calculate the sum of the first $N$ fibonacci numbers")},

|

| 131 |

+

]

|

| 132 |

+

outputs = pipeline(

|

| 133 |

+

messages,

|

| 134 |

+

max_new_tokens=64000,

|

| 135 |

+

)

|

| 136 |

+

print(outputs[0]["generated_text"][-1]['content'])

|

| 137 |

+

````

|

| 138 |

+

|

| 139 |

+

## Citation

|

| 140 |

+

|

| 141 |

+

If you find the data useful, please cite:

|

| 142 |

+

```

|

| 143 |

+

@article{ahmad2025opencodereasoning,

|

| 144 |

+

title={OpenCodeReasoning: Advancing Data Distillation for Competitive Coding},

|

| 145 |

+

author={Wasi Uddin Ahmad, Sean Narenthiran, Somshubra Majumdar, Aleksander Ficek, Siddhartha Jain, Jocelyn Huang, Vahid Noroozi, Boris Ginsburg},

|

| 146 |

+

year={2025},

|

| 147 |

+

eprint={2504.01943},

|

| 148 |

+

archivePrefix={arXiv},

|

| 149 |

+

primaryClass={cs.CL},

|

| 150 |

+

url={https://arxiv.org/abs/2504.01943},

|

| 151 |

+

}

|

| 152 |

+

```

|

| 153 |

+

|

| 154 |

+

```

|

| 155 |

+

@misc{ahmad2025opencodereasoningiisimpletesttime,

|

| 156 |

+

title={OpenCodeReasoning-II: A Simple Test Time Scaling Approach via Self-Critique},

|

| 157 |

+

author={Wasi Uddin Ahmad and Somshubra Majumdar and Aleksander Ficek and Sean Narenthiran and Mehrzad Samadi and Jocelyn Huang and Siddhartha Jain and Vahid Noroozi and Boris Ginsburg},

|

| 158 |

+

year={2025},

|

| 159 |

+

eprint={2507.09075},

|

| 160 |

+

archivePrefix={arXiv},

|

| 161 |

+

primaryClass={cs.CL},

|

| 162 |

+

url={https://arxiv.org/abs/2507.09075},

|

| 163 |

+

}

|

| 164 |

+

```

|

| 165 |

+

|

| 166 |

+

```

|

| 167 |

+

@misc{moshkov2025aimo2winningsolutionbuilding,

|

| 168 |

+

title={AIMO-2 Winning Solution: Building State-of-the-Art Mathematical Reasoning Models with OpenMathReasoning dataset},

|

| 169 |

+

author={Ivan Moshkov and Darragh Hanley and Ivan Sorokin and Shubham Toshniwal and Christof Henkel and Benedikt Schifferer and Wei Du and Igor Gitman},

|

| 170 |

+

year={2025},

|

| 171 |

+

eprint={2504.16891},

|

| 172 |

+

archivePrefix={arXiv},

|

| 173 |

+

primaryClass={cs.AI},

|

| 174 |

+

url={https://arxiv.org/abs/2504.16891},

|

| 175 |

+

}

|

| 176 |

+

```

|

| 177 |

+

|

| 178 |

+

## Additional Information:

|

| 179 |

+

|

| 180 |

+

### Deployment Geography:

|

| 181 |

+

Global<br>

|

| 182 |

+

|

| 183 |

+

### Use Case: <br>

|

| 184 |

+

This model is intended for developers and researchers who work on competitive math, code and science problems. It has been trained via only supervised fine-tuning to achieve strong scores on benchmarks. <br>

|

| 185 |

+

|

| 186 |

+

### Release Date: <br>

|

| 187 |

+

Huggingface [07/16/2025] via https://huggingface.co/nvidia/OpenReasoning-Nemotron-32B/ <br>

|

| 188 |

+

|

| 189 |

+

## Reference(s):

|

| 190 |

+

* [2504.01943] OpenCodeReasoning: Advancing Data Distillation for Competitive Coding

|

| 191 |

+

* [2504.01943] OpenCodeReasoning: Advancing Data Distillation for Competitive Coding

|

| 192 |

+

* [2504.16891] AIMO-2 Winning Solution: Building State-of-the-Art Mathematical Reasoning Models with OpenMathReasoning dataset

|

| 193 |

+

<br>

|

| 194 |

+

|

| 195 |

+

## Model Architecture: <br>

|

| 196 |

+

Architecture Type: Dense decoder-only Transformer model

|

| 197 |

+

Network Architecture: Qwen-32B-Instruct

|

| 198 |

+

<br>

|

| 199 |

+

**This model was developed based on Qwen2.5-32B-Instruct and has 32B model parameters. <br>**

|

| 200 |

+

|

| 201 |

+

**OpenReasoning-Nemotron-1.5B was developed based on Qwen2.5-1.5B-Instruct and has 1.5B model parameters. <br>**

|

| 202 |

+

|

| 203 |

+

**OpenReasoning-Nemotron-7B was developed based on Qwen2.5-7B-Instruct and has 7B model parameters. <br>**

|

| 204 |

+

|

| 205 |

+

**OpenReasoning-Nemotron-14B was developed based on Qwen2.5-14B-Instruct and has 14B model parameters. <br>**

|

| 206 |

+

|

| 207 |

+

**OpenReasoning-Nemotron-32B was developed based on Qwen2.5-32B-Instruct and has 32B model parameters. <br>**

|

| 208 |

+

|

| 209 |

+

## Input: <br>

|

| 210 |

+

**Input Type(s):** Text <br>

|

| 211 |

+

**Input Format(s):** String <br>

|

| 212 |

+

**Input Parameters:** One-Dimensional (1D) <br>

|

| 213 |

+

**Other Properties Related to Input:** Trained for up to 64,000 output tokens <br>

|

| 214 |

+

|

| 215 |

+

## Output: <br>

|

| 216 |

+

**Output Type(s):** Text <br>

|

| 217 |

+

**Output Format:** String <br>

|

| 218 |

+

**Output Parameters:** One-Dimensional (1D) <br>

|

| 219 |

+

**Other Properties Related to Output:** Trained for up to 64,000 output tokens <br>

|

| 220 |

+

|

| 221 |

+

Our AI models are designed and/or optimized to run on NVIDIA GPU-accelerated systems. By leveraging NVIDIA’s hardware (e.g. GPU cores) and software frameworks (e.g., CUDA libraries), the model achieves faster training and inference times compared to CPU-only solutions. <br>

|

| 222 |

+

|

| 223 |

+

## Software Integration : <br>

|

| 224 |

+

* Runtime Engine: NeMo 2.3.0 <br>

|

| 225 |

+

* Recommended Hardware Microarchitecture Compatibility: <br>

|

| 226 |

+

NVIDIA Ampere <br>

|

| 227 |

+

NVIDIA Hopper <br>

|

| 228 |

+

* Preferred/Supported Operating System(s): Linux <br>

|

| 229 |

+

|

| 230 |

+

## Model Version(s):

|

| 231 |

+

1.0 (7/16/2025) <br>

|

| 232 |

+

OpenReasoning-Nemotron-32B<br>

|

| 233 |

+

OpenReasoning-Nemotron-14B<br>

|

| 234 |

+

OpenReasoning-Nemotron-7B<br>

|

| 235 |

+

OpenReasoning-Nemotron-1.5B<br>

|

| 236 |

+

|

| 237 |

+

# Training and Evaluation Datasets: <br>

|

| 238 |

+

|

| 239 |

+

## Training Dataset:

|

| 240 |

+

|

| 241 |

+

The training corpus for OpenReasoning-Nemotron-32B is comprised of questions from [OpenCodeReasoning](https://huggingface.co/datasets/nvidia/OpenCodeReasoning) dataset, [OpenCodeReasoning-II](https://arxiv.org/abs/2507.09075), [OpenMathReasoning](https://huggingface.co/datasets/nvidia/OpenMathReasoning), and the Synthetic Science questions from the [Llama-Nemotron-Post-Training-Dataset](https://huggingface.co/datasets/nvidia/Llama-Nemotron-Post-Training-Dataset). All responses are generated using DeepSeek-R1-0528. We also include the instruction following and tool calling data from Llama-Nemotron-Post-Training-Dataset without modification.

|

| 242 |

+

|

| 243 |

+

Data Collection Method: Hybrid: Automated, Human, Synthetic <br>

|

| 244 |

+

Labeling Method: Hybrid: Automated, Human, Synthetic <br>

|

| 245 |

+

Properties: 5M DeepSeek-R1-0528 generated responses from OpenCodeReasoning questions (https://huggingface.co/datasets/nvidia/OpenCodeReasoning), [OpenMathReasoning](https://huggingface.co/datasets/nvidia/OpenMathReasoning), and the Synthetic Science questions from the [Llama-Nemotron-Post-Training-Dataset](https://huggingface.co/datasets/nvidia/Llama-Nemotron-Post-Training-Dataset). We also include the instruction following and tool calling data from Llama-Nemotron-Post-Training-Dataset without modification.

|

| 246 |

+

|

| 247 |

+

## Evaluation Dataset:

|

| 248 |

+

We used the following benchmarks to evaluate the model holistically.

|

| 249 |

+

|

| 250 |

+

### Math

|

| 251 |

+

- AIME 2024/2025 <br>

|

| 252 |

+

- HMMT <br>

|

| 253 |

+

- BRUNO 2025 <br>

|

| 254 |

+

|

| 255 |

+

### Code

|

| 256 |

+

- LiveCodeBench <br>

|

| 257 |

+

- SciCode <br>

|

| 258 |

+

|

| 259 |

+

### Science

|

| 260 |

+

- GPQA <br>

|

| 261 |

+

- MMLU-PRO <br>

|

| 262 |

+

- HLE <br>

|

| 263 |

+

|

| 264 |

+

|

| 265 |

+

Data Collection Method: Hybrid: Automated, Human, Synthetic <br>

|

| 266 |

+

Labeling Method: Hybrid: Automated, Human, Synthetic <br>

|

| 267 |

+

|

| 268 |

+

## Inference:

|

| 269 |

+

**Acceleration Engine:** vLLM, Tensor(RT)-LLM <br>

|

| 270 |

+

**Test Hardware** NVIDIA H100-80GB <br>

|

| 271 |

+

|

| 272 |

+

## Ethical Considerations:

|

| 273 |

+

NVIDIA believes Trustworthy AI is a shared responsibility and we have established policies and practices to enable development for a wide array of AI applications. When downloaded or used in accordance with our terms of service, developers should work with their internal model team to ensure this model meets requirements for the relevant industry and use case and addresses unforeseen product misuse.

|

| 274 |

+

|

| 275 |

+

For more detailed information on ethical considerations for this model, please see the Model Card++ Explainability, Bias, Safety & Security, and Privacy Subcards.

|

| 276 |

+

|

| 277 |

+

Please report model quality, risk, security vulnerabilities or NVIDIA AI Concerns [here](https://www.nvidia.com/en-us/support/submit-security-vulnerability/).

|

SAFETY.md

ADDED

|

@@ -0,0 +1,7 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Field | Response

|

| 2 |

+

:---------------------------------------------------|:----------------------------------

|

| 3 |

+

Model Application Field(s): | Reasoning for Code Generation<br>

|

| 4 |

+

Describe the life critical impact (if present). | Not Applicable <br>

|

| 5 |

+

Use Case Restrictions: | Abide by CC BY 4.0 <br>

|

| 6 |

+

Model and dataset restrictions: | The Principle of least privilege (PoLP) is applied limiting access for dataset generation and model development. Restrictions enforce dataset access during training, and dataset license constraints adhered to.

|

| 7 |

+

|

added_tokens.json

ADDED

|

@@ -0,0 +1,24 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"</tool_call>": 151658,

|

| 3 |

+

"<tool_call>": 151657,

|

| 4 |

+

"<|box_end|>": 151649,

|

| 5 |

+

"<|box_start|>": 151648,

|

| 6 |

+

"<|endoftext|>": 151643,

|

| 7 |

+

"<|file_sep|>": 151664,

|

| 8 |

+

"<|fim_middle|>": 151660,

|

| 9 |

+

"<|fim_pad|>": 151662,

|

| 10 |

+

"<|fim_prefix|>": 151659,

|

| 11 |

+

"<|fim_suffix|>": 151661,

|

| 12 |

+

"<|im_end|>": 151645,

|

| 13 |

+

"<|im_start|>": 151644,

|

| 14 |

+

"<|image_pad|>": 151655,

|

| 15 |

+

"<|object_ref_end|>": 151647,

|

| 16 |

+

"<|object_ref_start|>": 151646,

|

| 17 |

+

"<|quad_end|>": 151651,

|

| 18 |

+

"<|quad_start|>": 151650,

|

| 19 |

+

"<|repo_name|>": 151663,

|

| 20 |

+

"<|video_pad|>": 151656,

|

| 21 |

+

"<|vision_end|>": 151653,

|

| 22 |

+

"<|vision_pad|>": 151654,

|

| 23 |

+

"<|vision_start|>": 151652

|

| 24 |

+

}

|

chat_template.jinja

ADDED

|

@@ -0,0 +1,20 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{%- if messages[0]['role'] == 'system' %}

|

| 2 |

+

{{- '<|im_start|>system

|

| 3 |

+

' + messages[0]['content'] + '<|im_end|>

|

| 4 |

+

' }}

|

| 5 |

+

{%- else %}

|

| 6 |

+

{{- '<|im_start|>system

|

| 7 |

+

<|im_end|>

|

| 8 |

+

' }}

|

| 9 |

+

{%- endif %}

|

| 10 |

+

{%- for message in messages %}

|

| 11 |

+

{%- if (message.role == 'user') or (message.role == 'system' and not loop.first) or (message.role == 'assistant') %}

|

| 12 |

+

{{- '<|im_start|>' + message.role + '

|

| 13 |

+

' + message.content + '<|im_end|>' + '

|

| 14 |

+

' }}

|

| 15 |

+

{%- endif %}

|

| 16 |

+

{%- endfor %}

|

| 17 |

+

{%- if add_generation_prompt %}

|

| 18 |

+

{{- '<|im_start|>assistant

|

| 19 |

+

' }}

|

| 20 |

+

{%- endif %}

|

config.json

ADDED

|

@@ -0,0 +1,95 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"Qwen2ForCausalLM"

|

| 4 |

+

],

|

| 5 |

+

"attention_dropout": 0.0,

|

| 6 |

+

"eos_token_id": 151643,

|

| 7 |

+

"hidden_act": "silu",

|

| 8 |

+

"hidden_size": 5120,

|

| 9 |

+

"initializer_range": 0.02,

|

| 10 |

+

"intermediate_size": 27648,

|

| 11 |

+

"layer_types": [

|

| 12 |

+

"full_attention",

|

| 13 |

+

"full_attention",

|

| 14 |

+

"full_attention",

|

| 15 |

+

"full_attention",

|

| 16 |

+

"full_attention",

|

| 17 |

+

"full_attention",

|

| 18 |

+

"full_attention",

|

| 19 |

+

"full_attention",

|

| 20 |

+

"full_attention",

|

| 21 |

+

"full_attention",

|

| 22 |

+

"full_attention",

|

| 23 |

+

"full_attention",

|

| 24 |

+

"full_attention",

|

| 25 |

+

"full_attention",

|

| 26 |

+

"full_attention",

|

| 27 |

+

"full_attention",

|

| 28 |

+

"full_attention",

|

| 29 |

+

"full_attention",

|

| 30 |

+

"full_attention",

|

| 31 |

+

"full_attention",

|

| 32 |

+

"full_attention",

|

| 33 |

+

"full_attention",

|

| 34 |

+

"full_attention",

|

| 35 |

+

"full_attention",

|

| 36 |

+

"full_attention",

|

| 37 |

+

"full_attention",

|

| 38 |

+

"full_attention",

|

| 39 |

+

"full_attention",

|

| 40 |

+

"full_attention",

|

| 41 |

+

"full_attention",

|

| 42 |

+

"full_attention",

|

| 43 |

+

"full_attention",

|

| 44 |

+

"full_attention",

|

| 45 |

+

"full_attention",

|

| 46 |

+

"full_attention",

|

| 47 |

+

"full_attention",

|

| 48 |

+

"full_attention",

|

| 49 |

+

"full_attention",

|

| 50 |

+

"full_attention",

|

| 51 |

+

"full_attention",

|

| 52 |

+

"full_attention",

|

| 53 |

+

"full_attention",

|

| 54 |

+

"full_attention",

|

| 55 |

+

"full_attention",

|

| 56 |

+

"full_attention",

|

| 57 |

+

"full_attention",

|

| 58 |

+

"full_attention",

|

| 59 |

+

"full_attention",

|

| 60 |

+

"full_attention",

|

| 61 |

+

"full_attention",

|

| 62 |

+

"full_attention",

|

| 63 |

+

"full_attention",

|

| 64 |

+

"full_attention",

|

| 65 |

+

"full_attention",

|

| 66 |

+

"full_attention",

|

| 67 |

+

"full_attention",

|

| 68 |

+

"full_attention",

|

| 69 |

+

"full_attention",

|

| 70 |

+

"full_attention",

|

| 71 |

+

"full_attention",

|

| 72 |

+

"full_attention",

|

| 73 |

+

"full_attention",

|

| 74 |

+

"full_attention",

|

| 75 |

+

"full_attention"

|

| 76 |

+

],

|

| 77 |

+

"max_position_embeddings": 131072,

|

| 78 |

+

"max_window_layers": 64,

|

| 79 |

+

"model_type": "qwen2",

|

| 80 |

+

"num_attention_heads": 40,

|

| 81 |

+

"num_hidden_layers": 64,

|

| 82 |

+

"num_key_value_heads": 8,

|

| 83 |

+

"pad_token_id": 151654,

|

| 84 |

+

"rms_norm_eps": 1e-05,

|

| 85 |

+

"rope_scaling": null,

|

| 86 |

+

"rope_theta": 1000000.0,

|

| 87 |

+

"sliding_window": null,

|

| 88 |

+

"tie_word_embeddings": false,

|

| 89 |

+

"torch_dtype": "bfloat16",

|

| 90 |

+

"transformers_version": "4.53.2",

|

| 91 |

+

"unsloth_fixed": true,

|

| 92 |

+

"use_cache": true,

|

| 93 |

+

"use_sliding_window": false,

|

| 94 |

+

"vocab_size": 152064

|

| 95 |

+

}

|

generation_config.json

ADDED

|

@@ -0,0 +1,6 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"bos_token_id": 151643,

|

| 4 |

+

"eos_token_id": 151643,

|

| 5 |

+

"transformers_version": "4.47.1"

|

| 6 |

+

}

|

merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

model-00001-of-00007.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a33b4071d66ce4b7619d6c6afc58a94c4c502e0bbabad04d88e54b481798cc59

|

| 3 |

+

size 9767790336

|

model-00002-of-00007.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:01d1f06666b14c42151739d69a76fc9054236e6192d99d595f1f19920a92319d

|

| 3 |

+

size 9752118784

|

model-00003-of-00007.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:18bf2cd1f1dd2c559272627ad8460b8ad7f83c7ae60a6a268315f0b96dd92491

|

| 3 |

+

size 9752118816

|

model-00004-of-00007.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9c56e81bc54f1cff3ce84d244a65c1126bafb8a1e4b80a19b44618fba9ce479d

|

| 3 |

+

size 9752118816

|

model-00005-of-00007.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:82997b9f1ba9457dc9b863b817b6952c6a750c188f37e842c1d862ede02febba

|

| 3 |

+

size 9752118816

|

model-00006-of-00007.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:f3baa296d7d556ee239179714f4f1dfb0b693e8a7b0fd68d5e0725c915acfa6a

|

| 3 |

+

size 9752118816

|

model-00007-of-00007.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:354a694ed0b7e0d5961cfe2f36cc970066ec39414abfb8170cc2f3c4c0c5ad59

|

| 3 |

+

size 6999457200

|

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,778 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 65527752704

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"lm_head.weight": "model-00007-of-00007.safetensors",

|

| 7 |

+

"model.embed_tokens.weight": "model-00001-of-00007.safetensors",

|

| 8 |

+

"model.layers.0.input_layernorm.weight": "model-00001-of-00007.safetensors",

|

| 9 |

+

"model.layers.0.mlp.down_proj.weight": "model-00001-of-00007.safetensors",

|

| 10 |

+

"model.layers.0.mlp.gate_proj.weight": "model-00001-of-00007.safetensors",

|

| 11 |

+

"model.layers.0.mlp.up_proj.weight": "model-00001-of-00007.safetensors",

|

| 12 |

+

"model.layers.0.post_attention_layernorm.weight": "model-00001-of-00007.safetensors",

|

| 13 |

+

"model.layers.0.self_attn.k_proj.bias": "model-00001-of-00007.safetensors",

|

| 14 |

+

"model.layers.0.self_attn.k_proj.weight": "model-00001-of-00007.safetensors",

|

| 15 |

+

"model.layers.0.self_attn.o_proj.weight": "model-00001-of-00007.safetensors",

|

| 16 |

+

"model.layers.0.self_attn.q_proj.bias": "model-00001-of-00007.safetensors",

|

| 17 |

+

"model.layers.0.self_attn.q_proj.weight": "model-00001-of-00007.safetensors",

|

| 18 |

+

"model.layers.0.self_attn.v_proj.bias": "model-00001-of-00007.safetensors",

|

| 19 |

+

"model.layers.0.self_attn.v_proj.weight": "model-00001-of-00007.safetensors",

|

| 20 |

+

"model.layers.1.input_layernorm.weight": "model-00001-of-00007.safetensors",

|

| 21 |

+

"model.layers.1.mlp.down_proj.weight": "model-00001-of-00007.safetensors",

|

| 22 |

+

"model.layers.1.mlp.gate_proj.weight": "model-00001-of-00007.safetensors",

|

| 23 |

+

"model.layers.1.mlp.up_proj.weight": "model-00001-of-00007.safetensors",

|

| 24 |

+

"model.layers.1.post_attention_layernorm.weight": "model-00001-of-00007.safetensors",

|

| 25 |

+

"model.layers.1.self_attn.k_proj.bias": "model-00001-of-00007.safetensors",

|

| 26 |

+

"model.layers.1.self_attn.k_proj.weight": "model-00001-of-00007.safetensors",

|

| 27 |

+

"model.layers.1.self_attn.o_proj.weight": "model-00001-of-00007.safetensors",

|

| 28 |

+

"model.layers.1.self_attn.q_proj.bias": "model-00001-of-00007.safetensors",

|

| 29 |

+

"model.layers.1.self_attn.q_proj.weight": "model-00001-of-00007.safetensors",

|

| 30 |

+

"model.layers.1.self_attn.v_proj.bias": "model-00001-of-00007.safetensors",

|

| 31 |

+

"model.layers.1.self_attn.v_proj.weight": "model-00001-of-00007.safetensors",

|

| 32 |

+

"model.layers.10.input_layernorm.weight": "model-00002-of-00007.safetensors",

|

| 33 |

+

"model.layers.10.mlp.down_proj.weight": "model-00002-of-00007.safetensors",

|

| 34 |

+

"model.layers.10.mlp.gate_proj.weight": "model-00002-of-00007.safetensors",

|

| 35 |

+

"model.layers.10.mlp.up_proj.weight": "model-00002-of-00007.safetensors",

|

| 36 |

+

"model.layers.10.post_attention_layernorm.weight": "model-00002-of-00007.safetensors",

|

| 37 |

+

"model.layers.10.self_attn.k_proj.bias": "model-00002-of-00007.safetensors",

|

| 38 |

+

"model.layers.10.self_attn.k_proj.weight": "model-00002-of-00007.safetensors",

|

| 39 |

+

"model.layers.10.self_attn.o_proj.weight": "model-00002-of-00007.safetensors",

|

| 40 |

+

"model.layers.10.self_attn.q_proj.bias": "model-00002-of-00007.safetensors",

|

| 41 |

+

"model.layers.10.self_attn.q_proj.weight": "model-00002-of-00007.safetensors",

|

| 42 |

+

"model.layers.10.self_attn.v_proj.bias": "model-00002-of-00007.safetensors",

|

| 43 |

+

"model.layers.10.self_attn.v_proj.weight": "model-00002-of-00007.safetensors",

|

| 44 |

+

"model.layers.11.input_layernorm.weight": "model-00002-of-00007.safetensors",

|

| 45 |

+

"model.layers.11.mlp.down_proj.weight": "model-00002-of-00007.safetensors",

|

| 46 |

+

"model.layers.11.mlp.gate_proj.weight": "model-00002-of-00007.safetensors",

|

| 47 |

+

"model.layers.11.mlp.up_proj.weight": "model-00002-of-00007.safetensors",

|

| 48 |

+

"model.layers.11.post_attention_layernorm.weight": "model-00002-of-00007.safetensors",

|

| 49 |

+

"model.layers.11.self_attn.k_proj.bias": "model-00002-of-00007.safetensors",

|

| 50 |

+

"model.layers.11.self_attn.k_proj.weight": "model-00002-of-00007.safetensors",

|

| 51 |

+

"model.layers.11.self_attn.o_proj.weight": "model-00002-of-00007.safetensors",

|

| 52 |

+

"model.layers.11.self_attn.q_proj.bias": "model-00002-of-00007.safetensors",

|

| 53 |

+

"model.layers.11.self_attn.q_proj.weight": "model-00002-of-00007.safetensors",

|

| 54 |

+

"model.layers.11.self_attn.v_proj.bias": "model-00002-of-00007.safetensors",

|

| 55 |

+

"model.layers.11.self_attn.v_proj.weight": "model-00002-of-00007.safetensors",

|

| 56 |

+

"model.layers.12.input_layernorm.weight": "model-00002-of-00007.safetensors",

|

| 57 |

+