
Upload folder using huggingface_hub

This view is limited to 50 files because it contains too many changes.

- .gitattributes +2 -0

- README.md +240 -3

- added_tokens.json +28 -0

- chat_template.jinja +117 -0

- config.json +277 -0

- generation_config.json +13 -0

- merges.txt +0 -0

- model-00001-of-00040.safetensors +3 -0

- model-00002-of-00040.safetensors +3 -0

- model-00003-of-00040.safetensors +3 -0

- model-00004-of-00040.safetensors +3 -0

- model-00005-of-00040.safetensors +3 -0

- model-00006-of-00040.safetensors +3 -0

- model-00007-of-00040.safetensors +3 -0

- model-00008-of-00040.safetensors +3 -0

- model-00009-of-00040.safetensors +3 -0

- model-00010-of-00040.safetensors +3 -0

- model-00011-of-00040.safetensors +3 -0

- model-00012-of-00040.safetensors +3 -0

- model-00013-of-00040.safetensors +3 -0

- model-00014-of-00040.safetensors +3 -0

- model-00015-of-00040.safetensors +3 -0

- model-00016-of-00040.safetensors +3 -0

- model-00017-of-00040.safetensors +3 -0

- model-00018-of-00040.safetensors +3 -0

- model-00019-of-00040.safetensors +3 -0

- model-00020-of-00040.safetensors +3 -0

- model-00021-of-00040.safetensors +3 -0

- model-00022-of-00040.safetensors +3 -0

- model-00023-of-00040.safetensors +3 -0

- model-00024-of-00040.safetensors +3 -0

- model-00025-of-00040.safetensors +3 -0

- model-00026-of-00040.safetensors +3 -0

- model-00027-of-00040.safetensors +3 -0

- model-00028-of-00040.safetensors +3 -0

- model-00029-of-00040.safetensors +3 -0

- model-00030-of-00040.safetensors +3 -0

- model-00031-of-00040.safetensors +3 -0

- model-00032-of-00040.safetensors +3 -0

- model-00033-of-00040.safetensors +3 -0

- model-00034-of-00040.safetensors +3 -0

- model-00035-of-00040.safetensors +3 -0

- model-00036-of-00040.safetensors +3 -0

- model-00037-of-00040.safetensors +3 -0

- model-00038-of-00040.safetensors +3 -0

- model-00039-of-00040.safetensors +3 -0

- model-00040-of-00040.safetensors +3 -0

- model.safetensors.index.json +3 -0

- qwen3_coder_detector_sgl.py +474 -0

- qwen3coder_tool_parser_vllm.py +690 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,5 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|


| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

model.safetensors.index.json filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

README.md

CHANGED

|

@@ -1,3 +1,240 @@

|

|

| 1 |

-

---

|

| 2 |

-

|

| 3 |

-

-

|


| 1 |

+

---

|

| 2 |

+

tags:

|

| 3 |

+

- unsloth

|

| 4 |

+

base_model:

|

| 5 |

+

- Qwen/Qwen3-Coder-Next-FP8

|

| 6 |

+

library_name: transformers

|

| 7 |

+

license: apache-2.0

|

| 8 |

+

license_link: https://huggingface.co/Qwen/Qwen3-Coder-Next/blob/main/LICENSE

|

| 9 |

+

pipeline_tag: text-generation

|

| 10 |

+

---

|

| 11 |

+

> [!NOTE]

|

| 12 |

+

> Includes Unsloth **chat template fixes**! <br> For `llama.cpp`, use `--jinja`

|

| 13 |

+

>

|

| 14 |

+

|

| 15 |

+

<div>

|

| 16 |

+

<p style="margin-top: 0;margin-bottom: 0;">

|

| 17 |

+

<em><a href="https://docs.unsloth.ai/basics/unsloth-dynamic-v2.0-gguf">Unsloth Dynamic 2.0</a> achieves superior accuracy & outperforms other leading quants.</em>

|

| 18 |

+

</p>

|

| 19 |

+

<div style="display: flex; gap: 5px; align-items: center; ">

|

| 20 |

+

<a href="https://github.com/unslothai/unsloth/">

|

| 21 |

+

<img src="https://github.com/unslothai/unsloth/raw/main/images/unsloth%20new%20logo.png" width="133">

|

| 22 |

+

</a>

|

| 23 |

+

<a href="https://discord.gg/unsloth">

|

| 24 |

+

<img src="https://github.com/unslothai/unsloth/raw/main/images/Discord%20button.png" width="173">

|

| 25 |

+

</a>

|

| 26 |

+

<a href="https://docs.unsloth.ai/">

|

| 27 |

+

<img src="https://raw.githubusercontent.com/unslothai/unsloth/refs/heads/main/images/documentation%20green%20button.png" width="143">

|

| 28 |

+

</a>

|

| 29 |

+

</div>

|

| 30 |

+

</div>

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

# Qwen3-Coder-Next-FP8

|

| 34 |

+

|

| 35 |

+

## Highlights

|

| 36 |

+

|

| 37 |

+

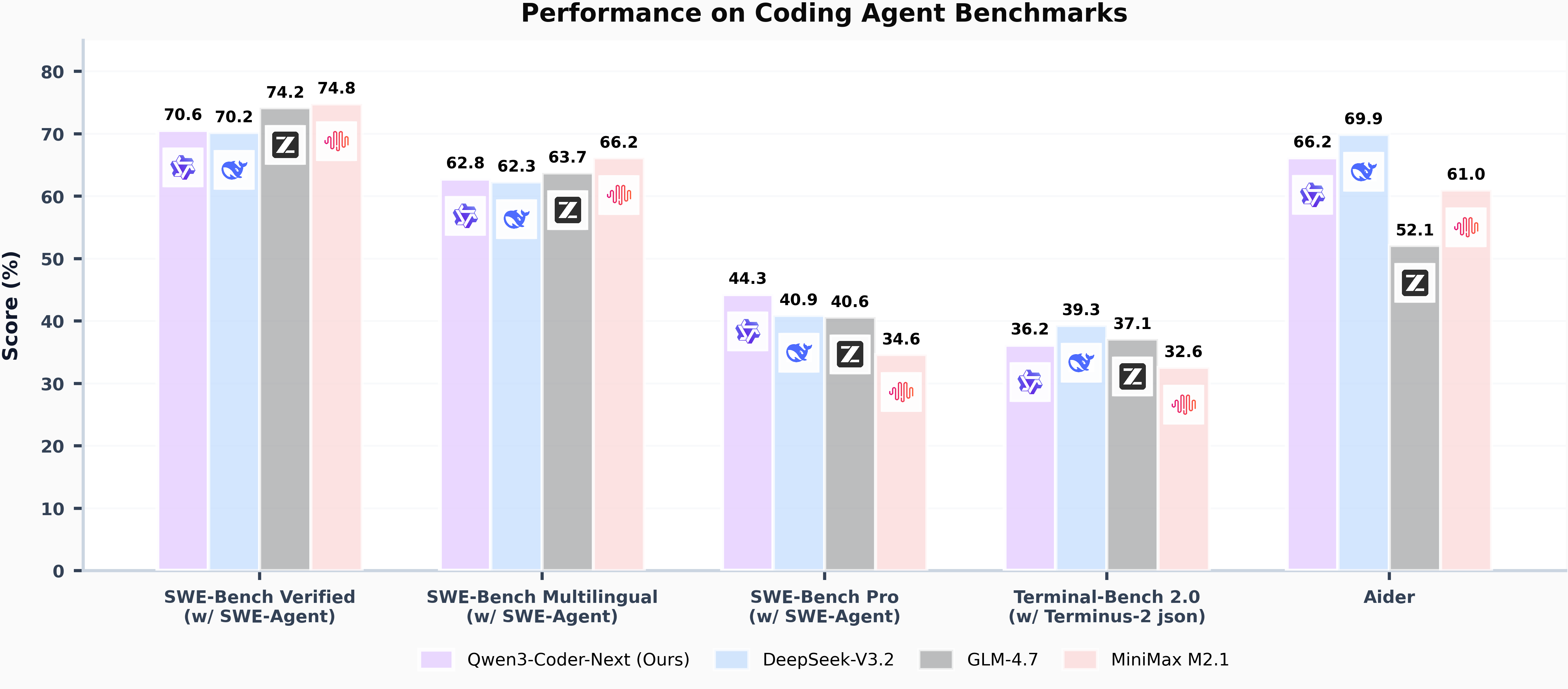

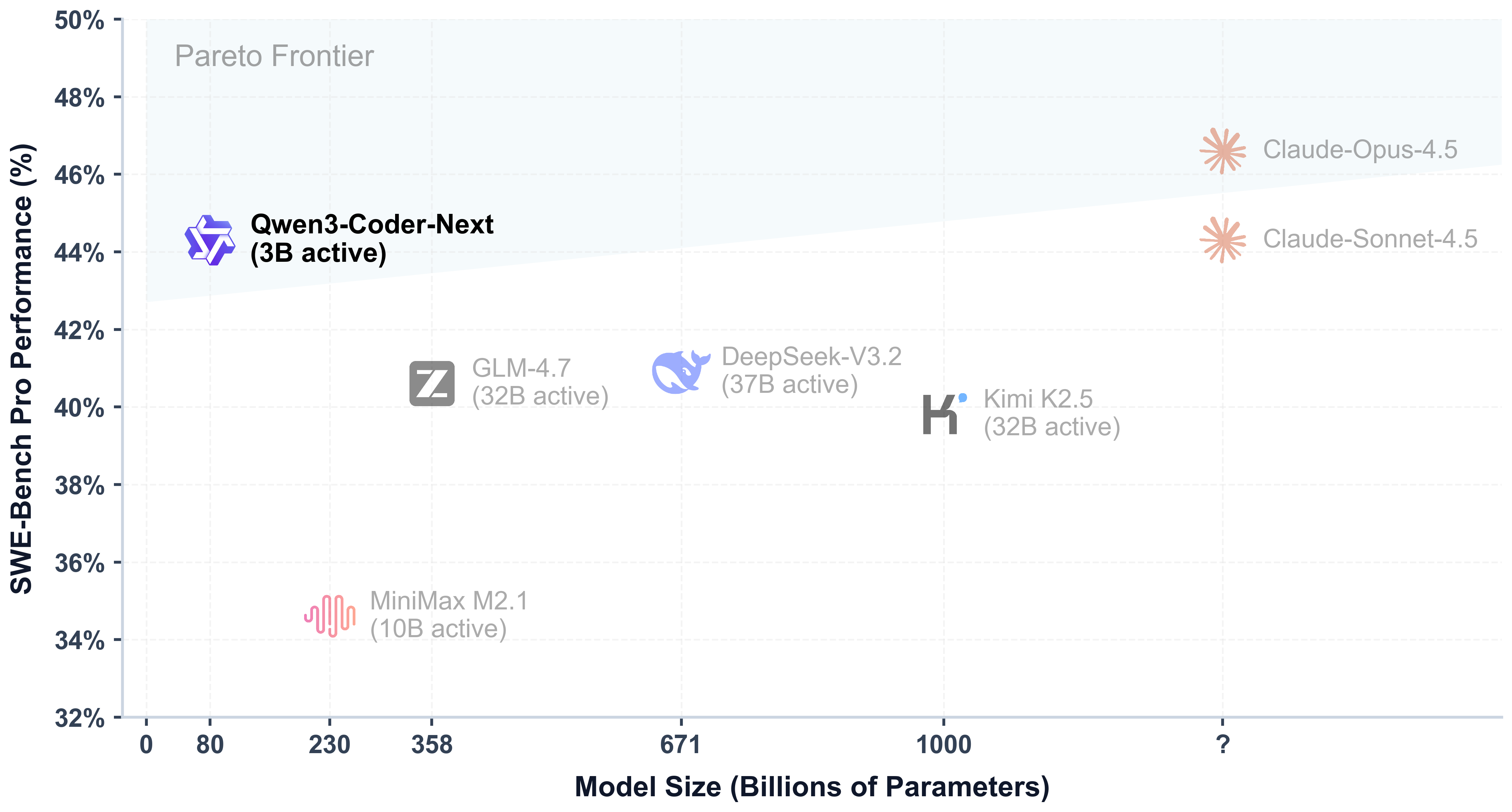

Today, we're announcing **Qwen3-Coder-Next-FP8**, an open-weight language model designed specifically for coding agents and local development. It features the following key enhancements:

|

| 38 |

+

|

| 39 |

+

- **Super Efficient with Significant Performance**: With only 3B activated parameters (80B total parameters), it achieves performance comparable to models with 10–20x more active parameters, making it highly cost-effective for agent deployment.

|

| 40 |

+

- **Advanced Agentic Capabilities**: Through an elaborate training recipe, it excels at long-horizon reasoning, complex tool usage, and recovery from execution failures, ensuring robust performance in dynamic coding tasks.

|

| 41 |

+

- **Versatile Integration with Real-World IDE**: Its 256k context length, combined with adaptability to various scaffold templates, enables seamless integration with different CLI/IDE platforms (e.g., Claude Code, Qwen Code, Qoder, Kilo, Trae, Cline, etc.), supporting diverse development environments.

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

> [!Note]

|

| 48 |

+

> This repository contains the **FP8-quantized Qwen3-Coder-Next** model checkpoint for convenience and performance.

|

| 49 |

+

> The quantization method is "fine-grained fp8" quantization with block size of 128.

|

| 50 |

+

> You can find more details in the `quantization_config` field in `config.json`.

|

| 51 |

+

>

|

| 52 |

+

> In addition, the experimental results presented in this model card are obtained from the original bfloat16 model prior to FP8 quantization.

|
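To make "block size of 128" concrete, here is a minimal sketch that counts how many scaling factors a weight matrix would need. It assumes square 128 x 128 blocks with one scale per block, which is a common layout for block-wise FP8 schemes; the authoritative settings are whatever `quantization_config` in `config.json` declares. The helper name `num_scale_blocks` and the example shape are illustrative only.

```python
import math

BLOCK = 128  # block size stated in the note above


def num_scale_blocks(rows: int, cols: int, block: int = BLOCK) -> int:
    """Number of (block x block) tiles, and hence scales, covering a rows x cols weight."""
    return math.ceil(rows / block) * math.ceil(cols / block)


# Example shape only: hidden size (2048) by expert intermediate size (512).
print(num_scale_blocks(2048, 512))
```

A dimension that is not a multiple of 128 still needs a final, partially filled block, which is why the sketch rounds up.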

| 53 |

+

|

| 54 |

+

## Model Overview

|

| 55 |

+

|

| 56 |

+

**Qwen3-Coder-Next-FP8** has the following features:

|

| 57 |

+

- Type: Causal Language Models

|

| 58 |

+

- Training Stage: Pretraining & Post-training

|

| 59 |

+

- Number of Parameters: 80B in total and 3B activated

|

| 60 |

+

- Number of Parameters (Non-Embedding): 79B

|

| 61 |

+

- Hidden Dimension: 2048

|

| 62 |

+

- Number of Layers: 48

|

| 63 |

+

- Hybrid Layout: 12 \* (3 \* (Gated DeltaNet -> MoE) -> 1 \* (Gated Attention -> MoE))

|

| 64 |

+

- Gated Attention:

|

| 65 |

+

- Number of Attention Heads: 16 for Q and 2 for KV

|

| 66 |

+

- Head Dimension: 256

|

| 67 |

+

- Rotary Position Embedding Dimension: 64

|

| 68 |

+

- Gated DeltaNet:

|

| 69 |

+

- Number of Linear Attention Heads: 32 for V and 16 for QK

|

| 70 |

+

- Head Dimension: 128

|

| 71 |

+

- Mixture of Experts:

|

| 72 |

+

- Number of Experts: 512

|

| 73 |

+

- Number of Activated Experts: 10

|

| 74 |

+

- Number of Shared Experts: 1

|

| 75 |

+

- Expert Intermediate Dimension: 512

|

| 76 |

+

- Context Length: 262,144 natively

|
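The hybrid layout above can be sanity-checked with a little arithmetic. The sketch below is illustrative and not taken from the model's code: it rebuilds a per-layer schedule from the figures listed here (48 layers, every fourth layer using gated attention). The helper name `build_layer_types` is hypothetical; the labels follow the `layer_types` entries in this commit's `config.json`.

```python
NUM_LAYERS = 48
FULL_ATTENTION_INTERVAL = 4  # 3 Gated DeltaNet layers, then 1 Gated Attention layer


def build_layer_types(num_layers: int, interval: int) -> list[str]:
    """Return one label per layer; every `interval`-th layer uses full attention."""
    return [
        "full_attention" if (i + 1) % interval == 0 else "linear_attention"
        for i in range(num_layers)
    ]


layer_types = build_layer_types(NUM_LAYERS, FULL_ATTENTION_INTERVAL)
num_full = layer_types.count("full_attention")
num_linear = layer_types.count("linear_attention")

# Grouped-query attention: 16 query heads share 2 key/value heads.
queries_per_kv_head = 16 // 2

print(num_linear, num_full, queries_per_kv_head)
```

The counts agree with the "12 \* (3 + 1)" description: 36 linear-attention layers and 12 full-attention layers.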

| 77 |

+

|

| 78 |

+

**NOTE: This model supports only non-thinking mode and does not generate ``<think></think>`` blocks in its output. Meanwhile, specifying `enable_thinking=False` is no longer required.**

|

| 79 |

+

|

| 80 |

+

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our [blog](https://qwen.ai/blog?id=qwen3-coder-next), [GitHub](https://github.com/QwenLM/Qwen3-Coder), and [Documentation](https://qwen.readthedocs.io/en/latest/).

|

| 81 |

+

|

| 82 |

+

|

| 83 |

+

## Quickstart

|

| 84 |

+

|

| 85 |

+

We advise you to use the latest version of `transformers`.

|

| 86 |

+

|

| 87 |

+

The following code snippet illustrates how to use the model to generate content from given inputs.

|

| 88 |

+

```python

|

| 89 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 90 |

+

|

| 91 |

+

model_name = "Qwen/Qwen3-Coder-Next-FP8"

|

| 92 |

+

|

| 93 |

+

# load the tokenizer and the model

|

| 94 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name)

|

| 95 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 96 |

+

model_name,

|

| 97 |

+

torch_dtype="auto",

|

| 98 |

+

device_map="auto"

|

| 99 |

+

)

|

| 100 |

+

|

| 101 |

+

# prepare the model input

|

| 102 |

+

prompt = "Write a quick sort algorithm."

|

| 103 |

+

messages = [

|

| 104 |

+

{"role": "user", "content": prompt}

|

| 105 |

+

]

|

| 106 |

+

text = tokenizer.apply_chat_template(

|

| 107 |

+

messages,

|

| 108 |

+

tokenize=False,

|

| 109 |

+

add_generation_prompt=True,

|

| 110 |

+

)

|

| 111 |

+

model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

|

| 112 |

+

|

| 113 |

+

# conduct text completion

|

| 114 |

+

generated_ids = model.generate(

|

| 115 |

+

**model_inputs,

|

| 116 |

+

max_new_tokens=65536

|

| 117 |

+

)

|

| 118 |

+

output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

|

| 119 |

+

|

| 120 |

+

content = tokenizer.decode(output_ids, skip_special_tokens=True)

|

| 121 |

+

|

| 122 |

+

print("content:", content)

|

| 123 |

+

```

|

| 124 |

+

|

| 125 |

+

**Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as `32,768`.**

|

| 126 |

+

|

| 127 |

+

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.

|

| 128 |

+

|

| 129 |

+

## Deployment

|

| 130 |

+

|

| 131 |

+

For deployment, you can use the latest `sglang` or `vllm` to create an OpenAI-compatible API endpoint.

|

| 132 |

+

|

| 133 |

+

### SGLang

|

| 134 |

+

|

| 135 |

+

[SGLang](https://github.com/sgl-project/sglang) is a fast serving framework for large language models and vision language models.

|

| 136 |

+

SGLang can be used to launch a server with an OpenAI-compatible API.

|

| 137 |

+

|

| 138 |

+

`sglang>=v0.5.8` is required for Qwen3-Coder-Next-FP8, which can be installed using:

|

| 139 |

+

```shell

|

| 140 |

+

pip install 'sglang[all]>=v0.5.8'

|

| 141 |

+

```

|

| 142 |

+

See [its documentation](https://docs.sglang.ai/get_started/install.html) for more details.

|

| 143 |

+

|

| 144 |

+

The following command creates an API endpoint at `http://localhost:30000/v1` with a maximum context length of 256K tokens, using tensor parallelism on 2 GPUs.

|

| 145 |

+

```shell

|

| 146 |

+

python -m sglang.launch_server --model Qwen/Qwen3-Coder-Next-FP8 --port 30000 --tp-size 2 --tool-call-parser qwen3_coder

|

| 147 |

+

```

|

| 148 |

+

|

| 149 |

+

> [!Note]

|

| 150 |

+

> The default context length is 256K. Consider reducing the context length to a smaller value, e.g., `32768`, if the server fails to start.

|

| 151 |

+

|

| 152 |

+

|

| 153 |

+

### vLLM

|

| 154 |

+

|

| 155 |

+

[vLLM](https://github.com/vllm-project/vllm) is a high-throughput and memory-efficient inference and serving engine for LLMs.

|

| 156 |

+

vLLM can be used to launch a server with an OpenAI-compatible API.

|

| 157 |

+

|

| 158 |

+

`vllm>=0.15.0` is required for Qwen3-Coder-Next-FP8, which can be installed using:

|

| 159 |

+

```shell

|

| 160 |

+

pip install 'vllm>=0.15.0'

|

| 161 |

+

```

|

| 162 |

+

See [its documentation](https://docs.vllm.ai/en/stable/getting_started/installation/index.html) for more details.

|

| 163 |

+

|

| 164 |

+

The following command creates an API endpoint at `http://localhost:8000/v1` with a maximum context length of 256K tokens, using tensor parallelism on 2 GPUs.

|

| 165 |

+

```shell

|

| 166 |

+

vllm serve Qwen/Qwen3-Coder-Next-FP8 --port 8000 --tensor-parallel-size 2 --enable-auto-tool-choice --tool-call-parser qwen3_coder

|

| 167 |

+

```

|

| 168 |

+

|

| 169 |

+

> [!Note]

|

| 170 |

+

> The default context length is 256K. Consider reducing the context length to a smaller value, e.g., `32768`, if the server fails to start.

|
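As a rough illustration of the context-length notes for both servers, the sketch below assembles launch commands with a reduced limit of 32768 tokens. It assumes the `--context-length` flag for SGLang and the `--max-model-len` flag for vLLM; check both against the versions you have installed. The helper names are hypothetical, and the script only prints the commands rather than running them.

```python
import shlex

MODEL = "Qwen/Qwen3-Coder-Next-FP8"
CONTEXT_LENGTH = 32768  # smaller than the 256K default, as suggested in the notes


def sglang_command(context_length: int) -> list[str]:
    """SGLang launch command with an explicit context limit (flag name assumed)."""
    return [
        "python", "-m", "sglang.launch_server",
        "--model-path", MODEL,
        "--port", "30000",
        "--tp-size", "2",
        "--tool-call-parser", "qwen3_coder",
        "--context-length", str(context_length),
    ]


def vllm_command(context_length: int) -> list[str]:
    """vLLM launch command with an explicit context limit (flag name assumed)."""
    return [
        "vllm", "serve", MODEL,
        "--port", "8000",
        "--tensor-parallel-size", "2",
        "--enable-auto-tool-choice",
        "--tool-call-parser", "qwen3_coder",
        "--max-model-len", str(context_length),
    ]


print(shlex.join(sglang_command(CONTEXT_LENGTH)))
print(shlex.join(vllm_command(CONTEXT_LENGTH)))
```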

| 171 |

+

|

| 172 |

+

|

| 173 |

+

## Agentic Coding

|

| 174 |

+

|

| 175 |

+

Qwen3-Coder-Next-FP8 excels in tool calling capabilities.

|

| 176 |

+

|

| 177 |

+

You can define or use any tools, as in the following example.

|

| 178 |

+

```python

|

| 179 |

+

# Your tool implementation

|

| 180 |

+

def square_the_number(input_num: float) -> float:

|

| 181 |

+

    return input_num ** 2

|

| 182 |

+

|

| 183 |

+

# Define Tools

|

| 184 |

+

tools=[

|

| 185 |

+

{

|

| 186 |

+

"type":"function",

|

| 187 |

+

"function":{

|

| 188 |

+

"name": "square_the_number",

|

| 189 |

+

"description": "output the square of the number.",

|

| 190 |

+

"parameters": {

|

| 191 |

+

"type": "object",

|

| 192 |

+

"required": ["input_num"],

|

| 193 |

+

"properties": {

|

| 194 |

+

'input_num': {

|

| 195 |

+

'type': 'number',

|

| 196 |

+

'description': 'input_num is a number that will be squared'

|

| 197 |

+

}

|

| 198 |

+

},

|

| 199 |

+

}

|

| 200 |

+

}

|

| 201 |

+

}

|

| 202 |

+

]

|

| 203 |

+

|

| 204 |

+

from openai import OpenAI

|

| 205 |

+

# Define LLM

|

| 206 |

+

client = OpenAI(

|

| 207 |

+

# Use a custom endpoint compatible with OpenAI API

|

| 208 |

+

base_url='http://localhost:8000/v1', # api_base

|

| 209 |

+

api_key="EMPTY"

|

| 210 |

+

)

|

| 211 |

+

|

| 212 |

+

messages = [{'role': 'user', 'content': 'square the number 1024'}]

|

| 213 |

+

|

| 214 |

+

completion = client.chat.completions.create(

|

| 215 |

+

messages=messages,

|

| 216 |

+

model="Qwen/Qwen3-Coder-Next-FP8",  # must match the name the server was launched with

|

| 217 |

+

max_tokens=65536,

|

| 218 |

+

tools=tools,

|

| 219 |

+

)

|

| 220 |

+

|

| 221 |

+

print(completion.choices[0])

|

| 222 |

+

```

|
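The example above stops at printing the model's reply. A minimal sketch of the next step, executing the requested tool locally, might look like the following. It assumes the reply follows the OpenAI chat-completions shape, where each tool call carries a function `name` and a JSON-encoded `arguments` string; `TOOL_REGISTRY`, `run_tool_call`, and the stand-in `example_call` are hypothetical names used only for illustration, so the snippet runs without a server.

```python
import json


def square_the_number(input_num: float) -> float:
    return input_num ** 2


# Registry mapping tool names (as declared in `tools`) to local callables.
TOOL_REGISTRY = {"square_the_number": square_the_number}


def run_tool_call(name: str, arguments_json: str):
    """Decode the JSON-encoded arguments and call the matching local function."""
    arguments = json.loads(arguments_json)
    return TOOL_REGISTRY[name](**arguments)


# Stand-in for completion.choices[0].message.tool_calls[0].function
example_call = {"name": "square_the_number", "arguments": '{"input_num": 1024}'}
result = run_tool_call(example_call["name"], example_call["arguments"])
print(result)
```

In a full agent loop, the result would be sent back as a `tool` message so the model can produce its final answer.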

| 223 |

+

|

| 224 |

+

## Best Practices

|

| 225 |

+

|

| 226 |

+

To achieve optimal performance, we recommend the following sampling parameters: `temperature=1.0`, `top_p=0.95`, `top_k=40`.

|
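One way to apply these sampling parameters is to put them in the request body sent to an OpenAI-compatible endpoint. The sketch below only builds and prints the JSON payload. Note that `top_k` is not part of the official OpenAI schema; this assumes the serving engine accepts it as an extension field, which should be verified for your server.

```python
import json

payload = {
    "model": "Qwen/Qwen3-Coder-Next-FP8",
    "messages": [{"role": "user", "content": "Write a quick sort algorithm."}],
    "temperature": 1.0,
    "top_p": 0.95,
    "top_k": 40,  # assumed to be accepted as a server-side extension
}

body = json.dumps(payload)
print(body)
```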

| 227 |

+

|

| 228 |

+

|

| 229 |

+

## Citation

|

| 230 |

+

|

| 231 |

+

If you find our work helpful, feel free to cite us.

|

| 232 |

+

|

| 233 |

+

```

|

| 234 |

+

@techreport{qwen_qwen3_coder_next_tech_report,

|

| 235 |

+

title = {Qwen3-Coder-Next Technical Report},

|

| 236 |

+

author = {{Qwen Team}},

|

| 237 |

+

url = {https://github.com/QwenLM/Qwen3-Coder/blob/main/qwen3_coder_next_tech_report.pdf},

|

| 238 |

+

note = {Accessed: 2026-02-03}

|

| 239 |

+

}

|

| 240 |

+

```

|

added_tokens.json

ADDED

|

@@ -0,0 +1,28 @@

|

|


| 1 |

+

{

|

| 2 |

+

"</think>": 151668,

|

| 3 |

+

"</tool_call>": 151658,

|

| 4 |

+

"</tool_response>": 151666,

|

| 5 |

+

"<think>": 151667,

|

| 6 |

+

"<tool_call>": 151657,

|

| 7 |

+

"<tool_response>": 151665,

|

| 8 |

+

"<|box_end|>": 151649,

|

| 9 |

+

"<|box_start|>": 151648,

|

| 10 |

+

"<|endoftext|>": 151643,

|

| 11 |

+

"<|file_sep|>": 151664,

|

| 12 |

+

"<|fim_middle|>": 151660,

|

| 13 |

+

"<|fim_pad|>": 151662,

|

| 14 |

+

"<|fim_prefix|>": 151659,

|

| 15 |

+

"<|fim_suffix|>": 151661,

|

| 16 |

+

"<|im_end|>": 151645,

|

| 17 |

+

"<|im_start|>": 151644,

|

| 18 |

+

"<|image_pad|>": 151655,

|

| 19 |

+

"<|object_ref_end|>": 151647,

|

| 20 |

+

"<|object_ref_start|>": 151646,

|

| 21 |

+

"<|quad_end|>": 151651,

|

| 22 |

+

"<|quad_start|>": 151650,

|

| 23 |

+

"<|repo_name|>": 151663,

|

| 24 |

+

"<|video_pad|>": 151656,

|

| 25 |

+

"<|vision_end|>": 151653,

|

| 26 |

+

"<|vision_pad|>": 151654,

|

| 27 |

+

"<|vision_start|>": 151652

|

| 28 |

+

}

|

chat_template.jinja

ADDED

|

@@ -0,0 +1,117 @@

|

|


| 1 |

+

{% macro render_extra_keys(json_dict, handled_keys) %}

|

| 2 |

+

{%- if json_dict is mapping %}

|

| 3 |

+

{%- for json_key in json_dict if json_key not in handled_keys %}

|

| 4 |

+

{%- if json_dict[json_key] is string %}

|

| 5 |

+

{{-'\n<' ~ json_key ~ '>' ~ (json_dict[json_key] | string) ~ '</' ~ json_key ~ '>' }}

|

| 6 |

+

{%- else %}

|

| 7 |

+

{{- '\n<' ~ json_key ~ '>' ~ (json_dict[json_key] | tojson | safe) ~ '</' ~ json_key ~ '>' }}

|

| 8 |

+

{%- endif %}

|

| 9 |

+

{%- endfor %}

|

| 10 |

+

{%- endif %}

|

| 11 |

+

{%- endmacro %}

|

| 12 |

+

|

| 13 |

+

{%- if messages[0]["role"] == "system" %}

|

| 14 |

+

{%- set system_message = messages[0]["content"] %}

|

| 15 |

+

{%- set loop_messages = messages[1:] %}

|

| 16 |

+

{%- else %}

|

| 17 |

+

{%- set loop_messages = messages %}

|

| 18 |

+

{%- endif %}

|

| 19 |

+

|

| 20 |

+

{%- if not tools is defined %}

|

| 21 |

+

{%- set tools = [] %}

|

| 22 |

+

{%- endif %}

|

| 23 |

+

|

| 24 |

+

{%- if system_message is defined %}

|

| 25 |

+

{{- "<|im_start|>system\n" + system_message }}

|

| 26 |

+

{%- else %}

|

| 27 |

+

{%- if tools is iterable and tools | length > 0 %}

|

| 28 |

+

{{- "<|im_start|>system\nYou are Qwen, a helpful AI assistant that can interact with a computer to solve tasks." }}

|

| 29 |

+

{%- endif %}

|

| 30 |

+

{%- endif %}

|

| 31 |

+

{%- if tools is iterable and tools | length > 0 %}

|

| 32 |

+

{{- "\n\n# Tools\n\nYou have access to the following functions:\n\n" }}

|

| 33 |

+

{{- "<tools>" }}

|

| 34 |

+

{%- for tool in tools %}

|

| 35 |

+

{%- if tool.function is defined %}

|

| 36 |

+

{%- set tool = tool.function %}

|

| 37 |

+

{%- endif %}

|

| 38 |

+

{{- "\n<function>\n<name>" ~ tool.name ~ "</name>" }}

|

| 39 |

+

{%- if tool.description is defined %}

|

| 40 |

+

{{- '\n<description>' ~ (tool.description | trim) ~ '</description>' }}

|

| 41 |

+

{%- endif %}

|

| 42 |

+

{{- '\n<parameters>' }}

|

| 43 |

+

{%- if tool.parameters is defined and tool.parameters is mapping and tool.parameters.properties is defined and tool.parameters.properties is mapping %}

|

| 44 |

+

{%- for param_name, param_fields in tool.parameters.properties|items %}

|

| 45 |

+

{{- '\n<parameter>' }}

|

| 46 |

+

{{- '\n<name>' ~ param_name ~ '</name>' }}

|

| 47 |

+

{%- if param_fields.type is defined %}

|

| 48 |

+

{{- '\n<type>' ~ (param_fields.type | string) ~ '</type>' }}

|

| 49 |

+

{%- endif %}

|

| 50 |

+

{%- if param_fields.description is defined %}

|

| 51 |

+

{{- '\n<description>' ~ (param_fields.description | trim) ~ '</description>' }}

|

| 52 |

+

{%- endif %}

|

| 53 |

+

{%- set handled_keys = ['name', 'type', 'description'] %}

|

| 54 |

+

{{- render_extra_keys(param_fields, handled_keys) }}

|

| 55 |

+

{{- '\n</parameter>' }}

|

| 56 |

+

{%- endfor %}

|

| 57 |

+

{%- endif %}

|

| 58 |

+

{%- set handled_keys = ['type', 'properties'] %}

|

| 59 |

+

{{- render_extra_keys(tool.parameters, handled_keys) }}

|

| 60 |

+

{{- '\n</parameters>' }}

|

| 61 |

+

{%- set handled_keys = ['type', 'name', 'description', 'parameters'] %}

|

| 62 |

+

{{- render_extra_keys(tool, handled_keys) }}

|

| 63 |

+

{{- '\n</function>' }}

|

| 64 |

+

{%- endfor %}

|

| 65 |

+

{{- "\n</tools>" }}

|

| 66 |

+

{{- '\n\nIf you choose to call a function ONLY reply in the following format with NO suffix:\n\n<tool_call>\n<function=example_function_name>\n<parameter=example_parameter_1>\nvalue_1\n</parameter>\n<parameter=example_parameter_2>\nThis is the value for the second parameter\nthat can span\nmultiple lines\n</parameter>\n</function>\n</tool_call>\n\n<IMPORTANT>\nReminder:\n- Function calls MUST follow the specified format: an inner <function=...></function> block must be nested within <tool_call></tool_call> XML tags\n- Required parameters MUST be specified\n- You may provide optional reasoning for your function call in natural language BEFORE the function call, but NOT after\n- If there is no function call available, answer the question like normal with your current knowledge and do not tell the user about function calls\n</IMPORTANT>' }}

|

| 67 |

+

{%- endif %}

|

| 68 |

+

{%- if system_message is defined %}

|

| 69 |

+

{{- '<|im_end|>\n' }}

|

| 70 |

+

{%- else %}

|

| 71 |

+

{%- if tools is iterable and tools | length > 0 %}

|

| 72 |

+

{{- '<|im_end|>\n' }}

|

| 73 |

+

{%- endif %}

|

| 74 |

+

{%- endif %}

|

| 75 |

+

{%- for message in loop_messages %}

|

| 76 |

+

{%- if message.role == "assistant" and message.tool_calls is defined and message.tool_calls is iterable and message.tool_calls | length > 0 %}

|

| 77 |

+

{{- '<|im_start|>' + message.role }}

|

| 78 |

+

{%- if message.content is defined and message.content is string and message.content | trim | length > 0 %}

|

| 79 |

+

{{- '\n' + message.content | trim + '\n' }}

|

| 80 |

+

{%- endif %}

|

| 81 |

+

{%- for tool_call in message.tool_calls %}

|

| 82 |

+

{%- if tool_call.function is defined %}

|

| 83 |

+

{%- set tool_call = tool_call.function %}

|

| 84 |

+

{%- endif %}

|

| 85 |

+

{{- '\n<tool_call>\n<function=' + tool_call.name + '>\n' }}

|

| 86 |

+

{%- if tool_call.arguments is defined %}

|

| 87 |

+

{%- for args_name, args_value in tool_call.arguments|items %}

|

| 88 |

+

{{- '<parameter=' + args_name + '>\n' }}

|

| 89 |

+

{%- set args_value = args_value if args_value is string else args_value | tojson | safe %}

|

| 90 |

+

{{- args_value }}

|

| 91 |

+

{{- '\n</parameter>\n' }}

|

| 92 |

+

{%- endfor %}

|

| 93 |

+

{%- endif %}

|

| 94 |

+

{{- '</function>\n</tool_call>' }}

|

| 95 |

+

{%- endfor %}

|

| 96 |

+

{{- '<|im_end|>\n' }}

|

| 97 |

+

{%- elif message.role == "user" or message.role == "system" or message.role == "assistant" %}

|

| 98 |

+

{{- '<|im_start|>' + message.role + '\n' + message.content + '<|im_end|>' + '\n' }}

|

| 99 |

+

{%- elif message.role == "tool" %}

|

| 100 |

+

{%- if loop.previtem and loop.previtem.role != "tool" %}

|

| 101 |

+

{{- '<|im_start|>user' }}

|

| 102 |

+

{%- endif %}

|

| 103 |

+

{{- '\n<tool_response>\n' }}

|

| 104 |

+

{{- message.content }}

|

| 105 |

+

{{- '\n</tool_response>' }}

|

| 106 |

+

{%- if not loop.last and loop.nextitem.role != "tool" %}

|

| 107 |

+

{{- '<|im_end|>\n' }}

|

| 108 |

+

{%- elif loop.last %}

|

| 109 |

+

{{- '<|im_end|>\n' }}

|

| 110 |

+

{%- endif %}

|

| 111 |

+

{%- else %}

|

| 112 |

+

{{- '<|im_start|>' + message.role + '\n' + message.content + '<|im_end|>\n' }}

|

| 113 |

+

{%- endif %}

|

| 114 |

+

{%- endfor %}

|

| 115 |

+

{%- if add_generation_prompt %}

|

| 116 |

+

{{- '<|im_start|>assistant\n' }}

|

| 117 |

+

{%- endif %}

|
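To see what the assistant tool-call branch of this template produces, the sketch below mirrors that part of the logic in plain Python. It is an approximation for illustration, not the template itself: `json.dumps` stands in for the `tojson` filter, so escaping details may differ, and `render_tool_call` is a hypothetical helper name.

```python
import json


def render_tool_call(name: str, arguments: dict) -> str:
    """Mirror the template's rendering of a single assistant tool call."""
    parts = ["\n<tool_call>\n<function=" + name + ">\n"]
    for arg_name, arg_value in arguments.items():
        # Strings are emitted verbatim; everything else is JSON-encoded.
        value = arg_value if isinstance(arg_value, str) else json.dumps(arg_value)
        parts.append("<parameter=" + arg_name + ">\n" + value + "\n</parameter>\n")
    parts.append("</function>\n</tool_call>")
    return "".join(parts)


rendered = render_tool_call("square_the_number", {"input_num": 1024})
print(rendered)
```

This is the same `<function=...>` / `<parameter=...>` layout that the system prompt in the template instructs the model to emit.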

config.json

ADDED

|

@@ -0,0 +1,277 @@

|

|


| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"Qwen3NextForCausalLM"

|

| 4 |

+

],

|

| 5 |

+

"attention_bias": false,

|

| 6 |

+

"attention_dropout": 0,

|

| 7 |

+

"decoder_sparse_step": 1,

|

| 8 |

+

"torch_dtype": "bfloat16",

|

| 9 |

+

"eos_token_id": 151645,

|

| 10 |

+

"full_attention_interval": 4,

|

| 11 |

+

"head_dim": 256,

|

| 12 |

+

"hidden_act": "silu",

|

| 13 |

+

"hidden_size": 2048,

|

| 14 |

+

"initializer_range": 0.02,

|

| 15 |

+

"intermediate_size": 5120,

|

| 16 |

+

"layer_types": [

|

| 17 |

+

"linear_attention",

|

| 18 |

+

"linear_attention",

|

| 19 |

+

"linear_attention",

|

| 20 |

+

"full_attention",

|

| 21 |

+

"linear_attention",

|

| 22 |

+

"linear_attention",

|

| 23 |

+

"linear_attention",

|

| 24 |

+

"full_attention",

|

| 25 |

+

"linear_attention",

|

| 26 |

+

"linear_attention",

|

| 27 |

+

"linear_attention",

|

| 28 |

+

"full_attention",

|

| 29 |

+

"linear_attention",

|

| 30 |

+

"linear_attention",

|

| 31 |

+

"linear_attention",

|

| 32 |

+

"full_attention",

|

| 33 |

+

"linear_attention",

|

| 34 |

+

"linear_attention",

|

| 35 |

+

"linear_attention",

|

| 36 |

+

"full_attention",

|

| 37 |

+

"linear_attention",

|

| 38 |

+

"linear_attention",

|

| 39 |

+

"linear_attention",

|

| 40 |

+

"full_attention",

|

| 41 |

+

"linear_attention",

|

| 42 |

+

"linear_attention",

|

| 43 |

+

"linear_attention",

|

| 44 |

+

"full_attention",

|

| 45 |

+

"linear_attention",

|

| 46 |

+

"linear_attention",

|

| 47 |

+

"linear_attention",

|

| 48 |

+

"full_attention",

|

| 49 |

+

"linear_attention",

|

| 50 |

+

"linear_attention",

|

| 51 |

+

"linear_attention",

|

| 52 |

+

"full_attention",

|

| 53 |

+

"linear_attention",

|

| 54 |

+

"linear_attention",

|

| 55 |

+

"linear_attention",

|

| 56 |

+

"full_attention",

|

| 57 |

+

"linear_attention",

|

| 58 |

+

"linear_attention",

|

| 59 |

+

"linear_attention",

|

| 60 |

+

"full_attention",

|

| 61 |

+

"linear_attention",

|

| 62 |

+

"linear_attention",

|

| 63 |

+

"linear_attention",

|

| 64 |

+

"full_attention"

|

| 65 |

+

],

|

| 66 |

+

"linear_conv_kernel_dim": 4,

|

| 67 |

+

"linear_key_head_dim": 128,

|

| 68 |

+

"linear_num_key_heads": 16,

|

| 69 |

+

"linear_num_value_heads": 32,

|

| 70 |

+

"linear_value_head_dim": 128,

|

| 71 |

+

"max_position_embeddings": 262144,

|

| 72 |

+

"mlp_only_layers": [],

|

| 73 |

+

"model_type": "qwen3_next",

|

| 74 |

+

"moe_intermediate_size": 512,

|

| 75 |

+

"norm_topk_prob": true,

|

| 76 |

+

"num_attention_heads": 16,

|

| 77 |

+

"num_experts": 512,

|

| 78 |

+

"num_experts_per_tok": 10,

|

| 79 |

+

"num_hidden_layers": 48,

|

| 80 |

+

"num_key_value_heads": 2,

|

| 81 |

+

"output_router_logits": false,

|

| 82 |

+

"pad_token_id": 151654,

|

| 83 |

+

"partial_rotary_factor": 0.25,

|

| 84 |

+

"quantization_config": {

|

| 85 |

+

"act_per_tensor": false,

|

| 86 |

+

"activation_scheme": "dynamic",

|

| 87 |

+

"modules_to_not_convert": [

|

| 88 |

+

"lm_head",

|

| 89 |

+

"model.embed_tokens",

|

| 90 |

+

"model.layers.0.linear_attn.conv1d",

|

| 91 |

+

"model.layers.0.linear_attn.in_proj_ba",

|

| 92 |

+

"model.layers.0.mlp.gate",

|

| 93 |

+

"model.layers.0.mlp.shared_expert_gate",

|

| 94 |

+

"model.layers.1.linear_attn.conv1d",

|

| 95 |

+

"model.layers.1.linear_attn.in_proj_ba",

|

| 96 |

+

"model.layers.1.mlp.gate",

|

| 97 |

+

"model.layers.1.mlp.shared_expert_gate",

|

| 98 |

+

"model.layers.10.linear_attn.conv1d",

|

| 99 |

+

"model.layers.10.linear_attn.in_proj_ba",

|

| 100 |

+

"model.layers.10.mlp.gate",

|

| 101 |

+

"model.layers.10.mlp.shared_expert_gate",

|

| 102 |

+

"model.layers.11.mlp.gate",

|

| 103 |

+

"model.layers.11.mlp.shared_expert_gate",

|

| 104 |

+

"model.layers.12.linear_attn.conv1d",

|

| 105 |

+

"model.layers.12.linear_attn.in_proj_ba",

|

| 106 |

+

"model.layers.12.mlp.gate",

|

| 107 |

+

"model.layers.12.mlp.shared_expert_gate",

|

| 108 |

+

"model.layers.13.linear_attn.conv1d",

|

| 109 |

+

"model.layers.13.linear_attn.in_proj_ba",

|

| 110 |

+

"model.layers.13.mlp.gate",

|

| 111 |

+

"model.layers.13.mlp.shared_expert_gate",

|

| 112 |

+

"model.layers.14.linear_attn.conv1d",

|

| 113 |

+

"model.layers.14.linear_attn.in_proj_ba",

|

| 114 |

+

"model.layers.14.mlp.gate",

|

| 115 |

+

"model.layers.14.mlp.shared_expert_gate",

|

| 116 |

+

"model.layers.15.mlp.gate",

|

| 117 |

+

"model.layers.15.mlp.shared_expert_gate",

|

| 118 |

+

"model.layers.16.linear_attn.conv1d",

|

| 119 |

+

"model.layers.16.linear_attn.in_proj_ba",

|

| 120 |

+

"model.layers.16.mlp.gate",

|

| 121 |

+

"model.layers.16.mlp.shared_expert_gate",

|

| 122 |

+

"model.layers.17.linear_attn.conv1d",

|

| 123 |

+

"model.layers.17.linear_attn.in_proj_ba",

|

| 124 |

+

"model.layers.17.mlp.gate",

|

| 125 |

+

"model.layers.17.mlp.shared_expert_gate",

|

| 126 |

+

"model.layers.18.linear_attn.conv1d",

|

| 127 |

+

"model.layers.18.linear_attn.in_proj_ba",

|

| 128 |

+

"model.layers.18.mlp.gate",

|

| 129 |

+

"model.layers.18.mlp.shared_expert_gate",

|

| 130 |

+

"model.layers.19.mlp.gate",

|

| 131 |

+

"model.layers.19.mlp.shared_expert_gate",

|

| 132 |

+

"model.layers.2.linear_attn.conv1d",

|

| 133 |

+

"model.layers.2.linear_attn.in_proj_ba",

|

| 134 |

+

"model.layers.2.mlp.gate",

|

| 135 |

+

"model.layers.2.mlp.shared_expert_gate",

|

| 136 |

+

"model.layers.20.linear_attn.conv1d",

|

| 137 |

+

"model.layers.20.linear_attn.in_proj_ba",

|

| 138 |

+

"model.layers.20.mlp.gate",

|

| 139 |

+

"model.layers.20.mlp.shared_expert_gate",

|

| 140 |

+

"model.layers.21.linear_attn.conv1d",

|

| 141 |

+

"model.layers.21.linear_attn.in_proj_ba",

|

| 142 |

+

"model.layers.21.mlp.gate",

|

| 143 |

+

"model.layers.21.mlp.shared_expert_gate",

|

| 144 |

+

"model.layers.22.linear_attn.conv1d",

|

| 145 |

+

"model.layers.22.linear_attn.in_proj_ba",

|

| 146 |

+

"model.layers.22.mlp.gate",

|

| 147 |

+

"model.layers.22.mlp.shared_expert_gate",

|

| 148 |

+

"model.layers.23.mlp.gate",

|

| 149 |

+

"model.layers.23.mlp.shared_expert_gate",

|

| 150 |

+

"model.layers.24.linear_attn.conv1d",

|

| 151 |

+

"model.layers.24.linear_attn.in_proj_ba",

|

| 152 |

+

"model.layers.24.mlp.gate",

|

| 153 |

+

"model.layers.24.mlp.shared_expert_gate",

|

| 154 |

+

"model.layers.25.linear_attn.conv1d",

|

| 155 |

+

"model.layers.25.linear_attn.in_proj_ba",

|

| 156 |

+

"model.layers.25.mlp.gate",

|

| 157 |

+

"model.layers.25.mlp.shared_expert_gate",

|

| 158 |

+

"model.layers.26.linear_attn.conv1d",

|

| 159 |

+

"model.layers.26.linear_attn.in_proj_ba",

|

| 160 |

+

"model.layers.26.mlp.gate",

|

| 161 |

+

"model.layers.26.mlp.shared_expert_gate",

|

| 162 |

+

"model.layers.27.mlp.gate",

|

| 163 |

+

"model.layers.27.mlp.shared_expert_gate",

|

| 164 |

+

"model.layers.28.linear_attn.conv1d",

|

| 165 |

+

"model.layers.28.linear_attn.in_proj_ba",

|

| 166 |

+

"model.layers.28.mlp.gate",

|

| 167 |

+

"model.layers.28.mlp.shared_expert_gate",

|

| 168 |

+

"model.layers.29.linear_attn.conv1d",

|

| 169 |

+

"model.layers.29.linear_attn.in_proj_ba",

|

| 170 |

+

"model.layers.29.mlp.gate",

|

| 171 |

+

"model.layers.29.mlp.shared_expert_gate",

|

| 172 |

+

"model.layers.3.mlp.gate",

|

| 173 |

+

"model.layers.3.mlp.shared_expert_gate",

|

| 174 |

+

"model.layers.30.linear_attn.conv1d",

|

| 175 |

+

"model.layers.30.linear_attn.in_proj_ba",

|

| 176 |

+

"model.layers.30.mlp.gate",

|

| 177 |

+

"model.layers.30.mlp.shared_expert_gate",

|

| 178 |

+

"model.layers.31.mlp.gate",

|

| 179 |

+

"model.layers.31.mlp.shared_expert_gate",

|

| 180 |

+

"model.layers.32.linear_attn.conv1d",

|

| 181 |

+

"model.layers.32.linear_attn.in_proj_ba",

|

| 182 |

+

"model.layers.32.mlp.gate",

|

| 183 |

+

"model.layers.32.mlp.shared_expert_gate",

|

| 184 |

+

"model.layers.33.linear_attn.conv1d",

|

| 185 |

+

"model.layers.33.linear_attn.in_proj_ba",

|

| 186 |

+

"model.layers.33.mlp.gate",

|

| 187 |

+

"model.layers.33.mlp.shared_expert_gate",

|

| 188 |

+

"model.layers.34.linear_attn.conv1d",

|

| 189 |

+

"model.layers.34.linear_attn.in_proj_ba",

|

| 190 |

+

"model.layers.34.mlp.gate",

|

| 191 |

+

"model.layers.34.mlp.shared_expert_gate",

|

| 192 |

+

"model.layers.35.mlp.gate",

|

| 193 |

+

"model.layers.35.mlp.shared_expert_gate",

|

| 194 |

+

"model.layers.36.linear_attn.conv1d",

|

| 195 |

+

"model.layers.36.linear_attn.in_proj_ba",

|

| 196 |

+

"model.layers.36.mlp.gate",

|

| 197 |

+

"model.layers.36.mlp.shared_expert_gate",

|

| 198 |

+

"model.layers.37.linear_attn.conv1d",

|

| 199 |

+

"model.layers.37.linear_attn.in_proj_ba",

|

| 200 |

+

"model.layers.37.mlp.gate",

|

| 201 |

+

"model.layers.37.mlp.shared_expert_gate",

|

| 202 |

+

"model.layers.38.linear_attn.conv1d",

|

| 203 |

+

"model.layers.38.linear_attn.in_proj_ba",

|

| 204 |

+

"model.layers.38.mlp.gate",

|

| 205 |

+

"model.layers.38.mlp.shared_expert_gate",

|

| 206 |

+

"model.layers.39.mlp.gate",

|

| 207 |

+

"model.layers.39.mlp.shared_expert_gate",

|

| 208 |

+

"model.layers.4.linear_attn.conv1d",

|

| 209 |

+

"model.layers.4.linear_attn.in_proj_ba",

|

| 210 |

+

"model.layers.4.mlp.gate",

|

| 211 |

+

"model.layers.4.mlp.shared_expert_gate",

|

| 212 |

+

"model.layers.40.linear_attn.conv1d",

|

| 213 |

+

"model.layers.40.linear_attn.in_proj_ba",

|

| 214 |

+

"model.layers.40.mlp.gate",

|

| 215 |

+

"model.layers.40.mlp.shared_expert_gate",

|

| 216 |

+

"model.layers.41.linear_attn.conv1d",

|

| 217 |

+

"model.layers.41.linear_attn.in_proj_ba",

|

| 218 |

+

"model.layers.41.mlp.gate",

|

| 219 |

+

"model.layers.41.mlp.shared_expert_gate",

|

| 220 |

+

"model.layers.42.linear_attn.conv1d",

|

| 221 |

+

"model.layers.42.linear_attn.in_proj_ba",

|

| 222 |

+

"model.layers.42.mlp.gate",

|

| 223 |

+

"model.layers.42.mlp.shared_expert_gate",

|

| 224 |

+

"model.layers.43.mlp.gate",

|

| 225 |

+

"model.layers.43.mlp.shared_expert_gate",

|

| 226 |

+

"model.layers.44.linear_attn.conv1d",

|

| 227 |

+

"model.layers.44.linear_attn.in_proj_ba",

|

| 228 |

+

"model.layers.44.mlp.gate",

|

| 229 |

+

"model.layers.44.mlp.shared_expert_gate",

|

| 230 |

+

"model.layers.45.linear_attn.conv1d",

|

| 231 |

+

"model.layers.45.linear_attn.in_proj_ba",

|

| 232 |

+

"model.layers.45.mlp.gate",

|

| 233 |

+

"model.layers.45.mlp.shared_expert_gate",

|

| 234 |

+

"model.layers.46.linear_attn.conv1d",

|

| 235 |

+

"model.layers.46.linear_attn.in_proj_ba",

|

| 236 |

+

"model.layers.46.mlp.gate",

|

| 237 |

+

"model.layers.46.mlp.shared_expert_gate",

|

| 238 |

+

"model.layers.47.mlp.gate",

|

| 239 |

+

"model.layers.47.mlp.shared_expert_gate",

|

| 240 |

+

"model.layers.5.linear_attn.conv1d",

|

| 241 |

+

"model.layers.5.linear_attn.in_proj_ba",

|

| 242 |

+

"model.layers.5.mlp.gate",

|

| 243 |

+

"model.layers.5.mlp.shared_expert_gate",

|

| 244 |

+

"model.layers.6.linear_attn.conv1d",

|

| 245 |

+

"model.layers.6.linear_attn.in_proj_ba",

|

| 246 |

+

"model.layers.6.mlp.gate",

|

| 247 |

+

"model.layers.6.mlp.shared_expert_gate",

|

| 248 |

+

"model.layers.7.mlp.gate",

|

| 249 |

+

"model.layers.7.mlp.shared_expert_gate",

|

| 250 |

+

"model.layers.8.linear_attn.conv1d",

|

| 251 |

+

"model.layers.8.linear_attn.in_proj_ba",

|

| 252 |

+

"model.layers.8.mlp.gate",

|

| 253 |

+

"model.layers.8.mlp.shared_expert_gate",

|

| 254 |

+

"model.layers.9.linear_attn.conv1d",

|

| 255 |

+

"model.layers.9.linear_attn.in_proj_ba",

|

| 256 |

+

"model.layers.9.mlp.gate",

|

| 257 |

+

"model.layers.9.mlp.shared_expert_gate"

|

| 258 |

+

],

|

| 259 |

+

"quant_method": "fp8",

|

| 260 |

+

"weight_block_size": [

|

| 261 |

+

128,

|

| 262 |

+

128

|

| 263 |

+

],

|

| 264 |

+

"weight_per_tensor": false

|

| 265 |

+

},

|

| 266 |

+

"rms_norm_eps": 1e-06,

|

| 267 |

+

"rope_scaling": null,

|

| 268 |

+

"rope_theta": 5000000,

|

| 269 |

+

"router_aux_loss_coef": 0.001,

|

| 270 |

+

"shared_expert_intermediate_size": 512,

|

| 271 |

+

"tie_word_embeddings": false,

|

| 272 |

+

"transformers_version": "4.57.6",

|

| 273 |

+

"unsloth_fixed": true,

|

| 274 |

+

"use_cache": true,

|

| 275 |

+

"use_sliding_window": false,

|

| 276 |

+

"vocab_size": 151936

|

| 277 |

+

}

|
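The `layer_types` list and the `modules_to_not_convert` list in this config appear to follow a regular pattern, which the sketch below reconstructs as a consistency check. The rule used here (every `full_attention_interval`-th layer is `full_attention`, and only linear-attention layers contribute `linear_attn.*` entries) is inferred from the values listed above, not taken from the Transformers source; the helper names are hypothetical.

```python
def build_layer_types(num_hidden_layers, full_attention_interval):
    """Assumed rule: layer i (0-based) uses full attention when
    (i + 1) is a multiple of the interval, linear attention otherwise."""
    return [
        "full_attention" if (i + 1) % full_attention_interval == 0
        else "linear_attention"
        for i in range(num_hidden_layers)
    ]


def unquantized_modules(layer_types):
    """Rebuild the set of module names kept out of FP8, as listed in the config."""
    names = {"lm_head", "model.embed_tokens"}
    for i, kind in enumerate(layer_types):
        prefix = f"model.layers.{i}"
        if kind == "linear_attention":
            names.add(f"{prefix}.linear_attn.conv1d")
            names.add(f"{prefix}.linear_attn.in_proj_ba")
        # The router gate and shared-expert gate are listed for every layer.
        names.add(f"{prefix}.mlp.gate")
        names.add(f"{prefix}.mlp.shared_expert_gate")
    return names


# Values copied from the config above.
layer_types = build_layer_types(num_hidden_layers=48, full_attention_interval=4)
skipped = unquantized_modules(layer_types)
```

With these values the sketch yields 12 full-attention layers and 170 unquantized module names, which matches the number of entries in the `modules_to_not_convert` list above. The config lists them in lexicographic order, so a comparison against the real file should be done on sets.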

generation_config.json

ADDED

|

@@ -0,0 +1,13 @@


| 1 |

+

{

|

| 2 |

+

"bos_token_id": 151643,

|

| 3 |

+

"do_sample": true,

|

| 4 |

+

"eos_token_id": [

|

| 5 |

+

151645,

|

| 6 |

+

151643

|

| 7 |

+

],

|

| 8 |

+

"pad_token_id": 151643,

|

| 9 |

+

"temperature": 1.0,

|

| 10 |

+

"top_k": 40,

|

| 11 |

+

"top_p": 0.95,

|

| 12 |

+

"transformers_version": "4.57.3"

|

| 13 |

+

}

|
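To illustrate what the sampling defaults above (`temperature` 1.0, `top_k` 40, `top_p` 0.95) mean, here is a simplified, standard-library-only sketch of temperature scaling followed by top-k and nucleus filtering. It is not the Transformers implementation, which applies these steps as separate logits processors and may differ in detail; the function names are hypothetical.

```python
import math
import random


def filter_probs(logits, temperature=1.0, top_k=40, top_p=0.95):
    """Return {token_index: probability} after top-k and top-p filtering."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]  # numerically stable softmax
    total = sum(exps)
    probs = [e / total for e in exps]

    # Top-k: keep the k most likely tokens, then renormalize.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    k_mass = sum(probs[i] for i in order)

    # Top-p: keep the smallest prefix whose cumulative mass reaches top_p.
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i] / k_mass
        if cumulative >= top_p:
            break

    kept_mass = sum(probs[i] for i in kept)
    return {i: probs[i] / kept_mass for i in kept}


def sample(logits, **kwargs):
    """Draw one token index from the filtered distribution."""
    dist = filter_probs(logits, **kwargs)
    return random.choices(list(dist), weights=list(dist.values()), k=1)[0]


dist = filter_probs([2.0, 1.0, 0.0, -1.0], top_k=2)
```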

merges.txt

ADDED

|

The diff for this file is too large to render.


model-00001-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:81fc8306344bb5d37b3a1d6c267fb7b0f2cf05ecb05cd065016aab87e6a55a33

|

| 3 |

+

size 2313917472

|
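Each `.safetensors` entry in this commit is a Git LFS pointer rather than the weights themselves: three `key value` lines giving the spec version, the content hash, and the size in bytes. A minimal sketch of reading such a pointer, using the first shard above as input (the function name `parse_lfs_pointer` is hypothetical):

```python
def parse_lfs_pointer(text):
    """Parse a Git LFS pointer file into its version, hash and size fields."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),  # size of the real file, in bytes
    }


pointer = parse_lfs_pointer(
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:81fc8306344bb5d37b3a1d6c267fb7b0f2cf05ecb05cd065016aab87e6a55a33\n"
    "size 2313917472\n"
)
```

The `size` field is what a downloader can compare against the fetched file, and the `digest` can be checked with `hashlib.sha256` once the shard is on disk.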

model-00002-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7c1eb3b175b9122bf28e64dca9dac63696c229bd6e0ced2351a4e2c414aa33af

|

| 3 |

+

size 2001731528

|

model-00003-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:62c034d5f7b21b25cc2497a9cebfbebbda500d0d3a9b08381d476c481dc2df5b

|

| 3 |

+

size 2001406544

|

model-00004-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:879eaa1ce8ba3b7c3e48c24deb039a9972bcb724f790945a916003efd4312616

|

| 3 |

+

size 2001732024

|

model-00005-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:785259af0e3e7959d48360927f9556f1b636a5d800dcbc8d2a11c295b9caab4f

|

| 3 |

+

size 2001732960

|

model-00006-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:79c183f8388e35516509d9488c2e6a6f0aaafc1ff5a3c8b1c872d5d23622c385

|

| 3 |

+

size 2002790192

|

model-00007-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5b33fd40d09ef4ddd75ac342c6661a3658e26d1b3ca7e38d0774a0b9d235c73a

|

| 3 |

+

size 2001731792

|

model-00008-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:3993df6622752249b565409a8c339f933e2bc326d432c75385ade37f74c18257

|

| 3 |

+

size 2001731960

|

model-00009-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:074c68c97085550d3746cc6fb0e0ec74ee634c09c9bd59a9d1abb099e251ae89

|

| 3 |

+

size 2001737552

|

model-00010-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9ff4156992002e43012eabf9f0c7dcc7c9e7060d124af9ac188461aa4f93ef0d

|

| 3 |

+

size 2001411064

|

model-00011-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7a94ef41eabb382b138e781ac0f6054e649ee6e9583298e0b84a0dd75f4d4f97

|

| 3 |

+

size 2003119416

|

model-00012-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:185b7d1bfc64adfa9076918c56b77391d589e99d254c84b281fd79c8a2b8b98d

|

| 3 |

+

size 2001735720

|

model-00013-of-00040.safetensors

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+