Upload folder using huggingface_hub

- README.md +31 -3

- chat_template.json +3 -0

- config.json +2 -5

- generation_config.json +13 -12

- model-00001-of-00004.safetensors +2 -2

- model-00002-of-00004.safetensors +2 -2

- model-00003-of-00004.safetensors +2 -2

- model-00004-of-00004.safetensors +2 -2

- model.safetensors.index.json +448 -449

README.md

CHANGED

|

@@ -5,7 +5,12 @@ base_model:

|

|

| 5 |

- Qwen/Qwen3-VL-8B-Instruct

|

| 6 |

license: apache-2.0

|

| 7 |

pipeline_tag: image-text-to-text

|

|

|

|

| 8 |

---

|

| 9 |

<div>

|

| 10 |

<p style="margin-top: 0;margin-bottom: 0;">

|

| 11 |

<em><a href="https://docs.unsloth.ai/basics/unsloth-dynamic-v2.0-gguf">Unsloth Dynamic 2.0</a> achieves superior accuracy & outperforms other leading quants.</em>

|

|

@@ -79,10 +84,10 @@ This is the weight repository for Qwen3-VL-8B-Instruct.

|

|

| 79 |

|

| 80 |

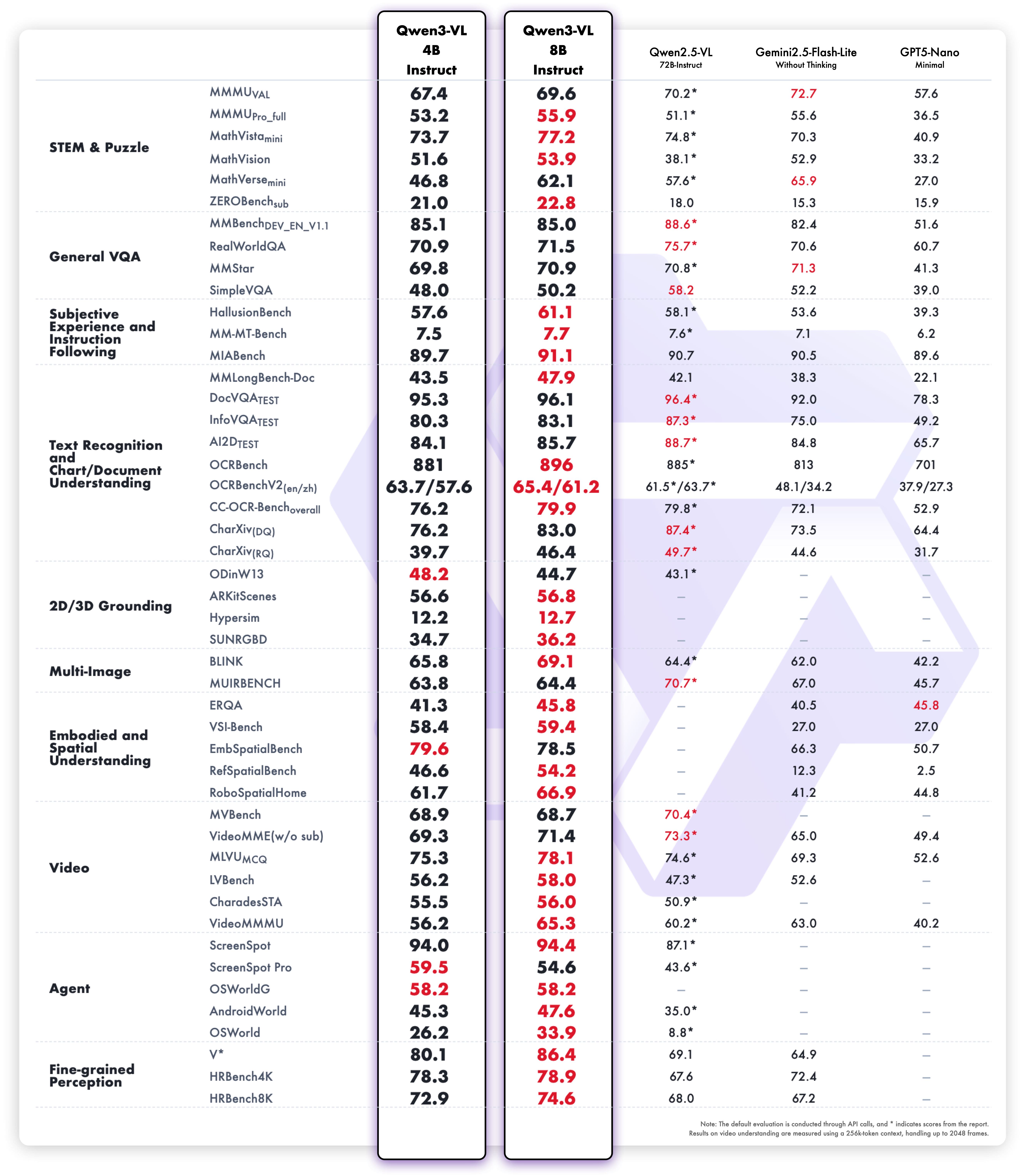

**Multimodal performance**

|

| 81 |

|

| 82 |

-

|

| 116 |

|

| 117 |

-

processor = AutoProcessor.from_pretrained("Qwen/

|

| 118 |

|

| 119 |

messages = [

|

| 120 |

{

|

|

@@ -137,6 +142,7 @@ inputs = processor.apply_chat_template(

|

|

| 137 |

return_dict=True,

|

| 138 |

return_tensors="pt"

|

| 139 |

)

|

|

|

|

| 140 |

|

| 141 |

# Inference: Generation of the output

|

| 142 |

generated_ids = model.generate(**inputs, max_new_tokens=128)

|

|

@@ -149,6 +155,28 @@ output_text = processor.batch_decode(

|

|

| 149 |

print(output_text)

|

| 150 |

```

|

| 151 |

|

| 152 |

|

| 153 |

|

| 154 |

## Citation

|

|

|

|

| 5 |

- Qwen/Qwen3-VL-8B-Instruct

|

| 6 |

license: apache-2.0

|

| 7 |

pipeline_tag: image-text-to-text

|

| 8 |

+

library_name: transformers

|

| 9 |

---

|

| 10 |

+

> [!NOTE]

|

| 11 |

+

> Includes Unsloth **chat template fixes**! <br> For `llama.cpp`, use `--jinja`

|

| 12 |

+

>

|

| 13 |

+

|

| 14 |

<div>

|

| 15 |

<p style="margin-top: 0;margin-bottom: 0;">

|

| 16 |

<em><a href="https://docs.unsloth.ai/basics/unsloth-dynamic-v2.0-gguf">Unsloth Dynamic 2.0</a> achieves superior accuracy & outperforms other leading quants.</em>

|

|

|

|

| 84 |

|

| 85 |

**Multimodal performance**

|

| 86 |

|

| 87 |

+

|

| 88 |

|

| 89 |

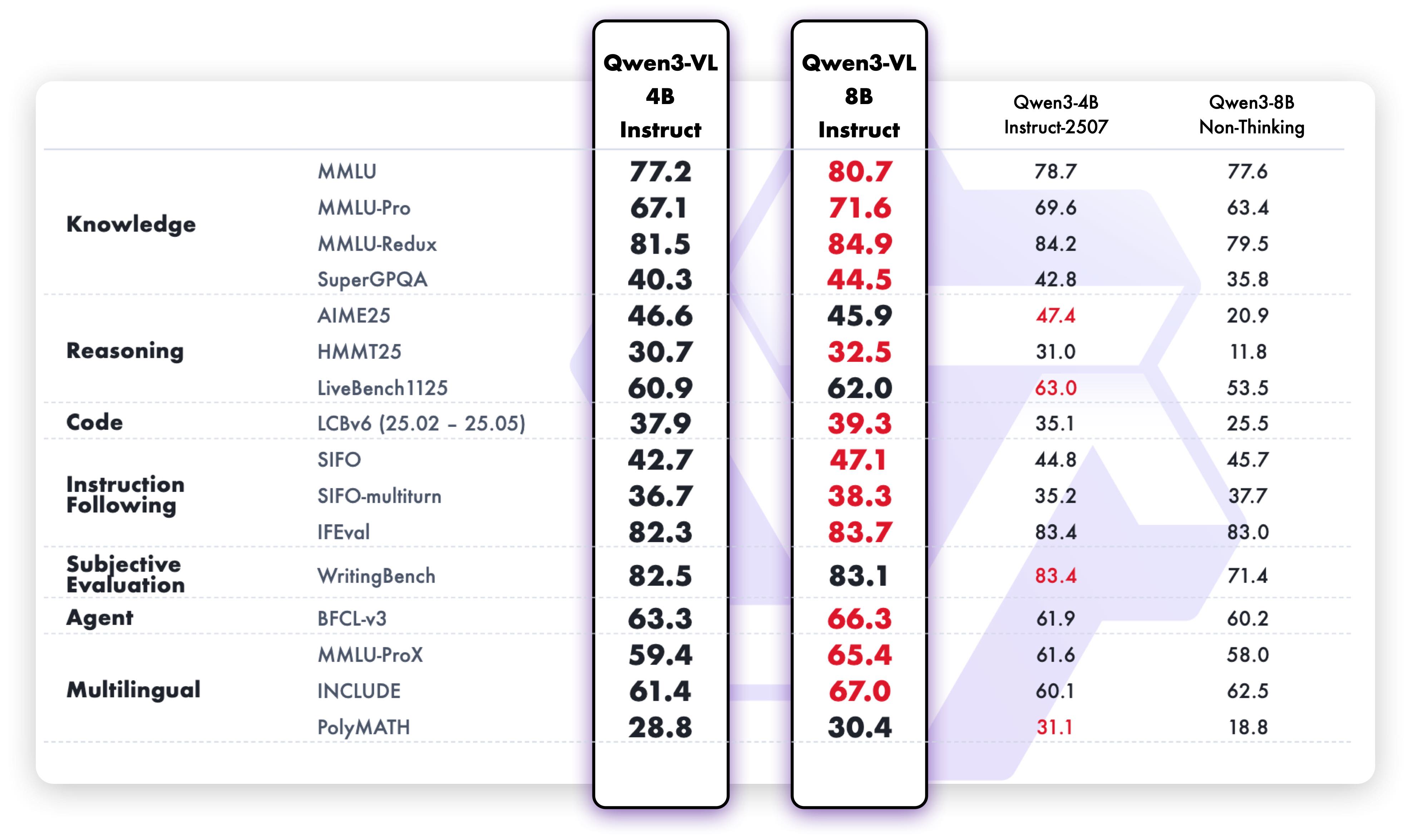

**Pure text performance**

|

| 90 |

+

|

| 91 |

|

| 92 |

## Quickstart

|

| 93 |

|

|

|

|

| 119 |

# device_map="auto",

|

| 120 |

# )

|

| 121 |

|

| 122 |

+

processor = AutoProcessor.from_pretrained("Qwen/Qwen3-VL-8B-Instruct")

|

| 123 |

|

| 124 |

messages = [

|

| 125 |

{

|

|

|

|

| 142 |

return_dict=True,

|

| 143 |

return_tensors="pt"

|

| 144 |

)

|

| 145 |

+

inputs = inputs.to(model.device)

|

| 146 |

|

| 147 |

# Inference: Generation of the output

|

| 148 |

generated_ids = model.generate(**inputs, max_new_tokens=128)

|

|

|

|

| 155 |

print(output_text)

|

| 156 |

```

|

| 157 |

|

| 158 |

+

### Generation Hyperparameters

|

| 159 |

+

#### VL

|

| 160 |

+

```bash

|

| 161 |

+

export greedy='false'

|

| 162 |

+

export top_p=0.8

|

| 163 |

+

export top_k=20

|

| 164 |

+

export temperature=0.7

|

| 165 |

+

export repetition_penalty=1.0

|

| 166 |

+

export presence_penalty=1.5

|

| 167 |

+

export out_seq_length=16384

|

| 168 |

+

```

|

| 169 |

+

|

| 170 |

+

#### Text

|

| 171 |

+

```bash

|

| 172 |

+

export greedy='false'

|

| 173 |

+

export top_p=1.0

|

| 174 |

+

export top_k=40

|

| 175 |

+

export repetition_penalty=1.0

|

| 176 |

+

export presence_penalty=2.0

|

| 177 |

+

export temperature=1.0

|

| 178 |

+

export out_seq_length=32768

|

| 179 |

+

```
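The two presets above are written as shell variables. Below is a minimal sketch of how they might be turned into keyword arguments for the `model.generate` call in the Quickstart. The mapping is an assumption, not something the model card specifies: `greedy='false'` is read as `do_sample=True`, and `out_seq_length` is read as `max_new_tokens`. `presence_penalty` is dropped because `generate` in `transformers` has no parameter of that name; serving stacks such as vLLM do accept it. `to_generate_kwargs` is a hypothetical helper name.

```python
# Values copied from the "Generation Hyperparameters" section of the README.
VL_PRESET = {
    "greedy": False, "top_p": 0.8, "top_k": 20, "temperature": 0.7,
    "repetition_penalty": 1.0, "presence_penalty": 1.5, "out_seq_length": 16384,
}
TEXT_PRESET = {
    "greedy": False, "top_p": 1.0, "top_k": 40, "temperature": 1.0,
    "repetition_penalty": 1.0, "presence_penalty": 2.0, "out_seq_length": 32768,
}


def to_generate_kwargs(preset):
    """Hypothetical helper: translate a README preset into generate() kwargs.

    Assumed mapping (not stated in the model card):
      greedy         -> do_sample (inverted)
      out_seq_length -> max_new_tokens
    presence_penalty is intentionally omitted; see the note above.
    """
    return {
        "do_sample": not preset["greedy"],
        "top_p": preset["top_p"],
        "top_k": preset["top_k"],
        "temperature": preset["temperature"],
        "repetition_penalty": preset["repetition_penalty"],
        "max_new_tokens": preset["out_seq_length"],
    }


vl_kwargs = to_generate_kwargs(VL_PRESET)
text_kwargs = to_generate_kwargs(TEXT_PRESET)
print(vl_kwargs)
print(text_kwargs)
```

With the Quickstart objects in scope, the call would then read `model.generate(**inputs, **vl_kwargs)` in place of the fixed `max_new_tokens=128`.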

|

| 180 |

|

| 181 |

|

| 182 |

## Citation

|

chat_template.json

ADDED

|

@@ -0,0 +1,3 @@

|

|

| 1 |

+

{

|

| 2 |

+

"chat_template": "{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0].role == 'system' %}\n {%- if messages[0].content is string %}\n {{- messages[0].content }}\n {%- else %}\n {%- for content in messages[0].content %}\n {%- if 'text' in content %}\n {{- content.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {{- '\\n\\n' }}\n {%- endif %}\n {{- \"# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\" }}\n {%- for tool in tools %}\n {{- \"\\n\" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \"\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\"name\\\": <function-name>, \\\"arguments\\\": <args-json-object>}\\n</tool_call><|im_end|>\\n\" }}\n{%- else %}\n {%- if messages[0].role == 'system' %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0].content is string %}\n {{- messages[0].content }}\n {%- else %}\n {%- for content in messages[0].content %}\n {%- if 'text' in content %}\n {{- content.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- set image_count = namespace(value=0) %}\n{%- set video_count = namespace(value=0) %}\n{%- for message in messages %}\n {%- if message.role == \"user\" %}\n {{- '<|im_start|>' + message.role + '\\n' }}\n {%- if message.content is string %}\n {{- message.content }}\n {%- else %}\n {%- for content in message.content %}\n {%- if content.type == 'image' or 'image' in content or 'image_url' in content %}\n {%- set image_count.value = image_count.value + 1 %}\n {%- if add_vision_id %}Picture {{ image_count.value }}: {% endif -%}\n <|vision_start|><|image_pad|><|vision_end|>\n {%- elif content.type == 'video' or 'video' in content %}\n {%- set video_count.value = video_count.value + 1 %}\n {%- if add_vision_id %}Video {{ 
video_count.value }}: {% endif -%}\n <|vision_start|><|video_pad|><|vision_end|>\n {%- elif 'text' in content %}\n {{- content.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"assistant\" %}\n {{- '<|im_start|>' + message.role + '\\n' }}\n {%- if message.content is string %}\n {{- message.content }}\n {%- else %}\n {%- for content_item in message.content %}\n {%- if 'text' in content_item %}\n {{- content_item.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {%- if message.tool_calls %}\n {%- for tool_call in message.tool_calls %}\n {%- if (loop.first and message.content) or (not loop.first) %}\n {{- '\\n' }}\n {%- endif %}\n {%- if tool_call.function %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '<tool_call>\\n{\"name\": \"' }}\n {{- tool_call.name }}\n {{- '\", \"arguments\": ' }}\n {%- if tool_call.arguments is string %}\n {{- tool_call.arguments }}\n {%- else %}\n {{- tool_call.arguments | tojson }}\n {%- endif %}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {%- endif %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"tool\" %}\n {%- if loop.first or (messages[loop.index0 - 1].role != \"tool\") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {%- if message.content is string %}\n {{- message.content }}\n {%- else %}\n {%- for content in message.content %}\n {%- if content.type == 'image' or 'image' in content or 'image_url' in content %}\n {%- set image_count.value = image_count.value + 1 %}\n {%- if add_vision_id %}Picture {{ image_count.value }}: {% endif -%}\n <|vision_start|><|image_pad|><|vision_end|>\n {%- elif content.type == 'video' or 'video' in content %}\n {%- set video_count.value = video_count.value + 1 %}\n {%- if add_vision_id %}Video {{ video_count.value }}: {% endif -%}\n <|vision_start|><|video_pad|><|vision_end|>\n {%- elif 'text' in content %}\n {{- content.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {{- 
'\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \"tool\") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n' }}\n{%- endif %}\n"

|

| 3 |

+

}

|

config.json

CHANGED

|

@@ -2,8 +2,6 @@

|

|

| 2 |

"architectures": [

|

| 3 |

"Qwen3VLForConditionalGeneration"

|

| 4 |

],

|

| 5 |

-

"torch_dtype": "bfloat16",

|

| 6 |

-

"eos_token_id": 151645,

|

| 7 |

"image_token_id": 151655,

|

| 8 |

"model_type": "qwen3_vl",

|

| 9 |

"pad_token_id": 151654,

|

|

@@ -38,7 +36,7 @@

|

|

| 38 |

"vocab_size": 151936

|

| 39 |

},

|

| 40 |

"tie_word_embeddings": false,

|

| 41 |

-

"transformers_version": "4.57.

|

| 42 |

"unsloth_fixed": true,

|

| 43 |

"video_token_id": 151656,

|

| 44 |

"vision_config": {

|

|

@@ -48,7 +46,6 @@

|

|

| 48 |

24

|

| 49 |

],

|

| 50 |

"depth": 27,

|

| 51 |

-

"torch_dtype": "bfloat16",

|

| 52 |

"hidden_act": "gelu_pytorch_tanh",

|

| 53 |

"hidden_size": 1152,

|

| 54 |

"in_channels": 3,

|

|

@@ -64,4 +61,4 @@

|

|

| 64 |

},

|

| 65 |

"vision_end_token_id": 151653,

|

| 66 |

"vision_start_token_id": 151652

|

| 67 |

-

}

|

|

|

|

| 2 |

"architectures": [

|

| 3 |

"Qwen3VLForConditionalGeneration"

|

| 4 |

],

|

|

|

|

|

|

|

| 5 |

"image_token_id": 151655,

|

| 6 |

"model_type": "qwen3_vl",

|

| 7 |

"pad_token_id": 151654,

|

|

|

|

| 36 |

"vocab_size": 151936

|

| 37 |

},

|

| 38 |

"tie_word_embeddings": false,

|

| 39 |

+

"transformers_version": "4.57.1",

|

| 40 |

"unsloth_fixed": true,

|

| 41 |

"video_token_id": 151656,

|

| 42 |

"vision_config": {

|

|

|

|

| 46 |

24

|

| 47 |

],

|

| 48 |

"depth": 27,

|

|

|

|

| 49 |

"hidden_act": "gelu_pytorch_tanh",

|

| 50 |

"hidden_size": 1152,

|

| 51 |

"in_channels": 3,

|

|

|

|

| 61 |

},

|

| 62 |

"vision_end_token_id": 151653,

|

| 63 |

"vision_start_token_id": 151652

|

| 64 |

+

}

|

generation_config.json

CHANGED

|

@@ -1,13 +1,14 @@

|

|

| 1 |

{

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

|

| 13 |

-

|

|

| 1 |

{

|

| 2 |

+

"bos_token_id": 151643,

|

| 3 |

+

"pad_token_id": 151643,

|

| 4 |

+

"do_sample": true,

|

| 5 |

+

"eos_token_id": [

|

| 6 |

+

151645,

|

| 7 |

+

151643

|

| 8 |

+

],

|

| 9 |

+

"top_k": 20,

|

| 10 |

+

"top_p": 0.8,

|

| 11 |

+

"repetition_penalty": 1.0,

|

| 12 |

+

"temperature": 0.7,

|

| 13 |

+

"transformers_version": "4.56.0"

|

| 14 |

+

}
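The new `generation_config.json` enables sampling by default and lists two end-of-sequence ids. Its sampling values match the VL preset in the README hunk (`top_k` 20, `top_p` 0.8, `temperature` 0.7, `repetition_penalty` 1.0). Note also that its `pad_token_id` (151643) is not the `pad_token_id` shown in `config.json` (151654). The sketch below only parses the file contents with the standard library to show how the fields could be read; `is_stop_token` is a hypothetical helper, and nothing here reproduces how `transformers` applies the file internally.

```python
import json

# Contents of the new generation_config.json, copied from the diff above.
GENERATION_CONFIG = """
{
  "bos_token_id": 151643,
  "pad_token_id": 151643,
  "do_sample": true,
  "eos_token_id": [151645, 151643],
  "top_k": 20,
  "top_p": 0.8,
  "repetition_penalty": 1.0,
  "temperature": 0.7,
  "transformers_version": "4.56.0"
}
"""

config = json.loads(GENERATION_CONFIG)

# eos_token_id may be a single int or a list; normalise to a list.
eos_ids = config["eos_token_id"]
if isinstance(eos_ids, int):
    eos_ids = [eos_ids]


def is_stop_token(token_id):
    """Hypothetical helper: True if token_id is one of the configured EOS ids."""
    return token_id in eos_ids


# Sampling defaults that apply when the caller passes no overrides.
sampling = {
    key: config[key]
    for key in ("do_sample", "top_k", "top_p", "temperature", "repetition_penalty")
}
print(eos_ids)
print(sampling)
```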

|

model-00001-of-00004.safetensors

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d5d0aef0eb170fc7453a296c43c0849a56f510555d3588e4fd662bb35490aefa

|

| 3 |

+

size 4902275944

|

model-00002-of-00004.safetensors

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8be88fb5501e4d5719a6d4cc212e6a13480330e74f3e8c77daa1a68f199106b5

|

| 3 |

+

size 4915962496

|

model-00003-of-00004.safetensors

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:83de00eafe6e0d57ccd009dbcf71c9974d74df2f016c27afb7e95aafd16b2192

|

| 3 |

+

size 4999831048

|

model-00004-of-00004.safetensors

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0a88b98e9f96270973f567e6a2c103ede6ccdf915ca3075e21c755604d0377a5

|

| 3 |

+

size 2716270024
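Each `.safetensors` entry above is a Git LFS pointer rather than the weights themselves: three `key value` lines giving the spec version, the SHA-256 object id, and the size in bytes. Below is a minimal sketch of reading the four new pointers and summing the shard sizes. `parse_lfs_pointer` is a hypothetical helper, and the pointer text is rebuilt from the `oid` and `size` values in this diff.

```python
POINTER_TEMPLATE = "version https://git-lfs.github.com/spec/v1\noid sha256:{oid}\nsize {size}\n"

# (oid, size) pairs copied from the "+" lines of the four shard hunks above.
SHARDS = {
    "model-00001-of-00004.safetensors": (
        "d5d0aef0eb170fc7453a296c43c0849a56f510555d3588e4fd662bb35490aefa", 4902275944),
    "model-00002-of-00004.safetensors": (
        "8be88fb5501e4d5719a6d4cc212e6a13480330e74f3e8c77daa1a68f199106b5", 4915962496),
    "model-00003-of-00004.safetensors": (
        "83de00eafe6e0d57ccd009dbcf71c9974d74df2f016c27afb7e95aafd16b2192", 4999831048),
    "model-00004-of-00004.safetensors": (
        "0a88b98e9f96270973f567e6a2c103ede6ccdf915ca3075e21c755604d0377a5", 2716270024),
}


def parse_lfs_pointer(text):
    """Hypothetical helper: split a pointer file into its three fields."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


parsed = {
    name: parse_lfs_pointer(POINTER_TEMPLATE.format(oid=oid, size=size))
    for name, (oid, size) in SHARDS.items()
}
total_bytes = sum(p["size"] for p in parsed.values())

# total_size as recorded in model.safetensors.index.json in this commit.
INDEX_TOTAL_SIZE = 17534247392
print(total_bytes)                     # 17534339512
print(total_bytes - INDEX_TOTAL_SIZE)  # 92120
```

The on-disk total is 92,120 bytes larger than `total_size` in the index. A plausible reading, stated here as an assumption, is that `total_size` counts tensor data only, while each shard file also carries its own safetensors header.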

|

model.safetensors.index.json

CHANGED

|

@@ -1,6 +1,5 @@

|

|

| 1 |

{

|

| 2 |

"metadata": {

|

| 3 |

-

"total_parameters": 8767123696,

|

| 4 |

"total_size": 17534247392

|

| 5 |

},

|

| 6 |

"weight_map": {

|

|

@@ -127,11 +126,11 @@

|

|

| 127 |

"model.language_model.layers.18.self_attn.q_norm.weight": "model-00002-of-00004.safetensors",

|

| 128 |

"model.language_model.layers.18.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 129 |

"model.language_model.layers.18.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 130 |

-

"model.language_model.layers.19.input_layernorm.weight": "model-

|

| 131 |

-

"model.language_model.layers.19.mlp.down_proj.weight": "model-

|

| 132 |

"model.language_model.layers.19.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 133 |

-

"model.language_model.layers.19.mlp.up_proj.weight": "model-

|

| 134 |

-

"model.language_model.layers.19.post_attention_layernorm.weight": "model-

|

| 135 |

"model.language_model.layers.19.self_attn.k_norm.weight": "model-00002-of-00004.safetensors",

|

| 136 |

"model.language_model.layers.19.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 137 |

"model.language_model.layers.19.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

|

@@ -149,39 +148,39 @@

|

|

| 149 |

"model.language_model.layers.2.self_attn.q_norm.weight": "model-00001-of-00004.safetensors",

|

| 150 |

"model.language_model.layers.2.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 151 |

"model.language_model.layers.2.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 152 |

-

"model.language_model.layers.20.input_layernorm.weight": "model-

|

| 153 |

-

"model.language_model.layers.20.mlp.down_proj.weight": "model-

|

| 154 |

-

"model.language_model.layers.20.mlp.gate_proj.weight": "model-

|

| 155 |

-

"model.language_model.layers.20.mlp.up_proj.weight": "model-

|

| 156 |

-

"model.language_model.layers.20.post_attention_layernorm.weight": "model-

|

| 157 |

-

"model.language_model.layers.20.self_attn.k_norm.weight": "model-

|

| 158 |

-

"model.language_model.layers.20.self_attn.k_proj.weight": "model-

|

| 159 |

-

"model.language_model.layers.20.self_attn.o_proj.weight": "model-

|

| 160 |

-

"model.language_model.layers.20.self_attn.q_norm.weight": "model-

|

| 161 |

-

"model.language_model.layers.20.self_attn.q_proj.weight": "model-

|

| 162 |

-

"model.language_model.layers.20.self_attn.v_proj.weight": "model-

|

| 163 |

-

"model.language_model.layers.21.input_layernorm.weight": "model-

|

| 164 |

-

"model.language_model.layers.21.mlp.down_proj.weight": "model-

|

| 165 |

-

"model.language_model.layers.21.mlp.gate_proj.weight": "model-

|

| 166 |

-

"model.language_model.layers.21.mlp.up_proj.weight": "model-

|

| 167 |

-

"model.language_model.layers.21.post_attention_layernorm.weight": "model-

|

| 168 |

-

"model.language_model.layers.21.self_attn.k_norm.weight": "model-

|

| 169 |

-

"model.language_model.layers.21.self_attn.k_proj.weight": "model-

|

| 170 |

-

"model.language_model.layers.21.self_attn.o_proj.weight": "model-

|

| 171 |

-

"model.language_model.layers.21.self_attn.q_norm.weight": "model-

|

| 172 |

-

"model.language_model.layers.21.self_attn.q_proj.weight": "model-

|

| 173 |

-

"model.language_model.layers.21.self_attn.v_proj.weight": "model-

|

| 174 |

-

"model.language_model.layers.22.input_layernorm.weight": "model-

|

| 175 |

"model.language_model.layers.22.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 176 |

"model.language_model.layers.22.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

| 177 |

"model.language_model.layers.22.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

| 178 |

-

"model.language_model.layers.22.post_attention_layernorm.weight": "model-

|

| 179 |

-

"model.language_model.layers.22.self_attn.k_norm.weight": "model-

|

| 180 |

-

"model.language_model.layers.22.self_attn.k_proj.weight": "model-

|

| 181 |

-

"model.language_model.layers.22.self_attn.o_proj.weight": "model-

|

| 182 |

-

"model.language_model.layers.22.self_attn.q_norm.weight": "model-

|

| 183 |

-

"model.language_model.layers.22.self_attn.q_proj.weight": "model-

|

| 184 |

-

"model.language_model.layers.22.self_attn.v_proj.weight": "model-

|

| 185 |

"model.language_model.layers.23.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

| 186 |

"model.language_model.layers.23.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

| 187 |

"model.language_model.layers.23.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

|

@@ -292,39 +291,39 @@

|

|

| 292 |

"model.language_model.layers.31.self_attn.q_norm.weight": "model-00003-of-00004.safetensors",

|

| 293 |

"model.language_model.layers.31.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 294 |

"model.language_model.layers.31.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 295 |

-

"model.language_model.layers.32.input_layernorm.weight": "model-

|

| 296 |

-

"model.language_model.layers.32.mlp.down_proj.weight": "model-

|

| 297 |

-

"model.language_model.layers.32.mlp.gate_proj.weight": "model-

|

| 298 |

-

"model.language_model.layers.32.mlp.up_proj.weight": "model-

|

| 299 |

-

"model.language_model.layers.32.post_attention_layernorm.weight": "model-

|

| 300 |

"model.language_model.layers.32.self_attn.k_norm.weight": "model-00003-of-00004.safetensors",

|

| 301 |

"model.language_model.layers.32.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

| 302 |

"model.language_model.layers.32.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

| 303 |

"model.language_model.layers.32.self_attn.q_norm.weight": "model-00003-of-00004.safetensors",

|

| 304 |

"model.language_model.layers.32.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

| 305 |

"model.language_model.layers.32.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

| 306 |

-

"model.language_model.layers.33.input_layernorm.weight": "model-

|

| 307 |

-

"model.language_model.layers.33.mlp.down_proj.weight": "model-

|

| 308 |

-

"model.language_model.layers.33.mlp.gate_proj.weight": "model-

|

| 309 |

-

"model.language_model.layers.33.mlp.up_proj.weight": "model-

|

| 310 |

-

"model.language_model.layers.33.post_attention_layernorm.weight": "model-

|

| 311 |

-

"model.language_model.layers.33.self_attn.k_norm.weight": "model-

|

| 312 |

-

"model.language_model.layers.33.self_attn.k_proj.weight": "model-

|

| 313 |

-

"model.language_model.layers.33.self_attn.o_proj.weight": "model-

|

| 314 |

-

"model.language_model.layers.33.self_attn.q_norm.weight": "model-

|

| 315 |

-

"model.language_model.layers.33.self_attn.q_proj.weight": "model-

|

| 316 |

-

"model.language_model.layers.33.self_attn.v_proj.weight": "model-

|

| 317 |

-

"model.language_model.layers.34.input_layernorm.weight": "model-

|

| 318 |

-

"model.language_model.layers.34.mlp.down_proj.weight": "model-

|

| 319 |

-

"model.language_model.layers.34.mlp.gate_proj.weight": "model-

|

| 320 |

-

"model.language_model.layers.34.mlp.up_proj.weight": "model-

|

| 321 |

-

"model.language_model.layers.34.post_attention_layernorm.weight": "model-

|

| 322 |

-

"model.language_model.layers.34.self_attn.k_norm.weight": "model-

|

| 323 |

-

"model.language_model.layers.34.self_attn.k_proj.weight": "model-

|

| 324 |

-

"model.language_model.layers.34.self_attn.o_proj.weight": "model-

|

| 325 |

-

"model.language_model.layers.34.self_attn.q_norm.weight": "model-

|

| 326 |

-

"model.language_model.layers.34.self_attn.q_proj.weight": "model-

|

| 327 |

-

"model.language_model.layers.34.self_attn.v_proj.weight": "model-

|

| 328 |

"model.language_model.layers.35.input_layernorm.weight": "model-00004-of-00004.safetensors",

|

| 329 |

"model.language_model.layers.35.mlp.down_proj.weight": "model-00004-of-00004.safetensors",

|

| 330 |

"model.language_model.layers.35.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",

|

|

@@ -332,9 +331,9 @@

|

|

| 332 |

"model.language_model.layers.35.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",

|

| 333 |

"model.language_model.layers.35.self_attn.k_norm.weight": "model-00004-of-00004.safetensors",

|

| 334 |

"model.language_model.layers.35.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",

|

| 335 |

-

"model.language_model.layers.35.self_attn.o_proj.weight": "model-

|

| 336 |

"model.language_model.layers.35.self_attn.q_norm.weight": "model-00004-of-00004.safetensors",

|

| 337 |

-

"model.language_model.layers.35.self_attn.q_proj.weight": "model-

|

| 338 |

"model.language_model.layers.35.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",

|

| 339 |

"model.language_model.layers.4.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 340 |

"model.language_model.layers.4.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

|

@@ -358,401 +357,401 @@

|

|

| 358 |

"model.language_model.layers.5.self_attn.q_norm.weight": "model-00001-of-00004.safetensors",

|

| 359 |

"model.language_model.layers.5.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 360 |

"model.language_model.layers.5.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 361 |

-

"model.language_model.layers.6.input_layernorm.weight": "model-

|

| 362 |

-

"model.language_model.layers.6.mlp.down_proj.weight": "model-

|

| 363 |

"model.language_model.layers.6.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 364 |

"model.language_model.layers.6.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

| 365 |

-

"model.language_model.layers.6.post_attention_layernorm.weight": "model-

|

| 366 |

"model.language_model.layers.6.self_attn.k_norm.weight": "model-00001-of-00004.safetensors",

|

| 367 |

"model.language_model.layers.6.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 368 |

"model.language_model.layers.6.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 369 |

"model.language_model.layers.6.self_attn.q_norm.weight": "model-00001-of-00004.safetensors",

|

| 370 |

"model.language_model.layers.6.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 371 |

"model.language_model.layers.6.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 372 |

-

"model.language_model.layers.7.input_layernorm.weight": "model-

|

| 373 |

-

"model.language_model.layers.7.mlp.down_proj.weight": "model-

|

| 374 |

-

"model.language_model.layers.7.mlp.gate_proj.weight": "model-

|

| 375 |

-

"model.language_model.layers.7.mlp.up_proj.weight": "model-

|

| 376 |

-

"model.language_model.layers.7.post_attention_layernorm.weight": "model-

|

| 377 |

-

"model.language_model.layers.7.self_attn.k_norm.weight": "model-

|

| 378 |

-

"model.language_model.layers.7.self_attn.k_proj.weight": "model-

|

| 379 |

-

"model.language_model.layers.7.self_attn.o_proj.weight": "model-

|

| 380 |

-

"model.language_model.layers.7.self_attn.q_norm.weight": "model-

|

| 381 |

-

"model.language_model.layers.7.self_attn.q_proj.weight": "model-

|

| 382 |

-

"model.language_model.layers.7.self_attn.v_proj.weight": "model-

|

| 383 |

-

"model.language_model.layers.8.input_layernorm.weight": "model-

|

| 384 |

-

"model.language_model.layers.8.mlp.down_proj.weight": "model-

|

| 385 |

-

"model.language_model.layers.8.mlp.gate_proj.weight": "model-

|

| 386 |

-

"model.language_model.layers.8.mlp.up_proj.weight": "model-

|

| 387 |

-

"model.language_model.layers.8.post_attention_layernorm.weight": "model-

|

| 388 |

-

"model.language_model.layers.8.self_attn.k_norm.weight": "model-

|

| 389 |

-

"model.language_model.layers.8.self_attn.k_proj.weight": "model-

|

| 390 |

-

"model.language_model.layers.8.self_attn.o_proj.weight": "model-

|

| 391 |

-

"model.language_model.layers.8.self_attn.q_norm.weight": "model-

|

| 392 |

-

"model.language_model.layers.8.self_attn.q_proj.weight": "model-

|

| 393 |

-

"model.language_model.layers.8.self_attn.v_proj.weight": "model-

|

| 394 |

-

"model.language_model.layers.9.input_layernorm.weight": "model-

|

| 395 |

"model.language_model.layers.9.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 396 |

-

"model.language_model.layers.9.mlp.gate_proj.weight": "model-

|

| 397 |

"model.language_model.layers.9.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 398 |

-

"model.language_model.layers.9.post_attention_layernorm.weight": "model-

|

| 399 |

-

"model.language_model.layers.9.self_attn.k_norm.weight": "model-

|

| 400 |

-

"model.language_model.layers.9.self_attn.k_proj.weight": "model-

|

| 401 |

-

"model.language_model.layers.9.self_attn.o_proj.weight": "model-

|

| 402 |

-

"model.language_model.layers.9.self_attn.q_norm.weight": "model-

|

| 403 |

-

"model.language_model.layers.9.self_attn.q_proj.weight": "model-

|

| 404 |

-

"model.language_model.layers.9.self_attn.v_proj.weight": "model-

|

| 405 |

"model.language_model.norm.weight": "model-00004-of-00004.safetensors",

|

| 406 |

-

"model.visual.blocks.0.attn.proj.bias": "model-

|

| 407 |

-

"model.visual.blocks.0.attn.proj.weight": "model-

|

| 408 |

-

"model.visual.blocks.0.attn.qkv.bias": "model-

|

| 409 |

-

"model.visual.blocks.0.attn.qkv.weight": "model-

|

| 410 |

-

"model.visual.blocks.0.mlp.linear_fc1.bias": "model-

|

| 411 |

-

"model.visual.blocks.0.mlp.linear_fc1.weight": "model-

|

| 412 |

-

"model.visual.blocks.0.mlp.linear_fc2.bias": "model-

|

| 413 |

-

"model.visual.blocks.0.mlp.linear_fc2.weight": "model-

|

| 414 |

-

"model.visual.blocks.0.norm1.bias": "model-

|

| 415 |

-

"model.visual.blocks.0.norm1.weight": "model-

|

| 416 |

-

"model.visual.blocks.0.norm2.bias": "model-

|

| 417 |

-

"model.visual.blocks.0.norm2.weight": "model-

|

| 418 |

-

"model.visual.blocks.1.attn.proj.bias": "model-

|

| 419 |

-

"model.visual.blocks.1.attn.proj.weight": "model-

|

| 420 |

-

"model.visual.blocks.1.attn.qkv.bias": "model-

|

| 421 |

-

"model.visual.blocks.1.attn.qkv.weight": "model-

|

| 422 |

-

"model.visual.blocks.1.mlp.linear_fc1.bias": "model-

|

| 423 |

-

"model.visual.blocks.1.mlp.linear_fc1.weight": "model-

|

| 424 |

-

"model.visual.blocks.1.mlp.linear_fc2.bias": "model-

|

| 425 |

-

"model.visual.blocks.1.mlp.linear_fc2.weight": "model-

|

| 426 |

-

"model.visual.blocks.1.norm1.bias": "model-

|

| 427 |

-

"model.visual.blocks.1.norm1.weight": "model-

|

| 428 |

-

"model.visual.blocks.1.norm2.bias": "model-

|

| 429 |

-

"model.visual.blocks.1.norm2.weight": "model-

|

| 430 |

-

"model.visual.blocks.10.attn.proj.bias": "model-

|

| 431 |

-

"model.visual.blocks.10.attn.proj.weight": "model-

|

| 432 |

-

"model.visual.blocks.10.attn.qkv.bias": "model-

|

| 433 |

-

"model.visual.blocks.10.attn.qkv.weight": "model-

|

| 434 |

-

"model.visual.blocks.10.mlp.linear_fc1.bias": "model-

|

| 435 |

-

"model.visual.blocks.10.mlp.linear_fc1.weight": "model-

|

| 436 |

-

"model.visual.blocks.10.mlp.linear_fc2.bias": "model-

|

| 437 |

-

"model.visual.blocks.10.mlp.linear_fc2.weight": "model-

|

| 438 |

-

"model.visual.blocks.10.norm1.bias": "model-

|

| 439 |

-

"model.visual.blocks.10.norm1.weight": "model-

|

| 440 |

-

"model.visual.blocks.10.norm2.bias": "model-

|

| 441 |

-

"model.visual.blocks.10.norm2.weight": "model-

|

| 442 |

-

"model.visual.blocks.11.attn.proj.bias": "model-

|

| 443 |

-

"model.visual.blocks.11.attn.proj.weight": "model-

|

| 444 |

-

"model.visual.blocks.11.attn.qkv.bias": "model-

|

| 445 |

-

"model.visual.blocks.11.attn.qkv.weight": "model-

|

| 446 |

-

"model.visual.blocks.11.mlp.linear_fc1.bias": "model-

|

| 447 |

-

"model.visual.blocks.11.mlp.linear_fc1.weight": "model-

|

| 448 |

-

"model.visual.blocks.11.mlp.linear_fc2.bias": "model-

|

| 449 |

-

"model.visual.blocks.11.mlp.linear_fc2.weight": "model-

|

| 450 |

-

"model.visual.blocks.11.norm1.bias": "model-

|

| 451 |

-

"model.visual.blocks.11.norm1.weight": "model-

|

| 452 |

-

"model.visual.blocks.11.norm2.bias": "model-

|

| 453 |

-

"model.visual.blocks.11.norm2.weight": "model-

|

| 454 |

-

"model.visual.blocks.12.attn.proj.bias": "model-

|

| 455 |

-

"model.visual.blocks.12.attn.proj.weight": "model-

|

| 456 |

-

"model.visual.blocks.12.attn.qkv.bias": "model-

|

| 457 |

-

"model.visual.blocks.12.attn.qkv.weight": "model-

|

| 458 |

-

"model.visual.blocks.12.mlp.linear_fc1.bias": "model-

|

| 459 |

-

"model.visual.blocks.12.mlp.linear_fc1.weight": "model-

|

| 460 |

-

"model.visual.blocks.12.mlp.linear_fc2.bias": "model-

|

| 461 |

-

"model.visual.blocks.12.mlp.linear_fc2.weight": "model-

|

| 462 |

-

"model.visual.blocks.12.norm1.bias": "model-

|

| 463 |

-

"model.visual.blocks.12.norm1.weight": "model-

|

| 464 |

-

"model.visual.blocks.12.norm2.bias": "model-

|

| 465 |

-

"model.visual.blocks.12.norm2.weight": "model-

|

| 466 |

-

"model.visual.blocks.13.attn.proj.bias": "model-

|

| 467 |

-

"model.visual.blocks.13.attn.proj.weight": "model-

|

| 468 |

-

"model.visual.blocks.13.attn.qkv.bias": "model-

|

| 469 |

-

"model.visual.blocks.13.attn.qkv.weight": "model-

|

| 470 |

-

"model.visual.blocks.13.mlp.linear_fc1.bias": "model-

|

| 471 |

-

"model.visual.blocks.13.mlp.linear_fc1.weight": "model-

|

| 472 |

-

"model.visual.blocks.13.mlp.linear_fc2.bias": "model-

|

| 473 | - | "model.visual.blocks.13.mlp.linear_fc2.weight": "model- |
| 474 | - | "model.visual.blocks.13.norm1.bias": "model- |
| 475 | - | "model.visual.blocks.13.norm1.weight": "model- |
| 476 | - | "model.visual.blocks.13.norm2.bias": "model- |
| 477 | - | "model.visual.blocks.13.norm2.weight": "model- |
| 478 | - | "model.visual.blocks.14.attn.proj.bias": "model- |
| 479 | - | "model.visual.blocks.14.attn.proj.weight": "model- |
| 480 | - | "model.visual.blocks.14.attn.qkv.bias": "model- |
| 481 | - | "model.visual.blocks.14.attn.qkv.weight": "model- |
| 482 | - | "model.visual.blocks.14.mlp.linear_fc1.bias": "model- |
| 483 | - | "model.visual.blocks.14.mlp.linear_fc1.weight": "model- |
| 484 | - | "model.visual.blocks.14.mlp.linear_fc2.bias": "model- |
| 485 | - | "model.visual.blocks.14.mlp.linear_fc2.weight": "model- |
| 486 | - | "model.visual.blocks.14.norm1.bias": "model- |
| 487 | - | "model.visual.blocks.14.norm1.weight": "model- |
| 488 | - | "model.visual.blocks.14.norm2.bias": "model- |
| 489 | - | "model.visual.blocks.14.norm2.weight": "model- |
| 490 | - | "model.visual.blocks.15.attn.proj.bias": "model- |
| 491 | - | "model.visual.blocks.15.attn.proj.weight": "model- |
| 492 | - | "model.visual.blocks.15.attn.qkv.bias": "model- |
| 493 | - | "model.visual.blocks.15.attn.qkv.weight": "model- |
| 494 | - | "model.visual.blocks.15.mlp.linear_fc1.bias": "model- |
| 495 | - | "model.visual.blocks.15.mlp.linear_fc1.weight": "model- |
| 496 | - | "model.visual.blocks.15.mlp.linear_fc2.bias": "model- |
| 497 | - | "model.visual.blocks.15.mlp.linear_fc2.weight": "model- |
| 498 | - | "model.visual.blocks.15.norm1.bias": "model- |
| 499 | - | "model.visual.blocks.15.norm1.weight": "model- |
| 500 | - | "model.visual.blocks.15.norm2.bias": "model- |
| 501 | - | "model.visual.blocks.15.norm2.weight": "model- |
| 502 | - | "model.visual.blocks.16.attn.proj.bias": "model- |
| 503 | - | "model.visual.blocks.16.attn.proj.weight": "model- |
| 504 | - | "model.visual.blocks.16.attn.qkv.bias": "model- |
| 505 | - | "model.visual.blocks.16.attn.qkv.weight": "model- |
| 506 | - | "model.visual.blocks.16.mlp.linear_fc1.bias": "model- |
| 507 | - | "model.visual.blocks.16.mlp.linear_fc1.weight": "model- |
| 508 | - | "model.visual.blocks.16.mlp.linear_fc2.bias": "model- |
| 509 | - | "model.visual.blocks.16.mlp.linear_fc2.weight": "model- |
| 510 | - | "model.visual.blocks.16.norm1.bias": "model- |
| 511 | - | "model.visual.blocks.16.norm1.weight": "model- |
| 512 | - | "model.visual.blocks.16.norm2.bias": "model- |
| 513 | - | "model.visual.blocks.16.norm2.weight": "model- |
| 514 | - | "model.visual.blocks.17.attn.proj.bias": "model- |
| 515 | - | "model.visual.blocks.17.attn.proj.weight": "model- |
| 516 | - | "model.visual.blocks.17.attn.qkv.bias": "model- |
| 517 | - | "model.visual.blocks.17.attn.qkv.weight": "model- |
| 518 | - | "model.visual.blocks.17.mlp.linear_fc1.bias": "model- |
| 519 | - | "model.visual.blocks.17.mlp.linear_fc1.weight": "model- |
| 520 | - | "model.visual.blocks.17.mlp.linear_fc2.bias": "model- |
| 521 | - | "model.visual.blocks.17.mlp.linear_fc2.weight": "model- |
| 522 | - | "model.visual.blocks.17.norm1.bias": "model- |
| 523 | - | "model.visual.blocks.17.norm1.weight": "model- |
| 524 | - | "model.visual.blocks.17.norm2.bias": "model- |
| 525 | - | "model.visual.blocks.17.norm2.weight": "model- |
| 526 | - | "model.visual.blocks.18.attn.proj.bias": "model- |
| 527 | - | "model.visual.blocks.18.attn.proj.weight": "model- |
| 528 | - | "model.visual.blocks.18.attn.qkv.bias": "model- |
| 529 | - | "model.visual.blocks.18.attn.qkv.weight": "model- |
| 530 | - | "model.visual.blocks.18.mlp.linear_fc1.bias": "model- |
| 531 | - | "model.visual.blocks.18.mlp.linear_fc1.weight": "model- |
| 532 | - | "model.visual.blocks.18.mlp.linear_fc2.bias": "model- |
| 533 | - | "model.visual.blocks.18.mlp.linear_fc2.weight": "model- |
| 534 | - | "model.visual.blocks.18.norm1.bias": "model- |
| 535 | - | "model.visual.blocks.18.norm1.weight": "model- |
| 536 | - | "model.visual.blocks.18.norm2.bias": "model- |
| 537 | - | "model.visual.blocks.18.norm2.weight": "model- |
| 538 | - | "model.visual.blocks.19.attn.proj.bias": "model- |
| 539 | - | "model.visual.blocks.19.attn.proj.weight": "model- |
| 540 | - | "model.visual.blocks.19.attn.qkv.bias": "model- |
| 541 | - | "model.visual.blocks.19.attn.qkv.weight": "model- |
| 542 | - | "model.visual.blocks.19.mlp.linear_fc1.bias": "model- |
| 543 | - | "model.visual.blocks.19.mlp.linear_fc1.weight": "model- |
| 544 | - | "model.visual.blocks.19.mlp.linear_fc2.bias": "model- |
| 545 | - | "model.visual.blocks.19.mlp.linear_fc2.weight": "model- |
| 546 | - | "model.visual.blocks.19.norm1.bias": "model- |
| 547 | - | "model.visual.blocks.19.norm1.weight": "model- |
| 548 | - | "model.visual.blocks.19.norm2.bias": "model- |
| 549 | - | "model.visual.blocks.19.norm2.weight": "model- |
| 550 | - | "model.visual.blocks.2.attn.proj.bias": "model- |
| 551 | - | "model.visual.blocks.2.attn.proj.weight": "model- |
| 552 | - | "model.visual.blocks.2.attn.qkv.bias": "model- |
| 553 | - | "model.visual.blocks.2.attn.qkv.weight": "model- |
| 554 | - | "model.visual.blocks.2.mlp.linear_fc1.bias": "model- |
| 555 | - | "model.visual.blocks.2.mlp.linear_fc1.weight": "model- |
| 556 | - | "model.visual.blocks.2.mlp.linear_fc2.bias": "model- |
| 557 | - | "model.visual.blocks.2.mlp.linear_fc2.weight": "model- |
| 558 | - | "model.visual.blocks.2.norm1.bias": "model- |
| 559 | - | "model.visual.blocks.2.norm1.weight": "model- |
| 560 | - | "model.visual.blocks.2.norm2.bias": "model- |
| 561 | - | "model.visual.blocks.2.norm2.weight": "model- |
| 562 | - | "model.visual.blocks.20.attn.proj.bias": "model- |
| 563 | - | "model.visual.blocks.20.attn.proj.weight": "model- |
| 564 | - | "model.visual.blocks.20.attn.qkv.bias": "model- |
| 565 | - | "model.visual.blocks.20.attn.qkv.weight": "model- |
| 566 | - | "model.visual.blocks.20.mlp.linear_fc1.bias": "model- |
| 567 | - | "model.visual.blocks.20.mlp.linear_fc1.weight": "model- |
| 568 | - | "model.visual.blocks.20.mlp.linear_fc2.bias": "model- |
| 569 | - | "model.visual.blocks.20.mlp.linear_fc2.weight": "model- |
| 570 | - | "model.visual.blocks.20.norm1.bias": "model- |
| 571 | - | "model.visual.blocks.20.norm1.weight": "model- |
| 572 | - | "model.visual.blocks.20.norm2.bias": "model- |
| 573 | - | "model.visual.blocks.20.norm2.weight": "model- |
| 574 | - | "model.visual.blocks.21.attn.proj.bias": "model- |
| 575 | - | "model.visual.blocks.21.attn.proj.weight": "model- |
| 576 | - | "model.visual.blocks.21.attn.qkv.bias": "model- |
| 577 | - | "model.visual.blocks.21.attn.qkv.weight": "model- |
| 578 | - | "model.visual.blocks.21.mlp.linear_fc1.bias": "model- |
| 579 | - | "model.visual.blocks.21.mlp.linear_fc1.weight": "model- |
| 580 | - | "model.visual.blocks.21.mlp.linear_fc2.bias": "model- |
| 581 | - | "model.visual.blocks.21.mlp.linear_fc2.weight": "model- |
| 582 | - | "model.visual.blocks.21.norm1.bias": "model- |
| 583 | - | "model.visual.blocks.21.norm1.weight": "model- |
| 584 | - | "model.visual.blocks.21.norm2.bias": "model- |
| 585 | - | "model.visual.blocks.21.norm2.weight": "model- |
| 586 | - | "model.visual.blocks.22.attn.proj.bias": "model- |
| 587 | - | "model.visual.blocks.22.attn.proj.weight": "model- |
| 588 | - | "model.visual.blocks.22.attn.qkv.bias": "model- |
| 589 | - | "model.visual.blocks.22.attn.qkv.weight": "model- |
| 590 | - | "model.visual.blocks.22.mlp.linear_fc1.bias": "model- |
| 591 | - | "model.visual.blocks.22.mlp.linear_fc1.weight": "model- |
| 592 | - | "model.visual.blocks.22.mlp.linear_fc2.bias": "model- |
| 593 | - | "model.visual.blocks.22.mlp.linear_fc2.weight": "model- |
| 594 | - | "model.visual.blocks.22.norm1.bias": "model- |
| 595 | - | "model.visual.blocks.22.norm1.weight": "model- |
| 596 | - | "model.visual.blocks.22.norm2.bias": "model- |
| 597 | - | "model.visual.blocks.22.norm2.weight": "model- |
| 598 | - | "model.visual.blocks.23.attn.proj.bias": "model- |
| 599 | - | "model.visual.blocks.23.attn.proj.weight": "model- |
| 600 | - | "model.visual.blocks.23.attn.qkv.bias": "model- |
| 601 | - | "model.visual.blocks.23.attn.qkv.weight": "model- |
| 602 | - | "model.visual.blocks.23.mlp.linear_fc1.bias": "model- |
| 603 | - | "model.visual.blocks.23.mlp.linear_fc1.weight": "model- |
| 604 | - | "model.visual.blocks.23.mlp.linear_fc2.bias": "model- |
| 605 | - | "model.visual.blocks.23.mlp.linear_fc2.weight": "model- |
| 606 | - | "model.visual.blocks.23.norm1.bias": "model- |
| 607 | - | "model.visual.blocks.23.norm1.weight": "model- |
| 608 | - | "model.visual.blocks.23.norm2.bias": "model- |
| 609 | - | "model.visual.blocks.23.norm2.weight": "model- |
| 610 | - | "model.visual.blocks.24.attn.proj.bias": "model- |
| 611 | - | "model.visual.blocks.24.attn.proj.weight": "model- |
| 612 | - | "model.visual.blocks.24.attn.qkv.bias": "model- |
| 613 | - | "model.visual.blocks.24.attn.qkv.weight": "model- |
| 614 | - | "model.visual.blocks.24.mlp.linear_fc1.bias": "model- |
| 615 | - | "model.visual.blocks.24.mlp.linear_fc1.weight": "model- |
| 616 | - | "model.visual.blocks.24.mlp.linear_fc2.bias": "model- |
| 617 | - | "model.visual.blocks.24.mlp.linear_fc2.weight": "model- |
| 618 | - | "model.visual.blocks.24.norm1.bias": "model- |
| 619 | - | "model.visual.blocks.24.norm1.weight": "model- |
| 620 | - | "model.visual.blocks.24.norm2.bias": "model- |
| 621 | - | "model.visual.blocks.24.norm2.weight": "model- |
| 622 | - | "model.visual.blocks.25.attn.proj.bias": "model- |
| 623 | - | "model.visual.blocks.25.attn.proj.weight": "model- |
| 624 | - | "model.visual.blocks.25.attn.qkv.bias": "model- |
| 625 | - | "model.visual.blocks.25.attn.qkv.weight": "model- |
| 626 | - | "model.visual.blocks.25.mlp.linear_fc1.bias": "model- |
| 627 | - | "model.visual.blocks.25.mlp.linear_fc1.weight": "model- |
| 628 | - | "model.visual.blocks.25.mlp.linear_fc2.bias": "model- |
| 629 | - | "model.visual.blocks.25.mlp.linear_fc2.weight": "model- |
| 630 | - | "model.visual.blocks.25.norm1.bias": "model- |
| 631 | - | "model.visual.blocks.25.norm1.weight": "model- |
| 632 | - | "model.visual.blocks.25.norm2.bias": "model- |
| 633 | - | "model.visual.blocks.25.norm2.weight": "model- |
| 634 | - | "model.visual.blocks.26.attn.proj.bias": "model- |
| 635 | - | "model.visual.blocks.26.attn.proj.weight": "model- |
| 636 | - | "model.visual.blocks.26.attn.qkv.bias": "model- |
| 637 | - | "model.visual.blocks.26.attn.qkv.weight": "model- |
| 638 | - | "model.visual.blocks.26.mlp.linear_fc1.bias": "model- |
| 639 | - | "model.visual.blocks.26.mlp.linear_fc1.weight": "model- |
| 640 | - | "model.visual.blocks.26.mlp.linear_fc2.bias": "model- |
| 641 | - | "model.visual.blocks.26.mlp.linear_fc2.weight": "model- |
| 642 | - | "model.visual.blocks.26.norm1.bias": "model- |
| 643 | - | "model.visual.blocks.26.norm1.weight": "model- |
| 644 | - | "model.visual.blocks.26.norm2.bias": "model- |
| 645 | - | "model.visual.blocks.26.norm2.weight": "model- |
| 646 | - | "model.visual.blocks.3.attn.proj.bias": "model- |
| 647 | - | "model.visual.blocks.3.attn.proj.weight": "model- |
| 648 | - | "model.visual.blocks.3.attn.qkv.bias": "model- |
| 649 | - | "model.visual.blocks.3.attn.qkv.weight": "model- |
| 650 | - | "model.visual.blocks.3.mlp.linear_fc1.bias": "model- |
| 651 | - | "model.visual.blocks.3.mlp.linear_fc1.weight": "model- |
| 652 | - | "model.visual.blocks.3.mlp.linear_fc2.bias": "model- |
| 653 | - | "model.visual.blocks.3.mlp.linear_fc2.weight": "model- |
| 654 | - | "model.visual.blocks.3.norm1.bias": "model- |
| 655 | - | "model.visual.blocks.3.norm1.weight": "model- |
| 656 | - | "model.visual.blocks.3.norm2.bias": "model- |
| 657 | - | "model.visual.blocks.3.norm2.weight": "model- |
| 658 | - | "model.visual.blocks.4.attn.proj.bias": "model- |
| 659 | - | "model.visual.blocks.4.attn.proj.weight": "model- |
| 660 | - | "model.visual.blocks.4.attn.qkv.bias": "model- |
| 661 | - | "model.visual.blocks.4.attn.qkv.weight": "model- |
| 662 | - | "model.visual.blocks.4.mlp.linear_fc1.bias": "model- |
| 663 | - | "model.visual.blocks.4.mlp.linear_fc1.weight": "model- |
| 664 | - | "model.visual.blocks.4.mlp.linear_fc2.bias": "model- |
| 665 | - | "model.visual.blocks.4.mlp.linear_fc2.weight": "model- |
| 666 | - | "model.visual.blocks.4.norm1.bias": "model- |
| 667 | - | "model.visual.blocks.4.norm1.weight": "model- |
| 668 | - | "model.visual.blocks.4.norm2.bias": "model- |
| 669 | - | "model.visual.blocks.4.norm2.weight": "model- |
| 670 | - | "model.visual.blocks.5.attn.proj.bias": "model- |
| 671 | - | "model.visual.blocks.5.attn.proj.weight": "model- |
| 672 | - | "model.visual.blocks.5.attn.qkv.bias": "model- |
| 673 | - | "model.visual.blocks.5.attn.qkv.weight": "model- |
| 674 | - | "model.visual.blocks.5.mlp.linear_fc1.bias": "model- |
| 675 | - | "model.visual.blocks.5.mlp.linear_fc1.weight": "model- |
| 676 | - | "model.visual.blocks.5.mlp.linear_fc2.bias": "model- |
| 677 | - | "model.visual.blocks.5.mlp.linear_fc2.weight": "model- |
| 678 | - | "model.visual.blocks.5.norm1.bias": "model- |
| 679 | - | "model.visual.blocks.5.norm1.weight": "model- |
| 680 | - | "model.visual.blocks.5.norm2.bias": "model- |
| 681 | - | "model.visual.blocks.5.norm2.weight": "model- |
| 682 | - | "model.visual.blocks.6.attn.proj.bias": "model- |
| 683 | - | "model.visual.blocks.6.attn.proj.weight": "model- |
| 684 | - | "model.visual.blocks.6.attn.qkv.bias": "model- |
| 685 | - | "model.visual.blocks.6.attn.qkv.weight": "model- |
| 686 | - | "model.visual.blocks.6.mlp.linear_fc1.bias": "model- |
| 687 | - | "model.visual.blocks.6.mlp.linear_fc1.weight": "model- |
| 688 | - | "model.visual.blocks.6.mlp.linear_fc2.bias": "model- |
| 689 | - | "model.visual.blocks.6.mlp.linear_fc2.weight": "model- |
| 690 | - | "model.visual.blocks.6.norm1.bias": "model- |
| 691 | - | "model.visual.blocks.6.norm1.weight": "model- |
| 692 | - | "model.visual.blocks.6.norm2.bias": "model- |
| 693 | - | "model.visual.blocks.6.norm2.weight": "model- |
| 694 | - | "model.visual.blocks.7.attn.proj.bias": "model- |
| 695 | - | "model.visual.blocks.7.attn.proj.weight": "model- |
| 696 | - | "model.visual.blocks.7.attn.qkv.bias": "model- |
| 697 | - | "model.visual.blocks.7.attn.qkv.weight": "model- |
| 698 | - | "model.visual.blocks.7.mlp.linear_fc1.bias": "model- |
| 699 | - | "model.visual.blocks.7.mlp.linear_fc1.weight": "model- |
| 700 | - | "model.visual.blocks.7.mlp.linear_fc2.bias": "model- |
| 701 | - | "model.visual.blocks.7.mlp.linear_fc2.weight": "model- |
| 702 | - | "model.visual.blocks.7.norm1.bias": "model- |
| 703 | - | "model.visual.blocks.7.norm1.weight": "model- |
| 704 | - | "model.visual.blocks.7.norm2.bias": "model- |
| 705 | - | "model.visual.blocks.7.norm2.weight": "model- |
| 706 | - | "model.visual.blocks.8.attn.proj.bias": "model- |
| 707 | - | "model.visual.blocks.8.attn.proj.weight": "model- |
| 708 | - | "model.visual.blocks.8.attn.qkv.bias": "model- |
| 709 | - | "model.visual.blocks.8.attn.qkv.weight": "model- |
| 710 | - | "model.visual.blocks.8.mlp.linear_fc1.bias": "model- |
| 711 | - | "model.visual.blocks.8.mlp.linear_fc1.weight": "model- |
| 712 | - | "model.visual.blocks.8.mlp.linear_fc2.bias": "model- |
| 713 | - | "model.visual.blocks.8.mlp.linear_fc2.weight": "model- |
| 714 | - | "model.visual.blocks.8.norm1.bias": "model- |
| 715 | - | "model.visual.blocks.8.norm1.weight": "model- |
| 716 | - | "model.visual.blocks.8.norm2.bias": "model- |
| 717 | - | "model.visual.blocks.8.norm2.weight": "model- |
| 718 | - | "model.visual.blocks.9.attn.proj.bias": "model- |
| 719 | - | "model.visual.blocks.9.attn.proj.weight": "model- |
| 720 | - | "model.visual.blocks.9.attn.qkv.bias": "model- |
| 721 | - | "model.visual.blocks.9.attn.qkv.weight": "model- |
| 722 | - | "model.visual.blocks.9.mlp.linear_fc1.bias": "model- |
| 723 | - | "model.visual.blocks.9.mlp.linear_fc1.weight": "model- |
| 724 | - | "model.visual.blocks.9.mlp.linear_fc2.bias": "model- |
| 725 | - | "model.visual.blocks.9.mlp.linear_fc2.weight": "model- |
| 726 | - | "model.visual.blocks.9.norm1.bias": "model- |
| 727 | - | "model.visual.blocks.9.norm1.weight": "model- |
| 728 | - | "model.visual.blocks.9.norm2.bias": "model- |
| 729 | - | "model.visual.blocks.9.norm2.weight": "model- |
| 730 | - | "model.visual.deepstack_merger_list.0.linear_fc1.bias": "model- |
| 731 | - | "model.visual.deepstack_merger_list.0.linear_fc1.weight": "model- |
| 732 | - | "model.visual.deepstack_merger_list.0.linear_fc2.bias": "model- |
| 733 | - | "model.visual.deepstack_merger_list.0.linear_fc2.weight": "model- |
| 734 | - | "model.visual.deepstack_merger_list.0.norm.bias": "model- |
| 735 | - | "model.visual.deepstack_merger_list.0.norm.weight": "model- |
| 736 | - | "model.visual.deepstack_merger_list.1.linear_fc1.bias": "model- |
| 737 | - | "model.visual.deepstack_merger_list.1.linear_fc1.weight": "model- |
| 738 | - | "model.visual.deepstack_merger_list.1.linear_fc2.bias": "model- |
| 739 | - | "model.visual.deepstack_merger_list.1.linear_fc2.weight": "model- |
| 740 | - | "model.visual.deepstack_merger_list.1.norm.bias": "model- |
| 741 | - | "model.visual.deepstack_merger_list.1.norm.weight": "model- |
| 742 | - | "model.visual.deepstack_merger_list.2.linear_fc1.bias": "model- |
| 743 | - | "model.visual.deepstack_merger_list.2.linear_fc1.weight": "model- |
| 744 | - | "model.visual.deepstack_merger_list.2.linear_fc2.bias": "model- |
| 745 | - | "model.visual.deepstack_merger_list.2.linear_fc2.weight": "model- |
| 746 | - | "model.visual.deepstack_merger_list.2.norm.bias": "model- |
| 747 | - | "model.visual.deepstack_merger_list.2.norm.weight": "model- |
| 748 | - | "model.visual.merger.linear_fc1.bias": "model- |
| 749 | - | "model.visual.merger.linear_fc1.weight": "model- |
| 750 | - | "model.visual.merger.linear_fc2.bias": "model- |
| 751 | - | "model.visual.merger.linear_fc2.weight": "model- |
| 752 | - | "model.visual.merger.norm.bias": "model- |
| 753 | - | "model.visual.merger.norm.weight": "model- |
| 754 | - | "model.visual.patch_embed.proj.bias": "model- |
| 755 | - | "model.visual.patch_embed.proj.weight": "model- |
| 756 | - | "model.visual.pos_embed.weight": "model- |
| 757 |   | } |
| 758 |   | } |

| 1 |   | { |
| 2 |   | "metadata": { |
| 3 |   | "total_size": 17534247392 |
| 4 |   | }, |
| 5 |   | "weight_map": { |

| 126 |   | "model.language_model.layers.18.self_attn.q_norm.weight": "model-00002-of-00004.safetensors", |
| 127 |   | "model.language_model.layers.18.self_attn.q_proj.weight": "model-00002-of-00004.safetensors", |
| 128 |   | "model.language_model.layers.18.self_attn.v_proj.weight": "model-00002-of-00004.safetensors", |
| 129 | + | "model.language_model.layers.19.input_layernorm.weight": "model-00002-of-00004.safetensors", |
| 130 | + | "model.language_model.layers.19.mlp.down_proj.weight": "model-00002-of-00004.safetensors", |
| 131 |   | "model.language_model.layers.19.mlp.gate_proj.weight": "model-00002-of-00004.safetensors", |
| 132 | + | "model.language_model.layers.19.mlp.up_proj.weight": "model-00002-of-00004.safetensors", |
| 133 | + | "model.language_model.layers.19.post_attention_layernorm.weight": "model-00002-of-00004.safetensors", |
| 134 |   | "model.language_model.layers.19.self_attn.k_norm.weight": "model-00002-of-00004.safetensors", |
| 135 |   | "model.language_model.layers.19.self_attn.k_proj.weight": "model-00002-of-00004.safetensors", |
| 136 |   | "model.language_model.layers.19.self_attn.o_proj.weight": "model-00002-of-00004.safetensors", |

| 148 |   | "model.language_model.layers.2.self_attn.q_norm.weight": "model-00001-of-00004.safetensors", |
| 149 |   | "model.language_model.layers.2.self_attn.q_proj.weight": "model-00001-of-00004.safetensors", |
| 150 |   | "model.language_model.layers.2.self_attn.v_proj.weight": "model-00001-of-00004.safetensors", |
| 151 | + | "model.language_model.layers.20.input_layernorm.weight": "model-00002-of-00004.safetensors", |
| 152 | + | "model.language_model.layers.20.mlp.down_proj.weight": "model-00002-of-00004.safetensors", |
| 153 | + | "model.language_model.layers.20.mlp.gate_proj.weight": "model-00002-of-00004.safetensors", |
| 154 | + | "model.language_model.layers.20.mlp.up_proj.weight": "model-00002-of-00004.safetensors", |
| 155 | + | "model.language_model.layers.20.post_attention_layernorm.weight": "model-00002-of-00004.safetensors", |
| 156 | + | "model.language_model.layers.20.self_attn.k_norm.weight": "model-00002-of-00004.safetensors", |
| 157 | + | "model.language_model.layers.20.self_attn.k_proj.weight": "model-00002-of-00004.safetensors", |
| 158 | + | "model.language_model.layers.20.self_attn.o_proj.weight": "model-00002-of-00004.safetensors", |
| 159 | + | "model.language_model.layers.20.self_attn.q_norm.weight": "model-00002-of-00004.safetensors", |
| 160 | + | "model.language_model.layers.20.self_attn.q_proj.weight": "model-00002-of-00004.safetensors", |
| 161 | + | "model.language_model.layers.20.self_attn.v_proj.weight": "model-00002-of-00004.safetensors", |
| 162 | + | "model.language_model.layers.21.input_layernorm.weight": "model-00002-of-00004.safetensors", |
| 163 | + | "model.language_model.layers.21.mlp.down_proj.weight": "model-00002-of-00004.safetensors", |
| 164 | + | "model.language_model.layers.21.mlp.gate_proj.weight": "model-00002-of-00004.safetensors", |
| 165 | + | "model.language_model.layers.21.mlp.up_proj.weight": "model-00002-of-00004.safetensors", |
| 166 | + | "model.language_model.layers.21.post_attention_layernorm.weight": "model-00002-of-00004.safetensors", |
| 167 | + | "model.language_model.layers.21.self_attn.k_norm.weight": "model-00002-of-00004.safetensors", |
| 168 | + | "model.language_model.layers.21.self_attn.k_proj.weight": "model-00002-of-00004.safetensors", |
| 169 | + | "model.language_model.layers.21.self_attn.o_proj.weight": "model-00002-of-00004.safetensors", |
| 170 | + | "model.language_model.layers.21.self_attn.q_norm.weight": "model-00002-of-00004.safetensors", |
| 171 | + | "model.language_model.layers.21.self_attn.q_proj.weight": "model-00002-of-00004.safetensors", |
| 172 | + | "model.language_model.layers.21.self_attn.v_proj.weight": "model-00002-of-00004.safetensors", |
| 173 | + | "model.language_model.layers.22.input_layernorm.weight": "model-00002-of-00004.safetensors", |
| 174 |   | "model.language_model.layers.22.mlp.down_proj.weight": "model-00003-of-00004.safetensors", |
| 175 |   | "model.language_model.layers.22.mlp.gate_proj.weight": "model-00003-of-00004.safetensors", |
| 176 |   | "model.language_model.layers.22.mlp.up_proj.weight": "model-00003-of-00004.safetensors", |
| 177 | + | "model.language_model.layers.22.post_attention_layernorm.weight": "model-00002-of-00004.safetensors", |
| 178 | + | "model.language_model.layers.22.self_attn.k_norm.weight": "model-00002-of-00004.safetensors", |
| 179 | + | "model.language_model.layers.22.self_attn.k_proj.weight": "model-00002-of-00004.safetensors", |
| 180 | + | "model.language_model.layers.22.self_attn.o_proj.weight": "model-00002-of-00004.safetensors", |
| 181 | + | "model.language_model.layers.22.self_attn.q_norm.weight": "model-00002-of-00004.safetensors", |
| 182 | + | "model.language_model.layers.22.self_attn.q_proj.weight": "model-00002-of-00004.safetensors", |
| 183 | + | "model.language_model.layers.22.self_attn.v_proj.weight": "model-00002-of-00004.safetensors", |
| 184 |   | "model.language_model.layers.23.input_layernorm.weight": "model-00003-of-00004.safetensors", |
| 185 |   | "model.language_model.layers.23.mlp.down_proj.weight": "model-00003-of-00004.safetensors", |
| 186 |   | "model.language_model.layers.23.mlp.gate_proj.weight": "model-00003-of-00004.safetensors", |

| 291 |   | "model.language_model.layers.31.self_attn.q_norm.weight": "model-00003-of-00004.safetensors", |
| 292 |   | "model.language_model.layers.31.self_attn.q_proj.weight": "model-00003-of-00004.safetensors", |
| 293 |   | "model.language_model.layers.31.self_attn.v_proj.weight": "model-00003-of-00004.safetensors", |
| 294 | + | "model.language_model.layers.32.input_layernorm.weight": "model-00003-of-00004.safetensors", |
| 295 | + | "model.language_model.layers.32.mlp.down_proj.weight": "model-00003-of-00004.safetensors", |
| 296 | + | "model.language_model.layers.32.mlp.gate_proj.weight": "model-00003-of-00004.safetensors", |
| 297 | + | "model.language_model.layers.32.mlp.up_proj.weight": "model-00003-of-00004.safetensors", |
| 298 | + | "model.language_model.layers.32.post_attention_layernorm.weight": "model-00003-of-00004.safetensors", |
| 299 |   | "model.language_model.layers.32.self_attn.k_norm.weight": "model-00003-of-00004.safetensors", |
| 300 |   | "model.language_model.layers.32.self_attn.k_proj.weight": "model-00003-of-00004.safetensors", |
| 301 |   | "model.language_model.layers.32.self_attn.o_proj.weight": "model-00003-of-00004.safetensors", |
| 302 |   | "model.language_model.layers.32.self_attn.q_norm.weight": "model-00003-of-00004.safetensors", |
| 303 |   | "model.language_model.layers.32.self_attn.q_proj.weight": "model-00003-of-00004.safetensors", |
| 304 |   | "model.language_model.layers.32.self_attn.v_proj.weight": "model-00003-of-00004.safetensors", |
| 305 | + | "model.language_model.layers.33.input_layernorm.weight": "model-00003-of-00004.safetensors", |
| 306 | + | "model.language_model.layers.33.mlp.down_proj.weight": "model-00003-of-00004.safetensors", |
| 307 | + | "model.language_model.layers.33.mlp.gate_proj.weight": "model-00003-of-00004.safetensors", |
| 308 | + | "model.language_model.layers.33.mlp.up_proj.weight": "model-00003-of-00004.safetensors", |
| 309 | + | "model.language_model.layers.33.post_attention_layernorm.weight": "model-00003-of-00004.safetensors", |
| 310 | + | "model.language_model.layers.33.self_attn.k_norm.weight": "model-00003-of-00004.safetensors", |
| 311 | + | "model.language_model.layers.33.self_attn.k_proj.weight": "model-00003-of-00004.safetensors", |
| 312 | + | "model.language_model.layers.33.self_attn.o_proj.weight": "model-00003-of-00004.safetensors", |
| 313 | + | "model.language_model.layers.33.self_attn.q_norm.weight": "model-00003-of-00004.safetensors", |
| 314 | + | "model.language_model.layers.33.self_attn.q_proj.weight": "model-00003-of-00004.safetensors", |
| 315 | + | "model.language_model.layers.33.self_attn.v_proj.weight": "model-00003-of-00004.safetensors", |
| 316 | + | "model.language_model.layers.34.input_layernorm.weight": "model-00003-of-00004.safetensors", |
| 317 | + | "model.language_model.layers.34.mlp.down_proj.weight": "model-00003-of-00004.safetensors", |
| 318 | + | "model.language_model.layers.34.mlp.gate_proj.weight": "model-00003-of-00004.safetensors", |
| 319 | + | "model.language_model.layers.34.mlp.up_proj.weight": "model-00003-of-00004.safetensors", |
| 320 | + | "model.language_model.layers.34.post_attention_layernorm.weight": "model-00003-of-00004.safetensors", |
| 321 | + | "model.language_model.layers.34.self_attn.k_norm.weight": "model-00003-of-00004.safetensors", |
| 322 | + | "model.language_model.layers.34.self_attn.k_proj.weight": "model-00003-of-00004.safetensors", |
| 323 | + | "model.language_model.layers.34.self_attn.o_proj.weight": "model-00003-of-00004.safetensors", |
| 324 | + | "model.language_model.layers.34.self_attn.q_norm.weight": "model-00003-of-00004.safetensors", |
| 325 | + | "model.language_model.layers.34.self_attn.q_proj.weight": "model-00003-of-00004.safetensors", |
| 326 | + | "model.language_model.layers.34.self_attn.v_proj.weight": "model-00003-of-00004.safetensors", |
| 327 |   | "model.language_model.layers.35.input_layernorm.weight": "model-00004-of-00004.safetensors", |
| 328 |   | "model.language_model.layers.35.mlp.down_proj.weight": "model-00004-of-00004.safetensors", |
| 329 |   | "model.language_model.layers.35.mlp.gate_proj.weight": "model-00004-of-00004.safetensors", |

| 331 |   | "model.language_model.layers.35.post_attention_layernorm.weight": "model-00004-of-00004.safetensors", |
| 332 |   | "model.language_model.layers.35.self_attn.k_norm.weight": "model-00004-of-00004.safetensors", |
| 333 |   | "model.language_model.layers.35.self_attn.k_proj.weight": "model-00004-of-00004.safetensors", |
| 334 | + | "model.language_model.layers.35.self_attn.o_proj.weight": "model-00003-of-00004.safetensors", |
| 335 |   | "model.language_model.layers.35.self_attn.q_norm.weight": "model-00004-of-00004.safetensors", |
| 336 | + | "model.language_model.layers.35.self_attn.q_proj.weight": "model-00003-of-00004.safetensors", |
| 337 |   | "model.language_model.layers.35.self_attn.v_proj.weight": "model-00004-of-00004.safetensors", |
| 338 |   | "model.language_model.layers.4.input_layernorm.weight": "model-00001-of-00004.safetensors", |
| 339 |   | "model.language_model.layers.4.mlp.down_proj.weight": "model-00001-of-00004.safetensors", |

| 357 |   | "model.language_model.layers.5.self_attn.q_norm.weight": "model-00001-of-00004.safetensors", |
| 358 |   | "model.language_model.layers.5.self_attn.q_proj.weight": "model-00001-of-00004.safetensors", |
| 359 |   | "model.language_model.layers.5.self_attn.v_proj.weight": "model-00001-of-00004.safetensors", |
| 360 | + | "model.language_model.layers.6.input_layernorm.weight": "model-00001-of-00004.safetensors", |
| 361 | + | "model.language_model.layers.6.mlp.down_proj.weight": "model-00001-of-00004.safetensors", |
| 362 |   | "model.language_model.layers.6.mlp.gate_proj.weight": "model-00001-of-00004.safetensors", |
| 363 |   | "model.language_model.layers.6.mlp.up_proj.weight": "model-00001-of-00004.safetensors", |
| 364 | + | "model.language_model.layers.6.post_attention_layernorm.weight": "model-00001-of-00004.safetensors", |
| 365 |   | "model.language_model.layers.6.self_attn.k_norm.weight": "model-00001-of-00004.safetensors", |
| 366 |   | "model.language_model.layers.6.self_attn.k_proj.weight": "model-00001-of-00004.safetensors", |
| 367 |   | "model.language_model.layers.6.self_attn.o_proj.weight": "model-00001-of-00004.safetensors", |
| 368 |   | "model.language_model.layers.6.self_attn.q_norm.weight": "model-00001-of-00004.safetensors", |
| 369 |   | "model.language_model.layers.6.self_attn.q_proj.weight": "model-00001-of-00004.safetensors", |
| 370 |   | "model.language_model.layers.6.self_attn.v_proj.weight": "model-00001-of-00004.safetensors", |
| 371 | + | "model.language_model.layers.7.input_layernorm.weight": "model-00001-of-00004.safetensors", |