Create README.md

Browse files

README.md

ADDED

|

@@ -0,0 +1,80 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

---

|

| 4 |

+

<h2 align="center" style="line-height: 25px;">

|

| 5 |

+

FocusDiff: Advancing Fine-Grained Text-Image Alignment for Autoregressive Visual Generation through RL

|

| 6 |

+

</h2>

|

| 7 |

+

|

| 8 |

+

<p align="center">

|

| 9 |

+

<a href="https://arxiv.org/abs/2506.05501">

|

| 10 |

+

<img src="https://img.shields.io/badge/Paper-red?style=flat&logo=arxiv">

|

| 11 |

+

</a>

|

| 12 |

+

<a href="https://focusdiff.github.io/">

|

| 13 |

+

<img src="https://img.shields.io/badge/Project Page-white?style=flat&logo=google-docs">

|

| 14 |

+

</a>

|

| 15 |

+

<a href="https://github.com/wendell0218/FocusDiff">

|

| 16 |

+

<img src="https://img.shields.io/badge/Code-black?style=flat&logo=github">

|

| 17 |

+

</a>

|

| 18 |

+

<a href="https://huggingface.co/wendell0218/Janus-FocusDiff-7B">

|

| 19 |

+

<img src="https://img.shields.io/badge/-%F0%9F%A4%97%20Checkpoint-orange?style=flat"/>

|

| 20 |

+

</a>

|

| 21 |

+

<a href="">

|

| 22 |

+

<img src="https://img.shields.io/badge/-%F0%9F%A4%97%20Data-orange?style=flat"/>

|

| 23 |

+

</a>

|

| 24 |

+

<a href="">

|

| 25 |

+

<img src="https://img.shields.io/github/last-commit/wendell0218/FocusDiff?color=green">

|

| 26 |

+

</a>

|

| 27 |

+

</p>

|

| 28 |

+

|

| 29 |

+

<div align="center">

|

| 30 |

+

Kaihang Pan<sup>1*</sup>, Wendong Bu<sup>1*</sup>, Yuruo Wu<sup>1*</sup>, Yang Wu<sup>2</sup>, Kai Shen<sup>1</sup>, Yunfei Li<sup>2</sup>,

|

| 31 |

+

|

| 32 |

+

Hang Zhao<sup>2</sup>, Juncheng Li<sup>1†</sup>, Siliang Tang<sup>1</sup>, Yueting Zhuang<sup>1</sup>

|

| 33 |

+

|

| 34 |

+

<sup>1</sup>Zhejiang University, <sup>2</sup>Ant Group

|

| 35 |

+

|

| 36 |

+

\*Equal Contribution, <sup>†</sup>Corresponding Authors

|

| 37 |

+

|

| 38 |

+

</div>

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

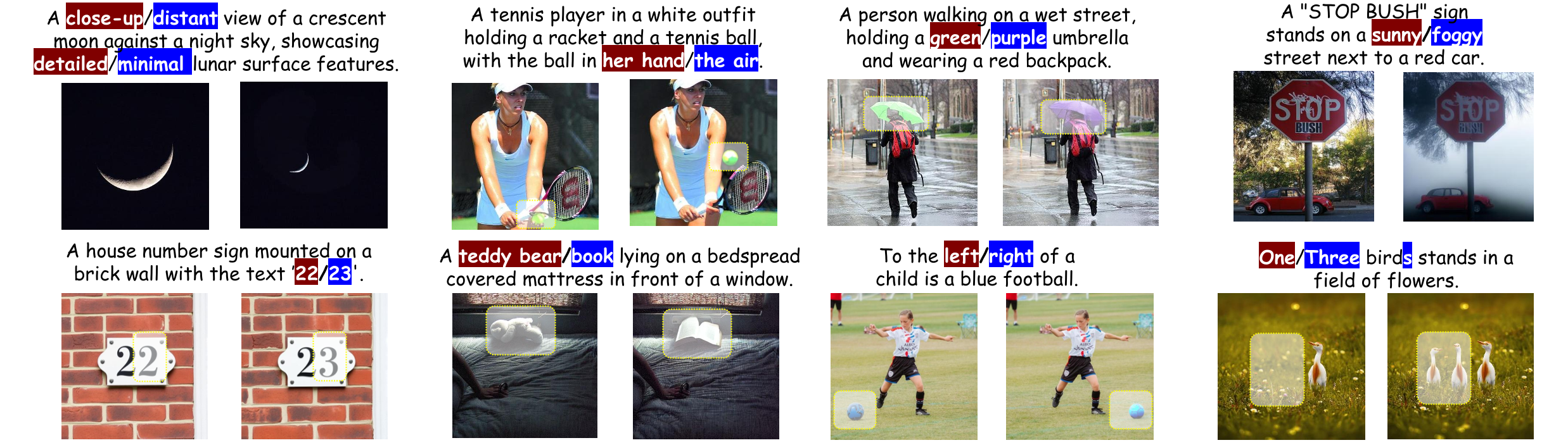

## 🚀 Overview

|

| 43 |

+

|

| 44 |

+

**FocusDiff** is a new method for improving fine-grained text-image alignment in autoregressive text-to-image models. By introducing the **FocusDiff-Data** dataset and a novel **Pair-GRPO** reinforcement learning framework, we help models learn subtle semantic differences between similar text-image pairs. Based on paired data in FocusDiff-Data, we further introduce the **PairComp** Benchmark, which focuses on subtle semantic differences.

|

| 45 |

+

|

| 46 |

+

Key Contributions:

|

| 47 |

+

1. **PairComp Benchmark**: A new benchmark focusing on fine-grained differences in text prompts.

|

| 48 |

+

|

| 49 |

+

<img src="https://raw.githubusercontent.com/wendell0218/FocusDiff/refs/heads/main/assets/benchmark.png" width="100%"/>

|

| 50 |

+

|

| 51 |

+

2. **FocusDiff Approach**: A method using paired data and reinforcement learning to enhance fine-grained text-image alignment.

|

| 52 |

+

|

| 53 |

+

<div style="text-align: center;">

|

| 54 |

+

<img src="https://raw.githubusercontent.com/wendell0218/FocusDiff/refs/heads/main/assets/grpo.png" width="80%" />

|

| 55 |

+

</div>

|

| 56 |

+

|

| 57 |

+

3. **SOTA Results**: Our model is evaluated with the top performance on multiple benchmarks including **GenEval**, **T2I-CompBench**, **DPG-Bench**, and our newly proposed **PairComp** benchmark.

|

| 58 |

+

|

| 59 |

+

## ✨️ Quickstart

|

| 60 |

+

|

| 61 |

+

|

| 62 |

+

## 🤝 Acknowledgment

|

| 63 |

+

|

| 64 |

+

Our project is developed based on the following repositories:

|

| 65 |

+

|

| 66 |

+

- [Janus-Series](https://github.com/deepseek-ai/Janus): Unified Multimodal Understanding and Generation Models

|

| 67 |

+

- [Open-R1](https://github.com/huggingface/open-r1): Fully open reproduction of DeepSeek-R1

|

| 68 |

+

|

| 69 |

+

## 📜 Citation

|

| 70 |

+

|

| 71 |

+

If you find this work useful for your research, please cite our paper and star our git repo:

|

| 72 |

+

|

| 73 |

+

```bibtex

|

| 74 |

+

@article{pan2025focusdiff,

|

| 75 |

+

title={FocusDiff: Advancing Fine-Grained Text-Image Alignment for Autoregressive Visual Generation through RL},

|

| 76 |

+

author={Pan, Kaihang and Bu, Wendong and Wu, Yuruo and Wu, Yang and Shen, Kai and Li, Yunfei and Zhao, Hang and Li, Juncheng and Tang, Siliang and Zhuang, Yueting},

|

| 77 |

+

journal={arXiv preprint arXiv:2506.05501},

|

| 78 |

+

year={2025}

|

| 79 |

+

}

|

| 80 |

+

```

|