Upload all models and assets for cdo (20251201)

This view is limited to 50 files because it contains too many changes.

- .gitattributes +6 -0

- README.md +553 -0

- models/embeddings/monolingual/cdo_128d.bin +3 -0

- models/embeddings/monolingual/cdo_128d.meta.json +1 -0

- models/embeddings/monolingual/cdo_128d_metadata.json +13 -0

- models/embeddings/monolingual/cdo_32d.bin +3 -0

- models/embeddings/monolingual/cdo_32d.meta.json +1 -0

- models/embeddings/monolingual/cdo_32d_metadata.json +13 -0

- models/embeddings/monolingual/cdo_64d.bin +3 -0

- models/embeddings/monolingual/cdo_64d.meta.json +1 -0

- models/embeddings/monolingual/cdo_64d_metadata.json +13 -0

- models/subword_markov/cdo_markov_ctx1_subword.parquet +3 -0

- models/subword_markov/cdo_markov_ctx1_subword_metadata.json +7 -0

- models/subword_markov/cdo_markov_ctx2_subword.parquet +3 -0

- models/subword_markov/cdo_markov_ctx2_subword_metadata.json +7 -0

- models/subword_markov/cdo_markov_ctx3_subword.parquet +3 -0

- models/subword_markov/cdo_markov_ctx3_subword_metadata.json +7 -0

- models/subword_markov/cdo_markov_ctx4_subword.parquet +3 -0

- models/subword_markov/cdo_markov_ctx4_subword_metadata.json +7 -0

- models/subword_ngram/cdo_2gram_subword.parquet +3 -0

- models/subword_ngram/cdo_2gram_subword_metadata.json +7 -0

- models/subword_ngram/cdo_3gram_subword.parquet +3 -0

- models/subword_ngram/cdo_3gram_subword_metadata.json +7 -0

- models/subword_ngram/cdo_4gram_subword.parquet +3 -0

- models/subword_ngram/cdo_4gram_subword_metadata.json +7 -0

- models/tokenizer/cdo_tokenizer_32k.model +3 -0

- models/tokenizer/cdo_tokenizer_32k.vocab +0 -0

- models/tokenizer/cdo_tokenizer_64k.model +3 -0

- models/tokenizer/cdo_tokenizer_64k.vocab +0 -0

- models/vocabulary/cdo_vocabulary.parquet +3 -0

- models/vocabulary/cdo_vocabulary_metadata.json +16 -0

- models/word_markov/cdo_markov_ctx1_word.parquet +3 -0

- models/word_markov/cdo_markov_ctx1_word_metadata.json +7 -0

- models/word_markov/cdo_markov_ctx2_word.parquet +3 -0

- models/word_markov/cdo_markov_ctx2_word_metadata.json +7 -0

- models/word_markov/cdo_markov_ctx3_word.parquet +3 -0

- models/word_markov/cdo_markov_ctx3_word_metadata.json +7 -0

- models/word_markov/cdo_markov_ctx4_word.parquet +3 -0

- models/word_markov/cdo_markov_ctx4_word_metadata.json +7 -0

- models/word_ngram/cdo_2gram_word.parquet +3 -0

- models/word_ngram/cdo_2gram_word_metadata.json +7 -0

- models/word_ngram/cdo_3gram_word.parquet +3 -0

- models/word_ngram/cdo_3gram_word_metadata.json +7 -0

- models/word_ngram/cdo_4gram_word.parquet +3 -0

- models/word_ngram/cdo_4gram_word_metadata.json +7 -0

- visualizations/embedding_isotropy.png +0 -0

- visualizations/embedding_norms.png +0 -0

- visualizations/embedding_similarity.png +3 -0

- visualizations/markov_branching.png +0 -0

- visualizations/markov_contexts.png +0 -0

.gitattributes

CHANGED

    @@ -33,3 +33,9 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
     *.zip filter=lfs diff=lfs merge=lfs -text
     *.zst filter=lfs diff=lfs merge=lfs -text
     *tfevents* filter=lfs diff=lfs merge=lfs -text
    +visualizations/embedding_similarity.png filter=lfs diff=lfs merge=lfs -text
    +visualizations/performance_dashboard.png filter=lfs diff=lfs merge=lfs -text
    +visualizations/position_encoding_comparison.png filter=lfs diff=lfs merge=lfs -text
    +visualizations/tsne_sentences.png filter=lfs diff=lfs merge=lfs -text
    +visualizations/tsne_words.png filter=lfs diff=lfs merge=lfs -text
    +visualizations/zipf_law.png filter=lfs diff=lfs merge=lfs -text

README.md

ADDED


@@ -0,0 +1,553 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

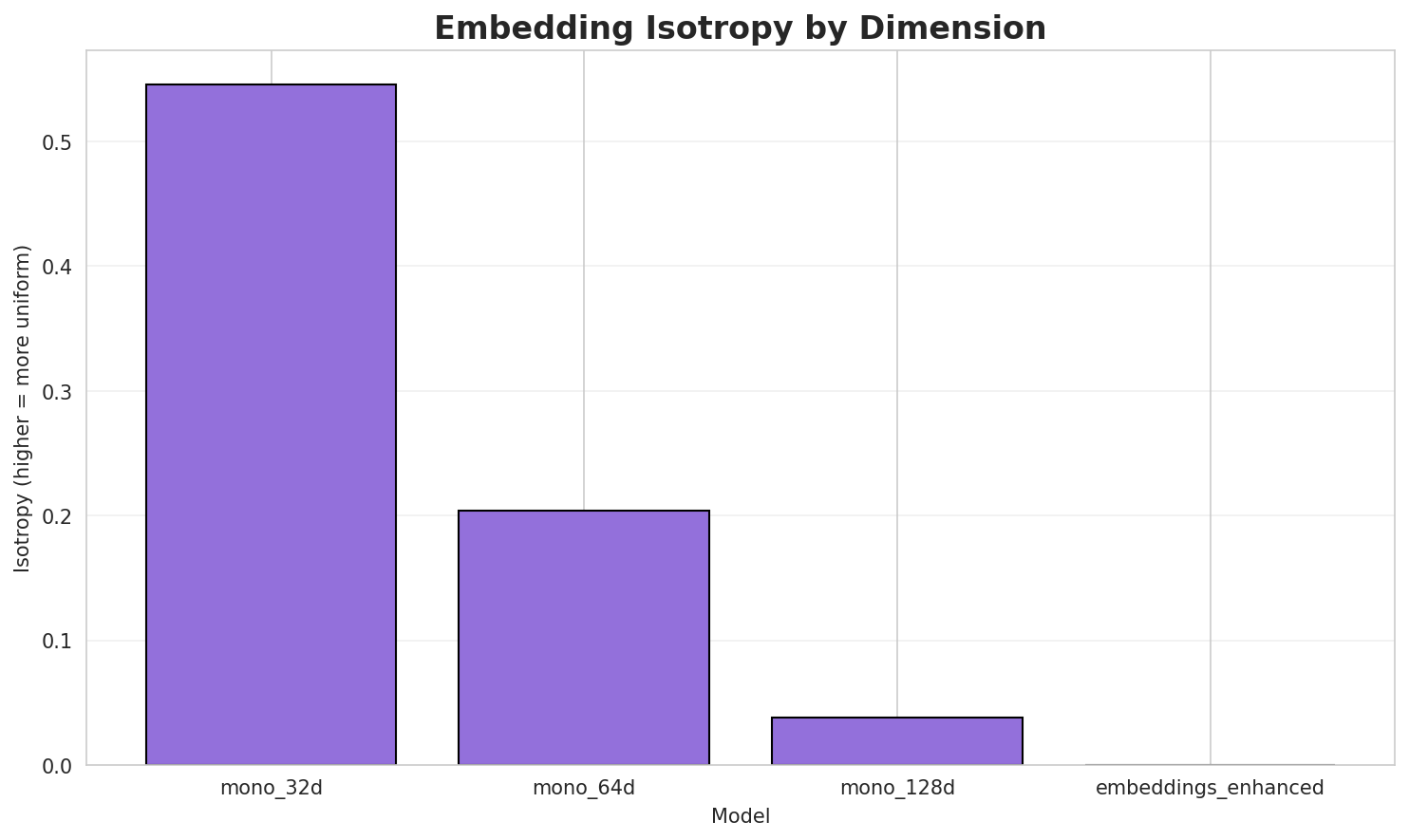

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

---
language: cdo
language_name: CDO
language_family: sinitic_other
tags:
- wikilangs
- nlp
- tokenizer
- embeddings
- n-gram
- markov
- wikipedia
- monolingual
- family-sinitic_other
license: mit
library_name: wikilangs
pipeline_tag: feature-extraction
datasets:
- omarkamali/wikipedia-monthly
dataset_info:
  name: wikipedia-monthly
  description: Monthly snapshots of Wikipedia articles across 300+ languages
metrics:
- name: best_compression_ratio
  type: compression
  value: 2.796
- name: best_isotropy
  type: isotropy
  value: 0.5460
- name: vocabulary_size
  type: vocab
  value: 12714
generated: 2025-12-28
---

# CDO - Wikilangs Models

## Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs on **CDO** (Eastern Min) Wikipedia data.
We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

## 📋 Repository Contents

### Models & Assets

- Tokenizers (32k, 64k)
- N-gram models (2-, 3- and 4-gram)
- Markov chains (context sizes 1, 2, 3 and 4)
- Subword-level n-gram models and Markov chains
- Monolingual word embeddings (32, 64 and 128 dimensions)
- Language vocabulary
- Language statistics

### Analysis and Evaluation

- [1. Tokenizer Evaluation](#1-tokenizer-evaluation)
- [2. N-gram Model Evaluation](#2-n-gram-model-evaluation)
- [3. Markov Chain Evaluation](#3-markov-chain-evaluation)
- [4. Vocabulary Analysis](#4-vocabulary-analysis)
- [5. Word Embeddings Evaluation](#5-word-embeddings-evaluation)
- [6. Summary & Recommendations](#6-summary--recommendations)
- [Metrics Glossary](#appendix-metrics-glossary--interpretation-guide)
- [Visualizations Index](#visualizations-index)

---

## 1. Tokenizer Evaluation

### Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |
|------------|-------------|---------------|----------|--------------|
| **32k** | 2.562x | 2.54 | 0.0007% | 298,320 |
| **64k** | 2.796x 🏆 | 2.77 | 0.0007% | 273,367 |

### Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

**Sample 1:** `Pender Gông (Ĭng-ngṳ̄: Pender County) sê Mī-guók North Carolina gì siŏh ciáh gôn...`

| Vocab | Tokens | Count |
|-------|--------|-------|
| 32k | `▁pen der ▁gông ▁( ĭng - ngṳ̄ : ▁pen der ... (+19 more)` | 29 |
| 64k | `▁pender ▁gông ▁( ĭng - ngṳ̄ : ▁pender ▁county ) ... (+17 more)` | 27 |

**Sample 2:** `Duâi dâi Chók-sié Guó-sié 分類:1170 nièng-dâi`

| Vocab | Tokens | Count |
|-------|--------|-------|
| 32k | `▁duâi ▁dâi ▁chók - sié ▁guó - sié ▁分類 : ... (+7 more)` | 17 |
| 64k | `▁duâi ▁dâi ▁chók - sié ▁guó - sié ▁分類 : ... (+7 more)` | 17 |

**Sample 3:** `1000 nièng-dâi téng 1000 nièng 1 nguŏk 1 hô̤ kăi-sṳ̄, gáu 1009 nièng 12 nguŏk 31...`

| Vocab | Tokens | Count |
|-------|--------|-------|
| 32k | `▁ 1 0 0 0 ▁nièng - dâi ▁téng ▁ ... (+36 more)` | 46 |
| 64k | `▁ 1 0 0 0 ▁nièng - dâi ▁téng ▁ ... (+36 more)` | 46 |

### Key Findings

- **Best Compression:** 64k achieves 2.796x compression
- **UNK Rate:** 32k and 64k tie at 0.0007% unknown tokens
- **Trade-off:** Larger vocabularies improve compression but increase model size
- **Recommendation:** 32k offers a good balance for production use. It has half the vocabulary of 64k at about 8% lower compression (2.562x vs. 2.796x)

---

## 2. N-gram Model Evaluation

### Results

Each n-gram size is listed twice, presumably once per model variant (the repository contains both word-level and subword-level n-gram models).

| N-gram | Perplexity | Entropy (bits) | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |
|--------|------------|----------------|----------------|------------------|-------------------|
| **2-gram** | 2,092 🏆 | 11.03 | 13,738 | 34.2% | 70.6% |
| **2-gram** | 517 🏆 | 9.01 | 13,773 | 57.4% | 92.0% |
| **3-gram** | 6,902 | 12.75 | 35,914 | 23.0% | 49.3% |
| **3-gram** | 2,154 | 11.07 | 33,837 | 33.3% | 72.5% |
| **4-gram** | 16,500 | 14.01 | 75,913 | 16.0% | 37.8% |
| **4-gram** | 6,830 | 12.74 | 94,271 | 22.4% | 53.8% |

### Top 5 N-grams by Size

**2-grams:**

| Rank | N-gram | Count |
|------|--------|-------|
| 1 | `分類 :` | 17,792 |
| 2 | `̤ ng` | 9,653 |
| 3 | `. 分類` | 8,000 |
| 4 | `- guók` | 7,750 |
| 5 | `- sié` | 7,747 |

**3-grams:**

| Rank | N-gram | Count |
|------|--------|-------|
| 1 | `. 分類 :` | 8,000 |
| 2 | `gì siŏh ciáh` | 5,565 |
| 3 | `- ngṳ ̄` | 4,336 |
| 4 | `mī - guók` | 3,641 |
| 5 | `gâe ̤ ng` | 3,480 |

**4-grams:**

| Rank | N-gram | Count |
|------|--------|-------|
| 1 | `sê mī - guók` | 3,211 |
| 2 | `gì siŏh ciáh gông` | 3,000 |
| 3 | `ciáh gông . 分類` | 3,000 |
| 4 | `gông . 分類 :` | 3,000 |
| 5 | `siŏh ciáh gông .` | 3,000 |

### Key Findings

- **Best Perplexity:** 2-gram with 517
- **Entropy Trend:** Entropy increases with larger n-grams (11.03 → 12.75 → 14.01 bits in the first variant), because longer n-grams are more numerous and sparser
- **Coverage:** Top-1000 patterns cover between 37.8% (4-gram) and 92.0% (2-gram) of occurrences
- **Recommendation:** 2-gram, which has the lowest perplexity in this evaluation (no 5-gram model is included)
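
The Top 5 tables count contiguous windows of tokens. The sketch below shows that counting on a small hypothetical input modelled on the county-article template. It assumes whitespace-separated tokens; the report's own tokenization, which also splits off punctuation and some combining marks, is not specified.

```python
from collections import Counter
from typing import Sequence


def count_ngrams(tokens: Sequence[str], n: int) -> Counter:
    """Count every contiguous window of n tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


# Hypothetical toy corpus, already split into tokens on whitespace
text = (
    "sê mī - guók colorado gì siŏh ciáh gông . 分類 : colorado gì gông "
    "sê mī - guók florida gì siŏh ciáh gông . 分類 : florida gì gông"
)
tokens = text.split()

for n in (2, 3, 4):
    for gram, count in count_ngrams(tokens, n).most_common(3):
        print(n, " ".join(gram), count)
```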

---

## 3. Markov Chain Evaluation

### Results

Each context size is listed twice, presumably once per model variant (the repository contains both word-level and subword-level Markov chains).

| Context | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |
|---------|-------------|------------|------------------|-----------------|----------------|
| **1** | 0.2803 | 1.214 | 3.49 | 48,699 | 72.0% |
| **1** | 0.3942 | 1.314 | 4.02 | 31,614 | 60.6% |
| **2** | 0.1991 | 1.148 | 1.83 | 169,503 | 80.1% |
| **2** | 0.3616 | 1.285 | 2.00 | 127,156 | 63.8% |
| **3** | 0.1556 | 1.114 | 1.42 | 308,939 | 84.4% |
| **3** | 0.2179 | 1.163 | 1.54 | 253,902 | 78.2% |
| **4** | 0.0983 🏆 | 1.071 | 1.21 | 437,205 | 90.2% |
| **4** | 0.1764 🏆 | 1.130 | 1.38 | 389,634 | 82.4% |

### Generated Text Samples

Below are text samples generated from each Markov chain model:

**Context Size 1:**

1. `- hū siék gì siŏh ciáh gông . 分類 : chĭng - uăng - pū -`
2. `̤ k nâ sáng ĕu - ngiòng ( 螺洲路 ) guōng - dŏng - dōi -`
3. `gì dâ ̤ 18 艭 ngiê - guók - guó - dók “ . chók -`

**Context Size 2:**

1. `分類 : 1370 nièng - dâi gì lùng - dŭng - ngŏk liù - giù - dôi`
2. `̤ ng hók - gióng , dâi - biēu gê ̤ ṳng - sāng - dōng gâe`
3. `. 分類 : 200 nièng - dâi - mā 分類 : 1300年代`

**Context Size 3:**

1. `. 分類 : minnesota gì gông`
2. `gì siŏh ciáh dê - ngék - chê . 分類 : hù - báe ̤ k - chiă`
3. `- ngṳ ̄ : lafayette county ) sê mī - guók gì buô - hông gì sṳ ̆`

**Context Size 4:**

1. `sê mī - guók colorado gì siŏh ciáh gông . 分類 : florida gì gông`
2. `gông . 分類 : michigan gì gông`
3. `siŏh ciáh gông . 分類 : indiana gì gông`

### Key Findings

- **Best Predictability:** Context-4 with 90.2% predictability
- **Branching Factor:** Decreases with context size (3.49 → 1.21 in the first variant), so transitions become more deterministic
- **Memory Trade-off:** Larger contexts require more storage (up to 437,205 unique contexts at context size 4)
- **Recommendation:** Context-3 or Context-4 for text generation
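
The generated samples above come from repeatedly sampling the next token given the previous k tokens. Below is a minimal sketch of such a chain. It assumes count-proportional sampling and stops at an unseen context; the report does not state its sampling or back-off rules. The toy corpus is hypothetical.

```python
import random
from collections import Counter, defaultdict
from typing import Dict, List, Sequence, Tuple

Context = Tuple[str, ...]


def build_chain(tokens: Sequence[str], k: int) -> Dict[Context, Counter]:
    """Map each k-token context to a Counter of the tokens that follow it."""
    chain: Dict[Context, Counter] = defaultdict(Counter)
    for i in range(len(tokens) - k):
        chain[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    return chain


def generate(chain: Dict[Context, Counter], seed: Context,
             max_new: int, rng: random.Random) -> List[str]:
    """Extend `seed`, sampling each successor in proportion to its count."""
    out = list(seed)
    k = len(seed)
    for _ in range(max_new):
        successors = chain.get(tuple(out[-k:]))
        if not successors:  # context never seen in training: stop
            break
        words, counts = zip(*successors.items())
        out.append(rng.choices(words, weights=counts, k=1)[0])
    return out


corpus = ("sê mī - guók colorado gì siŏh ciáh gông . "
          "sê mī - guók indiana gì siŏh ciáh gông .").split()
chain = build_chain(corpus, k=2)
print(" ".join(generate(chain, tuple(corpus[:2]), max_new=12, rng=random.Random(0))))
```

With a larger k, fewer contexts have more than one successor, which is why the context-4 samples above repeat the county template almost verbatim.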

---

## 4. Vocabulary Analysis

### Statistics

| Metric | Value |
|--------|-------|
| Vocabulary Size | 12,714 |
| Total Tokens | 590,881 |
| Mean Frequency | 46.47 |
| Median Frequency | 3 |
| Frequency Std Dev | 447.20 |
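
The mean frequency follows directly from the two rows above it. Its gap from the median of 3 shows how skewed the distribution is. Here is a quick arithmetic check using only figures reported in this section:

```python
vocab_size = 12_714
total_tokens = 590_881

# Mean occurrences per vocabulary entry
mean_frequency = total_tokens / vocab_size
print(f"mean frequency: {mean_frequency:.2f}")  # 46.47, as reported

# Words ranked beyond the top 10,000 (the coverage table below gives them 0.9% of tokens)
long_tail_words = vocab_size - 10_000
print(f"long-tail words: {long_tail_words:,}")  # 2,714
```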

### Most Common Words

| Rank | Word | Frequency |
|------|------|-----------|
| 1 | gì | 24,268 |
| 2 | 分類 | 17,794 |
| 3 | ng | 16,472 |
| 4 | sê | 15,967 |
| 5 | siŏh | 9,713 |
| 6 | guók | 9,302 |
| 7 | gông | 9,087 |
| 8 | sié | 8,595 |
| 9 | nièng | 7,825 |
| 10 | dâi | 7,699 |

### Least Common Words (from vocabulary)

| Rank | Word | Frequency |
|------|------|-----------|
| 1 | 燈泡厰 | 2 |
| 2 | 搪瓷厰 | 2 |
| 3 | 保溫瓶厰 | 2 |
| 4 | 啤酒厰 | 2 |
| 5 | 福大機械厰 | 2 |
| 6 | 抗生素厰 | 2 |
| 7 | kbo | 2 |
| 8 | 우주항공청 | 2 |
| 9 | cho | 2 |
| 10 | chit | 2 |

### Zipf's Law Analysis

| Metric | Value |
|--------|-------|
| Zipf Coefficient | 1.3995 |
| R² (Goodness of Fit) | 0.979429 |
| Adherence Quality | **excellent** |

### Coverage Analysis

| Top N Words | Coverage |
|-------------|----------|
| Top 100 | 55.6% |
| Top 1,000 | 90.8% |
| Top 5,000 | 97.1% |
| Top 10,000 | 99.1% |

### Key Findings

- **Zipf Compliance:** R² = 0.9794 indicates an excellent log-log fit to Zipf's law
- **Steep Frequency Decay:** The coefficient of 1.40 is above the 0.8-1.2 range typical of natural text, consistent with the high top-100 coverage. This may reflect the many templated articles (e.g., the recurring `gì siŏh ciáh gông` pattern in Section 2)
- **High Frequency Dominance:** The top 100 words cover 55.6% of the corpus
- **Long Tail:** The 2,714 words beyond the top 10,000 account for the remaining 0.9% of tokens

---

## 5. Word Embeddings Evaluation

### Model Comparison

| Model | Vocab Size | Dimension | Avg Norm | Std Norm | Isotropy |
|-------|------------|-----------|----------|----------|----------|
| **mono_32d** | 7,009 | 32 | 4.149 | 1.118 | 0.5460 🏆 |
| **mono_64d** | 7,009 | 64 | 4.243 | 1.106 | 0.2037 |
| **mono_128d** | 7,009 | 128 | 4.233 | 1.119 | 0.0381 |
| **embeddings_enhanced** | 0 | 0 | 0.000 | 0.000 | 0.0000 |

### Key Findings

- **Best Isotropy:** mono_32d with 0.5460 (most uniform distribution)
- **Dimension Trade-off:** Higher dimensions can encode more information, but isotropy falls sharply as dimension grows (0.5460 → 0.2037 → 0.0381)
- **Vocabulary Coverage:** The three mono_* models each cover 7,009 words; embeddings_enhanced reports no vectors
- **Recommendation:** No 100d model is included. mono_64d is the middle option between dimensionality and isotropy, while mono_32d is the most isotropic

---

## 6. Summary & Recommendations

### Production Recommendations

| Component | Recommended | Rationale |
|-----------|-------------|-----------|
| Tokenizer | **32k BPE** | Low UNK rate (0.0007%) with half the vocabulary of 64k, which has the best compression (2.80x vs. 2.56x) |
| N-gram | **2-gram** | Lowest perplexity (517) |
| Markov | **Context-4** | Highest predictability (90.2%) |
| Embeddings | **64d** | Middle ground between dimensionality and isotropy (0.2037); 32d is the most isotropic (0.5460) |

---

## Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

### Tokenizer Metrics

**Compression Ratio**

> *Definition:* The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.
>
> *Intuition:* Higher compression means fewer tokens are needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.
>
> *What to seek:* Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.

**Average Token Length (Fertility)**

> *Definition:* Mean number of characters per token produced by the tokenizer. (In much of the tokenizer literature, "fertility" instead denotes the average number of tokens per word.)
>
> *Intuition:* Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.
>
> *What to seek:* A balance of 2-5 characters for most languages. Morphologically rich languages such as Arabic may benefit from slightly longer tokens.
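
Both tokenizer metrics can be computed from a text and its tokens. This sketch makes two assumptions: compression counts every character of the input, spaces included, and token length strips the SentencePiece-style `▁` word-boundary marker. The report does not spell out its counting rules. Under those assumptions the two metrics differ slightly, which would be one explanation for the gap between 2.562x and 2.54 for the 32k tokenizer. The example uses the first nine tokens of Sample 3 in Section 1.

```python
import unicodedata
from typing import Sequence

MARKER = "\u2581"  # SentencePiece-style word-boundary marker "▁"


def compression_ratio(text: str, tokens: Sequence[str]) -> float:
    """Characters per token; every character of the NFC-normalized text counts."""
    return len(unicodedata.normalize("NFC", text)) / len(tokens)


def avg_token_length(tokens: Sequence[str]) -> float:
    """Mean characters per token after stripping the word-boundary marker."""
    lengths = [len(unicodedata.normalize("NFC", t).replace(MARKER, "")) for t in tokens]
    return sum(lengths) / len(lengths)


# First nine tokens of Sample 3 and the text they cover
text = "1000 nièng-dâi téng"
tokens = [MARKER, "1", "0", "0", "0", MARKER + "nièng", "-", "dâi", MARKER + "téng"]
print(f"{compression_ratio(text, tokens):.3f}")  # 2.111 (19 chars / 9 tokens)
print(f"{avg_token_length(tokens):.3f}")         # 1.889 (17 chars / 9 tokens)
```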

**Unknown Token Rate (OOV Rate)**

> *Definition:* Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.
>
> *Intuition:* Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.
>
> *What to seek:* Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
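
Below is a minimal sketch of the UNK rate over token ids. It assumes the UNK id is 0, the SentencePiece default; with the `sentencepiece` package, `SentencePieceProcessor.unk_id()` returns the actual value. At the 0.0007% reported in Section 1, only about two of the 32k tokenizer's 298,320 tokens would be UNK:

```python
from typing import Sequence


def unk_rate(token_ids: Sequence[int], unk_id: int = 0) -> float:
    """Percentage of token ids equal to `unk_id`."""
    if not token_ids:
        return 0.0
    unknown = sum(1 for t in token_ids if t == unk_id)
    return 100.0 * unknown / len(token_ids)


# Hypothetical ids: 2 UNK tokens among 298,320 (the 32k total from Section 1)
ids = [0, 0] + [5] * (298_320 - 2)
print(f"{unk_rate(ids):.4f}%")  # 0.0007%
```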

### N-gram Model Metrics

**Perplexity**

> *Definition:* Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.
>
> *Intuition:* If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.
>
> *What to seek:* Lower is better. With ample data, longer n-grams usually lower perplexity. On a small corpus, sparse higher-order n-grams can raise it instead, as in Section 2. Values vary widely by language and corpus size.
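
Perplexity follows from the probabilities a model assigns to held-out tokens. This sketch uses made-up probabilities. It also shows that raising 2 to the entropy values in Section 2 reproduces that table's perplexity column. For example, 2^11.03 ≈ 2,091 vs. the reported 2,092; the small gap presumably comes from the rounded entropy.

```python
import math
from typing import Sequence


def perplexity(probs: Sequence[float]) -> float:
    """2 ** cross-entropy, with cross-entropy = mean of -log2(p) over test tokens."""
    cross_entropy = -sum(math.log2(p) for p in probs) / len(probs)
    return 2 ** cross_entropy


# Uniform over 100 options at every step -> perplexity of (about) 100
print(perplexity([0.01] * 5))

# Entropy (bits) -> perplexity, using the first 2-gram row of Section 2
print(round(2 ** 11.03))  # 2091; reported as 2,092
```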

**Entropy**

> *Definition:* Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.
>
> *Intuition:* High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1 and 4 bits per character.
>
> *What to seek:* Lower entropy indicates more predictable text patterns. Next-token entropy usually decreases as n-gram size increases, provided the corpus is large enough to estimate the longer n-grams reliably.
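
Here is a sketch of Shannon entropy computed from raw counts. This is a plug-in estimate with no smoothing; the report does not state which estimator it uses. Raising 2 to the result gives the perplexity of the same distribution.

```python
import math
from collections import Counter


def entropy_bits(counts: Counter) -> float:
    """H = -sum(p * log2 p) over the empirical distribution given by `counts`."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values() if c > 0)


uniform = Counter({"a": 1, "b": 1, "c": 1, "d": 1})
skewed = Counter({"a": 97, "b": 1, "c": 1, "d": 1})
print(entropy_bits(uniform), 2 ** entropy_bits(uniform))  # 2.0 bits, perplexity 4.0
print(round(entropy_bits(skewed), 3))                      # far below 2 bits: predictable
```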

**Coverage (Top-K)**

> *Definition:* Percentage of corpus occurrences explained by the top K most frequent n-grams.
>
> *Intuition:* High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.
>
> *What to seek:* Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
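
Top-K coverage is the share of all occurrences held by the K most frequent items. Applying the same calculation to words instead of n-grams gives the vocabulary coverage table in Section 4. Here is a minimal sketch with hypothetical counts:

```python
from collections import Counter


def top_k_coverage(counts: Counter, k: int) -> float:
    """Percentage of all occurrences accounted for by the k most frequent items."""
    total = sum(counts.values())
    covered = sum(c for _, c in counts.most_common(k))
    return 100.0 * covered / total


# Hypothetical n-gram counts (100 occurrences in total)
counts = Counter({"a b": 50, "b c": 30, "c d": 15, "d e": 5})
print(top_k_coverage(counts, 1), top_k_coverage(counts, 2))  # 50.0 80.0
```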

### Markov Chain Metrics

**Average Entropy**

> *Definition:* Mean entropy across all contexts, measuring average uncertainty in next-word prediction.
>
> *Intuition:* Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).
>
> *What to seek:* Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

**Branching Factor**

> *Definition:* Average number of unique next tokens observed for each context.
>
> *Intuition:* High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).
>
> *What to seek:* Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
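
Both Markov metrics can be read off a transition table that maps each context to the counts of its successors. This sketch assumes an unweighted mean over contexts, as "mean entropy across all contexts" suggests. The report may weight contexts differently. The toy sequence is hypothetical.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, Sequence, Tuple

Table = Dict[Tuple[str, ...], Counter]


def transitions(tokens: Sequence[str], k: int) -> Table:
    """Map each k-token context to a Counter of its observed successors."""
    table: Table = defaultdict(Counter)
    for i in range(len(tokens) - k):
        table[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    return table


def branching_factor(table: Table) -> float:
    """Mean number of distinct successors per context."""
    return sum(len(nxt) for nxt in table.values()) / len(table)


def avg_entropy(table: Table) -> float:
    """Unweighted mean over contexts of the successor entropy, in bits."""
    def h(counter: Counter) -> float:
        total = sum(counter.values())
        return -sum((c / total) * math.log2(c / total) for c in counter.values())
    return sum(h(nxt) for nxt in table.values()) / len(table)


table = transitions("a b a c a b".split(), k=1)
print(branching_factor(table))  # (2 + 1 + 1) / 3 contexts
print(avg_entropy(table))       # only context ("a",) is uncertain
```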

**Predictability**

> *Definition:* Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.
>
> *Intuition:* 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.
>
> *What to seek:* Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
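
The Predictability values in Section 3 are consistent with the Avg Entropy column already being on a normalized scale: (1 − Avg Entropy) × 100 reproduces every reported value. Here is a quick check, assuming that reading (the report does not say how entropy is normalized):

```python
def predictability(normalized_entropy: float) -> float:
    """(1 - normalized entropy) x 100, as defined above."""
    return (1.0 - normalized_entropy) * 100.0


# (Avg Entropy, Predictability) pairs copied from the Section 3 table, first variant
reported = {1: (0.2803, 72.0), 2: (0.1991, 80.1), 3: (0.1556, 84.4), 4: (0.0983, 90.2)}

for context, (avg_entropy, expected) in reported.items():
    print(context, round(predictability(avg_entropy), 1), expected)
```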

### Vocabulary & Zipf's Law Metrics

**Zipf's Coefficient**

> *Definition:* The exponent s in f(r) ∝ r^(−s), estimated as the negated slope of the log-log plot of word frequency vs. rank. Zipf's law predicts s ≈ 1 (a slope of about −1); Section 4 reports s as a positive value.
>
> *Intuition:* A coefficient near 1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.
>
> *What to seek:* Values between 0.8 and 1.2 indicate a healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

**R² (Coefficient of Determination)**

> *Definition:* Measures how well the linear fit in log-log space explains the frequency-rank relationship. Ranges from 0 to 1.
>
> *Intuition:* R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.
>
> *What to seek:* R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
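
Here is a sketch of the Zipf fit: a least-squares line through (log rank, log frequency). It reports the negated slope as the coefficient, matching the positive 1.3995 in Section 4, together with R². It assumes every word is fitted; the report does not say whether it restricts the rank range. The check uses synthetic frequencies that follow a power law with exponent 1.4 exactly.

```python
import math
from typing import Sequence, Tuple


def zipf_fit(frequencies: Sequence[float]) -> Tuple[float, float]:
    """Fit log10(freq) = a + b * log10(rank) by least squares; return (-b, R²)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log10(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log10(f) for f in freqs]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - my) ** 2 for y in ys)
    return -slope, 1 - ss_res / ss_tot


synthetic = [1000.0 / r ** 1.4 for r in range(1, 51)]
coefficient, r_squared = zipf_fit(synthetic)
print(round(coefficient, 4), round(r_squared, 4))
```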

**Vocabulary Coverage**

> *Definition:* Cumulative percentage of corpus tokens accounted for by the top N words.
>
> *Intuition:* Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.
>
> *What to seek:* Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

### Word Embedding Metrics

**Isotropy**

> *Definition:* Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.
>
> *Intuition:* High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.
>
> *What to seek:* Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.
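
Here is a sketch of isotropy as defined above: the ratio of the smallest to the largest singular value of the embedding matrix. It assumes no mean-centering or normalization beforehand, which the report does not specify. The matrices are random stand-ins shaped like the 7,009 × 32 mono_32d table.

```python
import numpy as np


def isotropy(embeddings: np.ndarray) -> float:
    """Smallest / largest singular value of the (vocab x dim) embedding matrix."""
    singular_values = np.linalg.svd(embeddings, compute_uv=False)
    return float(singular_values.min() / singular_values.max())


rng = np.random.default_rng(0)
balanced = rng.standard_normal((7009, 32))       # similar spread in every direction
skewed = balanced * np.linspace(0.05, 1.0, 32)   # some directions nearly collapsed
print(round(isotropy(balanced), 3), round(isotropy(skewed), 3))
```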

**Average Norm**

> *Definition:* Mean magnitude (L2 norm) of word vectors in the embedding space.
>
> *Intuition:* Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.
>
> *What to seek:* Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).
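
These statistics correspond to the Avg Norm and Std Norm columns of Section 5: the mean and standard deviation of the vector norms. Below is a minimal NumPy sketch. The population standard deviation (`ddof=0`, NumPy's default) is an assumption.

```python
from typing import Tuple

import numpy as np


def norm_stats(embeddings: np.ndarray) -> Tuple[float, float]:
    """Mean and standard deviation of the row-wise L2 norms."""
    norms = np.linalg.norm(embeddings, axis=1)
    return float(norms.mean()), float(norms.std())


vectors = np.array([[3.0, 4.0], [0.0, 5.0], [6.0, 8.0]])  # norms 5, 5 and 10
print(norm_stats(vectors))
```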

**Cosine Similarity**

> *Definition:* Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).
>
> *Intuition:* Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.
>
> *What to seek:* Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
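
Below is a minimal sketch of cosine similarity and a nearest-neighbour lookup built on it. The vocabulary and 2-d vectors are hypothetical stand-ins; real vectors would come from one of the mono_* models, whose loading format is not covered here.

```python
from typing import List, Sequence, Tuple

import numpy as np


def cosine(u: np.ndarray, v: np.ndarray) -> float:
    """cos(theta) = u.v / (|u| |v|), in [-1, 1]."""
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


def nearest(word: str, vocab: Sequence[str], matrix: np.ndarray,
            top_n: int = 3) -> List[Tuple[str, float]]:
    """Rank the vocabulary by cosine similarity to `word`, excluding the word itself."""
    normed = matrix / np.linalg.norm(matrix, axis=1, keepdims=True)
    sims = normed @ normed[list(vocab).index(word)]
    ranked = [i for i in np.argsort(-sims) if vocab[i] != word]
    return [(vocab[i], float(sims[i])) for i in ranked[:top_n]]


# Hypothetical vocabulary and vectors
vocab = ["gông", "county", "nièng"]
matrix = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]])
print(nearest(vocab[0], vocab, matrix, top_n=2))
```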

**t-SNE Visualization**

> *Definition:* t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.
>
> *Intuition:* Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.
>
> *What to seek:* Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

### General Interpretation Guidelines

1. **Compare within model families:** Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).
2. **Consider trade-offs:** Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).
3. **Context matters:** Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.
4. **Corpus influence:** All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.
5. **Language-specific patterns:** Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

### Visualizations Index

| Visualization | Description |
|---------------|-------------|
| Tokenizer Compression | Compression ratios by vocabulary size |
| Tokenizer Fertility | Average token length by vocabulary |
| Tokenizer OOV | Unknown token rates |
| Tokenizer Total Tokens | Total tokens by vocabulary |
| N-gram Perplexity | Perplexity by n-gram size |
| N-gram Entropy | Entropy by n-gram size |
| N-gram Coverage | Top pattern coverage |
| N-gram Unique | Unique n-gram counts |
| Markov Entropy | Entropy by context size |
| Markov Branching | Branching factor by context |
| Markov Contexts | Unique context counts |
| Zipf's Law | Frequency-rank distribution with fit |
| Vocab Frequency | Word frequency distribution |
| Top 20 Words | Most frequent words |
| Vocab Coverage | Cumulative coverage curve |
| Embedding Isotropy | Vector space uniformity |
| Embedding Norms | Vector magnitude distribution |
| Embedding Similarity | Word similarity heatmap |
| Nearest Neighbors | Similar words for key terms |
| t-SNE Words | 2D word embedding visualization |
| t-SNE Sentences | 2D sentence embedding visualization |
| Position Encoding | Encoding method comparison |
| Model Sizes | Storage requirements |
| Performance Dashboard | Comprehensive performance overview |

---

## About This Project

### Data Source

Models trained on [wikipedia-monthly](https://huggingface.co/datasets/omarkamali/wikipedia-monthly) - a monthly snapshot of Wikipedia articles across 300+ languages.

### Project

A project by **[Wikilangs](https://wikilangs.org)** - Open-source NLP models for every Wikipedia language.

### Maintainer

[Omar Kamali](https://omarkamali.com) - [Omneity Labs](https://omneitylabs.com)

### Citation

If you use these models in your research, please cite:

```bibtex
@misc{wikilangs2025,
  author = {Kamali, Omar},
  title = {Wikilangs: Open NLP Models for Wikipedia Languages},
  year = {2025},
  publisher = {HuggingFace},
  url = {https://huggingface.co/wikilangs},
  institution = {Omneity Labs}
}
```

### License

MIT License - Free for academic and commercial use.

### Links

- 🌐 Website: [wikilangs.org](https://wikilangs.org)
- 🤗 Models: [huggingface.co/wikilangs](https://huggingface.co/wikilangs)
- 📊 Data: [wikipedia-monthly](https://huggingface.co/datasets/omarkamali/wikipedia-monthly)
- 👤 Author: [Omar Kamali](https://huggingface.co/omarkamali)

---

*Generated by Wikilangs Models Pipeline*

*Report Date: 2025-12-28 16:25:16*

models/embeddings/monolingual/cdo_128d.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:87273b834175c8ffca7ff96a3a21f5890c33eb81fd8bd600e3f7749a3c1efde6
size 1031314655
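The `.bin`, `.parquet` and `.model` entries in this commit are Git LFS pointer files, not the binaries themselves. Each pointer holds three fields: a `version` line, the object's `oid` (here a SHA-256 digest), and its `size` in bytes. So the 128-dimensional embedding file above is about 1.03 GB.

Below is a minimal sketch for reading such a pointer and checking a downloaded file against it. It handles only the three keys shown here, and `parse_lfs_pointer` and `matches_pointer` are hypothetical helper names:

```python
import hashlib


def parse_lfs_pointer(text: str) -> dict:
    """Parse a Git LFS v1 pointer ("key value" per line) into its fields."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    if fields.get("version") != "https://git-lfs.github.com/spec/v1":
        raise ValueError("not a Git LFS v1 pointer")
    algorithm, _, digest = fields["oid"].partition(":")
    return {"algorithm": algorithm, "digest": digest, "size": int(fields["size"])}


def matches_pointer(data: bytes, info: dict) -> bool:
    """True if `data` has the size and SHA-256 digest recorded in the pointer."""
    if info["algorithm"] != "sha256":
        raise ValueError(f"unsupported hash algorithm: {info['algorithm']}")
    return len(data) == info["size"] and hashlib.sha256(data).hexdigest() == info["digest"]


pointer = """version https://git-lfs.github.com/spec/v1
oid sha256:87273b834175c8ffca7ff96a3a21f5890c33eb81fd8bd600e3f7749a3c1efde6
size 1031314655
"""
info = parse_lfs_pointer(pointer)
print(info["algorithm"], info["size"])  # sha256 1031314655
```

For files this large, avoid loading them into memory at once. Instead, hash in chunks by calling `update` on a `hashlib.sha256()` object in a loop over `file.read(1 << 20)`.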

models/embeddings/monolingual/cdo_128d.meta.json

ADDED

@@ -0,0 +1 @@

{"lang": "cdo", "dim": 128, "max_seq_len": 512, "is_aligned": false}

models/embeddings/monolingual/cdo_128d_metadata.json

ADDED

@@ -0,0 +1,13 @@

{
"language": "cdo",
"dimension": 128,
"version": "monolingual",
"training_params": {
"dim": 128,
"min_count": 5,
"window": 5,
"negative": 5,
"epochs": 5
},
"vocab_size": 7009
}

models/embeddings/monolingual/cdo_32d.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:d65b7a0893696382e676534c0654d0fa33b57a4337091da15492cd7c0abf3b48
size 257931743

models/embeddings/monolingual/cdo_32d.meta.json

ADDED

@@ -0,0 +1 @@

{"lang": "cdo", "dim": 32, "max_seq_len": 512, "is_aligned": false}

models/embeddings/monolingual/cdo_32d_metadata.json

ADDED

@@ -0,0 +1,13 @@

{
"language": "cdo",
"dimension": 32,
"version": "monolingual",
"training_params": {
"dim": 32,
"min_count": 5,
"window": 5,
"negative": 5,
"epochs": 5
},
"vocab_size": 7009
}

models/embeddings/monolingual/cdo_64d.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:70424ab1f058bf1eec92ffa8c17229b3451d02319f7295d7a82908645320bdb6
size 515726047

models/embeddings/monolingual/cdo_64d.meta.json

ADDED

@@ -0,0 +1 @@

{"lang": "cdo", "dim": 64, "max_seq_len": 512, "is_aligned": false}

models/embeddings/monolingual/cdo_64d_metadata.json

ADDED

@@ -0,0 +1,13 @@

{
"language": "cdo",
"dimension": 64,
"version": "monolingual",
"training_params": {
"dim": 64,
"min_count": 5,
"window": 5,
"negative": 5,
"epochs": 5
},
"vocab_size": 7009
}

models/subword_markov/cdo_markov_ctx1_subword.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:0f235b61b31e910d9f0a63bff6431ca1b061d7d60c93f009c3efb2d4dd4d8d94
size 859391

models/subword_markov/cdo_markov_ctx1_subword_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"context_size": 1,
"variant": "subword",
"language": "cdo",
"unique_contexts": 31614,
"total_transitions": 2905389
}

models/subword_markov/cdo_markov_ctx2_subword.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:95c5781711001d90b8d98035bad77dc2eafa9ca4d93f92cbe4e187b3ca6f467c
size 2273669

models/subword_markov/cdo_markov_ctx2_subword_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"context_size": 2,
"variant": "subword",
"language": "cdo",
"unique_contexts": 127156,
"total_transitions": 2888681
}

models/subword_markov/cdo_markov_ctx3_subword.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:0112fc9e03100831bb45b13b125d60693af2b6111630b553ae0b8802b325840d
size 4278912

models/subword_markov/cdo_markov_ctx3_subword_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"context_size": 3,
"variant": "subword",
"language": "cdo",
"unique_contexts": 253902,
"total_transitions": 2871973
}

models/subword_markov/cdo_markov_ctx4_subword.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:863a8d123baa32acad56367516fba40e4df9fe4d98e2a22ce9e27a3dcadf37ff
size 6583946

models/subword_markov/cdo_markov_ctx4_subword_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"context_size": 4,
"variant": "subword",
"language": "cdo",
"unique_contexts": 389634,
"total_transitions": 2855265
}
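In the subword Markov metadata above, `total_transitions` drops by exactly 16,708 each time `context_size` grows by one: 2,905,389, then 2,888,681, 2,871,973 and 2,855,265. That step matches the `total_documents` value in the vocabulary metadata, and the word-level Markov files further down show the same step. The pattern fits a scheme where transitions are counted within each document, so that a document of L tokens yields L - k transitions for context size k.

The sketch below illustrates that counting scheme. It is an assumption about how the pipeline works, not its actual code, and `markov_stats` is a hypothetical name:

```python
from collections import Counter, defaultdict


def markov_stats(documents, context_size):
    """Count unique contexts and total transitions for an order-k Markov model.

    Each document is a list of tokens. Transitions are collected within a
    document only, so a document of length L contributes L - k transitions.
    """
    transitions = defaultdict(Counter)  # context tuple -> next-token counts
    total = 0
    for tokens in documents:
        for i in range(len(tokens) - context_size):
            context = tuple(tokens[i : i + context_size])
            transitions[context][tokens[i + context_size]] += 1
            total += 1
    return len(transitions), total


docs = [list("abcab"), list("abc")]  # 8 tokens in 2 documents
for k in (1, 2, 3):
    print(k, markov_stats(docs, k))  # 1 (3, 6) / 2 (3, 4) / 3 (2, 2)
```

In this toy example the total falls by 2 (the number of documents) per extra context token, which mirrors the 16,708 step seen in the metadata.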

models/subword_ngram/cdo_2gram_subword.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:429757e59b20addd0ca423c66011cf245936cb430796406a7e56ac432fbc78ff
size 180569

models/subword_ngram/cdo_2gram_subword_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"n": 2,
"variant": "subword",
"language": "cdo",
"unique_ngrams": 13773,
"total_ngrams": 2905389
}

models/subword_ngram/cdo_3gram_subword.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:eb21e2e89d34f0d79e0ad314920dfc3371d0dbdc72b20e5448b52c64d94e3daf
size 481494

models/subword_ngram/cdo_3gram_subword_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"n": 3,
"variant": "subword",
"language": "cdo",
"unique_ngrams": 33837,
"total_ngrams": 2888681
}

models/subword_ngram/cdo_4gram_subword.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:3f82d92e0227f12077424a14d88f6d50fbac2bc1d12b803abe7c34c6bc8a1e7e
size 1234369

models/subword_ngram/cdo_4gram_subword_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"n": 4,
"variant": "subword",
"language": "cdo",
"unique_ngrams": 94271,
"total_ngrams": 2871973
}
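The `total_ngrams` values above for n = 2, 3 and 4 are 2,905,389, 2,888,681 and 2,871,973. These equal the subword Markov `total_transitions` for context sizes 1, 2 and 3. That is what you would expect if an n-gram is an (n - 1)-token context plus its next token, with both counted within documents.

Here is a sketch of per-document n-gram counting under that assumption; `ngram_counts` is a hypothetical name, not the pipeline's function:

```python
from collections import Counter


def ngram_counts(documents, n):
    """Count n-grams (as token tuples) within each document, never across documents."""
    counts = Counter()
    for tokens in documents:
        for i in range(len(tokens) - n + 1):
            counts[tuple(tokens[i : i + n])] += 1
    return counts


docs = [list("abcab"), list("abc")]
for n in (2, 3, 4):
    counts = ngram_counts(docs, n)
    # unique n-grams, total n-grams: 2 3 6 / 3 3 4 / 4 2 2
    print(n, len(counts), sum(counts.values()))
```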

models/tokenizer/cdo_tokenizer_32k.model

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:29cc26ce42341f87bf600b104422987383bb16aab206d714d8025b627a81318a
size 652498

models/tokenizer/cdo_tokenizer_32k.vocab

ADDED

The diff for this file is too large to render.

models/tokenizer/cdo_tokenizer_64k.model

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:fd9f7c3b27cd6d59d2600b4da0253681a1c8987f94a736a1458e336f86ae632c
size 1220234

models/tokenizer/cdo_tokenizer_64k.vocab

ADDED

The diff for this file is too large to render.

models/vocabulary/cdo_vocabulary.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:f67094c35332c0e243a9e0fa3b227560d5e7cbeb597f1f5f91eeaae915d87402
size 220074

models/vocabulary/cdo_vocabulary_metadata.json

ADDED

@@ -0,0 +1,16 @@

{
"language": "cdo",
"vocabulary_size": 12714,
"statistics": {
"type_token_ratio": 0.07780709085921002,
"coverage": {
"top_100": 0.5244804991801553,
"top_1000": 0.8557711325341176,
"top_5000": 0.9148462073409597,
"top_10000": 0.9338142876283201
},
"hapax_count": 36067,
"hapax_ratio": 0.7393657366597651,
"total_documents": 16708
}
}
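The statistics in this file can be sketched as below. The definitions are assumptions:

- Type-token ratio is distinct word types divided by total tokens.
- `hapax_ratio` is the share of distinct types that occur exactly once.

Note that `hapax_count` (36,067) exceeds `vocabulary_size` (12,714). The ratios were therefore presumably computed over the full, unfiltered word list rather than the saved vocabulary. `vocabulary_stats` is a hypothetical name:

```python
from collections import Counter


def vocabulary_stats(tokens):
    """Type-token ratio and hapax legomena statistics for a token list."""
    counts = Counter(tokens)
    num_types, num_tokens = len(counts), sum(counts.values())
    hapax = sum(1 for freq in counts.values() if freq == 1)  # types seen exactly once
    return {
        "type_token_ratio": num_types / num_tokens,
        "hapax_count": hapax,
        "hapax_ratio": hapax / num_types,  # assumed: share of types seen once
    }


print(vocabulary_stats("the cat saw the dog".split()))
# {'type_token_ratio': 0.8, 'hapax_count': 3, 'hapax_ratio': 0.75}
```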

models/word_markov/cdo_markov_ctx1_word.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:216d791b7daa8b64656b1cba78bbbc02aa7f5ae7d9bf1ce3a3acf8fc6ca30ad8
size 2218064

models/word_markov/cdo_markov_ctx1_word_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"context_size": 1,
"variant": "word",
"language": "cdo",
"unique_contexts": 48699,
"total_transitions": 989207
}

models/word_markov/cdo_markov_ctx2_word.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:59c0afaec27cafdf472078114f0c1e6330cbc442a0cf86a0de739eb654009647
size 4081907

models/word_markov/cdo_markov_ctx2_word_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"context_size": 2,
"variant": "word",
"language": "cdo",
"unique_contexts": 169503,
"total_transitions": 972499
}

models/word_markov/cdo_markov_ctx3_word.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:9a9fad10b27aa485ee443b1b3fb11cf1c68941f562e62e4a5d392900045e177d
size 6371893

models/word_markov/cdo_markov_ctx3_word_metadata.json

ADDED

@@ -0,0 +1,7 @@

{
"context_size": 3,
"variant": "word",
"language": "cdo",
"unique_contexts": 308939,
"total_transitions": 955791
}

models/word_markov/cdo_markov_ctx4_word.parquet

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1